Evaluating the Checklist for Artificial Intelligence in Medical Imaging (CLAIM)-Based Quality of Reports Using Convolutional Neural Network for Odontogenic Cyst and Tumor Detection

Abstract

1. Introduction

2. Materials and Methods

2.1. Inclusion and Exclusion Criteria

2.2. Information Sources and Search Strategy

2.2.1. Electronic Search

2.2.2. Manual Searching

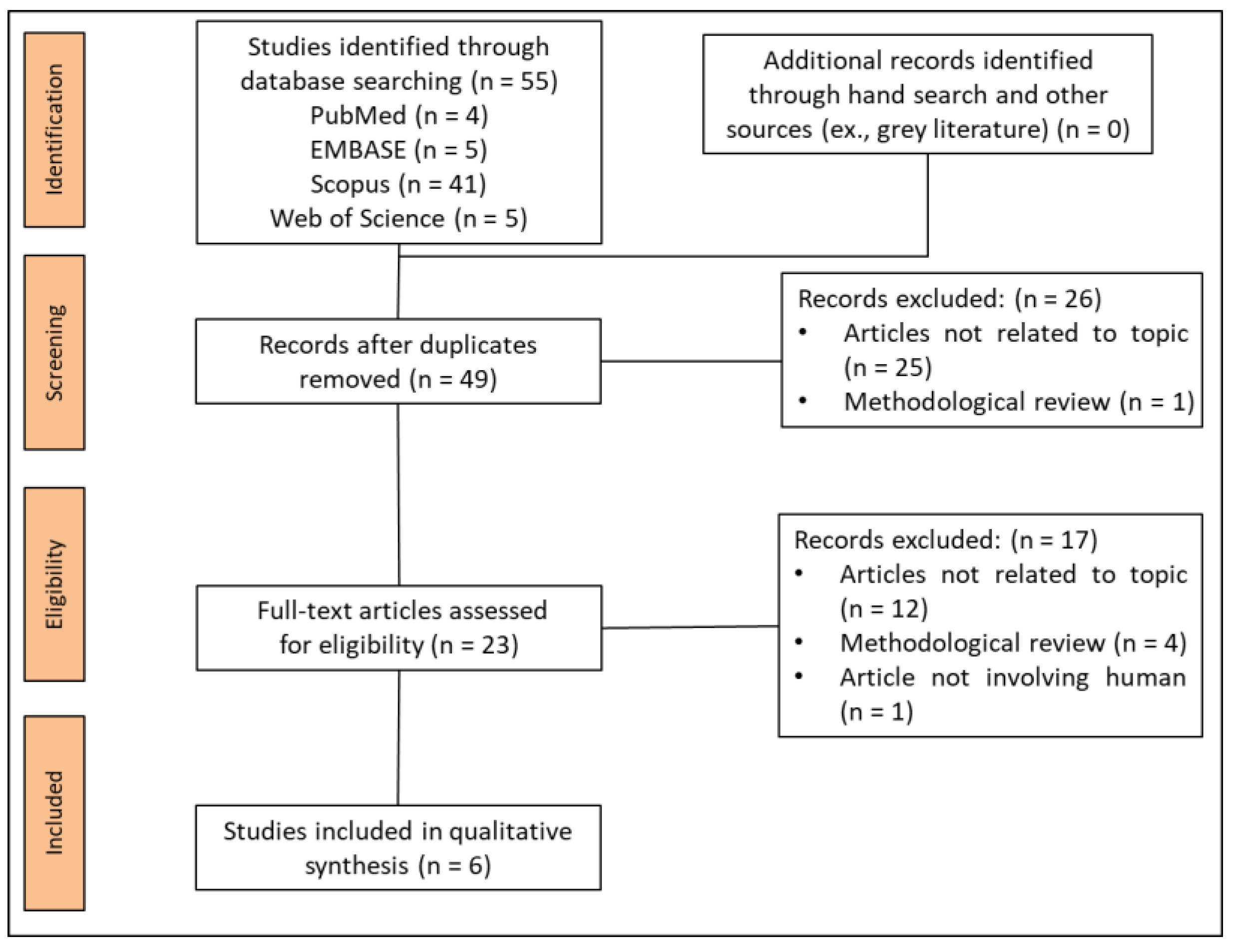

2.3. Study Selection

2.4. Data Extraction

2.5. Methods of Analysis

2.5.1. Reporting Epidemiological and Descriptive Characteristics

2.5.2. Reporting of Methodological Elements of the Included CNN Studies

2.5.3. Statistical Analysis

3. Results

3.1. Study Selection

3.2. Study Characteristics

3.2.1. Epidemiological and Descriptive Characteristics

3.2.2. General Characteristics

3.3. Synthesis of the Results

Reporting of CLAIM Items across the Included Studies

4. Discussion

Strengths and Limitations

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Schwendicke, F.; Golla, T.; Dreher, M.; Krois, J. Convolutional neural networks for dental image diagnostics: A scoping review. J. Dent. 2019, 91, 103226.

- Shan, T.; Tay, F.R.; Gu, L. Application of artificial intelligence in dentistry. J. Dent. Res. 2021, 100, 232–244.

- Wang, H.; Minnema, J.; Batenburg, K.J.; Forouzanfar, T.; Hu, F.J.; Wu, G. Multiclass CBCT image segmentation for orthodontics with deep learning. J. Dent. Res. 2021, 100, 943–949.

- Jeon, S.J.; Yun, J.P.; Yeom, H.G.; Shin, W.S.; Lee, J.H.; Jeong, S.H.; Seo, M.S. Deep-learning for predicting C-shaped canals in mandibular second molars on panoramic radiographs. Dentomaxillofac. Radiol. 2021, 50, 20200513.

- Caliskan, S.; Tuloglu, N.; Celik, O.; Ozdemir, C.; Kizilaslan, S.; Bayrak, S. A pilot study of a deep learning approach to submerged primary tooth classification and detection. Int. J. Comput. Dent. 2021, 24, e1–e9.

- Kwon, O.; Yong, T.H.; Kang, S.R.; Kim, J.E.; Huh, K.H.; Heo, M.S.; Lee, S.S.; Choi, S.C.; Yi, W.J. Automatic diagnosis for cysts and tumors of both jaws on panoramic radiographs using a deep convolution neural network. Dentomaxillofac. Radiol. 2020, 49, 20200185.

- Lee, J.H.; Kim, D.H.; Jeong, S.N. Diagnosis of cystic lesions using panoramic and cone beam computed tomographic images based on deep learning neural network. Oral Dis. 2020, 26, 152–158.

- Ariji, Y.; Yanashita, Y.; Kutsuna, S.; Muramatsu, C.; Fukuda, M.; Kise, Y.; Nozawa, M.; Kuwada, C.; Fujita, H.; Katsumata, A.; et al. Automatic detection and classification of radiolucent lesions in the mandible on panoramic radiographs using a deep learning object detection technique. Oral Surg. Oral Med. Oral Pathol. Oral Radiol. 2019, 128, 424–430.

- Luo, W.; Phung, D.; Tran, T.; Gupta, S.; Rana, S.; Karmakar, C.; Shilton, A.; Yearwood, J.; Dimitrova, N.; Ho, T.B.; et al. Guidelines for developing and reporting machine learning predictive models in biomedical research: A multidisciplinary view. J. Med. Internet Res. 2016, 18, e323.

- Handelman, G.S.; Kok, H.K.; Chandra, R.V.; Razavi, A.H.; Huang, S.; Brooks, M.; Lee, M.J.; Asadi, H. Peering into the black box of artificial intelligence: Evaluation metrics of machine learning methods. AJR Am. J. Roentgenol. 2019, 212, 38–43.

- Park, S.H.; Han, K. Methodologic guide for evaluating clinical performance and effect of artificial intelligence technology for medical diagnosis and prediction. Radiology 2018, 286, 800–809.

- Bluemke, D.A.; Moy, L.; Bredella, M.A.; Ertl-Wagner, B.B.; Fowler, K.J.; Goh, V.J.; Halpern, E.F.; Hess, C.P.; Schiebler, M.L.; Weiss, C.R. Assessing radiology research on artificial intelligence: A brief guide for authors, reviewers, and readers-from the radiology editorial board. Radiology 2020, 294, 487–489.

- Bossuyt, P.M.; Reitsma, J.B.; Bruns, D.E.; Gatsonis, C.A.; Glasziou, P.P.; Irwig, L.; Lijmer, J.G.; Moher, D.; Rennie, D.; de Vet, H.C.; et al. STARD 2015: An updated list of essential items for reporting diagnostic accuracy studies. Radiology 2015, 277, 826–832.

- Bossuyt, P.M.; Reitsma, J.B.; Bruns, D.E.; Gatsonis, C.A.; Glasziou, P.P.; Irwig, L.M.; Lijmer, J.G.; Moher, D.; Rennie, D.; de Vet, H.C. Towards complete and accurate reporting of studies of diagnostic accuracy: The STARD initiative. Radiology 2003, 226, 24–28.

- Cohen, J.F.; Korevaar, D.A.; Altman, D.G.; Bruns, D.E.; Gatsonis, C.A.; Hooft, L.; Irwig, L.; Levine, D.; Reitsma, J.B.; de Vet, H.C.; et al. STARD 2015 guidelines for reporting diagnostic accuracy studies: Explanation and elaboration. BMJ Open 2016, 6, e012799.

- Bossuyt, P.M.; Reitsma, J.B. The STARD initiative. Lancet 2003, 361, 71.

- Schwendicke, F.; Krois, J. Better reporting of studies on artificial intelligence: CONSORT-AI and beyond. J. Dent. Res. 2021, 100, 677–680.

- Schwendicke, F.; Singh, T.; Lee, J.H.; Gaudin, R.; Chaurasia, A.; Wiegand, T.; Uribe, S.; Krois, J. Artificial intelligence in dental research: Checklist for authors, reviewers, readers. J. Dent. 2021, 107, 103610.

- Mongan, J.; Moy, L.; Kahn, C.E., Jr. Checklist for Artificial Intelligence in Medical Imaging (CLAIM): A guide for authors and reviewers. Radiol. Artif. Intell. 2020, 2, e200029.

- Liu, Z.; Liu, J.; Zhou, Z.; Zhang, Q.; Wu, H.; Zhai, G.; Han, J. Differential diagnosis of ameloblastoma and odontogenic keratocyst by machine learning of panoramic radiographs. Int. J. Comput. Assist. Radiol. Surg. 2021, 16, 415–422.

- Yang, H.; Jo, E.; Kim, H.J.; Cha, I.H.; Jung, Y.S.; Nam, W.; Kim, J.Y.; Kim, J.K.; Kim, Y.H.; Oh, T.G.; et al. Deep learning for automated detection of cyst and tumors of the jaw in panoramic radiographs. J. Clin. Med. 2020, 9, 1839.

- Poedjiastoeti, W.; Suebnukarn, S. Application of convolutional neural network in the diagnosis of jaw tumors. Healthc. Inform. Res. 2018, 24, 236–241.

- Cho, S.J.; Sunwoo, L.; Baik, S.H.; Bae, Y.J.; Choi, B.S.; Kim, J.H. Brain metastasis detection using machine learning: A systematic review and meta-analysis. Neuro-Oncol. 2021, 23, 214–225.

- Si, L.; Zhong, J.; Huo, J.; Xuan, K.; Zhuang, Z.; Hu, Y.; Wang, Q.; Zhang, H.; Yao, W. Deep learning in knee imaging: A systematic review utilizing a Checklist for Artificial Intelligence in Medical Imaging (CLAIM). Eur. Radiol. 2021.

- O’Shea, R.J.; Sharkey, A.R.; Cook, G.J.R.; Goh, V. Systematic review of research design and reporting of imaging studies applying convolutional neural networks for radiological cancer diagnosis. Eur. Radiol. 2021, 31, 7969–7983.

- Liu, X.; Faes, L.; Kale, A.U.; Wagner, S.K.; Fu, D.J.; Bruynseels, A.; Mahendiran, T.; Moraes, G.; Shamdas, M.; Kern, C.; et al. A comparison of deep learning performance against health-care professionals in detecting diseases from medical imaging: A systematic review and meta-analysis. Lancet Digit. Health 2019, 1, e271–e297.

- Nagendran, M.; Chen, Y.; Lovejoy, C.A.; Gordon, A.C.; Komorowski, M.; Harvey, H.; Topol, E.J.; Ioannidis, J.P.A.; Collins, G.S.; Maruthappu, M. Artificial intelligence versus clinicians: Systematic review of design, reporting standards, and claims of deep learning studies. BMJ 2020, 368, m689.

- Wynants, L.; Smits, L.J.M.; Van Calster, B. Demystifying AI in healthcare. BMJ 2020, 370, m3505.

- Shin, H.C.; Roth, H.R.; Gao, M.; Lu, L.; Xu, Z.; Nogues, I.; Yao, J.; Mollura, D.; Summers, R.M. Deep convolutional neural networks for computer-aided detection: CNN architectures, dataset characteristics and transfer learning. IEEE Trans. Med. Imaging 2016, 35, 1285–1298.

| Items and Subcategory | No. (%) of Reports |
|---|---|
| Journal category | |
| Biomedical engineering field | 2 (33%) |
| Dental or medical field | 4 (67%) |
| Location of corresponding author | |
| Asia | 6 (100%) |
| Europe | 0 (0%) |
| USA | 0 (0%) |
| Job of corresponding author * | |
| Doctor or dentist | 6 (86%) |
| Engineer | 1 (14%) |
| Type of reporting guideline | |
| STARD | 0 (0%) |
| Other | 0 (0%) |
| None | 6 (100%) |
| Funding source | |
| Both private and public | 0 (0%) |
| Private | 0 (0%) |
| Public | 4 (67%) |
| None | 2 (33%) |
| Unclear | 0 (0%) |

| # | Study | Country (Year) | Journal | Study Objectives | Number of Images | Annotators | CNN Model | Comparative Analysis | Outcome Metrics | CNN Performance |
|---|---|---|---|---|---|---|---|---|---|---|
| 1 | Liu et al. | China (2021) | International Journal of Computer Assisted Radiology and Surgery | Classification | 420 panoramic images: AM (209), OKC (211); training (295), validation (42), and test (83) | Histopathologic diagnosis | VGG-19 and ResNet-50 | Radiologists | Sensitivity, specificity, accuracy, and AUC | Sensitivity (92.88%), specificity (87.8%), accuracy (90.36%), and AUC (0.946) |
| 2 | Kwon et al. | South Korea (2020) | Dentomaxillofacial Radiology | Detection and classification | 1282 maxillary and mandibular panoramic images: DC (350), periapical cyst (302), OKC (300), AM (230), no lesion (100); training (946) and test (235) | Histopathologic diagnosis | Modified CNN based on YOLO v3 | NR | Sensitivity, specificity, accuracy, and AUC | Sensitivity (88.9%), specificity (97.2%), accuracy (95.6%), and AUC (0.94) |
| 3 | Yang et al. | South Korea (2020) | Journal of Clinical Medicine | Detection and classification | 1603 maxillary and mandibular panoramic images: DC (1094), OKC (316), AM (160), no lesion (33); training (1422) and test (181) | Histopathologic diagnosis | YOLO v2 | OMFS (3), general practitioner (2) | Precision, recall, accuracy, and F1 score | Precision (0.707), recall (0.68), accuracy (0.663), and F1 score (0.693) |
| 4 | Ariji et al. | Japan (2019) | Oral Surgery Oral Medicine Oral Pathology Oral Radiology | Detection and classification | 285 mandibular panoramic images: AM (41), OKC (47), DC (90), radicular cyst (91), simple bone cyst (16); training (210), test1 (50), test2 (25) | Histopathologic diagnosis | DetectNet deep neural network on DIGITS | NR | Sensitivity and false-positive rate per image using IoU (threshold 0.6) | Detection of radiolucent lesions: sensitivity (0.88), false-positive rate per image for test1 (0.00) and test2 (0.04). Detection and classification sensitivity of each type of lesion using test1: AM (0.71 and 0.6), OKC (1 and 0.13), DC (0.88 and 0.82), and radicular cysts (0.81 and 0.82) |
| 5 | Lee et al. | South Korea (2019) | Oral Diseases | Detection and classification | 1140 panoramic and 986 CBCT images: OKC (260 + 188), DC (463 + 396), periapical cyst (417 + 402) | Histopathologic diagnosis | GoogLeNet Inception v3 | NR | AUC, sensitivity, and specificity | CBCT: AUC (0.914), sensitivity (96.1%), specificity (77.1%). Panoramic images: AUC (0.847), sensitivity (88.2%), specificity (77%) |
| 6 | Poedjiastoeti et al. | Thailand (2018) | Health Informatics Research | Detection and classification | 500 panoramic images: AM (250), OKC (250); training (400) and test (100) | Histopathologic diagnosis | 16-layer CNN (VGG-16) | OMFS (5) | Sensitivity, specificity, accuracy, and diagnostic time | Sensitivity (81.8%), specificity (83.3%), accuracy (83%), and diagnostic time (38 s) |
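The outcome metrics listed in the table (sensitivity, specificity, accuracy, precision, recall, F1 score, and IoU-thresholded detection) follow standard definitions. The Python sketch below is illustrative only and is not taken from any of the included studies: the `Confusion` class, the function names, the confusion-matrix counts, and the box coordinates are all hypothetical. The only values drawn from the table are the precision (0.707) and recall (0.68) reported for Yang et al., which reproduce their reported F1 score of 0.693, and the IoU threshold of 0.6 reported for Ariji et al.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Confusion:
    """Binary confusion-matrix counts (hypothetical helper, not from the studies)."""
    tp: int  # lesion present, model says lesion
    fp: int  # no lesion, model says lesion
    tn: int  # no lesion, model says no lesion
    fn: int  # lesion present, model says no lesion


def sensitivity(c: Confusion) -> float:
    """True-positive rate, also called recall."""
    return c.tp / (c.tp + c.fn)


def specificity(c: Confusion) -> float:
    """True-negative rate."""
    return c.tn / (c.tn + c.fp)


def accuracy(c: Confusion) -> float:
    """Fraction of all cases classified correctly."""
    return (c.tp + c.tn) / (c.tp + c.fp + c.tn + c.fn)


def precision(c: Confusion) -> float:
    """Positive predictive value."""
    return c.tp / (c.tp + c.fp)


def f1_score(prec: float, rec: float) -> float:
    """Harmonic mean of precision and recall."""
    return 2 * prec * rec / (prec + rec)


def iou(box_a: tuple, box_b: tuple) -> float:
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0, ix2 - ix1) * max(0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    return inter / (area_a + area_b - inter)


# Hypothetical counts, chosen only to make the arithmetic easy to follow.
example = Confusion(tp=45, fp=10, tn=40, fn=5)
print(sensitivity(example), specificity(example), accuracy(example))

# Precision and recall as reported for Yang et al. in the table.
print(round(f1_score(0.707, 0.68), 3))

# A predicted box counts as a detection when IoU with the reference box
# meets the threshold (0.6 is the threshold reported for Ariji et al.).
reference, predicted = (0, 0, 10, 10), (0, 0, 10, 8)
print(iou(reference, predicted) >= 0.6)
```

Because sensitivity and recall are the same quantity, studies that report precision and recall (such as Yang et al.) can be compared on sensitivity with the others, but not on specificity unless true-negative counts are also available.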

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Le, V.N.T.; Kim, J.-G.; Yang, Y.-M.; Lee, D.-W. Evaluating the Checklist for Artificial Intelligence in Medical Imaging (CLAIM)-Based Quality of Reports Using Convolutional Neural Network for Odontogenic Cyst and Tumor Detection. Appl. Sci. 2021, 11, 9688. https://doi.org/10.3390/app11209688
