Transitioning Broadcast to Cloud

Abstract

1. Introduction

- on-prem: everything, including playout systems, encoders, multiplexers, servers, and other equipment for both broadcast and OTT distribution, is installed and operated on premises, and

- cloud-based: almost everything is implemented in software and operated on the infrastructure of cloud service providers such as AWS, GCP, or Azure.

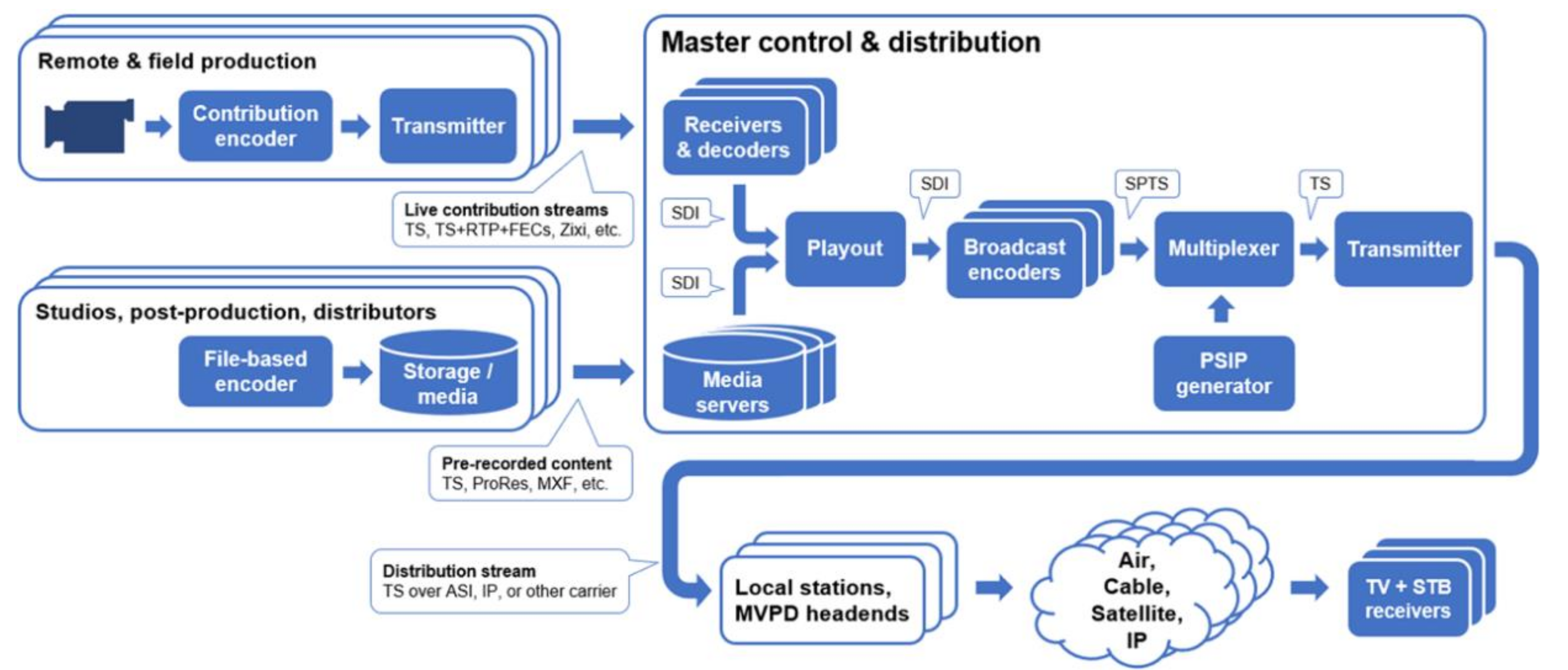

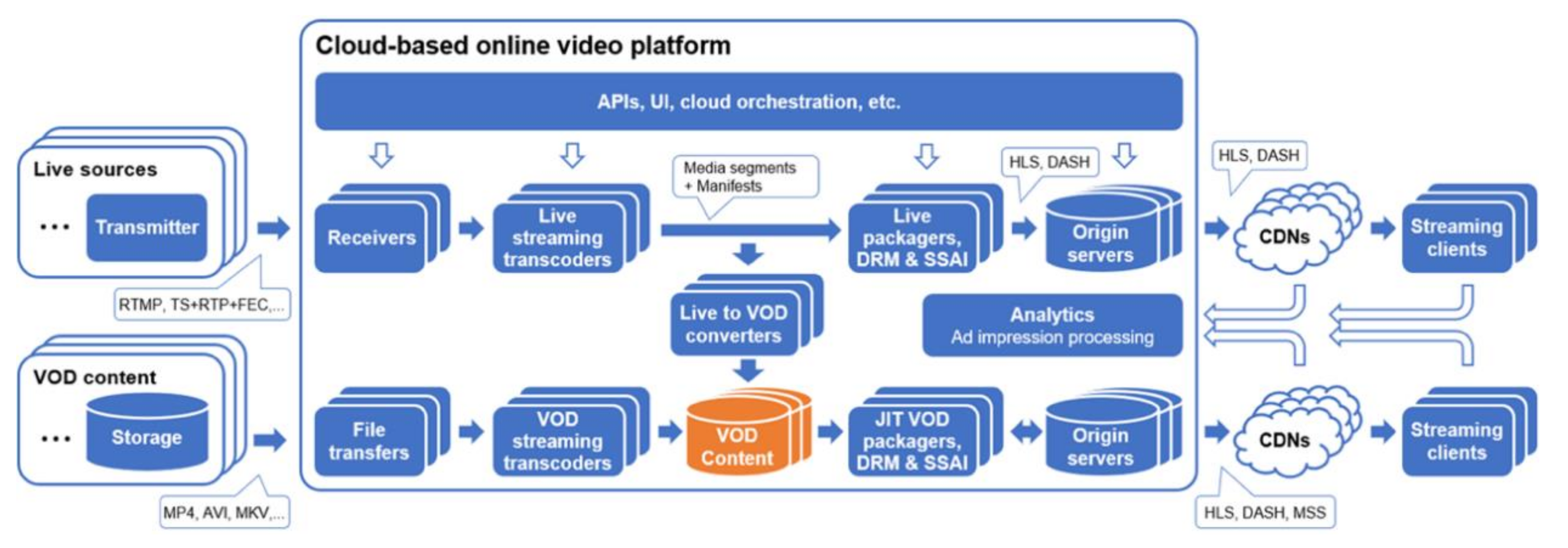

2. Processing Chains in Broadcast and Cloud-Based Online Video Systems

2.1. Main Functions and Distribution Flows

2.2. Contribution and Ingest

2.3. Video Formats and Elementary Streams

2.4. Distribution Formats

2.5. Ad Processing

2.6. Delay, Random Access, Fault Tolerance, and Signal Discontinuities

3. Technologies Needed to Support Convergence

3.1. Cloud Contribution Links and Protocols

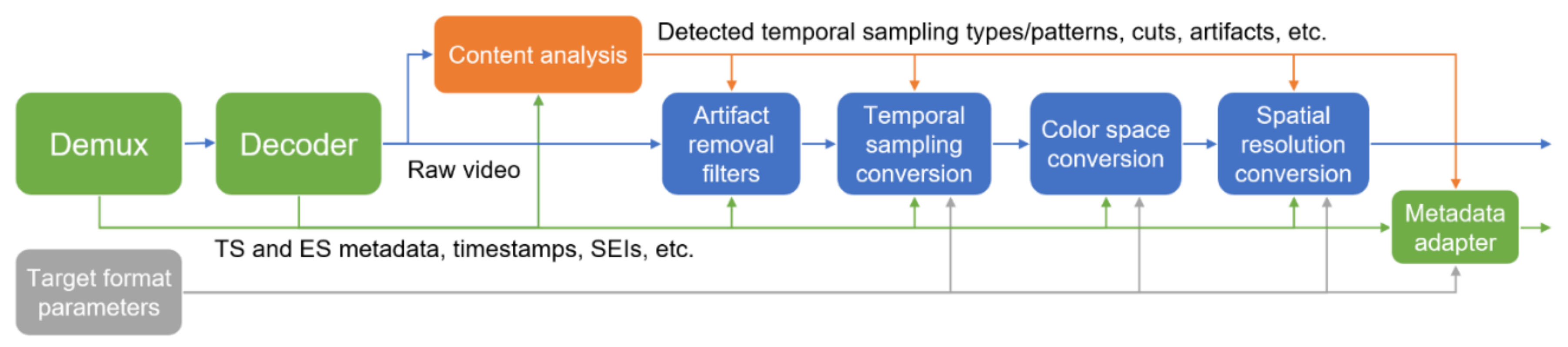

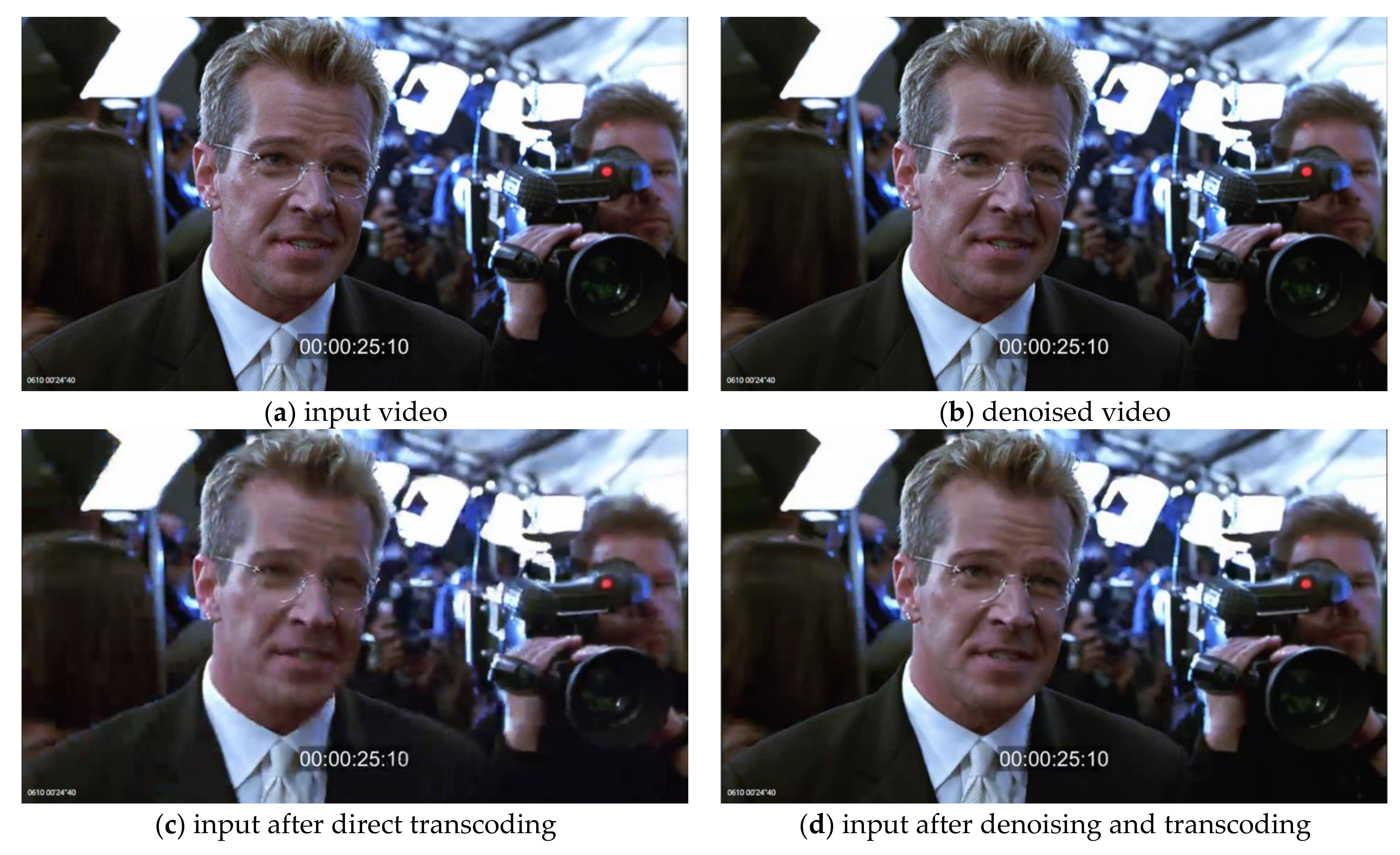

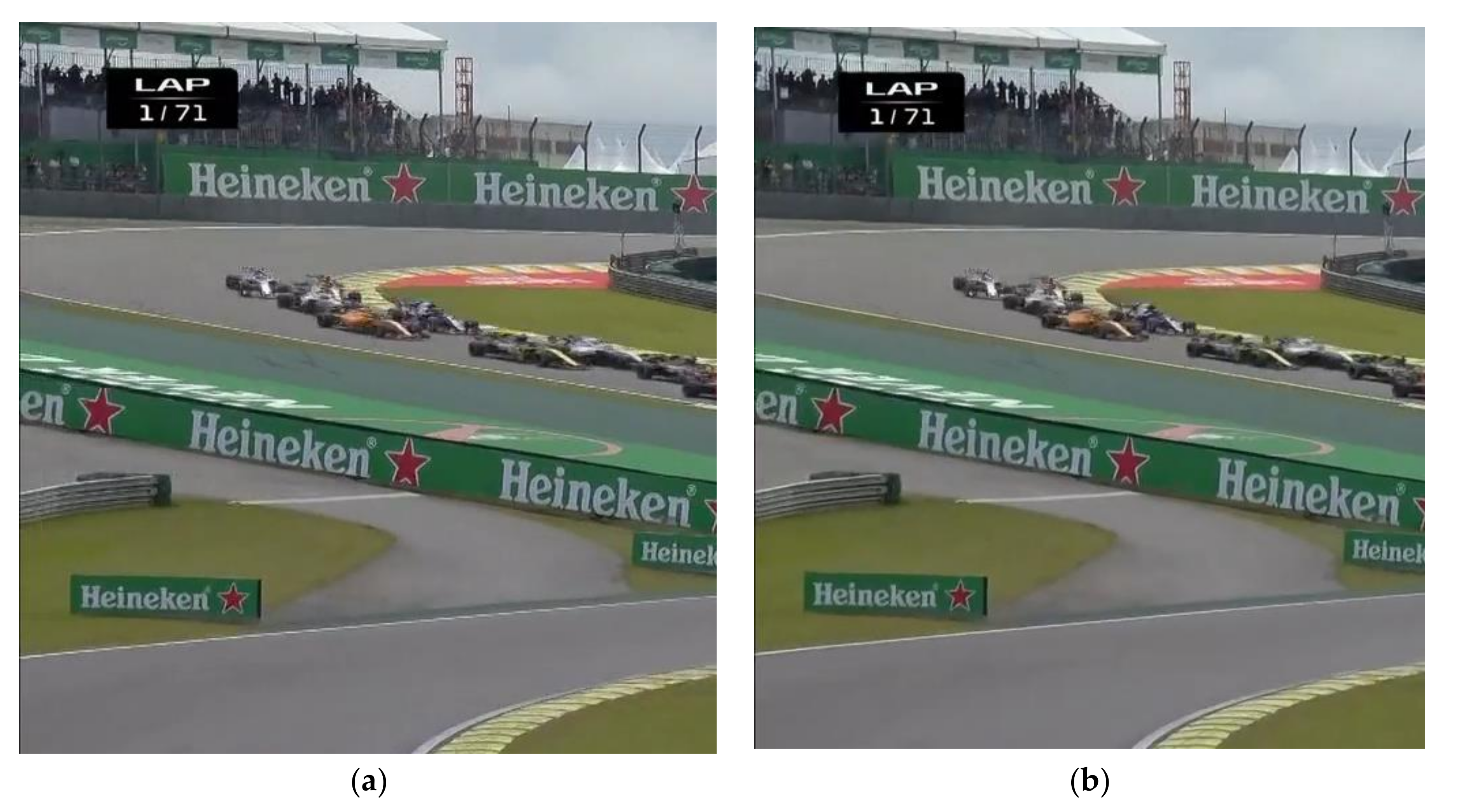

3.2. Signal Processing

3.3. Broadcast-Compliant Encoding

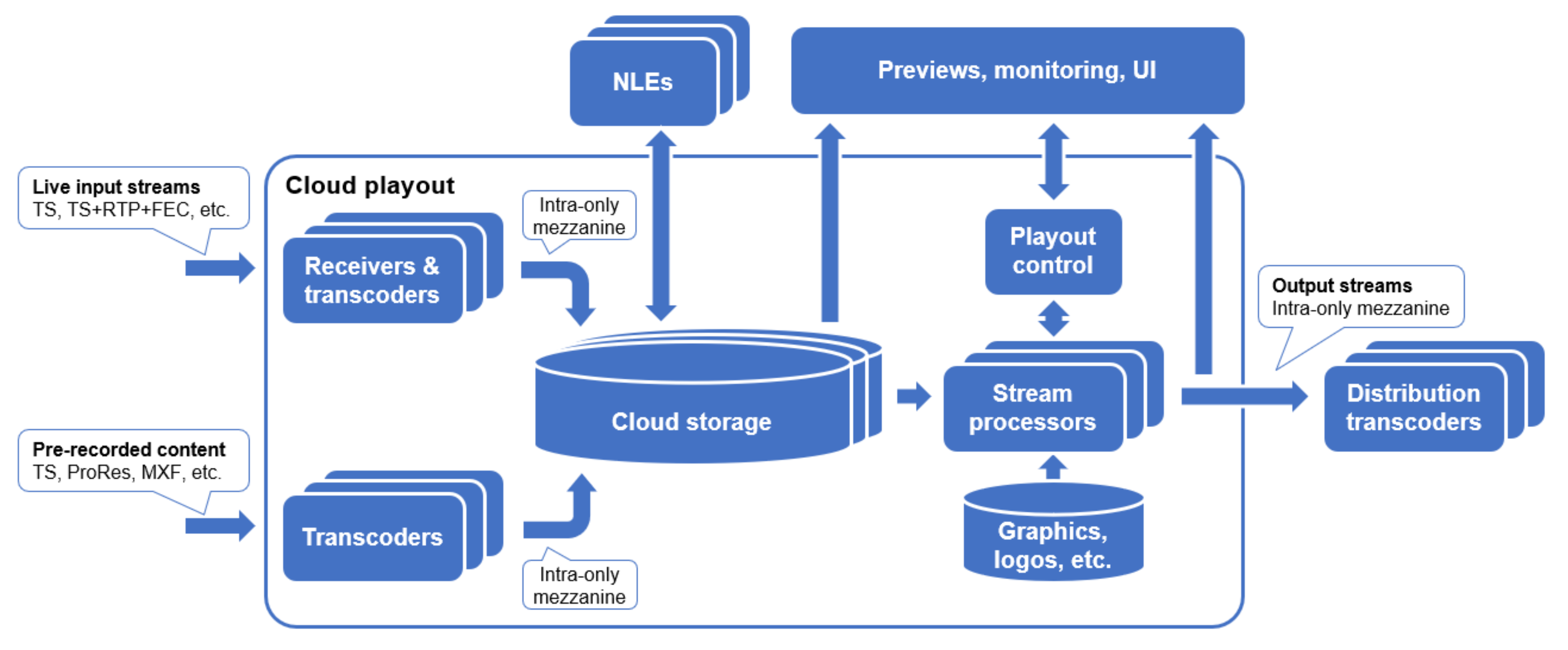

3.4. Cloud Playout

4. Transitioning Broadcast to Cloud

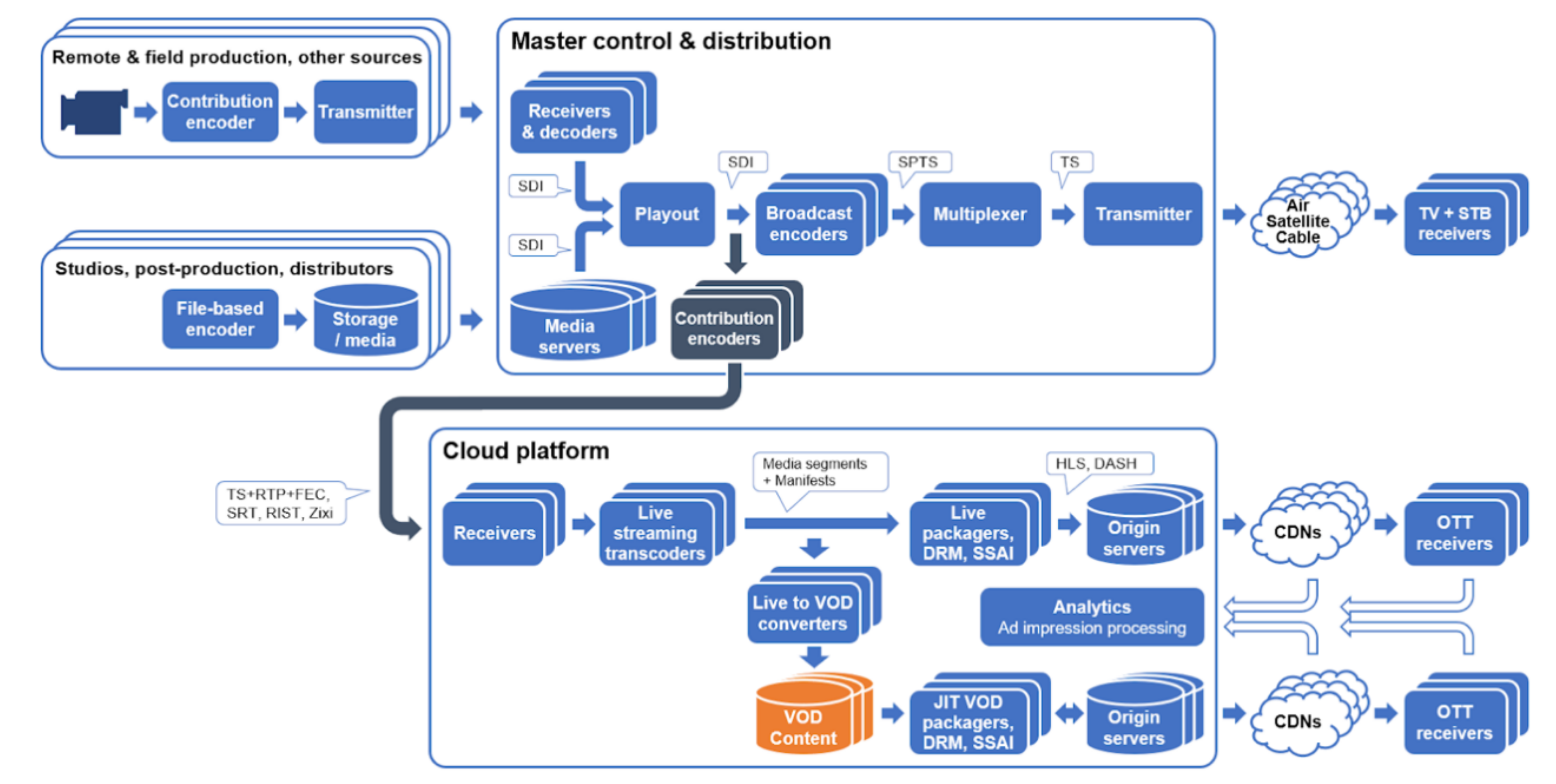

4.1. Cloud-Based OTT Systems

- reliable real-time ingest, e.g., using RTP+FEC, SRT, RIST, or Zixi-type protocols and/or a dedicated link, such as AWS Direct Connect [81];

- improvements in the signal processing stack, achieving artifact-free conversion of broadcast formats to those used in OTT/streaming;

- improvements in metadata handling, including full pass-through of SCTE-35 and compliant implementation of SSAI and CSAI functionality based on it.
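
The "RTP+FEC" option in the list above relies on sending redundant parity packets so that a receiver can repair losses without retransmission. The following is a minimal sketch of that idea using a single XOR parity packet over a group of equal-length packets. It is an illustration only and is not a compliant implementation of any FEC standard; the function names `xor_parity` and `recover` are hypothetical.

```python
from functools import reduce
from typing import List, Optional


def xor_parity(packets: List[bytes]) -> bytes:
    """XOR a group of equal-length packets into one parity packet."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*packets))


def recover(received: List[Optional[bytes]], parity: bytes) -> List[bytes]:
    """Repair at most one lost packet (marked as None) using the parity packet."""
    missing = [i for i, p in enumerate(received) if p is None]
    if len(missing) > 1:
        raise ValueError("a single parity packet can repair only one loss")
    repaired = list(received)
    if missing:
        present = [p for p in received if p is not None]
        # XOR of all surviving packets and the parity yields the lost packet.
        repaired[missing[0]] = xor_parity(present + [parity])
    return repaired


# Usage: three toy packets, the second one is lost in transit.
group = [b"\x01\x02", b"\x10\x20", b"\xff\x00"]
parity = xor_parity(group)
restored = recover([group[0], None, group[2]], parity)
```

One parity packet per group repairs a single loss; protecting against burst losses would need more parity packets arranged over interleaved groups, which this sketch does not attempt.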

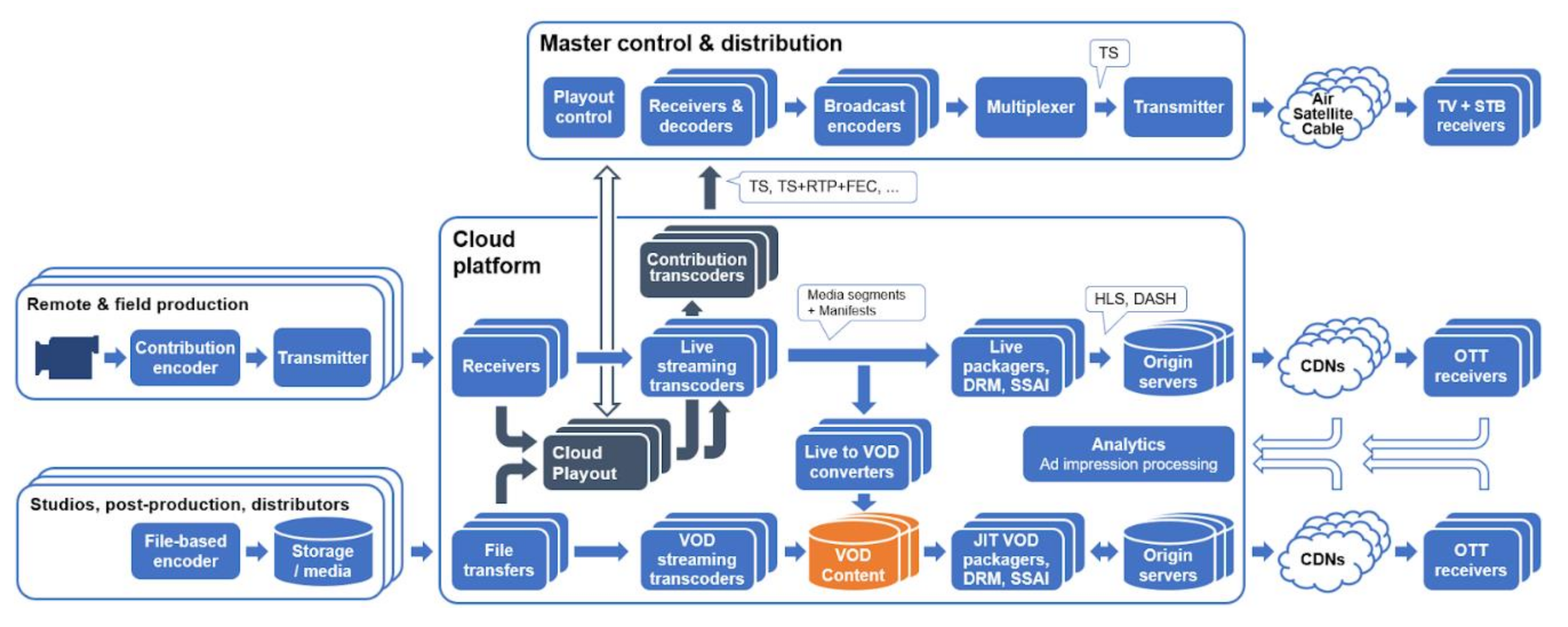

4.2. Cloud-Based Ingest, Playout, and OTT Delivery System

- a cloud-based, broadcast-grade playout system,

- a direct link to the cloud, ensuring low-latency monitoring and real-time response when operating the cloud playout system,

- improvements in cloud-run encoders, specifically those acting as contribution transcoders sending broadcast-compliant streams back to the on-prem system.

4.3. Cloud-Based Broadcast and OTT Delivery System

- broadcast-grade transcoders and multiplexers should be implemented natively in the cloud;

- this includes statmux capability, generation and insertion of all related program and system information, and possibly datacast service capability.

4.4. Comparison of the Proposed Systems

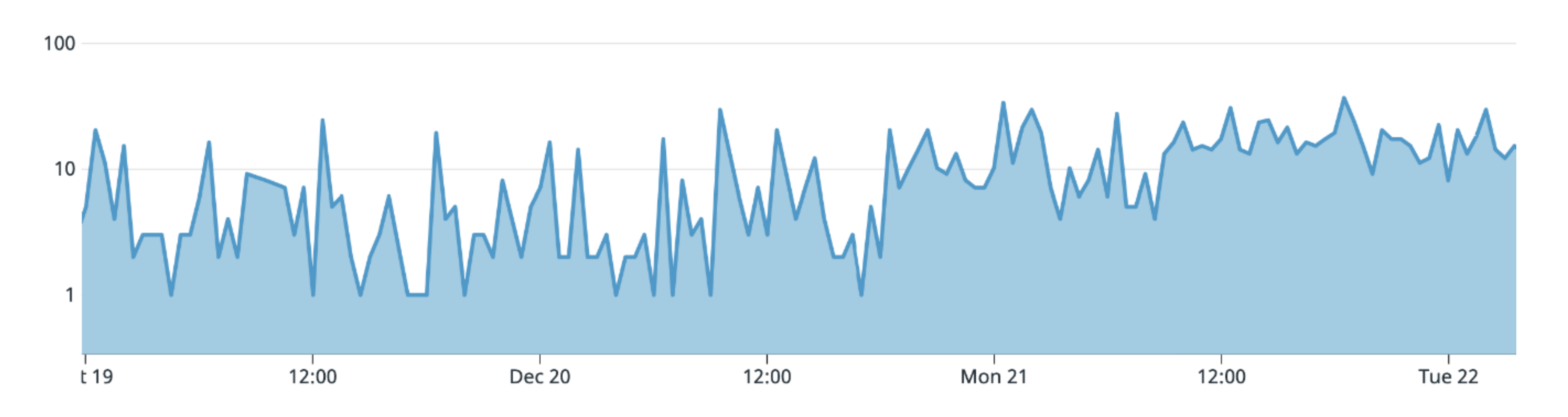

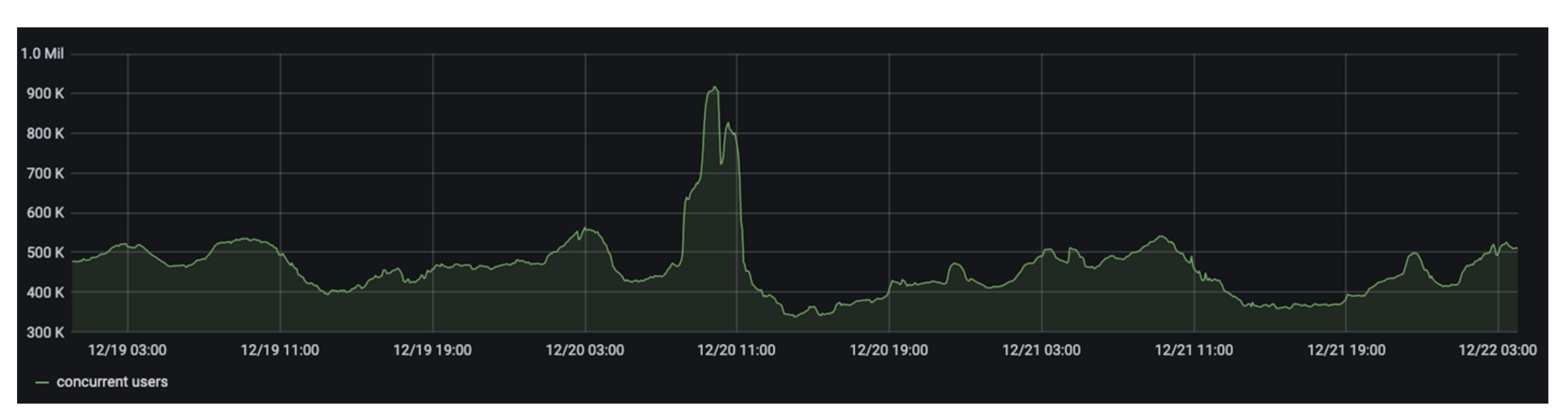

5. Scale and Performance of Cloud-Based Media Delivery Systems

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Pizzi, S.; Jones, G. A Broadcast Engineering Tutorial for Non-Engineers, 4th ed.; National Association Broadcasters (NAB): Chicago, IL, USA, 2014; 354p, ISBN 13:978-0415733380/10:0415733380. [Google Scholar]

- Luther, A.; Inglis, A. Video Engineering, 3rd ed.; McGraw-Hill: New York, NY, USA, 1999; 549p. [Google Scholar]

- Wu, D.; Hou, Y.T.; Zhu, W.; Zhang, Y.; Peha, J.M. Streaming video over the internet: Approaches and directions. IEEE Trans. Circuits Syst. Video Technol. 2001, 11, 282–300. [Google Scholar]

- Conklin, G.J.; Greenbaum, G.S.; Lillevold, K.O.; Lippman, A.F.; Reznik, Y.A. Video coding for streaming media delivery on the internet. IEEE Trans. Circuits Syst. Video Technol. 2001, 11, 269–281. [Google Scholar] [CrossRef]

- Pantos, R.; May, W. HTTP Live Streaming, RFC 8216. IETF. Available online: https://tools.ietf.org/html/rfc8216 (accessed on 1 November 2020).

- ISO/IEC 23009-1:2014. Information Technology—Dynamic Adaptive Streaming Over HTTP (DASH)—Part 1: Media Presentation Description and Segment Formats. ISO/IEC, 2014. Available online: https://www.iso.org/about-us.html (accessed on 1 November 2020).

- Microsoft Smooth Streaming. Available online: https://www.iis.net/downloads/microsoft/smooth-streaming (accessed on 1 November 2020).

- Evens, T. Co-opetition of TV broadcasters in online video markets: A winning strategy? Int. J. Digit. Telev. 2014, 5, 61–74. [Google Scholar] [CrossRef][Green Version]

- Nielsen Holdings Plc, Total Audience Report. 2020. Available online: https://www.nielsen.com/us/en/client-learning/tv/nielsen-total-audience-report-february-2020/ (accessed on 1 November 2020).

- Sandvine. The Global Internet Phenomena Report. 2019. Available online: https://www.sandvine.com/hubfs/Sandvine_Redesign_2019/Downloads/Internet%20Phenomena/Internet%20Phenomena%20Report%20Q32019%2020190910.pdf (accessed on 1 November 2020).

- Frost & Sullivan. Analysis of the Global Online Video Platforms Market. Frost & Sullivan. 2014. Available online: https://store.frost.com/analysis-of-the-global-online-video-platforms-market.html (accessed on 1 November 2020).

- ETSI TS 102 796. Hybrid Broadcast Broadband TV. ETSI, 2016. Available online: https://www.etsi.org/deliver/etsi_ts/102700_102799/102796/01.04.01_60/ts_102796v010401p.pdf (accessed on 1 November 2020).

- ATSC A/331:2020. Signaling, Delivery, Synchronization, and Error Protection. ATSC, 2020. Available online: https://www.atsc.org/atsc-documents/3312017-signaling-delivery-synchronization-error-protection/ (accessed on 1 November 2020).

- Stockhammer, T.; Sodagar, I.; Zia, W.; Deshpande, S.; Oh, S.; Champel, M. Dash in ATSC 3.0: Bridging the gap between OTT and broadcast. In Proceedings of the IET Conference Proceedings, IBC 2016 Conference, Amsterdam, The Netherlands, 8–12 September 2016; Volume 1, p. 24. [Google Scholar]

- AWS Elemental. Video Processing and Delivery Moves to the Cloud, e-book. 2018. Available online: https://www.elemental.com/resources/white-papers/e-book-video-processing-delivery-moves-cloud/ (accessed on 1 November 2020).

- Fautier, T. Cloud Technology Drives Superior Video Encoding. In SMPTE 2019; SMPTE: Los Angeles, CA, USA, 2019; pp. 1–9. [Google Scholar]

- ISO/IEC 13818-1:2019. Information Technology—Generic Coding of Moving Pictures and Associated Audio Information: Systems—Part 1: Systems. ISO/IEC, 2019; Available online: https://www.iso.org/standard/75928.html (accessed on 1 November 2020).

- ATSC A/65B. Program and System Information Protocol for Terrestrial Broadcast and Cable (PSIP). ATSC, 2013. Available online: https://www.atsc.org/wp-content/uploads/2015/03/Program-System-Information-Protocol-for-Terrestrial-Broadcast-and-Cable.pdf (accessed on 1 November 2020).

- ANSI/SCTE 35 2007. Digital Program Insertion Cueing Message for Cable. SCTE, 2007. Available online: https://webstore.ansi.org/standards/scte/ansiscte352007 (accessed on 1 November 2020).

- ANSI/SCTE 67 2010. Recommended Practice for SCTE 35 Digital Program Insertion Cueing Message for Cable. SCTE, 2010. Available online: https://webstore.ansi.org/standards/scte/ansiscte672010 (accessed on 1 November 2020).

- Lechner, B.J.; Chernock, R.; Eyer, M.; Goldberg, A.; Goldman, M. The ATSC transport layer, including Program and System Information (PSIP). Proc. IEEE 2006, 94, 77–101. [Google Scholar] [CrossRef]

- Reznik, Y.; Lillevold, K.; Jagannath, A.; Greer, J.; Corley, J. Optimal design of encoding profiles for ABR streaming. In Proceedings of the Packet Video Workshop, Amsterdam, The Netherlands, 12–15 June 2018. [Google Scholar] [CrossRef]

- Reznik, Y.; Li, X.; Lillevold, K.; Peck, R.; Shutt, T.; Marinov, R. Optimizing Mass-Scale Multi-Screen Video Delivery. In Proceedings of the 2019 NAB Broadcast Engineering and Information Technology Conference, Las Vegas, NV, USA, 6–11 April 2019. [Google Scholar]

- Davidson, G.A.; Isnardi, M.A.; Fielder, L.D.; Goldman, M.S.; Todd, C.C. ATSC Video and Audio Coding. Proc. IEEE 2006, 94, 60–76. [Google Scholar] [CrossRef]

- ATSC A/53 Part 1: 2013. ATSC Digital Television Standard: Part 1—Digital Television System. ATSC, 2013. Available online: https://www.atsc.org/wp-content/uploads/2015/03/A53-Part-1-2013-1.pdf (accessed on 1 November 2020).

- ATSC A/53 Part 4:2009. ATSC Digital Television Standard: Part 4—MPEG-2 Video System Characteristics. ATSC, 2009. Available online: https://www.atsc.org/wp-content/uploads/2015/03/a_53-Part-4-2009-1.pdf (accessed on 1 November 2020).

- ATSC A/54A. Recommended Practice: Guide to the Use of the ATSC Digital Television Standard. ATSC, 2003. Available online: https://www.atsc.org/wp-content/uploads/2015/03/a_54a_with_corr_1.pdf (accessed on 1 November 2020).

- ATSC A/72 Part 1:2015. Video System Characteristics of AVC in the ATSC Digital Television System. ATSC, 2015. Available online: https://www.atsc.org/wp-content/uploads/2015/03/A72-Part-1-2015-1.pdf (accessed on 1 November 2020).

- ATSC A/72 Part 2:2014. AVC Video Transport Subsystem Characteristics. ATSC, 2014. Available online: https://www.atsc.org/wp-content/uploads/2015/03/A72-Part-2-2014-1.pdf (accessed on 1 November 2020).

- ETSI TS 101 154. Digital Video Broadcasting (DVB): Implementation Guidelines for the use of MPEG-2 Systems, Video and Audio in Satellite, Cable and Terrestrial Broadcasting Applications. Doc. ETSI TS 101 154 V1.7.1. Annex, B., Ed.; 2019. Available online: https://standards.iteh.ai/catalog/standards/etsi/af36a167-779e-4239-b5a7-89356c6c2dde/etsi-ts-101-154-v2.6.1-2019-09 (accessed on 1 November 2020).

- ANSI/SCTE 43 2015. Digital Video Systems Characteristics Standard for Cable Television. SCTE, 2015. Available online: https://webstore.ansi.org/standards/scte/ansiscte432015 (accessed on 1 November 2020).

- ANSI/SCTE 128 2010-a. AVC Video Systems and Transport Constraints for Cable Television. SCTE, 2010. Available online: https://webstore.ansi.org/standards/scte/ansiscte1282010 (accessed on 1 November 2020).

- Schulzrinne, H.; Casner, S.; Frederick, R.; Jacobson, V. RTP: A Transport Protocol for Real-Time Applications. RFC 1889. IETF, 1996. Available online: https://tools.ietf.org/html/rfc1889 (accessed on 1 November 2020).

- SMPTE ST 2022-1:2007. Forward Error Correction for Real-Time Video/Audio Transport over IP Networks. ST 2022-1. SMPTE, 2007. Available online: https://ieeexplore.ieee.org/document/7291470/versions#versions (accessed on 1 November 2020).

- SMPTE ST 2022-2:2007. Unidirectional Transport of Constant Bit Rate MPEG-2 Transport Streams on IP Networks. ST 2022-2; SMPTE, 2007. Available online: https://ieeexplore.ieee.org/document/7291740 (accessed on 1 November 2020).

- Zixi, LLC. Streaming Video over the Internet and Zixi. 2015. Available online: http://www.zixi.com/PDFs/Adaptive-Bit-Rate-Streaming-and-Final.aspx (accessed on 1 November 2020).

- OC-SP-MEZZANINE-C01-161026. Mezzanine Encoding Specification. Cable Television Laboratories, Inc., 2016. Available online: https://community.cablelabs.com/wiki/plugins/servlet/cablelabs/alfresco/download?id=1d76e930-6d98-4de3-89ee-9d0fb4b5292a (accessed on 1 November 2020).

- Adobe Systems, Real-Time Messaging Protocol (RTMP) Specification. Version 1.0. 2012. Available online: https://www.adobe.com/devnet/rtmp.html (accessed on 1 November 2020).

- Haivision. Secure Reliable Transport (SRT). 2019. Available online: https://github.com/Haivision/srt (accessed on 1 November 2020).

- VSF TR-06-1. Reliable Internet Stream Transport (RIST) Protocol Specification—Simple Profile. Video Services Forum. 2018. Available online: http://vsf.tv/download/technical_recommendations/VSF_TR-06-1_2018_10_17.pdf (accessed on 1 November 2020).

- OC-SP-CEP3.0-I05-151104. Content Encoding Profiles 3.0 Specification. Cable Television Laboratories, Inc., 2015. Available online: https://community.cablelabs.com/wiki/plugins/servlet/cablelabs/alfresco/download?id=c7eb769e-1020-402c-b2f2-d839ee532945 (accessed on 1 November 2020).

- Ozer, J. Encoding for Multiple Devices. Streaming Media Magazine. 2013. Available online: http://www.streamingmedia.com/Articles/ReadArticle.aspx?ArticleID=88179&fb_comment_id=220580544752826_937649 (accessed on 1 November 2020).

- Ozer, J. Encoding for Multiple-Screen Delivery. Streaming Media East. 2013. Available online: https://www.streamingmediablog.com/wp-content/uploads/2013/07/2013SMEast-Workshop-Encoding.pdf (accessed on 1 November 2020).

- Apple. HLS Authoring Specification for Apple Devices. 2019. Available online: https://developer.apple.com/documentation/http_live_streaming/hls_authoring_specification_for_apple_devices (accessed on 1 November 2020).

- DASH-IF. DASH-IF Interoperability Points, v4.3. 2018. Available online: https://dashif.org/docs/DASH-IF-IOP-v4.3.pdf (accessed on 1 November 2020).

- ETSI TS 103 285 v1.2.1. Digital Video Broadcasting (DVB); MPEG-DASH Profile for Transport of ISO BMFF Based DVB Services Over IP Based Networks. 2020. Available online: https://www.etsi.org/deliver/etsi_ts/103200_103299/103285/01.03.01_60/ts_103285v010301p.pdf (accessed on 1 November 2020).

- SMPTE RP 145. Color Monitor Colorimetry. SMPTE, 1987. Available online: https://standards.globalspec.com/std/1284848/smpte-rp-145 (accessed on 1 November 2020).

- SMPTE 170M. Composite Analog Video Signal—NTSC for Studio Applications. SMPTE, 1994. Available online: https://standards.globalspec.com/std/892300/SMPTE%20ST%20170M (accessed on 1 November 2020).

- EBU Tech. 3213-E. E.B.U. Standard for Chromaticity Tolerances for Studio Monitors. EBU, 1975. Available online: https://tech.ebu.ch/docs/tech/tech3213.pdf (accessed on 1 November 2020).

- ITU-R Recommendation BT.601. Studio Encoding Parameters of Digital Television For standard 4:3 and Wide Screen 16:9 Aspect Ratios. ITU-R, 2011. Available online: https://www.itu.int/dms_pubrec/itu-r/rec/bt/R-REC-BT.601-7-201103-I!!PDF-E.pdf (accessed on 1 November 2020).

- ITU-R Recommendation BT.709. Parameter Values for the HDTV Standards for Production and International Programme Exchange. ITU-R, 2015. Available online: https://www.itu.int/dms_pubrec/itu-r/rec/bt/R-REC-BT.709-6-201506-I!!PDF-E.pdf (accessed on 1 November 2020).

- IEC 61966-2-1:1999. Multimedia Systems and Equipment—Colour Measurement and Management—Part 2-1: Colour Management—Default RGB Colour Space—sRGB. IEC, 1999. Available online: https://webstore.iec.ch/publication/6169 (accessed on 1 November 2020).

- Brydon, N. Saving Bits—The Impact of MCTF Enhanced Noise Reduction. SMPTE J. 2002, 111, 23–28. [Google Scholar] [CrossRef]

- ISO/IEC 13818-2:2013. Information Technology—Generic Coding of Moving Pictures and Associated Audio Information—Part 2: Video. ISO/IEC, 2013. Available online: https://www.iso.org/standard/61152.html (accessed on 1 November 2020).

- ISO/IEC 14496-10:2003. Information Technology—Coding of Audio-Visual Objects—Part 10: Advanced Video Coding. ISO/IEC, 2003. Available online: https://www.iso.org/standard/37729.html (accessed on 1 November 2020).

- ISO/IEC 23008-2:2013. Information Technology –High Efficiency Coding and Media Delivery in Heterogeneous Environments—Part 2: High Efficiency Video Coding. ISO/IEC, 2013. Available online: https://www.iso.org/standard/35424.html (accessed on 1 November 2020).

- AOM AV1. AV1 Bitstream & Decoding Process Specification, v1.0.0. Alliance for Open Media. 2019. Available online: https://aomediacodec.github.io/av1-spec/av1-spec.pdf (accessed on 1 November 2020).

- CTA 5001. Web Application Video Ecosystem—Content Specification. CTA WAVE, 2018. Available online: https://cdn.cta.tech/cta/media/media/resources/standards/pdfs/cta-5001-final_v2_pdf.pdf (accessed on 1 November 2020).

- Perkins, M.; Arnstein, D. Statistical multiplexing of multiple MPEG-2 video programs in a single channel. SMPTE J. 1995, 4, 596–599. [Google Scholar] [CrossRef]

- Boroczky, L.; Ngai, A.Y.; Westermann, E.F. Statistical multiplexing using MPEG-2 video encoders. IBM J. Res. Dev. 1999, 43, 511–520. [Google Scholar] [CrossRef]

- CEA-608-B. Line 21 data services. Consumer Electronics Association. 2008. Available online: https://webstore.ansi.org/standards/cea/cea6082008r2014ansi (accessed on 1 November 2020).

- CEA-708-B. Digital television (DTV) closed captioning. Consumer Electronics Association. 2008. Available online: https://www.scribd.com/document/70239447/CEA-708-B (accessed on 1 November 2020).

- CEA CEB16. Active Format Description (AFD) & Bar Data Recommended Practice. CEA, 2006. Available online: https://webstore.ansi.org/standards/cea/ceaceb162012 (accessed on 1 November 2020).

- SMPTE 2016-1. Standard for Television—Format for Active Format Description and Bar Data. SMPTE, 2007. Available online: https://www.techstreet.com/standards/smpte-2016-1-2009?product_id=1664006 (accessed on 1 November 2020).

- OC-SP-EP-I01-130118. Encoder Boundary Point Specification. Cable Television Laboratories, Inc., 2013. Available online: https://community.cablelabs.com/wiki/plugins/servlet/cablelabs/alfresco/download?id=2a4f4cc6-3763-40b9-9ace-7de923559187 (accessed on 1 November 2020).

- FairPlay Streaming. Available online: https://developer.apple.com/streaming/fps/ (accessed on 1 November 2020).

- PlayReady. Available online: https://www.microsoft.com/playready/overview/ (accessed on 1 November 2020).

- Widevine. Available online: https://www.widevine.com/solutions/widevine-drm (accessed on 1 November 2020).

- ISO/IEC 14496-12:2015. Information Technology—Coding of Audio-Visual Objects—Part 12: ISO Base Media File Format. 2015. Available online: https://www.iso.org/standard/68960.html (accessed on 1 November 2020).

- ISO/IEC 23000-19:2018. Information Technology—Coding of Audio-Visual Objects—Part 19: Common Media Application Format (CMAF) for Segmented Media. 2018. Available online: https://www.iso.org/standard/71975.html (accessed on 1 November 2020).

- ID3 Tagging System. Available online: http://www.id3.org/id3v2.3.0 (accessed on 1 November 2020).

- W3C WebVTT. The Web Video Text Tracks. W3C, 2018. Available online: http://dev.w3.org/html5/webvtt/ (accessed on 1 November 2020).

- W3C TTML1. Timed Text Markup Language 1. W3C, 2019. Available online: https://www.w3.org/TR/2018/REC-ttml1-20181108/ (accessed on 1 November 2020).

- W3C IMSC1. TTML Profiles for Internet Media Subtitles and Captions 1.0. W3C, 2015. Available online: https://dvcs.w3.org/hg/ttml/raw-file/tip/ttml-ww-pro-33files/ttml-ww-profiles.html (accessed on 1 November 2020).

- Apple. Incorporating Ads into A Playlist. Available online: https://developer.apple.com/documentation/http_live_streaming/example_playlists_for_http_live_streaming/incorporating_ads_into_a_playlist (accessed on 1 November 2020).

- ANSI/SCTE 30 2017. Digital Program Insertion Splicing API. SCTE, 2017. Available online: https://webstore.ansi.org/standards/scte/ansiscte302017 (accessed on 1 November 2020).

- ANSI/SCTE 172 2011. Constraints on AVC Video Coding for Digital Program Insertion. SCTE, 2011. Available online: https://webstore.ansi.org/preview-pages/SCTE/preview_SCTE+172+2011.pdf (accessed on 1 November 2020).

- SMPTE 259:2008. SMPTE Standard—For Television—SDTV—Digital Signal/Data—Serial Digital Interface. SMPTE, 2008. Available online: https://ieeexplore.ieee.org/document/7292109 (accessed on 1 November 2020).

- SMPTE 292-1:2018. SMPTE Standard—1.5 Gb/s Signal/Data Serial Interface. SMPTE, 2018. Available online: https://ieeexplore.ieee.org/document/8353270 (accessed on 1 November 2020).

- SMPTE 2110-20:2017. SMPTE Standard—Professional Media over Managed IP Networks: Uncompressed Active Video. SMPTE, 2017. Available online: https://ieeexplore.ieee.org/document/8167389 (accessed on 1 November 2020).

- AWS Direct Connect. Available online: https://aws.amazon.com/directconnect/ (accessed on 1 November 2020).

- Azure ExpressRoute. Available online: https://azure.microsoft.com/en-us/services/expressroute/ (accessed on 1 November 2020).

- FFMPEG Filter Documentation. Available online: https://ffmpeg.org/ffmpeg-filters.html (accessed on 1 November 2020).

- Vanam, R.; Reznik, Y. Temporal Sampling Conversion using Bi-directional Optical Flows with Dual Regularization. In Proceedings of the IEEE International Conference on Image Processing (ICIP), Abu Dhabi, UAE, 25–28 October 2020. [Google Scholar]

- Teranex Standards Converters. Available online: https://www.blackmagicdesign.com/products/teranex (accessed on 1 November 2020).

- Grass Valley KudosPro Converters. Available online: https://www.grassvalley.com/products/kudospro/ (accessed on 1 November 2020).

- Apple. Protocol Extension for Low-Latency HLS (Preliminary Specification). Apple Inc., 2020. Available online: https://developer.apple.com/documentation/http_live_streaming/protocol_extension_for_low-latency_hls_preliminary_specification (accessed on 1 November 2020).

- DASH-IF. DASH-IF ATSC3.0 IOP. 2012. Available online: https://dashif.org/docs/DASH-IF-IOP-for-ATSC3-0-v1.1.pdf (accessed on 1 November 2020).

- Law, W. Ultra Low Latency with CMAF. Available online: https://www.akamai.com/us/en/multimedia/documents/white-paper/low-latency-streaming-cmaf-whitepaper.pdf (accessed on 1 November 2020).

- Brightcove VideoCloud System. Available online: https://www.brightcove.com/en/online-video-platform (accessed on 1 November 2020).

| Format Characteristic | Broadcast Systems | Online Platforms/Streaming |
|---|---|---|
| Temporal sampling | SD: interlaced (bff), telecine; HD: progressive, interlaced (tff), telecine | progressive only |
| Frame rates [Hz] | 24,000/1001, 24, 25, 30,000/1001, 30, 50, 60,000/1001, 60 | same as source, typically capped at 30 Hz or 60 Hz |
| Display Aspect Ratio (DAR) | SD: 4:3, 16:9; HD: 16:9 | same as indicated by the source |
| Sample Aspect Ratio (SAR) | SD: 1:1, 12:11, 10:11, 16:11, 40:33, 24:11, 32:11, 80:33, 18:11, 15:11, 64:33, 160:99; HD: 1:1 (most common), 4:3 (1440 mode) | 1:1, or the nearest rounding to 1:1, is usually preferred |
| Resolutions | SD (NTSC-derived): 480i; SD (PAL, SECAM-derived): 576i; HD: 720p, 1080i | same as source + down-scaled versions; additional restrictions may apply [45,46] |
| Chroma sampling | 4:2:0, 4:2:2 | 4:2:0 only |
| Primary colors | SD (NTSC-derived): SMPTE-C [47,48]; SD (PAL, SECAM-derived): EBU [49,50]; HD: ITU-R BT.709 [51] | ITU-R BT.709/sRGB [51,52] |
| Color matrix | SD: ITU-R BT.601 [50]; HD: ITU-R BT.709 [51] | ITU-R BT.709 |
| Transfer characteristic | SD (NTSC-derived): power law 2.2; SD (PAL, SECAM-derived): power law 2.8; HD: ITU-R BT.709 [51] | ITU-R BT.709 |
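
The frame-rate row above notes that streaming outputs are typically capped at 30 Hz or 60 Hz. A minimal sketch of one way to apply such a cap is shown below, keeping the NTSC-derived rates as exact rationals. The halving policy and the name `streaming_rate` are assumptions made for illustration and are not taken from the article.

```python
from fractions import Fraction

# Broadcast frame rates from the table, stored as exact rationals so that
# 30,000/1001 is not rounded to 29.97.
BROADCAST_RATES = [
    Fraction(24000, 1001), Fraction(24), Fraction(25),
    Fraction(30000, 1001), Fraction(30), Fraction(50),
    Fraction(60000, 1001), Fraction(60),
]


def streaming_rate(source: Fraction, cap_hz: int = 30) -> Fraction:
    """Halve the source rate until it does not exceed the cap (assumed policy)."""
    rate = source
    while rate > cap_hz:
        rate /= 2
    return rate


# Usage: map every broadcast rate to a streaming rate capped at 30 Hz.
capped = {str(r): str(streaming_rate(r)) for r in BROADCAST_RATES}
```

Halving keeps every output frame aligned with a source frame, which avoids the frame-rate conversion artifacts that arbitrary resampling can introduce.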

| Stream Characteristic | Broadcast Systems | Online Platforms/Web Streaming |
|---|---|---|
| Number of outputs | single | multiple (usually 3–10), as needed to support ABR delivery [4,42,43] |
| Preprocessing | denoising, MCTF-type filters [24,53] | rarely used |
| Video codecs | MPEG-2 [54], MPEG-4/AVC [55] | MPEG-4/AVC (most deployments); HEVC [56], AV1 [57] (special cases) |
| Codec profiles, levels | fixed for each format; applicable standards: ATSC A/53 P4 [26], ATSC A/72 P1 [29], ETSI TS 101 154 [30], SCTE 128 [33] | based on target set of devices [42]; guidelines: Apple HLS [44], ETSI TS 103 285 [46], CTA 5001 [58] |
| GOP length | 0.5 s | 2–10 s |
| GOP type | open, closed | closed |
| Error resiliency features | mandatory slicing of I/IDR pictures | N/A |
| Encoding modes | CBR, VBR (with statmux); many additional constraints apply, see ATSC A/53 P4 [26], ATSC A/54A [27], ATSC A/72 P1 [29], ETSI TS 101 154 [30], SCTE 43 [31], SCTE 128 [33] | capped VBR; max. bitrate is typically capped at 1.1x–1.5x the target bitrate; HRD buffer size is typically limited by codec profile and level constraints |
| VUI/HRD parameters | required | optional, usually omitted |
| VUI/colorimetry data | required | optional, usually included |
| VUI/aspect ratio | required | optional, usually included |
| Picture timing SEI | required in some cases (e.g., in film mode) | optional, usually omitted |
| Buffering period SEI | optional | optional, usually omitted |
| AFD/bar data/T.35 | required and carried in video ES | not used |
| Closed captions | required and carried in video ES | optional, may be carried out-of-band |
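
Two rows of the table above reduce to simple arithmetic: the GOP length in seconds translates into a number of frames at a given frame rate, and the capped-VBR ceiling is the target bitrate scaled by a factor between 1.1 and 1.5. The sketch below works through both. The helper names and the kbps unit are assumptions for illustration; actual encoders expose these settings under their own parameter names.

```python
from fractions import Fraction


def gop_frames(frame_rate: Fraction, gop_seconds: Fraction) -> int:
    """Number of frames in a GOP of the given duration, rounded to a whole frame."""
    return max(1, round(frame_rate * gop_seconds))


def capped_vbr_max_kbps(target_kbps: int, cap_factor: float = 1.5) -> int:
    """Maximum bitrate for capped VBR, using the 1.1x-1.5x range from the table."""
    if not 1.1 <= cap_factor <= 1.5:
        raise ValueError("cap_factor is expected to lie between 1.1 and 1.5")
    return round(target_kbps * cap_factor)


ntsc_30 = Fraction(30000, 1001)

# Broadcast: 0.5 s GOP at 30,000/1001 Hz.
broadcast_gop = gop_frames(ntsc_30, Fraction(1, 2))   # 15 frames

# Streaming: 2 s GOP at the same frame rate, 4000 kbps target capped at 1.5x.
streaming_gop = gop_frames(ntsc_30, Fraction(2))      # 60 frames
streaming_cap = capped_vbr_max_kbps(4000, 1.5)        # 6000 kbps
```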

| Device Category | Players/Platforms | HLS | DASH | HLS + FairPlay | HLS + Widevine | DASH + Widevine | DASH + PlayReady | MSS + PlayReady |
|---|---|---|---|---|---|---|---|---|
| PCs/Browsers | Chrome | ✓ | ✓ | 🗴 | ✓ | ✓ | 🗴 | 🗴 |
| PCs/Browsers | Firefox | ✓ | ✓ | 🗴 | ✓ | ✓ | 🗴 | 🗴 |
| PCs/Browsers | IE, Edge | ✓ | ✓ | 🗴 | 🗴 | 🗴 | ✓ | ✓ |
| PCs/Browsers | Safari | ✓ | 🗴 | ✓ | 🗴 | 🗴 | 🗴 | 🗴 |
| Mobiles | Android | ✓ | ✓ | 🗴 | 🗴 | ✓ | ✓ | ✓ |
| Mobiles | iOS | ✓ | 🗴 | ✓ | 🗴 | 🗴 | 🗴 | 🗴 |
| Set-top boxes | Chromecast | ✓ | ✓ | 🗴 | 🗴 | ✓ | ✓ | ✓ |
| Set-top boxes | Android TV | ✓ | ✓ | 🗴 | 🗴 | ✓ | ✓ | ✓ |
| Set-top boxes | Roku | ✓ | ✓ | 🗴 | 🗴 | ✓ | ✓ | ✓ |
| Set-top boxes | Apple TV | ✓ | 🗴 | ✓ | 🗴 | 🗴 | 🗴 | 🗴 |
| Set-top boxes | Amazon Fire TV | ✓ | ✓ | 🗴 | 🗴 | ✓ | ✓ | ✓ |
| Smart TVs | Samsung/Tizen | ✓ | ✓ | 🗴 | 🗴 | ✓ | ✓ | ✓ |
| Smart TVs | LG/webOS | ✓ | ✓ | 🗴 | ✓ | 🗴 | 🗴 | 🗴 |
| Smart TVs | SmartTV Alliance | ✓ | ✓ | 🗴 | 🗴 | 🗴 | ✓ | ✓ |
| Smart TVs | Android TV | ✓ | ✓ | 🗴 | 🗴 | ✓ | ✓ | ✓ |
| Game Consoles | Xbox One/360 | ✓ | 🗴 | 🗴 | 🗴 | 🗴 | 🗴 | ✓ |
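
The support matrix above implies that no single protected format reaches every platform, so a packager has to emit several. As a rough sketch, the greedy selection below picks a small set of protected formats that covers a subset of the platforms in the table. The `SUPPORT` dictionary transcribes only six rows, and the greedy strategy and the name `pick_formats` are assumptions for illustration rather than a method described in the article.

```python
from typing import Dict, List, Set

# Protected formats marked as supported in the table, for a subset of platforms.
SUPPORT: Dict[str, Set[str]] = {
    "Chrome": {"HLS + Widevine", "DASH + Widevine"},
    "Firefox": {"HLS + Widevine", "DASH + Widevine"},
    "IE, Edge": {"DASH + PlayReady", "MSS + PlayReady"},
    "Safari": {"HLS + FairPlay"},
    "Android": {"DASH + Widevine", "DASH + PlayReady", "MSS + PlayReady"},
    "iOS": {"HLS + FairPlay"},
}


def pick_formats(support: Dict[str, Set[str]]) -> List[str]:
    """Greedily choose formats until every platform has at least one it can play."""
    formats = sorted(set().union(*support.values()))
    remaining = set(support)
    chosen: List[str] = []
    while remaining:
        # Pick the format that serves the most still-uncovered platforms;
        # ties resolve to the alphabetically first format.
        best = max(formats, key=lambda f: sum(f in support[p] for p in remaining))
        covered = {p for p in remaining if best in support[p]}
        if not covered:
            raise ValueError("some platforms support none of the formats")
        chosen.append(best)
        remaining -= covered
    return chosen


selected = pick_formats(SUPPORT)
```

For these six platforms the selection is DASH + Widevine, HLS + FairPlay, and DASH + PlayReady, in that order. A greedy cover is not guaranteed to be minimal in general, but it is easy to audit against the table.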

| Characteristic/Components | On-Prem Solution | Architecture 1 (Section 4.1) | Architecture 2 (Section 4.2) | Architecture 3 (Section 4.3) |
|---|---|---|---|---|
| Ingest from remote and field production | On prem | On prem | Cloud | Cloud |
| Playout | On prem | On prem | Cloud | Cloud |
| Broadcast distribution transcoding | On prem | On prem | On prem | Cloud |
| Broadcast multiplexers | On prem | On prem | On prem | Cloud |
| QAMs (Quadrature Amplitude Modulation) and links to distribution | On prem | On prem | On prem | On prem |
| OTT transcoding | On prem | Cloud | Cloud | Cloud |
| OTT packaging, DRM, and SSAI | On prem | Cloud | Cloud | Cloud |
| OTT origin servers | On prem | Cloud | Cloud | Cloud |
| Fault-tolerance and redundancy support | On prem | On prem for broadcast chain, cloud for OTT | Cloud | Cloud |
| % of physical equipment required | 100% | 50–75% | 25–50% | 10–20% |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Reznik, Y.; Cenzano, J.; Zhang, B. Transitioning Broadcast to Cloud. Appl. Sci. 2021, 11, 503. https://doi.org/10.3390/app11020503