Body-Part-Aware and Multitask-Aware Single-Image-Based Action Recognition

Abstract

1. Introduction

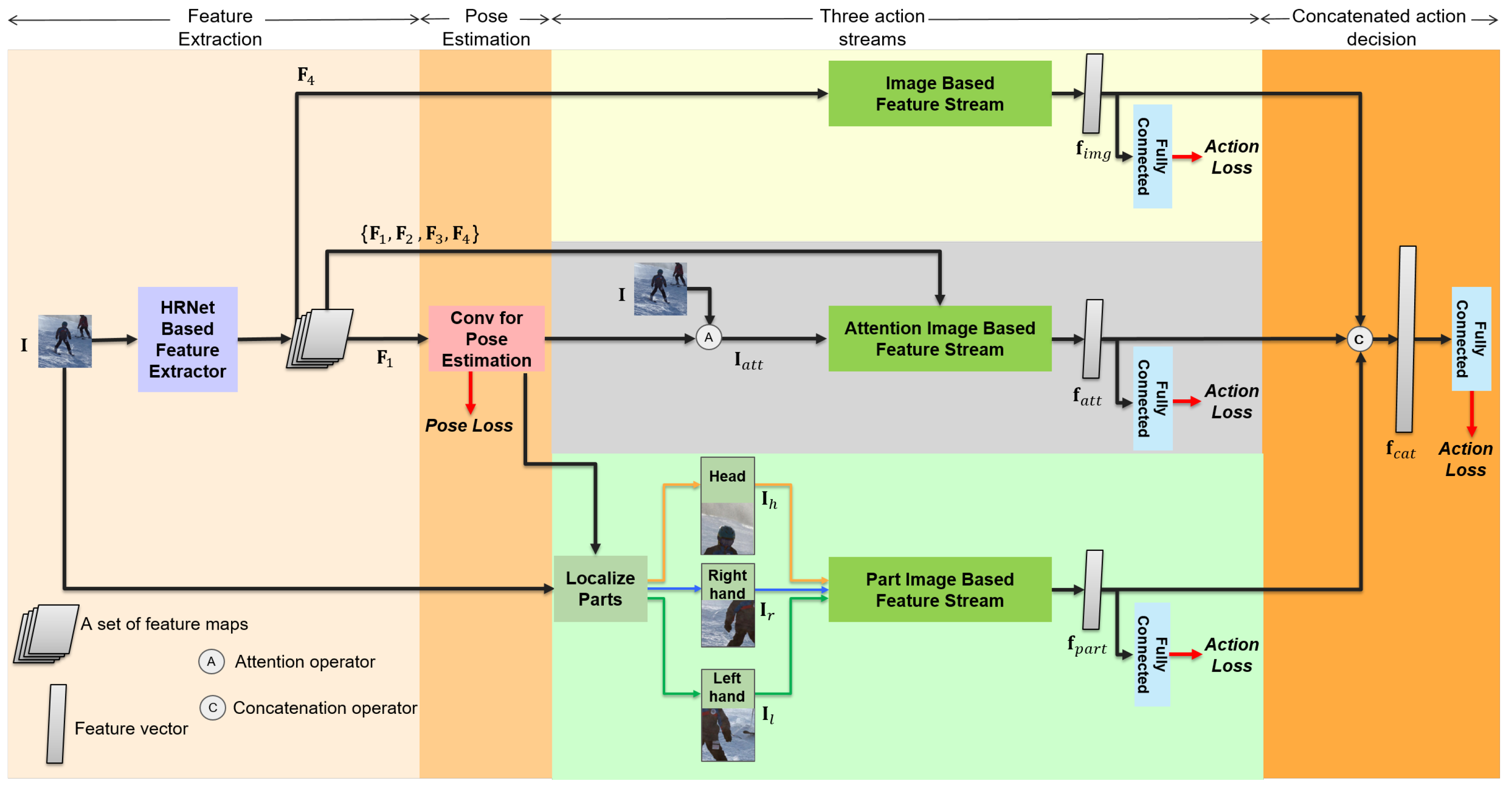

- Human action is an organic combination of the human pose, area of interaction, and interacting objects. In this context, we propose a deep network to efficiently fuse all the human-centric information based on multitask learning.

- The proposed deep network is trained with a multitask-aware loss function. As a result, the proposed method can efficiently handle various datasets, even when they do not include both human pose annotations and action labels.

- In our experiments, the proposed method achieved 91.91% mean average precision (mAP) on the Stanford 40 Actions Dataset [15], while having very little effect on the pose estimation task in our multitask learning framework. Moreover, we demonstrated that the proposed method can be flexibly applied to multi-label action recognition problems on the V-COCO Dataset [16].
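
The idea behind a loss that tolerates missing annotations can be illustrated with a minimal sketch. It is not the paper's loss function: the sample layout, the function names, the use of mean squared error on heatmaps, plain cross-entropy for actions, and the two task weights are all assumptions made for illustration. The only point it shows is that each task term is averaged over the samples that actually carry that annotation.

```python
import math

def softmax_cross_entropy(logits, label):
    """Cross-entropy of one sample from raw class scores and an integer label."""
    m = max(logits)  # subtract the max for numerical stability
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[label]

def heatmap_mse(pred, target):
    """Mean squared error between two flattened joint heatmaps."""
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)

def multitask_aware_loss(batch, pose_weight=1.0, action_weight=1.0):
    """Sum of task losses, each averaged only over samples annotated for it.

    Hypothetical sample layout: a dict whose "pose_target" or "action_label"
    is None when the source dataset does not provide that annotation.
    """
    pose_losses, action_losses = [], []
    for sample in batch:
        if sample["pose_target"] is not None:
            pose_losses.append(
                heatmap_mse(sample["pose_pred"], sample["pose_target"]))
        if sample["action_label"] is not None:
            action_losses.append(
                softmax_cross_entropy(sample["action_logits"],
                                      sample["action_label"]))
    # A task with no annotated sample in the batch contributes nothing.
    pose_term = sum(pose_losses) / len(pose_losses) if pose_losses else 0.0
    action_term = sum(action_losses) / len(action_losses) if action_losses else 0.0
    return pose_weight * pose_term + action_weight * action_term

# One pose-only sample and one action-only sample in the same batch.
batch = [
    {"pose_pred": [0.0, 1.0], "pose_target": [0.0, 0.0],
     "action_logits": [0.0, 0.0], "action_label": None},
    {"pose_pred": [0.5, 0.5], "pose_target": None,
     "action_logits": [0.0, 0.0], "action_label": 1},
]
loss = multitask_aware_loss(batch)  # 0.5 (pose MSE) + ln 2 (action cross-entropy)
```

Because a missing annotation simply drops out of the corresponding average, a pose-only dataset and an action-only dataset can be mixed in one batch without inventing placeholder labels.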

2. Related Works

2.1. Human Pose Estimation

2.2. Single-Image-Based Action Recognition

2.3. Fine-Grained Classification Problems

2.4. Multitask Learning

3. Proposed Method

3.1. HRNet: Multitasking Feature Representation

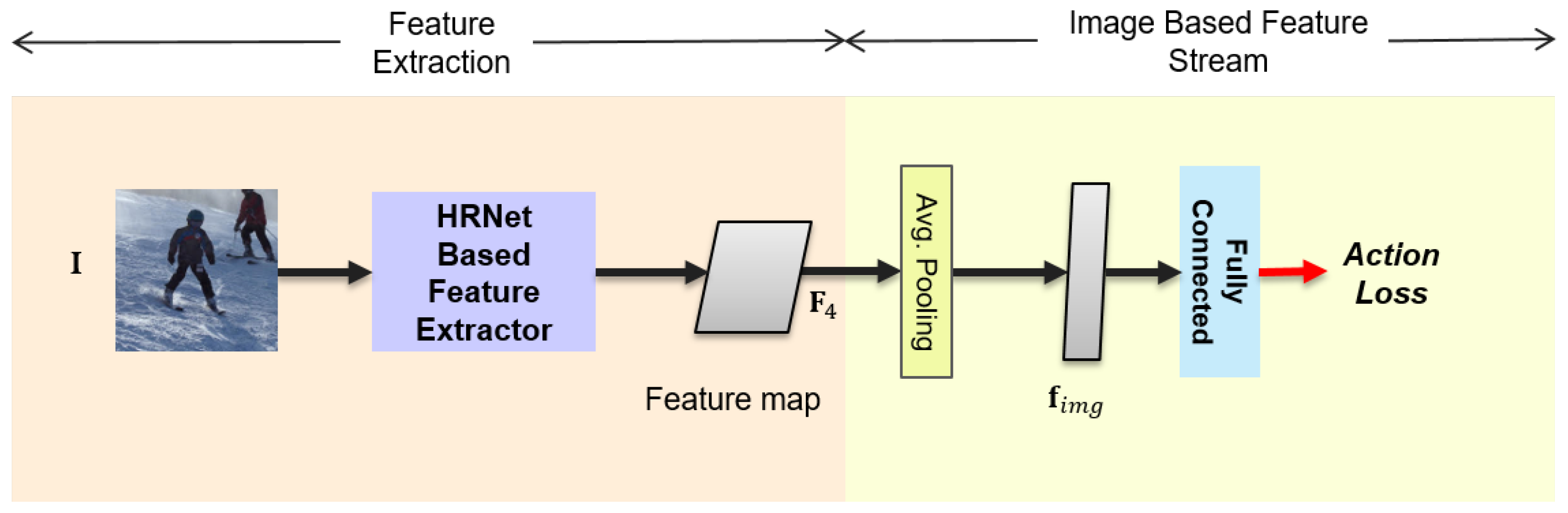

3.2. Image-Based Feature Stream

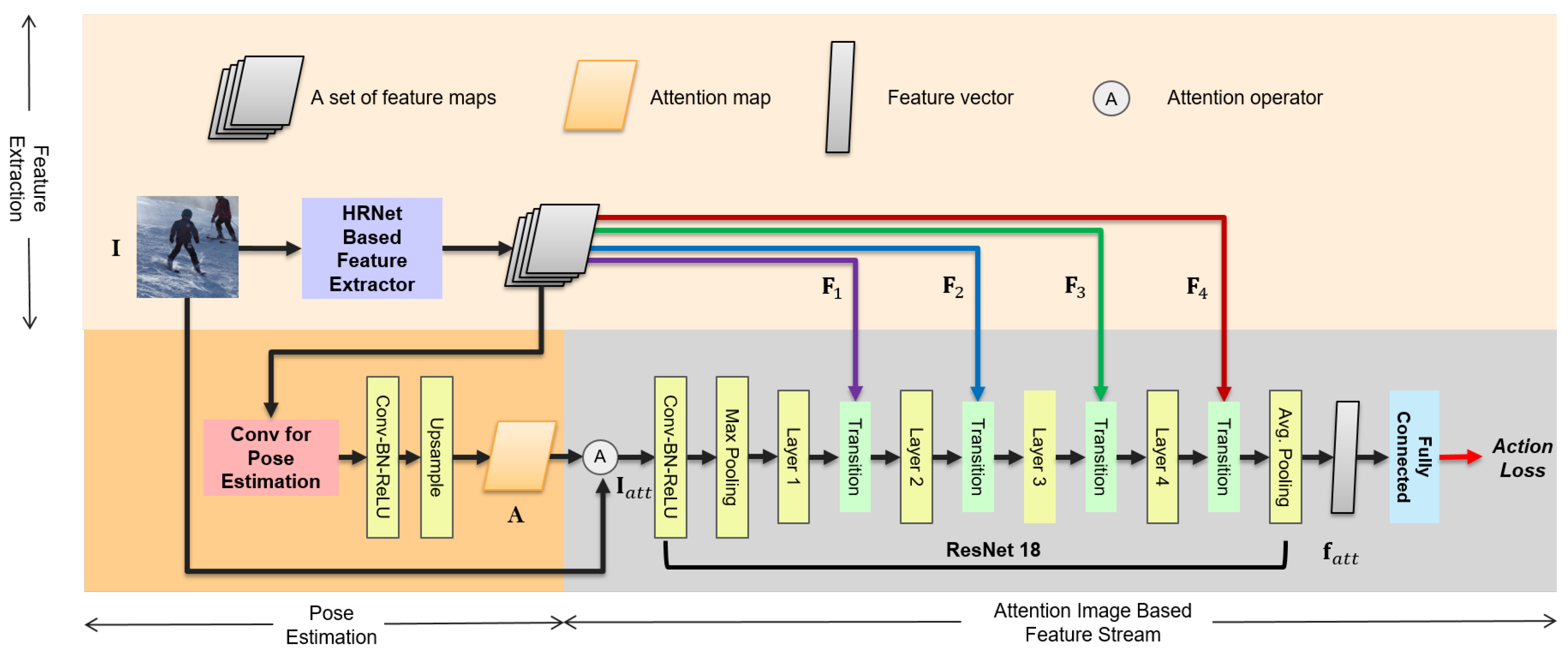

3.3. Attention-Image-Based Feature Stream

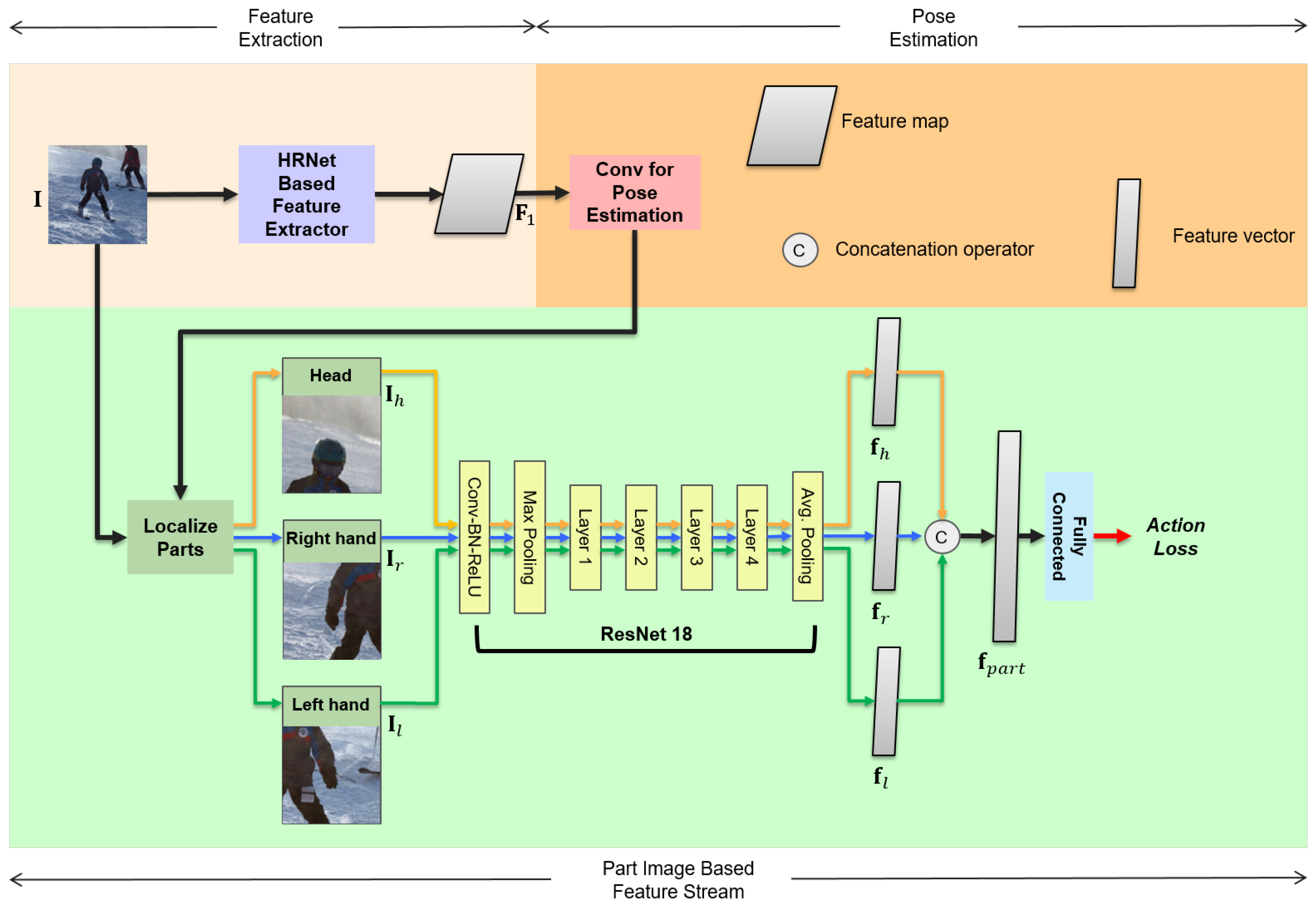

3.4. Part-Image-Based Feature Stream

3.5. Detailed Structure of the Three Feature Streams for Action Recognition

3.6. Multitask-Aware Loss Function

4. Experiments

4.1. Experimental Setup

4.2. Datasets

4.2.1. MPII Human Pose Dataset

4.2.2. Stanford 40 Actions Dataset

4.2.3. V-COCO Dataset

4.3. Evaluation of the Pose Estimation Task on the MPII Human Pose Dataset

4.4. Evaluation of the Action Recognition Task on the Stanford 40 Actions Dataset

4.5. Evaluation of the Action Recognition Task on the V-COCO Dataset

4.6. Qualitative Results

5. Conclusions

Author Contributions

Funding

Conflicts of Interest

References

- Phyo, C.N.; Zin, T.T.; Tin, P. Complex Human–Object Interactions Analyzer Using a DCNN and SVM Hybrid Approach. Appl. Sci. 2019, 9, 1869.

- Yang, H.; Zhang, J.; Li, S.; Lei, J.; Chen, S. Attend it again: Recurrent attention convolutional neural network for action recognition. Appl. Sci. 2018, 8, 383.

- Cho, J.; Lee, M. Building a Compact Convolutional Neural Network for Embedded Intelligent Sensor Systems Using Group Sparsity and Knowledge Distillation. Sensors 2019, 19, 4307.

- Yang, H.D.; Lee, S.W.; Lee, S.W. Multiple human detection and tracking based on weighted temporal texture features. Int. J. Pattern Recognit. Artif. Intell. 2006, 20, 377–391.

- Luvizon, D.C.; Picard, D.; Tabia, H. 2d/3d pose estimation and action recognition using multitask deep learning. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–22 June 2018.

- Yan, S.; Xiong, Y.; Lin, D. Spatial temporal graph convolutional networks for skeleton-based action recognition. In Proceedings of the AAAI Conference on Artificial Intelligence, New Orleans, LA, USA, 2–7 February 2018.

- Choutas, V.; Weinzaepfel, P.; Revaud, J.; Schmid, C. Potion: Pose motion representation for action recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–22 June 2018.

- Liu, X.; Xia, T.; Wang, J.; Yang, Y.; Zhou, F.; Lin, Y. Fully convolutional attention networks for fine-grained recognition. arXiv 2016, arXiv:1603.06765.

- Zhang, X.; Xiong, H.; Zhou, W.; Lin, W.; Tian, Q. Picking deep filter responses for fine-grained image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 26 June–1 July 2016.

- Xiao, T.; Xu, Y.; Yang, K.; Zhang, J.; Peng, Y.; Zhang, Z. The application of two-level attention models in deep convolutional neural network for fine-grained image classification. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Boston, MA, USA, 7–12 June 2015.

- Kim, D.J.; Choi, J.; Oh, T.H.; Yoon, Y.; Kweon, I.S. Disjoint multi-task learning between heterogeneous human-centric tasks. In Proceedings of the IEEE Winter Conference on Applications of Computer Vision, Lake Tahoe, NV, USA, 12–15 March 2018.

- Ranjan, R.; Patel, V.M.; Chellappa, R. Hyperface: A deep multi-task learning framework for face detection, landmark localization, pose estimation, and gender recognition. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 41, 121–135.

- Huang, G.; Chen, D.; Li, T.; Wu, F.; van der Maaten, L.; Weinberger, K.Q. Multi-scale dense networks for resource efficient image classification. arXiv 2017, arXiv:1703.09844.

- Sun, K.; Xiao, B.; Liu, D.; Wang, J. Deep high-resolution representation learning for human pose estimation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 16–20 June 2019.

- Yao, B.; Jiang, X.; Khosla, A.; Lin, A.L.; Guibas, L.; Fei-Fei, L. Human action recognition by learning bases of action attributes and parts. In Proceedings of the IEEE International Conference on Computer Vision, Barcelona, Spain, 6–13 November 2011.

- Gupta, S.; Malik, J. Visual Semantic Role Labeling. arXiv 2015, arXiv:1505.04474.

- Andriluka, M.; Pishchulin, L.; Gehler, P.; Schiele, B. 2d human pose estimation: New benchmark and state of the art analysis. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Columbus, OH, USA, 23–28 June 2014.

- Cho, J.; Lee, M.; Oh, S. Single image 3D human pose estimation using a procrustean normal distribution mixture model and model transformation. Comput. Vis. Image Underst. 2017, 155, 150–161.

- Newell, A.; Yang, K.; Deng, J. Stacked hourglass networks for human pose estimation. In Proceedings of the European Conference on Computer Vision, Amsterdam, The Netherlands, 8–16 October 2016.

- Chen, Y.; Wang, Z.; Peng, Y.; Zhang, Z.; Yu, G.; Sun, J. Cascaded pyramid network for multi-person pose estimation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 18–23 June 2018.

- Xiao, B.; Wu, H.; Wei, Y. Simple baselines for human pose estimation and tracking. In Proceedings of the European Conference on Computer Vision, Munich, Germany, 8–14 September 2018.

- Cha, G.; Lee, M.; Cho, J.; Oh, S. Deep pose consensus networks. Comput. Vis. Image Underst. 2019, 182, 64–70.

- He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 26 June–1 July 2016.

- Lin, T.Y.; Maire, M.; Belongie, S.; Hays, J.; Perona, P.; Ramanan, D.; Dollar, P.; Zitnick, C.L. Microsoft COCO: Common objects in context. In Proceedings of the European Conference on Computer Vision, Zurich, Switzerland, 6–12 September 2014.

- Guo, G.; Lai, A. A survey on still image based human action recognition. Pattern Recognit. 2014, 47, 3343–3361.

- Khan, F.S.; Xu, J.; Van De Weijer, J.; Bagdanov, A.D.; Anwer, R.M.; Lopez, A.M. Recognizing actions through action-specific person detection. IEEE Trans. Image Process. 2015, 24, 4422–4432.

- Zhao, Z.; Ma, H.; You, S. Single image action recognition using semantic body part actions. In Proceedings of the IEEE International Conference on Computer Vision, Venice, Italy, 22–29 October 2017.

- Zhang, Y.; Cheng, L.; Wu, J.; Cai, J.; Do, M.N.; Lu, J. Action recognition in still images with minimum annotation efforts. IEEE Trans. Image Process. 2016, 25, 5479–5490.

- Zhao, Z.; Ma, H.; Chen, X. Semantic parts based top-down pyramid for action recognition. Pattern Recognit. Lett. 2016, 84, 134–141.

- Safaei, M.; Foroosh, H. Still Image Action Recognition by Predicting Spatial-Temporal Pixel Evolution. In Proceedings of the IEEE Winter Conference on Applications of Computer Vision, Waikoloa Village, HI, USA, 8–10 January 2019.

- Chao, Y.W.; Liu, Y.; Liu, X.; Zeng, H.; Deng, J. Learning to detect human-object interactions. In Proceedings of the IEEE Winter Conference on Applications of Computer Vision, Lake Tahoe, NV, USA, 12–15 March 2018.

- Wan, B.; Zhou, D.; Liu, Y.; Li, R.; He, X. Pose-Aware Multi-Level Feature Network for Human Object Interaction Detection. In Proceedings of the IEEE International Conference on Computer Vision, Seoul, Korea, 27 October–2 November 2019.

- Dubey, A.; Gupta, O.; Guo, P.; Raskar, R.; Farrell, R.; Naik, N. Pairwise confusion for fine-grained visual classification. In Proceedings of the European Conference on Computer Vision, Munich, Germany, 8–14 September 2018.

- Fukui, H.; Hirakawa, T.; Yamashita, T.; Fujiyoshi, H. Attention branch network: Learning of attention mechanism for visual explanation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Long Beach, CA, USA, 16–20 June 2019.

- Ioffe, S.; Szegedy, C. Batch normalization: Accelerating deep network training by reducing internal covariate shift. arXiv 2015, arXiv:1502.03167.

- Dahl, G.E.; Sainath, T.N.; Hinton, G.E. Improving deep neural networks for LVCSR using rectified linear units and dropout. In Proceedings of the 2013 IEEE International Conference on Acoustics, Speech and Signal Processing, Vancouver, BC, Canada, 26–31 May 2013.

- Lin, T.Y.; Goyal, P.; Girshick, R.; He, K.; Dollár, P. Focal loss for dense object detection. In Proceedings of the IEEE International Conference on Computer Vision, Venice, Italy, 22–29 October 2017.

- Goodfellow, I.; Bengio, Y.; Courville, A. Deep Learning; MIT Press: Cambridge, MA, USA, 2016.

- Deng, J.; Dong, W.; Socher, R.; Li, L.J.; Li, K.; Fei-Fei, L. Imagenet: A large-scale hierarchical image database. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Miami, FL, USA, 20–25 June 2009.

- Kingma, D.P.; Ba, J. Adam: A method for stochastic optimization. arXiv 2014, arXiv:1412.6980.

- Lan, X.; Zhu, X.; Gong, S. Knowledge distillation by on-the-fly native ensemble. In Proceedings of the International Conference on Neural Information Processing Systems, Montreal, QC, Canada, 3–8 December 2018.

- Simonyan, K.; Zisserman, A. Very deep convolutional networks for large-scale image recognition. arXiv 2014, arXiv:1409.1556.

- Zhou, B.; Khosla, A.; Lapedriza, A.; Oliva, A.; Torralba, A. Learning deep features for discriminative localization. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Las Vegas, NV, USA, 26 June–1 July 2016.

| Layer Name | Image-Based Feature Stream | Attention-Image-Based Feature Stream | Part-Image-Based Feature Stream |
|---|---|---|---|
| Conv | - | stride = 2 | stride = 2 |
| Max Pooling | - | stride = 2 | stride = 2 |
| Layer 1 | - | | |
| Transition | - | - | |
| Layer 2 | - | | |
| Transition | - | - | |
| Layer 3 | - | | |
| Transition | - | - | |
| Layer 4 | - | | |
| Transition | - | - | |
| Avg. Pooling | Average Pooling | Average Pooling | Average Pooling |
| Fully Connected | #classes-d | #classes-d | #classes-d |
| Softmax | softmax | softmax | softmax |

| Architecture | Head | Shoulder | Elbow | Wrist | Hip | Knee | Ankle | Mean |
|---|---|---|---|---|---|---|---|---|
| pose_hrnet_w32 [14] | 97.1 | 95.9 | 90.3 | 86.4 | 89.1 | 87.1 | 83.3 | 90.3 |
| pose_hrnet_w32_aug90 [14] | 97.3 | 96.1 | 90.9 | 86.2 | 89.2 | 87.0 | 82.8 | 90.4 |
| proposed_method | 96.7 | 95.4 | 88.7 | 83.3 | 87.5 | 84.4 | 79.4 | 88.5 |

| Feature Representation | mAP (%) |
|---|---|
| Image-based feature stream | 85.57 |
| Attention-image-based feature stream | 85.38 |
| Part-image-based feature stream | 84.27 |
| Concatenation-Based Action Decision (Image + Attention + Part) | 91.76 |
| Proposed: Ensemble of (Image + Part + Concatenation) | 91.91 |
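
The last row combines the outputs of several classifiers. The combination rule is not stated in the text available here, so the following is only a minimal sketch under the assumption that the ensemble averages the per-class softmax probabilities of its members; `softmax` and `ensemble_scores` are hypothetical helper names.

```python
import math

def softmax(logits):
    """Convert raw class scores into probabilities."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def ensemble_scores(member_logits):
    """Average per-class probabilities over ensemble members.

    Assumption: a plain unweighted mean. A weighted mean or a vote
    would be equally plausible combination rules.
    """
    probs = [softmax(logits) for logits in member_logits]
    num_classes = len(probs[0])
    return [sum(p[c] for p in probs) / len(probs) for c in range(num_classes)]

# Three members (e.g., image, part, and concatenation classifiers), two classes.
scores = ensemble_scores([[2.0, 0.0], [0.0, 2.0], [2.0, 0.0]])
predicted_class = max(range(len(scores)), key=scores.__getitem__)
```

Averaging probabilities rather than raw scores keeps each member on the same scale, so no single classifier dominates because of larger logits.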

| Method | mAP (%) |
|---|---|
| Action-Specific Detectors [26] | 75.4 |
| VGG-16&19 [42] | 77.8 |
| TDP [29] | 80.6 |
| ResNet-50 [23] | 81.2 |
| Action Mask [28] | 82.6 |
| Part-Based Network in [27] | 89.3 |
| Part Action Network in [27] | 91.2 |
| Proposed Method | 91.91 |
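
For readers unfamiliar with the metric, mean average precision can be sketched as follows. This is a generic, non-interpolated definition given as an assumption; the exact evaluation protocol behind the numbers above is not specified in the text available here, and other variants (for example, interpolated precision) give slightly different values.

```python
def average_precision(scores, labels):
    """AP for one class: mean precision measured at the rank of each positive."""
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    hits, precisions = 0, []
    for rank, (_, is_positive) in enumerate(ranked, start=1):
        if is_positive:
            hits += 1
            precisions.append(hits / rank)
    return sum(precisions) / len(precisions) if precisions else 0.0

def mean_average_precision(per_class_scores, per_class_labels):
    """Unweighted mean of the per-class AP values."""
    aps = [average_precision(s, l)
           for s, l in zip(per_class_scores, per_class_labels)]
    return sum(aps) / len(aps)

# Two classes, four images each; labels are 1 for positive, 0 for negative.
class_scores = [[0.9, 0.8, 0.7, 0.6], [0.1, 0.4, 0.35, 0.8]]
class_labels = [[1, 0, 1, 0], [0, 1, 0, 1]]
value = mean_average_precision(class_scores, class_labels)
```

In this toy input the first class has AP (1/1 + 2/3) / 2 and the second class ranks both positives first, so its AP is 1.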

| Feature Representation | mAP (%) |
|---|---|
| Image-based feature stream | 64.59 |
| Attention-image-based feature stream | 66.30 |
| Part-image-based feature stream | 62.50 |
| Proposed: Ensemble of (Image + Part + Concatenation) | 72.36 |

| Method | mAP (%) |
|---|---|
| Baseline 1 (trained with Image) | 68.08 |
| Baseline 2 (trained with Image + Part) | 69.15 |
| Proposed (trained with Image + Attention + Part) | 72.36 |

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Bhandari, B.; Lee, G.; Cho, J. Body-Part-Aware and Multitask-Aware Single-Image-Based Action Recognition. Appl. Sci. 2020, 10, 1531. https://doi.org/10.3390/app10041531
