Comparison of Numerical Methods and Open-Source Libraries for Eigenvalue Analysis of Large-Scale Power Systems

Abstract

1. Introduction

- Vector iteration methods, which are in turn divided into single and simultaneous vector iteration methods. Single vector iteration methods include the power method [11] and its variants, such as the inverse power iteration and the Rayleigh quotient iteration. Simultaneous vector iteration methods include the subspace iteration method [12] and its variants, such as the inverse subspace method. Regarding the application of vector iteration methods to power systems, the inverse power iteration was first discussed in [13]. An algorithm based on the Rayleigh quotient iteration was proposed in [14,15], while more recent studies have provided subspace-accelerated and deflated versions of the same algorithm [16,17,18]. Finally, simultaneous vector iteration methods were studied in [19,20].

- Schur decomposition methods, which mainly include the QR algorithm [21], the QZ algorithm [22], and their variants, such as the QR algorithm with shifts. Schur decomposition based methods have been the standard methods employed for the eigenvalue analysis of small to medium size power systems [23,24].

- Krylov subspace methods, which mainly include the Arnoldi iteration [25] and its variants, such as the implicitly restarted Arnoldi [26] and the Krylov–Schur method [27]. This category also includes preconditioned extensions of the Lanczos algorithm, such as the non-symmetric versions of the Generalized Davidson and the Jacobi–Davidson methods. In power systems, different versions of the Arnoldi method were proposed in [19,28,29], while a parallel version of the Krylov–Schur method was implemented more recently in [30]. The Jacobi–Davidson method and an inexact version of the same method were discussed in [31] and [32], respectively.

2. Background

2.1. Power System Model

2.2. Eigenvalue Problem

2.3. Spectral Transforms

- Find the eigenvalues of interest. Some numerical methods, for example vector iteration-based methods, find the Largest Magnitude (LM) eigenvalues, whereas the eigenvalues of interest in SSSA are typically the ones with Smallest Magnitude (SM) or Largest Real Part (LRP). Thus, it is necessary to apply a spectral transform, e.g., the invert or the shift & invert transform.

- Address a singularity issue. A GEP with a singular left-hand side coefficient matrix can cause problems for many numerical methods. Applying a Möbius transform can help address such singularity issues.

- Accelerate convergence. Eigenvalues that are poorly separated from each other can lead to large errors and slow convergence of eigensolvers. Spectral transforms can help to magnify the eigenvalues of interest relative to the rest of the spectrum and speed up convergence.
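As a minimal numerical illustration of the first point, the sketch below applies the shift & invert transform to a small upper triangular matrix whose eigenvalues are known by construction. The matrix `A`, the shift `sigma`, and all variable names are assumptions made for this example and are not taken from the case studies. The largest-magnitude eigenvalue of the transformed matrix maps back to the eigenvalue of `A` that lies closest to the shift.

```python
import numpy as np

# Hypothetical test matrix (not from the paper): upper triangular, so its
# eigenvalues are the diagonal entries -0.1, -2, -5 and -50.
A = np.array([[-0.1, 1.0, 0.0, 0.0],
              [0.0, -2.0, 1.0, 0.0],
              [0.0, 0.0, -5.0, 1.0],
              [0.0, 0.0, 0.0, -50.0]])


def largest_magnitude(values):
    """Return the entry of `values` with the largest absolute value."""
    return values[np.argmax(np.abs(values))]


# Without a transform, an LM search returns the eigenvalue farthest from the
# origin, which is of little interest for stability analysis.
lam_lm = largest_magnitude(np.linalg.eigvals(A))

# Shift & invert: mu = 1 / (lambda - sigma). Eigenvalues of A close to sigma
# become the largest-magnitude eigenvalues of (A - sigma*I)^(-1).
sigma = 0.2  # assumed shift, placed near the rightmost eigenvalue
mu = np.linalg.eigvals(np.linalg.inv(A - sigma * np.eye(4)))
mu_lm = largest_magnitude(mu)

# Map the transformed eigenvalue back to the original spectrum.
lam_closest = sigma + 1.0 / mu_lm

print("LM eigenvalue of A:         ", lam_lm)
print("Eigenvalue closest to sigma:", lam_closest)
```

The explicit inverse is formed here only to keep the example short; for large sparse matrices one would factorize the shifted matrix once and solve linear systems instead.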

3. Description of Numerical Algorithms

3.1. Single Vector Iteration Methods

3.2. Simultaneous Vector Iteration Methods

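- The iteration starts with an initial matrix $\mathbf{V}^{(0)}$, $\mathbf{V}^{(0)} \in \mathbb{C}^{n \times p}$, with orthonormal columns, i.e., $(\mathbf{V}^{(0)})^{H} \mathbf{V}^{(0)} = \mathbf{I}_p$.

- At the k-th step of the iteration, $k = 1, 2, \ldots$:

- (a) Matrix $\mathbf{W}^{(k)}$, $\mathbf{W}^{(k)} \in \mathbb{C}^{n \times p}$, is formed as: $\mathbf{W}^{(k)} = \mathbf{A} \mathbf{V}^{(k-1)}$.

- (b) Matrix $\mathbf{V}^{(k)}$ is updated by maintaining column-orthonormality, through the QR decomposition of matrix $\mathbf{W}^{(k)}$: $\mathbf{W}^{(k)} = \mathbf{V}^{(k)} \mathbf{R}^{(k)}$, where $\mathbf{V}^{(k)}$ has orthonormal columns and $\mathbf{R}^{(k)}$ is an upper triangular matrix.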
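The two steps above can be sketched in a few lines of NumPy. This is a minimal illustration of basic subspace iteration without locking, deflation, or a convergence test; the function name, the fixed number of steps, and the test matrix are assumptions made for the example.

```python
import numpy as np


def subspace_iteration(A, p, n_steps=200, seed=0):
    """Return an n x p orthonormal basis after n_steps of 'multiply, then QR'."""
    n = A.shape[0]
    rng = np.random.default_rng(seed)
    # Initial matrix with orthonormal columns.
    V, _ = np.linalg.qr(rng.standard_normal((n, p)))
    for _ in range(n_steps):
        W = A @ V               # step (a): multiply the current basis by A
        V, _ = np.linalg.qr(W)  # step (b): restore column-orthonormality
    return V


# Hypothetical test matrix: upper triangular with eigenvalues 10, 7, 3, 1, 0.5.
A = np.diag([10.0, 7.0, 3.0, 1.0, 0.5]) + 0.1 * np.triu(np.ones((5, 5)), k=1)

V = subspace_iteration(A, p=2)
# The eigenvalues of the projected p x p matrix approximate the p
# largest-magnitude eigenvalues of A.
approx = np.sort(np.linalg.eigvals(V.conj().T @ A @ V).real)
print(approx)
```

The convergence rate is governed by the ratio between the magnitudes of the (p+1)-th and p-th eigenvalues, which is why a spectral transform is usually applied first when the eigenvalues of interest are not the largest in magnitude.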

3.3. Schur Decomposition Methods

3.4. Krylov Subspace Methods

3.4.1. Arnoldi Iteration

3.4.2. Krylov-Schur Method

3.4.3. Generalized Eigenvalue Problem

3.5. Contour Integration Methods

4. Open-Source Libraries

4.1. LAPACK

- Summary: LAPACK [38] is a standard library aimed at solving problems of numerical linear algebra, such as systems of linear equations and eigenvalue problems.

- Eigenvalue Methods: QR and QZ algorithms.

- Matrix Formats: cannot handle general sparse matrices, but is functional with dense matrices. In fact, LAPACK is the standard dense matrix data interface used by all other eigenvalue libraries.

- Returned Eigenvectors: LAPACK algorithms are 2-sided, i.e., return both right and left eigenvectors.

- Dependencies: a large part of the computations required by the routines of LAPACK is performed by calling the BLAS (Basic Linear Algebra Subprograms) [56]. In general, BLAS functionality is classified in three levels. Level 1 defines routines that carry out simple vector operations; Level 2 defines routines that carry out matrix-vector operations; and Level 3 defines routines that carry out general matrix-matrix operations. Modern optimized BLAS libraries, such as ATLAS (Automatically Tuned Linear Algebra Software) [57] and Intel MKL (Math Kernel Library), typically support all three levels for both real and complex data types.

- GPU-based version: MAGMA [39] provides hybrid CPU/GPU implementations of LAPACK routines. It depends on NVIDIA CUDA. For general non-symmetric matrices, MAGMA includes the QR algorithm for the solution of the LEP, but does not support the solution of the GEP.

- Parallel version: ScaLAPACK (Scalable LAPACK) [58] provides implementations of LAPACK routines for parallel distributed memory computers. Similarly to the dependence of LAPACK on BLAS, ScaLAPACK depends on PBLAS (Parallel BLAS), which, in turn, depends on BLAS for local computations and BLACS (Basic Linear Algebra Communication Subprograms) for communication between nodes.

- Development: as an eigensolver, LAPACK is the successor of EISPACK [59]. The first version of LAPACK was released in 1992. Compared to EISPACK, LAPACK was restructured to include the block forms of the QR and QZ algorithms, which allows for exploiting Level 3 BLAS and leads to improved efficiency [60]. The latest version of LAPACK is 3.7 and it was released in 2016.
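In practice, the dense LAPACK drivers are often reached through a wrapper. The sketch below assumes SciPy is available: `scipy.linalg.eig` relies on LAPACK's `geev` driver for the LEP and on `ggev` for the GEP, and can return both left and right eigenvectors. The 2×2 matrices are made up for illustration only.

```python
import numpy as np
from scipy.linalg import eig

# Hypothetical small matrices (not from the paper).
A = np.array([[-1.0, 2.0],
              [-2.0, -1.0]])
B = np.array([[1.0, 0.0],
              [0.0, 2.0]])

# LEP, A v = s v: QR algorithm, two-sided (left and right eigenvectors).
w, vl, vr = eig(A, left=True, right=True)

# GEP, A v = s B v: QZ algorithm, again two-sided.
wg, vlg, vrg = eig(A, B, left=True, right=True)

print("LEP eigenvalues:", w)
print("GEP eigenvalues:", wg)
```

SciPy normalizes the left eigenvectors so that `vl[:, i].conj().T @ A` equals `w[i] * vl[:, i].conj().T` for the LEP, with `B` appearing on the right-hand side in the GEP case.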

4.2. ARPACK

- Summary: ARPACK [40] is a library developed for solving large eigenvalue problems with the IR-Arnoldi method.

- Eigenvalue Methods: IR-Arnoldi iteration.

- Matrix Formats: ARPACK supports the Reverse Communication Interface (RCI), which gives the user the freedom to customize the matrix data format as desired. In particular, with RCI, whenever a matrix operation has to take place, control is returned to the calling program with an indication of the task required and the user can, in principle, choose the solver for the specific task independently from the library.

- Returned Eigenvectors: only right eigenvectors are calculated.

- Dependencies: ARPACK depends on a number of subroutines from LAPACK/BLAS. Moreover, ARPACK must be linked to a library that factorizes matrices, which can be either dense or sparse. In the simulations that are described in this paper, we linked ARPACK to the efficient library KLU, which is part of SuiteSparse [61] and is particularly suited for sparse matrices whose structure originates from an electrical circuit.

- GPU-based version: to the best of the authors’ knowledge, a library that provides a functional GPU-based implementation of ARPACK is not available to date.

- Parallel version: PARPACK is an implementation of ARPACK for parallel computers. The message passing layers supported by PARPACK are MPI (Message Passing Interface) [62] and BLACS.

- Development: the first version of ARPACK became available on Netlib in 1995. In recent years, Rice University has stopped updating ARPACK. The library has been forked into ARPACK-NG, a collaborative effort among software developers, including Debian, Octave, and Scilab, to put together their own improvements and fixes of ARPACK. The latest version of ARPACK-NG is 3.7.0 and it was released in 2019.
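For readers who want to experiment with the IR-Arnoldi method without writing a reverse communication loop, the sketch below uses SciPy's `scipy.sparse.linalg.eigs`, which wraps ARPACK. It is an illustration under stated assumptions, not the setup of the paper: SciPy factorizes the shifted matrix with SuperLU rather than KLU, and the test matrix, the shift, and the starting vector are hypothetical.

```python
import numpy as np
from scipy.sparse import diags
from scipy.sparse.linalg import eigs

n = 200
# Hypothetical sparse, non-symmetric test matrix: upper bidiagonal, so its
# eigenvalues are the diagonal entries -1, -2, ..., -n.
main_diag = -np.arange(1.0, n + 1)
upper_diag = 0.5 * np.ones(n - 1)
A = diags([main_diag, upper_diag], offsets=[0, 1], format="csc")

# Shift & invert around sigma: ARPACK iterates with (A - sigma*I)^(-1), so
# which="LM" returns the eigenvalues of A that lie closest to sigma.
vals, vecs = eigs(A, k=5, sigma=0.0, which="LM", v0=np.ones(n))

# Sort by decreasing real part, i.e., rightmost eigenvalue first.
order = np.argsort(-vals.real)
vals, vecs = vals[order], vecs[:, order]
print(vals.real)
```

Only right eigenvectors are returned, consistent with the one-sided nature of ARPACK noted above.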

4.3. Anasazi

- Summary: Anasazi [41] is a library that implements block versions of algorithms for the solution of large-scale eigenvalue problems.

- Eigenvalue Methods: the library includes methods for both symmetric and non-symmetric problems. Regarding non-symmetric problems, it provides a block extension of the Krylov–Schur method and the Generalized Davidson (GD) method.

- Matrix Formats: Anasazi depends on LAPACK as an interface for dense matrices and on Epetra as an interface for sparse CSR matrix formats.

- Returned Eigenvectors: only right eigenvectors are calculated.

- Dependencies: Anasazi depends on Trilinos [41] and LAPACK/BLAS.

- GPU-based version: Currently not supported.

- Parallel version: a parallel version of Anasazi is not currently available. On the other hand, the library has an abstract structure, which is, in principle, compatible with parallel implementations.

- Development: the latest version of Anasazi was released in 2014.

4.4. SLEPc

- Summary: SLEPc [42] is a library that focuses on the solution of large sparse eigenproblems.

- Eigenvalue Methods: SLEPc includes a variety of methods, for both symmetric and non-symmetric problems. For non-symmetric problems, it provides the following methods: power/inverse power/Rayleigh quotient iteration with deflation, in a single implementation; subspace iteration with Rayleigh–Ritz projection and locking; Explicitly Restarted and Deflated (ERD) Arnoldi; Krylov–Schur; GD; Jacobi–Davidson (JD); CI-Hankel; and CI-RR.

- Matrix Formats: SLEPc depends on LAPACK as an interface for dense matrices and on MUMPS [63] as an interface for sparse Compressed Sparse Row (CSR) matrix formats. In addition, it supports custom data formats, enabled by RCI.

- Returned Eigenvectors: only the power method and Krylov–Schur method implementations are two-sided. All other algorithms return only right eigenvectors.

- Dependencies: SLEPc depends on PETSc (Portable, Extensible Toolkit for Scientific Computation) [64]. By default, the matrix factorization routines that are provided by PETSc are utilized by SLEPc but, at the compilation stage, SLEPc can be linked to other more efficient solvers, e.g., MUMPS, which is recommended by SLEPc developers and exploits parallelism.

- GPU-based version: SLEPc supports GPU computing, which depends on NVIDIA CUDA.

- Parallel version: SLEPc includes a parallel version that depends on MPI. The parallel version employs MUMPS as its linear sparse solver.

- Development: the first version of SLEPc (2.1.1) was released in 2002. The latest version is SLEPc 3.13 and it was released in 2020.

4.5. FEAST

- Summary: FEAST [43] is the eigensolver that implements the FEAST algorithm, which was first proposed in [35]. Among other characteristics, the package includes the option to switch to IFEAST, which uses an inexact iterative solver to avoid direct matrix factorizations. This feature is particularly useful if the sparse matrices are very large and carrying out direct factorization is very expensive.

- Eigenvalue Methods: FEAST.

- Arithmetic: both real and complex types.

- Matrix Formats: FEAST depends on LAPACK as an interface for dense matrices, on SPIKE as an interface for banded matrices, and on MKL-PARDISO [65] for sparse CSR matrix formats. In addition, FEAST includes RCI and, thus, data formats can be customized by the user. Using the sparse interface requires linking FEAST with Intel MKL. Linking the library with BLAS/LAPACK and not with MKL is possible, but seriously impacts the performance and the results of the library.

- Returned Eigenvectors: the FEAST algorithm implementation is two-sided.

- Dependencies: FEAST requires LAPACK/BLAS.

- GPU-based version: currently not supported.

- Parallel version: PFEAST and PIFEAST are the parallel implementations of FEAST and IFEAST, respectively. Both support a three-level MPI message passing layer, with available options for the MPI library being Intel MPI, OpenMPI, and MPICH. PFEAST and PIFEAST employ MKL-Cluster-PARDISO and PBiCGStab, respectively, as their parallel linear sparse solvers.

- Development: the first version of FEAST (v1.0) was released in 2009 and supported only symmetric matrices. Through the years FEAST added many functionalities, such as support for non-symmetric matrices, parallel computing, and support for polynomial eigenvalue problems. Since 2013, Intel MKL has adopted FEAST v2.1 as its extended eigensolver. The latest version is FEAST v4.0, which was released in 2020.

4.6. z-PARES

- Summary: z-PARES [44] is a complex moment-based contour integration eigensolver for GEPs that finds the eigenvalues (and corresponding eigenvectors) that lie inside a contour defined by the user.

- Eigenvalue Methods: CI-Hankel, CI-RR.

- Matrix Formats: z-PARES depends on LAPACK for dense matrices and on MUMPS for sparse CSR matrices. In addition, it supports custom data formats, enabled by RCI.

- Returned Eigenvectors: only right eigenvectors are calculated.

- Dependencies: z-PARES requires BLAS/LAPACK to be installed.

- GPU-based version: currently not supported.

- Parallel version: z-PARES includes a parallel version, which exploits a two-level MPI layer and employs MUMPS as its sparse solver.

- Development: the latest version of z-PARES is v0.9.6a and it was released in 2014.
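To make the idea behind contour integration eigensolvers more concrete, the sketch below implements a heavily simplified, single-moment variant for the LEP in dense NumPy. It is not the algorithm or the API of z-PARES or FEAST: it approximates the spectral projector onto the eigenvalues inside a circle with a midpoint quadrature rule, applies it to a random block, and then performs a Rayleigh–Ritz extraction. The function name, the block size, the number of quadrature points, the rank tolerance, and the test matrix are all assumptions made for this example.

```python
import numpy as np


def contour_rr(A, center, radius, block=4, n_quad=32, tol=1e-8, seed=0):
    """Approximate the eigenvalues of A inside the circle |z - center| < radius."""
    n = A.shape[0]
    rng = np.random.default_rng(seed)
    V = rng.standard_normal((n, block))
    S = np.zeros((n, block), dtype=complex)
    identity = np.eye(n)
    # Quadrature of (1 / (2*pi*j)) * contour integral of (z*I - A)^(-1) V dz.
    for k in range(n_quad):
        theta = 2.0 * np.pi * (k + 0.5) / n_quad
        z = center + radius * np.exp(1j * theta)
        S += (z - center) * np.linalg.solve(z * identity - A, V)
    S /= n_quad
    # Orthonormal basis of the filtered subspace; negligible directions dropped.
    U, sv, _ = np.linalg.svd(S, full_matrices=False)
    rank = int(np.sum(sv > tol * sv[0]))
    Q = U[:, :rank]
    # Rayleigh-Ritz extraction on the filtered subspace.
    ritz = np.linalg.eigvals(Q.conj().T @ A @ Q)
    return ritz[np.abs(ritz - center) < radius]


# Hypothetical test matrix: upper bidiagonal, eigenvalues on the diagonal.
diag_vals = [-0.5, -1.0, -1.5, -4.0, -6.0, -8.0, -10.0, -12.0]
A = np.diag(diag_vals) + 0.3 * np.diag(np.ones(7), k=1)

found = contour_rr(A, center=-1.0, radius=1.0)
found = found[np.argsort(found.real)]
print(found)
```

Each quadrature point requires the solution of an independent linear system, which is the source of the parallelism exploited by contour integration libraries. The block size must be at least as large as the number of eigenvalues inside the contour, which is one reason why eigenvalues can be missed when the parameters are not well chosen.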

4.7. Summary of Library Features

5. Case Studies

5.1. All-Island Irish Transmission System

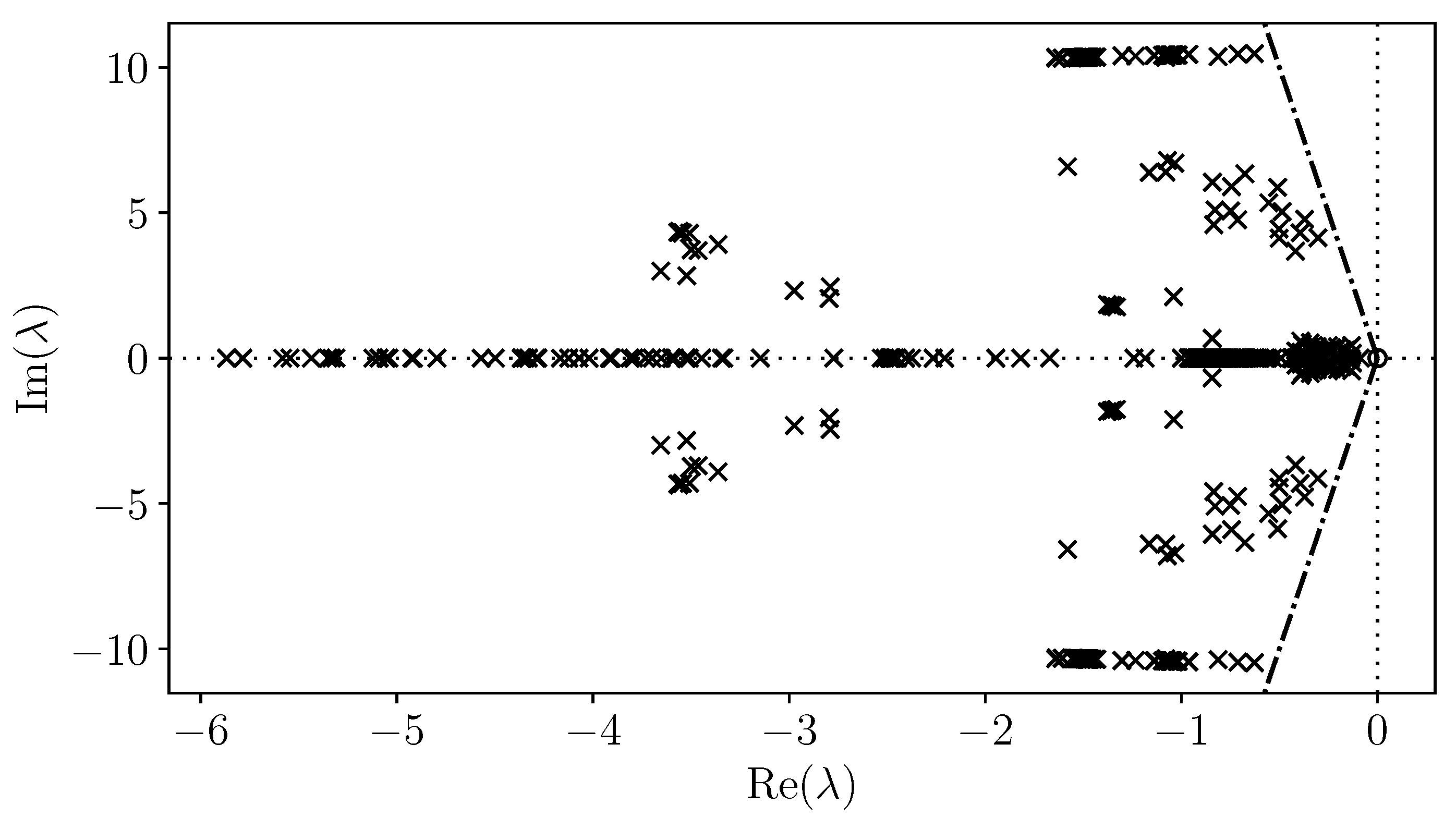

5.1.1. Dense Algorithms

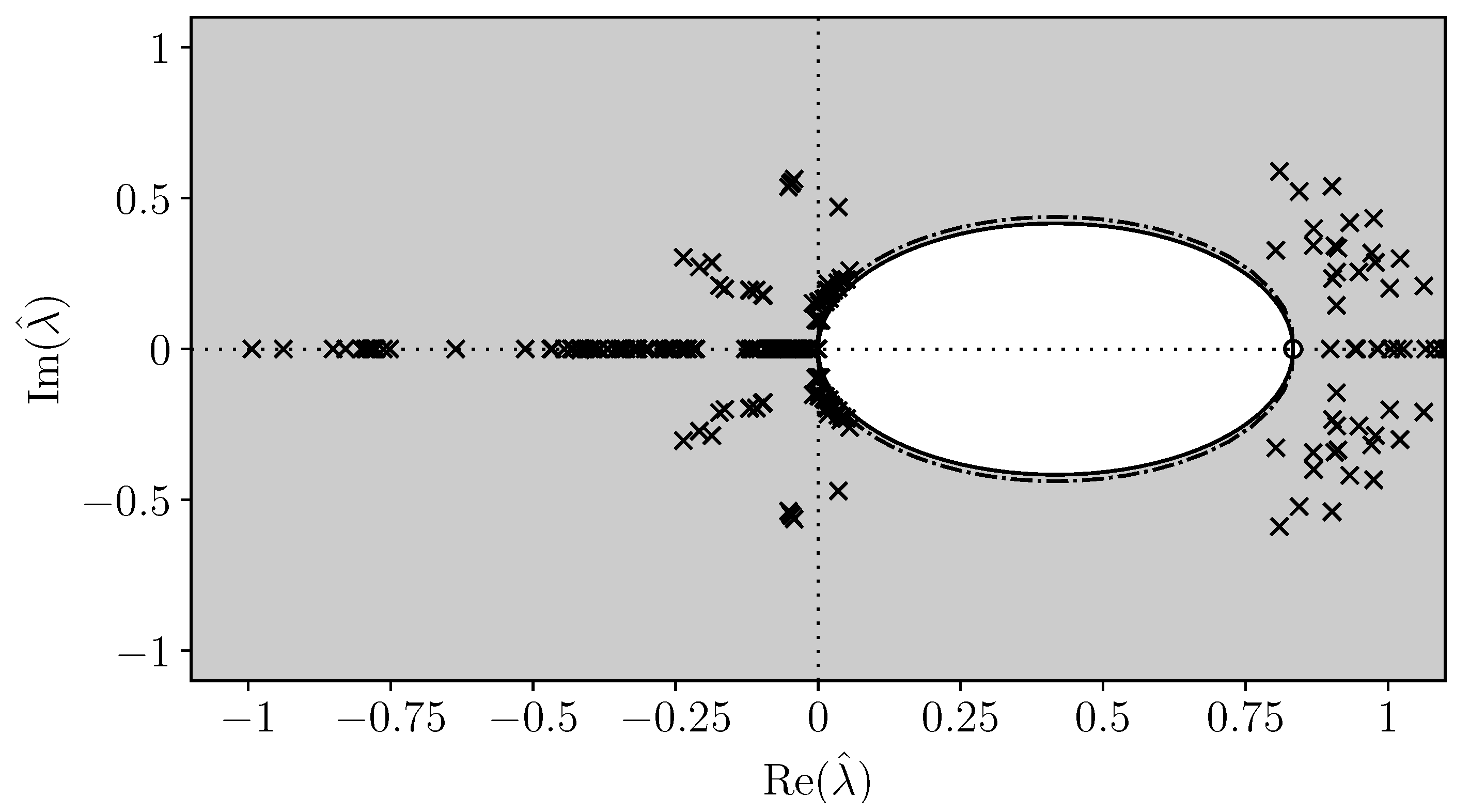

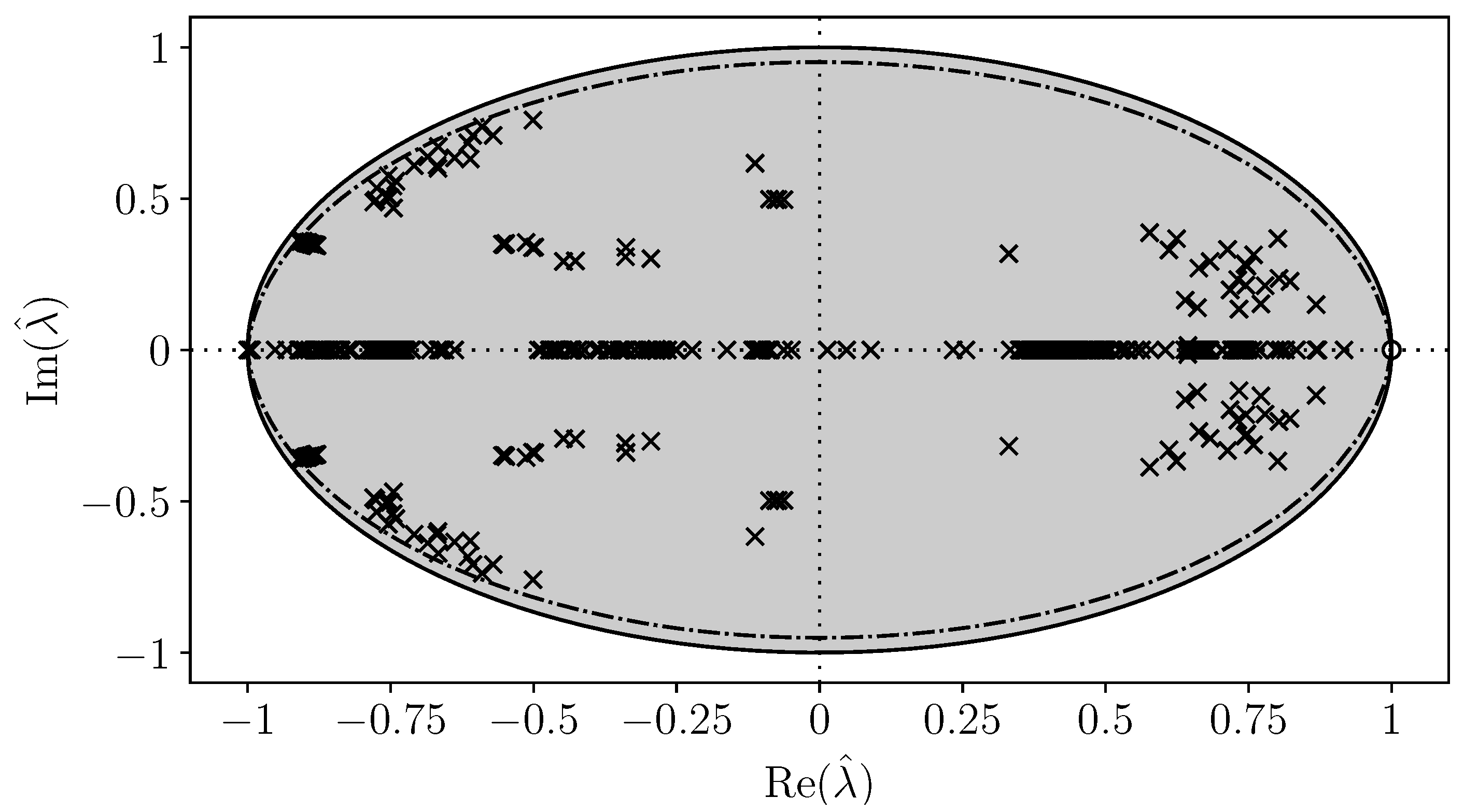

5.1.2. Möbius Transform Image of the Spectrum

5.1.3. Sparse Algorithms

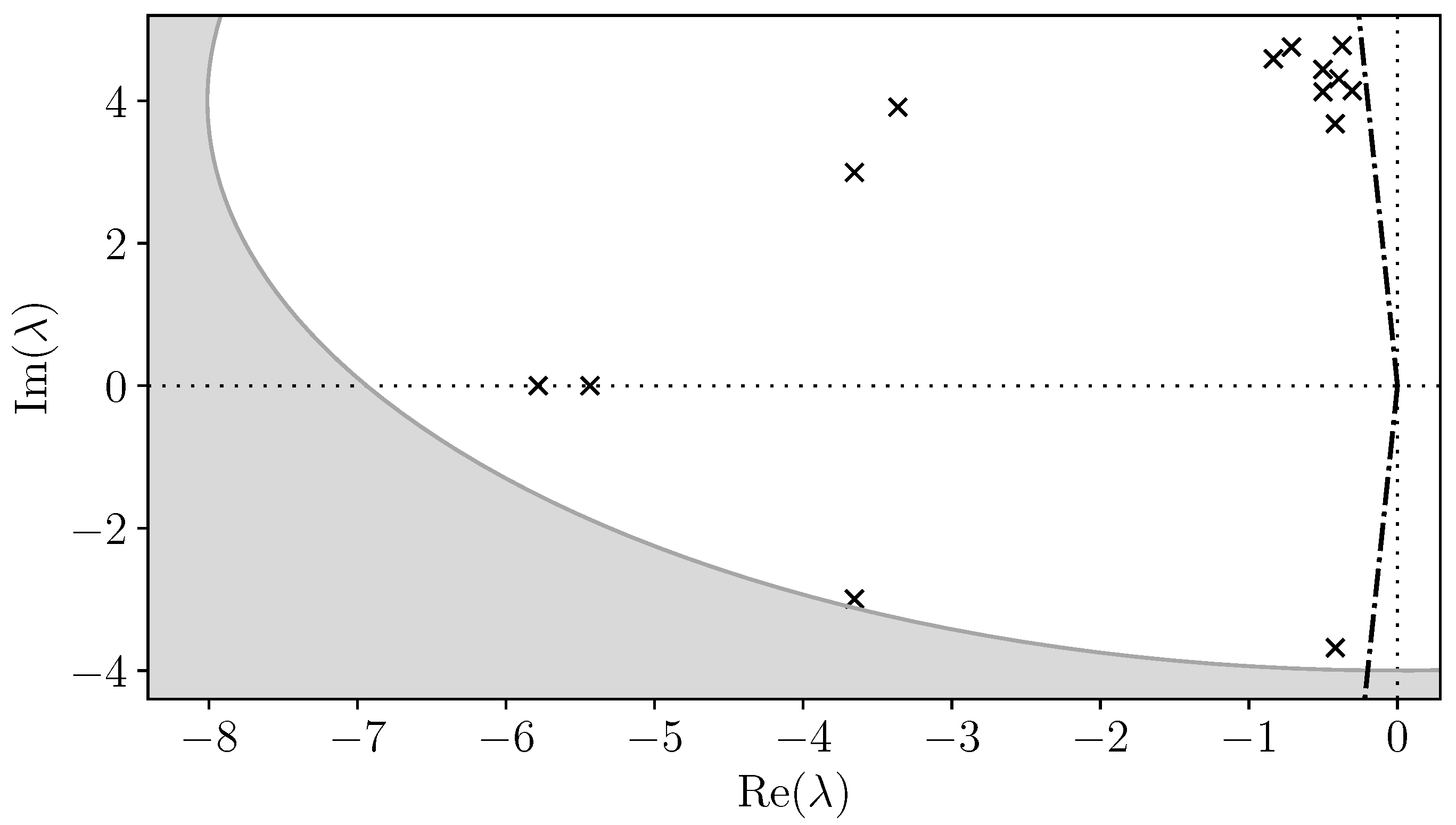

5.2. European Network of Transmission System Operators for Electricity

5.3. Remarks

- With regard to dense matrix methods, their main disadvantage is that they are computationally expensive. In addition, they generate complete fill-in in general sparse matrices and, therefore, cannot be applied to large sparse matrices simply because of massive memory requirements. Even so, LAPACK is the most mature among all computer-based eigensolvers and, unlike virtually all sparse solvers, requires practically no parameter tuning. For small to medium size problems, the QR algorithm with LAPACK remains the standard and most reliable algorithm for finding the full spectrum for the conventional LEP.

- As for sparse matrix methods, convergence of vector iteration methods can be very slow and, thus, in practice, these algorithms should either be avoided altogether or be used only for the solution of simple eigenvalue problems. An application where vector iteration methods may be more relevant is the correction of eigenvalues and eigenvectors that have been computed with low accuracy, i.e., as an alternative to Newton’s method [69]. With regard to Krylov subspace methods, the main shortcoming of ARPACK’s implementation is the lack of support for general, non-symmetric left-hand side coefficient matrices, which is the form that commonly appears when dealing with the GEP of large power system models. On the other hand, the implementations of ERD-Arnoldi and Krylov–Schur by SLEPc do not have this limitation and exploit parallelism while providing good accuracy, although some parameter tuning effort is required. In addition, for the scale of the AIITS system and the GEP, these methods appear to be far more efficient than LAPACK. Moreover, the implementation of contour integration by z-PARES is very efficient and can handle systems at the scale of the ENTSO-E. An interesting result is that, at this scale, smaller contour search regions, even if properly set up, do not necessarily imply faster convergence or, equivalently, smaller subspaces are not necessarily constructed faster by the CI-RR algorithm. The most relevant issue for z-PARES is that, depending on the problem, it may miss some critical eigenvalues, even when the defined search contour is reasonable. Although there may be some parameter settings for which this problem does not occur, those cannot be known a priori.

- Finally, the presented comparison of numerical methods and libraries is not specific to power system problems, but is relevant to any system with non-symmetric matrices. A relevant problem in the eigenvalue analysis of any dynamical system is how to exploit its physical background in order to obtain an efficient approximation of some (components of) the eigenvectors. A computer algorithm that has successfully addressed this problem for conventional, synchronous machine dominated power systems is AESOPS [23,72], a heuristic quasi Newton–Raphson based method that aimed at finding dominant electromechanical oscillatory modes. On the other hand, how to effectively exploit the physical structure of modern, high-granularity power systems to shape efficient numerical algorithms is a challenging problem and an open research question.
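The remark on correcting eigenpairs that were computed with low accuracy can be illustrated with a fixed-shift inverse power iteration. This is a minimal sketch under stated assumptions: the function name, the number of steps, the test matrix, and the rough initial estimates are hypothetical, and the approach is shown as one possible refinement strategy rather than the procedure used in the case studies.

```python
import numpy as np
from scipy.linalg import lu_factor, lu_solve


def refine_eigenpair(A, lam0, v0, n_steps=30):
    """Refine a rough eigenpair estimate (lam0, v0) of A by inverse iteration."""
    n = A.shape[0]
    # Factorize the shifted matrix once and reuse the factors at every step.
    lu = lu_factor(A - lam0 * np.eye(n))
    v = v0 / np.linalg.norm(v0)
    for _ in range(n_steps):
        v = lu_solve(lu, v)         # amplifies the eigenvector closest to lam0
        v = v / np.linalg.norm(v)
    lam = (v.conj() @ A @ v) / (v.conj() @ v)  # Rayleigh quotient
    return lam, v


# Hypothetical test matrix: upper triangular, eigenvalues -0.1, -2, -5, -50.
A = np.array([[-0.1, 1.0, 0.0, 0.0],
              [0.0, -2.0, 1.0, 0.0],
              [0.0, 0.0, -5.0, 1.0],
              [0.0, 0.0, 0.0, -50.0]])

# Rough estimate -1.9 of the eigenvalue -2, with a crude starting vector.
lam, v = refine_eigenpair(A, lam0=-1.9, v0=np.ones(4))
print(lam, np.linalg.norm(A @ v - lam * v))
```

The iteration converges linearly, with a rate set by how much closer the initial estimate is to the target eigenvalue than to any other eigenvalue, so it is effective only when a reasonable estimate is already available.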

6. Conclusions

Author Contributions

Funding

Conflicts of Interest

Notation

| Symbol | Description |
|---|---|
| | vector |
| | matrix |
| | matrix conjugate transpose (Hermitian) |
| | complex numbers |
| | Hessenberg matrix |
| | identity matrix of dimensions |
| j | unit imaginary number |
| | Hankel matrix |
| | upper triangular matrix of QR decomposition |
| | real numbers |
| s | complex Laplace variable |
| | upper triangular matrix of Schur decomposition |
| | right eigenvector |
| | left eigenvector |
| | vector of state variables |
| | vector of algebraic variables |
| z | spectral transform |
| | center of circular contour in the complex plane |
| | eigenvalue |
| | moment associated to matrix pencil |
| | spectral anti-shift |
| | radius of circular contour in the complex plane |
| | spectral shift |
| | contour integrals associated to matrix pencil |
| | zero matrix of dimensions |

Appendix A

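- Construct an orthonormal basis $\mathbf{Q}_p$, $\mathbf{Q}_p \in \mathbb{C}^{n \times p}$, which represents the subspace associated to p eigenvalues.

- Compute $\mathbf{A}_p = \mathbf{Q}_p^{H} \mathbf{A} \mathbf{Q}_p$. Matrix $\mathbf{A}_p$ is the projection of $\mathbf{A}$ onto the subspace spanned by the columns of $\mathbf{Q}_p$.

- Compute the eigenvalues $\theta_i$ and eigenvectors $\mathbf{y}_i$, $i = 1, \ldots, p$, of $\mathbf{A}_p$. The eigenvalues $\theta_i$ are called Ritz values and are also eigenvalues of $\mathbf{A}$.

- Form the vector $\mathbf{Q}_p \mathbf{y}_i$, for $i = 1, \ldots, p$. Each vector $\mathbf{Q}_p \mathbf{y}_i$ is called Ritz vector and corresponds to the eigenvalue $\theta_i$.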

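- 2. Compute $\mathbf{A}_p = \mathbf{Q}_p^{H} \mathbf{A} \mathbf{Q}_p$ and $\mathbf{B}_p = \mathbf{Q}_p^{H} \mathbf{B} \mathbf{Q}_p$.

- 3. Compute the eigenvalues $\theta_i$ and eigenvectors $\mathbf{y}_i$, $i = 1, \ldots, p$, of the pencil $s \mathbf{B}_p - \mathbf{A}_p$. The p eigenvalues found are called Ritz values and are also eigenvalues of the pencil $s \mathbf{B} - \mathbf{A}$.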
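The Rayleigh–Ritz steps above can be sketched as follows, for both the LEP and the GEP. The function name, the symbols `A`, `B`, and `Q`, and the test matrices are assumptions made for this example. The basis is chosen to span an exactly invariant subspace, so that the Ritz values coincide with eigenvalues of the original problem; with a general subspace they would only be approximations.

```python
import numpy as np
from scipy.linalg import eig


def rayleigh_ritz(A, Q, B=None):
    """Return Ritz values and Ritz vectors of A (or of the pair A, B) on span(Q)."""
    A_p = Q.conj().T @ A @ Q                           # projected matrix
    B_p = None if B is None else Q.conj().T @ B @ Q    # projected B for the GEP
    theta, Y = eig(A_p, B_p)                           # small dense eigenproblem
    return theta, Q @ Y                                # Ritz vectors


# Hypothetical test matrices: A is upper triangular and B is diagonal, so the
# span of the first two unit vectors is invariant for both problems.
A = np.array([[-0.1, 1.0, 0.0, 0.0],
              [0.0, -2.0, 1.0, 0.0],
              [0.0, 0.0, -5.0, 1.0],
              [0.0, 0.0, 0.0, -50.0]])
B = np.diag([1.0, 2.0, 3.0, 4.0])
Q = np.eye(4)[:, :2]  # orthonormal basis of the invariant subspace

theta, X = rayleigh_ritz(A, Q)          # LEP: A x = theta x
theta_g, X_g = rayleigh_ritz(A, Q, B)   # GEP: A x = theta B x

print("LEP Ritz values:", np.sort(theta.real))
print("GEP Ritz values:", np.sort(theta_g.real))
```

The same projection step is the final stage of several methods discussed in the paper, for example subspace iteration with Rayleigh–Ritz projection and the CI-RR method; the methods differ mainly in how the basis is constructed.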

References

1. Sauer, P.W.; Pai, M.A. Power System Dynamics and Stability; Prentice Hall: Upper Saddle River, NJ, USA, 1998.

2. Milano, F. Power System Modelling and Scripting; Springer: New York, NY, USA, 2010.

3. Gibbard, M.; Pourbeik, P.; Vowles, D. Small-Signal Stability, Control and Dynamic Performance of Power Systems; University of Adelaide Press: Adelaide, Australia, 2015.

4. Chow, J.H.; Cullum, J.; Willoughby, R.A. A Sparsity-Based Technique for Identifying Slow-Coherent Areas in Large Power Systems. IEEE Trans. Power Appar. Syst. 1984, PAS-103, 463–473.

5. Gao, B.; Morison, G.K.; Kundur, P. Voltage Stability Evaluation Using Modal Analysis. IEEE Trans. Power Syst. 1992, 7, 1529–1542.

6. Saad, Y. Numerical Methods for Large Eigenvalue Problems, Revised Edition; SIAM: Philadelphia, PA, USA, 2011.

7. Kressner, D. Numerical Methods for General and Structured Eigenvalue Problems, 4th ed.; Springer: New York, NY, USA, 2015.

8. Davidson, E.R. The iterative calculation of a few of the lowest eigenvalues and corresponding eigenvectors of large real-symmetric matrices. J. Comput. Phys. 1975, 17, 87–94.

9. Sorensen, D.C. Implicit Application of Polynomial Filters in a k-Step Arnoldi Method. SIAM J. Matrix Anal. Appl. 1992, 13, 357–385.

10. Knyazev, A.V. Toward the Optimal Preconditioned Eigensolver: Locally Optimal Block Preconditioned Conjugate Gradient Method. SIAM J. Sci. Comput. 2001, 23, 517–541.

11. von Mises, R.; Pollaczek-Geiringer, H. Praktische Verfahren der Gleichungsauflösung. Z. Angew. Math. Mech. 1929, 9, 152–164.

12. Bathe, K.J.; Wilson, E.L. Solution Methods for Large Generalized Eigenvalue Problems in Structural Engineering. Int. J. Numer. Methods Eng. 1973, 6, 213–226.

13. Martins, N. Efficient eigenvalue and frequency response methods applied to power System small-signal Stability Studies. IEEE Trans. Power Syst. 1986, 1, 217–224.

14. Martins, N.; Lima, L.T.G.; Pinto, H.J.C.P. Computing dominant poles of power system transfer functions. IEEE Trans. Power Syst. 1996, 11, 162–170.

15. Martins, N. The dominant pole spectrum eigensolver [for power system stability analysis]. IEEE Trans. Power Syst. 1997, 12, 245–254.

16. Rommes, J.; Martins, N. Efficient computation of transfer function dominant poles using subspace acceleration. IEEE Trans. Power Syst. 2006, 21, 1218–1226.

17. Gomes, S., Jr.; Martins, N.; Portela, C. Sequential Computation of Transfer Function Dominant Poles of s-Domain System Models. IEEE Trans. Power Syst. 2009, 24, 776–784.

18. Rommes, J.; Martins, N.; Freitas, F.D. Computing Rightmost Eigenvalues for Small-Signal Stability Assessment of Large-Scale Power Systems. IEEE Trans. Power Syst. 2010, 25, 929–938.

19. Wang, L.; Semlyen, A. Application of sparse eigenvalue techniques to the small signal stability analysis of large power systems. IEEE Trans. Power Syst. 1990, 5, 635–642.

20. Campagnolo, J.M.; Martins, N.; Falcao, D.M. Refactored bi-iteration: A high performance eigensolution method for large power system matrices. IEEE Trans. Power Syst. 1996, 11, 1228–1235.

21. Francis, J.G.F. The QR transformation: A unitary analogue to the LR transformation, Part 1. Comput. J. 1961, 4, 265–271.

22. Moler, C.B.; Stewart, G.W. An algorithm for generalized matrix eigenvalue problems. SIAM J. Numer. Anal. 1973, 10, 241–256.

23. Kundur, P.; Rogers, G.J.; Wong, D.Y.; Wang, L.; Lauby, M.G. A comprehensive computer program package for small signal stability analysis of power systems. IEEE Trans. Power Syst. 1990, 5, 1076–1083.

24. Milano, F.; Dassios, I. Primal and Dual Generalized Eigenvalue Problems for Power Systems Small-Signal Stability Analysis. IEEE Trans. Power Syst. 2017, 32, 4626–4635.

25. Arnoldi, W.E. The principle of minimized iterations in the solution of the matrix eigenvalue problem. Q. Appl. Math. 1951, 9, 17–29.

26. Lehoucq, R.B.; Sorensen, D.C. Deflation Techniques for an Implicitly Restarted Arnoldi Iteration. SIAM J. Matrix Anal. Appl. 1996, 17, 789–821.

27. Stewart, G.W. A Krylov–Schur Algorithm for Large Eigenproblems. SIAM J. Matrix Anal. Appl. 2002, 23, 601–614.

28. Chung, C.Y.; Dai, B. A Combined TSA-SPA Algorithm for Computing Most Sensitive Eigenvalues in Large-Scale Power Systems. IEEE Trans. Power Syst. 2013, 28, 149–157.

29. Liu, C.; Li, X.; Tian, P.; Wang, M. An improved IRA algorithm and its application in critical eigenvalues searching for low frequency oscillation analysis. IEEE Trans. Power Syst. 2017, 32, 2974–2983.

30. Li, Y.; Geng, G.; Jiang, Q. An efficient parallel Krylov-Schur method for eigen-analysis of large-scale power Systems. IEEE Trans. Power Syst. 2016, 31, 920–930.

31. Du, Z.; Liu, W.; Fang, W. Calculation of Rightmost Eigenvalues in Power Systems Using the Jacobi–Davidson Method. IEEE Trans. Power Syst. 2006, 21, 234–239.

32. Du, Z.; Li, C.; Cui, Y. Computing Critical Eigenvalues of Power Systems Using Inexact Two-Sided Jacobi-Davidson. IEEE Trans. Power Syst. 2011, 26, 2015–2022.

33. Sakurai, T.; Sugiura, H. A projection method for generalized eigenvalue problems using numerical integration. J. Comput. Appl. Math. 2003, 159, 119–128.

34. Sakurai, T.; Tadano, H. CIRR: A Rayleigh-Ritz type method with contour integral for generalized eigenvalue problems. Hokkaido Math. J. 2007, 36, 745–757.

35. Polizzi, E. Density-matrix-based algorithm for solving eigenvalue problems. Phys. Rev. B Am. Phys. Soc. 2009, 79, 115112.

36. Li, Y.; Geng, G.; Jiang, Q. A Parallel Contour Integral Method for Eigenvalue Analysis of Power Systems. IEEE Trans. Power Syst. 2017, 32, 624–632.

37. Li, Y.; Geng, G.; Jiang, Q. A parallelized contour integral Rayleigh–Ritz method for computing critical eigenvalues of large-scale power systems. IEEE Trans. Smart Grid 2018, 9, 3573–3581.

38. Angerson, E.; Bai, Z.; Dongarra, J.; Greenbaum, A.; McKenney, A.; Croz, J.D.; Hammarling, S.; Demmel, J.; Bischof, C.; Sorensen, D. LAPACK: A portable linear algebra library for high-performance computers. In Proceedings of the 1990 ACM/IEEE Conference on Supercomputing, New York, NY, USA, 12–16 November 1990.

39. Tomov, S.; Dongarra, J.; Baboulin, M. Towards dense linear algebra for hybrid GPU accelerated manycore systems. Parallel Comput. 2010, 36, 232–240.

40. Lehoucq, R.B.; Sorensen, D.C.; Yang, C. ARPACK Users Guide: Solution of Large Scale Eigenvalue Problems by Implicitly Restarted Arnoldi Methods; SIAM: Philadelphia, PA, USA, 1997.

41. Baker, C.; Hetmaniuk, U.; Lehoucq, R.; Thornquist, H. Anasazi software for the numerical solution of large-scale eigenvalue problems. ACM Trans. Math. Softw. 2009, 36, 351–362.

42. Hernandez, V.; Roman, J.E.; Vidal, V. SLEPc: A scalable and flexible toolkit for the solution of eigenvalue problems. ACM Trans. Math. Softw. 2005, 31, 351–362.

43. Polizzi, E. FEAST Eigenvalue Solver v4.0 User Guide. arXiv 2020, arXiv:2002.04807.

44. Futamura, Y.; Sakurai, T. z-Pares Users’ Guide Release 0.9.5; University of Tsukuba: Tsukuba, Japan, 2014.

45. Milano, F. Semi-implicit formulation of differential-algebraic equations for transient stability analysis. IEEE Trans. Power Syst. 2016, 31, 4534–4543.

46. Gantmacher, R. The Theory of Matrices I, II; Chelsea: New York, NY, USA, 1959.

47. Dassios, I.; Tzounas, G.; Milano, F. The Möbius transform effect in singular systems of differential equations. Appl. Math. Comput. 2019, 361, 338–353.

48. Uchida, N.; Nagao, T. A new eigen-analysis method of steady-state stability studies for large power systems: S matrix method. IEEE Trans. Power Syst. 1988, 3, 706–714.

49. Lima, L.T.G.; Bezerra, L.H.; Tomei, C.; Martins, N. New methods for fast small-signal stability assessment of large scale power systems. IEEE Trans. Power Syst. 1995, 10, 1979–1985.

50. Parlett, B.N.; Poole, W.G. A geometric theory for QR, LU and power iteration. SIAM J. Numer. Anal. 1973, 10, 389–412.

51. Householder, A.S. Unitary triangularization of a nonsymmetric Matrix. J. ACM 1958, 5, 339–342.

52. Golub, G.H.; Van Loan, C.F. Matrix Computations, Fourth Edition; The Johns Hopkins University Press: Baltimore, MD, USA, 2013.

53. Wu, K.; Simon, H. Thick-Restart Lanczos Method for Large Symmetric Eigenvalue Problems. SIAM J. Matrix Anal. Appl. 2000, 22, 602–616.

54. Asakura, J.; Sakurai, T.; Tadano, H.; Ikegami, T.; Kimura, K. A numerical method for nonlinear eigenvalue problems using contour integrals. JSIAM Lett. 2009, 1, 52–55.

55. Tang, P.T.P.; Polizzi, E. FEAST As A Subspace Iteration Eigensolver Accelerated By Approximate Spectral Projection. SIAM J. Matrix Anal. Appl. 2014, 35, 354–390.

56. Lawson, C.L.; Hanson, R.J.; Kincaid, D.R.; Krogh, F.T. Basic Linear Algebra Subprograms for FORTRAN Usage; Technical Report; University of Texas at Austin: Austin, TX, USA, 1977.

57. Clint Whaley, R.; Petitet, A.; Dongarra, J.J. Automated empirical optimizations of software and the ATLAS project. Parallel Comput. 2001, 27, 3–35.

58. Blackford, L.S.; Choi, J.; Cleary, A.; D’Azevedo, E.; Demmel, J.; Dhillon, I.; Dongarra, J.; Hammarling, S.; Henry, G.; Petitet, A.; et al. ScaLAPACK Users’ Guide; SIAM: Philadelphia, PA, USA, 1997.

59. Garbow, B.S. EISPACK: A package of matrix eigensystem routines. Comput. Phys. Commun. 1974, 7, 179–184.

60. Anderson, E.; Bai, Z.; Bischof, C.; Blackford, L.S.; Demmel, J.; Dongarra, J.J.; Du Croz, J.; Hammarling, S.; Greenbaum, A.; McKenney, A.; et al. LAPACK Users’ Guide, 3rd ed.; SIAM: Philadelphia, PA, USA, 1999.

61. Davis, T.A. Direct Methods for Sparse Linear Systems; SIAM: Philadelphia, PA, USA, 2006.

62. Snir, M.; Otto, S.; Huss-Lederman, S.; Walker, D.; Dongarra, J. MPI: The Complete Reference, Volume 1: The MPI Core; MIT Press: Cambridge, MA, USA, 1998.

63. Amestoy, P.; Duff, I.S.; Koster, J.; L’Excellent, J.Y. A fully asynchronous multifrontal solver using distributed dynamic scheduling. SIAM J. Matrix Anal. Appl. 2001, 23, 15–41.

64. Argonne National Laboratory. PETSc Users Manual; Argonne National Laboratory: Lemont, IL, USA, 2020.

65. Schenk, O.; Gartner, K. Solving Unsymmetric Sparse Systems of Linear Equations with PARDISO. J. Future Gener. Comput. Syst. 2004, 20, 475–487.

66. Pérez-Arriaga, I.J.; Verghese, G.C.; Schweppe, F.C. Selective Modal Analysis with Applications to Electric Power Systems, PART I: Heuristic Introduction. IEEE Trans. Power Appar. Syst. 1982, PAS-101, 3117–3125.

67. Tzounas, G.; Dassios, I.; Milano, F. Modal Participation Factors of Algebraic Variables. IEEE Trans. Power Syst. 2020, 35, 742–750.

68. Dassios, I.; Tzounas, G.; Milano, F. Participation Factors for Singular Systems of Differential Equations. Circuits Syst. Signal Process. 2020, 39, 83–110.

69. Semlyen, A.; Wang, L. Sequential computation of the complete eigensystem for the study zone in small signal stability analysis of large power systems. IEEE Trans. Power Syst. 1988, 3, 715–725.

70. Milano, F. A Python-based software tool for power system analysis. In Proceedings of the 2013 IEEE Power & Energy Society General Meeting, Vancouver, BC, Canada, 21–25 July 2013.

71. Murad, M.A.A.; Tzounas, G.; Liu, M.; Milano, F. Frequency control through voltage regulation of power system using SVC devices. In Proceedings of the 2019 IEEE Power & Energy Society General Meeting (PESGM), Atlanta, GA, USA, 4–8 August 2019.

72. Byerly, R.T.; Bennon, R.J.; Sherman, D.E. Eigenvalue Analysis of Synchronizing Power Flow Oscillations in Large Electric Power Systems. IEEE Trans. Power Appar. Syst. 1982, PAS-101, 235–243.

| Name | z | Pencil | s |

|---|---|---|---|

| Prime system | s | z | |

| Invert | |||

| Shift & invert | |||

| Cayley | |||

| Gen. Cayley | |||

| Möbius |

| M-System | a | b | c | d |

|---|---|---|---|---|

| Prime | 0 | 0 | ||

| Dual | 0 | 1 | 1 | 0 |

| Shift & invert | 1 | 1 | 0 | |

| Cayley | 1 | 1 | ||

| Gen. Cayley | 1 | 1 |

| Library | Method |
|---|---|
| LAPACK | QR, QZ |
| ARPACK | IR-Arnoldi |
| SLEPc | Power/Inverse Power/Rayleigh Quotient Iteration, Subspace, ERD-Arnoldi, Krylov-Schur, GD, JD, CI-Hankel, CI-RR |
| Anasazi | Block Krylov-Schur, GD |
| FEAST | FEAST |
| z-PARES | CI-Hankel, CI-RR |

| Library | Data Format: Dense | Data Format: CSR | Data Format: Band | Data Format: RCI | Computing: GPU | Computing: Parallel | 2-Sided | Real/Complex | First Release | Latest Release |
|---|---|---|---|---|---|---|---|---|---|---|
| LAPACK | ✓ | ✗ | ✗ | ✗ | ✓ | ✓ | ✓ | ✓ | 1992 | 2016 |
| ARPACK | ✗ | ✗ | ✗ | ✓ | ✗ | ✓ | ✗ | ✓ | 1995 | 2019 |
| SLEPc | ✓ | ✓ | ✗ | ✓ | ✓ | ✓ | ✓ | ✓ | 2002 | 2020 |
| Anasazi | ✓ | ✓ | ✗ | ✗ | ✗ | ✗ | ✗ | ✓ | 2008 | 2014 |
| FEAST | ✓ | ✓ | ✓ | ✓ | ✗ | ✓ | ✓ | ✓ | 2009 | 2020 |
| z-PARES | ✓ | ✓ | ✗ | ✓ | ✗ | ✓ | ✗ | ✓ | 2014 | 2014 |

| Library (Version) | Dependencies (Version) |

|---|---|

| LAPACK (3.8.0) | ATLAS (3.10.3) |

| MAGMA (2.2.0) | NVidia CUDA (10.1) |

| ARPACK-NG (3.5.0) | SuiteSparse KLU (1.3.9) |

| z-PARES (0.9.6a) | OpenMPI (3.0.0), MUMPS (5.1.2) |

| SLEPc (3.8.2) | PETSc (3.8.4), MUMPS (5.1.2) |

| Problem | Pencil | Size |

|---|---|---|

| LEP | ||

| GEP |

| Library | LAPACK | MAGMA | LAPACK |

|---|---|---|---|

| Problem | LEP | LEP | GEP |

| Method | QR | QR | QZ |

| Spectrum | All | All | All |

| Time [s] | |||

| Found | 1443 eigs. | 1443 eigs. | 8640 eigs. |

| LRP eigs. | |||

| Library | SLEPc |

|---|---|

| Method | Subspace |

| Spectrum | 50 LM |

| Transform | Invert |

| Time [s] | |

| Found | 50 |

| LRP eigs. | |

| Library | ARPACK | SLEPc | SLEPc |

|---|---|---|---|

| Method | IR-Arnoldi | ERD-Arnoldi | |

| Spectrum | 50 LRP | 50 TRP | 50 TRP |

| Transform | - | Shift & invert | Shift & invert |

| Time [s] | |||

| Found | 26 eigs. | 54 eigs. | 55 eigs. |

| LRP eigs. | |||

| Library | SLEPc | |

|---|---|---|

| Method | ERD-Arnoldi | Krylov-Schur |

| Spectrum | 50 TRP | 50 TRP |

| Transform | Shift & invert | Shift & invert |

| Time [s] | ||

| Found | 51 eigs. | 53 eigs. |

| LRP eigs. | ||

| Library | z-PARES | |

|---|---|---|

| Method | CI-RR | |

| Spectrum | ||

| Problem | LEP | GEP |

| Time [s] | ||

| Found | 49 eigs. | 52 eigs. |

| LRP eigs. | ||

| n | 49,396 |

| m | 96,770 |

| Dimensions of | 146,166 × 146,166 |

| Sparsity degree of [%] |

| Library | z-PARES | ||

|---|---|---|---|

| Problem | GEP | ||

| Method | CI-RR | ||

| c | |||

| 8 | 4 | 2 | |

| Time [s] | |||

| Found | 349 eigs. | 350 eigs. | 110 eigs. |

| Library | z-PARES | ||

|---|---|---|---|

| Problem | GEP | ||

| Method | CI-RR | ||

| Spectrum | |||

| Transform | - | Invert | Inverted Cayley |

| Time [s] | |||

| Found | 349 eigs. | 297 eigs. | 349 eigs. |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Tzounas, G.; Dassios, I.; Liu, M.; Milano, F. Comparison of Numerical Methods and Open-Source Libraries for Eigenvalue Analysis of Large-Scale Power Systems. Appl. Sci. 2020, 10, 7592. https://doi.org/10.3390/app10217592