Author Contributions

Conceptualization, A.C. and P.P.; Data curation, A.C.; Formal analysis, C.A.; Investigation, A.C. and P.P.; Methodology, P.P.; Project administration, C.A.; Resources, A.C.; Software, A.C.; Supervision, P.P. and C.A.; Validation, A.C. and P.P.; Writing—original draft, A.C. and P.P.; Writing—review and editing, C.A. All authors have read and agreed to the published version of the manuscript.

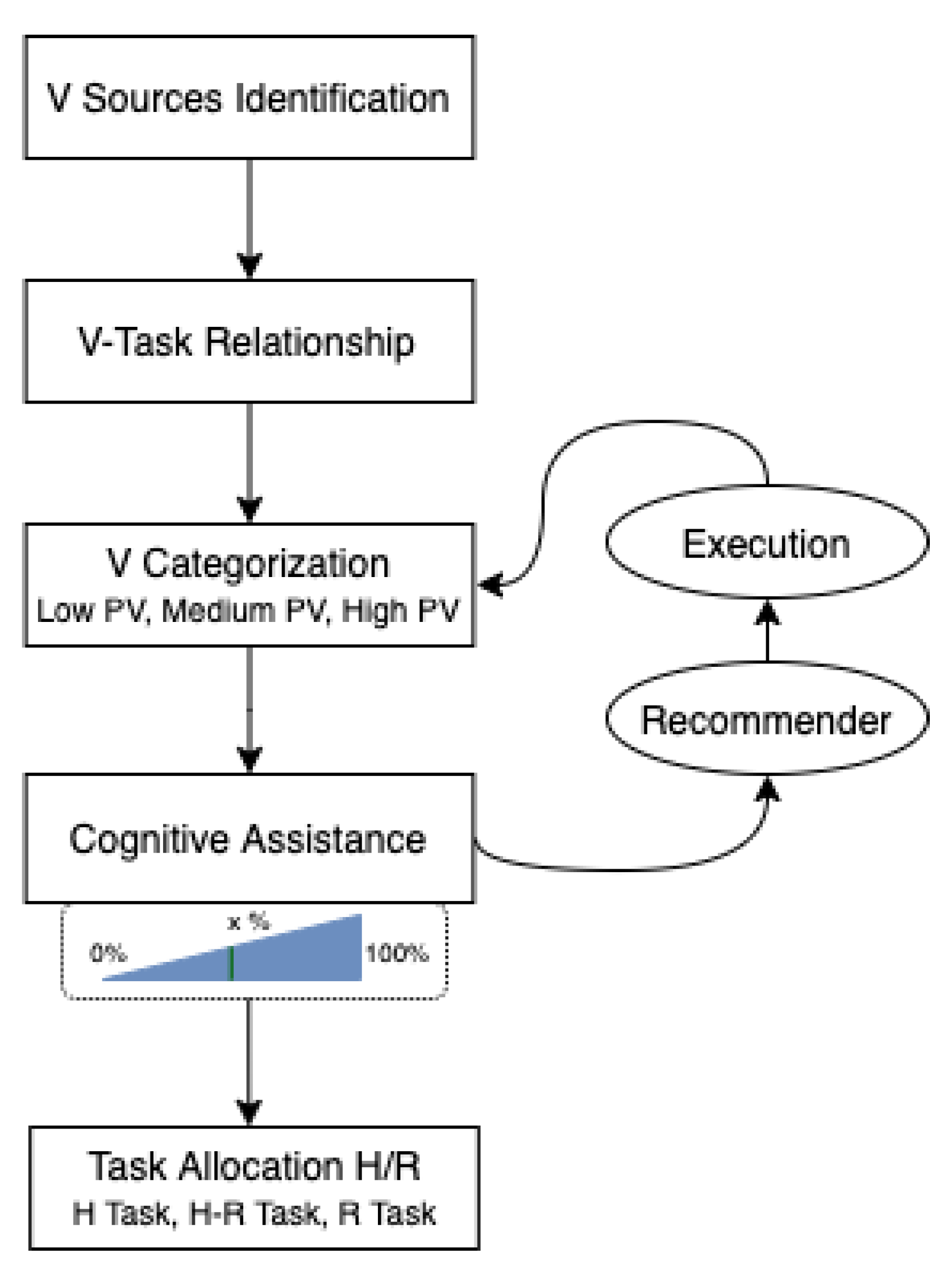

Figure 1.

Analysing process variability in human-robot collaborative tasks and task allocation.

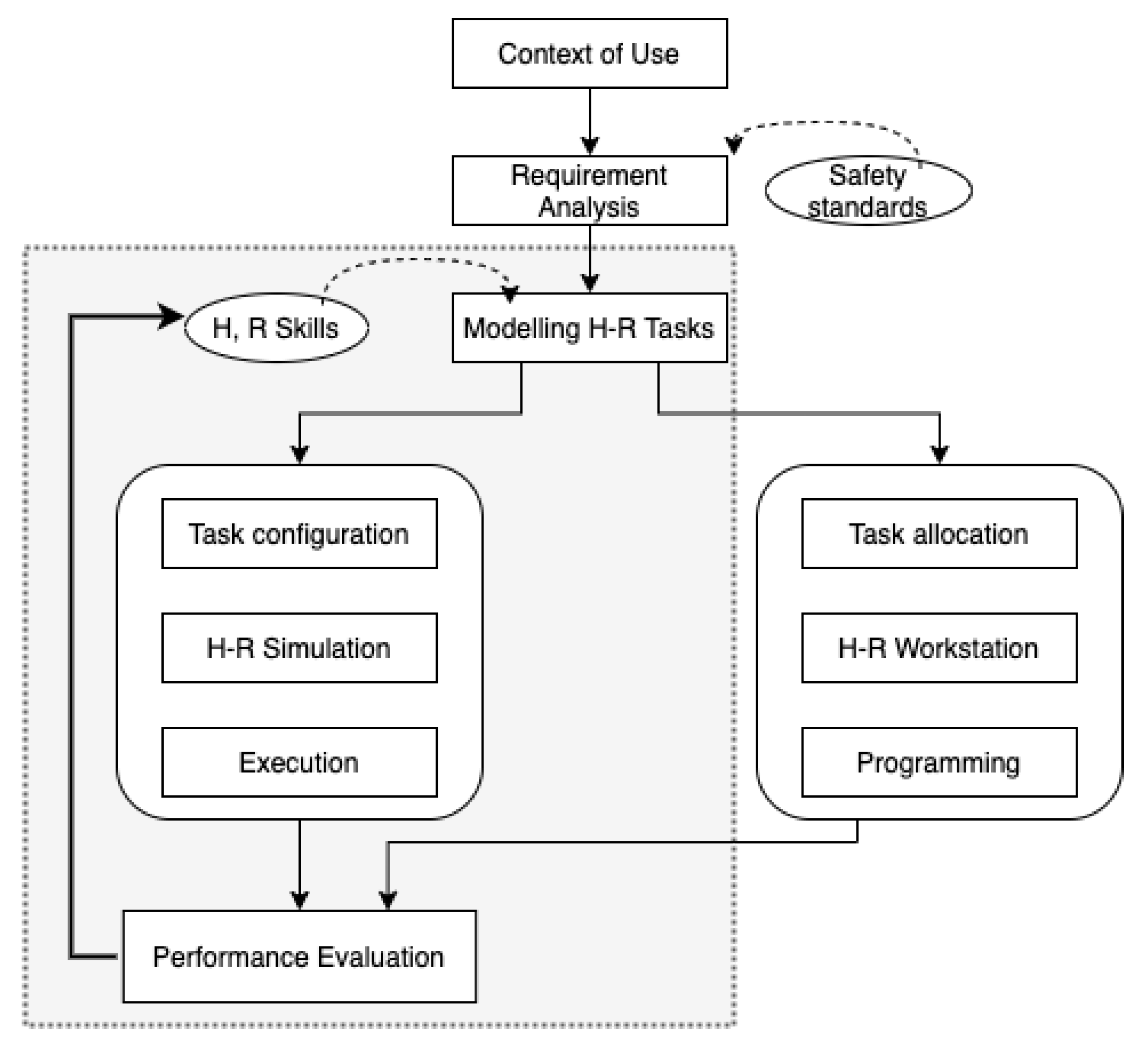

Figure 2.

Interactive model for human-robot tasks. The shaded zone is the focus of our approach.

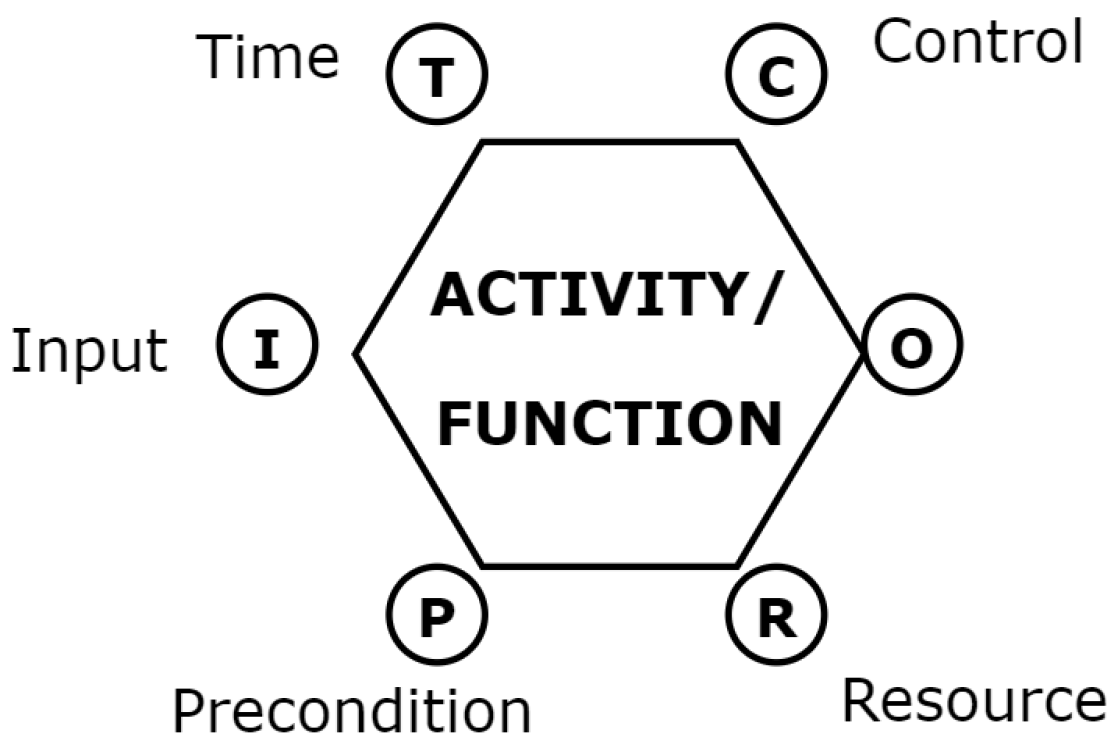

Figure 3.

FRAM activity/function representation [45], used to graphically represent instances in a FRAM study.

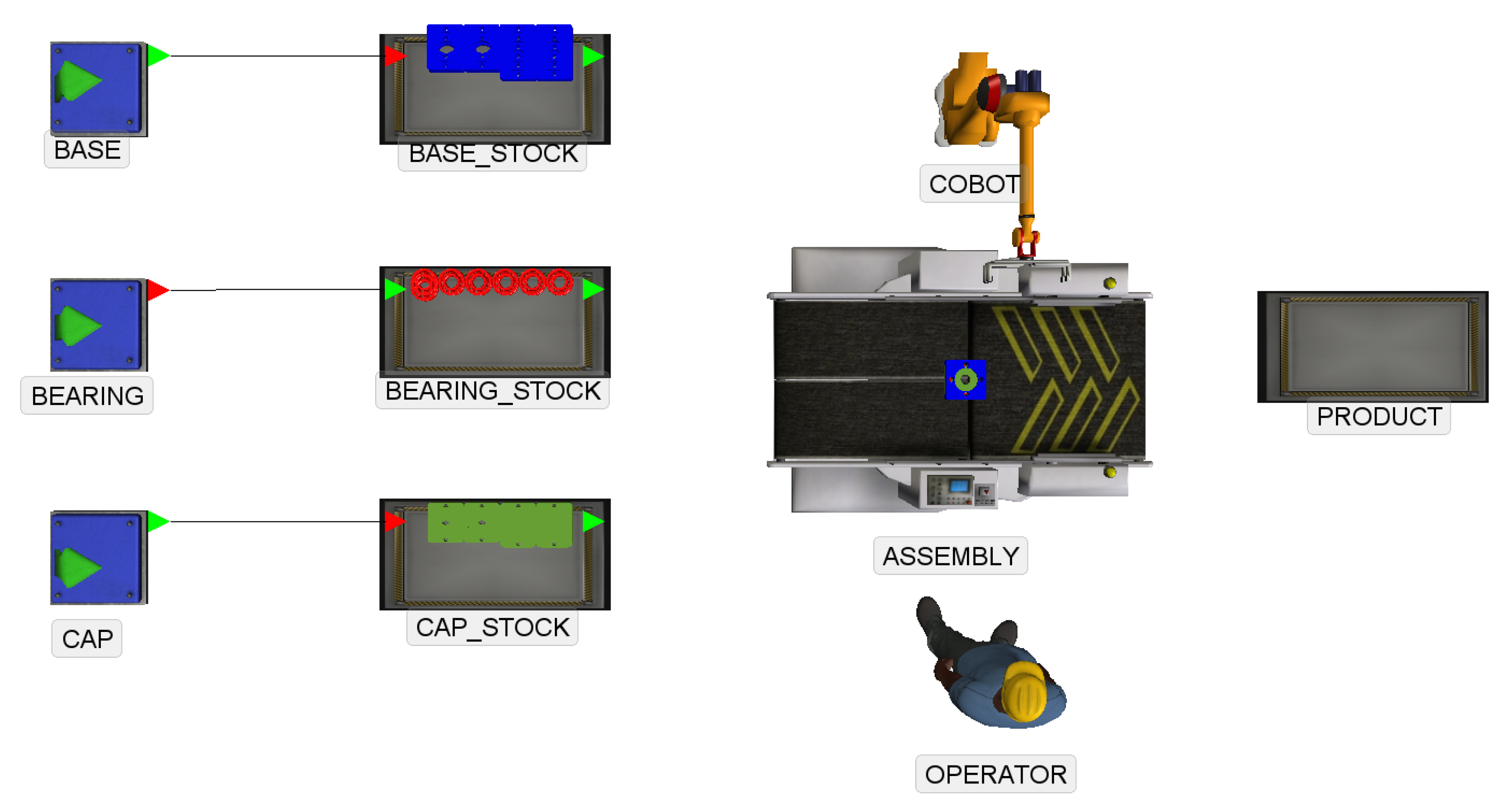

Figure 4.

Simulated layout of an assembly task.

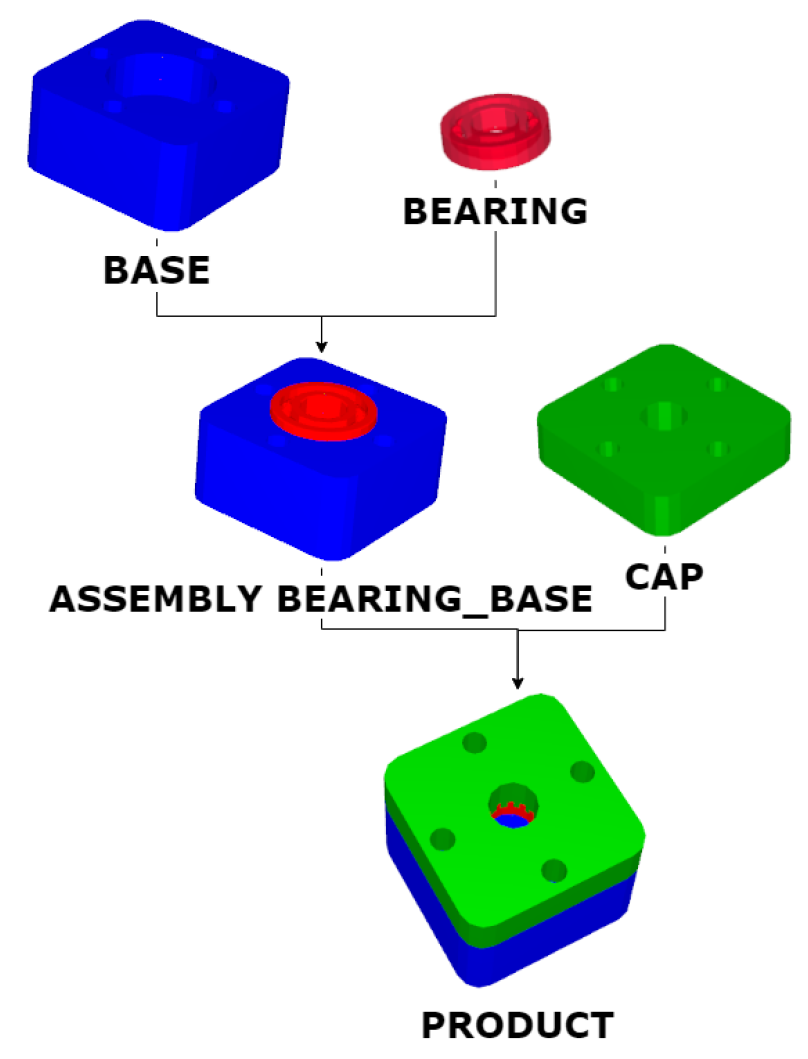

Figure 5.

Product and assembly steps.

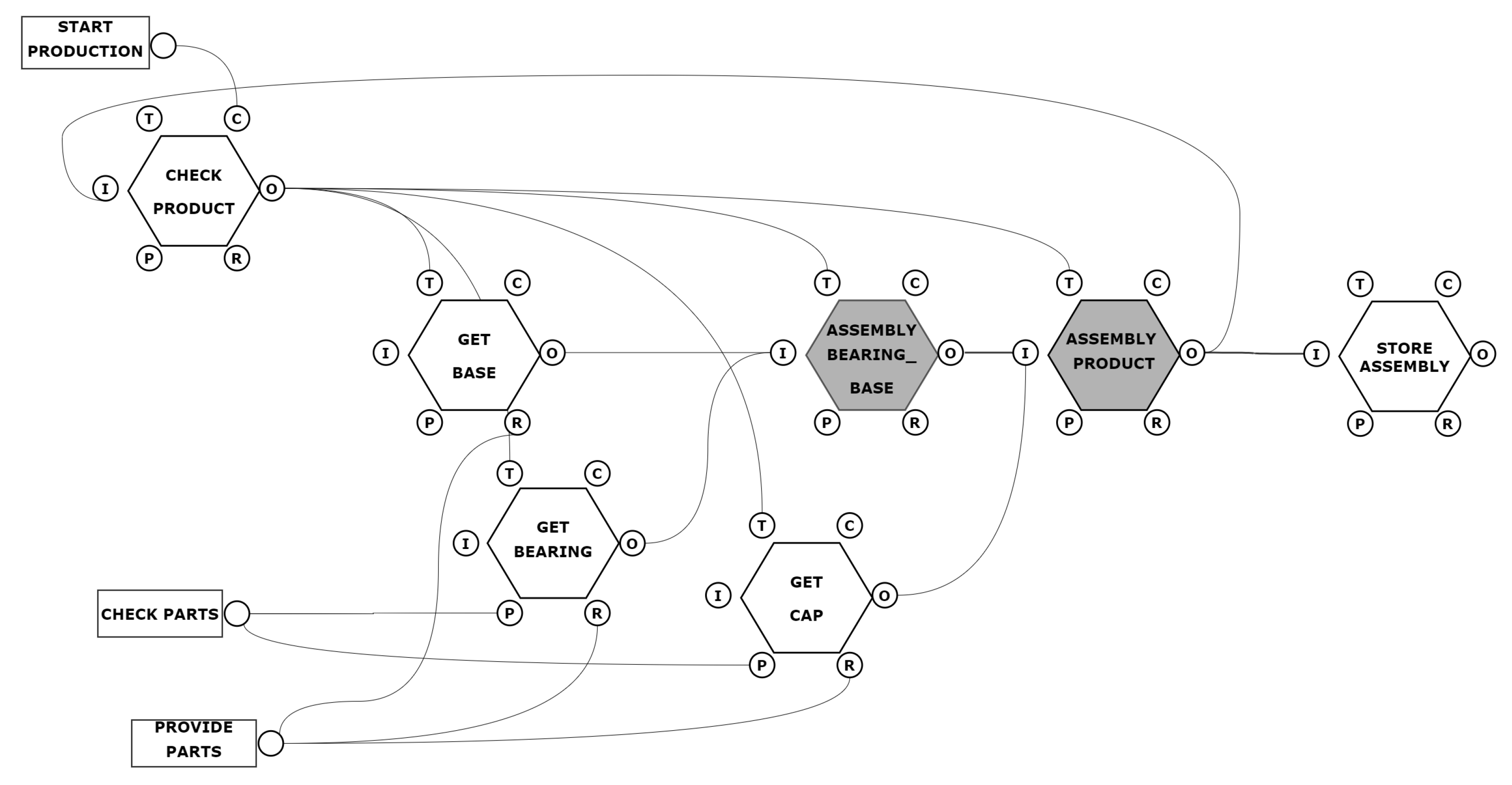

Figure 6.

The assembly task as an instance of the FRAM model.

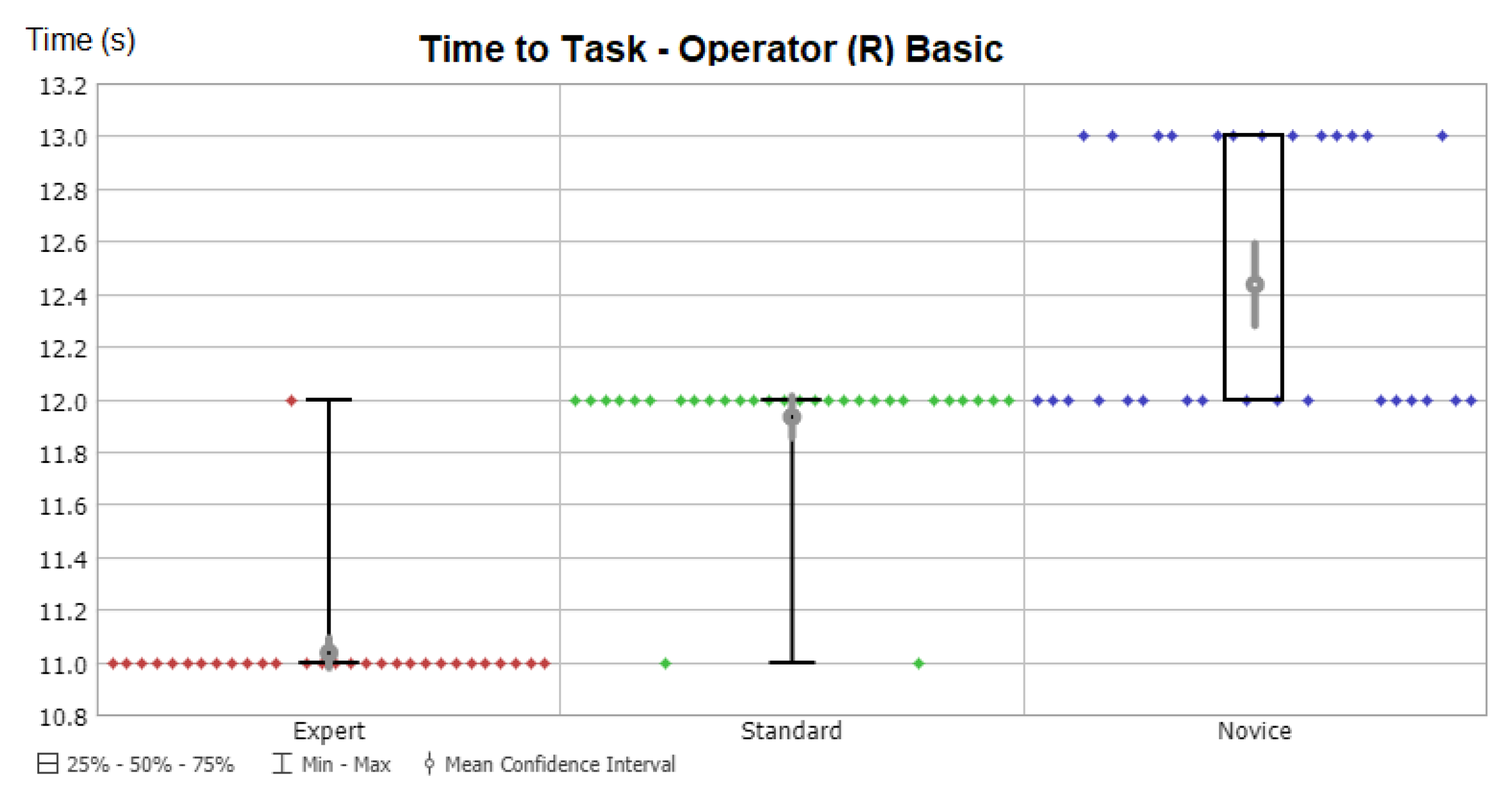

Figure 7.

Time variation with Operator Robotic Basic in Scenario 3.

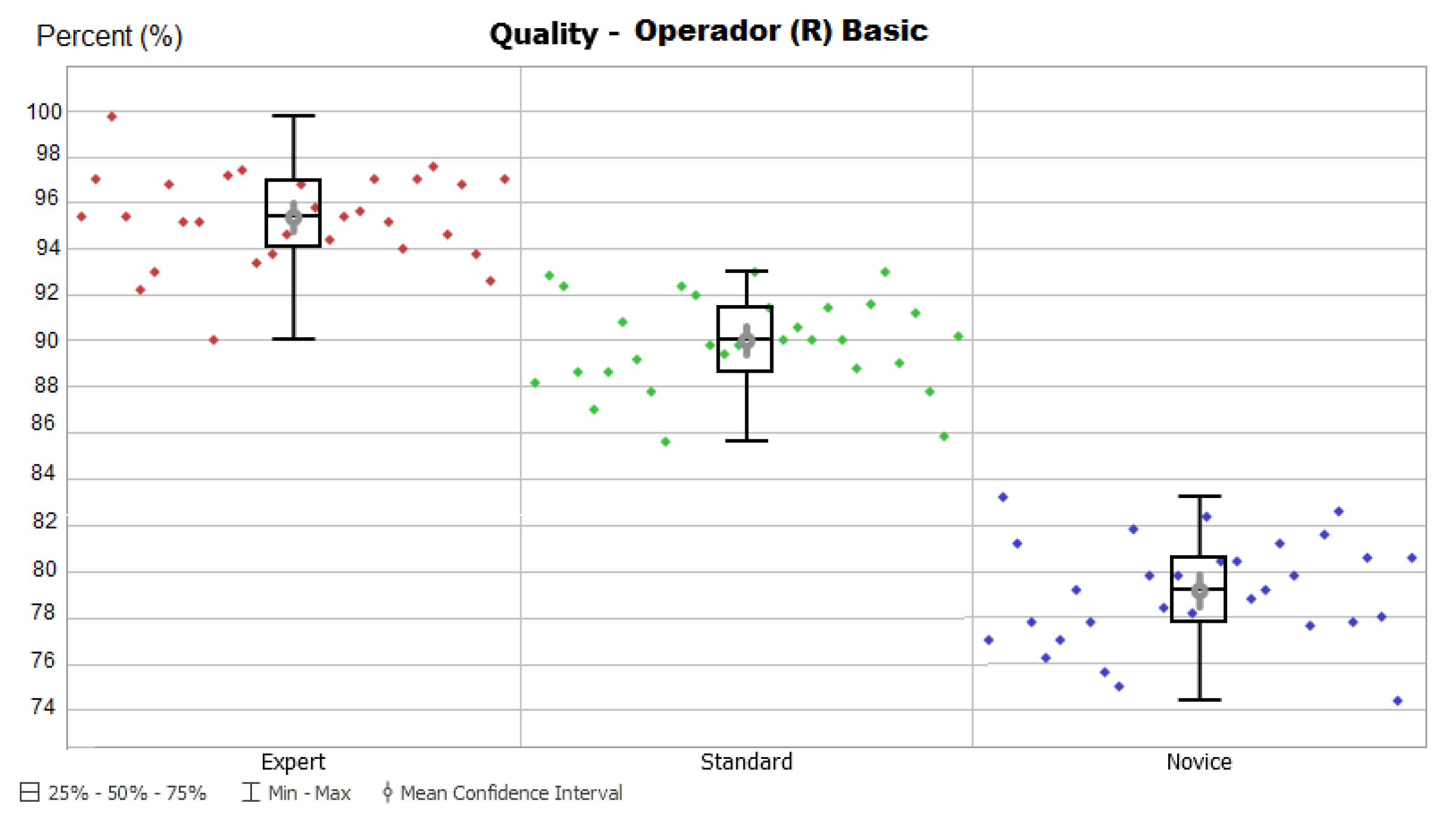

Figure 8.

Quality variation with Operator Human Standard in Scenario 3.

Table 1.

A comparison among several Human-Robot Interaction metrics. Columns show the robotics approach, the interaction level, the employed metrics and some particular features. H, R notation means human or robot (traded), while H-R means human and robot (shared).

| Approach | Category | Metrics | Features |
|---|---|---|---|
| Research [15] | Cognitive | H,R task effectiveness, Interaction effort, Situational awareness | Review and classification of common metrics |
| Research [17] | Physical and Cognitive | Human-robot teaming performance | Developing a metrology suite |
| Research [18] | Physical | Subjective metrics in a 7-point Likert scale survey (seven heuristics) | Physical human interaction is modelled as informative and not as a disturbance |
| Research [19] | Cognitive | Physiological, Task analysis | Model and comparison of H-H team, H-R team |
| Research [20] | Cognitive | Fluency | H-R teams research |
| Social [21] | Cognitive | System Usability Scale (SUS) questionnaire | Early detection of neurological impairments such as dementia |
| Social [22] | Cognitive | Task effectiveness | H-R model with perception, knowledge, plan and action |
| Industry [23] | Physical | Human localization, Latency, Performance | Prediction of future locations of H,R for safety |
| Industry [24] | Physical | Completion task time | Robotic assembly systems |
| Industry [25] | Physical | H-R distance, speed, performance; Time collision | Algorithm case studies, Standards ISO 13855, ISO/TS 15066 |
| Industry [26] | Physical | H-R risk, Degree of collaboration, Task analysis | Assembly line with H-R shared tasks |
| Industry [27] | Physical and Cognitive | Level of Collaboration; H,R fully controlled, cooperation | Holistic perspective in human-robot cooperation |

Table 2.

Task effectiveness and task efficiency metrics for measuring performance of human-robot interaction.

| Metric | Detail |
|---|---|
| Task effectiveness | |
| TSR | (H, R) Task Success Rate with respect to the total number of tasks in the activity |
| F | Frequency with which the human requests assistance to complete their tasks |
| Task efficiency | |
| CAT | Concurrent Activity Time (H-R): percentage of time that the two agents are active in the same time interval |
| TT | Time to complete a task (H, R) |
| IT | Idle Time: percentage of time the agent (H, R) is idle |
| FD | Functional Delay: percentage of time between tasks when changing the agent (H, R) |
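As an illustration of how some of the metrics in Table 2 could be computed from a logged timeline, the following minimal sketch derives TSR, IT and CAT from activity intervals. The interval data, the function names, and the choice of the total activity duration as the denominator for the percentages are assumptions made for this example; they are not taken from the study.

```python
from typing import List, Tuple

Interval = Tuple[float, float]  # (start, end) of an active period, in seconds


def busy_time(intervals: List[Interval]) -> float:
    """Total active time; assumes one agent's intervals do not overlap each other."""
    return sum(end - start for start, end in intervals)


def overlap_time(a: List[Interval], b: List[Interval]) -> float:
    """Total time during which both agents are active simultaneously."""
    total = 0.0
    for s1, e1 in a:
        for s2, e2 in b:
            total += max(0.0, min(e1, e2) - max(s1, s2))
    return total


def task_success_rate(successes: int, total_tasks: int) -> float:
    """TSR: successful tasks as a percentage of all tasks in the activity."""
    return 100.0 * successes / total_tasks


def idle_time_pct(intervals: List[Interval], horizon: float) -> float:
    """IT: percentage of the activity duration in which the agent is idle."""
    return 100.0 * (horizon - busy_time(intervals)) / horizon


def concurrent_activity_pct(a: List[Interval], b: List[Interval], horizon: float) -> float:
    """CAT: percentage of the activity duration in which both agents are active."""
    return 100.0 * overlap_time(a, b) / horizon


# Hypothetical timeline for one 10 s activity (illustrative values only).
human = [(0.0, 4.0), (6.0, 8.0)]
robot = [(3.0, 7.0)]
horizon = 10.0

print(f"TSR = {task_success_rate(9, 10):.1f}%")
print(f"IT (H) = {idle_time_pct(human, horizon):.1f}%")
print(f"IT (R) = {idle_time_pct(robot, horizon):.1f}%")
print(f"CAT = {concurrent_activity_pct(human, robot, horizon):.1f}%")
```

FD could be obtained in the same way by summing the gaps between the end of one agent's task and the start of the other agent's next task.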

Table 3.

Aspects related to an <ACTIVITY>.

| Aspects | Description |
|---|---|
| Input (I) | It activates the activity and/or is used or transformed to produce the Output. Constitutes the link to upstream activities. |
| Output (O) | It is the result of the activity. Constitutes the link to downstream activities. |
| Precondition (P) | Conditions to be fulfilled before the activity can be performed. |
| Resource (R) | Components needed or consumed by the activity when it is active. |
| Control (C) | Supervises or regulates the activity such that it derives the desired Output. |
| Time (T) | Temporal aspects that affect how the activity is carried out. |

Table 4.

Function <ASSEMBLY PRODUCT>.

| Name of Function | <ASSEMBLY PRODUCT> |
|---|---|
| Description | Assemble the PRODUCT: put the CAP in the ASSEMBLY BEARING_BASE; end of process |
| Function Type | Not initially described |
| Aspect | Description of Aspect |
| Input | (BASE_BEARING) in position |
| Input | (CAP) in position |
| Output | PRODUCT |
| Time | Assembly Process |
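The six aspects of Table 3 and the function description of Table 4 map naturally onto a small data structure. The sketch below is one possible encoding, not the representation used by any FRAM tool; the class name `FramFunction`, its field names, and the aspects given to `<GET CAP>` are hypothetical and introduced only to show how an Output couples to a downstream Input.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class FramFunction:
    """One FRAM function (activity) described by its six aspects."""
    name: str
    description: str = ""
    function_type: str = "Not initially described"
    inputs: List[str] = field(default_factory=list)
    outputs: List[str] = field(default_factory=list)
    preconditions: List[str] = field(default_factory=list)
    resources: List[str] = field(default_factory=list)
    controls: List[str] = field(default_factory=list)
    times: List[str] = field(default_factory=list)

    def downstream_of(self, other: "FramFunction") -> bool:
        """True if at least one Output of `other` is an Input of this function."""
        return any(out in self.inputs for out in other.outputs)


# Aspects as listed in Table 4.
assembly_product = FramFunction(
    name="<ASSEMBLY PRODUCT>",
    inputs=["(BASE_BEARING) in position", "(CAP) in position"],
    outputs=["PRODUCT"],
    times=["Assembly Process"],
)

# Hypothetical aspects for <GET CAP>, assumed here only to illustrate coupling.
get_cap = FramFunction(name="<GET CAP>", outputs=["(CAP) in position"])

print(assembly_product.downstream_of(get_cap))  # coupling via "(CAP) in position"
```

Changing `function_type` between 'Human' and 'Technological' for each instance is then enough to express the task allocation of a scenario.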

Table 5.

Function <OPERATORS>.

| Name of Function | <OPERATORS> |
|---|---|
| Description | Oversight resource operator |
| Function Type | Human |
| Aspect | Description of Aspect |
| Input | Assembly Process |
| Output | OPERATOR |
| Control | Work Permit |

Table 6.

Function Type defined as ‘Human’ for all the activities in the manual assembly task.

| Function | Function Type |
|---|---|
| <ASSEMBLY PRODUCT> | Human |
| <ASSEMBLY BEARING_BASE> | Human |
| <GET BASE> | Human |
| <GET BEARING> | Human |
| <GET CAP> | Human |
| <OPERATORS> | Human |
| <CHECK PRODUCT> | Human |

Table 7.

Function <OPERATORS>.

| Name of Function | <OPERATORS> |
|---|---|
| Description | Oversight resource operator |
| Function Type | Human |
| Aspect | Description of Aspect |
| Input | Assembly Process |
| Output | ROBOT |
| Control | Work Permit |

Table 8.

Function Type defined as ‘Technological’ for all the activities in Scenario 2.

| Function | Function Type |
|---|---|
| <ASSEMBLY PRODUCT> | Technological |
| <ASSEMBLY BEARING_BASE> | Technological |
| <GET BASE> | Technological |
| <GET BEARING> | Technological |
| <GET CAP> | Technological |
| <OPERATORS> | Human |
| <CHECK PRODUCT> | Technological |

Table 9.

Function Type defined as ‘Human’ or ‘Technological’ depending on the activities to be performed in Scenario 3.

| Function | Function Type |
|---|---|
| <ASSEMBLY PRODUCT> | Technological |
| <ASSEMBLY BEARING_BASE> | Human |
| <GET BASE> | Human |
| <GET BEARING> | Human |
| <GET CAP> | Technological |
| <OPERATORS> | Human |
| <CHECK PRODUCT> | Technological |

Table 10.

KPI quantitative variables definition.

| Characteristic | KPI | Description |
|---|---|---|
| Time | Time to Task | Time to complete a product (total assembly) |
| Quality | High Quality Product Percentage | Ratio of high quality products to total production expressed as a percentage |

Table 11.

Potential output variability for Time in Scenario 1.

| Function | Type Operator | Output |
|---|---|---|
| <ASSEMBLY PRODUCT> | Expert | Too early: Time to Task decreases and stays regular over time |
| | Standard | On time: Time to Task is according to design |
| | Novice | Too late: Time to Task increases with irregular variations |

Table 12.

Potential output variability for Quality in Scenario 1.

| Function | Type Operator | Output |
|---|---|---|
| <ASSEMBLY PRODUCT> | Expert | High Quality Product Percentage value is high |
| | Standard | High Quality Product Percentage value is typical |
| | Novice | High Quality Product Percentage value is low |

Table 13.

Potential output variability for Time in Scenario 2.

| Function | Type Operator | Output |
|---|---|---|
| <ASSEMBLY PRODUCT> | Optimised | Too early: Time to Task decreases |
| | Basic | On time: Time to Task is the same all the time |
| | | Too late: Not possible, except in case of complete breakdown |

Table 14.

Potential output variability for Quality in Scenario 2.

| Function | Type Operator | Output |
|---|---|---|
| <ASSEMBLY PRODUCT> | Optimised | High Quality Product Percentage value is high |
| | Basic | High Quality Product Percentage value is typical |

Table 15.

Potential output variability for Time in Scenario 3.

| Function | Type Operator | Output |
|---|---|---|
| <ASSEMBLY BEARING_BASE> | Expert | Too early: Time to Task decreases and stays regular over time |
| | Standard | On time: Time to Task is according to design |
| | Novice | Too late: Time to Task increases with irregular variations |
| <ASSEMBLY PRODUCT> | Optimized | Too early: Time to Task decreases |
| | Basic | On time: Time to Task is the same all the time |

Table 16.

Potential Output variability for Quality in Scenario 3.

| Function | Type Operator | Output |
|---|---|---|
| <ASSEMBLY BEARING_BASE> | Expert | High Quality Product Percentage value is high |
| | Standard | High Quality Product Percentage value is typical |
| | Novice | High Quality Product Percentage value is low |
| <ASSEMBLY PRODUCT> | Optimised | High Quality Product Percentage value is high |
| | Basic | High Quality Product Percentage value is typical |

Table 17.

Time to Task values obtained from the RoboDK virtual model.

| Function | Time |
|---|---|
| <ASSEMBLY PRODUCT> | 3.3 s |
| <ASSEMBLY BEARING_BASE> | 1.0 s |
| <GET BASE> | 1.9 s |
| <GET BEARING> | 2.0 s |
| <GET CAP> | 2.3 s |
| Time to Task | 10.6 s |

Table 18.

Values used in distribution functions for Time, the same for all the functions.

| Function | Type Operator | Value |
|---|---|---|
| Log-Normal() | Expert | s, s |
| | Standard | s, s |
| | Novice | s, s |
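To make the use of a log-normal distribution for Time concrete, the following minimal sketch draws activity times with Python's standard `random.lognormvariate`. It assumes the distribution is specified by the mean and standard deviation of the time itself (in seconds) and converts them to the parameters of the underlying normal distribution; that parameterisation, the helper names, and the numeric values (2.0 s, 0.2 s) are hypothetical placeholders and not the values used in the study.

```python
import math
import random
from typing import List, Tuple


def lognormal_params(mean: float, std: float) -> Tuple[float, float]:
    """Convert the mean/std of a log-normal variable to (mu, sigma) of log(X)."""
    sigma2 = math.log(1.0 + (std / mean) ** 2)
    mu = math.log(mean) - sigma2 / 2.0
    return mu, math.sqrt(sigma2)


def sample_times(mean: float, std: float, n: int, seed: int = 0) -> List[float]:
    """Draw n activity times (s) from a log-normal with the given mean and std."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    mu, sigma = lognormal_params(mean, std)
    return [rng.lognormvariate(mu, sigma) for _ in range(n)]


# Hypothetical operator profile: mean 2.0 s, standard deviation 0.2 s.
times = sample_times(mean=2.0, std=0.2, n=10_000)
sample_mean = sum(times) / len(times)
print(f"sample mean = {sample_mean:.2f} s, min = {min(times):.2f} s")
```

A log-normal draw is always positive, which suits task durations; a wider standard deviation for a less experienced operator produces the irregular, right-skewed delays described for the Novice profile.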

Table 19.

Values used in distribution functions for Quality, the same for all the functions.

| Function | Type Operator | Output |
|---|---|---|
| Bernoulli() | Expert | 98% High Quality products |
| | Standard | 90% High Quality products |
| | Novice | 80% High Quality products |
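The Bernoulli model of Table 19 can be illustrated with a short simulation: each product is independently of high quality with the probability listed for the operator type. The sketch below is only an illustration under that assumption; the function name, the number of simulated products, and the seed are choices made for the example.

```python
import random

# Success probabilities taken from Table 19.
HIGH_QUALITY_PROB = {"Expert": 0.98, "Standard": 0.90, "Novice": 0.80}


def simulate_quality(operator: str, n_products: int, seed: int = 0) -> float:
    """Return the simulated High Quality Product Percentage for one operator type."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    p = HIGH_QUALITY_PROB[operator]
    good = sum(1 for _ in range(n_products) if rng.random() < p)
    return 100.0 * good / n_products


results = {op: simulate_quality(op, 10_000) for op in HIGH_QUALITY_PROB}
for operator, pct in results.items():
    print(f"{operator}: {pct:.1f}% high quality products")
```

With a large number of products the simulated percentage approaches the nominal probability; with short production runs it fluctuates around it, which is the source of the Quality variability.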

Table 20.

Scenario 1: Results for Time (, ) and Quality (, ).

| Type Operator | | | | |
|---|---|---|---|---|
| Expert | 11.87 | 0.43 | 98% | 3.01 |
| Standard | 13.77 | 0.57 | 90% | 3.79 |
| Novice | 15.20 | 0.81 | 80% | 4.03 |

Table 21.

Scenario 2: Results for Time (, ) and Quality (, ).

| Type Operator | | | | |
|---|---|---|---|---|
| Optimized | 10.0 | 0.0 | 79% | 3.14 |
| Basic | 11.0 | 0.0 | 79% | 3.50 |

Table 22.

Scenario 3: Results for Time (, ) and Quality (, ).

| Operator (R) | Operator (H) | | | | |
|---|---|---|---|---|---|
| Basic | Expert | 11.03 | 0.18 | 98% | 2.96 |
| | Standard | 11.93 | 0.25 | 89% | 2.99 |
| | Novice | 12.43 | 0.50 | 79% | 3.39 |
| Optimized | Expert | 10.93 | 0.25 | 98% | 3.07 |
| | Standard | 11.03 | 0.18 | 90% | 3.04 |
| | Novice | 11.80 | 0.41 | 79% | 3.43 |

Table 23.

Results for the overall activity (Manual Assembly). Values are the means over the individual activities of the task.

| Operator (H) | Mean (s) | Std. dev. (s) | CV (%) |
|---|---|---|---|
| Expert | 2.34 | 0.07 | 2.99 |
| Standard | 2.70 | 0.09 | 3.33 |
| Novice | 2.99 | 0.10 | 3.34 |

Table 24.

Results for the overall task (Manual Assembly). Values are the means for the whole task.

| Operator (H) | Mean (s) | Std. dev. (s) | CV (%) |
|---|---|---|---|
| Expert | 11.87 | 0.43 | 3.62 |
| Standard | 13.77 | 0.57 | 4.14 |
| Novice | 15.20 | 0.81 | 5.33 |
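The last column of Table 24 is consistent with a coefficient of variation computed as 100 times the second column divided by the first. The short check below assumes that reading (first column the mean Time to Task, second column its standard deviation) and recomputes the value for each operator type; the function name is introduced for the example.

```python
def coefficient_of_variation(mean: float, std: float) -> float:
    """Coefficient of variation in percent: 100 * std / mean."""
    return 100.0 * std / mean


# (mean, standard deviation) pairs as listed in Table 24.
table_24 = {
    "Expert": (11.87, 0.43),
    "Standard": (13.77, 0.57),
    "Novice": (15.20, 0.81),
}

for operator, (mean, std) in table_24.items():
    cv = coefficient_of_variation(mean, std)
    print(f"{operator}: CV = {cv:.2f}%")
```

Because the coefficient of variation is dimensionless, it allows the variability of Time and Quality to be compared on the same scale.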

Table 25.

Relation of Coefficient of Variation for Time and Quality.

| Scenario | Operator (H) | Operator (R) | | | | | | |
|---|---|---|---|---|---|---|---|---|
| 1 | Expert | | 2.99 | 3.62 | 121.12 | 4.3 | 3.07 | 71.43 |
| | Standard | | 3.33 | 4.14 | 124.18 | 4.3 | 3.79 | 97.93 |
| | Novice | | 3.34 | 5.33 | 159.36 | 4.3 | 5.04 | 117.15 |
| 2 | | Optimized | 0.0 | 0.0 | 0.0 | 4.3 | 3.97 | 92.43 |
| | | Basic | 0.0 | 0.0 | 0.0 | 4.3 | 4.43 | 103.03 |
| 3 | Expert | Optimized | 2.99 | 2.28 | 76.46 | 4.3 | 3.13 | 72.85 |
| | Standard | | 3.33 | 1.63 | 48.96 | 4.3 | 3.38 | 78.55 |
| | Novice | | 3.34 | 3.47 | 103.92 | 4.3 | 4.34 | 100.97 |
| 3 | Expert | Basic | 2.99 | 1.63 | 54.56 | 4.3 | 3.02 | 70.24 |
| | Standard | | 3.33 | 2.09 | 62.89 | 4.3 | 3.36 | 78.13 |
| | Novice | | 3.34 | 4.02 | 120.31 | 4.3 | 4.29 | 99.79 |