1. Introduction

Software-Defined Networking (SDN) has emerged as a promising approach for defining network architectures that are highly adaptable and robust, bringing the demands of the future Internet within reach. SDN was conceived at Stanford University as a clean-slate approach [1] to redesign the network architecture and to facilitate the evolution of the future Internet. In an SDN, the data and control planes are physically decoupled, which results in a network consisting of (1) a centralised controller that maintains a global view of the network state, and (2) simple forwarding elements whose packet forwarding behaviour is dictated by the controller. Due to its programmable interfaces, SDN offers new opportunities to implement new routing strategies, customised traffic engineering, dynamic allocation of network resources and many other programmable functionalities. In other words, SDN opens the door to network innovation. So far, leading companies such as Google, Microsoft and Huawei have employed SDNs in their data centres, and in the near future SDN is expected to play a part in 5G [2]. As with any innovation, SDN faces several challenges, such as those related to the management of network failures and the reconfiguration of the network by updating the network architecture [3]. Network elements, such as forwarding devices and links, are susceptible to failure. As a consequence, network services such as routing are disrupted. Recovery from failure can be achieved either proactively, which is also called protection, or reactively, which is also called restoration [4]. In protection, the alternative solution is pre-planned and reserved before a failure occurs. In restoration, by contrast, the solution is not pre-planned and needs to be calculated dynamically (on demand) when a failure occurs.

On the one hand, protection mechanisms are expensive [5], as they require two paths to be installed for each flow, and this could overwhelm critical network resources such as switch Ternary Content Addressable Memory (TCAM). Moreover, the backup path may not be available when it is needed, because it could fail earlier than the primary one. On the other hand, restoration mechanisms are time-consuming, as the network controller first needs to calculate an alternative path and then install the flow entries (i.e., forwarding rules) in the relevant switches. The time required to reconfigure the network therefore has two components: (1) the time to calculate a new path and (2) the time to update the switches on the new path. In this paper, we focus on restoration techniques. Our main aim is to accelerate network recovery from a single link failure in large-scale networks by shortening the network update process. Our SDN recovery methods exploit the inherent structured nature of networks to find a quick solution when a link failure is detected.
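The trade-off described above can be made concrete with a small worked example. The sketch below is illustrative only: it assumes one flow entry per switch on each installed path and assumes that switches are updated one after another. The function names, the two example paths and the timing values are all hypothetical and are not taken from the paper.

```python
def tcam_entries(paths):
    """Flow entries needed, assuming one entry per switch on each installed path."""
    return sum(len(path) for path in paths)


def restoration_delay(compute_s, per_switch_update_s, switches_to_update):
    """Two-component model: path computation plus sequential switch updates."""
    return compute_s + per_switch_update_s * switches_to_update


# Hypothetical primary and backup paths for a single flow.
primary = ["s1", "s2", "s3", "s6"]
backup = ["s1", "s4", "s5", "s6"]

# Protection pre-installs both paths; restoration installs only the primary.
protection_entries = tcam_entries([primary, backup])
restoration_entries = tcam_entries([primary])

# Hypothetical timings: 2 ms to compute a path, 1 ms per switch update.
delay = restoration_delay(0.002, 0.001, len(backup))

print(protection_entries, restoration_entries, delay)
```

Under these assumptions, protection doubles the table occupancy before any failure happens, while restoration pays its cost afterwards, and that cost grows with the number of switches that must be updated.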

This paper is organised as follows. In Section 2, various SDN fault management techniques are presented and discussed. In Section 3, we introduce our network model along with the proposed methods. We then illustrate the proposed framework to tackle link failures using the proposed methods in Section 4. Simulation and experimental results are presented in Section 5, and we conclude by outlining our future work. Finally, the summary of this paper is provided in Section 6.

2. Related Work

Link failures, which last for different time periods and have different causes, occur in everyday network operation [6]. For example, an evaluation of the susceptibility to link failure of business-critical processes in a data-centre that manages 75% of Europe’s flight bookings was undertaken in Reference [7]. A crucial finding was that susceptibility to link failure was intrinsically linked to the network topology. Although much research in the literature has been dedicated to considering this issue from different perspectives (analysis, characterization, evaluation and recovery), the new network architecture of SDN requires more investigation. We discuss some recent works which are related to our proposed SDN recovery approach.

In References [8,9], the authors discussed the possibility of achieving carrier-grade reliability, defined as the ability to recover from failures within 50 ms. These approaches relied on monitoring loss of signal to ascertain whether there were changes in the network topology, such as failures. When the controller received a notification about a link failure, it identified the affected paths, calculated alternative paths and, finally, sent the appropriate flow modification instructions to the switches. Both studies carried out evaluations using small-scale networks, which consisted of 6 and 14 nodes. These studies demonstrated that recovery times of less than 50 ms could be achieved, satisfying the target for carrier-grade reliability. However, the authors conceded that the restoration time also depended on the number of flows (i.e., rules) that needed to be modified in the affected path; as a result, the recovery time was far from the carrier-grade threshold in some of their experiments. Meeting the carrier-grade reliability requirement in large-scale networks therefore remains a significant challenge. The authors did not take into account the correlation between the path length and the recovery speed. In addition, they did not attempt to reduce the operations required to set up the backup paths. The authors of Reference [10] proposed a restoration scheme called Automatic Failure Recovery for OpenFlow (AFRO), which worked in two phases, namely, record mode and recovery mode. In record mode, AFRO recorded all the controller-switch activity (e.g., PACKET-IN and FLOW-MOD) in order to create a clean state copy of the network when no failure was experienced. When a failure occurred, AFRO switched to recovery mode and generated a new instance of the controller (a shadow controller). This instance was a copy of the original state that excluded the failed elements. Then, all the recorded events were replayed in order to install the difference in rule-sets between the original and shadow copies. The authors produced a prototype of AFRO; however, the study lacked any simulation results or measurements to show the effectiveness of AFRO. Moreover, the process of switching from record mode to recovery mode required time, which had the potential to cause service disruption.
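The controller-driven restoration loop described for References [8,9] (identify the affected paths, calculate alternatives, send flow modifications) can be sketched as follows. This is a minimal illustration, not the implementation used in those studies: the topology, the flow table, the `restore` function and the tuple format used for flow modifications are all assumptions, and paths are computed by hop count with a plain breadth-first search.

```python
from collections import deque


def bfs_path(adj, src, dst, failed):
    """Hop-count shortest path from src to dst that avoids the failed link."""
    parent = {src: None}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            path = []
            while node is not None:
                path.append(node)
                node = parent[node]
            return path[::-1]
        for nxt in adj[node]:
            if nxt not in parent and frozenset((node, nxt)) != failed:
                parent[nxt] = node
                queue.append(nxt)
    return None


def restore(adj, flows, failed_link):
    """Return (switch, flow_id, next_hop) updates for flows crossing failed_link."""
    failed = frozenset(failed_link)
    mods = []
    for flow_id, path in flows.items():
        links = {frozenset(pair) for pair in zip(path, path[1:])}
        if failed not in links:
            continue  # this flow does not use the failed link
        new_path = bfs_path(adj, path[0], path[-1], failed)
        if new_path is None:
            continue  # no alternative path exists
        flows[flow_id] = new_path
        mods.extend((here, flow_id, nxt) for here, nxt in zip(new_path, new_path[1:]))
    return mods


# Hypothetical six-switch ring and two installed flows.
adj = {
    "s1": ["s2", "s4"], "s2": ["s1", "s3"], "s3": ["s2", "s6"],
    "s4": ["s1", "s5"], "s5": ["s4", "s6"], "s6": ["s3", "s5"],
}
flows = {"f1": ["s1", "s2", "s3", "s6"], "f2": ["s4", "s5"]}
mods = restore(adj, flows, ("s2", "s3"))
print(mods)
```

In this sketch the number of updates grows with the length of the replacement path and with the number of affected flows, which is consistent with the observation that restoration time depends on how many rules must be modified.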

The network resilience achieved by the approach in Reference [11] was given as the reason for the convergence delay experienced after failure events. Speed of fail-over was attributed to two parameters: (1) the distance between the controller and the site of failure; and (2) the length of the alternative path that was computed and then installed by the controller. The authors increased the speed of failure recovery by deploying multiple centralized controllers to enable the dynamic computation of end-to-end paths; this innovation also had the benefit of increasing the resilience and the scalability of the solution. However, this work did not take into account the time required for the recovery process itself. Instead, it focused on accelerating failure detection.

CORONET was introduced in Reference [12] to achieve fast network recovery from multiple data plane link failures in SDNs. CORONET was based on slicing the network into VLANs so that the ports of physical switches were mapped to different VLAN IDs. A route planning module computed multiple link-disjoint paths using Dijkstra’s algorithm [13]. When one or more link failures occurred, the controller used the set of pre-computed paths to assign an alternative route around the failed link(s). A secondary benefit of CORONET was that the calculated disjoint paths could be used to perform load balancing. Dynamic load balancing was achieved by using different paths in a round-robin manner based on monitoring reports from a traffic monitoring module which performed traffic analysis. The system was prototyped using the NOX controller and is compatible with the standard OpenFlow protocol.
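The idea of pre-computing link-disjoint paths with Dijkstra's algorithm and then falling back to a surviving path can be sketched as below. This is not CORONET's implementation. It uses a simple greedy strategy (find a shortest path, ban its links, repeat), which is an assumption made for brevity and is not guaranteed to find the maximum number of disjoint paths. The graph, weights and function names are hypothetical.

```python
import heapq
from itertools import cycle


def dijkstra(graph, src, dst, banned):
    """Least-cost path from src to dst that avoids every link in `banned`."""
    dist, prev, heap = {src: 0}, {}, [(0, src)]
    while heap:
        d, node = heapq.heappop(heap)
        if node == dst:
            break
        if d > dist.get(node, float("inf")):
            continue  # stale heap entry
        for nxt, weight in graph[node].items():
            if frozenset((node, nxt)) in banned:
                continue
            nd = d + weight
            if nd < dist.get(nxt, float("inf")):
                dist[nxt], prev[nxt] = nd, node
                heapq.heappush(heap, (nd, nxt))
    if dst not in dist:
        return None
    path = [dst]
    while path[-1] != src:
        path.append(prev[path[-1]])
    return path[::-1]


def link_disjoint_paths(graph, src, dst, k):
    """Greedy heuristic: up to k paths that share no link with each other."""
    banned, paths = set(), []
    for _ in range(k):
        path = dijkstra(graph, src, dst, banned)
        if path is None:
            break
        paths.append(path)
        banned.update(frozenset(pair) for pair in zip(path, path[1:]))
    return paths


def surviving(paths, failed_links):
    """Pre-computed paths that do not cross any failed link."""
    failed = {frozenset(link) for link in failed_links}
    return [p for p in paths if not failed & {frozenset(e) for e in zip(p, p[1:])}]


# Hypothetical weighted topology.
graph = {
    "a": {"b": 1, "c": 2}, "b": {"a": 1, "d": 1},
    "c": {"a": 2, "d": 2}, "d": {"b": 1, "c": 2},
}
paths = link_disjoint_paths(graph, "a", "d", k=3)
after_failure = surviving(paths, [("b", "d")])
round_robin = cycle(paths)  # next(round_robin) alternates paths for load balancing
print(paths, after_failure)
```

Because the paths are computed in advance, handling a failure reduces to a lookup over the stored paths rather than a fresh path computation.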

In Reference [14], the authors proposed HiQoS, an SDN-based solution that finds multiple paths between the source and destination nodes to guarantee certain QoS constraints such as bandwidth, delay and throughput. HiQoS used a modified Dijkstra algorithm to find the multiple paths required to meet the QoS requirements. Not only could QoS be guaranteed with HiQoS, but fast failure recovery could also be achieved: when a link failure interrupted some paths, the controller directly selected a working path from those already computed. The authors compared the performance of HiQoS, which supports multiple paths, against MiQoS, which is a single-path solution, and experiments showed that HiQoS outperformed MiQoS in terms of performance and resilience to failure. Unfortunately, the authors did not explain in depth how the proposed method accelerated the restoration and reduced the recovery time.
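Selecting a working path from a set of already computed candidates under QoS constraints might look like the sketch below. It does not reproduce HiQoS: the `Candidate` record, the `pick_path` function, the lowest-delay tie-breaking rule and all numeric values are assumptions chosen purely for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    hops: tuple            # node names from source to destination
    bandwidth_mbps: float  # available bandwidth along the path
    delay_ms: float        # end-to-end delay along the path


def uses_link(candidate, link):
    """True if the candidate path traverses the given undirected link."""
    return any({u, v} == set(link) for u, v in zip(candidate.hops, candidate.hops[1:]))


def pick_path(candidates, min_bw, max_delay, failed_links=()):
    """Lowest-delay candidate that meets the QoS bounds and avoids failed links."""
    feasible = [
        c for c in candidates
        if c.bandwidth_mbps >= min_bw
        and c.delay_ms <= max_delay
        and not any(uses_link(c, link) for link in failed_links)
    ]
    return min(feasible, key=lambda c: c.delay_ms, default=None)


# Hypothetical pre-computed candidates between hosts h1 and h2.
candidates = [
    Candidate(("h1", "s1", "s2", "h2"), 100, 5),
    Candidate(("h1", "s1", "s3", "s2", "h2"), 80, 8),
    Candidate(("h1", "s4", "s2", "h2"), 20, 4),
]
before = pick_path(candidates, min_bw=50, max_delay=10)
after = pick_path(candidates, min_bw=50, max_delay=10, failed_links=[("s1", "s2")])
print(before.hops, after.hops)
```

The third candidate has the lowest delay but is rejected by the bandwidth bound, so a link failure on the first candidate moves the flow to the second one without any new path computation.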

The Smart Routing framework for SDNs was introduced in Reference [15]; it allowed the network controller to receive forewarning messages about failures and therefore to reconfigure the potential paths before failure incidents occurred. However, this technique required historical data, which may not be available in scenarios that do not require pre-existing infrastructure, for example, ad-hoc networks. In such scenarios it is difficult to motivate the use of Smart Routing. Several studies, such as those in References [16,17,18], have formulated the problem of optimising failure restoration as an Integer Linear Program (ILP). However, ILP-based approaches may be slow to converge in large-scale networks [19]. In practice, achieving fast recovery would require faster solvers. In References [20,21], the authors considered the problem of path-switching latency in heterogeneous networks, where nodes had different specifications. The proposed method selected alternative paths based on the switches which had the shortest processing time. However, the proposed approach was tested only on a small-scale network. Also, the study did not discuss the cost associated with the improvements achieved by the method.

Notwithstanding our previous discussion on multi-controller solutions, SDN is traditionally a centralised networking architecture. One of the main responsibilities of its central point, the controller, is to maintain the routing tables of all the nodes that reside in its domain. Network forwarding elements potentially operate in harsh environments, such as unreliable wireless sensor networks, where failure rates are high, causing frequent changes in the network topology [22]. Similarly, the forwarding elements might operate in dynamic environments where the installed rules have to be updated frequently, for example, every 1.5 to 5 s [23]. In such cases, the re-routing activities need to take place quickly in order to cope with the frequent changes. In other words, searching for optimal solutions might be costly in terms of installing and updating rules, considering that the lifespan of such rules may be short. Although the amount of research conducted in the area of SDN fault management is growing, most of the contributions so far have focused on replacing the affected paths with new optimal ones. In this paper, the main goal is to accelerate the set-up of alternative paths in order to facilitate fast switch-over.

We can summarise the contributions of this paper as follows.

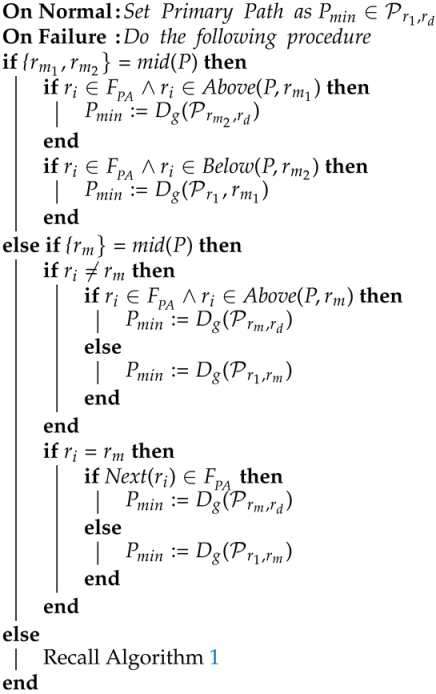

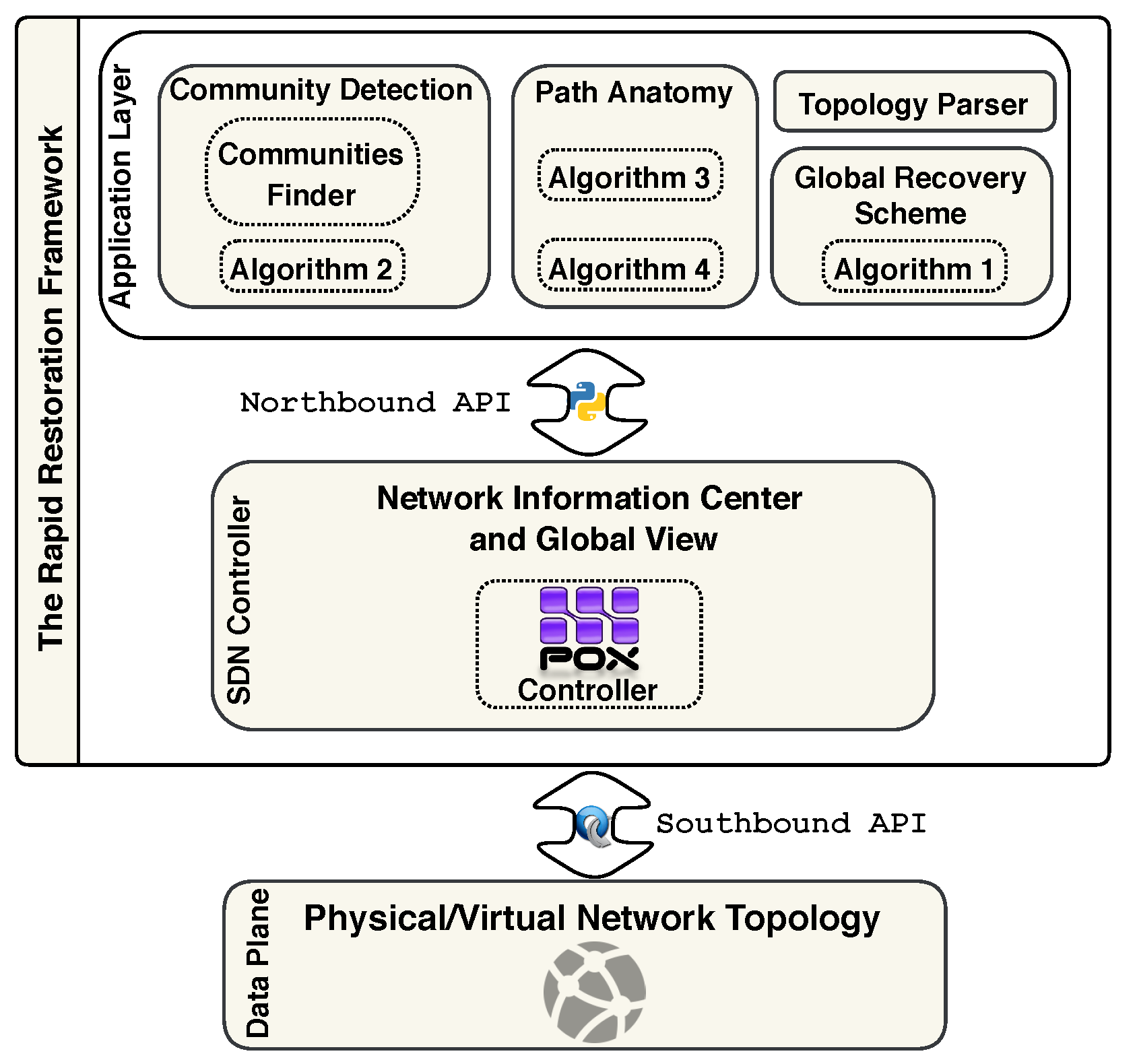

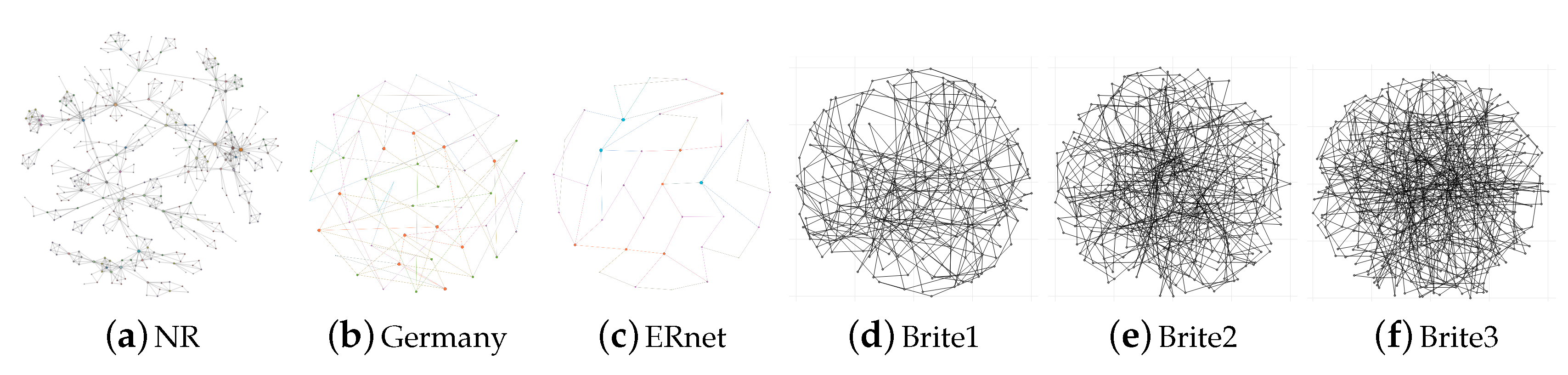

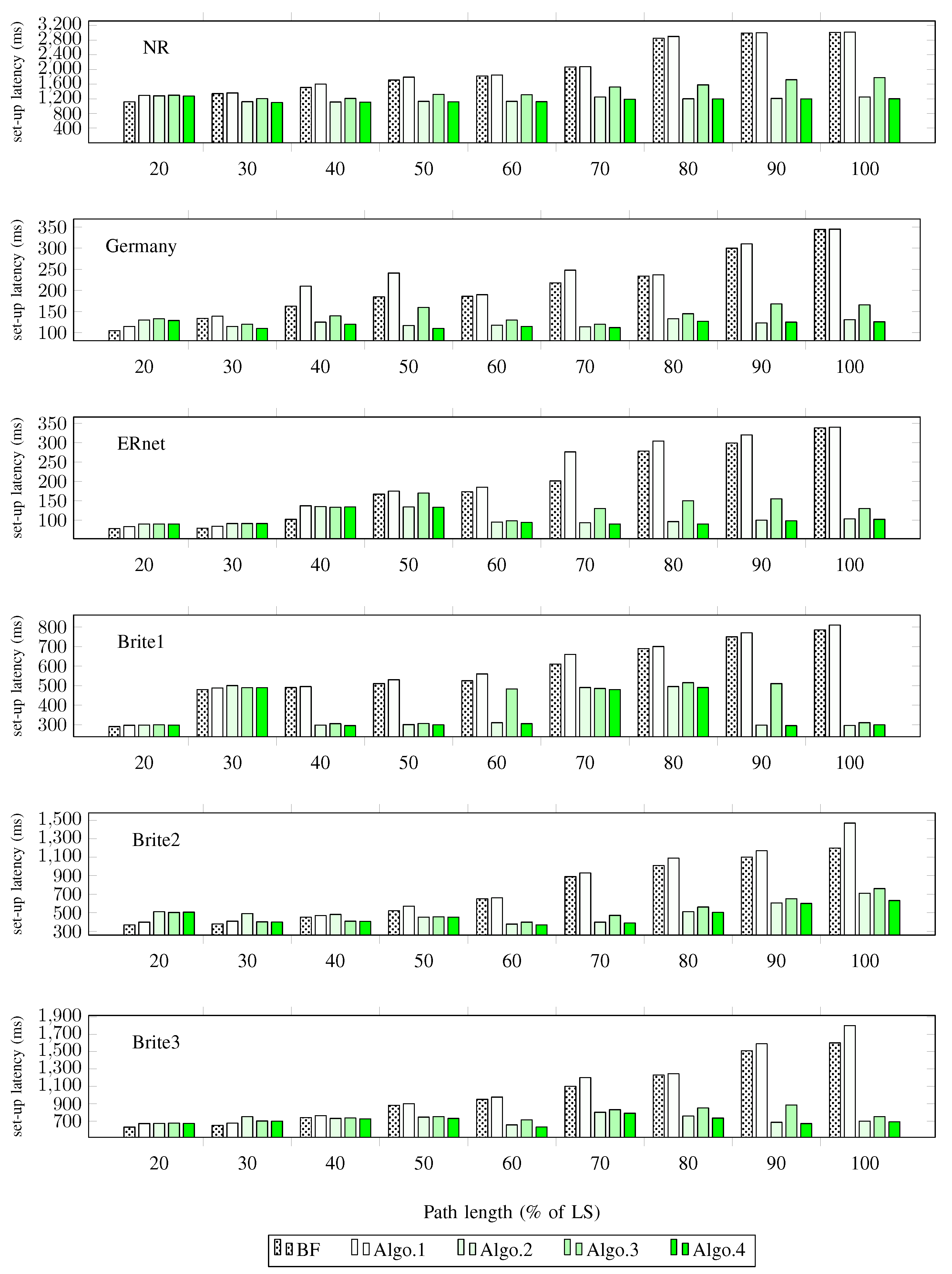

A Community Detection (CD) approach is proposed where the network topology is divided into a number of communities, which we denote N. The aim is to find the alternative paths within a sub-graph (i.e., community) rather than the whole graph.

A Path Anatomy (PA) approach is proposed in which the affected path is tackled partially rather than from end-to-end.

Our contributions enhance the reactivity of SDN fault management. We achieve this by reducing the number of rules that must be replaced. This, in turn, lowers the number of nodes that need to be updated and therefore speeds up the switch-over.
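The two ideas above can be illustrated together with a small sketch. It is one possible reading of the contributions and not the algorithms defined later in the paper. It assumes that the community assignment is already given (no detection algorithm is shown), that the failed link's endpoints appear on the path in the order given, and that repair means splicing a detour between those two endpoints. The topology and all function names are hypothetical.

```python
from collections import deque


def bfs_path(adj, src, dst, failed, allowed=None):
    """Hop-count path avoiding `failed`; optionally restricted to `allowed` nodes."""
    parent = {src: None}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            path = []
            while node is not None:
                path.append(node)
                node = parent[node]
            return path[::-1]
        for nxt in adj[node]:
            if nxt in parent or frozenset((node, nxt)) == failed:
                continue
            if allowed is not None and nxt not in allowed:
                continue  # stay inside the community sub-graph
            parent[nxt] = node
            queue.append(nxt)
    return None


def partial_repair(adj, path, failed_link, community_of):
    """Replace only the failed hop (u, v) of `path` with a detour from u to v."""
    u, v = failed_link
    allowed = None
    if community_of[u] == community_of[v]:
        allowed = {n for n, c in community_of.items() if c == community_of[u]}
    detour = bfs_path(adj, u, v, frozenset(failed_link), allowed)
    if detour is None:
        return None
    i = path.index(u)
    return path[:i] + detour + path[i + 2:]


def changed_rules(old_path, new_path):
    """(switch, next_hop) pairs that differ from the old forwarding state."""
    old_next = dict(zip(old_path, old_path[1:]))
    return [(n, nxt) for n, nxt in zip(new_path, new_path[1:]) if old_next.get(n) != nxt]


# Hypothetical topology with two communities, A and B.
adj = {
    "a1": ["a2"], "a2": ["a1", "a3", "a4"], "a3": ["a2", "a4", "b1"],
    "a4": ["a2", "a3"], "b1": ["a3", "b2", "b3"], "b2": ["b1", "b3"],
    "b3": ["b1", "b2"],
}
community_of = {n: n[0].upper() for n in adj}
old_path = ["a1", "a2", "a3", "b1", "b2"]
new_path = partial_repair(adj, old_path, ("a2", "a3"), community_of)
updates = changed_rules(old_path, new_path)
print(new_path, updates)
```

In this example the search is confined to community A and only two forwarding rules change, whereas replacing the path from end to end could touch every switch along it.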