Enhancing Healthcare Decision-Making Process: Findings from Orthopaedic Field

Abstract

1. Introduction

- To analyse possible decisional biases by the use of a cognitive tool;

- To understand if some decision-maker features influence cognitive biases.

2. Theoretical Background

- Missing information

- Lack of supervision

- Physicians’ knowledge

- Misunderstanding of a diagnostic test

- Cognitive errors

- System one, or the intuitive system, is characterised by quick thinking, unawareness, and little effort;

- System two, or the reflective system, is by contrast characterised by slow thinking, awareness, deductive reasoning, and more concentration.

- In internal medicine: contextual factors, interactions, and how information is collected and acquired (McBee et al. 2015).

- In physical therapy: situational circumstances, the perspective of the client, reasoning strategies, knowledge, and experience (Elvén et al. 2019; Wainwright et al. 2011).

- In dentistry: age of physician, number of dependents, perception of practice loans, and place of initial training (Ghoneim et al. 2020).

- Anaesthesiology: Anchoring, Availability bias, Premature closure, Feedback bias, Confirmation bias, Framing effect, Commission bias, Overconfidence bias, Omission bias, Sunk costs, Visceral bias, Zebra retreat, Unpacking principle, Psych-out error (Stiegler et al. 2012).

- Neurology: Framing Effects, Anchoring, Availability, Representativeness, Blind Obedience (Vickrey et al. 2010).

- Medical imaging: Availability Bias, Alliterative Bias, Anchoring Bias, Framing Bias, Attribution Bias, Blind Spot Bias, Regret Bias, Satisfaction of Search, Scout Neglect Bias, Hindsight Bias (Itri and Patel 2018).

- Dermatology: Anchoring, Availability bias, Representativeness restraint (Dunbar et al. 2013).

- General surgery: Anchoring, Availability Bias, Commission Bias, Overconfidence Bias, Omission Bias, and Sunk Costs (Vogel and Vogel 2019).

3. Materials and Methods

- the head of a public “trauma-centre” hospital and university professor/director of a “Postgraduate School in Orthopaedics”, with more than 20 years of experience (SD);

- the head of several orthopaedic surgery teams, working in private hospitals, with more than 15 years of experience (SP);

- the coordinator of the orthopaedic emergency team in a public “trauma-centre” hospital, with less than five years of experience (SH).

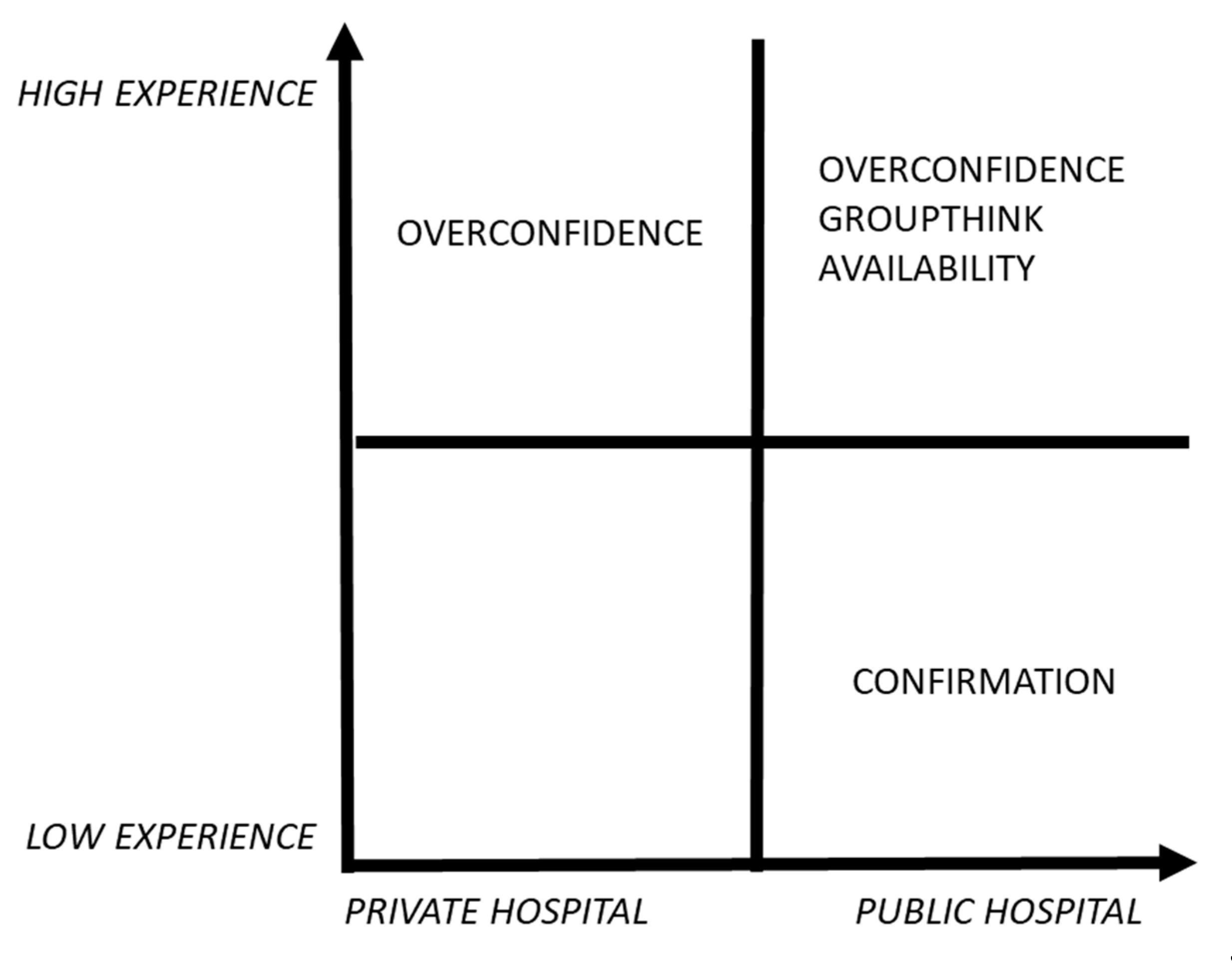

- a highly experienced physician, working in a public context (SD);

- a highly experienced physician, working in a private context (SP);

- a less experienced physician, working in a public context (SH).

- C.Q. A was to verify if the interviewees agree on the concept of follow-up.

- C.Q. B was to understand which “non-clinical” information is considered as “necessary” in decision-making development.

- C.Q. C was to examine in depth how decisions are undertaken within a group of orthopaedists and if the group tends to review some decisions taken by a member.

- C.Q. D was to understand what it would mean for an orthopaedic surgeon to always take decisions without any discussions/debates with the team.

- C.Q. E was to analyse what would be considered a positive or a negative scenario in the orthopaedic context for the follow-up choices.

- C.Q. F was to understand which kind of self-interests should be involved in decision-making about orthopaedic patient follow-up.

4. Results

5. Discussion

- from evidence-based medicine (based on best practice) (Timmermans and Angell 2001),

- from the impact of an “opinion leader” in healthcare disciplines (Locock et al. 2001).

- SD and SP are the more experienced surgeons, each with more than fifteen years of experience, while SH has less than five years of experience;

- SD and SP are both directors of their department/surgery team;

- SD and SH are both public employees working in a public hospital;

- SP works in a private organisation, where he supervises only the operating theatre teams (of which he is the head) for elective surgeries.

6. Conclusions and Implications

Author Contributions

Funding

Conflicts of Interest

References

- Abatecola, Gianpaolo. 2014. Untangling self-reinforcing processes in managerial decision making. Co-evolving heuristics? Management Decision 52: 934–49. [Google Scholar] [CrossRef]

- Antonacci, Anthony C., Samuel P. Dechario, Caroline Antonacci, Gregg Husk, Vihas Patel, Jeffrey Nicastro, Gene Coppa, and Mark Jarrett. 2020. Cognitive Bias Impact on Management of Postoperative Complications, Medical Error, and Standard of Care. Journal of Surgical Research 258: 47–53. [Google Scholar] [CrossRef] [PubMed]

- Ashoorion, Vahid, Mohammad Javad Liaghatdar, and Peyman Adibi. 2012. What variables can influence clinical reasoning? Journal of Research in Medical Sciences 17: 1170. [Google Scholar] [PubMed]

- Atoum, Ibrahim A., and Nasser A. Al-Jarallah. 2019. Big data analytics for value-based care: Challenges and opportunities. International Journal of Advanced Trends in Computer Science and Engineering 8: 3012–16. [Google Scholar] [CrossRef]

- Augestad, Liv Ariane, Knut Stavem, Ivar Sønbø Kristiansen, Carl Haakon Samuelsen, and Kim Rand-Hendriksen. 2016. Influenced from the Start: Anchoring Bias in Time Trade-off Valuations. Quality of Life Research 25: 2179–91. [Google Scholar] [CrossRef] [PubMed]

- Austin, Jared P., and Stephanie A. C. Halvorson. 2019. Reducing the Expert Halo Effect on Pharmacy and Therapeutics Committees. JAMA 321: 453. [Google Scholar] [CrossRef]

- Balsamo, Brittany, Mark D. Geil, Rebecca Ellis, and Jianhua Wu. 2018. Confirmation Bias Affects User Perception of Knee Braces. Journal of Biomechanics 75: 164–70. [Google Scholar] [CrossRef]

- Baron, Robert A. 1998. Cognitive mechanisms in entrepreneurship: Why and when entrepreneurs think differently than other people. Journal of Business Venturing 13: 275–94. [Google Scholar] [CrossRef]

- Bouckaert, Geert, and John Halligan. 2007. Managing Performance: International Comparisons. Abingdon: Routledge. [Google Scholar]

- Braun, Virginia, and Victoria Clarke. 2006. Using thematic analysis in psychology. Qualitative Research in Psychology 3: 77–101. [Google Scholar] [CrossRef]

- Christensen, Nicole, Lisa Black, Jennifer Furze, Karen Huhn, Ann Vendrely, and Susan Wainwright. 2017. Clinical Reasoning: Survey of Teaching Methods, Integration, and Assessment in Entry-Level Physical Therapist Academic Education. Physical Therapy 97: 175–86. [Google Scholar] [CrossRef]

- Cohen, Jeffrey M., and Susan Burgin. 2016. Cognitive biases in clinical decision making: A primer for the practicing dermatologist. JAMA Dermatology 152: 253–54. [Google Scholar] [CrossRef] [PubMed]

- Collin, Peter Hodgson. 2009. Dictionary of Medical Terms. London: A&C Black. [Google Scholar]

- Cook, Joan M., Paula P. Schnurr, Tatyana Biyanova, and James C. Coyne. 2009. Apples don’t fall far from the tree: Influences on psychotherapists’ adoption and sustained use of new therapies. Psychiatric Services 60: 671–76. [Google Scholar] [CrossRef] [PubMed]

- Cooper, Nicola, and John Frain. 2016. ABC of Clinical Reasoning. Hoboken: John Wiley & Sons. [Google Scholar]

- Cristofaro, Matteo. 2017a. Reducing Biases of Decision-Making Processes in Complex Organizations. Management Research Review 40: 270–91. [Google Scholar] [CrossRef]

- Cristofaro, Matteo. 2017b. Herbert Simon’s Bounded Rationality: Its Historical Evolution in Management and Cross-Fertilizing Contribution. Journal of Management History 23: 170–90. [Google Scholar] [CrossRef]

- Croskerry, Pat. 2003. The Importance of Cognitive Errors in Diagnosis and Strategies to Minimize Them. Academic Medicine 78: 775–80. [Google Scholar] [CrossRef]

- Del Mar, C., J. Doust, and P. Glasziou. 2006. Clinical Thinking; Evidence, Communication and Decision-Making. Oxford: Blackwell Publishing Ltd. BMJ Books. [Google Scholar]

- Dunbar, Miles, Stephen E. Helms, and Robert T. Brodell. 2013. Reducing Cognitive Errors in Dermatology: Can Anything Be Done? Journal of the American Academy of Dermatology 69: 810–13. [Google Scholar] [CrossRef]

- Durning, Steven, Anthony R. Artino, Louis Pangaro, Cees P. M. van der Vleuten, and Lambert Schuwirth. 2011. Context and Clinical Reasoning: Understanding the Perspective of the Expert’s Voice. Medical Education 45: 927–38. [Google Scholar] [CrossRef]

- Durning, Steven J., Anthony R. Artino, Lambert Schuwirth, and Cees van der Vleuten. 2013. Clarifying Assumptions to Enhance Our Understanding and Assessment of Clinical Reasoning. Academic Medicine 88: 442–48. [Google Scholar] [CrossRef]

- Edmondson, Amy C., and Stacy E. McManus. 2007. Methodological Fit in Management Field Research. Academy of Management Review 32: 1246–64. [Google Scholar] [CrossRef]

- Eisenberg, John M. 1979. Sociologic Influences on Decision-Making by Clinicians. Annals of Internal Medicine 90: 957. [Google Scholar] [CrossRef]

- El Said, Ghada Refaat. 2017. Understanding how learners use massive open online courses and why they drop out: Thematic analysis of an interview study in a developing country. Journal of Educational Computing Research 55: 724–52. [Google Scholar] [CrossRef]

- Elston, Dirk M. 2020. Confirmation Bias in Medical Decision-Making. Journal of the American Academy of Dermatology 82: 572. [Google Scholar] [CrossRef]

- Elvén, Maria, Jacek Hochwälder, Elizabeth Dean, and Anne Söderlund. 2019. Predictors of Clinical Reasoning Using the Reasoning 4 Change Instrument with Physical Therapist Students. Physical Therapy 99: 964–76. [Google Scholar] [CrossRef] [PubMed]

- Fargen, Kyle M., and William A. Friedman. 2014. The science of medical decision making: Neurosurgery, errors, and personal cognitive strategies for improving quality of care. World Neurosurgery 82: e21–e29. [Google Scholar] [CrossRef] [PubMed]

- Flynn, Darren, Meghan A. Knoedler, Erik P. Hess, M. Hassan Murad, Patricia J. Erwin, Victor M. Montori, and Richard G. Thomson. 2012. Engaging patients in health care decisions in the emergency department through shared decision-making: A systematic review. Academic Emergency Medicine 19: 959–67. [Google Scholar] [CrossRef] [PubMed]

- Ghoneim, Abdulrahman, Bonnie Yu, Herenia Lawrence, Michael Glogauer, Ketan Shankardass, and Carlos Quiñonez. 2020. What Influences the Clinical Decision-Making of Dentists? A Cross-Sectional Study. Edited by Gururaj Arakeri. PLoS ONE 15: e0233652. [Google Scholar] [CrossRef] [PubMed]

- Goldsby, Elizabeth, Michael Goldsby, Christopher B. Neck, and Christopher P. Neck. 2020. Under Pressure: Time Management, Self-Leadership, and the Nurse Manager. Administrative Sciences 10: 38. [Google Scholar] [CrossRef]

- Grove, Amy, Aileen Clarke, and Graeme Currie. 2015. The barriers and facilitators to the implementation of clinical guidance in elective orthopaedic surgery: A qualitative study protocol. Implementation Science 10: 81. [Google Scholar] [CrossRef]

- Haley, Usha C. V., and Stephen A. Stumpf. 1989. Cognitive Trails in Strategic Decision-Making: Linking Theories of Personalities and Cognitions. Journal of Management Studies 26: 477–97. [Google Scholar] [CrossRef]

- Hausmann, Daniel, Cristina Zulian, Edouard Battegay, and Lukas Zimmerli. 2016. Tracing the Decision-Making Process of Physicians with a Decision Process Matrix. BMC Medical Informatics and Decision Making 16: 133. [Google Scholar] [CrossRef]

- Healy, William L., Michael E. Ayers, Richard Iorio, Douglas A. Patch, David Appleby, and Bernard A. Pfeifer. 1998. Impact of a clinical pathway and implant standardization on total hip arthroplasty: A clinical and economic study of short-term patient outcome. The Journal of Arthroplasty 13: 266–76. [Google Scholar] [CrossRef]

- Hendrick, Hal W. 1999. Handbook of human factors and ergonomics, edited by Gavriel Salvendy, 1997, New York: John Wiley & Sons, Inc., p. 2137, ISBN 0-471-11690-4. Human Factors and Ergonomics in Manufacturing & Service Industries 9: 321–22. [Google Scholar]

- Hess, Erik P., Corita R. Grudzen, Richard Thomson, Ali S. Raja, and Christopher R. Carpenter. 2015. Shared decision-making in the emergency department: Respecting patient autonomy when seconds count. Academic Emergency Medicine 22: 856–64. [Google Scholar] [CrossRef] [PubMed]

- Higgs, Joy, Gail M Jensen, Stephen Loftus, and Nicole Christensen. 2019. Clinical Reasoning in the Health Professions. Oxford: Elsevier Health Sciences. [Google Scholar]

- Hsieh, Hsiu-Fang, and Sarah E. Shannon. 2005. Three approaches to qualitative content analysis. Qualitative Health Research 15: 1277–88. [Google Scholar] [CrossRef]

- Ierano, Courtney, Karin Thursky, Trisha Peel, Arjun Rajkhowa, Caroline Marshall, and Darshini Ayton. 2019. Influences on surgical antimicrobial prophylaxis decision making by surgical craft groups, anaesthetists, pharmacists and nurses in public and private hospitals. PLoS ONE 14: e0225011. [Google Scholar] [CrossRef]

- Itri, Jason N., and Sohil H. Patel. 2018. Heuristics and cognitive error in medical imaging. American Journal of Roentgenology 210: 1097–105. [Google Scholar] [CrossRef]

- Jette, Diane U., Lisa Grover, and Carol P. Keck. 2003. A qualitative study of clinical decision making in recommending discharge placement from the acute care setting. Physical Therapy 83: 224–36. [Google Scholar] [CrossRef]

- Kaba, Alyshah, Ian Wishart, Kristin Fraser, Sylvain Coderre, and Kevin McLaughlin. 2016. Are We at Risk of Groupthink in Our Approach to Teamwork Interventions in Health Care? Medical Education 50: 400–8. [Google Scholar] [CrossRef]

- Kahneman, Daniel. 2011. Thinking, Fast and Slow. New York: Macmillan. [Google Scholar]

- Kahneman, Daniel, Dan Lovallo, and Olivier Sibony. 2011. Before you make that big decision. Harvard Business Review 89: 50–60. [Google Scholar]

- Kobus, David A., Sherry Proctor, and Steven Holste. 2001. Effects of experience and uncertainty during dynamic decision making. International Journal of Industrial Ergonomics 28: 275–90. [Google Scholar] [CrossRef]

- Lippa, Katherine D., Markus A. Feufel, F. Eric Robinson, and Valerie L. Shalin. 2017. Navigating the Decision Space: Shared Medical Decision Making as Distributed Cognition. Qualitative Health Research 27: 1035–48. [Google Scholar] [CrossRef] [PubMed]

- Lo, Bernard, and Mitchell H. Katz. 2005. Clinical decision making during public health emergencies: Ethical considerations. Annals of Internal Medicine 143: 493–98. [Google Scholar] [CrossRef] [PubMed]

- Locock, Louise, Sue Dopson, David Chambers, and John Gabbay. 2001. Understanding the role of opinion leaders in improving clinical effectiveness. Social Science & Medicine 53: 745–57. [Google Scholar]

- Mailoo, Venthan. 2015. Common Sense or Cognitive Bias and Groupthink: Does It Belong in Our Clinical Reasoning? The British Journal of General Practice 65: 27. [Google Scholar] [CrossRef]

- Makhinson, Michael. 2012. Biases in the Evaluation of Psychiatric Clinical Evidence. The Journal of Nervous and Mental Disease 200: 76–82. [Google Scholar] [CrossRef]

- Mamede, Sílvia, Marco Antonio de Carvalho-Filho, Rosa Malena Delbone de Faria, Daniel Franci, Maria do Patrocinio Tenorio Nunes, Ligia Maria Cayres Ribeiro, Julia Biegelmeyer, Laura Zwaan, and Henk G. Schmidt. 2020. “Immunising” Physicians against Availability Bias in Diagnostic Reasoning: A Randomised Controlled Experiment. BMJ Quality & Safety 29: 550–59. [Google Scholar] [CrossRef]

- McBee, Elexis, Temple Ratcliffe, Katherine Picho, Anthony R. Artino, Lambert Schuwirth, William Kelly, Jennifer Masel, Cees van der Vleuten, and Steven J. Durning. 2015. Consequences of Contextual Factors on Clinical Reasoning in Resident Physicians. Advances in Health Sciences Education 20: 1225–36. [Google Scholar] [CrossRef]

- Ministero della Salute. 2016. Tavole Rapporto SDO. Available online: http://www.salute.gov.it/portale/documentazione/p6_2_8_3_1.jsp?lingua=italiano&id=28 (accessed on 20 October 2020).

- Nagaraj, Guruprasad, Carolyn Hullick, Glenn Arendts, Ellen Burkett, Keith D. Hill, and Christopher R. Carpenter. 2018. Avoiding Anchoring Bias by Moving beyond “Mechanical Falls” in Geriatric Emergency Medicine. Emergency Medicine Australasia 30: 843–50. [Google Scholar] [CrossRef]

- Neergaard, Mette Asbjoern, Frede Olesen, Rikke Sand Andersen, and Jens Sondergaard. 2009. Qualitative description–the poor cousin of health research? BMC Medical Research Methodology 9: 52. [Google Scholar] [CrossRef]

- Norman, Geoffrey. 2005. Research in Clinical Reasoning: Past History and Current Trends. Medical Education 39: 418–27. [Google Scholar] [CrossRef]

- Olszak, Celina M., and Kornelia Batko. 2012. Business intelligence systems. New chances and possibilities for healthcare organizations. Informatyka Ekonomiczna 5: 123–38. [Google Scholar]

- Otokiti, Ahm. 2019. Using informatics to improve healthcare quality. International Journal of Health Care Quality Assurance 32: 425–30. [Google Scholar] [CrossRef] [PubMed]

- Oxford Dictionary. 2012. Oxford Dictionary. Available online: https://www.oed.com/ (accessed on 20 November 2020).

- Oyewobi, Luqman Oyekunle, Abimbola Windapo, and James Olabode Bamidele Rotimi. 2016. Relationship between decision-making style, competitive strategies and organisational performance among construction organisations. Journal of Engineering, Design and Technology 14: 713–38. [Google Scholar] [CrossRef]

- Patton, Michael Quinn. 2002. Two decades of developments in qualitative inquiry: A personal, experiential perspective. Qualitative Social Work 1: 261–83. [Google Scholar] [CrossRef]

- Pinnarelli, Luigi, Alice Basiglini, Danilo Fusco, and Marina Davoli. 2015. Piano Nazionale Esiti. Available online: https://www.agenas.gov.it/tassi-di-assenza-giugno-2015/monitor-n-30 (accessed on 20 October 2020).

- Porter, Michael E. 2009. A Strategy for Health Care Reform—Toward a Value-Based System. New England Journal of Medicine 361: 109–12. [Google Scholar] [CrossRef] [PubMed]

- Rashid, Ahmad, and Halim Boussabiane. 2019. Conceptualizing the influence of personality and cognitive traits on project managers’ risk-taking behaviour. International Journal of Managing Projects in Business. [Google Scholar] [CrossRef]

- Raymond, Louis, Guy Paré, and Éric Maillet. 2017. IT-based clinical knowledge management in primary health care: A conceptual framework. Knowledge and Process Management 24: 247–56. [Google Scholar] [CrossRef]

- Robinson, James C., Alexis Pozen, Samuel Tseng, and Kevin J. Bozic. 2012. Variability in costs associated with total hip and knee replacement implants. JBJS 94: 1693–98. [Google Scholar] [CrossRef] [PubMed]

- Robinson, Jennifer, Marta Sinclair, Jutta Tobias, and Ellen Choi. 2017. More dynamic than you think: Hidden aspects of decision-making. Administrative Sciences 7: 23. [Google Scholar] [CrossRef]

- Robinson, Frank Eric, Markus A. Feufel, Valerie L. Shalin, Debra Steele-Johnson, and Brian Springer. 2020. Rational Adaptation: Contextual Effects in Medical Decision Making. Journal of Cognitive Engineering and Decision Making 14: 112–31. [Google Scholar] [CrossRef]

- Roski, Joachim, George W. Bo-Linn, and Timothy A. Andrews. 2014. Creating value in health care through big data: Opportunities and policy implications. Health Affairs 33: 1115–22. [Google Scholar] [CrossRef]

- Rubio-Navarro, Alfonso, Diego José García-Capilla, Maria José Torralba-Madrid, and Jane Rutty. 2020. Decision-Making in an Emergency Department: A Nursing Accountability Model. Nursing Ethics 27: 567–86. [Google Scholar] [CrossRef] [PubMed]

- Ryan, Aedin, Sophie Duignan, Damien Kenny, and Colin J. McMahon. 2018. Decision making in paediatric cardiology. Are we prone to heuristics, biases and traps? Pediatric Cardiology 39: 160–67. [Google Scholar] [CrossRef]

- Sackett, David L., William MC Rosenberg, JA Muir Gray, R. Brian Haynes, and W. Scott Richardson. 1996. Evidence Based Medicine: What It Is and What It Isn’t. BMJ 312: 71–72. [Google Scholar] [CrossRef] [PubMed]

- Safi, Asila, and Darrell Norman Burrell. 2007. Developing Advanced Decision-Making Skills in International Leaders and Managers. Vikalpa 32: 1–8. [Google Scholar] [CrossRef]

- Scapens, Robert W. 1990. Researching Management Accounting Practice: The Role of Case Study Methods. The British Accounting Review 22: 259–81. [Google Scholar] [CrossRef]

- Scranton, Pierce E., Jr. 1999. The cost effectiveness of streamlined care pathways and product standardization in total knee arthroplasty. The Journal of Arthroplasty 14: 182–86. [Google Scholar] [CrossRef]

- Simon, H. 1947. Administrative Behaviour. New York: The Free Press. [Google Scholar]

- Sizer, Phillip S., Manuel Vicente Mauri, Kenneth Learman, Clare Jones, Norman ‘Skip’ Gill, Chris R. Showalter, and Jean-Michel Brismée. 2016. Should Evidence or Sound Clinical Reasoning Dictate Patient Care? Journal of Manual & Manipulative Therapy 24: 117–19. [Google Scholar] [CrossRef]

- Skaržauskiene, A. 2010. Managing complexity: Systems thinking as a catalyst of the organization performance. Measuring Business Excellence 14: 49–64. [Google Scholar] [CrossRef]

- Smith, Megan, Joy Higgs, and Elizabeth Ellis. 2007. Physiotherapy decision making in acute cardiorespiratory care is influenced by factors related to the physiotherapist and the nature and context of the decision: A qualitative study. Australian Journal of Physiotherapy 53: 261–67. [Google Scholar] [CrossRef]

- Smith, Megan, Joy Higgs, and Elizabeth Ellis. 2010. Effect of experience on clinical decision making by cardiorespiratory physiotherapists in acute care settings. Physiotherapy Theory and Practice 26: 89–99. [Google Scholar] [CrossRef]

- Sousa, Maria José, António Miguel Pesqueira, Carlos Lemos, Miguel Sousa, and Álvaro Rocha. 2019. Decision-Making Based on Big Data Analytics for People Management in Healthcare Organizations. Journal of Medical Systems 43: 290. [Google Scholar] [CrossRef] [PubMed]

- Spano, Alessandro, and Anna Aroni. 2018. Organizational Performance in the Italian Health care Sector. In Outcome-Based Performance Management in the Public Sector. Cham: Springer, pp. 25–43. [Google Scholar]

- Stewart, Rebecca E., and Dianne L. Chambless. 2007. Does psychotherapy research inform treatment decisions in private practice? Journal of Clinical Psychology 63: 267–81. [Google Scholar] [CrossRef]

- Stiegler, M. P., Jacques P. Neelankavil, Cecilia Canales, and A. Dhillon. 2012. Cognitive errors detected in anaesthesiology: A literature review and pilot study. British Journal of Anaesthesia 108: 229–35. [Google Scholar] [CrossRef] [PubMed]

- Stylianou, Stelios. 2008. Interview control questions. International Journal of Social Research Methodology 11: 239–56. [Google Scholar] [CrossRef]

- Sun, Alexander Y., and Bridget R. Scanlon. 2019. How can Big Data and machine learning benefit environment and water management: A survey of methods, applications, and future directions. Environmental Research Letters 14: 073001. [Google Scholar] [CrossRef]

- Timmermans, Stefan, and Alison Angell. 2001. Evidence-based medicine, clinical uncertainty, and learning to doctor. Journal of Health and Social Behavior 42: 342–59. [Google Scholar] [CrossRef]

- To, Wai Ming, Billy T. W. Yu, and Peter K. C. Lee. 2018. How quality management system components lead to improvement in service organizations: A system practitioner perspective. Administrative Sciences 8: 73. [Google Scholar] [CrossRef]

- Torre, M., E. Carrani, and I. Luzi. 2017. Progetto Registro Italiano Artroprotesi. Potenziare la Qualità dei dati per Migliorare la Sicurezza dei Pazienti. Quarto Report. Rome: Il Pensiero Scientifico Editore. [Google Scholar]

- Twells, Laurie K. 2015. Evidence-Based Decision-Making 1: Critical Appraisal. In Clinical Epidemiology. Edited by Patrick S. Parfrey and Brendan J. Barrett. New York: Springer, vol. 1281, pp. 385–96. [Google Scholar] [CrossRef]

- Utter, Garth H., Ronald V. Maier, Frederick P. Rivara, and Avery B. Nathens. 2006. Outcomes after Ruptured Abdominal Aortic Aneurysms: The “Halo Effect” of Trauma Center Designation. Journal of the American College of Surgeons 203: 498–505. [Google Scholar] [CrossRef]

- Vickrey, Barbara G., Martin A. Samuels, and Allan H. Ropper. 2010. How neurologists think: A cognitive psychology perspective on missed diagnoses. Annals of Neurology 67: 425–33. [Google Scholar] [CrossRef]

- Vogel, P., and D. H. V. Vogel. 2019. Cognition errors in the treatment course of patients with anastomotic failure after colorectal resection. Patient Safety in Surgery 13: 4. [Google Scholar] [CrossRef]

- Vuong, Phoenix, Jason Sample, Mary Ellen Zimmermann, and Pierre Saldinger. 2017. Trauma Team Activation: Not Just for Trauma Patients. Journal of Emergencies, Trauma, and Shock 10: 151–53. [Google Scholar] [CrossRef] [PubMed]

- Waddington, L., and S. Morley. 2000. Availability Bias in Clinical Formulation: The First Idea That Comes to Mind. The British Journal of Medical Psychology 73 (Pt 1): 117–27. [Google Scholar] [CrossRef] [PubMed]

- Wainwright, Susan Flannery, Katherine F. Shepard, Laurinda B. Harman, and James Stephens. 2011. Factors that influence the clinical decision making of novice and experienced physical therapists. Physical Therapy 91: 87–101. [Google Scholar] [CrossRef] [PubMed]

- Wu, Tungju, Yenchun Jim Wu, Hsientang Tsai, and Yibin Li. 2017. Top management teams’ characteristics and strategic decision-making: A mediation of risk perceptions and mental models. Sustainability 9: 2265. [Google Scholar] [CrossRef]

- Yan, X., C. Dong, and C. Yao. 2017. The evolvement of evidence-based medicine research in the big data era. Chinese Journal of Evidence-Based Medicine 17: 249–54. [Google Scholar] [CrossRef]

- Yin, R. K. 2004. Case study methods. In Handbook of Complementary Methods in Education Research, American Educational Research Association. Edited by Judith L. Green, Gregory Camilli and Patricia B. Elmore. Washington, DC: Taylor & Francis Group, pp. 111–22. [Google Scholar]

- Yin, Robert K. 2017. Case Study Research and Applications: Design and Methods. Thousand Oaks: Sage Publications. [Google Scholar]

- Zavala, Alicia M., Gary E. Day, David Plummer, and Anita Bamford-Wade. 2018. Decision-making under pressure: Medical errors in uncertain and dynamic environments. Australian Health Review 42: 395–402. [Google Scholar] [CrossRef]

| Factors That Can Lead to Distortion | Bias/Code | Adjusted Checklist Questions | C.Q. * |
|---|---|---|---|
| Own interest of decision-maker | Self-interest | 1. In your choice of the patient’s follow-up path, do you think there is any reason to think that the personal motivations of the clinical operator (orthopaedic doctor) influenced the prescription (number and frequency of checks)? | A F |
| Preference of decision-maker about one alternative | Affect heuristic | 2. Is it possible that the choice of a specific follow-up path has been made on the basis of consolidated practice, rather than on the specific analysis from the context in reference to the specific contingencies of the patient? | A |
| Team communication or absence of communication among team members | Groupthink | 3. Are decisions regarding follow-up made at the operating team/ward level or at the individual doctor’s (orthopaedic patient’s) level? 3a. If conflicting opinions emerge, are they sufficiently examined? How are any “conflicts” resolved? | A C D |
| Past success | Saliency | 4. In your opinion, how much is the choice of a specific follow-up path influenced by the experience of the clinical operator regarding similar past situations? | A |
| No full evaluation of other alternatives | Confirmation | 5. When choosing a follow-up path, are different credible and reliable alternatives considered? | A |
| Information availability | Availability | 6. What clinical information is used to make decisions about the patient follow-up process? If you could have other information (non-clinical) which would you need? | A B |
| Information base | Anchoring | 7. Which source provides you with the data referred to in the previous question? | A |
| Connection between alternatives or situation and decision-maker | Halo effect | 8. When choosing a follow-up path, is it possible that the decision was made (or influenced) on the basis of similar decisions made by other departments or other clinical contexts? | A |
| History or past events | Sunk-cost fallacy | 9. When choosing a follow-up path, how does the patient’s medical history influence your decision? | A |
| Excessively optimistic | Overconfidence optimistic | 10. When choosing a follow-up path, do you usually consider extremely positive implication scenarios regarding the patient’s specific contingencies? | A E |
| Excessively pessimistic | Disaster neglect | 11. When choosing a follow-up path, do you always consider a realistic scenario regarding the patient’s specific contingencies? | A E |
| Excessively conservative | Loss aversion | 12. When choosing a follow-up path, do you usually consider extremely negative implication scenarios regarding the patient’s specific contingencies? | A E |

| n | * Control questions (C.Q.) |
|---|---|
| A | What do you mean by the follow-up process? |
| B | What else? |
| C | What happens if something occurred during the surgery and a doctor thinks he wants to see that patient again? Is this kind of decision made by the group? |
| D | Do you decide only on your own, without discussing with your team? |
| E | What do you mean by a positive or negative scenario? |
| F | What other kinds of interest can bring you to define different timespans for follow-ups? |

| Questions | Bias/Code | Content Example | Respondent(s) | Bias Presence |

|---|---|---|---|---|

| In your choice of the patient’s follow-up path, do you think there is any reason to think that the personal motivations of the clinical operator (orthopaedic doctor) influenced the prescription (number and frequency of checks)? | Self-interest | “Most likely yes” | SD | NO |

| “Systematically not, however, there is a percentage of variability linked to the patient” | SP | NO | ||

| “No, because the prosthetic follow-up is completely standardised” | SH | NO | ||

| Is it possible that the choice of a specific follow-up path has been made on the basis of consolidated practice, rather than on the specific analysis from the context in reference to the specific contingencies of the patient? | Affect heuristic | “Probably yes” | SD | YES |

| “Yes, it is possible” | SP | YES | ||

| “Customisable follow-ups are rare. We do what the scientific literature reported” | SH | YES | ||

| Are decisions regarding follow-up made at the operating team/ward level or at the individual doctor’s (orthopaedic patient’s) level? | Groupthink | “Decisions are made by the operating team” | SD | YES |

| “In my working reality, the individual doctor decides because often the surgeons make follow-up in their private clinics” | SP | NO | ||

| “Decisions are made due to standardisation of wards” | SH | YES | ||

| a. If conflicting opinions emerge, are they sufficiently examined? How are any “conflicts” resolved? | “Conflicts are resolved by the team leader and/or the oldest one” | SD | / | |

| Not available | SP | / | ||

| “Yes. Conflicts are resolved by ward director” | SH | / | ||

| In your opinion, how much the choice of a specific follow-up path is influenced by the experience of the clinical operator regarding similar past situations? | Saliency | “A little, because it is probably connected also with what you want to evaluate and with what literature reported” | SD | YES |

| “Above all. The choice is influenced almost exclusively by similar past situations” | SP | YES | ||

| “It influences because, in addition to scientific bases, orthopaedic surgery also relies heavily on personal experience” | SH | YES | ||

| When choosing a follow-up path, are different credible and reliable alternatives considered? | Confirmation | “Yes, they are” | SD | NO |

| | | “Not much. Alternatives exist but we don’t consider them enough” | SP | NO |

| | | “There are not many alternatives to standard follow-up” | SH | YES |

| What clinical information is used to make decisions about the patient follow-up process? If you could have other (non-clinical) information, which would you need? | Availability | “The ones reported by the literature” | SD | YES |

| | | “Patient’s pain, functional skills, lifestyle habits, and job” | SP | NO |

| | | “Mainly comorbidities and type of surgery are used to make a decision about follow-up. If I could have other information, I would like to know the patient’s lifestyle habits and where he/she lives” | SH | YES |

| Which source provides you with the data referred to in the previous question? | Anchoring | “Yes. Scientific literature gives us many things” | SH | YES |

| | | “The several international scores that you want to apply” | SD | YES |

| | | “Medical examination and patient itself” | SP | YES |

| When choosing a follow-up path, is it possible that the decision was made (or influenced) on the basis of similar decisions made by other departments or other clinical contexts? | Halo effect | “Certainly yes” | SD | YES |

| | | “Yes. Opinion leaders and their modus operandi matter a lot” | SP | YES |

| | | “A deeply patient’s anamnesis” | SH | YES |

| When choosing a follow-up path, how does the patient’s medical history influence your decision? | Sunk-cost fallacy | “As far as the case of the clinical sphere is concerned, probably the embedding parameters make the difference” | SD | NO |

| | | “It could make follow-up more frequent” | SP | NO |

| | | “It conditions a lot, for example in an epileptic or Parkinsonian patient it is known that a more frequent follow-up is necessary” | SH | NO |

| When choosing a follow-up path, do you usually consider extremely positive implication scenarios regarding the patient’s specific contingencies? | Overconfidence optimistic | “I determine the extent of the follow-up both for what I have read in literature and because I think that is the right period to detect the progress of that situation” | SD | YES |

| | | “I define the period according to the time for a patient to slowly start to have a normal life, without aids, without particular foreclosures” | SP | YES |

| | | “When choosing a follow-up path, I usually consider always positive scenarios” | SH | NO |

| When choosing a follow-up path, do you always consider a realistic scenario regarding the patient’s specific contingencies? | Disaster neglect | “Actually, the period is defined because at that point I should have data that tells me if that path is a positive or negative path.” | SD | NO |

| | | “That period has logic behind it. It is the healing time of the tissues from the intervention. I can imagine them repaired in a month and for this, I set that date for the medical examination” | SP | NO |

| | | “The choice is always ideal as it should be” | SH | NO |

| When choosing a follow-up path, do you usually consider extremely negative implication scenarios regarding the patient’s specific contingencies? | Loss aversion | “Actually, the period is defined because at that point I should have data that tells me if that path is a positive or negative path.” | SD | NO |

| | | “The guideline is the same logic that I said before” | SP | NO |

| | | “When choosing a follow-up path, I usually consider always positive scenarios because complications are infrequent in this kind of surgery” | SH | NO |

| Biases | Description * | Medical Literature | Errors Recognized By |
|---|---|---|---|
| Affect heuristic | The decision-maker tends to minimise the risks and costs and/or exaggerate the benefits of something he/she likes | (Makhinson 2012) | SD, SP, SH |
| Anchoring | The decision-maker makes the decision taking into consideration some initial reference data without adjusting its estimates according to the new information gained | (Nagaraj et al. 2018; Augestad et al. 2016) | SD, SP, SH |
| Halo effect | The decision-maker sees a story as more emotionally consistent than it really is | (Austin and Halvorson 2019; Vuong et al. 2017; Utter et al. 2006) | SD, SP, SH |
| Saliency | The decision-maker tends to approve a proposal that is similar to a successful one in the past | (Makhinson 2012; Vickrey et al. 2010) ** | SD, SP, SH |
| Groupthink | The inclination of groups to converge on a decision because it reduces the conflict and can gain large support | (Kaba et al. 2016; Mailoo 2015) | SD, SH |
| Availability | The decision-maker makes the decision with the available data without making an effort to find other useful information that is uncovered | (Mamede et al. 2020; Waddington and Morley 2000) | SD, SH |
| Overconfidence | The decision-maker with positive track records is prone to excessive optimism in forecasts | (Cohen and Burgin 2016; Vickrey et al. 2010) | SD, SP |
| Confirmation | The decision-maker tends to elaborate only one alternative for which he/she tries to find confirming data | (Balsamo et al. 2018; Elston 2020) | SH |
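The "Errors Recognized By" column can be cross-checked against the YES/NO flags in the interview table. The following is a minimal illustrative sketch, not part of the authors' method: the names `INTERVIEWEES`, `FLAGS`, and `recognised_by` are hypothetical, the flags are transcribed by hand from the rows shown above, and the "Overconfidence optimistic" row is keyed as "Overconfidence" to match the summary table.

```python
# Interviewee order used in the tables: SD, SP, SH.
INTERVIEWEES = ("SD", "SP", "SH")

# YES/NO flags transcribed from the last column of the interview table,
# one tuple per bias in (SD, SP, SH) order. Only the rows shown in this
# part of the table are encoded (an assumption of this sketch).
FLAGS = {
    "Self-interest": ("NO", "NO", "NO"),
    "Affect heuristic": ("YES", "YES", "YES"),
    "Groupthink": ("YES", "NO", "YES"),
    "Saliency": ("YES", "YES", "YES"),
    "Confirmation": ("NO", "NO", "YES"),
    "Availability": ("YES", "NO", "YES"),
    "Anchoring": ("YES", "YES", "YES"),
    "Halo effect": ("YES", "YES", "YES"),
    "Sunk-cost fallacy": ("NO", "NO", "NO"),
    "Overconfidence": ("YES", "YES", "NO"),
    "Disaster neglect": ("NO", "NO", "NO"),
    "Loss aversion": ("NO", "NO", "NO"),
}


def recognised_by(flags):
    """Return {bias: [interviewees flagged YES]}, dropping biases nobody showed."""
    summary = {}
    for bias, answers in flags.items():
        who = [name for name, answer in zip(INTERVIEWEES, answers) if answer == "YES"]
        if who:
            summary[bias] = who
    return summary


summary = recognised_by(FLAGS)

# List the most widely shared biases first; sorted() is stable,
# so biases with the same count keep their table order.
for bias, who in sorted(summary.items(), key=lambda item: -len(item[1])):
    print(f"{bias}: {', '.join(who)}")
```

Under these assumptions the tally yields the same eight biases and the same interviewee sets as the summary table, while the four biases flagged NO by all three interviewees drop out.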

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Schettini, I.; Palozzi, G.; Chirico, A. Enhancing Healthcare Decision-Making Process: Findings from Orthopaedic Field. Adm. Sci. 2020, 10, 94. https://doi.org/10.3390/admsci10040094