Subliminal Semantic Processing of Grasping Actions: Evidence from ERP Measures of Action-Verb Priming

Abstract

1. Introduction

2. Materials and Methods

2.1. Participants

2.2. Stimuli

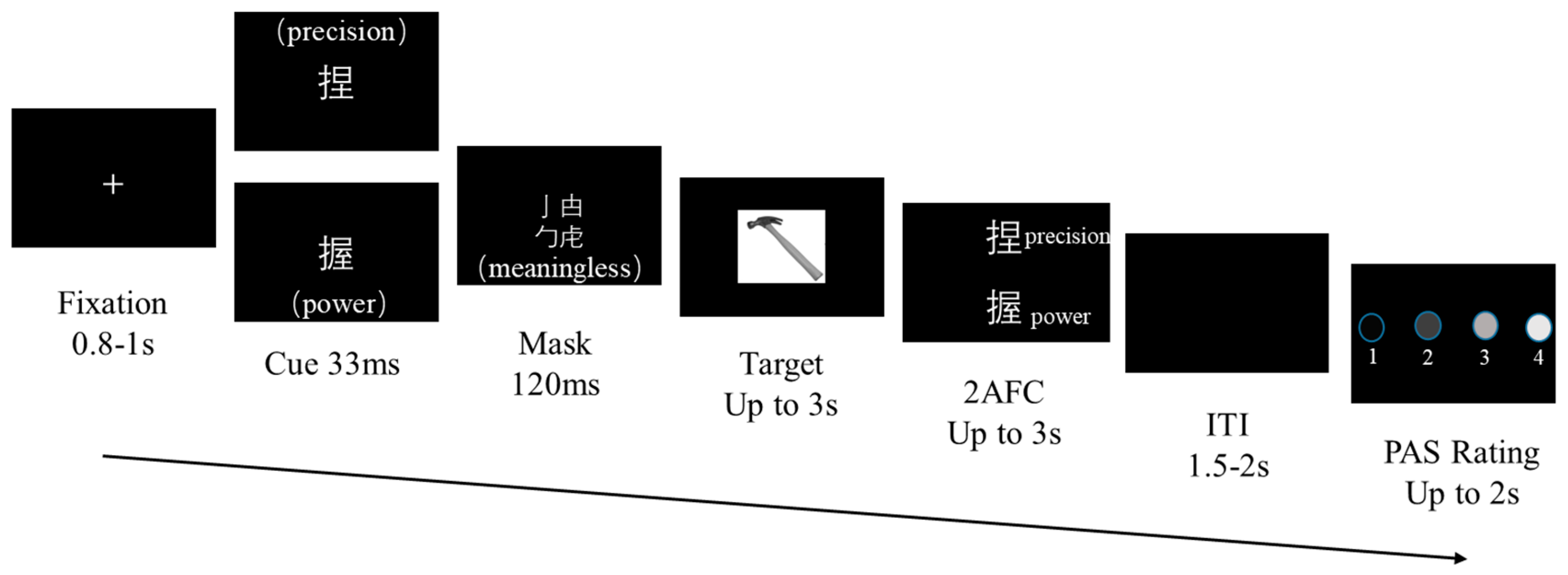

2.3. Task and Procedure

2.4. EEG Data Acquisition

2.5. EEG Data Analysis

3. Results

3.1. Behavior

3.1.1. An Objective Measure of Visual Awareness for Cue Stimuli

3.1.2. Subliminal Priming Effect

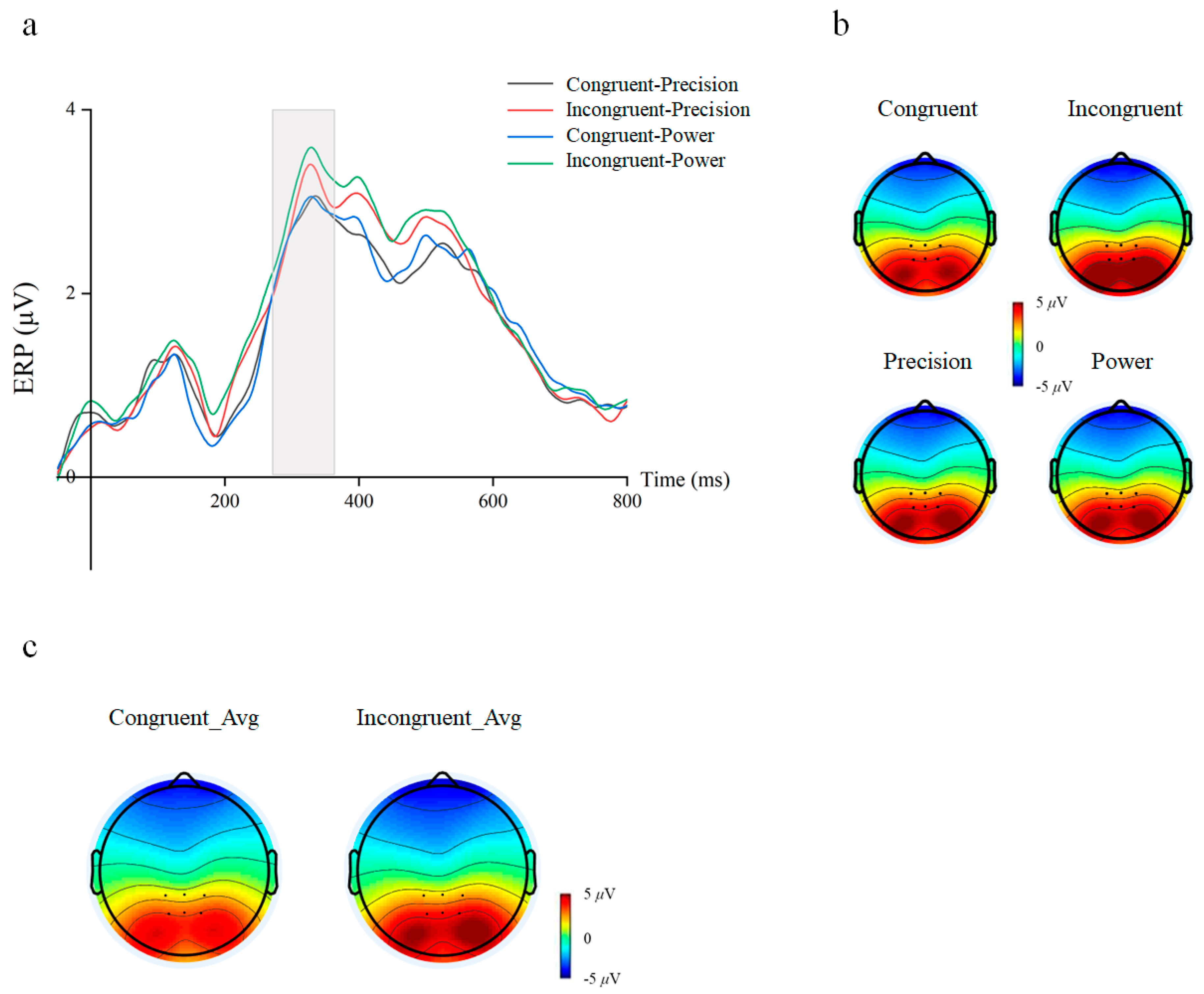

3.2. Electrophysiological Components of the Subliminal Priming Task

4. Discussion

4.1. Subthreshold Semantic Priming Effect of Action Verbs

4.2. Action Language and Action Recognition

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


Grasping-action types of the manipulable objects (sample size = 198) and chi-square test results.

| Manipulable Object | Precision Grip | Power Grip | Chi-Square Value | p Value |
|---|---|---|---|---|
| Brush | 195 | 3 | 186.18 | <0.001 |
| Fan | 193 | 5 | 178.92 | <0.001 |
| Hammer | 0 | 198 | 198 | <0.001 |
| Dryer | 2 | 196 | 190.94 | <0.001 |
| Clamp | 196 | 2 | 190.94 | <0.001 |
| Scissors | 198 | 0 | 198 | <0.001 |
| Stopwatch | 2 | 196 | 190.94 | <0.001 |
| Bottle | 3 | 195 | 186.18 | <0.001 |
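The chi-square values in the table look like a goodness-of-fit test of each object's precision/power counts against an equal split (99 vs. 99 out of 198). The sketch below recomputes them under that assumption only: expected frequency n/2 per grip type, df = 1, and no continuity correction. The function name `chi_square_equal_split` and the `COUNTS` mapping are hypothetical illustrations, not the authors' analysis code.

```python
from math import erfc, sqrt

# Precision-grip and power-grip counts per object, copied from the table.
COUNTS = {
    "Brush": (195, 3),
    "Fan": (193, 5),
    "Hammer": (0, 198),
    "Dryer": (2, 196),
    "Clamp": (196, 2),
    "Scissors": (198, 0),
    "Stopwatch": (2, 196),
    "Bottle": (3, 195),
}


def chi_square_equal_split(precision: int, power: int) -> tuple[float, float]:
    """Goodness-of-fit chi-square of two counts against an equal split.

    Assumption (hypothetical): expected frequency is n / 2 per grip type,
    df = 1, and no continuity (Yates) correction is applied.
    """
    expected = (precision + power) / 2
    chi2 = sum((observed - expected) ** 2 / expected for observed in (precision, power))
    # For df = 1, the chi-square survival function equals erfc(sqrt(chi2 / 2)).
    p_value = erfc(sqrt(chi2 / 2))
    return chi2, p_value


if __name__ == "__main__":
    for name, (precision, power) in COUNTS.items():
        chi2, p_value = chi_square_equal_split(precision, power)
        print(f"{name:<10} chi2 = {chi2:6.2f}, p = {p_value:.1e}")
```

Under these assumptions the sketch reproduces the reported 186.18 (195/3 and 3/195 splits) and 198 (198/0 and 0/198 splits) exactly, and every p value falls far below 0.001. For the 193/5 split it gives 178.51 and for the 196/2 splits 190.08, compared with 178.92 and 190.94 in the table, so those values may have been computed with a different procedure.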

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Yu, Y.; Li, A. Subliminal Semantic Processing of Grasping Actions: Evidence from ERP Measures of Action-Verb Priming. Behav. Sci. 2026, 16, 206. https://doi.org/10.3390/bs16020206