Toward the Trajectory Predictor for Automatic Train Operation System Using CNN–LSTM Network

Abstract

1. Introduction

- We focus on learning hidden patterns from normal train operation, which follows highly repetitive schedules. We therefore introduce a convolution layer into our previous LSTM-based method [24] to extract local interactions.

- We implement a modified LSTM trajectory prediction model that incorporates a CNN, allowing it to learn from large numbers of trajectories accurately and efficiently even when the prediction horizon rises to 4 s, which indicates that the proposed model performs better in long-term prediction.

- We compare the proposed algorithm with four trajectory prediction algorithms based on RNN, GRU, LSTM, and stateful LSTM, using seven real-life train trajectory datasets from Chengdu Metro Line 6. To the best of our knowledge, this is the first time such a comprehensive deep learning model has been tested and compared on this many real-life railway network trajectories.

2. Related Work

2.1. Statistical Method-Based Trajectory Prediction

2.2. Deep Learning-Based Trajectory Prediction

3. Methodology

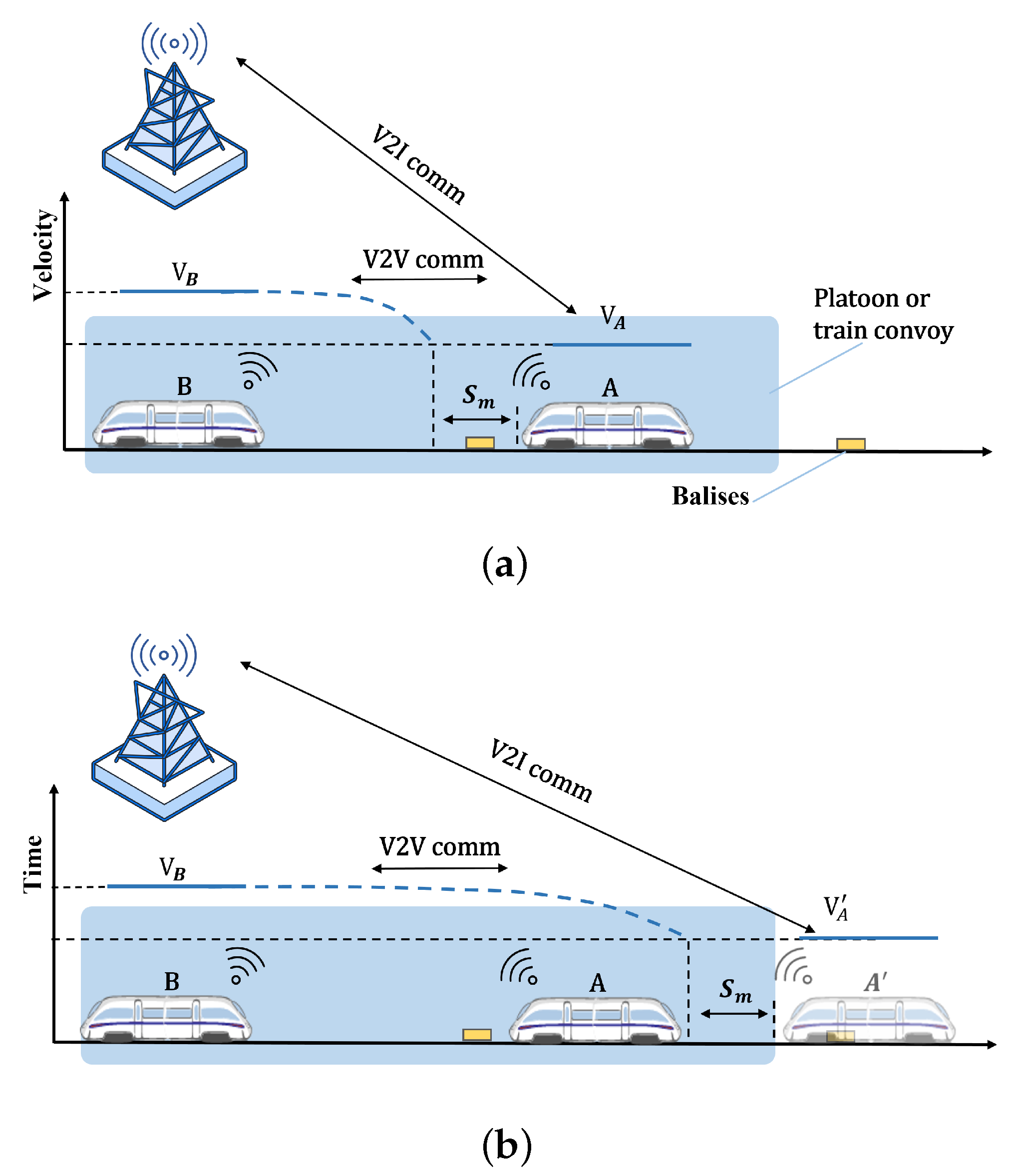

3.1. Problem Formulation

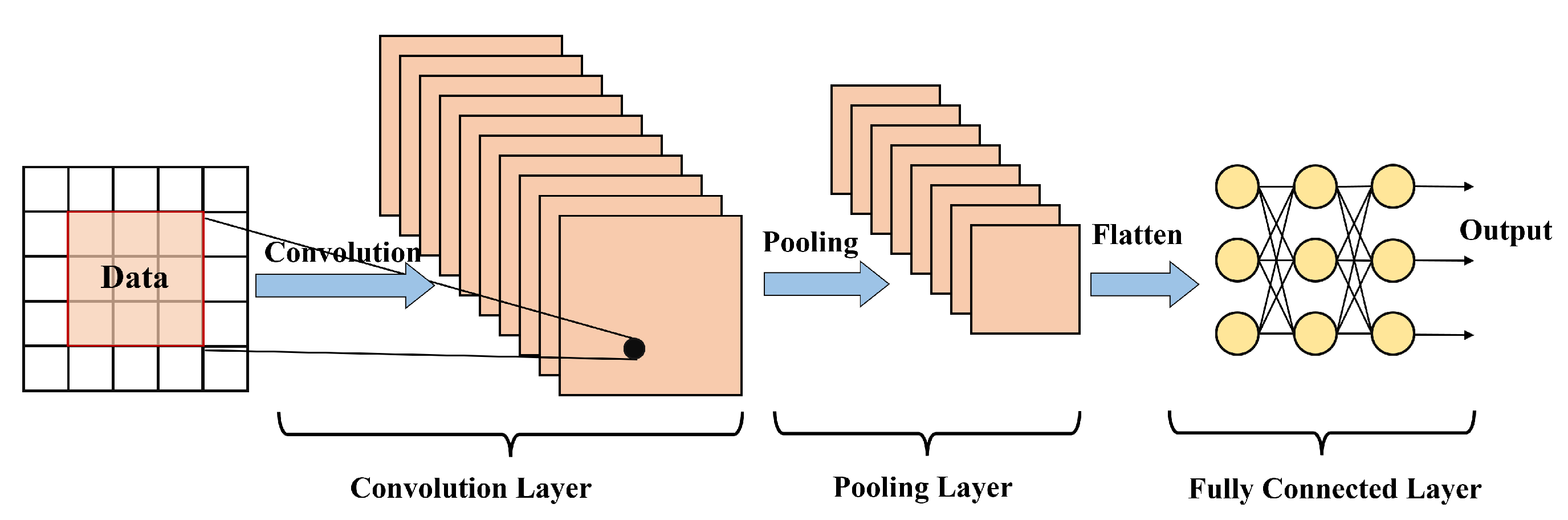
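Algorithm 1 (Section 3.4) takes the observed trajectory over the M historical time steps as input and outputs predicted trajectories. A minimal sketch of how such supervised (history, future) samples could be cut from one recorded trajectory is shown below. The feature layout (position and speed per step), the horizon `n_future`, and the function name `make_windows` are assumptions for illustration, not the authors' preprocessing.

```python
import numpy as np

def make_windows(traj: np.ndarray, m_hist: int, n_future: int):
    """Split one trajectory into (history, future) training pairs.

    traj     : array of shape (T, F), one row per time step
               (hypothetical features, e.g. position and speed)
    m_hist   : number of historical steps M fed to the model
    n_future : number of future steps to predict (hypothetical horizon)
    Returns X of shape (N, m_hist, F) and Y of shape (N, n_future, F).
    """
    T = traj.shape[0]
    n_samples = T - m_hist - n_future + 1
    if n_samples <= 0:
        raise ValueError("trajectory too short for the requested window")
    # slide a window of length m_hist + n_future one step at a time
    X = np.stack([traj[i:i + m_hist] for i in range(n_samples)])
    Y = np.stack([traj[i + m_hist:i + m_hist + n_future] for i in range(n_samples)])
    return X, Y

# Toy trajectory: 10 steps of (position, speed); values are synthetic.
t = np.arange(10, dtype=float)
traj = np.stack([t * 2.0, np.full_like(t, 2.0)], axis=1)
X, Y = make_windows(traj, m_hist=4, n_future=2)
print(X.shape, Y.shape)  # (5, 4, 2) (5, 2, 2)
```

A stride of one step keeps every possible window, which suits the highly repetitive schedules described in the introduction; a larger stride would trade sample count for less overlap between windows.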

3.2. CNN Network

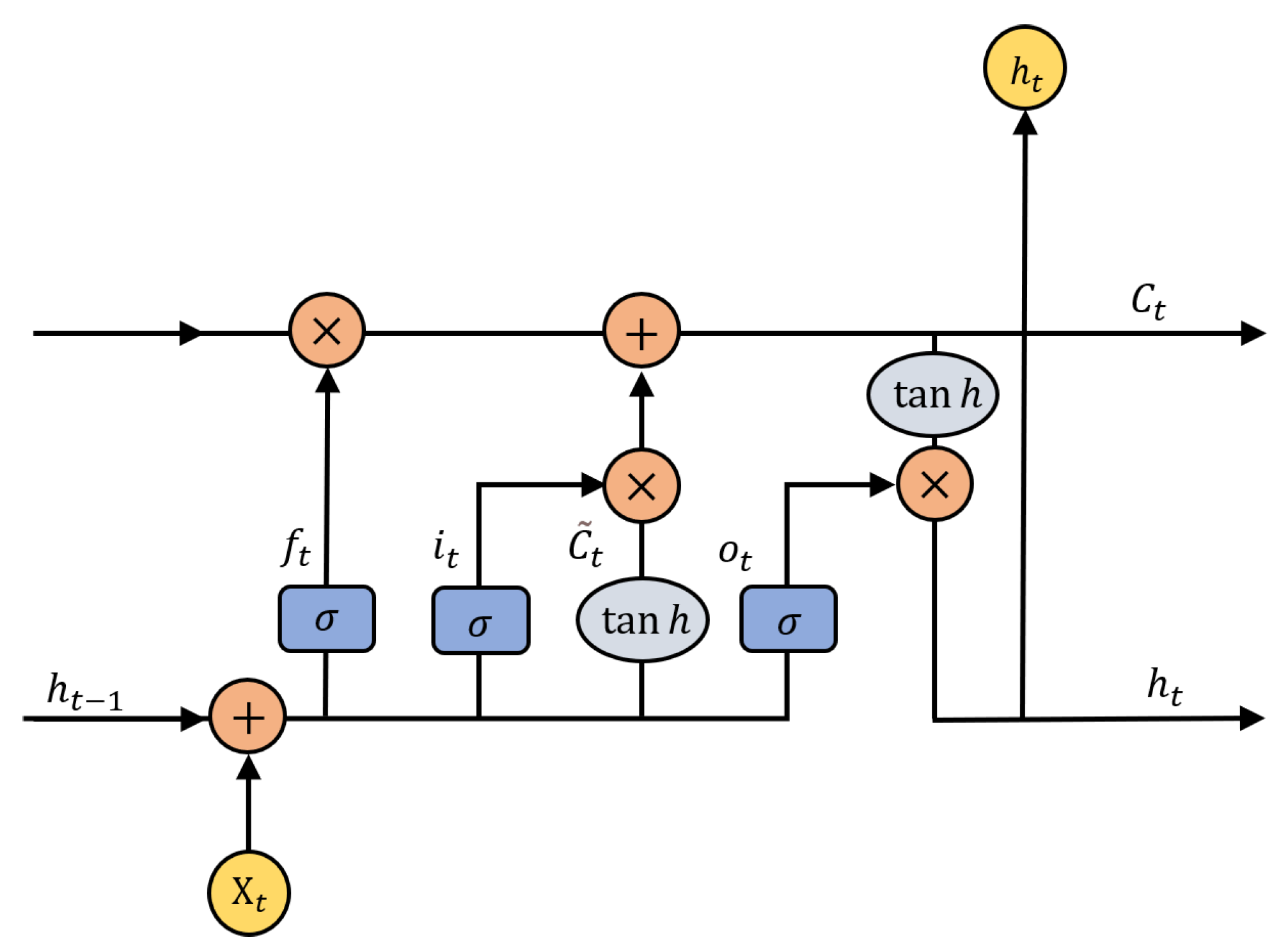
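The convolution layer is meant to extract local interactions within the trajectory window. As a hedged illustration, a 1D convolution slides each filter along the time axis of an (M, F) window, so every output step depends only on K neighbouring input steps. The kernel size, valid padding, ReLU activation, and the name `conv1d_valid` are assumptions, not settings reported for this model.

```python
import numpy as np

def conv1d_valid(x: np.ndarray, w: np.ndarray, b: np.ndarray) -> np.ndarray:
    """1D convolution over time (cross-correlation, as in most DL libraries).

    x : (M, F_in)        input window, M time steps, F_in features
    w : (K, F_in, F_out) filters spanning K consecutive steps
    b : (F_out,)         bias
    Returns the (M - K + 1, F_out) feature map after ReLU ("valid" padding).
    """
    K, M = w.shape[0], x.shape[0]
    out = np.empty((M - K + 1, w.shape[2]))
    for t in range(M - K + 1):
        # each output step mixes K neighbouring input steps: a local pattern
        out[t] = np.einsum("kf,kfo->o", x[t:t + K], w) + b
    return np.maximum(out, 0.0)  # ReLU

# Toy example: cumulative positions and a hand-set differencing filter.
x = np.array([[0.0], [1.0], [3.0], [6.0]])  # shape (M=4, F_in=1)
w = np.array([[[-1.0]], [[1.0]]])           # shape (K=2, 1, 1)
y = conv1d_valid(x, w, np.zeros(1))
print(y.ravel())  # [1. 2. 3.]
```

The hand-set filter turns positions into per-step displacements, a toy example of the kind of local pattern a learned filter could capture; in training, the filter weights would be learned rather than fixed.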

3.3. LSTM Network

3.3.1. Forget

3.3.2. Remember

3.3.3. Update

3.3.4. Output
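For reference, the four steps named in Sections 3.3.1 to 3.3.4 can be written with the widely used LSTM variant that includes a forget gate (a later addition to the original design of Hochreiter and Schmidhuber [43]). This is a standard textbook formulation; assigning "Remember" to the input gate and candidate memory and "Update" to the cell-state update is an assumption, and the paper's own notation may differ. Here $x_t$ is the input at step $t$, $h_{t-1}$ the previous hidden state, $[\cdot,\cdot]$ concatenation, $\sigma$ the logistic sigmoid, and $\odot$ element-wise multiplication.

$$
\begin{aligned}
f_t &= \sigma\left(W_f\,[h_{t-1}, x_t] + b_f\right) && \text{forget gate (Forget)}\\
i_t &= \sigma\left(W_i\,[h_{t-1}, x_t] + b_i\right) && \text{input gate (Remember)}\\
\tilde{c}_t &= \tanh\left(W_c\,[h_{t-1}, x_t] + b_c\right) && \text{candidate memory (Remember)}\\
c_t &= f_t \odot c_{t-1} + i_t \odot \tilde{c}_t && \text{cell-state update (Update)}\\
o_t &= \sigma\left(W_o\,[h_{t-1}, x_t] + b_o\right) && \text{output gate (Output)}\\
h_t &= o_t \odot \tanh\left(c_t\right) && \text{hidden state (Output)}
\end{aligned}
$$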

3.4. Hybrid CNN–LSTM Model

Algorithm 1 CNN–LSTM model

Input: observed trajectory data of trains over M historical time steps.

Output: a set of predicted trajectories.
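A minimal forward-pass sketch of the hybrid idea in Algorithm 1 is shown below: a 1D convolution extracts local features from the M-step window, an LSTM summarizes the resulting feature sequence, and a linear head emits the next `n_future` points in one shot from the last hidden state. Layer sizes, random initialization, the one-shot output head, and all names (`CNNLSTMSketch`, `predict`) are assumptions; training is omitted, and this is not the authors' implementation.

```python
import numpy as np

rng = np.random.default_rng(0)

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

class CNNLSTMSketch:
    """Hypothetical CNN-LSTM forward pass with untrained random weights."""

    def __init__(self, n_feat, n_filters=8, kernel=3, hidden=16, n_future=5):
        self.kernel, self.hidden = kernel, hidden
        self.n_future, self.n_feat = n_future, n_feat
        # convolution filters, shape (K, F_in, F_out)
        self.w_conv = rng.normal(0.0, 0.1, (kernel, n_feat, n_filters))
        self.b_conv = np.zeros(n_filters)
        # weights of the 4 LSTM gates stacked, acting on [h_{t-1}, x_t]
        self.W = rng.normal(0.0, 0.1, (4 * hidden, hidden + n_filters))
        self.b = np.zeros(4 * hidden)
        # linear head: final hidden state -> n_future * n_feat outputs
        self.W_out = rng.normal(0.0, 0.1, (n_future * n_feat, hidden))
        self.b_out = np.zeros(n_future * n_feat)

    def conv(self, x):
        steps = x.shape[0] - self.kernel + 1  # "valid" convolution length
        feats = np.stack([
            np.einsum("kf,kfo->o", x[t:t + self.kernel], self.w_conv)
            for t in range(steps)
        ]) + self.b_conv
        return np.maximum(feats, 0.0)  # ReLU

    def lstm(self, seq):
        H = self.hidden
        h, c = np.zeros(H), np.zeros(H)
        for x_t in seq:
            z = self.W @ np.concatenate([h, x_t]) + self.b
            f = sigmoid(z[:H])            # forget gate
            i = sigmoid(z[H:2 * H])       # input gate
            g = np.tanh(z[2 * H:3 * H])   # candidate memory
            o = sigmoid(z[3 * H:])        # output gate
            c = f * c + i * g             # cell-state update
            h = o * np.tanh(c)            # hidden state
        return h

    def predict(self, window):
        """window: (M, n_feat) history -> (n_future, n_feat) forecast."""
        h_last = self.lstm(self.conv(window))
        y = self.W_out @ h_last + self.b_out
        return y.reshape(self.n_future, self.n_feat)

model = CNNLSTMSketch(n_feat=2)
history = rng.normal(size=(10, 2))   # M = 10 synthetic steps
print(model.predict(history).shape)  # (5, 2)
```

The convolution shortens the sequence to M − K + 1 steps, so M must be at least the kernel size. The hidden state is reset for every window here; a stateful LSTM, like the baseline in the comparison, would instead carry it across consecutive windows.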

4. Experiment and Discussions

4.1. Datasets

4.2. Evaluation Metrics
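As a hedged illustration of how per-step error and hit rate, the two quantities reported in the result tables, can be computed: the sketch below assumes the error is a mean absolute position error at each future step and that a "hit" at step n means the error falls within a tolerance. The metric choice, the tolerance `tol`, and the function names are hypothetical and should be checked against the paper's own definitions before reuse.

```python
import numpy as np

def per_step_error(pred: np.ndarray, true: np.ndarray) -> np.ndarray:
    """Mean absolute error at each future step (assumed metric).

    pred, true : (N, n_future) predicted / observed positions
    Returns an (n_future,) array averaged over the N samples.
    """
    return np.mean(np.abs(pred - true), axis=0)

def hit_rate(pred: np.ndarray, true: np.ndarray, n: int, tol: float) -> float:
    """Fraction of samples whose error at step n (1-based) is within tol.

    tol is a hypothetical tolerance, not a value taken from the paper.
    """
    err_n = np.abs(pred[:, n - 1] - true[:, n - 1])
    return float(np.mean(err_n <= tol))

# Synthetic example with 4 samples and a 2-step horizon.
true = np.array([[10.0, 20.0], [10.0, 20.0], [10.0, 20.0], [10.0, 20.0]])
pred = np.array([[11.0, 23.0], [9.0, 20.0], [10.5, 26.0], [14.0, 21.0]])
print(per_step_error(pred, true))
print(hit_rate(pred, true, n=1, tol=2.0))  # 0.75
```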

4.3. Evaluation Setup

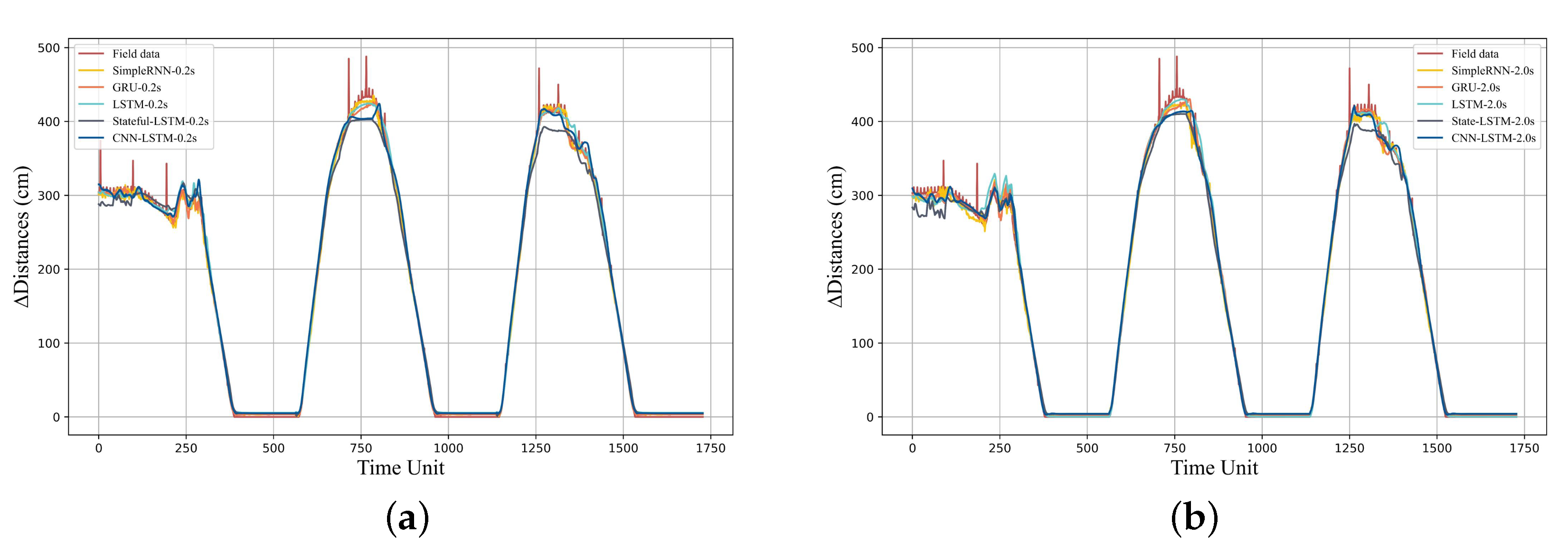

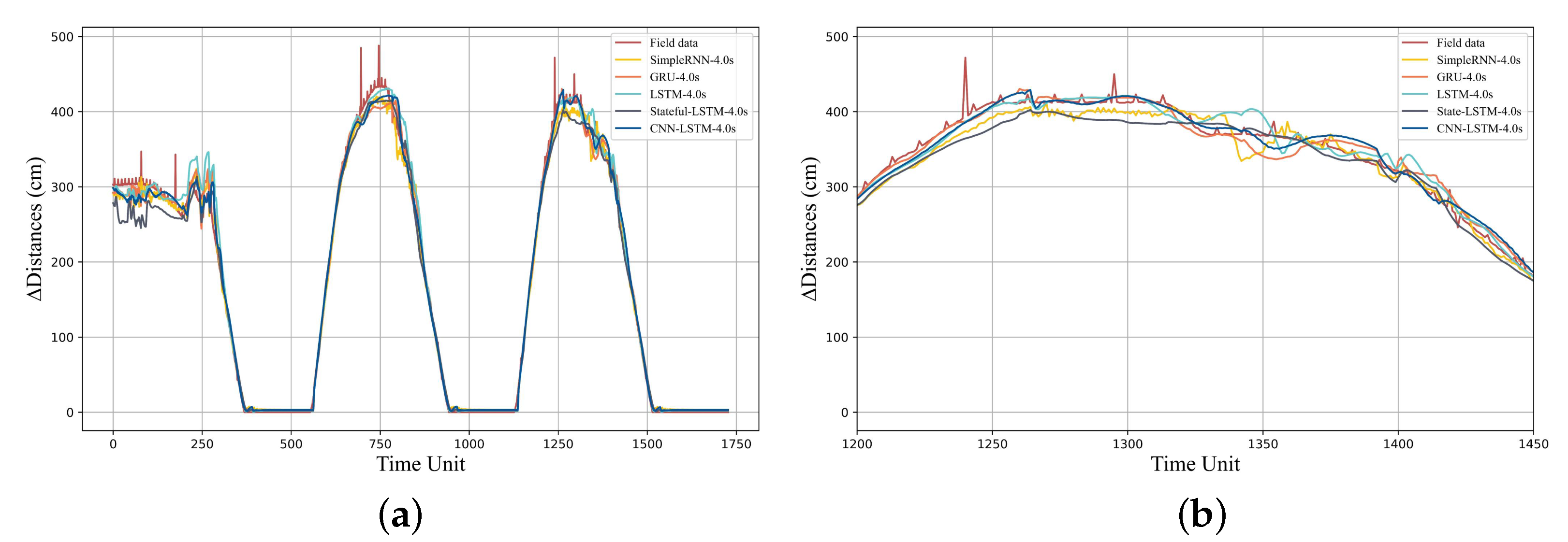

4.4. Comparative Analysis of Experimental Results

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Quaglietta, E.; Wang, M.; Goverde, R.M.P. A multi-state train-following model for the analysis of virtual coupling railway operations. J. Rail Transp. Plan. Manag. 2020, 15, 100195.

- Helbing, D.; Molnár, P. Social force model for pedestrian dynamics. Phys. Rev. E 1995, 51, 4282.

- Morzy, M. Mining Frequent Trajectories of Moving Objects for Location Prediction. In Proceedings of the Machine Learning and Data Mining in Pattern Recognition; Perner, P., Ed.; Springer: Berlin/Heidelberg, Germany, 2007; pp. 667–680.

- Qiao, S.; Han, N.; Wang, J.; Li, R.H.; Gutierrez, L.A.; Wu, X. Predicting Long-Term Trajectories of Connected Vehicles via the Prefix-Projection Technique. IEEE Trans. Intell. Transp. Syst. 2018, 19, 2305–2315.

- Kortüm, R. Mechatronic developments for railway vehicles of the future. Control Eng. Pract. 2002, 10, 887–898.

- Wang, X.; Tang, T.; Su, S.; Yin, J.; Lv, N. An integrated energy-efficient train operation approach based on the space-time-speed network methodology. Transp. Res. Part E Logist. Transp. Rev. 2021, 150, 102323.

- Shuai, S.A.; Xw, A.; Tao, T.A.; Gw, B.; Yuan, C. Energy-efficient operation by cooperative control among trains: A multi-agent reinforcement learning approach. Control Eng. Pract. 2021, 116, 104901.

- Sun, H.; Hou, Z.; Li, D. Coordinated Iterative Learning Control Schemes for Train Trajectory Tracking With Overspeed Protection. IEEE Trans. Autom. Sci. Eng. 2013, 10, 323–333.

- Shahi, T.B.; Shrestha, A.; Neupane, A.; Guo, W. Stock Price Forecasting with Deep Learning: A Comparative Study. Mathematics 2020, 8, 1441.

- Kong, J.; Yang, C.; Wang, J.; Wang, X.; Zuo, M.; Jin, X.; Lin, S. Deep-Stacking Network Approach by Multisource Data Mining for Hazardous Risk Identification in IoT-Based Intelligent Food Management Systems. Comput. Intell. Neurosci. 2021, 2021, 1194565.

- Yin, J.; Ning, C.; Tang, T. Data-driven models for train control dynamics in high-speed railways: LAG-LSTM for train trajectory prediction. Inf. Sci. 2022, 600, 377–400.

- Mishra, B.; Dahal, A.; Luintel, N.; Shahi, T.B.; Panthi, S.; Pariyar, S.; Ghimire, B.R. Methods in the spatial deep learning: Current status and future direction. Spatial Inf. Res. 2022, 30, 215–232.

- Choi, D.; Yim, J.; Baek, M.; Lee, S. Machine learning-based vehicle trajectory prediction using v2v communications and on-board sensors. Electronics 2021, 10, 420.

- Akiyama, T.; Inokuchi, H. Long term estimation of traffic demand on urban expressway by neural networks. In Proceedings of the 2014 Joint 7th International Conference on Soft Computing and Intelligent Systems (SCIS) and 15th International Symposium on Advanced Intelligent Systems (ISIS), Kita-Kyushu, Japan, 3–6 December 2014.

- Kim, B.D.; Kang, C.M.; Lee, S.H.; Chae, H.; Kim, J.; Chung, C.C.; Choi, J.W. Probabilistic Vehicle Trajectory Prediction over Occupancy Grid Map via Recurrent Neural Network. In Proceedings of the 2017 IEEE 20th International Conference on Intelligent Transportation Systems (ITSC), Yokohama, Japan, 16–19 October 2017.

- Altche, F.; Fortelle, A. An LSTM network for highway trajectory prediction. In Proceedings of the 2017 IEEE 20th International Conference on Intelligent Transportation Systems (ITSC), Yokohama, Japan, 16–19 October 2017.

- Graves, A.; Jaitly, N. Towards end-to-end speech recognition with recurrent neural networks. In Proceedings of the International Conference on Machine Learning, Beijing, China, 21–26 June 2014.

- Sutskever, I.; Martens, J.; Hinton, G.E. Generating Text with Recurrent Neural Networks. In Proceedings of the International Conference on Machine Learning, Bellevue, WA, USA, 29 June–1 July 2011.

- Gao, L.; Guo, Z.; Zhang, H.; Xu, X.; Shen, H.T. Video Captioning with Attention-based LSTM and Semantic Consistency. IEEE Trans. Multimedia 2017, 19, 2045–2055.

- Xiong, X.; Bhujel, N.; Teoh, E.; Yau, W. Prediction of Pedestrian Trajectory in a Crowded Environment Using RNN Encoder-Decoder. In Proceedings of the ICRAI ’19: 2019 5th International Conference on Robotics and Artificial Intelligence, Singapore, 22–24 November 2019.

- Liu, H.; Wu, H.; Sun, W.; Lee, I. Spatio-Temporal GRU for Trajectory Classification. In Proceedings of the 2019 IEEE International Conference on Data Mining (ICDM), Beijing, China, 8–11 November 2020.

- Alahi, A.; Goel, K.; Ramanathan, V.; Robicquet, A.; Savarese, S. Social LSTM: Human Trajectory Prediction in Crowded Spaces. In Proceedings of the 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016.

- Tba, B.; Adma, C.; Az, A.; Eo, A.; Ph, B. A graph CNN–LSTM neural network for short and long-term traffic forecasting based on trajectory data. Transp. Res. Part C Emerg. Technol. 2020, 112, 62–77.

- He, Y.; Lv, J.; Zhang, D.; Chai, M.; Liu, H.; Dong, H.; Tang, T. Trajectory Prediction of Urban Rail Transit Based on Long Short-Term Memory Network. In Proceedings of the 2021 IEEE International Intelligent Transportation Systems Conference (ITSC), Indianapolis, IN, USA, 19–22 September 2021; pp. 3945–3950.

- Yan, Z. Traj-ARIMA: A Spatial-Time Series Model for Network-Constrained Trajectory. In Proceedings of the CTS 10; ACM SIGSPATIAL International Workshop on Computational Transportation Science, Chicago, IL, USA, 1 November 2011.

- Wang, T.; Huang, B. 4D flight trajectory prediction model based on improved Kalman filter. J. Comput. Appl. 2014, 34, 1812.

- Wiest, J.; Hoffken, M.; Kresel, U.; Dietmayer, K. Probabilistic trajectory prediction with Gaussian mixture models. In Proceedings of the 2012 IEEE Intelligent Vehicles Symposium (IV), Madrid, Spain, 3–7 June 2012.

- Yoon, Y.; Kim, C.; Lee, J.; Yi, K. Interaction-Aware Probabilistic Trajectory Prediction of Cut-In Vehicles Using Gaussian Process for Proactive Control of Autonomous Vehicles. IEEE Access 2021, 9, 63440–63455.

- Rong, H.; Teixeira, A.P.; Soares, C.G. Ship trajectory uncertainty prediction based on a Gaussian Process model. Ocean Eng. 2019, 182, 499–511.

- Anderson, S.; Barfoot, T.D.; Tong, C.H.; Särkkä, S. Batch nonlinear continuous-time trajectory estimation as exactly sparse Gaussian process regression. Auton. Robots 2015, 39, 221–238.

- Qiao, S.; Shen, D.; Wang, X.; Han, N.; Zhu, W. A Self-Adaptive Parameter Selection Trajectory Prediction Approach via hidden Markov Models. IEEE Trans. Intell. Transp. Syst. 2015, 16, 284–296.

- Sushmitha, T.V.; Deepika, C.P.; Uppara, R.; Sai, R.N. Vehicle Trajectory Prediction using Non-Linear Input-Output Time Series Neural Network. In Proceedings of the International Conference on Power Electronics Applications and Technology in Present Energy Scenario, Mangalore, India, 29–31 August 2019.

- Chen, C.; Liu, L.; Qiu, T.; Ren, Z.; Hu, J.; Ti, F. Driver’s Intention Identification and Risk Evaluation at Intersections in the Internet of Vehicles. IEEE Internet Things J. 2018, 5, 1575–1587.

- Min, K.; Kim, D.; Park, J.; Huh, K. RNN-Based Path Prediction of Obstacle Vehicles With Deep Ensemble. IEEE Trans. Veh. Technol. 2019, 68, 10252–10256.

- Lee, N.; Choi, W.; Vernaza, P.; Choy, C.B.; Torr, P.; Chandraker, M. DESIRE: Distant Future Prediction in Dynamic Scenes with Interacting Agents. In Proceedings of the 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017.

- Park, S.H.; Kim, B.D.; Kang, C.M.; Chung, C.C.; Choi, J.W. Sequence-to-Sequence Prediction of Vehicle Trajectory via LSTM Encoder-Decoder Architecture. In Proceedings of the 2018 IEEE Intelligent Vehicles Symposium (IV), Changshu, China, 26–30 June 2018.

- Jin, X.B.; Gong, W.T.; Kong, J.L.; Bai, Y.T.; Su, T.L. PFVAE: A Planar Flow-Based Variational Auto-Encoder Prediction Model for Time Series Data. Mathematics 2022, 10, 610.

- Berenguer, A.D.; Alioscha-Perez, M.; Oveneke, M.C.; Sahli, H. Context-aware human trajectories prediction via latent variational model. IEEE Trans. Circuits Syst. Video Technol. 2020, 31, 1876–1889.

- Gupta, A.; Johnson, J.; Li, F.F.; Savarese, S.; Alahi, A. Social GAN: Socially Acceptable Trajectories with Generative Adversarial Networks. In Proceedings of the 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Salt Lake City, UT, USA, 18–23 June 2018.

- Duives, D.; Wang, G.; Kim, J. Forecasting Pedestrian Movements Using Recurrent Neural Networks: An Application of Crowd Monitoring Data. Sensors 2019, 19, 382.

- Adege, A.B.; Lin, H.P.; Wang, L.C. Mobility Predictions for IoT Devices Using Gated Recurrent Unit Network. IEEE Internet Things J. 2019, 7, 505–517.

- Lawrence, S.; Giles, C.; Tsoi, A.C.; Back, A. Face recognition: A convolutional neural-network approach. IEEE Trans. Neural Netw. 1997, 8, 98–113.

- Hochreiter, S.; Schmidhuber, J. Long Short-Term Memory. Neural Comput. 1997, 9, 1735–1780.

| Time Step | RNN | GRU | LSTM | Stateful LSTM | CNN–LSTM |
|---|---|---|---|---|---|
| 1 | 4.18 | 4.53 | 6.07 | 8.85 | 5.37 |
| 2 | 4.29 | 4.86 | 6.46 | 8.68 | 5.67 |
| 3 | 5.55 | 5.72 | 5.55 | 10.12 | 5.27 |
| 4 | 6.60 | 6.25 | 6.81 | 9.92 | 6.51 |
| 5 | 7.42 | 7.00 | 7.64 | 9.95 | 7.39 |
| 6 | 8.49 | 7.38 | 9.05 | 10.92 | 8.26 |
| 7 | 8.79 | 9.12 | 9.86 | 11.26 | 8.64 |
| 8 | 9.94 | 10.02 | 10.47 | 12.38 | 9.72 |
| 9 | 11.54 | 11.65 | 12.35 | 13.08 | 11.12 |
| 10 | 12.80 | 11.98 | 11.76 | 13.47 | 11.53 |
| 11 | 13.34 | 13.54 | 13.36 | 14.59 | 13.08 |
| 12 | 14.73 | 14.14 | 13.86 | 15.61 | 13.58 |
| 13 | 15.45 | 16.11 | 15.13 | 16.53 | 15.48 |
| 14 | 16.24 | 16.89 | 16.73 | 17.02 | 15.69 |
| 15 | 18.36 | 18.00 | 17.51 | 18.61 | 15.93 |
| 16 | 18.80 | 18.43 | 17.64 | 19.01 | 17.94 |
| 17 | 20.50 | 20.02 | 18.54 | 20.10 | 19.33 |
| 18 | 20.61 | 20.75 | 20.40 | 21.07 | 19.71 |
| 19 | 23.21 | 22.20 | 21.02 | 22.39 | 20.79 |
| 20 | 24.17 | 23.63 | 21.81 | 23.28 | 21.33 |

| Hit Rate | n = 1 | n = 5 | n = 10 | n = 15 | n = 20 |
|---|---|---|---|---|---|
| RNN | 0.98 | 0.97 | 0.96 | 0.93 | 0.91 |
| GRU | 0.98 | 0.98 | 0.96 | 0.94 | 0.89 |
| LSTM | 0.97 | 0.92 | 0.91 | 0.90 | 0.87 |
| Stateful LSTM | 0.90 | 0.88 | 0.88 | 0.88 | 0.83 |
| CNN–LSTM | 0.96 | 0.96 | 0.96 | 0.95 | 0.93 |


© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


He, Y.; Lv, J.; Liu, H.; Tang, T. Toward the Trajectory Predictor for Automatic Train Operation System Using CNN–LSTM Network. Actuators 2022, 11, 247. https://doi.org/10.3390/act11090247
