A Lightweight and Explainable AI Framework Toward Automated Infraocclusion Detection in Pediatric Panoramic Radiographs

Abstract

1. Introduction

1.1. Motivation

1.2. Related Works

1.3. The Scope and Contributions

- A computationally efficient two-stage deep learning framework is designed and evaluated for infraocclusion detection in pediatric panoramic radiographs.

- The proposed approach emphasizes lightweight architecture, reduced parameter complexity, and low inference latency while maintaining high diagnostic performance.

- Extensive comparative analyses against established classification and detection backbones are conducted to position the framework within current deep learning approaches.

- Explainable AI (XAI) techniques are integrated to improve interpretability and support clinical validation.
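
The two-stage design named in the contributions (ROI detection followed by infraocclusion classification) can be sketched as below. This is a minimal illustration only, not the authors' implementation: the `detect` and `classify` callables, the `Finding` record, and both thresholds are hypothetical placeholders standing in for the trained detector and classifier.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence, Tuple

Image = List[List[float]]        # grayscale image as rows of pixel values
Box = Tuple[int, int, int, int]  # (x1, y1, x2, y2), with x2/y2 exclusive


@dataclass
class Finding:
    box: Box
    detection_score: float
    infraocclusion_prob: float
    label: str


def crop(image: Image, box: Box) -> Image:
    """Cut the ROI out of the image, clamping the box to the image bounds."""
    height, width = len(image), len(image[0])
    x1, y1, x2, y2 = box
    x1, y1 = max(0, x1), max(0, y1)
    x2, y2 = min(width, x2), min(height, y2)
    return [row[x1:x2] for row in image[y1:y2]]


def run_two_stage(
    image: Image,
    detect: Callable[[Image], Sequence[Tuple[Box, float]]],  # hypothetical stage 1
    classify: Callable[[Image], float],                      # hypothetical stage 2
    det_threshold: float = 0.5,                              # assumed value
    cls_threshold: float = 0.5,                              # assumed value
) -> List[Finding]:
    """Stage 1 proposes ROIs; stage 2 scores each sufficiently confident ROI."""
    findings = []
    for box, score in detect(image):
        if score < det_threshold:
            continue  # skip low-confidence detections
        roi = crop(image, box)
        if not roi or not roi[0]:
            continue  # box fell entirely outside the image
        prob = classify(roi)
        label = "infraocclusion" if prob >= cls_threshold else "no infraocclusion"
        findings.append(Finding(box, score, prob, label))
    return findings


if __name__ == "__main__":
    # Stub models so the sketch runs without any trained weights.
    dummy_image = [[0.0] * 8 for _ in range(6)]
    stub_detect = lambda img: [((1, 1, 5, 4), 0.97), ((0, 0, 2, 2), 0.20)]
    stub_classify = lambda roi: 0.91
    for finding in run_two_stage(dummy_image, stub_detect, stub_classify):
        print(finding)
```

Keeping the two stages behind plain callables means either model can be swapped for another backbone without touching the control flow.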

2. System Architecture

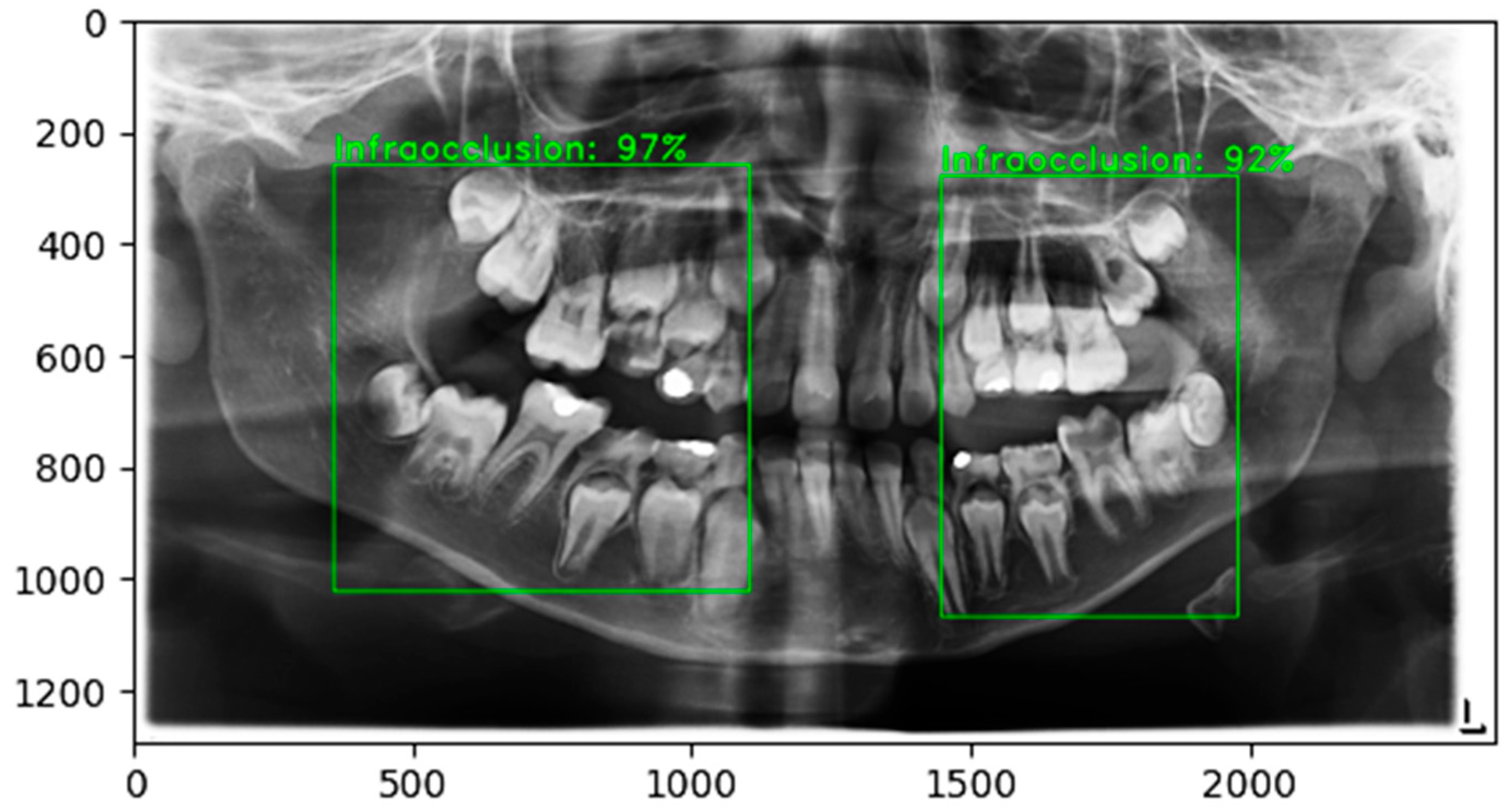

2.1. Stage 1: Detection of ROI

2.2. Stage 2: Infraocclusion Classification

3. Materials

3.1. Dataset

3.2. Data Augmentation

3.3. Training Details

3.4. Evaluation Metrics

3.5. Implementation

4. Results

4.1. Model Convergence

4.2. Detection Performance

4.3. Classification Performance and Overall System Accuracy

4.4. Computational Performance

4.5. Comparative Analysis

4.6. Interpretability

5. Discussion

5.1. Comparison to Prior Study and Clinical Relevance

5.2. Clinical Workflow Integration

5.3. Limitations and Future Directions

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

| Dataset Split | Images with Infraocclusion | Images with No Infraocclusion | Total Number of Samples |
|---|---|---|---|
| Training set | 933 | 933 | 1866 |
| Validation set | 201 | 200 | 401 |
| Test set | 200 | 200 | 400 |
| Total | 1334 | 1333 | 2667 |

| Metrics | | | | AP50 | AP75 |
|---|---|---|---|---|---|
| Value | 0.99 | 0.99 | 0.99 | 0.99 | 0.89 |

| Metrics | Estimate | 95% Wilson CI (%) |
|---|---|---|
| | 98.78% | [97.55, 99.42] |
| | 0.9878 | [97.55, 99.42] |
| | 0.9986 | [98.86, 99.98] |
| | 0.9960 | [98.60, 99.80] |
| | 0.9800 | [96.64, 99.07] |
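
For readers who want to see how a Wilson score interval such as those in the table above is obtained, the following is a minimal sketch of the standard formula. The sample counts behind the reported intervals are not given here, so the numbers in the usage lines are hypothetical and are not expected to reproduce the table.

```python
import math
from typing import Tuple


def wilson_interval(successes: int, n: int, z: float = 1.959964) -> Tuple[float, float]:
    """Wilson score interval for a binomial proportion (z = 1.96 gives about 95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    if not 0 <= successes <= n:
        raise ValueError("successes must lie in [0, n]")
    p_hat = successes / n
    denom = 1.0 + z * z / n
    center = (p_hat + z * z / (2 * n)) / denom
    half_width = z * math.sqrt(p_hat * (1 - p_hat) / n + z * z / (4 * n * n)) / denom
    # Clamp to [0, 1] to absorb floating-point rounding at the extremes.
    return max(0.0, center - half_width), min(1.0, center + half_width)


if __name__ == "__main__":
    # Hypothetical counts: 395 correct predictions out of 400 test samples.
    low, high = wilson_interval(395, 400)
    print(f"accuracy = {395 / 400:.4f}, 95% Wilson CI = [{low:.4f}, {high:.4f}]")
```

Unlike the simple Wald interval, the Wilson interval stays inside [0, 1] and remains informative when the estimate is close to 100%, which is the regime of the values reported here.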

| Stage | Parameters (M) | Model Size (MB) | Training Time (min) | Inference (ms) |
|---|---|---|---|---|
| Detection | 4.48 | 21.82 | 39 | 19 |
| Classification | 1.88 | 7.19 | 8 | 0.8 |
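
The per-stage figures above can be combined into a rough end-to-end footprint. The sketch below assumes that the stages run sequentially and that the 0.8 ms classification time applies to each ROI; neither assumption is stated in the table, and the function name and `n_rois` parameter are illustrative.

```python
# Per-stage figures copied from the table above.
DETECTION = {"params_m": 4.48, "size_mb": 21.82, "latency_ms": 19.0}
CLASSIFICATION = {"params_m": 1.88, "size_mb": 7.19, "latency_ms": 0.8}


def pipeline_footprint(n_rois: int = 1) -> dict:
    """Combined parameters, size, and latency for one radiograph.

    Assumes one detection pass plus one classification pass per ROI.
    """
    if n_rois < 0:
        raise ValueError("n_rois must be non-negative")
    latency_ms = DETECTION["latency_ms"] + n_rois * CLASSIFICATION["latency_ms"]
    return {
        "params_m": round(DETECTION["params_m"] + CLASSIFICATION["params_m"], 2),
        "size_mb": round(DETECTION["size_mb"] + CLASSIFICATION["size_mb"], 2),
        "latency_ms": round(latency_ms, 2),
        "images_per_second": round(1000.0 / latency_ms, 1),
    }


if __name__ == "__main__":
    for rois in (1, 4, 8):
        print(rois, pipeline_footprint(rois))
```

Under these assumptions the detection stage dominates the latency budget, so the number of ROIs per image has only a small effect on throughput.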

| Metrics | Faster R-CNN ResNet50 V1 | SSD MobileNet V2 FPNLite |
|---|---|---|
| Training Time (min) | 115 | 39 |
| Inference Latency (ms) | 44 | 19 |
| | 0.99 | 0.99 |
| | 0.99 | 0.99 |
| | 0.99 | 0.99 |
| AP50 | 0.99 | 0.99 |
| AP75 | 0.92 | 0.89 |

| Metrics | EfficientNetB0 | EfficientNetB3 | EfficientNetB6 | Inception V3 | ResNext50 | Proposed CNN |
|---|---|---|---|---|---|---|
| Training Time (min) | 10 | 16 | 44 | 26 | 25 | 8 |
| Total Parameters (M) | 4.13 | 10.89 | 41.10 | 21.88 | 19.73 | 1.88 |
| Size of Total Parameters (MB) | 15.43 | 41.18 | 156.29 | 83.38 | 79.43 | 7.19 |
| Inference Latency (ms) | 0.8 | 1 | 1.3 | 1.3 | 1.2 | 0.8 |

| Metrics | EfficientNetB0 | EfficientNetB3 | EfficientNetB6 | Inception V3 | ResNext50 | Proposed CNN |
|---|---|---|---|---|---|---|
| Accuracy (%) | 50 | 61.75 | 93.25 | 88.24 | 87.40 | 98.78 |
| Loss | 0.88 | 0.71 | 0.31 | 0.38 | 0.47 | 0.05 |
| Accuracy for Infraocclusion (%) | 0 | 28.00 | 88.5 | 86.48 | 80.80 | 99.25 |
| Accuracy for No infraocclusion (%) | 100 | 95.50 | 98 | 90 | 94 | 98.31 |
| | 0.50 | 0.62 | 0.93 | 0.88 | 0.87 | 0.99 |
| | 0 | 0.28 | 0.88 | 0.86 | 0.94 | 0.98 |
| | 1 | 0.95 | 0.98 | 0.90 | 0.80 | 0.99 |
| | 0.5 | 0.62 | 0.96 | 0.96 | 0.93 | 0.99 |
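
As a consistency check on the table above, the overall accuracy reported for each model equals the unweighted mean of its two per-class accuracies. This is what one would expect when both classes have the same number of evaluation samples, as in the balanced 200/200 test split. The sketch below recomputes that mean from the tabulated values; the dictionary and function names are illustrative.

```python
# (accuracy for infraocclusion, accuracy for no infraocclusion), in percent,
# copied from the table above.
PER_CLASS_ACCURACY = {
    "EfficientNetB0": (0.0, 100.0),
    "EfficientNetB3": (28.00, 95.50),
    "EfficientNetB6": (88.5, 98.0),
    "Inception V3": (86.48, 90.0),
    "ResNext50": (80.80, 94.0),
    "Proposed CNN": (99.25, 98.31),
}

# Overall accuracy (%) as reported in the same table.
REPORTED_ACCURACY = {
    "EfficientNetB0": 50.0,
    "EfficientNetB3": 61.75,
    "EfficientNetB6": 93.25,
    "Inception V3": 88.24,
    "ResNext50": 87.40,
    "Proposed CNN": 98.78,
}


def mean_per_class_accuracy(positive_acc: float, negative_acc: float) -> float:
    """Unweighted mean of the two per-class accuracies (balanced accuracy)."""
    return (positive_acc + negative_acc) / 2.0


if __name__ == "__main__":
    for model, (pos, neg) in PER_CLASS_ACCURACY.items():
        recomputed = mean_per_class_accuracy(pos, neg)
        gap = abs(recomputed - REPORTED_ACCURACY[model])
        print(f"{model:15s} recomputed={recomputed:6.2f} reported={REPORTED_ACCURACY[model]:6.2f} gap={gap:.3f}")
```

The EfficientNetB0 column illustrates why per-class figures matter: an overall accuracy of 50% on a balanced set is produced here by a model that assigns every sample to the "no infraocclusion" class.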

| Model | 95% Wilson CI (%) | 95% Wilson CI | p-Value (DeLong’s Test) | p-Value (McNemar’s Test) |
|---|---|---|---|---|
| EfficientNetB6 | [0.9083, 0.9528] | [0.9099, 0.9606] | 0.042 | 0.049 |
| EfficientNetB3 | [0.5635, 0.6628] | [0.5736, 0.6598] | 0.0009 | 0.0015 |
| EfficientNetB0 | [0.4912, 0.5088] | [0.4991, 0.5009] | 0.00002 | 0.00003 |
| Inception V3 | [0.8492, 0.9128] | [0.8507, 0.9087] | 0.0019 | 0.0023 |
| ResNext50 | [0.8391, 0.9039] | [0.8378, 0.9025] | 0.0021 | 0.0024 |
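
McNemar's test compares two classifiers evaluated on the same samples by looking only at the discordant pairs, that is, the samples one model classifies correctly and the other does not. The sketch below implements the exact (binomial) variant. Whether the exact or the chi-square form was used for the table above is not stated, and the discordant counts in the usage lines are hypothetical.

```python
import math


def mcnemar_exact(b: int, c: int) -> float:
    """Two-sided exact McNemar p-value.

    b: samples model A classifies correctly and model B incorrectly
    c: samples model B classifies correctly and model A incorrectly
    Under the null hypothesis each discordant pair is a fair coin flip.
    """
    if b < 0 or c < 0:
        raise ValueError("counts must be non-negative")
    n = b + c
    if n == 0:
        return 1.0  # no discordant pairs, no evidence of a difference
    k = min(b, c)
    tail = sum(math.comb(n, i) for i in range(k + 1)) / 2 ** n
    return min(1.0, 2.0 * tail)


if __name__ == "__main__":
    # Hypothetical discordant counts for a baseline versus a stronger model.
    print(f"p = {mcnemar_exact(3, 25):.6f}")
```

Because only discordant pairs enter the statistic, two models with similar accuracies can still differ significantly if they err on different samples.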

| Model | EfficientNetB0 | EfficientNetB3 | EfficientNetB6 | Inception V3 | ResNext50 | Proposed CNN |
|---|---|---|---|---|---|---|
| Localization Specificity | Low-Moderate | Low | Moderate | Moderate | Low | High |
| Anatomical Coherence | Moderate | Moderate | Moderate | Moderate | Low-Moderate | High |
| Spatial Diffusion | High | High | Moderate | Moderate | High | Low |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Palaz, Z.H.; Cege, E.E.; Maiga, B.; Dalveren, Y.; Dalveren, G.G.M.; Kara, A.; Soylu, A.; Derawi, M. A Lightweight and Explainable AI Framework Toward Automated Infraocclusion Detection in Pediatric Panoramic Radiographs. Diagnostics 2026, 16, 866. https://doi.org/10.3390/diagnostics16060866
