Scalar-on-Function Relative Error Regression for Weak Dependent Case

Abstract

1. Introduction

2. The Re-Regression Model and Its Estimation

3. The Consistency of the Kernel Estimator

- (D1)

- For all , and .

- (D2)

- For all ,

- (D3)

- The covariance coefficient is , such that

- (D4)

- K is the Lipschitzian kernel function, which has as support and satisfies the following:

- (D5)

- The endogenous variable Y gives:

- (D6)

- For all ,

- (D7)

- There exist and

- Brief comment on the conditions: The conditions stated above are standard in Hilbertian time series analysis, and each one addresses a fundamental aspect of this contribution. Condition (D1) accounts for the functional nature of the data, (D2) characterizes the nonparametric nature of the model, and (D3) and (D6) control the degree of correlation of the Hilbertian time series. The principal parameters of the estimator, namely the kernel and the bandwidth, are governed by conditions (D4), (D5), and (D6). These conditions are technical in nature; they make it possible to retain the usual convergence rate in nonparametric Hilbertian time series analysis.

- (K1)

- has a bounded derivative on ;

- (K2)

- The function , such thatwhere , are positive and bounded functions, and is an invertible function;

- (K3)

- There exist and such that

4. Smoothing Parameter Selection

4.1. Leave-One-Out Cross-Validation Principle

4.2. Bootstrap Approach

- Step 1.

- We choose an arbitrary bandwidth (resp. ), and we calculate (resp. ).

- Step 2.

- We estimate (resp. ).

- Step 3.

- We create a sample of residual (resp. ) from the distributionwhere is the Dirac measure (see Hardle and Marron [19] for more details).

- Step 4.

- We reconstruct the sample (resp.

- Step 5.

- We use the sample to calculate and to calculate .

- Step 6.

- We repeat the previous steps times and put (resp. ), the estimators, at the replication r.

- Step 7.

- We select h (resp. k) according to the criteriaand
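The bootstrap steps above can be sketched in code for the bandwidth h only (the nearest-neighbour parameter k is not covered). This is a minimal illustration under several assumptions that the text does not confirm: the estimator is taken to be the ratio-form relative-error kernel smoother, sum of K·Y⁻¹ over sum of K·Y⁻²; the residuals are resampled with the two-point "golden section" wild-bootstrap distribution usually associated with Hardle and Marron [19]; and the selection criterion is the average squared gap between the bootstrap fits and the pilot fit. The function names (`l2_distances`, `quadratic_kernel`, `re_regression`, `bootstrap_bandwidth`) and the toy data are hypothetical, and responses are assumed to stay strictly positive after resampling.

```python
import numpy as np

def l2_distances(curves, grid_step):
    """Pairwise L2 distances between discretized curves (one curve per row)."""
    diff = curves[:, None, :] - curves[None, :, :]
    return np.sqrt((diff ** 2).sum(axis=2) * grid_step)

def quadratic_kernel(u):
    """Kernel supported on [0, 1] and Lipschitz there (an illustrative choice)."""
    return np.where((u >= 0) & (u <= 1), 1.0 - u ** 2, 0.0)

def re_regression(dist, y, h):
    """Assumed ratio-form relative-error estimate at each evaluation point.

    dist has shape (n_train, n_eval); y holds strictly positive responses.
    """
    w = quadratic_kernel(dist / h)
    num = (w / y[:, None]).sum(axis=0)        # sum_i K_i / Y_i
    den = (w / y[:, None] ** 2).sum(axis=0)   # sum_i K_i / Y_i^2
    safe_den = np.where(den > 0, den, 1.0)    # avoid 0/0 when no neighbour falls in the window
    return np.where(den > 0, num / safe_den, np.nan)

def bootstrap_bandwidth(dist, y, candidates, pilot_h, n_boot=50, seed=0):
    """Pick h among candidates by a wild-bootstrap criterion (assumed form)."""
    rng = np.random.default_rng(seed)
    pilot = re_regression(dist, y, pilot_h)   # Step 1: pilot fit with an arbitrary bandwidth
    resid = y - pilot                         # Step 2: estimated residuals
    # Two-point distribution on the residual scale (assumption of this sketch).
    a, b = (1 - np.sqrt(5)) / 2, (1 + np.sqrt(5)) / 2
    p_a = (5 + np.sqrt(5)) / 10
    scores = np.zeros(len(candidates))
    for _ in range(n_boot):                   # Step 6: repeat over replications
        factor = rng.choice([a, b], size=y.size, p=[p_a, 1 - p_a])
        y_star = pilot + resid * factor       # Steps 3-4: resampled residuals, rebuilt sample
        for j, h in enumerate(candidates):    # Step 5: refit on the bootstrap sample
            fit = re_regression(dist, y_star, h)
            scores[j] += np.nanmean((fit - pilot) ** 2)
    scores /= n_boot
    return candidates[int(np.argmin(scores))], scores  # Step 7: minimize the criterion

if __name__ == "__main__":
    # Toy data: random-amplitude sine curves with a positive scalar response.
    rng = np.random.default_rng(1)
    t = np.linspace(0, 1, 20)
    amp = rng.uniform(0, 1, 40)
    curves = amp[:, None] * np.sin(2 * np.pi * t)[None, :]
    y = 5 + 2 * amp + 0.1 * rng.standard_normal(40)
    dist = l2_distances(curves, t[1] - t[0])
    h_best, crit = bootstrap_bandwidth(dist, y, [0.05, 0.1, 0.2, 0.4], pilot_h=0.2)
    print("selected h:", h_best)
    print("criterion values:", crit)
```

The toy data are independent draws, so the sketch illustrates only the mechanics of the steps, not the weakly dependent setting studied in the paper. A distance matrix is passed instead of raw curves so that any semi-metric can be swapped in without touching the bootstrap loop.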

5. Computational Study

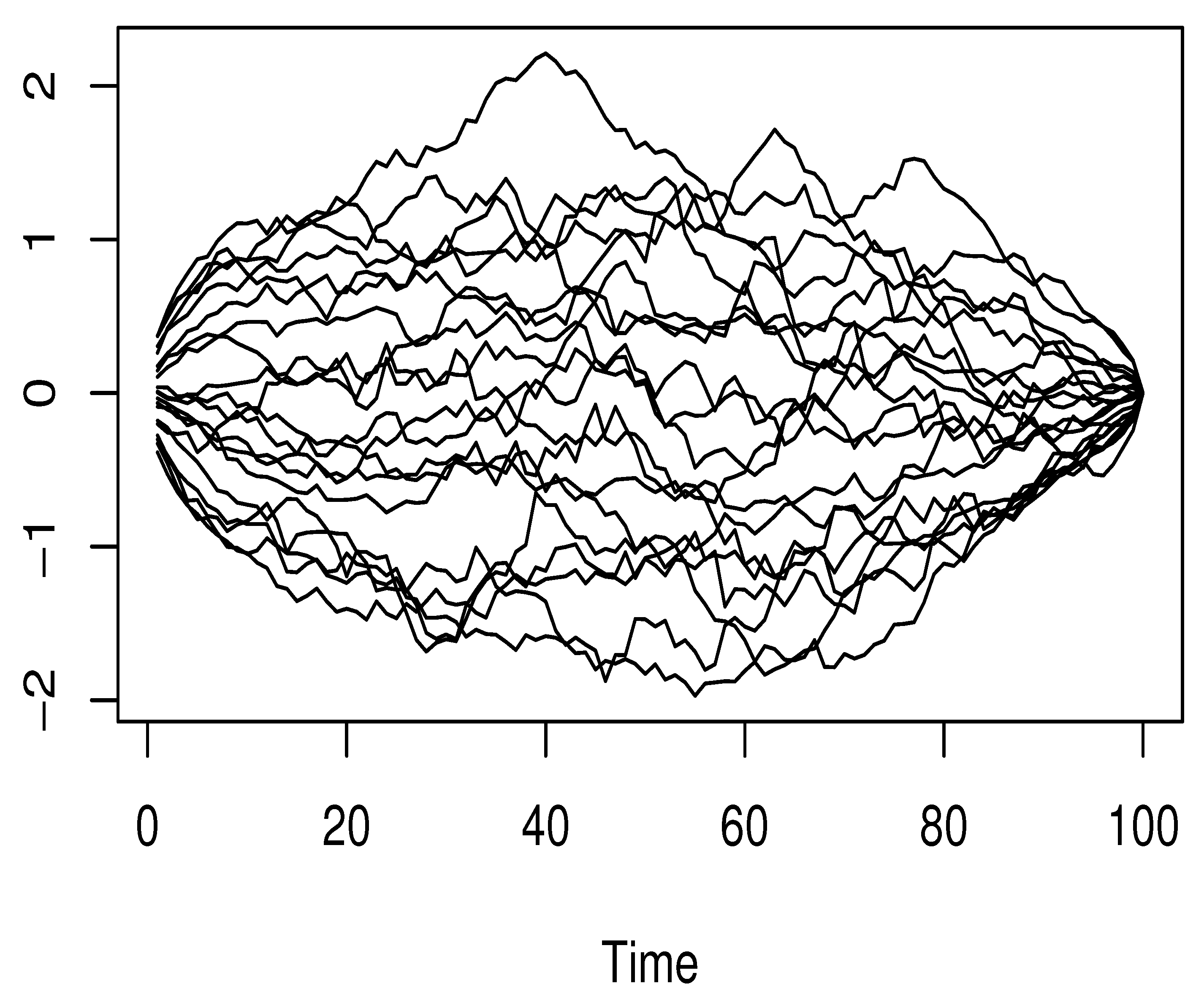

5.1. Empirical Analysis

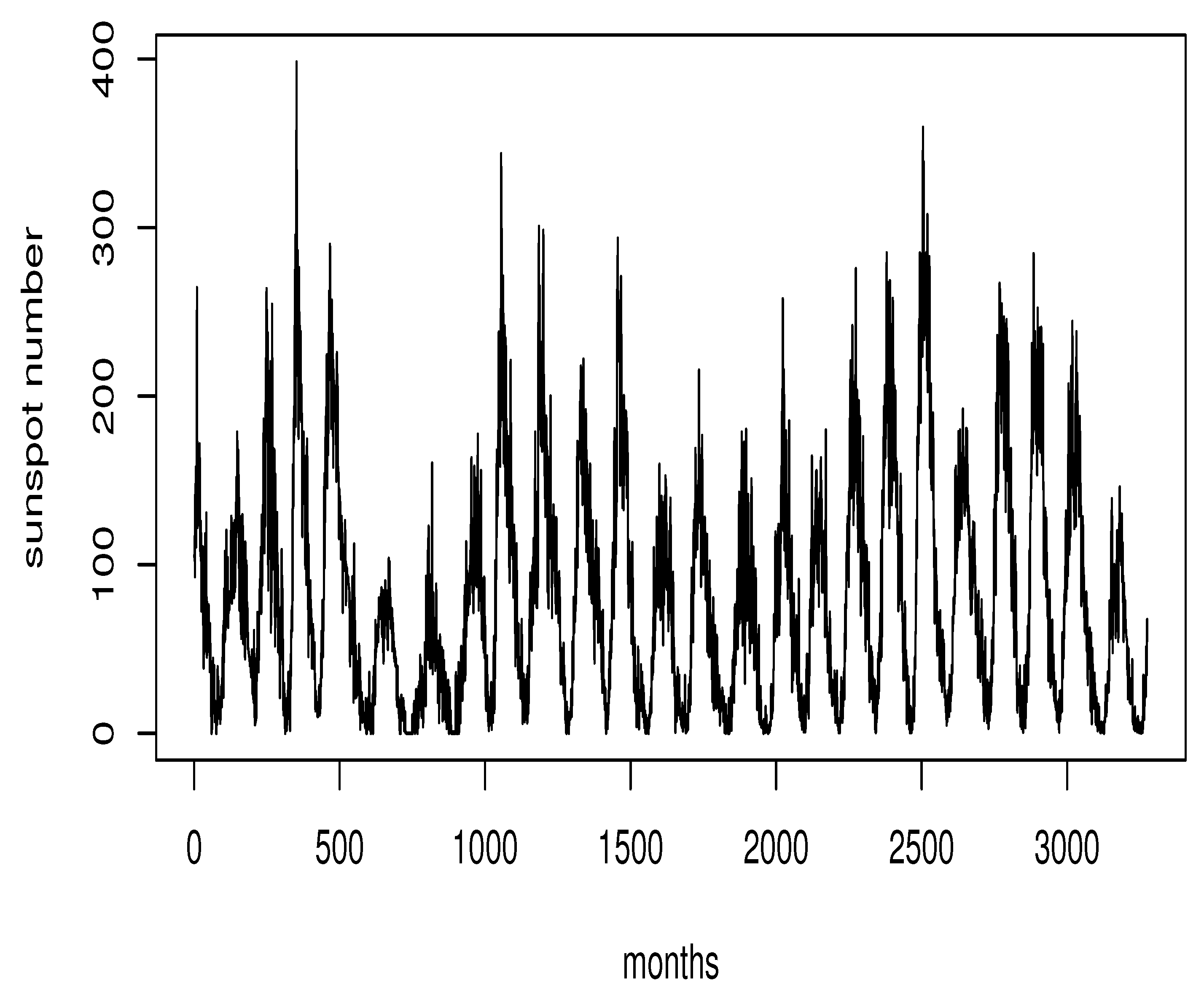

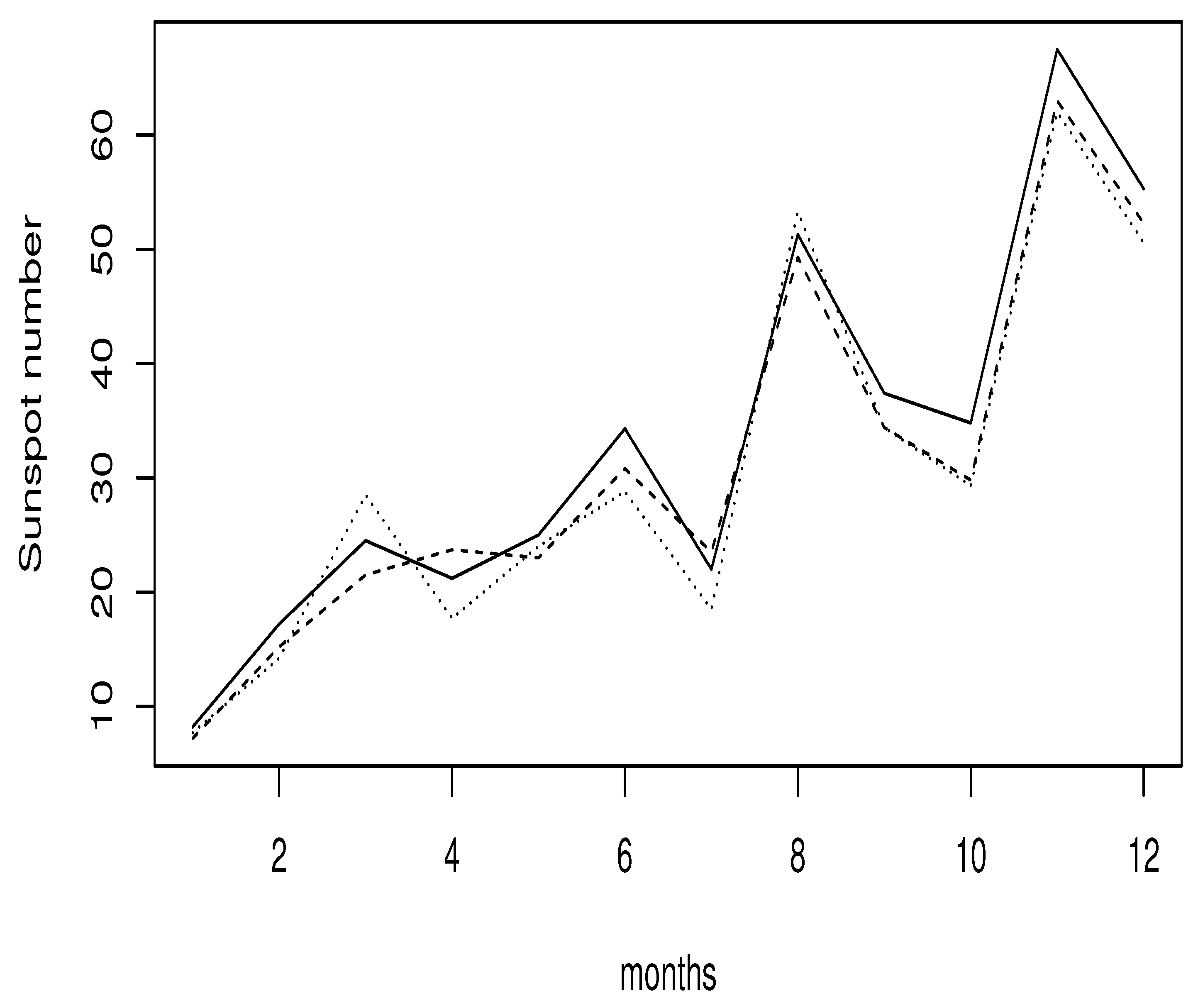

5.2. A Real Data Application

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Appendix A

- The first case is ; based on the definition of quasi-association, we obtainOn the other hand, we haveFurthermore, taking a -power of (A9) and a -power of (A10), we get for

- The second one is where . In this case, we have

References

- Narula, S.C.; Wellington, J.F. Prediction, linear regression and the minimum sum of relative errors. Technometrics 1977, 19, 185–190.

- Chatfield, C. The joys of consulting. Significance 2007, 4, 33–36.

- Chen, K.; Guo, S.; Lin, Y.; Ying, Z. Least absolute relative error estimation. J. Am. Statist. Assoc. 2010, 105, 1104–1112.

- Yang, Y.; Ye, F. General relative error criterion and M-estimation. Front. Math. China 2013, 8, 695–715.

- Jones, M.C.; Park, H.; Shin, K.-I.; Vines, S.K.; Jeong, S.-O. Relative error prediction via kernel regression smoothers. J. Stat. Plan. Inference 2008, 138, 2887–2898.

- Mechab, W.; Laksaci, A. Nonparametric relative regression for associated random variables. Metron 2016, 74, 75–97.

- Attouch, M.; Laksaci, A.; Messabihi, N. Nonparametric RE-regression for spatial random variables. Stat. Pap. 2017, 58, 987–1008.

- Demongeot, J.; Hamie, A.; Laksaci, A.; Rachdi, M. Relative-error prediction in nonparametric functional statistics: Theory and practice. J. Multivar. Anal. 2016, 146, 261–268.

- Cuevas, A. A partial overview of the theory of statistics with functional data. J. Stat. Plan. Inference 2014, 147, 1–23.

- Goia, A.; Vieu, P. An introduction to recent advances in high/infinite dimensional statistics. J. Multivar. Anal. 2016, 146, 1–6.

- Ling, N.; Vieu, P. Nonparametric modelling for functional data: Selected survey and tracks for future. Statistics 2018, 52, 934–949.

- Aneiros, G.; Cao, R.; Fraiman, R.; Genest, C.; Vieu, P. Recent advances in functional data analysis and high-dimensional statistics. J. Multivar. Anal. 2019, 170, 3–9.

- Aneiros, G.; Horova, I.; Hušková, M.; Vieu, P. On functional data analysis and related topics. J. Multivar. Anal. 2022, 189, 3–9.

- Chowdhury, J.; Chaudhuri, P. Convergence rates for kernel regression in infinite-dimensional spaces. Ann. Inst. Stat. Math. 2020, 72, 471–509.

- Li, B.; Song, J. Dimension reduction for functional data based on weak conditional moments. Ann. Stat. 2022, 50, 107–128.

- Douge, L. Théorèmes limites pour des variables quasi-associées hilbertiennes. Ann. L’Isup 2010, 54, 51–60.

- Bouzebda, S.; Laksaci, A.; Mohammedi, M. The k-nearest neighbors method in single index regression model for functional quasi-associated time series data. Rev. Mat. Complut. 2023, 36, 361–391.

- Ferraty, F.; Vieu, P. Nonparametric Functional Data Analysis: Theory and Practice; Springer Series in Statistics; Springer: New York, NY, USA, 2006.

- Hardle, W.; Marron, J.S. Bootstrap simultaneous error bars for nonparametric regression. Ann. Stat. 1991, 16, 1696–1708.

- Wilcox, R. Introduction to Robust Estimation and Hypothesis Testing; Elsevier Academic Press: Burlington, MA, USA, 2005.

- Kallabis, R.S.; Neumann, M.H. An exponential inequality under weak dependence. Bernoulli 2006, 12, 333–335.

| Months | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | 11 | 12 |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Outliers | 15 | 26 | 13 | 5 | 24 | 25 | 7 | 9 | 11 | 8 | 9 | 15 |

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Chikr Elmezouar, Z.; Alshahrani, F.; Almanjahie, I.M.; Kaid, Z.; Laksaci, A.; Rachdi, M. Scalar-on-Function Relative Error Regression for Weak Dependent Case. Axioms 2023, 12, 613. https://doi.org/10.3390/axioms12070613