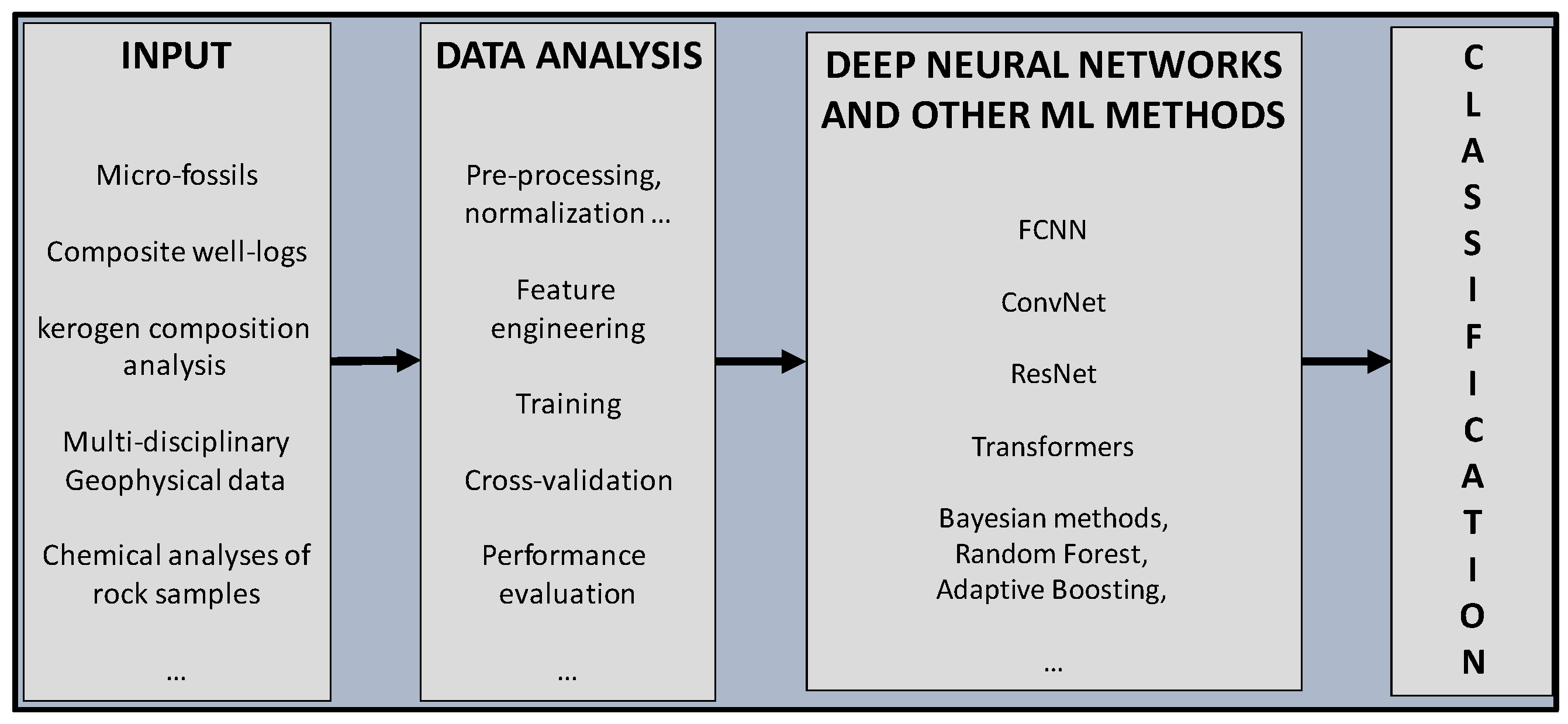

This section describes how we applied the deep learning methods briefly explained in the previous section, starting with simple fully connected neural networks and progressing to more advanced deep residual networks, to classify images of mineral thin sections obtained from real samples. Because the test dataset also serves a didactic purpose, the following subsections explain the main steps of the classification workflow in detail, clarifying why and how we selected each hyper-parameter of our network architecture. Our goal is to show how to test and evaluate the effectiveness of the different approaches in classifying mineral species from their microscope images.

3.1. Classification of Mineralogical Thin Sections Using FCNN

In this test, we used a dataset consisting of about 200 thin sections of rocks and minerals (link to the dataset: http://www.alexstrekeisen.it/index.php, accessed on 20 February 2023; courtesy of Alessandro Da Mommio). We collected these images to create a labeled dataset for training the deep learning models, which we subsequently used to classify new images. The thin sections are presented as low-resolution color (RGB) JPEG images with a resolution of 96 dpi and a size of 275 × 183 pixels. The objective of this study was to classify the thin sections into four distinct classes: augite, biotite, olivine, and plagioclase. Although the classification may seem straightforward, it was relatively challenging because different minerals share similar geometric features and because corrosion and alteration can modify their appearance. Furthermore, we performed an additional classification test on four types of sedimentary rocks, using a set of JPEG images of thin sections. In this second test, we applied a suite of machine learning techniques (decision tree, random forest, adaptive boosting, support vector machine, and naive Bayes), with the goal of comparing these classification approaches with deep learning methods.

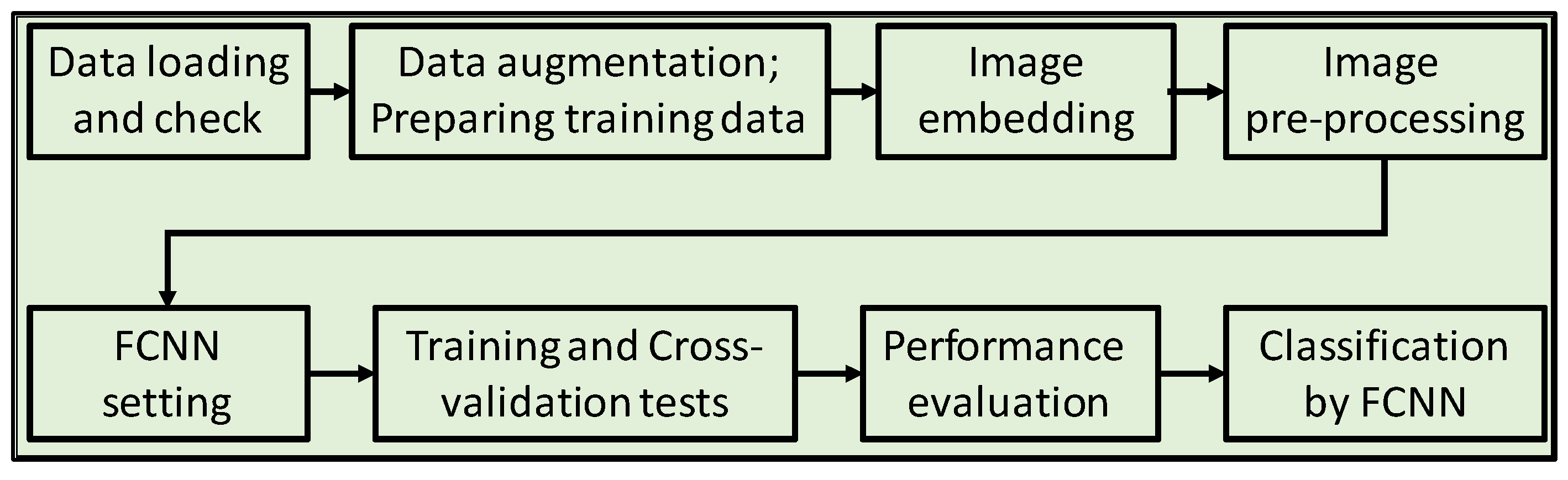

In the following part, we describe in detail all the steps of the workflow (see the scheme in Figure 2).

3.1.1. Data Augmentation and Preparation of the Training Dataset

After loading and checking the data, we created a set of examples to train the FCNN. In this phase, the assistance of an expert in mineralogy was crucial for labeling a significant number of representative images of the various mineralogical classes. Due to the limited number of available images, the training dataset used in this study was relatively small. Defining a minimum number of training examples is a challenging task because it varies depending on the complexity of the classification problem, the image quality and heterogeneity, and the algorithms used. To estimate the number of training examples required, specific tests can be performed, as explained below in the section dedicated to cross-validation tests. To address the issue of the small training dataset, we applied dataset augmentation techniques to add “artificial” images. These techniques apply transformation operators to the original data, including flipping (vertically and horizontally), rotating, zooming and scaling, cropping, translating the image (moving it along the x or y axis), and adding Gaussian noise (distortion of high-frequency features). For this pre-processing, we used Python libraries available in the TensorFlow package. The underlying concept behind this data augmentation approach is that the accuracy of the neural network model can be significantly improved by combining different operators across the original dataset.
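A few of the transformation operators listed above can be sketched with plain NumPy array operations. This is only a minimal illustration, not the TensorFlow pipeline used in this study: the function name `augment`, the 10-pixel shift, the noise level, and the synthetic random image standing in for a thin section are all assumptions made for the example.

```python
import numpy as np

def augment(image, rng, noise_std=5.0):
    """Return a few augmented variants of an H x W x 3 uint8 image (illustrative only)."""
    variants = {
        "flip_horizontal": image[:, ::-1, :],              # mirror left-right
        "flip_vertical": image[::-1, :, :],                # mirror top-bottom
        "rotate_180": np.rot90(image, k=2, axes=(0, 1)),   # rotation that keeps the shape
    }
    # Translation along x: shift 10 pixels to the right, zero-padding the vacated columns.
    shifted = np.zeros_like(image)
    shifted[:, 10:, :] = image[:, :-10, :]
    variants["translate_x"] = shifted
    # Additive Gaussian noise, clipped back to the valid 0-255 range.
    noisy = image.astype(np.float64) + rng.normal(0.0, noise_std, size=image.shape)
    variants["gaussian_noise"] = np.clip(noisy, 0, 255).astype(np.uint8)
    return variants

rng = np.random.default_rng(0)
# Synthetic stand-in with the image size quoted in the text (275 x 183 pixels, RGB).
image = rng.integers(0, 256, size=(183, 275, 3), dtype=np.uint8)
augmented = augment(image, rng)
for name, variant in augmented.items():
    print(name, variant.shape)
```

Each original image yields several additional training examples that keep the label of the source image.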

3.1.2. Image Embedding

Image embedding is a technique used in computer vision and machine learning to convert an image into a feature vector, which can then be used for tasks such as image classification, object detection, and image retrieval. It involves transforming an image into a set of numbers that can be easily processed by machine learning algorithms.

We tested various algorithms and techniques for embedding our images (JPEG files of mineralogical thin sections), and finally, we adopted the SqueezeNet technique. This is a neural network architecture that uses a combination of convolutional layers and modules to extract features from images. We selected SqueezeNet because it was more computationally efficient than the other methods while still preserving high accuracy. The other techniques that we tested are the following:

VGG-16 and VGG-19: These are convolutional neural networks that were developed by the Visual Geometry Group at Oxford University. They consist of 16 or 19 layers, respectively, and are known for their effectiveness in image classification tasks.

Painters: This is a convolutional neural network trained to predict the painter from images of artworks. The internal representation that the network learns for that task is reused as the image embedding.

DeepLoc: This is a convolutional neural network designed specifically for the task of protein subcellular localization, which involves determining the location of proteins within cells based on microscopic images. The internal representation of the network is used as the image embedding.

3.1.3. Pre-Processing

Before training the FCNN and using it for image classification, it was necessary to pre-process all the images of the datasets. The following are the main pre-processing steps applied to our data.

Normalization: Normalizing image data is an important step to ensure that values across all images (after embedding operations) are on a common scale. This can prevent the dominance of certain features due to differences in their ranges of values. In general, when dealing with numerical features, centering the data by mean or median can help to shift the data so that the central value is closer to zero. Scaling the data by standard deviation can help to further adjust the data to a suitable range for analysis.

Randomization: Randomizing instances and classes is a useful step for reducing bias and ensuring that the classification model is robust to the order in which the data are presented. This helps to ensure that the final models are not biased towards any particular pattern or structure in the data.

Removing sparse features: This is another useful step that can help to simplify the data and remove noise. Features with a high percentage of missing or zero values may not contribute much to the classification task and can be safely removed.

PCA: Principal component analysis is a common technique used for dimensionality reduction. It can help to identify the most important features in the data and reduce the number of features, while still retaining much of the original information.

CUR matrix decomposition: This is another technique for dimensionality reduction that can help to reduce the computational complexity of the analysis. Similar to PCA, it identifies the most important features in the data and reduces the number of features without sacrificing too much information. We tested it but ultimately applied only PCA.
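The steps above (randomization, removal of sparse features, normalization, and PCA) can be sketched in a few lines of NumPy. This is an illustration under stated assumptions, not the exact pipeline of this study: the function name `preprocess`, the 90% sparsity threshold, the number of retained components, and the random matrix standing in for the embedded images are all hypothetical.

```python
import numpy as np

def preprocess(features, max_zero_fraction=0.9, n_components=2):
    """Drop sparse columns, standardize, and project onto the leading principal components."""
    # Remove sparse features: columns that are zero in most of the samples.
    zero_fraction = np.mean(features == 0, axis=0)
    kept = features[:, zero_fraction <= max_zero_fraction]
    # Normalization: center by the mean and scale by the standard deviation.
    mean = kept.mean(axis=0)
    std = kept.std(axis=0)
    std[std == 0] = 1.0  # avoid division by zero for constant columns
    standardized = (kept - mean) / std
    # PCA through the singular value decomposition of the standardized matrix.
    _, singular_values, components = np.linalg.svd(standardized, full_matrices=False)
    projected = standardized @ components[:n_components].T
    explained = singular_values**2 / np.sum(singular_values**2)
    return projected, explained[:n_components]

rng = np.random.default_rng(0)
features = rng.normal(size=(40, 6))   # stand-in for 40 embedded images with 6 features
features[:, 5] = 0.0                  # an all-zero (sparse) feature
features = rng.permutation(features)  # randomization: shuffle the order of the samples
projected, explained = preprocess(features)
print(projected.shape, explained)
```

In a real workflow the labels must be shuffled with the same permutation as the feature rows, and the mean, standard deviation, and principal components should be estimated on the training data only.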

3.1.4. Fully Connected Neural Network (FCNN) Hyper-Parameters

After data preparation, feature extraction, and pre-processing, the next crucial step was to optimize the hyper-parameters of our FCNN. Many parameters must be set to make a deep neural network effective, and adjusting them in an optimal way can be a difficult and subjective task. However, there are automatic approaches for that purpose, such as specific reinforcement learning methods that help define the optimal parameters of a neural network for reproducing a desired output. Many combinations of hyper-parameters can be tested automatically, and the effectiveness of each combination is verified through cross-validation tests, as explained in the following. The crucial network parameters are:

N-hl and N-Neurons: These represent, respectively, the number of hidden layers and the number of neurons populating each hidden layer. We tested neural networks with a minimum of 1 up to a maximum of 10 hidden layers, using a number of neurons ranging from 100 to 300 for each hidden layer. Among the possible choices, after many tests, we selected a quite simple architecture with three hidden layers and 200 neurons for each layer.

Activation function for the hidden layers: In deep neural networks, activation functions are mathematical functions that are applied to the output of each neuron in the network. These functions introduce non-linearity to the output of each neuron, allowing the network to learn complex patterns in the input data. There are several types of activation functions, including:

Logistic Sigmoid Function: This function takes an input value and returns a value between 0 and 1, which can be interpreted as a probability. It is defined as:

σ(x) = 1/(1 + exp(−x)), where x represents the input vector.

Hyperbolic Tangent Function: This function takes a vector of input values x and returns values between −1 and 1. It is defined as tanh(x) = (exp(x) − exp(−x))/(exp(x) + exp(−x)):

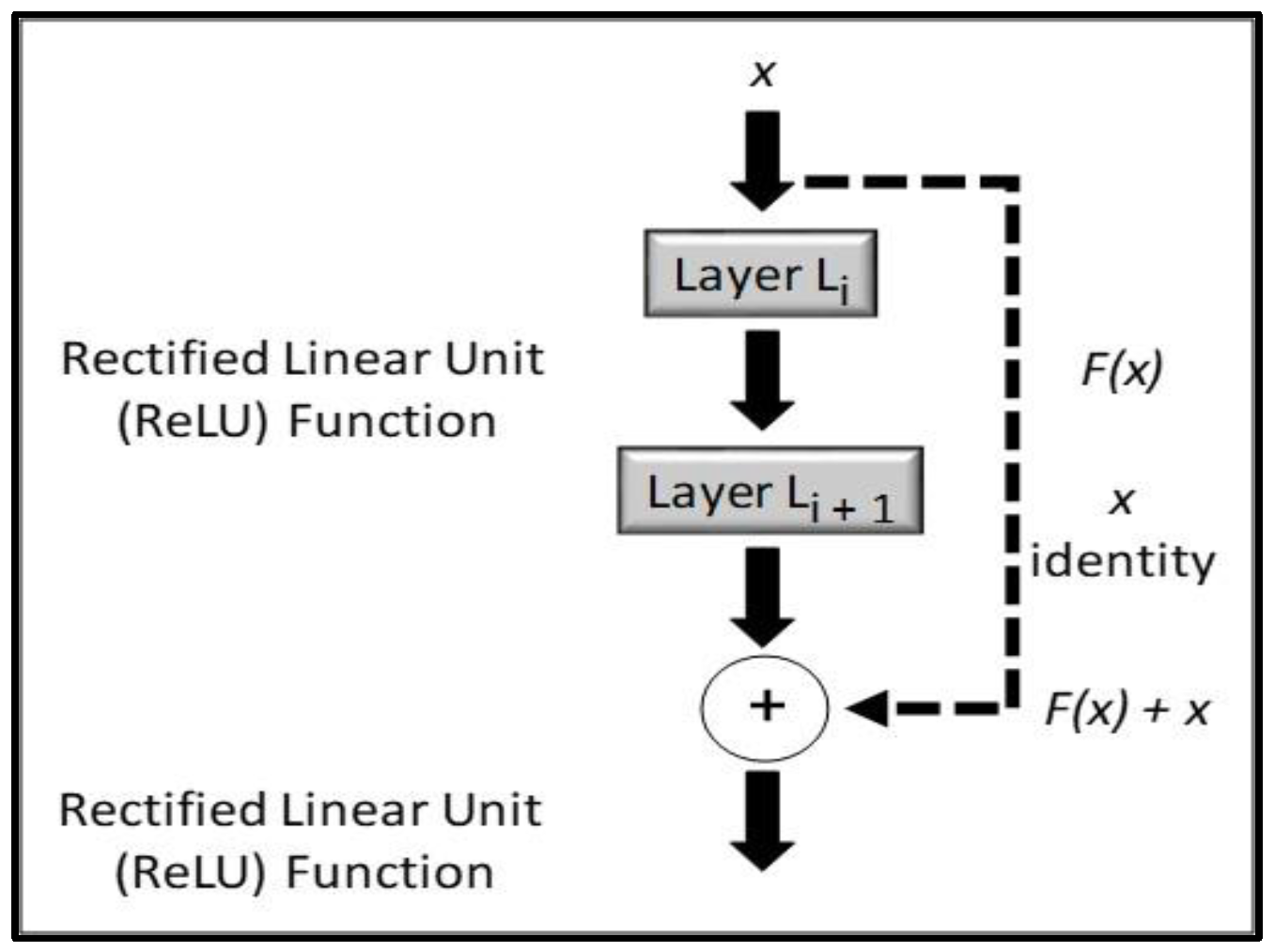

Rectified Linear Unit (ReLU) Function: This function returns the input value if it is positive, and 0 otherwise. It is defined as: ReLU(x) = max(0, x).

Leaky ReLU Function: This function is similar to ReLU but introduces a small slope for negative values, preventing the “dying ReLU” problem that can occur when the gradient of the function becomes 0. It is defined as: LeakyReLU(x) = {x if x >= 0, a * x if x < 0}, where a is a small positive slope (for example, 0.01).

Exponential Linear Unit (ELU) Function: This function is similar to leaky ReLU but uses an exponential function for negative inputs, so that its output decreases smoothly towards −alpha instead of following a straight line. It is defined as: ELU(x) = {x if x >= 0, alpha * (exp(x) − 1) if x < 0}, where alpha is a positive constant.

Choosing the right activation function is important for the performance of a neural network. Some functions work better than others depending on the type of problem being solved and the architecture of the network. For example, ReLU and its variants are widely used in deep learning because of their simplicity and effectiveness, while sigmoid and tanh functions are less popular due to their saturation and vanishing gradient issues. In fact, we used ReLU in our final setting.
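The activation functions described above can be written as short scalar Python functions, which makes their behavior for negative, zero, and positive inputs easy to compare. This is a minimal sketch: the default values `slope=0.01` and `alpha=1.0` are common choices assumed for the example, not settings taken from this study.

```python
import math

def sigmoid(x):
    """Logistic sigmoid: maps any real number into the interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def tanh(x):
    """Hyperbolic tangent: maps any real number into the interval (-1, 1)."""
    return math.tanh(x)

def relu(x):
    """Rectified linear unit: the input if positive, 0 otherwise."""
    return max(0.0, x)

def leaky_relu(x, slope=0.01):
    """Leaky ReLU: a small linear slope for negative inputs."""
    return x if x >= 0 else slope * x

def elu(x, alpha=1.0):
    """Exponential linear unit: tends smoothly to -alpha for large negative inputs."""
    return x if x >= 0 else alpha * (math.exp(x) - 1.0)

for x in (-2.0, 0.0, 2.0):
    print(x, sigmoid(x), tanh(x), relu(x), leaky_relu(x), elu(x))
```

For x = −2, ReLU returns 0 and therefore passes no gradient, whereas leaky ReLU and ELU return small negative values.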

Solver for weight optimization: A solver for weight optimization in a neural network is a method used to find the set of weights that minimize the loss function of the network. The loss function measures the difference between the predicted output of the network and the actual output, and the goal of the solver is to find the set of weights that minimize this difference. There are various types of solvers used for weight optimization in neural networks, each with their own strengths and weaknesses. Outlined below are three commonly used types:

L-BFGS-B: This is a limited-memory optimizer in the family of quasi-Newton methods, which approximate curvature information from successive gradients; the “B” variant additionally supports simple bound constraints on the variables. It is a popular choice for optimizing the weights in a neural network because it is fast and memory-efficient and can handle a large number of variables.

SGD: Stochastic gradient descent is a popular optimization algorithm that works by iteratively updating the weights in the network based on the gradient of the loss function with respect to the weights. It is simple to implement and computationally efficient, but it can be sensitive to the choice of the learning rate and can get stuck in local minima.

Adam: This is a stochastic gradient-based optimizer that is a modification of SGD. It uses an adaptive learning rate that adjusts over time based on the past gradients and includes momentum to prevent oscillations. Adam is often preferred over traditional SGD because it is less sensitive to the choice of the learning rate and can converge faster.

The choice of solver depends on the specific problem being solved, the size and complexity of the network, and the available computational resources. Finally, we selected the Adam solver for our network.
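The difference between the plain gradient update and the Adam update can be illustrated on a one-parameter toy problem. The sketch below minimizes the hypothetical loss (w − 3)^2 using its exact gradient, so the "stochastic" mini-batch sampling of real SGD is deliberately left out. The learning rate and step counts are assumptions, and the Adam constants are its commonly used defaults.

```python
import math

def gradient(w):
    """Gradient of the toy loss (w - 3)^2, whose minimum is at w = 3."""
    return 2.0 * (w - 3.0)

def sgd(w, steps, learning_rate=0.1):
    """Plain gradient descent: step against the gradient with a fixed learning rate."""
    for _ in range(steps):
        w -= learning_rate * gradient(w)
    return w

def adam(w, steps, learning_rate=0.1, beta1=0.9, beta2=0.999, eps=1e-8):
    """Adam: momentum (first moment) plus a per-parameter adaptive step (second moment)."""
    m = 0.0  # running average of the gradients
    v = 0.0  # running average of the squared gradients
    for t in range(1, steps + 1):
        g = gradient(w)
        m = beta1 * m + (1.0 - beta1) * g
        v = beta2 * v + (1.0 - beta2) * g * g
        m_hat = m / (1.0 - beta1 ** t)  # bias correction for the zero initialization
        v_hat = v / (1.0 - beta2 ** t)
        w -= learning_rate * m_hat / (math.sqrt(v_hat) + eps)
    return w

sgd_result = sgd(0.0, steps=200)
adam_result = adam(0.0, steps=500)
print(sgd_result, adam_result)
```

Both updates approach the minimum at w = 3 here. The practical advantages of Adam appear mainly on noisy, high-dimensional losses, where the gradient scale differs strongly between weights.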

Alpha: L2 penalty (regularization term) parameter. In a neural network, alpha controls the amount of L2 regularization applied to the weights during training. L2 regularization is a technique used to prevent overfitting, which occurs when the network learns the training data too well and performs poorly on new, unseen data. The alpha parameter is multiplied by the sum of squares of all the weights in the network, and the resulting penalty term is added to the loss function during training. This penalty encourages the network to learn smaller weights, which helps to reduce overfitting. Increasing alpha strengthens the regularization, which can reduce overfitting but may cause underfitting if the regularization is too strong; decreasing alpha weakens it, which can lead to overfitting. The optimal value depends on the specific problem and on the complexity of the network, and it can be determined using techniques such as grid search combined with cross-validation. We ran many tests over a wide range of values for this parameter, from relatively small values (0.0005), in order to avoid underfitting, up to high values (100), in order to exclude the opposite problem (overfitting). Finally, we selected an intermediate value (0.1).
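The effect of alpha on the training objective can be shown with a few lines of Python. This sketch follows the description above (alpha times the sum of squared weights, added to the data loss). The weights and the data loss are made-up numbers, and individual libraries may scale the penalty differently, for example by a factor of 1/2 or by the number of samples.

```python
def l2_penalized_loss(data_loss, weights, alpha):
    """Data loss plus the L2 penalty alpha * sum(w^2)."""
    penalty = alpha * sum(w * w for w in weights)
    return data_loss + penalty

weights = [0.5, -1.0, 2.0]  # hypothetical network weights
data_loss = 0.3             # hypothetical loss on the training data
for alpha in (0.0005, 0.1, 100.0):
    print(alpha, l2_penalized_loss(data_loss, weights, alpha))
```

With alpha = 100, the penalty dominates the objective, so the optimizer is pushed towards very small weights (risk of underfitting). With alpha = 0.0005, the penalty is almost negligible.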

Max iterations: Maximum number of iterations. This is the maximum number of times the training algorithm updates the weights of the network during training. The training process involves feeding the input data into the network, computing the output, comparing it to the actual output, and updating the weights to minimize the difference between them. The number of iterations needed to train a deep neural network depends on various factors, such as the size and complexity of the network, the amount and complexity of the input data, the choice of activation functions, the optimization algorithm used, and the convergence criteria. To prevent overfitting, it is common to monitor the performance of the network on a validation set during training and to stop the training when the performance on the validation set starts to degrade. This avoids training the network for too many iterations. For our tests, we used a maximum number of iterations ranging from 100 to 200.
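The stopping rule based on the validation set can be sketched as follows. The function name `early_stopping_iteration`, the `patience` of three iterations, and the list of validation losses are all invented for illustration and are not results from this study.

```python
def early_stopping_iteration(validation_losses, patience=3):
    """Return the 1-based iteration with the best validation loss.

    Scanning stops once the loss has failed to improve for `patience`
    consecutive iterations after the best one.
    """
    best_loss = float("inf")
    best_iteration = 0
    for iteration, loss in enumerate(validation_losses, start=1):
        if loss < best_loss:
            best_loss = loss
            best_iteration = iteration
        elif iteration - best_iteration >= patience:
            break  # no improvement for `patience` iterations: stop training
    return best_iteration

# Invented validation losses that first decrease and then rise (onset of overfitting).
losses = [0.90, 0.70, 0.55, 0.48, 0.45, 0.47, 0.50, 0.56, 0.60]
best = early_stopping_iteration(losses)
print(best)
```

In practice, the weights saved at the returned iteration are the ones kept for classification.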

3.1.5. FCNN Training and Cross-Validation Tests

After setting the parameters of our FCNN, as explained above, we performed an automatic sequence of cross-validation tests. Cross-validation is a common technique used in machine learning, including deep neural networks, to evaluate the performance of a model on unseen data. It involves partitioning the available dataset into several subsets, or “folds”, where one fold is used as the validation set, while the remaining folds are used for training. This process is repeated several times, with each fold taking turns as the validation set.

In the context of deep neural networks, we applied cross-validation to assess how well our FCNN model generalizes to new data, as well as to tune hyper-parameters such as the learning rate, the number of layers, and the number of neurons in each layer. The goal was to find a model that performs well on the validation sets across all folds, without overfitting to the training data.

There are several types of cross-validation tests, including k-fold cross-validation and stratified k-fold cross-validation. In k-fold cross-validation, the dataset is divided into k folds of (approximately) equal size, and the model is trained and evaluated k times, with each fold serving as the validation set once. In stratified k-fold cross-validation, the dataset is divided into k folds that preserve the proportion of samples for each class, which can be especially useful when dealing with imbalanced datasets. In our case, we performed mainly k-fold tests using a number of folds ranging between 3 and 8.
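The two splitting schemes can be sketched with the Python standard library alone. The function names and the class counts (10 labeled images per mineral) are assumptions made for the example. In practice a library routine would normally be used, but the logic is the same.

```python
import random

def k_fold_indices(n_samples, k, seed=0):
    """Shuffle the sample indices and deal them into k folds of near-equal size."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    return [indices[i::k] for i in range(k)]

def stratified_k_fold_indices(labels, k, seed=0):
    """Build k folds that preserve the class proportions of `labels`."""
    rng = random.Random(seed)
    by_class = {}
    for index, label in enumerate(labels):
        by_class.setdefault(label, []).append(index)
    folds = [[] for _ in range(k)]
    for members in by_class.values():
        rng.shuffle(members)
        for position, index in enumerate(members):
            folds[position % k].append(index)  # deal each class evenly over the folds
    return folds

# Hypothetical labeled set: 10 images for each of the four mineral classes.
labels = ["augite"] * 10 + ["biotite"] * 10 + ["olivine"] * 10 + ["plagioclase"] * 10
folds = stratified_k_fold_indices(labels, k=5)
for fold_number, validation in enumerate(folds, start=1):
    training = [i for fold in folds if fold is not validation for i in fold]
    print(fold_number, len(training), len(validation))
```

Each fold serves once as the validation set while the other k − 1 folds form the training set, and the metrics are averaged over the k runs.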

3.1.6. FCNN Performance Evaluation

Once the cross-validation process was complete, the performance metrics for each fold were averaged to provide an estimate of the model’s performance on unseen data. These metrics can include accuracy, precision, recall, F1 score, and “area under the receiver operating characteristic curve” (AUC-ROC), depending on the specific problem being addressed. The following is a brief explanation of these metrics.

Accuracy: This metric measures the proportion of correct predictions made by the model. It is calculated by dividing the number of correct predictions by the total number of predictions. Accuracy is a straightforward metric, but it can be misleading if the dataset is imbalanced, meaning that one class has significantly more samples than the others.

Precision: This metric measures the proportion of true positive predictions out of all positive predictions made by the model. It is calculated by dividing the number of true positive predictions by the sum of true positive and false positive predictions. Precision is useful when the cost of false positives is high.

Recall: This metric measures the proportion of true positive predictions out of all actual positive samples in the dataset. It is calculated by dividing the number of true positive predictions by the sum of true positive and false negative predictions. Recall is useful when the cost of false negatives is high.

F1 Score: This metric is the harmonic mean of precision and recall and provides a single score that balances both metrics. It is calculated as: 2 × (precision × recall)/(precision + recall). The F1 score is useful when both precision and recall are important.

AUC-ROC: This metric measures the model’s ability to distinguish between positive and negative samples. It is calculated by plotting the true positive rate (TPR) against the false positive rate (FPR) at various threshold values and calculating the area under the curve of this plot. A perfect classifier will have an AUC-ROC score of 1, while a random classifier will have an AUC-ROC score of 0.5. AUC-ROC is useful when the cost of false positives and false negatives is similar.
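The metrics above can be computed directly from lists of actual and predicted labels. In the sketch below, precision, recall, and F1 are evaluated for one class at a time (treating that class as "positive" and all the others as "negative"). AUC-ROC is computed through its equivalent interpretation as the probability that a randomly chosen positive sample receives a higher score than a randomly chosen negative one. All labels and scores are invented for the example.

```python
def accuracy(actual, predicted):
    """Fraction of predictions that match the actual labels."""
    return sum(a == p for a, p in zip(actual, predicted)) / len(actual)

def precision_recall_f1(actual, predicted, positive):
    """Precision, recall, and F1 score, treating `positive` as the positive class."""
    pairs = list(zip(actual, predicted))
    tp = sum(a == positive and p == positive for a, p in pairs)
    fp = sum(a != positive and p == positive for a, p in pairs)
    fn = sum(a == positive and p != positive for a, p in pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1

def auc_roc(positive_scores, negative_scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly (ties count 0.5)."""
    wins = sum((p > n) + 0.5 * (p == n) for p in positive_scores for n in negative_scores)
    return wins / (len(positive_scores) * len(negative_scores))

# Invented example: six thin sections, one biotite misclassified as olivine.
actual = ["biotite", "biotite", "olivine", "olivine", "augite", "augite"]
predicted = ["biotite", "olivine", "olivine", "olivine", "augite", "augite"]
print(accuracy(actual, predicted))
print(precision_recall_f1(actual, predicted, "olivine"))
print(auc_roc([0.9, 0.8, 0.4], [0.7, 0.3, 0.2]))
```

For a multi-class problem such as this one, the per-class values are usually averaged over the classes to obtain a single score for each metric.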

Table 1 shows an example of performance evaluation through a k-fold test on our image data. In this specific case, we applied a fully connected deep neural network consisting of 5 hidden layers with 200 neurons each. The network uses a Rectified Linear Unit activation function, and an Adam solver running for a maximum of 200 iterations. We can see that all the indexes showed relatively high values, indicating that our FCNN model has good generalization performance on unseen data.

3.1.7. Classification

After optimizing the network parameters and after the cross-validation tests, we applied our “best” FCNN model for classifying the unlabeled (“unseen”) mineralogical thin sections not included in the training dataset. To be more precise, we selected a set of FCNN models with good performances. Finally, we created an “average model” from that set, simply by averaging the optimal hyper-parameters determined through the cross-validation tests (see FCNN parameters related to Table 1).

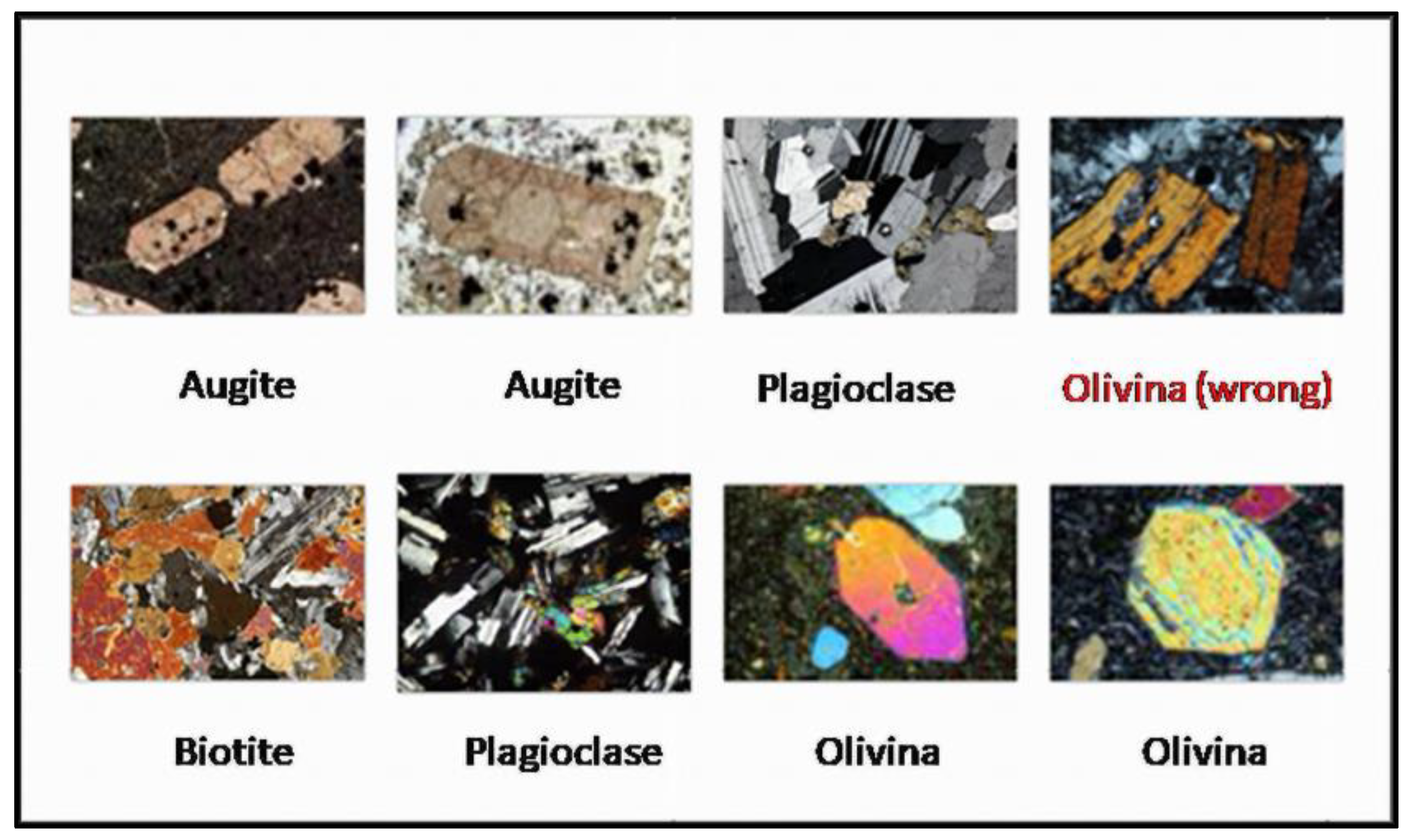

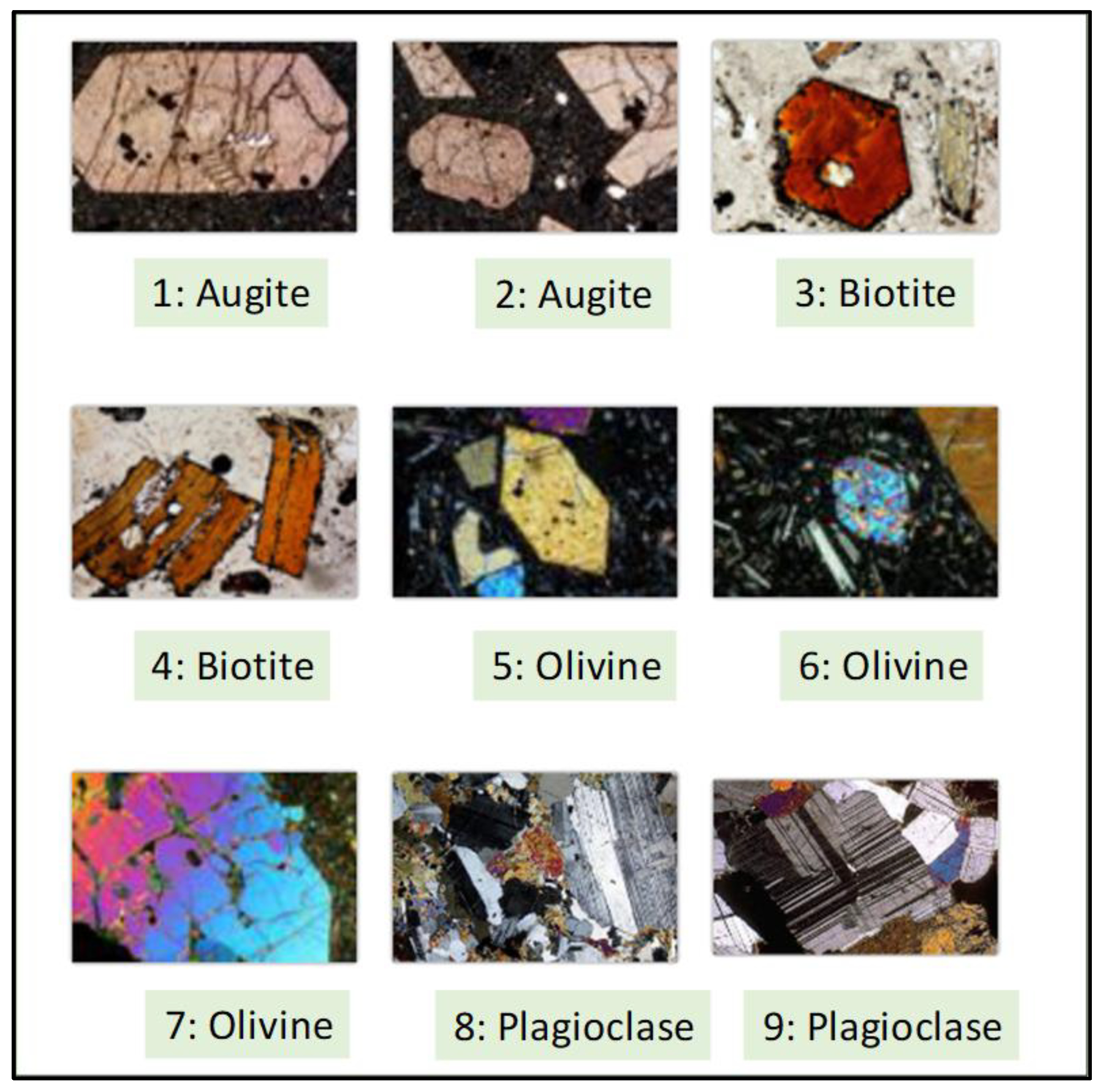

Figure 3 shows one illustrative case of a classification result using the above neural network architecture. The classification performance was good, even though one image of biotite was misclassified as olivine (the top-right image).

3.2. Classification of Mineralogical Thin Sections Using ResNet

In order to improve the classification performance, we applied the same workflow shown in Figure 2, but this time using deep convolutional residual neural networks (ResNets) rather than FCNNs. The number of hidden layers in convolutional residual networks can be parameterized, allowing for the testing of their effectiveness with quantitative performance indicators. In our experiments, we tested ResNet_18, ResNet_34, ResNet_50, and ResNet_152, which have 18, 34, 50, and 152 deep layers, respectively. To accomplish this, we created a Jupyter notebook (Python) that utilizes the residual networks open-source code available for download from the “TORCHVISION.MODELS.RESNET” website: https://pytorch.org/vision/0.8/_modules/torchvision/models/resnet.html (accessed on 10 January 2023).

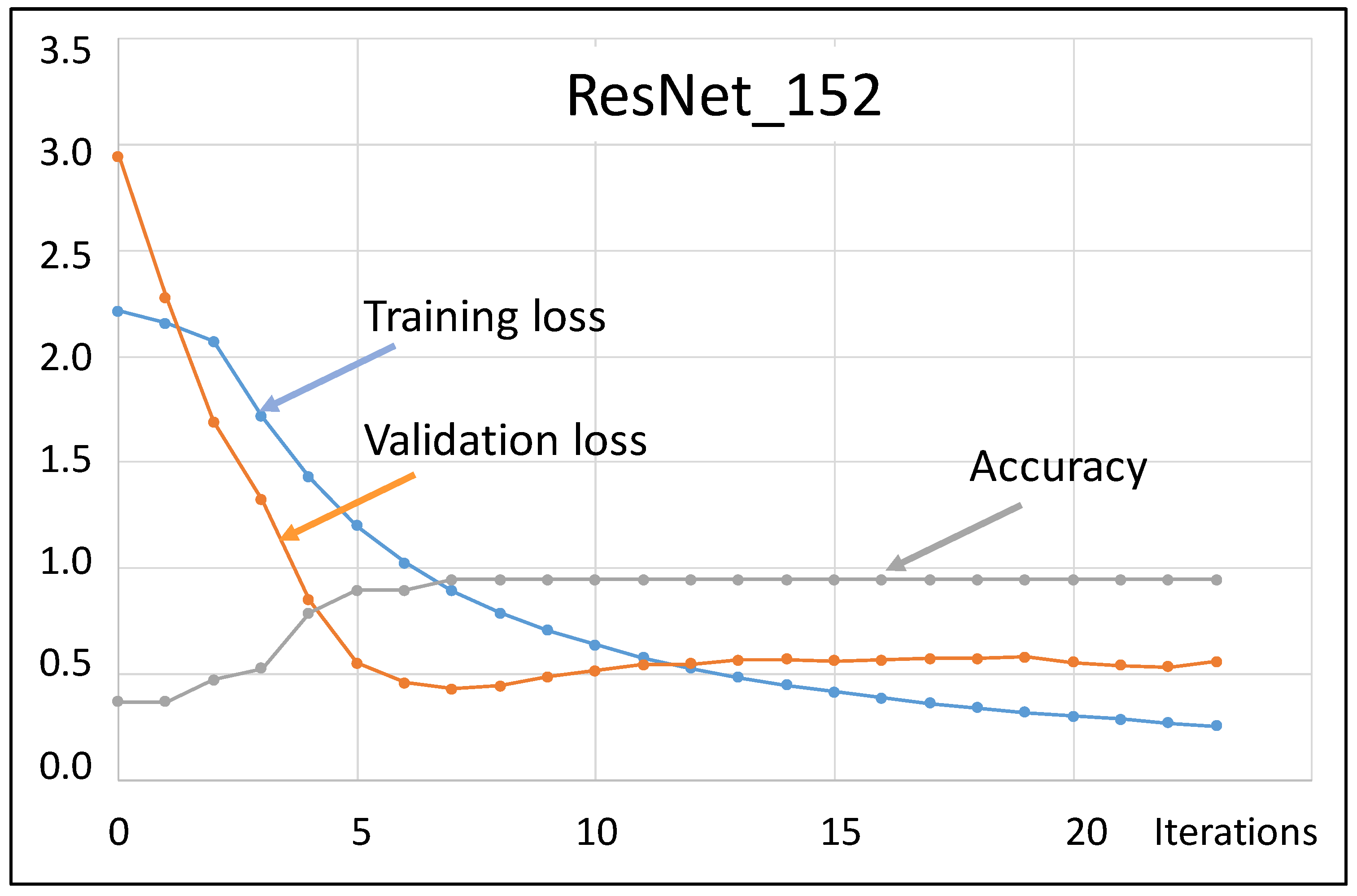

For each network architecture, we conducted cross-validation tests to assess its performance and plotted the accuracy and loss function against the iteration number. Here, accuracy represents the ratio of correct predictions to total input samples, and it increased over time, while the loss function decreased, theoretically converging towards zero. In particular, for ResNet_152, the accuracy reached almost the ideal value of 1 after a few iterations.

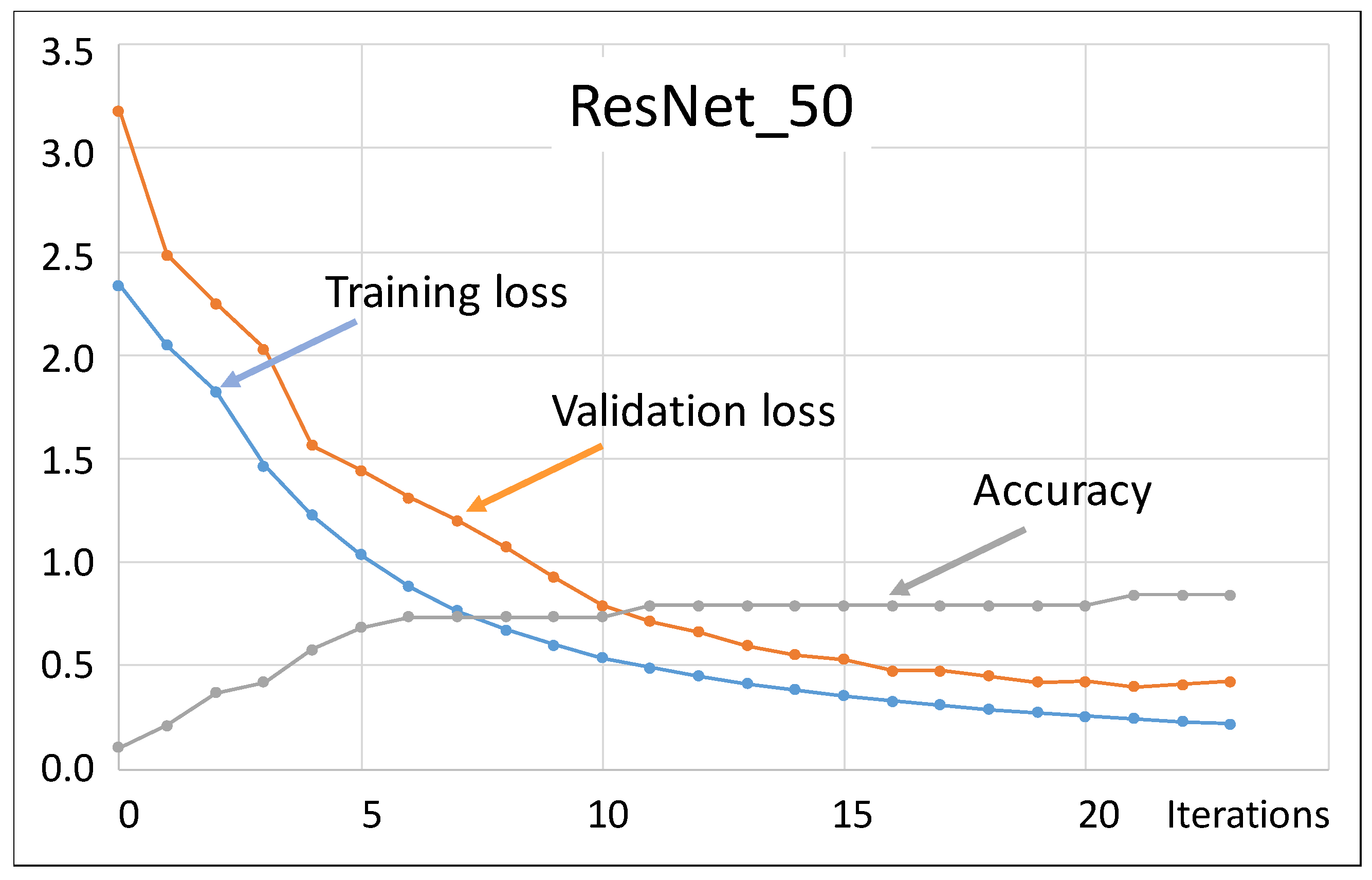

Figure 4 illustrates the graph of both the training and validation loss functions, plotted together with accuracy versus the iteration number.

Figure 4 is interesting because it shows both the benefits and the limitations of our classification approach based on ResNet. Training loss and validation loss both measure how well the model performs on a given dataset, but they serve different purposes. Training loss is the error metric used during the training phase to optimize the network parameters. It quantifies the difference between the predicted output of the network and the true output on the training set, and the objective of training is to minimize it by adjusting the weights and biases of the network. The training loss is typically calculated after each batch or epoch of training and is used to update the model parameters. Validation loss, in contrast, measures how well the model generalizes to new, unseen data. It is calculated on the validation set, which is a subset of the data that is not used to update the weights. It is monitored during training to evaluate the model and to detect overfitting, which occurs when the model performs well on the training data but poorly on the validation or test data. A validation loss that keeps decreasing together with the training loss indicates that the model is generalizing well to new data.

It is clear that in our test with ResNet_152, there was some overfitting, because the validation loss started increasing after seven iterations. In general, a good model should show decreasing values versus iterations for both training and validation losses. Sometimes, choosing a residual network with a high number of hidden layers (>100) could be the reason for overfitting effects, as occurred in our case with ResNet_152. For that reason, we tried to classify our thin-section images using a ResNet_50 (with 50 hidden layers).

Figure 5 shows the trend of the training and validation loss versus iterations for ResNet_50. This time, both curves decreased regularly, showing far fewer overfitting problems than ResNet_152. Unfortunately, the accuracy was not as good as in the previous case. In other words, there was a trade-off between accuracy and overfitting. In conclusion, this tutorial test shows that reliable classification results should derive from a balanced compromise between accurate training and limited overfitting effects.

Despite the different overfitting problems, in our specific case, both ResNet_152 and ResNet_50 performed well when we applied them for classifying our set of unlabeled thin mineralogical sections.

Figure 6 shows an example of classification obtained using ResNet_50. All the test images were properly classified, with variable values of probability (see Table 2). For each test image, the value of the loss function was generally around 0.1–0.2 and the accuracy was generally around 0.8–0.9. This occurred after a few (20–25) iterations, indicating that the network converged quickly towards the correct predictions. Results with ResNet_152 were not very different (with similar probabilities of classification).

3.3. Comparison with Other Classification Approaches

The deep learning (DL) methods considered in the previous applications (FCNN, ConvNet, and ResNet) use learned features that are automatically extracted from the raw image data. To compare them with other data-driven techniques that rely on handcrafted features, we performed an independent classification of the same dataset using machine learning methods not based on neural networks: decision tree, support vector machine, random forest, naive Bayes, and adaptive boosting. We applied these methods to features of the thin sections manually designed by human experts, including specific patterns, textures, or shapes in the image.

Table 3 below shows the comparison of classification performances between deep learning (FCNN, in this example) and the suite of alternative classifiers. Although techniques such as random forest or support vector machine produce satisfactory classification results, a simple FCNN with just five hidden layers showed better performance indexes (highlighted in bold in Table 3) than the other methods. However, none of the indexes were equal to one, indicating that the classification performance was good, but not perfect. Indeed, there were still misclassification cases, even when using FCNNs. We can expect that increasing the size of the training dataset (which in our tests was relatively small) will improve the classification results.

As an additional test, we expanded the dataset, including thin sections obtained from sedimentary rock samples.

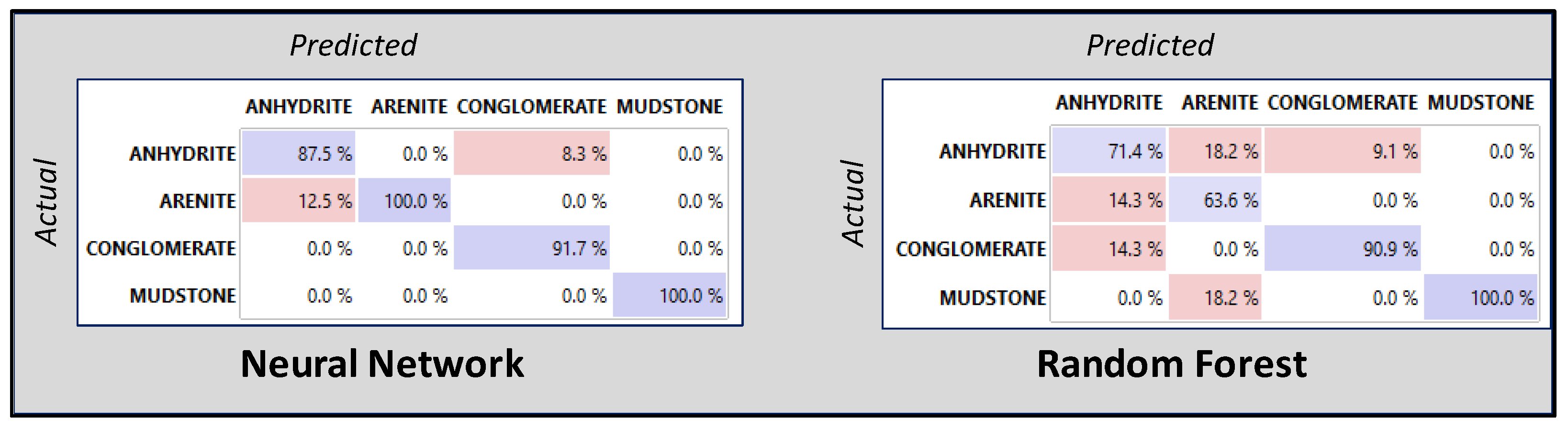

Figure 7 shows some examples of thin sections used for training all the classification methods mentioned above, in order to perform an additional performance comparison with DL methods.

Figure 8 shows an example of a performance comparison on the training dataset. In this case, instead of using performance indexes as in Table 3, we used a visual evaluation approach based on a confusion matrix. Note that a confusion matrix in machine learning is a table that summarizes the performance of a model on a set of test data by comparing the actual target values with the predicted values. It is a way to evaluate the accuracy of a classification model and helps to identify where errors in the model were made. The matrix typically has N rows and N columns, representing the actual and predicted classes, respectively. True positives, false positives, true negatives, and false negatives are the four types of values in the matrix, and they are used to calculate metrics such as accuracy, precision, recall, and F1 score.

Figure 8 shows an example of a confusion matrix for a neural network (FCNN) and random forest, obtained through a cross-validation test applied to the sedimentary thin-section images shown in Figure 7. We can notice that the values on the principal diagonal (percentage of correct predicted versus actual results) for the neural network were significantly higher than for random forest, indicating that higher classification accuracy was obtained through the deep learning approach. In conclusion, the confusion matrix technique also showed that the classification performance of deep neural networks was good, although not perfect.