1. Introduction

Urbanization transforms the land surface and alters the pre-development hydrologic cycle, and these changes amplify the need for surface water management. Since the 1970s, urban land development has led to declines in soil-water recharge by as much as 80% [1]. The resulting runoff typically has greater volume, higher flow rates, and shorter flow durations than under predevelopment hydrology. For example, a 10% increase in impervious surfaces within a watershed outside of New York, NY, yielded stormwater with a three-fold greater peak flow rate compared with a nearby undeveloped watershed; the flow duration was also shortened from approximately 75 to >20 min [2]. The remaining pervious areas, such as beneath street trees and turfgrass lawn, can also exhibit decreased infiltration if soil compaction occurred during land development [3,4,5]. Yet, urban vegetation may help mitigate the environmental consequences of urbanization [6,7,8]. One popular solution to restore infiltration in urban areas is the best management practice known as a bioretention cell or rain garden; these vegetated catchments are specifically designed to collect, store, and infiltrate stormwater and thus dissipate the large volumes and flow rates of surface runoff [9].

The installation of bioretention areas to intercept stormwater continues to outpace our understanding of these practices as plant–soil systems. Research on bioretention areas has focused on catchment size [10,11], the composition of different soil mixtures [12,13], and internal design features that control the flow of water and potential pollutants [14,15]. Differences in vegetation and soil properties were associated with changes in bioretention cell performance and water chemistry [16,17,18,19,20], but we are only beginning to appreciate the role of plants in altering soil hydrologic processes in bioretention systems [21,22]. Understanding how plants alter the soil physical properties and drainage of these bioretention systems will guide how we use them for pollutant removal. Moreover, the plants used for bioretention range from macrophytes to water-tolerant flowering forbs, grasses, shrubs, or trees, as recommended by numerous “how to” guides that offer lists of species for these systems [23]. This variety in vegetation choice has helped popularize bioretention around the world, but it confounds our ability to quantify the hydrologic impact of the plants and assumes little to no difference related to plant type and behavior. Thus, the biophysical role of vegetation remains unclear in the context of bioretention [24] because no study has tested the effect of vegetation type on real-time dynamics of soil water in a controlled experiment of replicated systems.

Plants are critical to the function of bioretention systems not only through their direct uptake of soil water but also through their indirect role in soil structural development. Soil structure describes the arrangement of solids and voids (pores) within a volume of soil where the amount and distribution of pore size controls such characteristics as soil water infiltration, retention, redistribution, and conductivity [25]. The capacity for plants to build, alter, and stabilize soil structure depends on their root architecture and belowground productivity [26], as roots physically enmesh soil aggregates [27], create pores in soil [28], and buffer the soil against slaking and infilling [29,30]. The effects of plants on soil pore spaces over time together embody a structural domain of soil porosity, enhancing the textural domain of soil porosity that was determined by the physical composition of the soil mixture [31]. Ultimately, these plant-induced changes in soil structure modify soil hydrology through improved soil pore connectivity. Increased rates of soil saturated hydraulic conductivity (Ks) observed under prairie plants with extensive root development exemplify this relationship [32,33,34].

We hypothesized that the volume and flow rate of drainage from bioretention systems would differ by vegetative treatment and size of stormwater input, and that these differences could be explained by plant–soil interactions that control the retention and movement of soil water. To test this hypothesis, we first sought to quantify the direct effect of vegetation type on the drainage response of replicated bioretention mesocosms that receive rooftop stormwater generated by natural rain events. Second, we used indices of soil structural development (infiltration rate, Ks, and soil water retention) to evaluate changes in porosity beneath each vegetation type.

2. Materials and Methods

2.1. Site Description

This research was conducted at an experimental facility located in the town of Verona, part of the greater Madison area in south-central Wisconsin (43°09′ N, 89°37′ W). The greater Madison area is the center of rapid urban growth and development in Dane County, currently supporting over 300,000 people, or 65% of the population county-wide. Groundwater is the primary source of potable water, and land use planners, therefore, support local practices that infiltrate stormwater [35]. The region is characterized by a northern temperate climate with mean summer and winter temperatures of 21 °C and −6 °C, respectively, and a normal annual precipitation of 837 mm [36]. In the two years of study, the mean summer air temperature was 19.4 °C and 22.5 °C, respectively, and the total annual rainfall each year was 60 mm greater than normal [36].

2.2. Experimental Design

Twelve rain gardens were constructed as mesocosms to test the effects of vegetation type on hydrology and soil development by varying only the vegetative component. The experiment used a randomized complete block design of three blocks with four vegetative treatments per block: bare soil (control), turfgrass sod, wet-mesic prairie vegetation (a mix of 18 species including both grasses and flowering forbs), and landscaping shrubs (a mix of 6 water-tolerant species) (Table 1). These three plant assemblages were selected to represent the range in plant types used for bioretention [14,37].

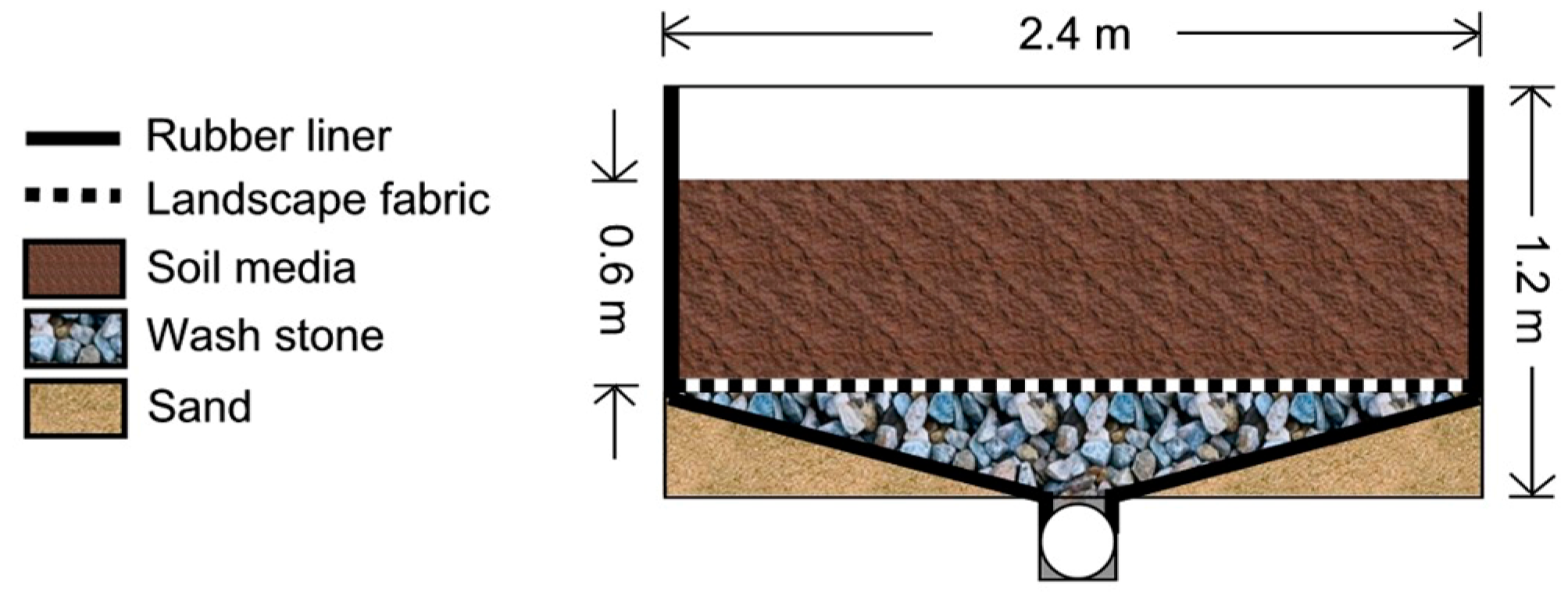

All mesocosms were constructed of wooden frames of equal size (2.44 m by 2.44 m by the total depth of 1.22 m) and filled with a soil mixture (ratio of 3:1:6 by volume of topsoil, compost, and sand, respectively) that is recommended locally for bioretention [38]. This soil mixture was 43% sand, 40% silt, and 17% clay by mass (determined through textural analysis with hydrometer after hydrogen peroxide pretreatment [39,40]) and 6% soil organic matter (by loss on ignition [41]). The soil mixture was not evaluated for chemical properties. The soil area per mesocosm (5.9 m2) represented 17% of its respective contributing roof area (34.8 m2) per sizing recommendations [10,42]. Mesocosms were designed to hold roof water from a typical storm event of 25.4-mm rainfall accumulation in south-central Wisconsin (e.g., storms with 2-h duration and 3-mo frequency [43]).
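The sizing figures above can be checked with simple arithmetic. The sketch below uses only dimensions stated in the text; the variable names are illustrative, and the roof-volume figure assumes that all rain falling on the contributing roof area is captured without loss, which is a simplifying assumption rather than a measured value.

```python
# Sizing check for one mesocosm, using dimensions stated in the text.
side_m = 2.44                      # frame side length (m)
soil_area_m2 = side_m * side_m     # about 5.95 m2 (reported as 5.9 m2)
roof_area_m2 = 34.8                # contributing roof area per mesocosm (m2)
design_storm_mm = 25.4             # design rainfall accumulation (mm)

# Ratio of soil area to contributing roof area (reported as 17%).
area_ratio = soil_area_m2 / roof_area_m2

# 1 mm of water over 1 m2 equals 1 L, so depth (mm) x area (m2) gives litres.
# Assumption: no losses between the roof and the mesocosm.
design_roof_volume_l = design_storm_mm * roof_area_m2

print(f"soil area: {soil_area_m2:.2f} m2")
print(f"soil-to-roof ratio: {area_ratio:.1%}")
print(f"roof runoff from design storm: {design_roof_volume_l:.0f} L")
```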

Each mesocosm was an individual, closed system that drained freely to a central tile drain [16,20]. Each consisted of a wooden frame with a 30 mil rubber liner along the bottom and sidewalls, a bed of wash stone (5 cm diameter) below a layer of landscape fabric, and a rooting zone of soil media (Figure 1). Two rings of bentonite clay were placed along the interior perimeter of each mesocosm at depths of 0.3 and 0.5 m to prevent preferential flow along the interior of the side walls. The effective rooting depth was 0.6 m. A central PVC pipe directed all drainage to a flow monitoring station. Water stored in the internal design features (e.g., wash stone) did not change over time within an individual system or treatment [16,20,44]. Each mesocosm had a closed hydrologic budget in which 96 ± 6% of the input water volume was collected as drainage, stored in the soil, or lost via evapotranspiration [44]. The 12 mesocosms were positioned in the landscape such that the planted areas were above flow monitoring stations located behind wooden retaining walls (Figure 2). The four mesocosms within each block were randomly assigned a vegetative treatment.

The mesocosms were established in 2005 using plant stock from local nurseries. Turfgrass mesocosms were planted with Kentucky bluegrass sod (Poa pratensis L.) and maintained with hand-shears to a height of 7 cm. Prairie mesocosms were initially planted with plugs on a grid of 0.3-m spacing but spread to 80% soil coverage. Shrub mesocosms were planted with six shrubs, one per species, spaced equally apart from one another. Control (bare soil) mesocosms were raked annually in April and covered with fiberglass window screen to reduce the erosive impact of rainfall and the formation of surface crusts; these screens were removed before and replaced after stormwater applications. All mesocosms were maintained by hand-weeding.

2.3. Stormwater Inputs

The study site was equipped to collect and distribute natural rainfall to the mesocosms to simulate the episodic inputs of stormwater generated by impervious surfaces. Two tanks, one on either side of a metal-roofed shed, together stored 25.4 mm of rainfall over a total roof area of 417.6 m2, which was then divided equally among the 12 mesocosms. With both tanks full, each mesocosm received 127.3 mm (751 L) of the collected stormwater. The volume of stormwater applied to the mesocosms was quantified via a digital in-line flow meter (±0.04 L, model 825, Systems, Tuthill Corporation, Burr Ridge, IL, USA) at a constant rate of inflow of 192 mm h−1 (1133 L h−1); this rate was selected to enable all 12 applications (one per mesocosm per rain event) to be completed on the same day. Perforated PVC tubes spanning the length and width of each mesocosm distributed stormwater across the soil surface [16].
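The paired units reported above (L and mm, L h−1 and mm h−1) follow from dividing a volume by the soil area of a mesocosm. A minimal sketch of that conversion is given here, assuming the reported soil area of 5.9 m2; the function name is illustrative only.

```python
SOIL_AREA_M2 = 5.9  # soil surface area per mesocosm, as reported in the text


def litres_to_mm(volume_l, area_m2=SOIL_AREA_M2):
    """Convert a volume in litres to an equivalent depth in mm.

    1 L spread over 1 m2 is 1 mm deep, so depth = volume / area.
    """
    return volume_l / area_m2


full_input_mm = litres_to_mm(751.0)           # about 127.3 mm
inflow_rate_mm_h = litres_to_mm(1133.0)       # about 192 mm per hour
application_time_min = 751.0 / 1133.0 * 60.0  # about 40 min for a full input

print(f"{full_input_mm:.1f} mm, {inflow_rate_mm_h:.0f} mm/h, "
      f"{application_time_min:.0f} min")
```

At this inflow rate, a full application takes roughly 40 min per mesocosm, which is consistent with the stated goal of completing the applications for a rain event on the same day.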

For each natural rain event, the total volume of rainfall collected from the roof was distributed equally among all mesocosms. The stormwater simulations (hereafter referred to as applications) on a given day were applied randomly first by block and then by mesocosm within the block. Stormwater applications were completed within 48 h of the preceding rain event, with few exceptions (e.g., when the weather forecast predicted additional rain within the next 24 h, we delayed the applications until the next dry day). We waited for mesocosm drainage generated by rainfall (if any) to return to the measurement threshold (defined as flow <0.25 mm h−1). Thus, this study quantified drainage responses following inputs of stormwater in accordance with the stochastic distribution of rainfall over two growing seasons (May to September). This is different from previous work in which rainfall simulations were completed on a pre-determined schedule (such as in the summer months only [20]).

In total, 41 stormwater applications were made to the 12 mesocosms over two growing seasons (Table 2). We applied the maximum amount of collected stormwater (127.3 mm per mesocosm) on two dates in year 1 and on nine dates in year 2. The reference treatment (non-vegetated controls) and turfgrass treatment produced a drainage response on every application date during the study except one. When the smallest input (8.3 mm) was applied, none of the mesocosms produced drainage responses above the measurement threshold. The prairie and shrub treatments had six additional “no flow” situations, and the amount of stormwater input applied on these six dates ranged from 9.5 to 19.1 mm.

2.4. Drainage and Climate Monitoring

The timing and magnitude of drainage were measured with a flow monitoring station located at the outflow point of each mesocosm. A tipping-bucket (0.5 L tip−1) wired to a data logger (CR1000, Campbell Scientific Inc., Logan, UT, USA) recorded drainage flow rates in 5-min intervals. We characterized the drainage response of each mesocosm per stormwater event, starting from the time of stormwater inflow (Ti) and stopping (Tthr) when the flow was again 0.25 mm h−1 (Qthr); we quantified the drainage volume (% of input), the lag time to peak flow defined here as Ti to Tpk (min), the peak flow rate of drainage (Qpk, mm h−1), and the response duration defined here as Ti to Tthr (h) (Figure 3). In cases when drainage was less than our threshold, the response was considered a ‘no flow’ situation, and we assigned values of zero to drainage volume, lag time, peak flow, and response duration. The flow response to rainfall itself was not tested in this study because very few rain events generated drainage. Thus, we report data for only the drainage response of the mesocosms generated by stormwater inputs.
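The four drainage metrics defined above can be sketched from a series of tipping-bucket counts. The function below is an illustration only, not the authors' processing code: the function name, the input layout (tips per 5-min interval, starting at Ti), and the choice to time-stamp each interval at its end are assumptions. It also simplifies the threshold test, because a single 0.5-L tip in 5 min already corresponds to about 1 mm h−1, so flows near 0.25 mm h−1 would in practice need to be averaged over longer windows.

```python
def drainage_metrics(tips, input_mm, area_m2=5.9, tip_l=0.5,
                     step_min=5.0, threshold_mm_h=0.25):
    """Summarize one drainage response.

    tips[k] is the number of bucket tips in the k-th interval after the
    start of stormwater inflow (Ti). Returns zeros for a 'no flow' event.
    """
    # Convert tips per interval to a flow rate in mm/h (1 L over 1 m2 = 1 mm).
    rates = [n * tip_l / area_m2 * 60.0 / step_min for n in tips]
    above = [k for k, q in enumerate(rates) if q >= threshold_mm_h]
    if not above:
        return {"volume_pct": 0.0, "lag_min": 0.0,
                "peak_mm_h": 0.0, "duration_h": 0.0}
    last = above[-1]                 # last interval at or above the threshold
    peak = max(rates)                # peak flow rate (Qpk)
    k_pk = rates.index(peak)         # interval in which the peak occurred
    volume_mm = sum(tips[: last + 1]) * tip_l / area_m2
    return {
        "volume_pct": 100.0 * volume_mm / input_mm,   # % of input
        "lag_min": (k_pk + 1) * step_min,             # Ti to Tpk
        "peak_mm_h": peak,
        "duration_h": (last + 1) * step_min / 60.0,   # Ti to Tthr
    }


# Hypothetical tip counts for a 50-mm input (not data from the study).
example = drainage_metrics([0, 0, 4, 10, 6, 2, 1, 0, 0], input_mm=50.0)
print(example)
```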

The study site was instrumented to continually monitor soil moisture and weather. Two soil moisture sensors were installed per mesocosm at depths of 0–0.15 and 0.30–0.45 m (CS616, Campbell Scientific Inc., Logan, UT, USA). The soil moisture sensors were calibrated to the specific soil mixture used in the mesocosms and tested for accuracy in the field [44]. Soil moisture (m3 m−3) at each depth was monitored at 15-min intervals over the growing season (May–September), and antecedent soil moisture at each depth was recorded immediately prior to each stormwater application. A meteorological station recorded precipitation, air temperature, relative humidity, solar radiation, and wind speed at 5-min intervals at the study site (models TE525WS, HMP50, LI200X, and 03101; Campbell Scientific Inc., Logan, UT, USA).

2.5. Soil Structural Indices

We measured the infiltration rate, Ks, and soil water retention as indices of soil structural development beneath the four vegetative treatments. These measurements were made on dates when drainage responses were not evaluated. Different methods were selected to minimize disturbance to the belowground integrity of the bioretention system in each mesocosm. For example, infiltration rings were installed in the spring and remained in place over the growing season, whereas soil cores for laboratory analyses of soil water retention were extracted only at the end of the growing season.

Infiltration rate and Ks were measured at the soil surface (0–0.05 m) by the single-ring method with multiple ponding heights [45]. One ring (25.4 cm diameter) per mesocosm was inserted to a depth of 0.05 m and sealed around the interior perimeter with bentonite clay. All rings were installed one week prior to collecting data. We recorded the antecedent soil moisture, determined the infiltration rate over a period of 30–45 min at a ponding height of 0.05 m, and subsequently alternated the ponding height in the ring between 0.05 and 0.18 m to estimate surface Ks using the appropriate fitting parameters [45]. Infiltration and surface Ks measurements were each conducted twice.

We measured Ks within the root zone (0.30–0.45 m) of each mesocosm using a constant-rate soil permeameter [46]. One borehole (0.06 m diameter) per mesocosm was made to a depth of 0.30–0.45 m. A ponding height of 0.15 m was maintained, and steady-state flow in each borehole was reached after approximately two hours per sample run. We calculated Ks (using Glover’s solution [47]) from measurements replicated twice in the same borehole over 48 h.
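For readers unfamiliar with borehole permeameter calculations, the sketch below shows a commonly cited form of the Glover solution for a constant-head borehole test, Ks = C·Q / (2πH²) with C = sinh⁻¹(H/r) − √((r/H)² + 1) + r/H. This form is an assumption on our part; the source text names the solution but does not give the equation, and the exact expression and boundary conditions used should be taken from reference [47]. The function name and the flow value in the example are hypothetical.

```python
import math


def glover_ks(q_m3_h, head_m=0.15, radius_m=0.03):
    """Saturated hydraulic conductivity (m/h) from steady borehole flow.

    q_m3_h   steady-state flow rate into the borehole (m3/h)
    head_m   constant ponding height H in the borehole (m); 0.15 m in the text
    radius_m borehole radius r (m); 0.06 m diameter in the text

    Assumed form of the Glover solution:
        Ks = C * Q / (2 * pi * H**2)
        C  = asinh(H/r) - sqrt((r/H)**2 + 1) + r/H
    """
    ratio = head_m / radius_m
    shape = math.asinh(ratio) - math.sqrt(1.0 / ratio**2 + 1.0) + 1.0 / ratio
    return shape * q_m3_h / (2.0 * math.pi * head_m**2)


# Hypothetical steady flow of 0.05 L/h, converted to m3/h, reported in mm/h.
ks_mm_h = glover_ks(0.05 / 1000.0) * 1000.0
print(f"Ks = {ks_mm_h:.2f} mm/h")
```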

Soil water retention curves were determined in the laboratory, as retention data can be used to detect changes in soil porosity brought about by vegetation, soil fauna, management, and time [25,31]. After all stormwater measurements were complete, we used a soil core sampler to extract intact soil cores (0.076 m diameter by 0.076 m length) at three sampling depths (0–0.15 m, 0.15–0.30 m, and 0.30–0.45 m) from the center of each mesocosm (one core per depth per mesocosm). Soil cores that were incomplete or contained protruding rocks were discarded and re-sampled. The soil cores were analyzed in three batches, one batch per sampling depth, on an apparatus designed to measure soil water retention on multiple cores simultaneously [48]. After the final tension (approximately −25 kPa) was reached, the soil cores were dried at 105 °C and weighed to calculate soil bulk density and total porosity. The final tension of −25 kPa would have drained pores with an effective radius of 5.8 µm [49]. Although the final tension did not reach −33 kPa, a field capacity set point for crops on loam soil, soil water retention over this range (0 to −25 kPa) allowed us to evaluate the effect of the vegetative treatments on soil structural porosity (previously displayed for effective pore radii of 50–80 µm in loam soil [50]). We plotted soil water content (m3 m−3) by tension to display the drainage from larger pores, in which greater soil water loss represented an increase in pore size and connectivity.
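The link between tension and effective pore radius stated above follows from the capillary equation, r = 2γ cos θ / |ψ|. The sketch below reproduces the 5.8 µm value under stated assumptions: a surface tension of water of 0.0728 N m−1 (about 20 °C) and a contact angle of zero. The porosity helper assumes a mineral particle density of 2.65 Mg m−3, which is a textbook default and not a value reported in the study; with 6% organic matter the true particle density may be somewhat lower.

```python
import math

SURFACE_TENSION_N_M = 0.0728   # water at about 20 C (assumed)
PARTICLE_DENSITY = 2.65        # Mg/m3, textbook mineral default (assumed)


def pore_radius_um(tension_kpa, contact_angle_deg=0.0):
    """Largest effective pore radius (um) still water-filled at a tension.

    Capillary equation: r = 2 * gamma * cos(theta) / |psi|.
    """
    psi_pa = abs(tension_kpa) * 1000.0
    cos_theta = math.cos(math.radians(contact_angle_deg))
    return 2.0 * SURFACE_TENSION_N_M * cos_theta / psi_pa * 1e6


def total_porosity(bulk_density, particle_density=PARTICLE_DENSITY):
    """Total porosity (m3/m3) from bulk density (Mg/m3)."""
    return 1.0 - bulk_density / particle_density


print(f"{pore_radius_um(-25.0):.1f} um at -25 kPa")   # about 5.8 um
print(f"{pore_radius_um(-33.0):.1f} um at -33 kPa")   # about 4.4 um
print(f"porosity at 1.3 Mg/m3: {total_porosity(1.3):.2f}")
```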

2.6. Plant Traits

To help explain hydrologic data, plant height (m), standing leaf mass (kg m−2), the total projected leaf area (m2 m−2), and root mass density (kg m−3) were quantified for each of the three vegetative communities at peak biomass in the second year of the study. In the turfgrass systems, plant height was maintained at 0.07 m by weekly clipping. Random 0.25-m2 plots in the turfgrass were used to obtain specific leaf area (SLA), leaf mass, and projected leaf area from green leaf tissue analyzed with a leaf area meter (3100C, LI-COR Inc., Lincoln, NE, USA). In the prairie and shrub communities, the maximum height (±0.01 m) of each plant was recorded weekly until peak biomass was reached in late summer. These species data were then averaged to compute the average maximum height of the prairie and shrub communities. Prairie and shrub total leaf mass and total projected leaf area at peak biomass were similarly computed by species and then summed for each mesocosm. Whole leaves (8–12 leaves per prairie plant, 10–15 leaves per shrub) from different positions in the plant crown were used to determine species-specific SLA. Shrub leaves were analyzed for SLA without petioles; petioles were removed, dried, and weighed to determine the petiole mass fraction (g g−1). When the plants were beginning to senesce and hydrologic monitoring was complete, all prairie plants were clipped at the ground surface, dried at 60 °C for 48 h, and weighed (±0.01 g) by species. Shrubs were similarly processed for leaf mass by species. All leaves from the crown of the smaller shrubs (I. verticillata, P. melanocarpa, and V. opulus) were collected, dried, and weighed, whereas a representative fraction of the total crown of the larger shrubs (C. sericea, S. purpurea, and V. dentatum) was processed. The standing leaf mass for shrubs was corrected to remove petiole mass if applicable.

Root mass density (kg m−3) was determined for each vegetation type by soil coring near the end of the second growing season, which was at peak belowground biomass in accordance with previous sequential coring (data not shown). All systems, including the controls, received an identical method of soil sampling. Soil cores (5 cm dia., 10 cm length) were extracted from three sampling depths (0–0.15, 0.15–0.30, and 0.30–0.45 m) at three randomly selected locations within each mesocosm and stored at 5 °C for a maximum of one week prior to processing. In the laboratory, the cores were rinsed by hand over a sieve with openings of 0.25 mm diameter. Roots (≥1 mm diameter) were removed with forceps, rinsed three times under running water, dried at 60 °C for 48 h, and weighed (±0.001 g). Root mass density was computed from the dry root mass per volume of soil core. We did not attempt to distinguish between living and dead roots; most roots were light in color, plastic, and appeared to be living at the time of sampling. Holes in the ground from root sampling were filled with wetted soil from a supply of the original soil mixture and marked with flagging to prevent repeat sampling in the same locations. Soil cores were extracted from the controls (although no roots were recovered) to control for the effect of soil disturbance on hydrology.

2.7. Statistics and Data Analyses

We tested for differences in the hydrologic dynamics of drainage and soil development by vegetative treatment. The first test was whether antecedent soil moisture (measured at depths of 0–0.15 and 0.30–0.45 m immediately prior to each stormwater application) was significantly different by vegetative treatment. If antecedent soil moisture varied in accordance with the vegetative treatment, we could avoid a potential confounding effect of antecedent soil moisture on four drainage metrics. Vegetative treatment would thus serve as a proxy measure for antecedent soil moisture. We used a repeated-measures model with input date as the time factor (SAS proc mixed) because antecedent soil moisture data were collected multiple times throughout the study. Additional components of each model to test antecedent soil moisture were vegetative treatment as the main effect, year and block as random effects, and stormwater input size as a continuous fixed variable. These analyses of antecedent soil moisture data at each depth (0–0.15 m and 0.30–0.45 m) describe changes to soil moisture as influenced by stochastic rainfall.

We then evaluated the effect of vegetative treatment on drainage volume (i.e., percent output relative to stormwater input), the lag time to peak flow, the peak flow rate, and the response duration to inputs of stormwater. Because we were interested in the effect of changing the vegetation on each of these four metrics, and not the modeled relationship between each metric and the amount of stormwater applied, we divided the range of stormwater input sizes into thirds. This approach resulted in three categorical size classes that we labeled as small events (8–50 mm), medium events (51–90 mm), and large events (91–130 mm). There were 18 dates when stormwater applied to the mesocosms fell into the small class (8–50 mm), 10 dates in the medium class (51–90 mm), and 13 dates in the large class (91–130 mm) (Table 2). Within each input size class, we tested the effect of vegetative treatment on the dependent variable (e.g., lag time to peak flow). Each analysis (one per combination of input class and drainage metric) consisted of a linear mixed model (SAS proc mixed) with vegetative treatment as the main effect, year and block as random effects, and repeated measures with sample date as the time factor.
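The binning of stormwater inputs into size classes can be expressed as a small helper. This is an illustrative sketch, not the authors' code: the function name is hypothetical, and the treatment of values that fall between the stated integer bounds (for example, 50.5 mm) is an assumption, here resolved by assigning values up to and including 50 mm to the small class and up to and including 90 mm to the medium class.

```python
def size_class(input_mm):
    """Assign a stormwater input (mm) to the small, medium, or large class."""
    if not 8.0 <= input_mm <= 130.0:
        raise ValueError("input outside the 8-130 mm range used in the study")
    if input_mm <= 50.0:
        return "small"
    if input_mm <= 90.0:
        return "medium"
    return "large"


# Inputs mentioned in the text: the smallest (8.3 mm) and the full-tank
# application (127.3 mm); 75.0 mm is a hypothetical mid-range value.
for depth in (8.3, 19.1, 75.0, 127.3):
    print(depth, size_class(depth))
```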

Soil structural data (infiltration, Ks, and soil water retention) and plant trait data (height, leaf mass, leaf area, and root data) were analyzed with linear mixed models (SAS proc mixed) with vegetative treatment as a fixed effect and block as a random effect. Natural logarithmic transformations were applied to infiltration, Ks, and root mass density prior to analysis; all statistical tests were performed on the transformed data, and values on the untransformed scale are reported for ease of interpretation. The effect of antecedent soil moisture on infiltration was evaluated by linear regression (SAS proc reg). Soil water retention data were tested for differences among vegetative treatments within each sampling depth (0–0.15 m, 0.15–0.30 m, and 0.30–0.45 m, respectively) and, when applicable, each sequential step in tension, because the samples were similarly batched for lab analysis.
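As a reminder of what reporting on the untransformed scale implies, a mean computed on natural-log values and then exponentiated is a geometric mean, which is less sensitive to a few very large values than the arithmetic mean. The sketch below illustrates this with hypothetical Ks values; it is not the mixed-model estimate produced by SAS, and the function name is illustrative.

```python
import math


def back_transformed_mean(values):
    """Mean of ln(values), exponentiated back to the original scale.

    This equals the geometric mean of the input values.
    """
    logs = [math.log(v) for v in values]
    return math.exp(sum(logs) / len(logs))


# Hypothetical Ks values (mm/h) spanning two orders of magnitude.
ks_values = [0.3, 3.0, 30.0]
arithmetic = sum(ks_values) / len(ks_values)
geometric = back_transformed_mean(ks_values)
print(f"arithmetic mean: {arithmetic:.1f}, back-transformed mean: {geometric:.1f}")
```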

All analyses were performed with SAS v9.2 (SAS Institute Inc., Cary, NC, USA). All models were checked with plots of the raw data and model residuals for the assumptions of linearity, constant variance, and normality, as appropriate. We assessed differences among means when applicable with the Tukey–Kramer post hoc HSD test. Unless otherwise indicated, we report means with one standard error of the mean (SEM) as modeled by SAS, and we report statistical significance at p ≤ 0.05.

4. Discussion

Vegetation type changed both drainage volume and drainage rate through a functional shift in soil antecedent moisture, affirming our hypothesis that plants modify bioretention systems below ground. Despite regular inputs (41 storms in seven total months), plants increased retention (>25%) of the stormwater input volume compared with the non-vegetated control by changes in antecedent moisture at depth (0.30–0.45 m). This effect on the belowground structure was different across vegetation types. The most pronounced soil-water storage occurred beneath plants with greater leaf area and rooting mass at depth (shrubs and prairie vegetation), particularly when inputs were small to moderate. When inputs were sufficiently large, drainage volume was similar across vegetation types but still different from the non-vegetated control. Vegetation type also affected the timing and rate of drainage. Real-time monitoring showed that the peak flow rate and lag time of bioretention system drainage varied by 3-fold and 1.5-fold, respectively, among vegetation types depending on storm size. These differences in drainage volume and rate might be summarized as belonging to three hydrologic responses, all else being equal. The turfgrass systems produced accelerated flow responses with the least storage; prairie plants produced flow rates similar to those of turfgrass but with increased retention of stormwater; and shrubs produced subdued flow responses with the greatest soil-water storage. Thus, a difference in vegetation could potentially double soil-water storage and/or drainage rate through evapotranspiration, antecedent moisture, and thus the available pore space for soil-water retention.

Evapotranspiration was the principal control over changes in antecedent moisture between storm events and thus the ability of vegetated bioretention systems to retain stormwater. Indeed, throughout this study, shrub and prairie systems buffered the greatest number of storm events regardless of storm size and produced little to no drainage when inputs were small (≤50 mm) because of low antecedent moisture. Plant uptake, although not measured directly, was evident via a decline in soil moisture when there was no detectable drainage. Soil moisture beneath shrubs and prairie plants declined steeply between storm events at the surface (0–0.15 m) and was consistently 0.20 m3 m−3 drier at a depth below the rooting zone of turfgrass (0.30–0.45 m). This reduction in soil moisture from within the deeper portion of the soil profile equates to a loss of 3 mm d−1 beneath the shrubs and prairie plants. From the change in soil water over a 4-d period of hot dry weather for the full rooting depth (0–0.45 m), we estimate an actual ET of 10 mm d−1 from beneath the shrubs and prairie plants and 3 mm d−1 from turfgrass. Direct evaporation of ponded stormwater over several hours was negligible for calculations of ET or the water budget [44]. A cursory calculation of potential ET from meteorological data collected on-site suggests ~3 mm d−1 for well-watered turfgrass using the Penman-Monteith equation. The shrubs and prairie plants apparently tripled this reference value due to their height, green leaf mass, leaf area, and root mass at depth. Another study reported that shrubs and prairie plants lost between 33 and 62 mm during two stormwater simulations (in July and August), with an estimated ET of 6 to 9 mm d−1 [20]. Similarly, greater plant height, green aboveground biomass, and root length led to 0.15 m3 m−3 drier surface soil for 120 d among four subalpine plant communities in the French Alps [51]. Lush vegetation and an ample water supply were similarly credited with a doubling of transpiration (9.0 vs. 3.4 mm d−1) between two weighing lysimeters containing sandy loam soil in Villanova, PA [52]. We conclude that plants alter antecedent moisture to improve stormwater storage even when rain events are frequent.
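The ET estimates above rest on a soil-water balance: when there is no rain and no detectable drainage, the decline in volumetric water content over a soil layer, multiplied by the layer thickness, approximates the water lost to ET. A minimal sketch follows; the function name and the water contents in the example are hypothetical and chosen only to illustrate the arithmetic, and the approach assumes that drainage and inputs are negligible over the period.

```python
def et_from_soil_moisture(theta_start, theta_end, layer_m, days):
    """Approximate ET (mm/d) from a decline in soil water content.

    theta_start, theta_end  volumetric water content (m3/m3)
    layer_m                 thickness of the monitored soil layer (m)
    days                    length of the dry period (d)

    Assumes no rain, no stormwater input, and no drainage in the period.
    """
    storage_change_mm = (theta_start - theta_end) * layer_m * 1000.0
    return storage_change_mm / days


# Hypothetical example: a drop from 0.30 to 0.21 m3/m3 over the 0-0.45 m
# profile in 4 dry days corresponds to roughly 10 mm/d.
et_mm_d = et_from_soil_moisture(0.30, 0.21, layer_m=0.45, days=4)
print(f"ET = {et_mm_d:.1f} mm/d")
```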

Without plants, the redistribution of soil water within the bioretention system was not sufficient for the internal volume to receive and store inputs (even small ones) of stormwater. Soil moisture at a depth of 0–0.15 m in the non-vegetated controls declined by an average of 0.25 m3 m−3 between storm events, and thus this surface zone stored 6 mm of the input volume before reaching field capacity. Soil at a depth of 0.30–0.45 m remained nearly saturated throughout the period of study because of the slow Ks and perhaps also because soil saturation above the central drain was necessary for drainage to occur in these open-air lysimeters [53]. Although not measured, soil moisture at a depth of 0.15–0.30 m was assumed to have a storage capacity similar to that of the upper 0.15 m (6 mm storage) for the calculation of total storage capacity. The controls retained 100% of the input volume following only the smallest storm event (8.3 mm input), and storage declined to less than 25% of the input volume as input volume increased from 8 to 50 mm. Soil bulk density ranged from approximately 1.3 to 1.5 Mg m−3, which might be attributed to the sand content of the soil mixture used (3:1:6 of topsoil, compost, and sand by volume). These data suggest the initial soil mixture was destructured with entrapped air, disconnected (or somewhat disconnected) pore spaces, and/or a high volume of micropores. Thus, the controls were too wet to store more than ~12 mm of the input volume for stormwater inputs greater than 50 mm. Another study of two bioretention basins lacking ET (the plants were pre-emergent) showed that soil alone accommodated 28 to 100% of the input volume from 38 storm events of >50 mm, but an estimated 21% of this “retained” water was actually held in the soil [54]. In the present study, sufficient dry weather (such as >6 days [21]) was likely needed before antecedent moisture declined; the average period between stormwater inputs was 5 d, and only 7 of the 41 total rain events were preceded by at least a week of dry weather. We estimate from the control-soil Ks (0.27 mm h−1 at 0.30–0.45 m) that an additional 2 days between storms on average could have helped the controls drain by 0.09 m3 m−3 to reach field capacity. Regardless, inputs >18 mm would still have exceeded soil water storage in the control. Ultimately, the marked contrast in soil-water storage between the control and the vegetated mesocosms reaffirms that, unless inputs are small relative to the soil volume or the storms are preceded by dry weather, plants are an essential component of bioretention systems expected to retain stormwater and reduce runoff in urban landscapes.
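The estimate of additional drainage over two extra days can be approximated with a back-of-the-envelope calculation. The sketch below is one possible reading, not necessarily the authors' calculation: it assumes drainage at the saturated rate under a unit hydraulic gradient and expresses the drained depth as a change in water content over a 0.15-m layer. Under those assumptions, the result is close to the reported 0.09 m3 m−3.

```python
KS_MM_H = 0.27          # control-soil Ks at 0.30-0.45 m, from the text
EXTRA_HOURS = 48.0      # two additional days between storms
LAYER_MM = 150.0        # assumed layer thickness (0.15 m), an assumption

# Assumption: steady drainage at Ks (unit hydraulic gradient), an upper
# bound because unsaturated conductivity falls as the soil drains.
extra_drainage_mm = KS_MM_H * EXTRA_HOURS

# Express the drained depth as a change in volumetric water content.
delta_theta = extra_drainage_mm / LAYER_MM

print(f"extra drainage: {extra_drainage_mm:.1f} mm")
print(f"change in water content: {delta_theta:.2f} m3/m3")
```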

The deeper-rooting plants also improved soil physical properties, which facilitated soil drainage and drier antecedent conditions at depth following storm events. Shrubs and prairie plants with greater root density at depth increased soil Ks by as much as 25-fold compared with the control and turfgrass systems. Shrubs and prairie plants also increased the proportion of soil macropores that would be available to conduct free-draining soil water. Although we did not quantify the geometry of the soil matrix directly, the increase in Ks and decrease in soil-water retention within an unchanged total soil pore volume (bulk density did not differ by treatment) point to changes in the shape and size distribution of soil pores under shrubs and prairie plants. Other studies have shown that subtle modifications to the diameter, shape, connectivity, and orientation of soil pores can improve soil drainage [55,56]. In a corn–soybean cropping system in Iowa, 13 years of a winter rye cover crop increased the volumetric water content at field capacity (−33 kPa) by 0.03 m³ m⁻³ compared with crop rotations lacking the cover crop, a 10% increase in retention [57]. In our study, differences in soil-water retention among the four vegetative treatments signify changes to the pore size distribution. Soil bulk density data show that the soil beneath each of the four vegetative treatments at a given sampling depth had a similar total porosity. Moreover, as the tension increased, the soil-water retention curves became non-parallel (that is, different proportions of total porosity were drained as soil tension approached −25 kPa). Retention curves that display a greater reduction in soil moisture by the end of the analysis represent soils with a greater proportion of the total porosity in larger pore spaces because larger pores drain more readily than smaller pores [49]. In general, then, soil cores from beneath controls and turfgrass were likely destructured and contained the greatest proportion of smaller, unconnected pores, whereas soil cores from beneath prairie plants and shrubs contained a greater proportion of larger, connected pores. Assuming core data are representative of the surrounding soil matrix, the deeper-rooting plants thus improved soil drainage and promoted dry antecedent conditions by increasing the proportion of interconnected macropore spaces at depth, in addition to water uptake via ET.
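As a rough illustration of why a retention curve that loses more water by −25 kPa implies more large pores, the capillary (Young–Laplace) relation gives the smallest pore diameter that drains at a given tension. The sketch below is not part of the study's analysis. It assumes idealized cylindrical pores, a zero contact angle, and a surface tension of water of about 0.0728 N/m (near 20 °C); the function name is hypothetical.

```python
import math

# Assumption: surface tension of water near 20 degC, in N/m.
SURFACE_TENSION_N_PER_M = 0.0728


def equivalent_pore_diameter_um(tension_kpa, contact_angle_deg=0.0):
    """Smallest cylindrical pore diameter (micrometres) drained at a tension.

    Uses the capillary relation d = 4 * gamma * cos(theta) / P, where P is
    the magnitude of the applied tension in pascals. Real soil pores are not
    cylinders, so the result is only an equivalent diameter.
    """
    if tension_kpa <= 0:
        raise ValueError("tension_kpa must be a positive magnitude")
    pressure_pa = tension_kpa * 1000.0
    cos_theta = math.cos(math.radians(contact_angle_deg))
    diameter_m = 4.0 * SURFACE_TENSION_N_PER_M * cos_theta / pressure_pa
    return diameter_m * 1e6


if __name__ == "__main__":
    # Tension magnitudes in kPa; 25 and 33 correspond to values named in the text.
    for tension in (1.0, 10.0, 25.0, 33.0):
        diameter = equivalent_pore_diameter_um(tension)
        print(f"{tension:5.1f} kPa: pores wider than about {diameter:.1f} um have drained")
```

Under these assumptions, water still held at a tension of 25 kPa sits in pores narrower than roughly 12 µm, so a core that has released more of its water by that point must hold a larger share of its porosity in wider pores.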

However, a drier soil did not necessarily slow the hydrologic response because drainage volume and drainage rate were apparently controlled by different processes. Previous studies have modified soil texture, soil volume, and input volume under the assumption that systems that reduce drainage volume also abate drainage rate [58]. The plants of bioretention systems have, in turn, been widely credited with further slowing a system’s release of stormwater inputs [37,58,59,60,61,62]. In the present study, an increased capacity for soil-water storage did not always coincide with slower flow rates. For example, the prairie systems were among the driest in this study, yet they displayed accelerated flow responses with some of the most rapid peak flow rates. A closer examination of the relationships among input size, antecedent soil water, drainage volume, and drainage rate shows how contrasting hydrologic responses emerged. Two vegetation types (prairie and turfgrass) produced accelerated drainage responses in which the response curves had short lag times and fast peak flow rates, whereas the others (shrubs and the control) produced subdued drainage responses with long lag times and slow peak flow rates. The accelerated responses typically drained 50% of their total volume upon reaching the peak flow rate, whereas subdued responses drained only 20 to 30% of the total volume upon reaching the peak flow rate. Flow responses were not solely related to input or output volumes because differences in flow rates were most evident following large inputs (>90 mm), when all three vegetation types drained a similar volume (75% of input). Moreover, an accelerated drainage response was not due to wetter antecedent conditions (as modeled in plant-free systems [10]) because some of the fastest peak flow rates occurred from dry soils (beneath the prairie plants). These findings suggest that while antecedent moisture controlled drainage volume, input size and pore structure (i.e., pore diameter distribution, pore connectivity) were the primary factors contributing to differences in drainage rate. We note that the two controlling processes are not entirely decoupled and will likely vary in explanatory power depending on the size of a storm. By extension, bioretention systems are often shown to receive and infiltrate stormwater without ponding or generating runoff, yet the assumption that the plant–soil system has also slowed the internal drainage rate may not be correct.
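The distinction between accelerated and subdued responses rests on three quantities read from each drainage hydrograph: the peak flow rate, the time taken to reach it, and the share of total drainage volume released by that time. The sketch below shows one way such metrics could be computed. It is not the study's procedure: the function name and the two example hydrographs are hypothetical, and time to peak is used as a simple stand-in for lag time, whose exact definition is not restated in this section.

```python
def drainage_response_metrics(times_h, flows_mm_h):
    """Summarize a drainage hydrograph.

    times_h: sample times in hours since the input began (increasing).
    flows_mm_h: drainage rate at each sample time, in mm per hour.
    Returns (peak_rate_mm_h, time_to_peak_h, fraction_drained_at_peak).
    """
    if len(times_h) != len(flows_mm_h) or len(times_h) < 2:
        raise ValueError("need at least two paired samples")

    # Cumulative drainage depth (mm) by trapezoidal integration.
    cumulative = [0.0]
    for i in range(1, len(times_h)):
        dt = times_h[i] - times_h[i - 1]
        mean_flow = 0.5 * (flows_mm_h[i] + flows_mm_h[i - 1])
        cumulative.append(cumulative[-1] + mean_flow * dt)

    total = cumulative[-1]
    # Index of the first occurrence of the maximum flow rate.
    peak_index = max(range(len(flows_mm_h)), key=flows_mm_h.__getitem__)
    fraction = cumulative[peak_index] / total if total > 0 else 0.0
    return flows_mm_h[peak_index], times_h[peak_index], fraction


if __name__ == "__main__":
    # Hypothetical hydrographs for illustration only (not study data).
    sharp = drainage_response_metrics(
        [0.0, 0.5, 1.0, 1.5, 2.0], [0.0, 20.0, 100.0, 20.0, 0.0]
    )
    long_tail = drainage_response_metrics(
        [0.0, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0], [0.0, 0.0, 10.0, 5.0, 3.0, 2.0, 0.0]
    )
    print("sharp response:", sharp)
    print("long-tailed response:", long_tail)
```

A response whose peak is followed by a long recession releases only a small fraction of its volume before the peak, which is the pattern described above for the subdued systems.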

Our data also point to preferential flow and infiltration via changes in soil aggregation as explanations for the magnitude of peak flow rates quantified in this study. In preferential flow, the rate at which soil systems conduct water to depth depends more on the rate of water movement through connected macropores than on soil Ks [63] or soil moisture [64]. We measured peak flow rates of drainage that were an order of magnitude greater than the slowest (i.e., most restrictive) Ks. To reconcile peak flow rates ranging from 50 to 150 mm h⁻¹ (all treatments when inputs >90 mm) with rates of Ks ranging from 0.27 to 7.5 mm h⁻¹ (at a depth of 0.30–0.45 m), the hydraulic head required by Darcy’s Law, which assumes homogeneous and saturated flow, would have had to be ~9 to 110 m of ponded water above the soil surface. Because this ponding height was not possible within the design constraints of the study, we conclude that preferential flow expedited drainage. Infiltration and surface crusting may also partially explain contrasts in peak flow rates. Shrub mesocosms were slow to infiltrate and consequently ponded stormwater at the surface for up to an hour when inputs were >50 mm; this lagged infiltration of stormwater led to subdued drainage responses even though the shrub systems had the fastest average Ks at depth. In contrast, the prairie and turfgrass mesocosms had an infiltration rate similar to the rate at which stormwater was applied (192 mm h⁻¹) and accommodated most inputs without surface ponding. This type of phenomenon has been demonstrated in tile-drained fields, where rapid infiltration produced accelerated drainage responses following heavy rainfall despite a slow matrix Ks [65,66]. Another study of similarly designed vegetated mesocosms found that a mixed-form vegetation type drained more slowly than turfgrass, with longer lag times and reduced flow rates [22]. Perhaps the development of preferential flow paths in vegetated mesocosms can offset differences in leaf interception, infiltration, and soil-water storage within these systems. For example, although earthworms were not observed in the present study, their presence has been credited with promoting macropore flow in other bioretention systems [17].
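The Darcy’s Law argument above can be sketched numerically. For vertical, saturated flow through a homogeneous layer of thickness L under a ponded depth h, Darcy’s Law gives q = Ks (h + L) / L, so the ponded depth needed to carry a flux q is h = L (q / Ks − 1). The example below is a minimal sketch under stated assumptions: the function name is hypothetical, and the layer thickness of 0.15 m (the width of the 0.30–0.45 m sampling interval) is an assumption, since the flow-path length and the pairing of flow rates with Ks values behind the ~9 to 110 m estimate are not given here. It is therefore not expected to reproduce those figures exactly.

```python
def required_ponding_depth_m(flux_mm_h, ks_mm_h, layer_thickness_m):
    """Ponded depth (m) needed for saturated Darcy flow to carry a flux.

    Darcy's Law for vertical flow through a layer of thickness L with
    ponded depth h: q = Ks * (h + L) / L, hence h = L * (q / Ks - 1).
    Returns 0.0 when gravity alone (unit gradient) already carries the flux.
    """
    if ks_mm_h <= 0 or layer_thickness_m <= 0:
        raise ValueError("Ks and layer thickness must be positive")
    return max(0.0, layer_thickness_m * (flux_mm_h / ks_mm_h - 1.0))


if __name__ == "__main__":
    # Assumption: restrictive layer thickness in metres (hypothetical value).
    layer_thickness_m = 0.15
    # Ranges of peak flow rate and Ks quoted in the text, in mm per hour.
    for flux in (50.0, 150.0):
        for ks in (0.27, 7.5):
            depth = required_ponding_depth_m(flux, ks, layer_thickness_m)
            print(f"q = {flux:5.1f} mm/h, Ks = {ks:4.2f} mm/h: ponding of about {depth:.1f} m")
```

Even with this thin assumed layer, pairing the fastest peak flow rates with the most restrictive Ks calls for tens of metres of ponded water, which a mesocosm cannot hold; this is the reasoning that points to flow bypassing the soil matrix.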

Bioretention practices should be recognized as systems in which a chosen soil mixture interacts with plants to determine drainage dynamics. Theoretically, all soil, including mixtures of different media used for bioretention, has two domains of soil porosity. Soil has (i) a textural domain of pore spaces created by the mineral composition of the initial mixture, and (ii) a structural domain that develops as pore spaces are formed and stabilized via organic matter, soil biota, management, and time [25,31]. In practice, the recommendations for designing a bioretention soil mixture focus on initial composition. Mineral soil is used (in the absence of plants) to stabilize the mixture upon repeated wetting (>20% mineral soil additions [12]), and large proportions of compost are added to improve soil porosity and drainage (e.g., 8:2 compost/sand by volume [13]). The structural domain of soil porosity in these mixtures, however, has been considered largely in theory. Plants are assumed to “maintain the soil structure of the root zone” [67] and to modify their environment via ecological engineering [24]. Models that predict soil-water dynamics in bioretention systems should account for the possibility that soil mixtures are dynamic or that plants might alter Ks over time [68]. One analysis has suggested that the peak flow rate of drainage from bioretention systems might be underestimated by 20- to 40-fold when modeled as a function of static parametrization [68]. Here, we find evidence that the textural domain of soil porosity was unchanged but that, after four years, plants had altered the structural domain of the soil mixture by changing Ks, infiltration, and soil-water retention. These findings show how plant roots can be the catalysts for the alteration of soil porosity and connectivity [33,69,70]. Incorporating the dynamic processes of plant-induced changes to soil structure into hydrologic models is an important next step toward understanding how these systems affect local hydrology within urban environments.

Practitioners should consider different types of vegetation to promote the ecosystem services expected of these surface water management tools. First, bioretention practices are used to redirect runoff to soil and thereby mitigate surface flooding and replenish local groundwater. Given the conditions of our study, turfgrass best satisfied this expected service via wetter antecedent conditions, rapid peak flow rates, and larger drainage volumes; we note that our study did not reproduce the compacted soil conditions often observed in urban environments. Prairie vegetation may be effective at redirecting surface water into the soil, but possibly at the cost of reduced groundwater recharge because of ET. Prairie plants and shrubs have gained popularity in Wisconsin because they dry the soil between storms and appear to “soak up” stormwater [38]. We note that 25 to 100% of the stormwater retained by these two vegetation types across the two years of study was lost to the atmosphere via ET and not redirected as subsurface drainage. Not all vegetation may be reliable for “increasing the amount of water that filters into the ground, which recharges local and regional aquifers” [42]. Second, bioretention systems are used to improve stormwater and runoff quality. While we did not measure soil or water chemistry, understanding how plants alter bioretention hydrology will inform pollutant retention because the flow rate and duration of drainage together predict the load and flux of chemical constituents. A functional shift in bioretention systems between “dry” and “wet” states via plant-induced changes in ET would affect phosphorus retention [71,72] and the amount of time pollutants are in contact with soil solids [73]. Here, turfgrass maintained the greatest soil moisture content at depth, which represents a “wet” system but also implies that incoming stormwater would pass through the system both rapidly and unfiltered. Although we have no data tracking the exchange of “old” versus “new” stormwater throughout an application event to support this prediction, we speculate that the different drainage responses (accelerated vs. subdued) observed in this study indicate that plants affect the ability of bioretention systems to buffer or dampen stormwater inputs [20]. In summary, recognizing how vegetation interacts with soil to alter the antecedent moisture and hydrology of bioretention practices will better equip us to select appropriate plants for site-specific management needs.