Proximal Remote Sensing Buggies and Potential Applications for Field-Based Phenotyping

Abstract

1. Introduction

2. Field Phenotyping Platforms: The Role of Field Buggies

2.1. Approaches Available

2.1.1. Mobile Field Platforms (“Buggies”)

- (a) The simplest approach that provides a well-defined, constant observation geometry is to mount sensors on a light, hand-controlled cart; for example, a simple hand-pushed frame on bicycle wheels (2 m wide, 1.2 m long, with 1 m clearance) has been described [34]. Such systems can be very cheap and permit the mounting of a wide range of sensors and associated recording equipment. In principle, it should also be possible to tag recordings to individual plots using high-precision GPS.

- (b) The next level of sophistication is to incorporate drive mechanisms and autonomous control so that the system can traverse the field automatically at a steady rate, without needing to be pushed (which can lead to crop trampling). A wide range of such systems, of varying sophistication, has been developed, including the “BoniRob” platform from Osnabrück, Germany [35] and the “Armadillo” from Denmark and the University of Hohenheim [36]. BoniRob has a lighter, wheeled structure with adjustable ground clearance and configurable wheel spacing, which probably makes it more suitable for taller crops.

- (c) The next stage of development involves larger and more sophisticated platforms (or “buggies”), usually with a driver, that can support a wider range of sensors and controls. Examples of such custom-designed devices for field phenotyping include the system designed in Maricopa, Arizona, described by [37]; the “BreedVision” system from Osnabrück [38,39]; the Avignon system [40]; and the “Phenomobile” designed at the High Resolution Plant Phenomics facility in Canberra (described in Section 4.1 and Figure 1).

- (d) There is also increasing convergence between such specialised “Phenomobiles” and the standard sensor arrays commonly mounted on tractor booms for routine monitoring of crop condition, such as nitrogen status (e.g., Crop Circle (Holland Scientific, Lincoln, Nebraska, USA), the Yara N-Sensor (Yara, Hanninghof, Germany) and GreenSeeker (Trimble Agriculture, Sunnyvale, California, USA)).
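Item (a) notes that recordings could be tagged to individual plots with high-precision GPS. The following is a minimal sketch of that step, assuming the GPS fixes have already been projected into a local metric grid (e.g., UTM), that plots are axis-aligned rectangles, and that the offset between the GPS antenna and the sensor has already been corrected. The `Plot` class, the `tag_reading` function and the plot layout are hypothetical illustrations, not taken from any of the systems cited above:

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Plot:
    """A rectangular plot in a local projected grid (metres), e.g. easting/northing."""
    plot_id: str
    x_min: float
    x_max: float
    y_min: float
    y_max: float

    def contains(self, x: float, y: float, buffer: float = 0.0) -> bool:
        # Shrinking the plot by `buffer` on every side discards readings taken near
        # plot edges, where the sensor footprint may include a neighbouring plot or
        # the bare soil between plots.
        return (self.x_min + buffer <= x <= self.x_max - buffer
                and self.y_min + buffer <= y <= self.y_max - buffer)


def tag_reading(x: float, y: float, plots: Sequence[Plot],
                buffer: float = 0.0) -> Optional[str]:
    """Return the ID of the plot containing the sensor position, or None if the
    position falls in an alley, a buffer zone or outside the trial."""
    for plot in plots:
        if plot.contains(x, y, buffer):
            return plot.plot_id
    return None


# Hypothetical layout: two 2 m x 6 m plots separated by a 0.5 m alley.
plots = [Plot("P001", 0.0, 2.0, 0.0, 6.0), Plot("P002", 2.5, 4.5, 0.0, 6.0)]
print(tag_reading(1.0, 3.0, plots))               # P001
print(tag_reading(2.2, 3.0, plots))               # None (in the alley)
print(tag_reading(0.1, 3.0, plots, buffer=0.25))  # None (too close to the edge)
```

A linear scan is adequate for trials of a few thousand plots; larger layouts, or rotated and non-rectangular plots, would call for a spatial index or a general polygon test.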

| Platform Type | Disadvantages | Advantages |
|---|---|---|
| Fixed systems | Generally expensive; can only monitor a very limited number of plots | Unmanned continuous operation; after-hours operation (e.g., night-time); good repeatability |
| Permanent platforms based on cranes, scaffolds or cable-guided cameras | Limited crop area, so plots must be very small; expensive | Precise, high-resolution images from a fixed angle |
| Towers/cherry-pickers | Generally varying view angle; problems with distance (for thermal), bi-directional reflectance distribution function (BRDF), plot delineation, etc.; difficult to move, so only limited areas covered | Good for simultaneous viewing of an area; can be moved to view different areas |
| Mobile in-field systems | Generally take a long time to cover a field, so subject to varying environmental conditions | Very flexible deployment; good capacity for GPS/GIS tagging; very good spatial resolution |
| Hand-held sensors | Very slow to cover a field; only one sensor at a time; different operators can give different measurements | Good for monitoring |
| Hand-pushed buggies | Limited payload (weight); laborious to operate for large experiments | Relatively low cost; flexibility in payload and view-angle geometry; very adaptable |
| Tractor-boom | Long booms may not be stable | Easy operation; constant view angle; wide swath (if enough sensors are mounted, as on a spraying bar); mounting readily available (needs modification) |
| Manned buggies | Require a dedicated vehicle (expensive) | Flexibility in vehicle design (e.g., for tall crops, row spacing); constant view angle; very adaptable |
| Autonomous robots | Expensive; no commercial solutions available; safety mechanisms required | Unmanned continuous operation; after-hours operation (e.g., night-time) |
| Airborne | Payload weight limited, depending on the platform; lack of turnkey systems; spatial resolution depends on speed and altitude | Can cover the whole experiment in a very short time, giving a snapshot of all plots without changes in environmental conditions |
| Blimps/balloons | Limited to low wind speeds; not easily moved precisely; limited payload | Relatively cheap compared with other aerial platforms |
| UAVs | Limited payload (weight and size); limited altitude (regulations) and total flight time (hence, total area covered); regulatory issues depending on the country | Relatively low cost compared with manned aerial platforms; GPS navigation for accurate positioning; less affected by wind than blimps |
| Manned aircraft | Operating costs can be high and may prohibit repeated flights, thereby reducing temporal resolution; problems of availability | Flexibility in payload (size and weight); can cover large areas rapidly |
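Several trade-offs in the table between platform height, speed and spatial resolution follow from simple camera geometry. The sketch below assumes a nadir-looking pinhole camera over flat ground, for which the ground sampling distance is GSD = H·p/f (H: distance to the target, p: pixel pitch, f: focal length). The camera parameters and platform speeds are hypothetical illustrations, not specifications of any platform discussed here:

```python
def ground_sampling_distance(height_m: float, pixel_pitch_um: float,
                             focal_length_mm: float) -> float:
    """Ground footprint of one pixel (m) for a nadir-looking pinhole camera
    over flat ground: GSD = H * p / f."""
    return height_m * (pixel_pitch_um * 1e-6) / (focal_length_mm * 1e-3)


def swath_width(height_m: float, pixel_pitch_um: float,
                focal_length_mm: float, n_pixels_across: int) -> float:
    """Width of ground imaged across the sensor (m)."""
    return n_pixels_across * ground_sampling_distance(
        height_m, pixel_pitch_um, focal_length_mm)


def motion_blur_pixels(speed_m_s: float, exposure_s: float, gsd_m: float) -> float:
    """Distance the platform travels during one exposure, expressed in pixels."""
    return speed_m_s * exposure_s / gsd_m


# Hypothetical camera: 5 um pixels, 12 mm lens, 2000 pixels across.
for platform, height, speed in [("buggy", 1.5, 1.0), ("UAV", 50.0, 5.0)]:
    gsd = ground_sampling_distance(height, 5.0, 12.0)
    print(f"{platform}: GSD = {gsd * 1000:.2f} mm, "
          f"swath = {swath_width(height, 5.0, 12.0, 2000):.1f} m, "
          f"blur at 1 ms = {motion_blur_pixels(speed, 1e-3, gsd):.2f} px")
```

With these assumed values the same camera gives sub-millimetre pixels from a buggy but roughly 2 cm pixels at 50 m altitude; because the buggy camera is so much closer to its target, it is also the one more exposed to motion blur at a given exposure time.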

2.1.2. Relative Advantages/Disadvantages of Different Platforms

3. Phenotyping Sensors for Field Buggies

| Sensor Type | Applications | Limitations |
|---|---|---|
| RGB cameras | Imaging canopy cover and canopy colour. Colour information can be used to derive information about chlorophyll concentration through greenness indices. 3D stereo reconstruction from multiple cameras or viewpoints allows canopy architecture parameters to be estimated. | No spectral calibration, so only relative measurements. Shadows and changes in ambient light conditions can cause under- or over-exposure and limit the automation of image processing. |
| LiDAR and time-of-flight sensors | Canopy height and, for imaging sensors (e.g., LiDAR), canopy architecture. Estimation of LAI, volume and biomass. Reflectance of the laser signal can be used to retrieve spectral information (reflectance at the laser wavelength). | Integration/synchronization with GPS and wheel-encoder positioning systems is required for georeferencing. |
| Spectral sensors | Biochemical composition of the leaf/canopy: pigment concentration, water content, indirect measurement of biotic/abiotic stress. Canopy architecture/LAI with NDVI. | Sensor calibration required. Changes in ambient light conditions influence the signal and necessitate frequent white-reference calibration. Canopy structure and camera/sun geometry influence the signal. Data management is challenging. |
| Fluorescence | Photosynthetic status; indirect measurement of biotic/abiotic stress. | Difficult to measure in the field at the canopy scale because of the low signal-to-noise ratio, though laser-induced fluorescence transients (LIFT) can extend the available range, while solar-induced fluorescence can be measured remotely. |
| Thermal sensors | Stomatal conductance. Water stress induced by biotic or abiotic factors. | Changes in ambient conditions change canopy temperature, making comparisons over time difficult and necessitating the use of references. Soil and plant temperatures are difficult to separate in sparse canopies, limiting the automation of image processing. Sensor calibration and atmospheric correction are often required. |
| Other sensors: electromagnetic induction (EMI), ground-penetrating radar (GPR) and electrical resistance tomography (ERT) | Mapping of soil physical properties, such as water content and electrical conductivity, or root mapping. | Data interpretation is challenging, as heterogeneous soil properties can strongly influence the signal. |
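Two of the simplest computations implied by the table are a spectral index such as NDVI, computed from calibrated red and near-infrared reflectance, and a greenness index from RGB images used to estimate canopy cover. The following is a minimal NumPy sketch. The excess green (ExG) index and the fixed 0.1 threshold are illustrative choices that this section does not prescribe (adaptive thresholds such as Otsu's method are common alternatives), and, as the table notes, RGB-derived values are relative rather than calibrated:

```python
import numpy as np


def ndvi(nir, red, eps: float = 1e-12) -> np.ndarray:
    """Normalised difference vegetation index, (NIR - Red) / (NIR + Red).
    Inputs should be reflectances (or calibrated radiances) in the two bands."""
    nir = np.asarray(nir, dtype=float)
    red = np.asarray(red, dtype=float)
    # eps guards against division by zero where both bands are zero.
    return (nir - red) / np.maximum(nir + red, eps)


def excess_green(rgb) -> np.ndarray:
    """Excess green index, 2g - r - b, computed on chromatic coordinates
    (each channel divided by R + G + B) to reduce sensitivity to brightness."""
    rgb = np.asarray(rgb, dtype=float)
    total = rgb.sum(axis=-1)
    total = np.where(total == 0, 1.0, total)  # black pixels get an index of 0
    r, g, b = (rgb[..., i] / total for i in range(3))
    return 2.0 * g - r - b


def canopy_cover(rgb, threshold: float = 0.1) -> float:
    """Fraction of pixels classified as vegetation (ExG above a fixed threshold)."""
    return float(np.mean(excess_green(rgb) > threshold))


# Hypothetical 2 x 2 image: one green leaf pixel and three soil pixels.
image = np.array([[[50, 200, 50], [120, 100, 80]],
                  [[120, 100, 80], [120, 100, 80]]])
print(canopy_cover(image))  # 0.25
print(ndvi([0.5, 0.3], [0.1, 0.2]))
```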

3.1. Types of Sensor

3.1.1. RGB Cameras

3.1.2. LiDAR and Time of Flight Sensors

3.1.3. Spectral Sensing

3.1.4. Fluorescence

3.1.5. Thermal Sensors

3.1.6. Other Sensors

3.2. Some Technical Challenges in the Use of Proximal Sensors Mounted on Buggies

- (a) Problems resulting from mixed pixels, when a single pixel comprises both plant material and background soil (Jones and Sirault, submitted to this special issue).

- (b) Difficulties caused by variation in the solar illumination angle and the bi-directional reflectance distribution function (BRDF) (for example, the resulting variation in the amount of shadowing in a pixel and its dependence on canopy structure) [103].

- (c) Although the simplest application of in-field remote sensing, especially of spectral reflectance, is to use simple vegetation indices (VI) as indicators of variables of interest (e.g., N or water content, chlorophyll, LAI or photosynthesis), the resulting estimates can be strongly affected by environmental conditions and canopy structure; this leads to substantial imprecision and the need for site-specific calibration [82]. However, the estimation of these fundamental variables can be substantially improved by taking into account the detailed canopy structure and BRDF and by using appropriate radiative transfer models [62,104,105]. This approach often requires significant computing power and may not be suitable for real-time implementation on a mobile buggy.

- (d) Data handling. A particular and continuing challenge in the use of platform-mounted sensors is the handling and assimilation of data from different types of sensor (frame imagers, line-scan imagers, point sensors), each with its own field of view and spatial scale, and their combination with GPS information to generate effective measurements for each experimental plot. This generally requires specialist software engineering skills.
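The mixed-pixel problem in item (a) can be made concrete for thermal imagery. The following is a minimal sketch, assuming a two-component (vegetation/soil) pixel, equal emissivities, no reflected sky radiation and broadband emission proportional to T⁴ (Stefan–Boltzmann), with the vegetation fraction known independently, for example from a co-registered RGB image. It illustrates one common simplification and is not necessarily the approach taken by Jones and Sirault; the function names and temperatures are hypothetical:

```python
KELVIN = 273.15


def mix_pixel_temperature(t_veg_c: float, t_soil_c: float, veg_fraction: float) -> float:
    """Apparent temperature (deg C) of a pixel that is part vegetation, part soil.
    Emitted radiances (proportional to T**4), not temperatures, are area-weighted."""
    tv, ts = t_veg_c + KELVIN, t_soil_c + KELVIN
    t4 = veg_fraction * tv**4 + (1.0 - veg_fraction) * ts**4
    return t4 ** 0.25 - KELVIN


def unmix_canopy_temperature(t_pixel_c: float, t_soil_c: float,
                             veg_fraction: float) -> float:
    """Invert the two-component mixture to recover vegetation temperature (deg C),
    given the soil temperature and the vegetation fraction of the pixel."""
    if not 0.0 < veg_fraction <= 1.0:
        raise ValueError("veg_fraction must be in (0, 1]")
    tp, ts = t_pixel_c + KELVIN, t_soil_c + KELVIN
    tv4 = (tp**4 - (1.0 - veg_fraction) * ts**4) / veg_fraction
    if tv4 <= 0.0:
        raise ValueError("inputs are inconsistent with a two-component mixture")
    return tv4 ** 0.25 - KELVIN


# Hypothetical sparse canopy: leaves at 25 deg C over sun-heated soil at 40 deg C.
t_pix = mix_pixel_temperature(25.0, 40.0, veg_fraction=0.6)
print(round(t_pix, 2))  # the mixed pixel reads several degrees warmer than the leaves
print(round(unmix_canopy_temperature(t_pix, 40.0, veg_fraction=0.6), 2))  # 25.0
```

Because the inversion divides by the vegetation fraction, errors in that fraction and in the soil temperature are amplified as the fraction becomes small, which is one reason sparse canopies are hard to handle automatically.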

4. Application to Phenotyping

| Trait | Primary Effect | Sensor Technology |
|---|---|---|
| Canopy structure | | |
| Leaf area index | RI | LiDAR, 2D and 3D RGB photogrammetry, ToF camera, spectral vegetation indices |
| Biomass | WUE/RUE | LiDAR, 2D and 3D RGB photogrammetry, ToF camera |
| Tillering | HI | LiDAR, 2D and 3D RGB photogrammetry, ToF camera |
| Canopy height | WUE/HI | LiDAR, 2D and 3D RGB photogrammetry, ToF camera |
| Awn presence | WUE/HI | LiDAR, 2D and 3D RGB photogrammetry, ToF camera |
| Leaf rolling | WUE/RI | LiDAR, 3D RGB photogrammetry and ToF camera |
| Leaf angle | RI | LiDAR, 3D RGB photogrammetry and ToF camera |
| Early vigour | WUE/WU | LiDAR, 2D RGB photogrammetry, spectral vegetation indices |
| Tissue damage | WU/RI | RGB camera, multi/hyperspectral camera |
| Leaf glaucousness/waxes | WUE/HI | Multi/hyperspectral camera |
| Pubescence | WUE/HI | Multi/hyperspectral camera |
| Grain fertility (number) | HI | Very high resolution RGB images |
| Function | | |
| Water loss/stomatal control | WUE/WU | Thermal camera, infra-red temperature sensor |
| Photosynthesis | RUE | Chlorophyll fluorescence, LIFT, PRI, estimation from biomass accumulation (see above) |
| Phenology | | |
| Stay green/senescence | HI/RI | LiDAR, multi/hyperspectral camera, thermal camera |
| Flowering date | HI | LiDAR, high resolution RGB images |
| Biochemistry | | |
| Stem carbohydrates | HI | Hyperspectral camera |
| Nutrient content (e.g., N) | NUE | Multi/hyperspectral camera |
| Carotenoids, xanthophylls, anthocyanins, water indices | WU/RI | Multi/hyperspectral camera |

RI, radiation interception; RUE, radiation-use efficiency; WU, water use; WUE, water-use efficiency; HI, harvest index; NUE, nitrogen-use efficiency.
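For structural traits such as canopy height, the table lists LiDAR among the candidate technologies. The following is a minimal sketch of a plot-level height estimate, assuming the returns have already been georeferenced and assigned to a plot and that elevations are in metres. The 95th and 1st percentiles are illustrative defaults, not values taken from this paper, and the simulated point cloud is hypothetical:

```python
from typing import Optional

import numpy as np


def canopy_height(z_points, ground_z: Optional[float] = None,
                  top_percentile: float = 95.0,
                  ground_percentile: float = 1.0) -> float:
    """Plot-level canopy height (m) from the elevations of LiDAR returns.

    A high percentile rather than the maximum is used so that a few stray
    returns (e.g. dust, insects, a single tall head) do not dominate.
    If no ground elevation is supplied, it is approximated by a low percentile
    of the returns, which assumes that some returns reach the soil surface.
    """
    z = np.asarray(z_points, dtype=float)
    if z.size == 0:
        raise ValueError("no LiDAR returns for this plot")
    if ground_z is None:
        ground_z = float(np.percentile(z, ground_percentile))
    return float(np.percentile(z, top_percentile) - ground_z)


# Hypothetical plot: 200 returns from soil near 10.0 m, 800 from a canopy near 10.8 m.
rng = np.random.default_rng(0)
z = np.concatenate([rng.normal(10.0, 0.01, 200), rng.normal(10.8, 0.03, 800)])
print(f"{canopy_height(z):.2f} m")
```

In dense canopies few returns may reach the soil, so a ground elevation surveyed separately (e.g., from a bare-soil scan) is the safer input where available.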

4.1. Case Study

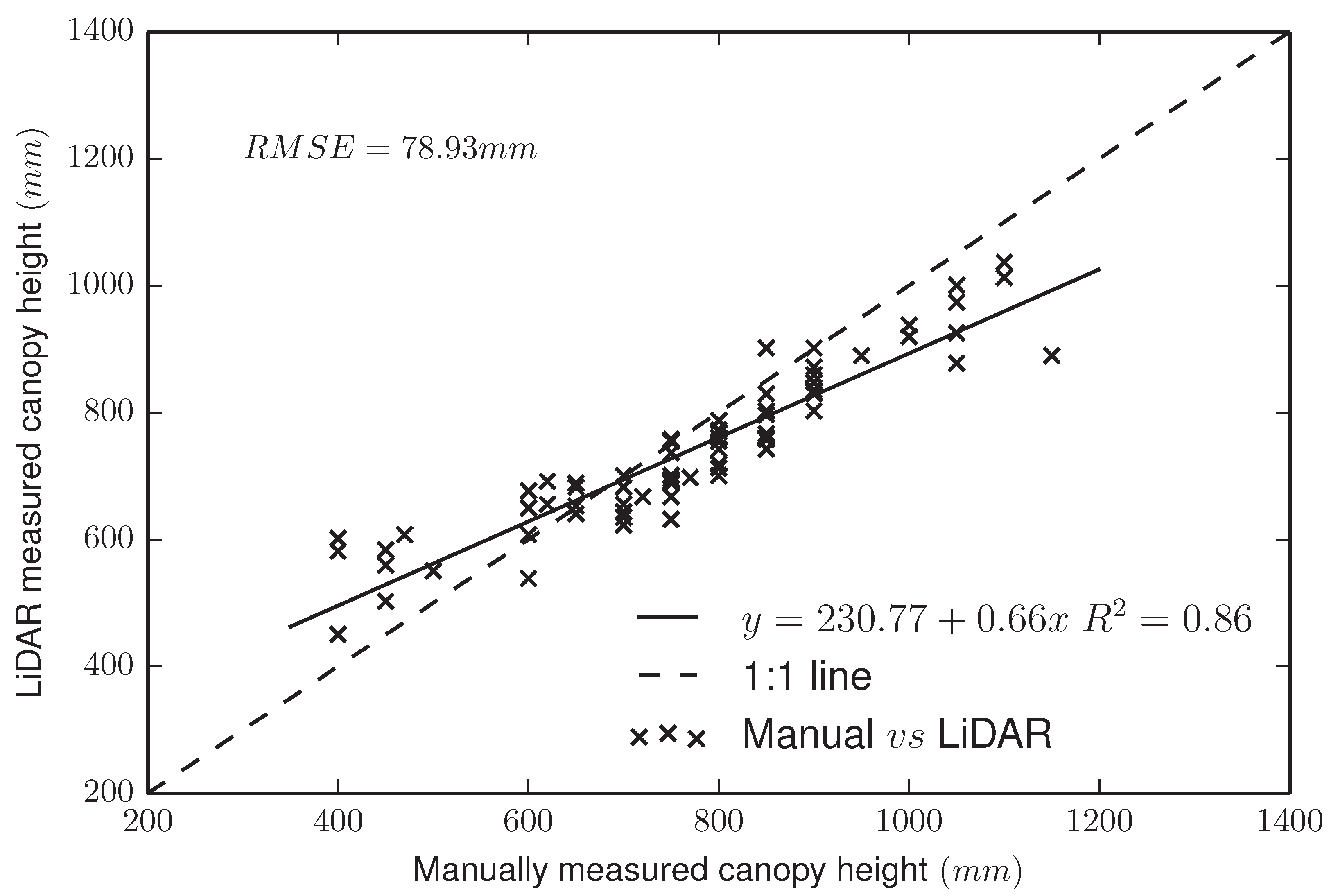

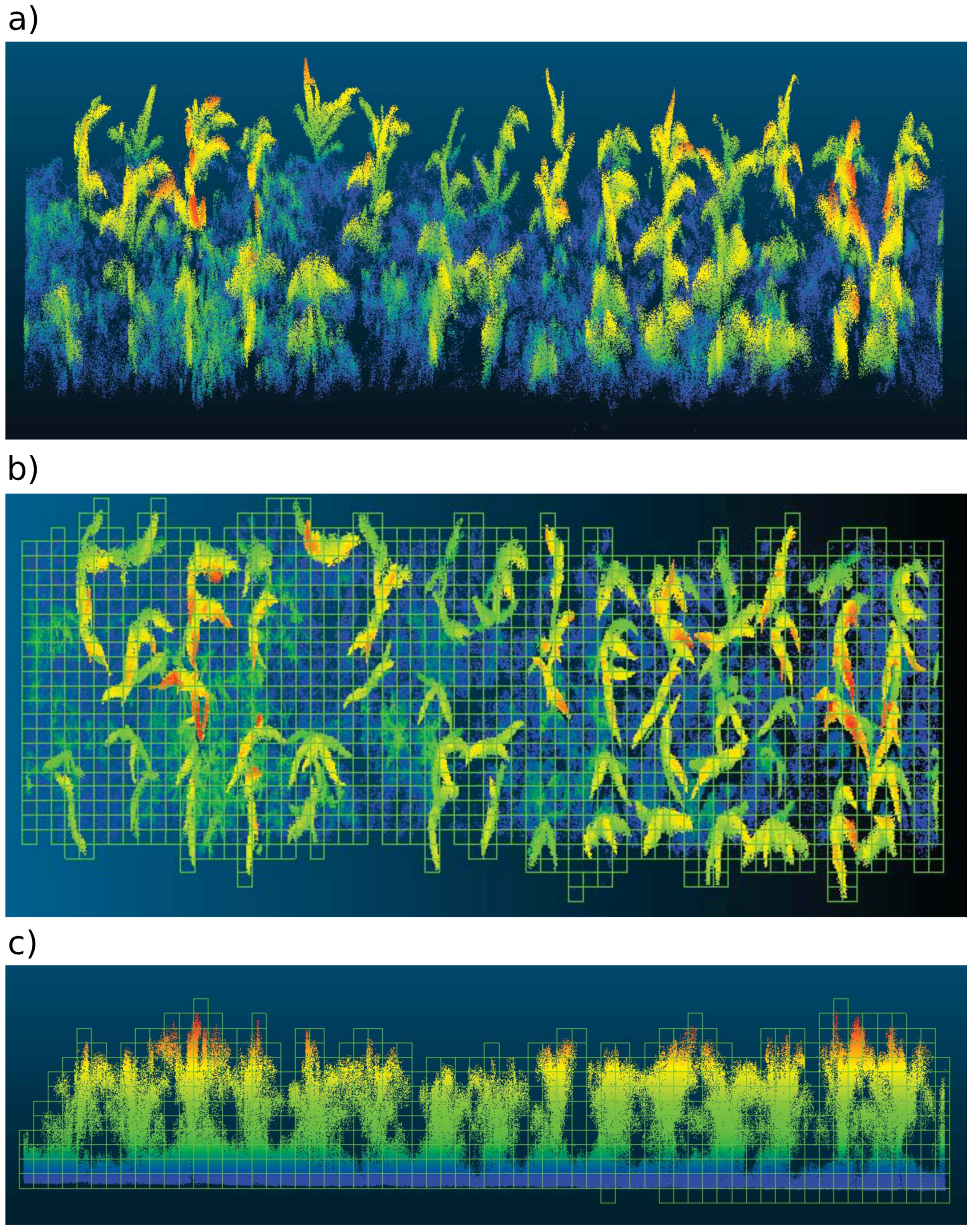

4.1.1. LiDAR Subsystem

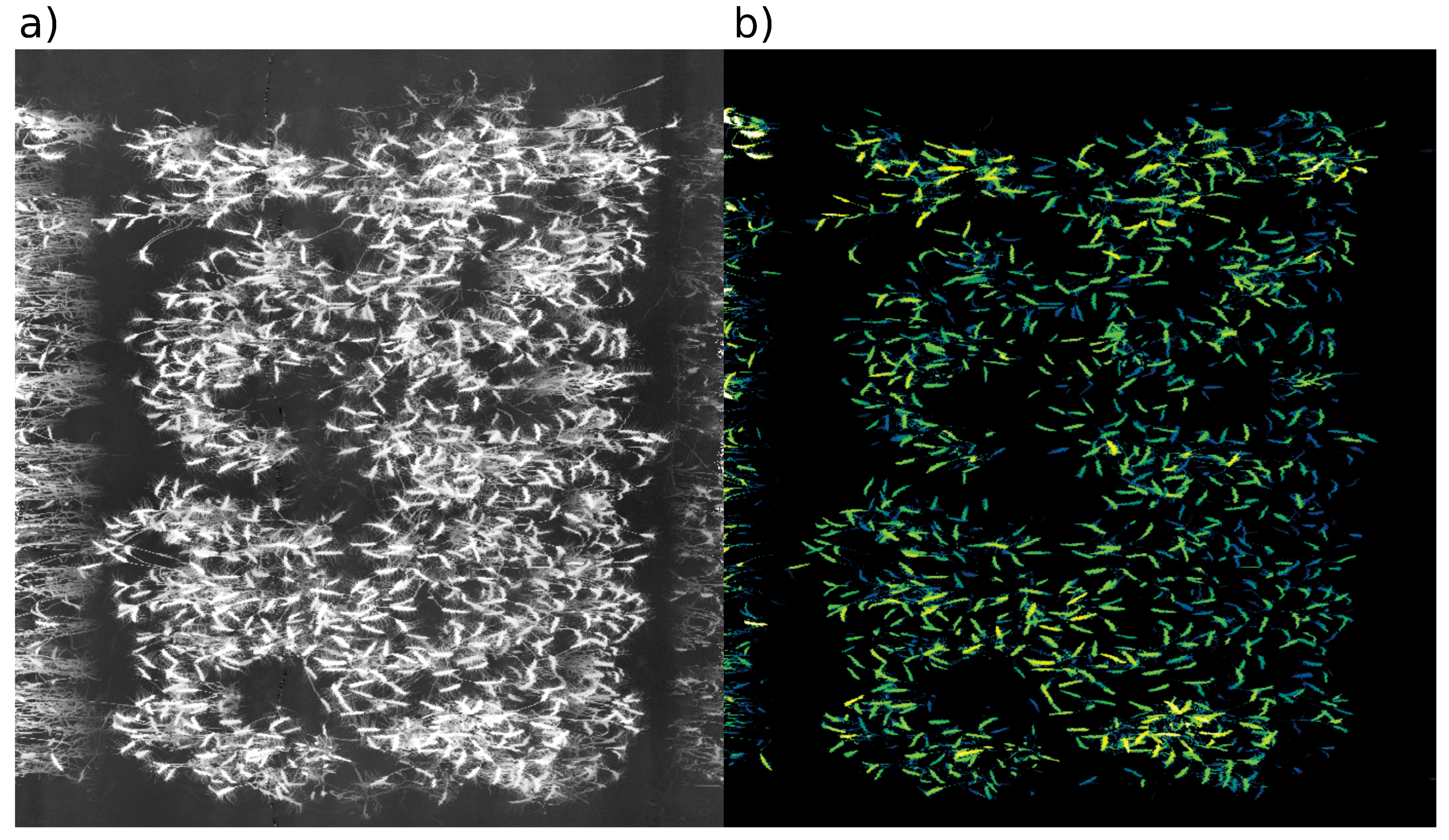

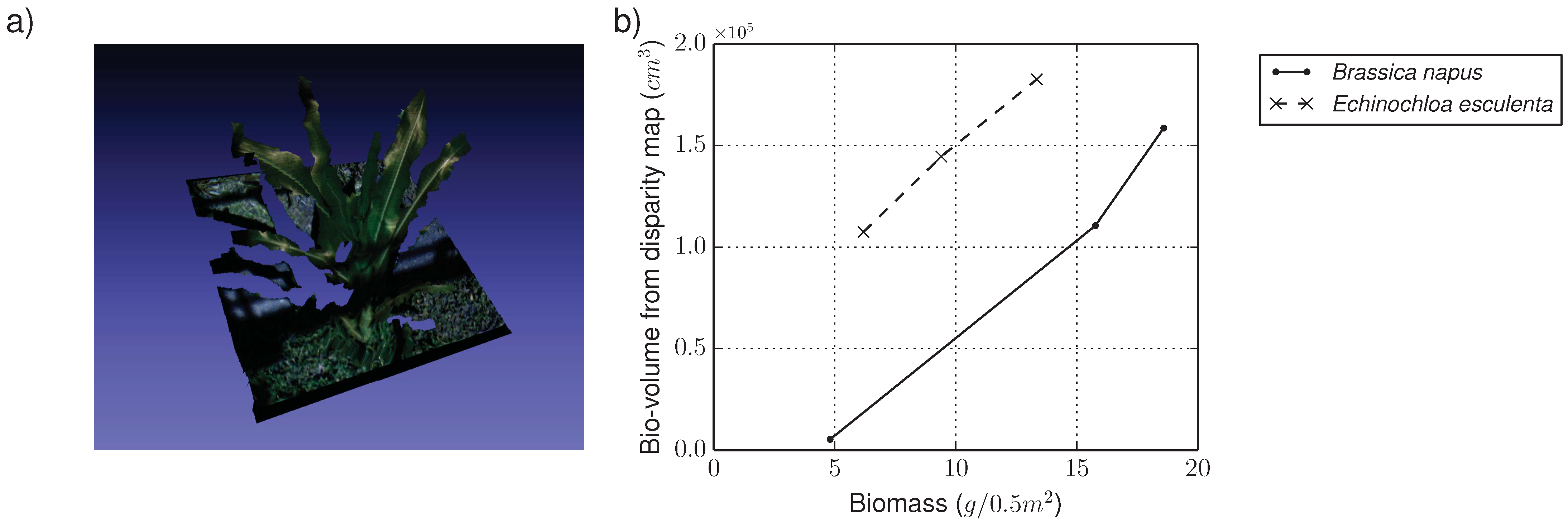

4.1.2. RGB Camera Subsystem

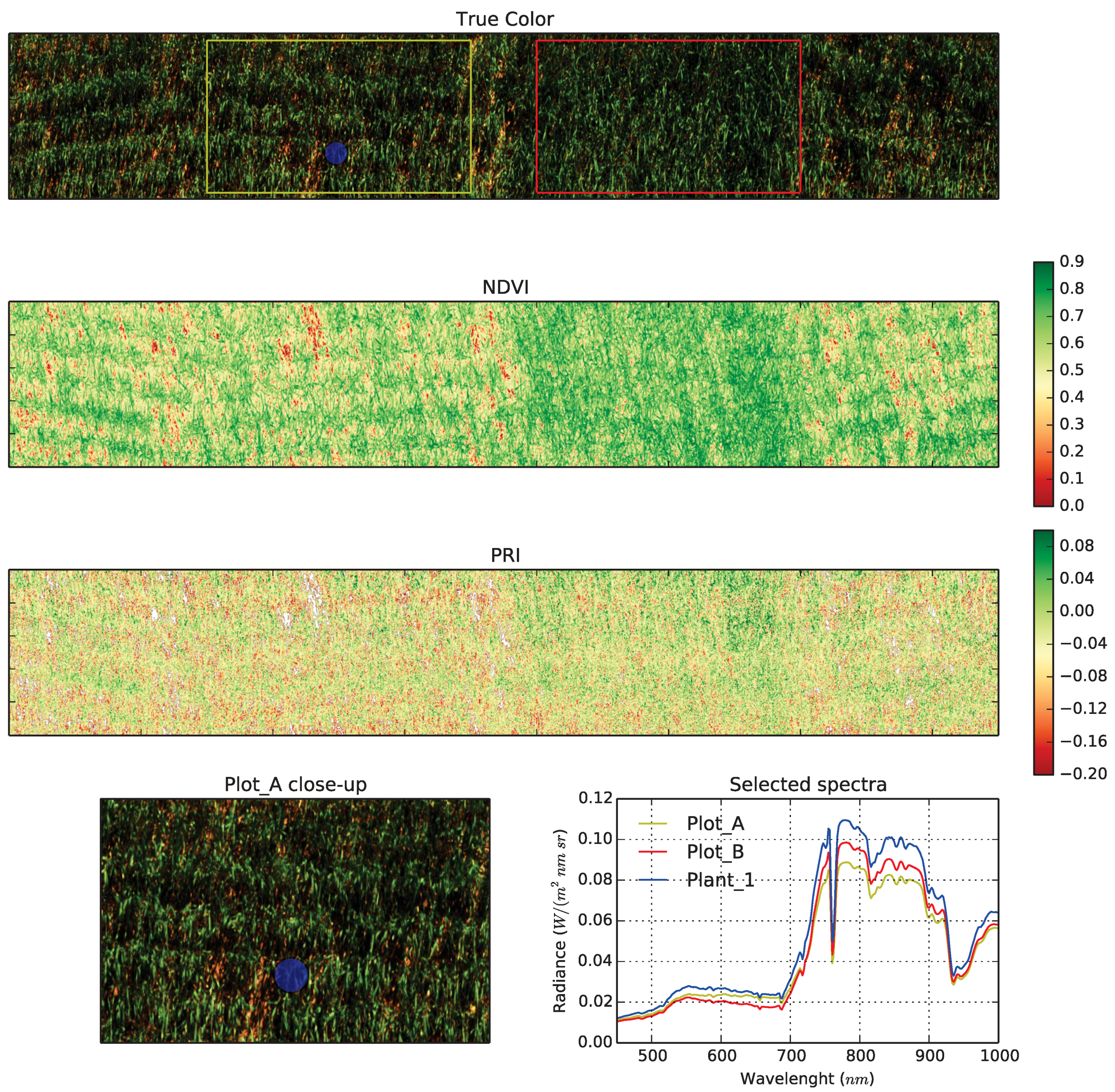

4.1.3. Hyperspectral Subsystem

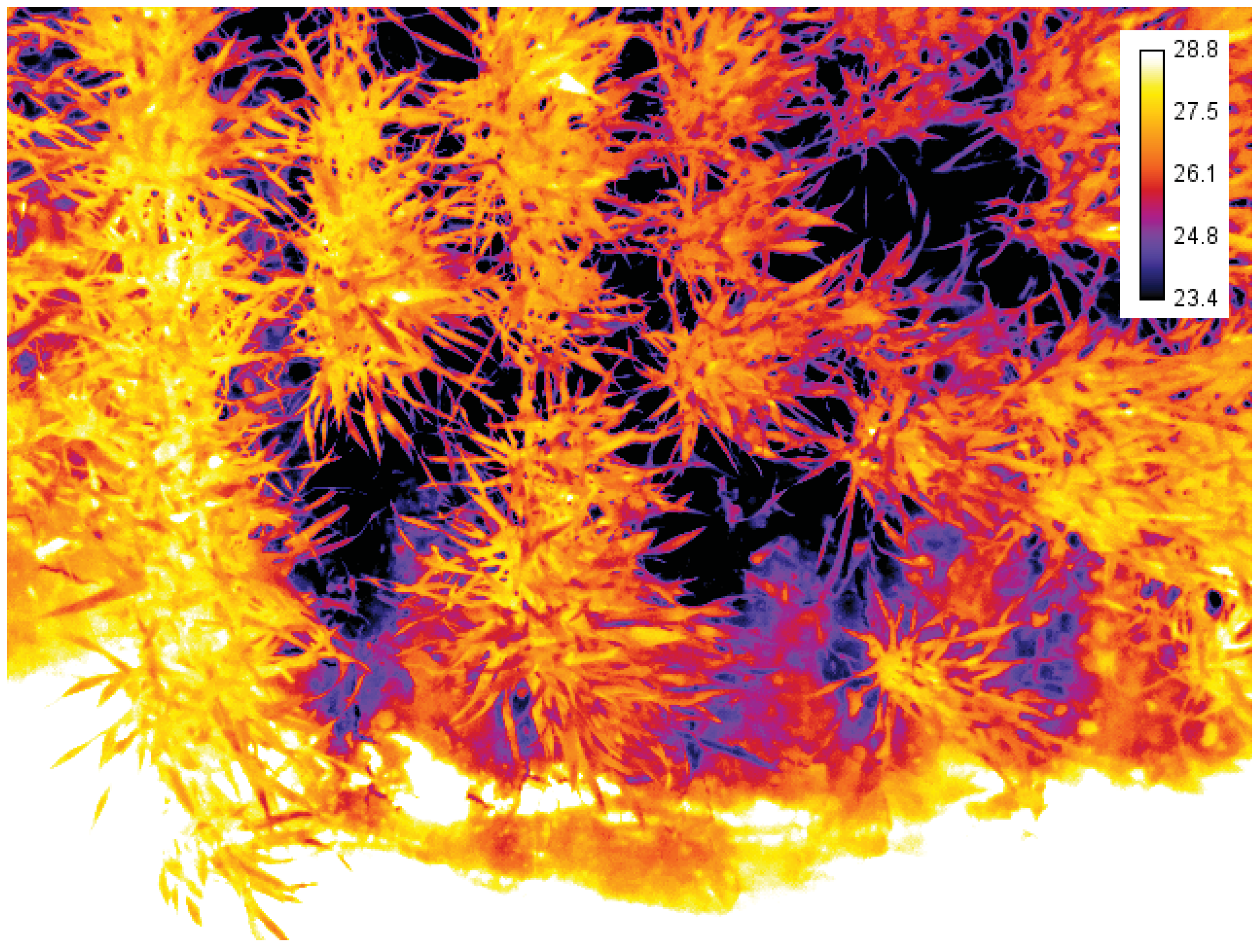

4.1.4. Thermal Infrared Camera

5. Conclusions

Acknowledgments

Author Contributions

Conflicts of Interest

References

- Bruinsma, J. The resource outlook to 2050. By how much do land, water use and crop yields need to increase by 2050? In Proceedings of the FAO Expert Meeting on How to Feed the World in 2050, 24–26 June 2009; FAO: Rome, Italy, 2009.

- Royal Society of London. Reaping the Benefits: Science and the Sustainable Intensification of Global Agriculture; Technical Report; Royal Society: London, UK, 2009.

- Tilman, D.; Balzer, C.; Hill, J.; Befort, B.L. Global food demand and the sustainable intensification of agriculture. Proc. Natl. Acad. Sci. USA 2011, 108, 20260–20264.

- Hall, A.; Wilson, M.A. Object-based analysis of grapevine canopy relationships with winegrape composition and yield in two contrasting vineyards using multitemporal high spatial resolution optical remote sensing. Int. J. Remote Sens. 2013, 34, 1772–1797.

- Ingvarsson, P.K.; Street, N.R. Association genetics of complex traits in plants. New Phytol. 2011, 189, 909–922.

- Rebetzke, G.; van Herwaarden, A.; Biddulph, B.; Moeller, C.; Richards, R.; Rattey, A.; Chenu, K. Field Experiments in Crop Physiology, 2013. Available online: http://prometheuswiki.publish.csiro.au/tiki-pagehistory.php?page=Field%20Experiments%20in%20Crop%20Physiology&preview=41 (accessed on 22 January 2014).

- Pask, A.; Pietragalla, J.; Mullan, D.; Reynolds, M. Physiological Breeding II: A Field Guide to Wheat Phenotyping; Technical Report; CIMMYT: Mexico, DF, Mexico, 2012.

- Tuberosa, R. Phenotyping for drought tolerance of crops in the genomics era. Front. Physiol. 2012, 3.

- Cobb, J.N.; DeClerck, G.; Greenberg, A.; Clark, R.; McCouch, S. Next-generation phenotyping: Requirements and strategies for enhancing our understanding of genotype-phenotype relationships and its relevance to crop improvement. Theor. Appl. Genet. 2013, 126, 867–887.

- Araus, J.L.; Cairns, J.E. Field high-throughput phenotyping: The new crop breeding frontier. Trends Plant Sci. 2014, 19, 52–61.

- White, J.W.; Andrade-Sanchez, P.; Gore, M.A.; Bronson, K.F.; Coffelt, T.A.; Conley, M.M.; Feldmann, K.A.; French, A.N.; Heun, J.T.; Hunsaker, D.J.; et al. Field-based phenomics for plant genetics research. Field Crops Res. 2012, 133, 101–112.

- Cabrera-Bosquet, L.; Crossa, J.; von Zitzewitz, J.; Serret, M.D.; Araus, J.L. High-throughput phenotyping and genomic selection: The frontiers of crop breeding converge. J. Integr. Plant Biol. 2012, 54, 312–320.

- Fussell, J.; Rundquist, D. On defining remote sensing. Photogramm. Eng. Remote Sens. 1986, 52, 1507–1511.

- Fiorani, F.; Schurr, U. Future scenarios for plant phenotyping. Annu. Rev. Plant Biol. 2013, 64, 267–291.

- Furbank, R.T.; Tester, M. Phenomics-technologies to relieve the phenotyping bottleneck. Trends Plant Sci. 2011, 16, 635–644.

- Walter, A.; Studer, B.; Kolliker, R. Advanced phenotyping offers opportunities for improved breeding of forage and turf species. Ann. Bot. 2012, 110, 1271–1279.

- Rebetzke, G.J.; Fischer, R.T.A.; van Herwaarden, A.F.; Bonnett, D.G.; Chenu, K.; Rattey, A.R.; Fettell, N.A. Plot size matters: Interference from intergenotypic competition in plant phenotyping studies. Funct. Plant Biol. 2013, 41, 107–118.

- Amani, I.; Fischer, R.A.; Reynolds, M.F.P. Canopy Temperature Depression Association with Yield of Irrigated Spring Wheat Cultivars in a Hot Climate. J. Agron. Crop Sci. 1996, 176, 119–129.

- Brennan, J.P.; Condon, A.G.; van Ginkel, M.; Reynolds, M.P. An economic assessment of the use of physiological selection for stomatal aperture-related traits in the CIMMYT wheat breeding programme. J. Agric. Sci. 2007, 145, 187–194.

- Condon, A.G.; Reynolds, M.P.; Rebetzke, G.J.; van Ginkel, M.; Richards, R.A.; Farquhar, G.D. Using stomatal aperture-related traits to select for high yield potential in bread wheat. Wheat Prod. Stressed Environ. 2007, 12, 617–624.

- Jones, H.G.; Serraj, R.; Loveys, B.R.; Xiong, L.; Wheaton, A.; Price, A.H. Thermal infrared imaging of crop canopies for the remote diagnosis and quantification of plant responses to water stress in the field. Funct. Plant Biol. 2009, 36, 978–989.

- Prashar, A.; Yildiz, J.; McNicol, J.W.; Bryan, G.J.; Jones, H.G. Infra-red thermography for high throughput field phenotyping in Solanum tuberosum. PLoS One 2013, 8, e65816.

- Anderson, K.; Gaston, K.J. Lightweight unmanned aerial vehicles will revolutionize spatial ecology. Front. Ecol. Environ. 2013, 11, 138–146.

- Chapman, S.C.; Merz, T.; Chan, A.; Jackway, P.; Hrabar, S.; Dreccer, M.F.; Holland, E.; Zheng, B.; Ling, T.J.; Jimenez-Berni, J. Pheno-Copter: A Low-Altitude, Autonomous Remote-Sensing Robotic Helicopter for High-Throughput Field-Based Phenotyping. Agronomy 2014, 4, 279–301.

- Labbé, S.; Lebourgeois, V.; Virlet, N.; Martínez, S.; Regnard, J.L. Contribution of airborne remote sensing to high-throughput phenotyping of a hybrid apple population in response to soil water constraints. In Proceedings of the 2nd International Plant Phenotyping Symposium, Jülich, Germany, 5–7 September 2011; International Plant Phenomics Network; pp. 185–191.

- Matese, A.; Primicerio, J.; di Gennaro, F.; Fiorillo, E.; Vaccari, F.P.; Genesio, L. Development and application of an autonomous and flexible unmanned aerial vehicle for precision viticulture. In Acta Horticulturae; Poni, S., Ed.; International Society for Horticultural Science (ISHS): Leuven, Belgium, 2013; pp. 63–69.

- Perry, E.M.; Brand, J.; Kant, S.; Fitzgerald, G.J. Field-based rapid phenotyping with Unmanned Aerial Vehicles (UAV). In Proceedings of 16th Agronomy Conference 2012, Armidale, NSW, Australia, 14–18 October 2012; Australian Society of Agronomy: Armidale, NSW, Australia, 2012.

- Zarco-Tejada, P.; Berni, J.; Suárez, L.; Sepulcre-Cantó, G.; Morales, F.; Miller, J. Imaging chlorophyll fluorescence with an airborne narrow-band multispectral camera for vegetation stress detection. Remote Sens. Environ. 2009, 113, 1262–1275.

- Zarco-Tejada, P.; González-Dugo, V.; Berni, J. Fluorescence, temperature and narrow-band indices acquired from a UAV platform for water stress detection using a micro-hyperspectral imager and a thermal camera. Remote Sens. Environ. 2012, 117, 322–337.

- Zarco-Tejada, P.; Guillén-Climent, M.; Hernández-Clemente, R.; Catalina, A.; González, M.; Martín, P. Estimating leaf carotenoid content in vineyards using high resolution hyperspectral imagery acquired from an unmanned aerial vehicle (UAV). Agric. For. Meteorol. 2013, 171–172, 281–294.

- LemnaTec GmbH. Scanalyzer Field—LemnaTec. Available online: http://www.lemnatec.com/product/scanalyzer-field (accessed on 28 January 2014).

- ETH Zurich. ETH—Crop Science—Field Phenotyping Platform (FIP). Available online: http://www.kp.ethz.ch/infrastructure/FIP (accessed on 28 January 2014).

- Romano, G.; Zia, S.; Spreer, W.; Sanchez, C.; Cairns, J.; Araus, J.L.; Müller, J. Use of thermography for high throughput phenotyping of tropical maize adaptation in water stress. Comput. Electron. Agric. 2011, 79, 67–74.

- White, J.W.; Conley, M.M. A Flexible, Low-Cost Cart for Proximal Sensing. Crop Sci. 2013, 53, 1646–1649.

- Ruckelshausen, A.; Biber, P.; Doma, M.; Gremmes, H.; Klose, R.; Linz, A.; Rahne, R.; Resch, R.; Thiel, M.; Trautz, D.; et al. BoniRob: An autonomous field robot platform for individual plant phenotyping. In Proceedings of the Joint International Agricultural Conference (2009), Wageningen, Netherlands, 6–8 July 2009; van Henten, E.J., Goense, D., Lokhorst, C., Eds.; Wageningen Agricultural Publishers: Wageningen, Netherlands, 2009; pp. 841–847.

- Jensen, K.H.; Nielsen, S.H.; Jørgensen, R.N.; Bøgild, A.; Jacobsen, N.J.; Jørgensen, O.J.; Jaeger-Hansen, C.H. A Low Cost, Modular Robotics Tool Carrier For Precision Agriculture Research. In Proceedings of the 11th International Conference on Precision Agriculture, Indianapolis, IN, USA, 15–18 July 2012. International Society of Precision Agriculture.

- Andrade-Sanchez, P.; Gore, M.A.F.; Heun, J.T.; Thorp, K.R.; Carmo-Silva, A.E.; French, A.N.; Salvucci, M.E.; White, J.W. Development and evaluation of a field-based high-throughput phenotyping platform. Funct. Plant Biol. 2014, 41, 68–79.

- Busemeyer, L.; Klose, R.; Linz, A.; Thiel, M.; Wunder, E.; Ruckelshausen, A. Agro-sensor systems for outdoor plant phenotyping in low and high density crop field plots. In Proceedings of the Landtechnik 2010—Partnerschaften für neue Innovationspotentiale, Düsseldorf, Germany, 27–28 October 2010; pp. 213–218.

- Busemeyer, L.; Mentrup, D.; Möller, K.; Wunder, E.; Alheit, K.; Hahn, V.; Maurer, H.P.; Reif, J.C.; Würschum, T.; Müller, J.; et al. BreedVision—A Multi-Sensor Platform for Non-Destructive Field-Based Phenotyping in Plant Breeding. Sensors 2013, 13, 2830–2847.

- Comar, A.; Burger, P.; de Solan, B.; Baret, F.; Daumard, F.; Hanocq, J.F. A semi-automatic system for high throughput phenotyping wheat cultivars in-field conditions: Description and first results. Funct. Plant Biol. 2012, 39, 914–924.

- Casadesús, J.; Kaya, Y.; Bort, J.; Nachit, M.M.; Araus, J.L.; Amor, S.; Ferrazzano, G.; Maalouf, F.; Maccaferri, M.; Martos, V.; et al. Using vegetation indices derived from conventional digital cameras as selection criteria for wheat breeding in water-limited environments. Ann. Appl. Biol. 2007, 150, 227–236.

- Jones, H.G.; Vaughan, R.A. Remote Sensing of Vegetation: Principles, Techniques, and Applications; Oxford University Press: Oxford, UK, 2010; p. 369.

- Lee, K.J.; Lee, B.W. Estimation of rice growth and nitrogen nutrition status using color digital camera image analysis. Eur. J. Agron. 2013, 48, 57–65.

- Liu, J.; Pattey, E. Retrieval of leaf area index from top-of-canopy digital photography over agricultural crops. Agric. For. Meteorol. 2010, 150, 1485–1490.

- Liu, L.; Peng, D.; Hu, Y.; Jiao, Q. A novel in situ FPAR measurement method for low canopy vegetation based on a digital camera and reference panel. Remote Sens. 2013, 5, 274–281.

- Baret, F.; de Solan, B.; Lopez-Lozano, R.; Ma, K.; Weiss, M. GAI estimates of row crops from downward looking digital photos taken perpendicular to rows at 57.5° zenith angle: Theoretical considerations based on 3D architecture models and application to wheat crops. Agric. For. Meteorol. 2010, 150, 1393–1401.

- Foucher, P.; Revollon, P.; Vigouroux, B.; Chassériaux, G. Morphological Image Analysis for the Detection of Water Stress in Potted Forsythia. Biosyst. Eng. 2004, 89, 131–138.

- Paproki, A.; Sirault, X.R.R.; Berry, S.; Furbank, R.T.; Fripp, J. A novel mesh processing based technique for 3D plant analysis. BMC Plant Biol. 2012, 12.

- Wang, H.; Zhang, W.; Zhou, G.; Yan, G.; Clinton, N. Image-based 3D corn reconstruction for retrieval of geometrical structural parameters. Int. J. Remote Sens. 2009, 30, 5505–5513.

- Huang, C.; Yang, W.; Duan, L.; Jiang, N.; Chen, G.; Xiong, L.; Liu, Q. Rice panicle length measuring system based on dual-camera imaging. Comput. Electron. Agric. 2013, 98, 158–165.

- Eitel, J.U.H.; Vierling, L.A.; Long, D.S.; Hunt, E.R. Early season remote sensing of wheat nitrogen status using a green scanning laser. Agric. For. Meteorol. 2011, 151, 1338–1345.

- Llorens, J.; Gil, E.; Llop, J.; Escolà, A. Ultrasonic and LIDAR sensors for electronic canopy characterization in vineyards: Advances to improve pesticide application methods. Sensors 2011, 11, 2177–2194.

- Sanz, R.; Rosell, J.; Llorens, J.; Gil, E.; Planas, S. Relationship between tree row LIDAR-volume and leaf area density for fruit orchards and vineyards obtained with a LIDAR 3D Dynamic Measurement System. Agric. For. Meteorol. 2013, 171–172, 153–162.

- Gebbers, R.; Ehlert, D.; Adamek, R. Rapid Mapping of the Leaf Area Index in Agricultural Crops. Agron. J. 2011, 103, 1532–1541.

- Hosoi, F.; Omasa, K. Estimating vertical plant area density profile and growth parameters of a wheat canopy at different growth stages using three-dimensional portable lidar imaging. ISPRS J. Photogramm. Remote Sens. 2009, 64, 151–158.

- Chéné, Y.; Rousseau, D.; Lucidarme, P.; Bertheloot, J.; Caffier, V.; Morel, P.; Belin, E.; Chapeau-Blondeau, F. On the use of depth camera for 3D phenotyping of entire plants. Comput. Electron. Agric. 2012, 82, 122–127.

- Klose, R.; Penlington, J.; Ruckelshausen, A. Usability of 3D time-of-flight cameras for automatic plant phenotyping. Bornimer Agrartechnische Berichte 2011, 69, 93–105.

- Azzari, G.; Goulden, M.L.; Rusu, R.B. Rapid characterization of vegetation structure with a Microsoft Kinect sensor. Sensors 2013, 13, 2384–2398.

- Aziz, S.A.; Steward, B.L.; Birrell, S.J.; Shrestha, D.S.; Kaspar, T.C. Ultrasonic Sensing for Corn Plant Canopy Characterization. Paper Number 041120. In Proceedings of the 2004 ASAE Annual Meeting, Ottawa, ON, Canada, 1–4 August 2004; American Society of Agricultural and Biological Engineers: St. Joseph, Michigan; pp. 1–11.

- Makeen, K.; Kerssen, S.; Mentrup, D.; Oeleman, B. Multiple Reflection Ultrasonic Sensor System for Morphological Plant Parameters. Bornimer Agrartech. Berichte 2012, 78, 110–116.

- Tucker, C.J. Red and photographic infrared linear combinations for monitoring vegetation. Remote Sens. Environ. 1979, 8, 127–150.

- Hilker, T.; Coops, N.C.; Coggins, S.B.; Wulder, M.A.; Brown, M.; Black, T.A.; Nesic, Z.; Lessard, D. Detection of foliage conditions and disturbance from multi-angular high spectral resolution remote sensing. Remote Sens. Environ. 2009, 113, 421–434.

- Zarco-Tejada, P.; Berjón, A.; López-Lozano, R.; Miller, J.; Martín, P.; Cachorro, V.; González, M.; de Frutos, A. Assessing vineyard condition with hyperspectral indices: Leaf and canopy reflectance simulation in a row-structured discontinuous canopy. Remote Sens. Environ. 2005, 99, 271–287.

- Zarco-Tejada, P.; Catalina, A.; González, M.; Martín, P. Relationships between net photosynthesis and steady-state chlorophyll fluorescence retrieved from airborne hyperspectral imagery. Remote Sens. Environ. 2013, 136, 247–258.

- Dreccer, M.F.; Barnes, L.R.; Meder, R. Quantitative dynamics of stem water soluble carbohydrates in wheat can be monitored in the field using hyperspectral reflectance. Field Crops Res. 2014, 159, 70–80.

- Darvishzadeh, R.; Skidmore, A.; Schlerf, M.; Atzberger, C.; Corsi, F.; Cho, M. LAI and chlorophyll estimation for a heterogeneous grassland using hyperspectral measurements. ISPRS J. Photogramm. Remote Sens. 2008, 63, 409–426.

- Serbin, S.P.; Dillaway, D.N.; Kruger, E.L.; Townsend, P.A. Leaf optical properties reflect variation in photosynthetic metabolism and its sensitivity to temperature. J. Exp. Bot. 2012, 63, 489–502.

- Zhao, K.; Valle, D.; Popescu, S.; Zhang, X.; Mallick, B. Hyperspectral remote sensing of plant biochemistry using Bayesian model averaging with variable and band selection. Remote Sens. Environ. 2013, 132, 102–119.

- Römer, C.; Wahabzada, M.; Ballvora, A.; Pinto, F.; Rossini, M.; Panigada, C.; Behmann, J.; Léon, J.; Thurau, C.; Bauckhage, C.; et al. Early drought stress detection in cereals: Simplex volume maximisation for hyperspectral image analysis. Funct. Plant Biol. 2012, 39, 878–890.

- Seiffert, U.; Bollenbeck, F.; Mock, H.P.; Matros, A. Clustering of crop phenotypes by means of hyperspectral signatures using artificial neural networks. In Proceedings of the 2nd Workshop Hyperspectral Image and Signal Processing: Evolution in Remote Sensing (WHISPERS), Reykjavik, Iceland, 14–16 June 2010; IEEE; pp. 1–4.

- Féret, J.B.; François, C.; Gitelson, A.; Asner, G.P.; Barry, K.M.; Panigada, C.; Richardson, A.D.; Jacquemoud, S. Optimizing spectral indices and chemometric analysis of leaf chemical properties using radiative transfer modeling. Remote Sens. Environ. 2011, 115, 2742–2750.

- Garrity, S.R.; Eitel, J.U.H.; Vierling, L.A. Disentangling the relationships between plant pigments and the photochemical reflectance index reveals a new approach for remote estimation of carotenoid content. Remote Sens. Environ. 2011, 115, 628–635.

- Serrano, L.; González-Flor, C.; Gorchs, G. Assessment of grape yield and composition using the reflectance based Water Index in Mediterranean rainfed vineyards. Remote Sens. Environ. 2012, 118, 249–258.

- Thiel, M.; Rath, T.; Ruckelshausen, A. Plant moisture measurement in field trials based on NIR spectral imaging: A feasibility study. In Proceedings of the CIGR Workshop on Image Analysis in Agriculture, Budapest, Hungary, 26–27 August 2010; Commission Internationale du Genie Rural: Budapest, Hungary; pp. 16–29.

- Yi, Q.X.; Bao, A.M.; Wang, Q.; Zhao, J. Estimation of leaf water content in cotton by means of hyperspectral indices. Comput. Electron. Agric. 2013, 90, 144–151.

- Gaulton, R.; Danson, F.M.; Ramirez, F.A.; Gunawan, O. The potential of dual-wavelength laser scanning for estimating vegetation moisture content. Remote Sens. Environ. 2013, 132, 32–39.

- Cheng, T.; Rivard, B.; Sánchez-Azofeifa, A. Spectroscopic determination of leaf water content using continuous wavelet analysis. Remote Sens. Environ. 2011, 115, 659–670.

- Ullah, S.; Schlerf, M.; Skidmore, A.K.; Hecker, C. Identifying plant species using mid-wave infrared (2.5–6 μm) and thermal infrared (8–14 μm) emissivity spectra. Remote Sens. Environ. 2012, 118, 95–102.

- Ullah, S.; Skidmore, A.K.; Groen, T.A.; Schlerf, M. Evaluation of three proposed indices for the retrieval of leaf water content from the mid-wave infrared (2–6 μm) spectra. Agric. For. Meteorol. 2013, 171–172, 65–71.

- De Bei, R.; Cozzolino, D.; Sullivan, W.; Cynkar, W.; Fuentes, S.; Dambergs, R.; Pech, J.; Tyerman, S. Non-destructive measurement of grapevine water potential using near infrared spectroscopy. Aust. J. Grape Wine Res. 2011, 17, 62–71.

- Elsayed, S.; Mistele, B.; Schmidhalter, U. Can changes in leaf water potential be assessed spectrally? Funct. Plant Biol. 2011, 38, 523–533.

- Jones, H.G. The use of indirect or proxy markers in plant physiology. Plant, Cell Environ. 2014, 37, 1270–1272.

- Gamon, J.A.; Peñuelas, J.; Field, C.B. A narrow-waveband spectral index that tracks diurnal changes in photosynthetic efficiency. Remote Sens. Environ. 1992, 41, 35–44.

- Kolber, Z.; Klimov, D.; Ananyev, G.; Rascher, U.; Berry, J.; Osmond, B. Measuring photosynthetic parameters at a distance: Laser induced fluorescence transient (LIFT) method for remote measurements of photosynthesis in terrestrial vegetation. Photosynth. Res. 2005, 84, 121–129.

- Perez-Priego, O.; Zarco-Tejada, P.; Miller, J.; Sepulcre-Canto, G.; Fereres, E. Detection of water stress in orchard trees with a high-resolution spectrometer through chlorophyll fluorescence In-Filling of the O2-A band. IEEE Trans. Geosci. Remote Sens. 2005, 43, 2860–2869.

- Guanter, L.; Alonso, L.; Gómez-Chova, L.; Amorós, J.; Vuila, J.; Moreno, J. A method for detection of solar-induced vegetation fluorescence from MERIS FR data. In Proceedings of the Envisat Symposium 2007, Montreux, Switzerland, 23–27 April 2007; ESA Communication Production Office: Montreux, Switzerland, 2007.

- Liu, L.; Zhang, Y.; Jiao, Q.; Peng, D. Assessing photosynthetic light-use efficiency using a solar-induced chlorophyll fluorescence and photochemical reflectance index. Int. J. Remote Sens. 2013, 34, 4264–4280.

- Meroni, M.; Rossini, M.; Guanter, L.; Alonso, L.; Rascher, U.; Colombo, R.; Moreno, J. Remote sensing of solar-induced chlorophyll fluorescence: Review of methods and applications. Remote Sens. Environ. 2009, 113, 2037–2051.

- Mirdita, V.; Reif, J.C.; Ibraliu, A.; Melchinger, A.E.; Montes, J.M. Laser-induced fluorescence of maize canopy to determine biomass and chlorophyll concentration at early stages of plant growth. Albanian J. Agric. Sci. 2011, 10, 1–7.

- Jones, H.G. Application of Thermal Imaging and Infrared Sensing in Plant Physiology and Ecophysiology. Adv. Bot. Res. 2004, 41, 107–163.

- Jiménez-Bello, M.; Ballester, C.; Castel, J.; Intrigliolo, D. Development and validation of an automatic thermal imaging process for assessing plant water status. Agric. Water Manag. 2011, 98, 1497–1504.

- Leinonen, I.; Jones, H.G. Combining thermal and visible imagery for estimating canopy temperature and identifying plant stress. J. Exp. Bot. 2004, 55, 1423–1431.

- Wang, X.; Yang, W.; Wheaton, A.; Cooley, N.; Moran, B. Automated canopy temperature estimation via infrared thermography: A first step towards automated plant water stress monitoring. Comput. Electron. Agric. 2010, 73, 74–83.

- Jones, H.G. Use of infrared thermography for monitoring stomatal closure in the field: Application to grapevine. J. Exp. Bot. 2002, 53, 2249–2260. [Google Scholar] [CrossRef] [PubMed]

- Wang, X.; Yang, W.; Wheaton, A.; Cooley, N.; Moran, B. Efficient registration of optical and IR images for automatic plant water stress assessment. Comput. Electron. Agric. 2010, 74, 230–237.

- Leinonen, I.; Grant, O.M.; Tagliavia, C.P.P.; Chaves, M.M.; Jones, H.G. Estimating stomatal conductance with thermal imagery. Plant, Cell Environ. 2006, 29, 1508–1518.

- Rebetzke, G.J.; Rattey, A.R.; Farquhar, G.D.; Richards, R.A.; Condon, A.T.G. Genomic regions for canopy temperature and their genetic association with stomatal conductance and grain yield in wheat. Funct. Plant Biol. 2013, 40, 14–33.

- Saint Pierre, C.; Crossa, J.; Manes, Y.; Reynolds, M.P. Gene action of canopy temperature in bread wheat under diverse environments. Theor. Appl. Genet. 2010, 120, 1107–1117.

- Romano, G.; Zia, S.; Spreer, W.; Cairns, J.; Araus, J.L.; Müller, J. Rapid phenotyping of different maize varieties under drought stress by using thermal images. In Proceedings of the CIGR International Symposium on Sustainable Bioproduction—Water, Energy and Food, Tokyo, Japan, 19–23 September 2011; CIGR (Commission Internationale du Genie Rural); p. 22B02.

- Ballester, C.; Jiménez-Bello, M.; Castel, J.; Intrigliolo, D. Usefulness of thermography for plant water stress detection in citrus and persimmon trees. Agric. For. Meteorol. 2013, 168, 120–129.

- Winterhalter, L.; Mistele, B.; Jampatong, S.; Schmidhalter, U. High throughput phenotyping of canopy water mass and canopy temperature in well-watered and drought stressed tropical maize hybrids in the vegetative stage. Eur. J. Agron. 2011, 35, 22–32.

- André, F.; van Leeuwen, C.; Saussez, S.; van Durmen, R.; Bogaert, P.; Moghadas, D.; de Rességuier, L.; Delvaux, B.; Vereecken, H.; Lambot, S. High-resolution imaging of a vineyard in south of France using ground-penetrating radar, electromagnetic induction and electrical resistivity tomography. J. Appl. Geophys. 2012, 78, 113–122.

- Noh, H.; Zhang, Q. Shadow effect on multi-spectral image for detection of nitrogen deficiency in corn. Comput. Electron. Agric. 2012, 83, 52–57.

- Suárez, L.; Zarco-Tejada, P.; Berni, J.; González-Dugo, V.; Fereres, E. Modelling PRI for water stress detection using radiative transfer models. Remote Sens. Environ. 2009, 113, 730–744.

- Malenovský, Z.; Mishra, K.B.; Zemek, F.; Rascher, U.; Nedbal, L. Scientific and technical challenges in remote sensing of plant canopy reflectance and fluorescence. J. Exp. Bot. 2009, 60, 2987–3004.

- Passioura, J.B. Grain Yield, Harvest Index, and Water Use of Wheat. J. Aust. Inst. Agric. Sci. 1977, 43, 117–120.

- Monteith, J.L. Climate and the Efficiency of Crop Production in Britain. Philos. Trans. R. Soc. Lond. B Biol. Sci. 1977, 281, 277–294.

- Reynolds, M.; Tuberosa, R. Translational research impacting on crop productivity in drought-prone environments. Curr. Opin. Plant Biol. 2008, 11, 171–179.

- Richards, R.A.; Rebetzke, G.J.; Watt, M.; Condon, A.G.T.; Spielmeyer, W.; Dolferus, R. Breeding for improved water productivity in temperate cereals: phenotyping, quantitative trait loci, markers and the selection environment. Funct. Plant Biol. 2010, 37, 85–97.

- Rebetzke, G.J.; Chenu, K.; Biddulph, B.; Moeller, C.; Deery, D.M.; Rattey, A.R.; Bennett, D.; Barrett-Lennard, E.G.; Mayer, J.E. A multisite managed environment facility for targeted trait and germplasm phenotyping. Funct. Plant Biol. 2013, 40, 1–13.

- Sinclair, T.R.; Muchow, R.C. Radiation Use Efficiency. In Advances in Agronomy; Sparks, D.L., Ed.; Elsevier: Philadelphia, PA, USA, 1999; Volume 65, pp. 215–265.

- Gastellu-Etchegorry, J.P.; Demarez, V.; Pinel, V.; Zagolski, F. Modeling radiative transfer in heterogeneous 3-D vegetation canopies. Remote Sens. Environ. 1996, 58, 131–156.

- Gastellu-Etchegorry, J.P.; Martin, E.; Gascon, F. DART: A 3D model for simulating satellite images and studying surface radiation budget. Int. J. Remote Sens. 2004, 25, 73–96.

- Brown, D.C. Close-range camera calibration. Photogramm. Eng. 1971, 37, 855–866.

- Fryer, J.G.; Brown, D.C. Lens Distortion for Close-Range Photogrammetry. Photogramm. Eng. Remote Sens. 1986, 52, 51–58.

- Trucco, E.; Verri, A. Introductory Techniques for 3-D Computer Vision; Prentice Hall PTR: Upper Saddle River, NJ, USA, 1998.

- Sun, C. Fast Stereo Matching Using Rectangular Subregioning and 3D Maximum-Surface Techniques. Int. J. Comput. Vis. 2002, 47, 99–117.

- Hartley, R.I.; Zisserman, A. Multiple View Geometry in Computer Vision, 2nd ed.; Cambridge University Press: Cambridge, United Kingdom, 2004; ISBN 0521540518.

- Asner, G.P.; Martin, R.E. Airborne spectranomics: Mapping canopy chemical and taxonomic diversity in tropical forests. Front. Ecol. Environ. 2009, 7, 269–276.

© 2014 by the authors; licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution license (http://creativecommons.org/licenses/by/3.0/).

Deery, D.; Jimenez-Berni, J.; Jones, H.; Sirault, X.; Furbank, R. Proximal Remote Sensing Buggies and Potential Applications for Field-Based Phenotyping. Agronomy 2014, 4, 349-379. https://doi.org/10.3390/agronomy4030349