1. Introduction

Tomatoes are one of the most widely grown greenhouse vegetables in the world [1]. Although the temperature and humidity in greenhouses can be controlled, it is difficult to prevent tomatoes from contracting diseases, and some diseases are even more likely to occur in this environment [2]. If these diseases are detected and treated at an early stage, losses can be effectively reduced. However, if they are detected too late, the diseased tomatoes and their surrounding parts may need to be abandoned, resulting in significant yield losses. Accordingly, tomatoes need to be monitored daily to detect diseases as early as possible. However, inspecting each plant visually is time-consuming and inefficient, so monitoring all the tomatoes on a daily basis is impractical. Therefore, efficiently monitoring tomatoes to detect diseases in a timely manner has emerged as an important issue. With advances in robotics and deep learning, the use of robots for automatic tomato disease monitoring has become a viable solution. Specifically, there are two reasons for this:

First, the efficiency and accuracy of computer vision tasks have been significantly improved by the advent of deep learning. Deep neural networks (DNNs) can extract representations with high abstraction levels and strong representational properties. They construct a representation of the input data through extensive learning and generalize well. Some networks have outperformed humans [3]. Recent studies have shown that deep learning techniques can be used to detect tomatoes and their diseases. As a representative of one-stage detection models, “You Only Look Once” (YOLO) [4], which treats object detection as a regression problem and outputs the object position and category from an end-to-end network, has been used for tomato disease detection in many studies. For example, Wang et al. [5] developed an improved YOLOv3 model to detect tomato anomalies. Similar works using the YOLO series for tomato detection or tomato disease detection are reported in [6,7]. In addition to the YOLO series, Faster R-CNN [8], a representative of two-stage object detection models, has higher accuracy than the one-stage object detection models of the same period, although its inference speed is slower. Unlike a one-stage network, it uses a region proposal network (RPN) to generate region proposals in the first stage, while the location and class of the object are obtained in the second stage. Natarajan et al. [9] used Faster R-CNN to detect four kinds of diseases in tomatoes. Ref. [10] also conducted a similar study. Ref. [11] reported that Faster R-CNN can detect ten kinds of diseases associated with tomato fruits. In addition to detecting tomatoes or tomato diseases in images, many studies directly classify images to discern the type of tomato or disease. These methods employ classification networks, which extract image features and predict the category to which the input image belongs. For example, Zaki et al. [12] fine-tuned MobileNetv2 to detect three tomato diseases. The MobileNet family [13,14,15] consists of lightweight networks developed specifically for mobile devices, which offer high classification accuracy with low resource consumption. Another well-known network is ResNet [16], which introduced the residual block that allows extremely deep networks to be constructed. An improved ResNet created by Jiang et al. [17] can identify spot blight, late blight, and yellow leaf curl on tomato leaves. Furthermore, Lu et al. [18] classified tomato species using DenseNet201. DenseNet [19] densely connects all the previous layers to the later ones to reuse the features, thus allowing extremely deep networks to be constructed. Similar studies using classification networks have been conducted in [20,21,22]. In short, disease detection can be automated if image data of the target tomatoes can be obtained.

Second, the collection of tomato images can be automated using robotics. Using mobile robots to collect image data is simpler and easier to maintain than retrofitting greenhouses. In contrast to the method that involves the installation of additional tracks to add cameras to monitor target crops proposed in [

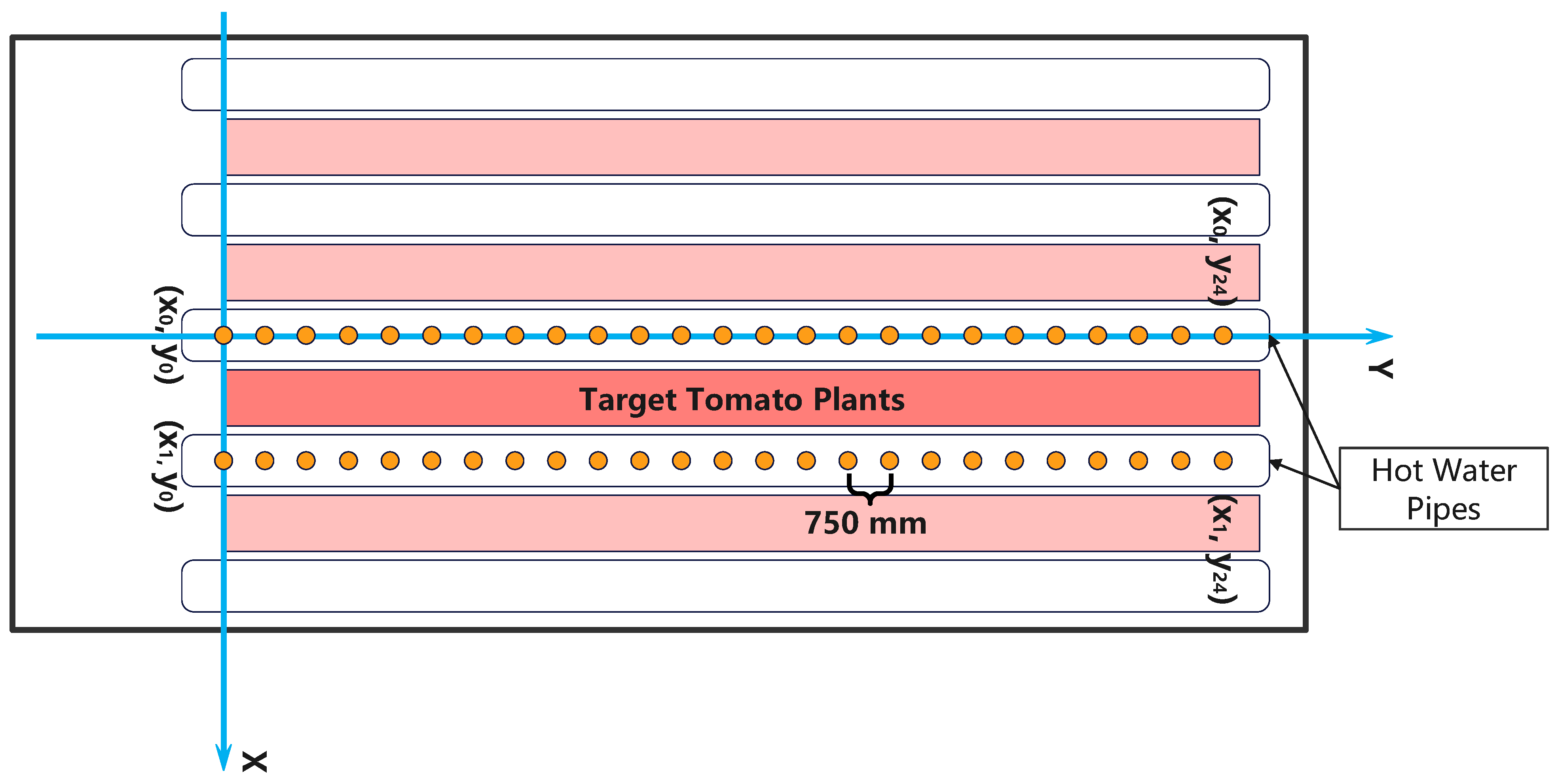

23], a robot does not require any changes to the existing environment. The greenhouse environment is also well-suited for robots. Crops are planted in rows in a regular pattern and are usually separated by straight aisles or hot water pipes. This implies that the robot can run automatically between the two rows of crops with a simple linear motion strategy and obtain images or video data of the crops through installed cameras. For tomato plants, in [

24], Zu et al. developed a mobile robot equipped with a 4G wireless camera to collect image data of green tomatoes. Seo et al. [

25] developed a tomato monitoring robot running on hot water pipes. The use of a mobile robot to photograph and count tomatoes has also been reported [

26]. In addition, Wspanialy et al. [

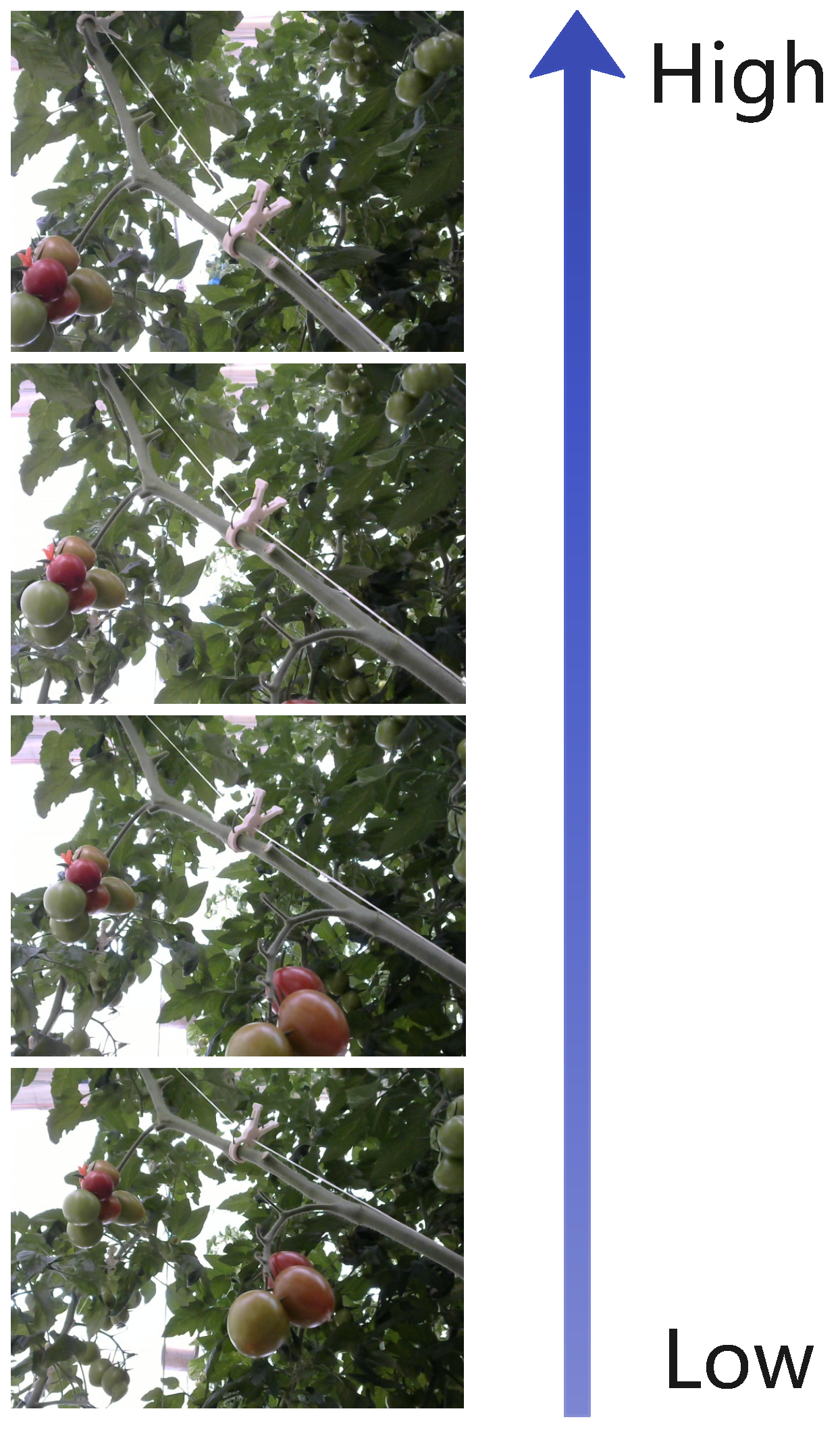

27] developed a mobile platform loaded with a linear actuator device to photograph the leaves of tomatoes. This device allows the camera to be moved to different vertical positions to accommodate different plant heights. A similar design is also reflected in the robot used in [

28]. The robot used by Fonteijn et al. [

29] in their study can raise multiple cameras to cover most of the tomatoes.

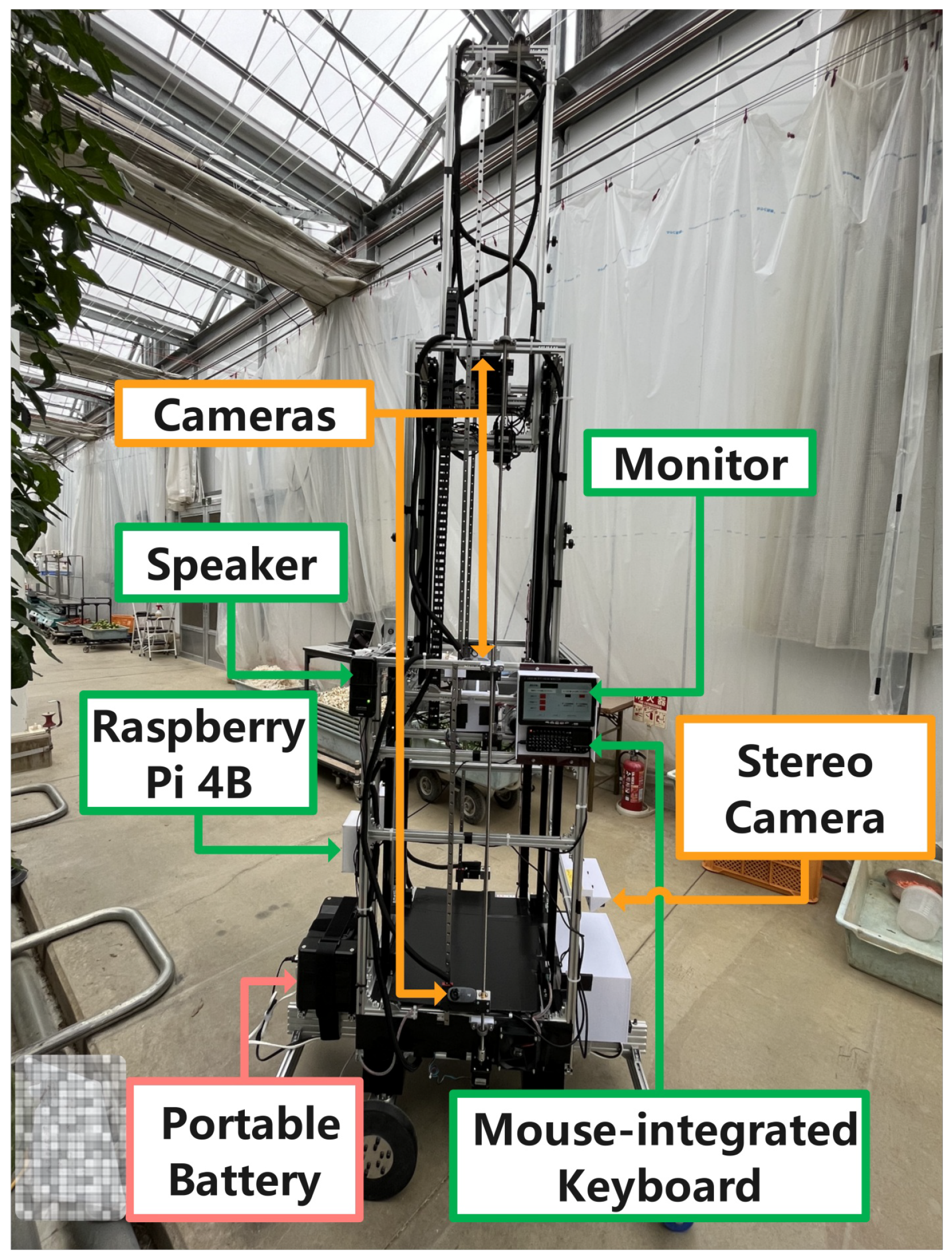

This study aims to create an automatic tomato disease monitoring system that works in a greenhouse to detect diseases early. The system uses a modular, extendable mobile robot that we developed to collect image data of tomatoes automatically. The robot can be modularly configured and extended according to the height of the tomato plants, which allows free control of the monitoring range and cost. Then, a two-level disease detection model is used to detect tomato diseases from the collected image data. Ultimately, the user can dispose of the diseased tomatoes according to the locations indicated by the system to reduce yield losses.

It should be noted that the robot we developed has been described in [30], which covers the robot’s design and mechanisms and the results of data collection tests. This paper briefly introduces the robot and adds key details that have not yet been disclosed. In addition, the function of the robot in the system is described. It is our hope that these details will serve as a reference for researchers who aim to rapidly develop a prototype robot or system that can be operated in greenhouses.

4. Discussion

4.1. Contribution to Automatic Disease Monitoring in Greenhouses

Tomato farming has a very large market size, but few studies have addressed the automatic monitoring of tomato diseases in greenhouses. Moreover, since tomato plants in greenhouses are often trained to grow high to make the best use of space, robots that can cover most of the tomatoes for monitoring are not only costly but also scarce. This paper introduces an automatic tomato disease monitoring system that uses a low-cost, modular, nestable mobile robot we developed for greenhouses to overcome the above-mentioned issues. The system automatically monitors tomatoes in the greenhouse for diseases with minimal human assistance and notifies users of the locations of diseased tomatoes in a timely manner. The nestable design of the robot allows it to be configured and extended according to the height of the tomato plants, such that users can choose how many nestable modules to use and thus control costs according to their specific needs. In addition, the server used in the system does not require high performance, as the selected networks can run on lower-performance graphics cards, which further reduces costs.

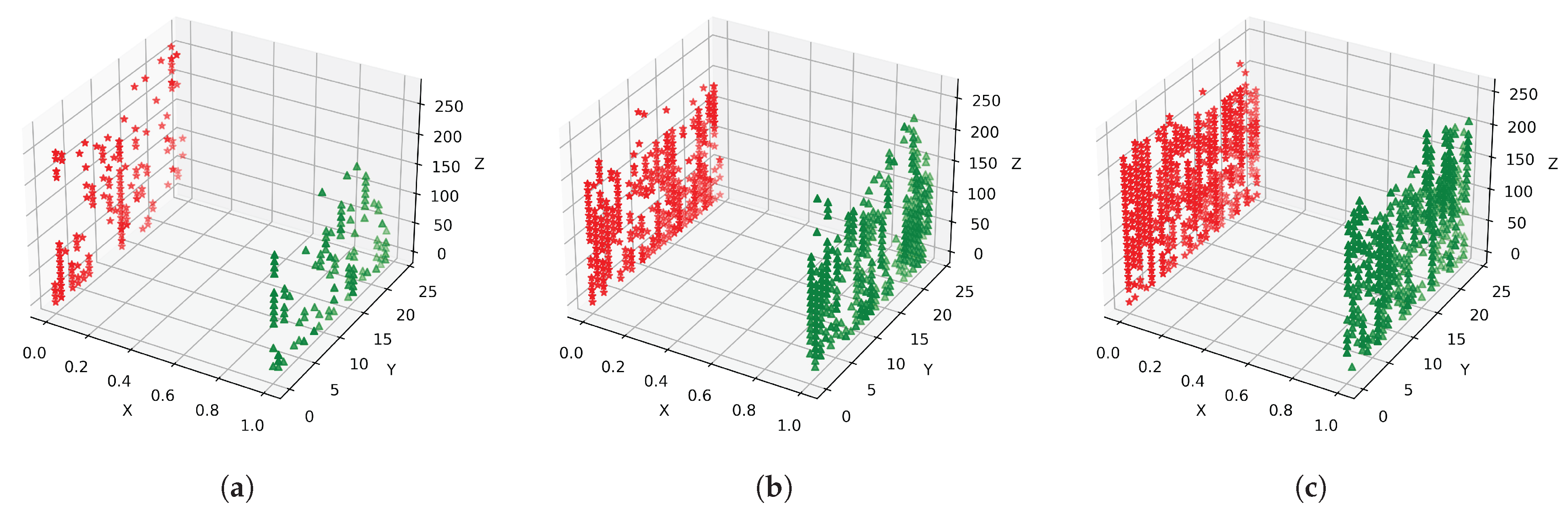

The results of the proposed system conform to the actual situation. For example, there were few predicted results on April 10, as shown in Figure 15, because our tomatoes started cultivation approximately at the end of March. The number was higher on May 12 and June 10 because that was the high-incidence period. In summary, this study shows that it is feasible, at least initially, to use a configurable mobile robot for automatically monitoring tomato diseases in a greenhouse.

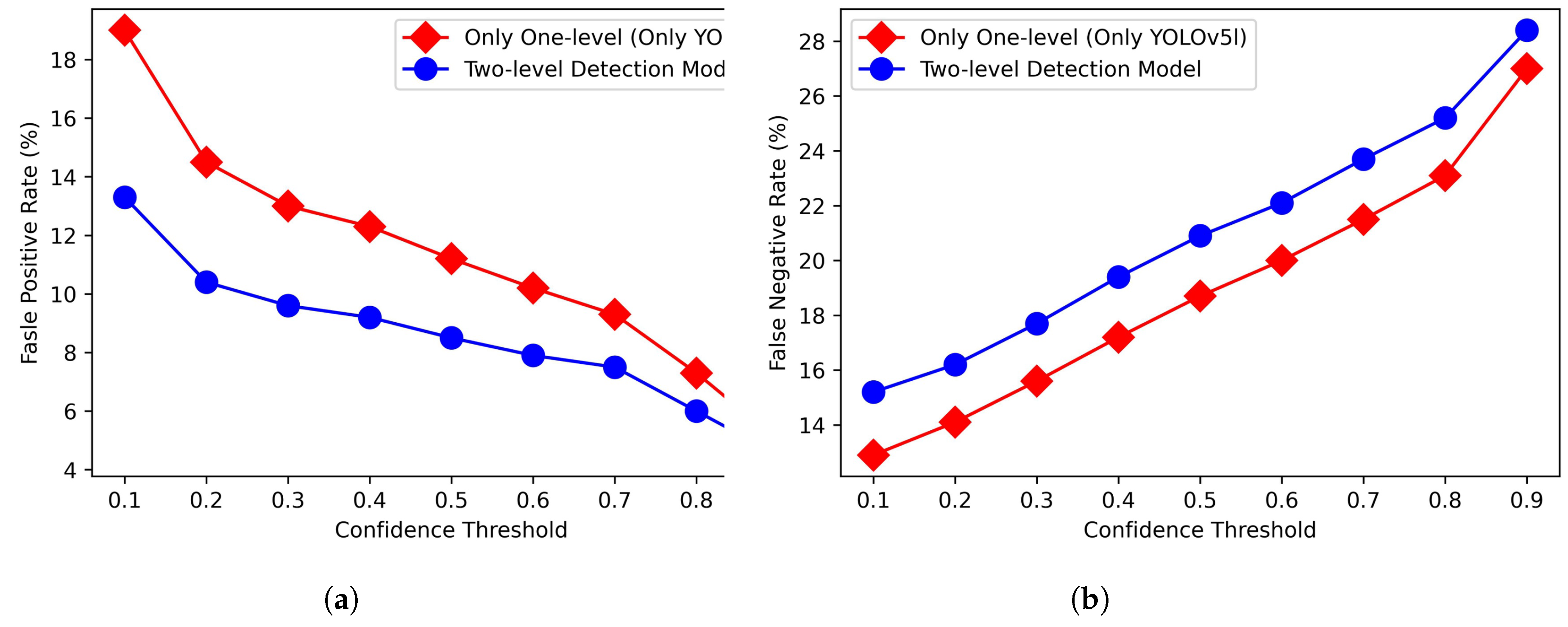

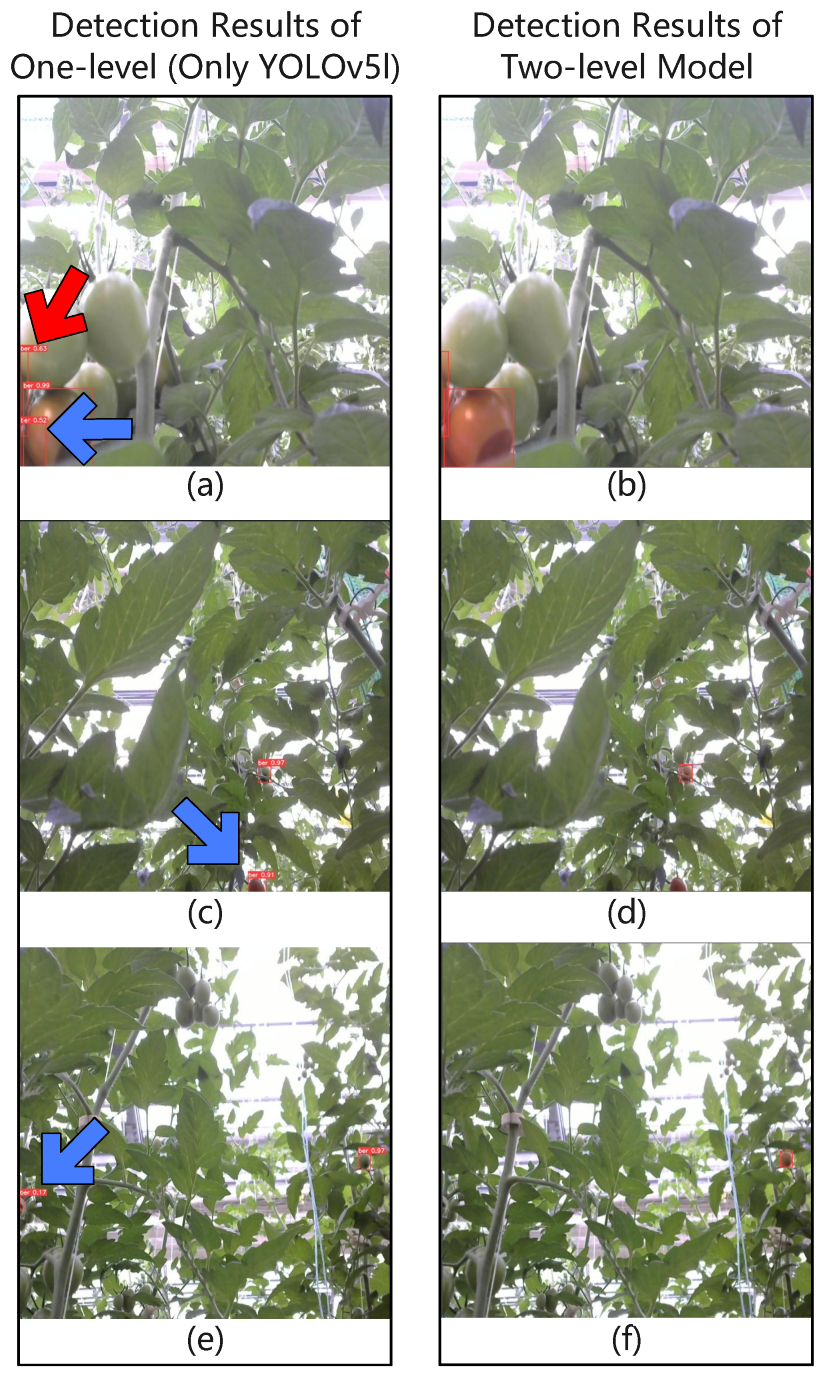

4.2. Contribution to Reduction of False Positive Rate

False positives and false negatives exist in any object detection network. To address false positives, we propose a two-level disease detection model that reduces them by further classifying the outputs of the object detection network. In an experimental comparison across different confidence thresholds, the average false positive rate of the proposed two-level disease detection model is 2.8% lower, and its average false negative rate is 2.1% higher, than when only the object detection network is used. This is an acceptable result because fewer false positives save users considerable work time and improve productivity.
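The two-level filtering described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the implementation used in this study: the `Detection` type and the `detect` and `classify_crop` callables are hypothetical stand-ins for the object detection network and the classification network, and the default threshold of 0.1 simply mirrors the confidence threshold reported in the Conclusions.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence, Tuple

Box = Tuple[int, int, int, int]  # (x1, y1, x2, y2) in pixels


@dataclass
class Detection:
    box: Box
    confidence: float  # score assigned by the object detection network


def two_level_detect(
    image: object,
    detect: Callable[[object], Sequence[Detection]],  # hypothetical level-1 network
    classify_crop: Callable[[object, Box], bool],     # hypothetical level-2 network
    conf_threshold: float = 0.1,
) -> List[Detection]:
    """Keep only the detections that the classifier also labels as diseased."""
    kept: List[Detection] = []
    for det in detect(image):
        # Level 1: discard candidates below the detector's confidence threshold.
        if det.confidence < conf_threshold:
            continue
        # Level 2: the classifier re-examines the region inside the box.
        if classify_crop(image, det.box):
            kept.append(det)
    return kept
```

Because the second level can only remove candidates, it cannot add false positives, but any true detection it rejects becomes a false negative. This is consistent with the trade-off reported above.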

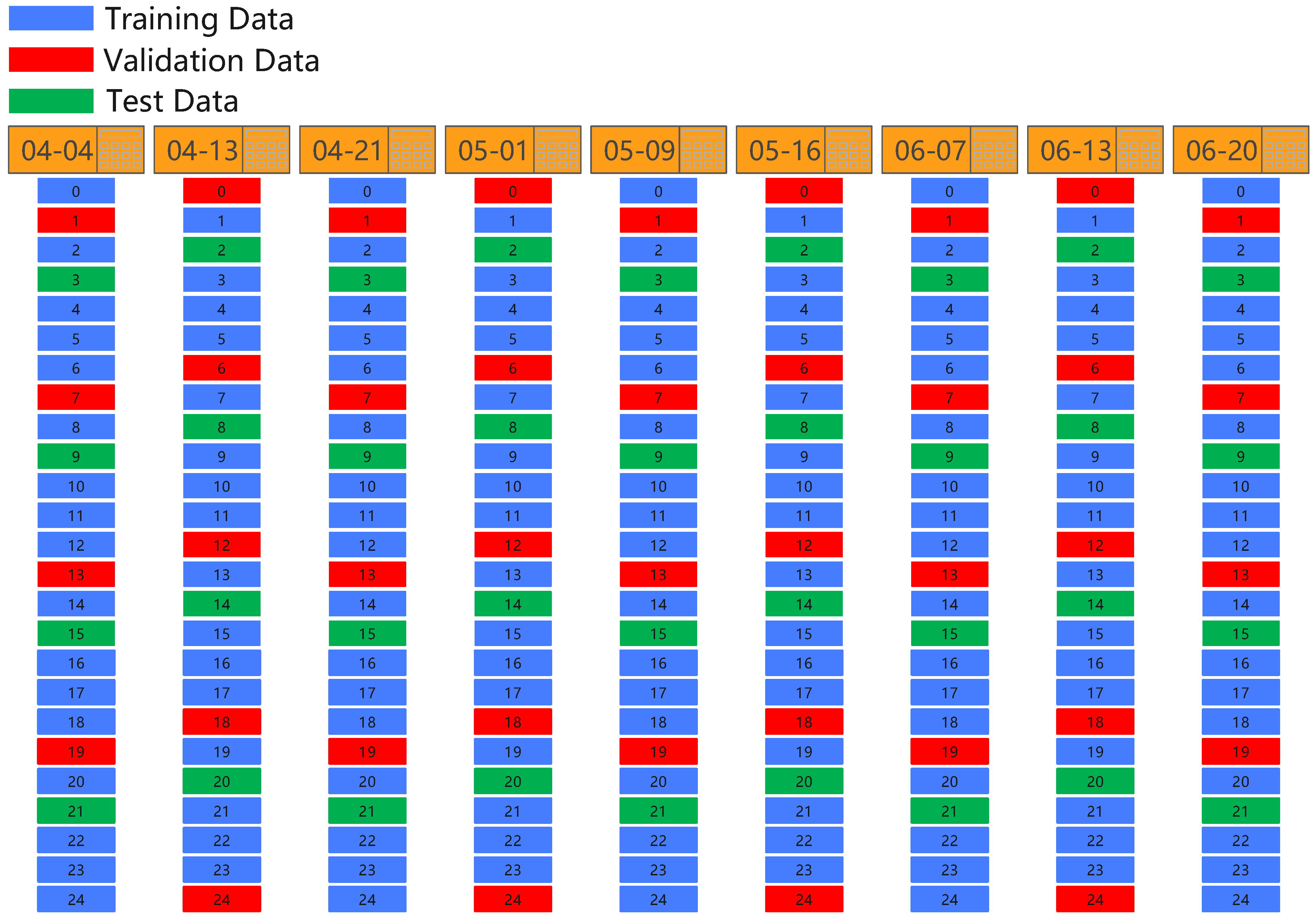

4.3. Comparison of Divisions and Networks

Three methods of dividing datasets were compared: random proportional division, division by dates, and division by locations. Experiments with different object detection networks show that YOLOv5l achieves the best results on the first division (random proportional division).

None of the networks’ mAP@0.5 values exceeded 80% on the data divided by dates. We speculate that the tomatoes with BER in the image data collected on different dates differed in the degree of lesions, which leads to poor generalization performance of the networks. Intuitively, the interlaced data should be sufficient for the networks to learn the features of diseased tomatoes from all locations. However, the networks performed much worse on the dataset divided by locations than on the dataset divided randomly and proportionally. One possible reason is that the date interval is excessively long: since users adjust or cultivate the tomatoes from time to time, the networks are prevented from learning the features of diseased tomatoes from all locations.
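The three division strategies compared in this section can be illustrated with a short sketch. It rests on assumptions that are not taken from this study: the `Sample` record, its `date` and `location` fields, the 0.8 training ratio, and the choice to hold out whole dates or whole locations for testing are all hypothetical.

```python
import random
from dataclasses import dataclass
from typing import Dict, List, Sequence, Set


@dataclass(frozen=True)
class Sample:
    path: str
    date: str      # capture date label (hypothetical format)
    location: str  # where in the greenhouse the image was taken (hypothetical)


def split_random(
    samples: Sequence[Sample], train_ratio: float = 0.8, seed: int = 0
) -> Dict[str, List[Sample]]:
    """Random proportional division: shuffle, then cut at the given ratio."""
    shuffled = list(samples)
    random.Random(seed).shuffle(shuffled)
    cut = int(len(shuffled) * train_ratio)
    return {"train": shuffled[:cut], "test": shuffled[cut:]}


def split_by_key(
    samples: Sequence[Sample], key: str, test_values: Set[str]
) -> Dict[str, List[Sample]]:
    """Division by dates or locations: hold out every sample whose `key`
    attribute ("date" or "location") is in `test_values`."""
    train = [s for s in samples if getattr(s, key) not in test_values]
    test = [s for s in samples if getattr(s, key) in test_values]
    return {"train": train, "test": test}
```

With a random division, images from the same date and location can appear in both the training and test sets, whereas a division by date or location tests the networks on conditions they have not seen. This may partly explain the gap in the results discussed above.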

Additionally, for the current data, “the bigger, the better” does not apply to classification networks. The selected MobileNetv2 has higher accuracy than ResNet50 (see Table 7) and is smaller in size.

4.4. Limits and Future Work

Notably, there are certain limitations to this study.

Firstly, since the current task only requires automatic running on the hot water pipes, our robot omits encoders and LiDAR sensors so that a prototype could be developed quickly. However, this limits the development of the robot toward full automation, and it is also inconvenient to remotely control the robot to move onto the hot water pipes. In addition, although a human visual inspection area is set, a system that does not require human intervention is desired.

Secondly, in this system, only tomatoes with BER are detected from the collected images, and no experiments are performed for other diseases. Hence, the system’s efficiency in monitoring multiple diseases could not be confirmed.

Therefore, as future work, we plan to add equipment to fully automate the tomato disease monitoring system and make it more efficient. For the two-level disease detection model, improvements to the networks can be considered in addition to increasing the training data. In addition to BER, we plan to monitor other tomato diseases to verify the system’s generality.

5. Conclusions

This paper presents an automatic tomato disease monitoring system for greenhouses. This system uses a modular, extendable mobile robot to collect images of tomatoes in the greenhouse. The design of the robot we developed allows it to be configured and extended to match the height of the tomato plant. A server is used to receive image data and detect the diseased tomatoes from the images through a two-level disease detection model consisting of an object detection network and a classification network. Ultimately, the user can effectively locate the diseased tomatoes and treat them in time.

The experiments in this paper focused on BER in tomatoes. To determine the optimal division of the dataset for training the object detection networks, the labeled image data were divided in three ways: random proportional division, division by dates, and division by locations of the images. Several object detection networks were trained and compared, and the results showed that YOLOv5l obtained the best performance on the randomly divided dataset. The dataset produced using YOLOv5l was used to train multiple classification networks, and the results showed that MobileNetv2 has the best balance of accuracy and size. Ultimately, YOLOv5l and MobileNetv2 were deployed in the two-level disease detection model. When the confidence threshold of YOLOv5l was set to 0.1, its false positive and false negative rates were 19.0% and 12.9%, respectively, compared to 13.3% and 15.2% for the two-level model. This means that the system using the two-level disease detection model can report the locations of diseased tomatoes to the user with a lower false positive rate, thus reducing the time wasted on false positives and increasing the user’s work efficiency.

Future research will focus on achieving full automation. In addition to BER, monitoring other tomato diseases to reduce yield losses is also planned.