Developments in Landsat Land Cover Classification Methods: A Review

Abstract

:1. Introduction

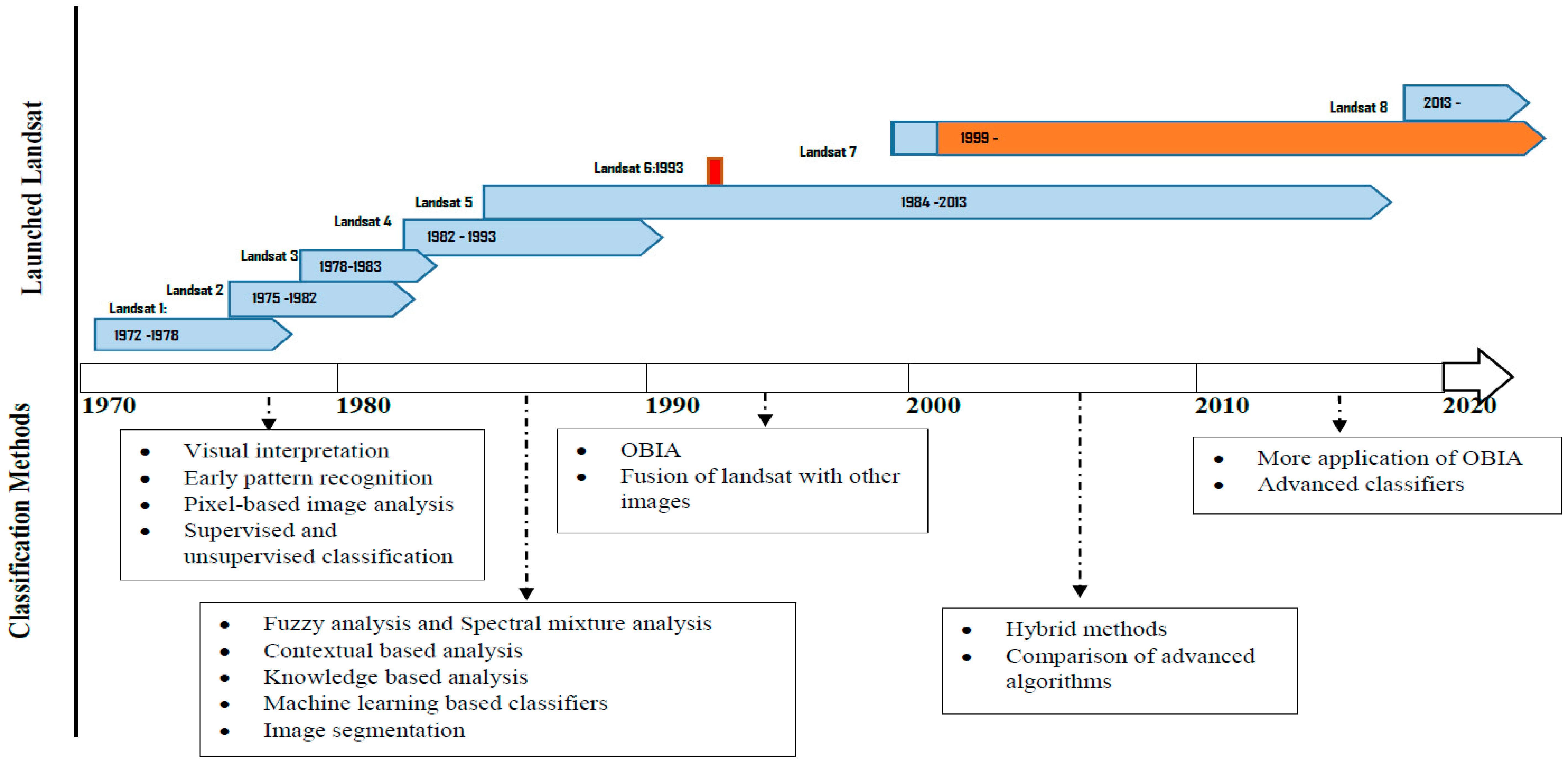

2. Developments of Landsat Data

3. Landsat Land Cover Classification Methods

3.1. Early Landsat Land Cover Classification: Visual Approach

3.2. Landsat Land Cover Classification Using Digital Format

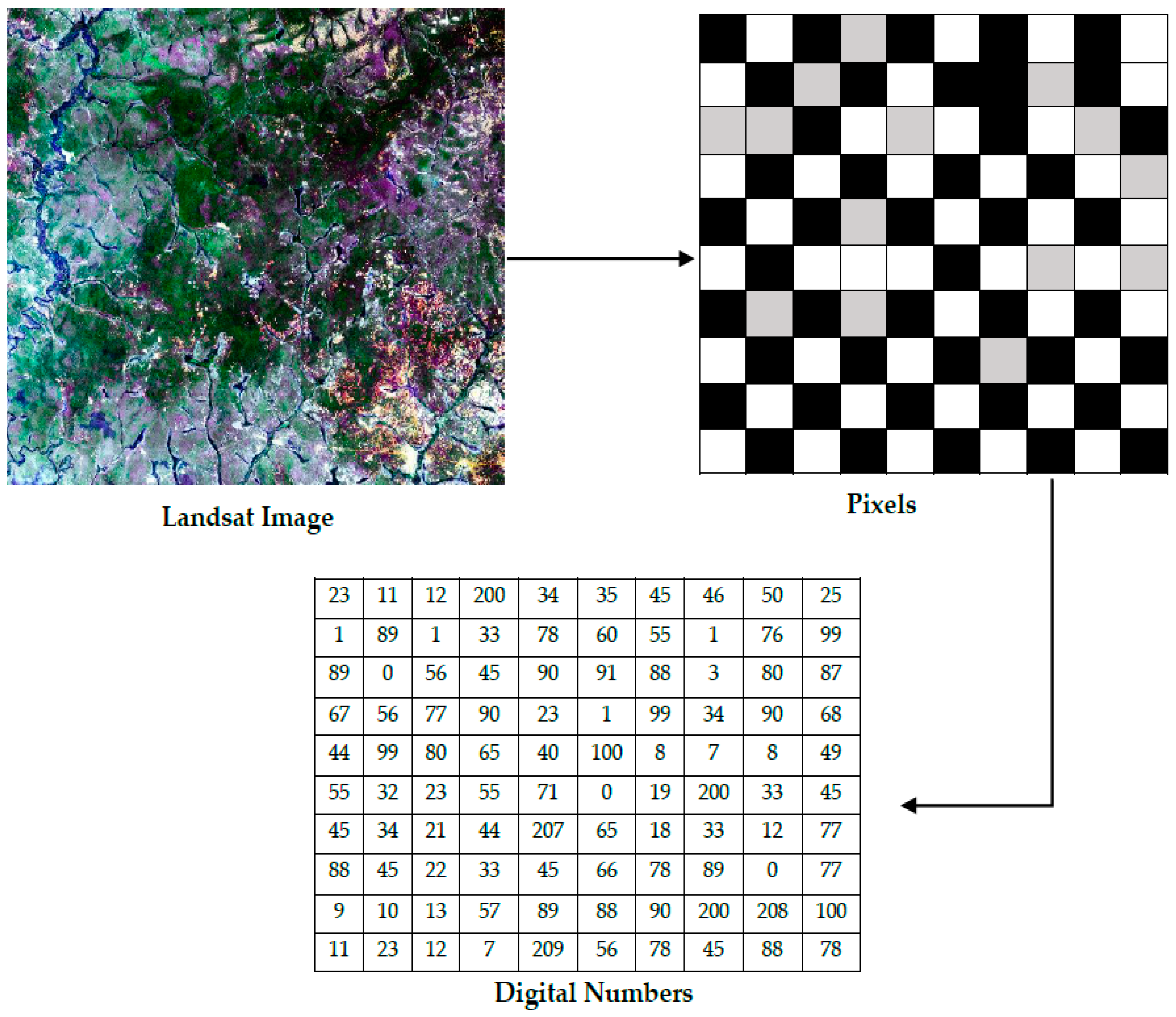

3.2.1. Digital Numbers

3.2.2. Early Landsat Digital Land Covers Classification Principles

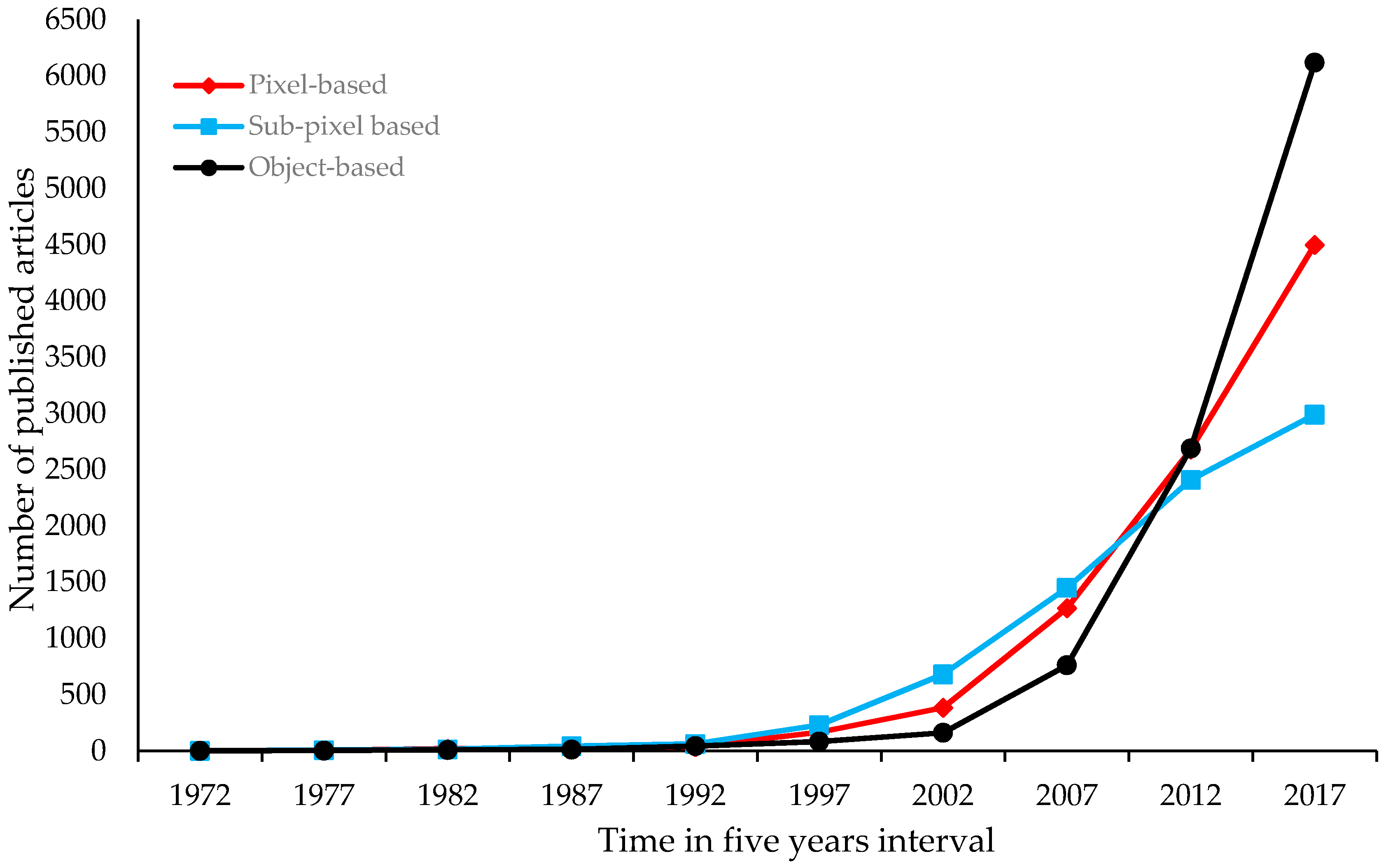

3.3. Developments of Computer-Based Land Cover Classification Methods

3.4. Pixel-Based Classification

3.4.1. Supervised and Unsupervised Classification

3.4.2. Parametric and Non-Parametric Classifiers

3.4.3. Contextual-Based Approach

3.4.4. Multiple (Hybrid) Classifier Approaches

3.5. Sub-Pixel Image Classification

3.5.1. Fuzzy Approach

3.5.2. Spectral Mixture Analysis (SMA)

3.6. Object-Based Approach

3.6.1. Comparisons of OBIA and Pixel-Based Classification Methods of Landsat Images

3.6.2. Limitations of OBIA Land Cover Classification of Landsat Images

3.6.3. Knowledge-Based Approaches

4. Landsat Image Fusions in Land Cover Classification

5. Comparative Performance of Different Landsat Images in Land Cover Classification

6. Best Practices for Landsat Land Cover Classification

7. Conclusions

Acknowledgments

Author Contributions

Conflicts of Interest

References

- Haack, B.N. Landsat: A tool for development. World Dev. 1982, 10, 899–909. [Google Scholar] [CrossRef]

- Masek, J.G.; Honzak, M.; Goward, S.N.; Liu, P.; Pak, E. Landsat-7 ETM+ as an observatory for land cover: Initial radiometric and geometric comparisons with Landsat-5 Thematic Mapper. Remote Sens. Environ. 2001, 78, 118–130. [Google Scholar] [CrossRef]

- Masek, J.G.; Hayes, D.J.; Joseph Hughes, M.; Healey, S.P.; Turner, D.P. The role of remote sensing in process-scaling studies of managed forest ecosystems. For. Ecol. Manag. 2015, 355, 109–123. [Google Scholar] [CrossRef]

- Wulder, M.A.; White, J.C.; Loveland, T.R.; Woodcock, C.E.; Belward, A.S.; Cohen, W.B.; Fosnight, E.A.; Shaw, J.; Masek, J.G.; Roy, D.P. The global Landsat archive: Status, consolidation, and direction. Remote Sens. Environ. 2016, 185, 271–283. [Google Scholar] [CrossRef]

- Steiner, D. Automation in photo interpretation. Geoforum 1970, 1, 75–88. [Google Scholar] [CrossRef]

- Thompson, M.M.; Mikhail, E.M. Automation in photogrammetry: Recent developments and applications (1972–1976). Photogrammetria 1976, 32, 111–145. [Google Scholar] [CrossRef]

- Campbell, J.B.; Wynne, R.H. Introduction to Remote Sensing; Guilford Press: New York, NY, USA, 2011; Volume 5. [Google Scholar]

- Ahmad, W.; Jupp, L.B.; Nunez, M. Land cover mapping in a rugged terrain area using Landsat MSS data. Int. J. Remote Sens. 1992, 13, 673–683. [Google Scholar] [CrossRef]

- Lu, D.; Weng, Q. A survey of image classification methods and techniques for improving classification performance. Int. J. Remote Sens. 2007, 28, 823–870. [Google Scholar] [CrossRef]

- Colwell, R.N. The photo interpretation picture in 1960. Photogrammetria 1959, 16, 292–314. [Google Scholar] [CrossRef]

- Reinhold, A.; Wolff, G. Methods of representing the results of photo interpretation. Photogrammetria 1970, 25, 201–207. [Google Scholar] [CrossRef]

- Gordon, S.I. Utilizing Landsat imagery to monitor land-use change: A case study in Ohio. Remote Sens. Environ. 1980, 9, 189–196. [Google Scholar] [CrossRef]

- Lo, C.P. Landsat images as a tool in regional analysis: The example of Chu Chiang (Pearl River) delta in South China. Geoforum 1977, 8, 79–87. [Google Scholar] [CrossRef]

- Turner, W.; Rondinini, C.; Pettorelli, N.; Mora, B.; Leidner, A.K.; Szantoi, Z.; Buchanan, G.; Dech, S.; Dwyer, J.; Herold, M.; et al. Free and open-access satellite data are key to biodiversity conservation. Biol. Conserv. 2015, 182, 173–176. [Google Scholar] [CrossRef]

- Hansen, T. A review of large area monitoring of land cover change using Landsat data. Remote Sens. Environ. 2012, 122, 66–74. [Google Scholar] [CrossRef]

- Woodcock, C.E.; Allen, R.; Anderson, M.; Belward, A.; Bindschadler, R.; Cohen, W.; Gao, F.; Goward, S.N.; Helder, D.; Helmer, E.; et al. Free access to Landsat imagery. Science 2008, 320, 1011. [Google Scholar] [CrossRef] [PubMed]

- Cihlar, J. Land cover mapping of large areas from satellites: Status and research priorities. Int. J. Remote Sens. 2000, 21, 1093–1114. [Google Scholar] [CrossRef]

- Zhu, Z.; Fu, Y.; Woodcock, C.E.; Olofsson, P.; Vogelmann, J.E.; Holden, C.; Wang, M.; Dai, S.; Yu, Y. Including land cover change in analysis of greenness trends using all available Landsat 5, 7, and 8 images: A case study from Guangzhou, China (2000–2014). Remote Sens. Environ. 2016, 185, 243–257. [Google Scholar] [CrossRef]

- Li, M.; Zang, S.Y.; Zhang, B.; Li, S.S.; Wu, C.S. A review of remote sensing image classification techniques: The role of spatio-contextual information. Eur. J. Remote Sens. 2014, 47, 389–411. [Google Scholar] [CrossRef]

- De Sy, V.; Herold, M.; Achard, F.; Asner, G.P.; Held, A.; Kellndorfer, J.; Verbesselt, J. Synergies of multiple remote sensing data sources for REDD+ monitoring. Curr. Opin. Environ. Sustain. 2012, 4, 696–706. [Google Scholar] [CrossRef]

- Barbosa, J.; Broadbent, E.; Bitencourt, M. Remote sensing of aboveground biomass in tropical secondary forests: A review. Int. J. For. Res. 2014, 2014. [Google Scholar] [CrossRef]

- Chambers, J.Q.; Asner, G.P.; Morton, D.C.; Anderson, L.O.; Saatchi, S.S.; Espírito-Santo, F.D.; Palace, M.; Souza, C. Regional ecosystem structure and function: Ecological insights from remote sensing of tropical forests. Trends Ecol. Evol. 2007, 22, 414–423. [Google Scholar] [CrossRef] [PubMed]

- Mayes, M.T.; Mustard, J.F.; Melillo, J.M. Forest cover change in Miombo Woodlands: Modeling land cover of African dry tropical forests with linear spectral mixture analysis. Remote Sens. Environ. 2015, 165, 203–215. [Google Scholar] [CrossRef]

- Ernsta, C.; Verhegghena, A.; Bodartb, C.; Mayauxb, P.; de Wasseigec, C.; Bararwandikad, A.; Begotoe, G.; Mbaf, F.E.; Ibarag, M.; Shokoh, A.K. Congo basin forest cover change estimate for 1990, 2000 and 2005 by Landsat interpretation using an automated object-based processing chain. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2010, 38, 6. [Google Scholar]

- Pahlevan, N.; Lee, Z.; Wei, J.; Schaaf, C.B.; Schott, J.R.; Berk, A. On-orbit radiometric characterization of OLI (Landsat-8) for applications in aquatic remote sensing. Remote Sens. Environ. 2014, 154, 272–284. [Google Scholar] [CrossRef]

- Chander, G.; Markham, B.L.; Helder, D.L. Summary of current radiometric calibration coefficients for Landsat MSS, TM, ETM+, and EO-1 ALI sensors. Remote Sens. Environ. 2009, 113, 893–903. [Google Scholar] [CrossRef]

- Wu, M.; Wu, C.; Huang, W.; Niu, Z.; Wang, C.; Li, W.; Hao, P. An improved high spatial and temporal data fusion approach for combining Landsat and MODIS data to generate daily synthetic Landsat imagery. Inf. Fusion 2016, 31, 14–25. [Google Scholar] [CrossRef]

- Zeng, C.; Shen, H.; Zhang, L. Recovering missing pixels for Landsat ETM + SLC-off imagery using multi-temporal regression analysis and a regularization method. Remote Sens. Environ. 2013, 131, 182–194. [Google Scholar] [CrossRef]

- Poursanidis, D.; Chrysoulakis, N.; Mitraka, Z. Landsat 8 vs. Landsat 5: A comparison based on urban and peri-urban land cover mapping. Int. J. Appl. Earth Obs. Geoinf. 2015, 35(Part B), 259–269. [Google Scholar] [CrossRef]

- Fassnacht, F.E.; Li, L.; Fritz, A. Mapping degraded grassland on the Eastern Tibetan Plateau with multi-temporal Landsat 8 data—where do the severely degraded areas occur? Int. J. Appl. Earth Obs. Geoinf. 2015, 42, 115–127. [Google Scholar] [CrossRef]

- Irons, J.R.; Dwyer, J.L.; Barsi, J.A. The next Landsat satellite: The Landsat data continuity mission. Remote Sens. Environ. 2012, 122, 11–21. [Google Scholar] [CrossRef]

- Spurr, S.H. Aerial photographs in forest management. Photogrammetria 1952, 9, 33–41. [Google Scholar] [CrossRef]

- Shlien, S.; Smith, A. A rapid method to generate spectral theme classification of Landsat imagery. Remote Sens. Environ. 1975, 4, 67–77. [Google Scholar] [CrossRef]

- France, M.J.; Hedges, P.D. A hydrological comparison of Landsat, TM, Landsat MSS and black and white aerial photography (North Wales). Remote Sens. Resour. Dev. Environ. Manag. 1986, 2, 717–720. [Google Scholar]

- Venkataratnam, L. Use of remotely sensed data for soil mapping. J. Ind Soc. Photo-Interpret. Remote Sens. 1980, 8, 19–25. [Google Scholar]

- Galmier, D.; Lacot, R. Photo interpretation, with examples of its usefulness. Photogrammetria 1970, 25, 131139–135146. [Google Scholar] [CrossRef]

- Rao, D.P. Utility of Landsat coverage in small scale geomorphological mapping-some examples from India. J. Ind. Soc. Photo-Interpret. Remote Sens. 1978, 6, 49–56. [Google Scholar]

- Schowengerdt, R.A. Techniques for Image Processing and Classifications in Remote Sensing; Academic Press: Cambridge, MA, USA, 2012. [Google Scholar]

- Song, C.; Woodcock, C.E.; Seto, K.C.; Lenney, M.P.; Macomber, S.A. Classification and change detection using Landsat TM data: When and how to correct atmospheric effects? Remote Sens. Environ. 2001, 75, 230–244. [Google Scholar] [CrossRef]

- Webster, R.; Wong, I.F.T. A numerical procedure for testing soil boundaries interpreted from air photographs. Photogrammetria 1969, 24, 59–72. [Google Scholar] [CrossRef]

- Kirchhof, W.; Haberäcker, P.; Krauth, E.; Kritikos, G.; Winter, R. A rapid method to generate spectral theme classification of Landsat imagery. Acta Astronaut. 1980, 7, 243–253. [Google Scholar] [CrossRef]

- Hardin, P.J. Neural networks versus nonparametric neighbor-based classifiers for semisupervised classification of Landsat Thematic Mapper imagery. Opt. Eng. 2000, 39, 1898–1908. [Google Scholar] [CrossRef]

- Huang, C.; Davis, L.S.; Townshend, J.R.G. An assessment of support vector machines for land cover classification. Int. J. Remote Sens. 2002, 23, 725–749. [Google Scholar] [CrossRef]

- Fisher, P.F.; Pathirana, S. The evaluation of fuzzy membership of land cover classes in the suburban zone. Remote Sens. Environ. 1990, 34, 121–132. [Google Scholar] [CrossRef]

- Newman, M.E.; McLaren, K.P.; Wilson, B.S. Comparing the effects of classification techniques on landscape-level assessments: Pixel-based versus object-based classification. Int. J. Remote Sens. 2011, 32, 4055–4073. [Google Scholar] [CrossRef]

- Zhou, W.; Troy, A.; Grove, M. Object-based land cover classification and change analysis in the Baltimore metropolitan area using multitemporal high resolution remote sensing data. Sensors 2008, 8, 1613–1636. [Google Scholar] [CrossRef] [PubMed]

- Zhang, T.; Yang, X.; Hu, S.; Su, F. Extraction of coastline in aquaculture coast from multispectral remote sensing images: Object-based region growing integrating edge detection. Remote Sens. 2013, 5, 4470–4487. [Google Scholar] [CrossRef]

- Hussain, M.; Chen, D.; Cheng, A.; Wei, H.; Stanley, D. Change detection from remotely sensed images: From pixel-based to object-based approaches. ISPRS J. Photogramm. Remote Sens. 2013, 80, 91–106. [Google Scholar] [CrossRef]

- Sahai, B.; Dadhwal, V.K.; Chakraborty, M. Comparison of SPOT, TM and MSS data for agricultural land-use mapping in Gujarat (India). Acta Astronaut. 1989, 19, 505–511. [Google Scholar] [CrossRef]

- Duda, R.O.; Hart, P.E.; Stork, D.G. Pattern Classification and Scene Analysis Part 1: Pattern Classification; Wiley: Chichester, UK, 2000. [Google Scholar]

- Fukue, K.; Shimoda, H.; Matumae, Y.; Yamaguchi, R.; Sakata, T. Evaluations of unsupervised methods for land-cover/use classifications of Landsat TM data. Geocarto Int. 1988, 3, 37–44. [Google Scholar] [CrossRef]

- Miller, W.A.; Shasby, M.B. Refining Landsat classification results using digital terrain data. J. Appl. Photogr. Eng. 1982, 8, 35–40. [Google Scholar]

- Ritter, N.D.; Hepner, G.F. Application of an artificial neural network to land-cover classification of Thematic Mapper imagery. Comput. Geosci. 1990, 16, 873–880. [Google Scholar] [CrossRef]

- Townshend, J.R.; Justice, C.O. Unsupervised classification of MSS Landsat data for mapping spatially complex vegetation. Int. J. Remote Sens. 1980, 1, 105–120. [Google Scholar] [CrossRef]

- Lunetta, R.S.; Ediriwickrema, J.; Johnson, D.M.; Lyon, J.G.; McKerrow, A. Impacts of vegetation dynamics on the identification of land-cover change in a biologically complex community in North Carolina, USA. Remote Sens. Environ. 2002, 82, 258–270. [Google Scholar] [CrossRef]

- Rodriguez-Galiano, V.F.; Ghimire, B.; Rogan, J.; Chica-Olmo, M.; Rigol-Sanchez, J.P. An assessment of the effectiveness of a random forest classifier for land-cover classification. ISPRS J. Photogramm. Remote Sens. 2012, 67, 93–104. [Google Scholar] [CrossRef]

- Swain, P.H.; Vardeman, S.B.; Tilton, J.C. Contextual classification of multispectral image data. Pattern Recognit. 1981, 13, 429–441. [Google Scholar] [CrossRef]

- Tilton, J.C.; Swain, P.H. Contextual classification of multispectral image data. In Proceedings of the International Geoscience and Remote Sensing Symposium, Washington, DC, USA, 8–10 June 1981. [Google Scholar]

- Magnussen, S.; Boudewyn, P.; Wulder, M. Contextual classification of Landsat TM images to forest inventory cover types. Int. J. Remote Sens. 2004, 25, 2421–2440. [Google Scholar] [CrossRef]

- Liu, W.; Gopal, S.; Woodcock, C.E. Uncertainty and confidence in land cover classification using a hybrid classifier approach. Photogramm. Eng. Remote Sens. 2004, 70, 963–971. [Google Scholar] [CrossRef]

- Simpson, J.J.; McIntire, T.J.; Sienko, M. An improved hybrid clustering algorithm for natural scenes. IEEE Trans. Geosci. Remote Sens. 2000, 38, 1016–1032. [Google Scholar] [CrossRef]

- Warrender, C.E.; Augusteijn, M.F. Fusion of image classifications using Bayesian techniques with Markov random fields. Int. J. Remote Sens. 1999, 20, 1987–2002. [Google Scholar] [CrossRef]

- Youngentob, K.N.; Roberts, D.A.; Held, A.A.; Dennison, P.E.; Jia, X.; Lindenmayer, D.B. Mapping two Eucalyptus subgenera using multiple endmember spectral mixture analysis and continuum-removed imaging spectrometry data. Remote Sens. Environ. 2011, 115, 1115–1128. [Google Scholar] [CrossRef]

- Foody, G.; Cox, D. Sub-pixel land cover composition estimation using a linear mixture model and fuzzy membership functions. Remote Sens. 1994, 15, 619–631. [Google Scholar] [CrossRef]

- Binaghi, E.; Brivio, P.A.; Ghezzi, P.; Rampini, A. A fuzzy set-based accuracy assessment of soft classification. Pattern Recognit. Lett. 1999, 20, 935–948. [Google Scholar] [CrossRef]

- Somers, B.; Asner, G.P.; Tits, L.; Coppin, P. Endmember variability in spectral mixture analysis: A review. Remote Sens. Environ. 2011, 115, 1603–1616. [Google Scholar] [CrossRef]

- Wang, L.; Shi, C.; Diao, C.; Ji, W.; Yin, D. A survey of methods incorporating spatial information in image classification and spectral unmixing. Int. J. Remote Sens. 2016, 37, 3870–3910. [Google Scholar] [CrossRef]

- Mota, G.L.A.; Feitosa, R.Q.; Coutinho, H.L.C.; Liedtke, C.-E.; Müller, S.; Pakzad, K.; Meirelles, M.S.P. Multitemporal fuzzy classification model based on class transition possibilities. ISPRS J. Photogramm. Remote Sens. 2007, 62, 186–200. [Google Scholar] [CrossRef]

- Wang, L. Fuzzy supervised classification of remote sensing images. IEEE Trans. Geosci. Remote Sens. 1990, 28, 194–201. [Google Scholar] [CrossRef]

- Zhang, J.; Foody, G. A fuzzy classification of sub-urban land cover from remotely sensed imagery. Int. J Remote Sens. 1998, 19, 2721–2738. [Google Scholar] [CrossRef]

- Melgani, F.; Al Hashemy, B.A.; Taha, S.M. An explicit fuzzy supervised classification method for multispectral remote sensing images. IEEE Trans. Geosci. Remote Sens. 2000, 38, 287–295. [Google Scholar] [CrossRef]

- Ahmed, M.N.; Yamany, S.M.; Mohamed, N.; Farag, A.A.; Moriarty, T. A modified fuzzy c-means algorithm for bias field estimation and segmentation of MRI data. IEEE Trans. Med. Imaging 2002, 21, 193–199. [Google Scholar] [CrossRef] [PubMed]

- Peterson, S.H.; Stow, D.A. Using multiple image endmember spectral mixture analysis to study chaparral regrowth in southern California. Int. J. Remote Sens. 2003, 24, 4481–4504. [Google Scholar] [CrossRef]

- Dawelbait, M.; Morari, F. Monitoring desertification in a Savannah region in Sudan using Landsat images and spectral mixture analysis. J. Arid Environ. 2012, 80, 45–55. [Google Scholar] [CrossRef]

- Adams, J.B.; Sabol, D.E.; Kapos, V.; Almeida Filho, R.; Roberts, D.A.; Smith, M.O.; Gillespie, A.R. Classification of multispectral images based on fractions of endmembers: Application to land-cover change in the Brazilian Amazon. Remote Sens. Environ. 1995, 52, 137–154. [Google Scholar] [CrossRef]

- Roberts, D.A.; Gardner, M.; Church, R.; Ustin, S.; Scheer, G.; Green, R.O. Mapping chaparral in the Santa Monica Mountains using multiple endmember spectral mixture models. Remote Sens. Environ. 1998, 65, 267–279. [Google Scholar] [CrossRef]

- Powell, R.L.; Roberts, D.A.; Dennison, P.E.; Hess, L.L. Sub-pixel mapping of urban land cover using multiple endmember spectral mixture analysis: Manaus, Brazil. Remote Sens. Environ. 2007, 106, 253–267. [Google Scholar] [CrossRef]

- Dorren, L.K.A.; Maier, B.; Seijmonsbergen, A.C. Improved Landsat-based forest mapping in steep mountainous terrain using object-based classification. For. Ecol. Manag. 2003, 183, 31–46. [Google Scholar] [CrossRef]

- Peña, J.M.; Gutiérrez, P.A.; Hervás-Martínez, C.; Six, J.; Plant, R.E.; López-Granados, F. Object-based image classification of summer crops with machine learning methods. Remote Sens. 2014, 6, 5019–5041. [Google Scholar] [CrossRef]

- Moskal, L.M.; Styers, D.M.; Halabisky, M. Monitoring urban tree cover using object-based image analysis and public domain remotely sensed data. Remote Sens. 2011, 3, 2243–2262. [Google Scholar] [CrossRef]

- Kettig, R.L.; Landgrebe, D. Classification of multispectral image data by extraction and classification of homogeneous objects. IEEE Trans. Geosci. Electron. 1976, 14, 19–26. [Google Scholar] [CrossRef]

- Flanders, D.; Hall-Beyer, M.; Pereverzoff, J. Preliminary evaluation of eCognition object-based software for cut block delineation and feature extraction. Can. J. Remote Sens. 2003, 29, 441–452. [Google Scholar] [CrossRef]

- Trimble. Trimble acquires definiens’ earth sciences business to expand its geospatial portfolio. In eCognition to Power Trimble’s Image Analysis in Geospatial Industries; Trimble: Sunnyvale, CA, USA, 2010. [Google Scholar]

- Samal, D.R.; Gedam, S.S. Monitoring land use changes associated with urbanization: An object based image analysis approach. Eur. J. Remote Sens. 2015, 48, 85–99. [Google Scholar] [CrossRef]

- Li, Q.; Wang, C.; Zhang, B.; Lu, L. Object-based crop classification with Landsat-MODIS enhanced time-series data. Remote Sens. 2015, 7, 16091–16107. [Google Scholar] [CrossRef]

- Kindu, M.; Schneider, T.; Teketay, D.; Knoke, T. Land use/land cover change analysis using object-based classification approach in Munessa-Shashemene landscape of the Ethiopian Highlands. Remote Sens. 2013, 5, 2411–2435. [Google Scholar] [CrossRef]

- Tewolde, M.G.; Cabral, P. Urban sprawl analysis and modeling in Asmara, Eritrea. Remote Sens. 2011, 3, 2148–2165. [Google Scholar] [CrossRef]

- Wieland, M.; Pittore, M. Performance evaluation of machine learning algorithms for urban pattern recognition from multi-spectral satellite images. Remote Sens. 2014, 6, 2912–2939. [Google Scholar] [CrossRef]

- Li, C.; Wang, J.; Wang, L.; Hu, L.; Gong, P. Comparison of classification algorithms and training sample sizes in urban land classification with Landsat Thematic Mapper imagery. Remote Sens. 2014, 6, 964–983. [Google Scholar] [CrossRef]

- Gilbertson, J.K.; Kemp, J.; Van Niekerk, A. Effect of pan-sharpening multi-temporal Landsat 8 imagery for crop type differentiation using different classification techniques. Comput. Electron. Agric. 2017, 134, 151–159. [Google Scholar] [CrossRef]

- Budreski, K.A.; Wynne, R.H.; Browder, J.O.; Campbell, J.B. Comparison of segment and pixel-based non-parametric land cover classification in the Brazilian Amazon using multitemporal Landsat TM/ETM+ imagery. Photogramm. Eng. Remote Sens. 2007, 73, 813–827. [Google Scholar] [CrossRef]

- Vittek, M.; Brink, A.; Donnay, F.; Simonetti, D.; Desclée, B. Land cover change monitoring using Landsat MSS/TM satellite image data over West Africa between 1975 and 1990. Remote Sens. 2014, 6, 658–676. [Google Scholar] [CrossRef] [Green Version]

- Böhner, J.; Selige, T.; Ringeler, A. Image segmentation using representativeness analysis and region growing. In SAGA–Analysis and Modelling Applications; Gottinger Geographischne Abhandlungen; Boehner, J., McCloy, K.R., Strobl, J., Eds.; Geographischne Abhandlungen: Gottingen, Germany, 2006; pp. 29–38. [Google Scholar]

- Blaschke, T. Object based image analysis for remote sensing. ISPRS J. Photogramm. Remote Sens. 2010, 65, 2–16. [Google Scholar] [CrossRef]

- Riggan, N., Jr.; Weih, R.C., Jr. Comparison of pixel-based versus object-based land use/land cover classification methodologies. J. Ark. Acad. Sci. 2009, 63, 145–152. [Google Scholar]

- Blundell, J.; Opitz, D. Object recognition and feature extraction from imagery: The Feature Analyst® approach. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. 2006, 36, C42. [Google Scholar]

- Opitz, D.; Blundell, S. Object recognition and image segmentation: The Feature Analyst® approach. Object-Based Image Anal. 2008, 36, 153–167. [Google Scholar]

- Tsai, Y.H.; Stow, D.; Weeks, J. Comparison of object-based image analysis approaches to mapping new buildings in Accra, Ghana using multi-temporal QuickBird satellite imagery. Remote Sens. 2011, 3, 2707–2726. [Google Scholar] [CrossRef]

- Meinel, G.; Neubert, M. A comparison of segmentation programs for high resolution remote sensing data. Int. Arch. Photogramm. Remote Sens. 2004, 35, 1097–1105. [Google Scholar] [CrossRef]

- Cai, S.; Liu, D. A comparison of object-based and contextual pixel-based classifications using high and medium spatial resolution images. Remote Sens. Lett. 2013, 4, 998–1007. [Google Scholar] [CrossRef]

- Dingle Robertson, L.; King, D.J. Comparison of pixel- and object-based classification in land cover change mapping. Int. J. Remote Sens. 2011, 32, 1505–1529. [Google Scholar] [CrossRef]

- Frohn, R.; Autrey, B.; Lane, C.; Reif, M. Segmentation and object-oriented classification of wetlands in a Karst Florida landscape using multi-season Landsat-7 ETM+ imagery. Int. J. Remote Sens. 2011, 32, 1471–1489. [Google Scholar] [CrossRef]

- Zerrouki, N.; Bouchaffra, D. Pixel-based or object-based: Which approach is more appropriate for remote sensing image classification? In Proceedings of the 2014 IEEE International Conference on Systems, Man and Cybernetics (SMC), San Diego, CA, USA, 5–8 October 2014; IEEE: Piscataway, NJ, USA, 2014; pp. 864–869. [Google Scholar]

- Huth, J.; Kuenzer, C.; Wehrmann, T.; Gebhardt, S.; Tuan, V.Q.; Dech, S. Land cover and land use classification with TWOPAC: Towards automated processing for pixel-and object-based image classification. Remote Sens. 2012, 4, 2530–2553. [Google Scholar] [CrossRef]

- Liu, D.; Xia, F. Assessing object-based classification: Advantages and limitations. Remote Sens. Lett. 2010, 1, 187–194. [Google Scholar] [CrossRef]

- Myint, S.W.; Gober, P.; Brazel, A.; Grossman-Clarke, S.; Weng, Q. Per-pixel vs. Object-based classification of urban land cover extraction using high spatial resolution imagery. Remote Sens. Environ. 2011, 115, 1145–1161. [Google Scholar] [CrossRef]

- Darwish, A.; Leukert, K.; Reinhardt, W. Image Segmentation for the Purpose of Object-based Classification. In Proceedings of the 2003 IEEE International Conference on Geoscience and Remote Sensing Symposium, IGARSS’03, Toulouse, France, 21–25 July 2003; IEEE International: Piscataway, NJ, USA, 2003; pp. 2039–2041. [Google Scholar]

- Möller, M.; Lymburner, L.; Volk, M. The comparison index: A tool for assessing the accuracy of image segmentation. Int. J. Appl. Earth Obs. Geoinf. 2007, 9, 311–321. [Google Scholar] [CrossRef]

- Dronova, I.; Gong, P.; Clinton, N.E.; Wang, L.; Fu, W.; Qi, S.; Liu, Y. Landscape analysis of wetland plant functional types: The effects of image segmentation scale, vegetation classes and classification methods. Remote Sens. Environ. 2012, 127, 357–369. [Google Scholar] [CrossRef]

- Drǎguţ, L.; Tiede, D.; Levick, S.R. ESP: A tool to estimate scale parameter for multiresolution image segmentation of remotely sensed data. Int. J. Geogr. Inf. Sci. 2010, 24, 859–871. [Google Scholar] [CrossRef]

- Tailor, A.; Cross, A.; Hogg, D.C.; Mason, D.C. Knowledge-based interpretation of remotely sensed images. Image Vis. Comput. 1986, 4, 67–83. [Google Scholar] [CrossRef]

- Sikder, I.U. Knowledge-based spatial decision support systems: An assessment of environmental adaptability of crops. Expert Syst. Appl. 2009, 36, 5341–5347. [Google Scholar] [CrossRef]

- Wang, L.; Newkirk, R. A knowledge-based system for highway network extraction. IEEE Trans. Geosci. Remote Sens. 1988, 26, 525–531. [Google Scholar] [CrossRef]

- Ghassemian, H. A review of remote sensing image fusion methods. Inf. Fusion 2016, 32(Part A), 75–89. [Google Scholar] [CrossRef]

- Ehlers, M. Multisensor image fusion techniques in remote sensing. ISPRS J. Photogramm. Remote Sens. 1991, 46, 19–30. [Google Scholar] [CrossRef]

- Hansen, M.; DeFries, R.; Townshend, J.R.; Sohlberg, R. Global land cover classification at 1 km spatial resolution using a classification tree approach. Int. J. Remote Sens. 2000, 21, 1331–1364. [Google Scholar] [CrossRef]

- Otukei, J.R.; Blaschke, T.; Collins, M. Fusion of TerraSAR-X and Landsat ETM+ data for protected area mapping in Uganda. Int. J. Appl. Earth Obs. Geoinf. 2015, 38, 99–104. [Google Scholar] [CrossRef]

- Carrão, H.; Gonçalves, P.; Caetano, M. Contribution of multispectral and multitemporal information from MODIS images to land cover classification. Remote Sens. Environ. 2008, 112, 986–997. [Google Scholar] [CrossRef]

- Hyde, P.; Dubayah, R.; Walker, W.; Blair, J.B.; Hofton, M.; Hunsaker, C. Mapping forest structure for wildlife habitat analysis using multi-sensor (LiDAR, SAR/inSAR, ETM+, QuickBird) synergy. Remote Sens. Environ. 2006, 102, 63–73. [Google Scholar] [CrossRef]

- Xu, C.; Morgenroth, J.; Manley, B. Integrating data from discrete return airborne LiDAR and optical sensors to enhance the accuracy of forest description: A review. Curr. For. Rep. 2015, 1, 206–219. [Google Scholar] [CrossRef]

- Hudak, A.T.; Lefsky, M.A.; Cohen, W.B.; Berterretche, M. Integration of LiDAR and Landsat ETM+ data for estimating and mapping forest canopy height. Remote Sens. Environ. 2002, 82, 397–416. [Google Scholar] [CrossRef]

- Donoghue, D.N.M.; Watt, P.J. Using LiDAR to compare forest height estimates from IKONOS and Landsat ETM + data in Sitka spruce plantation forests. Int. J. Remote Sens. 2006, 27, 2161–2175. [Google Scholar] [CrossRef]

- Hilker, T.; Wulder, M.A.; Coops, N.C.; Linke, J.; McDermid, G.; Masek, J.G.; Gao, F.; White, J.C. A new data fusion model for high spatial- and temporal-resolution mapping of forest disturbance based on Landsat and MODIS. Remote Sens. Environ. 2009, 113, 1613–1627. [Google Scholar] [CrossRef]

- Pohl, C.; Van Genderen, J.L. Review article multisensor image fusion in remote sensing: Concepts, methods and applications. Int. J. Remote Sens. 1998, 19, 823–854. [Google Scholar] [CrossRef]

- Toll, D.L. Effect of Landsat Thematic Mapper sensor parameters on land cover classification. Remote Sens. Environ. 1985, 17, 129–140. [Google Scholar] [CrossRef]

- Haack, B.; Bryant, N.; Adams, S. An assessment of Landsat MSS and TM data for urban and near-urban land-cover digital classification. Remote Sens. Environ. 1987, 21, 201–213. [Google Scholar] [CrossRef]

- Mulligan, P.J.; Gervin, J.C.; Lu, Y.C. Comparison of MSS and TM Data for Landcover Classification in the Chesapeake Bay Area—A Preliminary Report; NASA: Washington, DC, USA, 1985; pp. 415–419.

- Heumann, B.W. An object-based classification of mangroves using a hybrid decision tree—Support vector machine approach. Remote Sens. 2011, 3, 2440–2460. [Google Scholar] [CrossRef]

- Pullanikkatil, D.; Palamuleni, L.; Ruhiiga, T. Assessment of land use change in Likangala River catchment, Malawi: A remote sensing and DPSIR approach. Appl. Geogr. 2016, 71, 9–23. [Google Scholar] [CrossRef]

- Sloan, S. Historical tropical successional forest cover mapped with Landsat MSS imagery. Int. J. Remote Sens. 2012, 33, 7902–7935. [Google Scholar] [CrossRef]

- Kumar, R.; Nandy, S.; Agarwal, R.; Kushwaha, S.P.S. Forest cover dynamics analysis and prediction modeling using logistic regression model. Ecol. Indic. 2014, 45, 444–455. [Google Scholar] [CrossRef]

- Vieira, I.C.G.; de Almeida, A.S.; Davidson, E.A.; Stone, T.A.; Reis de Carvalho, C.J.; Guerrero, J.B. Classifying successional forests using Landsat spectral properties and ecological characteristics in Eastern Amazônia. Remote Sens. Environ. 2003, 87, 470–481. [Google Scholar] [CrossRef]

- Justice, C.; Townshend, J. A comparison of unsupervised classification procedures on Landsat MSS data for an area of complex surface conditions in Basilicata, Southern Italy. Remote Sens. Environ. 1982, 12, 407–420. [Google Scholar] [CrossRef]

- Kirui, K.B.; Kairo, J.G.; Bosire, J.; Viergever, K.M.; Rudra, S.; Huxham, M.; Briers, R.A. Mapping of mangrove forest land cover change along the Kenya coastline using Landsat imagery. Ocean Coast. Manag. 2013, 83, 19–24. [Google Scholar] [CrossRef]

- Lunetta, R.S.; Johnson, D.M.; Lyon, J.G.; Crotwell, J. Impacts of imagery temporal frequency on land-cover change detection monitoring. Remote Sens. Environ. 2004, 89, 444–454. [Google Scholar] [CrossRef]

- Stuckens, J.; Coppin, P.R.; Bauer, M.E. Integrating contextual information with per-pixel classification for improved land cover classification. Remote Sens. Environ. 2000, 71, 282–296. [Google Scholar] [CrossRef]

- Flygare, A. A comparison of contextual classification methods using Landsat TM. Int. J. Remote Sens. 1997, 18, 3835–3842. [Google Scholar] [CrossRef]

- Lo, C.; Choi, J. A hybrid approach to urban land use/cover mapping using Landsat 7 Enhanced Thematic Mapper plus (ETM+) images. Int. J. Remote Sens. 2004, 25, 2687–2700. [Google Scholar] [CrossRef]

- Kuemmerle, T.; Radeloff, V.C.; Perzanowski, K.; Hostert, P. Cross-border comparison of land cover and landscape pattern in Eastern Europe using a hybrid classification technique. Remote Sens. Environ. 2006, 103, 449–464. [Google Scholar] [CrossRef]

- Hamada, Y.; Stow, D.A.; Roberts, D.A.; Franklin, J.; Kyriakidis, P.C. Assessing and monitoring semi-arid shrublands using object-based image analysis and multiple endmember spectral mixture analysis. Environ. Monit. Assess. 2013, 185, 3173–3190. [Google Scholar] [CrossRef] [PubMed]

- Théau, J.; Peddle, D.R.; Duguay, C.R. Mapping lichen in a caribou habitat of Northern Quebec, Canada, using an enhancement_classification method and spectral mixture analysis. Remote Sens. Environ. 2005, 94, 232–243. [Google Scholar] [CrossRef]

- Ton, J.; Sticklen, J.; Jain, A.K. Knowledge-based segmentation of Landsat images. IEEE Trans. Geosci. Remote Sens. 1991, 29, 222–232. [Google Scholar] [CrossRef]

- Manandhar, R.; Odeh, I.O.; Ancev, T. Improving the accuracy of land use and land cover classification of Landsat data using post-classification enhancement. Remote Sens. 2009, 1, 330–344. [Google Scholar] [CrossRef]

- Shimoda, H.; Fukue, K.; Yamaguchi, R.; Zi-Jue, Z.; Sakata, T. Accuracy of landcover classification of TM and SPOT data. In Proceedings of the 1988 IEEE International Conference on Geoscience ang Remote Sensing Symposium, IGARSS’88, Edinburgh, UK, 10–12 September 1988; Volume 1, pp. 529–535. [Google Scholar]

- Franklin, S.E. Topographic context of satellite spectral response. Comput. Geosci. 1990, 16, 1003–1010. [Google Scholar] [CrossRef]

- Huang, X.; Lu, Q.; Zhang, L.; Plaza, A. New postprocessing methods for remote sensing image classification: A systematic study. IEEE Trans. Geosci. Remote Sens. 2014, 52, 7140–7159. [Google Scholar] [CrossRef]

- Tatem, A.J.; Nayar, A.; Hay, S.I. Scene selection and the use of NASA’s global orthorectified Landsat dataset for land cover and land use change monitoring. Int. J. Remote Sens. 2006, 27, 3073–3078. [Google Scholar] [CrossRef] [PubMed]

- Gutman, G.; Huang, C.; Chander, G.; Noojipady, P.; Masek, J.G. Assessment of the NASA-USGS global land survey (GLS) datasets. Remote Sens. Environ. 2013, 134, 249–265. [Google Scholar] [CrossRef]

- Young, N.E.; Anderson, R.S.; Chignell, S.M.; Vorster, A.G.; Lawrence, R.; Evangelista, P.H. A survival guide to Landsat preprocessing. Ecology 2017, 98, 920–932. [Google Scholar] [CrossRef] [PubMed]

- Tucker, C.J.; Grant, D.M.; Dykstra, J.D. NASA’s global orthorectified Landsat data set. Photogramm. Eng. Remote Sens. 2004, 70, 313–322. [Google Scholar] [CrossRef]

- Roy, D.P.; Wulder, M.A.; Loveland, T.R.; Woodcock, C.E.; Allen, R.G.; Anderson, M.C.; Helder, D.; Irons, J.R.; Johnson, D.M.; Kennedy, R.; et al. Landsat-8: Science and product vision for terrestrial global change research. Remote Sens. Environ. 2014, 145, 154–172. [Google Scholar] [CrossRef]

- Franklin, S.E. Image transformations in mountainous terrain and the relationship to surface patterns. Comput. Geosci. 1991, 17, 1137–1149. [Google Scholar] [CrossRef]

- Fahsi, A.; Tsegaye, T.; Tadesse, W.; Coleman, T. Incorporation of digital elevation models with Landsat-TM data to improve land cover classification accuracy. For. Ecol. Manag. 2000, 128, 57–64. [Google Scholar] [CrossRef]

- Gao, Y.; Zhang, W. LULC classification and topographic correction of Landsat-7 ETM+ imagery in the Yangjia River Watershed: The influence of DEM resolution. Sensors 2009, 9, 1980–1995. [Google Scholar] [CrossRef] [PubMed]

- Castillejo-González, I.L.; Peña-Barragán, J.M.; Jurado-Expósito, M.; Mesas-Carrascosa, F.J.; López-Granados, F. Evaluation of pixel- and object-based approaches for mapping wild oat (Avena sterilis) weed patches in wheat fields using QuickBird imagery for site-specific management. Eur. J. Agron. 2014, 59, 57–66. [Google Scholar] [CrossRef]

- Araya, Y.H.; Cabral, P. Analysis and modeling of urban land cover change in Setúbal and Sesimbra, Portugal. Remote Sens. 2010, 2, 1549–1563. [Google Scholar] [CrossRef]

- Dronova, I. Object-based image analysis in wetland research: A review. Remote Sens. 2015, 7, 6380–6413. [Google Scholar] [CrossRef]

| Landsat 1–3 (MSS) 1 | Landsat 4–5 (MSS) | Landsat 4–5 (TM) | Landsat 7 (ETM+) | Landsat 8 (OLI) | |||||||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 1972–1983 | 1975–2013 | 1975–2013 | 1999 to present | 2013 to present | |||||||||||||||

| Temporal | Radiometric | Temporal | Radiometric | Temporal | Radiometric | Temporal | Radiometric | Temporal | Radiometric | ||||||||||

| 18 days | 6 bits | 18 days | 6 bits | 16 days | 8 bits | 16 days | 9 bits | 16 days | 12 bits | ||||||||||

| Band Name | Spectral (μm) | Spatial (m) | Band Name | Spectral (μm) | Spatial (m) | Band Name | Spectral (μm) | Spatial (m) | Band Name | Spectral (μm) | Spatial (m) | Band Name | Spectral (μm) | Spatial (m) | |||||

| Band 4-Green | 0.5–0.6 | 60 | Band 4-Green | 0.5–0.6 | 60 | Band 1-Blue | 0.45–0.52 | 30 | Band 1-Blue | 0.45–0.52 | 30 | Band 1-Ultra | 0.43–0.45 | 30 | |||||

| Band 5-Red | 0.6–0.7 | 60 | Band 5-Red | 0.6–0.7 | 60 | Band 2-Green | 0.52–0.60 | 30 | Band 2-Green | 0.52–0.60 | 30 | Band 2-Blue | 0.45–0.51 | 30 | |||||

| Band 6-NIR | 0.7–0.8 | 60 | Band 6-NIR | 0.7–0.8 | 60 | Band 3-Red | 0.63–0.69 | 30 | Band 3-Red | 0.63–0.69 | 30 | Band 3-Green | 0.53–0.59 | 30 | |||||

| Band 7-NIR | 0.8–1.10 | 60 | Band 7-NIR | 0.8–1.10 | 60 | Band 4-NIR | 0.76–0.90 | 30 | Band 4-NIR | 0.77–0.90 | 30 | Band 4-Red | 0.64–0.67 | 30 | |||||

| Band 5-NIR | 0.85–0.88 | 30 | |||||||||||||||||

| Band 5-SWIR1 | 1.55–1.75 | 30 | Band 5-SWIR1 | 1.55–1.75 | 30 | Band 6-SWIR1 | 1.57–1.65 | 30 | |||||||||||

| Band 7-SWIR2 | 2.08–2.35 | 30 | Band 7-SWIR2 | 2.09–2.35 | 30 | Band 7-SWIR2 | 2.11–2.29 | 30 | |||||||||||

| Band 8-Pan | 0.52–0.90 | 15 | Band 8-Pan | 0.50–0.68 | 15 | ||||||||||||||

| Band 9-Circus | 1.36–1.38 | 30 | |||||||||||||||||

| Band 6-TIR | 10.40–12.50 | 120 | Band 6-1-TIR | 10.40–12.50 | 60 | Band 10-TIR | 10.60–11.19 | 100 | |||||||||||

| Band 6-2-TIR | 10.40–12.50 | 60 | Band 11-TIR | 11.50–12.51 | 100 | ||||||||||||||

| Classification Approach | Method | Classifier Used | Landsat Images Used | Type of Land Cover | Accuracy Attained (%) | Source |

|---|---|---|---|---|---|---|

| Pixel-based | Supervised | ML, NN, SVM | MSS, TM, OLI | Urban area | 73–82 | [29,125,129] |

| ML | MSS, TM, OLI | Forest plantation | 61–90 | [129,130,131] | ||

| ML | MSS, OLI | Dense forest | 68–90 | [129,130,131] | ||

| ML | TM, OLI | Open forest | 52–81 | [129,132] | ||

| Unsupervised | ISODAT | TM | Urban area | 78–94 | [55,133] | |

| ISODAT | TM | Forest plantation | 71–87 | [133,134,135] | ||

| ISODAT | TM, OLI | Dense forest | 71–87 | [133,134,135] | ||

| ISODAT | TM | Open forest | 69–81 | [133,135] | ||

| Contextual | ECHO, Majority filter | TM | Urban area | 72–81 | [136,137] | |

| ECHO, Majority filter | TM | Forest plantation | 70–81 | [136,137] | ||

| ECHO, Majority filter | TM, ETM+ | Dense forest | 72–82 | [136,137] | ||

| NN | MSS | Open forest | 66–90 | [57,136,137] | ||

| ECHO, Majority filter | TM, ETM+ | Agricultural area | 66–97 | [136,137] | ||

| Hybrid | ISODAT, fuzzy, ML | TM, ETM+ | Urban area | 64–96 | [138,139] | |

| ML, Rule based, ISODAT | TM, ETM+, DEM | Forest plantation | 74–87 | [138,139] | ||

| ML, Rule based, ISODAT | TM, ETM+, DEM | Dense forest | 79–91 | [138,139] | ||

| ML, Rule based, ISODAT | TM, ETM+ | Agricultural area | 64–84 | [138,139] | ||

| Sub-pixel | SMA | LSMA, MESMA | TM, ETM+, OLI | Urban area | 83–90 | [29,77] |

| LSMA | TM | Forest plantation | 77–93 | [73,76] | ||

| LSMA | TM, OLI | Dense forest | 75–93 | [23,73] | ||

| LSMA | TM | Open forest | 77–87 | [73,140] | ||

| LSMA | TM, OLI | Agriculture area | 70–74 | [23,141] | ||

| Fuzzy analysis | Fuzzy C-Mean | MSS | Urban area | 70–90 | [44,71] | |

| Fuzzy partitioning | TM | Forest plantation | 74–90 | [68,69] | ||

| Fuzzy membership | TM | Dense forest | 74–70 | [44,64] | ||

| Explicit fuzzy | TM | Open forest | 56–79 | [44,71] | ||

| Explicit fuzzy | TM | Agriculture | 74–92 | [68,71] | ||

| Object-based | OBIA 1 | SVM, DT, RF, NN | ETM+, TM, MSS, OLI | Urban areas | 73–98 | [29,84] |

| Decision rule | ETM+, TM | Forest plantation | 80–97 | [45,84,101] | ||

| Decision rule | TM | Natural forest | 77–95 | [45,78,84,101] | ||

| Decision rule | TM | Agriculture area | 76–90 | [78,101] | ||

| Knowledge based | Expert-knowledge | MSS, TM | Urban area | 87–90 | [111,113,142] | |

| Spectral expert | MSS, TM, DEM | Forest plantation | 86–94 | [142,143] | ||

| Spectral expert | MSS, TM, DEM | Dense forest | 85–92 | [142,143] | ||

| Eco-SDSS | MSS, TM, GIS | Agriculture area | 85–88 | [112,142] |

© 2017 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Phiri, D.; Morgenroth, J. Developments in Landsat Land Cover Classification Methods: A Review. Remote Sens. 2017, 9, 967. https://doi.org/10.3390/rs9090967

Phiri D, Morgenroth J. Developments in Landsat Land Cover Classification Methods: A Review. Remote Sensing. 2017; 9(9):967. https://doi.org/10.3390/rs9090967

Chicago/Turabian StylePhiri, Darius, and Justin Morgenroth. 2017. "Developments in Landsat Land Cover Classification Methods: A Review" Remote Sensing 9, no. 9: 967. https://doi.org/10.3390/rs9090967

APA StylePhiri, D., & Morgenroth, J. (2017). Developments in Landsat Land Cover Classification Methods: A Review. Remote Sensing, 9(9), 967. https://doi.org/10.3390/rs9090967