Fast Segmentation and Classification of Very High Resolution Remote Sensing Data Using SLIC Superpixels

Abstract

1. Introduction

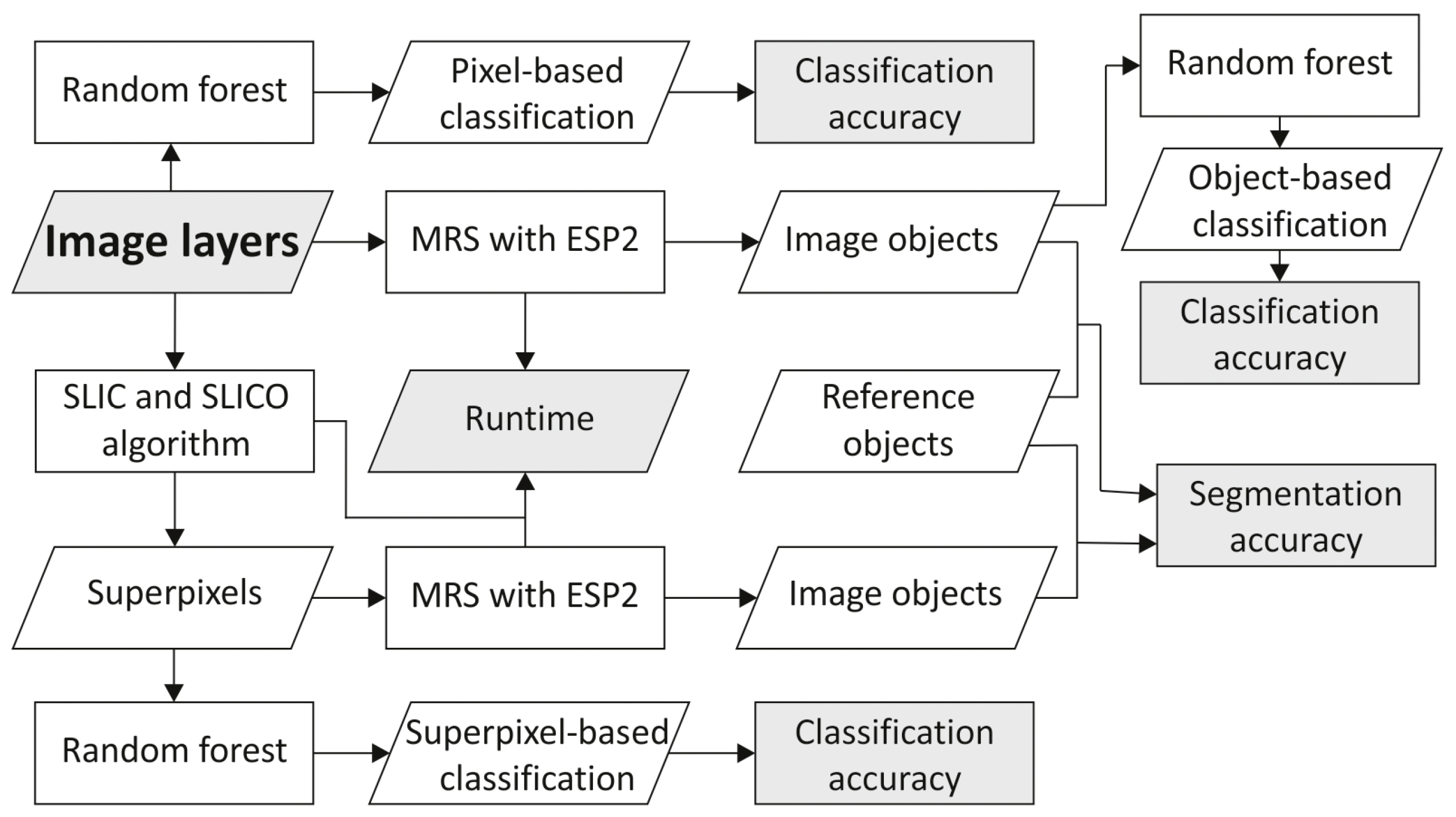

2. Materials and Methods

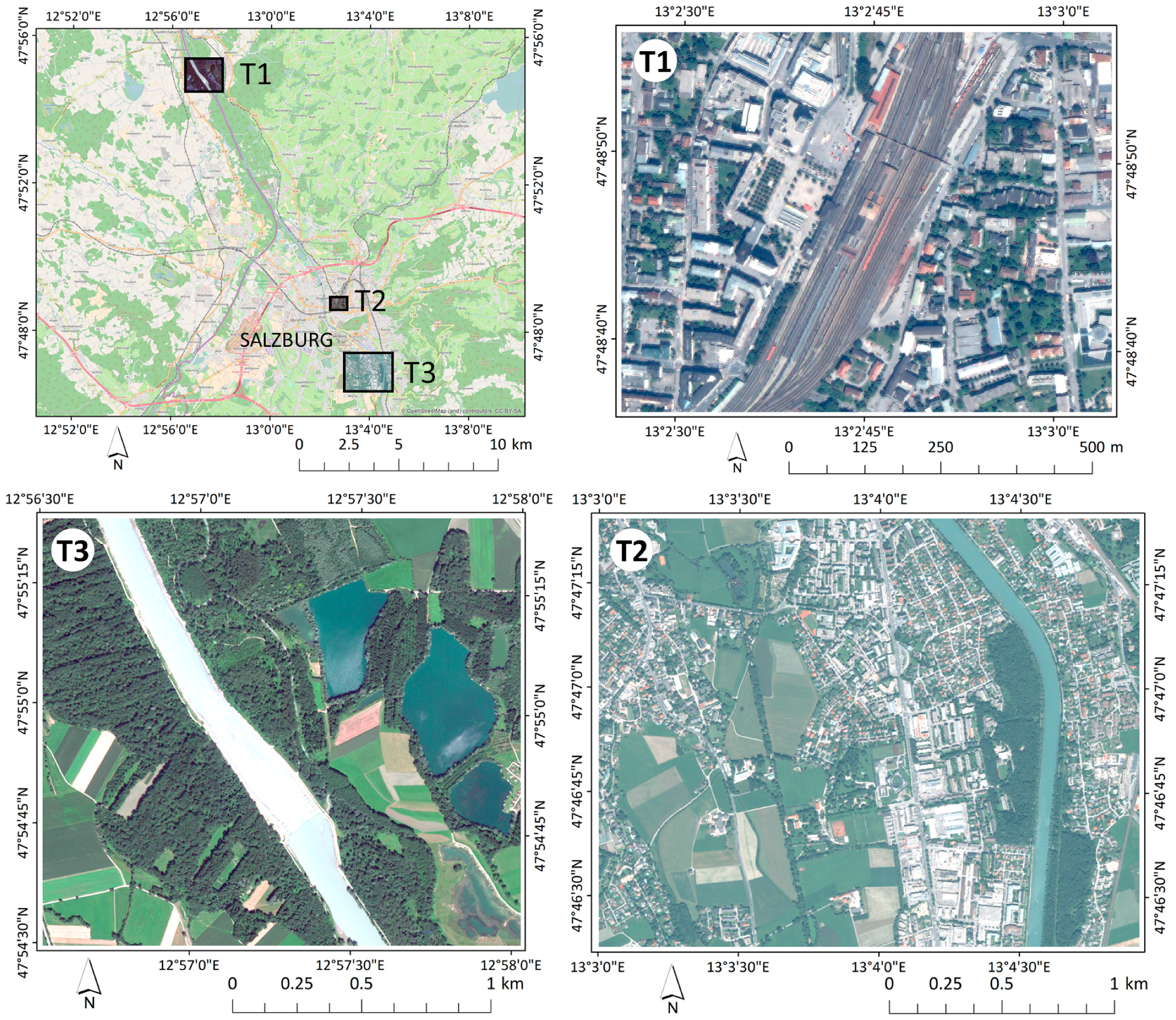

2.1. Datasets

2.2. Simple Linear Iterative Clustering (SLIC) Superpixels

2.3. Multiresolution Segmentation: Pixels vs. Superpixels

2.4. Assessment of Segmentation Results

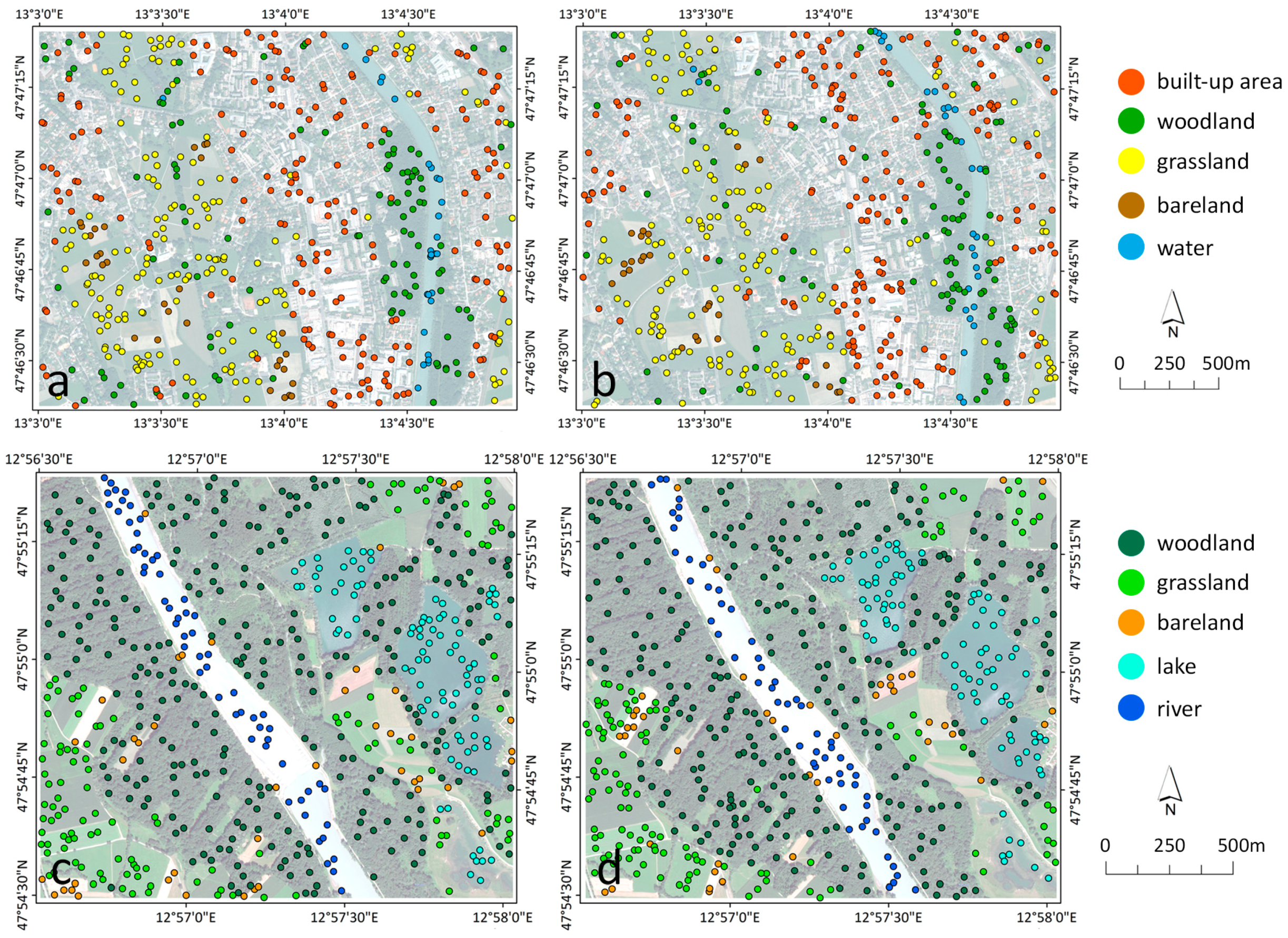

2.5. Training and Validation Samples

2.6. Random Forest Classification

2.7. Classification Accuracy Evaluation

3. Results

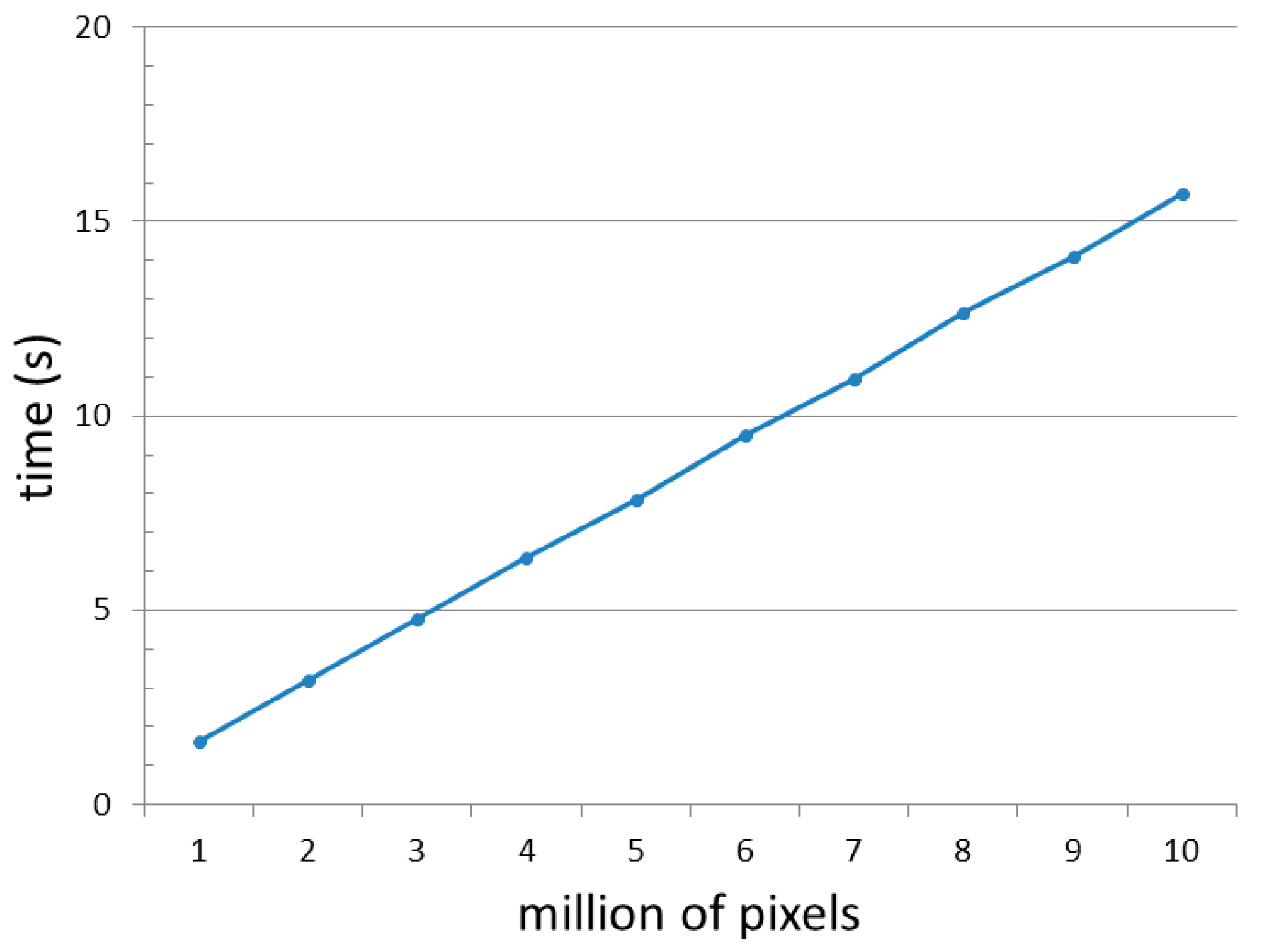

3.1. SLIC and SLICO Superpixel Generation

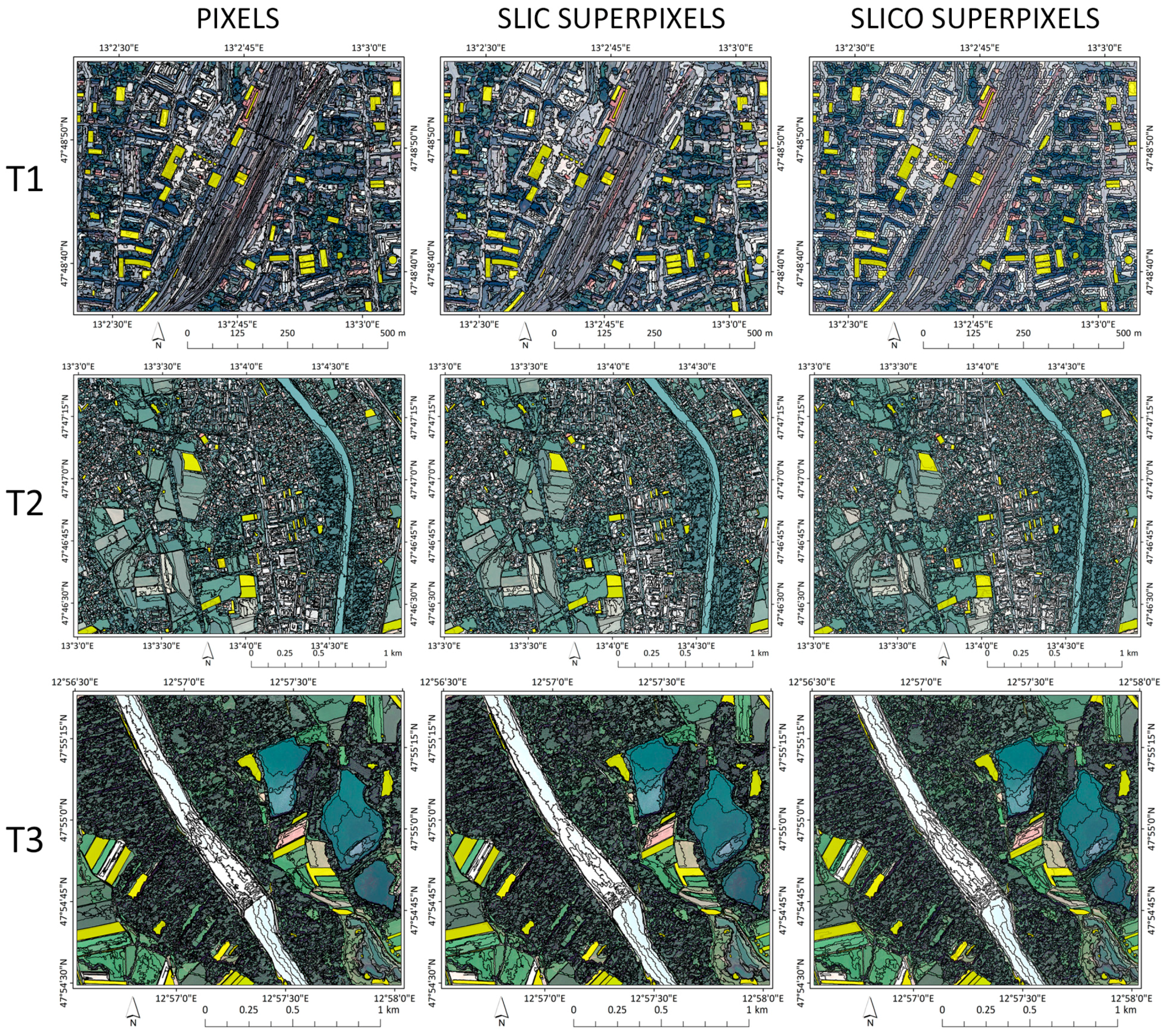

3.2. Multiresolution Segmentation: Pixels vs. Superpixel Results

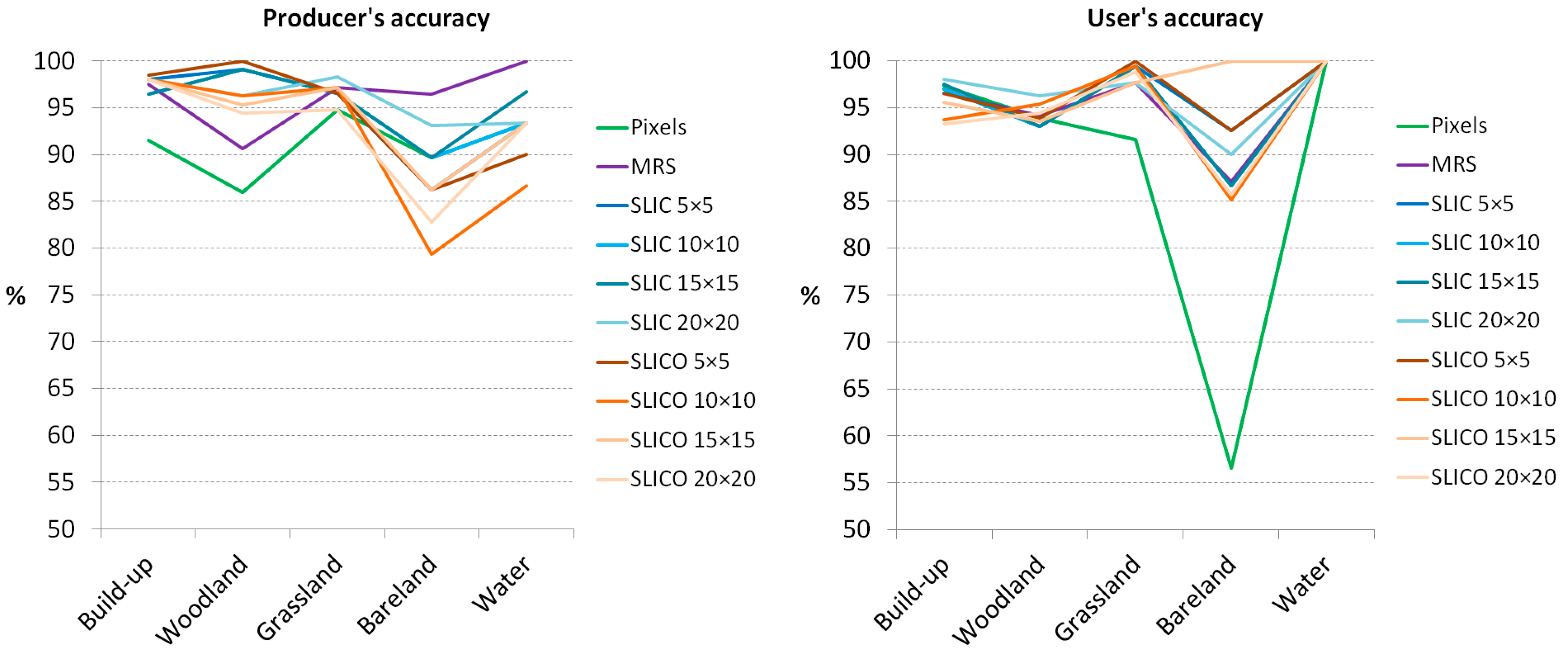

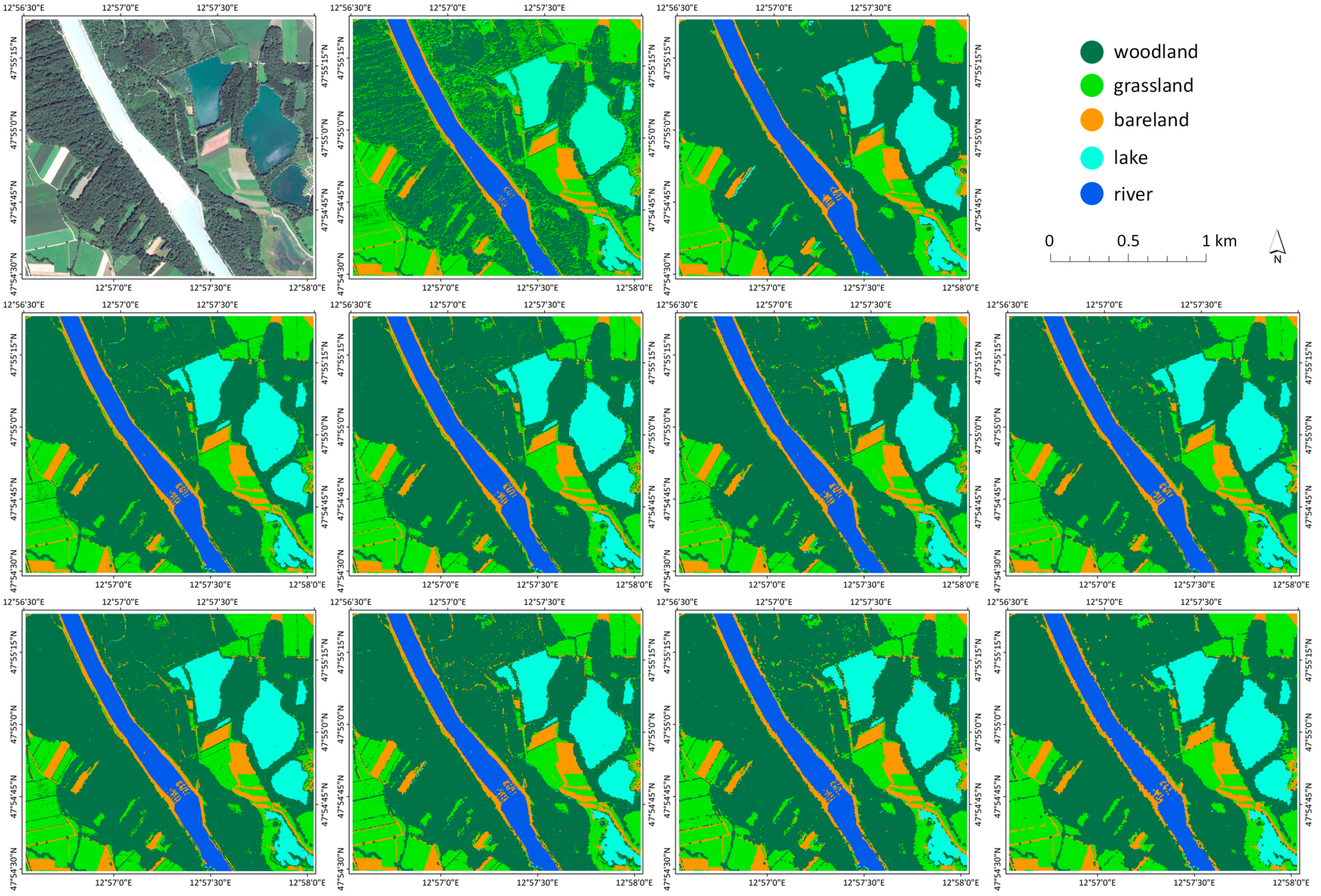

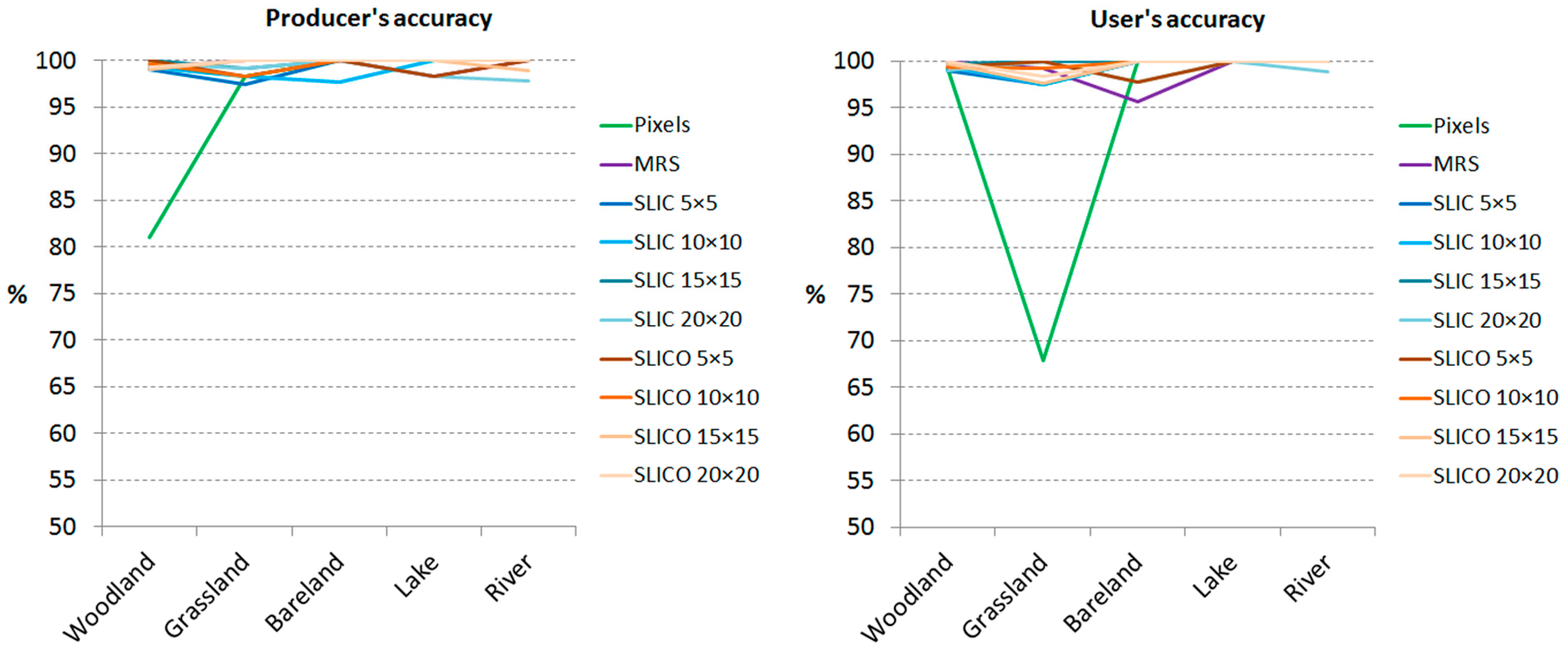

3.3. Pixel-Based, Superpixel-Based and MRS-Based RF Classification Results

4. Discussion

5. Conclusions

Acknowledgments

Conflicts of Interest

References

- Hay, G.J.; Castilla, G. Geographic object-based image analysis (geobia): A new name for a new discipline. In Object-Based Image Analysis; Blaschke, T., Lang, S., Hay, G., Eds.; Springer: Berlin/Heidelberg, Germany, 2008; pp. 75–89.

- Blaschke, T. Object based image analysis for remote sensing. ISPRS J. Photogramm. Remote Sens. 2010, 65, 2–16.

- Cheng, G.; Han, J. A survey on object detection in optical remote sensing images. ISPRS J. Photogramm. Remote Sens. 2016, 117, 11–28.

- Neubert, M.; Herold, H.; Meinel, G. Evaluation of remote sensing image segmentation quality—Further results and concepts. In Proceedings of the International Conference on Object-Based Image Analysis (ICOIA), Salzburg University, Salzburg, Austria, 4–5 July 2006.

- Arvor, D.; Durieux, L.; Andrés, S.; Laporte, M.-A. Advances in geographic object-based image analysis with ontologies: A review of main contributions and limitations from a remote sensing perspective. ISPRS J. Photogramm. Remote Sens. 2013, 82, 125–137.

- Drăguţ, L.; Csillik, O.; Eisank, C.; Tiede, D. Automated parameterisation for multi-scale image segmentation on multiple layers. ISPRS J. Photogramm. Remote Sens. 2014, 88, 119–127.

- Benz, U.C.; Hofmann, P.; Willhauck, G.; Lingenfelder, I.; Heynen, M. Multi-resolution, object-oriented fuzzy analysis of remote sensing data for gis-ready information. ISPRS J. Photogramm. Remote Sens. 2004, 58, 239–258.

- Liu, D.; Xia, F. Assessing object-based classification: Advantages and limitations. Remote Sens. Lett. 2010, 1, 187–194.

- Whiteside, T.G.; Boggs, G.S.; Maier, S.W. Comparing object-based and pixel-based classifications for mapping savannas. Int. J. Appl. Earth Obs. Geoinf. 2011, 13, 884–893.

- Ouyang, Z.-T.; Zhang, M.-Q.; Xie, X.; Shen, Q.; Guo, H.-Q.; Zhao, B. A comparison of pixel-based and object-oriented approaches to vhr imagery for mapping saltmarsh plants. Ecol. Inf. 2011, 6, 136–146.

- Im, J.; Jensen, J.R.; Tullis, J.A. Object-based change detection using correlation image analysis and image segmentation. Int. J. Remote Sens. 2008, 29, 399–423.

- Chen, G.; Hay, G.J.; Carvalho, L.M.T.; Wulder, M.A. Object-based change detection. Int. J. Remote Sens. 2012, 33, 4434–4457.

- Zhou, W.; Troy, A.; Grove, M. Object-based land cover classification and change analysis in the baltimore metropolitan area using multitemporal high resolution remote sensing data. Sensors 2008, 8, 1613–1636.

- Baatz, M.; Schäpe, A. Multiresolution segmentation: An optimization approach for high quality multi-scale image segmentation. In Angewandte Geographische Informationsverarbeitung; Strobl, J., Blaschke, T., Griesebner, G., Eds.; Wichmann-Verlag: Heidelberg, Germany, 2000; Volume 12, pp. 12–23.

- Fisher, P. The pixel: A snare and a delusion. Int. J. Remote Sens. 1997, 18, 679–685.

- Neubert, P.; Protzel, P. Superpixel benchmark and comparison. In Forum Bildverarbeitung 2012; Karlsruher Instituts für Technologie (KIT) Scientific Publishing: Karlsruhe, Germany, 2012; pp. 1–12.

- Ren, X.; Malik, J. Learning a classification model for segmentation. In Proceedings of the Ninth IEEE International Conference on Computer Vision, Marseille, France, 13–16 October 2003; pp. 10–17.

- Li, Z.; Chen, J. Superpixel segmentation using linear spectral clustering. In Proceedings of the 2015 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Boston, MA, USA, 7–12 June 2015; pp. 1356–1363.

- Achanta, R.; Shaji, A.; Smith, K.; Lucchi, A.; Fua, P.; Süsstrunk, S. Slic superpixels compared to state-of-the-art superpixel methods. IEEE Trans. Pattern Anal. Mach. Intell. 2012, 34, 2274–2282.

- Guangyun, Z.; Xiuping, J.; Jiankun, H. Superpixel-based graphical model for remote sensing image mapping. IEEE Trans. Geosci. Remote Sens. 2015, 53, 5861–5871.

- Shi, C.; Wang, L. Incorporating spatial information in spectral unmixing: A review. Remote Sens. Environ. 2014, 149, 70–87.

- Van den Bergh, M.; Boix, X.; Roig, G.; de Capitani, B.; Van Gool, L. Seeds: Superpixels extracted via energy-driven sampling. In Proceedings of the European Conference on Computer Vision, Florence, Italy, 7–13 October 2012; Springer: Berlin/Heidelberg, Germany, 2012; pp. 13–26.

- Shi, J.; Malik, J. Normalized cuts and image segmentation. IEEE Trans. Pattern Anal. Mach. Intell. 2000, 22, 888–905.

- Felzenszwalb, P.F.; Huttenlocher, D.P. Efficient graph-based image segmentation. Int. J. Comput. Vis. 2004, 59, 167–181.

- Moore, A.P.; Prince, J.; Warrell, J.; Mohammed, U.; Jones, G. Superpixel lattices. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, Anchorage, AK, USA, 23–28 June 2008; pp. 1–8.

- Veksler, O.; Boykov, Y.; Mehrani, P. Superpixels and supervoxels in an energy optimization framework. In Proceedings of the eleventh European Conference on Computer Vision, Crete, Greece, 5–11 September 2010; pp. 211–224.

- Comaniciu, D.; Meer, P. Mean shift: A robust approach toward feature space analysis. IEEE Trans. Pattern Anal. Mach. Intell. 2002, 24, 603–619.

- Vedaldi, A.; Soatto, S. Quick shift and kernel methods for mode seeking. In Proceedings of the European Conference on Computer Vision, Marseille, France, 12–18 October 2008; pp. 705–718.

- Vincent, L.; Soille, P. Watersheds in digital spaces: An efficient algorithm based on immersion simulations. IEEE Trans. Pattern Anal. Mach. Intell. 1991, 13, 583–598.

- Levinshtein, A.; Stere, A.; Kutulakos, K.N.; Fleet, D.J.; Dickinson, S.J.; Siddiqi, K. Turbopixels: Fast superpixels using geometric flows. IEEE Trans. Pattern Anal. Mach. Intell. 2009, 31, 2290–2297.

- Fourie, C.; Schoepfer, E. Data transformation functions for expanded search spaces in geographic sample supervised segment generation. Remote Sens. 2014, 6, 3791–3821.

- Ma, L.; Du, B.; Chen, H.; Soomro, N.Q. Region-of-interest detection via superpixel-to-pixel saliency analysis for remote sensing image. IEEE Geosci. Remote Sens. Lett. 2016, 13, 1752–1756.

- Arisoy, S.; Kayabol, K. Mixture-based superpixel segmentation and classification of sar images. IEEE Geosci. Remote Sens. Lett. 2016, 13, 1721–1725.

- Guo, J.; Zhou, X.; Li, J.; Plaza, A.; Prasad, S. Superpixel-based active learning and online feature importance learning for hyperspectral image analysis. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2017, 10, 347–359.

- Li, S.; Lu, T.; Fang, L.; Jia, X.; Benediktsson, J.A. Probabilistic fusion of pixel-level and superpixel-level hyperspectral image classification. IEEE Trans. Geosci. Remote Sens. 2016, 54, 7416–7430.

- Ortiz Toro, C.; Gonzalo Martín, C.; García Pedrero, Á.; Menasalvas Ruiz, E. Superpixel-based roughness measure for multispectral satellite image segmentation. Remote Sens. 2015, 7, 14620–14645.

- Vargas, J.; Falcao, A.; dos Santos, J.; Esquerdo, J.; Coutinho, A.; Antunes, J. Contextual superpixel description for remote sensing image classification. In Proceedings of the 2015 IEEE International Geoscience and Remote Sensing Symposium (IGARSS), Milan, Italy, 26–31 July 2015; pp. 1132–1135.

- Garcia-Pedrero, A.; Gonzalo-Martin, C.; Fonseca-Luengo, D.; Lillo-Saavedra, M. A geobia methodology for fragmented agricultural landscapes. Remote Sens. 2015, 7, 767–787.

- Stefanski, J.; Mack, B.; Waske, B. Optimization of object-based image analysis with random forests for land cover mapping. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2013, 6, 2492–2504.

- Chen, J.; Dowman, I.; Li, S.; Li, Z.; Madden, M.; Mills, J.; Paparoditis, N.; Rottensteiner, F.; Sester, M.; Toth, C.; et al. Information from imagery: Isprs scientific vision and research agenda. ISPRS J. Photogramm. Remote Sens. 2016, 115, 3–21.

- Tiede, D.; Lang, S.; Füreder, P.; Hölbling, D.; Hoffmann, C.; Zeil, P. Automated damage indication for rapid geospatial reporting. Photogramm. Eng. Remote Sens. 2011, 77, 933–942.

- Voigt, S.; Kemper, T.; Riedlinger, T.; Kiefl, R.; Scholte, K.; Mehl, H. Satellite image analysis for disaster and crisis-management support. IEEE Trans. Geosci. Remote Sens. 2007, 45, 1520–1528.

- Lang, S.; Tiede, D.; Hölbling, D.; Füreder, P.; Zeil, P. Earth observation (eo)-based ex post assessment of internally displaced person (idp) camp evolution and population dynamics in zam zam, darfur. Int. J. Remote Sens. 2010, 31, 5709–5731.

- Strasser, T.; Lang, S. Object-based class modelling for multi-scale riparian forest habitat mapping. Int. J. Appl. Earth Obs. Geoinf. 2015, 37, 29–37.

- Achanta, R.; Shaji, A.; Smith, K.; Lucchi, A.; Fua, P.; Süsstrunk, S. Slic Superpixels; EPFL Technical Report 149300; School of Computer and Communication Sciences, Ecole Polytechnique Fédérale de Lausanne: Lausanne, Switzerland, 2010; pp. 1–15.

- Csillik, O. Superpixels: The end of pixels in obia. A comparison of state-of-the-art superpixel methods for remote sensing data. In Proceedings of the GEOBIA 2016: Solutions and Synergies, Enschede, The Netherlands, 14–16 September 2016; Kerle, N., Gerke, M., Lefevre, S., Eds.; University of Twente Faculty of Geo-Information and Earth Observation (ITC): Enschede, The Netherlands, 2016.

- Drăguţ, L.; Tiede, D.; Levick, S. Esp: A tool to estimate scale parameters for multiresolution image segmentation of remotely sensed data. Int. J. Geogr. Inf. Sci. 2010, 24, 859–871.

- Kim, M.; Madden, M. Determination of optimal scale parameter for alliance-level forest classification of multispectral ikonos images. In Proceedings of the 1st International Conference on Object-based Image Analysis, Salzburg, Austria, 4–5 July 2006; Available online: http://www.isprs.org/proceedings/xxxvi/4-c42/papers/OBIA2006_Kim_Madden.pdf (accessed on 19 December 2016).

- Zhang, Y.J. A survey on evaluation methods for image segmentation. Pattern Recognit. 1996, 29, 1335–1346.

- Eisank, C.; Smith, M.; Hillier, J. Assessment of multiresolution segmentation for delimiting drumlins in digital elevation models. Geomorphology 2014, 214, 452–464.

- Clinton, N.; Holt, A.; Scarborough, J.; Yan, L.I.; Gong, P. Accuracy assessment measures for object-based image segmentation goodness. Photogramm. Eng. Remote Sens. 2010, 76, 289–299.

- Lucieer, A.; Stein, A. Existential uncertainty of spatial objects segmented from satellite sensor imagery. IEEE Trans. Geosci. Remote Sens. 2002, 40, 2518–2521.

- Winter, S. Location similarity of regions. ISPRS J. Photogramm. Remote Sens. 2000, 55, 189–200.

- Breiman, L. Random forests. Mach. Learn. 2001, 45, 5–32.

- Belgiu, M.; Drăguţ, L. Random forest in remote sensing: A review of applications and future directions. ISPRS J. Photogramm. Remote Sens. 2016, 114, 24–31.

- Trimble. eCognition Reference Book; Trimble Germany GmbH: München, Germany, 2012.

- Haralick, R.M.; Shanmugam, K.; Dinstein, I. Textural features for image classification. IEEE Trans. Syst. Man Cybern. 1973, SMC-3, 610–621.

- Liaw, A.; Wiener, M. Classification and regression by randomforest. R News 2002, 2, 18–22.

- R Development Core Team. R: A Language and Environment for Statistical Computing; The R Foundation for Statistical Computing: Vienna, Austria, 2013.

- Liu, C.; Frazier, P.; Kumar, L. Comparative assessment of the measures of thematic classification accuracy. Remote Sens. Environ. 2007, 107, 606–616.

- Congalton, R.G. A review of assessing the accuracy of classifications of remotely sensed data. Remote Sens. Environ. 1991, 37, 35–46.

- Cohen, J. A coefficient of agreement for nominal scales. Educ. Psychol. Meas. 1960, 20, 37–46.

- Congalton, R.G.; Green, K. Assessing the Accuracy of Remotely Sensed Data: Principles and Practices; CRC: Boca Raton, FL, USA, 2009.

- Gao, Y.; Mas, J.F.; Kerle, N.; Navarrete Pacheco, J.A. Optimal region growing segmentation and its effect on classification accuracy. Int. J. Remote Sens. 2011, 32, 3747–3763.

- Belgiu, M.; Drăguţ, L. Comparing supervised and unsupervised multiresolution segmentation approaches for extracting buildings from very high resolution imagery. ISPRS J. Photogramm. Remote Sens. 2014, 96, 67–75.

- Pantofaru, C.; Schmid, C.; Hebert, M. Object recognition by integrating multiple image segmentations. In Computer Vision–ECCV 2008; Proceedings of the 10th European Conference on Computer Vision, Marseille, France, 12–18 October 2008; Springer: Berlin/Heidelberg, Germany, 2008; pp. 481–494.

- Li, Y.; Sun, J.; Tang, C.-K.; Shum, H.-Y. Lazy snapping. ACM Trans. Graph. (ToG) 2004, 23, 303–308.

- Zitnick, C.L.; Kang, S.B. Stereo for image-based rendering using image over-segmentation. Int. J. Comput. Vis. 2007, 75, 49–65.

- Fulkerson, B.; Vedaldi, A.; Soatto, S. Class segmentation and object localization with superpixel neighborhoods. In Proceedings of the 2009 IEEE 12th International Conference on Computer Vision, Kyoto, Japan, 27 September–4 October 2009; pp. 670–677.

- Lucchi, A.; Smith, K.; Achanta, R.; Lepetit, V.; Fua, P. A fully automated approach to segmentation of irregularly shaped cellular structures in em images. In Medical Image Computing and Computer-Assisted Intervention–Miccai 2010; Springer: Berlin/Heidelberg, Germany, 2010; pp. 463–471.

- Saxena, A.; Sun, M.; Ng, A.Y. Make3d: Learning 3d scene structure from a single still image. IEEE Trans. Pattern Anal. Mach. Intell. 2009, 31, 824–840.

- Galasso, F.; Cipolla, R.; Schiele, B. Video segmentation with superpixels. In Computer Vision–ACCV 2012; 11th Asian Conference on Computer Vision, Daejeon, Korea, 5–9 November 2012; Springer: Berlin/Heidelberg, Germany, 2012; pp. 760–774.

| Test | Imagery | Spatial Resolution (m) | Number of Bands | Extent (pixels) | Length × Width (pixels) | Location |
|---|---|---|---|---|---|---|
| T1 | QuickBird | 0.6 | 4 | 1,403,574 | 1347 × 1042 | City of Salzburg, Austria |
| T2 | QuickBird | 0.6 | 4 | 12,696,684 | 4004 × 3171 | City of Salzburg, Austria |
| T3 | WorldView-2 | 0.5 | 8 | 12,217,001 | 3701 × 3301 | 10 km north of the city of Salzburg |

| Measure | Equation | Domain | Ideal Value | Authors |
|---|---|---|---|---|
| Over-segmentation (OS) | | [0, 1] | 0 | Clinton et al. [51] |
| Under-segmentation (US) | | [0, 1] | 0 | Clinton et al. [51] |
| Area fit index (AFI) | | Over-segmentation: AFI > 0; under-segmentation: AFI < 0 | 0 | Lucieer and Stein [52] |
| Root mean square (D) | | [0, 1] | 0 | Clinton et al. [51] |
| Quality rate (QR) | | [0, 1] | 1 | Winter [53] |
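The measures listed above can be sketched in a few lines of Python. This is a minimal sketch, assuming the area-based definitions usually associated with the cited authors, where x is a reference object and y the matching segment: OS = 1 − area(x ∩ y)/area(x), US = 1 − area(x ∩ y)/area(y), AFI = (area(x) − area(y))/area(x), D = sqrt((OS² + US²)/2) and QR = area(x ∩ y)/area(x ∪ y). These formulas, the function name `segmentation_metrics` and the set-of-pixels representation are assumptions made for illustration; they agree with the domains and ideal values in the table, and the D form reproduces the reported results (for T1 with pixels, OS = 0.560 and US = 0.121 give D ≈ 0.405).

```python
from math import sqrt


def segmentation_metrics(reference, segment):
    """Compare one reference object with one segment.

    Both arguments are sets of (row, col) pixel coordinates.
    The formulas are assumed, not taken from the source table.
    """
    area_ref = len(reference)
    area_seg = len(segment)
    if area_ref == 0 or area_seg == 0:
        raise ValueError("reference and segment must be non-empty")

    overlap = len(reference & segment)
    union = len(reference | segment)

    over = 1 - overlap / area_ref    # OS: 0 is ideal
    under = 1 - overlap / area_seg   # US: 0 is ideal
    return {
        "OS": over,
        "US": under,
        # AFI > 0 indicates over-segmentation, AFI < 0 under-segmentation
        "AFI": (area_ref - area_seg) / area_ref,
        # D: root mean square of OS and US, 0 is ideal
        "D": sqrt((over ** 2 + under ** 2) / 2),
        # QR: intersection over union, 1 is ideal
        "QR": overlap / union,
    }


# Toy data: a 4 x 4 reference square and a 4 x 3 segment lying inside it.
reference = {(r, c) for r in range(4) for c in range(4)}
segment = {(r, c) for r in range(4) for c in range(3)}
metrics = segmentation_metrics(reference, segment)
```

In this toy case the segment covers three quarters of the reference object, so OS and AFI are both 0.25, US is 0, and QR is 0.75.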

| T2 (QuickBird) Class | Training | Validation | T3 (WorldView-2) Class | Training | Validation |
|---|---|---|---|---|---|
| Built-up area | 199 | 199 | Woodland | 297 | 296 |
| Woodland | 107 | 107 | Grassland | 121 | 120 |
| Grassland | 174 | 173 | Bareland | 44 | 44 |
| Bareland | 30 | 29 | Lake | 59 | 58 |
| Water | 30 | 30 | River | 93 | 93 |
| Total | 540 | 538 | Total | 614 | 611 |

| Type | Variable | Definition [56] |
|---|---|---|
| Spectral | Mean band x | The mean intensity value of an object/pixel in layer x |
| Spectral | Standard deviation band x | The standard deviation of an object/pixel in band x |
| Spectral | Brightness | The mean value of all the layers used for RF |
| Spectral | NDVI | Normalized Difference Vegetation Index |
| Texture | GLCM standard deviation | Gray level co-occurrence matrix (GLCM) [57] |
| Texture | GLCM homogeneity | Gray level co-occurrence matrix (GLCM) [57] |
| Texture | GLCM correlation | Gray level co-occurrence matrix (GLCM) [57] |
| Shape | Border index | The ratio between the border lengths of the object and the smallest enclosing rectangle |
| Shape | Compactness | The product of the length and width, divided by the number of pixels |
| Shape | Area | The area (in pixels) of an object |
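The spectral variables in the table can be illustrated with a short Python sketch. It assumes a four-band pixel tuple ordered blue, green, red, near-infrared (a common order for QuickBird multispectral data), the population standard deviation, and NDVI computed from object means as (NIR − red)/(NIR + red). The function name `object_features`, the band indices and the sample values are hypothetical and are not taken from the source.

```python
from statistics import mean, pstdev


def object_features(pixels, red=2, nir=3):
    """Spectral features for one object (segment, superpixel or single pixel).

    pixels: list of per-pixel band tuples, all of the same length.
    red, nir: assumed indices of the red and near-infrared bands.
    """
    if not pixels:
        raise ValueError("an object needs at least one pixel")

    bands = list(zip(*pixels))                # regroup values band by band
    band_means = [mean(b) for b in bands]     # "Mean band x"
    band_stds = [pstdev(b) for b in bands]    # "Standard deviation band x"
    brightness = mean(band_means)             # mean over all layers used

    denominator = band_means[nir] + band_means[red]
    ndvi = (band_means[nir] - band_means[red]) / denominator if denominator else 0.0

    return {
        "mean": band_means,
        "std": band_stds,
        "brightness": brightness,
        "ndvi": ndvi,
        "area": len(pixels),                  # area in pixels
    }


# Hypothetical two-pixel object with (blue, green, red, NIR) values.
features = object_features([(10, 20, 30, 90), (14, 24, 30, 110)])
```

For a single pixel the standard deviation is zero and the area is one, which is why the same variables can be computed for pixels and for objects.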

| Test | Input | Number (pixels/superpixels) | SP | Number of Objects | AFI | OS | US | D | QR | Time |
|---|---|---|---|---|---|---|---|---|---|---|
| T1 | Pixels | 1,403,574 | 69 | 3,109 | 0.499 | 0.560 | 0.121 | 0.405 | 0.414 | 1 min 29 s |
| T1 | SLIC | 13,835 | 81 | 2,017 | 0.388 | 0.463 | 0.122 | 0.338 | 0.499 | 27 s |
| T1 | SLICO | 13,906 | 61 | 2,757 | 0.447 | 0.515 | 0.122 | 0.374 | 0.454 | 24 s |
| T2 | Pixels | 12,696,684 | 172 | 4,670 | 0.174 | 0.229 | 0.067 | 0.169 | 0.729 | 2 h 42 min 40 s |
| T2 | SLIC | 123,153 | 173 | 4,204 | 0.088 | 0.161 | 0.079 | 0.127 | 0.782 | 13 min 02 s |
| T2 | SLICO | 125,842 | 148 | 5,354 | 0.335 | 0.386 | 0.075 | 0.278 | 0.584 | 9 min 49 s |
| T3 | Pixels | 12,217,001 | 220 | 1,632 | 0.100 | 0.148 | 0.052 | 0.111 | 0.813 | 5 h 35 min 24 s |
| T3 | SLIC | 131,415 | 212 | 1,702 | 0.062 | 0.124 | 0.066 | 0.099 | 0.823 | 13 min 03 s |
| T3 | SLICO | 121,525 | 172 | 2,338 | 0.223 | 0.275 | 0.066 | 0.200 | 0.688 | 10 min 46 s |

| Approach | T2 (QuickBird) OA (%) | T2 (QuickBird) Kappa | T3 (WorldView-2) OA (%) | T3 (WorldView-2) Kappa |
|---|---|---|---|---|
| Pixels | 91.45 | 0.881 | 90.51 | 0.867 |
| MRS | 96.09 | 0.945 | 99.51 | 0.993 |
| SLIC 5 × 5 | 96.84 | 0.956 | 99.02 | 0.956 |
| SLIC 10 × 10 | 96.47 | 0.951 | 99.18 | 0.988 |
| SLIC 15 × 15 | 96.65 | 0.953 | 99.84 | 0.998 |
| SLIC 20 × 20 | 97.21 | 0.961 | 99.18 | 0.998 |
| SLICO 5 × 5 | 97.03 | 0.958 | 99.51 | 0.988 |
| SLICO 10 × 10 | 95.72 | 0.940 | 99.51 | 0.993 |
| SLICO 15 × 15 | 96.28 | 0.948 | 99.35 | 0.991 |
| SLICO 20 × 20 | 95.17 | 0.932 | 99.67 | 0.995 |
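Overall accuracy (OA) and the kappa coefficient, as reported above, are both derived from a confusion matrix. The following is a minimal sketch of that calculation using the standard definition of Cohen's kappa, (p_o − p_e)/(1 − p_e). The function name `overall_accuracy_and_kappa` and the 2 × 2 matrix are illustrative assumptions, not data from the study.

```python
def overall_accuracy_and_kappa(matrix):
    """Return (overall accuracy, Cohen's kappa) for a square confusion matrix.

    matrix[i][j] counts validation samples of reference class i
    that were assigned to class j.
    """
    total = sum(sum(row) for row in matrix)
    if total == 0:
        raise ValueError("confusion matrix is empty")

    # Observed agreement: share of samples on the diagonal.
    observed = sum(matrix[i][i] for i in range(len(matrix))) / total

    # Chance agreement from the row and column marginals.
    row_totals = [sum(row) for row in matrix]
    col_totals = [sum(col) for col in zip(*matrix)]
    expected = sum(r * c for r, c in zip(row_totals, col_totals)) / total ** 2

    if expected == 1:
        raise ValueError("kappa is undefined when chance agreement equals 1")
    kappa = (observed - expected) / (1 - expected)
    return observed, kappa


# Hypothetical two-class confusion matrix with 100 validation samples.
oa, kappa = overall_accuracy_and_kappa([[45, 5], [10, 40]])
```

Here 85 of 100 samples lie on the diagonal, so OA is 0.85; chance agreement is 0.5, which gives a kappa of 0.7.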

© 2017 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Csillik, O. Fast Segmentation and Classification of Very High Resolution Remote Sensing Data Using SLIC Superpixels. Remote Sens. 2017, 9, 243. https://doi.org/10.3390/rs9030243
