1. Introduction

Crop residues, or plant litter, are the portions of a crop left in the field after harvest. Management of crop residues is an integral part of most conservation tillage systems. Crop residues on the soil surface provide a protective barrier against water and wind erosion and reduce the amounts of soil, nutrients, and pesticides that reach streams and rivers [1,2]. Crop residues also contribute to soil organic matter, which improves soil quality and sequesters carbon. Long-term use of conservation tillage practices that leave crop residues undisturbed following harvest can lead to increased soil organic matter, improved soil structure, and increased aggregation compared with intensively tilled soils [3]. The overall result is less soil erosion and improved soil and water quality. However, soil tillage and crop residue harvesting for feed, fiber, or bio-energy are management practices that reduce crop residue mass and cover.

The erosion protection afforded by crop residues on the soil surface diminishes as they decompose and lose both mass and cover. The decay processes in natural ecosystems have been described as a continuum beginning with fresh plant litter and leading to formation of refractory soil organic matter [4]. The rates of mass loss have been related to litter quality (litter type and chemistry), environmental conditions (temperature, moisture, and soil type), and biotic activity (microbial and faunal) [5]. In many conservation tillage systems, the decay continuum described for natural ecosystems [4] is also evident, as fresh crop residues are added at each harvest on top of partially decomposed crop residues from previous crops. In contrast, most of the crop residue in intensively tilled systems is incorporated into the soil and decomposes rapidly. Managing crop residue and predicting its decomposition over time are critical for controlling water and wind erosion and sequestering carbon in soils. Methods for describing the rates of crop residue decomposition often normalize weather data to optimal temperature and moisture conditions and then accumulate the temperature and moisture factors, e.g., [5,6,7,8].

The Conservation Technology Information Center (CTIC) has defined three categories of tillage and planting systems based on crop residue cover after planting: intensive tillage has <15% residue cover; reduced tillage has 15–30% residue cover; and conservation tillage has >30% residue cover [9]. Quantification of crop residue cover is required to evaluate the effectiveness and extent of conservation tillage practices, as well as the extent of bio-fuel harvesting. The standard technique for measuring mean crop residue cover in fields is the line-point transect method [10,11]. Regional assessments of conservation tillage practices based on annual roadside surveys of crop residue levels after planting are compiled for selected counties [9]. However, these surveys are subjective and the techniques vary from county to county [12,13]. No program exists for objectively monitoring crop residue cover and tillage intensity over broad areas.

Traditional remote sensing approaches for identifying crops and estimating crop yields [14,15] have had mixed success when applied to crop residues because crop residues and soils are spectrally similar and often differ only in amplitude in the visible and near infrared wavelengths [12,13,16,17,18]. Nevertheless, numerous crop residue/soil tillage indices have been reported that use various combinations of the Landsat Thematic Mapper (TM) bands [16,18,19,20,21,22]. These indices are based on relative differences in broad band reflectance for soils and crop residues.

An alternative approach is based on detecting the absorption features in the 1,500–2,500 nm wavelength region that are overtones and combinations of the fundamental molecular vibrations occurring in the 2.5–25 μm region [23]. In plants, three relatively narrow absorption features, centered near 1,730, 2,100, and 2,300 nm, are primarily associated with nitrogen (in proteins), cellulose, and lignin concentrations [24,25,26]. These features are not readily discernible in the spectra of fresh vegetation [26] or wet crop residues [27], but are evident in reflectance spectra of dry plant litter [24] and crop residues [17,28]. Reflectance spectra of dry soils also lack these absorption features, but may have additional absorption features associated with minerals [17,18,27,28].

Cellulose, lignin, and other structural polysaccharides (e.g., hemicellulose) are intertwined in plant cell walls and their spectra have overlapping absorptions in both the 2,100 nm and 2,300 nm regions [26]. Continuum removal, or baseline normalization, is a spectroscopic analysis technique that estimates the reflectance spectrum as it would be without the absorption due to the compound of interest [25,26]. The band center is the wavelength of the minimum value in the continuum-removed reflectance spectrum, and its position and depth shift as the relative proportions of structural polysaccharides change [26]. The dynamic range of the continuum-removed reflectance spectra for dry crop residues on diverse soils was greater for the absorption feature near 2,100 nm than for the 2,300 nm feature [27]. The cellulose absorption index (CAI) approximated the band center depth of the 2,100 nm feature using only three narrow spectral bands: two on the shoulders and one near the center [17]. Both continuum-removed reflectance variables and CAI provided better discrimination of crop residues than reflectance factors because crop residues can be darker or brighter than the soils [27]. Crop residue cover was linearly related to CAI using ground-based [17,29], aircraft [28,30], and satellite [31] hyperspectral sensors. These relatively narrow spectral features are not detected by broad band multispectral sensors, e.g., Landsat TM.
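The three-band idea behind CAI can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: the band centers (2,030, 2,100, and 2,210 nm) and the scale factor of 100 follow a commonly published form of the index and are assumptions here, since this section states only that two bands sit on the shoulders and one near the center of the feature. The reflectance values are hypothetical.

```python
def cellulose_absorption_index(r_2030, r_2100, r_2210):
    """Approximate the depth of the ~2,100 nm feature from three narrow bands.

    Inputs are reflectance factors (0-1). The band centers and the scale
    factor of 100 are assumptions, not values taken from this section.
    """
    shoulder_mean = 0.5 * (r_2030 + r_2210)  # straight-line continuum at the center
    return 100.0 * (shoulder_mean - r_2100)  # positive when the center is depressed


# Hypothetical reflectance values, for illustration only.
cai_residue = cellulose_absorption_index(0.40, 0.36, 0.42)  # absorption present
cai_soil = cellulose_absorption_index(0.30, 0.31, 0.32)     # featureless slope

print(f"residue-like CAI: {cai_residue:.2f}")
print(f"soil-like CAI:    {cai_soil:.2f}")
```

Because the index compares the center band with the mean of its shoulders, a uniformly darker or brighter target with no absorption feature gives a value near zero, which is why it can separate residues from soils regardless of overall brightness.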

As the chemical and physical properties of crop residues change during decomposition, the strength of their absorption features may also change, which could alter their reflectance spectra and affect the ability of remote sensing methods to assess crop residue cover. Our objectives were to measure and model the changes in wheat straw fiber composition and spectral reflectance during decomposition and to assess the impact of these changes on remotely sensed estimates of residue cover.

3. Results and Discussion

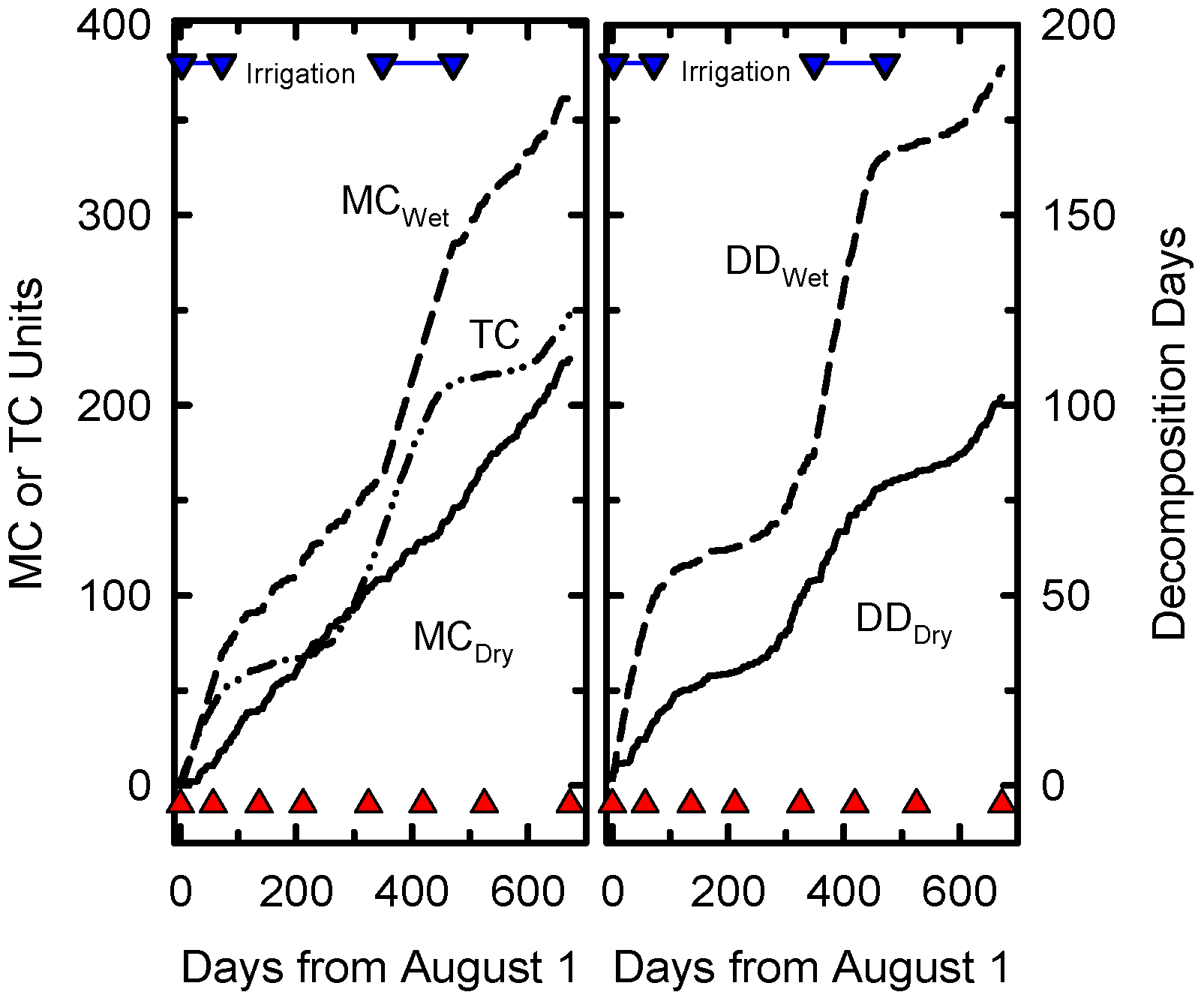

The accumulation of decomposition days at Beltsville was limited by moisture at some times of the year and temperature at others (Figure 1). Temperature was the limiting factor when supplemental irrigation was applied to the wet treatment. Supplemental irrigation was applied for only 181 of the 673 days of the experiment, but the irrigation treatment nearly doubled the cumulative DD compared to DD for normal precipitation.
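The accumulation described above can be sketched as follows. The sketch assumes that each day contributes the smaller of its temperature coefficient (TC) and moisture coefficient (MC), which is consistent with the statement that one factor or the other limits accumulation, but the exact rule is defined in the methods and is not shown in this section. The function name and the daily values are hypothetical.

```python
from itertools import accumulate


def cumulative_decomposition_days(temp_coeffs, moisture_coeffs):
    """Return the running total of decomposition days (DD).

    Each coefficient is a daily value in [0, 1]. Taking the minimum of the
    two as the daily increment is an assumption made for this sketch.
    """
    if len(temp_coeffs) != len(moisture_coeffs):
        raise ValueError("TC and MC series must have the same length")
    daily_dd = [min(tc, mc) for tc, mc in zip(temp_coeffs, moisture_coeffs)]
    return list(accumulate(daily_dd))


# Hypothetical four-day series: moisture limits day 1 and day 4,
# temperature limits day 2 and day 3.
tc = [1.0, 0.8, 0.2, 0.6]
mc = [0.3, 0.9, 1.0, 0.0]
cumulative_dd = cumulative_decomposition_days(tc, mc)
print([round(v, 2) for v in cumulative_dd])
```

Under this rule, irrigation raises MC on dry days, so the daily increment rises to whatever temperature allows, which matches the observation that temperature became the limiting factor in the wet treatment.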

Figure 1.

Cumulative daily Temperature Coefficients (TC), daily Moisture Coefficients (MC), and Decomposition Days (DD) for dry and wet treatments as functions of calendar days from the start of the experiment on August 1, 2006. The inverted blue triangles joined by a blue line indicate days when irrigation was applied. Solid red triangles indicate sampling dates.

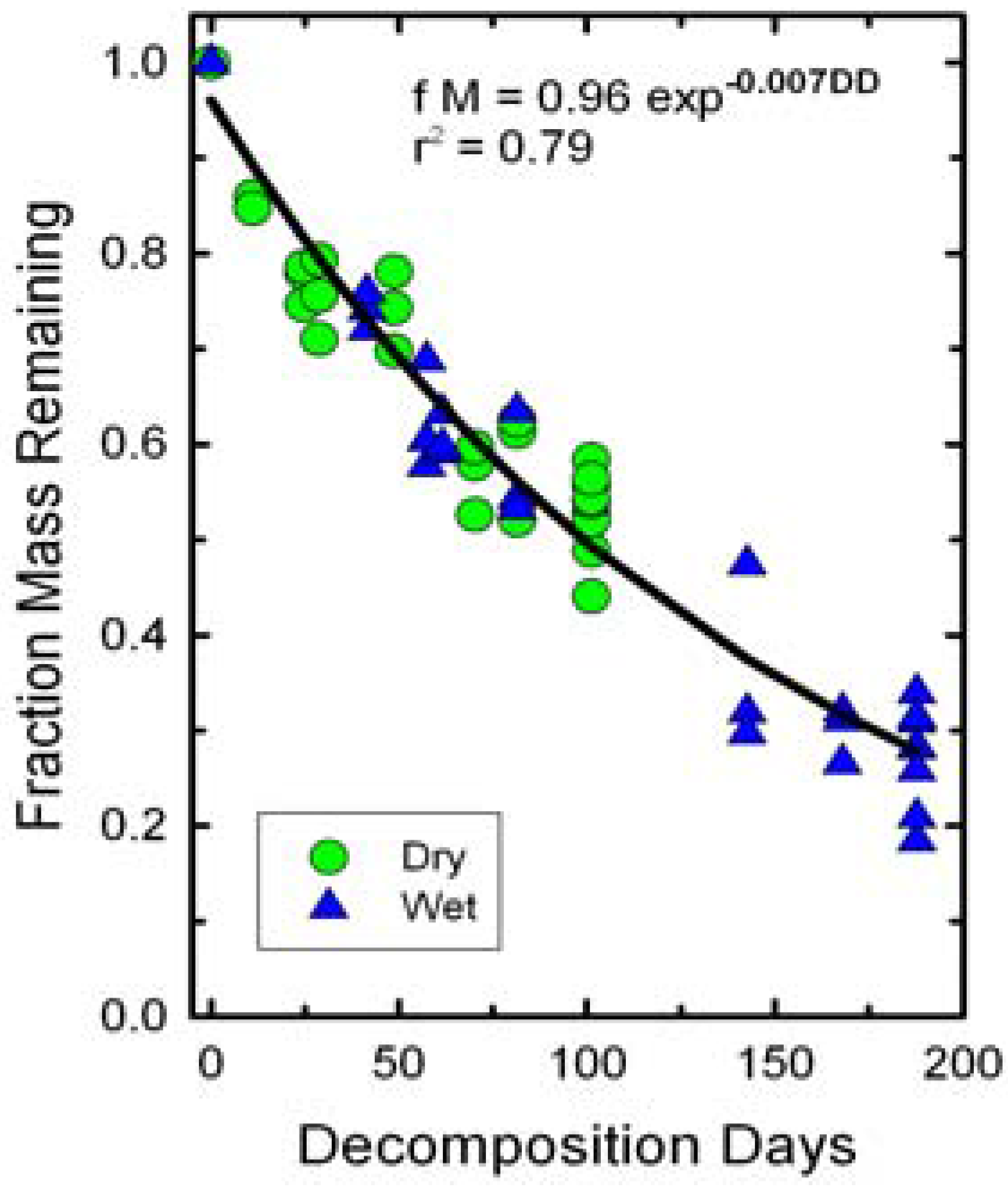

The fraction of dry mass remaining in the bags declined as a function of decomposition days (Figure 2). Irrigation did not significantly affect the slope of the decline in dry mass remaining because of the normalization of DD. Thus, both wet and dry treatments were fitted with a single line. The slope of the decline in biomass, or k value, was less than those reported for field observations of winter wheat residues [6,7,8].
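A single exponential decay in DD can be fitted with a short log-linear regression. The sketch below assumes the form fraction = exp(−k · DD) forced through (0, 1). The data are synthetic and generated from a known k, so they are not the measurements plotted in Figure 2. The last line derives an illustrative k from the statement elsewhere in this section that about 55% of the original dry mass was lost at DD = 79; that value is a back-of-envelope estimate, not the fitted coefficient.

```python
import math


def fit_decay_constant(dd, fraction_remaining):
    """Least-squares k for fraction = exp(-k * DD), forced through (0, 1).

    Linearizes to ln(fraction) = -k * DD and solves the no-intercept
    regression k = -sum(DD * ln f) / sum(DD^2).
    """
    numerator = sum(d * math.log(f) for d, f in zip(dd, fraction_remaining))
    denominator = sum(d * d for d in dd)
    if denominator == 0:
        raise ValueError("need at least one sample with DD > 0")
    return -numerator / denominator


# Synthetic, noise-free data generated from a known k (illustration only).
dd = [0, 20, 40, 79, 120, 165]
true_k = 0.01
fractions = [math.exp(-true_k * d) for d in dd]
k_fit = fit_decay_constant(dd, fractions)

# Illustrative k implied by "~55% of original dry mass lost at DD = 79".
k_implied = -math.log(1 - 0.55) / 79

print(f"recovered k = {k_fit:.4f} per DD")
print(f"implied k   = {k_implied:.4f} per DD")
```

With k near 0.010 per DD, the same curve gives roughly 19% of the mass remaining at DD = 165, which agrees with the statement that the oldest residues had lost more than 70% of their original mass.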

Figure 2.

Changes in initial wheat straw mass as a function of decomposition days (DD). A single exponential decay function fit both dry and wet treatments.

Three factors probably contributed to the lower decomposition rates observed in this study. First, the residue layer in the mesh bags was quite thick (~10 cm initially) and had limited contact with the soil, where conditions were most favorable for decomposition. Steiner et al. [8] reported the highest decomposition rates where a high proportion of the residue elements were in direct contact with the soil. Second, the soil in this study was a loamy sand with low soil organic matter content and low water holding capacity. Stroo et al. [6] showed that much higher decomposition rates for straw on silt loams than on sands were associated with physical factors controlling water movement and soil organic matter content that enhanced microbial populations. The clay loam used by Steiner et al. [8] has a higher water holding capacity than the loamy sand used in our study. Third, the generally lower decomposition rates for the litter bag approach have been attributed to exclusion of macro-organisms [4,5] and reduced movement by wind and water [7].

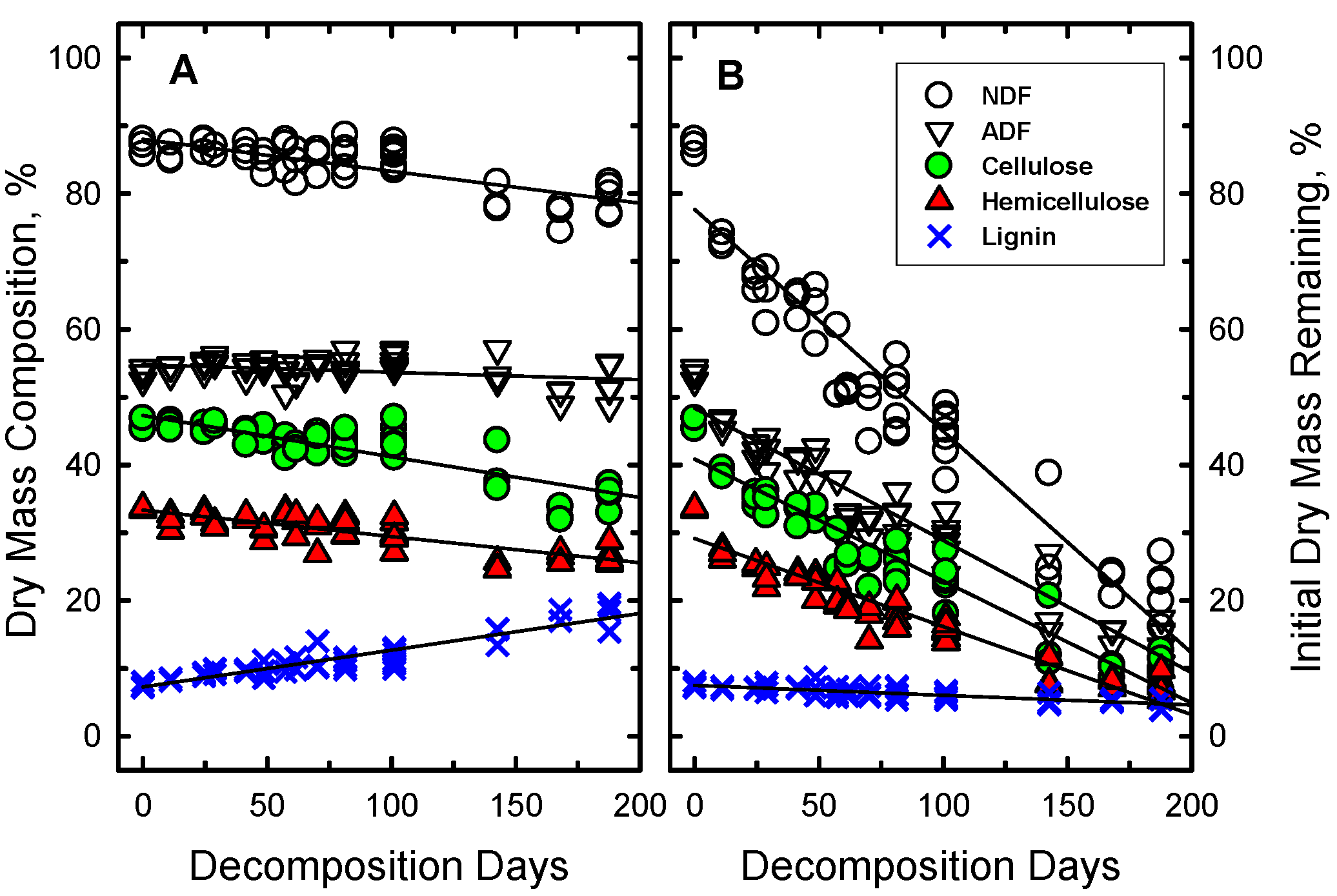

The wheat straw mass did not disappear uniformly (Figure 3). Some components of the straw disappeared more rapidly than others. The relative proportions of NDF, hemicellulose, and cellulose in the wheat straw declined significantly as a function of DD (Figure 3a), while the relative proportion of lignin in the straw significantly increased (more than doubled). However, when the proportion of initial mass of each component was calculated, all declined significantly except lignin, which remained unchanged (Figure 3b). Similar patterns of changes in fiber composition also occurred in the weathered outer layers of large round bales of hay stored outdoors [38] and in forest ecosystems during the early stage of litter decomposition, when environmental conditions were major controlling factors [5]. Mass loss due to degradation of cellulose and hemicellulose began rapidly in pine litter, but the first net loss of lignin mass occurred after 2 years [39].

Figure 3.

Changes in the (a) concentrations and (b) initial mass of the structural components of wheat straw as a function of decomposition days (DD). The root mean square error (RMSE) of the regression line for each component, except NDF, is smaller than the symbol size. For NDF, the RMSE is 1.3 times the symbol size.

The reflectance spectra of dry wheat straw at selected DD (Figure 4) illustrate the subtle changes in spectrum shape as the wheat straw decomposed. These spectra lack the chlorophyll and water absorptions that dominate the spectra of green leaves [24]. Structural components (cellulose, hemicellulose, and lignin) of the wheat straw dominate the spectrum of each sample at wavelengths >1,300 nm. The intensities of the cellulose and lignin absorption features near 1,710, 2,100, and 2,350 nm diminished as the wheat straw decomposed [24,25]. The relative intensity of the absorption feature near 2,100 nm, defined as the cellulose absorption index (CAI), is related to crop residue cover [17,28,31].

Figure 4.

Spectral reflectance of wheat straw at selected decomposition days (DD). The spectra are displaced vertically to avoid overlap. Reflectance at 1,300 nm is provided for each spectrum.

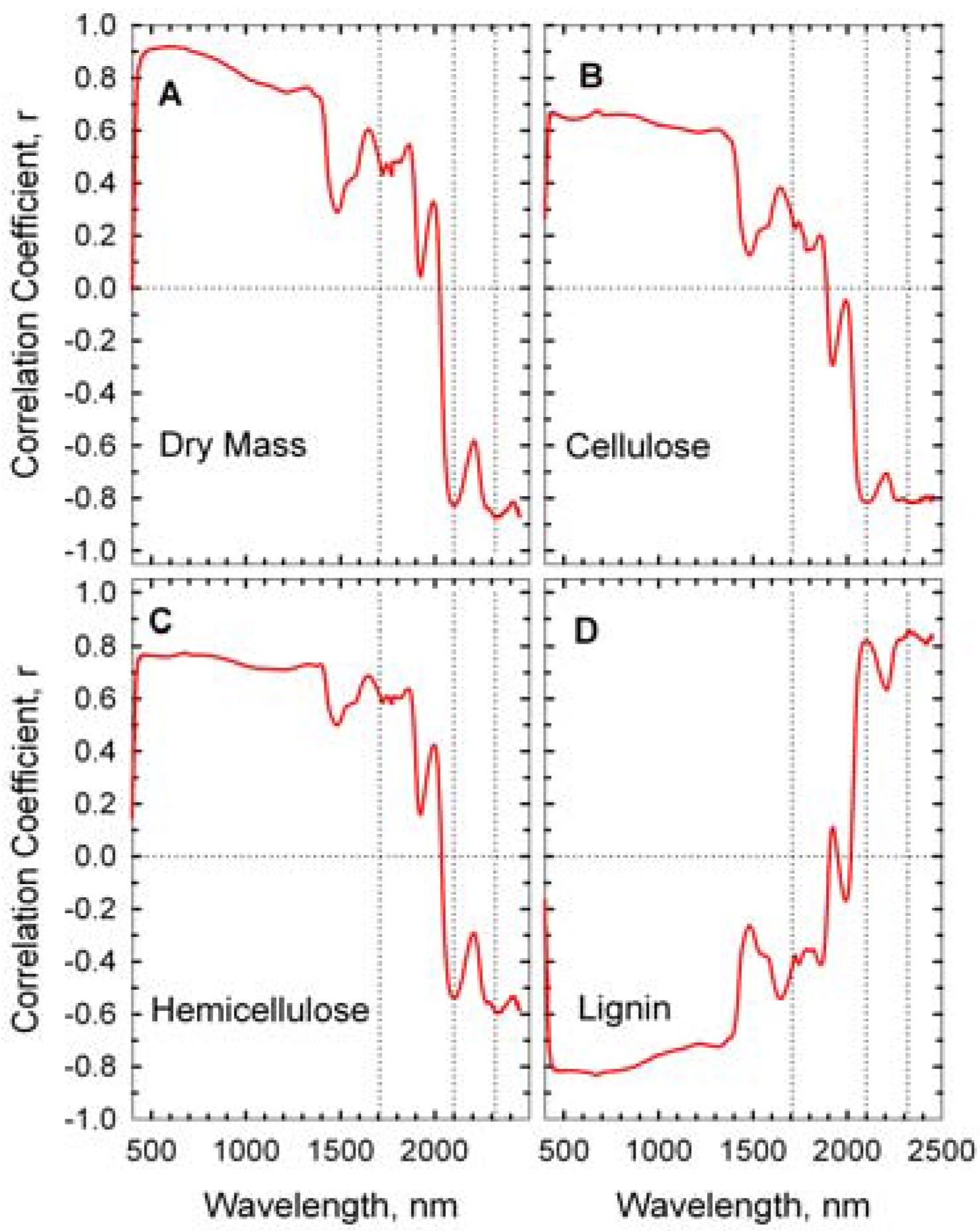

The correlations between wheat straw mass, its composition, and reflectance spectra are presented as correlograms in Figure 5. Reflectance values near 1,710, 2,100, and 2,350 nm were strongly correlated with the concentrations of hemicellulose, cellulose, and lignin [24,26]. Cellulose content of the wheat straw was negatively correlated with reflectance at 2,100 nm, while lignin content was positively correlated with reflectance. High correlations in the visible and near infrared wavelengths (400–1,300 nm) were probably associated with the overall decrease in straw reflectance (Figure 4) rather than with a specific fiber component.

Figure 5.

Correlograms of wheat straw components and reflectance spectra. Dotted vertical lines are at 1,710, 2,100, and 2,310 nm.

In order to assess the impact of crop residue decomposition on the detection of crop residue cover, the reflectance of mixed scenes with various proportions of crop residue and soils was simulated using linear combinations of reflectance factors (Equation 1).
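The linear combination can be sketched as a per-wavelength weighted average. This is a minimal sketch that assumes Equation 1 has the standard two-endmember form R_mix = fR · R_residue + (1 − fR) · R_soil; the equation itself is not reproduced in this section. The function name and the three-band spectra are hypothetical.

```python
def mix_reflectance(residue_spectrum, soil_spectrum, f_r):
    """Linear two-endmember mixture, band by band.

    f_r is the fraction of the scene covered by residue (0 = bare soil,
    1 = full residue cover). Both spectra must share the same bands.
    """
    if not 0.0 <= f_r <= 1.0:
        raise ValueError("f_r must lie between 0 and 1")
    if len(residue_spectrum) != len(soil_spectrum):
        raise ValueError("spectra must have the same number of bands")
    return [
        f_r * r + (1.0 - f_r) * s
        for r, s in zip(residue_spectrum, soil_spectrum)
    ]


# Hypothetical three-band spectra, for illustration only.
residue = [0.40, 0.36, 0.42]
soil = [0.30, 0.31, 0.32]

for f_r in (0.0, 0.3, 1.0):
    mixed = mix_reflectance(residue, soil, f_r)
    print(f_r, [round(v, 3) for v in mixed])
```

Because the mixture is linear in fR, any index that is itself linear in reflectance, such as a three-band difference, varies linearly with cover, whereas ratio-based indices do not in general.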

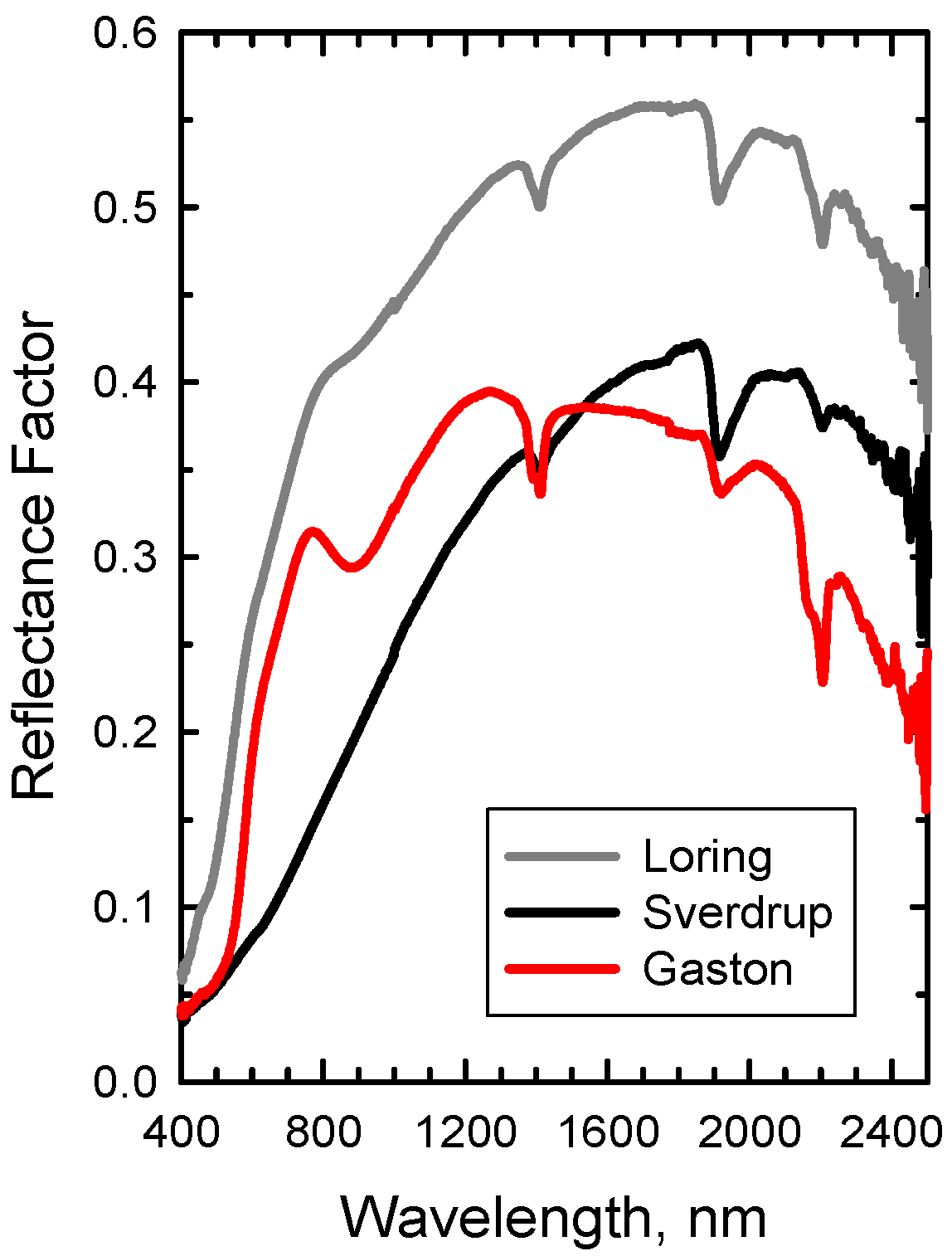

Figure 6.

Reflectance spectra of three diverse soils: Loring (Alfisol), Sverdrup (Mollisol), and Gaston (Ultisol).

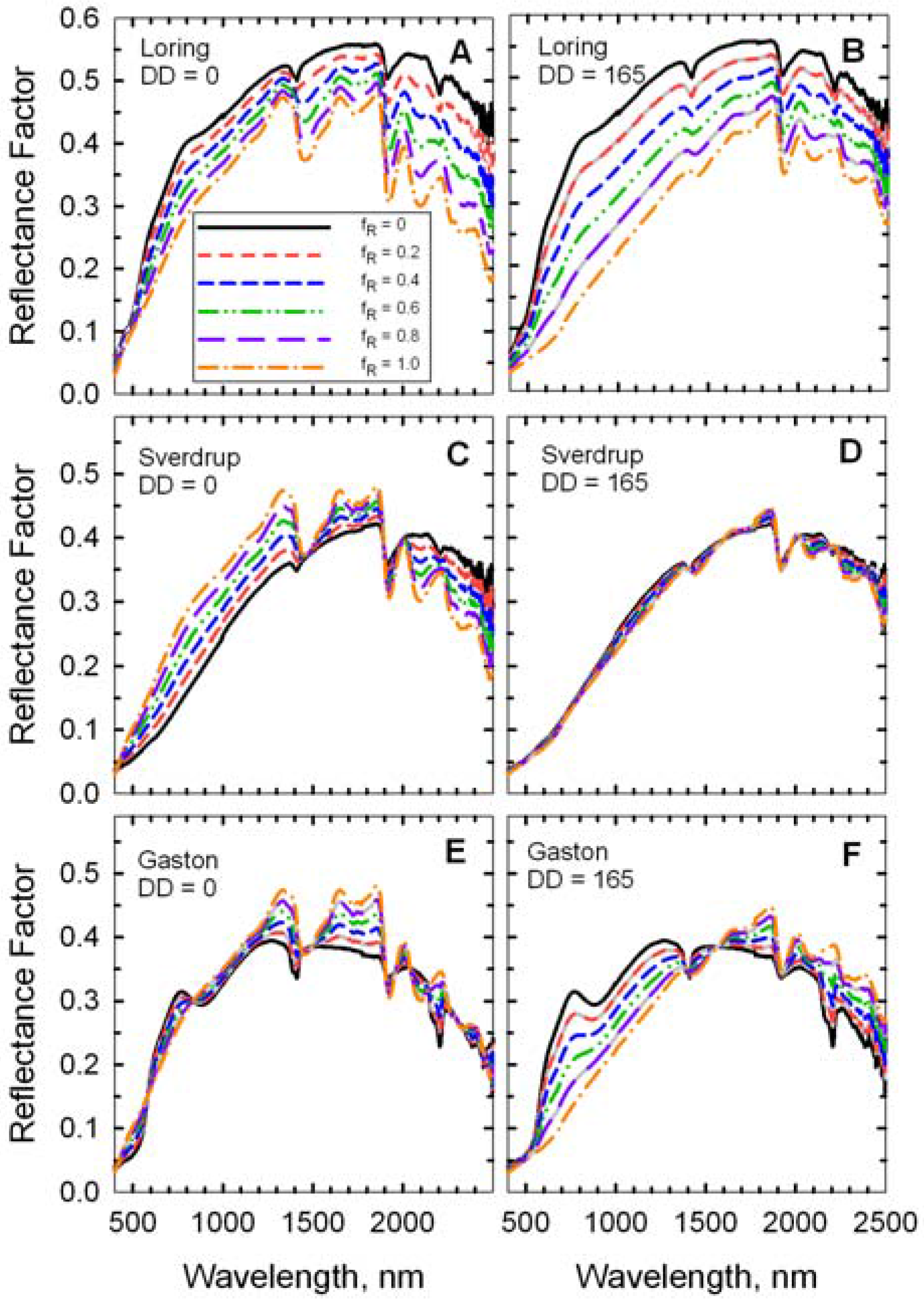

Figure 7.

Reflectance spectra of simulated scenes with mixtures of two wheat residues (DD = 0 and DD = 165) on three soils. The fraction residue cover (fR) ranged from 0 (100% soil) to 1.0 (100% wheat residue).

The mineral absorption feature near 2,200 nm was evident in the spectrum of each bare soil (Figure 6 and fR = 0 in Figure 7), but was attenuated as residue cover increased (Figure 7). Likewise, the cellulose feature near 2,100 nm was evident in the spectrum of each crop residue (Figure 4 and fR = 1 in Figure 7), but diminished as residue cover decreased. The fresh straw (DD = 0) was darker than the Loring soil, but brighter than the Sverdrup soil at wavelengths <1,800 nm (Figure 7). Reflectances of the fresh straw and the Gaston soil were approximately equal at wavelengths <1,300 nm. As the residue decomposed, differences in reflectance increased for the Loring and Gaston soils, but diminished for the Sverdrup soil (Figure 7). The responses of the four Landsat TM spectral residue indices (Equations 2–5) plus NDVI (Equation 6) to changes in residue cover for simulated scenes with four ages (i.e., decomposition days, DD) of wheat straw on Loring, Sverdrup, and Gaston soils are plotted in Figure 8.

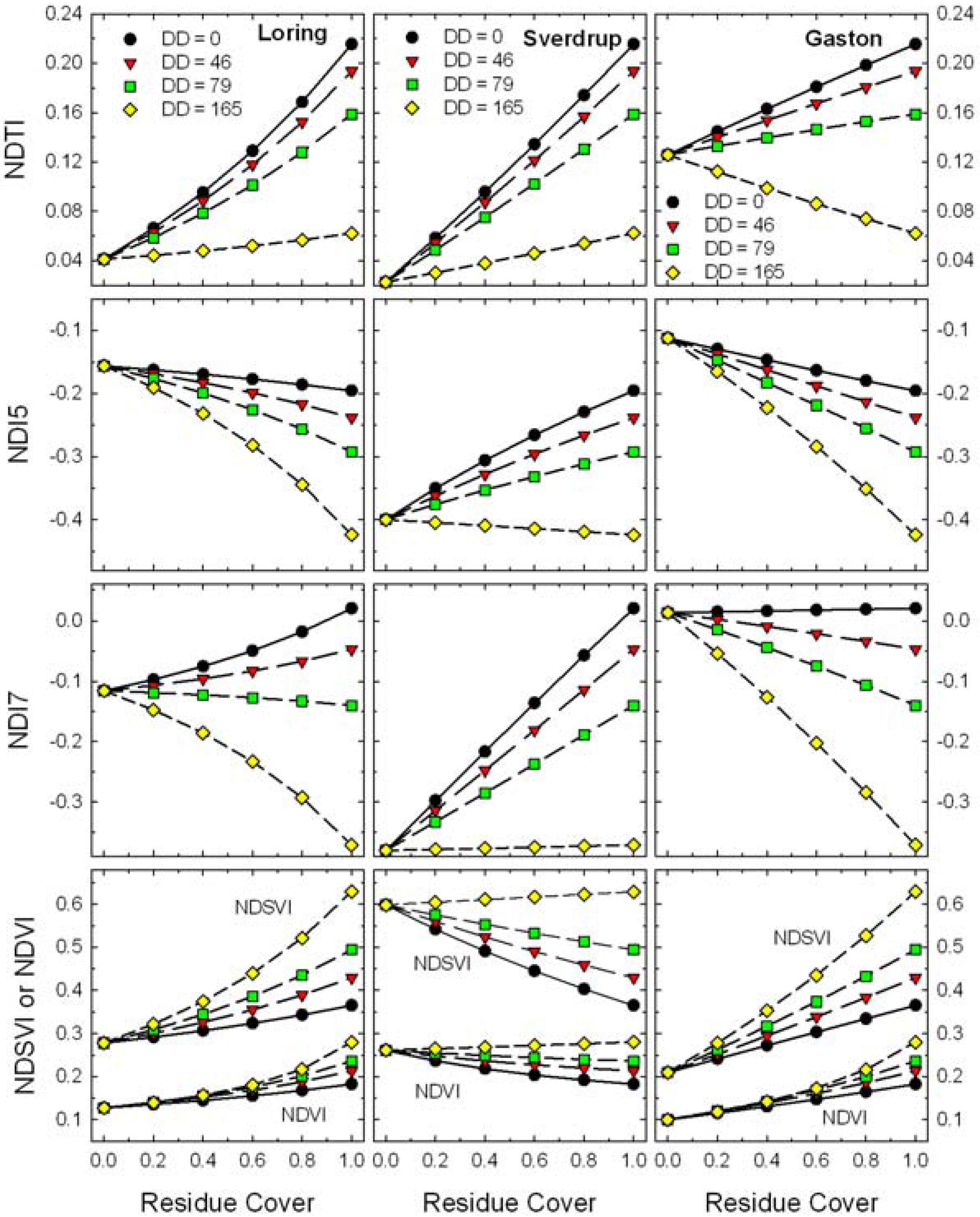

Figure 8.

Expected responses of Landsat TM band residue indices (Equations 2–6) to changes in residue cover for scenes with mixtures of four ages (i.e., decomposition days, DD) of wheat residues and three soils.

These contrasting soils produced large relative shifts in bare soil values for all of the Landsat TM indices (Figure 8). Although the bare soil value for NDTI changed with soil type, the slope of the relationship remained positive except for the oldest residues (DD = 165). Local calibrations for soil and crop residue reflectance appear possible for NDTI [17,21]. However, the slopes of residue cover vs. NDI5, NDI7, and NDSVI ranged from negative to positive as the soil background changed and from positive to negative as the residues aged. Although these indices have been described for assessing crop residue cover [19,20], variations in soil and residue reflectance will cause problems when they are used to assess crop residue cover.
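The broad-band indices discussed above are all normalized differences of two Landsat TM bands. The sketch below uses band pairings as they are commonly given in the literature (NDTI from TM5 and TM7, NDI5 from TM4 and TM5, NDI7 from TM4 and TM7, NDSVI from TM5 and TM3). These pairings are assumptions here, because Equations 2–5 are not reproduced in this section, and the band reflectances are hypothetical.

```python
def normalized_difference(band_a, band_b):
    """Return (a - b) / (a + b) for two band reflectances."""
    total = band_a + band_b
    if total == 0:
        raise ValueError("band reflectances sum to zero")
    return (band_a - band_b) / total


def tm_residue_indices(tm3, tm4, tm5, tm7):
    """Broad-band residue indices; band pairings are assumed, not sourced."""
    return {
        "NDTI": normalized_difference(tm5, tm7),
        "NDI5": normalized_difference(tm4, tm5),
        "NDI7": normalized_difference(tm4, tm7),
        "NDSVI": normalized_difference(tm5, tm3),
    }


# Hypothetical band reflectances for a residue-covered and a bare-soil scene.
residue_scene = tm_residue_indices(tm3=0.20, tm4=0.30, tm5=0.40, tm7=0.30)
soil_scene = tm_residue_indices(tm3=0.15, tm4=0.25, tm5=0.35, tm7=0.32)

for name in residue_scene:
    index_range = residue_scene[name] - soil_scene[name]
    print(f"{name}: range (residue - soil) = {index_range:+.3f}")
```

The sign of the residue-minus-soil range sets the sign of the slope against cover, so an index whose range changes sign between soils or residue ages cannot be calibrated once and applied across a landscape.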

The slopes of residue cover vs. CAI, LCA, and SINDRI were relatively constant for the three soils (Figure 9). The strong clay mineral absorption observed near 2,200 nm in the Gaston soil (Figure 6) influenced the bare soil values for all three indices (Figure 9). As crop residues decomposed, the slopes of these indices vs. residue cover also changed slightly, but remained positive. The changes in CAI with residue age (DD) are related to the changes in the relative depth of the cellulose absorption feature near 2,100 nm (Figure 4) as the wheat straw decomposed and the proportion of cellulose declined (Figure 3). The LCA and SINDRI (Equations 8, 9), which used bands located on the sides and shoulders of the cellulose and lignin absorption features, also tracked the changes in composition of the wheat straw.

Figure 9.

Expected responses of advanced residue indices (Equations 7–9) to changes in residue cover for scenes with mixtures of four ages (i.e., decomposition days, DD) of wheat residues and three soils.

What is the impact of decomposition on remote estimates of residue cover? In agricultural fields with limited tillage, the oldest crop residues (i.e., DD = 165 in Figure 8 and Figure 9) would have lost >70% of their original mass (Figure 2) and would be covered by or mixed with the newer residues of subsequent crops. Thus, from a pragmatic point of view, these oldest residues (DD = 165) are a minor component of the total crop residue cover and will be excluded from the following discussion. For each crop residue index in Figure 8 and Figure 9, the difference (range) between the wheat residue end-point (fR = 1) and the bare soil end-point (fR = 0) was calculated. The impact of decomposition at DD = 79 (~55% of original dry mass lost) was expressed as the relative change in the range of each index for each soil (Table 1). For the three narrow-band indices (i.e., CAI, LCA, and SINDRI), the range decreased 15 to 21% depending on soil type. If these indices were calibrated with fresh residue (DD = 0) and then used to estimate the cover of aged residue (DD = 79), we would expect the aged residue cover on a dark-colored soil (e.g., Sverdrup) to be under-estimated by as much as 21% of the true value using CAI or SINDRI. Since conservation tillage is defined as more than 30% residue cover (fR > 0.3) [9], an error of 21% of the value at that threshold (i.e., about ±6% cover) is within the accuracy expected from the line-point transect method [10,11] for measuring crop residue cover. The changes in range of the four broad-band indices associated with residue age and soil type were large (Table 1). For example, if NDTI were calibrated with fresh residue (DD = 0) and then used to estimate the cover of aged residue (DD = 79), we would expect the aged residue cover to be under-estimated by 29 to 63%. The other broad-band indices showed even larger over- and under-estimates of residue cover. These results indicate that current broad-band, multispectral imaging systems will not provide robust estimates of crop residue cover when the reflectances of the soils and residues vary across agricultural landscapes.

Table 1.

Effects of soil type and residue age on the range of spectral residue indices.

| Soil | Residue Age | Quantity | NDTI | NDI7 | NDI5 | NDSVI | CAI | LCA | SINDRI |
|---|---|---|---|---|---|---|---|---|---|
| Loring | DD = 0 | Range | 0.174 | 0.137 | −0.039 | 0.087 | 15.9 | 4.8 | 6.2 |
| | DD = 79 | Range | 0.118 | −0.024 | −0.136 | 0.216 | 12.9 | 4.1 | 5.0 |
| | | Change | −0.325 | −1.177 | 2.457 | 1.483 | −0.188 | −0.152 | −0.196 |
| Sverdrup | DD = 0 | Range | 0.193 | 0.401 | 0.204 | −0.233 | 13.9 | 4.0 | 5.7 |
| | DD = 79 | Range | 0.136 | 0.240 | 0.107 | −0.104 | 10.9 | 3.2 | 4.4 |
| | | Change | −0.294 | −0.401 | −0.474 | −0.554 | −0.214 | −0.184 | −0.214 |
| Gaston | DD = 0 | Range | 0.090 | 0.007 | −0.083 | 0.155 | 19.6 | 4.3 | 7.5 |
| | DD = 79 | Range | 0.033 | −0.154 | −0.180 | 0.285 | 16.6 | 3.6 | 6.3 |
| | | Change | −0.632 | −23.221 | 1.160 | 0.831 | −0.152 | −0.168 | −0.162 |
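The Change rows can be checked against the Range rows with a short calculation. The sketch assumes that Change is the relative change in range, (range at DD = 79 − range at DD = 0) / range at DD = 0, as described in the text. It uses the rounded ranges printed in Table 1 for NDTI and the three narrow-band indices, so small differences from the tabulated Change values are expected if the published figures were computed from unrounded ranges.

```python
def relative_change(range_fresh, range_aged):
    """Relative change in index range between DD = 0 and DD = 79."""
    return (range_aged - range_fresh) / range_fresh


# (range at DD = 0, range at DD = 79, tabulated change), copied from Table 1.
table_1 = {
    "Loring": {
        "NDTI": (0.174, 0.118, -0.325),
        "CAI": (15.9, 12.9, -0.188),
        "LCA": (4.8, 4.1, -0.152),
        "SINDRI": (6.2, 5.0, -0.196),
    },
    "Sverdrup": {
        "NDTI": (0.193, 0.136, -0.294),
        "CAI": (13.9, 10.9, -0.214),
        "LCA": (4.0, 3.2, -0.184),
        "SINDRI": (5.7, 4.4, -0.214),
    },
    "Gaston": {
        "NDTI": (0.090, 0.033, -0.632),
        "CAI": (19.6, 16.6, -0.152),
        "LCA": (4.3, 3.6, -0.168),
        "SINDRI": (7.5, 6.3, -0.162),
    },
}

max_abs_diff = 0.0
for soil, indices in table_1.items():
    for name, (fresh, aged, tabulated) in indices.items():
        computed = relative_change(fresh, aged)
        max_abs_diff = max(max_abs_diff, abs(computed - tabulated))
        print(f"{soil:9s} {name:7s} computed {computed:+.3f}  table {tabulated:+.3f}")

# A 21% relative error at the 30% conservation-tillage threshold, in cover units.
cover_error_percent = 0.21 * 30
print(f"cover error at threshold: about {cover_error_percent:.1f}% cover")
```

The recomputed values agree with the table to within rounding, and the last line reproduces the roughly ±6% cover figure quoted in the text.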