YOSDet: A YOLO-Based Oriented Ship Detector in SAR Imagery

Highlights

- YOSDet, a YOLO-based oriented ship detector, handles arbitrarily oriented ships in SAR imagery, reaching an mAP of 96.8% on SSDD+, 88.5% on HRSID, and 67.3% on SRSDD-v1.0.

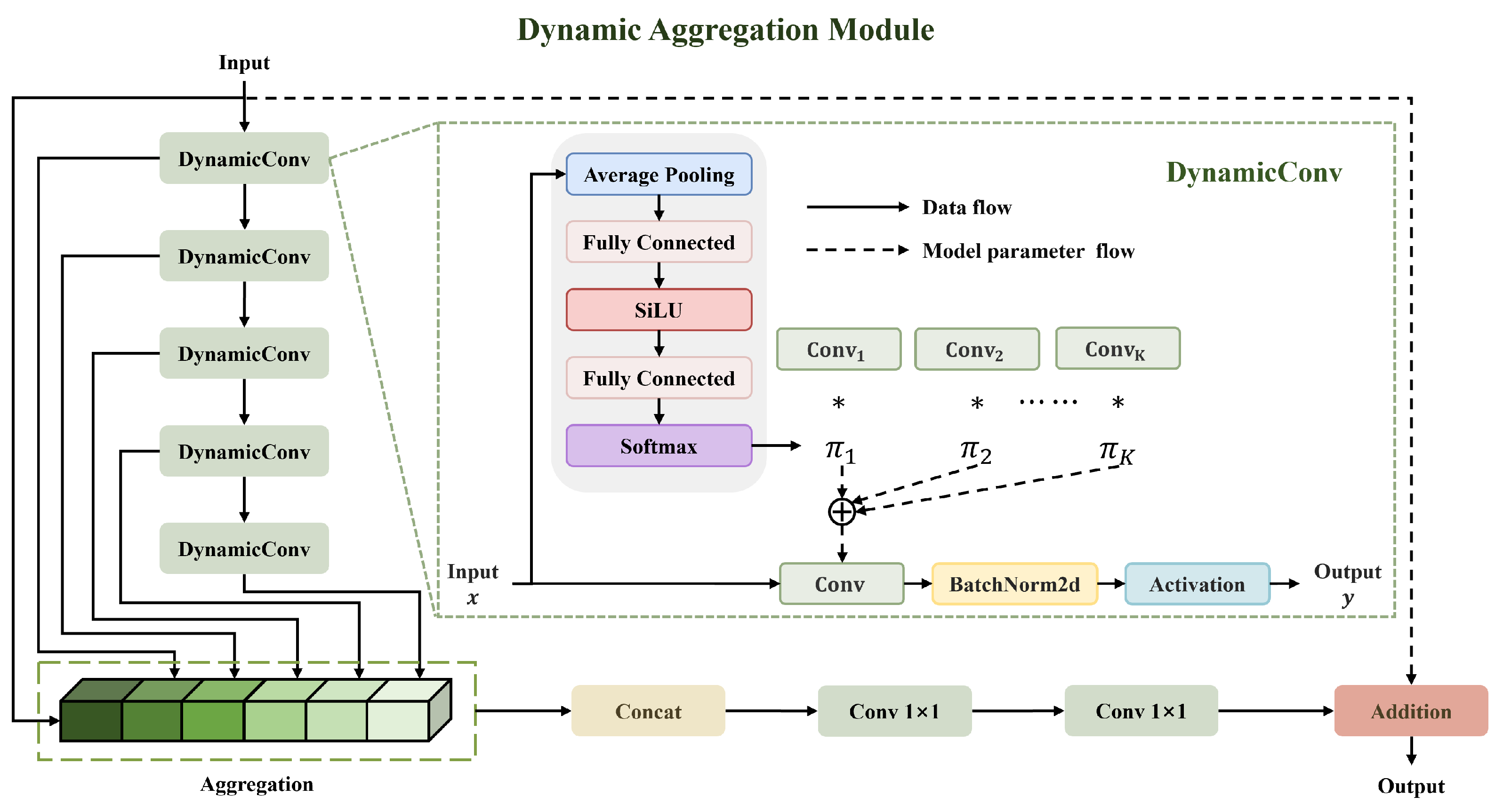

- The model integrates a dynamic aggregation module (DAM), an objective-guided detection head (OGDH), and a localization quality estimator (LQE), improving prediction consistency under noisy SAR imaging conditions.

- The results demonstrate robust generalization for both inshore and offshore scenarios, making the framework suitable for real-time maritime surveillance.

- This work provides a practical framework for oriented ship detection in complex SAR environments, highlighting the potential of deep learning in remote sensing applications.

Abstract

1. Introduction

- We propose YOSDet, an improved YOLO-based oriented architecture for SAR ship detection that seeks a balance between detection accuracy and inference efficiency.

- We introduce a dynamic aggregation module (DAM) for robust feature representation and an objective-guided detection head (OGDH) with a localization quality estimator (LQE), which improve prediction consistency by mitigating the impact of SAR-specific scattering characteristics.

- Comprehensive evaluations on SSDD, HRSID, and SRSDD-v1.0 confirm that YOSDet generalizes efficiently and reliably to both inshore and offshore SAR scenarios.

2. Related Work

2.1. General Object Detection

2.2. Oriented Bounding Boxes Object Detection

2.3. SAR Ship Detection

3. Proposed Method

3.1. Overall Architecture

3.2. Feature Extraction Backbone

3.3. Multi-Scale Feature Fusion Neck

3.4. Objective-Guided Detection Head

Localization Quality Estimator

3.5. Loss Function

4. Experiments and Results

4.1. Dataset

4.2. Implementation Details and Evaluation Metrics

4.3. Comparisons of Performance

4.4. Ablation Study

5. Discussion

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- [1] Campbell, J.B. Introduction to Remote Sensing, 4th ed.; Guilford Press: New York, NY, USA, 2007.

- [2] Toth, C.; Jóźków, G. Remote sensing platforms and sensors: A survey. ISPRS J. Photogramm. Remote Sens. 2016, 115, 22–36.

- [3] Moreira, A.; Prats-Iraola, P.; Younis, M.; Krieger, G.; Hajnsek, I.; Papathanassiou, K.P. A tutorial on synthetic aperture radar. IEEE Geosci. Remote Sens. Mag. 2013, 1, 6–43.

- [4] Crisp, D.J. The State-of-the-Art in Ship Detection in Synthetic Aperture Radar Imagery; Department of Defence: Canberra, Australia, 2004; p. 115.

- [5] Li, J.; Xu, C.; Su, H.; Gao, L.; Wang, T. Deep learning for SAR ship detection: Past, present and future. Remote Sens. 2022, 14, 2712.

- [6] LeCun, Y.; Bengio, Y.; Hinton, G. Deep learning. Nature 2015, 521, 436–444.

- [7] Lin, T.-Y.; Goyal, P.; Girshick, R.; He, K.; Dollár, P. Focal loss for dense object detection. In Proceedings of the IEEE International Conference on Computer Vision (ICCV), Venice, Italy, 22–29 October 2017; pp. 2980–2988.

- [8] Tian, Z.; Shen, C.; Chen, H.; He, T. FCOS: Fully convolutional one-stage object detection. arXiv 2019, arXiv:1904.01355.

- [9] Yaseen, M. What is YOLOv8: An in-depth exploration of the internal features of the next-generation object detector. arXiv 2024, arXiv:2408.15857.

- [10] Khanam, R.; Hussain, M. YOLOv11: An overview of the key architectural enhancements. arXiv 2024, arXiv:2410.17725.

- [11] Lei, M.; Li, S.; Wu, Y.; Hu, H.; Zhou, Y.; Zheng, X.; Ding, G.; Du, S.; Wu, Z.; Gao, Y. YOLOv13: Real-time object detection with hypergraph-enhanced adaptive visual perception. arXiv 2025, arXiv:2506.17733.

- [12] Ren, S.; He, K.; Girshick, R.; Sun, J. Faster R-CNN: Towards real-time object detection with region proposal networks. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 39, 1137–1149.

- [13] Carion, N.; Massa, F.; Synnaeve, G.; Usunier, N.; Kirillov, A.; Zagoruyko, S. End-to-end object detection with transformers. In Proceedings of the European Conference on Computer Vision (ECCV), Glasgow, UK, 23–28 August 2020; pp. 213–229.

- [14] Zhu, X.; Su, W.; Lu, L.; Li, B.; Wang, X.; Dai, J. Deformable DETR: Deformable transformers for end-to-end object detection. arXiv 2020, arXiv:2010.04159.

- [15] Zhao, Y.; Lv, W.; Xu, S.; Wei, J.; Wang, G.; Dang, Q.; Liu, Y.; Chen, J. DETRs beat YOLOs on real-time object detection. arXiv 2023, arXiv:2304.08069.

- [16] Zhang, T.; Zhang, X.; Li, J.; Xu, X.; Wang, B.; Zhan, X.; Xu, Y.; Ke, X.; Zeng, T.; Su, H.; et al. SAR ship detection dataset (SSDD): Official release and comprehensive data analysis. Remote Sens. 2021, 13, 3690.

- [17] Wei, S.; Zeng, X.; Qu, Q.; Wang, M.; Su, H.; Shi, J. HRSID: A high-resolution SAR images dataset for ship detection and instance segmentation. IEEE Access 2020, 8, 120234–120254.

- [18] Lei, S.; Lu, D.; Qiu, X.; Ding, C. SRSDD-v1.0: A high-resolution SAR rotation ship detection dataset. Remote Sens. 2021, 13, 5104.

- [19] Li, X.; Wang, W.; Hu, X.; Li, J.; Tang, J.; Yang, J. Generalized focal loss v2: Learning reliable localization quality estimation for dense object detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Nashville, TN, USA, 19–25 June 2021; pp. 11632–11641.

- [20] Cai, Z.; Vasconcelos, N. Cascade R-CNN: Delving into high quality object detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Salt Lake City, UT, USA, 18–23 June 2018; pp. 6154–6162.

- [21] Lin, T.-Y.; Dollár, P.; Girshick, R.; He, K.; Hariharan, B.; Belongie, S. Feature pyramid networks for object detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 2117–2125.

- [22] Tan, M.; Pang, R.; Le, Q.V. EfficientDet: Scalable and efficient object detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 13–19 June 2020; pp. 10781–10790.

- [23] Feng, C.; Zhong, Y.; Gao, Y.; Scott, M.R.; Huang, W. TOOD: Task-aligned one-stage object detection. In Proceedings of the 2021 IEEE/CVF International Conference on Computer Vision (ICCV), Montreal, QC, Canada, 10–17 October 2021; pp. 3490–3499.

- [24] Dai, X.; Chen, Y.; Xiao, B.; Chen, D.; Liu, M.; Yuan, L.; Zhang, L. Dynamic head: Unifying object detection heads with attentions. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Nashville, TN, USA, 20–25 June 2021; pp. 7373–7382.

- [25] Zhang, H.; Li, F.; Liu, S.; Zhang, L.; Su, H.; Zhu, J.; Ni, L.M.; Shum, H.Y. DINO: DETR with improved denoising anchor boxes for end-to-end object detection. arXiv 2022, arXiv:2203.03605.

- [26] Ding, J.; Xue, N.; Long, Y.; Xia, G.; Lu, Q. Learning RoI transformer for oriented object detection in aerial images. In Proceedings of the 2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, CA, USA, 16–17 June 2019; pp. 2844–2853.

- [27] Xie, X.; Cheng, G.; Wang, J.; Yao, X.; Han, J. Oriented R-CNN for object detection. In Proceedings of the 2021 IEEE/CVF International Conference on Computer Vision (ICCV), Montreal, QC, Canada, 11–17 October 2021; pp. 3520–3529.

- [28] Han, J.; Ding, J.; Xue, N.; Xia, G.S. ReDet: A rotation-equivariant detector for aerial object detection. In Proceedings of the 2021 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Nashville, TN, USA, 20–25 June 2021; pp. 2786–2795.

- [29] Yang, X.; Yan, J.; Feng, Z.; He, T. R3Det: Refined single-stage detector with feature refinement for rotating object. arXiv 2019, arXiv:1908.05612.

- [30] Han, J.; Ding, J.; Li, J.; Xia, G.S. Align deep features for oriented object detection. IEEE Trans. Geosci. Remote Sens. 2021, 60, 5602511.

- [31] Yu, C.; Shin, Y. SAR ship detection based on improved YOLOv5 and BiFPN. ICT Express 2024, 10, 28–33.

- [32] Yu, C.; Shin, Y. An efficient YOLO for ship detection in SAR images via channel shuffled reparameterized convolution blocks and dynamic head. ICT Express 2024, 10, 673–679.

- [33] Zhang, T.; Zhang, X.; Ke, X. Quad-FPN: A novel quad feature pyramid network for SAR ship detection. Remote Sens. 2021, 13, 2771.

- [34] Yu, C.; Shin, Y. SMEP-DETR: Transformer-based ship detection for SAR imagery with multi-edge enhancement and parallel dilated convolutions. Remote Sens. 2025, 17, 953.

- [35] Sun, Z.; Leng, X.; Lei, Y.; Xiong, B.; Ji, K.; Kuang, G. BiFA-YOLO: A novel YOLO-based method for arbitrary-oriented ship detection in high-resolution SAR images. Remote Sens. 2021, 13, 4209.

- [36] Shao, Z.; Zhang, X.; Zhang, T.; Xu, X.; Zeng, T. RBFA-Net: A rotated balanced feature-aligned network for rotated SAR ship detection and classification. Remote Sens. 2022, 14, 3345.

- [37] Yue, T.; Zhang, Y.; Wang, J.; Xu, Y.; Liu, P. A weak supervision learning paradigm for oriented ship detection in SAR image. IEEE Trans. Geosci. Remote Sens. 2024, 62, 5207812.

- [38] Sun, Z.; Leng, X.; Zhang, X.; Zhou, Z.; Xiong, B.; Ji, K.; Kuang, G. Arbitrary-direction SAR ship detection method for multiscale imbalance. IEEE Trans. Geosci. Remote Sens. 2025, 63, 5208921.

- [39] Yasir, M.; Liu, S.; Pirasteh, S.; Xu, M.; Sheng, H.; Wan, J.; de Figueiredo, F.A.; Aguilar, F.J.; Li, J. YOLOShipTracker: Tracking ships in SAR images using lightweight YOLOv8. Int. J. Appl. Earth Obs. Geoinf. 2024, 134, 104137.

- [40] Yasir, M.; Liu, S.; Xu, M.; Aguilar, F.J.; Wan, J.; Wei, S.; Pirasteh, S.; Fan, H.; Islam, Q.U. TFST: Two-frame ship tracking for SAR using YOLOv12 and feature-based matching. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 2025, 19, 3175–3189.

- [41] Yasir, M.; Liu, S.; Xu, M.; Sheng, H.; Aguilar, F.J.; do Lago Rocha, R.; de Figueiredo, F.A.P.; Colak, A.T.I.; Hossain, M.S. SSGNet: A single-source generalization model for ship detection in UAV imagery under challenging maritime environments. Ocean Eng. 2026, 348, 124120.

- [42] Chen, Y.; Dai, X.; Liu, M.; Chen, D.; Yuan, L.; Liu, Z. Dynamic convolution: Attention over convolution kernels. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 13–19 June 2020; pp. 11030–11039.

- [43] Zhu, X.; Hu, H.; Lin, S.; Dai, J. Deformable ConvNets v2: More deformable, better results. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, CA, USA, 15–20 June 2019; pp. 9308–9316.

- [44] Llerena, J.M.; Zeni, L.F.; Kristen, L.N.; Jung, C. Gaussian bounding boxes and probabilistic intersection-over-union for object detection. arXiv 2021, arXiv:2106.06072.

- [45] Zhou, Y.; Yang, X.; Zhang, G.; Wang, J.; Liu, Y.; Hou, L.; Jiang, X.; Liu, X.; Yan, J.; Lyu, C.; et al. MMRotate: A rotated object detection benchmark using PyTorch. In Proceedings of the 30th ACM International Conference on Multimedia, Lisbon, Portugal, 10–14 October 2022; pp. 7331–7334.

- [46] Xu, Y.; Fu, M.; Wang, Q.; Wang, Y.; Chen, K.; Xia, G.S.; Bai, X. Gliding vertex on the horizontal bounding box for multi-oriented object detection. IEEE Trans. Pattern Anal. Mach. Intell. 2021, 43, 1452–1459.

- [47] Zhang, S.; Chi, C.; Yao, Y.; Lei, Z.; Li, S.Z. Bridging the gap between anchor-based and anchor-free detection via adaptive training sample selection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 13–19 June 2020; pp. 9759–9768.

- [48] Yang, Z.; Liu, S.; Hu, H.; Wang, L.; Lin, S. RepPoints: Point set representation for object detection. In Proceedings of the 2019 IEEE/CVF International Conference on Computer Vision (ICCV), Seoul, Republic of Korea, 27 October–2 November 2019; pp. 9656–9665.

- [49] Li, W.; Chen, Y.; Hu, K.; Zhu, J. Oriented RepPoints for aerial object detection. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), New Orleans, LA, USA, 18–24 June 2022; pp. 1829–1838.

- [50] Hou, L.; Lu, K.; Xue, J.; Li, Y. Shape-adaptive selection and measurement for oriented object detection. In Proceedings of the AAAI Conference on Artificial Intelligence, Virtually, 22 February–1 March 2022; Volume 36, pp. 923–932.

- [51] Gu, Y.; Fang, M.; Peng, D. TIAR-SAR: An oriented SAR ship detector combining a task interaction head architecture with composite angle regression. Remote Sens. 2025, 17, 2049.

| Details | SSDD (SSDD+) | HRSID | SRSDD-v1.0 |
|---|---|---|---|
| Sources | RadarSat-2, TerraSAR-X, Sentinel-1 | Sentinel-1, TerraSAR-X, TanDEM-X | Gaofen-3 |
| Polarization | HH, HV, VV, VH | HH, HV, VV | HH, VV |
| Resolution (m) | 1–15 | 0.5, 1, 3 | 1 |
| Dimensions (pixel) | 190–668 | 800 × 800 | 1024 × 1024 |
| Images/Instances | 1160/2456 | 5604/16,951 | 666/2884 |
| Annotations | HBB, OBB, Polygon | Polygon | OBB |
| Categories | 1 | 1 | 6 |
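The OBB annotations listed above describe each ship as a rotated rectangle. As a minimal sketch, assuming the common (cx, cy, w, h, θ) parameterization with θ in radians, the four corners of such a box can be recovered as follows. The function name `obb_to_corners` and the angle convention are illustrative assumptions; each dataset and toolbox defines its own angle range and direction.

```python
import math

def obb_to_corners(cx, cy, w, h, theta):
    """Return the four corners of a rotated box as (x, y) tuples.

    Assumed convention (hypothetical, not taken from the paper):
    theta is the rotation of the box's width axis relative to the x-axis.
    """
    cos_t, sin_t = math.cos(theta), math.sin(theta)
    # Corner offsets in the box's own (unrotated) frame.
    local = [(-w / 2, -h / 2), (w / 2, -h / 2), (w / 2, h / 2), (-w / 2, h / 2)]
    # Rotate each offset by theta, then translate to the box center.
    return [
        (cx + x * cos_t - y * sin_t, cy + x * sin_t + y * cos_t)
        for x, y in local
    ]

# A 40 x 10 box centered at (100, 50), once axis-aligned and once rotated by 90 degrees.
flat_corners = obb_to_corners(100.0, 50.0, 40.0, 10.0, 0.0)
rotated_corners = obb_to_corners(100.0, 50.0, 40.0, 10.0, math.pi / 2)
```

With θ = 0 the result reduces to an ordinary horizontal bounding box (HBB), which is why the two annotation types can coexist in one dataset.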

Comparison with other oriented detectors on SSDD+ for the entire, inshore, and offshore scenes (P, R, mAP, and F1 in %).

| Method | Param (M) | FLOPs (G) | Entire P | Entire R | Entire mAP | Entire F1 | Inshore P | Inshore R | Inshore mAP | Inshore F1 | Offshore P | Offshore R | Offshore mAP | Offshore F1 | FPS |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| R-Faster-RCNN [12] | 41.12 | 63.25 | 87.8 | 78.9 | 78.0 | 83.1 | 66.2 | 60.5 | 53.7 | 63.2 | 91.4 | 93.3 | 89.2 | 92.3 | 88.1 |
| Gliding Vertex [46] | 41.13 | 63.25 | 89.6 | 80.8 | 80.2 | 85.0 | 78.4 | 57.0 | 57.9 | 66.0 | 92.1 | 93.6 | 90.0 | 92.8 | 86.1 |
| RoI Transformer [26] | 55.03 | 77.15 | 94.3 | 84.4 | 88.0 | 89.1 | 86.4 | 66.3 | 68.8 | 75.0 | 96.4 | 94.1 | 90.6 | 95.3 | 61.6 |
| Oriented R-CNN [27] | 41.35 | 63.28 | 92.3 | 83.5 | 87.2 | 87.7 | 76.9 | 65.7 | 66.9 | 70.8 | 97.2 | 92.8 | 90.4 | 94.9 | 52.8 |
| R-RetinaNet [7] | 36.13 | 52.39 | 84.0 | 79.9 | 78.4 | 81.9 | 55.7 | 51.2 | 45.6 | 53.3 | 95.1 | 93.9 | 90.0 | 94.5 | 132.7 |
| ReDet [28] | 31.54 | 40.88 | 96.2 | 89.2 | 90.3 | 92.6 | 88.4 | 70.9 | 71.3 | 78.7 | 97.9 | 98.9 | 90.9 | 98.4 | 34.5 |
| R3Det [29] | 41.58 | 82.17 | 97.0 | 84.2 | 87.5 | 90.2 | 90.6 | 61.6 | 67.5 | 73.4 | 98.4 | 96.3 | 90.8 | 97.3 | 45.6 |
| S2A-Net [30] | 38.54 | 49.05 | 92.0 | 90.7 | 89.9 | 91.3 | 82.1 | 77.3 | 76.3 | 79.6 | 96.3 | 96.8 | 90.7 | 96.5 | 90.6 |
| R-ATSS [47] | 36.01 | 51.79 | 93.9 | 87.0 | 88.5 | 90.3 | 82.6 | 66.3 | 66.4 | 73.5 | 97.6 | 97.9 | 90.7 | 97.7 | 141.9 |
| Rotated FCOS [8] | 31.89 | 51.55 | 87.8 | 80.0 | 79.4 | 83.7 | 63.4 | 56.4 | 53.3 | 59.7 | 93.6 | 93.6 | 89.6 | 93.6 | 144.9 |
| Rotated RepPoints [48] | 36.60 | 48.56 | 85.6 | 77.5 | 77.5 | 81.3 | 62.9 | 48.3 | 48.7 | 54.6 | 89.4 | 94.7 | 88.5 | 91.9 | 112.0 |
| Oriented RepPoints [49] | 36.60 | 48.56 | 95.1 | 85.0 | 89.0 | 89.7 | 84.9 | 62.2 | 70.6 | 71.8 | 97.3 | 96.8 | 90.8 | 97.1 | 76.5 |
| SASM RepPoints [50] | 36.60 | 48.56 | 90.9 | 85.5 | 87.8 | 88.1 | 86.2 | 61.6 | 69.6 | 71.9 | 97.2 | 94.1 | 90.7 | 95.7 | 111.6 |
| YOLOv11-OBB [10] | 2.65 | 4.20 | 97.2 | 90.3 | 94.0 | 93.6 | 93.4 | 74.4 | 82.3 | 82.9 | 98.1 | 98.1 | 98.6 | 98.1 | 120.5 |
| YOLOv13-OBB [11] | 2.52 | 4.10 | 92.7 | 92.3 | 94.9 | 92.5 | 81.6 | 79.6 | 83.8 | 80.6 | 98.1 | 98.1 | 98.6 | 98.1 | 117.6 |
| WSL paradigm [37] | 55.84 | - | - | 89.7 | 87.3 | - | - | 73.3 | 67.5 | - | - | 95.0 | 93.9 | - | 19.7 |
| YOSDet | 2.15 | 5.00 | 97.4 | 94.7 | 96.8 | 96.0 | 94.9 | 87.2 | 92.1 | 90.9 | 98.1 | 98.7 | 98.6 | 98.4 | 70.4 |
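The F1 values in the table follow from the reported precision and recall. A minimal sketch, assuming F1 is the usual harmonic mean of P and R (the function name `f1_score` is illustrative, not from the paper):

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall, both given in percent."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)

# YOSDet rows from the table above: (P, R) for the entire and inshore scenes.
yosdet_entire = f1_score(97.4, 94.7)
yosdet_inshore = f1_score(94.9, 87.2)
print(round(yosdet_entire, 1), round(yosdet_inshore, 1))  # 96.0 90.9
```

Both results agree with the F1 entries listed for YOSDet. Because the harmonic mean is pulled toward the smaller of the two inputs, the inshore F1 mainly reflects the lower inshore recall.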

Comparison with other oriented detectors on HRSID for the entire, inshore, and offshore scenes (P, R, mAP, and F1 in %).

| Method | Param (M) | FLOPs (G) | Entire P | Entire R | Entire mAP | Entire F1 | Inshore P | Inshore R | Inshore mAP | Inshore F1 | Offshore P | Offshore R | Offshore mAP | Offshore F1 | FPS |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| R-Faster-RCNN [12] | 41.12 | 134.38 | 85.9 | 64.9 | 67.8 | 74.0 | 57.1 | 45.5 | 42.8 | 50.6 | 94.1 | 90.9 | 88.2 | 92.5 | 51.9 |
| Gliding Vertex [46] | 41.13 | 134.39 | 85.2 | 66.8 | 69.5 | 74.9 | 61.3 | 44.3 | 43.9 | 51.5 | 95.6 | 90.6 | 90.3 | 93.1 | 55.3 |
| RoI Transformer [26] | 55.03 | 148.28 | 86.7 | 72.7 | 76.6 | 79.1 | 69.3 | 52.0 | 53.0 | 59.4 | 97.2 | 93.4 | 90.7 | 95.2 | 42.3 |
| Oriented R-CNN [27] | 41.35 | 134.46 | 89.2 | 73.2 | 78.1 | 80.4 | 69.7 | 55.4 | 55.5 | 61.7 | 97.0 | 94.5 | 90.7 | 95.7 | 65.7 |
| R-RetinaNet [7] | 36.13 | 128.09 | 79.2 | 65.3 | 66.7 | 71.6 | 53.5 | 40.7 | 37.7 | 46.3 | 92.1 | 92.0 | 88.5 | 92.1 | 61.3 |
| ReDet [28] | 31.54 | 59.74 | 88.3 | 77.5 | 79.6 | 82.6 | 75.7 | 58.4 | 62.1 | 65.9 | 97.4 | 95.5 | 90.7 | 96.5 | 31.6 |
| R3Det [29] | 41.58 | 200.92 | 90.3 | 71.5 | 76.8 | 79.8 | 70.3 | 53.7 | 54.7 | 60.9 | 96.2 | 93.8 | 90.7 | 94.9 | 42.4 |
| S2A-Net [30] | 38.54 | 119.92 | 91.0 | 76.0 | 79.6 | 82.8 | 75.5 | 58.8 | 61.7 | 66.1 | 98.0 | 95.0 | 90.8 | 96.5 | 48.9 |
| R-ATSS [47] | 36.01 | 126.62 | 86.1 | 70.8 | 74.5 | 77.7 | 68.1 | 49.9 | 51.0 | 57.6 | 95.7 | 92.8 | 89.9 | 94.3 | 63.7 |
| Rotated FCOS [8] | 31.89 | 125.98 | 82.0 | 69.3 | 74.1 | 75.1 | 60.4 | 51.1 | 49.1 | 55.3 | 92.7 | 91.8 | 89.8 | 92.3 | 72.3 |
| Rotated RepPoints [48] | 36.60 | 118.72 | 76.7 | 70.8 | 71.7 | 73.6 | 59.5 | 51.2 | 49.5 | 55.1 | 90.7 | 92.7 | 88.6 | 91.7 | 59.6 |
| Oriented RepPoints [49] | 36.60 | 118.72 | 85.4 | 79.7 | 79.4 | 82.5 | 71.9 | 63.1 | 63.8 | 67.2 | 97.2 | 94.6 | 90.6 | 95.9 | 72.1 |
| SASM RepPoints [50] | 36.60 | 118.72 | 88.1 | 71.5 | 77.4 | 78.9 | 69.6 | 54.5 | 57.6 | 61.1 | 95.1 | 91.9 | 90.4 | 93.5 | 55.4 |
| YOLOv11-OBB [10] | 2.65 | 10.20 | 89.9 | 79.1 | 86.3 | 84.2 | 77.4 | 62.7 | 69.4 | 69.3 | 97.4 | 96.2 | 97.5 | 96.8 | 108.7 |
| YOLOv13-OBB [11] | 2.52 | 10.00 | 89.3 | 80.1 | 86.8 | 84.4 | 76.8 | 65.8 | 71.9 | 70.9 | 97.6 | 94.7 | 96.7 | 96.1 | 109.9 |
| WSL paradigm [37] | 55.84 | - | - | 85.0 | 81.5 | - | - | 78.9 | 71.6 | - | - | 96.2 | 94.8 | - | 16.9 |
| MSDFF-Net [38] | 8.94 | - | 83.6 | 88.1 | 83.1 | 85.8 | 69.7 | 75.5 | 70.1 | 72.5 | 98.4 | 98.0 | 91.9 | 98.2 | - |
| YOSDet | 2.15 | 12.30 | 90.2 | 82.2 | 88.5 | 86.0 | 80.1 | 68.5 | 75.4 | 73.9 | 96.9 | 96.0 | 97.1 | 96.5 | 108.7 |

Per-category results and mAP (%) on SRSDD-v1.0.

| Method | Ore–Oil | Bulk-Cargo | Fishing | Law Enf. | Dredger | Container | mAP |
|---|---|---|---|---|---|---|---|
| R-Faster-RCNN [12] | 54.6 | 45.9 | 21.6 | 9.1 | 78.2 | 72.2 | 46.9 |
| Gliding Vertex [46] | 43.7 | 44.8 | 25.8 | 3.9 | 74.5 | 76.6 | 44.9 |
| RoI Transformer [26] | 64.8 | 49.4 | 24.3 | 13.6 | 70.7 | 71.0 | 49.0 |
| Oriented R-CNN [27] | 61.8 | 57.6 | 33.4 | 27.3 | 78.7 | 78.1 | 56.1 |
| R-RetinaNet [7] | 39.1 | 30.0 | 21.5 | 0.6 | 56.5 | 51.8 | 33.2 |
| ReDet [28] | 59.9 | 46.6 | 25.5 | 27.3 | 71.9 | 77.2 | 51.4 |
| R3Det [29] | 54.5 | 51.5 | 25.4 | 28.2 | 77.9 | 82.9 | 53.4 |
| S2A-Net [30] | 63.4 | 45.0 | 30.5 | 20.9 | 74.4 | 77.3 | 51.9 |
| R-ATSS [47] | 52.6 | 44.1 | 22.3 | 54.5 | 76.0 | 81.6 | 55.2 |
| Rotated FCOS [8] | 57.5 | 42.8 | 25.4 | 31.3 | 80.8 | 71.7 | 51.6 |
| Rotated RepPoints [48] | 40.5 | 36.6 | 21.9 | 0.1 | 78.0 | 63.2 | 40.1 |
| Oriented RepPoints [49] | 61.1 | 49.4 | 48.8 | 17.7 | 80.3 | 81.3 | 56.4 |
| SASM RepPoints [50] | 59.4 | 43.5 | 29.8 | 1.0 | 75.4 | 74.4 | 47.3 |
| YOLOv11-OBB [10] | 48.4 | 49.7 | 30.0 | 44.9 | 70.5 | 78.9 | 53.7 |
| YOLOv13-OBB [11] | 51.3 | 51.1 | 14.6 | 62.1 | 84.2 | 76.4 | 56.6 |
| RBFA-Net [36] | 59.4 | 57.4 | 41.5 | 73.5 | 77.2 | 71.6 | 63.4 |
| TIAR-SAR [51] | 55.7 | 69.3 | 33.1 | 100.0 | 70.8 | 54.5 | 63.9 |
| YOSDet | 49.4 | 60.2 | 46.2 | 78.9 | 83.4 | 85.9 | 67.3 |
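The mAP column is consistent with an unweighted mean over the six ship categories. A small check, assuming the per-category entries are average precision values in percent (the dictionary and function names are illustrative):

```python
def mean_ap(per_class_ap):
    """Unweighted mean of per-class AP values (in percent)."""
    return sum(per_class_ap.values()) / len(per_class_ap)

# YOSDet row from the SRSDD-v1.0 table above.
yosdet_ap = {
    "Ore-Oil": 49.4,
    "Bulk-Cargo": 60.2,
    "Fishing": 46.2,
    "Law Enf.": 78.9,
    "Dredger": 83.4,
    "Container": 85.9,
}
print(round(mean_ap(yosdet_ap), 1))  # 67.3
```

Since every category carries equal weight, a rare class such as law-enforcement vessels moves the mAP as much as a frequent one, which helps explain the large spread between methods in this table.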

| No. | DAM | OGDH | LQE | Params (M) | SSDD+ P | SSDD+ R | SSDD+ mAP | SSDD+ mAP50:95 | HRSID P | HRSID R | HRSID mAP | HRSID mAP50:95 | SRSDD-v1.0 P | SRSDD-v1.0 R | SRSDD-v1.0 mAP | SRSDD-v1.0 mAP50:95 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| (1) | − | − | − | 2.65 | 97.2 | 90.3 | 94.0 | 50.9 | 89.8 | 79.1 | 86.3 | 46.9 | 54.7 | 48.2 | 53.7 | 27.1 |
| (2) | + | − | − | 2.47 | 95.3 | 92.1 | 94.3 | 51.8 | 89.0 | 80.9 | 85.8 | 47.2 | 63.2 | 53.9 | 56.3 | 31.2 |
| (3) | − | + | − | 2.24 | 96.0 | 92.7 | 95.5 | 48.8 | 91.7 | 79.2 | 86.9 | 47.2 | 54.5 | 64.8 | 59.4 | 32.7 |
| (4) | − | − | + | 2.66 | 97.3 | 91.6 | 95.1 | 52.1 | 90.4 | 80.7 | 87.9 | 47.2 | 59.6 | 60.4 | 58.3 | 30.9 |
| (5) | + | + | − | 2.05 | 96.7 | 91.6 | 94.8 | 52.2 | 89.2 | 80.9 | 87.1 | 46.5 | 65.2 | 61.1 | 64.8 | 33.2 |
| (6) | + | + | + | 2.15 | 97.4 | 94.7 | 96.8 | 54.4 | 90.2 | 82.2 | 88.5 | 49.1 | 64.0 | 66.8 | 67.3 | 34.4 |
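The net effect of the three components can be read off by comparing configuration (1), with no component enabled, against configuration (6), with all three. The short calculation below uses only values from those two rows; the variable names are illustrative.

```python
# Configuration (1): no DAM, OGDH, or LQE. Configuration (6): all three enabled.
baseline = {"params_m": 2.65, "SSDD+": 94.0, "HRSID": 86.3, "SRSDD-v1.0": 53.7}
full = {"params_m": 2.15, "SSDD+": 96.8, "HRSID": 88.5, "SRSDD-v1.0": 67.3}

# Relative parameter reduction, in percent.
param_cut = (baseline["params_m"] - full["params_m"]) / baseline["params_m"] * 100

# Absolute mAP gain per dataset, in percentage points.
map_gain = {
    name: round(full[name] - baseline[name], 1)
    for name in ("SSDD+", "HRSID", "SRSDD-v1.0")
}

print(round(param_cut, 1))  # 18.9
print(map_gain)  # {'SSDD+': 2.8, 'HRSID': 2.2, 'SRSDD-v1.0': 13.6}
```

In other words, the full configuration uses about 19% fewer parameters than configuration (1) while gaining mAP on all three datasets, with the largest gain on the multi-category SRSDD-v1.0.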

(a) Impact of dynamic convolution kernel number k (DAM).

| k | P | R | mAP | mAP50:95 | mAP (inshore) | mAP (offshore) | Params (M) | GFLOPs | FPS |
|---|---|---|---|---|---|---|---|---|---|
| 2 | 96.2 | 92.5 | 96.7 | 52.7 | 91.4 | 98.7 | 1.98 | 5.00 | 69.9 |
| 4 | 97.4 | 94.7 | 96.8 | 54.4 | 92.1 | 98.6 | 2.15 | 5.00 | 70.4 |
| 8 | 96.6 | 93.0 | 96.6 | 54.2 | 91.3 | 98.6 | 2.51 | 5.00 | 70.4 |
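To make the role of k concrete, the sketch below shows the kernel-aggregation step of generic dynamic convolution as described in [42]: k candidate kernels are blended into one kernel using softmax attention weights. This is not the DAM implementation, whose details are not given here. In [42] the attention scores are predicted from the input feature map; in this sketch they are passed in directly, and the names `softmax` and `aggregate_kernels` are assumptions for illustration.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def aggregate_kernels(kernels, scores):
    """Blend k same-shaped 2D kernels into one using softmax attention weights."""
    weights = softmax(scores)
    rows, cols = len(kernels[0]), len(kernels[0][0])
    return [
        [sum(w * kernel[r][c] for w, kernel in zip(weights, kernels)) for c in range(cols)]
        for r in range(rows)
    ]

# k = 4 toy kernels of size 2 x 2; kernel i is filled with the constant i.
toy_kernels = [[[float(i)] * 2 for _ in range(2)] for i in range(1, 5)]

# Equal scores give equal weights, so the blended kernel is the plain average.
blended = aggregate_kernels(toy_kernels, [0.0, 0.0, 0.0, 0.0])
```

Because only the kernels are blended and a single convolution is then applied, increasing k adds parameters but little computation, which matches the table: Params grows with k while GFLOPs stays at 5.00.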

(b) Impact of group normalization number g (OGDH).

| g | P | R | mAP | mAP50:95 | mAP (inshore) | mAP (offshore) | Params (M) | GFLOPs | FPS |
|---|---|---|---|---|---|---|---|---|---|
| 8 | 94.1 | 93.8 | 96.4 | 53.3 | 89.5 | 98.9 | 2.15 | 5.00 | 69.9 |
| 16 | 97.4 | 94.7 | 96.8 | 54.4 | 92.1 | 98.6 | 2.15 | 5.00 | 70.4 |
| 32 | 96.5 | 91.6 | 95.9 | 53.1 | 89.3 | 98.3 | 2.15 | 5.00 | 69.4 |
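For readers unfamiliar with the hyperparameter g, the following is a minimal sketch of standard group normalization for a single sample: channels are split into g groups and each group is normalized with its own mean and variance. It omits the learnable per-channel scale and shift, says nothing about where the OGDH applies the operation, and the function name `group_norm` is an illustrative assumption.

```python
import math

def group_norm(channels, num_groups, eps=1e-5):
    """Normalize a list of channels (each a flat list of floats) group by group."""
    if len(channels) % num_groups != 0:
        raise ValueError("channel count must be divisible by num_groups")
    group_size = len(channels) // num_groups
    normalized = []
    for g in range(num_groups):
        group = channels[g * group_size:(g + 1) * group_size]
        # Statistics are shared by every value in the group.
        values = [v for channel in group for v in channel]
        mean = sum(values) / len(values)
        var = sum((v - mean) ** 2 for v in values) / len(values)
        scale = 1.0 / math.sqrt(var + eps)
        normalized.extend([[(v - mean) * scale for v in channel] for channel in group])
    return normalized

# Four toy channels with two values each, split into g = 2 groups.
features = [[1.0, 2.0], [3.0, 4.0], [10.0, 20.0], [30.0, 40.0]]
normed = group_norm(features, num_groups=2)
```

The statistics do not depend on g through any learned weights, so changing g leaves the parameter count unchanged, consistent with the constant 2.15 M in the table.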


© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.

Yu, C.; Shin, O.-S.; Shin, Y. YOSDet: A YOLO-Based Oriented Ship Detector in SAR Imagery. Remote Sens. 2026, 18, 645. https://doi.org/10.3390/rs18040645
