1. Introduction

With the rapid development of remote sensing technology, large volumes of multimodal and multispectral sensing data are being generated. Optical and synthetic aperture radar (SAR) images are the most widely used data for producing maps [1]. Optical images accord with human vision and are easy to interpret, but they are susceptible to cloud and fog. SAR images are acquired by an active microwave imaging system, which is not affected by weather conditions, but they are hard to interpret. Exploiting the complementary information in optical and SAR images of the same object, acquired under different conditions and in different spectra, is valuable for applications such as image fusion [2], pattern recognition [3], and change detection [4]. The performance of these applications depends on the accuracy of optical and SAR registration. However, because of the severe speckle noise and non-linear radiation distortions (NRD) of SAR images and the large irradiance differences between optical and SAR images, optical and SAR registration is still a challenging task [5,6].

Conventional image registration methods are mainly divided into two categories: area-based matching methods [7,8] and feature-based matching methods. Area-based methods include Fourier-based methods [9], mutual information-based methods [10], normalized cross-correlation methods [11], and so on, in which the original pixel values and a specific similarity measure are used to match the optical and SAR images [12]. However, in optical-SAR registration tasks, area-based methods perform poorly because they are sensitive to intensity changes and speckle noise. Feature-based methods find pairwise correspondences between optical and SAR images from the spatial relations or descriptors of features. Among feature-based methods, those derived from the scale-invariant feature transform (SIFT) [13] are the most accurate and the fastest in most tests. Many SIFT variants have been proposed to further improve efficiency [14,15], such as the speeded-up robust features (SURF) [16] algorithm, which builds on SIFT with a Hessian matrix-based detector, and the PCA-SIFT [17] algorithm, which uses principal component analysis (PCA) to reduce the dimension of the SIFT descriptor. In addition, by relying on affine-SIFT (ASIFT) [18], the axial direction relationship between the two cameras can be inferred from the sampled views. Nevertheless, these SIFT-like methods rely on gradient information and therefore also struggle with the optical-SAR registration task because of speckle noise and NRD [19,20]. To solve this problem, many improved SIFT algorithms have been proposed. The SAR-SIFT [21] algorithm redefines the gradient of SAR images to improve robustness to speckle noise. The optical-SAR SIFT-like algorithm (OS-SIFT) [22] uses a multi-scale ratio of exponentially weighted averages (ROEWA) operator and a multi-scale Sobel operator to improve performance.

In recent years, due to the insensitivity to the speckle noise and NRD, phase congruency (PC) [

23,

24] based on the shift property of the Fourier transform has been widely applied in many optical-SAR registration methods. The PC algorithm in multi-model image matching is the histogram of orientated phase congruency (HOPC) [

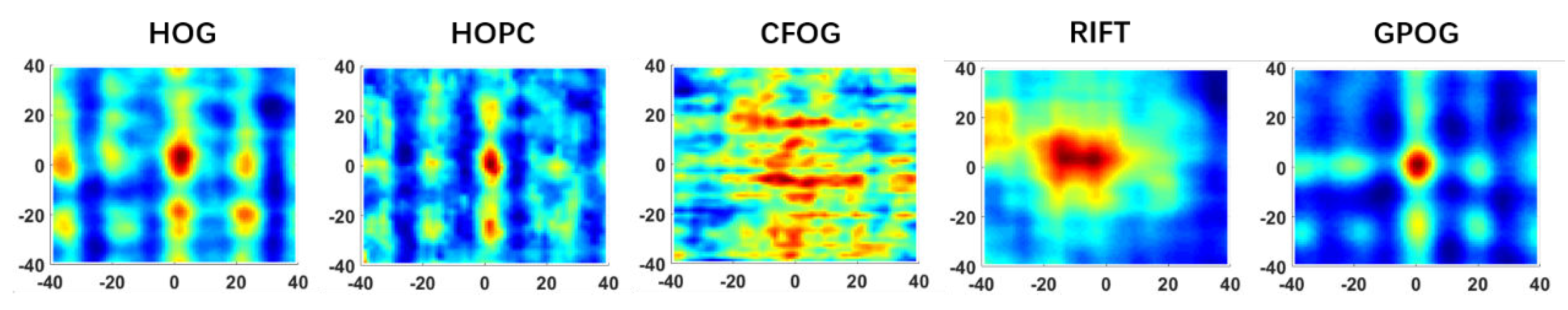

25] which takes the advantages of HOG [

26] and PC to improve the performance in illumination changes. On the basis of HOPC, this research team combines a feature detector named MMPC-lap and a feature descriptor named local histogram of orientated phase congruency (LHOPC) [

27] to further improve computational efficiency. To further improve the accuracy and robustness, the energy minimization method and high-order singular value decomposition of the PC matrix are investigated in Optica-SAR images and 3-D PC (OS-PC) [

28] algorithm. To achieve more robust to large NRD, a maximum index map (MIM) for feature description is proposed in radiation-invariant feature transform (RIFT) [

29]. In addition, The descriptor named the histograms of oriented magnitude and phase congruency (HOMPC) [

30] and a local feature descriptor based on the histogram of phase congruency orientation on multi-scale max amplitude index maps (HOSMI) [

31] are invented to further overcome NRD and the speckle noise inspired by RIFT.

However, the above feature-based methods are unreliable under complex background variations or non-linear grayscale deformations, so deep learning has been introduced into optical-SAR image registration to generate better feature descriptors. Siamese networks are frequently applied in image registration methods [32,33]. Building on the Siamese network, DescNet generates a robust feature descriptor for feature matching [34]. Moreover, generative adversarial networks (GAN) have been used to translate optical images into SAR images, which turns optical-SAR multi-modal registration into single-modal registration [35].

Even though many optical-SAR image registration methods have been investigated in the past decade, few of them can overcome the limitations listed below.

- 1.

The reliability of the algorithm depends on the accuracy of feature point extraction. However, it is difficult to accurately extract corresponding keypoints between optical and SAR images, since Harris, features from accelerated segment test (FAST), and other detectors are highly sensitive to scattering phenomenology differences and speckle noise. Consequently, images cannot be matched reliably when relying on these keypoint extraction algorithms.

- 2.

Because the HOG descriptor is a cell-block system that requires interpolation, it is time-consuming. Building the HOG descriptor requires computing the weights of each pixel for the orientation bins and for each block descriptor. If we construct a HOG descriptor whose blocks contain only one cell, it shows no obvious performance advantage in the optical-SAR registration framework.

- 3.

Both the HOG structure and the PC response are sensitive to image rotation. Thus, once the image rotates, the accuracy of template matching degrades. Consequently, most template matching algorithms perform well only when the optical and SAR images have little displacement and no rotation. This requirement places a large barrier on the application of template matching.

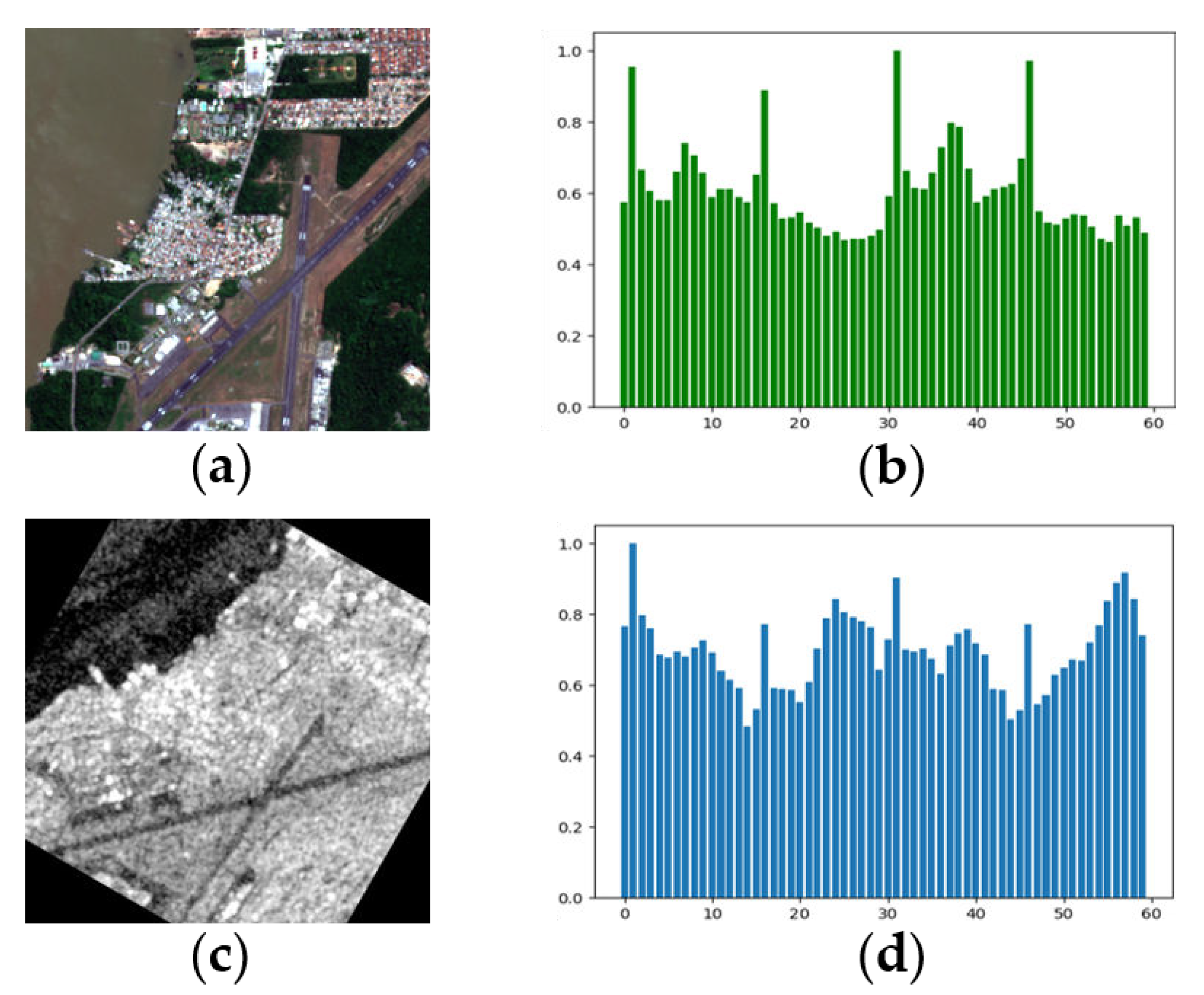

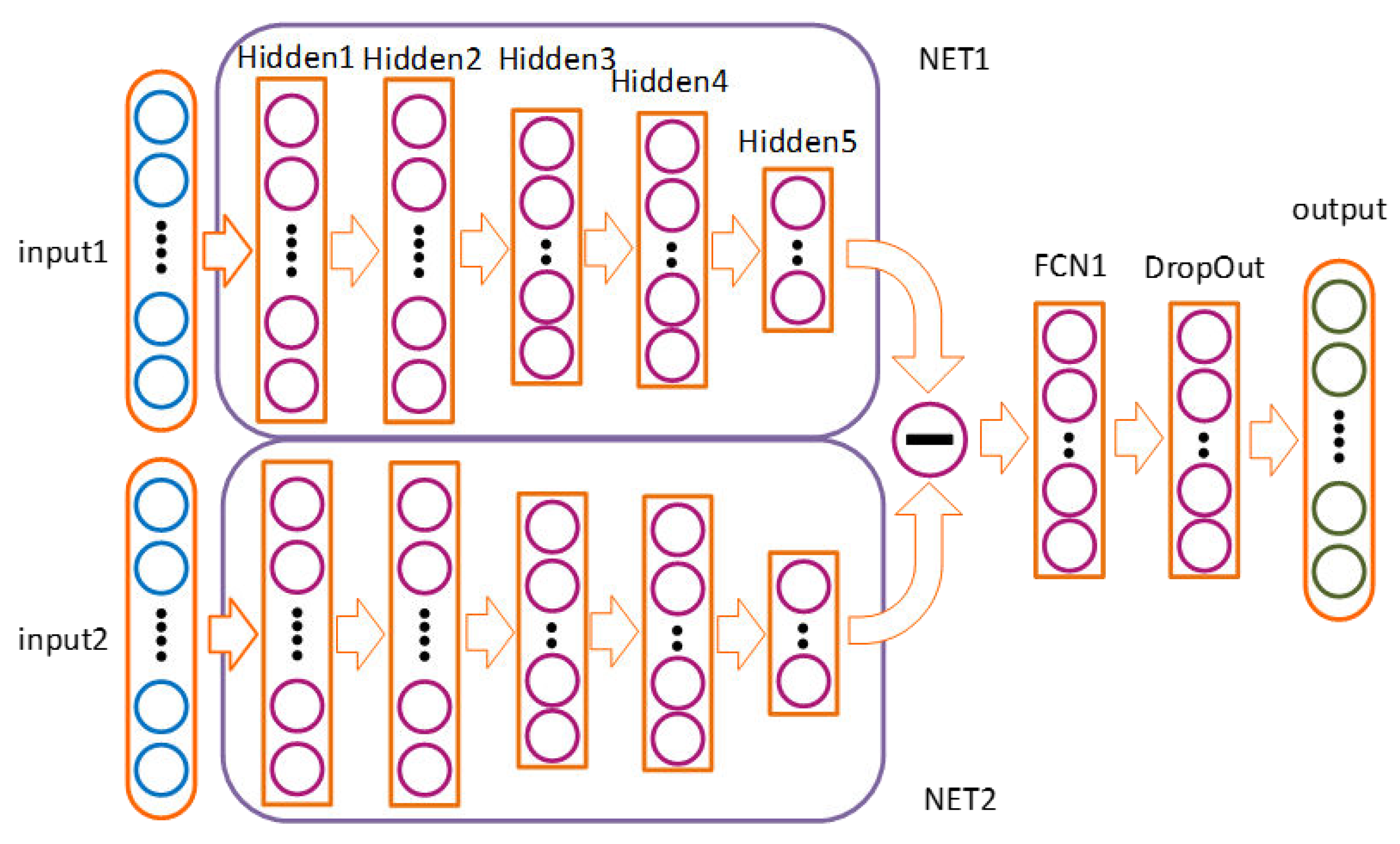

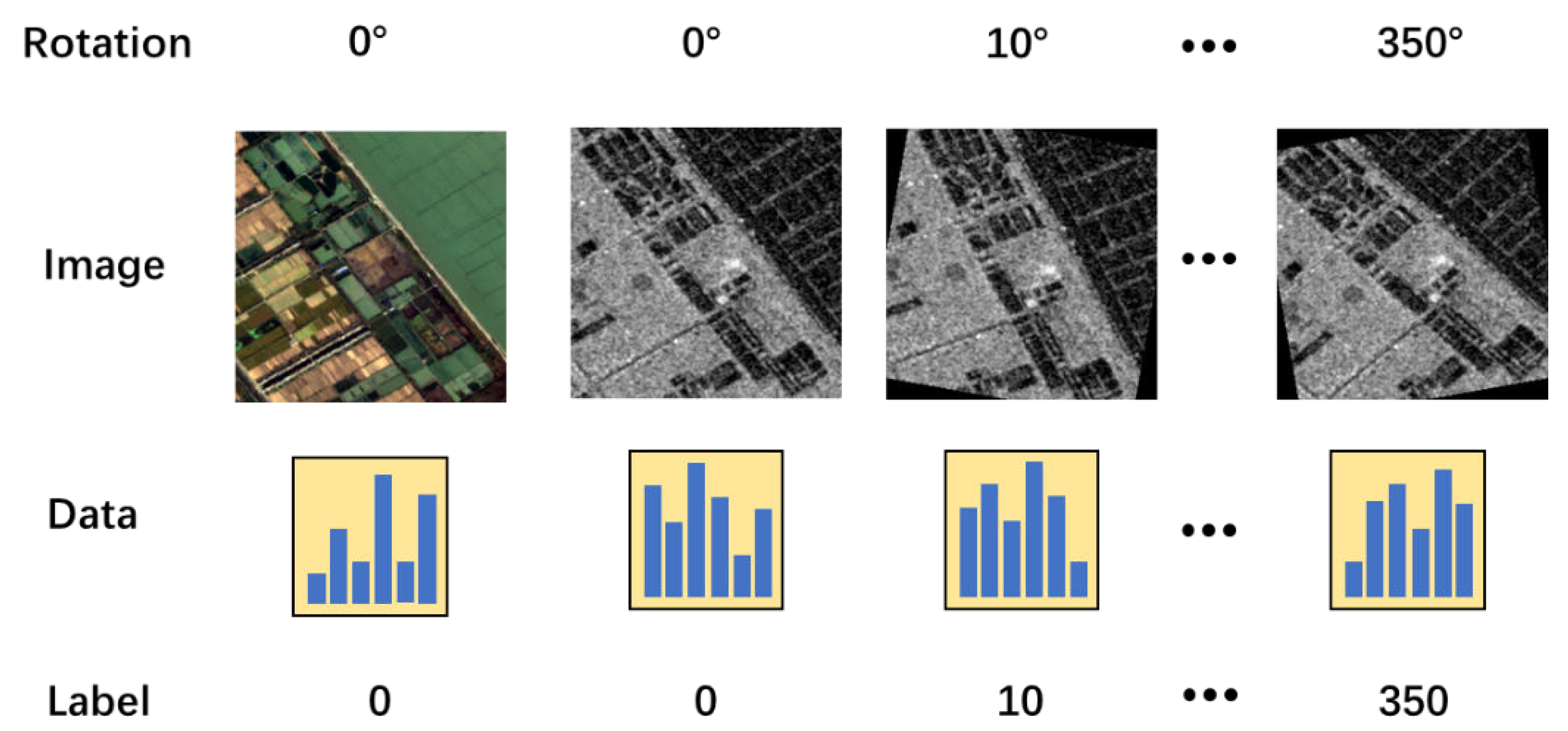

In this paper, we address the above limitations by proposing a robust optical and SAR image registration method based on deep and Gaussian features. We present a neural network named RotNET to predict the rotation relationship between optical and SAR images. In addition, we put forward a HOG-like algorithm based on the Gaussian pyramid. The proposed method comprises the following two parts.

First, inspired by the Siamese network structure, this study proposes RotNET, which is equipped with a two-branch network to predict the rotation relationship. Unlike a Siamese network, which uses a convolutional neural network structure, RotNET applies a multi-layer neural network to predict the rotation relationship between two images. RotNET is able to accurately predict the rotation relationship between optical and SAR images from the gradient histograms of the two images.
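To make the role of the gradient histogram concrete, the following minimal sketch computes a magnitude-weighted histogram of gradient directions and estimates a rotation as the circular shift that best aligns two such histograms. This is a classical baseline shown only for illustration, not RotNET itself, which learns the mapping with a two-branch multi-layer network. The function names `orientation_histogram` and `best_circular_shift`, the 36-bin resolution, and the central-difference gradient are all assumptions of this sketch.

```python
import math

def orientation_histogram(image, bins=36):
    """Magnitude-weighted histogram of gradient directions over [0, 360).

    `image` is a list of rows of grey values. Bin k is centred on
    k * (360 / bins) degrees, in image coordinates (rows grow downward).
    """
    width = 360.0 / bins
    hist = [0.0] * bins
    for r in range(1, len(image) - 1):
        for c in range(1, len(image[0]) - 1):
            # Central differences; an assumption, other gradient operators work too.
            gx = (image[r][c + 1] - image[r][c - 1]) / 2.0
            gy = (image[r + 1][c] - image[r - 1][c]) / 2.0
            magnitude = math.hypot(gx, gy)
            if magnitude > 0.0:
                angle = math.degrees(math.atan2(gy, gx)) % 360.0
                hist[int(round(angle / width)) % bins] += magnitude
    return hist

def best_circular_shift(h_ref, h_mov):
    """Shift s (in bins) maximising sum_i h_ref[i] * h_mov[(i + s) % n]."""
    n = len(h_ref)

    def score(s):
        return sum(h_ref[i] * h_mov[(i + s) % n] for i in range(n))

    return max(range(n), key=score)

# Toy check: a horizontal ramp and a vertical ramp differ by a quarter turn.
horizontal = [[float(c) for c in range(8)] for _ in range(8)]
vertical = [[float(r) for _ in range(8)] for r in range(8)]
shift = best_circular_shift(orientation_histogram(horizontal),
                            orientation_histogram(vertical))
angle_estimate = shift * 360.0 / 36  # degrees
```

On real optical-SAR pairs, speckle noise and NRD distort the histograms, so a plain shift search of this kind is expected to be unreliable; that gap is the motivation for a learned predictor.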

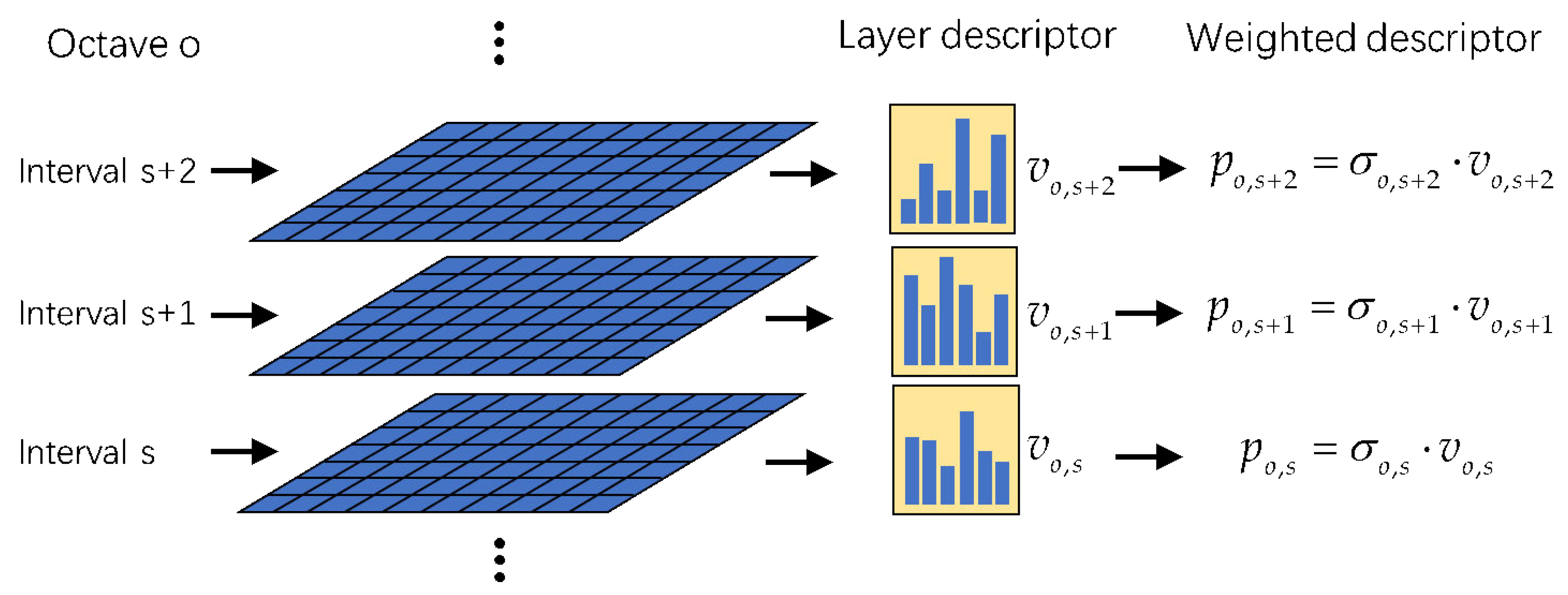

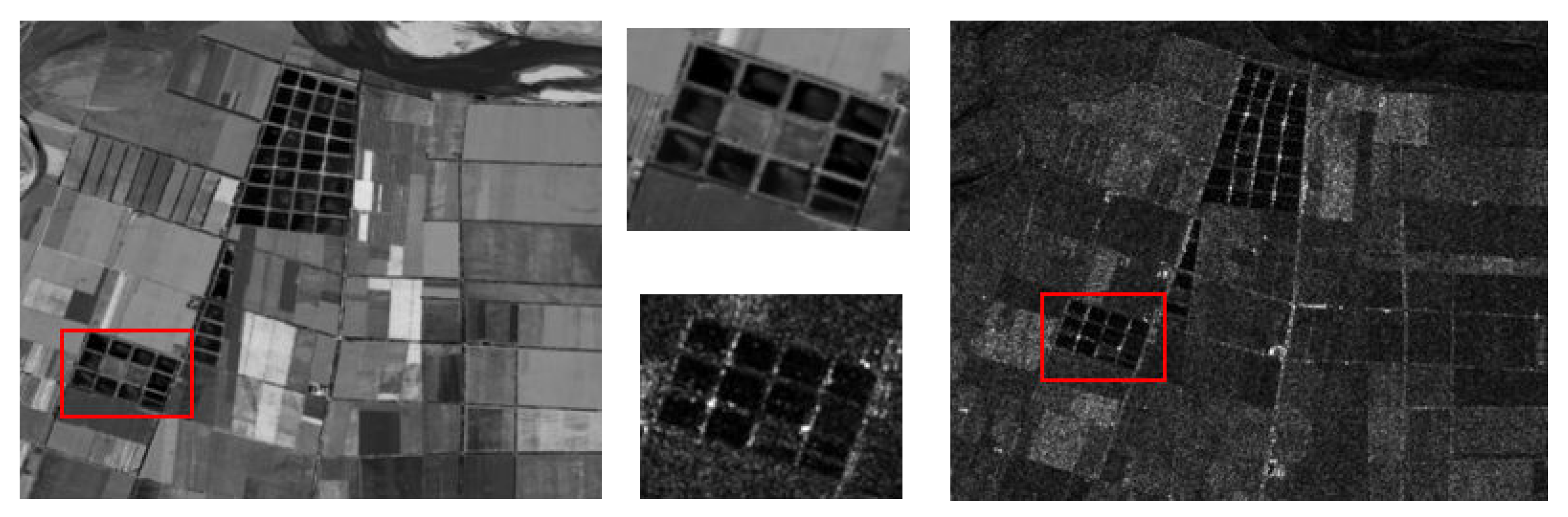

Second, we investigate whether a PC response is a necessary preliminary step for constructing a descriptor, and whether spending substantial computing resources on the PC response actually improves the algorithm. A novel descriptor, named Gaussian pyramid features of oriented gradients (GPOG), is proposed to establish a one-cell block descriptor. The GPOG descriptor captures the structural and shape properties of the local region around each keypoint and tolerates the NRD and speckle noise of SAR images.
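The idea of a one-cell descriptor built on a Gaussian pyramid can be sketched as follows. This is an illustrative reading under stated assumptions, not the GPOG construction itself, whose details are given in Section 2 and may differ. The sketch assumes a 5-tap binomial kernel as the Gaussian approximation, three pyramid levels, nine unsigned orientation bins, and L2 normalisation; the names `blur`, `cell_histogram`, and `pyramid_descriptor` are hypothetical.

```python
import math

# 5-tap binomial kernel, a common approximation of a Gaussian (assumption).
KERNEL = (1 / 16, 4 / 16, 6 / 16, 4 / 16, 1 / 16)

def blur(image):
    """Separable smoothing with replicated borders."""
    rows, cols = len(image), len(image[0])

    def clamp(i, n):
        return min(max(i, 0), n - 1)

    horiz = [[sum(k * row[clamp(c + d - 2, cols)] for d, k in enumerate(KERNEL))
              for c in range(cols)] for row in image]
    return [[sum(k * horiz[clamp(r + d - 2, rows)][c] for d, k in enumerate(KERNEL))
             for c in range(cols)] for r in range(rows)]

def cell_histogram(image, bins=9):
    """Single histogram for the whole patch: unsigned orientation in [0, 180)."""
    width = 180.0 / bins
    hist = [0.0] * bins
    for r in range(1, len(image) - 1):
        for c in range(1, len(image[0]) - 1):
            gx = (image[r][c + 1] - image[r][c - 1]) / 2.0
            gy = (image[r + 1][c] - image[r - 1][c]) / 2.0
            magnitude = math.hypot(gx, gy)
            if magnitude > 0.0:
                angle = math.degrees(math.atan2(gy, gx)) % 180.0
                hist[int(round(angle / width)) % bins] += magnitude
    return hist

def pyramid_descriptor(patch, levels=3, bins=9):
    """Concatenate one orientation histogram per pyramid level, then L2-normalise."""
    descriptor, level = [], patch
    for _ in range(levels):
        descriptor.extend(cell_histogram(level, bins))
        # Next level: smooth, then keep every second row and column.
        level = [row[::2] for row in blur(level)[::2]]
    norm = math.sqrt(sum(v * v for v in descriptor)) or 1.0
    return [v / norm for v in descriptor]

patch = [[float(c) for c in range(16)] for _ in range(16)]
descriptor = pyramid_descriptor(patch)  # 3 levels x 9 bins = 27 values
```

Because each level uses a single cell, no per-pixel interpolation between neighbouring cells or blocks is required, which is the cost that the second limitation above attributes to the standard HOG layout.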

The main contributions of this work are as follows:

- 1.

RotNET is proposed to precisely predict the rotation relationship between optical and SAR images. Compared with other algorithms, RotNET solves the rotation problem by means of deep learning.

- 2.

A one-block system is designed to describe the relationship between optical and SAR images. By using the Gaussian pyramid to build a one-cell-block HOG descriptor, the novel descriptor is more robust against NRD and the speckle noise of SAR.

The rest of this paper is organized as follows. In Section 2, the structure of RotNET and the details of the GPOG descriptor based on the Gaussian pyramid are described, and a scheme for optical and SAR image registration is proposed. In Section 3, experiments on the repeatability rate of the rotation relationship predicted by RotNET, the similarity map of the GPOG descriptor, and the accuracy of the GPOG descriptor are carried out. In Section 4, the conclusions and recommendations are provided.

4. Conclusions

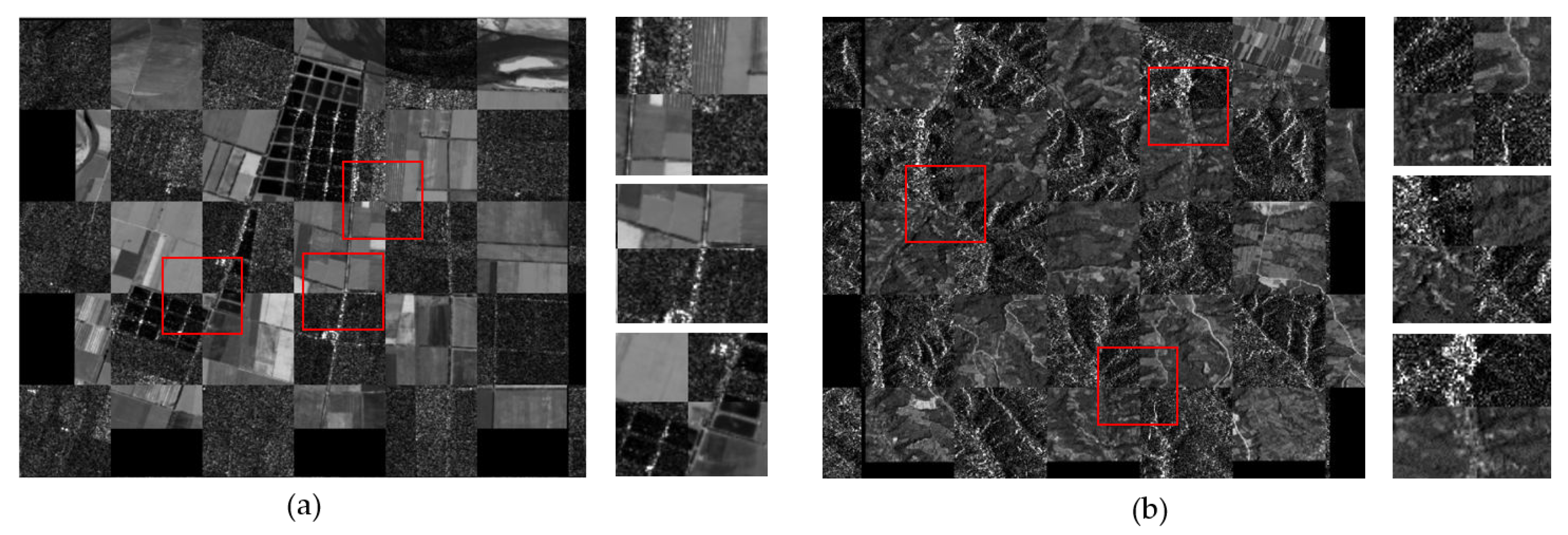

In this paper, inspired by the structure of the Siamese network, we propose a novel neural network framework (named RotNET) to predict the rotation relationship between SAR and optical images. To train RotNET, we constructed a gradient histogram dataset based on the SEN1-2 dataset. We then propose the GPOG descriptor, which applies a one-cell block system within the Gaussian pyramid; the pyramid builds the scale space and extracts the important features.

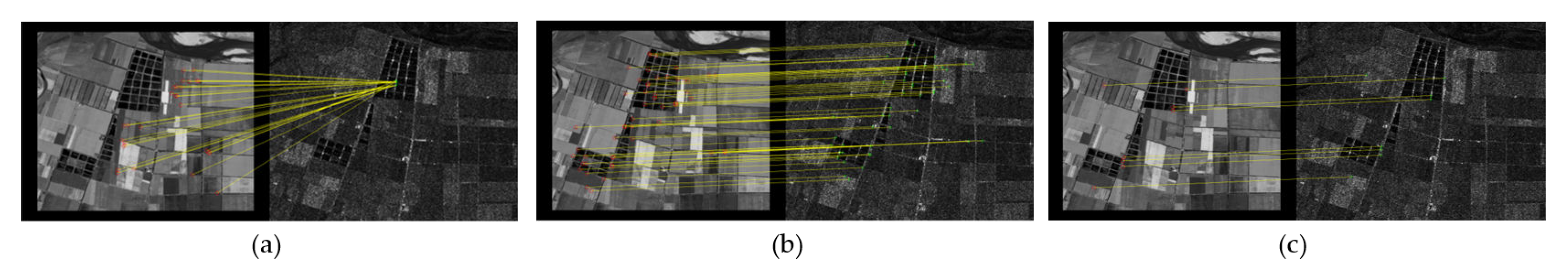

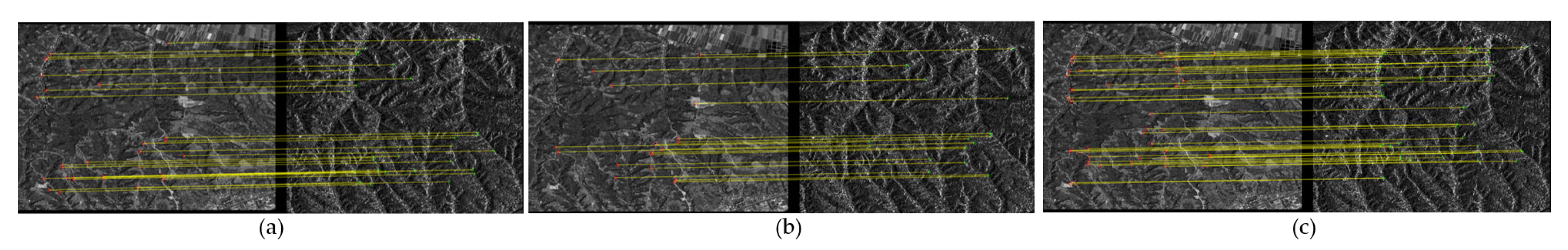

To validate the proposed work, we carry out specific and quantitative experiments. First, we build our own dataset based on the SEN1-2 dataset to train RotNET, and we test RotNET on both dataset images and real-world images. The experiment shows that RotNET can find the rotation relationship between optical and SAR images, both in the dataset and in real-world images. Second, we design two experiments to test the performance of the GPOG descriptor. In the first test, we compare the GPOG descriptor with other descriptors using similarity maps, and the results show that the applicability and convergence performance of GPOG are better. In the second test, we compare the GPOG descriptor with other descriptors using the RMSE and NCM criteria, and the results show that the GPOG descriptor is robust to SAR speckle noise and NRD.
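The two criteria can be illustrated with a short sketch. It assumes that registration is modelled by a 2-D affine transform, that RMSE is taken over the residuals of matched point pairs, and that NCM is read in its usual sense as the number of correct matches, that is, matches whose residual is within a pixel threshold. The 3-pixel threshold and the function names are assumptions of this sketch, not values taken from the experiments.

```python
import math

def apply_affine(params, point):
    """Map (x, y) with params = (a, b, tx, c, d, ty): x' = a*x + b*y + tx, y' = c*x + d*y + ty."""
    a, b, tx, c, d, ty = params
    x, y = point
    return (a * x + b * y + tx, c * x + d * y + ty)

def residuals(params, matches):
    """Euclidean distance between each transformed source point and its matched target."""
    result = []
    for source, target in matches:
        px, py = apply_affine(params, source)
        result.append(math.hypot(px - target[0], py - target[1]))
    return result

def rmse(params, matches):
    """Root-mean-square error of the residuals, in pixels."""
    res = residuals(params, matches)
    return math.sqrt(sum(r * r for r in res) / len(res))

def ncm(params, matches, threshold=3.0):
    """Number of matches whose residual is within `threshold` pixels (assumed value)."""
    return sum(1 for r in residuals(params, matches) if r <= threshold)

# Toy data: a pure translation by (2, -1); the third match is a 4-pixel outlier.
params = (1.0, 0.0, 2.0, 0.0, 1.0, -1.0)
matches = [((0.0, 0.0), (2.0, -1.0)),
           ((1.0, 1.0), (3.0, 0.0)),
           ((5.0, 5.0), (7.0, 8.0))]
error = rmse(params, matches)
correct = ncm(params, matches)
```

A lower RMSE and a higher NCM both indicate better registration; reporting them together guards against a low RMSE that is obtained from only a handful of surviving matches.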

The RotNET neural network framework can predict the rotation relationship regardless of the size of the two images and can be applied to change detection, image analysis, and image preprocessing. The GPOG descriptor can play a role in image registration, fusion of multi-sensor images, and image coding. In the future, we will test RotNET and the GPOG descriptor on more multi-sensor images with irradiance differences, such as optical and light detection and ranging (LiDAR) images.