Rich CNN Features for Water-Body Segmentation from Very High Resolution Aerial and Satellite Imagery

Abstract

1. Introduction

- We propose a rich feature extraction network for extracting water bodies in complex scenes from very high resolution (VHR) remote sensing imagery. A novel multi-feature extraction and combination (MEC) module captures feature information from a small receptive field, from a large receptive field, and across channels. Used as the basic unit of the encoder, this module extracts feature information at every scale.

- We present a simple and effective multi-scale prediction optimization module to achieve finer water-body segmentation by aggregating prediction results from different scales.

- An encoder-decoder semantic feature fusion module is designed to promote the global consistency of feature representation between the encoder and decoder.
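
The idea of aggregating prediction results from different scales can be illustrated with a minimal sketch. It assumes square probability maps, nearest-neighbour upsampling, and plain averaging; these choices, the function names `upsample_nearest` and `fuse_predictions`, and the toy values are all hypothetical and are not taken from the paper, whose fusion module may well be learned.

```python
from typing import List

Grid = List[List[float]]


def upsample_nearest(pred: Grid, factor: int) -> Grid:
    """Nearest-neighbour upsampling: repeat each cell `factor` times per axis."""
    out: Grid = []
    for row in pred:
        wide = [value for value in row for _ in range(factor)]
        out.extend(list(wide) for _ in range(factor))
    return out


def fuse_predictions(preds: List[Grid], size: int) -> Grid:
    """Average square per-scale probability maps on a common size x size grid.

    Plain averaging is an assumption made for illustration only.
    """
    fused = [[0.0] * size for _ in range(size)]
    for pred in preds:
        if size % len(pred) != 0:
            raise ValueError("target size must be a multiple of each map size")
        up = upsample_nearest(pred, size // len(pred))
        for y in range(size):
            for x in range(size):
                fused[y][x] += up[y][x] / len(preds)
    return fused


# Toy water-probability maps at two scales (values invented for the example).
fine = [
    [1.0, 1.0, 0.0, 0.0],
    [1.0, 0.5, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 1.0],
]
coarse = [
    [1.0, 0.0],
    [0.0, 0.5],
]

fused = fuse_predictions([fine, coarse], size=4)
# Threshold the fused probabilities to obtain a binary water mask.
water_mask = [[1 if p >= 0.5 else 0 for p in row] for row in fused]
```

In this toy case the coarse map reinforces the uncertain fine-scale cell at row 1, column 1 (0.5 becomes 0.75), which is the kind of cross-scale correction that prediction aggregation is meant to provide.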

2. Methodology

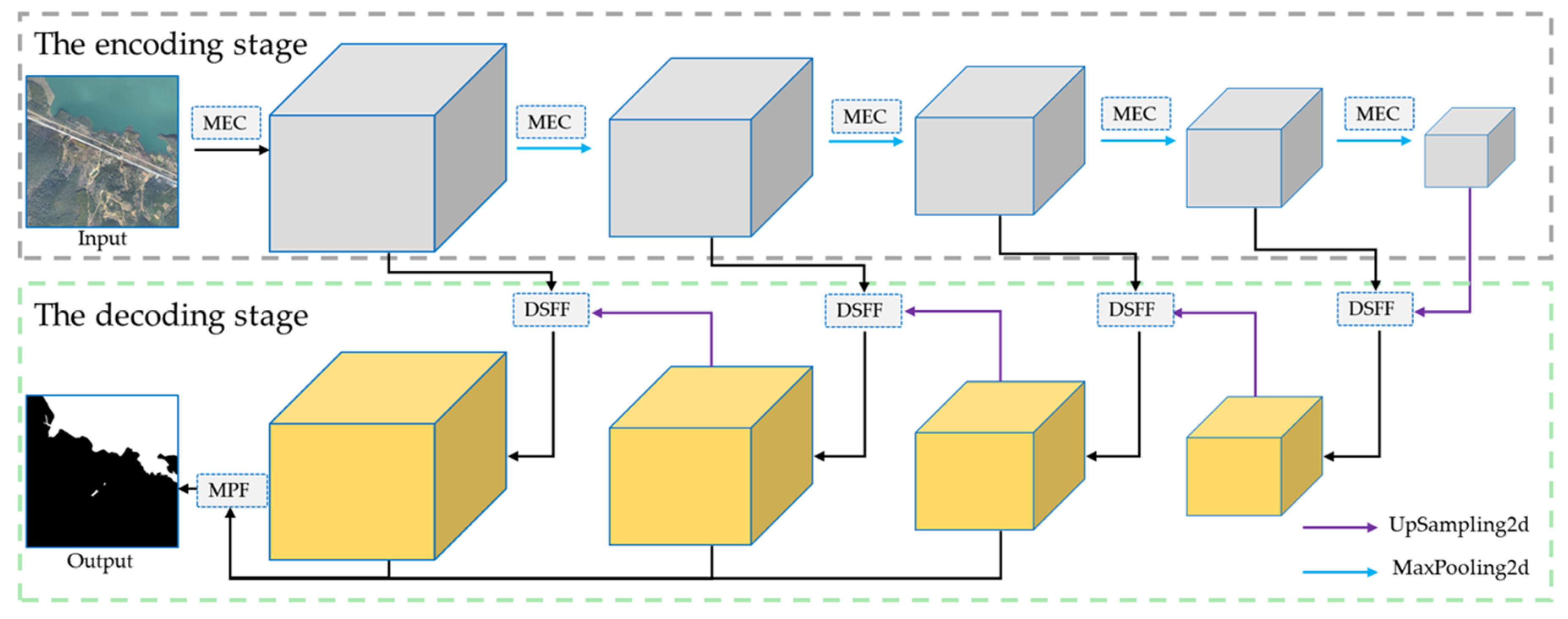

2.1. MECNet Architecture

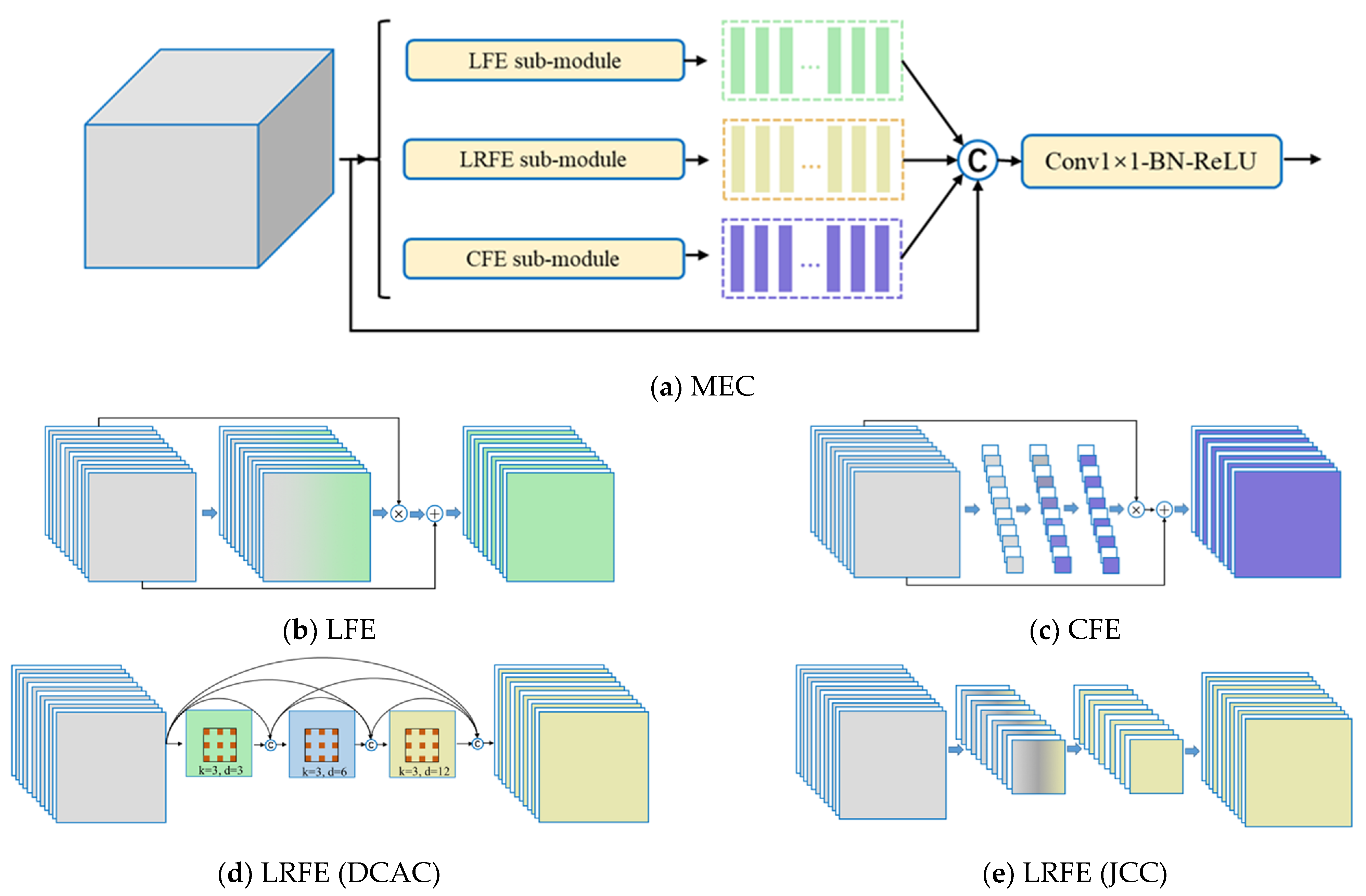

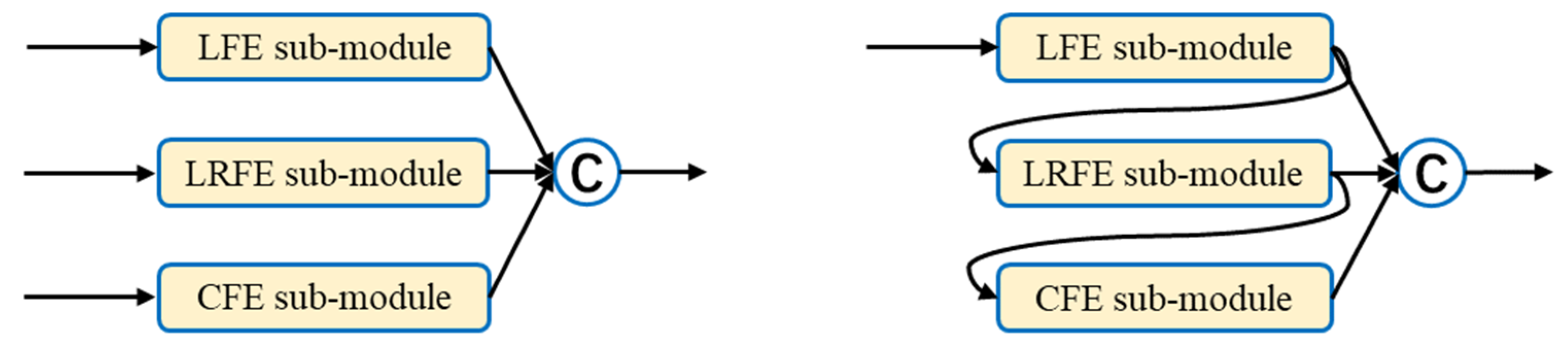

2.2. Multi-Feature Extraction and Combination Module

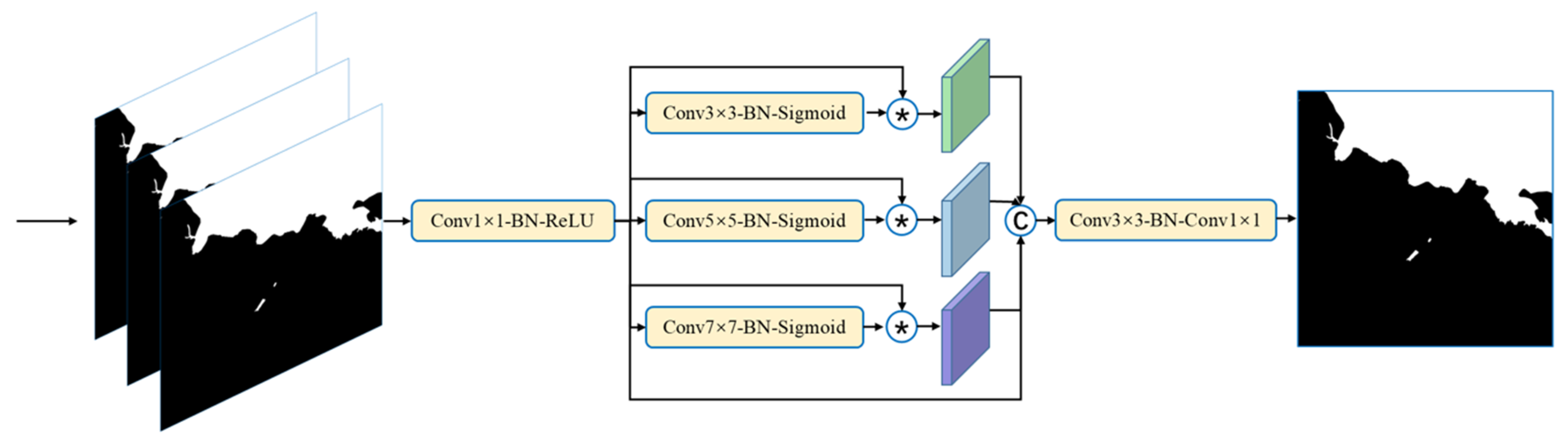

2.3. Multi-Scale Prediction Fusion Module

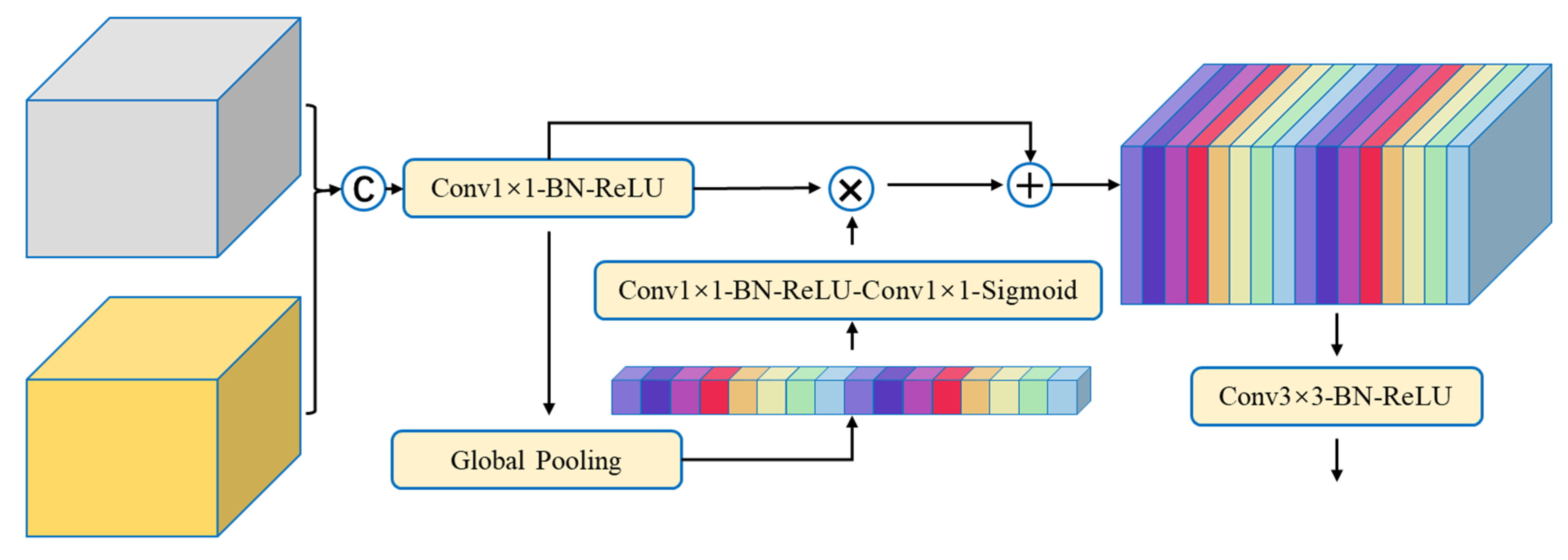

2.4. Encoder-Decoder Semantic Features Fusion Module

2.5. The Total Loss Function

2.6. Implementation Details

3. Results and Analysis

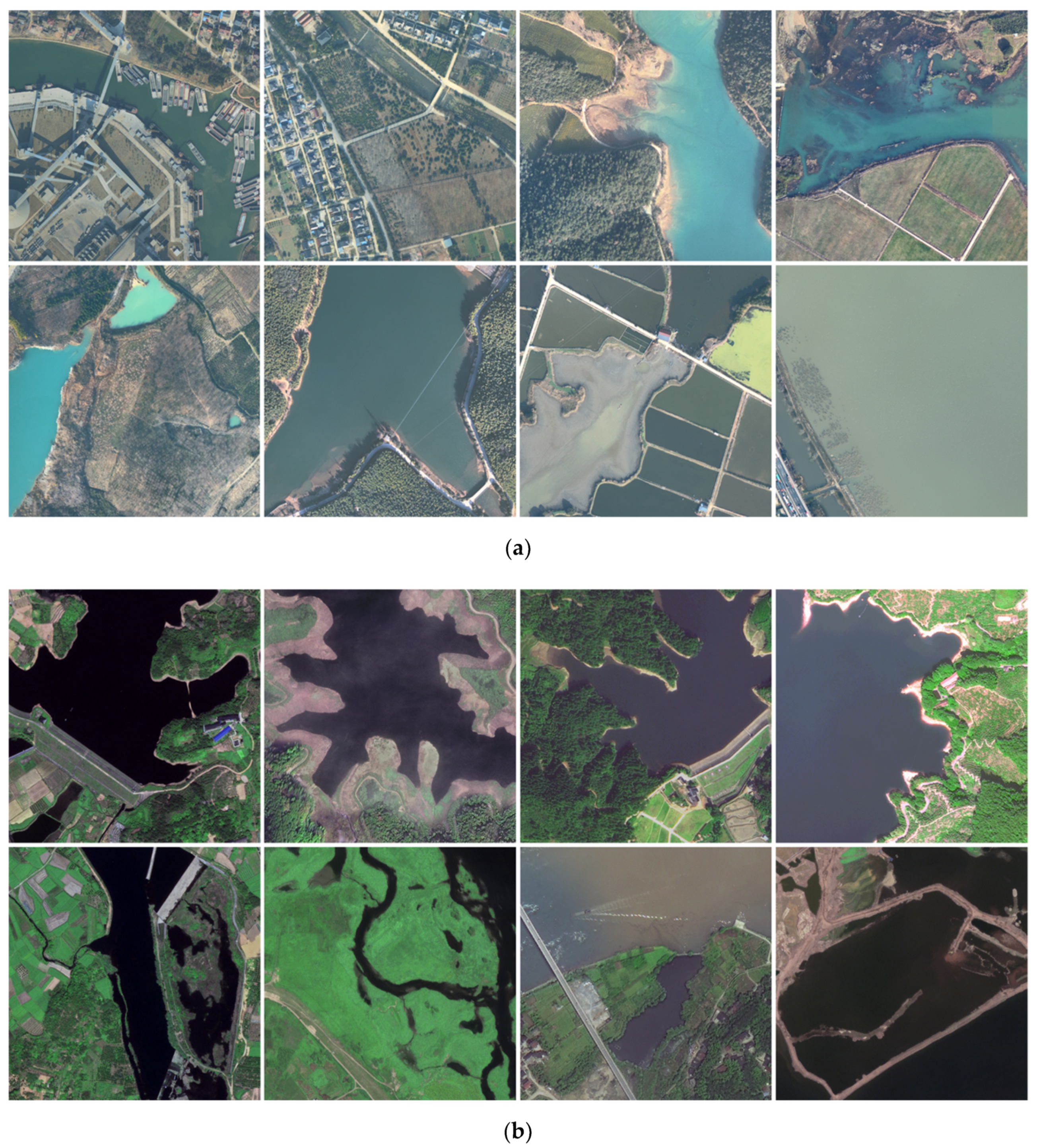

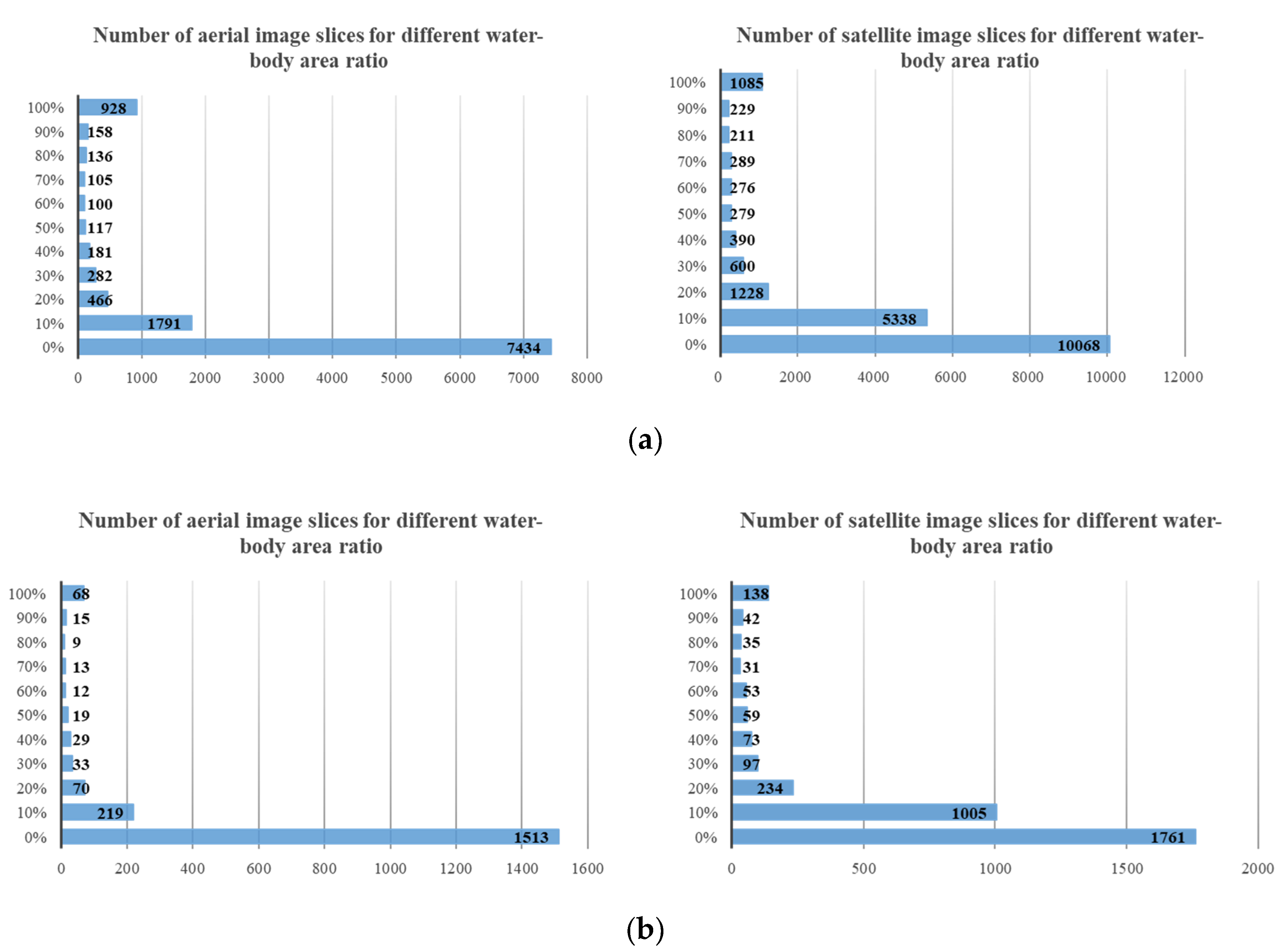

3.1. Water-Body Dataset

3.2. Evaluation Metrics
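
The result tables report precision, recall, and IoU for the water class. A minimal sketch of the standard pixel-count definitions of these metrics follows; the helper names and the toy masks are hypothetical, and the exact evaluation protocol used by the authors (for example, per-image versus pooled counts) is an assumption here.

```python
from typing import Dict, List

Mask = List[List[int]]  # 1 = water, 0 = background


def confusion_counts(pred: Mask, truth: Mask) -> Dict[str, int]:
    """Count true positives, false positives, and false negatives for water."""
    tp = fp = fn = 0
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            if p == 1 and t == 1:
                tp += 1
            elif p == 1 and t == 0:
                fp += 1
            elif p == 0 and t == 1:
                fn += 1
    return {"tp": tp, "fp": fp, "fn": fn}


def water_metrics(pred: Mask, truth: Mask) -> Dict[str, float]:
    """Precision, recall, and IoU from pixel counts (0.0 when undefined)."""
    c = confusion_counts(pred, truth)
    tp, fp, fn = c["tp"], c["fp"], c["fn"]
    return {
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
        "iou": tp / (tp + fp + fn) if tp + fp + fn else 0.0,
    }


def iou_from_precision_recall(precision: float, recall: float) -> float:
    """IoU = P*R / (P + R - P*R); holds when all three share the same counts."""
    return precision * recall / (precision + recall - precision * recall)


# Toy masks (invented): 2 true positives, 1 false positive, 1 false negative.
pred = [[1, 1, 0], [1, 0, 0]]
truth = [[1, 0, 0], [1, 1, 0]]
metrics = water_metrics(pred, truth)
```

As a rough consistency check, `iou_from_precision_recall(0.9157, 0.9888)` gives about 0.906, close to the 0.9064 reported for MECNet in the first results table. That agreement is what one would expect if the three metrics were computed from the same pooled pixel counts.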

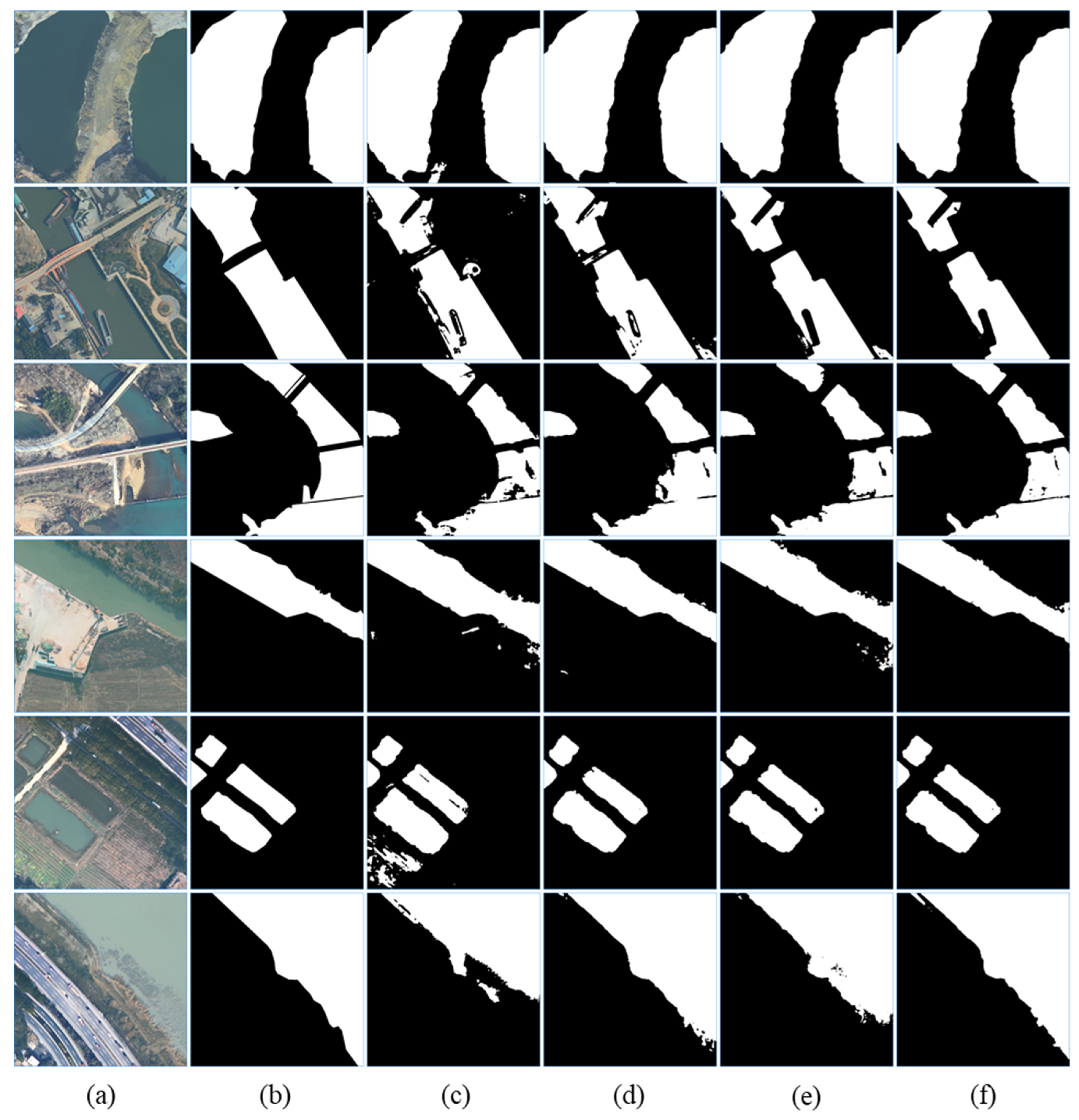

3.3. Water-Body Segmentation Results

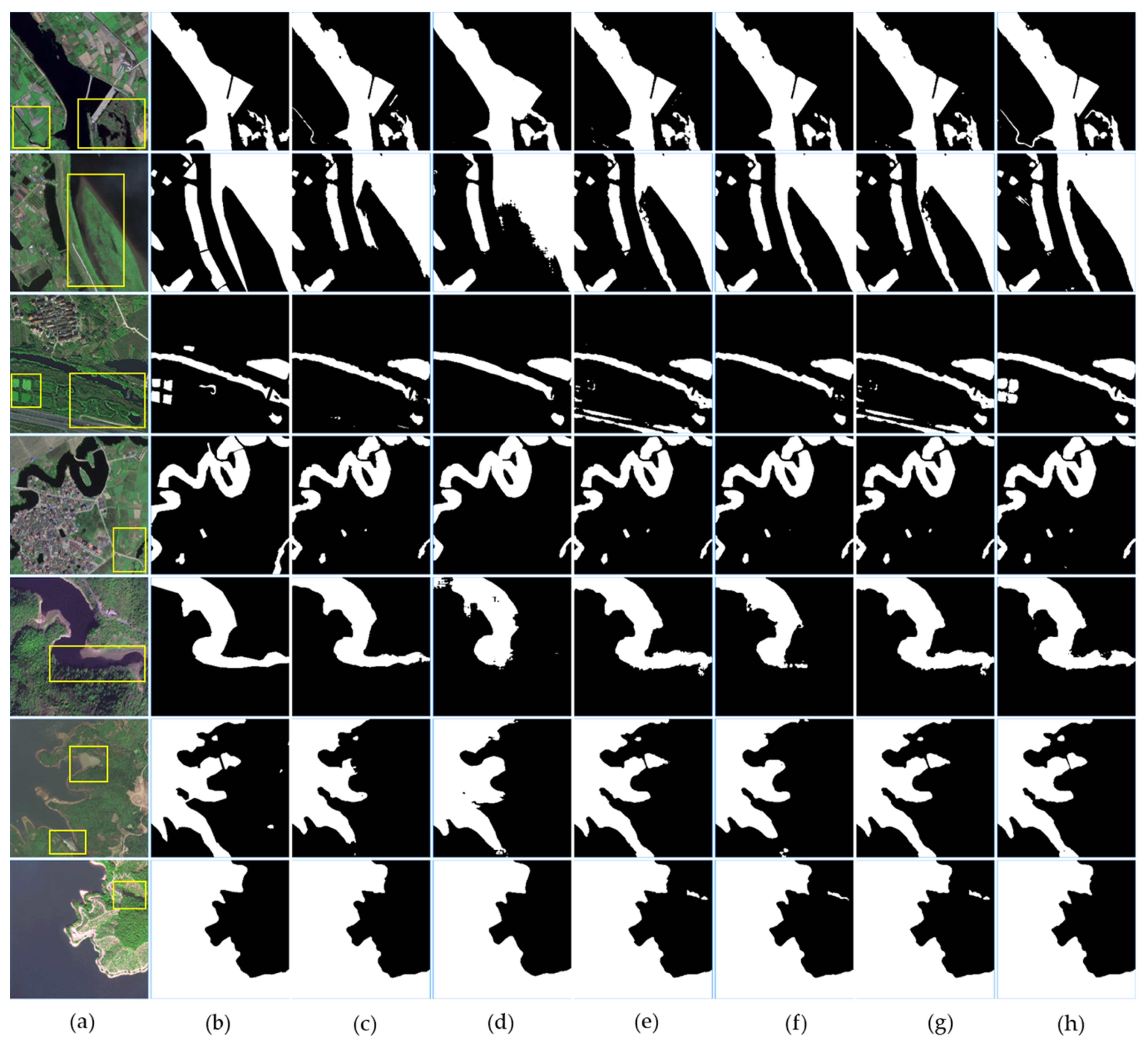

3.3.1. The Aerial Imagery

3.3.2. The Satellite Imagery

3.4. Ablation Studies

3.4.1. MECNet Components

3.4.2. LRFE Sub-Module

3.4.3. MEC Module

4. Discussion

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest


| | DCAC-Large1 | | | DCAC-Large2 | | |
|---|---|---|---|---|---|---|
| Layer | Kernel Size | Dilation Rates | RFS | Kernel Size | Dilation Rates | RFS |
| layer1 | 3 | (3, 6, 12, 18, 24) | 131 | 7 | (3, 6, 12) | 129 |
| layer2 | 3 | (3, 6, 12, 18) | 82 | 5 | (3, 6, 12) | 87 |
| layer3 | 3 | (3, 6, 12) | 45 | 3 | (3, 6, 12) | 45 |
| layer4 | 3 | (1, 3, 6) | 23 | 3 | (1, 3, 6) | 23 |
| layer5 | 3 | (1, 2, 3) | 15 | 3 | (1, 2, 3) | 15 |

| | DCAC-Small | | | JCC | | |
|---|---|---|---|---|---|---|
| Layer | Kernel Size | Dilation Rates | RFS | Kernel Size | Dilation Rates | RFS |
| layer1 | 3 | (3, 6, 12) | 45 | 7, 3 | (1) | 15 |
| layer2 | 3 | (3, 6, 12) | 45 | 7, 3 | (1) | 15 |
| layer3 | 3 | (3, 6, 12) | 45 | 7, 3 | (1) | 15 |
| layer4 | 3 | (1, 3, 6) | 23 | 7, 3 | (1) | 15 |
| layer5 | 3 | (1, 2, 3) | 15 | 7, 3 | (1) | 15 |

| Method | Backbone | Precision | Recall | IoU |
|---|---|---|---|---|
| U-Net | - | 0.9076 | 0.9374 | 0.8558 |
| RefineNet | ResNet-101 | 0.8741 | 0.9844 | 0.8621 |
| DeepLabV3+ | ResNet-101 | 0.9140 | 0.9417 | 0.8650 |
| DANet | ResNet-101 | 0.9259 | 0.9456 | 0.8790 |
| CascadePSP | DeepLabV3+ & ResNet-50 | 0.9203 | 0.9409 | 0.8700 |
| MECNet (ours) | - | 0.9157 | 0.9888 | 0.9064 |

| Method | Backbone | Precision | Recall | IoU |
|---|---|---|---|---|
| U-Net | - | 0.9119 | 0.9756 | 0.8916 |
| RefineNet | ResNet-101 | 0.9176 | 0.9578 | 0.8820 |
| DeepLabV3+ | ResNet-101 | 0.9379 | 0.9582 | 0.9010 |
| DANet | ResNet-101 | 0.9156 | 0.9658 | 0.8868 |
| CascadePSP | DeepLabV3+ & ResNet-50 | 0.9378 | 0.9586 | 0.9013 |
| MECNet (ours) | - | 0.9408 | 0.9630 | 0.9080 |

| Method | Parameters (M) | FLOPs (G) | IoU |
|---|---|---|---|
| FCN-8s | 15.31 | 81.00 | 0.8399 |
| FCN + MEC | 26.11 | 105.59 | 0.8930 |
| MEC + MPF | 35.46 | 254.29 | 0.8974 |
| MEC + MPF + DSFF (MECNet) | 30.07 | 185.58 | 0.9064 |

| Method | Parameters (M) | FLOPs (G) | IoU |
|---|---|---|---|
| FCN | 15.31 | 81.10 | 0.8399 |
| FCN + DCAC-Large1 | 12.88 | 140.96 | 0.8801 |
| FCN + DCAC-Large2 | 13.39 | 248.30 | 0.8841 |
| FCN + DCAC-Small | 12.70 | 106.57 | 0.8823 |
| FCN + JCC | 19.08 | 55.29 | 0.8816 |

| Method | Sub-modules enabled (of LFE, LRFE, CFE) | IoU |
|---|---|---|
| FCN | none | 0.8399 |
| FCN | one of three | 0.8478 |
| FCN | one of three | 0.8816 |
| FCN | one of three | 0.8835 |
| FCN | two of three | 0.8857 |
| FCN | two of three | 0.8851 |
| FCN | two of three | 0.8855 |
| FCN (C) | all three | 0.8910 |
| FCN (P) | all three | 0.8930 |


© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Zhang, Z.; Lu, M.; Ji, S.; Yu, H.; Nie, C. Rich CNN Features for Water-Body Segmentation from Very High Resolution Aerial and Satellite Imagery. Remote Sens. 2021, 13, 1912. https://doi.org/10.3390/rs13101912
