Detecting Square Markers in Underwater Environments

Abstract

1. Introduction

- a new method for generating synthetic images of markers in underwater conditions;

- a new algorithm for the detection of square markers that is adapted to poor visibility in underwater environments;

- a comparison of this algorithm with other state-of-the-art algorithms on synthetically generated images and on real images.

Related Work

2. Marker Detection and Image-Improving Algorithms

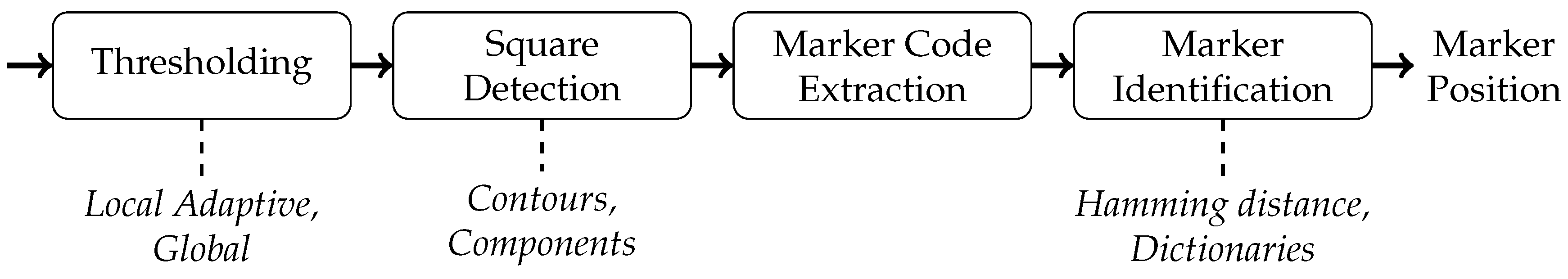

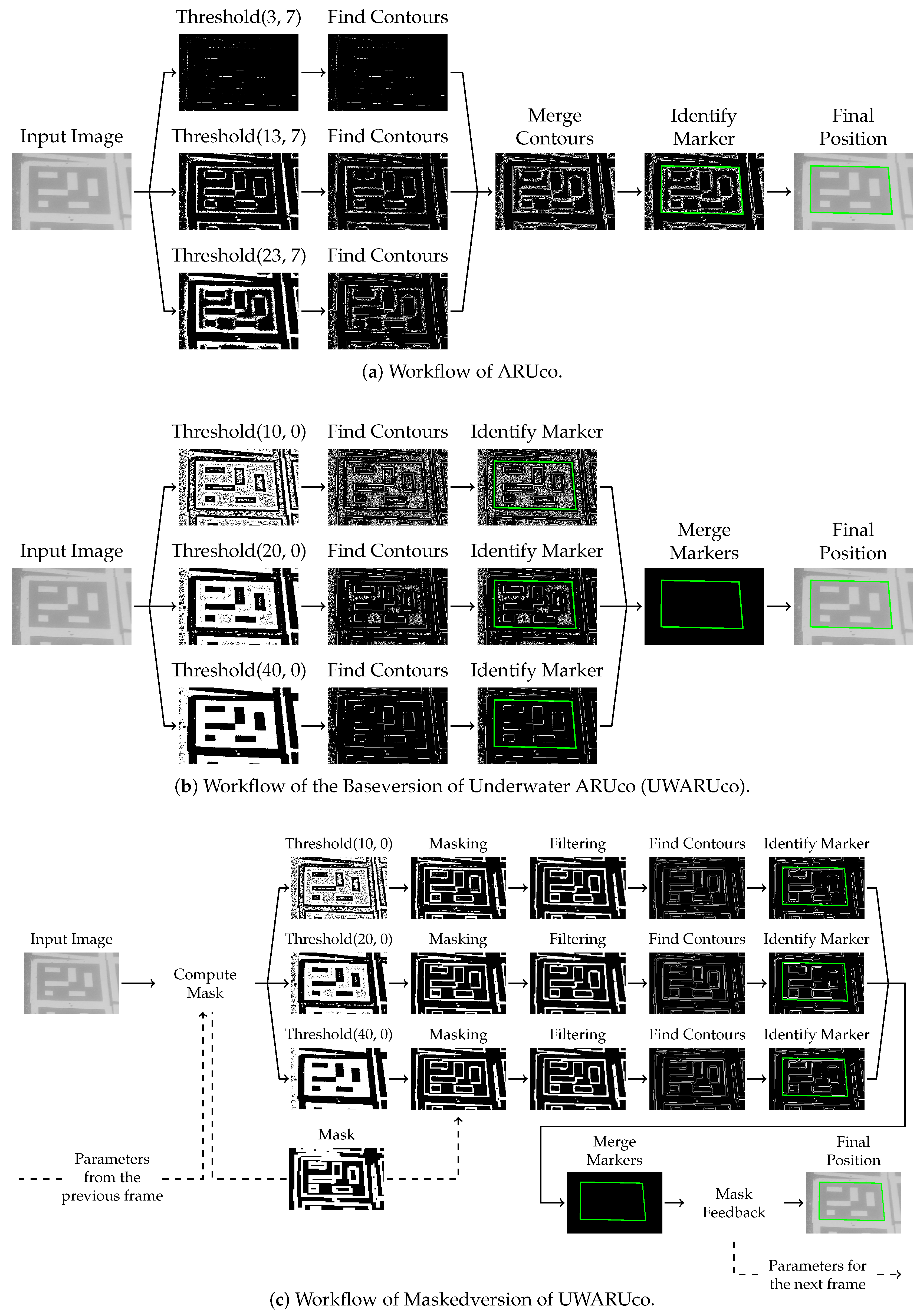

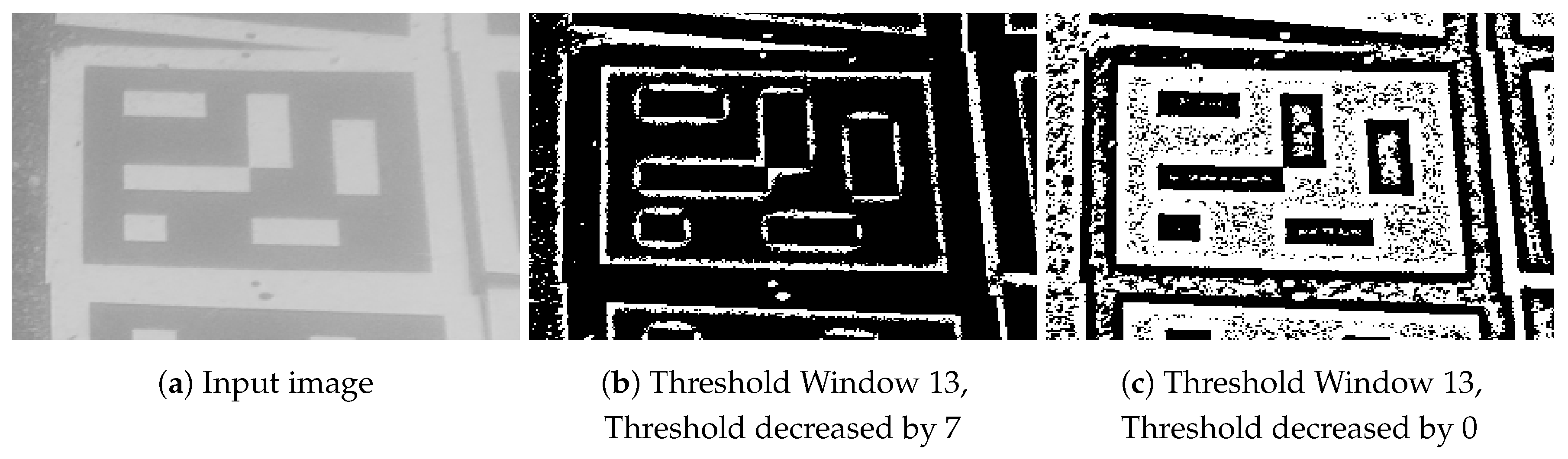

2.1. ARUco, ARUco3, and AprilTag2

2.2. Real-Time Algorithms Improving Underwater Images

2.3. Detection of Markers under Water

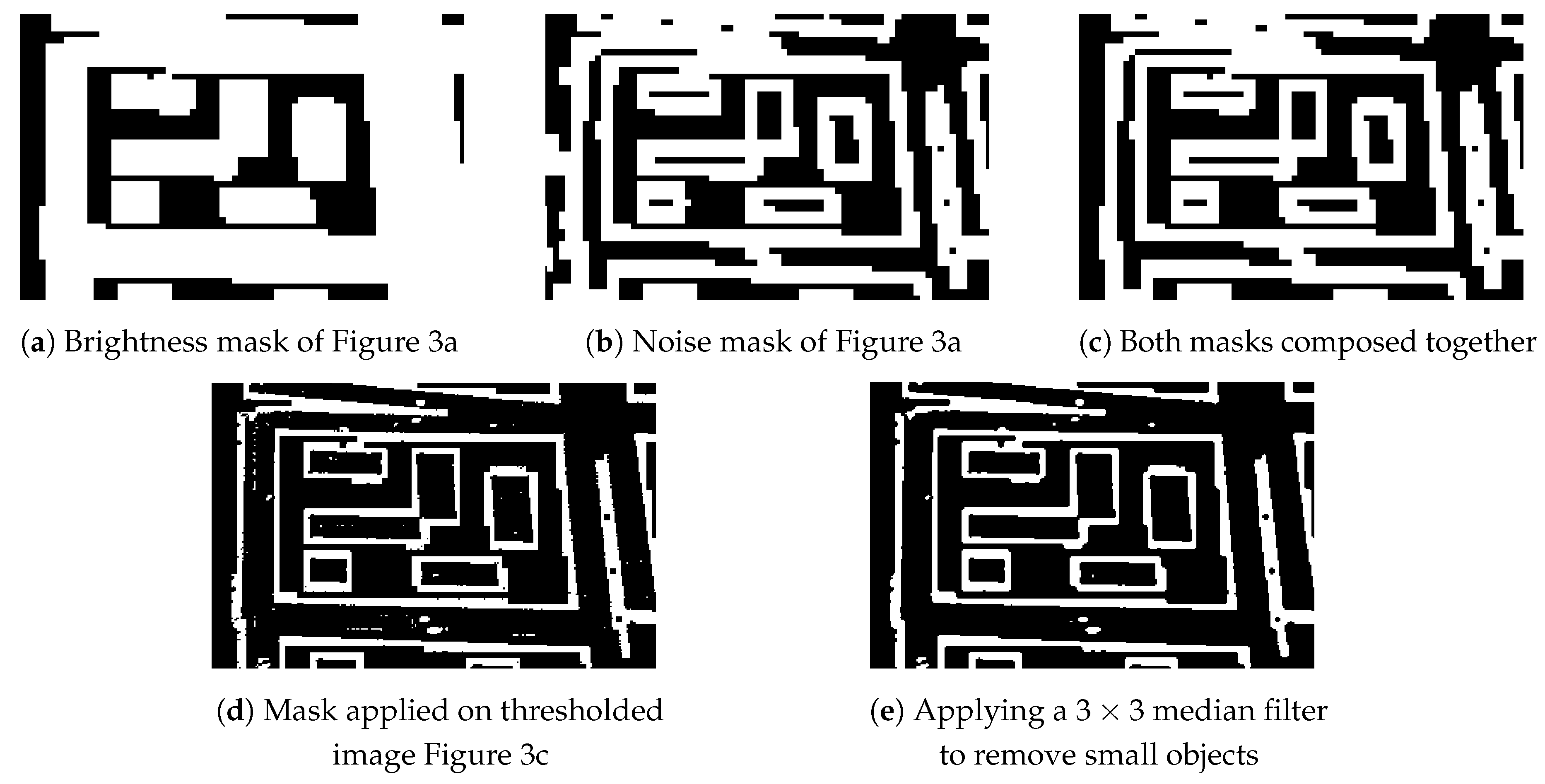

Algorithm 1: Pseudocode of the algorithm for computing the brightness mask, the noise mask, and the final mask.

Input: grey-scale image whose mask is to be computed, threshold for the brightness mask, threshold for the noise mask.

Output: brightness mask and noise mask.

- minimum image ← image of one fourth of the size of the input image, holding the minimums of pixels of the input image;

- maximum image ← image of one fourth of the size of the input image, holding the maximums of pixels of the input image;

- minimum of the surrounding pixels;

- maximum of the surrounding pixels;
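The steps that survive in Algorithm 1 (a reduced-size minimum image, a reduced-size maximum image, and minimums and maximums over surrounding pixels) can be illustrated with a minimal pure-Python sketch. It is not the authors' implementation. Several details are assumptions made only for illustration: "one fourth of the size" is read as a reduction by a factor of `block=4` per side, the surrounding pixels are taken as a 3 × 3 window, and the way the two thresholds are applied (hypothetical names `t_bright` and `t_noise`), as well as the combination into the final mask, are placeholders, because the corresponding steps are not recoverable from the listing.

```python
def block_reduce(img, block, func):
    """Shrink img by `block` per side, applying func (min or max) to each tile."""
    rows, cols = len(img) // block, len(img[0]) // block
    return [
        [
            func(img[y * block + dy][x * block + dx]
                 for dy in range(block) for dx in range(block))
            for x in range(cols)
        ]
        for y in range(rows)
    ]


def neighbourhood(img, func, radius=1):
    """Apply func over the window around each pixel, clipped at the image borders."""
    rows, cols = len(img), len(img[0])
    return [
        [
            func(img[ny][nx]
                 for ny in range(max(0, y - radius), min(rows, y + radius + 1))
                 for nx in range(max(0, x - radius), min(cols, x + radius + 1)))
            for x in range(cols)
        ]
        for y in range(rows)
    ]


def compute_masks(grey, t_bright, t_noise, block=4):
    """Return (brightness_mask, noise_mask, final_mask) at the reduced resolution."""
    # Reduced-size images holding block-wise minimums and maximums.
    small_min = block_reduce(grey, block, min)
    small_max = block_reduce(grey, block, max)
    # Minimum / maximum of the surrounding pixels (3 x 3 window, an assumption).
    local_min = neighbourhood(small_min, min)
    local_max = neighbourhood(small_max, max)
    rows, cols = len(local_max), len(local_max[0])
    # ASSUMPTION: placeholder thresholding, not taken from the paper.
    # Brightness: the local maximum reaches t_bright.
    brightness = [[local_max[y][x] >= t_bright for x in range(cols)]
                  for y in range(rows)]
    # Noise: the local contrast (max - min) reaches t_noise.
    noise = [[local_max[y][x] - local_min[y][x] >= t_noise for x in range(cols)]
             for y in range(rows)]
    # ASSUMPTION: the final mask keeps pixels that pass both tests.
    final = [[brightness[y][x] and noise[y][x] for x in range(cols)]
             for y in range(rows)]
    return brightness, noise, final
```

For a 16 × 16 image, `compute_masks(grey, 100, 50)` returns three 4 × 4 Boolean grids. Working at the reduced resolution keeps the per-pixel cost low, which is presumably the motivation for the downsampling step.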

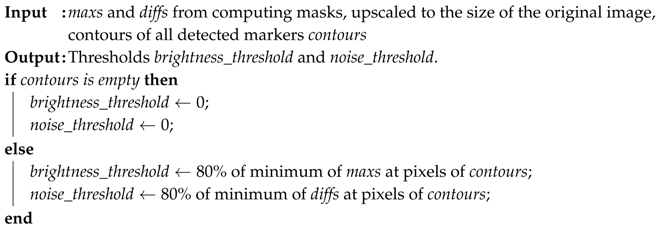

Algorithm 2: Pseudocode of the algorithm for computing thresholds for the brightness mask and the noise mask.

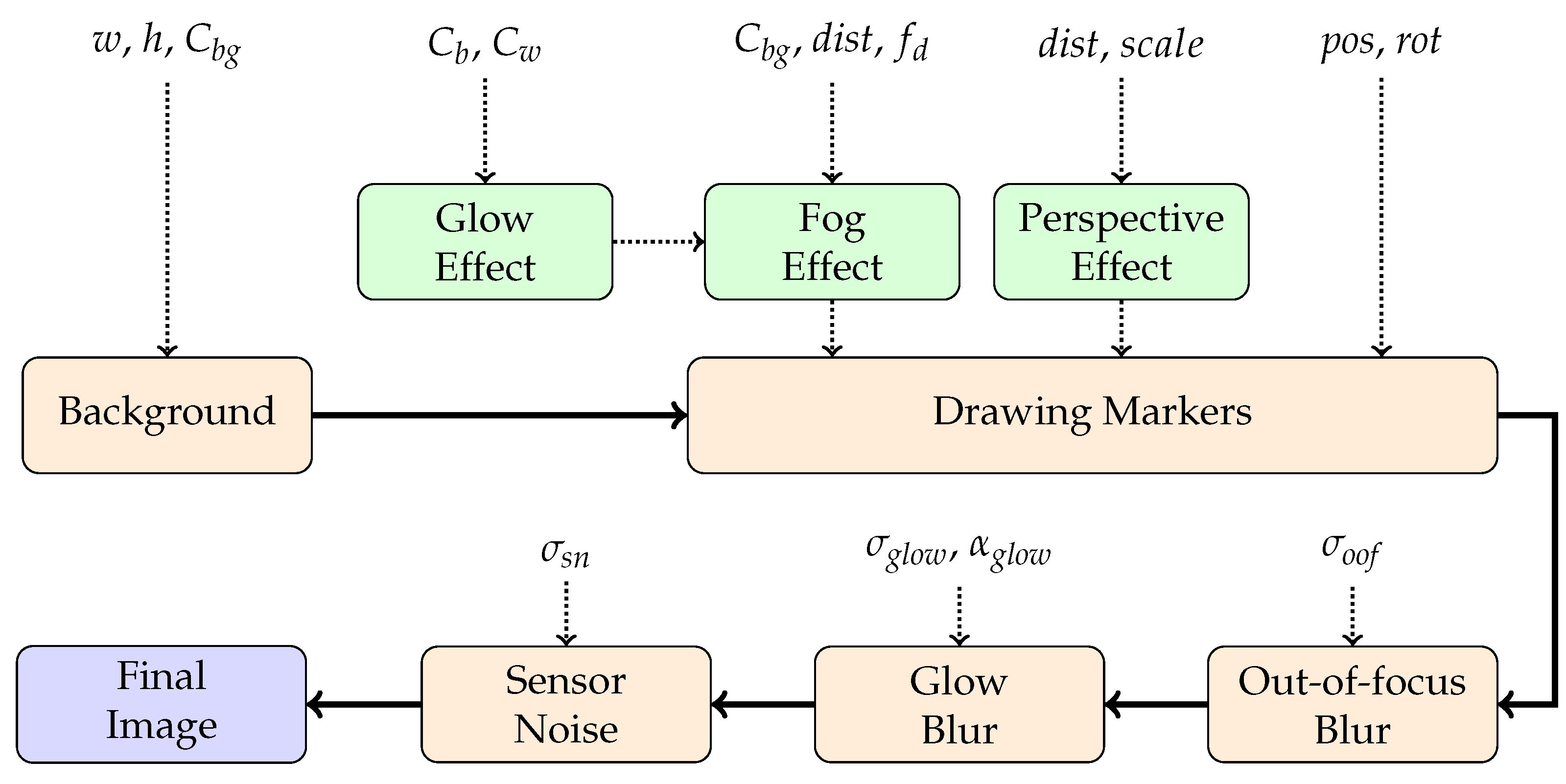

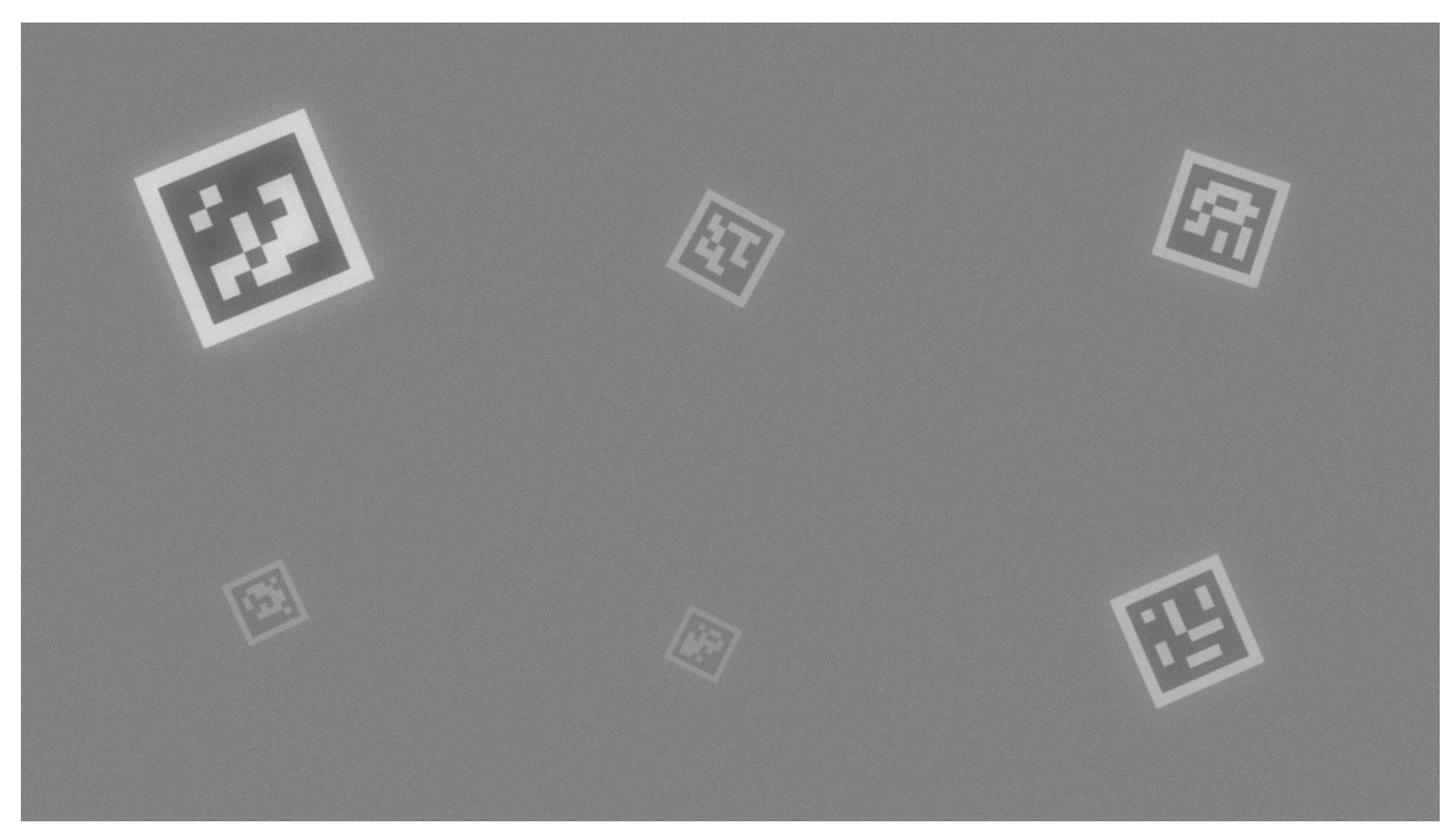

3. Generating Synthetic Images

4. Experiments with Synthetically Generated Images

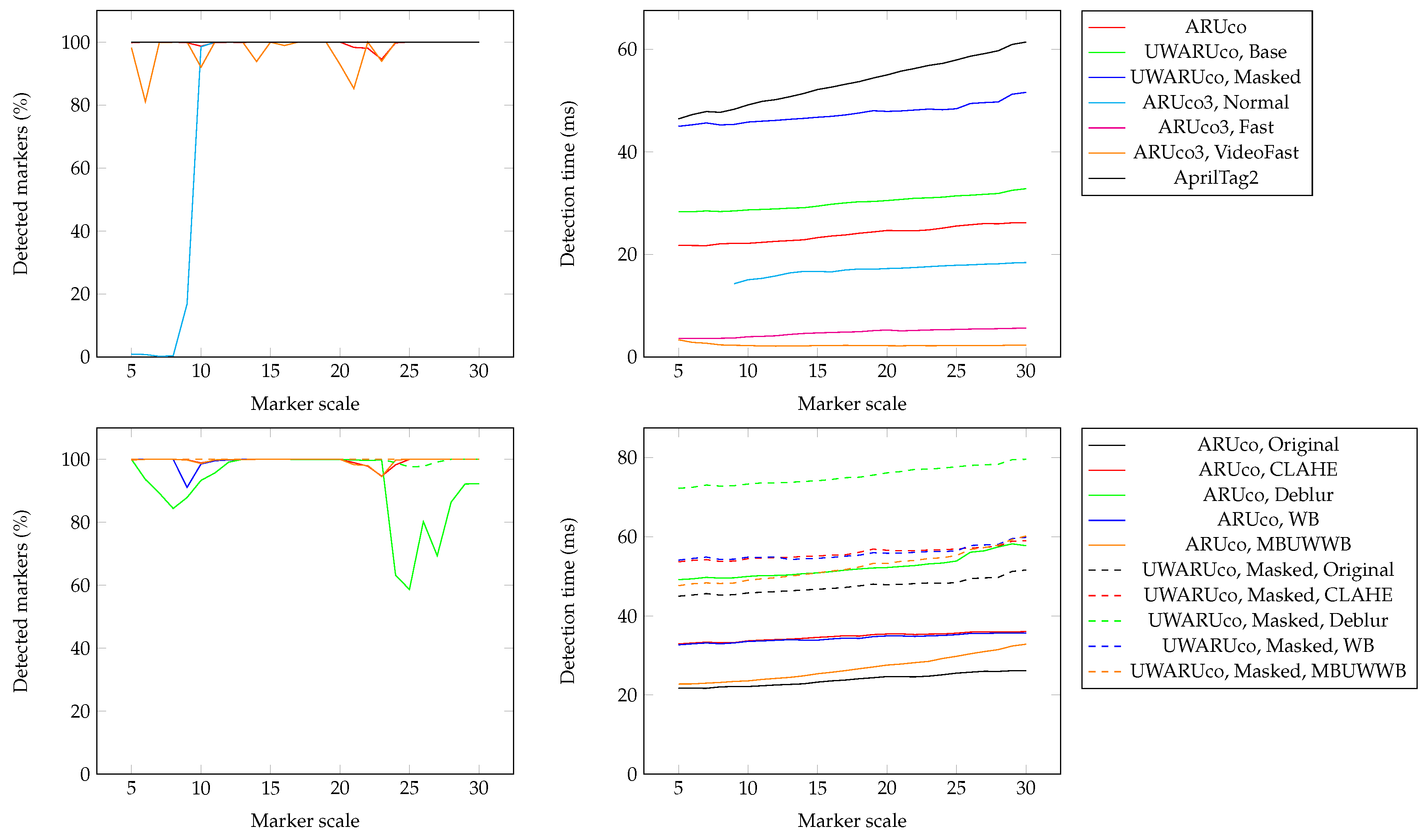

4.1. Reference with Good Visual Conditions

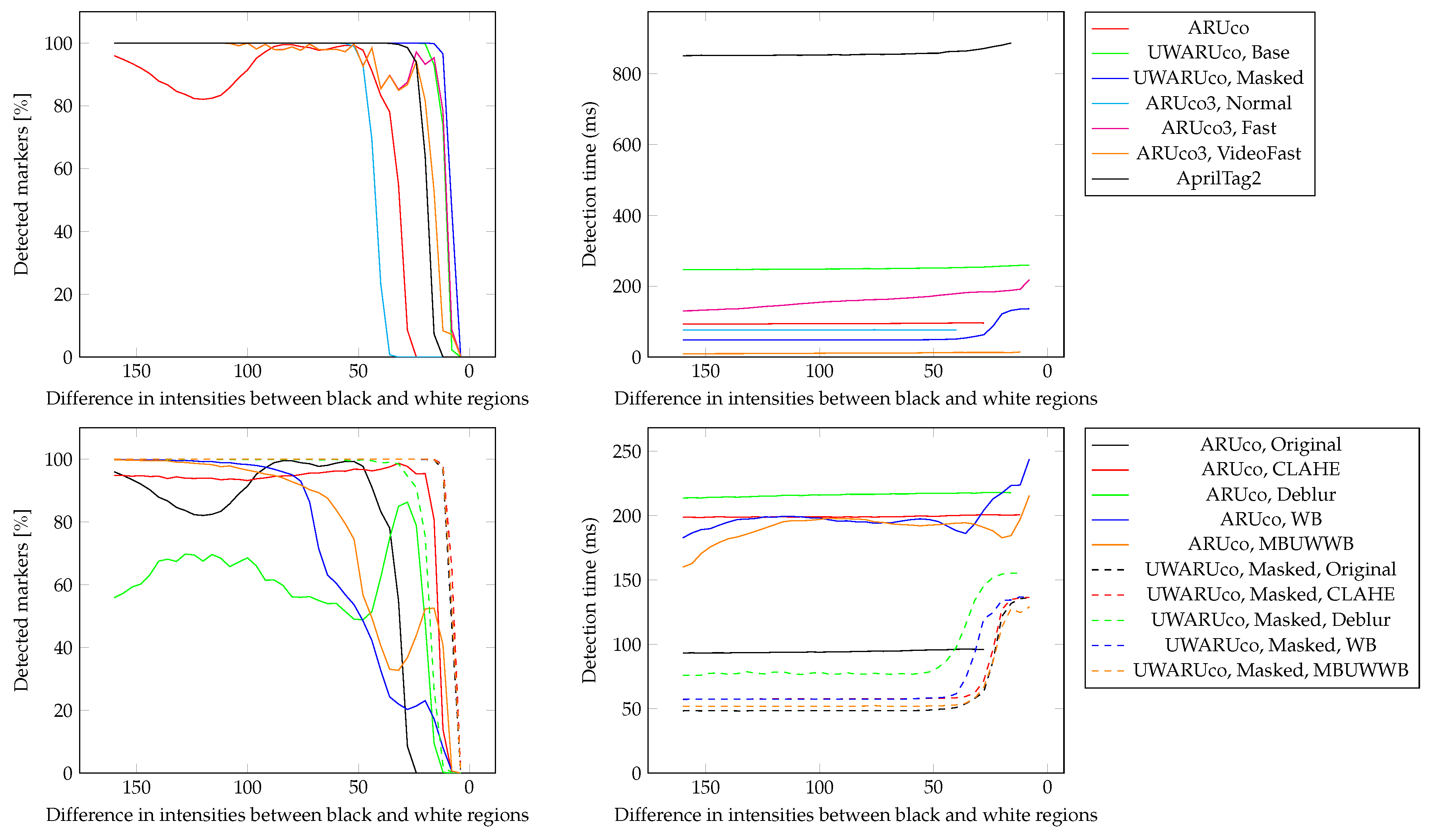

4.2. Bad Visibility Conditions

4.3. Foggy Conditions and Markers at Different Distances

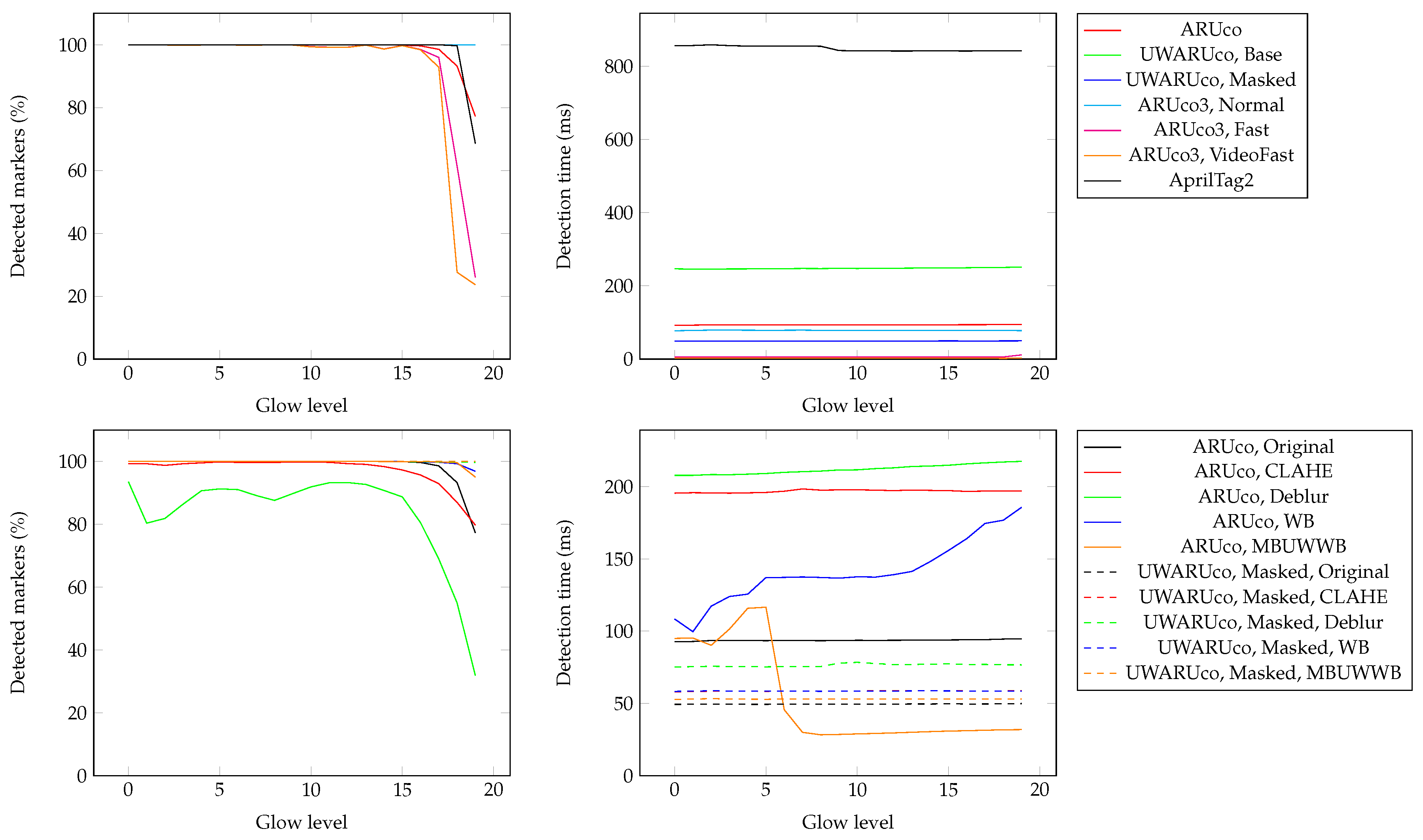

4.4. Glowing Markers

4.5. All Effects

Discussion of Synthetic Images

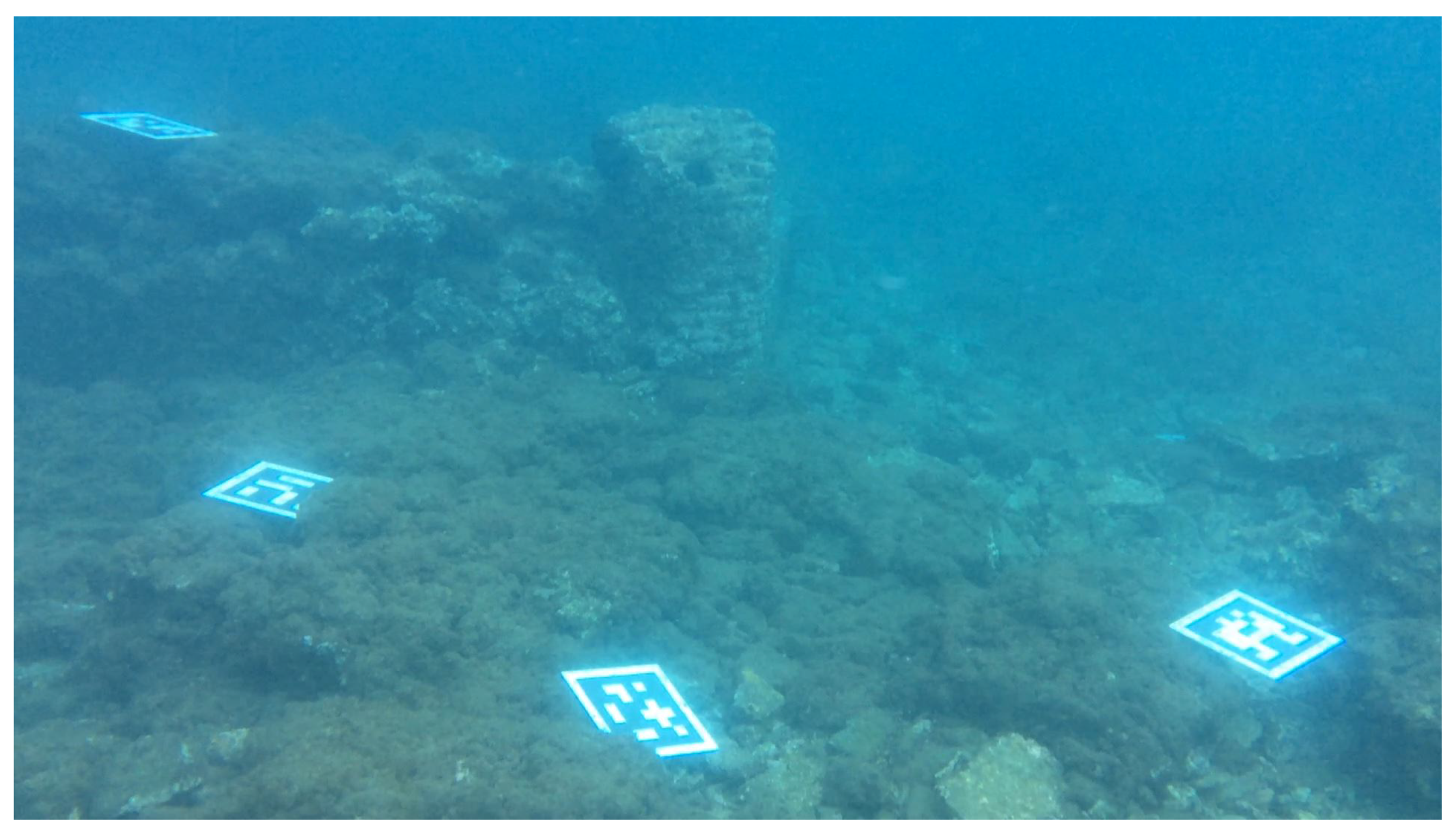

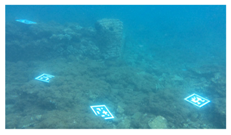

5. Evaluation of Real Underwater Images

5.1. Results of Underwater Tests

5.2. Discussion of Underwater Experiments

6. Conclusions

Author Contributions

Funding

Conflicts of Interest

| Solution | ARUco | UWARUco Base | UWARUco Masked | ARUco3 Normal | ARUco3 Fast | ARUco3 VideoFast | AprilTag2 |
|---|---|---|---|---|---|---|---|
| Detected markers (%) | 32.067 | 64.658 | 75.050 | 24.000 | 60.392 | 45.450 | 51.342 |
| Detection time (ms) | 96.002 | 255.633 | 117.907 | 76.769 | 89.064 | 9.564 | 1220.796 |

| Solution | ARUco Original | ARUco CLAHE | ARUco Deblur | ARUco WB | ARUco MBUWWB |
|---|---|---|---|---|---|
| Detected markers (%) | 32.067 | 63.225 | 43.808 | 46.025 | 57.525 |
| Detection time (ms) | 96.002 | 201.421 | 218.623 | 200.801 | 193.417 |

| Solution | UWARUco Masked Original | UWARUco Masked CLAHE | UWARUco Masked Deblur | UWARUco Masked WB | UWARUco Masked MBUWWB |
|---|---|---|---|---|---|
| Detected markers (%) | 75.050 | 79.525 | 58.500 | 77.992 | 77.358 |
| Detection time (ms) | 117.907 | 128.644 | 141.740 | 128.436 | 117.376 |

| Solution | ARUco | UWARUco Base | UWARUco Masked | ARUco3 Normal | ARUco3 Fast | ARUco3 VideoFast | AprilTag2 |
|---|---|---|---|---|---|---|---|
| Detected markers (%) | 7.967 | 63.533 | 72.683 | 1.875 | 25.892 | 18.075 | 28.900 |
| Detection time (ms) | 97.862 | 263.899 | 128.422 | 77.829 | 56.790 | 6.979 | 871.732 |

| Solution | ARUco Original | ARUco CLAHE | ARUco Deblur | ARUco WB | ARUco MBUWWB |
|---|---|---|---|---|---|
| Detected markers (%) | 7.967 | 42.417 | 19.767 | 19.783 | 21.342 |
| Detection time (ms) | 97.862 | 200.967 | 219.783 | 206.210 | 195.214 |

| Solution | UWARUco Masked Original | UWARUco Masked CLAHE | UWARUco Masked Deblur | UWARUco Masked WB | UWARUco Masked MBUWWB |
|---|---|---|---|---|---|
| Detected markers (%) | 72.683 | 74.992 | 47.608 | 73.125 | 68.633 |
| Detection time (ms) | 128.422 | 136.058 | 156.065 | 117.667 | 94.246 |

| Name (Nm) | Location (Lc) | Turbidity (Tr) | Depth (Dp) | Device (Dv) | Resolution (Rs) | Compression (Cm) | Frame rate and length (FL) |
|---|---|---|---|---|---|---|---|
| Baiae1 | Baiae, Italy | High | 5–6 m | iPad Pro 9.7 inch | 1920 × 1080 | MPEG-2 | 30 fps, 85 s |
| Athens | Athens, Greece | Moderate | 7–9 m | GoPro camera | 1920 × 1080 | MPEG-4 | 30 fps, 31 s |
| Constandis | Limassol, Cyprus | Moderate | 20–22 m | GARMIN VIRB XE | 1920 × 1440 | MPEG-4 | 24 fps, 160 s |
| Green Bay | Green Bay, Cyprus | Low | 7–9 m | NVIDIA SHIELD | 1920 × 1080 | MPEG-4 | 30 fps, 81 s |
| Villa | Villa a Protiro, B., It. | Moderate | 5–6 m | Samsung Galaxy S8 | 1920 × 1080 | no compression | 30 fps, 141 s |
| Baiae2 | Baiae, Italy | Moderate | 5–6 m | iPad Mini 2 | 1920 × 1080 | MPEG-4 | 30 fps, 421 s |
| Epidauros | Epidauros, Greece | Low | 4–6 m | Sony FDR-X1000V | 3840 × 2160 | MPEG-4 | 24 fps, 180 s |

| Video | Measure | ARUco | UWARUco Base | UWARUco Masked | ARUco3 Normal | ARUco3 Fast | ARUco3 VideoFast | AprilTag2 |
|---|---|---|---|---|---|---|---|---|
| Baiae1 | # of markers | 467 | 6004 | 6223 | 36 | 2145 | 1893 | 5695 |
| Baiae1 | Time (ms) | 24.627 | 111.528 | 57.680 | 15.397 | 6.970 | 1.748 | 178.874 |
| Athens | # of markers | 5272 | 5913 | 5832 | 4877 | 5565 | 2610 | 5923 |
| Athens | Time (ms) | 31.202 | 86.503 | 53.771 | 21.398 | 6.550 | 2.843 | 240.672 |
| Constandis | # of markers | 6981 | 6944 | 6878 | 6578 | 5382 | 4511 | 6332 |
| Constandis | Time (ms) | 39.010 | 139.514 | 65.565 | 25.030 | 6.672 | 5.446 | 327.887 |
| Green Bay | # of markers | 7964 | 7932 | 7594 | 7062 | 6347 | 5784 | 7332 |
| Green Bay | Time (ms) | 27.531 | 74.712 | 49.915 | 18.040 | 10.537 | 5.288 | 222.003 |
| Villa | # of markers | 14,457 | 19,879 | 20,145 | 9947 | 14,316 | 12,589 | 20,082 |
| Villa | Time (ms) | 75.747 | 140.659 | 62.872 | 58.266 | 6.700 | 2.368 | 323.005 |
| Baiae2 | # of markers | 13,829 | 15,932 | 14,976 | 12,466 | 8257 | 3869 | 14,577 |
| Baiae2 | Time (ms) | 34.552 | 83.614 | 55.093 | 21.535 | 4.563 | 1.672 | 230.910 |
| Epidauros | # of markers | 18,749 | 25,126 | 25,713 | 13,864 | 10,480 | 6340 | 21,628 |
| Epidauros | Time (ms) | 181.441 | 425.286 | 252.327 | 152.590 | 23.575 | 4.692 | 1252.122 |

| Video | Measure | ARUco Original | ARUco CLAHE | ARUco Deblur | ARUco WB | ARUco MBUWWB |
|---|---|---|---|---|---|---|
| Baiae1 | # of markers | 467 | 3646 | 3857 | 4098 | 5140 |
| Baiae1 | Time (ms) | 24.627 | 38.064 | 80.560 | 43.370 | 47.475 |
| Athens | # of markers | 5272 | 5504 | 5611 | 5640 | 5616 |
| Athens | Time (ms) | 31.202 | 48.424 | 86.524 | 45.867 | 38.271 |
| Constandis | # of markers | 6981 | 6279 | 6850 | 6737 | 6869 |
| Constandis | Time (ms) | 39.010 | 73.406 | 136.165 | 86.436 | 53.236 |
| Green Bay | # of markers | 7964 | 6406 | 8355 | 7392 | 7896 |
| Green Bay | Time (ms) | 27.531 | 49.000 | 84.394 | 50.442 | 35.355 |
| Villa | # of markers | 14,457 | 18,981 | 18,958 | 19,029 | 19,398 |
| Villa | Time (ms) | 75.747 | 138.263 | 178.143 | 114.494 | 45.239 |
| Baiae2 | # of markers | 13,829 | 14,126 | 11,445 | 11,500 | 10,227 |
| Baiae2 | Time (ms) | 34.552 | 62.603 | 100.112 | 50.598 | 27.755 |
| Epidauros | # of markers | 18,749 | 20,030 | 23,597 | 16,929 | 21,696 |
| Epidauros | Time (ms) | 181.441 | 341.750 | 586.785 | 296.562 | 181.483 |

| Video | Measure | UWARUco Masked Original | UWARUco Masked CLAHE | UWARUco Masked Deblur | UWARUco Masked WB | UWARUco Masked MBUWWB |
|---|---|---|---|---|---|---|
| Baiae1 | # of markers | 6223 | 6612 | 6081 | 6129 | 5605 |
| Baiae1 | Time (ms) | 57.680 | 64.902 | 93.618 | 64.998 | 55.866 |
| Athens | # of markers | 5832 | 5806 | 5848 | 5831 | 5829 |
| Athens | Time (ms) | 53.771 | 60.742 | 85.729 | 60.054 | 52.622 |
| Constandis | # of markers | 6878 | 6702 | 6959 | 6749 | 6843 |
| Constandis | Time (ms) | 65.565 | 75.938 | 105.121 | 76.404 | 68.263 |
| Green Bay | # of markers | 7594 | 6776 | 7464 | 7149 | 7599 |
| Green Bay | Time (ms) | 49.915 | 56.289 | 78.405 | 56.515 | 51.390 |
| Villa | # of markers | 20,145 | 20,281 | 19,400 | 20,225 | 20,258 |
| Villa | Time (ms) | 62.872 | 82.574 | 114.332 | 65.231 | 61.647 |
| Baiae2 | # of markers | 14,976 | 15,996 | 15,356 | 12,988 | 13,244 |
| Baiae2 | Time (ms) | 55.093 | 65.498 | 85.929 | 62.256 | 55.415 |
| Epidauros | # of markers | 25,713 | 26,012 | 23,618 | 21,991 | 25,623 |
| Epidauros | Time (ms) | 252.327 | 305.263 | 388.771 | 274.608 | 247.548 |

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Čejka, J.; Bruno, F.; Skarlatos, D.; Liarokapis, F. Detecting Square Markers in Underwater Environments. Remote Sens. 2019, 11, 459. https://doi.org/10.3390/rs11040459