Operational Use of Surfcam Online Streaming Images for Coastal Morphodynamic Studies

Abstract

1. Introduction

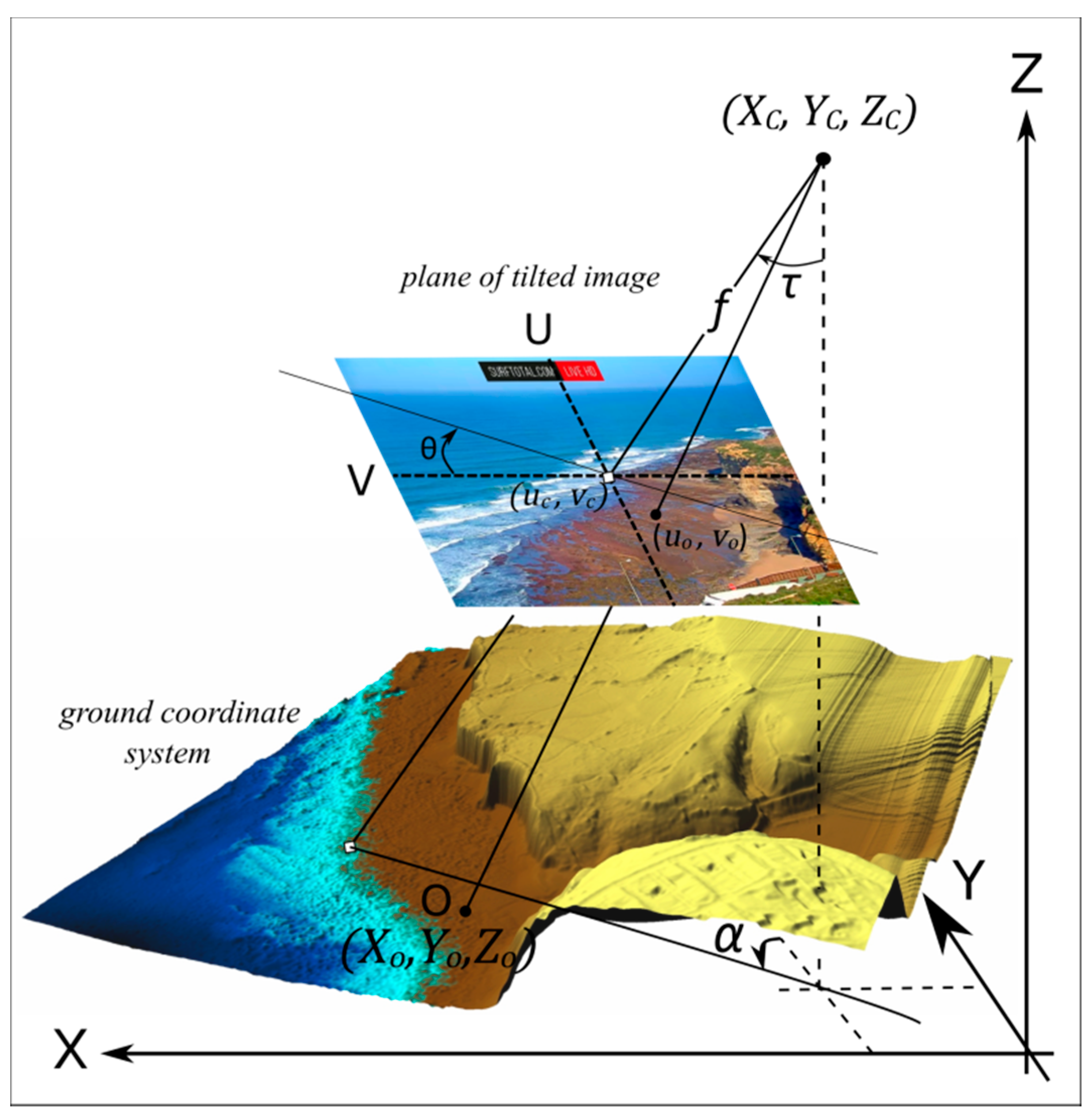

1.1. Standard Image Rectification Procedure

1.2. Coastal Video Monitoring Applications

1.3. Surfcam Images

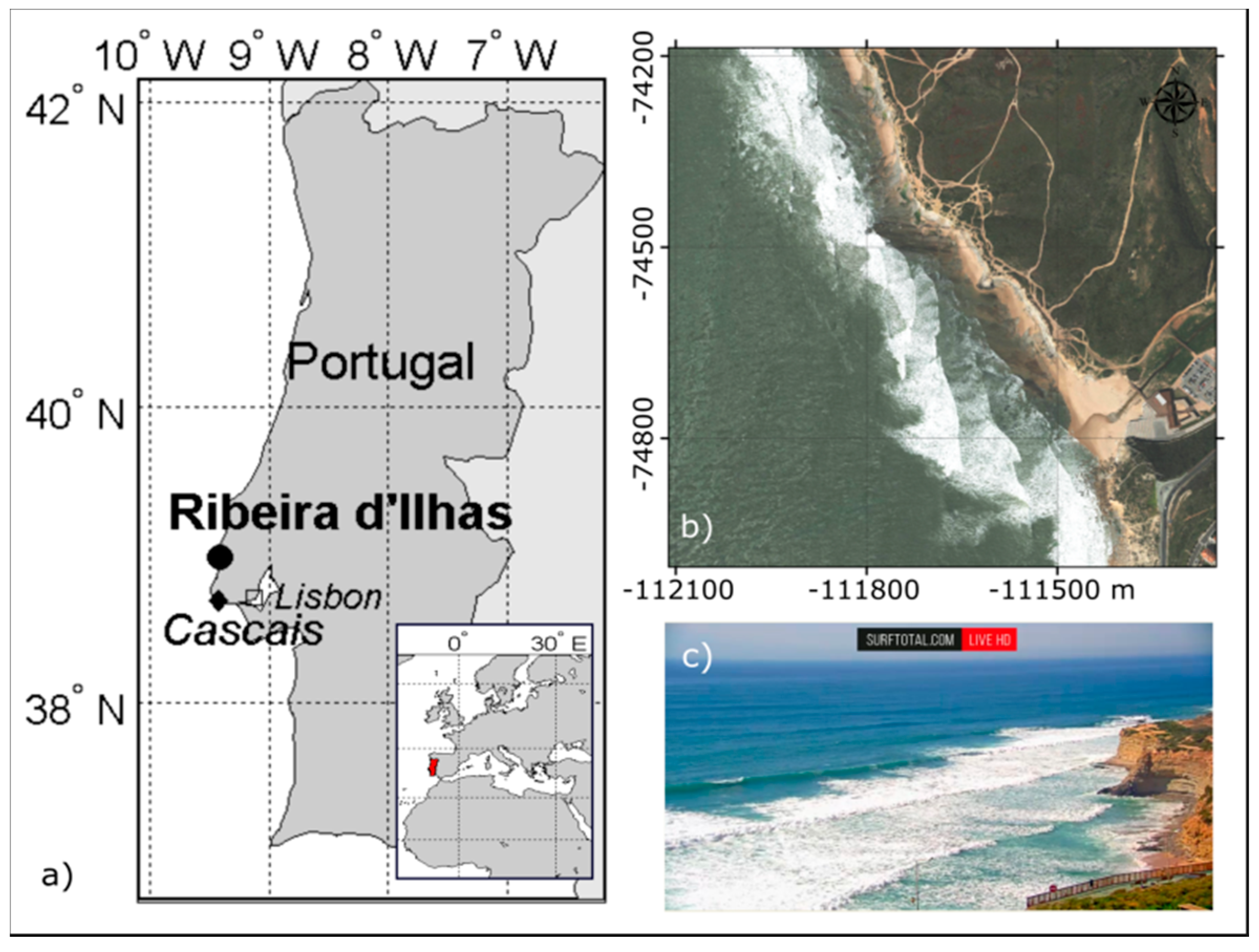

2. Study Site

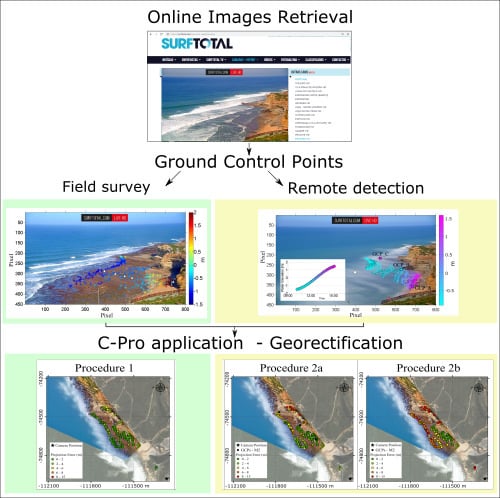

3. Methods

3.1. Surfcam Case Study

3.2. Water Level

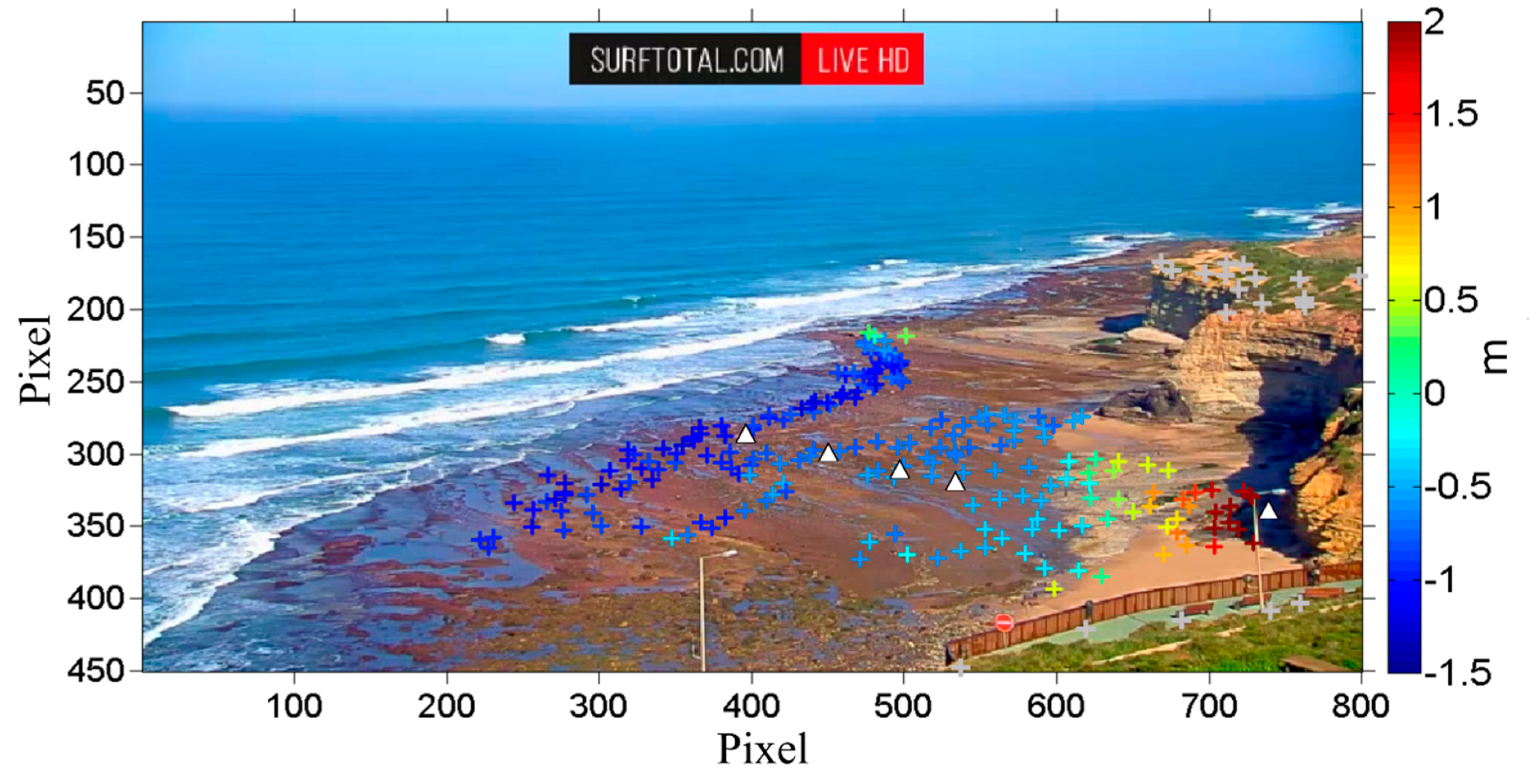

3.3. Method 1—In-Situ Acquisition of Ground Control Points (GCPs)

3.4. Method 2—Remote Acquisition of GCPs

3.5. Method 2—Camera Position from Web Tool

3.6. Practical Implementation of C-Pro

3.6.1. Procedure 1

3.6.2. Procedure 2

4. Results

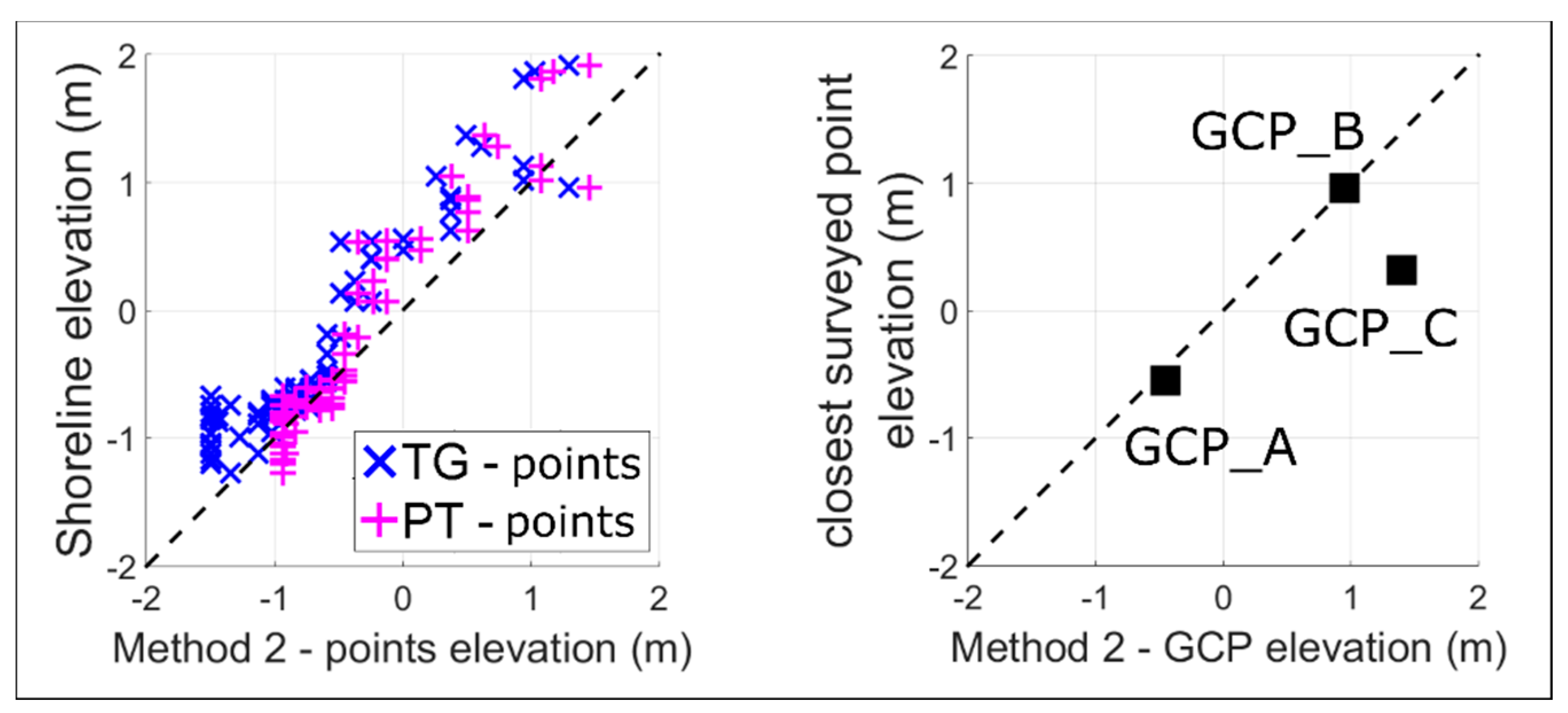

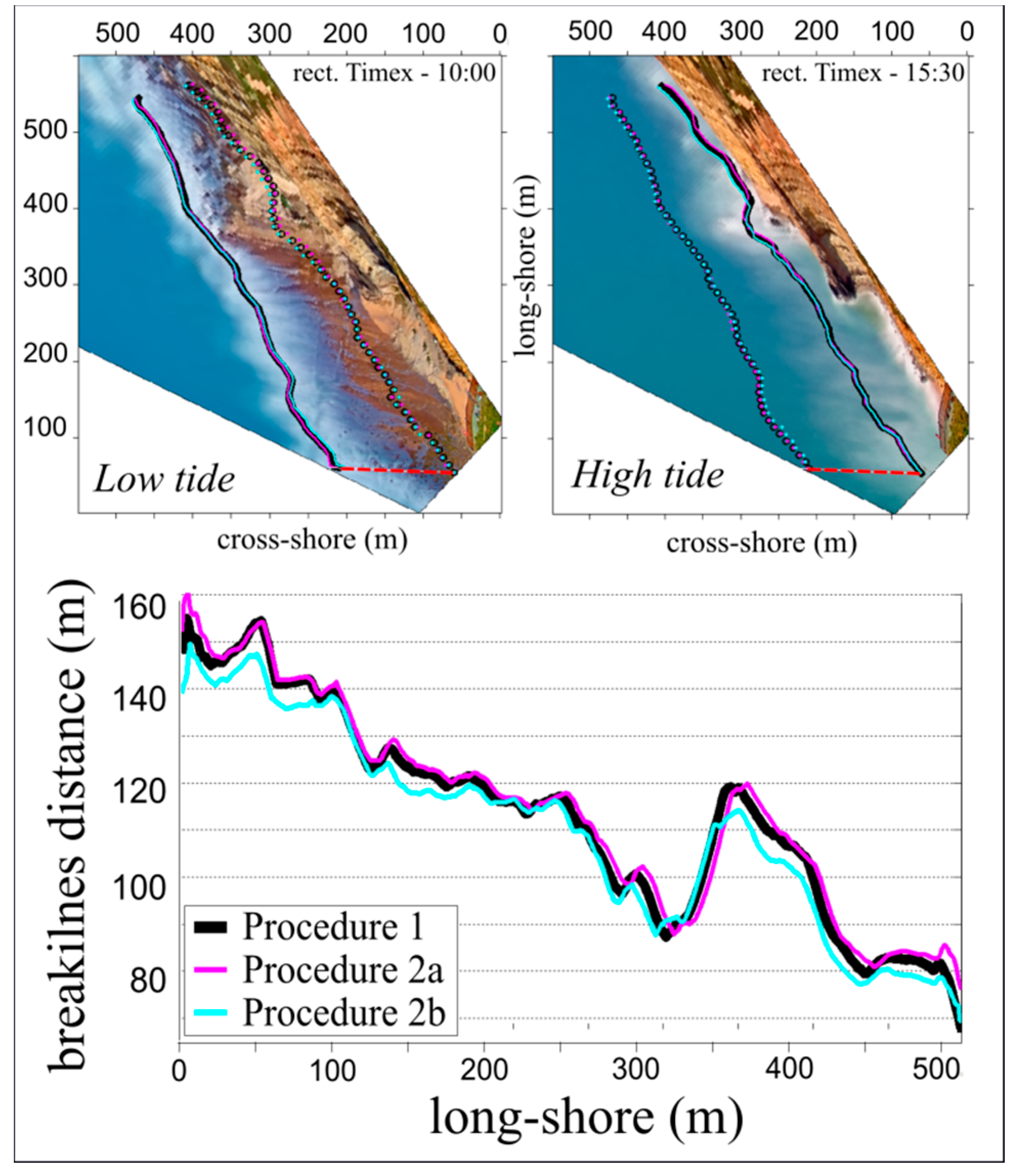

4.1. Surfcam Case Study

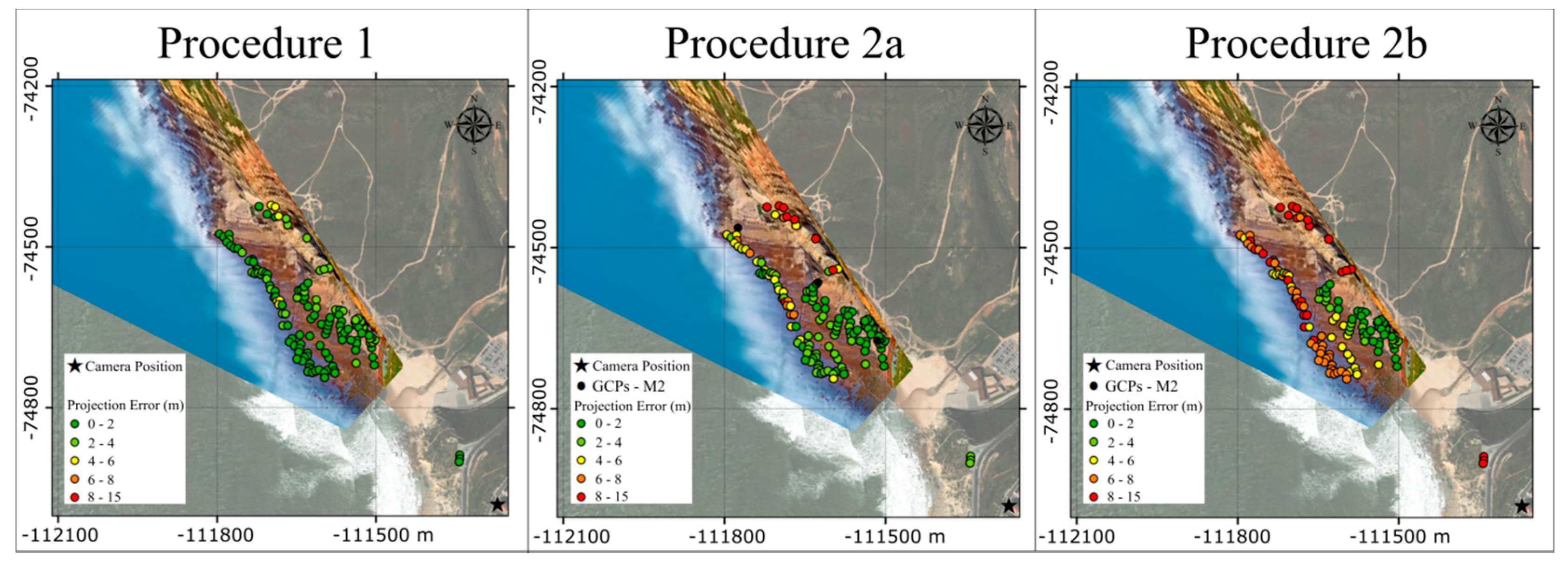

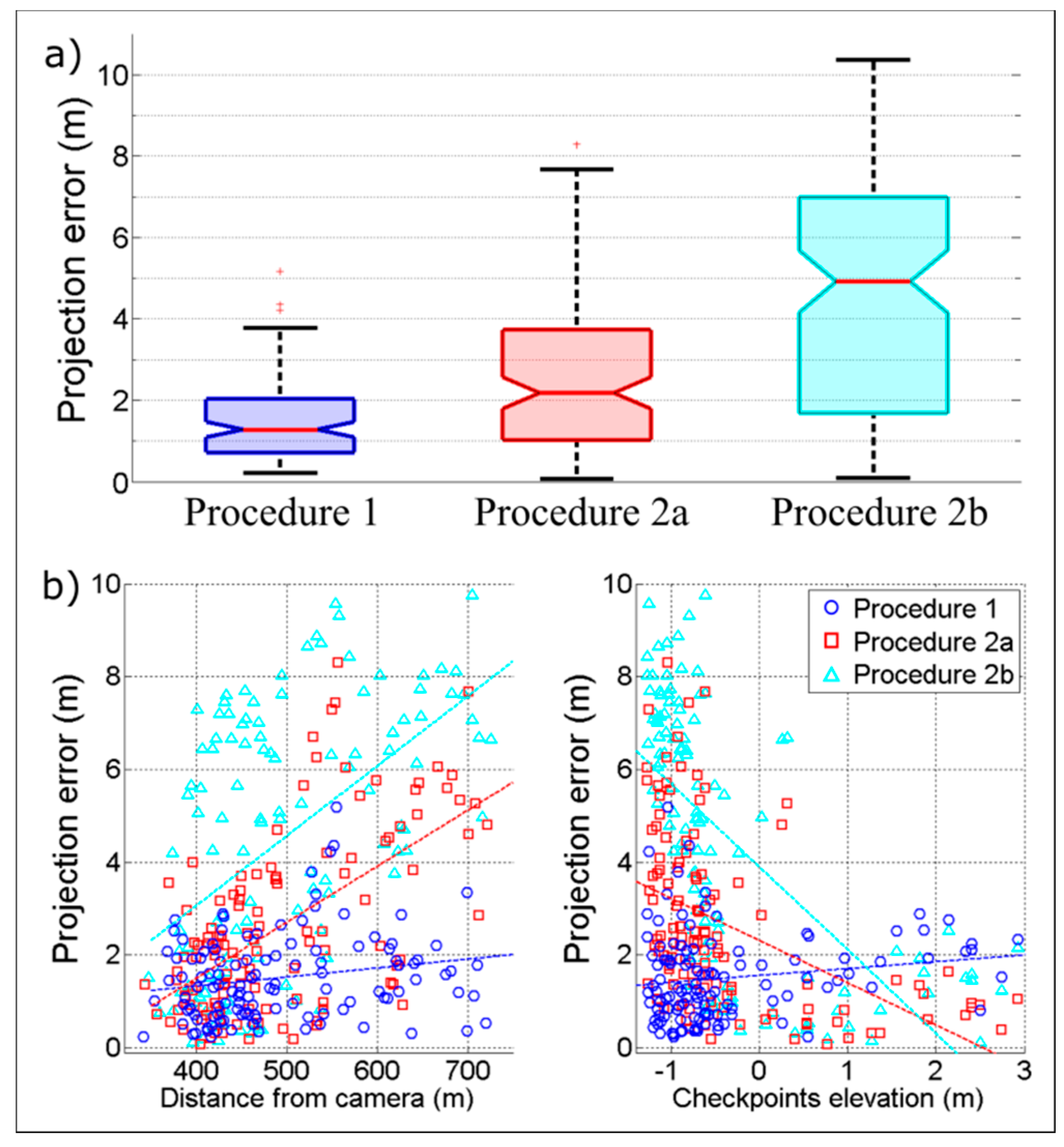

4.2. Projection Error

4.3. Camera Parameters

5. Discussion

6. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

| GCP | Latitude | Longitude | North | East | u | v | Z |
|---|---|---|---|---|---|---|---|
| GCP_A | 38°59′18.83″ | 9°25′12.69″ | −74,672.75 | −111,515.32 | 685 | 356 | 0.95 |
| GCP_B | 38°59′22.29″ | 9°25′17.33″ | −74,564.47 | −111,625.49 | 627 | 272 | −0.60 |
| GCP_C | 38°59′25.52″ | 9°25′23.60″ | −74,462.72 | −111,774.98 | 523 | 216 | 1.40 |

| Procedure | GCPs: Number | GCPs: Source | Internal Camera Parameters: uc, vc | Internal Camera Parameters: Focal | External Camera Parameters: XC, YC, ZC | External Camera Parameters: α, τ, θ | Horizon Constraint | DoF |
|---|---|---|---|---|---|---|---|---|
| 1 | 72 | survey | x | o | o | o | √ | 139 |
| 2a | 3 | remote | x | o | o | o | √ | 1 |
| 2b’ | 3 | remote | x | ↓ | x | o | √ | 4 |
| 2b | 3 | remote | x | x | o | o | √ | 2 |
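
The DoF column above can be reproduced with simple equation counting. The sketch below is one reading of the table, not a statement of how C-Pro computes it: it assumes each GCP contributes two equations (one for u, one for v), that the horizon constraint contributes two more, and that "o" and "↓" mark estimated parameters while "x" marks fixed ones. The function name and the unknown counts are illustrative assumptions.

```python
def degrees_of_freedom(n_gcps, n_unknowns, horizon_equations=2):
    """Redundancy of the adjustment: equations minus unknowns.

    Assumption: 2 equations per GCP (u and v) plus `horizon_equations`
    from the horizon constraint. Both counts are inferred from the table.
    """
    return 2 * n_gcps + horizon_equations - n_unknowns


# (number of GCPs, number of estimated parameters) per procedure.
# Unknown counts assume: focal = 1, camera position = 3, angles = 3.
procedures = {
    "1": (72, 7),    # focal + position + angles estimated
    "2a": (3, 7),    # focal + position + angles estimated
    "2b'": (3, 4),   # position fixed; focal + angles estimated
    "2b": (3, 6),    # focal fixed; position + angles estimated
}

dof = {name: degrees_of_freedom(n, k) for name, (n, k) in procedures.items()}

for name, value in dof.items():
    print(f"Procedure {name}: DoF = {value}")
```

Under these assumptions the counts come out as 139, 1, 4 and 2, matching the tabulated values.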

| Proc. | Internal Camera Parameters: uc, vc | Internal Camera Parameters: Focal | External Camera Parameters: X0 | External Camera Parameters: Y0 | External Camera Parameters: Z0 | External Camera Parameters: α | External Camera Parameters: τ | External Camera Parameters: θ | dx | dy | dz | Dist |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 1 | 400,225 | 1488 | −111,278.1 | −74,976.8 | 79.5 | 83.4 | 0.4 | 48.9 | 7.6 | −2.7 | −3.5 | 8.8 |
| 2a | 400,225 | 1484 | −111,279.1 | −74,980.5 | 80.4 | 83.4 | 0.4 | 48.5 | 8.6 | 1.1 | −4.4 | 9.8 |
| 2b’ | 400,225 | 1599 (↓) | −111,270.5 | −74,979.4 | 76.0 | 83.8 | 0.4 | 48.7 | | | | |
| 2b | 400,225 | 1599 | −111,272.5 | −74,979.3 | 75.5 | 83.8 | 0.4 | 48.6 | 2.0 | −0.2 | 0.5 | 2.1 |
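
As a consistency check on the last four columns, the sketch below assumes that Dist is the three-dimensional Euclidean norm of the tabulated offsets (dx, dy, dz). That interpretation is an assumption inferred from the numbers, and the variable names are illustrative.

```python
import math

# Offsets (dx, dy, dz) copied from the table, in the table's length units.
offsets = {
    "1": (7.6, -2.7, -3.5),
    "2a": (8.6, 1.1, -4.4),
    "2b": (2.0, -0.2, 0.5),
}

# Dist values as printed in the table.
tabulated_dist = {"1": 8.8, "2a": 9.8, "2b": 2.1}

# Assumed definition: Dist = sqrt(dx^2 + dy^2 + dz^2).
dist = {
    name: math.sqrt(dx * dx + dy * dy + dz * dz)
    for name, (dx, dy, dz) in offsets.items()
}

for name, value in dist.items():
    diff = abs(value - tabulated_dist[name])
    print(f"Procedure {name}: computed {value:.2f}, tabulated "
          f"{tabulated_dist[name]}, difference {diff:.2f}")
```

The computed norms agree with the Dist column to within 0.1, which is the precision at which the offsets themselves are tabulated.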

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Andriolo, U.; Sánchez-García, E.; Taborda, R. Operational Use of Surfcam Online Streaming Images for Coastal Morphodynamic Studies. Remote Sens. 2019, 11, 78. https://doi.org/10.3390/rs11010078
