Crowdsourcing Analysis of Twitter Data on Climate Change: Paid Workers vs. Volunteers

Abstract

1. Introduction

2. Crowdsourcing and Quality Control Issues

2.1. Crowdsourcing in Scientific Research

2.2. Quality Issues in Crowdsourcing

- Instrumental errors arising from complex data pre- and post-processing, which involves multiple third-party platforms used to prepare data for processing, send tasks to workers, collect processing results, and finally, join the processed data.

- Involuntary errors by human raters, e.g., due to insufficiently clear instructions and workers’ cognitive limitations.

- Deliberately poor performance by human raters. A worker may vandalize the survey by providing wrong data, may try to maximize the number of tasks processed per unit of time for monetary or other benefits, may misreport their geographical location, or may simply lack motivation [28].

3. Data and Methodology

- −2: extremely negative attitude, denial, skepticism (“Man made GLOBAL WARMING HOAX EXPOSED”);

- −1: denying climate change (“UN admits there has been NO global warming for the last 16 years!”), or denying that climate change is a problem, or that it is man-made (“Sunning on my porch in December. Global warming ain’t so bad”);

- 0: neutral, unknown (“A new article on climate change is published in a newspaper”);

- 1: accepting that climate change exists, and/or is man-made, and/or can be a problem (“How’s planet Earth doing? Take a look at the signs of climate change here”);

- 2: extremely supportive of the idea of climate change (“Global warming? It’s like earth having a Sauna!”).
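The five-point attitude scale above can be encoded so that rater answers are validated on input. The following is a minimal sketch, not the authors' implementation: the member names, `parse_attitude`, and `is_opposite` are hypothetical, and the only thing taken from the text is the set of codes from −2 to 2.

```python
from enum import IntEnum


class Attitude(IntEnum):
    """Five-point attitude scale; member names are illustrative assumptions."""
    EXTREME_DENIAL = -2   # extremely negative attitude, denial, skepticism
    DENIAL = -1           # denies climate change, or that it is a problem or man-made
    NEUTRAL = 0           # neutral or unknown
    ACCEPTANCE = 1        # accepts that climate change exists, is man-made, or is a problem
    EXTREME_SUPPORT = 2   # extremely supportive of the idea of climate change


def parse_attitude(raw: str) -> Attitude:
    """Convert a raw answer such as "−2" or "1" into an Attitude.

    The typographic minus sign (U+2212) used in the coding scheme is
    normalized to an ASCII hyphen before conversion. Codes outside the
    scale raise ValueError.
    """
    normalized = raw.strip().replace("\u2212", "-")
    return Attitude(int(normalized))


def is_opposite(a: Attitude, b: Attitude) -> bool:
    """True when two codes lie on opposite sides of the neutral point."""
    return a * b < 0
```

Rejecting out-of-range codes at parse time is one way to catch part of the instrumental errors that arise when results are joined across platforms.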

- Global warming phenomenon: (1) drivers of climate change, (2) science of climate change, and (3) denial and skepticism;

- Climate change impacts: (4) extreme events, (5) unusual weather, (6) environmental changes, and (7) society and economics;

- Adaptation and mitigation: (8) politics and (9) ethical concerns, and

- (10) Unknown.
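The topic scheme above is a two-level taxonomy: ten topic codes grouped under three themes plus an "Unknown" bucket. A minimal sketch of that structure as a lookup table follows; the names `TOPIC_GROUPS` and `group_of` are hypothetical, and treating "Unknown" as its own group is an assumption made for convenience.

```python
# Theme -> {topic code: topic label}, transcribed from the list above.
TOPIC_GROUPS = {
    "Global warming phenomenon": {
        1: "drivers of climate change",
        2: "science of climate change",
        3: "denial and skepticism",
    },
    "Climate change impacts": {
        4: "extreme events",
        5: "unusual weather",
        6: "environmental changes",
        7: "society and economics",
    },
    "Adaptation and mitigation": {
        8: "politics",
        9: "ethical concerns",
    },
    # Assumption: code 10 is kept as a separate single-topic group.
    "Unknown": {
        10: "unknown",
    },
}


def group_of(topic_code: int) -> str:
    """Return the theme that a topic code (1 to 10) belongs to."""
    for group, topics in TOPIC_GROUPS.items():
        if topic_code in topics:
            return group
    raise ValueError(f"unknown topic code: {topic_code}")
```

With this table, topic-level codes can be rolled up to theme level, for example `group_of(6)` maps "environmental changes" to "Climate change impacts".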

4. Results

4.1. Descriptive Statistics

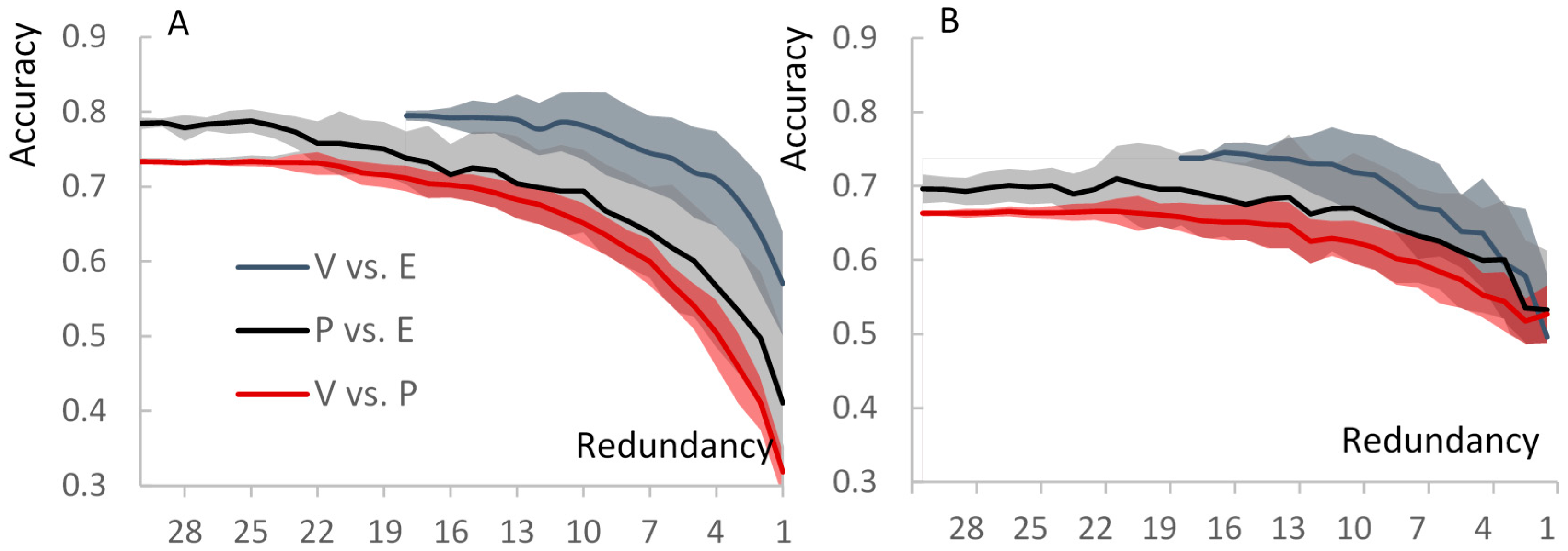

4.2. Crowdsourced vs. Expert Classification Quality

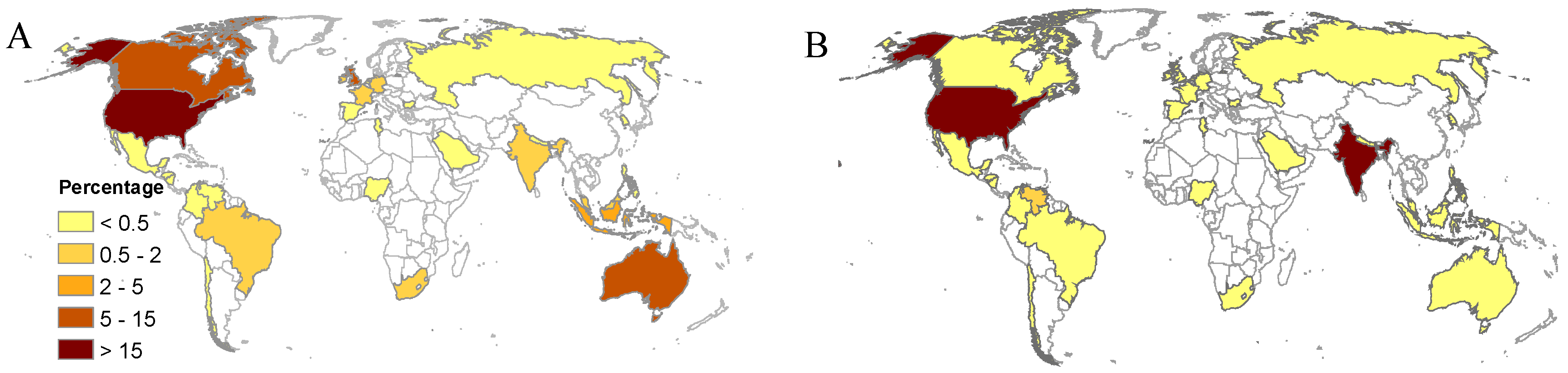

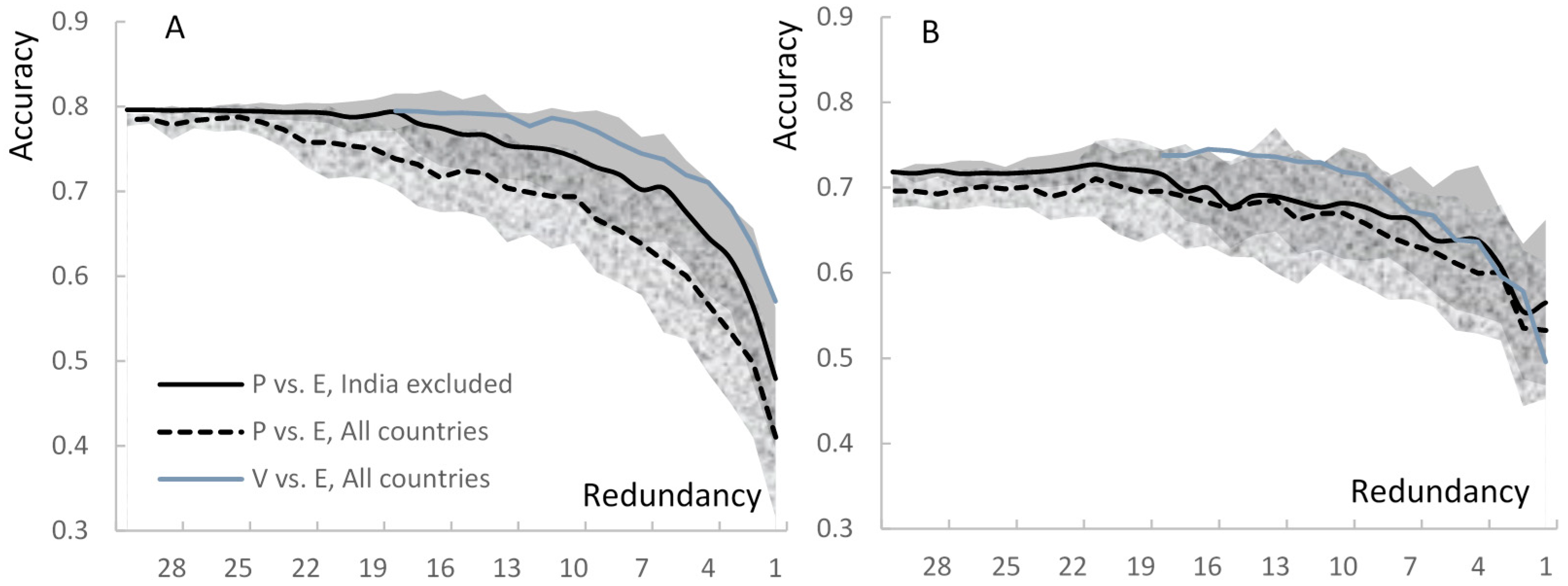

4.3. Geographical Variability

5. Discussion

6. Conclusions

Acknowledgments

Author Contributions

Conflicts of Interest

Appendix A. Coding Instructions

- 0: neutral, unknown (A new article on CC is published in a newspaper) (He talked about CC)

- 1: accepting that CC exists and/or is man-made and/or can be a problem (How’s planet Earth doing? Take a look at the signs of climate change here)

- 2: extremely supportive of the idea of CC (Global warming? It’s like earth having a Sauna!!). Think of code 2 as though it is code 1 plus a strong emotional component and/or a call for action

- −1: denying CC (UN admits there has been NO global warming for the last 16 years!) or denying that CC is a problem or that it is man-made (Sunning on my porch in December. Global warming ain’t so bad.)

- −2: extremely negative attitude, denial, skepticism (“Climate change” LOL) (Man made GLOBAL WARMING HOAX EXPOSED). Think of code −2 as though it is code −1 plus a strong emotional component.

- 1. Drivers of CC. Examples:

  - Greenhouse gases (Carbon Dioxide, Methane, Nitrous Oxide, etc.)

  - Oil, gas, and coal

- 2. Science. Examples:

  - The scientists found that climate is in fact cooling

  - IPCC said that the temperature will be up by 4 degrees C

- 3. Denial, skepticism, Conspiracy Theory. Examples:

  - Scientists are lying to the public

- 4. Extreme events. Examples:

  - Hurricane Sandy, flooding, snowstorm

- 5. Weather is unusual. Examples:

  - Hot or cold weather

  - Too wet or too dry

  - Heavy Snowfall

- 6. Environment. Examples:

  - Acid rain, smog, pollution

  - Deforestation, coral reef bleaching

  - Pests, infections, wildfires

- 7. Society and Economics. Examples:

  - Agriculture is threatened

  - Sea rising will threaten small island nations

  - Poor people are at risk

  - Property loss, Insurance

- 8. Politics. Examples:

  - Conservatives, liberals, elections

  - Carbon tax; It is too expensive to control CC

  - Treaties, Kyoto Protocol, WTO, UN, UNEP

- 9. Ethics, moral, responsibility. Examples:

  - We need to fight Global Warming

  - We need to give this planet to the next generation

  - God gave us the planet to take care of

- 10. Unknown, jokes, irrelevant, hard to classify. Examples:

  - Global warming is cool OMG a paradox

  - This guy is so hot its global warming

References

- Leiserowitz, A.; Maibach, E.W.; Roser-Renouf, C.; Rosenthal, S.; Cutler, M. Climate Change in the American Mind: May 2017; Yale Program on Climate Change Communication; Yale University and George Mason University: New Haven, CT, USA, 2017.

- Kirilenko, A.P.; Stepchenkova, S.O. Public microblogging on climate change: One year of Twitter worldwide. Glob. Environ. Chang. 2014, 26, 171–182.

- Cody, E.M.; Reagan, A.J.; Mitchell, L.; Dodds, P.S.; Danforth, C.M. Climate Change Sentiment on Twitter: An Unsolicited Public Opinion Poll. PLoS ONE 2015, 10, e0136092.

- Yang, W.; Mu, L.; Shen, Y. Effect of climate and seasonality on depressed mood among twitter users. Appl. Geogr. 2015, 63, 184–191.

- Holmberg, K.; Hellsten, I. Gender differences in the climate change communication on Twitter. Int. Res. 2015, 25, 811–828.

- Leas, E.C.; Althouse, B.M.; Dredze, M.; Obradovich, N.; Fowler, J.H.; Noar, S.M.; Allem, J.-P.; Ayers, J.W. Big Data Sensors of Organic Advocacy: The Case of Leonardo DiCaprio and Climate Change. PLoS ONE 2016, 11, e0159885.

- Kirilenko, A.P.; Molodtsova, T.; Stepchenkova, S.O. People as sensors: Mass media and local temperature influence climate change discussion on Twitter. Glob. Environ. Chang. 2015, 30, 92–100.

- Sisco, M.; Bosetti, V.; Weber, E. When do extreme weather events generate attention to climate change? Clim. Chang. 2017, 143, 227–241.

- Howe, J. The rise of crowdsourcing. Wired Mag. 2006, 14, 1–4.

- Clery, D. Galaxy Zoo volunteers share pain and glory of research. Science 2011, 333, 173–175.

- Galaxy Zoo. Available online: https://www.galaxyzoo.org/ (accessed on 25 December 2016).

- Lintott, C.; Schawinski, K.; Bamford, S.; Slosar, A.; Land, K.; Thomas, D.; Edmondson, E.; Masters, K.; Nichol, R.C.; Raddick, M.J.; et al. Galaxy Zoo 1: Data release of morphological classifications for nearly 900,000 galaxies. Mon. Not. R. Astron. Soc. 2011, 410, 166–178.

- Mao, A.; Kamar, E.; Chen, Y.; Horvitz, E.; Schwamb, M.E.; Lintott, C.J.; Smith, A.M. Volunteering versus work for pay: Incentives and tradeoffs in crowdsourcing. In Proceedings of the First AAAI Conference on Human Computation and Crowdsourcing, Palm Springs, CA, USA, 7–9 November 2013.

- Ross, J.; Irani, L.; Silberman, M.; Zaldivar, A.; Tomlinson, B. Who are the crowdworkers? Shifting demographics in mechanical Turk. In Proceedings of the CHI’10 Extended Abstracts on Human Factors in Computing Systems, Atlanta, GA, USA, 10–15 April 2010; ACM: New York, NY, USA, 2010; pp. 2863–2872.

- Redi, J.; Povoa, I. Crowdsourcing for Rating Image Aesthetic Appeal: Better a Paid or a Volunteer Crowd? In Proceedings of the 2014 International ACM Workshop on Crowdsourcing for Multimedia, Orlando, FL, USA, 7 November 2014; ACM: New York, NY, USA, 2014; pp. 25–30.

- Muller, C.L.; Chapman, L.; Johnston, S.; Kidd, C.; Illingworth, S.; Foody, G.; Overeem, A.; Leigh, R.R. Crowdsourcing for climate and atmospheric sciences: Current status and future potential. Int. J. Climatol. 2015, 35, 3185–3203.

- Olteanu, A.; Castillo, C.; Diakopoulos, N.; Aberer, K. Comparing Events Coverage in Online News and Social Media: The Case of Climate Change. In Proceedings of the Ninth International AAAI Conference on Web and Social Media, Oxford, UK, 26–29 May 2015.

- Samsel, F.; Klaassen, S.; Petersen, M.; Turton, T.L.; Abram, G.; Rogers, D.H.; Ahrens, J. Interactive Colormapping: Enabling Multiple Data Range and Detailed Views of Ocean Salinity. In Proceedings of the 2016 CHI Conference Extended Abstracts on Human Factors in Computing Systems (CHI EA’16), San Jose, CA, USA, 7–12 May 2016; ACM: New York, NY, USA, 2016; pp. 700–709.

- Yzaguirre, A.; Warren, R.; Smit, M. Detecting Environmental Disasters in Digital News Archives. In Proceedings of the 2015 IEEE International Conference on Big Data, Santa Clara, CA, USA, 29 October–1 November 2015; pp. 2027–2035.

- Ranney, M.A.; Clark, D. Climate Change Conceptual Change: Scientific Information Can Transform Attitudes. Top. Cogn. Sci. 2016, 8, 49–75.

- Attari, S.Z. Perceptions of water use. Proc. Natl. Acad. Sci. USA 2014, 111, 5129–5134.

- Vukovic, M. Crowdsourcing for Enterprises. In Proceedings of the 2009 Congress on Services-I, Los Angeles, CA, USA, 6–10 July 2009; pp. 686–692.

- Overview of Mechanical Turk—Amazon Mechanical Turk. Available online: http://docs.aws.amazon.com/AWSMechTurk/latest/RequesterUI/OverviewofMturk.html (accessed on 28 December 2016).

- Mason, W.; Suri, S. Conducting behavioral research on Amazon’s Mechanical Turk. Behav. Res. Methods 2012, 44, 1–23.

- Staffelbach, M.; Sempolinski, P.; Kijewski-Correa, T.; Thain, D.; Wei, D.; Kareem, A.; Madey, G. Lessons Learned from Crowdsourcing Complex Engineering Tasks. PLoS ONE 2015, 10, e0134978.

- Kawrykow, A.; Roumanis, G.; Kam, A.; Kwak, D.; Leung, C.; Wu, C.; Zarour, E.; Sarmenta, L.; Blanchette, M.; Waldispühl, J.; et al. Phylo: A citizen science approach for improving multiple sequence alignment. PLoS ONE 2012, 7, e31362.

- Poetz, M.K.; Schreier, M. The value of crowdsourcing: can users really compete with professionals in generating new product ideas? J. Prod. Innov. Manag. 2012, 29, 245–256.

- Chandler, J.; Paolacci, G.; Mueller, P. Risks and rewards of crowdsourcing marketplaces. In Handbook of Human Computation; Springer: New York, NY, USA, 2013; pp. 377–392.

- Kittur, A.; Chi, E.H.; Suh, B. Crowdsourcing User Studies with Mechanical Turk. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems, Florence, Italy, 5–10 April 2008; ACM: New York, NY, USA, 2008; pp. 453–456.

- Raddick, M.J.; Bracey, G.; Gay, P.L.; Lintott, C.J.; Cardamone, C.; Murray, P.; Schawinski, K.; Szalay, A.S.; Vandenberg, J. Galaxy Zoo: Motivations of Citizen Scientists. Available online: http://arxiv.org/ftp/arxiv/papers/1303/1303.6886.pdf (accessed on 27 October 2017).

- Allahbakhsh, M.; Benatallah, B.; Ignjatovic, A.; Motahari-Nezhad, H.R.; Bertino, E.; Dustdar, S. Quality control in crowdsourcing systems. IEEE Int. Comput. 2013, 17, 76–81.

- Rouse, S.V. A reliability analysis of Mechanical Turk data. Comp. Hum. Behav. 2015, 43, 304–307.

- Peer, E.; Vosgerau, J.; Acquisti, A. Reputation as a sufficient condition for data quality on Amazon Mechanical Turk. Behav. Res. Methods 2014, 46, 1023–1031.

- Eickhoff, C.; de Vries, A.P. Increasing cheat robustness of crowdsourcing tasks. Inf. Retr. 2013, 16, 121–137.

- Dawid, A.P.; Skene, A.M. Maximum likelihood estimation of observer error-rates using the EM algorithm. Appl. Stat. 1979, 28, 20–28.

- Goodman, J.K.; Cryder, C.E.; Cheema, A. Data collection in a flat world: The strengths and weaknesses of Mechanical Turk samples. J. Behav. Decis. Mak. 2013, 26, 213–224.

- Climate Tweets. Available online: http://csgrid.org/csg/climate/ (accessed on 25 December 2016).

- Amazon Mechanical Turk Requester Best Practices Guide. Available online: https://mturkpublic.s3.amazonaws.com/docs/MTURK_BP.pdf (accessed on 29 December 2016).

- Uebersax, J.S. A design-independent method for measuring the reliability of psychiatric diagnosis. J. Psychiatr. Res. 1982, 17, 335–342.

- Gwet, K.L. Handbook of Inter-Rater Reliability. The Definitive Guide to Measuring the Extent of Agreement among Raters, 4th ed.; Advanced Analytics, LLC: Gaithersburg, MD, USA, 2014.

- Donkor, B. Sentiment Analysis: Why It’s Never 100% Accurate. 2014. Available online: https://mturkpublic.s3.amazonaws.com/docs/MTURK_BP.pdf (accessed on 29 December 2016).

- Ogneva, M. How companies can use sentiment analysis to improve their business. Mashable, 19 April 2010. Available online: https://mturkpublic.s3.amazonaws.com/docs/MTURK_BP.pdf (accessed on 29 December 2016).

- Snow, R.; O’Connor, B.; Jurafsky, D.; Ng, A.Y. Cheap and Fast—But is it Good? Evaluating Non-Expert Annotations for Natural Language Tasks. In Proceedings of the Conference on Empirical Methods in Natural Language Processing, Honolulu, HI, USA, 25–27 October 2008; Association for Computational Linguistics: Stroudsburg, PA, USA, 2008; pp. 254–263.

- Welinder, P.; Branson, S.; Perona, P.; Belongie, S.J. The multidimensional wisdom of crowds. In Advances in Neural Information Processing Systems; NIPS: Vancouver, BC, Canada, 2010; pp. 2424–2432.

- Whitehill, J.; Wu, T.; Bergsma, J.; Movellan, J.R.; Ruvolo, P.L. Whose vote should count more: Optimal integration of labels from labelers of unknown expertise. In Advances in Neural Information Processing Systems; NIPS: Vancouver, BC, Canada, 2009; pp. 2035–2043.

- Ipeirotis, P.G.; Provost, F.; Wang, J. Quality Management on Amazon Mechanical Turk. In Proceedings of the ACM SIGKDD Workshop on Human Computation, Washington, DC, USA, 25 July 2010; ACM: New York, NY, USA, 2010; pp. 64–67.

- Gillick, D.; Liu, Y. Non-Expert Evaluation of Summarization Systems is Risky. In Proceedings of the NAACL HLT 2010 Workshop on Creating Speech and Language Data with Amazon’s Mechanical Turk, Los Angeles, CA, USA, 6 June 2010; Association for Computational Linguistics: Stroudsburg, PA, USA, 2010; pp. 148–151.

- Paolacci, G.; Chandler, J.; Ipeirotis, P.G. Running experiments on amazon mechanical Turk. Judgm. Decis. Mak. 2010, 5, 411–419.

- Amazon Mechanical Turk. Available online: https://www.mturk.com/mturk/help?helpPage=worker#how_paid (accessed on 30 December 2016).

| Comparison | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | A |
|---|---|---|---|---|---|---|---|---|---|---|---|
| V vs. E | 0.17 * | 0.41 ‡ | 0.57 ‡ | 0.40 ‡ | 0.34 ‡ | 0.57 ‡ | 0.46 | 0.31 ‡ | 0.32 ‡ | 0.40 ‡ | 0.46 ‡ |
| P vs. E | 0.13 | 0.24 ‡ | 0.39 ‡ | 0.36 ‡ | 0.24 † | 0.37 ‡ | 0.34 ‡ | 0.21 † | 0.21 † | 0.39 ‡ | 0.33 ‡ |

| Comparison | Matching Topics | Matching Attitudes | Opposite Attitudes |
|---|---|---|---|
| V vs. P (full dataset) | 0.73 | 0.65 | 0.01 |
| V vs. P (groundtruthing dataset) | 0.75 | 0.68 | 0.05 |
| V vs. E (groundtruthing dataset) | 0.80 | 0.70 | 0.04 |
| P vs. E (groundtruthing dataset) | 0.79 | 0.67 | 0.03 |
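
The three shares tabulated above can be computed from two aligned lists of per-tweet labels. The sketch below rests on assumptions not confirmed by the surviving text: V, P, and E are read as volunteers, paid workers, and experts; "matching" is taken to mean identical codes for the same tweet; and "opposite attitudes" is taken to mean codes of opposite sign. The function name `agreement_shares` and the `(topic, attitude)` tuple layout are hypothetical.

```python
from typing import Dict, Sequence, Tuple

# One label per tweet: (topic code 1..10, attitude code -2..2).
Label = Tuple[int, int]


def agreement_shares(a: Sequence[Label], b: Sequence[Label]) -> Dict[str, float]:
    """Share of tweets on which two label sets agree or take opposite sides.

    Both sequences must describe the same tweets in the same order.
    """
    if len(a) != len(b) or len(a) == 0:
        raise ValueError("label lists must be non-empty and of equal length")

    n = len(a)
    same_topic = sum(ta == tb for (ta, _), (tb, _) in zip(a, b))
    same_attitude = sum(aa == ab for (_, aa), (_, ab) in zip(a, b))
    # Assumed definition: opposite means one code is negative and the other positive.
    opposite = sum(aa * ab < 0 for (_, aa), (_, ab) in zip(a, b))

    return {
        "matching_topics": same_topic / n,
        "matching_attitudes": same_attitude / n,
        "opposite_attitudes": opposite / n,
    }


# Toy data for illustration only; these are not values from the study.
volunteers = [(1, -2), (4, 1), (8, 0), (10, 2)]
paid = [(1, -1), (4, 1), (9, 0), (10, -2)]
shares = agreement_shares(volunteers, paid)
```

On the toy data, three of four topics match, two of four attitudes match, and one pair has opposite signs, so `shares` holds 0.75, 0.5, and 0.25 respectively.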

| Country | Volunteer Workers: Tasks | Volunteer Workers: Raters | Paid Workers: Tasks | Paid Workers: Raters |
|---|---|---|---|---|
| U.S. | 64.4 | 17.1 | 75.7 | 76.4 |
| U.K. | 13.0 | 12.4 | 0.4 | 0.5 |
| Australia | 6.2 | 10.6 | 0.1 | 0.2 |
| Canada | 5.6 | 8.8 | 0.3 | 0.3 |
| Indonesia | 2.9 | 7.1 | 0.0 | 0.2 |
| Germany | 1.2 | 4.9 | 0.0 | 0.2 |
| Ireland | 1.1 | 5.4 | 0.0 | 0.2 |
| India | 1.0 | 4.2 | 20.6 | 18.2 |
| France | 0.8 | 3.9 | 0.0 | 0.2 |
| Brazil | 0.7 | 3.6 | 0.0 | 0.2 |

| Comparison | Matching Topics | Matching Attitude | Opposite Attitudes | |||

|---|---|---|---|---|---|---|

| V | P | V | P | V | P | |

| U.S. | 0.83 | 0.80 | 0.72 | 0.74 | 0.03 | 0.05 |

| India | 0.22 | 0.54 | 0.15 | |||

| Other countries | 0.72 | 0.47 | 0.78 | 0.47 | 0.03 | 0.11 |

© 2017 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Kirilenko, A.P.; Desell, T.; Kim, H.; Stepchenkova, S. Crowdsourcing Analysis of Twitter Data on Climate Change: Paid Workers vs. Volunteers. Sustainability 2017, 9, 2019. https://doi.org/10.3390/su9112019
