Artificial Intelligence Alone Will Not Democratise Education: On Educational Inequality, Techno-Solutionism and Inclusive Tools

Abstract

1. Introduction

1.1. Paper Overview

1.2. Exclusion in Education Is Still Persistent

1.3. Motivation

1.4. Role of AI and Educational Technology

2. AI in Education (AIEd): The Promise and the Peril

2.1. The Promise

2.2. The Peril (Challenges)

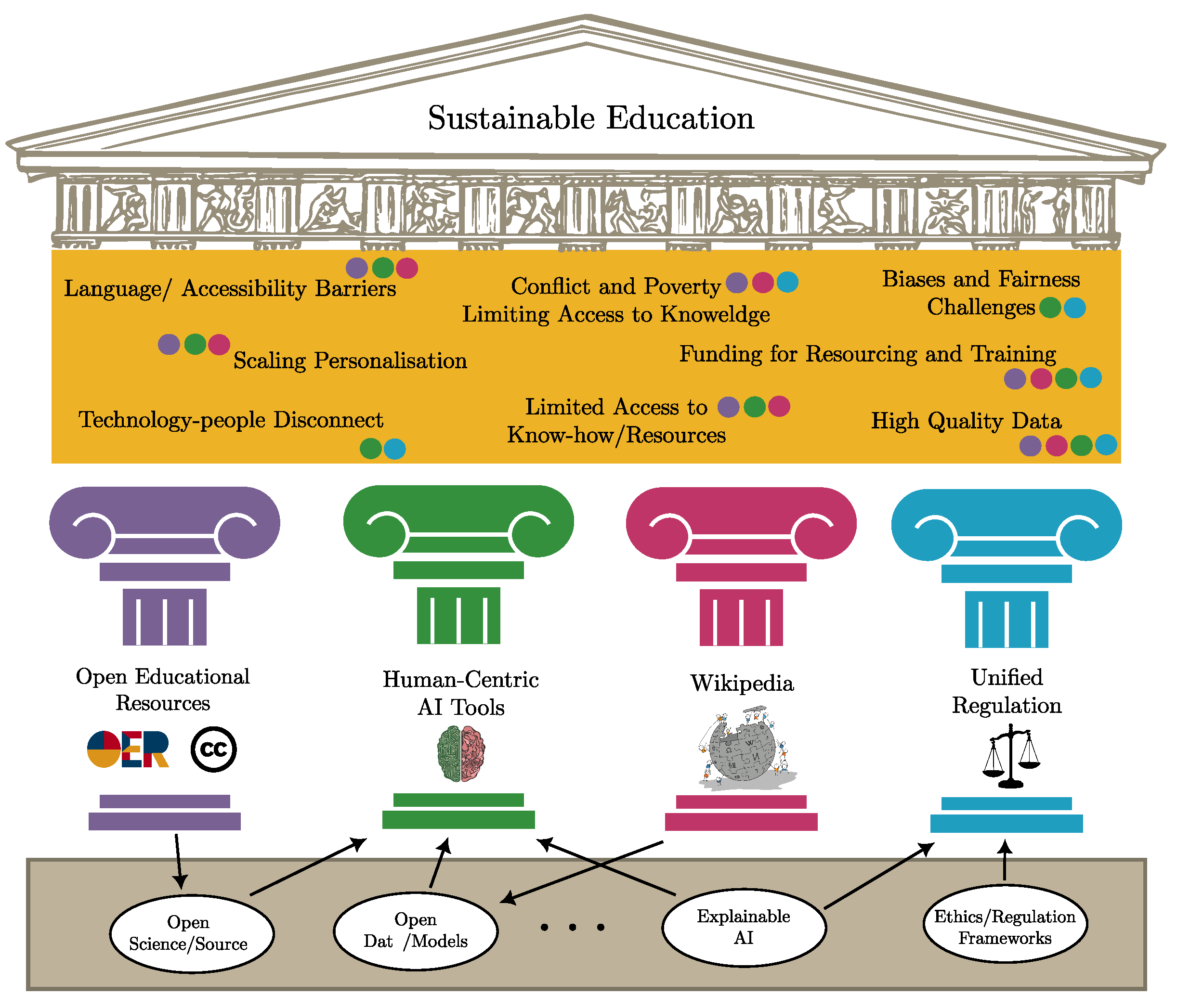

3. Proposed Pillars for Inclusive AIEd

- Target financing to those left behind: There is no inclusion while millions do not have access to education. We argue for making access to education one of the main foci of AIEd research and innovation.

- Share expertise and resources: It is the only way to sustain a transition to inclusion. We propose relying on open educational materials and open-source tools.

- Engage in meaningful consultation with communities and parents: Inclusion cannot be enforced with a top-down approach. We propose participatory tools as a core pillar of AIEd research and innovation.

- Apply universal design: Ensure inclusive systems fulfil every learner’s potential. We propose AI tools that are modality- and language-agnostic, aiming to adapt to the learners’ needs.

- Prepare, empower and motivate the education workforce: All teachers should be prepared to teach all students. Assistive technologies can play a key role in this regard if they are designed and developed in the right way.

- Collect data on and for inclusion with attention and respect: Avoid stigmatising labels. A human-centric approach to AIEd is needed.

- Learn from peers: A shift to inclusion is not easy. For this, we propose creating education ecosystems where everyone can contribute, independently of language or ability. Language and translation models, OERs and open-knowledge bases like Wikipedia are key in this regard.

- Open Educational Resources: A large, growing collection of freely available and accessible educational resources, diverse enough to suit a global learner population.

- A unifying taxonomy of knowledge: A region-, government- and language-agnostic representation of knowledge that can be used to build AIEd tools (we propose Wikipedia as a foundation).

- Human-centred AI: A suite of fair, interactive, collaborative and transparent AI algorithms that give full agency to the stakeholder.

- Streamlined and solidified regulation: A series of well-thought-out regulations that govern the development of AIEd tools so that they benefit learners across the globe.
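As a rough illustration of the "unifying taxonomy of knowledge" pillar, the sketch below shows how resources in different languages could be compared once each is annotated with language-independent concept identifiers. It is a minimal sketch under stated assumptions, not the authors' implementation: the `Resource` class, the `concept_overlap` function and the concept IDs (`C1`, `C2`, ...) are all hypothetical, and the annotation step that would link text to Wikipedia concepts is assumed to have happened elsewhere.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Resource:
    """An educational resource annotated with language-independent concept IDs."""
    title: str
    language: str
    concepts: frozenset  # hypothetical concept IDs, not real Wikipedia identifiers


def concept_overlap(a: Resource, b: Resource) -> float:
    """Jaccard similarity between the concept sets of two resources."""
    union = a.concepts | b.concepts
    if not union:
        return 0.0
    return len(a.concepts & b.concepts) / len(union)


# Illustrative data only: titles and concept IDs are made up for this sketch.
english_video = Resource("Intro to Fractions", "en", frozenset({"C1", "C2", "C3"}))
spanish_text = Resource("Fracciones básicas", "es", frozenset({"C1", "C2"}))
french_quiz = Resource("La photosynthèse", "fr", frozenset({"C9"}))

# Resources are compared through shared concepts, never through their text,
# so the comparison does not depend on the language of either resource.
print(concept_overlap(english_video, spanish_text))
print(concept_overlap(english_video, french_quiz))
```

Because the comparison uses only concept identifiers, the same function applies to any pair of languages or modalities, which is the property the pillar asks for.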

3.1. Open Educational Resources (OERs)

3.2. Wikipedia, a Dynamic, Scalable Knowledge Base

3.3. Human-Centred Tools

- Are we accounting for the technological, societal and cultural differences across nations?

- Are we ensuring that the interests of low- and middle-income countries are represented in key debates and decisions?

- Are we creating the necessary bridges between these nations (end users) and countries where AI is currently being developed (producers)?

3.3.1. Explainable and Transparent AI

3.3.2. Open Models, Open Science and Open Source

3.3.3. Impact of Human-Centred AI

3.4. Unified Vision for Regulated AIEd

4. Concluding Remarks

- Working together on developing and leveraging the power of linguistically and culturally diverse Open Educational Resources (OERs), which can be reused and consumed around the globe.

- Building standardised taxonomies and ontologies of knowledge (like Wikipedia), which is one of the greatest technical challenges for AIEd at present.

- Investing in human-centric scientific advancement that entails open science, models and source code to enable civic engagement with the design of these technologies and thus support their sustainable use in local communities.

- Engaging in critical thinking and policymaking, where we question the social norms and politics embedded in AIEd systems and direct technological change towards meeting societal needs and reducing inequalities.

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
| --- | --- |
| AI | Artificial Intelligence |
| AIEd | Artificial Intelligence in Education |
| LLM | Large Language Model |
| IDIA | International Development Innovation Alliance |
| IRCAI | International Research Centre on Artificial Intelligence |
| ITSs | Intelligent Tutoring Systems |
| ML | Machine Learning |
| MOOCs | Massive Open Online Courses |
| OECD | Organisation for Economic Co-operation and Development |
| OERs | Open Educational Resources |
| UNESCO | United Nations Educational, Scientific and Cultural Organisation |
| WIDE | World Inequality Database on Education |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2024 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Bulathwela, S.; Pérez-Ortiz, M.; Holloway, C.; Cukurova, M.; Shawe-Taylor, J. Artificial Intelligence Alone Will Not Democratise Education: On Educational Inequality, Techno-Solutionism and Inclusive Tools. Sustainability 2024, 16, 781. https://doi.org/10.3390/su16020781