Have Academics’ Citation Patterns Changed in Response to the Rise of World University Rankings? A Test Using First-Citation Speeds

Abstract

1. Introduction

- (1) Have academics’ citation patterns changed since the establishment of world university rankings? In particular, has first-citation speed increased since the establishment of world university rankings?

- (2) What are the factors influencing first-citation probability? How have these factors changed with the emergence of world university rankings?

2. Literature Review

2.1. World University Rankings and Indicators

2.2. First Citation and Its Factors of Influence

3. Methodology

3.1. Sample

3.2. Data

3.3. Analytical Methods

3.4. Variables and Measures

4. Results

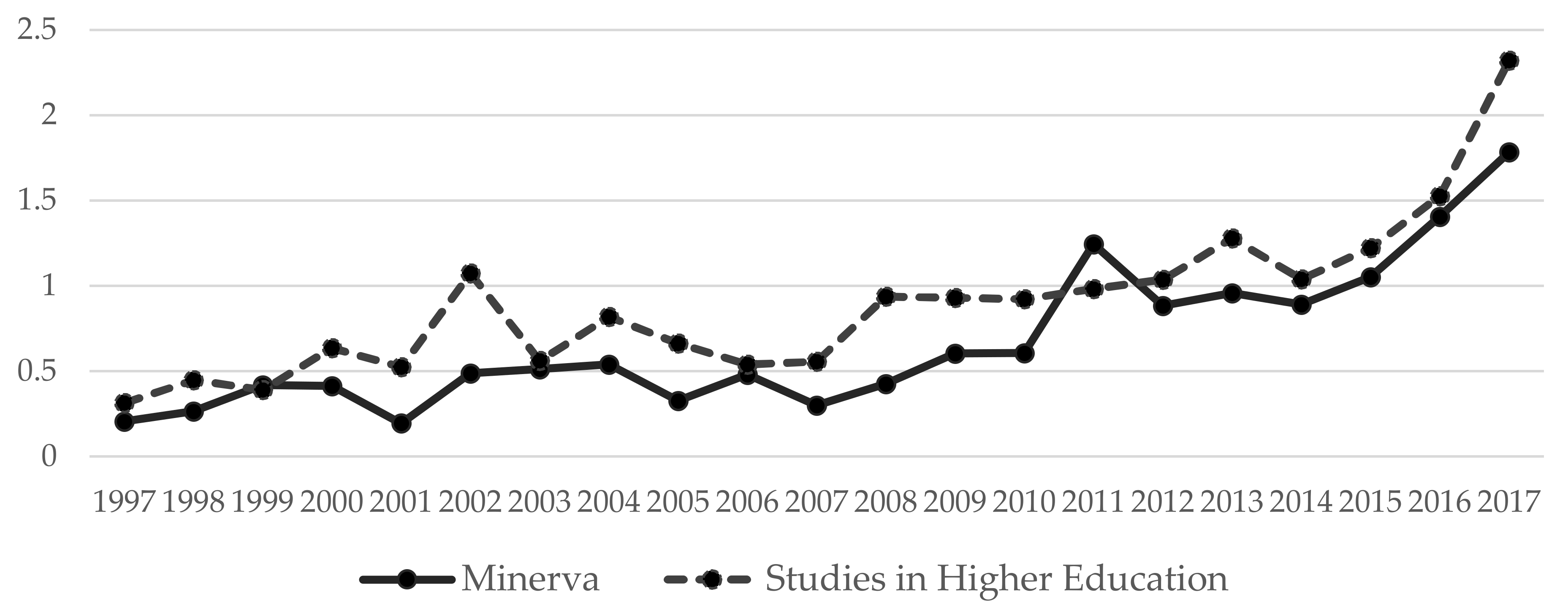

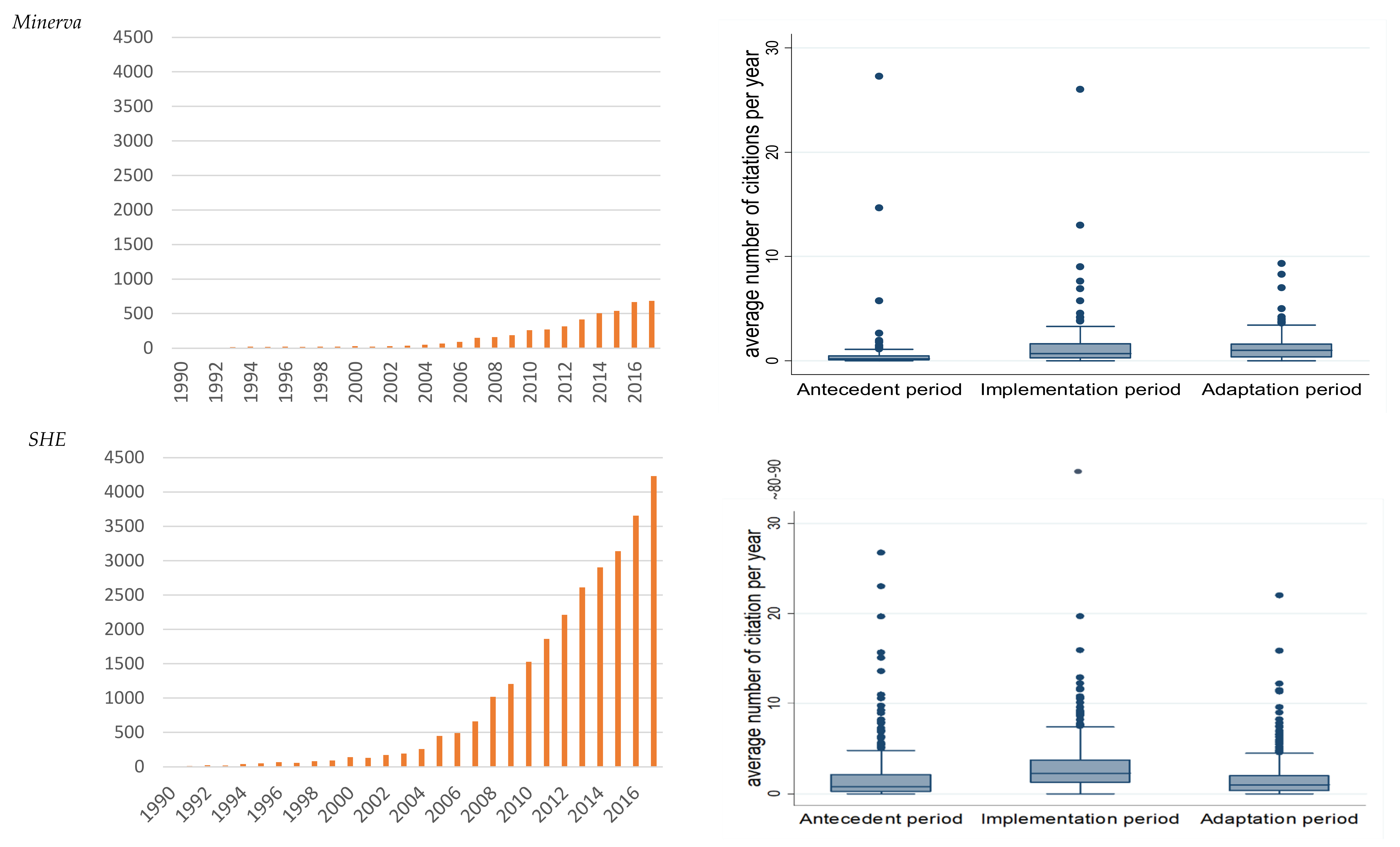

4.1. Changes in Citations over Time amid the Rise of WURs

4.2. First-Citation Speed by Journal and WURs Period

4.3. Factors Influencing First-Citation Probability

5. Discussion and Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest


| | ARWU a | QS b | THE c | Leiden d | SCImago e | U-Multirank f |
|---|---|---|---|---|---|---|
| Since | 2003 | 2004 | 2004 | 2007 | 2009 | 2014 |
| Organisation | Shanghai Jiao Tong University | Quacquarelli Symonds | Times Higher Education | Centre for Science and Technology Studies (CWTS), Leiden University | SCImago Lab | Centre for Higher Education (CHE); Center for Higher Education Policy Studies (CHEPS), University of Twente; CWTS; and Fundación CYD, Spain |
| Indicators | Highly cited researchers: 20%; Papers published in Nature and Science: 20%; Papers indexed in Science Citation Index-Expanded and Social Science Citation Index: 20% | Academic reputation: 40%; Citations per faculty: 20% | Research (volume, income and reputation): 30%; Citations (research influence): 30% | Scientific impact; Collaboration; Open access | Research: 50%; Normalized impact: 13%; Output: 8%; Not own journals: 3%; Own journals: 3%; Excellence: 2%; High quality publication: 2%; Open access: 2%; Societal: 20%; Altmetrics: 10%; Inbound links: 5%; Web size: 5% | Research |
| Bibliometric data source | Web of Science | Scopus | Web of Science | Web of Science | Scopus | Web of Science |

| Factor | Variable | Measurement |
|---|---|---|
| First-citation speed | First-citation speed | Time elapsed from year of publication to year of first citation |
| First-citation status | First-citation status | Cited case = 1; censored case (uncited) = 0 |
| Social mechanism | WURs periods | Antecedent period (1990–2003) (criterion variable); Implementation period (2004–2010); Adaptation period (2011–2017) |
| Prior recognition | Academic reputation | Corresponding author’s affiliated university within top 100 in ARWU = 1; other = 0 |
| | JIF | SHE = 1; Minerva = 0 |
| | Third-party funding | Third-party funding sources acknowledged in a paper = 1; other = 0 |
| Author characteristics | Number of authors | Number of authors in a paper |
| | International collaboration | International collaboration = 1; others = 0 |
| Paper-related factors | Number of references | Number of references cited in a paper |
| | Paper length | Number of pages from first to last page |
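
The measurement rules for first-citation speed, first-citation status, and the WURs periods in the table above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' procedure: the names `Paper`, `first_citation_record`, `wurs_period`, and `window_end` are hypothetical, and assigning an uncited paper the time elapsed until the end of the observation window is an assumption taken from common survival-analysis practice rather than from the table itself.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class Paper:
    pub_year: int
    # Year of the first citation, or None if the paper has not been cited.
    first_cited_year: Optional[int]


def first_citation_record(paper: Paper, window_end: int) -> Tuple[int, int]:
    """Return (time in years, status) with status 1 = cited, 0 = censored.

    Assumption: a paper that is uncited by `window_end` is censored at
    `window_end`, so its time is the length of follow-up.
    """
    cited = (
        paper.first_cited_year is not None
        and paper.first_cited_year <= window_end
    )
    if cited:
        return paper.first_cited_year - paper.pub_year, 1
    return window_end - paper.pub_year, 0


def wurs_period(pub_year: int) -> str:
    """Map a publication year to the WURs period defined in the table."""
    if 1990 <= pub_year <= 2003:
        return "antecedent"
    if 2004 <= pub_year <= 2010:
        return "implementation"
    if 2011 <= pub_year <= 2017:
        return "adaptation"
    raise ValueError(f"publication year {pub_year} is outside 1990-2017")


if __name__ == "__main__":
    paper = Paper(pub_year=2005, first_cited_year=2007)
    print(first_citation_record(paper, window_end=2020), wurs_period(paper.pub_year))
```

Keeping the time and the status as a pair is what allows uncited papers to stay in the analysis as censored cases instead of being dropped.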

| Variable | Minerva N | Minerva Min. | Minerva Max. | Minerva Mean | Minerva SD | SHE N | SHE Min. | SHE Max. | SHE Mean | SHE SD |
|---|---|---|---|---|---|---|---|---|---|---|
| Total citations | 443 | 0 | 409 | 10.43 | 27.75 | 1346 | 0 | 1062 | 20.28 | 46.31 |
| No. of authors | 443 | 1 | 9 | 1.49 | 0.90 | 1346 | 1 | 11 | 2.14 | 1.26 |
| Paper length (no. of pages) | 443 | 1 | 76 | 21.36 | 7.05 | 1346 | 1 | 36 | 15.78 | 3.67 |
| No. of references | 443 | 0 | 234 | 46.87 | 28.57 | 1345 | 0 | 161 | 39.72 | 18.95 |

| Variable | Category | Minerva N | Minerva % | SHE N | SHE % |
|---|---|---|---|---|---|
| Number of papers published in each WURs period | Antecedent period | 191 | 43.12 | 312 | 23.18 |
| | Implementation period | 109 | 24.60 | 316 | 23.48 |
| | Adaptation period | 143 | 32.28 | 718 | 53.34 |
| First-citation status | Cited | 381 | 86.00 | 1184 | 87.96 |
| | Uncited | 62 | 14.00 | 162 | 12.04 |
| Academic reputation | Top-100 university in ARWU | 126 | 28.90 | 232 | 17.33 |
| | Other | 310 | 71.10 | 1107 | 82.67 |
| Third-party funding | Yes | 31 | 7.00 | 113 | 8.40 |
| | No | 412 | 93.00 | 1233 | 91.60 |
| International collaboration | Yes | 30 | 6.86 | 132 | 9.81 |
| | No | 407 | 93.14 | 1214 | 90.19 |

| Variable | Minerva: Antecedent 1990–2003 | Minerva: Implementation 2004–2010 | Minerva: Adaptation 2011–2017 | SHE: Antecedent 1990–2003 | SHE: Implementation 2004–2010 | SHE: Adaptation 2011–2017 |
|---|---|---|---|---|---|---|
| No. of papers | 191 | 109 | 143 | 312 | 316 | 718 |
| Average no. of papers per year | 13.64 | 15.57 | 20.43 | 22.29 | 45.14 | 102.57 |
| No. of citations | 2030 | 1751 | 838 | 11,262 | 11,441 | 4600 |
| Average no. of citations per paper | 10.63 | 16.06 | 5.86 | 36.10 | 36.21 | 6.41 |
| Average no. of citations of papers per year | 0.59 | 1.57 | 1.27 | 1.88 | 3.37 | 1.55 |
| Top-100 university in ARWU | 53 (28.80%) | 34 (31.19%) | 39 (27.27%) | 44 (14.19%) | 62 (19.62%) | 126 (17.67%) |
| Third-party funding | 0 (0.00%) | 4 (3.67%) | 27 (18.88%) | 0 (0.00%) | 1 (0.32%) | 112 (15.60%) |
| No. of authors | 1.30 | 1.41 | 1.80 | 1.72 | 1.99 | 2.39 |
| International collaboration | 4 (2.16%) | 3 (2.75%) | 23 (16.08%) | 11 (3.53%) | 16 (5.06%) | 105 (14.62%) |
| Average paper length | 20.84 | 20.34 | 22.84 | 13.83 | 15.97 | 16.54 |
| Total no. of references | 6780 | 5313 | 8695 | 8915 | 12,275 | 32,278 |
| No. of references from same journal | 230 (3.39%) | 105 (1.98%) | 376 (4.32%) | 274 (3.07%) | 533 (4.34%) | 1453 (4.50%) |
| Average no. of references in a paper | 35.50 | 48.74 | 60.80 | 28.57 | 38.84 | 45.08 |

| Journal | Period | N | First-citation status: Censored (Uncited, %) | First-citation status: Cited | First-citation speed: Mean (Std. Err.) | First-citation speed: Median (Std. Err.) |
|---|---|---|---|---|---|---|
| Minerva | Antecedent (1990–2003) | 191 | 28 (14.7%) | 163 | 9.78 (0.69) | 5.00 (0.51) |
| | Implementation (2004–2010) | 109 | 9 (8.3%) | 100 | 4.38 (0.34) | 3.00 (0.18) |
| | Adaptation (2011–2017) | 143 | 25 (17.5%) | 118 | 2.66 (0.13) | 2.00 (0.13) |
| | Total | 443 | 62 (14.0%) | 381 | 6.66 (0.38) | 3.00 (0.15) |
| SHE | Antecedent (1990–2003) | 312 | 17 (5.5%) | 295 | 5.91 (0.37) | 3.00 (0.16) |
| | Implementation (2004–2010) | 316 | 1 (0.3%) | 315 | 2.56 (0.07) | 2.00 (0.06) |
| | Adaptation (2011–2017) | 718 | 144 (20.1%) | 574 | 2.14 (0.05) | 2.00 (0.05) |
| | Total | 1346 | 162 (12.0%) | 1184 | 3.36 (0.13) | 2.00 (0.04) |
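
A median time to first citation in the presence of censored (uncited) papers is typically read off a survival curve. The sketch below shows one common way to do that, the Kaplan-Meier product-limit estimator, on made-up toy data. Whether the figures in the table above were produced this way is an assumption, and `kaplan_meier` and `median_time` are hypothetical helper names.

```python
from collections import Counter
from fractions import Fraction
from typing import List, Optional, Sequence, Tuple


def kaplan_meier(
    times: Sequence[int], events: Sequence[int]
) -> List[Tuple[int, Fraction]]:
    """Return (t, S(t)) at each distinct time with at least one first citation.

    times  : years from publication to first citation or to censoring
    events : 1 if the paper was cited at that time, 0 if censored (uncited)
    """
    cited_at = Counter(t for t, e in zip(times, events) if e == 1)
    leaving_at = Counter(times)  # cited or censored at time t
    at_risk = len(times)
    surv = Fraction(1)
    curve = []
    for t in sorted(leaving_at):
        d = cited_at.get(t, 0)
        if d:
            # Product-limit step: share of at-risk papers still uncited after t.
            surv *= Fraction(at_risk - d, at_risk)
            curve.append((t, surv))
        at_risk -= leaving_at[t]
    return curve


def median_time(curve: List[Tuple[int, Fraction]]) -> Optional[int]:
    """Smallest t with S(t) <= 1/2, or None if the curve never gets that low."""
    for t, s in curve:
        if s <= Fraction(1, 2):
            return t
    return None


if __name__ == "__main__":
    # Toy data only: six papers, two of them still uncited after 5 years.
    toy_times = [1, 2, 2, 3, 5, 5]
    toy_events = [1, 1, 1, 1, 0, 0]
    curve = kaplan_meier(toy_times, toy_events)
    print(curve, median_time(curve))
```

Exact fractions are used instead of floats so that the comparison with one half is not affected by rounding.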

| Variable | Model 1 (Minerva) Hazard Ratio | Model 1 Std. Err. | Model 1 z | Model 2 (Minerva) Hazard Ratio | Model 2 Std. Err. | Model 2 z | Model 3 (SHE) Hazard Ratio | Model 3 Std. Err. | Model 3 z | Model 4 (SHE) Hazard Ratio | Model 4 Std. Err. | Model 4 z |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| WURs implementation period | - | - | - | 1.78 | 0.24 | 4.24 *** | - | - | - | 1.88 | 0.17 | 6.89 *** |
| WURs adaptation period | - | - | - | 2.72 | 0.41 | 6.63 *** | - | - | - | 1.96 | 0.18 | 7.37 *** |
| Top-100 university in ARWU | 0.96 | 0.11 | −0.34 | 1.00 | 0.12 | 0.02 | 1.12 | 0.09 | 1.44 | 1.10 | 0.09 | 1.21 |
| Third-party funding (TPF) | 2.13 | 0.49 | 3.25 ** | 1.55 | 0.37 | 1.87 + | 1.53 | 0.21 | 3.11 ** | 1.40 | 0.19 | 2.45 * |
| No. of authors | 1.09 | 0.06 | 1.47 | 1.05 | 0.06 | 0.73 | 1.05 | 0.03 | 2.01 * | 1.02 | 0.03 | 0.76 |
| International collaboration | 1.04 | 0.26 | 0.15 | 0.91 | 0.23 | −0.38 | 1.11 | 0.13 | 0.92 | 1.06 | 0.12 | 0.52 |
| Number of references | 1.01 | 0.00 | 5.51 *** | 1.01 | 0.00 | 3.43 ** | 1.01 | 0.00 | 4.84 *** | 1.00 | 0.00 | 2.59 * |
| Paper length | 0.99 | 0.01 | −0.93 | 1.00 | 0.01 | −0.37 | 1.03 | 0.01 | 2.91 ** | 1.02 | 0.01 | 1.90 + |
| N | 436 | | | 436 | | | 1270 | | | 1270 | | |
| Log-likelihood | −2000.89 | | | −1978.16 | | | −7065.04 | | | −7031.86 | | |
| LR chi2 | 45.26 *** | | | 90.72 *** | | | 82.70 *** | | | 149.05 *** | | |
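
The hazard ratio, standard error, and z columns above are linked arithmetically, and a short sketch can make that link explicit. It assumes the reported standard errors are delta-method standard errors of the hazard ratio itself (SE(HR) ≈ HR × SE(β)), which is how some statistical packages print them; the table does not state this, so treat it as an assumption. The function names are hypothetical.

```python
import math
from typing import Tuple


def z_from_hazard_ratio(hr: float, se_hr: float) -> float:
    """Recover the Wald z statistic from a hazard ratio and its standard error.

    Assumption: se_hr is the delta-method SE of the hazard ratio,
    so the SE of the log-hazard coefficient is se_hr / hr.
    """
    se_coef = se_hr / hr
    return math.log(hr) / se_coef


def ci_from_hazard_ratio(
    hr: float, se_hr: float, z_crit: float = 1.96
) -> Tuple[float, float]:
    """Approximate 95% interval, built on the log scale and exponentiated."""
    beta = math.log(hr)
    se_coef = se_hr / hr
    return math.exp(beta - z_crit * se_coef), math.exp(beta + z_crit * se_coef)


if __name__ == "__main__":
    # Model 2, WURs implementation period: HR 1.78, Std. Err. 0.24, reported z 4.24.
    print(round(z_from_hazard_ratio(1.78, 0.24), 2))
    # Model 2, WURs adaptation period: HR 2.72, Std. Err. 0.41, reported z 6.63.
    print(round(z_from_hazard_ratio(2.72, 0.41), 2))
    print(ci_from_hazard_ratio(1.78, 0.24))
```

Because the table rounds both inputs to two decimals, the recovered z values only approximate the reported ones. A hazard ratio above 1 means a higher first-citation probability at any given time relative to the reference category, so a value of 1.78 reads as roughly 78% higher.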

| Variable | Model 5 (WURs Antecedent) Hazard Ratio | Model 5 Std. Err. | Model 5 z | Model 6 (WURs Implementation) Hazard Ratio | Model 6 Std. Err. | Model 6 z | Model 7 (WURs Adaptation) Hazard Ratio | Model 7 Std. Err. | Model 7 z |
|---|---|---|---|---|---|---|---|---|---|
| Top-100 university in ARWU | 1.08 | 0.13 | 0.63 | 1.15 | 0.14 | 1.15 | 1.03 | 0.10 | 0.25 |
| JIF (SHE) | 1.76 | 0.21 | 4.63 ** | 2.04 | 0.28 | 5.13 *** | 1.38 | 0.17 | 2.57 * |
| Third-party funding | (n.d.a.) | | | 2.03 | 0.96 | 1.50 | 1.36 | 0.17 | 2.47 * |
| No. of authors | 1.10 | 0.06 | 1.86 + | 1.05 | 0.05 | 1.02 | 0.99 | 0.03 | −0.34 |
| International collaboration | 0.87 | 0.27 | −0.45 | 1.07 | 0.27 | 0.25 | 1.04 | 0.13 | 0.34 |
| No. of references | 1.01 | 0.00 | 4.73 *** | 1.00 | 0.00 | 1.31 | 1.00 | 0.00 | 1.22 |
| Paper length | 1.00 | 0.01 | −0.12 | 1.01 | 0.01 | 0.98 | 1.01 | 0.01 | 1.09 |
| N | 494 | | | 425 | | | 787 | | |
| Log likelihood | −2467.18 | | | −2223.86 | | | −3713.74 | | |
| LR chi2 | 46.73 *** | | | 39.13 *** | | | 14.58 * | | |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Lee, S.J.; Schneijderberg, C.; Kim, Y.; Steinhardt, I. Have Academics’ Citation Patterns Changed in Response to the Rise of World University Rankings? A Test Using First-Citation Speeds. Sustainability 2021, 13, 9515. https://doi.org/10.3390/su13179515
