The State of Experimental Research on Community Interventions to Reduce Greenhouse Gas Emissions—A Systematic Review

Abstract

1. Introduction

2. Method

2.1. Eligibility Criteria

2.2. Information Sources

2.3. Search

2.4. Study Selection

2.5. Data Extraction Process

3. Results

4. Discussion

4.1. Limitations

4.2. Experimental Methods

4.3. The Nature of Community Interventions

4.4. The Power of Behavioral Science Research

5. Conclusions

Supplementary Materials

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

Author Note

Appendix A

- Step 1: Open the corresponding Excel spreadsheet and locate the rows assigned to you for coding (e.g., cell A3).

- Step 2: Navigate to the correct row and read the article title.

- Step 3: Indicate Code 0a (code descriptions below) if the paper is clearly irrelevant based on its title. If Code 0a is indicated, continue to the next article and repeat. If Code 0a is not immediately obvious, go to Step 4.

- Step 4: Read the abstract (you may need to double-click the cell to view the whole abstract).

- Step 5: Indicate Code 0b if the paper is irrelevant based on its abstract. If Code 0b is indicated, continue to the next article and repeat. If Code 0b is not indicated, continue to Step 6.

- Step 6: Indicate Codes 1–4 where relevant. Repeat for all articles.

- Step 7: Return the completed template by email to the volunteer coordinators.
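The title and abstract screening steps above amount to a short decision procedure. As a minimal sketch, assuming the coder's relevance judgments are recorded as booleans, the flow might look like the following. The names `ScreeningResult` and `screen_article` are hypothetical and not part of the original coding template, and the meaning of Codes 1–4 is not modeled because the code descriptions are not reproduced here.

```python
from dataclasses import dataclass, field
from typing import Iterable, List, Optional


@dataclass
class ScreeningResult:
    # "0a" (excluded on title), "0b" (excluded on abstract), or None (retained)
    exclusion_code: Optional[str] = None
    # Codes 1-4 assigned to a retained article
    content_codes: List[int] = field(default_factory=list)


def screen_article(
    title_clearly_irrelevant: bool,
    abstract_irrelevant: Optional[bool] = None,
    content_codes: Iterable[int] = (),
) -> ScreeningResult:
    """Apply the title-then-abstract screening order described in the steps."""
    # Step 3: exclude on title alone when irrelevance is obvious.
    if title_clearly_irrelevant:
        return ScreeningResult(exclusion_code="0a")

    # Step 4: otherwise the abstract must be read before deciding.
    if abstract_irrelevant is None:
        raise ValueError("abstract judgment required when the title does not exclude")

    # Step 5: exclude on abstract.
    if abstract_irrelevant:
        return ScreeningResult(exclusion_code="0b")

    # Step 6: retained articles receive any of Codes 1-4.
    codes = sorted(set(content_codes))
    invalid = [c for c in codes if c not in range(1, 5)]
    if invalid:
        raise ValueError(f"codes outside 1-4: {invalid}")
    return ScreeningResult(content_codes=codes)
```

The relevance judgments themselves remain the coder's; the sketch only records the order in which they are applied.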

- Step 1: Open the corresponding Excel spreadsheet and locate the rows assigned to you for coding (e.g., cell A3).

- Step 2: Navigate to the correct row to locate the assigned article title. Retrieve the assigned article and read it.

- Step 3: If the article does not contain a community intervention aimed at reducing greenhouse gas emissions, indicate Code 0 (code descriptions below). If Code 0 is indicated, continue to the next article and repeat.

- Step 4: Indicate Codes 1–8 where relevant. Repeat for all articles.

- Step 5: Return the completed template by email to the volunteer coordinators.
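Before a completed full-text coding template is returned, it could be checked for entries that contradict the steps above. The following is a rough sketch only: it assumes the spreadsheet has been exported to CSV with hypothetical columns `title` and `codes`, and that multiple codes are separated by semicolons. None of these layout details come from the original template. Treating Code 0 as exclusive of Codes 1–8 is an inference from Step 3, not a stated rule.

```python
import csv
import io
from typing import Dict, List

# Codes allowed in the full-text coding pass: 0 (exclude) and 1-8.
VALID_CODES = {str(n) for n in range(0, 9)}


def check_codes_cell(codes_cell: str) -> List[str]:
    """Return a list of problems found in one row's codes cell."""
    codes = [c.strip() for c in codes_cell.split(";") if c.strip()]
    problems = []
    if not codes:
        problems.append("no code entered")
    unknown = [c for c in codes if c not in VALID_CODES]
    if unknown:
        problems.append("unknown codes: " + ", ".join(unknown))
    # Inferred rule: an excluded article should carry no other codes.
    if "0" in codes and len(codes) > 1:
        problems.append("Code 0 combined with other codes")
    return problems


def check_sheet(csv_text: str) -> Dict[str, List[str]]:
    """Map each problematic article title to its list of problems."""
    report = {}
    for row in csv.DictReader(io.StringIO(csv_text)):
        problems = check_codes_cell(row["codes"])
        if problems:
            report[row["title"]] = problems
    return report
```

Rows that pass the check are omitted from the report, so an empty dictionary means no inconsistencies were detected under these assumptions.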

- Step 1: Open the corresponding Excel spreadsheet and locate the rows assigned to you for coding (e.g., cell A3).

- Step 2: Navigate to the correct row to locate the assigned article title. Retrieve the assigned article and navigate to the reference section.

- Step 3: Scan each entry in the reference section for titles that suggest a relevant evaluation may be contained therein.

- Step 4: If a relevant citation is found, paste it beside the article title in the corresponding spreadsheet.

- Step 5: Repeat for all assigned articles.

- Step 6: Return the completed template to a volunteer coordinator.

References

- DeFries, R.S.; Edenhofer, O.; Halliday, A.N.; Heal, G.M.; Lenton, T.; Puma, M.; Rising, J.; Rockström, J.; Ruane, A.C.; Schellnhuber, H.J.; et al. The missing Economic Risks in Assessments of Climate Change Impacts; Grantham Research Institute: London, UK, 2019; Available online: https://www.lse.ac.uk/granthaminstitute/publication/the-missing-economic-risks-in-assessments-of-climate-change-impacts/ (accessed on 15 June 2020).

- Oreskes, N.; Oppenheimer, M.; Jamieson, D. Scientists Have Been Underestimating the Pace of Climate Change. Available online: https://blogs.scientificamerican.com/observations/scientists-have-been-underestimating-the-pace-of-climate-change/ (accessed on 14 June 2020).

- Friedlingstein, P.; Jones, M.W.; O’Sullivan, M.; Andrew, R.M.; Hauck, J.; Peters, G.P.; Peters, W.; Pongratz, J.; Sitch, S.; Le Quéré, C.; et al. Global carbon budget 2019. Earth Syst. Sci. Data 2019, 11, 1783–1838. [Google Scholar] [CrossRef]

- Luepker, R.V.; Murray, D.M.; Jacobs, D.R.; Mittelmark, M.B.; Bracht, N.; Carlaw, R.; Crow, R.; Elmer, P.; Finnegan, J.; Folsom, A.R. Community education for cardiovascular disease prevention: Risk factor changes in the Minnesota Heart Health Program. Am. J. Public Health 1994, 84, 1383–1393. [Google Scholar] [CrossRef] [PubMed]

- Fortmann, S.P.; Varady, A.N. Effects of a community-wide health education program on cardiovascular disease morbidity and mortality: The Stanford Five-City Project. Am. J. Epidemiol. 2000, 152, 316–323. [Google Scholar] [CrossRef] [PubMed]

- Community Intervention Trial for Smoking Cessation (COMMIT). I. cohort results from a four-year community intervention. Am. J. Public Health 1995, 85, 183–192. [Google Scholar]

- Biglan, A.; Ary, D.V.; Smolkowski, K.; Duncan, T.; Black, C. A randomised controlled trial of a community intervention to prevent adolescent tobacco use. Tob. Control 2000, 9, 24–32. [Google Scholar] [CrossRef] [PubMed][Green Version]

- Pentz, M.A.; Dwyer, J.H.; MacKinnon, D.P.; Flay, B.R.; Hansen, W.B.; Wang, E.; Johnson, C.A. A multicommunity trial for primary prevention of adolescent drug abuse. Effects on drug use prevalence. J. Am. Med. Assoc. 1989, 261, 3259–3266. [Google Scholar] [CrossRef]

- Oesterle, S.; Kuklinski, M.R.; Hawkins, J.D.; Skinner, M.L.; Guttmannova, K.; Rhew, I.C. Long-Term Effects of the Communities That Care Trial on Substance Use, Antisocial Behavior, and Violence Through Age 21 Years. Am. J. Public Health 2018, 108, 659–665. [Google Scholar] [CrossRef]

- Lin, E.S.; Flanagan, S.K.; Varga, S.M.; Zaff, J.F.; Margolius, M. The Impact of Comprehensive Community Initiatives on Population-Level Child, Youth, and Family Outcomes: A Systematic Review. Am. J. Community Psychol. 2020, 65, 479–503. [Google Scholar] [CrossRef]

- Henig, J.R.; Riehl, C.J.; Rebell, M.A.; Wolff, J.R. Putting Collective Impact in Context: A Review of the Literature on Local Cross-Sector Collaboration to Improve Education; Teachers College, Columbia University, Department of Education Policy and Social Analysis: New York, NY, USA, 2015. [Google Scholar]

- Abrahamse, W.; Steg, L.; Vlek, C.; Rothengatter, T. A review of intervention studies aimed at household energy conservation. J. Environ. Psychol. 2005, 25, 273–291. [Google Scholar] [CrossRef]

- Fischer, J.; Dyball, R.; Fazey, I.; Gross, C.; Dovers, S.; Ehrlich, P.R.; Brulle, R.J.; Christensen, C.; Borden, R.J. Human behavior and sustainability. Front. Ecol. Environ. 2012, 10, 153–160. [Google Scholar] [CrossRef]

- Osbaldiston, R.; Schott, J.P. Environmental Sustainability and Behavioral Science: Meta-Analysis of Proenvironmental Behavior Experiments. Environ. Behav. 2012, 44, 257–299. [Google Scholar] [CrossRef]

- Steg, L.; Vlek, C. Encouraging pro-environmental behaviour: An integrative review and research agenda. J. Environ. Psychol. 2009, 29, 309–317. [Google Scholar] [CrossRef]

- Wynes, S.; Nicholas, K.A.; Zhao, J.; Donner, S.D. Measuring what works: Quantifying greenhouse gas emission reductions of behavioural interventions to reduce driving, meat consumption, and household energy use. Environ. Res. Lett. 2018, 13, 113002. [Google Scholar] [CrossRef]

- Murray, B.; Rivers, N. British Columbia’s revenue-neutral carbon tax: A review of the latest “grand experiment” in environmental policy. Energy Policy 2015, 86, 674–683. [Google Scholar] [CrossRef]

- Sumner, J.; Bird, L.; Dobos, H. Carbon taxes: A review of experience and policy design considerations. Clim. Policy 2011, 11, 922–943. [Google Scholar] [CrossRef]

- Axon, S.; Morrissey, J.; Aiesha, R.; Hillman, J.; Revez, A.; Lennon, B.; Salel, M.; Dunphy, N.; Boo, E. The human factor: Classification of European community-based behaviour change initiatives. J. Clean. Prod. 2018, 182, 567–586. [Google Scholar] [CrossRef]

- Castán Broto, V.; Bulkeley, H. A survey of urban climate change experiments in 100 cities. Glob. Environ. Chang. 2013, 23, 92–102. [Google Scholar] [CrossRef]

- Penha-Lopes, G.; Henfrey, T. Reshaping the Future: How Local Communities Are Catalysing Social, Economic and Ecological Transformation in Europe; ECOLISE, the European Network for Community-Led Initiatives on Climate Change and Sustainability, Mountain View, CA; ECOLISE: Brussels, Belgium, 2019. [Google Scholar]

- Ford, J.D.; Berrang-Ford, L.; Paterson, J. A systematic review of observed climate change adaptation in developed nations. Clim. Chang. 2011, 106, 327–336. [Google Scholar] [CrossRef]

- Landholm, D.M.; Holsten, A.; Martellozzo, F.; Reusser, D.E.; Kropp, J.P. Climate change mitigation potential of community-based initiatives in Europe. Reg. Environ. Chang. 2018, 19, 927–938. [Google Scholar] [CrossRef]

- McNamara, K.E.; Buggy, L. Community-based climate change adaptation: A review of academic literature. Local Environ. 2016, 22, 443–460. [Google Scholar] [CrossRef]

- Moloney, S.; Horne, R.E.; Fien, J. Transitioning to low carbon communities—From behaviour change to systemic change: Lessons from Australia. Energy Policy 2010, 38, 7614–7623. [Google Scholar] [CrossRef]

- Grimshaw, J. So what has the Cochrane Collaboration ever done for us? A report card on the first 10 years. CMAJ 2004, 171, 747–749. [Google Scholar] [CrossRef][Green Version]

- Zettle, R.D.; Barnes-Holmes, D.; Hayes, S.C.; Biglan, A. The Wiley Handbook of Contextual Behavioral Science; John Wiley & Sons: New York, NY, USA, 2016. [Google Scholar]

- Biglan, A. The Nurture Effect: How the Science of Human Behavior Can Improve Our Lives and Our World; New Harbinger: Oakland, CA, USA, 2015. [Google Scholar]

- O’Connor, R.E.; Vadasy, P.F. Handbook of Reading Interventions; The Guilford Press: New York, NY, USA, 2011. [Google Scholar]

- Mudford, O.C.; Taylor, S.A.; Martin, N.T. Continuous recording and interobserver agreement algorithms reported in the Journal of Applied Behavior Analysis (1995–2005). J. Appl. Behav. Anal. 2009, 42, 165–169. [Google Scholar] [CrossRef] [PubMed]

- Gelino, B.; Erath, T.; Reed, D.D. Review of Sustainability-Focused Empirical Intervention Published in Behavior Analytic Outlets. 2020. Available online: https://osf.io/43xzb/ (accessed on 21 June 2020).

- Maki, A.; Burns, R.J.; Ha, L.; Rothman, A.J. Paying people to protect the environment: A meta-analysis of financial incentive interventions to promote proenvironmental behaviors. J. Environ. Psychol. 2016, 47, 242–255. [Google Scholar] [CrossRef]

- Nisa, C.F.; Bélanger, J.J.; Schumpe, B.M.; Faller, D.G. Meta-analysis of randomised controlled trials testing behavioural interventions to promote household action on climate change. Nat. Commun. 2019, 10, 4545. [Google Scholar] [CrossRef]

- Moher, D.; Liberati, A.; Tetzlaff, J.; Altman, D.G.; Group, P. Preferred reporting items for systematic reviews and meta-analyses: The PRISMA statement. PLoS Med. 2009, 6, e1000097. [Google Scholar] [CrossRef]

- Shadish, W.R.; Cook, T.D.; Campbell, D.T. Experimental and Quasi-Experimental Designs for Generalized Causal Inference; Houghton Mifflin Co.: Boston, MA, USA, 2002. [Google Scholar]

- Kazdin, A.E. Single-Case Research Designs: Methods for Clinical and Applied Settings, 2nd ed.; Oxford University Press: Oxford, UK, 2011. [Google Scholar]

- Grady, S.; Hirst, E. Evaluation of a Wisconsin utility home energy-audit program. J. Environ. Syst. 1982, 12, 303–320. [Google Scholar]

- Allcott, H.; Rogers, T. The Short-Run and Long-Run Effects of Behavioral Interventions: Experimental Evidence from Energy Conservation. Am. Econ. Rev. 2014, 104, 3003–3037. [Google Scholar] [CrossRef]

- Hutton, R.B.; McNeill, D.L. The Value of Incentives in Stimulating Energy Conservation. J. Consum. Res. 1981, 8, 291–298. [Google Scholar] [CrossRef]

- Cui, Z.; Zhang, H.; Chen, X.; Zhang, C.; Ma, W.; Huang, C.; Zhang, W.; Mi, G.; Miao, Y.; Li, X.; et al. Pursuing sustainable productivity with millions of smallholder farmers. Nature 2018, 555, 363–366. [Google Scholar] [CrossRef]

- McDougall, G.H.G.; Claxton, J.D.; Ritchie, J.R.B. Residential Home Audits: An Empirical Analysis of the Enersave Program. J. Environ. Syst. 1982, 12. [Google Scholar] [CrossRef]

- Alberts, H.; Moreira, C.; Pérez, R.M. Firewood substitution by kerosene stoves in rural and urban areas of Nicaragua, social acceptance, energy policies, greenhouse effect and financial implications. Energy Sustain. Dev. 1997, 3, 26–39. [Google Scholar] [CrossRef]

- Winett, R.A.; Leckliter, I.N.; Chinn, D.E.; Stahl, B.; Love, S.Q. Effects of television modeling on residential energy conservation. J. Appl. Behav. Anal. 1985, 18, 33–44. [Google Scholar] [CrossRef]

- Climate Mayors Climate Mayors Launches Steering Committee to Strengthen Climate Action. Available online: http://climatemayors.org/ (accessed on 14 June 2020).

- Stern, P.C. Individual and household interactions with energy systems: Toward integrated understanding. Energy Res. Soc. Sci. 2014, 1, 41–48. [Google Scholar] [CrossRef]

- Schapke, N.; Stelzer, F.; Caniglia, G.; Bergmann, M.; Wanner, M.; Singer-Brodowski, M.; Loorbach, D.; Olsson, P.; Baedeker, C.; Lang, D.J. Jointly Experimenting for Transformation? Shaping Real-World Laboratories by Comparing Them. Gaia-Ecol. Perspect. Sci. Soc. 2018, 27, 85–96. [Google Scholar] [CrossRef]

- Liedtke, C.; Baedeker, C.; Hasselkub, M.; Rohn, H.; Grinewitschus, V. User-integrated innovation in Sustainable LivingLabs: An experimental infrastructure for researching and developing sustainable product service systems. J. Clean. Prod. 2015, 97, 106–116. [Google Scholar] [CrossRef]

- Nevens, F.; Frantzeskaki, N.; Gorissen, L.; Loorbach, D. Urban Transition Labs: Co-creating transformative action for sustainable cities. J. Clean. Prod. 2013, 50, 111–122. [Google Scholar] [CrossRef]

- Wanner, M.; Hilger, A.; Westerkowski, J.; Rose, M.; Stelzer, F.; Schäpke, N. Towards a Cyclical Concept of Real-World Laboratories. Disp-Plan. Rev. 2018, 54, 94–114. [Google Scholar] [CrossRef]

- Hayes, S.C. A Liberated Mind: How to Pivot toward What Matters; Penguin Publishing Group: New York, NY, USA, 2019. [Google Scholar]

- Embry, D.D. The Good Behavior Game: A best practice candidate as a universal behavioral vaccine. Clin. Child Fam. Psychol. Rev. 2002, 5, 273–297. [Google Scholar] [CrossRef]

- Farrington, D.P.; Welsh, B.C. Family-based Prevention of Offending: A Meta-analysis. Aust. N. Z. J. Criminol. 2003, 36, 127–151. [Google Scholar] [CrossRef]

- Kumpfer, K.L.; Alvarado, R. Family-strengthening approaches for the prevention of youth problem behaviors. Am. Psychol. 2003, 58, 457–465. [Google Scholar] [CrossRef]

- Van Ryzin, M.J.; Roseth, C.J. Cooperative learning effects on peer relations and alcohol use in middle school. J. Appl. Dev. Psychol. 2019, 64, 101059. [Google Scholar] [CrossRef]

- Van Ryzin, M.J.; Roseth, C.J. Effects of cooperative learning on peer relations, empathy, and bullying in middle school. Aggress. Behav. 2019, 45, 643–651. [Google Scholar] [CrossRef]

- Van Ryzin, M.J.; Roseth, C.J.; Fosco, G.M.; Lee, Y.K.; Chen, I.C. A component-centered meta-analysis of family-based prevention programs for adolescent substance use. Clin. Psychol. Rev. 2016, 45, 72–80. [Google Scholar] [CrossRef]

- Cochrane Reviews|Cochrane Library. Available online: https://www.cochranelibrary.com/ (accessed on 13 August 2020).

- Green, D.P.; Gerber, A.S. The underprovision of experiments in political science. Ann. Am. Acad. Political Soc. Sci. 2003, 589, 94–112. [Google Scholar] [CrossRef]

- Arceneaux, K. The Benefits of Experimental Methods for the Study of Campaign Effects. Political Commun. 2010, 27, 199–215. [Google Scholar] [CrossRef]

- Cohen, A.M.; Stavri, P.Z.; Hersh, W.R. A categorization and analysis of the criticisms of Evidence-Based Medicine. Int. J. Med. Inform. 2004, 73, 35–43. [Google Scholar] [CrossRef]

- Kagel, J.H.; Roth, A.E. The Handbook of Experimental Economics; Princeton University Press: Princeton, NJ, USA, 2016; Volume 2. [Google Scholar]

- Sherman, L.W. Misleading Evidence and Evidence-Led Policy: Making Social Science more Experimental. Ann. Am. Acad. Political Soc. Sci. 2003, 589, 6–19. [Google Scholar] [CrossRef]

- Wolfram, M. Cities shaping grassroots niches for sustainability transitions: Conceptual reflections and an exploratory case study. J. Clean. Prod. 2018, 173, 11–23. [Google Scholar] [CrossRef]

- Heiskanen, E.; Jalas, M.; Rinkinen, J.; Tainio, P. The local community as a “low-carbon lab”: Promises and perils. Environ. Innov. Soc. Transit. 2015, 14, 149–164. [Google Scholar] [CrossRef]

- Biglan, A.; Ary, D.V.; Wagenaar, A.C. The value of interrupted time-series experiments for community intervention research. Prev. Res. 2000, 1, 31–49. [Google Scholar]

- Brown, C.A.; Lilford, R.J. The stepped wedge trial design: A systematic review. BMC Med. Res. Methodol. 2006, 6, 54. [Google Scholar] [CrossRef]

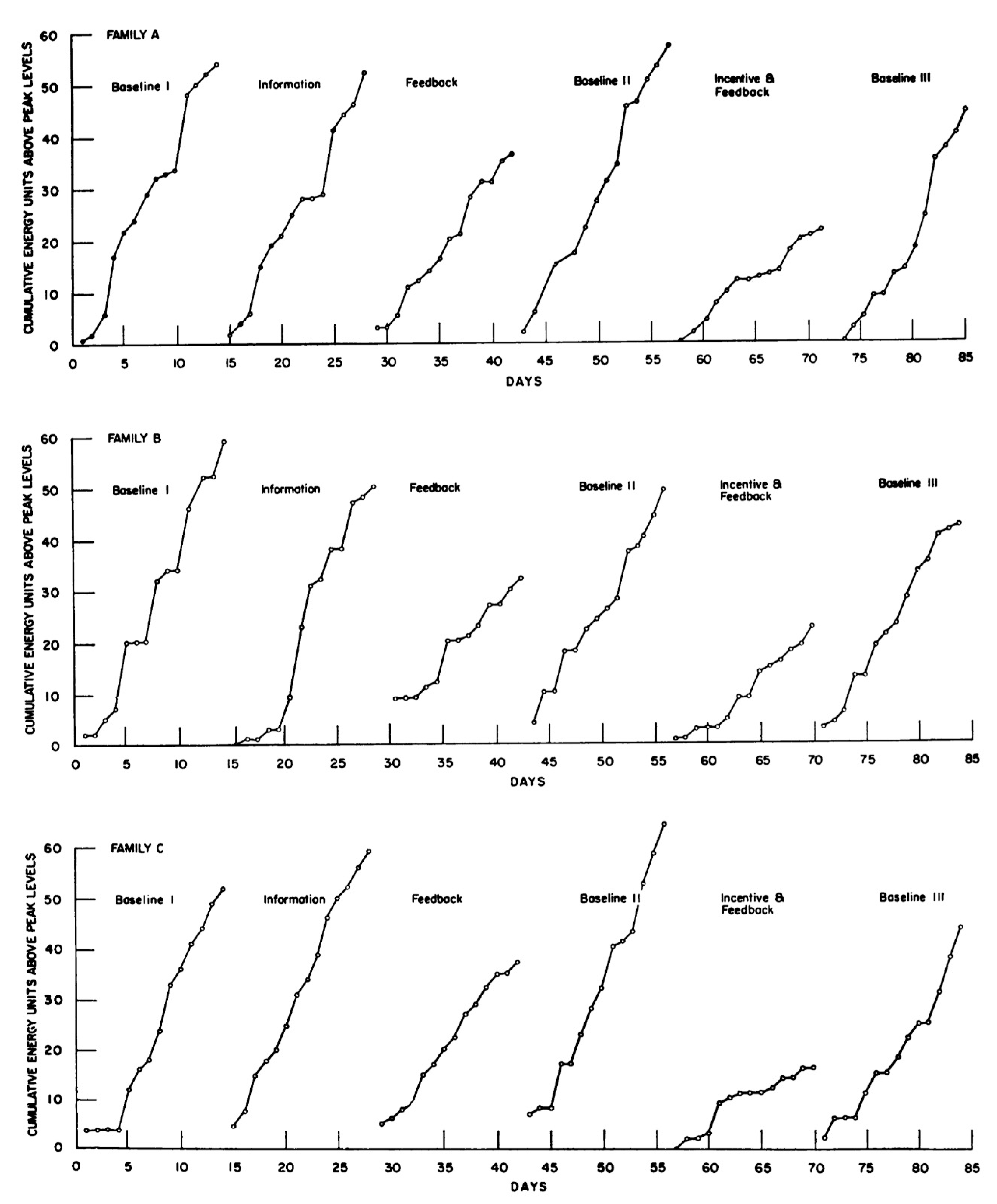

- Kohlenberg, R.; Phillips, T.; Proctor, W. A behavioral analysis of peaking in residential electrical-energy consumers. J. Appl. Behav. Anal. 1976, 9, 13–18. [Google Scholar] [CrossRef]

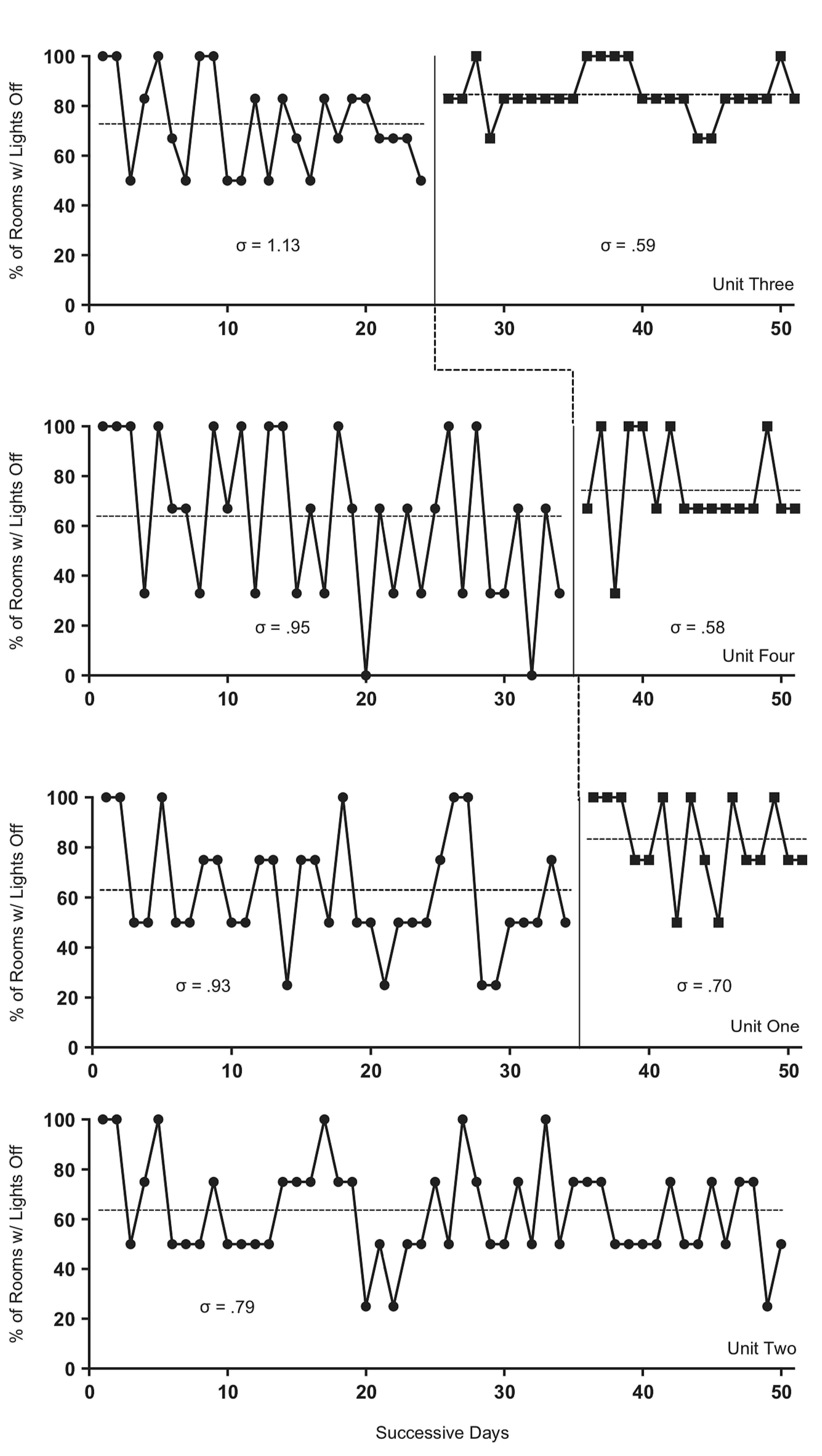

- Clayton, M.; Nesnidol, S. Reducing Electricity Use on Campus: The Use of Prompts, Feedback, and Goal Setting to Decrease Excessive Classroom Lighting. J. Organ. Behav. Manag. 2017, 37, 196–206. [Google Scholar] [CrossRef]

- Guastaferro, K.; Collins, L.M. Achieving the Goals of Translational Science in Public Health Intervention Research: The Multiphase Optimization Strategy (MOST). Am. J. Public Health 2019, 109, S128–S129. [Google Scholar] [CrossRef]

- Stern, P.C. A reexamination on how behavioral interventions can promote household action to limit climate change. Nat. Commun. 2020, 11, 918. [Google Scholar] [CrossRef]

- Stern, P.C.; Gardner, G.T.; Vandenbergh, M.P.; Dietz, T.; Gilligan, J.M. Design Principles for Carbon Emissions Reduction Programs. Environ. Sci. Technol. 2010, 44, 4847–4848. [Google Scholar] [CrossRef]

- Bracht, N.; Finnegan, J.R., Jr.; Rissel, C.; Weisbrod, R.; Gleason, J.; Corbett, J.; Veblen-Mortenson, S. Community ownership and program continuation following a health demonstration project. Health Educ. Res. 1994, 9, 243–255. [Google Scholar] [CrossRef]

- Cabaj, M.; Weaver, L. Collective Impact 3.0: An Evolving Framework for Community Change; Tamarack Institute: Waterloo, ON, Canada, 2016; pp. 1–14. [Google Scholar]

- Vandenbergh, M.P.; Gilligan, J.M. Beyond Politics: The Private Governance Response to Climate Change; Cambridge University Press: Cambridge, UK, 2017; p. 494. [Google Scholar]

- Dietz, T.; Gardner, G.T.; Gilligan, J.; Stern, P.C.; Vandenbergh, M.P. Household actions can provide a behavioral wedge to rapidly reduce US carbon emissions. Proc. Natl. Acad. Sci. USA 2009, 106, 18452–18456. [Google Scholar] [CrossRef]

- Biglan, A.; Ary, D.; Yudelson, H.; Duncan, T.E.; Hood, D. Experimental evaluation of a modular approach to mobilizing antitobacco influences of peers and parents. Am. J. Community Psychol. 1996, 24, 311–339. [Google Scholar] [CrossRef]

- Rothstein, R.N. Television feedback used to modify gasoline consumption. Behav. Ther. 1980, 11, 683–688. [Google Scholar] [CrossRef]

- Biglan, A.; Ary, D.V.; Koehn, V.; Levings, D.; Smith, S.; Wright, Z.; James, L.; Henderson, J. Mobilizing positive reinforcement in communities to reduce youth access to tobacco. Am. J. Community Psychol. 1996, 24, 625–638. [Google Scholar] [CrossRef]

- Behavior Science Coalition Climate Change Task Force. A Review of Research Funding for Reducing Greenhouse Gas Emissions. In preparation.

- Fischhoff, B. Making behavioral science integral to climate science and action. Behav. Public Policy 2020, 1–15. [Google Scholar] [CrossRef]

- McConnell, S. How can experiments play a greater role in public policy? Three notions from behavioral psychology. Behav. Public Policy 2020, 1–10. [Google Scholar] [CrossRef]

| TITLE-ABS-KEY(community OR communities) AND |

| TITLE-ABS-KEY(“climate change” OR “global warm*” OR “greenhouse gas*” OR ghg OR “carbon emission*” OR “co2 emission*”) AND |

| TITLE-ABS-KEY(trial* OR random* OR “interrupted time-series” OR “multiple baseline” OR “time-series design” OR “experiment*” OR single-case OR interven*) AND |

| TITLE-ABS-KEY(energy OR electricity OR food OR plant-based OR diet OR refriger* OR cool* OR chlorofluorocarbon OR cfc OR cryogenic* OR “heat remov*” OR “heat recov*” OR “heat exchange”) AND |

| (exclude(subjarea, “chem”) or exclude(subjarea, “ceng”) or exclude(subjarea, “phys”) or exclude(subjarea, “mate”)) and (exclude(subjarea, “bioc”) or exclude(subjarea, “medi”) or exclude(subjarea, “comp”) or exclude(subjarea, “math”) or exclude(subjarea, “immu”) or exclude(subjarea, “nurs”) or exclude(subjarea, “phar”) or exclude(subjarea, “arts”) or exclude(subjarea, “vete”) or exclude(subjarea, “heal”)) |
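The search string above combines four concept blocks with AND, each block being an OR-list of terms. As an illustrative sketch (not the authors' tooling), the term blocks could be kept as lists and assembled programmatically. The helper names are hypothetical, the subject-area exclusions are omitted, and only terms containing a space are quoted, so `experiment*` comes out unquoted here although it is quoted in the original string.

```python
from typing import List, Sequence

# Term lists copied from the four TITLE-ABS-KEY rows of the search table.
CONCEPT_BLOCKS: List[List[str]] = [
    ["community", "communities"],
    ["climate change", "global warm*", "greenhouse gas*", "ghg",
     "carbon emission*", "co2 emission*"],
    ["trial*", "random*", "interrupted time-series", "multiple baseline",
     "time-series design", "experiment*", "single-case", "interven*"],
    ["energy", "electricity", "food", "plant-based", "diet", "refriger*",
     "cool*", "chlorofluorocarbon", "cfc", "cryogenic*", "heat remov*",
     "heat recov*", "heat exchange"],
]


def title_abs_key(terms: Sequence[str]) -> str:
    """Join one block of terms with OR, quoting multi-word phrases."""
    rendered = [f'"{t}"' if " " in t else t for t in terms]
    return "TITLE-ABS-KEY(" + " OR ".join(rendered) + ")"


def build_query(blocks: Sequence[Sequence[str]]) -> str:
    """Join all concept blocks with AND."""
    return " AND ".join(title_abs_key(block) for block in blocks)


QUERY = build_query(CONCEPT_BLOCKS)
```

Keeping the terms in lists makes it easier to review or extend one concept block without retyping the whole string.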

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Biglan, A.; Bonner, A.C.; Johansson, M.; Ghai, J.L.; Van Ryzin, M.J.; Dubuc, T.L.; Seniuk, H.A.; Fiebig, J.H.; Coyne, L.W. The State of Experimental Research on Community Interventions to Reduce Greenhouse Gas Emissions—A Systematic Review. Sustainability 2020, 12, 7593. https://doi.org/10.3390/su12187593
