1. Introduction

As the recent coronavirus outbreak prompted universities to start shifting classes online either for a few weeks or for the remainder of the spring semester of 2020, e-learning and remote education have popped up as the magical alternative for in-person classes in the time of the COVID-19 pandemic. However, e-learning was not originally launched as a result of the outbreak of coronavirus; in fact, it has been used as a solution for numerous problems in education systems for a while, especially in developing countries. The most important among these problems is the shortage of qualified and experienced faculty, which constricts the learning opportunities in not only rural areas, but also urban [

1]. E-learning systems are growing tremendously as an alternative means to offer educational services at self-pace, anywhere and anytime. Ozkan and Koseler define e-learning as an electronic mechanism used to deliver learning material to learners [

2]. According to the IEEE Technology Standard Committee, e-learning systems are learning systems that use web browsers to impart an online learning experience. Another definition is that e-learning is a combination of a computer, browser, and internet to provide online education and training [3, 4].

There are several e-learning means to deliver education, such as CDs, online chats, forums, video conferencing, and webcasting. At present, e-learning websites are considered a common source of delivering subject-related material. The learners interact with these websites in order to perform learning tasks efficiently, effectively, and satisfactorily. However, quality continues to be a serious issue in these websites, since most of them are insufficiently effective. Therefore, learners find it difficult to perform their tasks using most of these websites [

4]. To impart a satisfactory learning experience, the websites should be effective, efficient, and usable so that extraneous cognitive load is avoided. Thus, the quality of e-learning websites is important for meeting the program learning objectives (PLO), and students rely heavily on high-quality e-learning websites to attain the course learning outcomes (CLO).

Consequently, educational institutes around the globe are switching towards an electronic mode of education in order to attract more and more international students. Besides developed countries, the developing countries are also ambitious to adopt the concept of e-learning; Singapore and Malaysia have completely revamped their educational system to integrate information and communication technologies (ICT) in their learning environments [

5,

6]. For the maximum utilization of e-learning websites to attain the PLO and CLO, quality is a mandatory aspect. Previous research has shown that the quality of e-learning resources influences e-learning students’ satisfaction, retention, and loyalty [

7]. Developing countries are striving for the implementation of e-learning resources with their limited budget to contend with educational problems, such as a lack of qualified teachers, issues of access, and poor learning outcomes [

8]. Web-based e-learning systems have successfully been implemented in many places; however, many of them have failed to realize the objectives, motives, and expectations behind their development. There could be many reasons for such failure, but the most obvious is the poor quality of e-learning resources [

9]. Hence, the availability of guidelines for the design and development of high-quality web-based e-learning systems is crucial.

It is widely recognized that the quality of web-based e-learning systems is of utmost importance to achieve the learning objectives and to resolve chronic educational problems [

10]. According to IEEE, the quality “is the degree to which a system, component, or process meets customer expectations” [

11]. The design of e-learning websites should enable efficient and effective human–computer interactions, as well as reduce the user’s workload by taking advantage of the computer’s capabilities. For the best possible learning outcomes, designers need to consider some important user interface parameters while developing e-learning websites. Identifying such design parameters requires evidence-based information rather than mere opinion, and this can be achieved through a sound evaluation mechanism for e-learning websites.

There are two major dimensions of e-learning systems with respect to evaluation: the first is pedagogical and the second is related to the design and development aspects. Numerous frameworks and models exist that could be used to assure the quality of web-based e-learning. However, these models lack the guidance of the e-learning industry, which is, among other factors, the most important factor affecting the quality of e-learning websites. Secondly, such models are mainly feasible to evaluate the pedagogical aspects of e-learning systems rather than the design and development aspects [

12]. In this study, we argue that an evaluation model is required to discover the factors related to the quality of the design and development of e-learning systems and to determine the relative importance of such factors in order to achieve a high-quality e-learning product. The outcome of this work would help designers, developers, and software project managers in making decisions regarding the parameters they should prioritize while producing a high-quality e-learning product. How the quality of web-based e-learning systems could be improved is an open research question that should be addressed forthwith. This study raises two important questions: (i) which factors influence the quality of e-learning through websites? (ii) How will these factors be ranked?

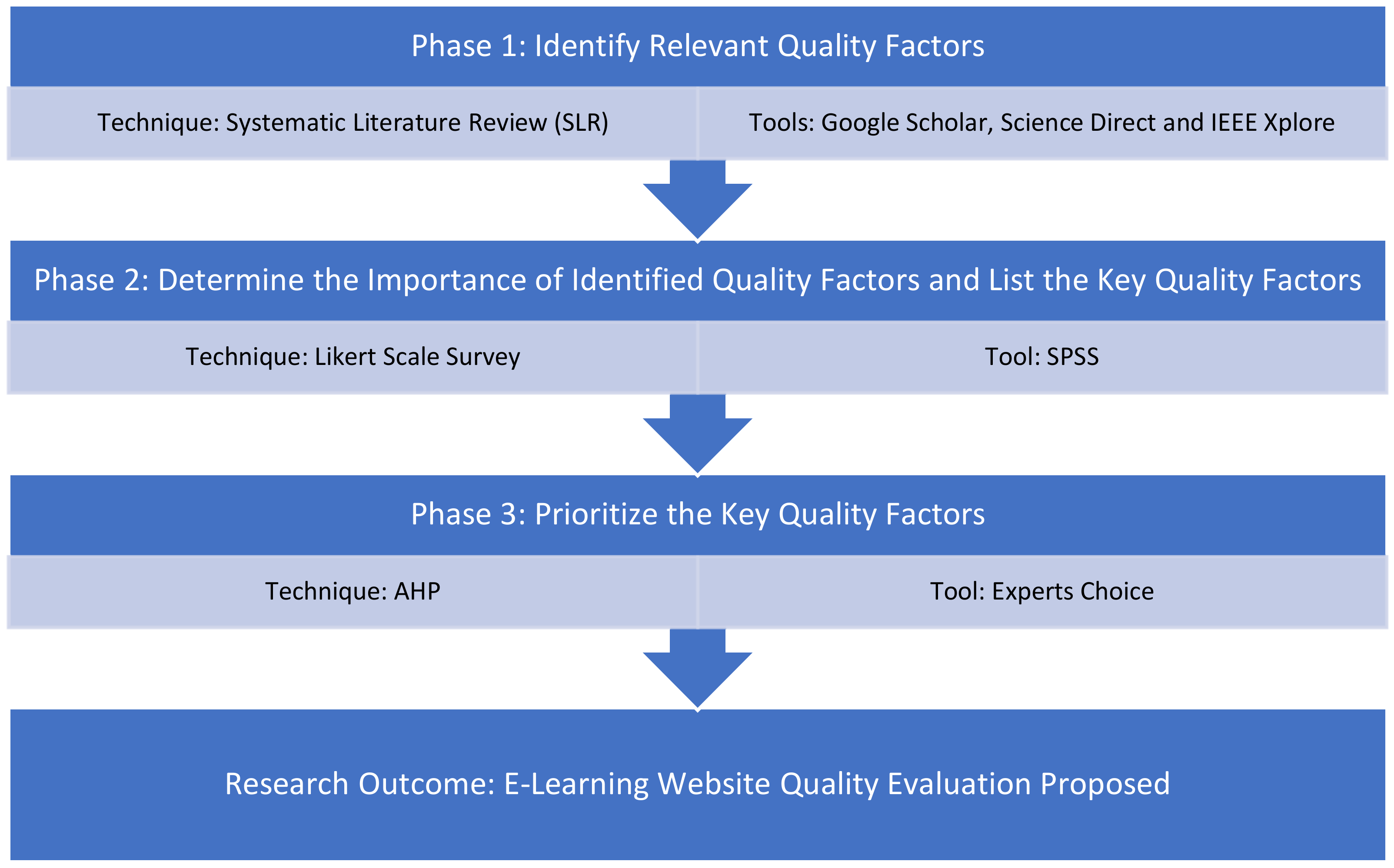

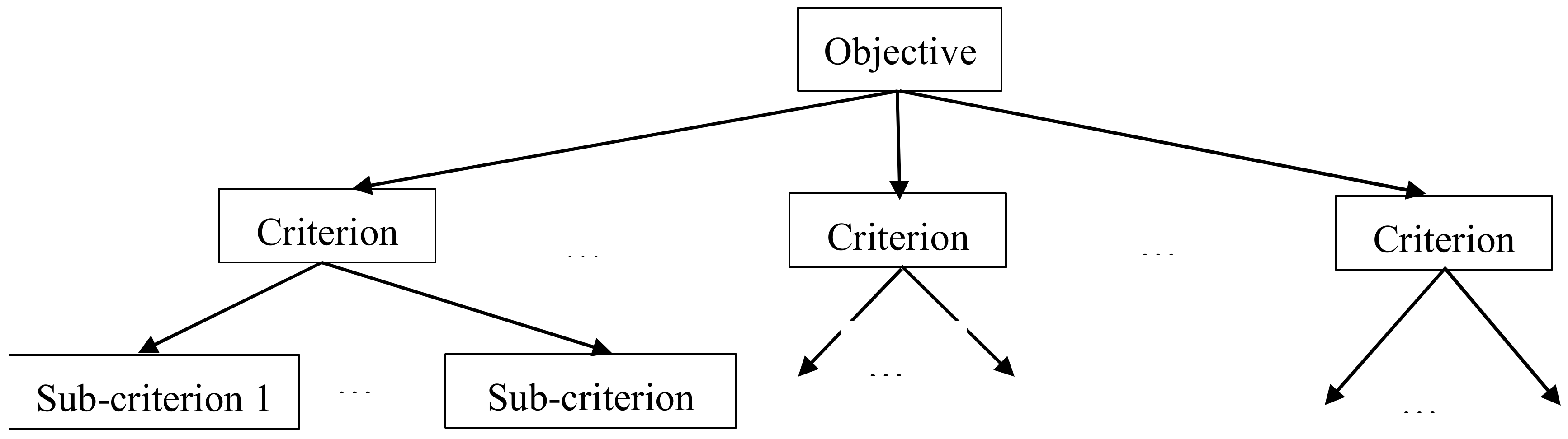

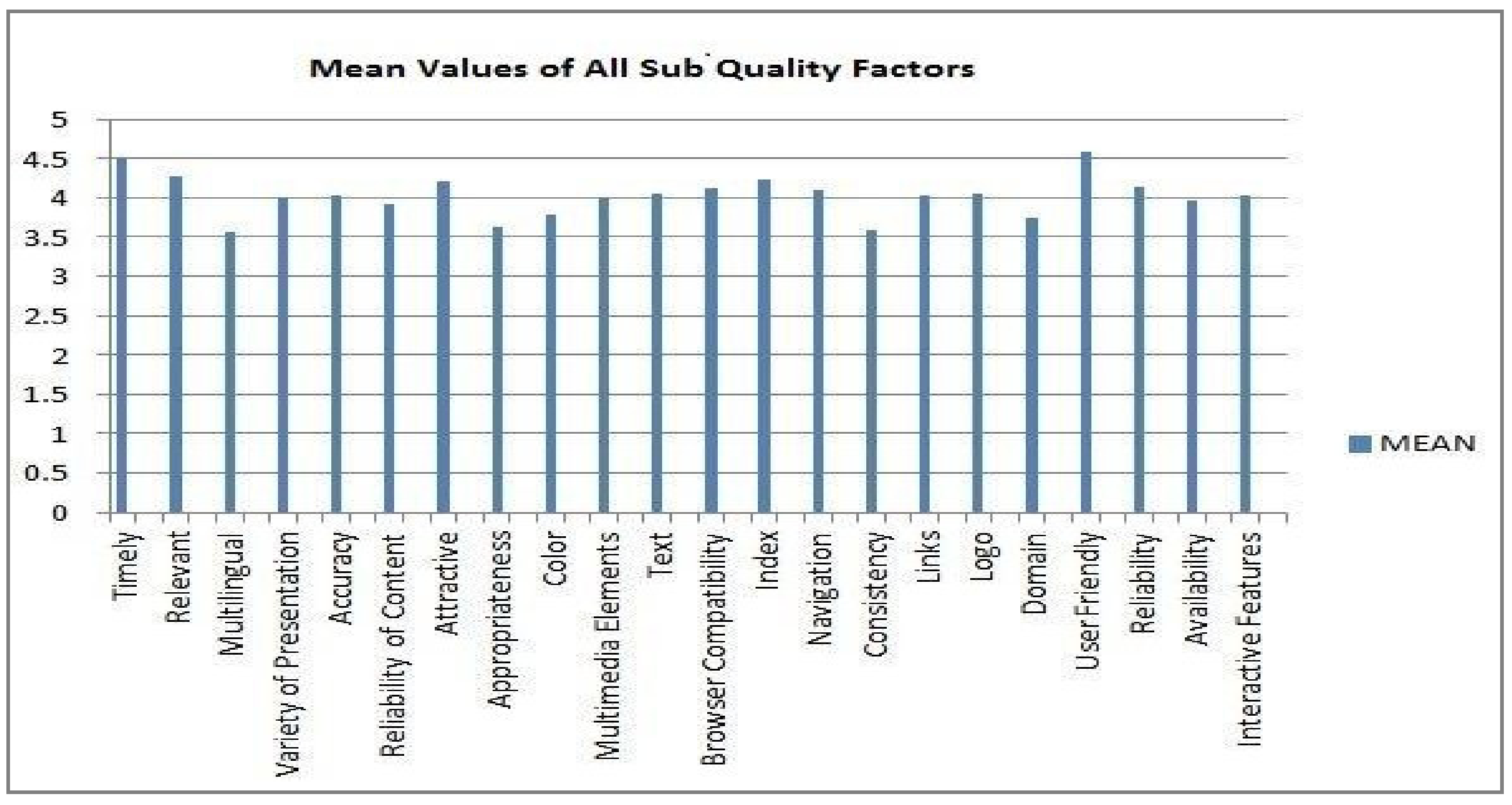

This study aimed particularly at (i) identifying the key (i.e., most important) factors affecting the quality of e-learning websites; (ii) prioritizing the identified factors by defining their relative importance; and (iii) proposing a hierarchical quality model for the evaluation of e-learning websites. The methodology used to attain these goals was as follows. Firstly, an extensive literature review was conducted to explore and identify the factors that most affect the quality of web-based e-learning systems. Secondly, among the identified factors, only those with the most significant impact were considered in this study. To identify the most important quality criteria, an electronic and manual survey was conducted, and an instrument was deployed among 157 subjects, including e-learning designers, developers, students, teachers, and educational administrators. Finally, another instrument was distributed among 51 participants to make pairwise comparisons among the criteria and rank them according to their relative importance. The identified and prioritized key quality factors were classified into four categories: content, design, usability, and organization. Among these four factors, content was identified as the most important, whereas design was found to be the least important.
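The pairwise-comparison step can be illustrated with a small sketch. The snippet below assumes the judgments are collected in a reciprocal comparison matrix and approximates the relative weights with the row geometric mean, a common technique in AHP-style MCDM analyses. This section does not name the exact procedure the authors used, and the values in `EXAMPLE_JUDGMENTS` are hypothetical, not the survey data.

```python
import math
from typing import List, Tuple

# The four quality categories named in the text.
CRITERIA = ["content", "usability", "organization", "design"]

# Hypothetical judgments (NOT the survey data): entry [i][j] states how much
# more important criterion i is than criterion j; [j][i] is its reciprocal.
EXAMPLE_JUDGMENTS = [
    [1.0, 2.0, 3.0, 4.0],
    [1 / 2, 1.0, 2.0, 3.0],
    [1 / 3, 1 / 2, 1.0, 2.0],
    [1 / 4, 1 / 3, 1 / 2, 1.0],
]


def priority_weights(matrix: List[List[float]]) -> List[float]:
    """Approximate relative weights using the row geometric mean."""
    n = len(matrix)
    for i in range(n):
        if len(matrix[i]) != n:
            raise ValueError("comparison matrix must be square")
        for j in range(n):
            # A valid pairwise comparison matrix is reciprocal.
            if not math.isclose(matrix[i][j] * matrix[j][i], 1.0, rel_tol=1e-9):
                raise ValueError(f"entries ({i},{j}) and ({j},{i}) are not reciprocal")
    geo_means = [math.prod(row) ** (1.0 / n) for row in matrix]
    total = sum(geo_means)
    # Normalize so that the weights sum to 1.
    return [g / total for g in geo_means]


def rank_criteria(names: List[str], matrix: List[List[float]]) -> List[Tuple[str, float]]:
    """Return (criterion, weight) pairs sorted from most to least important."""
    weights = priority_weights(matrix)
    return sorted(zip(names, weights), key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    for name, weight in rank_criteria(CRITERIA, EXAMPLE_JUDGMENTS):
        print(f"{name}: {weight:.3f}")
```

With real data, the individual judgments of the participants would first have to be aggregated into a single matrix; how that was done is not described in this section.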

The rest of this paper is organized as follows: Section 2 presents related work and Section 3 describes the research methodology. In Section 4, we present and discuss the findings of this study, and in Section 5 we propose the quality evaluation model based on these findings. Finally, in Section 6, we give our conclusions and future work.

2. Related Work

Researchers have introduced many different evaluation models and frameworks [13, 14]. However, these models lack the evaluation of websites with respect to software design and development perspectives. The e-learning quality models and frameworks introduced by prior research are as follows:

Jabr and Al-omari proposed a web service-based e-learning framework that increased the efficiency and effectiveness of collaborative learning in terms of reusability, interoperability, accessibility, and modularization [

15]. The model does not evaluate the e-learning websites either from pedagogical or developmental perspectives. Amin and Salih proposed a model premised on critical success factors (CSFs) from a university student’s perspective. They surveyed e-learning CSFs and classified them into four dimensions. The model aimed to achieve high quality in web-based e-learning systems [

16]. It considered only a single group of stakeholders when proposing the dimensions for attaining high quality in e-learning solutions. In our opinion, the model only partially fulfils the quality needs of e-learning websites, as the findings are based solely on the views of students.

Wang Shee and Wang proposed a multi-criteria evaluation method for web-based e-learning systems, considering only a single aspect of e-learning, which was learner’s satisfaction. The main dimensions of the model were learner interface, learning community, system, and personalization [

17]. There are also other important aspects of e-learning websites, such as effectiveness and efficiency, which are not catered to by the proposed model. Chua and Dyson introduced the ISO 9126 quality model as a tool to evaluate e-learning systems in order to improve the quality [

18]. Djouab and Bari introduced an extension of the ISO 9126 software quality model. However, the extended model was neither validated nor were the guidelines related to the usage given [

19].

Researchers have also proposed quality evaluation models specifically for websites. The website quality evaluation model (Web-QEM) was designed to evaluate the quality of web applications, which helps to meet the quality requirements, as well as discover the missing and poorly designed features. The Web-QEM model is based on the ISO 9126-model, so its quality characteristics include usability, reliability, efficiency, and functionality. This model evaluates the quality of a website, taking many different steps. It is an effective model to measure the quality of websites. However, Web-QEM does not address all the factors related to the software dimension of modern academic websites, such as learning experience [

20].

Vida and Jons proposed the Web Quality Model (WQM) to evaluate web applications with respect to three different dimensions: web features, ISO/IEC 9126-1-based quality characteristics, and lifecycle process. The model did not present step-by-step evaluation criteria or the sub-characteristics of the factors. The SERVQUAL model was proposed to assess the satisfaction and quality of websites. The model was intended to measure the gap between the expectations of customers and their usage experience. It addressed five quality attributes, known as RATER: reliability, assurance, tangibles, empathy, and responsiveness. However, it did not consider the sub-attributes of the RATER quality constructs [21]. Tsigereda proposed a model to evaluate the quality of academic websites. It evaluated websites from the perspective of students using four important quality factors (content, reliability, efficiency, and functionality) arranged in a hierarchical structure along with sub-quality factors. The downside of this model was that it did not take all important stakeholders into account, such as faculty members, educational administrators, and designers/developers. Moreover, the hierarchical model did not assign weights or ranks to the quality factors [22].

Although a variety of e-learning quality models and frameworks have been proposed by researchers, certain limitations have been observed in these e-learning evaluation models. Firstly, some of these models have not considered stakeholders of paramount importance with regard to e-learning websites. For example, the student’s perspective is an important aspect of the quality of e-learning websites. E-learning websites should not be something that is merely imparted to a passive student. Instead, high-quality e-learning websites should be developed through a process of co-production between the students and the learning system. The teacher’s perspective is also critical to ensure the quality of e-learning websites. It is the teacher who can offer suggestions regarding the teaching/learning method. Similarly, educational experts can provide an appropriate learning strategy for the students being served through the educational website. Furthermore, the players from the e-learning industry, including designers and developers, can also make suggestions to evaluate the web-based e-learning systems [

23]. Secondly, the majority of the quality models focused on pedagogical characteristics, including the learner, instructor, institution, social, and management aspects. The software characteristics, such as design, usability, and digital content, have been ignored. Thirdly, some frameworks have addressed only a limited number of software quality factors, including usability, portability, and reliability. The quality of e-learning websites from a software perspective cannot be gauged using such a limited number of quality factors. Finally, website evaluation is a multi-criteria decision making (MCDM) problem, as there are a number of different factors involved. MCDM methods are extensively applied in other areas, such as e-commerce [24, 25], but, to the best of the authors’ knowledge, they are rarely explored for the evaluation of web-based e-learning systems.

Keeping the shortcomings of previous research in mind, this study has considered the software perspective and the key stakeholders of e-learning websites to propose a hierarchical quality evaluation model for e-learning websites. The domain of e-learning websites is very broad and there are numerous perspectives on the quality of e-learning websites, such as pedagogical, personal, institutional, software, and technical. The focus of this study is only on the software perspective.

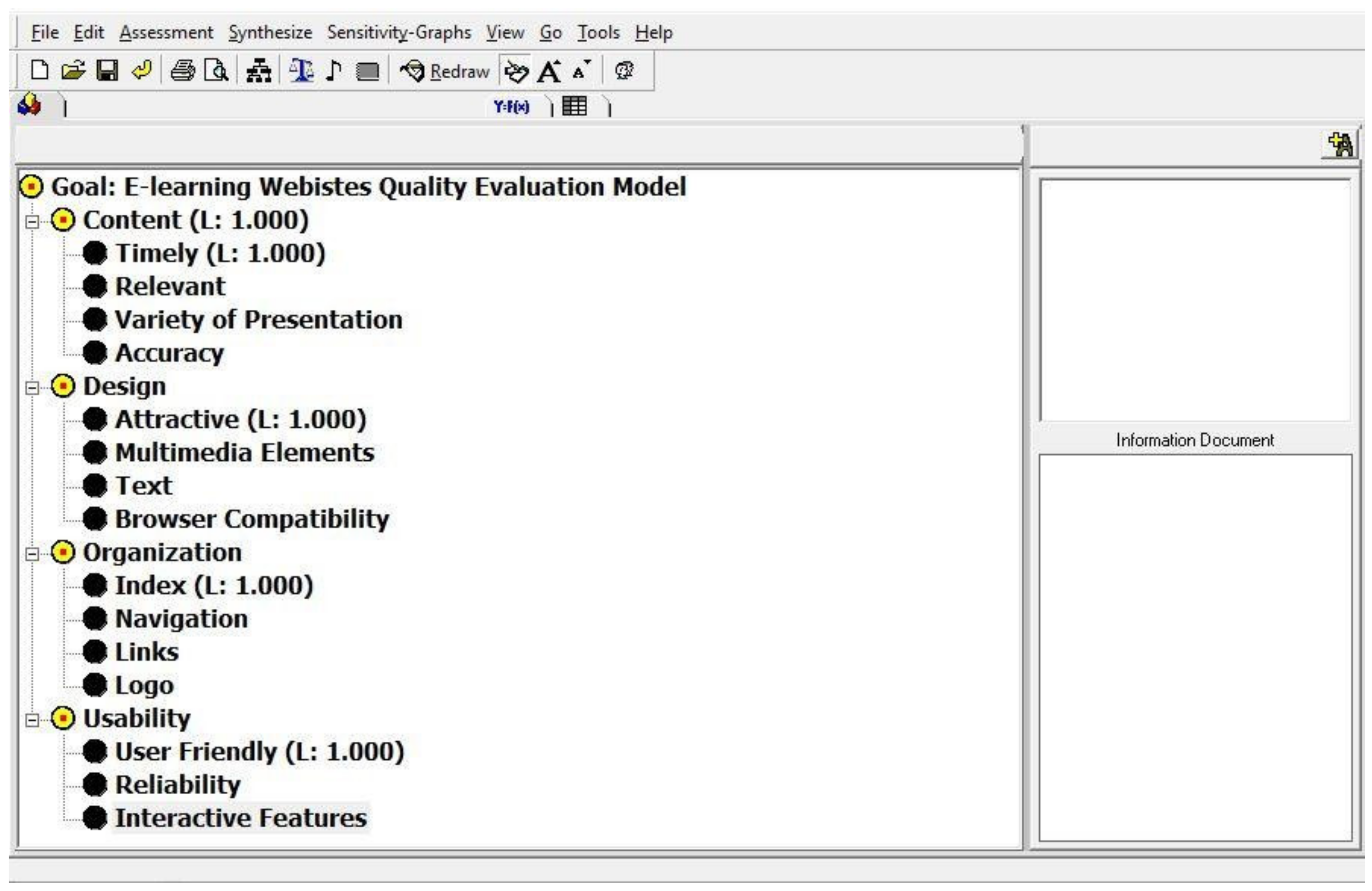

5. Proposed Model for Quality Evaluation

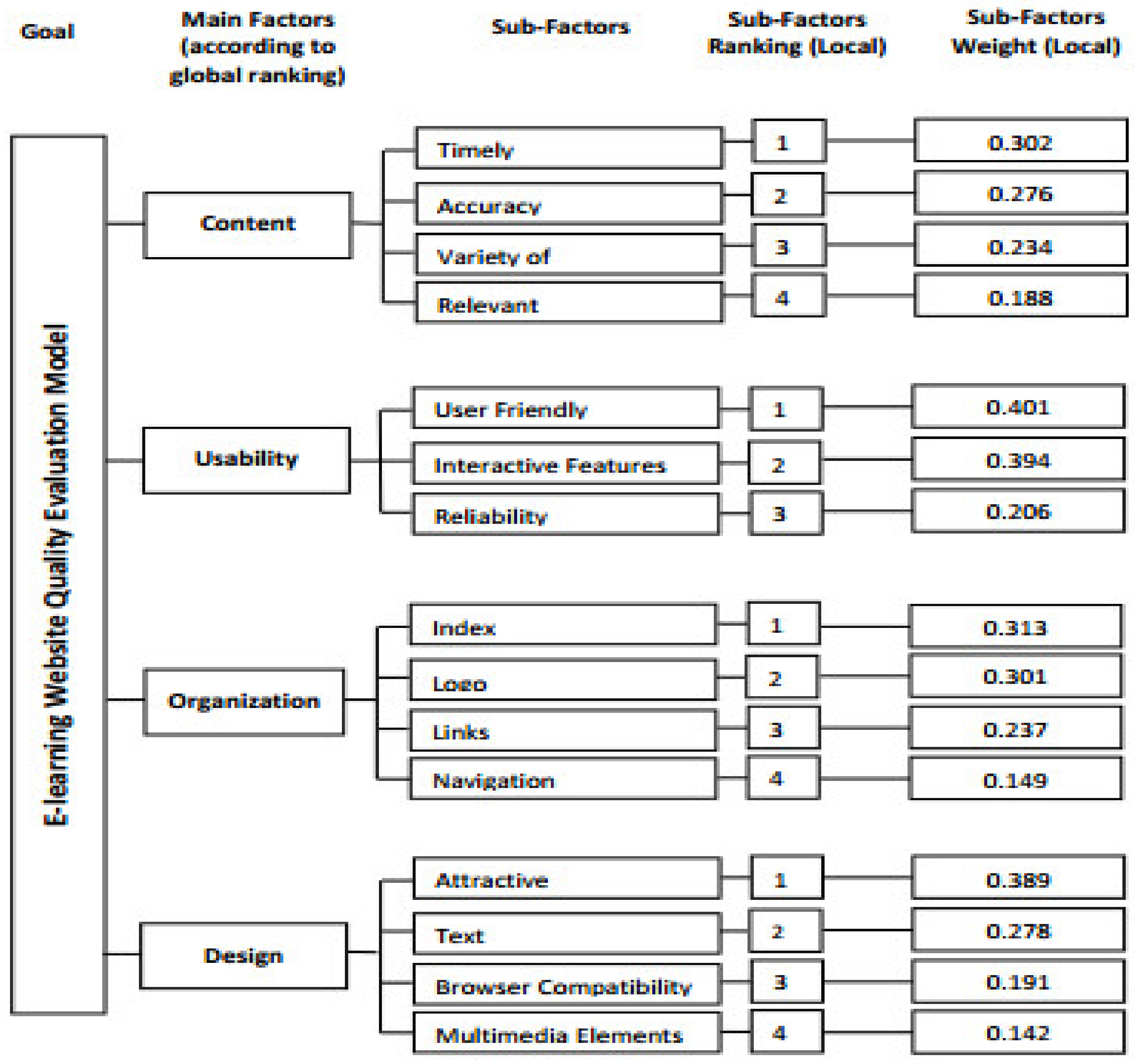

The objective of this research study is to develop a hierarchical quality model that could be used to evaluate the quality of e-learning websites. Thus, the main outcome of this research is a hierarchical model for evaluating the quality of e-learning websites, which is shown in Figure 9.

‘Content’ was found to be the most significant quality factor. The basic objective of e-learning systems is to facilitate and help learners by providing them with apposite learning content and services to meet their learning needs [60]. The content factor is related to the learning material and the features presented by e-learning websites to learners. Therefore, this factor plays an important role in the success of web-based e-learning systems, as effective content is the main concern of learners when visiting an educational system. The related sub-factors are also important, as all of them emphasize the effectiveness of content so that a quality learning experience can be delivered. Firstly, the ‘Timely’ sub-factor was found to be the most important among them; it indicates that the content of the website should be updated on a regular basis to reflect the latest developments and progress made in the field or area of study [31]. Secondly, the content should always be accurate and authentic so that the e-learning system is perceived as a trustworthy learning source. Thirdly, the learning material should be presented using a variety of resources, including video, images, graphics, audio, animation, and simulation, to add value and to accommodate the learning needs of learners with diverse learning preferences [31]. Lastly, the variety of learning material should be relevant to the topic being discussed and to the level of the students to whom the content is being delivered. This finding is consistent with previous research, which also emphasized ‘Content’ and identified it as one of the most important factors for evaluating the quality of web-based e-learning systems [61].

The second most important quality factor in the proposed hierarchical model was usability. Usability is the ability of a system to help users in achieving their goals effectively, efficiently, and satisfactorily within the context of use [

60,

62]. Thus, web-based e-learning systems should support the learning process and allow learners to efficiently use the system. It should also be appropriate for the intended learning tasks [

61]. The related sub-factors were important, as they emphasized the usability of the system. The user-friendly sub-factor indicated that the interface should be easy to use, allow learners to achieve learning objectives when they use e-learning systems for the first time, and help them to perform learning tasks quickly in the future [

62]. The e-learning websites should consist of “interactive features” that allow learners to fully manipulate the course and have control over the learning objects [

61]. Reliability indicated that the web-based e-learning system should be accessible to a user anywhere and anytime using a diverse range of devices and platforms. Previous research supports the finding of this study. Every software system provides some functionality to its users, such as teaching/learning functionality in e-learning systems. However, the value of system functionality is noticeable only when the system can be effectively and efficiently utilized by its users. The real effectiveness of software is realized when there is an appropriate balance between the functionality and usability of the system. Usability is, therefore, a significant factor which complements the system functionality and enables users to engage with the system to perform all actions and services offered by the system. In the absence of usability, users remain unable to take full advantage of the system’s services and will not enjoy the system while using it [62]. To achieve usability, a human-centered methodology may be adopted in the development process [63].

The third most important quality factor is organization, which is related to the overall structure of the website. It describes how the important aspects of a web-based system are organized. The related sub-factors emphasized the need for an appropriate structure and organization of educational websites so that they are accessible to all kinds of learners. Index is the main page of the website; it should present all learning categories and the classification of learning materials so that learners can easily access the intended learning path [

48,

52]. The logo should be available on e-learning websites to indicate that the learning content is being delivered from authentic and credible sources, which is a deep concern for learners of this information age [

53,

54,

64,

65]. The “links” or hyperlinks on websites must be active, as dead links irritate learners [

54]. On long pages, a list of contents with links should be available at the top of the page to take learners to the corresponding content farther down the web page [

66]. The links should be designed with glosses as short phrases to guide the learners to what is actually located behind the links. Lastly, navigation is a method used to find information on a web-based e-learning system. A navigation page assists learners in locating and connecting to desired pages. The navigation scheme should be designed in a way that allows learners to locate and access learning material effectively and efficiently [

66].

Finally, the quality factor design is concerned with the design and visual aspects of the website. It emphasizes the requirement that the important items of the e-learning website should be placed consistently and aligned appropriately. The design of the website must ensure that the web pages show a reasonable amount of white space, as too much white space can demand substantial scrolling, whereas too little may offer a presentation that looks too busy. The sub-factors of design include attractiveness, text, browser compatibility, and multimedia elements. The overall design of the website should be attractive to retain the learner’s attention for a longer period of time so that they spend most of their time with the learning application [

32,

35,

66]. The text should be presented with a font size, style, foreground, and background color that can support the learners in reading and comprehending the learning materials rather than hindering them [

53,

54,

65]. Different learners have different browser features and different defaults: for example, learners with visual impairments have a tendency to select larger fonts, whereas some other learners may turn off backgrounds, use fewer color options, or override the font. Therefore, the e-learning web-based system should be compatible with common browsers and with browser settings most commonly used by the learners [

46,

47,

66]. Moreover, the design of a web-based e-learning system should support multimedia elements, as these elements encourage the user to concentrate on the learning items [

66]. In short, for effective solutions, there is a need to rethink the customer’s needs and innovate.
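To make the hierarchy described in this section concrete, the following minimal sketch encodes the four factors and their sub-factors and aggregates ratings into one score by a weighted sum. All numeric weights are hypothetical placeholders chosen only to respect the reported ordering (content first, design last, and ‘Timely’ leading among the content sub-factors); the actual weights are not reproduced here. The identifier names and the weighted-sum aggregation are likewise assumptions made for illustration.

```python
import math
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Factor:
    name: str
    weight: float                  # hypothetical weight of the factor
    sub_factors: Dict[str, float]  # sub-factor -> hypothetical local weight


# Placeholder weights: only the ordering follows the text, not the values.
MODEL: List[Factor] = [
    Factor("content", 0.4,
           {"timely": 0.4, "accurate": 0.2, "variety": 0.2, "relevant": 0.2}),
    Factor("usability", 0.3,
           {"user_friendly": 1 / 3, "interactive_features": 1 / 3, "reliability": 1 / 3}),
    Factor("organization", 0.2,
           {"index": 0.25, "logo": 0.25, "links": 0.25, "navigation": 0.25}),
    Factor("design", 0.1,
           {"attractiveness": 0.25, "text": 0.25,
            "browser_compatibility": 0.25, "multimedia": 0.25}),
]


def score_website(model: List[Factor], ratings: Dict[str, Dict[str, float]]) -> float:
    """Weighted-sum score in [0, 1] from sub-factor ratings in [0, 1]."""
    if not math.isclose(sum(f.weight for f in model), 1.0):
        raise ValueError("factor weights must sum to 1")
    total = 0.0
    for factor in model:
        if not math.isclose(sum(factor.sub_factors.values()), 1.0):
            raise ValueError(f"sub-factor weights of {factor.name} must sum to 1")
        # Aggregate the sub-factor ratings, then weight by the parent factor.
        factor_score = sum(local_weight * ratings[factor.name][sub]
                           for sub, local_weight in factor.sub_factors.items())
        total += factor.weight * factor_score
    return total


def uniform_ratings(model: List[Factor], value: float) -> Dict[str, Dict[str, float]]:
    """Helper for experimentation: rate every sub-factor with the same value."""
    return {f.name: {sub: value for sub in f.sub_factors} for f in model}


if __name__ == "__main__":
    ratings = uniform_ratings(MODEL, 0.5)
    ratings["content"]["timely"] = 1.0  # e.g. a site with very current content
    print(f"overall score: {score_website(MODEL, ratings):.3f}")
```

A weighted sum is only one possible aggregation rule; it is used here because it keeps the contribution of each factor easy to trace back to its weight.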