1. Introduction

As early as 1998, Pine and Gilmore claimed that “from now on, leading-edge companies—whether they sell to consumers or businesses—will find that the next competitive battleground lies in staging experiences” [1]. Experiences, as they suggested, have emerged as “the progression of economic value” [1]. Good user experience (UX) is desirable for both users and companies. For users, a delightful interaction experience fulfils not only their instrumental needs but also non-instrumental ones such as beauty, satisfaction, and pleasure [2,3], while for companies, a delightful user experience is regarded as one of the key drivers of sustainable development [4,5]. Evidence shows that providing positive UX can increase user satisfaction and loyalty, thus promoting the commercial success of the company [6]. For products that fail to offer a good user experience, UX measurement feedback can help development teams fix experiential problems and thereby improve the UX quality of the product [7,8].

“User experience” is a concept that includes all aspects of how individuals interact with a product [9,10]. The concept of UX is highly context-dependent and dynamic [7,11]. While we respect its multifaceted nature, in this study we adopt Hassenzahl and Tractinsky’s definition of UX: “a consequence of a user’s internal state (predispositions, expectations, needs, motivation, mood, etc.), the characteristics of the designed system (e.g., complexity, purpose, usability, functionality, etc.) and the context (or the environment) within which the interaction occurs (e.g., organizational/social setting, meaningfulness of the activity, voluntariness of use, etc.)” [2]. Since the 1990s, UX has become a popular tool to evaluate human beings’ interactions with a product, system, or service [12,13,14]. In contrast to usability research, which predominantly focused on task efficiency, UX research has emphasized experiential qualities. There are a few fundamental UX principles:

UX takes a holistic perspective of the user–product interaction [15], including use of the products, as well as the meaning and emotion that users derive from their interactions with products [16].

UX focuses on both pragmatic and hedonic value [6]. In general, UX studies explore the relationships among usability, symbolic value, and aesthetic value in users’ experience with products.

UX emphasizes the importance of the context of use [2], as different usage contexts can result in different experiences.

Both qualitative and quantitative approaches can be applied in UX research. Common qualitative approaches include interviews, focus groups, and user observation [17]. UX metrics provide quantitative data, which can help to measure and track user experience. Popular UX metrics used in studies include the System Usability Scale (SUS), Customer Satisfaction Score (CSAT), Net Promoter Score (NPS), and conversion rate. We list only a few here; UX researchers can choose among many other metrics depending on their goals.
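To make two of the metrics named above concrete, the following is a minimal sketch of how SUS and NPS are conventionally scored. It is not part of this study; the function names are chosen here for illustration, and the scoring rules are the standard published ones (ten SUS items rated 1 to 5, NPS ratings from 0 to 10).

```python
def sus_score(responses):
    """Score one SUS questionnaire: ten items, each rated from 1 to 5."""
    if len(responses) != 10:
        raise ValueError("SUS expects exactly ten item responses")
    total = 0
    for index, rating in enumerate(responses, start=1):
        # Odd-numbered items are positively worded (rating - 1),
        # even-numbered items are negatively worded (5 - rating).
        total += (rating - 1) if index % 2 == 1 else (5 - rating)
    return total * 2.5  # rescale the 0-40 sum to a 0-100 score


def net_promoter_score(ratings):
    """Percentage of promoters (9-10) minus percentage of detractors (0-6)."""
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100.0 * (promoters - detractors) / len(ratings)
```

Both functions return a single number per questionnaire or per sample, which is what makes such metrics easy to track over time but also why they say little about the causes of a poor experience.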

Mobile apps have become increasingly common in people’s daily lives. We have observed in the market that a task-oriented mobile application that fails to satisfy people’s non-instrumental needs, such as enjoyment and pleasure, is still likely to fail, and that many apps offering powerful features still cannot win popularity among users. We believe that the UX level of these products is the main reason behind this phenomenon. On the one hand, when a large number of apps offer similar functions, a great first impression of the product experience often determines which application users choose. On the other hand, a pleasant long-term experience is the key to users’ loyalty to, and continued use of, a certain application. Thus, the first-time user experience (FTUX) and long-term user experience (LTUX) both play vital roles in the life cycle of mobile app users and deserve equal attention when studied.

Most UX studies of mobile apps have focused either on users’ short-term experience or on long-term longitudinal UX measurements. Hardly any have combined the two kinds of UX in one study. To achieve sustainable development of mobile apps, we agree with researchers who emphasize positive long-term user experience. However, we propose that a great first impression matters as well. This is especially the case for mobile apps. Research shows that 25% of new users will log in to new apps only once, and 80% of new users will decide whether to continue using the app within three minutes of use [18]. Similarly, Flurry, an iPhone app metrics company, found that a free iPhone application loses 95% of users after one month [18].

Nevertheless, very few studies have evaluated the first-time user experience, despite its importance to the success of the product [19]. To this end, we designed this study to assess users’ first impressions, as well as how their relationship with the product changed in the long term. From our perspective, both the first-time and the long-term user experience should be considered if companies aim to select the right design options from UX evaluation feedback. A great first impression helps to attract users, and a positive long-term UX further helps companies to retain them. The product we chose to study was a free mobile fitness application. Free apps tend to have a shorter life cycle, which means that development teams may be under more pressure not only to attract new users constantly, but also to retain existing users.

This study is based on a multi-method approach. We began by adopting the AttrakDiff questionnaire to assess users’ first impression of the application, and then used the UX Curve method to evaluate how users’ experience with the app changed over time. As the two methods can investigate various experiential dimensions, we followed the AttrakDiff and focused on four dimensions: “pragmatic qualities,” “hedonic qualities—identity,” “hedonic qualities—stimulation,” and “attractiveness.” We also interviewed the participants after each evaluation session and asked them to explain which factors had deteriorated their experience. Based on the collected qualitative data, we identified critical issues for each of the two types of user experience.

The study is organized as follows. Section 2 reviews previous work on UX measures. Section 3 illustrates our research methodology, including data collection and data analysis. Section 4 presents the experimental study we conducted and its results. Lastly, Section 5 presents the conclusions of this study and outlines our future work.

2. Literature Review

Since the late 1990s, UX has gradually been adopted as a tool to evaluate the quality of human–product interactions [15,20]. The earlier studies on UX aimed to convince the Human-Computer Interaction (HCI) community to pay more attention to users’ internal state [21]. For example, Alben and colleagues first brought up the notion of UX in 1996; in their words, UX is “the way the product feels in hands, how well users understand how it works, how users feel about it while they’re using it, how well the product serves users’ purposes, and how well the product fits into the entire context in which users are using it” [9]. Similarly, Hassenzahl states that “UX is about technology that fulfills more than just instrumental needs,” and it should “contribute to our quality of life by designing for pleasure” [2]. In short, UX emphasizes both pragmatic qualities and hedonic qualities.

Previous UX studies have predominantly focused on short-term measurements, emphasizing experiential qualities related to improving a product’s first impression, such as usability and usefulness [11,17,22]. In recent years, however, an increasing number of researchers have started to emphasize the importance of long-term user experience evaluation. Studies find that hedonic qualities like pleasure and beauty play an essential role in long-term use [23]. Positive long-term user experiences are associated with improved customer satisfaction and loyalty, which can foster business sustainability [16,24]. This helps to explain why there has been a shift of emphasis from “an experience” (one test session only) to a longer period of use [17,25].

Researchers and practitioners have presented various methods to assess user experience. Karapanos et al. divided UX measurement methods into three mainstream perspectives: cross-sectional, pre–post longitudinal, and retrospective reconstruction [26]. Cross-sectional approaches distinguish users by their level of expertise or by the length of time they have used the product [26]. Such approaches attribute variation across users to the manipulated variables, neglecting that external variation is not entirely controllable [27]. This was supported by the study by Prümper et al., which found that different definitions of “novice” and “expert” users led to different results [28]. In this case, it is hard to say how close the research result is to the real user experience.

Pre–post approaches study the same users at two points in time [26]. For instance, Karapanos et al. invited ten users to test a novel pointing device and record their user experience of the product during their first experiences with it, as well as after four weeks of use [29]. The limitation of pre–post approaches is that only two measurements are made of the user over an extended period of time, so time effects may not be the only reason for the changes in their experience with the product [27]. Moreover, user experience changes through the process of interaction; merely assessing a short time frame or a few points in time is unlikely to provide precise enough information to forecast the full user experience in real life [30]. There are few longitudinal studies covering several months or even the life cycle of a product [27,31], as they are expensive and impractical, and often require trained researchers [26]. Therefore, even companies that value long-term longitudinal UX measurement can seldom employ this approach.

Retrospective approaches help users recall the most memorable experiences they had with the product within a given time period [27,32]. These approaches derive from the critical incident technique (CIT). In CIT studies, participants are asked to reconstruct meaningful personal experiences based on memory. Von Wilamowitz-Moellendorff et al. proposed change-oriented analysis of the relationship between product and user (CORPUS), a structured interview technique to retrospectively evaluate users’ perceptions of different aspects of perceived product quality [33]. Participants in CORPUS are asked to compare their current attitude towards the product with their attitude at the moment they purchased it, and to rate a few UX dimensions on a 10-point scale along the timeline. If there is any change in their experience, participants are asked to further explain the trends and causes of the changes.

Kahneman et al. proposed the experience sampling method (ESM), a retrospective diary method to collect users’ experiences “in situ” [34]. In situ data collection can minimize the bias caused by memory effects, but it requires high compliance and considerable effort from participants. Building on the ESM, researchers further developed the day reconstruction method (DRM), which imposes a chronological process on the daily retrospective assessment. Instead of requiring participants to report all use cases with the product immediately, which might interrupt their ongoing activities, the DRM asks participants to reconstruct a few of the most impactful emotional experiences of the preceding day. Nevertheless, due to their time-consuming and laborious nature, long-term longitudinal studies using methods like the ESM and DRM are still rare [35].

There are examples in the literature of lightweight methods for evaluating long-term UX. Compared to interviews and transcripts, graphing is assumed to be a more straightforward method. Sonnemans and Frijda first adopted graphing to recall past emotional experiences in 1994 [36]. Karapanos et al. presented iScale, an online survey tool that helps users retrospectively reconstruct their experience with a product by sketching curves from the moment of purchase up until the present [26]. The pioneering study by Karapanos et al. showed that participants using the iScale tool were able to recall more experience reports than those who reported directly without any form of graphical representation [26]. Because iScale is an online survey tool, users can easily modify their curves. However, compared to a paper-and-pencil method, iScale provides a lower degree of freedom in sketching, and users may find it harder to annotate their sketches. Thus, free-hand sketching is claimed to be a “more expressive” tool [30]. Moreover, iScale is not yet publicly available, so it may not suit industrial contexts where resources are limited.

Kujala et al. developed the UX Curve method, a paper-and-pencil method which also requires participants to draw several curves describing how their experience with the product has changed over time. The UX Curve involves face-to-face interviews between researchers and participants, so that participants’ reasoning and thoughts can be better captured [30]. Researchers can further study how these recalled experiences affect user satisfaction and customer loyalty [31]; this is essential for UX improvements. As Kujala et al. state, a “method is useful until it is known what the resulting data means and what separates happy and unhappy users” [30].

Some may question the validity of retrospective studies, arguing that human memory is often incomplete and biased. Admittedly, memories are rarely accurate, but they should not be underestimated. Retrospective memories are significantly associated with users’ recommendations and loyalty, and thus with product success. Shiffman et al. argue that retrospective recall has a large effect on individuals’ later behavior, as it represents the information that individuals use to make future decisions [32,37]. Psychological studies also show that memory introduces systematic biases into individuals’ evaluations, especially at the peak and end of the experience [38]. Subjective factors such as reconstructions and emotions largely influence users’ decision-making and judgment [39]. Users’ perception of the product determines whether they will continue to use it, purchase it, and recommend it to others in the future. The evidence presented in Garrett’s work suggests that customers who have a delightful experience with the product are more likely to purchase it again [40]. As Karapanos et al. pointed out, “it may not matter how good a product is objectively, it is the ‘subjective’, the ‘experienced’, which matters” [26].

4. Experimental Section

In this section, we assess the usefulness of our proposed UX assessment approach in a study with 20 participants. Our work aimed to investigate whether the proposed approach was practical and, furthermore, whether it could be used to identify factors that deteriorate the user experience of the app. The research work included three steps. First, we recruited a group of participants to evaluate their first-time user experience of the tested app. After four weeks of repeated use, these participants joined the UX Curve drawing session, where their long-term experience with the app was assessed. The last step was to analyze the data and information collected from the two UX evaluation sessions and to determine the most serious UX problems of the app.

The exploratory study was conducted in Beijing, China. We worked with a free fitness app running on Android and iOS platforms. The app was developed by a China Mobile subsidiary that specializes in mobile internet operations. As a fitness app, it targets users between the ages of 18 and 45 years, and aims to prompt users to follow through on their fitness goals by providing tools like activity tracking, video-based workout sessions, and nutritional information. The app was first launched in 2016, and its developers release an update every 6 weeks. The app remained unmodified during the 4 week study to ensure that the participants used and gave feedback on the same app.

Before the study, the UX of the fitness app was considered to be relatively poor, which can be seen from two facts: (1) Its retention rate was lower than the average retention rate for mobile apps. By day 1, the app had an 18.9% retention rate, which fell to 5% in 10 days and then dropped to 0.87% by day 90, compared to 21.0%, 7.5%, and 1.8% for average apps [43]. (2) Ratings of the app in major app stores had been dropping for two quarters, with over 19% of user reviews being negative.
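The size of the retention gap can be made explicit with a few lines of arithmetic. The sketch below uses only the percentages quoted above; the checkpoint labels and the function name are chosen here for illustration.

```python
# Retention rates in percent, as quoted in the text.
app_retention = {"day 1": 18.9, "day 10": 5.0, "day 90": 0.87}
average_retention = {"day 1": 21.0, "day 10": 7.5, "day 90": 1.8}


def retention_gap(app, benchmark):
    """Compare each checkpoint with the benchmark.

    Returns, per checkpoint, the difference in percentage points and the
    ratio of the app's rate to the benchmark rate.
    """
    gaps = {}
    for checkpoint, rate in app.items():
        reference = benchmark[checkpoint]
        gaps[checkpoint] = {
            "difference_pp": round(rate - reference, 2),
            "ratio": round(rate / reference, 2),
        }
    return gaps


gaps = retention_gap(app_retention, average_retention)
for checkpoint, gap in gaps.items():
    print(checkpoint, gap)
```

Read this way, the app retains about 90% as many users as an average app on day 1 but less than half as many by day 90, so the gap widens over time rather than staying constant.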

4.1. Data Collection

4.1.1. First-Time User Experience

Twenty participants were recruited for the study. We selected participants with different backgrounds, ages, genders, and mobile phone models. We also set a few qualifications for candidates. First, since the study needed to measure users’ first-time experience with the application, participants could not have used the application before. Second, participants had to be physically able to use the fitness application for at least five minutes every day during the study. They could explore any features the app provided at any time during the day.

The proportion of female (50%) and male (50%) participants was equal. Their ages ranged from 18 to 42 years (mean 27.2 years), and they belonged to the target group of fitness apps. All of the participants used a smartphone, though mobile phone models varied, representing five brands: iPhone, Huawei, Xiaomi, Vivo, and Oppo. Participants used their smartphones to access the Internet every day. The personal features of participants are shown in Table 1.

The evaluation sessions with the participants consisted of an AttraDiff questionnaire and a curve-drawing session, as well as two interviews.

The 20 participants joined the AttraDiff questionnaire session on August 1, 2018. After using the app for ten minutes, participants filled out the questionnaire, which aimed to assess their first-time experiences with the app. The collected data are reported in

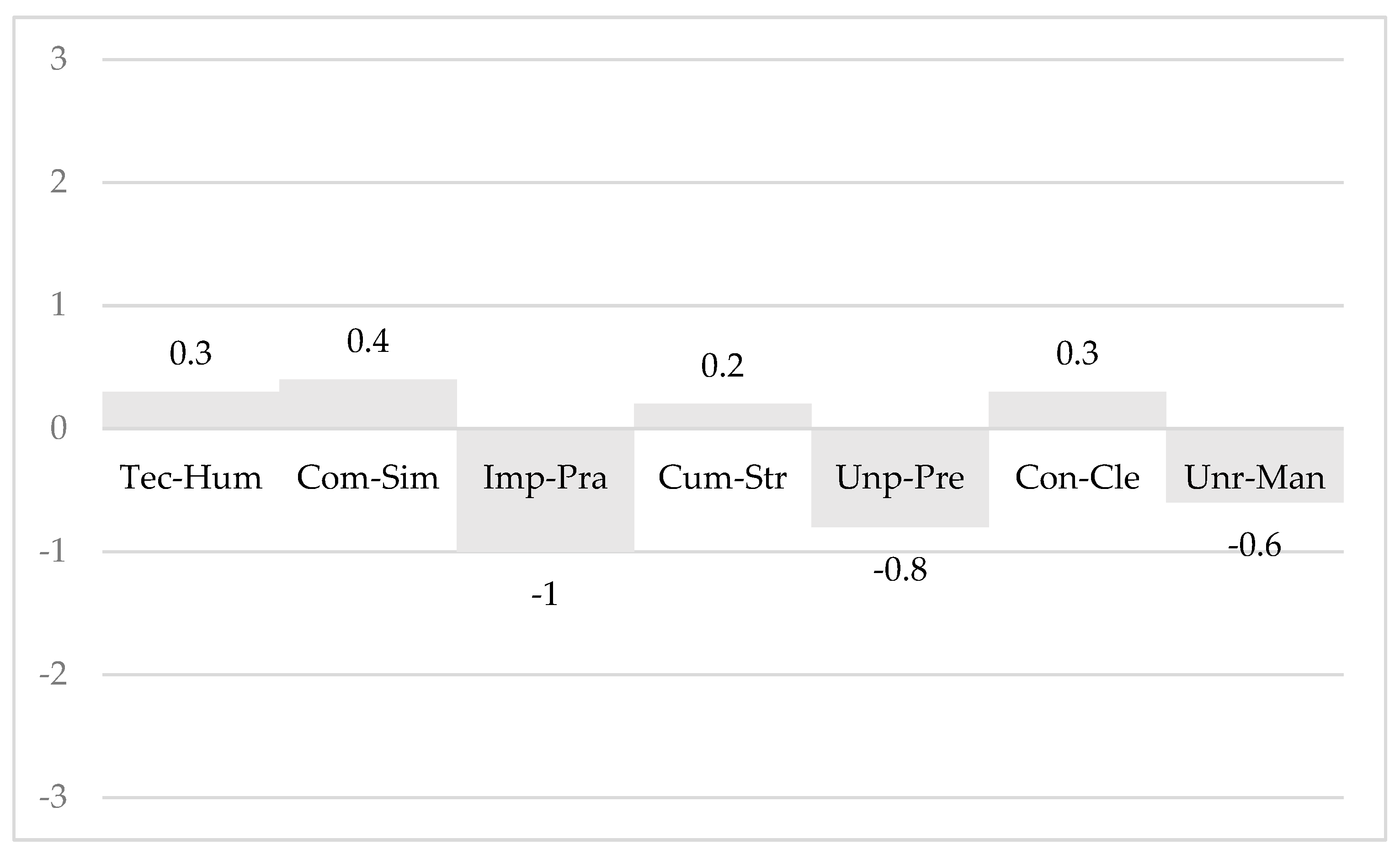

Table 2. The HQ-S dimension was the only dimension in which the mean value was greater than zero (0.6). The other three dimensions were rated between −1–0. HQ-I was scored with the second highest mean value, −0.3, followed by the ATT aspect (−0.4). It seems that participants did not enjoy their first interaction with the application much, especially its pragmatic qualities, which only scored −0.6; this indicated that the PQ dimension of the first-time user experience was most problematic. Thus, we decided to take a closer look at the evaluation of specific items contained in the PQ dimension.

Figure 3 provides the average values of the seven items in the PQ dimension. The item “Impractical–Practical” obtained the lowest score (−1). The other two item pairs rated below zero were “Unpredictable–Predictable” and “Unruly–Manageable,” which scored −0.8 and −0.6, respectively. This suggests that the app did not satisfy participants’ practical and functional needs when they first tried it.
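The dimension and item means reported above are plain averages over participants. The following sketch shows one way to compute them, assuming each item is rated on a scale from −3 to +3 and each dimension has seven items. The two sample participants and their ratings are invented for illustration and are not data from this study.

```python
from statistics import mean

DIMENSIONS = ("PQ", "HQ-I", "HQ-S", "ATT")


def dimension_means(responses):
    """Average all item ratings of each dimension over all participants."""
    return {
        dim: mean(rating for person in responses for rating in person[dim])
        for dim in DIMENSIONS
    }


def item_means(responses, dimension):
    """Average each item of one dimension across participants."""
    columns = zip(*(person[dimension] for person in responses))
    return [mean(column) for column in columns]


# Hypothetical ratings (seven items per dimension, each from -3 to +3).
sample = [
    {"PQ": [-1, -1, 0, 0, -2, 1, -1], "HQ-I": [0] * 7,
     "HQ-S": [1] * 7, "ATT": [-1] * 7},
    {"PQ": [-1, 1, 0, 0, 0, -1, -1], "HQ-I": [0] * 7,
     "HQ-S": [1] * 7, "ATT": [-1] * 7},
]

print(dimension_means(sample))
print(item_means(sample, "PQ"))
```

Computing item-level means for the weakest dimension, as done for PQ in the text, narrows a low dimension score down to the specific word pairs that drive it.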

After filling out the AttrakDiff questionnaire, participants had a 30-minute break before the first interview session, in which they were asked to explain why they were unsatisfied with the app after their first use. The reasons were content-analyzed and categorized into pragmatic and hedonic issues. We used the user experience model by Hassenzahl for categorization [6]. In total, we collected 25 first-time user experience issues from participants: 15 pragmatic issues and 10 hedonic issues.

Table 3 presents the categories and the three most-mentioned issues in each category. The pragmatic issues were mostly related to technical faults and bugs (mentioned five times). According to the collected data, crashes were the most serious issue affecting user experience. One participant mentioned that the application had crashed twice in eight minutes, and that he would have uninstalled the app straight away had he not been involved in this study. Another critical issue was the registration process, about which four participants complained. Three participants mentioned that it would be better if the app could connect with their fitness trackers.

The hedonic reasons (including the HQ-I and the HQ-S issues) largely concerned identification and beauty. Four participants complained that, compared to other popular fitness apps, the test app was more “isolated,” since they could not share their performance and achievements with friends. Three participants scored the hedonic qualities dimension below zero because there were not enough workout options, and they described the application as “unprofessional.” Two participants stated that they did not like the design and visual appearance of the application.

4.1.2. The Long-Term User Experience Evaluation

Four weeks later, the twenty participants joined the second evaluation session, in which they drew curves to reconstruct how their relationship and user experience with the app had changed during four weeks of use. Each participant drew one curve on each of the four curve templates, so a total of 80 curves were collected, 20 for each of the four UX dimensions. Each curve was categorized as positive, negative, or zero according to the difference between the vertical values of its ending and starting points. The results are reported in Table 4. Overall, most of the differences were positive, implying an improving long-term user experience, but the PQ dimension had more negative differences than positive ones, suggesting a deteriorating experience of the PQ aspect. More than half of the HQ-I differences were positive, which means that most participants became more satisfied with the hedonic quality—identity. Half of the participants also had a better experience of the hedonic quality—stimulation. This dimension also had the fewest negative differences; only 25% of participants drew decreasing HQ-S curves. As for the ATT dimension, 65% of participants drew an improving or stable curve, suggesting that most participants had an improving or stable user experience over the four weeks.
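The curve categorization described above reduces each curve to the sign of one number. A minimal sketch follows; the function names are chosen here, and the sample endpoints are invented, picked only so that the shares match the HQ-S proportions stated in the text (50% positive, 25% negative, leaving 25% unchanged).

```python
from collections import Counter


def curve_trend(start_value, end_value):
    """Classify a curve by the sign of (end point - start point)."""
    difference = end_value - start_value
    if difference > 0:
        return "positive"
    if difference < 0:
        return "negative"
    return "zero"


def trend_shares(curves):
    """Percentage of curves in each trend category."""
    counts = Counter(curve_trend(start, end) for start, end in curves)
    total = len(curves)
    return {
        label: 100.0 * counts[label] / total
        for label in ("positive", "negative", "zero")
    }


# Hypothetical (start, end) vertical values for 20 curves of one dimension.
hqs_curves = [(0, 2)] * 10 + [(1, -1)] * 5 + [(0, 0)] * 5
print(trend_shares(hqs_curves))
```

Because only the two endpoints are used, this categorization ignores everything that happens in between, which is why the annotations and interviews are still needed to explain the shape of each curve.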

After the curve-drawing session, we held the second interviews with participants about what had caused negative experiences during their four weeks of use. Some reasons had already been marked on their curve templates. We extracted all the negative reasons that participants stated during the second interview session, as well as those written on the UX Curve templates. In total, we collected 39 reasons and categorized them according to Hassenzahl’s model into pragmatic and hedonic issues. Of the collected reasons, 25 related to pragmatic qualities and 14 were hedonic issues. The most-mentioned long-term UX issue was crashes (eight times), followed by “unable to sync activity data” (five times). Four participants complained about the “counting function” while explaining why their long-term user experience had decreased. Other issues related to the pragmatic aspect were “the app drains the battery” (three times), “unable to incorporate music into the app” (three times), and “too many ads” (two times).
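The tallying step can be reproduced directly from the mention counts given above for the pragmatic issues. The sketch below uses those counts as stated; only the variable names are introduced here.

```python
from collections import Counter

# Mention counts of long-term pragmatic issues, as reported in the text.
pragmatic_mentions = Counter({
    "crashes": 8,
    "unable to sync activity data": 5,
    "counting function": 4,
    "the app drains the battery": 3,
    "unable to incorporate music into the app": 3,
    "too many ads": 2,
})

total_pragmatic = sum(pragmatic_mentions.values())
top_three = pragmatic_mentions.most_common(3)

print(total_pragmatic)
for issue, count in top_three:
    print(f"{issue}: {count}")
```

The six counts add up to the 25 pragmatic reasons reported, and `most_common(3)` yields the three issues that are later treated as the most critical ones for this category.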

As for hedonic issues, five participants stated that “there were too many notifications”. One participant described the notifications as “very annoying”: “I almost want to uninstall the app when I received its notifications three times in one day.” Another five participants hoped the app could provide a larger range of workouts. “I even considered paying for a Premium subscription for more workout guides,” said one of the participants. Four negative reasons were associated with “social sharing,” and one participant worried about “information security.” Details are shown in Table 5.

We compared the three pragmatic issues and the three hedonic issues that were most frequently mentioned in the two interviews and found that more pragmatic issues were reported than hedonic issues. Crashes were the most critical issue for both types of user experience. Another technical problem that bothered participants in the long term was “unable to sync activity data”. The issue “limited workout choices” was mentioned in both interviews as well. Users expected more functions that would help them achieve physical transformation. Participants also valued social features, as the “restricted social sharing” issue was also mentioned in both interviews. Indeed, with the development of the mobile internet, many individuals want to share their activities on social networks. Although participants showed an interest in connecting to and sharing with others, they did not want to be sent too many notifications.

We believe that a few issues can be addressed easily and would improve the user experience quickly, for example, by providing a simpler and faster registration process. Two problems were more difficult to fix, namely connecting to other sports trackers and battery drain. Interestingly, when asked to explain why they were not satisfied with the app, participants tended to compare it with popular fitness apps that they had used before and to describe the differences between them. For example, one participant mentioned that “compared to Nike Training, this app has limited workout options, while I can tailor my workout to suit my ability, fitness level, and strengths with Nike Training.”

4.2. UX Coordinate Planes

We entered the two kinds of user experience data for each user into UX coordinate planes. As mentioned before, the X-axis of each plane represented the value of the specific AttrakDiff dimension, and the Y-axis represented the difference between the vertical values of the ending and starting points of the curve. Figure 4, Figure 5, Figure 6, and Figure 7 show Pragmatic Quality, Hedonic Quality—Identity, Hedonic Quality—Stimulation, and Attractiveness, respectively.
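Reading a UX coordinate plane amounts to assigning each participant's point to a quadrant and looking at where most points fall. The sketch below uses the usual quadrant numbering (I upper right, II upper left, III lower left, IV lower right); the function names, the handling of points that lie on an axis, and the sample points are assumptions made here, not part of the study.

```python
from collections import Counter


def quadrant(first_time_score, curve_difference):
    """Return the quadrant of one participant's point.

    x is the first-time AttrakDiff dimension value, y is the difference
    between the ending and starting points of the UX curve.
    """
    x, y = first_time_score, curve_difference
    if x == 0 or y == 0:
        return "on axis"  # assumption: such points are reported separately
    if x > 0:
        return "I" if y > 0 else "IV"
    return "II" if y > 0 else "III"


def dominant_quadrant(points):
    """Quadrant that contains the largest number of points."""
    counts = Counter(quadrant(x, y) for x, y in points)
    return counts.most_common(1)[0][0]


# Hypothetical points for one dimension: (first-time score, curve difference).
example_points = [(-1.0, -2.0), (-0.5, -1.0), (0.4, 1.0)]
print(dominant_quadrant(example_points))
```

Under this reading, Quadrant I means a good first impression that improved further, Quadrant II a poor first impression that improved, Quadrant III a poor first impression that got worse, and Quadrant IV a good first impression that deteriorated.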

The PQ coordinate plane (Figure 4) showed that most points were located in Quadrant III, which means that most participants were not satisfied with the app’s usability and usefulness after the first encounter, and that their long-term UX became even poorer. This was consistent with the pragmatic issues they reported during the two interviews. Crashes were the most negative issue, deteriorating both the first-time and the long-term user experience.

As for the app’s hedonic qualities, most points in the HQ-I coordinate plane were located in Quadrant II, indicating that participants had a negative experience with the application during the first encounter, but that their experience improved after long-term use. Most points in the HQ-S coordinate plane were located in Quadrant I, suggesting that participants held a positive attitude towards the app’s hedonic—stimulation qualities and that their experience of this dimension improved further. In general, participants’ evaluations of the hedonic aspects were better than their evaluations of the pragmatic qualities. This was especially true of HQ-S, which not only obtained the highest values in the first-time UX evaluation but also provided an improved experience in the long term, suggesting that the app was successful in stimulating participants. Regarding the hedonic quality—identity, limited workout options and social isolation contributed to a negative first impression (three and four mentions in the first interview, respectively), and these issues persisted in the long term (five and four mentions in the second interview).

Finally, as shown in Figure 7, most points in the ATT coordinate plane were located in Quadrant II, which means that participants might initially have found the application unattractive, but its perceived attractiveness increased over time.

Overall, we collected more UX issues when evaluating the long-term user experience but, interestingly, the user experience showed an improving trend in three dimensions. The development team therefore needs to pay particular attention to the pragmatic issues. We also collected problems from the FTUX evaluation. Some of these problems, such as the long registration process, could not have been detected by a long-term experience evaluation alone, yet were likely to cause users to abandon the app.

5. Discussion and Conclusions

In this study, we adopted two different methods to evaluate the first-time and the long-term user experience separately. User experience studies have previously focused largely on short-term evaluation. In recent years, a few UX studies have stressed the importance of positive long-term user experience, especially its contribution to user satisfaction, loyalty, and the company’s commercial success [30]. We agree; however, we also suggest that developers pay attention to users’ initial experiences with the product, particularly for mobile apps. Apps tend to have a shorter life cycle; evidence shows that 25% of users will log in to new apps only once, and 80% of first-time users will decide whether to continue using the application within three minutes of use [18]. Unfortunately, we have seen little UX research that emphasizes the importance of first-time user experience, and hardly any that studies both the first-time and the long-term experience. To this end, we conducted this study, in which 20 participants evaluated the two types of user experience of a fitness app. The first-time experience was evaluated using the AttrakDiff questionnaire, while after four weeks of use, participants were asked to describe their experiences by means of the UX Curve method. We obtained both quantitative and qualitative data from the two evaluation sessions. The quantitative data helped us determine the quality of the two types of UX. In order to analyze how the user experience had evolved in four weeks, we developed four UX coordinate planes for the four UX dimensions, namely, Pragmatic Quality, Hedonic Quality—Identity, Hedonic Quality—Stimulation, and Attractiveness. In each coordinate plane, the X-axis represented the value of the specific AttrakDiff dimension, and the Y-axis represented the difference between the vertical values of the ending and starting points of the curve.

Qualitative data were collected from interviews with participants. Each evaluation session included interviews in which participants could provide more detailed feedback to help developers fix experiential problems. Participants were asked to explain why they had negative experiences when using the app, and we categorized their comments according to Hassenzahl’s model into pragmatic and hedonic issues [6]. For each UX evaluation, we selected the three most-mentioned pragmatic issues and the three most-mentioned hedonic issues, so, in total, we identified 12 critical issues that deteriorated the user experience. We suggest that application developers and designers solve these problems first. More generally, we recommend that app companies prioritize problems according to their severity. This can be achieved by comparing how users rated the four dimensions of the two kinds of user experience. Being able to prioritize user experience improvements is essential for application development teams with limited resources.
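One possible way to turn the two kinds of ratings into a priority order is sketched below. The first-time means and dominant quadrants are the ones reported in Section 4; the severity ordering of the quadrants (III, then IV, then II, then I) and the tie-break on the first-time mean are assumptions introduced here for illustration, not a rule proposed in this study.

```python
# First-time dimension means and dominant quadrants as reported in Section 4.
first_time_mean = {"PQ": -0.6, "HQ-I": -0.3, "HQ-S": 0.6, "ATT": -0.4}
dominant_quadrant_of = {"PQ": "III", "HQ-I": "II", "HQ-S": "I", "ATT": "II"}

# Assumed severity ranking: a lower number means more urgent.
QUADRANT_SEVERITY = {"III": 0, "IV": 1, "II": 2, "I": 3}


def prioritize(means, quadrants):
    """Order dimensions by quadrant severity, then by first-time mean."""
    return sorted(
        means,
        key=lambda dim: (QUADRANT_SEVERITY[quadrants[dim]], means[dim]),
    )


priority_order = prioritize(first_time_mean, dominant_quadrant_of)
print(priority_order)
```

With the reported values this ordering puts the pragmatic quality first and the hedonic quality of stimulation last, which agrees with the conclusion that the pragmatic issues deserve the most attention. A team with different goals could swap in another severity ranking without changing the rest of the sketch.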

This study may provide new insights into user experience evaluation, but it also raises challenges for further research, and we recognize that there is room for improvement. For example, we focused only on the issues that damaged user experience in this study, while during the measurement process we found that the user experience of three dimensions improved after four weeks. If developers understand which factors improve the user experience, they can further optimize the user experience of the application. Therefore, we will continue to investigate the factors that improve user experience in our future work. We also hope to work with application development teams to apply the evaluation results to user experience design.