Exploring Factors, and Indicators for Measuring Students’ Sustainable Engagement in e-Learning

Abstract

1. Introduction

2. Theoretical Background

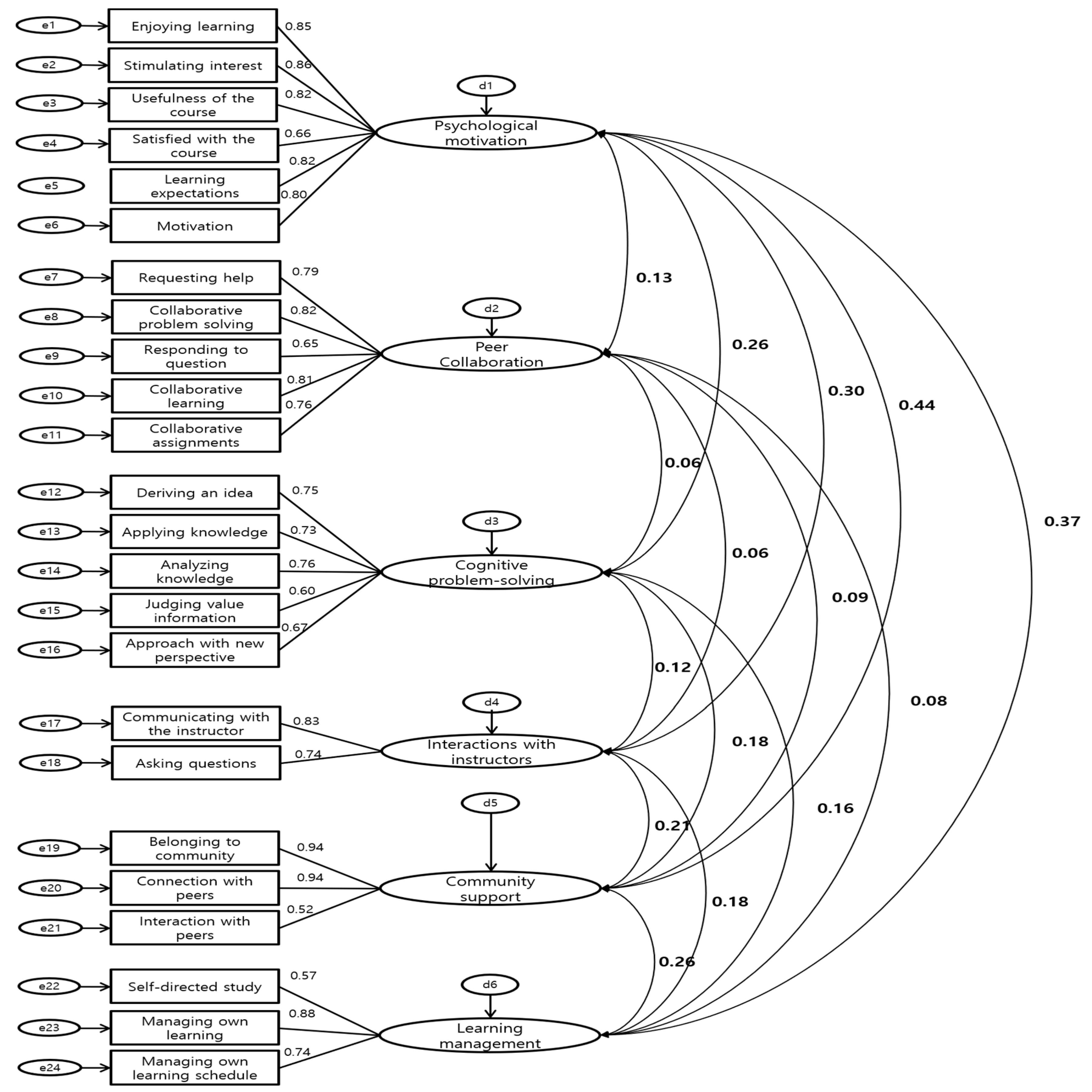

2.1. Factors of Student Engagement

2.2. Indicators that Characterize Student Engagement in the e-Learning Environment

3. Methods

3.1. Context and Sample Characteristics

3.2. Scale Development Process

4. Results

5. Discussion

6. Implications and Limitations of the Study

Author Contributions

Funding

Conflicts of Interest

| Factor Items | Psychological Motivation | Peer Collaboration | Cognitive Problem-Solving | Interactions with Instructors | Community Support | Learning Management |
|---|---|---|---|---|---|---|
| Enjoying learning | 0.774 | −0.095 | 0.079 | 0.061 | −0.097 | 0.041 |
| Stimulating interest | 0.750 | −0.044 | 0.144 | −0.011 | −0.106 | 0.034 |
| Usefulness of the course | 0.725 | −0.037 | −0.009 | −0.033 | −0.045 | 0.197 |
| Satisfied with the course | 0.721 | 0.056 | 0.162 | −0.064 | 0.029 | −0.033 |
| Learning expectations | 0.704 | −0.102 | 0.027 | 0.210 | −0.055 | 0.093 |
| Motivation | 0.702 | −0.066 | 0.118 | 0.067 | −0.217 | 0.069 |
| Requesting help | −0.042 | 0.893 | −0.057 | 0.029 | −0.018 | 0.011 |
| Collaborative problem solving | −0.038 | 0.843 | 0.059 | 0.075 | −0.053 | −0.024 |
| Responding to questions | 0.111 | 0.813 | 0.075 | −0.084 | 0.195 | 0.022 |
| Collaborative learning | −0.006 | 0.811 | −0.011 | 0.110 | −0.150 | −0.032 |
| Collaborative assignments | −0.087 | 0.663 | 0.069 | 0.175 | −0.220 | 0.077 |
| Deriving an idea | −0.023 | 0.006 | 0.849 | 0.039 | 0.038 | −0.043 |
| Applying knowledge | −0.047 | 0.087 | 0.788 | −0.098 | −0.041 | 0.060 |
| Analyzing knowledge | −0.012 | 0.007 | 0.780 | 0.038 | 0.175 | 0.092 |
| Judging value of information | 0.136 | −0.034 | 0.703 | −0.047 | 0.035 | 0.026 |
| Approach with new perspective | 0.135 | −0.052 | 0.703 | 0.107 | −0.172 | −0.076 |
| Communicating with the instructor | 0.049 | 0.036 | 0.048 | 0.871 | 0.124 | −0.061 |
| Asking questions | −0.005 | 0.049 | −0.037 | 0.836 | 0.113 | 0.064 |
| Belonging to community | 0.314 | 0.222 | −0.049 | −0.047 | −0.649 | 0.118 |
| Connection with peers | 0.341 | 0.223 | −0.030 | −0.067 | −0.636 | 0.101 |
| Interaction with peers | −0.055 | 0.455 | 0.128 | −0.005 | −0.503 | 0.114 |
| Self-directed study | −0.127 | 0.093 | 0.024 | 0.022 | −0.050 | 0.764 |
| Managing own learning | 0.063 | −0.158 | 0.045 | −0.010 | −0.242 | 0.761 |
| Managing own learning schedule | 0.041 | −0.056 | 0.147 | 0.062 | −0.039 | 0.664 |
| Eigenvalues | 11.235 | 3.633 | 1.982 | 1.522 | 1.19 | 1.186 |
| Explained variance (%) | 35.108 | 11.354 | 6.195 | 4.756 | 3.722 | 3.706 |
| Total explained variance (%) | 35.108 | 46.462 | 52.657 | 57.413 | 61.135 | 64.841 |
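
The last row of the table above is the running sum of the "Explained variance (%)" row. As a minimal sketch, the snippet below recomputes that running total from the per-factor percentages copied from the table; the function name `cumulative_variance` is illustrative and not part of the study.

```python
# Per-factor explained variance (%), copied from the table above.
explained = {
    "Psychological Motivation": 35.108,
    "Peer Collaboration": 11.354,
    "Cognitive Problem-Solving": 6.195,
    "Interactions with Instructors": 4.756,
    "Community Support": 3.722,
    "Learning Management": 3.706,
}


def cumulative_variance(per_factor):
    """Return the running total of explained variance, rounded to 3 decimals."""
    totals = {}
    running = 0.0
    for name, pct in per_factor.items():
        running += pct
        totals[name] = round(running, 3)
    return totals


cumulative = cumulative_variance(explained)
for name, total in cumulative.items():
    print(f"{name}: {total:.3f}")
```

The final value is the share of variance accounted for by all six factors together, matching the last cell of the table.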

| SRMR (<0.10) | RMSEA (<0.08): LO 90 | RMSEA (<0.08): M | RMSEA (<0.08): HI 90 | CFI (>0.90) | TLI (>0.90) | AGFI | RFI |
|---|---|---|---|---|---|---|---|
| 0.0891 | 0.061 | 0.066 | 0.071 | 0.919 | 0.910 | 0.865 | 0.874 |
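
The cutoffs in the header of the table above can be checked mechanically against the reported values. The sketch below does so for the four indices that have a cutoff listed. It assumes that the RMSEA column labelled "M" is the point estimate between the lower and upper 90% bounds; AGFI and RFI are left out because the table gives no cutoff for them. The names `fit`, `cutoffs`, and `meets_cutoff` are illustrative.

```python
# Reported values from the table above.
# Assumption: "M" under RMSEA is the point estimate (0.066).
fit = {"SRMR": 0.0891, "RMSEA": 0.066, "CFI": 0.919, "TLI": 0.910}

# Cutoffs exactly as given in the table header.
cutoffs = {
    "SRMR": ("<", 0.10),
    "RMSEA": ("<", 0.08),
    "CFI": (">", 0.90),
    "TLI": (">", 0.90),
}


def meets_cutoff(value, rule):
    """Return True if value satisfies a (operator, threshold) rule."""
    op, threshold = rule
    return value < threshold if op == "<" else value > threshold


results = {name: meets_cutoff(fit[name], cutoffs[name]) for name in cutoffs}
for name, ok in results.items():
    print(f"{name}: {fit[name]} {'meets' if ok else 'misses'} the cutoff")
```

Under these assumptions all four indices satisfy their listed cutoffs, and the upper RMSEA bound (0.071) also stays below 0.08.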

| Factors | Items |
|---|---|
| Psychological motivation (6) | Online classes enhance my interest in learning. |
| | I am motivated to study when I take an online class. |
| | Online classes are very useful to me. |
| | It is very interesting to take online classes. |
| | After taking an online lesson, I look forward to the next one. |
| | I am satisfied with the online class I am taking. |
| Peer collaboration (5) | I study the lesson contents with other students. |
| | I try to solve difficult problems with other students when I encounter them. |
| | I work with other students on online projects or assignments. |
| | I ask other students for help when I can’t understand a concept taught in my online class. |
| | I try to answer the questions that other students ask. |
| Cognitive problem solving (5) | I can derive new interpretations and ideas from the knowledge I have learned in my online classes. |
| | I can deeply analyze thoughts, experiences, and theories about the knowledge I have learned in my online classes. |
| | I can judge the value of the information related to the knowledge learned in my online classes. |
| | I tend to apply the knowledge I have learned in online classes to real problems or new situations. |
| | I try to approach the subject of my online class with a new perspective. |
| Interactions with instructors (2) | I communicate with the instructor privately for extra help. |
| | I often ask the instructor about the contents of the lesson. |
| Community support (3) | I feel a connection with the students who are in my online classes. |
| | I feel a sense of belonging to the online class community. |
| | I frequently interact with other students in my online classes. |
| Learning management (4) | I study related learning contents by myself after the online lesson. |
| | I remove all distracting environmental factors when taking online classes. |
| | I manage my own learning using the online system. |
| | When I take an online course, I plan a learning schedule. |
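
One way to turn responses to the items above into per-factor scores is to average the items within each factor. The sketch below is only an illustration: the item counts come from the table, but the 1 to 5 response range, the item ordering, the use of subscale means, and the names `ITEMS_PER_FACTOR` and `subscale_means` are assumptions made for the example, not details taken from the study.

```python
from statistics import mean

# Item counts per factor, taken from the table above.
ITEMS_PER_FACTOR = {
    "Psychological motivation": 6,
    "Peer collaboration": 5,
    "Cognitive problem solving": 5,
    "Interactions with instructors": 2,
    "Community support": 3,
    "Learning management": 4,
}


def subscale_means(responses):
    """Split a flat list of item responses by factor and average each group.

    Assumes responses are ordered as in the table (hypothetical convention).
    """
    expected = sum(ITEMS_PER_FACTOR.values())
    if len(responses) != expected:
        raise ValueError(f"expected {expected} responses, got {len(responses)}")
    scores = {}
    start = 0
    for factor, count in ITEMS_PER_FACTOR.items():
        scores[factor] = mean(responses[start:start + count])
        start += count
    return scores


# Hypothetical respondent, assuming a 1-5 agreement scale.
example = [4, 5, 4, 4, 3, 4,   # psychological motivation
           2, 3, 2, 3, 2,      # peer collaboration
           4, 4, 3, 4, 4,      # cognitive problem solving
           1, 2,               # interactions with instructors
           3, 3, 2,            # community support
           5, 4, 4, 4]         # learning management
scores = subscale_means(example)
for factor, score in scores.items():
    print(f"{factor}: {score:.2f}")
```

Averaging rather than summing keeps the six scores on the same range even though the factors have different numbers of items.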

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Lee, J.; Song, H.-D.; Hong, A.J. Exploring Factors, and Indicators for Measuring Students’ Sustainable Engagement in e-Learning. Sustainability 2019, 11, 985. https://doi.org/10.3390/su11040985