EGGA: An Error-Guided Generative Augmentation and Optimized ML-Based IDS for EV Charging Network Security

Abstract

1. Introduction

- This paper proposes EGGA, a novel error-guided generative augmentation strategy that identifies misclassified samples during cross-validation and uses a cGAN model to generate synthetic data focused on these hard cases, strengthening error-prone decision regions.

- It proposes an AutoML-based optimized XGBoost model using BO-TPE for maximizing intrusion detection performance.

- It designs a multi-stage closed-loop IDS pipeline for EV charging networks that tightly integrates a strong base learner, error-guided generative augmentation, and automated model tuning, enabling more autonomous and robust detection under imbalanced, multi-class, and nonstationary EVCS network traffic.

- The code for the proposed methods will be made publicly available on GitHub: https://github.com/LiYangHart/EGGA-Error-Guided-Generative-AI-and-Optimized-Machine-Learning-based-Intrusion-Detection-System (accessed on 9 April 2026).

2. Related Work

2.1. Optimized ML-Based IDSs

2.2. Generative AI-Based IDSs

2.3. Literature Comparison and Our Contributions

3. Proposed cGAN-Based IDS Framework

3.1. System Overview

3.2. Data Pre-Processing

3.3. Proposed cGAN-Based Error-Guided Generative Augmentation (EGGA) Method

- Step 1: Error mining via stratified cross-validation: Given the training set $\mathcal{D}_{\mathrm{train}} = \{(x_i, y_i)\}_{i=1}^{N}$, EGGA runs stratified $K$-fold cross-validation with a base learner (by default, XGBoost configured to output multi-class probabilities). In each fold $k$, the learner is trained on the other $K-1$ folds and evaluated on the held-out fold $\mathcal{V}_k$. For each validation sample $i$, the predicted label is $\hat{y}_i = \arg\max_{c} p(c \mid x_i)$, and the sample is collected as misclassified when $\hat{y}_i \neq y_i$. The union across folds forms the mistake set:

  $$\mathcal{M} = \bigcup_{k=1}^{K} \left\{ (x_i, y_i) : i \in \mathcal{V}_k,\ \hat{y}_i \neq y_i \right\}.$$

  This design ensures that $\mathcal{M}$ contains samples that are difficult under out-of-fold evaluation, rather than merely capturing training noise. In addition, the code stores metadata such as the fold index and predicted probabilities, but EGGA uses only the original feature structure and the ground truth labels for generator training.

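- Step 2: Train a single cGAN on the aggregated mistake set: Let $X_{\mathcal{M}}$ and $y_{\mathcal{M}}$ denote the features and labels extracted from the mistake set $\mathcal{M}$. EGGA trains one cGAN on $(X_{\mathcal{M}}, y_{\mathcal{M}})$, where labels are transformed to one-hot vectors using a deterministic mapping class_to_index. During each epoch, EGGA samples a minibatch of real samples $(x, y)$ from $(X_{\mathcal{M}}, y_{\mathcal{M}})$ and samples noise $z \sim p_z$. It also samples a minibatch of labels for synthetic generation by drawing from the empirical label distribution in $y_{\mathcal{M}}$, producing $\tilde{y}$. The generator produces $\tilde{x} = G(z, \tilde{y})$, and the discriminator is updated by minimizing:

  $$\mathcal{L}_D = -\mathbb{E}_{(x, y)}\left[\log D(x, y)\right] - \mathbb{E}_{z, \tilde{y}}\left[\log\left(1 - D(G(z, \tilde{y}), \tilde{y})\right)\right].$$

  Then the generator is updated through the combined model by minimizing:

  $$\mathcal{L}_G = -\mathbb{E}_{z, \tilde{y}}\left[\log D(G(z, \tilde{y}), \tilde{y})\right],$$

  which encourages synthetic samples to be classified as real under the same label condition. The implementation further applies a simple stabilization heuristic by scaling the generator target label with a schedule factor that increases during training, which reduces overly aggressive generator updates in early epochs.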

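- Step 3: Error-guided synthesis with mistake-proportional allocation: After training, EGGA generates synthetic samples per class using the learned condition. Let $n_c$ be the mistake count for class $c$. EGGA allocates a synthetic budget:

  $$s_c = m \cdot n_c,$$

  where $m$ is a user-controlled multiplier. For each class $c$ with $n_c > 0$, EGGA samples $z \sim p_z$ and sets the condition to the one-hot vector $e_c$ of class $c$, then generates $\tilde{x} = G(z, e_c)$. Finally, the augmented training set is constructed as:

  $$\mathcal{D}_{\mathrm{aug}} = \mathcal{D}_{\mathrm{train}} \oplus \mathcal{D}_{\mathrm{syn}},$$

  where $\mathcal{D}_{\mathrm{train}}$ is the original training set, $\mathcal{D}_{\mathrm{syn}}$ collects the generated samples with their conditioning labels, and $\oplus$ denotes concatenation.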
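The error-mining step (Step 1) can be illustrated with a minimal, dependency-free sketch. The nearest-centroid classifier below is only a stand-in for the XGBoost base learner, and the helper names (`stratified_folds`, `fit_centroids`, `predict`, `mine_mistakes`) and the toy data are hypothetical, chosen for illustration rather than taken from the released code.

```python
import random
from collections import defaultdict

def stratified_folds(labels, k, seed=0):
    """Assign sample indices to k folds so each class is spread evenly."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for i, y in enumerate(labels):
        by_class[y].append(i)
    folds = [[] for _ in range(k)]
    for idxs in by_class.values():
        rng.shuffle(idxs)
        for pos, i in enumerate(idxs):
            folds[pos % k].append(i)
    return folds

def fit_centroids(X, y):
    """Stand-in base learner (assumption): one mean vector per class."""
    sums, counts = {}, defaultdict(int)
    for x, c in zip(X, y):
        prev = sums.get(c, [0.0] * len(x))
        sums[c] = [s + v for s, v in zip(prev, x)]
        counts[c] += 1
    return {c: [s / counts[c] for s in sums[c]] for c in sums}

def predict(centroids, x):
    """Predict the class whose centroid is closest in squared distance."""
    def dist(c):
        return sum((a - b) ** 2 for a, b in zip(centroids[c], x))
    return min(centroids, key=dist)

def mine_mistakes(X, y, k=5, seed=0):
    """Collect out-of-fold misclassified samples as (features, true label)."""
    mistakes = []
    for held_out in stratified_folds(y, k, seed):
        held = set(held_out)
        train = [i for i in range(len(y)) if i not in held]
        model = fit_centroids([X[i] for i in train], [y[i] for i in train])
        for i in held_out:
            if predict(model, X[i]) != y[i]:
                mistakes.append((X[i], y[i]))
    return mistakes

# Toy data: the last class-1 sample sits inside the class-0 region (a hard case).
X = [[0, 0], [0, 1], [1, 0], [1, 1], [0.5, 0.5],
     [5, 5], [5, 6], [6, 5], [6, 6], [0.2, 0.2]]
y = [0, 0, 0, 0, 0, 1, 1, 1, 1, 1]
mistake_set = mine_mistakes(X, y, k=5)
print(mistake_set)
```

Because every sample is scored only by a model that never saw it, the collected set reflects out-of-fold difficulty rather than training noise. In practice the stand-in learner would be replaced by the actual base classifier.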

Algorithm 1: EGGA: Error-guided generative augmentation.
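The discriminator and generator updates of Step 2, together with the scheduled generator target, can be sketched numerically as follows. The sketch assumes binary cross-entropy objectives, and the linear schedule in `generator_target` (including its `start` value of 0.7) is a hypothetical choice, since the text states only that the schedule factor increases during training. All function names are illustrative.

```python
import math

def bce(prediction, target, eps=1e-7):
    """Binary cross-entropy for one discriminator output in (0, 1)."""
    p = min(max(prediction, eps), 1.0 - eps)
    return -(target * math.log(p) + (1.0 - target) * math.log(1.0 - p))

def one_hot(label, class_to_index):
    """Deterministic label-to-one-hot mapping used as the cGAN condition."""
    vec = [0.0] * len(class_to_index)
    vec[class_to_index[label]] = 1.0
    return vec

def discriminator_loss(d_real, d_fake):
    """Real (sample, label) pairs target 1; generated pairs target 0."""
    real = sum(bce(p, 1.0) for p in d_real) / len(d_real)
    fake = sum(bce(p, 0.0) for p in d_fake) / len(d_fake)
    return real + fake

def generator_target(epoch, total_epochs, start=0.7):
    """Hypothetical schedule: target rises linearly from `start` to 1.0."""
    frac = epoch / max(total_epochs - 1, 1)
    return start + (1.0 - start) * frac

def generator_loss(d_fake, target):
    """Generator wants D(G(z, y), y) to match the (scaled) 'real' target."""
    return sum(bce(p, target) for p in d_fake) / len(d_fake)

class_to_index = {"Bot": 0, "DoS": 1, "Normal": 2}
print(one_hot("DoS", class_to_index))
print(discriminator_loss(d_real=[0.9], d_fake=[0.1]))
print(generator_loss(d_fake=[0.1], target=generator_target(0, 100)))
```

With a target below 1 in early epochs, the gradient of the generator loss with respect to the discriminator logit is smaller in magnitude, which matches the stated purpose of damping aggressive early generator updates.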

3.4. Optimized XGBoost Model Using Bayesian Optimization with Tree-Structured Parzen Estimator (BO-TPE)

- Boosted trees and XGBoost remain highly competitive or superior to deep neural networks on medium-scale tabular problems, often with lower tuning burden and stronger out-of-the-box performance [7].

- The tree ensemble structure captures non-linear feature interactions that occur naturally in network behavior, while maintaining efficient inference that is suitable for real-time or near-real-time detection [30].

- XGBoost includes explicit regularization and shrinkage mechanisms that are effective against overfitting on imbalanced security datasets, especially after the training distribution is reshaped by targeted augmentation [31].

Algorithm 2: BO-TPE hyperparameter optimization for XGBoost on EGGA-augmented data.
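To make the BO-TPE idea concrete, the following is a simplified one-dimensional sketch of a tree-structured Parzen estimator loop. It is not the authors' implementation: the objective `toy_cv_error` is a hypothetical stand-in for the cross-validated error of XGBoost, only a single hyperparameter (a learning rate in [0.001, 1]) is tuned, and the bandwidth, `gamma`, and candidate count are assumed values.

```python
import math
import random

def kde(x, points, bandwidth):
    """Parzen (Gaussian-kernel) density estimate at x."""
    norm = bandwidth * math.sqrt(2.0 * math.pi)
    total = sum(math.exp(-0.5 * ((x - p) / bandwidth) ** 2) for p in points)
    return total / (len(points) * norm)

def tpe_minimize(objective, low, high, n_trials=40, n_startup=10,
                 gamma=0.25, n_candidates=24, seed=0):
    """Minimal TPE loop: model good and bad trials, then maximize l(x)/g(x)."""
    rng = random.Random(seed)
    bandwidth = (high - low) / 10.0
    history = []  # (loss, x) pairs
    for t in range(n_trials):
        if t < n_startup:
            x = rng.uniform(low, high)  # random warm-up trials
        else:
            ranked = sorted(history)
            n_good = max(1, int(gamma * len(ranked)))
            good = [v for _, v in ranked[:n_good]]   # best trials -> l(x)
            bad = [v for _, v in ranked[n_good:]]    # remaining trials -> g(x)
            # Draw candidates around good trials, clipped to the search range.
            cands = [min(high, max(low, rng.gauss(rng.choice(good), bandwidth)))
                     for _ in range(n_candidates)]
            x = max(cands, key=lambda c: kde(c, good, bandwidth)
                    / (kde(c, bad, bandwidth) + 1e-12))
        history.append((objective(x), x))
    return min(history)  # (best loss, best x)

def toy_cv_error(learning_rate):
    """Hypothetical objective standing in for cross-validated error."""
    return (learning_rate - 0.3) ** 2

best_loss, best_lr = tpe_minimize(toy_cv_error, 0.001, 1.0)
print(best_loss, best_lr)
```

The key design choice in TPE is to model the densities of good and bad configurations separately and to propose the candidate with the highest density ratio, rather than fitting a surrogate of the objective itself. A full search over `n_estimators`, `max_depth`, and `learning_rate` would typically rely on an established optimization library.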

4. Performance Evaluation

4.1. Experimental Setup

4.2. Experimental Results and Discussion

5. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

1. Tanyıldız, H.; Şahin, C.B.; Dinler, Ö.B.; Migdady, H.; Saleem, K.; Smerat, A.; Gandomi, A.H.; Abualigah, L. Detection of Cyber Attacks in Electric Vehicle Charging Systems Using a Remaining Useful Life Generative Adversarial Network. Sci. Rep. 2025, 15, 10092.

2. Morgan, E.F.; Ali, M.H. Digital Twin-Driven Cybersecurity for 5G/6G-Enabled Electric Vehicle Charging Infrastructure: A Review. Energies 2025, 18, 6048.

3. Dehrouyeh, F.; Yang, L.; Badrkhani Ajaei, F.; Shami, A. On TinyML and Cybersecurity: Electric Vehicle Charging Infrastructure Use Case. IEEE Access 2024, 12, 108703–108730.

4. Gupta, K.; Panigrahi, B.K.; Joshi, A.; Paul, K. Demonstration of Denial of Charging Attack on Electric Vehicle Charging Infrastructure and Its Consequences. Int. J. Crit. Infrastruct. Prot. 2024, 46, 100693.

5. Ronanki, D.; Karneddi, H. Electric Vehicle Charging Infrastructure: Review, Cyber Security Considerations, Potential Impacts, Countermeasures, and Future Trends. IEEE J. Emerg. Sel. Top. Power Electron. 2024, 12, 242–256.

6. Johnson, J.; Berg, T.; Anderson, B.; Wright, B. Review of Electric Vehicle Charger Cybersecurity Vulnerabilities, Potential Impacts, and Defenses. Energies 2022, 15, 3931.

7. Yang, L. Optimized and Automated Machine Learning Techniques Towards IoT Data Analytics and Cybersecurity. Ph.D. Thesis, The University of Western Ontario (Canada), London, ON, Canada, 2022.

8. Liu, M.; Teng, F.; Zhang, Z.; Ge, P.; Sun, M.; Deng, R.; Cheng, P.; Chen, J. Enhancing Cyber-Resiliency of DER-Based Smart Grid: A Survey. IEEE Trans. Smart Grid 2024, 15, 4998–5030.

9. Zhang, Z.; Liu, M.; Sun, M.; Deng, R.; Cheng, P.; Niyato, D.; Chow, M.-Y.; Chen, J. Vulnerability of Machine Learning Approaches Applied in IoT-Based Smart Grid: A Review. IEEE Internet Things J. 2024, 11, 18951–18975.

10. Gui, G.; Xue, Z.; Zhao, R.; Deng, X.; Zhan, M. Lightweight Intrusion Detection Methods Based on Artificial Intelligence for IoT Networks. Adv. Mach. Learn. Cyber-Attack Detect. IoT Netw. 2025, 193–225.

11. Yang, L.; Shami, A. Toward Autonomous and Efficient Cybersecurity: A Multi-Objective AutoML-Based Intrusion Detection System. IEEE Trans. Mach. Learn. Commun. Netw. 2025, 3, 1244–1264.

12. Radanliev, P.; Santos, O.; Ani, U.D. Generative AI Cybersecurity and Resilience. Front. Artif. Intell. 2025, 8, 1568360.

13. Buedi, E.D.; Ghorbani, A.A.; Dadkhah, S.; Ferreira, R.L. Enhancing EV Charging Station Security Using a Multi-Dimensional Dataset: CICEVSE2024. In Data and Applications Security and Privacy XXXVIII, Proceedings of the 38th Annual IFIP WG 11.3 Conference, DBSec 2024, San Jose, CA, USA, 15–17 July 2024; Springer: Cham, Switzerland, 2024; Volume 14901, pp. 171–190.

14. Sharafaldin, I.; Lashkari, A.H.; Ghorbani, A.A. Toward Generating a New Intrusion Detection Dataset and Intrusion Traffic Characterization. In Proceedings of the 4th International Conference on Information Systems Security and Privacy (ICISSP), Funchal, Portugal, 22–24 January 2018; Volume 1, pp. 108–116.

15. Bakro, M.; Kumar, R.R.; Husain, M.; Ashraf, Z.; Ali, A.; Yaqoob, S.I.; Ahmed, M.N.; Parveen, N. Building a Cloud-IDS by Hybrid Bio-Inspired Feature Selection Algorithms Along With Random Forest Model. IEEE Access 2024, 12, 8846–8874.

16. Elmasry, W.; Akbulut, A.; Zaim, A.H. Evolving Deep Learning Architectures for Network Intrusion Detection Using a Double PSO Metaheuristic. Comput. Netw. 2020, 168, 107042.

17. Yang, L.; Shami, A. A Transfer Learning and Optimized CNN Based Intrusion Detection System for Internet of Vehicles. In Proceedings of the 2022 IEEE International Conference on Communications (ICC), Seoul, Republic of Korea, 16–20 May 2022; IEEE: Piscataway, NJ, USA; pp. 1–6.

18. Naeem, H.; Ullah, F.; Krejcar, O.; Li, D.; Vasan, D. Optimizing Vehicle Security: A Multiclassification Framework Using Deep Transfer Learning and Metaheuristic-Based Genetic Algorithm Optimization. Int. J. Crit. Infrastruct. Prot. 2025, 49, 100745.

19. Khan, M.A.; Iqbal, N.; Imran; Jamil, H.; Kim, D.H. An Optimized Ensemble Prediction Model Using AutoML Based on Soft Voting Classifier for Network Intrusion Detection. J. Netw. Comput. Appl. 2023, 212, 103560.

20. Singh, A.; Amutha, J.; Nagar, J.; Sharma, S.; Lee, C.C. AutoML-ID: Automated Machine Learning Model for Intrusion Detection Using Wireless Sensor Network. Sci. Rep. 2022, 12, 9074.

21. Asim, M.; Sair, F.; Ishaq, M.; Cengiz, K.; Akleylek, S.; Ivković, N. VAE-XGBoost: A Hybrid Intrusion Detection System for Next Generation EV Charging Networks. PeerJ Comput. Sci. 2026, 12, e3506.

22. Lee, J.H.; Park, K.H. GAN-Based Imbalanced Data Intrusion Detection System. Pers. Ubiquitous Comput. 2021, 25, 121–128.

23. Habibi, O.; Chemmakha, M.; Lazaar, M. Imbalanced Tabular Data Modelization Using CTGAN and Machine Learning to Improve IoT Botnet Attacks Detection. Eng. Appl. Artif. Intell. 2023, 118, 105669.

24. Bouzeraib, W.; Ghenai, A.; Zeghib, N. Enhancing IoT Intrusion Detection Systems Through Horizontal Federated Learning and Optimized WGAN-GP. IEEE Access 2025, 13, 45059–45076.

25. Jiang, J.; Tang, Q.; Wang, B.; Tang, X.; Zhu, F.; Yadav, K.; Filali, A.; Vasilakos, A.V. DEAL: Dynamic Ensemble Algorithm for Imbalanced Streaming Data in UAV-Assisted Consumer IoT. IEEE Trans. Consum. Electron. 2026, Early Access.

26. Wang, Z.; Wang, P.; Liu, K.; Wang, P.; Fu, Y.; Lu, C.T.; Aggarwal, C.C.; Pei, J.; Zhou, Y. A Comprehensive Survey on Data Augmentation. IEEE Trans. Knowl. Data Eng. 2026, 38, 47–66.

27. Shi, R.; Wang, Y.; Du, M.; Shen, X.; Chang, Y.; Wang, X. A Comprehensive Survey of Synthetic Tabular Data Generation. arXiv 2025, arXiv:2504.16506.

28. Mirza, M.; Osindero, S. Conditional Generative Adversarial Nets. arXiv 2014, arXiv:1411.1784.

29. Chen, T.; Guestrin, C. XGBoost: A Scalable Tree Boosting System. In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining; Association for Computing Machinery: New York, NY, USA, 2016; pp. 785–794.

30. Le, T.-T.-H.; Oktian, Y.E.; Kim, H. XGBoost for Imbalanced Multiclass Classification-Based Industrial Internet of Things Intrusion Detection Systems. Sustainability 2022, 14, 8707.

31. Grinsztajn, L.; Oyallon, E.; Varoquaux, G. Why Do Tree-Based Models Still Outperform Deep Learning on Tabular Data? arXiv 2022, arXiv:2207.08815.

32. Bergstra, J.; Bardenet, R.; Bengio, Y.; Kégl, B. Algorithms for Hyper-Parameter Optimization. In Proceedings of the 25th International Conference on Neural Information Processing Systems; Curran Associates Inc.: Red Hook, NY, USA, 2011; pp. 2546–2554.

33. Pedregosa, F.; Varoquaux, G.; Gramfort, A.; Michel, V.; Thirion, B.; Grisel, O.; Blondel, M.; Prettenhofer, P.; Weiss, R.; Dubourg, V.; et al. Scikit-Learn: Machine Learning in Python. J. Mach. Learn. Res. 2011, 12, 2825–2830.

34. Chollet, F. Keras. GitHub Repository. 2015. Available online: https://github.com/keras-team/keras (accessed on 9 April 2026).

35. Pineau, J.; Vincent-Lamarre, P.; Sinha, K.; Larivière, V.; Beygelzimer, A.; d’Alché-Buc, F.; Fox, E.; Larochelle, H. Improving Reproducibility in Machine Learning Research: A Report from the NeurIPS 2019 Reproducibility Program. J. Mach. Learn. Res. 2021, 22, 1–20.

36. Salo, F.; Injadat, M.; Nassif, A.B.; Shami, A.; Essex, A. Data Mining Techniques in Intrusion Detection Systems: A Systematic Literature Review. IEEE Access 2018, 6, 56046–56058.

37. Chawla, N.V.; Bowyer, K.W.; Hall, L.O.; Kegelmeyer, W.P. SMOTE: Synthetic Minority Over-Sampling Technique. J. Artif. Intell. Res. 2002, 16, 321–357.

38. Jiang, J.; Liu, F.; Liu, Y.; Tang, Q.; Wang, B.; Zhong, G.; Wang, W. A Dynamic Ensemble Algorithm for Anomaly Detection in IoT Imbalanced Data Streams. Comput. Commun. 2022, 194, 250–257.

39. Zhang, Z.; Wang, B.; Liu, M.; Qin, Y.; Wang, J.; Tian, Y.; Ma, J. Limitation of Reactance Perturbation Strategy Against False Data Injection Attacks on IoT-Based Smart Grid. IEEE Internet Things J. 2024, 11, 11619–11631.

40. Liu, M.; Zhang, X.; Zhang, R.; Zhou, Z.; Zhang, Z.; Deng, R. Detection-Triggered Recursive Impact Mitigation Against Secondary False Data Injection Attacks in Cyber-Physical Microgrid. IEEE Trans. Smart Grid 2025, 16, 1744–1761.

| Class Label (Attack Type or Normal) | Sample Counts | Class Distribution (%) | Original Training Sample Counts | XGBoost Error Counts in Five-Fold CV | Training Sample Counts After EGGA | Test Sample Counts |

|---|---|---|---|---|---|---|

| TCP floods | 7696 | 21.413 | 6157 | 1 | 6177 | 1539 |

| Stealth SYN scanning | 5807 | 16.157 | 4645 | 1 | 4665 | 1162 |

| Port scanning | 4739 | 13.185 | 3791 | 1 | 3811 | 948 |

| Service detection | 3690 | 10.267 | 2952 | 6 | 3072 | 738 |

| Identity rotation and rotation flood | 3421 | 9.518 | 2737 | 0 | 2737 | 684 |

| Vulnerability scan | 3110 | 8.653 | 2488 | 1 | 2508 | 622 |

| OS fingerprinting | 2787 | 7.754 | 2229 | 0 | 2229 | 558 |

| Aggressive scan | 1898 | 5.281 | 1518 | 1 | 1538 | 380 |

| UDP flood | 1577 | 4.388 | 1262 | 0 | 1262 | 315 |

| Slow request starvation | 1044 | 2.905 | 835 | 0 | 835 | 209 |

| ICMP flood or fragmentation | 90 | 0.250 | 72 | 5 | 172 | 18 |

| Normal | 82 | 0.228 | 66 | 0 | 66 | 16 |
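The counts in the table above are consistent with a mistake-proportional budget in which each cross-validation error yields a fixed number of synthetic samples. The short check below assumes a multiplier of m = 20, a value inferred from the table rows rather than stated in this section, and the helper name `synthetic_budget` is hypothetical.

```python
def synthetic_budget(mistake_counts, m):
    """Allocate m synthetic samples per out-of-fold error; skip error-free classes."""
    return {c: m * n for c, n in mistake_counts.items() if n > 0}

# (original training count, five-fold CV error count, training count after EGGA)
rows = {
    "TCP floods": (6157, 1, 6177),
    "Service detection": (2952, 6, 3072),
    "ICMP flood or fragmentation": (72, 5, 172),
    "Normal": (66, 0, 66),
}

m = 20  # assumption: inferred from the table, not given explicitly here
budget = synthetic_budget({c: r[1] for c, r in rows.items()}, m)

for name, (before, errors, after) in rows.items():
    added = budget.get(name, 0)
    print(f"{name}: {before} + {added} = {before + added} (table: {after})")
```

Classes with no cross-validation errors receive no synthetic samples, so the augmentation concentrates on error-prone classes such as "Service detection" and "ICMP flood or fragmentation" instead of rebalancing every minority class.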

| Class Label (Attack Type or Normal) | Sample Counts | Class Distribution (%) | Original Training Sample Counts | XGBoost Error Counts in Five-Fold CV | Training Sample Counts After EGGA | Test Sample Counts |

|---|---|---|---|---|---|---|

| Normal | 22,731 | 40.118 | 18,129 | 45 | 19,029 | 4602 |

| DoS | 19,035 | 33.595 | 15,225 | 15 | 15,525 | 3810 |

| Port scan | 7946 | 14.024 | 6382 | 9 | 6562 | 1564 |

| Brute force | 2767 | 4.883 | 2243 | 9 | 2423 | 524 |

| Web-attack | 2180 | 3.847 | 1736 | 14 | 2016 | 444 |

| Bot | 1966 | 3.470 | 1584 | 15 | 1884 | 382 |

| Infiltration | 36 | 0.064 | 29 | 6 | 149 | 7 |

| Category | Method | Accuracy (%) | Precision (%) | Recall (%) | F1 (%) | Training Time (s) | Avg Test Time per Sample (ms) |

|---|---|---|---|---|---|---|---|

| Optimized ML Methods in the Literature | GA-RF [15] | 99.917 | 99.916 | 99.917 | 99.916 | 36.86 | 0.0276 |

| PSO-LSTM [16] | 83.948 | 81.537 | 83.948 | 82.174 | 219.09 | 0.1461 | |

| PSO-CNN [17] | 95.966 | 96.027 | 95.966 | 95.895 | 245.33 | 0.0723 | |

| GA-CNN [18] | 96.036 | 96.174 | 96.036 | 95.978 | 242.15 | 0.1130 | |

| OE-IDS [19] | 99.930 | 99.930 | 99.930 | 99.930 | 165.24 | 0.0386 | |

| AutoML-ID [20] | 99.930 | 99.930 | 99.930 | 99.930 | 116.23 | 0.0044 | |

| Generative AI Methods in the Literature | XGBoost + EGGA-VAE [21] | 99.930 | 99.930 | 99.930 | 99.930 | 32.62 | 0.0024 |

| XGBoost + EGGA-GAN [22] | 99.930 | 99.930 | 99.930 | 99.930 | 68.47 | 0.0033 | |

| XGBoost + EGGA-CTGAN [23] | 99.930 | 99.930 | 99.930 | 99.930 | 74.61 | 0.0020 | |

| XGBoost + EGGA-WGAN-GP [24] | 99.917 | 99.916 | 99.917 | 99.916 | 38.54 | 0.0030 | |

| XGBoost + SMOTE [37] | 99.930 | 99.930 | 99.930 | 99.930 | 7.39 | 0.0034 | |

| Proposed Framework (with Ablation Studies) | XGBoost | 99.917 | 99.916 | 99.917 | 99.916 | 6.86 | 0.0022 |

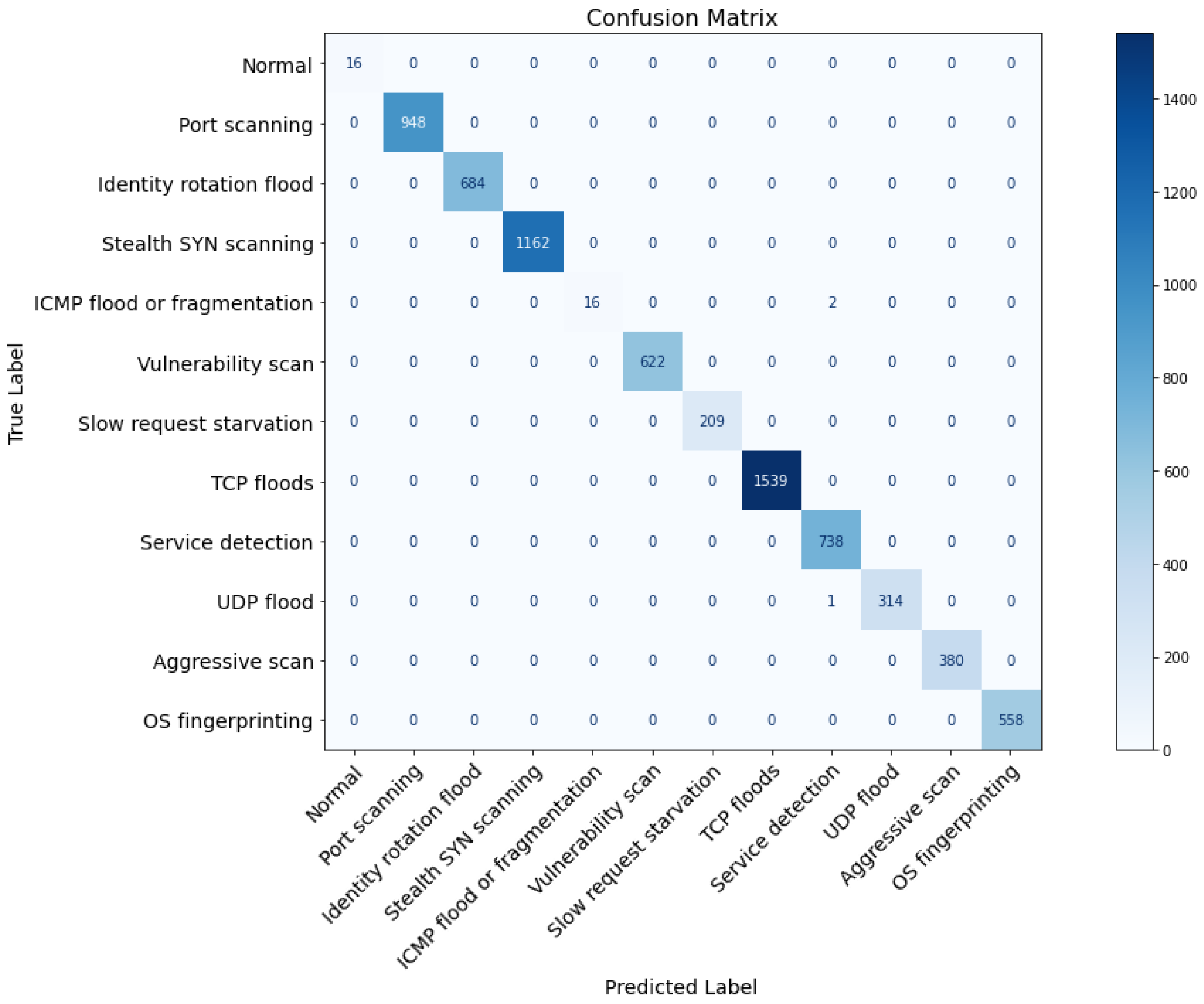

| XGBoost + EGGA-cGAN | 99.944 | 99.945 | 99.944 | 99.944 | 23.18 | 0.0023 | |

| Full Proposed Framework (XGBoost + EGGA-cGAN + BO-TPE) | 99.958 | 99.958 | 99.958 | 99.957 | 56.32 | 0.0018 |

| Category | Method | Accuracy (%) | Precision (%) | Recall (%) | F1 (%) | Training Time (s) | Avg Test Time per Sample (ms) |

|---|---|---|---|---|---|---|---|

| Optimized ML Methods in the Literature | GA-RF [15] | 99.532 | 99.534 | 99.532 | 99.529 | 58.28 | 0.0294 |

| PSO-LSTM [16] | 93.109 | 94.679 | 93.109 | 93.354 | 169.31 | 0.0289 | |

| PSO-CNN [17] | 94.635 | 95.700 | 94.635 | 94.844 | 218.19 | 0.0507 | |

| GA-CNN [18] | 94.617 | 95.614 | 94.617 | 94.783 | 241.14 | 0.0528 | |

| OE-IDS [19] | 99.541 | 99.543 | 99.541 | 99.583 | 243.12 | 0.0318 | |

| AutoML-ID [20] | 99.665 | 99.665 | 99.665 | 99.661 | 173.60 | 0.0045 | |

| Generative AI Methods in the Literature | XGBoost + EGGA-VAE [21] | 99.806 | 99.806 | 99.806 | 99.802 | 58.71 | 0.0024 |

| XGBoost + EGGA-GAN [22] | 99.797 | 99.797 | 99.797 | 99.794 | 80.82 | 0.0033 | |

| XGBoost + EGGA-CTGAN [23] | 99.779 | 99.780 | 99.779 | 99.776 | 86.38 | 0.0020 | |

| XGBoost + EGGA-WGAN-GP [24] | 99.797 | 99.797 | 99.797 | 99.794 | 47.65 | 0.0030 | |

| XGBoost + SMOTE [37] | 99.797 | 99.797 | 99.797 | 99.794 | 47.65 | 0.0030 | |

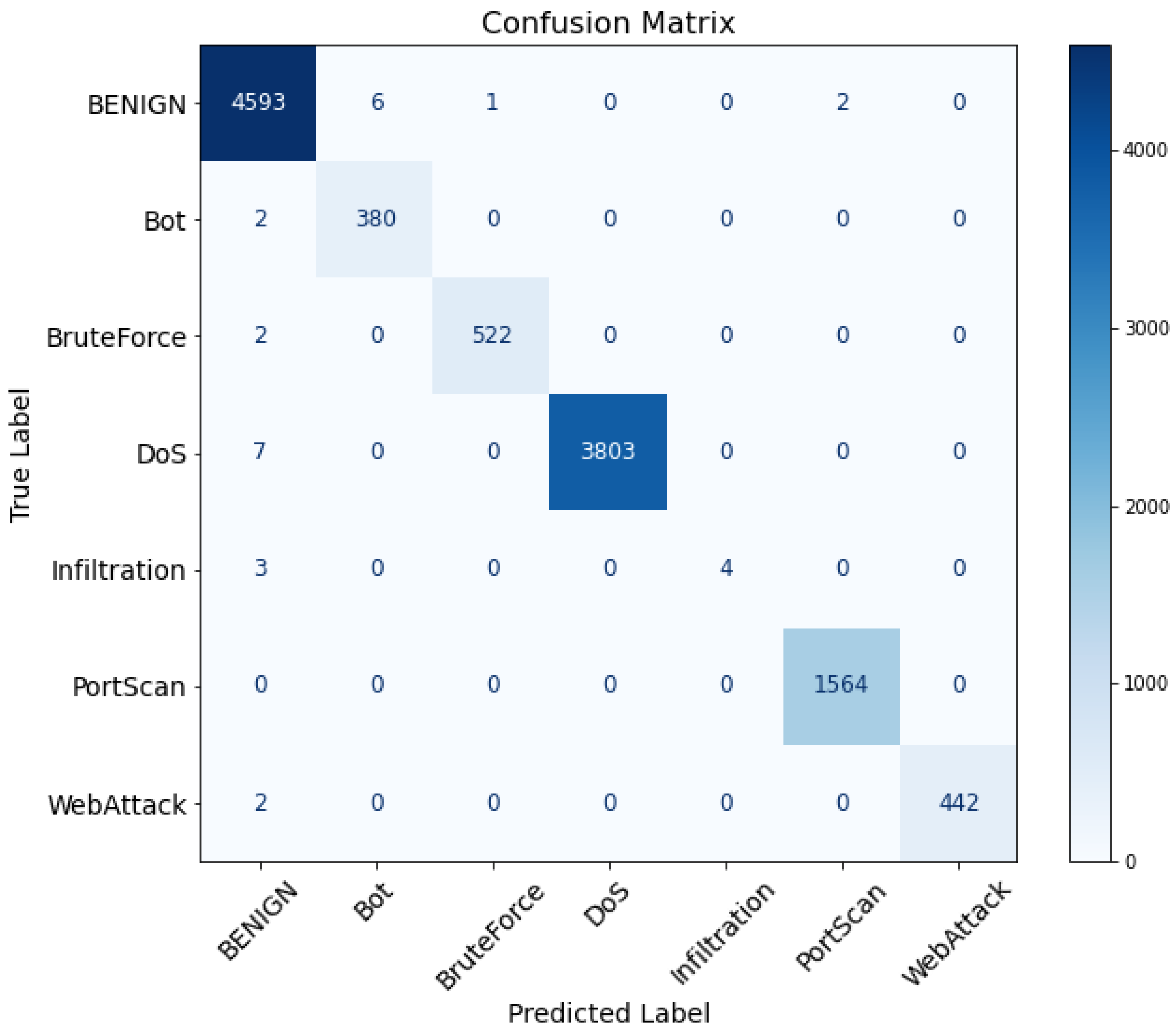

| Proposed Framework (with Ablation Studies) | XGBoost | 99.779 | 99.780 | 99.779 | 99.776 | 10.32 | 0.0018 |

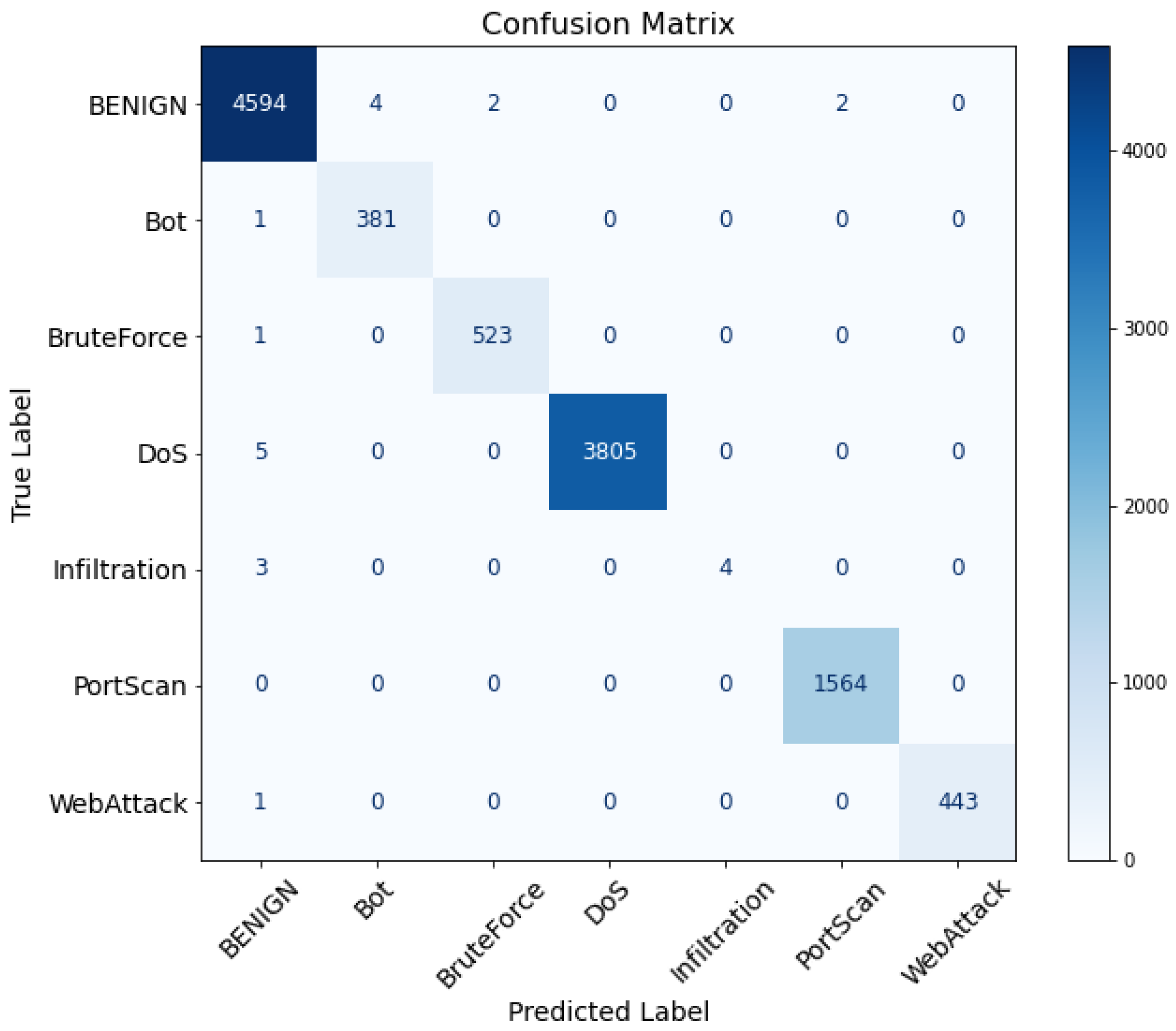

| XGBoost + EGGA-cGAN | 99.815 | 99.815 | 99.815 | 99.811 | 42.02 | 0.0019 | |

| Full Proposed Framework (XGBoost + EGGA-cGAN + BO-TPE) | 99.832 | 99.833 | 99.832 | 99.829 | 78.39 | 0.0018 |

| Hyperparameter of XGBoost | Search Space | Optimal Value on the CICEVSE2024 Dataset | Optimal Value on the CICIDS2017 Dataset |

|---|---|---|---|

| n_estimators | [20, 200] | 80 | 80 |

| max_depth | [5, 100] | 30 | 20 |

| learning_rate | [0.001, 1] | 0.733 | 0.789 |

| Class Label (Attack Type or Normal) | Test Set Sample Counts | Original XGBoost Error Counts on the Test Set | Original XGBoost Per-Class F1 (%) | Proposed Method Error Counts on the Test Set | Proposed Method Per-Class F1 (%) |

|---|---|---|---|---|---|

| TCP floods | 1539 | 0 | 99.976 | 0 | 100.00 |

| Stealth SYN scanning | 1162 | 0 | 100.00 | 0 | 100.00 |

| Port scanning | 948 | 0 | 100.00 | 0 | 100.00 |

| Service detection | 738 | 2 | 99.661 | 0 | 99.797 |

| Identity rotation and rotation flood | 684 | 0 | 100.00 | 0 | 100.00 |

| Vulnerability scan | 622 | 1 | 99.920 | 0 | 100.00 |

| OS fingerprinting | 558 | 0 | 100.00 | 0 | 100.00 |

| Aggressive scan | 380 | 0 | 100.00 | 0 | 100.00 |

| UDP flood | 315 | 1 | 99.841 | 1 | 99.841 |

| Slow request starvation | 209 | 0 | 99.761 | 0 | 100.00 |

| ICMP flood or fragmentation | 18 | 2 | 91.429 | 2 | 94.117 |

| Normal | 16 | 0 | 100.00 | 0 | 100.00 |

| Overall | 7189 | 6 | 99.916 | 3 | 99.957 |

| Class Label (Attack Type or Normal) | Test Set Sample Counts | Original XGBoost Error Counts on the Test Set | Original XGBoost Per-Class F1 (%) | Proposed Method Error Counts on the Test Set | Proposed Method Per-Class F1 (%) |

|---|---|---|---|---|---|

| Normal | 4602 | 9 | 99.729 | 8 | 99.794 |

| DoS | 3810 | 7 | 99.908 | 5 | 99.934 |

| Port scan | 1564 | 0 | 99.936 | 0 | 99.936 |

| Brute force | 524 | 2 | 99.713 | 1 | 99.714 |

| Web-attack | 444 | 2 | 99.774 | 1 | 99.887 |

| Bot | 382 | 2 | 98.958 | 1 | 99.348 |

| Infiltration | 7 | 3 | 72.727 | 3 | 72.727 |

| Overall | 11,333 | 25 | 99.776 | 19 | 99.829 |
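As a consistency check, the "Overall" rows of the two per-class tables above can be related to the reported accuracy values, since accuracy is the fraction of test samples that are not misclassified. In the sketch below, the dataset names attached to each table are inferred from the class labels (EV charging attack types versus the Normal/DoS/Port scan classes), and `accuracy_pct` is an illustrative helper.

```python
def accuracy_pct(n_test, n_errors):
    """Accuracy in percent from a test-set size and an error count."""
    return 100.0 * (n_test - n_errors) / n_test

# Test-set sizes and error counts copied from the "Overall" rows.
results = {
    "CICEVSE2024": {"n_test": 7189, "baseline_errors": 6, "proposed_errors": 3},
    "CICIDS2017": {"n_test": 11333, "baseline_errors": 25, "proposed_errors": 19},
}

for name, r in results.items():
    base = accuracy_pct(r["n_test"], r["baseline_errors"])
    prop = accuracy_pct(r["n_test"], r["proposed_errors"])
    print(f"{name}: baseline {base:.3f}%, proposed {prop:.3f}%")
```

Rounded to three decimals, these values agree with the Accuracy column reported for the baseline XGBoost and the full proposed framework, which also shows that the gain corresponds to three fewer test errors on the first dataset and six fewer on the second.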

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.


Yang, L.; Kirubavathi, G. EGGA: An Error-Guided Generative Augmentation and Optimized ML-Based IDS for EV Charging Network Security. Future Internet 2026, 18, 202. https://doi.org/10.3390/fi18040202
