Deep Learning-Based Sentimental Analysis for Large-Scale Imbalanced Twitter Data

Abstract

1. Introduction

1.1. Challenges in Tweets’ Contents

- Most users write casually, so tweets often contain spelling and grammatical mistakes [11], poor punctuation, and incomplete sentences.

- Users from different cultures may express emotions differently and face different communication barriers.

- People cannot always express their feelings in a text message because mood is subjective, which complicates sentiment analysis.

- The boundaries between emotions are fuzzy and vary with facial expression, so it is difficult for an automated system to capture every emotional boundary of human behavior [12].

- Labelling and annotating the large number of topics discussed on social media across different domains, in a way that covers all emotional states, is a challenging task [13].

1.2. Motivation

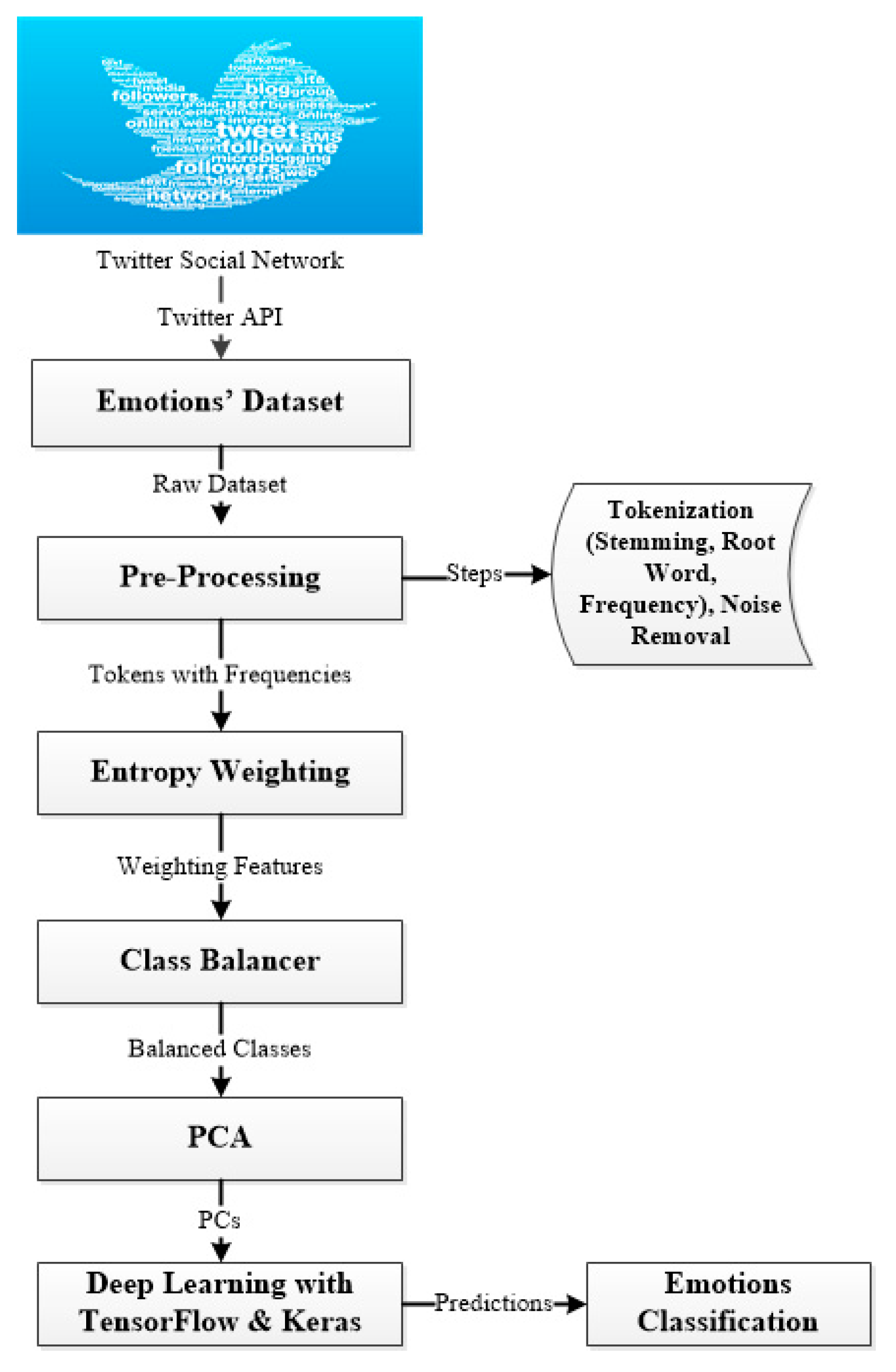

- We designed a hybrid deep learning approach based on TensorFlow with the Keras API to classify the emotions expressed in tweets.

- Overfitting and class imbalance degrade the accuracy, loss, and misclassification values. To resolve overfitting, we fine-tuned the proposed deep learning model through its dropout layers, the number of densely connected neurons, and the activation function. We tackled the class imbalance problem with a class balancer method. Results obtained with and without the fine-tuned configuration show the importance of these factors.

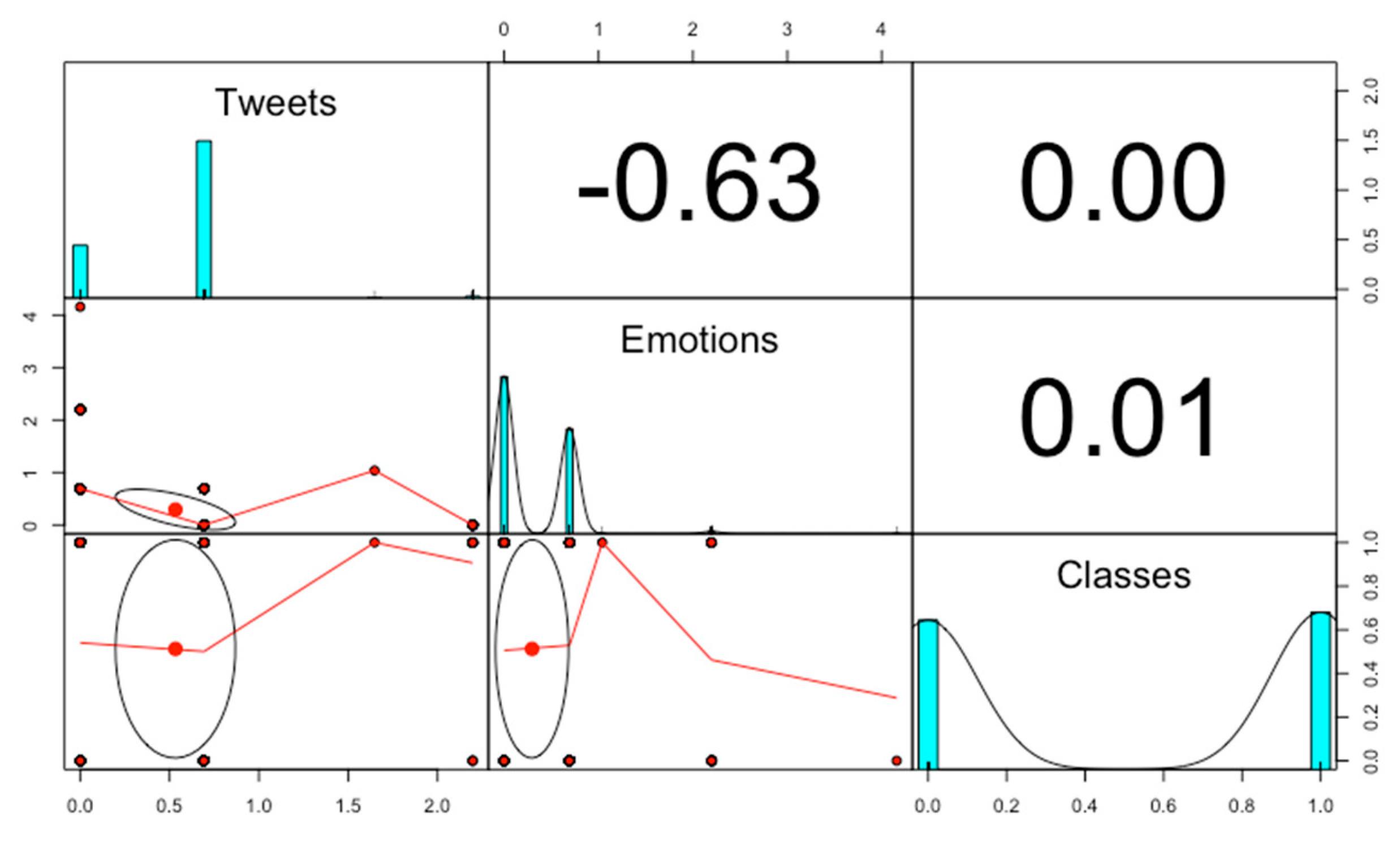

- Many of the values extracted from tweets are highly correlated. Principal component analysis (PCA) is applied to address this correlation.

- A comparison with other state-of-the-art techniques verifies the efficiency of the proposed method.
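The class balancing step mentioned above can be illustrated with inverse-frequency class weights. This is a minimal sketch only: the weighting formula, the function name `balanced_class_weights`, and the 900/100 label split are assumptions made for illustration, and the class balancer method used in this work may differ.

```python
from collections import Counter


def balanced_class_weights(labels):
    """Return a weight per class: n_samples / (n_classes * class_count).

    Rare classes receive larger weights, so each class contributes the
    same total weight to the training loss.
    """
    counts = Counter(labels)
    n_samples = len(labels)
    n_classes = len(counts)
    return {cls: n_samples / (n_classes * count) for cls, count in counts.items()}


# Hypothetical imbalanced label set (not taken from the paper's data).
labels = ["negative"] * 900 + ["positive"] * 100
weights = balanced_class_weights(labels)

for cls, weight in sorted(weights.items()):
    print(f"{cls}: {weight:.4f}")
```

A dictionary of this kind, keyed by integer class index, is the form that the `class_weight` argument of Keras `Model.fit` accepts.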

2. Literature Review

- Can we label the sparse and incomplete tweet messages?

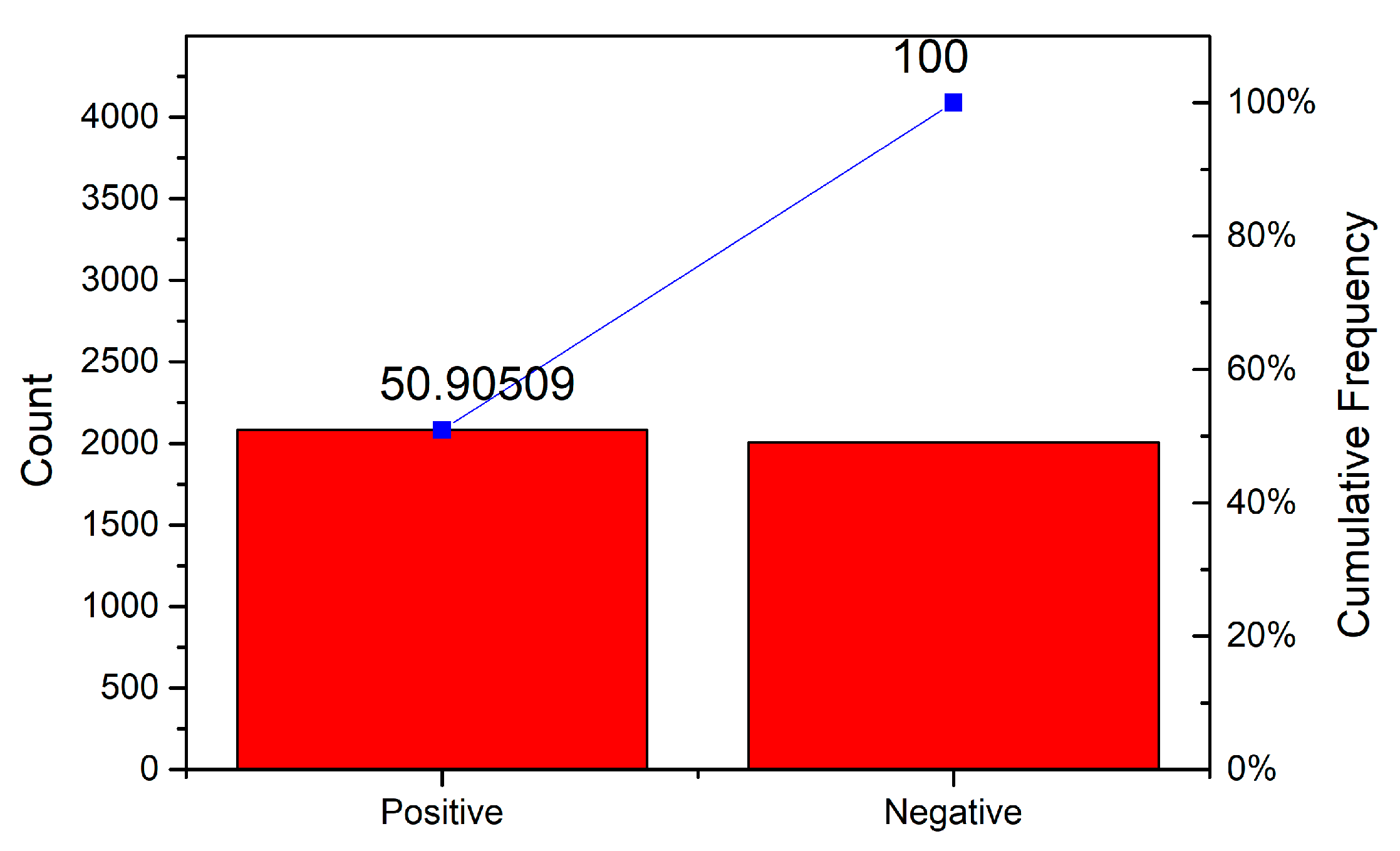

- Can we solve the imbalanced data problem in a sparse tweet dataset?

- Can a deep learning algorithm give better accuracy on large-scale tweet data?

- Can we retune the deep learning algorithm to get the optimal solution?

3. Proposed Methodology

3.1. Principal Component Analysis

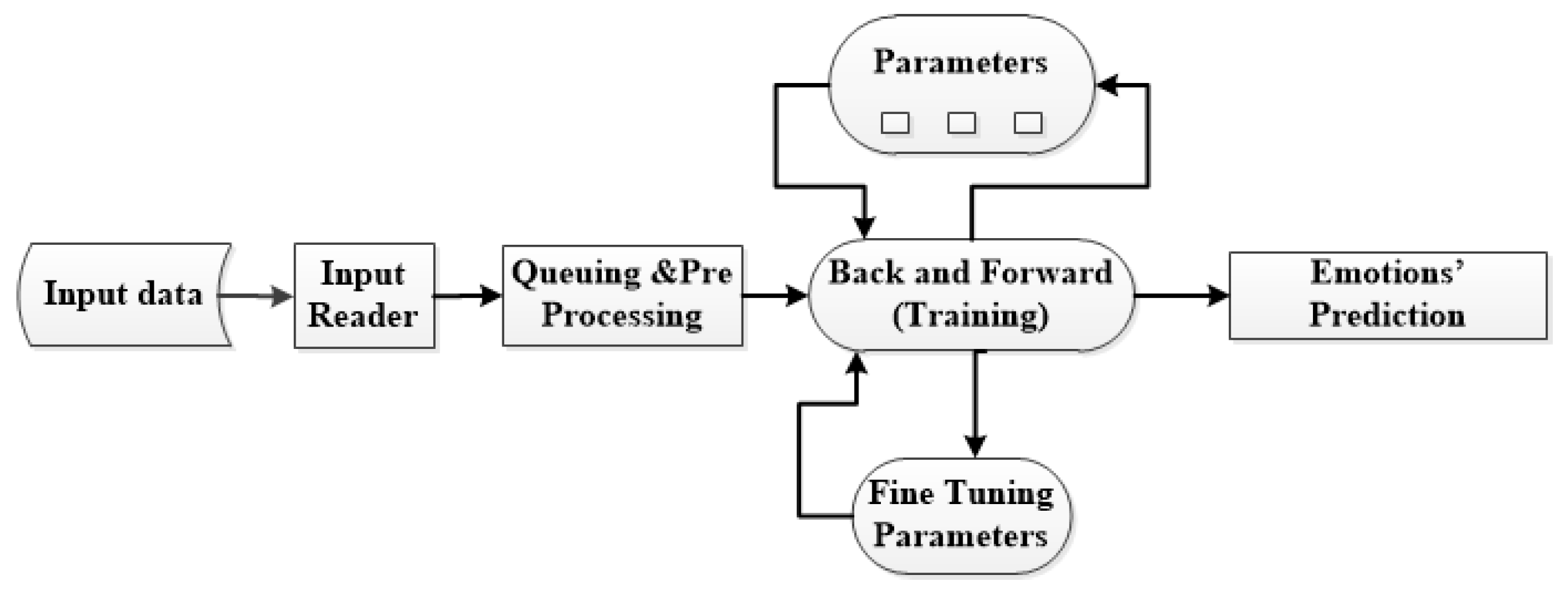

3.2. Deep Learning with TensorFlow Framework and Keras

4. Results and Discussions

5. Conclusions

- It provides excellent visualization and high-performance computation for large-scale tweet data.

- TensorFlow-based algorithms can be deployed easily anywhere from a mobile device to large, complex networks.

- It offers unified functions and frequent updates because it is maintained by a large organization, Google.

- It is flexible and easily extensible.

- To improve accuracy, the dense layers can be configured as required in terms of the number of neurons and the activation function.

- Dropout layer configuration is another useful feature that mitigates overfitting. It can be fine-tuned easily together with the learning rate and the type of activation function.
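To illustrate what a dropout layer does during training, the following minimal sketch implements inverted dropout in plain Python. The function name `inverted_dropout`, the rate of 0.5, and the random seed are illustrative assumptions and are not values reported in this work.

```python
import random


def inverted_dropout(activations, rate, rng):
    """Zero each activation with probability `rate` and rescale the rest.

    Surviving activations are divided by the keep probability so that the
    expected value of each unit is unchanged between training and inference.
    """
    keep = 1.0 - rate
    return [a / keep if rng.random() < keep else 0.0 for a in activations]


rng = random.Random(0)                 # fixed seed, for repeatability only
hidden = [1.0] * 10                    # hypothetical layer output
dropped = inverted_dropout(hidden, rate=0.5, rng=rng)
unchanged = inverted_dropout(hidden, rate=0.0, rng=rng)
print(dropped)
```

With a rate of 0 the layer passes its input through unchanged. With a rate of 0.5 every unit is either zeroed or doubled, which discourages the network from relying on any single neuron.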

Author Contributions

Funding

Conflicts of Interest

References

- Ji, X.; Chun, S.A.; Wei, Z.; Geller, J. Twitter sentiment classification for measuring public health concerns. Soc. Netw. Anal. Min. 2015, 5, 13.

- Peltola, M.J.; Forssman, L.; Puura, K.; Van Ijzendoorn, M.H.; Leppänen, J.M. Attention to Faces Expressing Negative Emotion at 7 Months Predicts Attachment Security at 14 Months. Child Dev. 2015, 86, 1321–1332.

- Whitehill, J.; Serpell, Z.; Lin, Y.-C.; Foster, A.; Movellan, J.R. The Faces of Engagement: Automatic Recognition of Student Engagement from Facial Expressions. IEEE Trans. Affect. Comput. 2014, 5, 86–98.

- Neppalli, V.K.; Caragea, C.; Squicciarini, A.; Tapia, A.; Stehle, S. Sentiment analysis during Hurricane Sandy in emergency response. Int. J. Disaster Risk Reduct. 2017, 21, 213–222.

- Kaplan, A.M.; Haenlein, M. Users of the world, unite! The challenges and opportunities of Social Media. Bus. Horizons 2010, 53, 59–68.

- Khan, F.H.; Bashir, S.; Qamar, U. TOM: Twitter opinion mining framework using hybrid classification scheme. Decis. Support Syst. 2014, 57, 245–257.

- Bel-Enguix, G.; Gómez-Adorno, H.; Reyes-Magaña, J.; Sierra, G. Wan2vec: Embeddings learned on word association norms. Semant. Web 2019, 1–16, (Preprint).

- Stein, R.A.; Jaques, P.A.; Valiati, J.F. An analysis of hierarchical text classification using word embeddings. Inf. Sci. 2019, 471, 216–232.

- Olson, R.S.; Moore, J.H. TPOT: A Tree-Based Pipeline Optimization Tool for Automating Machine Learning. In Automated Machine Learning; Springer: Berlin, Germany, 2019; pp. 151–160.

- Hubara, I.; Courbariaux, M.; Soudry, D.; El-Yaniv, R.; Bengio, Y. Binarized neural networks. In Advances in Neural Information Processing Systems; Neural Information Processing Systems Foundation, Inc.: Barcelona, Spain, 2016; pp. 4107–4115.

- Beigi, G.; Hu, X.; Maciejewski, R.; Liu, H. An overview of sentiment analysis in social media and its applications in disaster relief. In Sentiment Analysis and Ontology Engineering; Springer: Berlin, Germany, 2016; pp. 313–340.

- Gunes, H.; Schuller, B.; Pantic, M. Emotion representation, analysis and synthesis in continuous space: A survey. In Face and Gesture 2011; IEEE: Piscataway, NJ, USA, 2011; pp. 827–834.

- Hasan, M.; Rundensteiner, E.; Agu, E. Emotex: Detecting Emotions in Twitter Messages. 2014. Available online: http://web.cs.wpi.edu/~emmanuel/publications/PDFs/C30.pdf (accessed on 29 August 2019).

- Felbo, B.; Mislove, A.; Søgaard, A.; Rahwan, I.; Lehmann, S. Using millions of emoji occurrences to learn any-domain representations for detecting sentiment, emotion and sarcasm. arXiv 2017, arXiv:1708.00524.

- Wang, W.; Chen, L.; Thirunarayan, K.; Sheth, A.P. Harnessing twitter “big data” for automatic emotion identification. In Proceedings of the 2012 International Conference on Privacy, Security, Risk and Trust and 2012 International Conference on Social Computing, Amsterdam, The Netherlands, 3–5 September 2012.

- Mohammad, S.M.; Kiritchenko, S. Using hashtags to capture fine emotion categories from tweets. Comput. Intell. 2015, 31, 301–326.

- Mohammad, S.M.; Kiritchenko, S.; Zhu, X. NRC-Canada: Building the State-of-the-Art in Sentiment Analysis of Tweets. arXiv 2013, arXiv:1308.6242.

- Ekman, P. An argument for basic emotions. Cogn. Emot. 1992, 6, 169–200.

- Plutchik, R. A general psychoevolutionary theory of emotion. In Theories of Emotion; Academic Press: Cambridge, MA, USA, 1980; pp. 3–33.

- Norcross, J.C.; Guadagnoli, E.; Prochaska, J.O. Factor structure of the Profile of Mood States (POMS): Two partial replications. J. Clin. Psychol. 1984, 40, 1270–1277.

- Deriu, J.; Lucchi, A.; De Luca, V.; Severyn, A.; Müller, S.; Cieliebak, M.; Hofmann, T.; Jaggi, M. Leveraging Large Amounts of Weakly Supervised Data for Multi-Language Sentiment Classification. In Proceedings of the 26th International Conference on World Wide Web, Perth, Australia, 3–7 April 2017.

- Bifet, A.; Frank, E. Sentiment knowledge discovery in twitter streaming data. In International Conference on Discovery Science; Springer: Berlin/Heidelberg, Germany, 2010; pp. 1–15.

- Summa, A.; Resch, B.; Strube, M. Microblog emotion classification by computing similarity in text, time, and space. In Proceedings of the Workshop on Computational Modeling of People’s Opinions, Personality, and Emotions in Social Media (PEOPLES), Osaka, Japan, 12 December 2016.

- Häberle, M.; Werner, M.; Zhu, X.X. Geo-spatial text-mining from Twitter – a feature space analysis with a view toward building classification in urban regions. Eur. J. Remote. Sens. 2019, 52, 2–11.

- Wang, T.; Cai, Y.; Leung, H.-F.; Cai, Z.; Min, H. Entropy-based term weighting schemes for text categorization in VSM. In Proceedings of the 2015 IEEE 27th International Conference on Tools with Artificial Intelligence (ICTAI), Vietri sul Mare, Italy, 9–11 November 2015.

- Borrajo, L.; Romero, R.; Iglesias, E.L.; Marey, C.M.R. Improving imbalanced scientific text classification using sampling strategies and dictionaries. J. Integr. Bioinform. 2011, 8, 90–104.

- Olive, D.J. Principal component analysis. In Robust Multivariate Analysis; Springer: Berlin, Germany, 2017; pp. 189–217.

- Jolliffe, I.T.; Cadima, J. Principal component analysis: A review and recent developments. Philos. Trans. R. Soc. A Math. Phys. Eng. Sci. 2016, 374, 20150202.

- Vidal, R.; Ma, Y.; Sastry, S. Generalized principal component analysis (GPCA). IEEE Trans. Pattern Anal. Mach. Intell. 2005, 27, 1945–1959.

- Abadi, M.; Agarwal, A.; Barham, P.; Brevdo, E.; Chen, Z.; Citro, C.; Corrado, G.S.; Davis, A.; Dean, J.; Devin, M.; et al. TensorFlow: Large-Scale Machine Learning on Heterogeneous Distributed Systems. arXiv 2016, arXiv:1603.04467.

- Sergeev, A.; Del Balso, M. Horovod: Fast and easy distributed deep learning in TensorFlow. arXiv 2018, arXiv:1802.05799.

- Abadi, M.; Barham, P.; Chen, J.; Chen, Z.; Davis, A.; Dean, J.; Devin, M.; Ghemawat, S.; Irving, G.; Isard, M.; et al. TensorFlow: A system for large-scale machine learning. In Proceedings of the 12th USENIX Symposium on Operating Systems Design and Implementation, Savannah, GA, USA, 2–4 November 2016.

- Baylor, D.; Breck, E.; Cheng, H.T.; Fiedel, N.; Foo, C.Y.; Haque, Z. Tfx: A tensorflow-based production-scale machine learning platform. In Proceedings of the 23rd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, Halifax, NS, Canada, 13–17 August 2017.

- Tato, A.; Nkambou, R. Improving Adam Optimizer. 2018. Available online: https://openreview.net/forum?id=HJfpZq1DM (accessed on 29 August 2019).

- Zhang, Z. Improved Adam Optimizer for Deep Neural Networks. In Proceedings of the 2018 IEEE/ACM 26th International Symposium on Quality of Service (IWQoS), Banff, AB, Canada, 4–6 June 2018.

- Ruder, S. An overview of gradient descent optimization algorithms. arXiv 2016, arXiv:1609.04747.

- Rogati, M.; Yang, Y. High-performing feature selection for text classification. In Proceedings of the eleventh international conference on Information and knowledge management, McLean, VA, USA, 4–9 November 2002.

- Ullah, F.; Wang, J.; Farhan, M.; Habib, M.; Khalid, S. Software plagiarism detection in multiprogramming languages using machine learning approach. Concurr. Comput. Pr. Exp. 2018, e5000.

- Jia, C.; Carson, M.B.; Wang, X.; Yu, J. Concept decompositions for short text clustering by identifying word communities. Pattern Recognit. 2018, 76, 691–703.

- Setareh, H.; Deger, M.; Petersen, C.C.H.; Gerstner, W. Cortical Dynamics in Presence of Assemblies of Densely Connected Weight-Hub Neurons. Front. Comput. Neurosci. 2017, 11, 52.

| Statistical Measure | PC1 | PC2 | PC3 | PC4 | PC5 | PC6 | PC7 | PC8 | PC9 | PC10 | PC11 | PC12 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Standard Deviation | 1.6253 | 1.2234 | 1.1529 | 1.09513 | 1.02088 | 0.94565 | 0.93161 | 0.88161 | 0.84315 | 0.79726 | 0.63662 | 2.43 × 10⁻¹⁶ |
| Proportion of Variance | 0.2201 | 0.1247 | 0.1108 | 0.09994 | 0.08685 | 0.07452 | 0.07233 | 0.06477 | 0.05924 | 0.05297 | 0.03377 | 0.00 |
| Cumulative Proportion | 0.2201 | 0.3448 | 0.4556 | 0.55555 | 0.6424 | 0.71692 | 0.78925 | 0.85402 | 0.91326 | 0.96623 | 1 | 1.00 |
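The three rows of the PCA table above are related by simple arithmetic: the proportion of variance of a component is its squared standard deviation divided by the sum of all squared standard deviations, and the cumulative proportion is the running sum. The sketch below recomputes the last two rows from the first. The standard deviations are copied from the table, while the 90% threshold and the variable names are illustrative assumptions.

```python
from itertools import accumulate

# Standard deviations of PC1..PC12, copied from the table above.
std_dev = [1.6253, 1.2234, 1.1529, 1.09513, 1.02088, 0.94565,
           0.93161, 0.88161, 0.84315, 0.79726, 0.63662, 2.43e-16]

# Proportion of variance values from the table, used only for comparison.
table_proportion = [0.2201, 0.1247, 0.1108, 0.09994, 0.08685, 0.07452,
                    0.07233, 0.06477, 0.05924, 0.05297, 0.03377, 0.00]

variances = [s ** 2 for s in std_dev]            # eigenvalues of the PCA
total_variance = sum(variances)                  # close to 12
proportion = [v / total_variance for v in variances]
cumulative = list(accumulate(proportion))

# Smallest number of leading components that explain at least 90% of the
# variance (the 90% threshold is an assumption chosen for illustration).
components_needed = next(i + 1 for i, c in enumerate(cumulative) if c >= 0.90)

print(f"total variance: {total_variance:.4f}")
print(f"components for 90%: {components_needed}")
```

The total variance comes out very close to 12, which is what PCA on 12 standardized variables would produce. The twelfth component carries essentially no variance, so at most 11 components are informative.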

| Layer | Type | Shape | Parameters |
|---|---|---|---|
| Dense 1 | Dense | 100 | 1200 |
| Dropout 1 | Dropout | 100 | 0 |
| Dense 2 | Dense | 80 | 8080 |
| Dropout 2 | Dropout | 80 | 0 |
| Dense 3 | Dense | 60 | 4860 |
| Dropout 3 | Dropout | 60 | 0 |
| Dense 4 | Dense | 40 | 2440 |
| Dropout 4 | Dropout | 40 | 0 |
| Dense 5 | Dense | 2 | 41 |
| Total Parameters | 16,621 | | |
| Trainable Parameters | 16,621 | | |
| Non-trainable | 0 | | |
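The parameter counts in the layer table above follow from the rule that a dense layer with bias has (inputs + 1) × units parameters, while dropout layers have none. The sketch below reproduces the counts under two assumptions that are inferred from the table rather than stated in it: the network receives 11 input features (since 1200 = (11 + 1) × 100), and the final layer has a single unit (since 41 = (40 + 1) × 1, although the table lists its shape as 2).

```python
def dense_params(n_inputs, n_units):
    """Weights plus biases of a fully connected layer."""
    return (n_inputs + 1) * n_units


n_features = 11                   # assumption inferred from Dense 1's count
units = [100, 80, 60, 40, 1]      # Dense 1..Dense 5; the last width is assumed

per_layer = []
n_inputs = n_features
for n_units in units:
    per_layer.append(dense_params(n_inputs, n_units))
    n_inputs = n_units            # dropout keeps the width and adds no parameters

total_params = sum(per_layer)
print(per_layer, total_params)
```

Under these assumptions the per-layer counts and the total agree with the table.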

| Technique | 90% | 80% | 70% | 60% | 50% | 40% | 30% |
|---|---|---|---|---|---|---|---|
| Support Vector Machine | 70 | 80 | 86.66 | 75 | 70 | 66.66 | 71.42 |
| Multi-Layer Perceptron | 70 | 85 | 80 | 82.54 | 84 | 81.66 | 77.14 |
| Random Forest | 60 | 75 | 76.66 | 80 | 76 | 81.66 | 77.14 |
| Logit Boost | 60 | 70 | 76.66 | 77.52 | 66 | 76.66 | 75.71 |
| Logistic Regression | 60 | 70 | 80 | 75 | 70 | 78.33 | 74.28 |
| K-Nearest Neighbor | 60 | 85 | 80 | 80 | 76 | 80 | 82.85 |
| Proposed Technique | 98.4 | 93.33 | 90.91 | 90.62 | 96.36 | 89.75 | 86.53 |
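The comparison table above can be summarized by the margin between the proposed technique and the best baseline in each column. The sketch below computes that margin from the tabulated scores. The column labels are copied as they appear, and the variable names are illustrative. What the percentage labels denote is not stated alongside the table, so the code treats them only as column identifiers.

```python
columns = ["90%", "80%", "70%", "60%", "50%", "40%", "30%"]

# Scores copied from the comparison table above.
baselines = {
    "Support Vector Machine": [70, 80, 86.66, 75, 70, 66.66, 71.42],
    "Multi-Layer Perceptron": [70, 85, 80, 82.54, 84, 81.66, 77.14],
    "Random Forest":          [60, 75, 76.66, 80, 76, 81.66, 77.14],
    "Logit Boost":            [60, 70, 76.66, 77.52, 66, 76.66, 75.71],
    "Logistic Regression":    [60, 70, 80, 75, 70, 78.33, 74.28],
    "K-Nearest Neighbor":     [60, 85, 80, 80, 76, 80, 82.85],
}
proposed = [98.4, 93.33, 90.91, 90.62, 96.36, 89.75, 86.53]

# Best baseline score per column, then the proposed technique's lead over it.
best_baseline = [max(scores[i] for scores in baselines.values())
                 for i in range(len(columns))]
margins = [round(p - b, 2) for p, b in zip(proposed, best_baseline)]

for col, best, margin in zip(columns, best_baseline, margins):
    print(f"{col}: best baseline {best}, margin {margin}")
```

The proposed technique leads in every column, with the largest margin in the 90% column and the smallest in the 30% column.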

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Jamal, N.; Xianqiao, C.; Aldabbas, H. Deep Learning-Based Sentimental Analysis for Large-Scale Imbalanced Twitter Data. Future Internet 2019, 11, 190. https://doi.org/10.3390/fi11090190
