Impact of Modern Virtualization Methods on Timing Precision and Performance of High-Speed Applications

Abstract

1. Introduction

Paper Contribution

2. Testbed Setup and Measurement Methodology

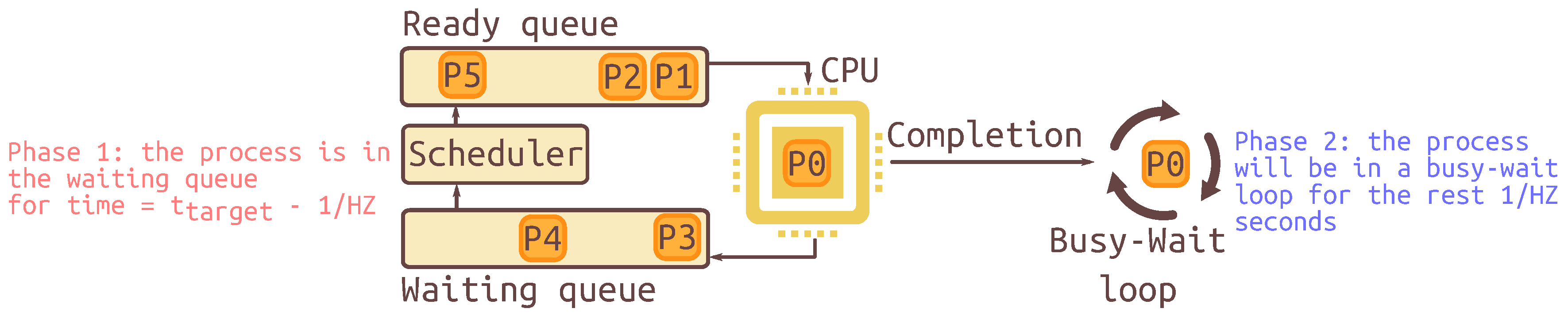

2.1. Experimental Setup for Timing Measurements

2.1.1. Used Timing Tools

2.1.2. System Configuration

2.1.3. Load Types

- Idle system: The operating system runs in its normal mode with no additional applications running. This load type represents the best-case scenario.

- CPU-bound load: This load type drives CPU utilization up to 100% of the available CPU resources. To generate it, a utility creates 100 threads that perform square root calculations. Comparable load is typically produced by video/audio conversion and compression algorithms or by graphics processing applications.

- IO/network-bound load: This load type simulates a typical VM workload as found on hosting in a large data center. To generate it, we set up an NFS server on the VM and send and receive data using standard read/write calls. This workload results in a large number of context switches and disk interrupts. An example of such a workload is distributed processing of big data.
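The CPU-bound load described above can be sketched as follows. This is a minimal illustration, not the utility used in the experiments: the function names `sqrt_worker` and `run_cpu_load` are hypothetical, and because CPython's global interpreter lock serializes these threads, a native implementation would be needed to saturate several cores.

```python
import math
import threading
import time


def sqrt_worker(stop: threading.Event, counts: list, index: int) -> None:
    """Spin on square-root calculations until asked to stop."""
    x = 0.0
    iterations = 0
    while not stop.is_set():
        x = math.sqrt(x + 2.0)  # feed the result back so the loop does real work
        iterations += 1
    counts[index] = iterations  # each thread writes only its own slot


def run_cpu_load(num_threads: int = 100, duration_s: float = 1.0) -> list:
    """Run num_threads busy threads for duration_s seconds.

    Returns the number of loop iterations completed by each thread.
    """
    stop = threading.Event()
    counts = [0] * num_threads
    threads = [
        threading.Thread(target=sqrt_worker, args=(stop, counts, i))
        for i in range(num_threads)
    ]
    for thread in threads:
        thread.start()
    time.sleep(duration_s)
    stop.set()
    for thread in threads:
        thread.join()
    return counts


if __name__ == "__main__":
    per_thread = run_cpu_load(num_threads=100, duration_s=1.0)
    print("total iterations:", sum(per_thread))
```

The thread count of 100 follows the description above; the run duration is an arbitrary choice for the sketch.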

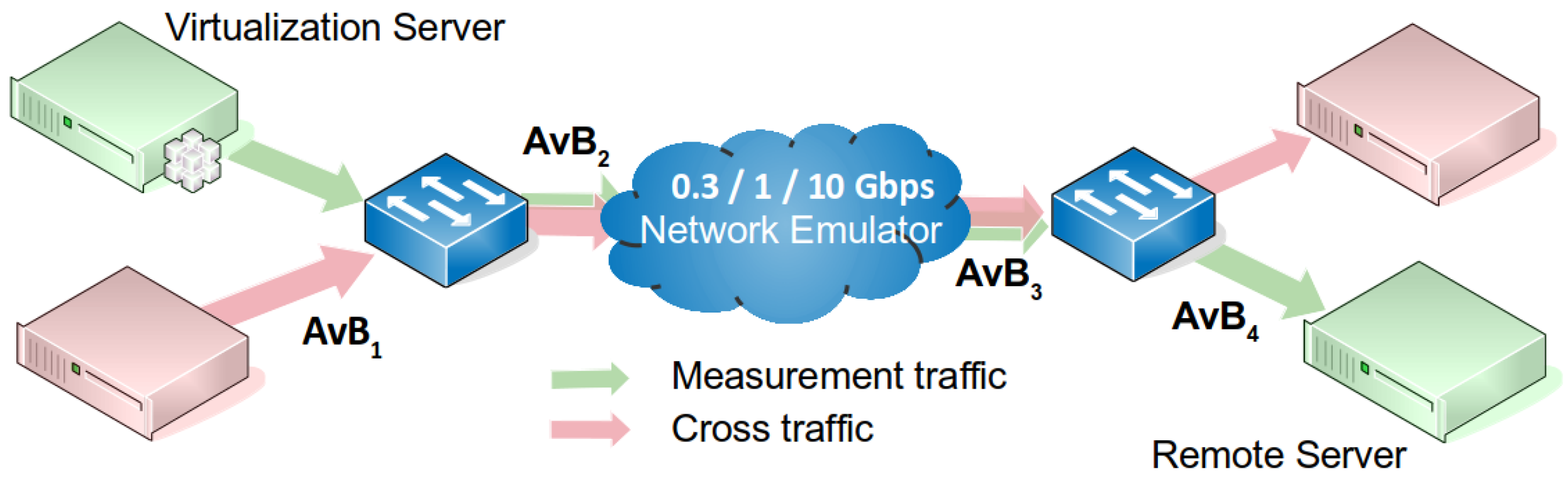

2.2. Experimental Setup for Available Bandwidth Estimation

2.2.1. Used AvB Tools

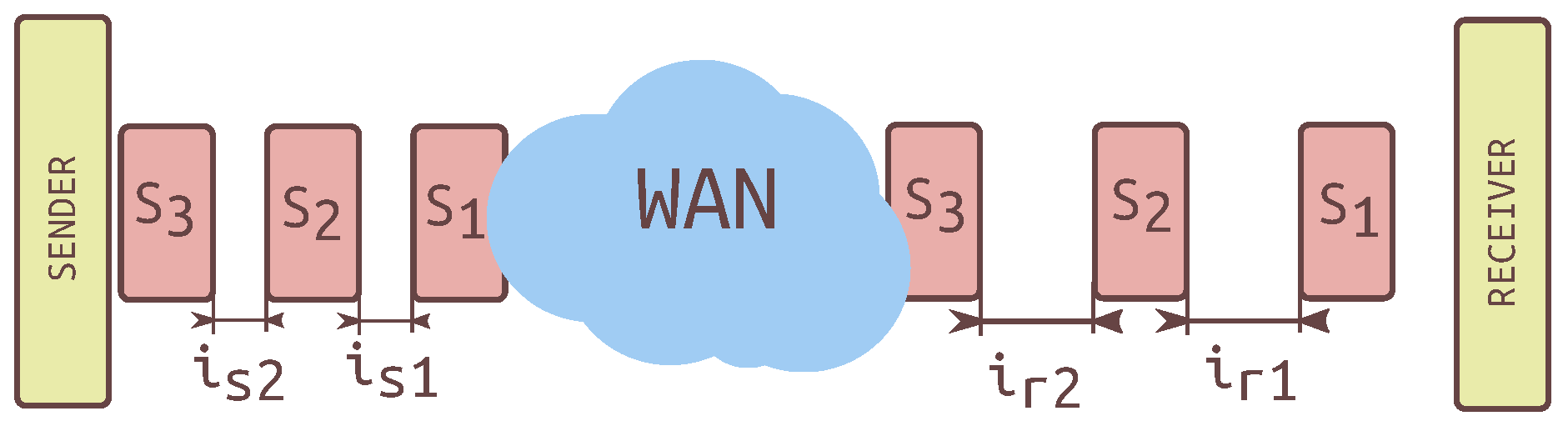

2.2.2. Network Topology for AvB Estimations

- a Netropy 10G WAN Emulator, which emulates a wide area network with different impairments such as a packet loss ratio of up to 100%, delays of up to 100 s, and various types of packet corruption and duplication;

- two servers generating cross traffic to put additional load on the AvB estimation, each equipped with 12× Intel® Xeon® CPU X5690 3.47 GHz, 32 GB RAM, and a 10G Chelsio NIC;

- one server used for measurement traffic in the 10G network, equipped with 6× Intel® Xeon®;

- one physical server running the VMs described in Section 2.1.2, which hosted VMs under Xen, QEMU, VirtualBox, and ESXi in turn and was equipped with a 1GE Intel I-219 NIC; and

- 10GE Summit x650 network switches from Extreme Networks.

2.2.3. Test Scenarios

- Between VMs and a remote server over an end-to-end path limited to 300 Mbps capacity, using Yaz for AvB estimation. The cross traffic data rate in this scenario was varied from 0 to 250 Mbps in 50 Mbps increments and was generated with the iperf [24] tool.

- Between VMs and a remote server connected over a 1 Gbps path. This experiment used a patched version of Yaz. To achieve the specified data rate, this version uses a smaller inter-packet spacing than the original one. With the reduced spacing, the sleep times that the AvB tool uses to set the inter-packet interval become inaccurate. As mentioned above, the algorithm iteratively adjusts the inter-packet time interval until the maximal data rate is achieved, which corresponds to the available bandwidth of the path. The accuracy of the AvB tool was therefore expected to suffer in this case, in which the cross traffic was set to 500 Mbps.

- Between a VM and a host, and between two hosts, to compare the impact of virtualization on estimation accuracy. The servers were connected over a 10 Gbps path with an injected RTT of 20 ms and 1 Gbps of cross traffic. Measurements were performed using the Kite2 tool.
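To make the relation between data rate and inter-packet spacing concrete, the sketch below computes the spacing for a given rate and then mimics the iterative rate adjustment on a simulated path. It is an illustration under stated assumptions, not the Yaz implementation: the 1500-byte packet size, the single-bottleneck fluid model in `observed_rate`, the stopping tolerance, and all function names are hypothetical.

```python
def inter_packet_interval_ns(packet_bytes: int, rate_bps: float) -> float:
    """Time between packet starts needed to send at rate_bps, in nanoseconds."""
    return packet_bytes * 8 * 1e9 / rate_bps


def observed_rate(probe: float, capacity: float, cross: float) -> float:
    """Receive rate of the probe stream under a simple fluid model (assumed).

    While probe + cross fits into the link, the probe stream passes unchanged.
    Once the link is overloaded, the probe stream gets its proportional share.
    """
    if probe + cross <= capacity:
        return probe
    return capacity * probe / (probe + cross)


def estimate_avb(capacity: float, cross: float,
                 tol: float = 0.5, max_iter: int = 100) -> float:
    """Lower the probe rate to the observed receive rate until both agree.

    All rates are in Mbps. The first probe is sent at the link capacity.
    """
    probe = capacity
    for _ in range(max_iter):
        received = observed_rate(probe, capacity, cross)
        if probe - received < tol:  # sender and receiver rates match
            return received
        probe = received            # next probe uses the observed rate
    return probe


if __name__ == "__main__":
    # Spacing for 1500-byte packets (assumed size) at 300 Mbps and at 1 Gbps.
    print(inter_packet_interval_ns(1500, 300e6))  # 40000.0 ns
    print(inter_packet_interval_ns(1500, 1e9))    # 12000.0 ns
    # Simulated 300 Mbps path with 100 Mbps of cross traffic.
    print(round(estimate_avb(300.0, 100.0), 1))
```

Under these assumptions the spacing shrinks from 40 µs at 300 Mbps to 12 µs at 1 Gbps, so any error in the sleep call that enforces the spacing takes up a larger share of the interval at the higher rate.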

2.3. Measurement Methodology

3. Results Analysis

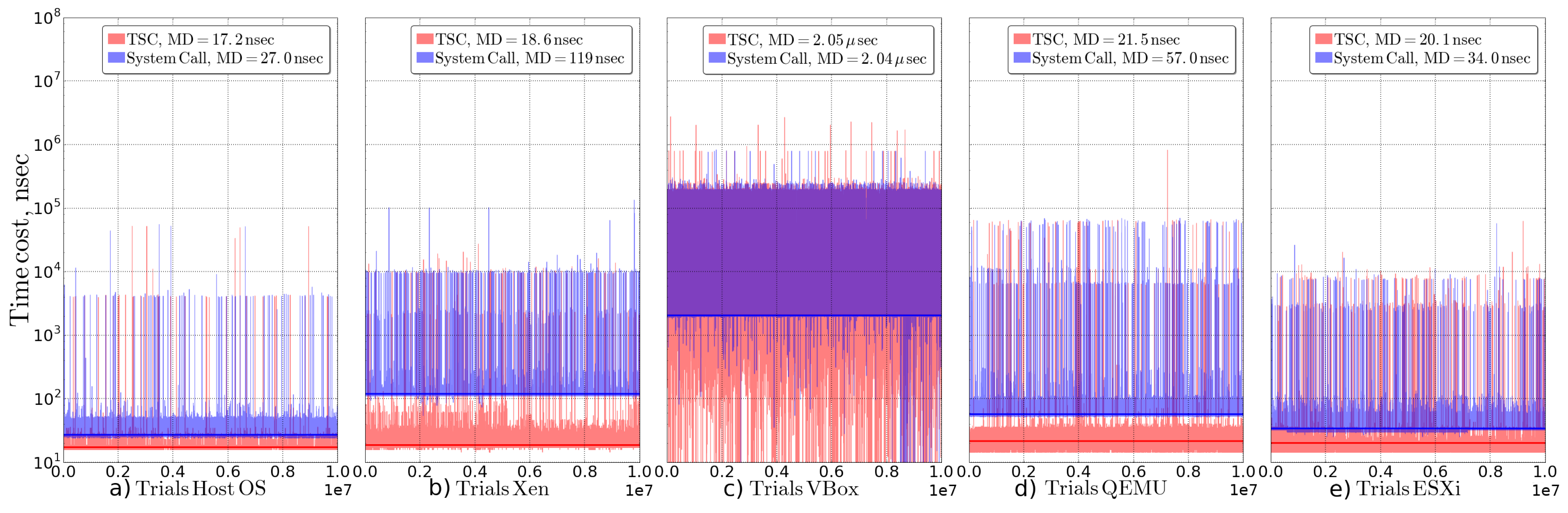

3.1. Experimental Results for Time Measurements

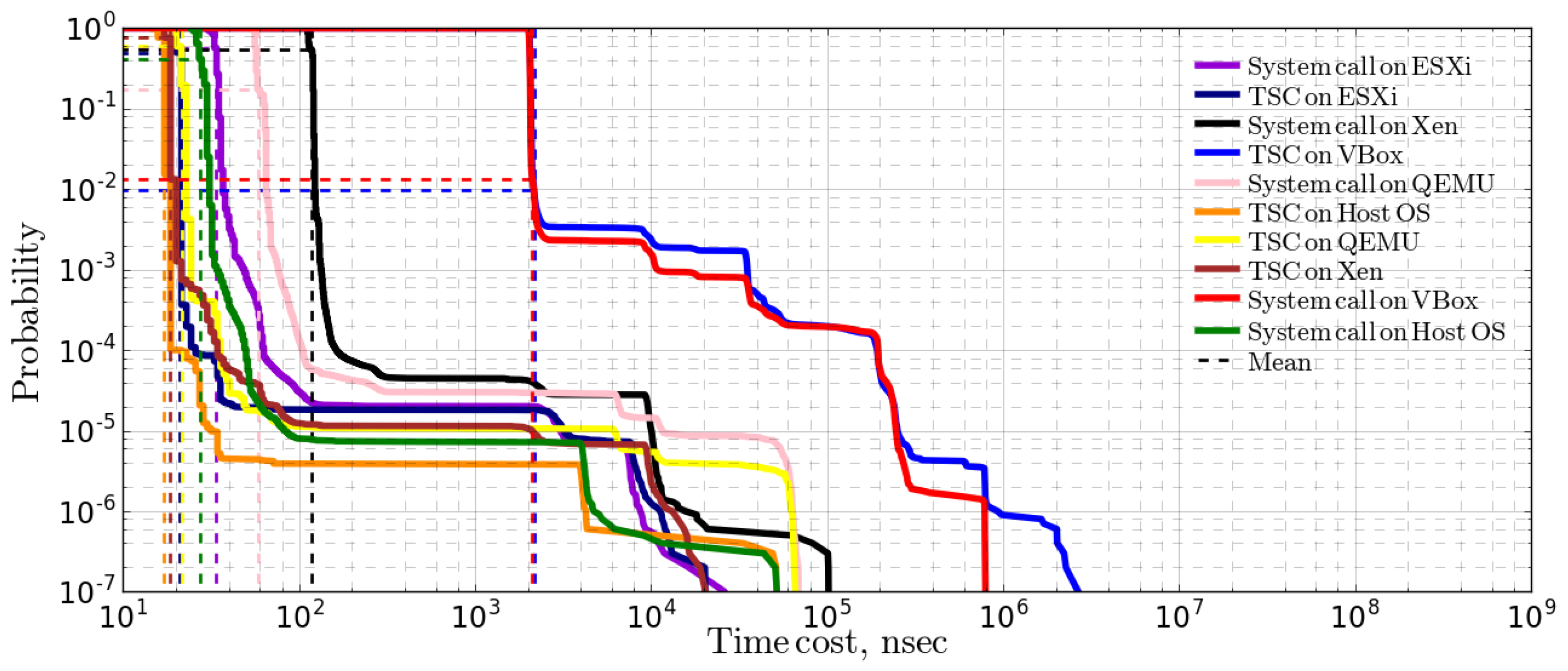

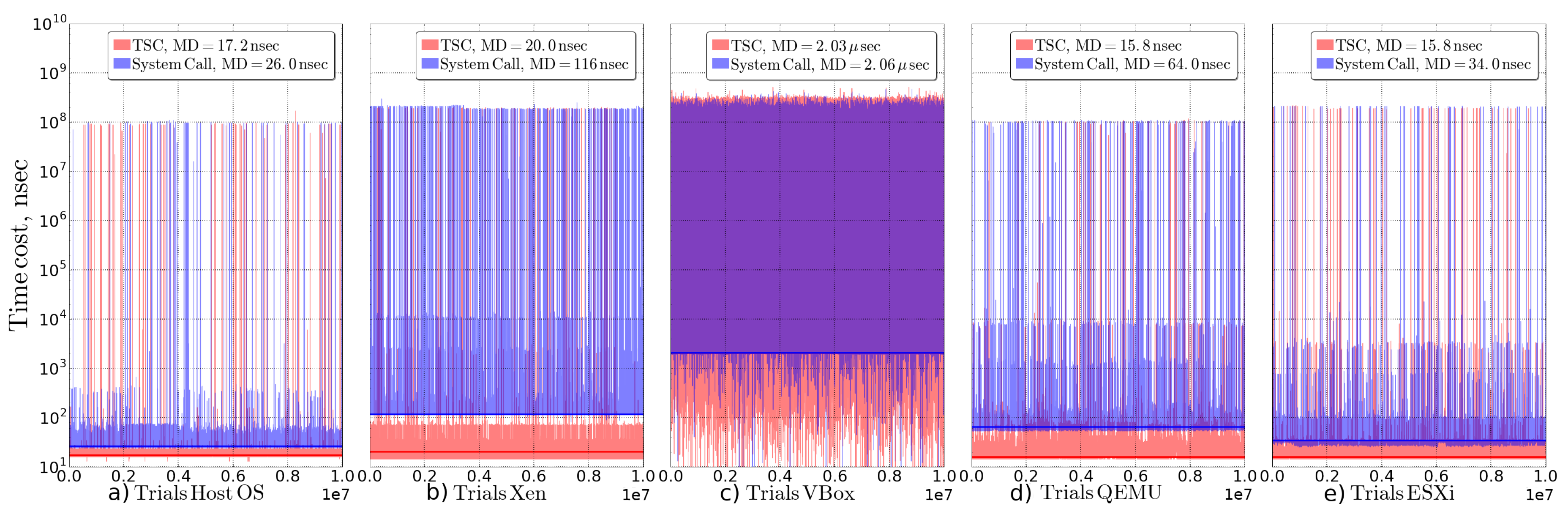

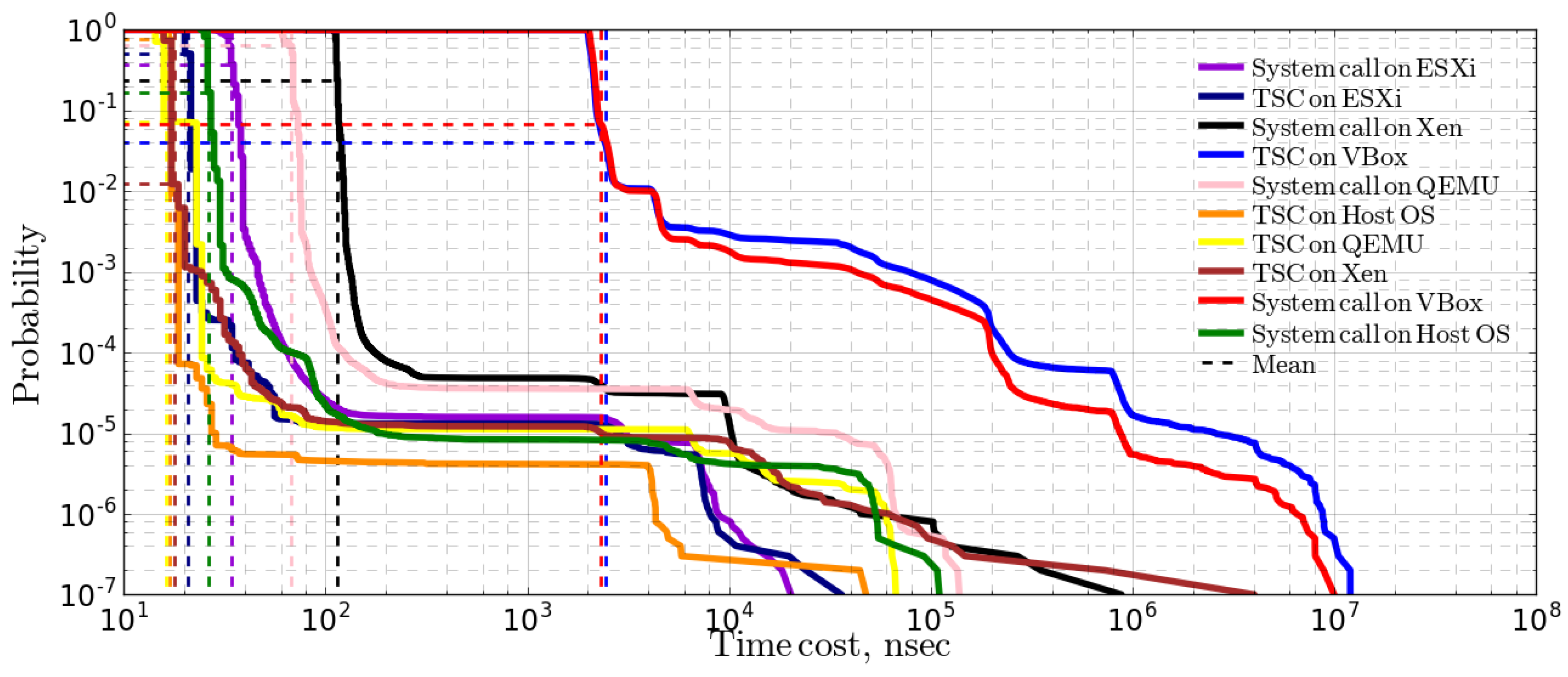

3.1.1. Idle System Test Results

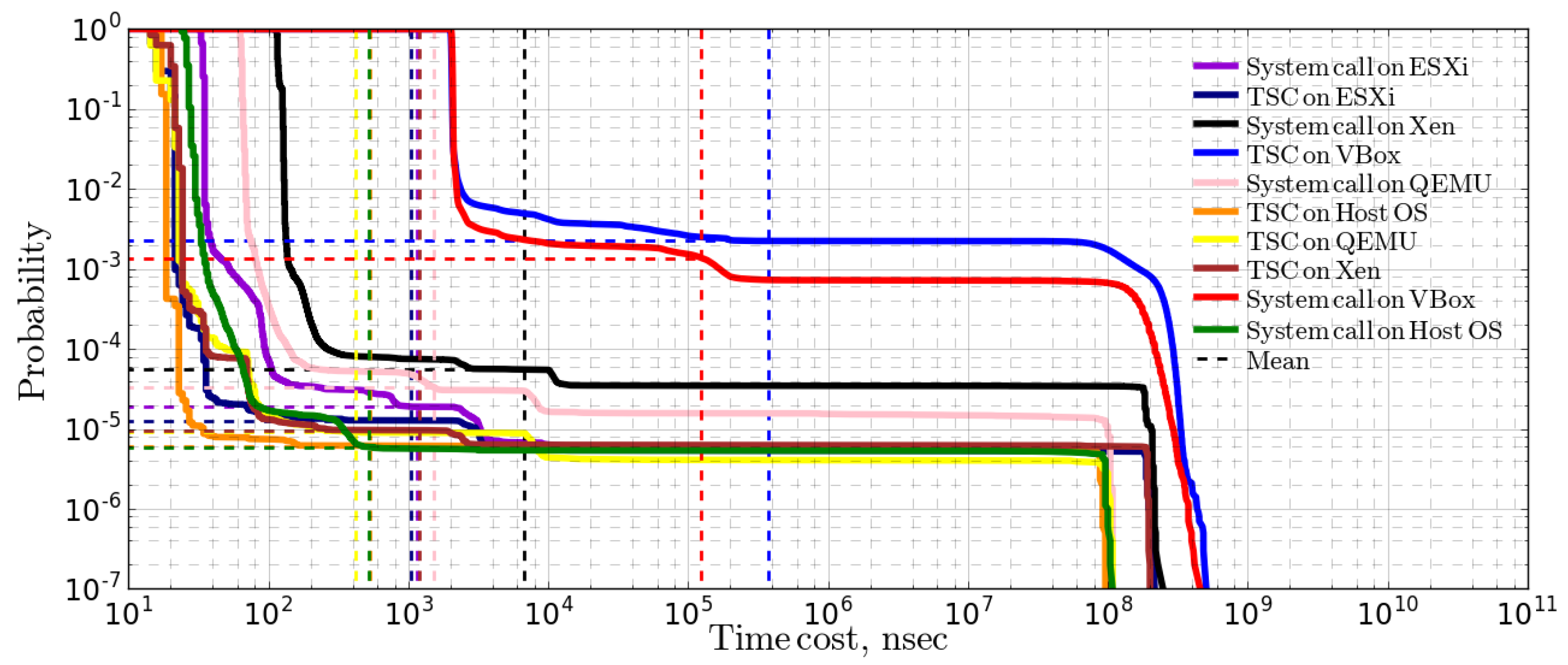

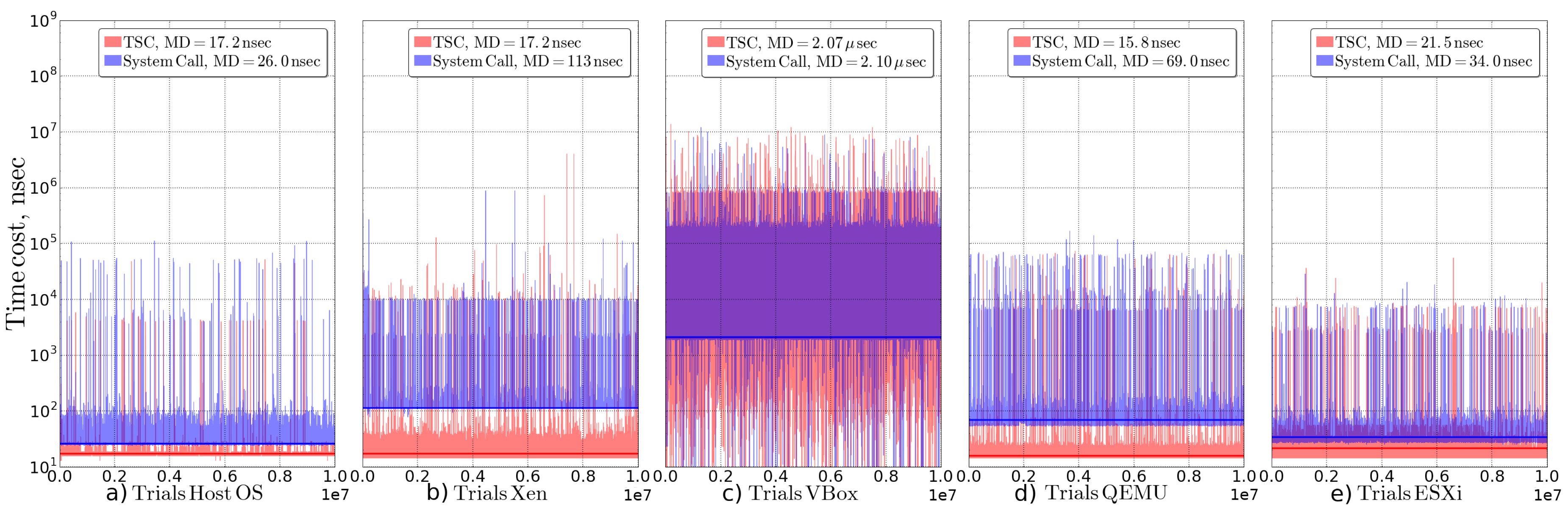

3.1.2. CPU-Bound Load Test Results

3.1.3. IO/Network-Bound Load Test Results

3.1.4. Summary of Time Acquisition Experiment Results

3.2. Experimental Results for Sleep Measurements

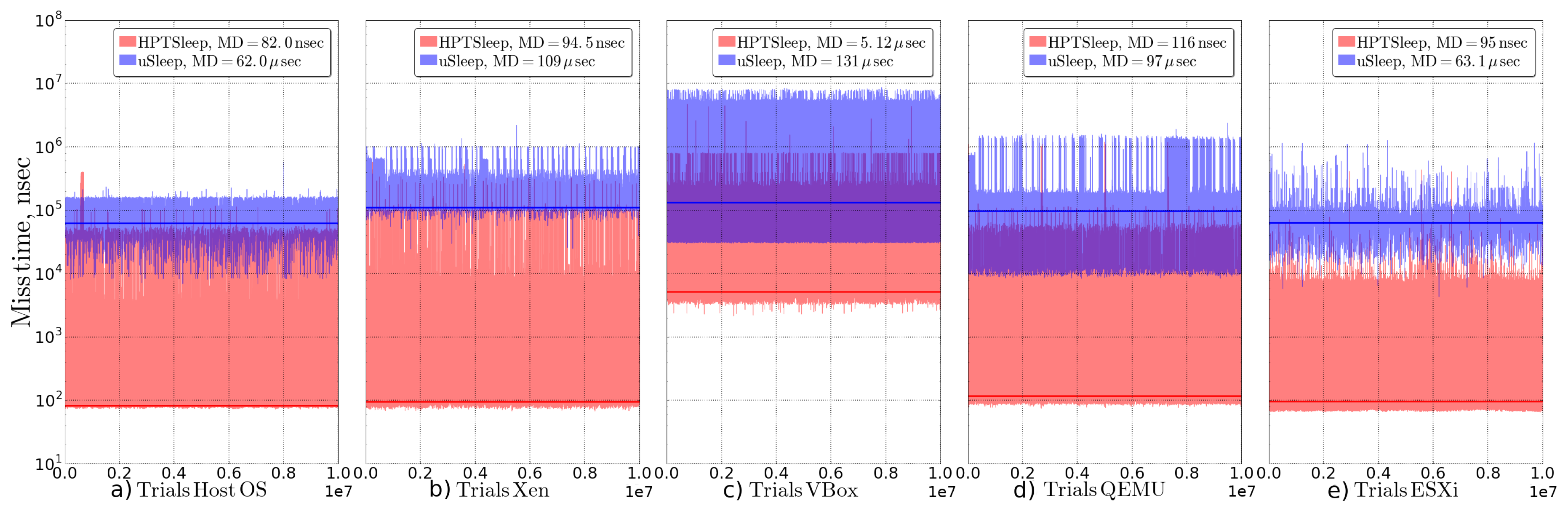

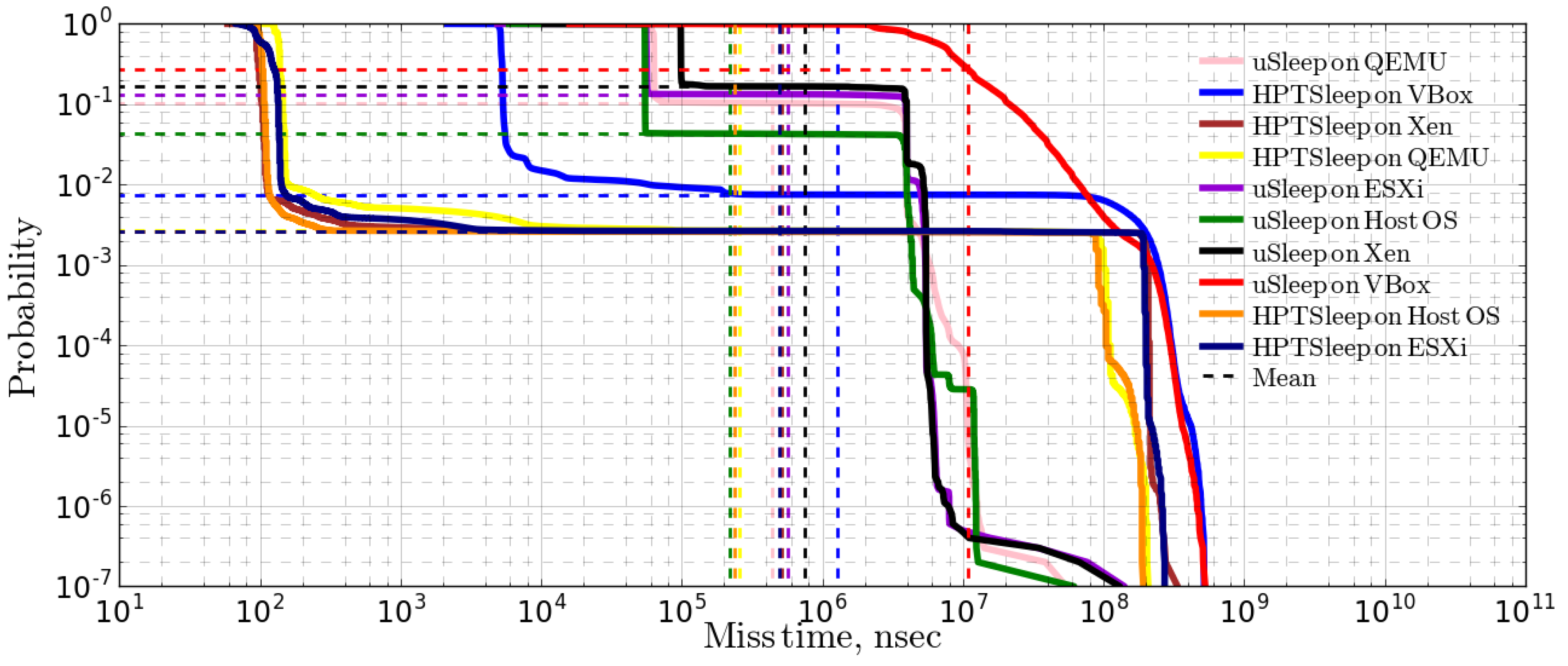

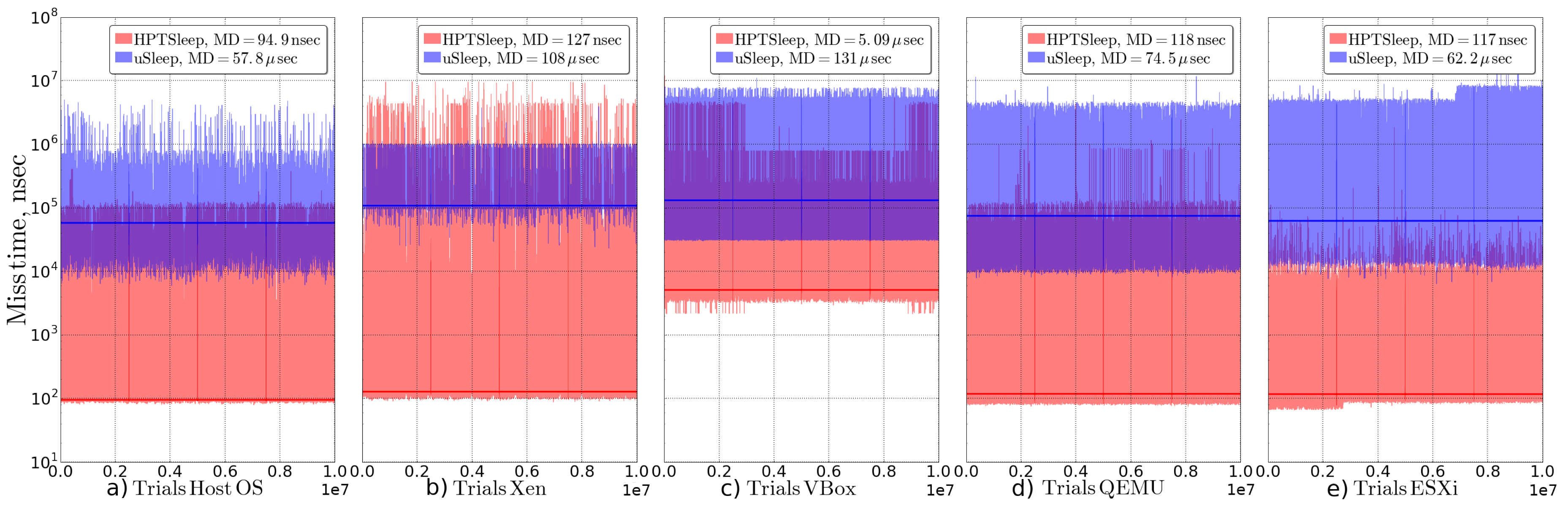

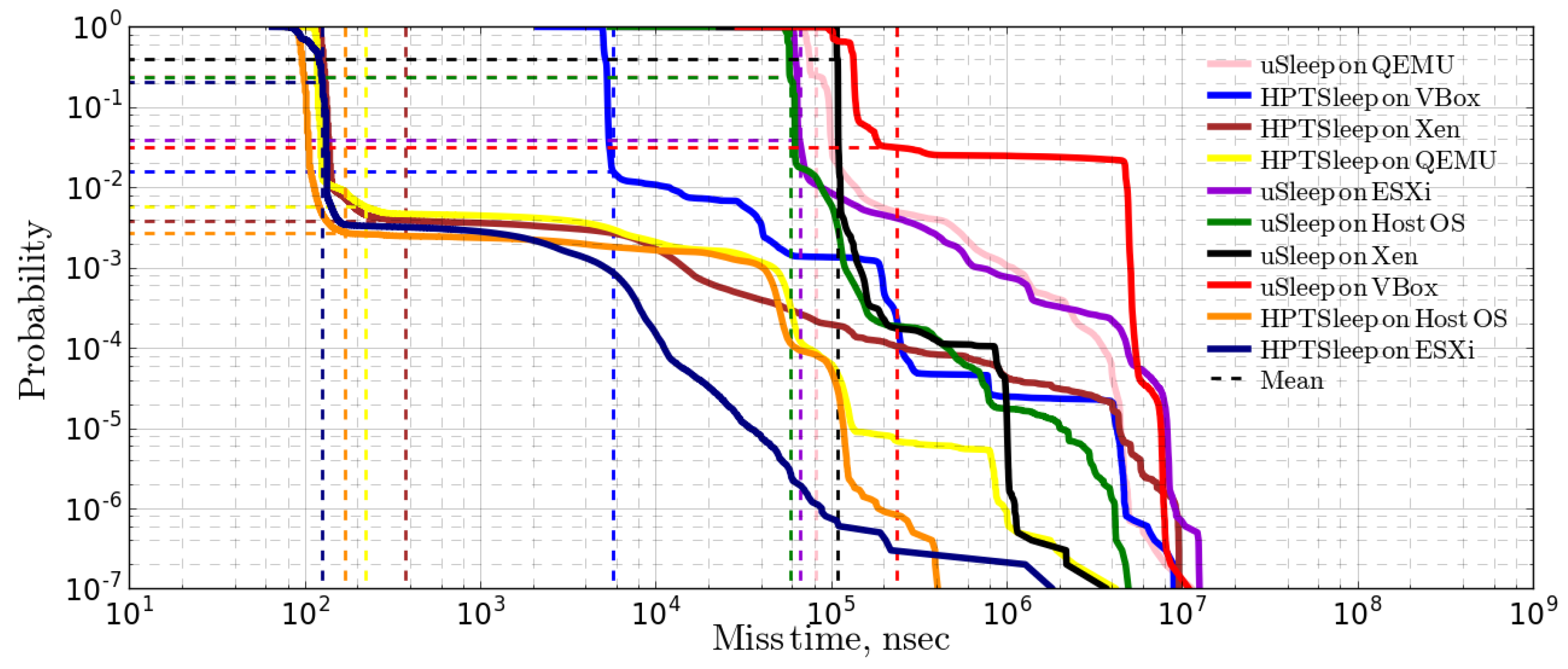

3.2.1. Idle System Test Results

3.2.2. CPU-Bound Load Test Results

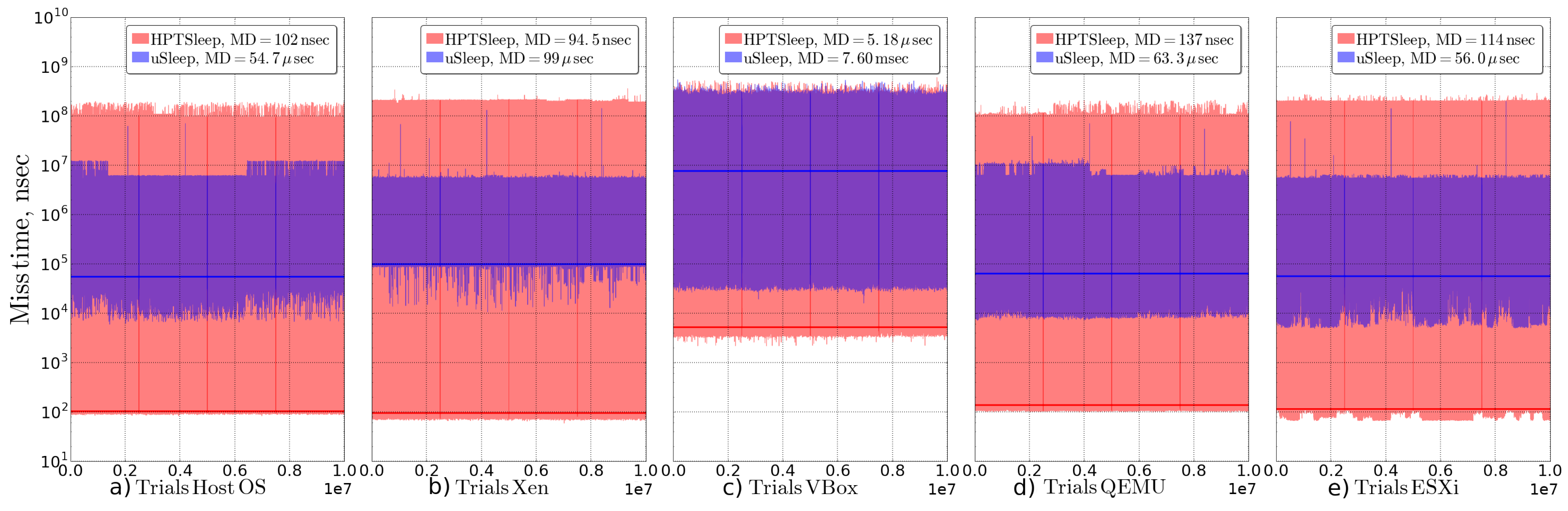

3.2.3. IO/Network-Bound Load Test Results

3.2.4. Summary of Sleep Functions Experiment Results

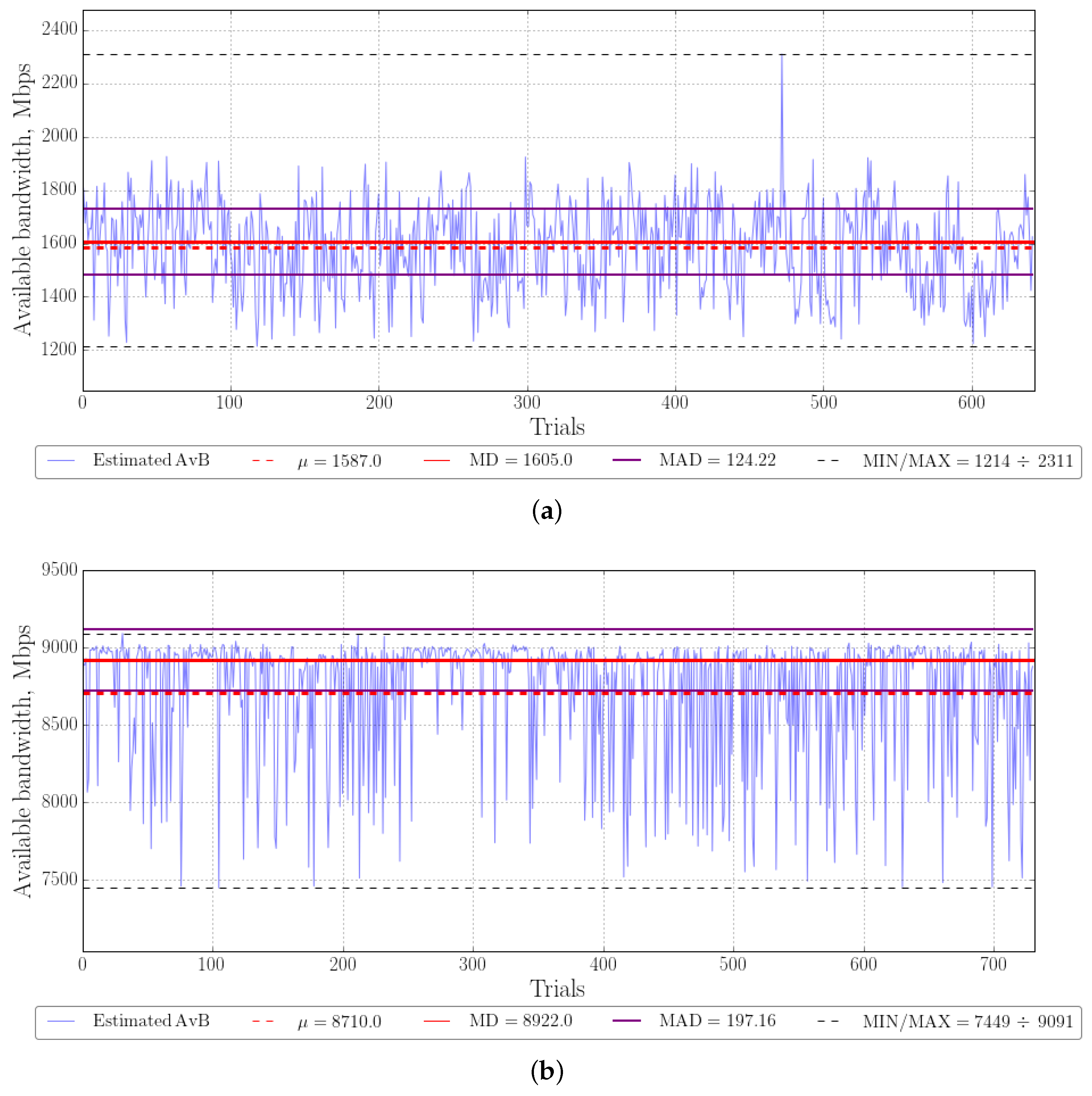

3.3. AvB Estimation Results

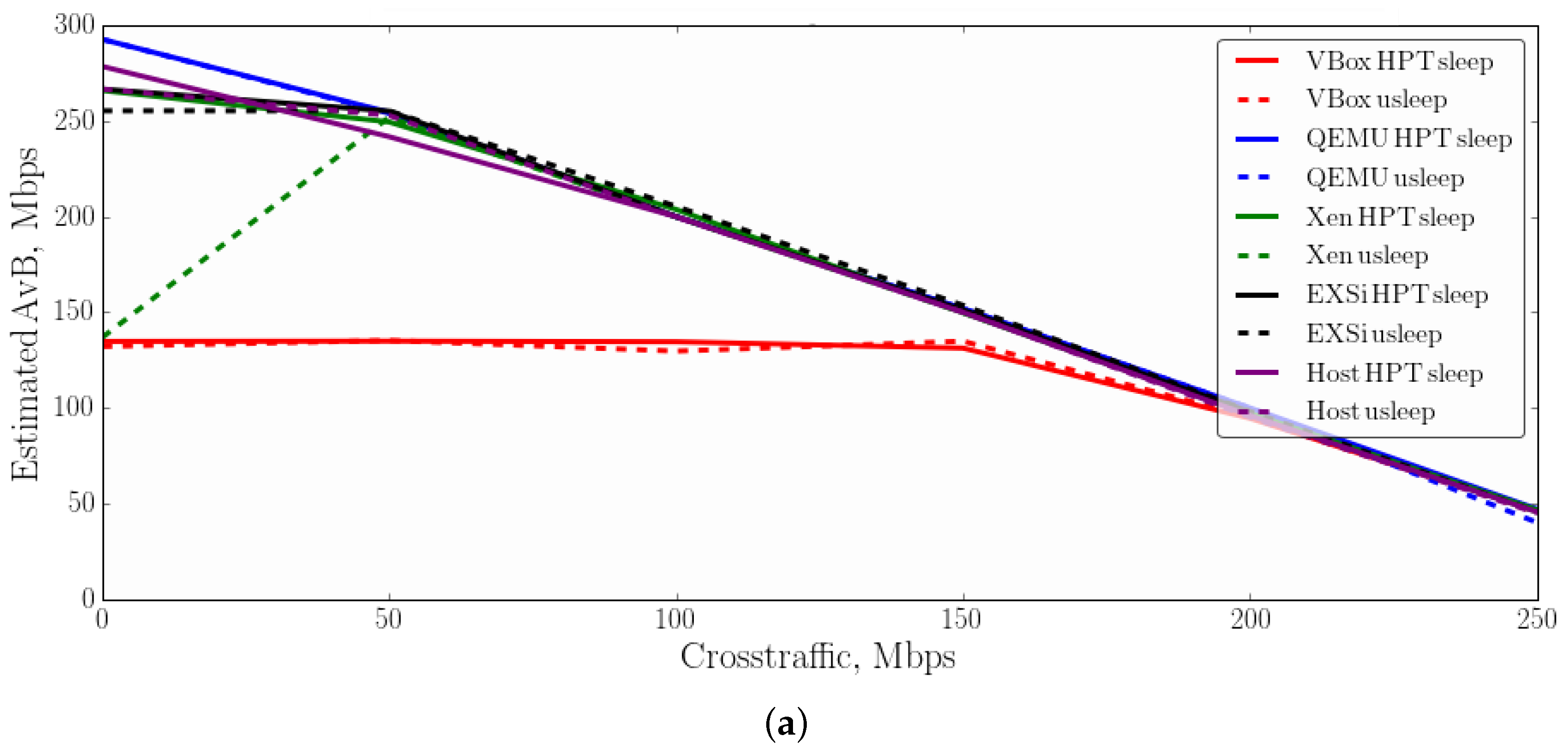

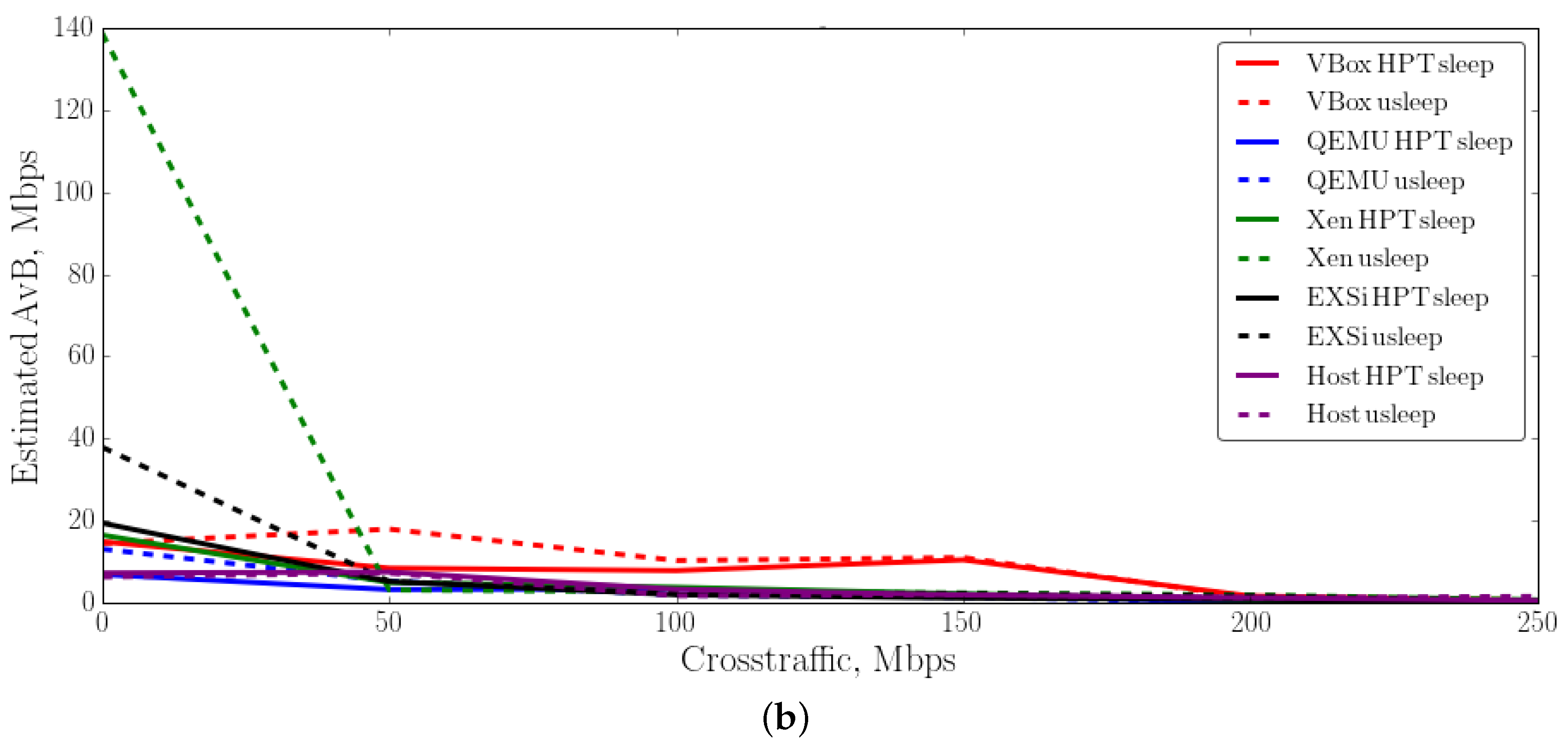

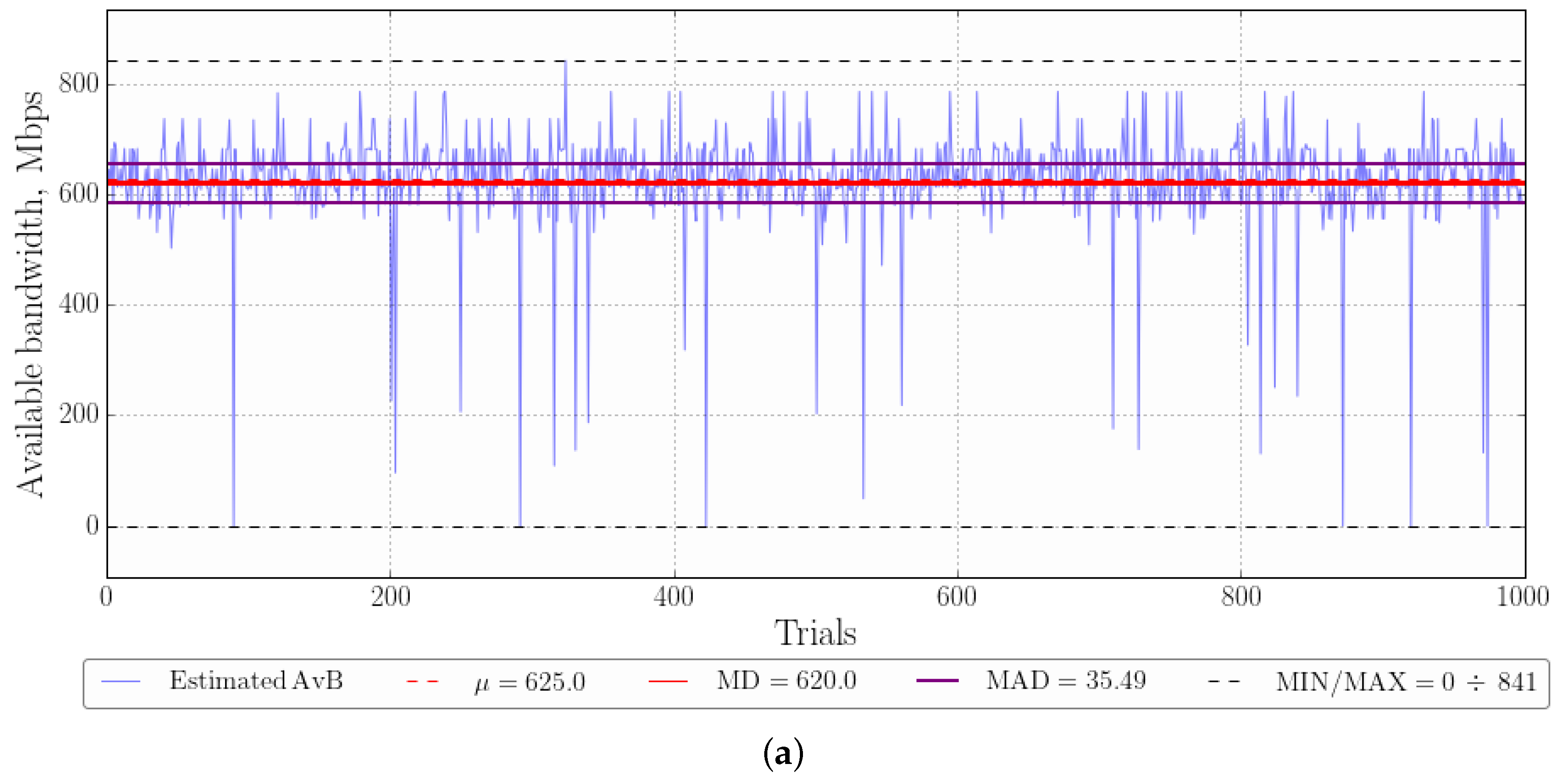

3.3.1. Experimental Results in 300 Mbps Network

3.3.2. Experimental Results in 1 Gbps Network

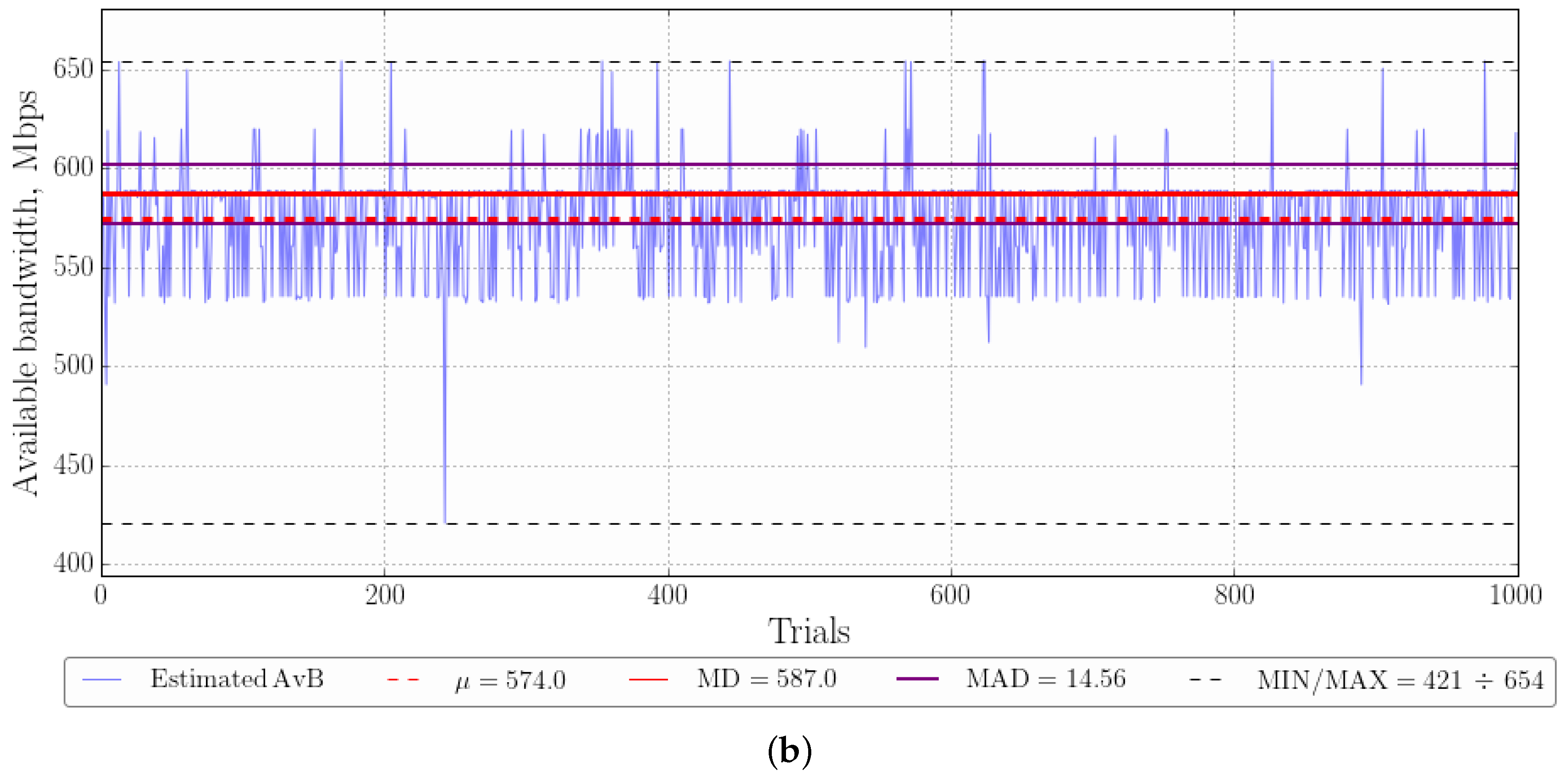

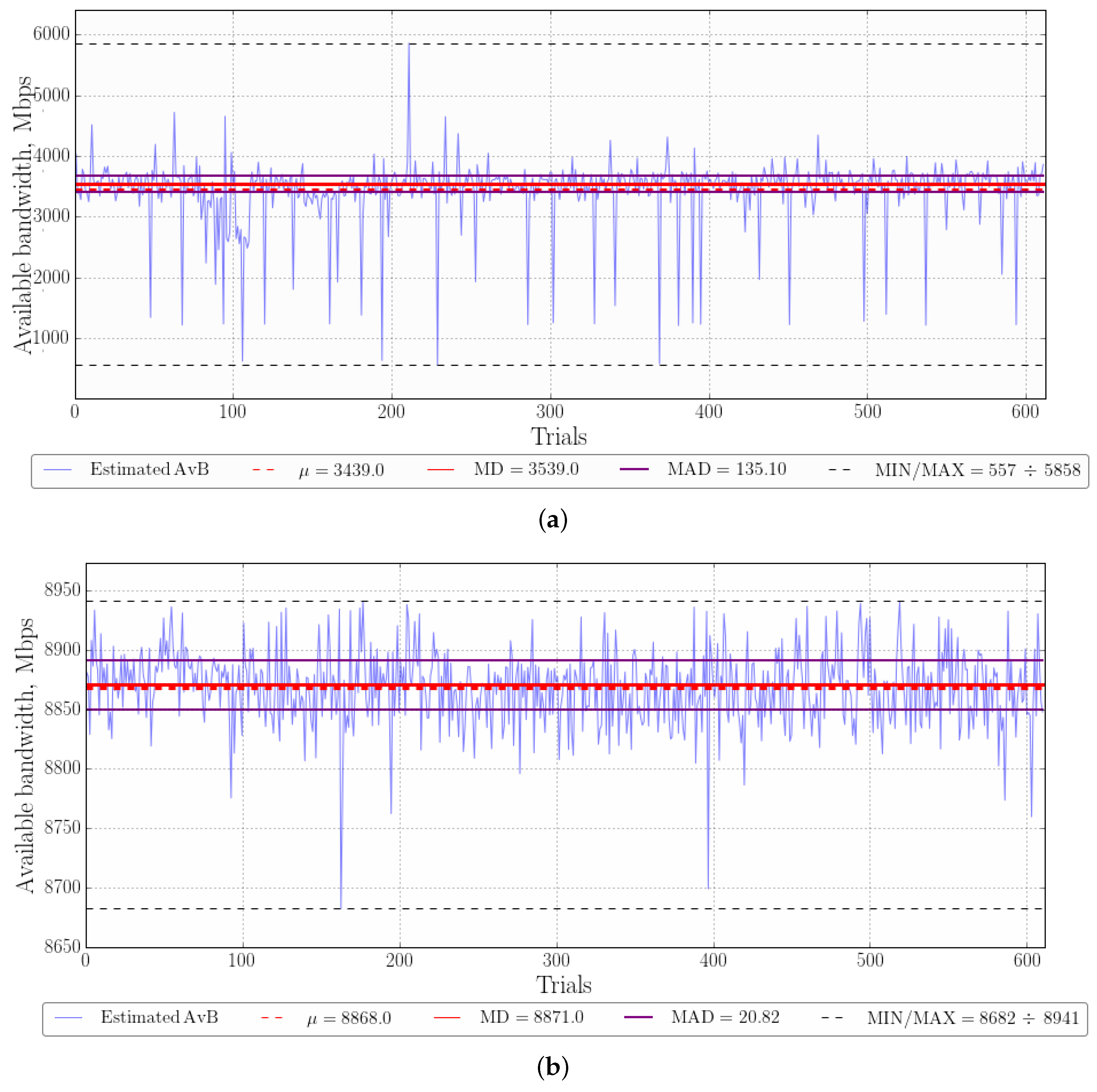

3.3.3. Experimental Results in 10 Gbps Network

4. Discussion

Author Contributions

Funding

Conflicts of Interest

Abbreviations

| Abbreviation | Meaning |
|---|---|
| CCDF | Complementary Cumulative Distribution Function |
| VM | Virtual Machine |
| VBox | VirtualBox |
| HPT | HighPerTimer |
| OS | Operating System |
| MD | Median |
| MAD | Median Absolute Deviation |
| RTT | Round-Trip Time |
| AvB | Available Bandwidth |
| GE | Gigabit Ethernet |
| PRM | Probe Rate Model |
| NIC | Network Interface Card |
| TSC | Time Stamp Counter |
| VDSO | Virtual Dynamic Shared Object |
| RDTSCP | Read Time-Stamp Counter and Processor ID |

References

- Kipp, S. The 2019 Ethernet Roadmap. Available online: https://ethernetalliance.org/technology/2019-roadmap/ (accessed on 16 August 2019).

- Wang, H.; Lee, K.S.; Li, E.; Lim, C.L.; Tang, A.; Weatherspoon, H. Timing is Everything: Accurate, Minimum Overhead, Available Bandwidth Estimation in High-speed Wired Networks. In Proceedings of the 2014 Conference on Internet Measurement Conference (IMC’14), Vancouver, BC, Canada, 5–7 November 2014; pp. 407–420.

- Karpov, K.; Fedotova, I.; Siemens, E. Impact of Machine Virtualization on Timing Precision for Performance-critical Tasks. J. Phys. Conf. Ser. 2017, 870, 012007.

- Karpov, K.; Fedotova, I.; Kachan, D.; Kirova, V.; Siemens, E. Impact of virtualization on timing precision under stressful network conditions. In Proceedings of the IEEE EUROCON 2017 17th International Conference on Smart Technologies, Ohrid, Macedonia, 6–8 July 2017; pp. 157–163.

- Korniichuk, M.; Karpov, K.; Fedotova, I.; Kirova, V.; Mareev, N.; Syzov, D.; Siemens, E. Impact of Xen and Virtual Box Virtualization Environments on Timing Precision under Stressful Conditions. MATEC Web Conf. 2017, 208, 02006.

- Fedotova, I.; Siemens, E.; Hu, H. A high-precision time handling library. J. Commun. Comput. 2013, 10, 1076–1086.

- Fedotova, I.; Krause, B.; Siemens, E. Upper Bounds Prediction of the Execution Time of Programs Running on ARM Cortex-A Systems. In Technological Innovation for Smart Systems; IFIP Advances in Information and Communication Technology Series; Camarinha-Matos, L.M., Parreira-Rocha, M., Ramezani, J., Eds.; Springer International Publishing: Cham, Switzerland, 2017; pp. 220–229.

- Kirova, V.; Siemens, E.; Kachan, D.; Vasylenko, O.; Karpov, K. Optimization of Probe Train Size for Available Bandwidth Estimation in High-speed Networks. MATEC Web Conf. 2018, 208, 02001.

- Sommers, J.; Barford, P.; Willinger, W. A Proposed Framework for Calibration of Available Bandwidth Estimation Tools. In Proceedings of the 11th IEEE Symposium on Computers and Communications (ISCC’06), Cagliari, Italy, 26–29 June 2006; pp. 709–718.

- Kachan, D.; Kirova, V.; Korniichuk, M. Performance Evaluation of PRM-Based Available Bandwidth Using Estimation Tools in a High-Speed Network Environment; Scientific Works of ONAT Popova: Odessa, Ukraine, 2017; pp. 160–168. Available online: http://irbis-nbuv.gov.ua/cgi-bin/irbis_nbuv/cgiirbis_64.exe?C21COM=2&I21DBN=UJRN&P21DBN=UJRN&IMAGE_FILE_DOWNLOAD=1&Image_file_name=PDF/Nponaz_2017_2_24.pdf (accessed on 16 August 2019).

- Goldoni, E.; Schivi, M. End-to-End Available Bandwidth Estimation Tools, An Experimental Comparison. In Proceedings of the International Workshop on Traffic Monitoring and Analysis, Zurich, Switzerland, 7 April 2010; Volume 6003, pp. 171–182.

- Aceto, G.; Palumbo, F.; Persico, V.; Pescapé, A. An experimental evaluation of the impact of heterogeneous scenarios and virtualization on the available bandwidth estimation tools. In Proceedings of the 2017 IEEE International Workshop on Measurement and Networking (M&N), Naples, Italy, 27–29 September 2017; pp. 1–6.

- Kachan, D.; Siemens, E.; Shuvalov, V. Available bandwidth measurement for 10 Gbps networks. In Proceedings of the 2015 International Siberian Conference on Control and Communications (SIBCON), Omsk, Russia, 21–23 May 2015; pp. 1–10.

- Maksymov, S.; Kachan, D.; Siemens, E. Connection Establishment Algorithm for Multi-destination Protocol. In Proceedings of the 4th International Conference on Applied Innovations in IT, Koethen, Germany, 10 March 2016; pp. 57–60.

- Bakharev, A.V.; Siemens, E.; Shuvalov, V.P. Analysis of performance issues in point-to-multipoint data transport for big data. In Proceedings of the 2014 12th International Conference on Actual Problems of Electronics Instrument Engineering (APEIE), Novosibirsk, Russia, 2–4 October 2014; pp. 431–441.

- Syzov, D.; Kachan, D.; Karpov, K.; Mareev, N.; Siemens, E. Custom UDP-Based Transport Protocol Implementation over DPDK. In Proceedings of the 7th International Conference on Applied Innovations in IT, Koethen, Germany, 6 March 2019.

- Karpov, K.; Kachan, D.; Mareev, N.; Kirova, V.; Syzow, D.; Siemens, E.; Shuvalov, V. Adopting Minimum Spanning Tree Algorithm for Application-Layer Reliable Multicast in Global Multi-Gigabit Networks. In Proceedings of the 7th International Conference on Applied Innovations in IT, Koethen, Germany, 6 March 2019.

- Uhlig, R.; Neiger, G.; Rodgers, D.; Santoni, A.L.; Martins, F.C.; Anderson, A.V.; Bennett, S.M.; Kagi, A.; Leung, F.H.; Smith, L. Intel Virtualization Technology. Computer 2005, 38, 48–56.

- Litayem, N.; Saoud, S.B. Impact of the Linux real-time enhancements on the system performances for multi-core intel architectures. Int. J. Comput. Appl. 2011, 17, 17–23.

- Cerqueira, F.; Brandenburg, B. A Comparison of Scheduling Latency in Linux, PREEMPT-RT, and LITMUS RT. In Proceedings of the 9th Annual Workshop on Operating Systems Platforms for Embedded Real-Time Applications, Paris, France, 9 July 2013; pp. 19–29.

- Lao, L.; Dovrolis, C.; Sanadidi, M. The Probe Gap Model can underestimate the available bandwidth of multihop paths. ACM SIGCOMM Comput. Commun. Rev. 2006, 36, 29–34.

- Low, P.J.; Alias, M.Y. Enhanced bandwidth estimation design based on probe-rate model for multimedia network. In Proceedings of the 2014 IEEE 2nd International Symposium on Telecommunication Technologies (ISTT), Langkawi, Malaysia, 24–26 November 2014; pp. 198–203.

- Jain, M.; Dovrolis, C. End-to-end available bandwidth: Measurement methodology, dynamics, and relation with TCP throughput. In Proceedings of the 2002 Conference on Applications, Technologies, Architectures, and Protocols for Computer Communications (SIGCOMM’02), Pittsburgh, PA, USA, 19–23 August 2002.

- Dugan, J. Iperf Tutorial. Available online: https://slideplayer.com/slide/4463879/ (accessed on 16 August 2019).

- Katz, B.M.; McSweeney, M. A multivariate Kruskal-Wallis test with post hoc procedures. Multivar. Behav. Res. 1980, 15, 281–297.

- Abdi, H.; Williams, L.J. Tukey’s honestly significant difference (HSD) test. In Encyclopedia of Research Design; Sage: Thousand Oaks, CA, USA, 2010; pp. 1–5.

| Function | Platform | Load | MAD, ns | Max, ns | Median, ns | Min, ns |
|---|---|---|---|---|---|---|
| TSC | ESXi | CPU-bound | 2.9 | 216,009,000.0 | 15.8 | 14.3 |
| TSC | ESXi | IO/network-bound | 0.7 | 55,265.8 | 21.5 | 14.3 |
| TSC | ESXi | Idle System | 0.7 | 62,208.5 | 20.1 | 14.3 |
| TSC | Host OS | CPU-bound | 0.0 | 168,004,000.0 | 17.2 | 12.9 |
| TSC | Host OS | IO/network-bound | 0.0 | 51,820.0 | 17.2 | 12.9 |
| TSC | Host OS | Idle System | 0.0 | 51,732.5 | 17.2 | 15.8 |
| TSC | QEMU | CPU-bound | 0.7 | 116,009,000.0 | 15.8 | 14.3 |
| TSC | QEMU | IO/network-bound | 0.7 | 73,509.8 | 15.8 | 14.3 |
| TSC | QEMU | Idle System | 0.7 | 818,343.0 | 21.5 | 14.3 |
| TSC | VBox | CPU-bound | 12.9 | 507,099,000.0 | 2033.0 | 0.7 |
| TSC | VBox | IO/network-bound | 57.6 | 13,733,500.0 | 2070.0 | 1.1 |
| TSC | VBox | Idle System | 10.9 | 2,744,610.0 | 2053.9 | 1.1 |
| TSC | Xen | CPU-bound | 2.9 | 200,008,000.0 | 20.0 | 14.3 |
| TSC | Xen | IO/network-bound | 0.7 | 4,046,770.0 | 17.2 | 14.3 |
| TSC | Xen | Idle System | 0.0 | 27,250.5 | 18.6 | 14.3 |
| System Call | ESXi | CPU-bound | 1.0 | 212,006,000.0 | 34.0 | 25.0 |
| System Call | ESXi | IO/network-bound | 1.0 | 28,608.0 | 34.0 | 25.0 |
| System Call | ESXi | Idle System | 1.0 | 56,966.0 | 34.0 | 25.0 |
| System Call | Host OS | CPU-bound | 0.5 | 108,005,000.0 | 26.0 | 24.0 |
| System Call | Host OS | IO/network-bound | 0.0 | 112,208.0 | 26.0 | 24.0 |
| System Call | Host OS | Idle System | 1.5 | 55,249.0 | 27.0 | 24.0 |
| System Call | QEMU | CPU-bound | 1.0 | 108,022,000.0 | 64.0 | 51.0 |
| System Call | QEMU | IO/network-bound | 3.5 | 167,598.0 | 69.0 | 52.0 |
| System Call | QEMU | Idle System | 1.0 | 69,456.0 | 57.0 | 51.0 |
| System Call | VBox | CPU-bound | 14.5 | 464,957,000.0 | 2060.0 | 1.0 |
| System Call | VBox | IO/network-bound | 57.5 | 12,064,200.0 | 2104.0 | 1.0 |
| System Call | VBox | Idle System | 13.0 | 826,773.0 | 2042.0 | 2.0 |
| System Call | Xen | CPU-bound | 1.5 | 296,001,000.0 | 116.0 | 80.0 |
| System Call | Xen | IO/network-bound | 0.5 | 885,662.0 | 113.0 | 78.0 |
| System Call | Xen | Idle System | 3.5 | 133,775.0 | 119.0 | 54.0 |
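The MAD, Max, Median, and Min columns of the table above can be reproduced for any series of samples with a few lines of code. The sketch below is an illustration only, not the measurement tool used in the experiments: it samples the interval between back-to-back timestamp reads with Python's `time.perf_counter_ns` (an assumption, standing in for the TSC and system-call timers) and summarizes them. The function names are hypothetical, and the deltas include interpreter overhead, so absolute values will be far larger than those in the table.

```python
import statistics
import time


def acquisition_deltas_ns(samples: int = 10000) -> list:
    """Intervals between consecutive timestamp reads, in nanoseconds."""
    stamps = [time.perf_counter_ns() for _ in range(samples + 1)]
    return [later - earlier for earlier, later in zip(stamps, stamps[1:])]


def summarize(values: list) -> dict:
    """Median, median absolute deviation, minimum, and maximum of values."""
    median = statistics.median(values)
    # MAD: the median of the absolute deviations from the median.
    mad = statistics.median(abs(v - median) for v in values)
    return {"MAD": mad, "Max": max(values), "Median": median, "Min": min(values)}


if __name__ == "__main__":
    print(summarize(acquisition_deltas_ns()))
```

The median and MAD are robust against the rare, very large outliers that dominate the Max column, which is presumably why they are reported instead of the mean and standard deviation.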

| Function | Platform | Load | MAD, ns | Max, ns | Median, ns | Min, ns |
|---|---|---|---|---|---|---|
| HPTSleep | ESXi | CPU-bound | 18.6 | 287,997,000.0 | 113.9 | 66.6 |
| HPTSleep | ESXi | IO/network-bound | 14.3 | 2,024,870.0 | 116.7 | 65.2 |
| HPTSleep | ESXi | Idle System | 6.4 | 432,825.0 | 95.2 | 65.2 |
| HPTSleep | Host OS | CPU-bound | 3.6 | 196,002,000.0 | 102.4 | 82.3 |
| HPTSleep | Host OS | IO/network-bound | 2.9 | 407,121.0 | 94.9 | 79.1 |
| HPTSleep | Host OS | Idle System | 0.7 | 402,269.0 | 82.0 | 72.0 |
| HPTSleep | QEMU | CPU-bound | 2.9 | 208,008,000.0 | 136.8 | 96.7 |
| HPTSleep | QEMU | IO/network-bound | 0.7 | 4,351,030.0 | 117.8 | 74.8 |
| HPTSleep | QEMU | Idle System | 1.4 | 1,187,960.0 | 116.4 | 74.8 |
| HPTSleep | VBox | CPU-bound | 76.1 | 596,501,000.0 | 5183.1 | 2161.1 |
| HPTSleep | VBox | IO/network-bound | 125.0 | 12,048,600.0 | 5087.4 | 2134.6 |
| HPTSleep | VBox | Idle System | 121.1 | 4,697,970.0 | 5117.3 | 2142.5 |
| HPTSleep | Xen | CPU-bound | 2.9 | 355,964,000.0 | 94.5 | 58.7 |
| HPTSleep | Xen | IO/network-bound | 3.6 | 9,644,930.0 | 127.5 | 87.4 |
| HPTSleep | Xen | Idle System | 4.3 | 573,593.0 | 94.5 | 67.3 |
| uSleep | ESXi | CPU-bound | 294.3 | 196,105,000.0 | 56,044.5 | 4883.0 |
| uSleep | ESXi | IO/network-bound | 1123.6 | 12,689,600.0 | 62,199.1 | 6365.4 |
| uSleep | ESXi | Idle System | 851.4 | 1,272,060.0 | 63,085.2 | 4300.1 |
| uSleep | Host OS | CPU-bound | 55.2 | 69,705,100.0 | 54,674.9 | 5918.4 |
| uSleep | Host OS | IO/network-bound | 792.7 | 4,971,040.0 | 57,756.1 | 5478.3 |
| uSleep | Host OS | Idle System | 680.0 | 689,113.0 | 61,996.3 | 6848.2 |
| uSleep | QEMU | CPU-bound | 290.0 | 69,372,000.0 | 63,264.2 | 7132.5 |
| uSleep | QEMU | IO/network-bound | 3738.6 | 11,674,900.0 | 74,540.7 | 7807.0 |
| uSleep | QEMU | Idle System | 1820.0 | 4,150,180.0 | 97,351.3 | 8167.9 |
| uSleep | VBox | CPU-bound | 3,611,915.2 | 539,619,000.0 | 7,601,230.0 | 15,913.7 |
| uSleep | VBox | IO/network-bound | 16,410.0 | 11,469,400.0 | 131,192.0 | 29,421.1 |
| uSleep | VBox | Idle System | 16,395.5 | 8,670,880.0 | 131,252.0 | 29,471.2 |
| uSleep | Xen | CPU-bound | 229.8 | 141,160,000.0 | 98,544.0 | 10,624.7 |
| uSleep | Xen | IO/network-bound | 935.0 | 3,924,120.0 | 107,852.0 | 23,060.8 |
| uSleep | Xen | Idle System | 260.0 | 2,176,090.0 | 109,411.0 | 24,399.8 |
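A sleep measurement of this kind can be sketched as below, assuming that the reported values are the difference between the actual and the requested sleep duration (the miss time). The code is an illustration, not the authors' tooling: `os_sleep_ns` uses Python's `time.sleep` as a stand-in for an operating system sleep call, and `busy_wait_ns` is a generic spin loop included for comparison, not the HPTSleep implementation. All function names are hypothetical.

```python
import time


def os_sleep_ns(requested_ns: int) -> None:
    """Sleep through the operating system scheduler."""
    time.sleep(requested_ns / 1e9)


def busy_wait_ns(requested_ns: int) -> None:
    """Spin on the clock until the deadline has passed."""
    deadline = time.perf_counter_ns() + requested_ns
    while time.perf_counter_ns() < deadline:
        pass


def measure_misses_ns(sleeper, requested_ns: int, samples: int = 100) -> list:
    """Actual minus requested sleep duration for each sample, in nanoseconds."""
    misses = []
    for _ in range(samples):
        start = time.perf_counter_ns()
        sleeper(requested_ns)
        elapsed = time.perf_counter_ns() - start
        misses.append(elapsed - requested_ns)
    return misses


if __name__ == "__main__":
    for name, sleeper in (("os sleep", os_sleep_ns), ("busy wait", busy_wait_ns)):
        misses = sorted(measure_misses_ns(sleeper, 100_000))
        print(name, "median miss, ns:", misses[len(misses) // 2])
```

Busy-waiting trades CPU time for precision, because the waiting thread never yields to the scheduler. The 100 µs request used here is an arbitrary choice for the sketch.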

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Kirova, V.; Karpov, K.; Siemens, E.; Zander, I.; Vasylenko, O.; Kachan, D.; Maksymov, S. Impact of Modern Virtualization Methods on Timing Precision and Performance of High-Speed Applications. Future Internet 2019, 11, 179. https://doi.org/10.3390/fi11080179
