Artificial Intelligence Imagery Analysis Fostering Big Data Analytics

Abstract

1. Introduction

2. Artificial Intelligence Imagery Analysis: Background, Evolution, and Illustration

2.1. Background

2.2. Evolution

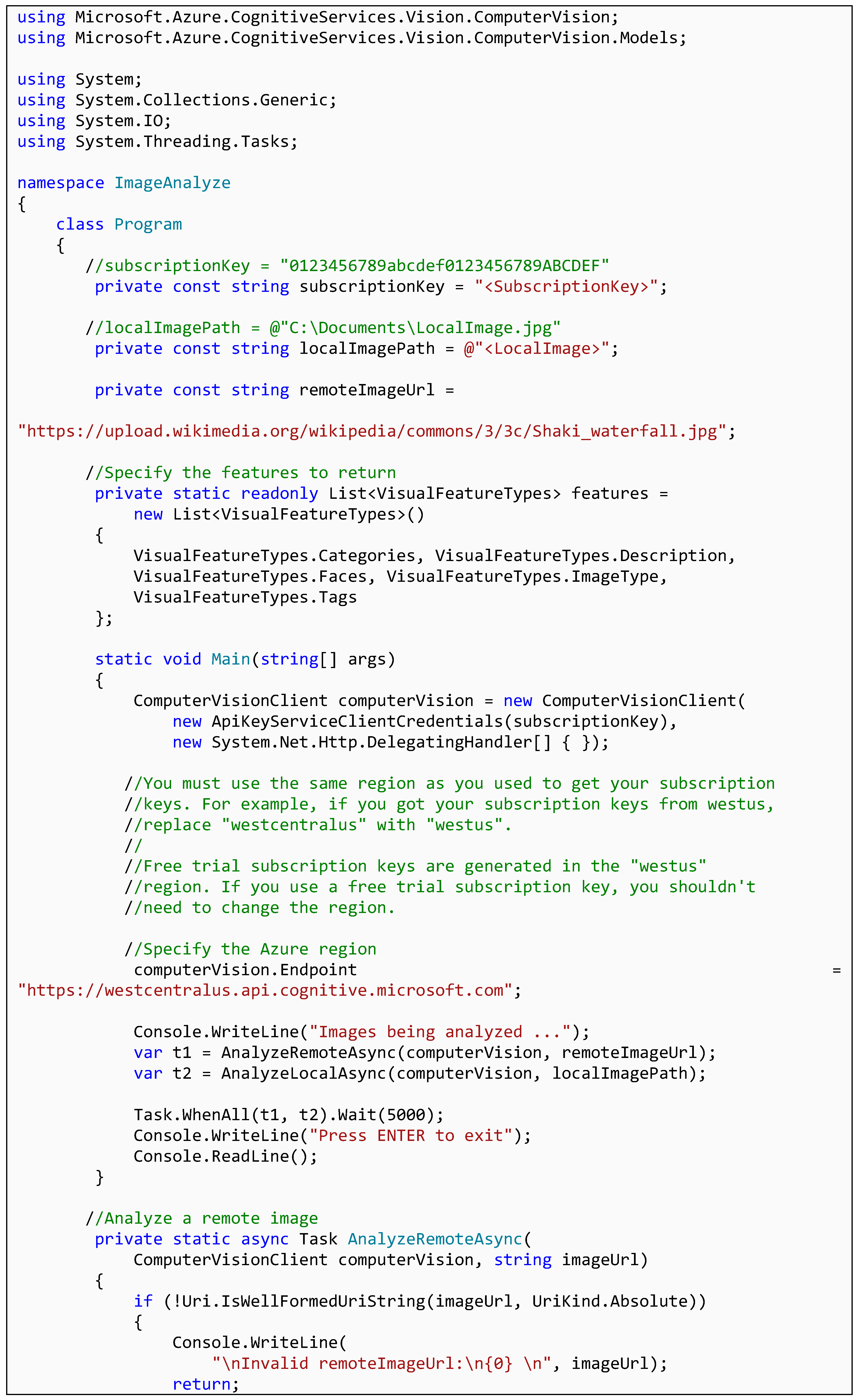

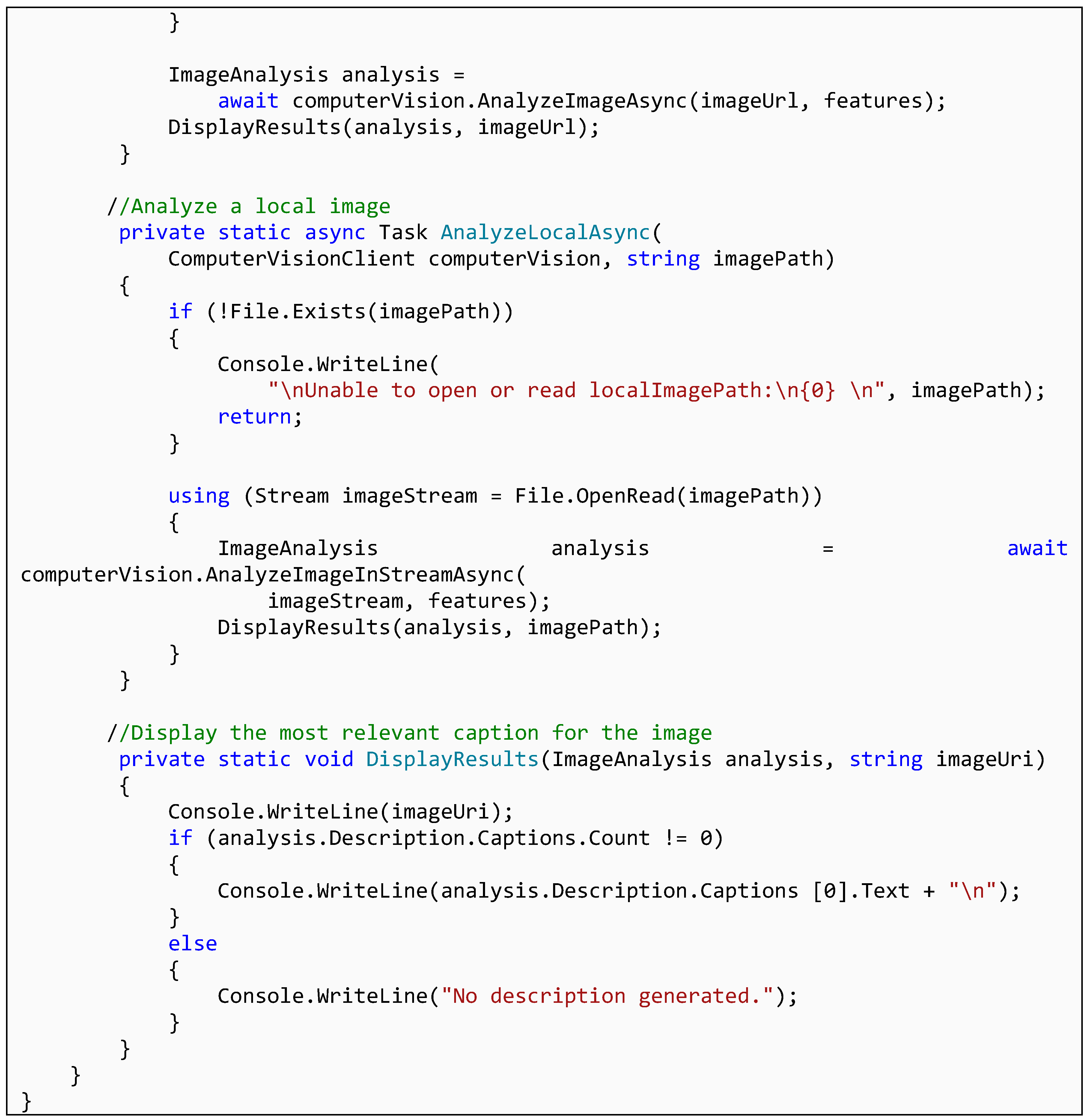

2.3. Azure Cognitive Services from Microsoft

- Provide the subscription key for Azure Cognitive Services, which is used for billing

- Provide the URL of the input image

- Specify which features the API should analyze in the image

- Display the results of the analysis

- Image URL or path to a locally stored image

- Supported input methods: raw image binary in the form of an application/octet-stream, or an image URL

- Supported image formats: JPEG, PNG, GIF, and BMP

- Image file size: less than 4 MB

- Image dimensions: greater than 50 × 50 pixels
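The four steps and the input requirements listed above can be sketched in Python using only the standard library. This is a minimal, non-authoritative sketch, not code from the study: the regional endpoint, the `v2.0` API version, and the helper names `check_constraints`, `build_request`, and `analyze` are assumptions for illustration. The `Ocp-Apim-Subscription-Key` header and the `visualFeatures` query parameter follow Microsoft's REST documentation for the Analyze Image operation at the time. The 4 MB limit is interpreted here as 4 × 1024 × 1024 bytes and the 50 × 50 limit as applying to each side, both of which are assumptions.

```python
import json
import urllib.request

# Assumed regional endpoint and API version (Computer Vision v2.0 "analyze");
# replace the region prefix with the one of your own Azure resource.
ENDPOINT = "https://westcentralus.api.cognitive.microsoft.com/vision/v2.0/analyze"

SUPPORTED_FORMATS = {"JPEG", "PNG", "GIF", "BMP"}
MAX_BYTES = 4 * 1024 * 1024  # "less than 4 MB", read as binary megabytes (assumption)
MIN_SIDE = 50                # "greater than 50 x 50 pixels", applied to each side (assumption)


def check_constraints(fmt, size_bytes, width, height):
    """Return a list of violations of the input limits listed above (empty if none)."""
    problems = []
    if fmt.upper() not in SUPPORTED_FORMATS:
        problems.append(f"unsupported format: {fmt}")
    if size_bytes >= MAX_BYTES:
        problems.append(f"file too large: {size_bytes} bytes")
    if width <= MIN_SIDE or height <= MIN_SIDE:
        problems.append(f"image too small: {width}x{height}")
    return problems


def build_request(key, features, image_url=None, image_bytes=None):
    """Build (url, headers, body) for one of the two input methods: image URL or raw binary."""
    if (image_url is None) == (image_bytes is None):
        raise ValueError("pass exactly one of image_url or image_bytes")
    # Step 3: the features to analyze go into the visualFeatures query parameter.
    url = ENDPOINT + "?visualFeatures=" + ",".join(features)
    # Step 1: the subscription key identifies the (billed) Azure subscription.
    headers = {"Ocp-Apim-Subscription-Key": key}
    if image_url is not None:
        # Step 2: an image URL is sent as a small JSON document.
        headers["Content-Type"] = "application/json"
        body = json.dumps({"url": image_url}).encode("utf-8")
    else:
        # Alternative input method: a local image sent as raw binary.
        headers["Content-Type"] = "application/octet-stream"
        body = image_bytes
    return url, headers, body


def analyze(key, features, image_url=None, image_bytes=None):
    """POST the request and return the parsed JSON (needs network access and a valid key)."""
    url, headers, body = build_request(key, features, image_url, image_bytes)
    request = urllib.request.Request(url, data=body, headers=headers, method="POST")
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))
```

Step 4, displaying the results, can then be as simple as `print(json.dumps(analyze(KEY, ["Categories", "Tags", "Description"], image_url=THUMBNAIL_URL), indent=2))`, where `KEY` and `THUMBNAIL_URL` are placeholders; a locally stored image can be passed as `image_bytes=pathlib.Path(path).read_bytes()`. Keeping request construction separate from sending lets the headers and input limits be checked without network access or a valid key.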

3. Potential Applications

3.1. Supporting Research Designs and Processes

3.2. Applications in Practice

4. Research Illustration

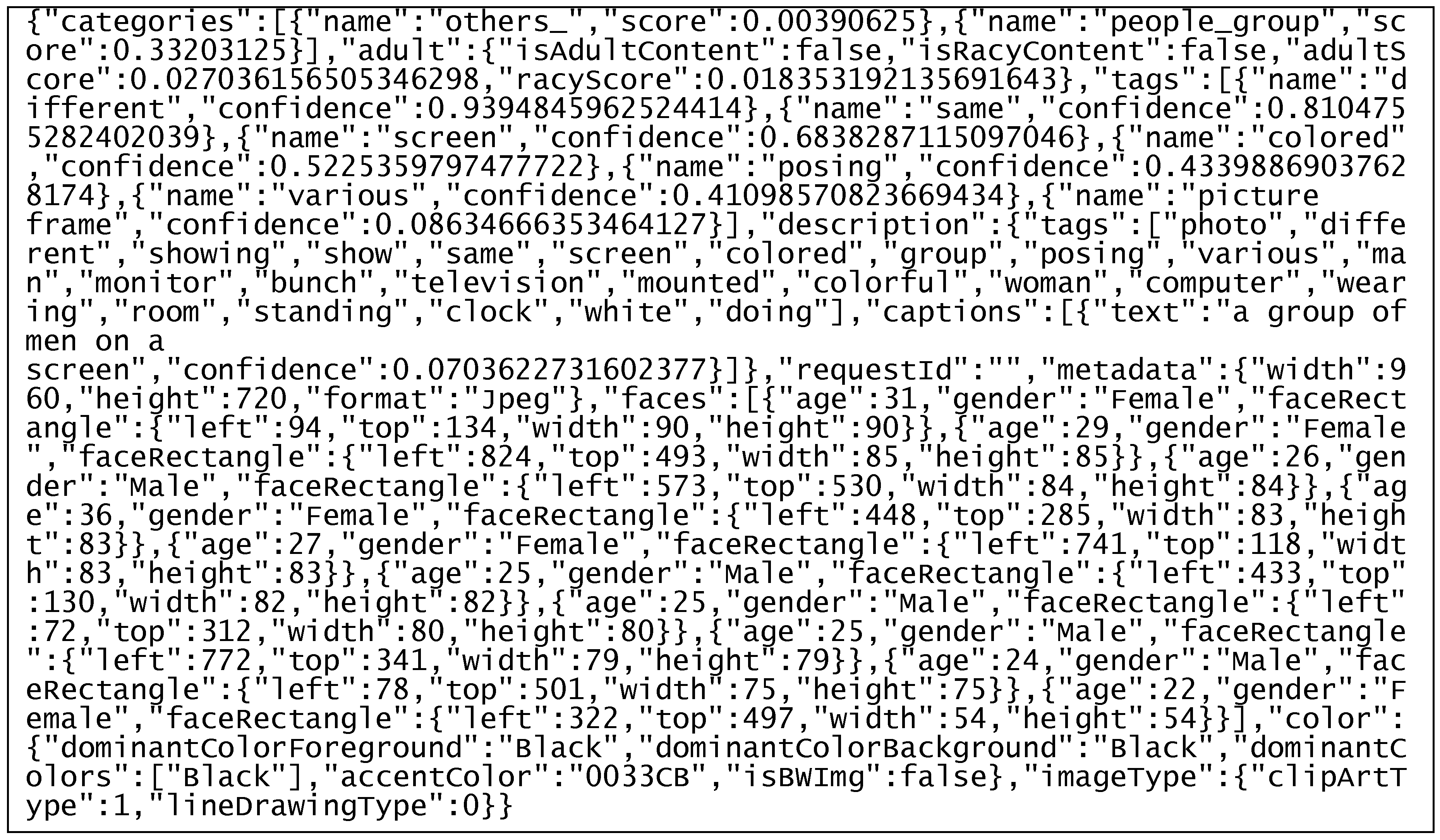

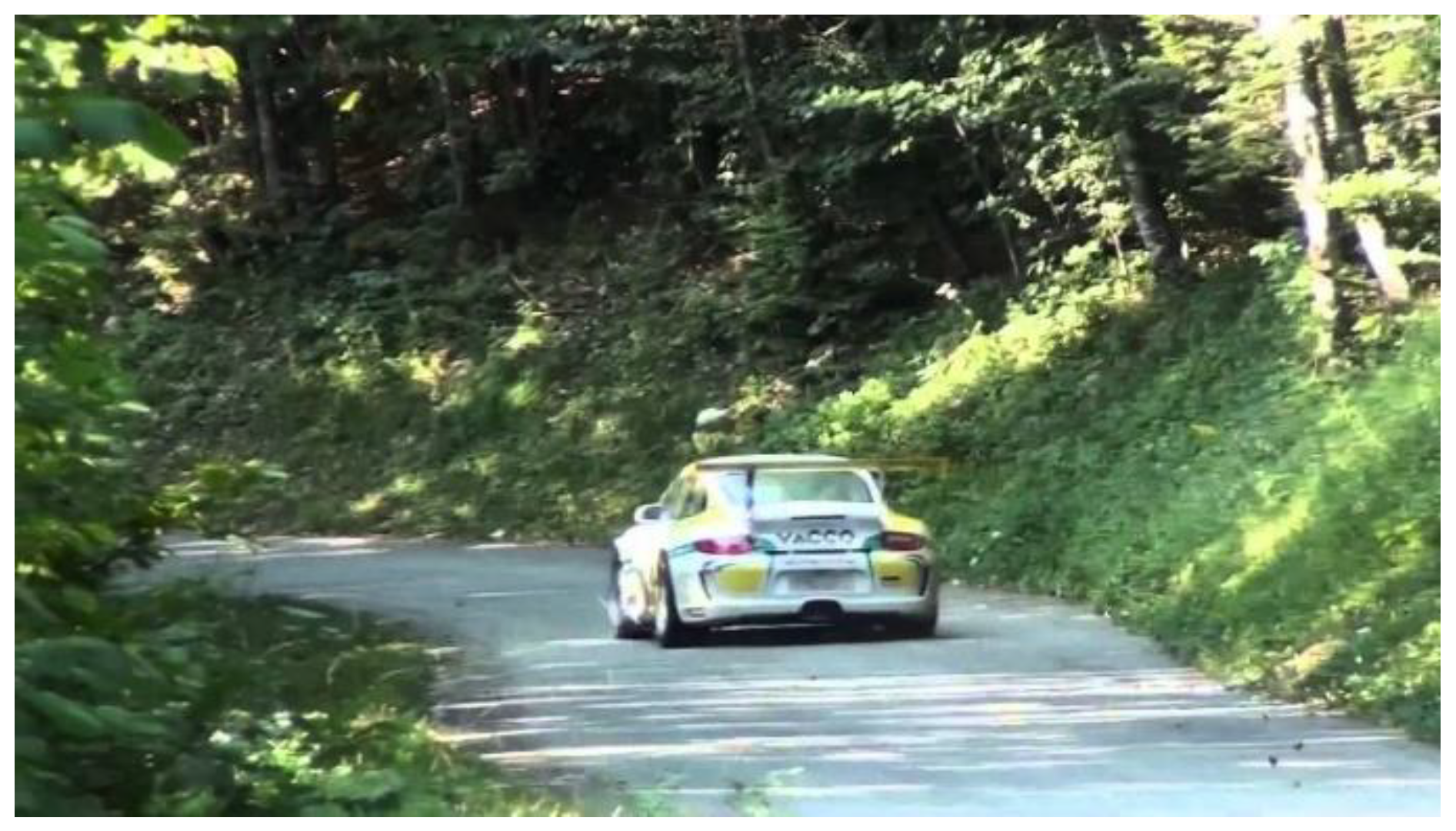

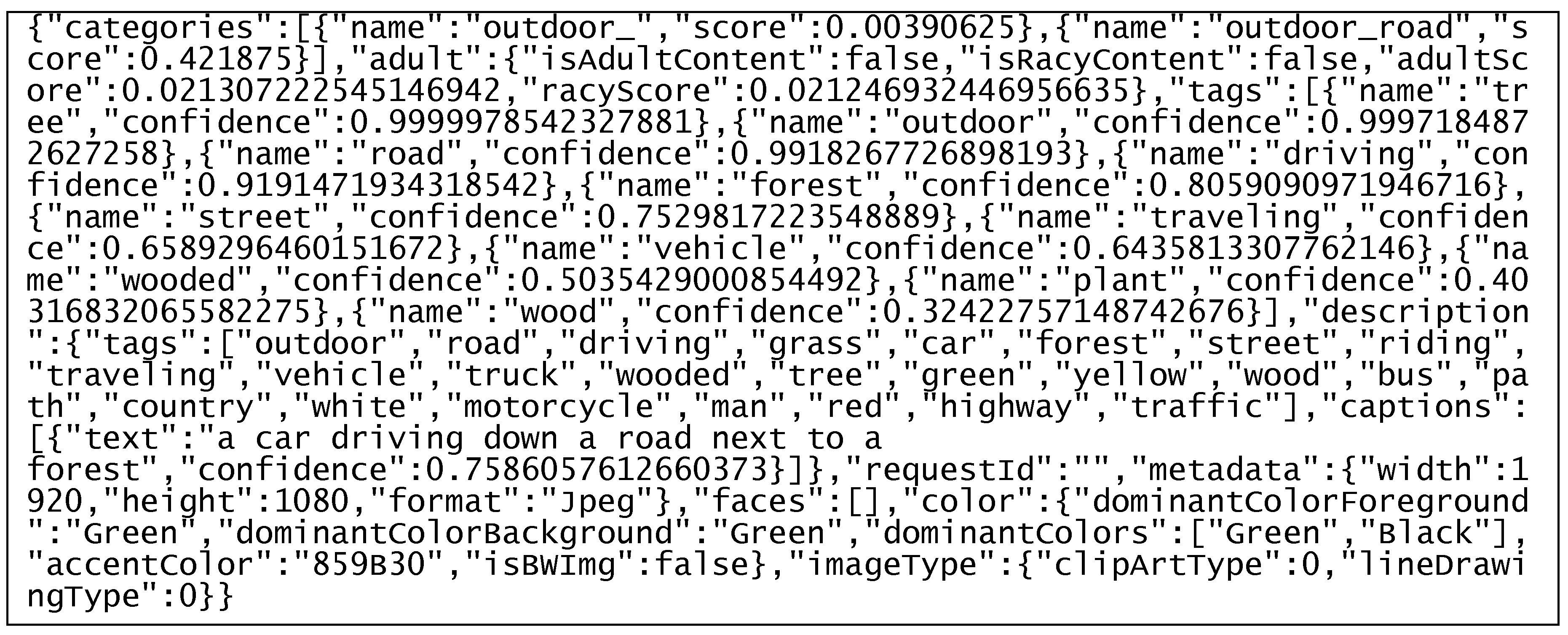

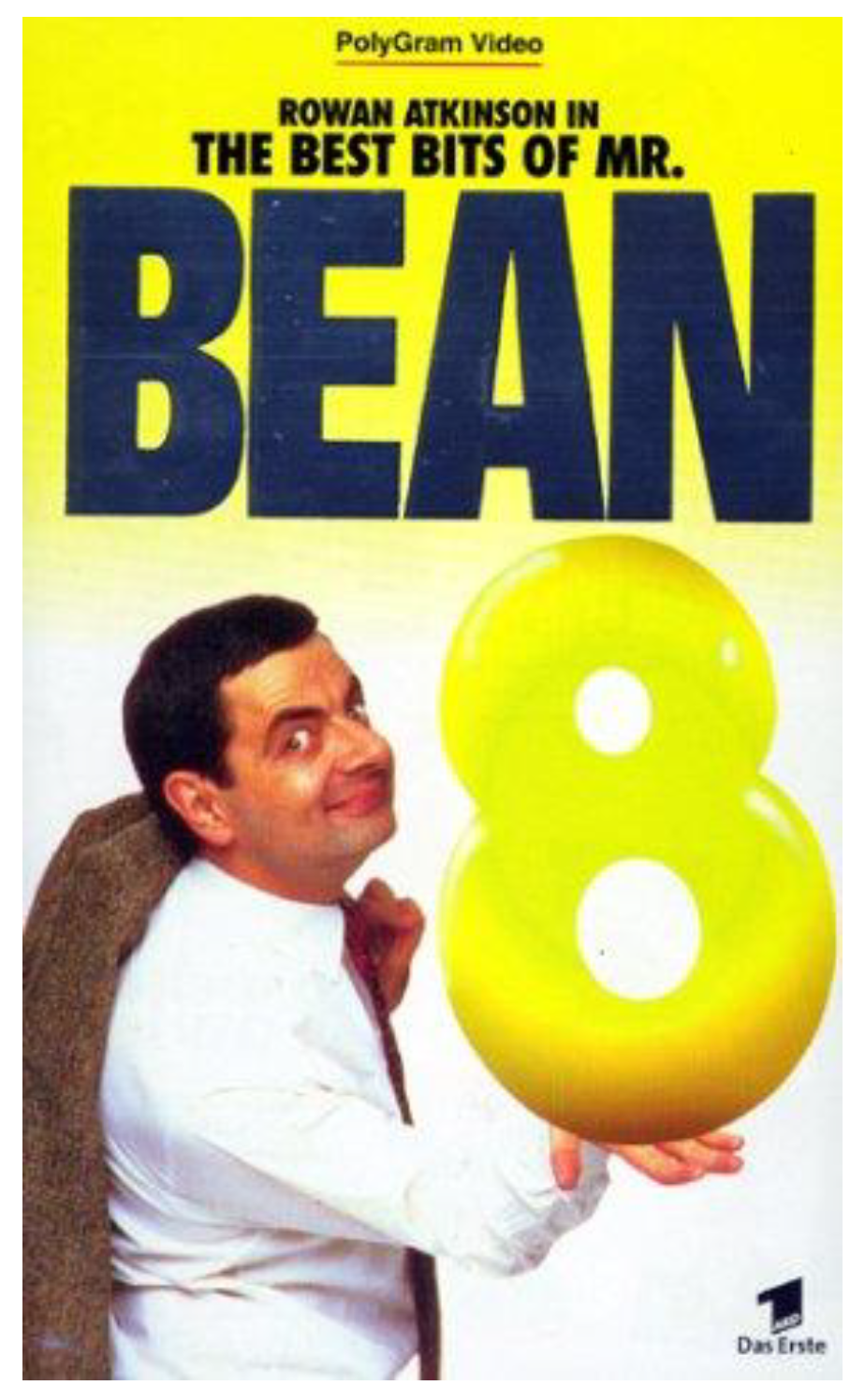

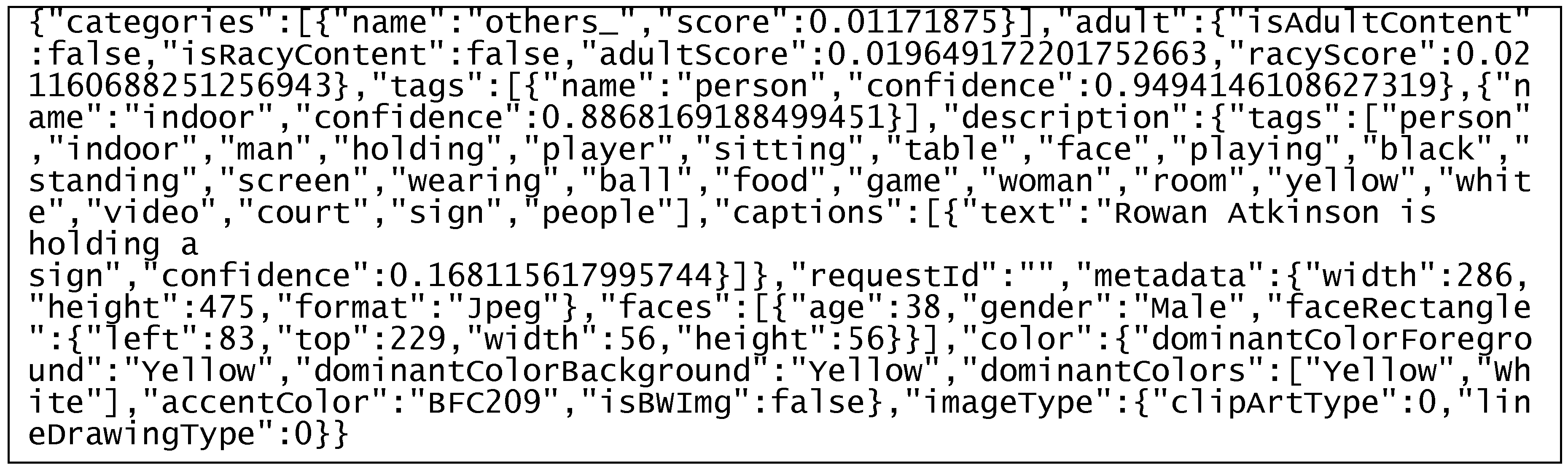

- The input image uploaded to the API: Each image is a thumbnail displayed on YouTube. Clicking the thumbnail takes the user to the underlying YouTube video.

- The computer vision API output generally first states an assessment of the input image and then, where applicable, a confidence score indicating how confident the API is in that assessment, with 1 as the highest level of confidence. The output starts with a general categorization of the image and then indicates to what extent the image shows adult or racy content. Next, it lists tags assigned either to the image as a whole or to certain objects in the image. What follows is a summarized text description of the image as a whole, for instance “a group of men on a screen”, along with some image metadata. The output then lists the faces identified in the image, including their coordinates, dimensions, age, and gender. Finally, the API output identifies dominant colors and accent colors, whether the image is black and white, and what type of image it is, for instance clipart or a naturalistic photograph.
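To make the layout of this output concrete, the sketch below condenses a parsed Analyze Image response into the fields the description walks through. It assumes the JSON field names of the v2.0 response (`categories`, `adult`, `tags`, `description.captions`, `metadata`, `faces`, `color`, `imageType`). The function `summarize_analysis`, the `min_confidence` cutoff of 0.5, and all values in `sample` except the quoted caption are hypothetical and are not results from this study.

```python
def summarize_analysis(result, min_confidence=0.5):
    """Condense an Analyze Image response (assumed v2.0 field names) into a flat dict."""
    adult = result.get("adult", {})
    color = result.get("color", {})
    captions = result.get("description", {}).get("captions", [])
    # Keep the caption the API is most confident about, if there is one.
    best = max(captions, key=lambda c: c["confidence"], default=None)
    return {
        "categories": [(c["name"], c["score"]) for c in result.get("categories", [])],
        "adult": adult.get("isAdultContent"),
        "racy": adult.get("isRacyContent"),
        # Drop tags below a hypothetical confidence cutoff.
        "tags": [t["name"] for t in result.get("tags", []) if t["confidence"] >= min_confidence],
        "caption": best["text"] if best else None,
        "metadata": result.get("metadata", {}),
        "faces": [(f.get("age"), f.get("gender"), f["faceRectangle"]) for f in result.get("faces", [])],
        "dominant_colors": color.get("dominantColors", []),
        "accent_color": color.get("accentColor"),
        "black_and_white": color.get("isBWImg"),
        "clip_art_type": result.get("imageType", {}).get("clipArtType"),
    }


# Hypothetical response; only the caption text is taken from the description above.
sample = {
    "categories": [{"name": "people_group", "score": 0.8}],
    "adult": {"isAdultContent": False, "isRacyContent": False, "adultScore": 0.01, "racyScore": 0.02},
    "tags": [{"name": "person", "confidence": 0.98}, {"name": "screen", "confidence": 0.3}],
    "description": {"tags": ["person", "group"],
                    "captions": [{"text": "a group of men on a screen", "confidence": 0.7}]},
    "metadata": {"width": 320, "height": 180, "format": "Jpeg"},
    "faces": [{"age": 30, "gender": "Male",
               "faceRectangle": {"left": 10, "top": 20, "width": 60, "height": 60}}],
    "color": {"dominantColors": ["Black", "White"], "accentColor": "1F5A8C", "isBWImg": False},
    "imageType": {"clipArtType": 0, "lineDrawingType": 0},
}

summary = summarize_analysis(sample)
```

With the default cutoff, the low-confidence `screen` tag is dropped while `person` is kept, which illustrates how the confidence scores can filter the output before further analysis.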

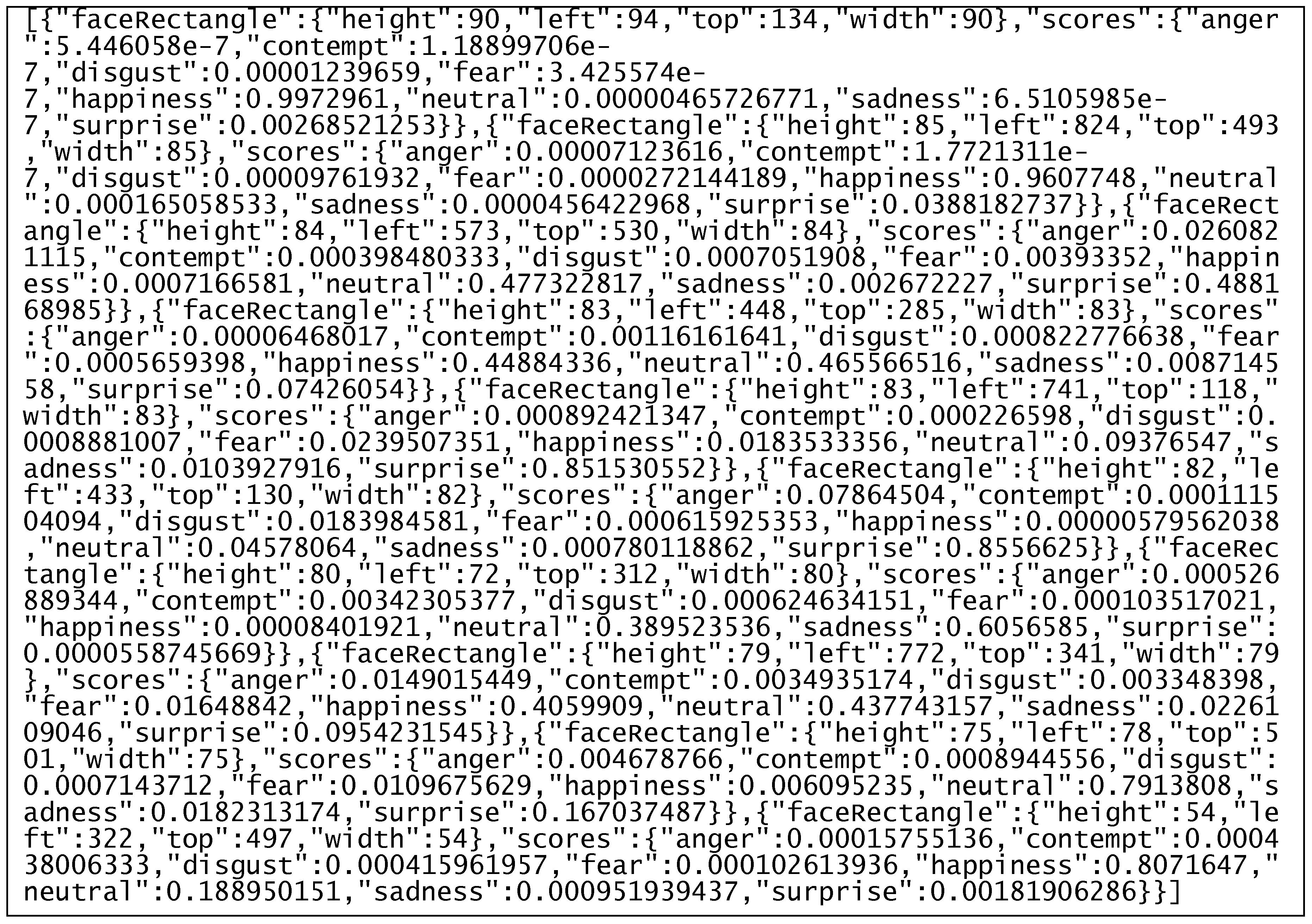

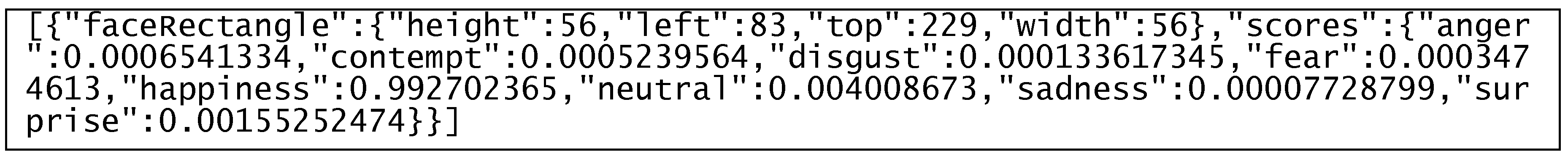

- The emotion API output is organized around the faces identified in the input image. For every face, the output first states the coordinates and dimensions. It then provides scores indicating to what degree the face shows anger, contempt, disgust, fear, happiness, sadness, surprise, or neutrality. Each emotion receives a score between 0 and 1, with 1 indicating that the face expresses the emotion as strongly as possible. Rather than assigning only a single dominant emotion to a face, the API recognizes when different emotions are present to different degrees. The categorization is more fine-grained for negative emotions (5 categories) than for positive emotions (1 category), because human emotions are more complex in the negative range than in the positive range.

- The Optical Character Recognition (OCR) output originates from the Tesseract open-source software and first states the two-letter language code of the primary language the OCR engine has looked for in the thumbnail. It further identifies whether all text in the image is rotated by a certain angle and whether the text orientation is “up” or “down”. The output is then organized by regions of text and, within them, by bounding boxes that each contain a piece of text whose characters are spatially close, such as a word. For every bounding box, the output identifies the coordinates and dimensions and the characters recognized within the box.
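Both output structures described above can be traversed with a few small helpers. This is a minimal sketch under stated assumptions: the emotion output is taken to be a list of faces, each with a `faceRectangle` and a `scores` mapping of the eight emotions, and the OCR output is assumed to nest `regions`, then `lines`, then `words`, each word carrying its `text` and a `boundingBox` string of the form `"left,top,width,height"`. The intermediate `lines` level is not mentioned in the description and is an assumption, as are the helper names and the 0.1 threshold for counting an emotion as present. The sample inputs are invented placeholders.

```python
# Assumed emotion layout: [{"faceRectangle": {...}, "scores": {emotion: score}}].
# Grouping follows the text: five negative categories and one positive;
# "neutral" and "surprise" are left out of both sums.
NEGATIVE = ("anger", "contempt", "disgust", "fear", "sadness")
POSITIVE = ("happiness",)


def describe_face(face, threshold=0.1):
    """Summarize one face: dominant emotion plus all emotions above a hypothetical threshold."""
    scores = face["scores"]
    dominant = max(scores, key=scores.get)
    present = sorted((e for e, s in scores.items() if s >= threshold),
                     key=lambda e: scores[e], reverse=True)
    return {
        "rectangle": face["faceRectangle"],
        "dominant": dominant,
        "present": present,
        "negative": sum(scores.get(e, 0.0) for e in NEGATIVE),
        "positive": sum(scores.get(e, 0.0) for e in POSITIVE),
    }


def parse_box(box):
    """Turn an assumed 'left,top,width,height' string into a tuple of ints."""
    left, top, width, height = (int(v) for v in box.split(","))
    return left, top, width, height


def ocr_words(result):
    """Flatten the assumed regions -> lines -> words nesting into (text, box) pairs."""
    return [
        (word["text"], parse_box(word["boundingBox"]))
        for region in result.get("regions", [])
        for line in region.get("lines", [])
        for word in line.get("words", [])
    ]


def ocr_text(result):
    """Join the recognized words into one line of text per OCR line."""
    return "\n".join(
        " ".join(word["text"] for word in line.get("words", []))
        for region in result.get("regions", [])
        for line in region.get("lines", [])
    )


# Invented placeholder inputs, for illustration only.
face = {
    "faceRectangle": {"left": 68, "top": 97, "width": 64, "height": 64},
    "scores": {"anger": 0.0, "contempt": 0.02, "disgust": 0.0, "fear": 0.0,
               "happiness": 0.6, "neutral": 0.3, "sadness": 0.08, "surprise": 0.0},
}
ocr = {
    "language": "en", "textAngle": 0.0, "orientation": "Up",
    "regions": [{"boundingBox": "10,10,200,40", "lines": [
        {"boundingBox": "10,10,200,40", "words": [
            {"boundingBox": "10,10,90,40", "text": "TOP"},
            {"boundingBox": "110,10,100,40", "text": "10"},
        ]},
    ]}],
}
```

For the sample face, `describe_face(face)` reports `happiness` as dominant while still listing `neutral`, mirroring the point that several emotions can be present to different degrees; `ocr_text(ocr)` returns `"TOP 10"`.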

5. Outlook

Author Contributions

Funding

Conflicts of Interest


© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Cremer, S.; Loebbecke, C. Artificial Intelligence Imagery Analysis Fostering Big Data Analytics. Future Internet 2019, 11, 178. https://doi.org/10.3390/fi11080178
