1. Introduction

Floating action buttons (FABs) are circular icon buttons, usually positioned at the lower right of the screen, that float above the user interface (UI) [1]. Functionally, a FAB provides quick access to an important or common action within an app. Usually, this should be a positive interaction, such as creating, sharing, or exploring. Since introducing the FAB in 2014, Google has incorporated it into many of its web and mobile applications, with the goal of creating a visual language that combines the principles of good design with advances in engineering. The FAB has become one of the most striking components of the Material Design UI language [2]. In essence, a FAB is a call to action, since it tries to persuade the user to perform a specific activity (for example, to begin transferring or adding content). It can trigger an action on the current screen, or it can perform an action that opens another screen.

Research by Google shows that, when faced with an unfamiliar screen, many users rely on the FAB to navigate. Thus, it is a way to prioritize the most important action a designer wants users to take [3]. Further research has confirmed the positive effect of the FAB on user experience. A qualitative study of 40 users indicated that users preferred the FAB over the conventional navigation component, a “+” icon placed in the top right of the screen. Once users had successfully finished a task using the FAB, they were able to use it more effectively than the toolbar alternative [4]. However, some authors claim that, like the hamburger menu, the FAB solves the designer’s problem, not the users’ [5]. Adding a FAB results in a user experience (UX) that is less immersive, which particularly affects apps (or screens) that aim to provide an immersive experience [6]. An example can be found in Google’s new Photos application. Additionally, the FAB takes up screen space and can divert users from the content. It is intended to grab the user’s visual attention with its prominent, grid-breaking design, but in certain cases its presence can distract from the main content. The Gmail application is a case in point. In Gmail, the FAB is the “compose” button, suggesting that the primary activity users perform is composing an email. Various studies have shown that at least 50% of emails are now read on a mobile device; however, few if any demonstrate a similar shift in the writing of messages. Specifically, most mobile users read their emails on the go, and a FAB can annoy them because it hides important content [6,7]. This raises the question of what happens if the “advanced activity” is simply not used that frequently by users.

Even though those findings are based on data collected from real users, thorough research into the FAB user experience has not been conducted. The FAB’s greatest advantage is that it is a standardized UI navigation pattern, and standardized navigation patterns considerably reduce users’ cognitive load. The lower the cognitive load, the greater the ease of use. Also, following Google’s Material Design guidelines on FAB functionality allows Android application developers and designers to improve design consistency. However, as explained before, the FAB works better for some UIs than for others. FAB navigation is recommended if the user needs quick access to a specific section or functionality of an app regardless of hierarchy, or if the space available for such navigation is limited. When a specific functionality or page is used more frequently than other parts of the app, the FAB serves as a shortcut that surfaces that choice and shortens the path for users. In other cases, an expandable FAB makes the navigational transition from state to state more fluid. In response to interaction, an animated FAB triggers and extends a series of actions by expanding and collapsing in a morphing animation.

Previous research has confirmed that usage of appropriate animation-function relations can be beneficial to the static interface and have an important role in communicating UI element functions [8]. Animation in graphical UI helps improve the user experience by attracting and directing attention, reducing cognitive load, and preventing change blindness [9]. Movement signals can bridge micro-interaction gaps by acknowledging input immediately and animating in ways that closely resemble direct control [10]. A designer should also consider animation potential for offloading some of the cognitive burden associated with deciphering moments of change from higher cognitive centers to the periphery of the nervous system [11]. Research has confirmed that the properties of the animation of UI, such as image realism, transition smoothness, and interactivity style, have a direct impact on a user’s understanding of the state changes [12]. Animation can also add aesthetic pleasure, which contributes to the overall user experience [13]. Researchers have concluded that, if used correctly, an animated FAB can be an astoundingly helpful pattern for the end-user [14].

However, there are occasions when a FAB detracts from usability rather than enhancing it, and an alternative is needed. According to Cassandra Naji, there are at least three alternatives to the FAB. The most obvious ones, the bottom or top toolbars, can be used instead of the FAB in apps that have more than one primary action. Users can easily locate specific features and content using a flat navigation structure, such as navigation tabs with icons that provide a clear indication of the current location [15]. Therefore, if a designer does decide to replace a FAB with a toolbar, care should be taken not to mix actions with navigation in the bar and to ensure that recognized, consistent icons are used [16].

Based on the previous findings and recommendations, the aim of this paper is to investigate whether the Quality of Experience (QoE) of the FAB surpasses that of a toolbar alternative and whether the FAB really is a natural cue to users for what to do next. Alben’s definition of “experience” encompasses all aspects of how people use an interactive product: how it feels in their hands, how well they understand how it functions, how they feel about it while they are using it, how well it fulfills their needs, and how well it fits into the whole setting in which they are using it [17]. In recent years, researchers have developed a scientific framework to measure and quantify the quality of user experience. Nielsen considers usability one of the basic aspects of user experience that needs to be measured. Usability refers to the ease of access or use of a product or service [18]. The level of usability is determined not only by design, but also by the system features and the context of the user (the user’s goals in interacting with the system and the user’s environment). QoE is the degree of delight or annoyance of the user of an application or service, resulting from the fulfilment of their expectations. To determine the impact of the FAB on QoE, the outcome of the user’s comparison and judgment process should therefore be measured [19]. Content, device properties, network speed and stability, user expectations and goals, and context of use can all influence QoE in the context of communication services [20]. Because QoE expresses user satisfaction both objectively and subjectively, the QoE of a FAB and of a toolbar alternative can be compared by combining qualitative and quantitative research methods.

Our assumption is that a visually prominent UI element, such as the FAB, might create positive UX effects if users are pleased to use an app because they find it visually attractive. Also, the FAB motion behaviors, which include morphing, launching, and transferring anchor point, could help to surface a set of actions, such as a menu that does not really belong at the top of the screen but is still important. To test these assumptions, we conducted a series of user studies on two variants of the same application prototype, namely an address book contact application (Document S1). As mentioned in previous research, an address book application is found on every mobile phone. To separate the influence of motion on the usability results and on the overall QoE measure, the studies were divided into two distinct experiments. Three hypotheses were proposed:

Hypothesis 1 (H1). Usability of the UI prototype with FAB surpasses usability of a toolbar alternative.

Hypothesis 2 (H2). FAB animation will have a positive effect on the usability perception of the UI prototype compared to the static alternative.

Hypothesis 3 (H3). FAB animation will have a positive effect on the overall QoE of the same prototype.

2. Methods

To measure the usability of the prototypes (Hypotheses 1 and 2) and see which one (prototype A or prototype B) performs better on the immediate action, we conducted an A/B test [21]. According to Nielsen, the best results come from running many small tests with a limited number of participants (no more than 5 users) [22]. The underlying formula is only relevant for testing users with similar profiles, that is, users who will use the application or service in similar ways. In our case, the targeted users were familiar with new technologies and used mobile phones more frequently than other groups (e.g., children or the elderly).
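The formula mentioned above is presumably the problem-discovery model of Nielsen and Landauer, in which the share of usability problems found by n test users is 1 − (1 − L)^n, where L is the proportion of problems a single user uncovers. The following is a minimal sketch under that assumption. The default L = 0.31 is the average Nielsen reports across his own projects, not a value measured in this study, and the function name is hypothetical.

```python
def share_of_problems_found(n_users: int, single_user_rate: float = 0.31) -> float:
    """Expected share of usability problems found by n_users test users.

    Implements 1 - (1 - L)^n, where L (single_user_rate) is the assumed
    proportion of problems that one user uncovers.
    """
    if n_users < 0:
        raise ValueError("n_users must be non-negative")
    if not 0.0 <= single_user_rate <= 1.0:
        raise ValueError("single_user_rate must lie between 0 and 1")
    return 1.0 - (1.0 - single_user_rate) ** n_users


# Print the expected share for a few group sizes.
for n in (1, 3, 5, 15):
    print(n, round(share_of_problems_found(n), 3))
```

With L = 0.31, five users are expected to uncover roughly 84% of the problems, which is the basis for the recommendation of small test groups.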

According to the ISO 9241-11 standard, usability is defined as “the extent to which a product can be used by specified users to achieve specified goals with effectiveness, efficiency, and satisfaction in a specified context of use” [23]. This means that usability is not a single property but rather a combination of factors. Metrics that should be included in usability tests are suggested in the ISO/IEC 9126-4 Metrics [24].

Effectiveness: The accuracy and completeness with which users accomplish tasks and achieve defined goals. It can be calculated by measuring the task completion rate. Effectiveness can be represented as a percentage by using simple equations (Equations (1) and (2)).

Another approach to measure effectiveness is by counting the number of errors the participant makes during the attempt to complete a task.
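As an illustration of the completion-rate calculation, effectiveness can be computed as the number of successfully completed tasks divided by the total number of tasks undertaken, multiplied by 100. The sketch below assumes this common definition. The function name and the sample results are hypothetical and are not data from this study.

```python
def completion_rate(results):
    """Effectiveness as a percentage of successfully completed tasks.

    results[j][i] is 1 if user j completed task i successfully, else 0.
    """
    attempted = sum(len(user_results) for user_results in results)
    if attempted == 0:
        raise ValueError("no task attempts recorded")
    completed = sum(sum(user_results) for user_results in results)
    return 100.0 * completed / attempted


# Hypothetical outcome: five users, two tasks each, one failed attempt.
sample = [[1, 1], [1, 1], [1, 0], [1, 1], [1, 1]]
print(completion_rate(sample))
```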

Efficiency: The resources expended in relation to the accuracy and completeness with which users achieve goals. Efficiency is measured in terms of task time, that is, the time (in seconds or minutes) the participant takes to successfully complete a task. Efficiency can then be calculated in one of two ways:

(a) Time-Based Efficiency.

The time taken to complete a task is calculated by subtracting the start time from the end time. Time-based efficiency can then be represented as in Equation (1):

$$\text{Time-based efficiency} = \frac{\sum_{j=1}^{R} \sum_{i=1}^{N} \frac{n_{ij}}{t_{ij}}}{NR} \tag{1}$$

where $N$ is the total number of tasks, $R$ is the number of users, $n_{ij}$ is the result of task $i$ by user $j$ ($n_{ij} = 1$ if the user successfully completes the task and $n_{ij} = 0$ if not), and $t_{ij}$ is the time spent by user $j$ to complete task $i$, in seconds. If the task is not successfully completed, the time is measured until the moment the user quits the task.

(b) Overall Relative Efficiency

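The overall relative efficiency is the ratio of the time taken by the users who successfully completed the task to the total time taken by all users, as given in Equation (2), where the symbols have the same meaning as in Equation (1):

$$\text{Overall relative efficiency} = \frac{\sum_{j=1}^{R} \sum_{i=1}^{N} n_{ij} t_{ij}}{\sum_{j=1}^{R} \sum_{i=1}^{N} t_{ij}} \times 100\% \tag{2}$$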
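The two efficiency measures can be sketched in a few lines of code. The example below assumes the usual formulation: time-based efficiency averages n_ij / t_ij over all N tasks and R users, and overall relative efficiency divides the time spent on successful attempts by the total time spent. The function names and sample values are hypothetical and are not measurements from this study.

```python
def time_based_efficiency(n, t):
    """Average number of goals achieved per second.

    n[j][i] is 1 if user j completed task i successfully, else 0.
    t[j][i] is the time in seconds user j spent on task i (until
    completion, or until the user quit the task).
    """
    num_users = len(n)
    num_tasks = len(n[0])
    total = sum(
        n[j][i] / t[j][i]
        for j in range(num_users)
        for i in range(num_tasks)
    )
    return total / (num_tasks * num_users)


def overall_relative_efficiency(n, t):
    """Share of total time (in percent) spent on successful attempts."""
    successful_time = sum(
        n_ij * t_ij
        for n_row, t_row in zip(n, t)
        for n_ij, t_ij in zip(n_row, t_row)
    )
    total_time = sum(sum(t_row) for t_row in t)
    return 100.0 * successful_time / total_time


# Hypothetical data: two users, two tasks; user 2 quits task 2 after 40 s.
n = [[1, 1], [1, 0]]
t = [[10.0, 20.0], [20.0, 40.0]]
print(time_based_efficiency(n, t))        # about 0.05 goals per second
print(overall_relative_efficiency(n, t))  # about 55.6 percent
```

Time-based efficiency rewards fast successful attempts, whereas overall relative efficiency shows how much of the total testing time led to a completed task.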

Satisfaction: The comfort and acceptability of use. The A/B testing method is a useful supplement to qualitative studies such as surveys, as it is a quick and reliable tool for measuring usability.

Task-level satisfaction was measured by giving a formalized questionnaire to each test participant at the end of each test session. We used the Single Ease Question (SEQ), a one-item questionnaire, since it is short and easy to respond to, administer, and score.

Test-level satisfaction was measured by the System Usability Scale (SUS), which consists of a 10-item questionnaire with five response options for respondents, from strongly agree to strongly disagree [25,26].
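The scoring of the SUS questionnaire is not spelled out here, so the following sketch assumes the standard rule: responses are coded from 1 (strongly disagree) to 5 (strongly agree), odd-numbered items contribute the response minus 1, even-numbered items contribute 5 minus the response, and the sum is multiplied by 2.5 to give a score between 0 and 100. The function name and the sample responses are hypothetical.

```python
def sus_score(responses):
    """Compute the SUS score (0 to 100) for one participant.

    responses is a list of ten integers from 1 (strongly disagree)
    to 5 (strongly agree), in questionnaire order.
    """
    if len(responses) != 10:
        raise ValueError("SUS requires exactly 10 responses")
    if any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("each response must be an integer from 1 to 5")
    total = 0
    for item_number, response in enumerate(responses, start=1):
        if item_number % 2 == 1:
            total += response - 1   # positively worded (odd) items
        else:
            total += 5 - response   # negatively worded (even) items
    return total * 2.5


# Hypothetical participant who answers neutrally to every item.
print(sus_score([3] * 10))
```

Scores from individual participants are typically averaged per prototype before the two variants are compared.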

Purely usability-oriented test approaches are, in general, not sufficient for measuring users’ satisfaction while using an app, which calls for considering QoE as a multidimensional concept (Hypothesis 3). User experience designers are concerned with different and strongly interrelated factors that may influence the QoE of an application and its animation, encompassing human, system, and context influence factors. The aesthetics and semantics of navigational design are clearly important as well, since they affect the perception of the product. They are part of the basis for achieving higher user delight when using a given product [27].

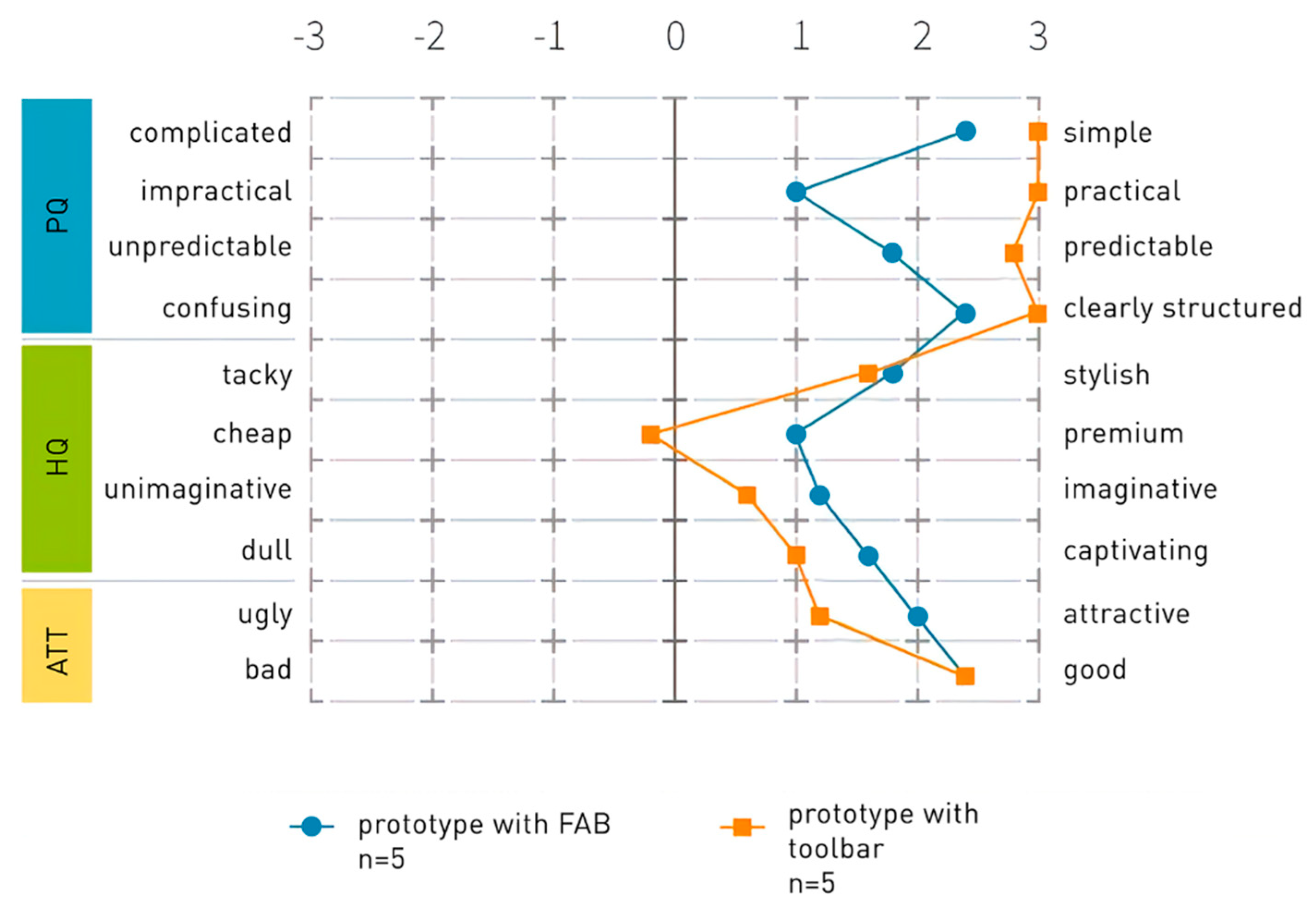

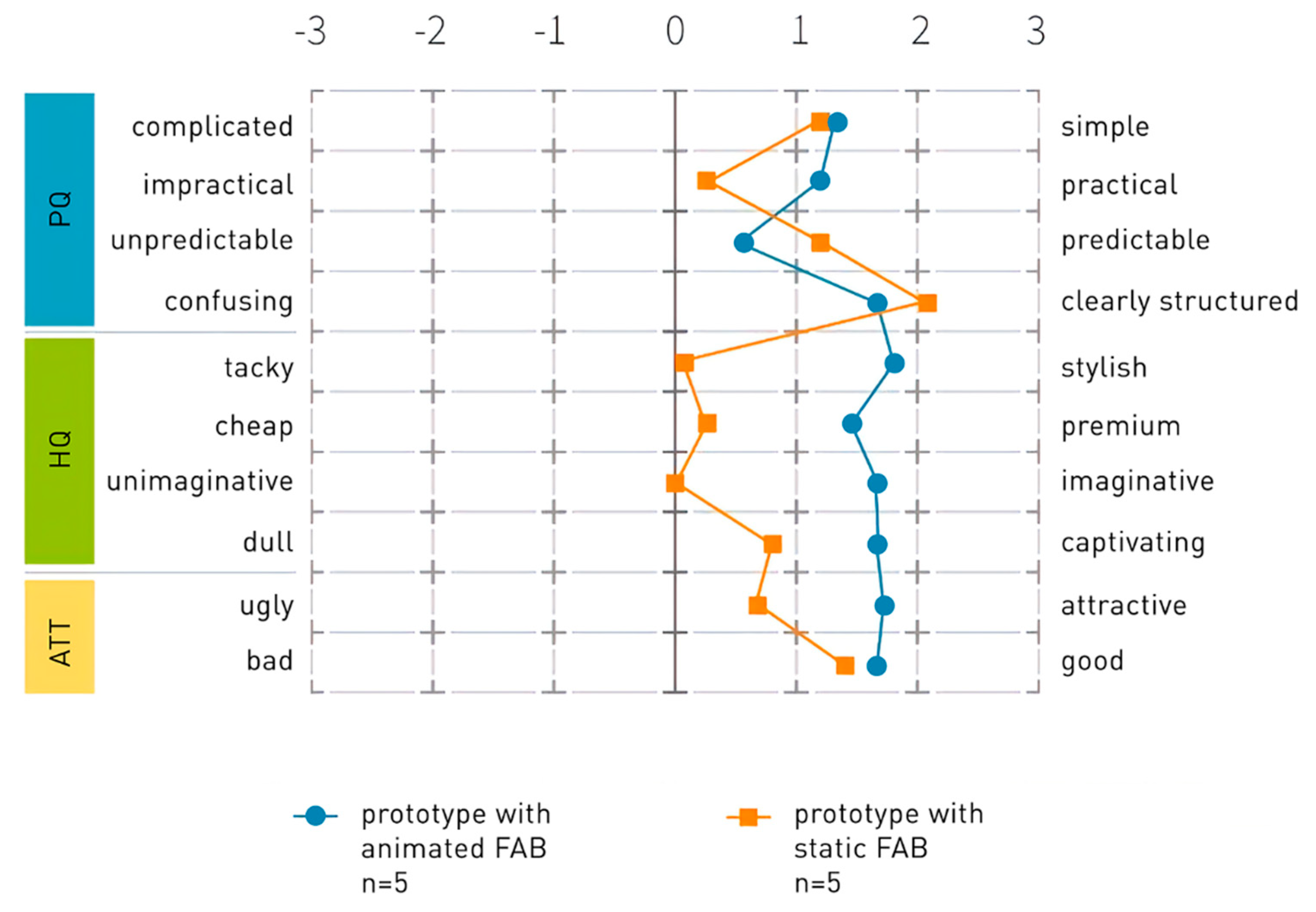

To understand how users personally rate the semantics of both interactive prototypes, the online tool AttrakDiff was used. The best-suited module was the comparison between product A and product B. In AttrakDiff, a semantic differential method is used to evaluate the perceived pragmatic quality, the hedonic quality, and the attractiveness of interactive products. For the evaluation of the interactive prototypes, 10 semantic differential pairs were used with a 7-point Likert scale covering the range between the two extremes (“simple-complicated”, “attractive-ugly”, “practical-impractical”, “stylish-tacky”, “predictable-unpredictable”, “premium-cheap”, “imaginative-unimaginative”, “good-bad”, “clearly structured-confusing”, “captivating-dull”). Each set of adjective items is ordered into a scale of intensity [28].
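A semantic differential comparison of this kind can be summarized by the mean rating per word pair for each product. The sketch below assumes that the 7-point scale is coded from −3 (negative pole) to +3 (positive pole). The function names and ratings are hypothetical, and the code does not reproduce the AttrakDiff tool’s own analysis.

```python
from statistics import fmean


def word_pair_means(ratings):
    """Mean rating per word pair.

    ratings maps a word pair (e.g. "simple-complicated") to the list of
    participant ratings, each coded from -3 to +3.
    """
    for pair, values in ratings.items():
        if not values:
            raise ValueError(f"no ratings for {pair!r}")
        if any(not -3 <= v <= 3 for v in values):
            raise ValueError(f"rating out of range for {pair!r}")
    return {pair: fmean(values) for pair, values in ratings.items()}


def mean_differences(ratings_a, ratings_b):
    """Difference of means (product B minus product A) per shared word pair."""
    means_a = word_pair_means(ratings_a)
    means_b = word_pair_means(ratings_b)
    return {pair: means_b[pair] - means_a[pair] for pair in means_a if pair in means_b}


# Hypothetical ratings from three participants for two word pairs.
product_a = {"simple-complicated": [2, 3, 1], "stylish-tacky": [0, -1, 1]}
product_b = {"simple-complicated": [1, 1, 1], "stylish-tacky": [2, 3, 1]}
print(mean_differences(product_a, product_b))
```

A positive difference means that product B was rated closer to the positive pole of that word pair than product A.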

Aesthetic appraisal (low versus high) of the navigational design of both prototypes, and overall satisfaction with it (following the procedure published by Jones), were evaluated by collecting subjective user feedback at the end of the experiment.

3. Tasks and Procedures

For the first experiment, two high-fidelity interactive UI prototypes of the address book contact application were created using the digital product design platform InVision [29]: prototype A, with a toolbar containing four icon actions (adding a new contact, adding a new group of contacts, contact sharing, and contact import and export), and prototype B, with a FAB instead of a toolbar and the same set of actions (Document S1).

For the second experiment, two videos were created, showing the process of usage of prototype B. The aim of the experiment was to test the UX of a static FAB versus an animated FAB and determine which influence factors impact the QoE rating the most. To provide a comparable experience, the user actions were represented with an animated cursor on the screen. The first video, showing the user action of adding a new contact, was titled B1. In this video, the UI was not animated. The second video, titled B2, presents the animated FAB and animated transitions between screens (Video S1B1, Video S1B2).

To avoid personal color preference in user experience, most of the UI elements on the prototype were neutral in color (light and dark grey), except for the toolbar and FAB, which were blue. This color scheme is suggested by Google Material design principles. The same principles were followed in app design and FAB animation. All the animations, similarly to the previous research examples, were created following the principles of UI animations: easing, follow through, and overlapping action, offset and delay, transformation, parenting, cloning, and obscuration.

Both experiments were conducted in the Faculty of Graphic Arts user experience research laboratory. Before testing, participants were informed about the testing procedure, and written consent was obtained for the use of the testing results for research purposes. Participants were free to quit a task whenever they wished.

3.1. Experiment No 1

In the first experiment, two groups of users (A and B) were tested. The first group consisted of five students (three women and two men), aged 20–28. The second group also consisted of five students (three men and two women), aged 19–31.

The interactive prototype A was shown on the computer screen to the first group of participants, and prototype B to the second. The participants were informed about the objectives of the test, a procedure that they should follow, and were asked to solve two simple tasks. Tasks were just one sentence long and consisted of the interactions that need to be performed by the participants.

Task 1: Add a new contact to an address book:

The tester measured the time needed for task completion from the start until the “save” command was performed. After completion, the task difficulty questionnaire was given to the participant. Then, the second task was presented.

Task 2: Share your contact using Gmail:

Completion time was measured again, and two questionnaires were given to the participant: task difficulty and SUS questionnaires.

We conducted two kinds of surveys:

After users finished a task (irrespective of whether they managed to achieve its goal or not), they were immediately given a questionnaire that measured how difficult the task was. The questionnaire consisted of one question in the form of a Likert scale rating, and the goal was to provide insight into task difficulty as seen from the participants’ perspective [30].

At the end, the participants were asked to observe both prototypes and judge, on a scale from 1 to 5, which one they perceived as aesthetically superior. They were also asked to explain their opinion in a few sentences [31].

3.2. Experiment No 2

In the second experiment, one group of users was tested. The group consisted of fifteen students (eight women and seven men), aged 18–35. Both videos (static FAB, B1, and animated FAB, B2) were presented to this third group of participants. To find out how the pragmatic and hedonic qualities influence the subjective perception of the attractiveness of both prototypes, participants were asked to fill out the AttrakDiff form after watching the videos.

5. Discussion

The theoretical framework showed that usability, as part of a larger framework called user experience, is key to the success of mobile applications. Usable mobile applications are perceived by users as easy to learn, user-friendly, and less time-consuming when completing tasks. Researchers have identified a direct link between mobile application usability and user experience, but the differences between the two need to be clarified. Since user experience encompasses many aspects of the user’s interaction with the product beyond its functional scope, qualitative research must uncover the meaning and interpretation of the experience and how this meaning organizes the user’s actions. Therefore, a thorough understanding of the most important influence factors must be attained, with the aim of enhancing overall user satisfaction with the application. Based on previous research, we identified key quality indicators and corresponding quality dimensions for the evaluation of user experience. The experience was evaluated during structured activities in two experiments, and data were collected as a means of analyzing user performance and achievements, as well as satisfaction. We combined quantitative and qualitative measurements of changes in user performance while using two different navigational designs, and applied an evaluation method that records the perceived pragmatic quality, the hedonic quality, and the attractiveness of each design solution.

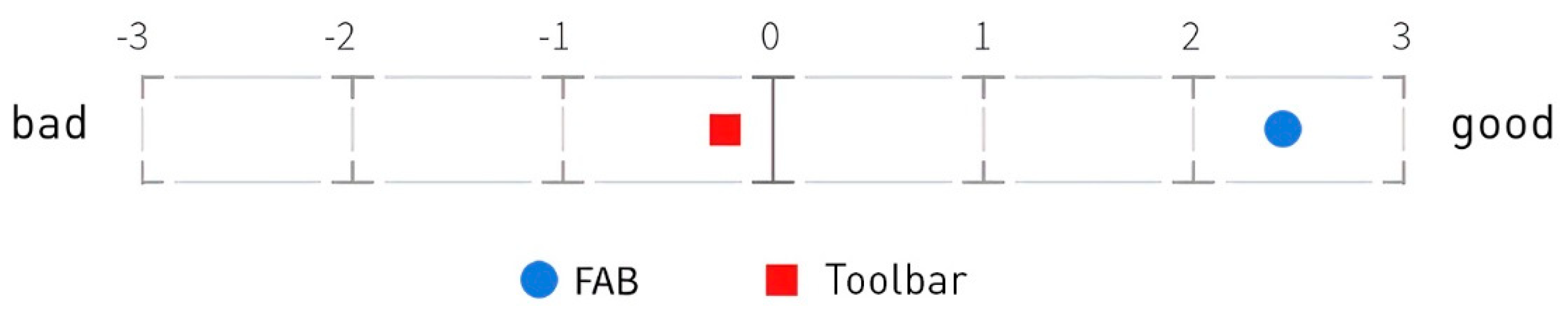

The analysis of the results of the two experiments in terms of usability showed a clear advantage for the toolbar prototype. For that reason, Hypothesis 1 was rejected. A toolbar is a better solution in terms of functionality and ease of use. The AttrakDiff study confirmed that the toolbar prototype is simpler, more practical, more predictable, and more clearly structured than the FAB prototype. However, the FAB solution was rated higher in terms of hedonic quality, attractiveness, aesthetics, and overall rating. Therefore, users would prefer this UI navigational design to be included in their mobile phone applications (Figure S1).

The second part of the experiments, which compared static to animated FABs, showed that the static design solution was perceived as more pragmatic and task oriented. Therefore, the second hypothesis can be rejected as well.

The animated FAB was perceived as more interesting, creative, stylish, and desirable. From these results, we can conclude that the third hypothesis is confirmed. Earlier studies showed that hedonic and pragmatic qualities are perceived consistently and independently of one another and that both contribute equally to the rating of attractiveness, which this study confirmed.