A Dynamic Application-Partitioning Algorithm with Improved Offloading Mechanism for Fog Cloud Networks

Abstract

1. Introduction

- How should the data flow and data processing be designed in a fog cloud paradigm for workflow IoT applications? The requirements differ from those of the traditional data flow and data processing mechanisms that many cloud architectures have adopted to process real-time data [17].

- How can local tasks be assigned to devices without degrading application performance, while keeping device energy consumption under a given threshold value?

- How can remote tasks be assigned to fog nodes efficiently, with minimal consumption of fog resources and without degrading the quality of experience (QoE) of the application?

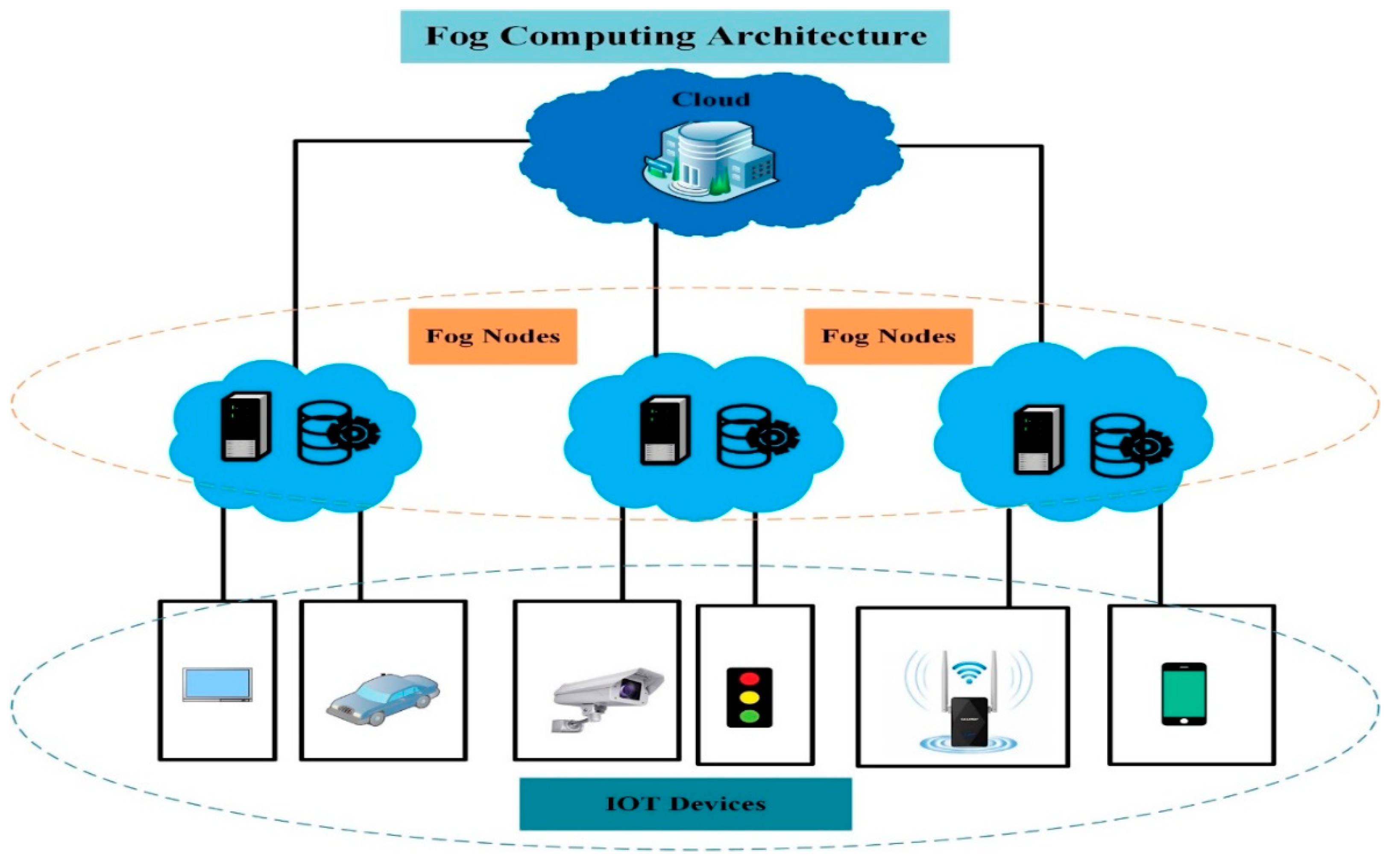

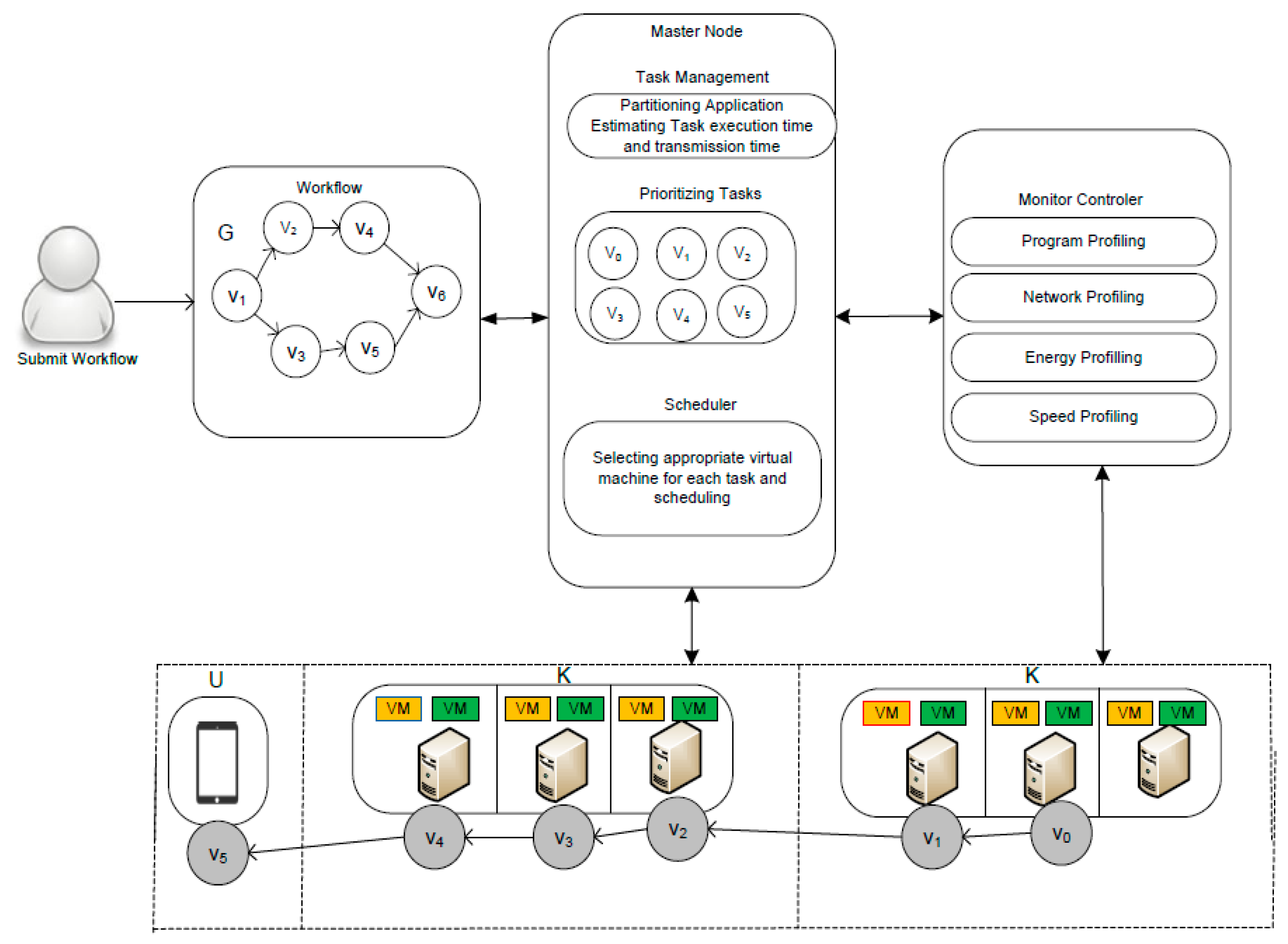

- This paper proposes a new fog cloud architecture (FCA) that efficiently manages the data flow and data processing mechanism for all IoT applications without loss of generality. FCA exploits several profiling technologies to regularly monitor overall system performance in terms of resource utilization and energy management. The proposed FCA is described in the Proposed Model section.

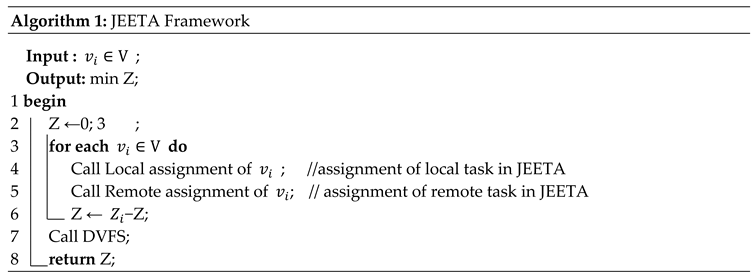

- A joint energy efficient task assignment (JEETA) method is proposed to cope with the task assignment problem in the fog cloud environment.

- To minimize the total energy consumption of the entire system, a dynamic application partitioning task assignment algorithm (DAPTS) is presented. DAPTS determines how local tasks are assigned to the devices and remote tasks to the fog nodes.

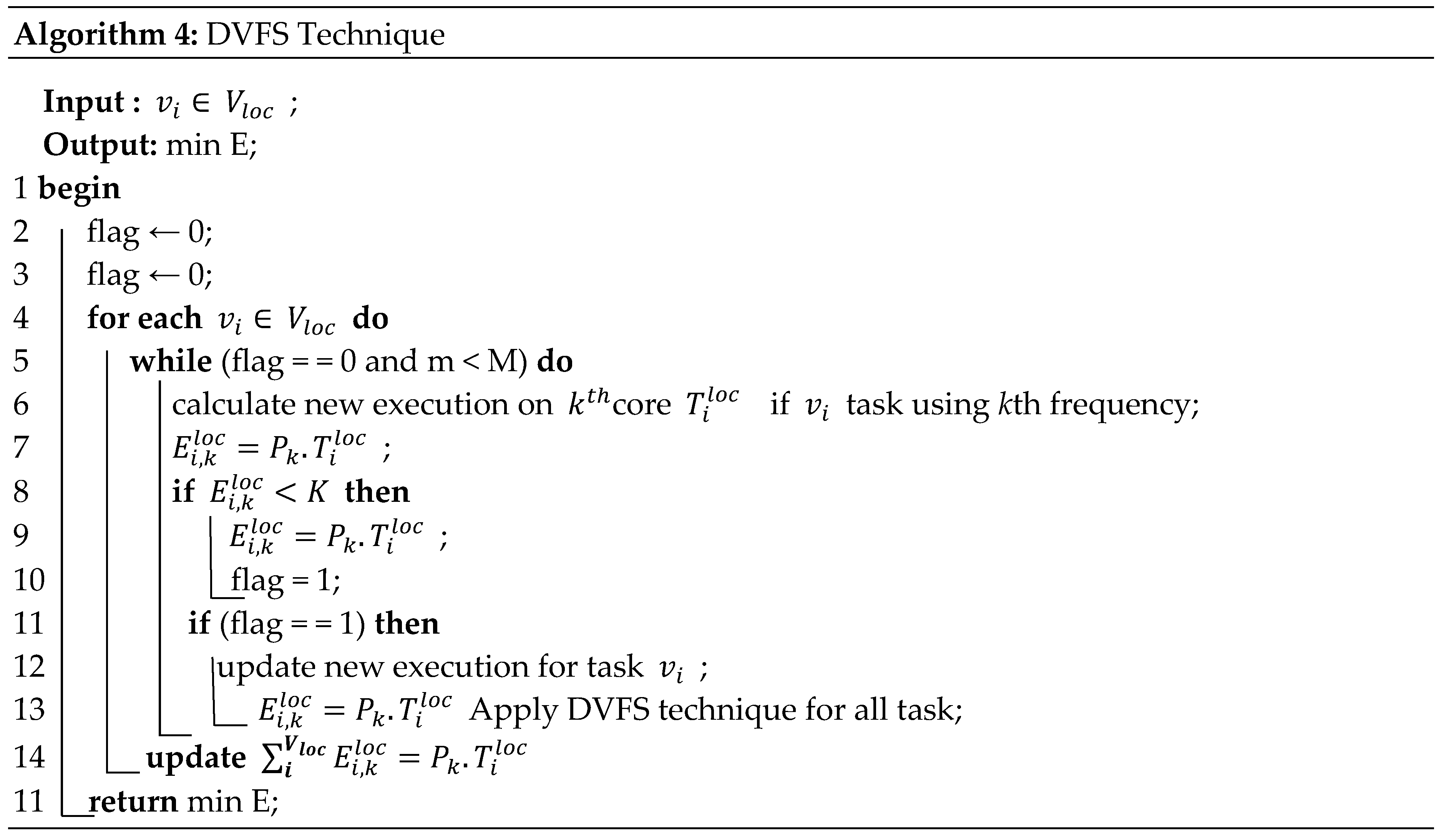

- DAPTS exploits the dynamic voltage and frequency scaling (DVFS) method to reduce the overall energy consumption of the FCA after the task assignment phase without degrading system performance.
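The last two contributions can be illustrated with a minimal sketch. It is not the DAPTS algorithm itself, whose details are not reproduced in this section. It assumes a simple greedy rule (run each task wherever the combined compute and transmission energy is lower) and a common textbook DVFS model in which dynamic power scales with the cube of the frequency, so compute energy scales with the square of the frequency scale factor. All names (`Task`, `Node`, `assign_tasks`, `dvfs_energy`) and all numeric values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    mi: float        # workload in million instructions (assumed unit)
    data_mb: float   # data uploaded if the task is offloaded (assumed)


@dataclass
class Node:
    mips: float      # processing speed, million instructions per second
    power_w: float   # active power draw in watts (assumed constant)


def assign_tasks(tasks, device, fog, uplink_mbps, tx_power_w):
    """Greedy placement: run each task where total energy is lower."""
    plan = {}
    for t in tasks:
        local_e = device.power_w * t.mi / device.mips
        tx_time_s = t.data_mb * 8 / uplink_mbps          # MB -> Mbit
        remote_e = tx_power_w * tx_time_s + fog.power_w * t.mi / fog.mips
        plan[t.name] = "local" if local_e <= remote_e else "fog"
    return plan


def dvfs_energy(energy_j, busy_time_s, deadline_s, min_scale=0.5):
    """Stretch execution into the slack before the deadline.

    Assumes energy ~ s**2 and time ~ 1/s for a frequency scale factor s.
    Returns (scaled energy, scaled execution time).
    """
    if busy_time_s >= deadline_s:
        return energy_j, busy_time_s                     # no slack to reclaim
    s = max(busy_time_s / deadline_s, min_scale)
    return energy_j * s ** 2, busy_time_s / s


# Hypothetical example values, not taken from the paper.
device = Node(mips=500, power_w=2.0)
fog = Node(mips=1000, power_w=3.0)
tasks = [Task("sense", mi=100, data_mb=1.0), Task("analyze", mi=2000, data_mb=1.0)]
plan = assign_tasks(tasks, device, fog, uplink_mbps=8.0, tx_power_w=1.0)
print(plan)
print(dvfs_energy(8.0, busy_time_s=4.0, deadline_s=8.0))
```

In this sketch the small task stays on the device because the transmission energy outweighs the saving, while the large task is offloaded. The DVFS step is applied only after placement, mirroring the order described in the bullets above.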

2. Related Work

3. Proposed Model

3.1. Offloading Cost

3.2. System Model and Problem Formulation

3.3. Application Energy Consumption

4. Proposed Algorithm DAPTS

4.1. Local Task Assignment

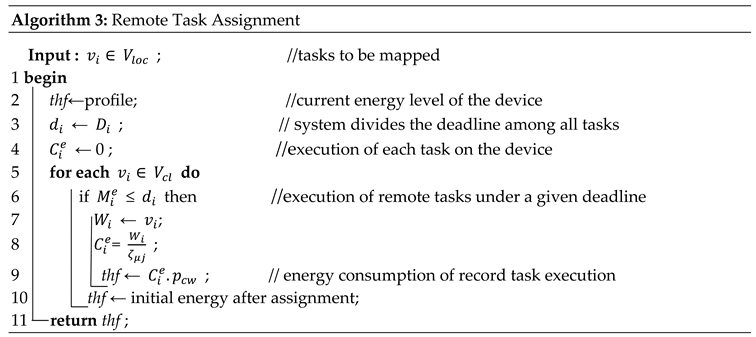

4.2. Remote Task Assignment

4.3. Energy Efficiency Phase

5. Performance Evaluation

5.1. Workflow Complex Application

5.2. Profiling Technologies

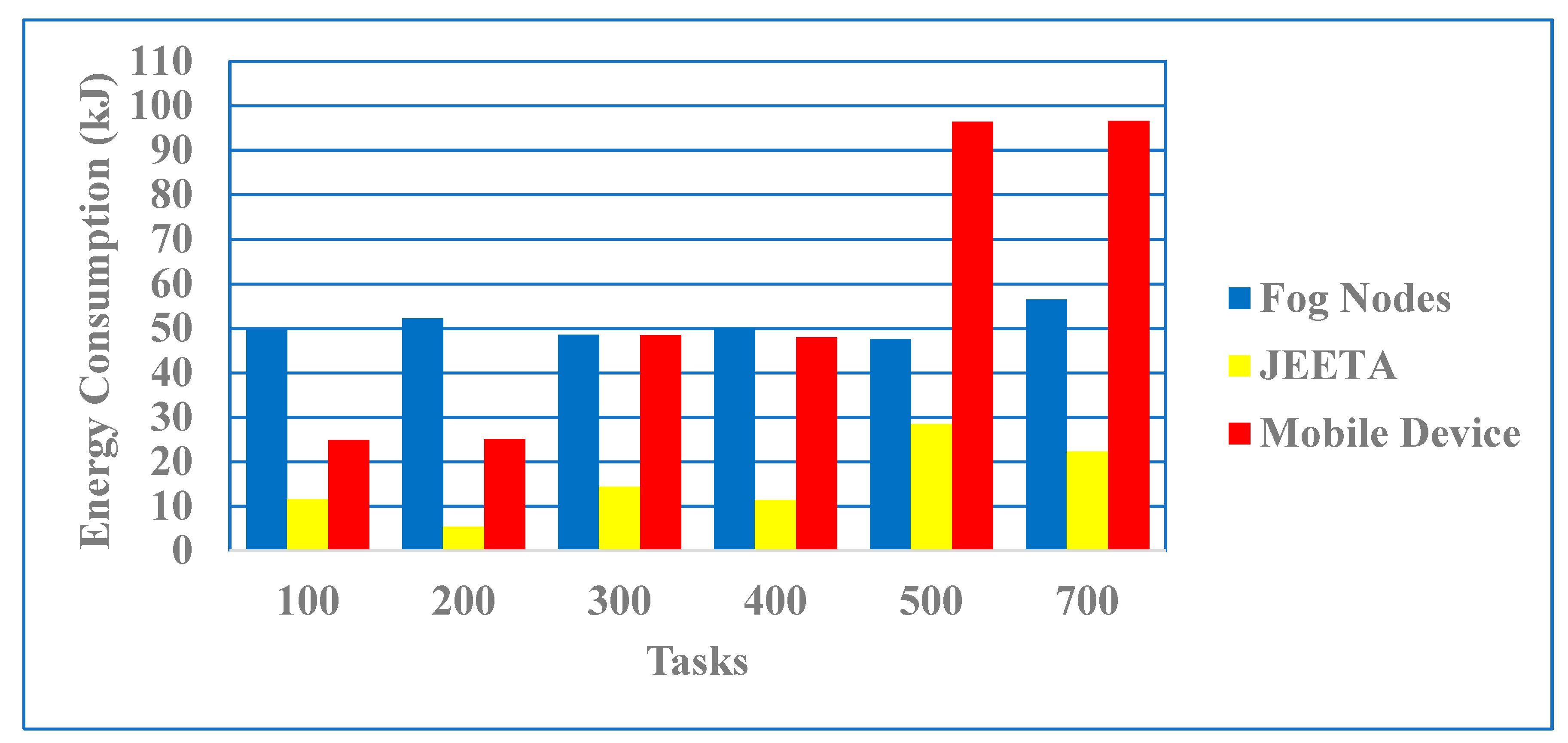

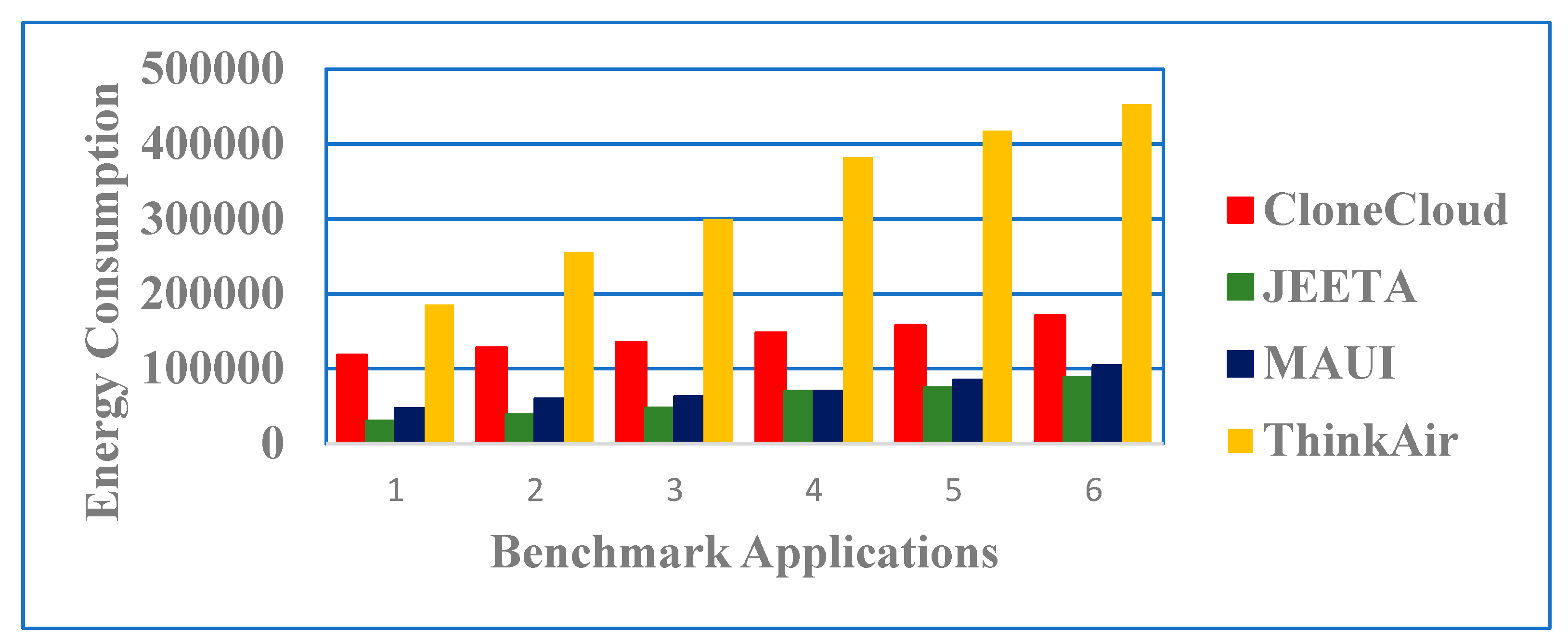

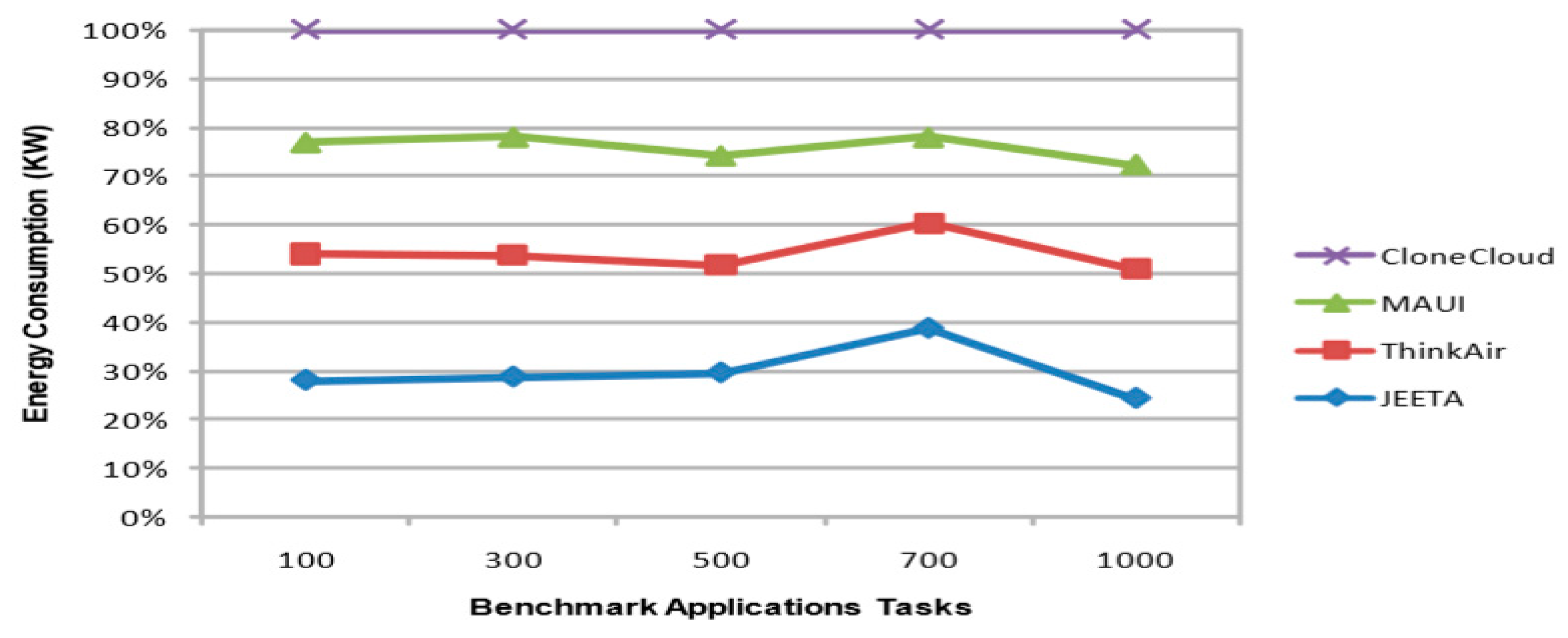

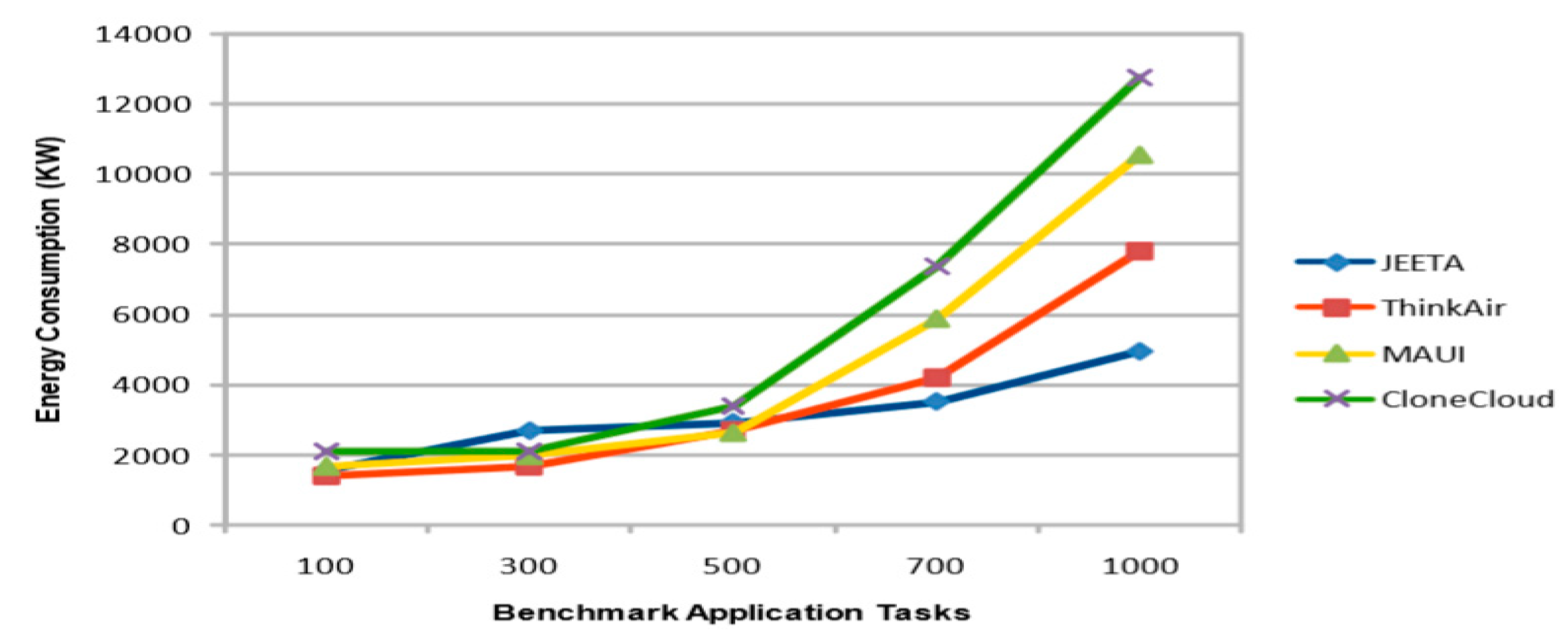

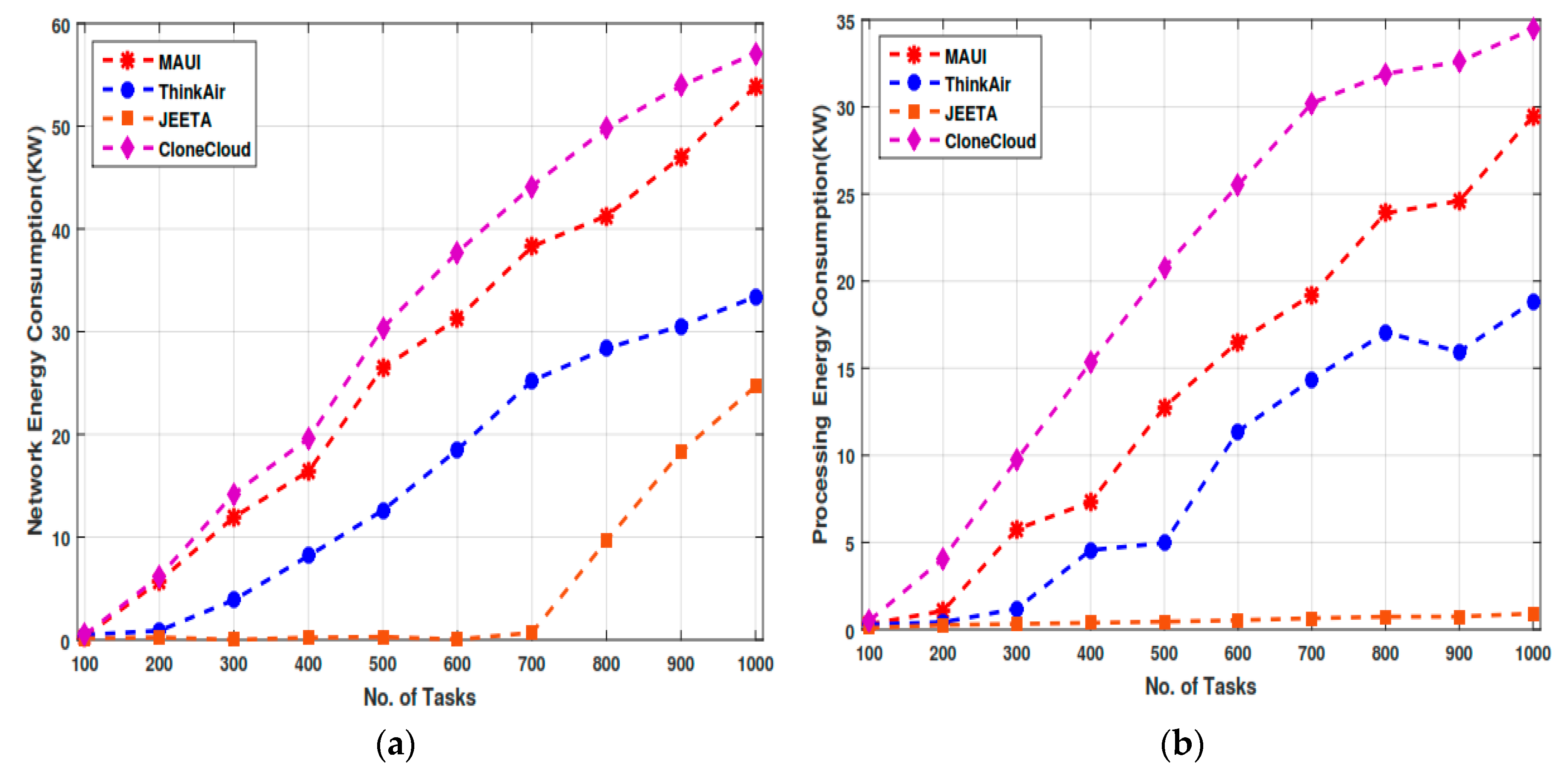

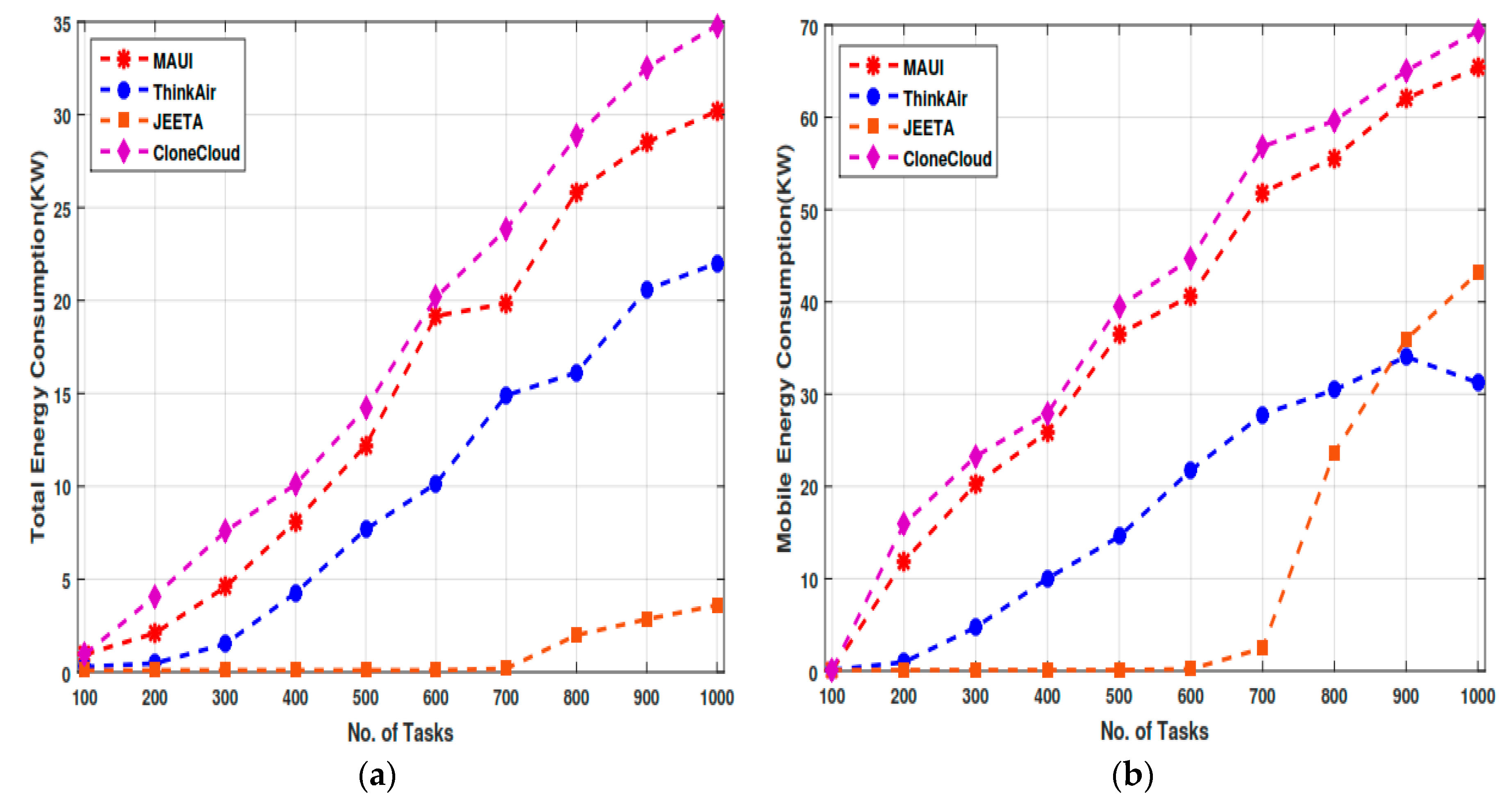

5.3. Total Energy Consumption in Scenario

6. Conclusions

Author Contributions

Funding

Acknowledgments

Conflicts of Interest

References

- Jeon, K.E.; She, J.; Soonsawad, P.; Ng, P. BLE Beacons for Internet of Things Applications: Survey, Challenges and Opportunities. IEEE Int. Things J. 2018, 5, 17685540.

- Laghari, A.A.; He, H.; Khan, A.; Kumar, N.; Kharel, R. Quality of Experience Framework for Cloud Computing (QoC). IEEE Access 2018, 6, 64876–64890.

- Laghari, A.A.; He, H. Analysis of Quality of Experience Frameworks for Cloud Computing. Int. J. Comput. Sci. Netw. Secur. 2017, 17, 228.

- Usman, M.J.; Ismail, A.S.; Abdul-Salaam, G.; Chizari, H.; Kaiwartya, O.; Gital, A.Y.; Abdullahi, M.; Aliyu, A.; Dishing, S.I. Energy-efficient Nature-Inspired techniques in Cloud computing datacenters. Telecommun. Syst. 2019, 71, 275–302.

- Mahmud, R.; Kotagiri, R.; Buyya, R. Fog Computing: A Taxonomy, Survey and Future Directions. In Internet of Everything: Algorithms, Methodologies, Technologies and Perspectives; Di Martino, B., Li, K.-C., Yang, L.T., Esposito, A., Eds.; Springer: Singapore, 2018; pp. 103–130.

- Kumar, V.; Laghari, A.A.; Karim, S.; Shakir, M.; Brohi, A.A. Comparison of Fog Computing & Cloud Computing. Int. J. Math. Sci. Comput. 2019, 1, 31–41.

- Asif-Ur-Rahman, M.; Afsana, F.; Mahmud, M.; Kaiser, M.S.; Ahmed, M.R.; Kaiwartya, O.; James-Taylor, A. Towards a Heterogeneous Mist, Fog, and Cloud based Framework for the Internet of Healthcare Things. IEEE Internet Things J. 2018.

- Aliyu, A.; Tayyab, M.; Abdullah, A.H.; Joda, U.M.; Kaiwartya, O. Mobile Cloud Computing: Layered Architecture. In Proceedings of the 2018 Seventh ICT International Student Project Conference, Nakhon Pathom, Thailand, 11–13 July 2018.

- Usman, M.J.; Ismail, A.S.; Chizari, H.; Abdul-Salaam, G.; Usman, A.M.; Gital, A.Y.; Kaiwartya, O.; Aliyu, A. Energy-efficient Virtual Machine Allocation Technique Using Flower Pollination Algorithm in Cloud Datacenter: A Panacea to Green Computing. J. Bionic Eng. 2019, 16, 354–366.

- Kaiwartya, O.; Abdullah, A.H.; Cao, Y.; Altameem, A.; Prasad, M.; Lin, C.T.; Liu, X. Internet of Vehicles: Motivation, Layered Architecture Network Model Challenges and Future Aspects. IEEE Access 2016, 4, 5356–5373.

- Zheng, J.; Cai, Y.; Wu, Y.; Shen, X. Dynamic Computation Offloading for Mobile Cloud Computing: A Stochastic Game-Theoretic Approach. IEEE Trans. Mobile Comput. 2018, 18, 771–786.

- Ravi, A.; Peddoju, S.K. Mobile Computation Bursting: An application partitioning and offloading decision engine. In Proceedings of the 19th International Conference on Distributed Computing and Networking, Varanasi, India, 4–7 January 2018.

- Aliyu, A.; Abdullah, A.H.; Kaiwartya, O.; Cao, Y.; Usman, M.J.; Kumar, S.; Lobiyal, D.K.; Raw, R.S. Cloud Computing in VANETs: Architecture, Taxonomy, and Challenges. IETE Tech. Rev. 2018, 35, 523–547.

- Cao, Y.; Song, H.; Kaiwartya, O.; Zhou, B.; Zhuang, Y.; Cao, Y.; Zhang, X. Mobile Edge Computing for Big Data-Enabled Electric Vehicle Charging. IEEE Commun. Mag. 2018, 56, 150–156.

- Jalali, F.; Hinton, K.; Ayre, R.S.; Alpcan, T.; Tucker, R.S. Fog Computing May Help to Save Energy in Cloud Computing. IEEE J. Sel. Areas Commun. 2016, 34, 1728–1739.

- Baccarelli, E.; Naranjo, P.G.V.; Scarpiniti, M.; Shojafar, M.; Abawajy, J.H. Fog of Everything: Energy-Efficient Networked Computing Architectures, Research Challenges, and a Case Study. IEEE Access 2017, 5, 9882–9910.

- Peng, M.; Yan, S.; Zhang, K.; Wang, C. Fog Computing based Radio Access Networks: Issues and Challenges. arXiv 2015, arXiv:1506.04233.

- Yuan, D.; Yang, Y.; Liu, X.; Chen, J. A data placement strategy in scientific cloud workflows. Future Gener. Comput. Syst. 2010, 26, 1200–1214.

- Gai, K.; Qiu, M.; Zhao, H. Energy-aware task assignment for mobile cyber-enabled applications in heterogeneous cloud computing. J. Parallel Distrib. Comput. 2018, 111, 126–135.

- Gai, K.; Qiu, M.; Zhao, H.; Liu, M. Energy-Aware Optimal Task Assignment for Mobile Heterogeneous Embedded Systems in Cloud Computing. In Proceedings of the 2016 IEEE 3rd international conference on cyber security and cloud computing, Beijing, China, 25–27 June 2015.

- Chen, X.; Pu, L.; Gao, L.; Wu, W.; Wu, D. Exploiting Massive D2D Collaboration for Energy-Efficient Mobile Edge Computing. IEEE Wirel. Commun. 2017, 24, 64–71.

- Souza, V.; Masip, X.; Marin-Tordera, E.; Ramírez, W.; Sanchez, S. Towards Distributed Service Allocation in Fog-to-Cloud (F2C) Scenarios. In Proceedings of the 2016 IEEE Global Communications Conference (GLOBECOM), Washington, DC, USA, 4–8 December 2016.

- Zhu, Q.; Si, B.; Yang, F.; Ma, Y. Task offloading decision in fog computing system. China Commun. 2017, 14, 59–68.

- Pu, L.; Chen, X.; Xu, J.; Fu, X. D2D Fogging: An Energy-Efficient and Incentive-Aware Task Offloading Framework via Network-Assisted D2D Collaboration. IEEE J. Sel. Areas Commun. 2016, 34, 3887–3901.

- Aliyu, A.; Abdullah, H.; Kaiwartya, O.; Usman, M.; Abd Rahman, S.; Khatri, A. Mobile Cloud Computing Energy-Aware Task Offloading (MCC: ETO); Taylor and Francis CRC Press: Gurgaon, India, 2016.

- Verma, J.K.; Kumar, S.; Kaiwartya, O.; Cao, Y.; Lloret, J.; Katti, C.P.; Kharel, R. Enabling Green Computing in Cloud Environments: Network Virtualization Approach Towards 5G Support. Trans. Emerg. Telecommun. Technol. 2018, 29, e3434.

- Yadav, R.; Zhang, W.; Kaiwartya, O.; Singh, P.; Elgendy, I.; Tian, Y.-C. Adaptive energy-aware algorithms for minimizing energy consumption and SLA violation in cloud computing. IEEE Access 2018, 6, 55923–55936.

- Yadav, R.; Zhang, W.; Li, K.; Liu, C.; Shafiq, M.; Karn, N. An adaptive heuristic for managing energy consumption and overloaded hosts in a cloud data center. Wirel. Netw. 2018.

- Vasile, M.-A.; Pop, F.; Tutueanu, R.-I.; Cristea, V.; Kołodziej, J. Resource-aware hybrid scheduling algorithm in heterogeneous distributed computing. Future Gener. Comput. Syst. 2015, 51, 61–71.

- Satyanarayanan, M.; Bahl, P.; Caceres, R.; Davies, N. The case for VM-based cloudlets in mobile computing. IEEE Pervasive Comput. 2009, 4, 14–23.

- Folkerts, E.; Alexandrov, A.; Sachs, K.; Iosup, A.; Markl, V.; Tosun, C. Benchmarking in the Cloud: What It Should, Can, and Cannot Be. In Technology Conference on Performance Evaluation and Benchmarking; Springer: Berlin/Heidelberg, Germany, 2012; pp. 173–188.

- Face-Recognition. Available online: http://darnok.org/programming/face-recognition/ (accessed on 24 June 2019).

- Health Application. Available online: https://github.com/mHealthTechnologies/mHealthDroid (accessed on 24 June 2019).

- Application. Available online: https://powertutor.org/ (accessed on 24 April 2019).

- Speed Test. Available online: www.speedtest.net/ (accessed on 24 June 2019).

- Khadija, A.; Gerndt, M.; Harroud, H. Mobile cloud computing for computation offloading: Issues and challenges. Appl. Comput. Inf. 2018, 14, 1–16.

| Notation | Description |
|---|---|
| N | Number of tasks v |
|  | Application partitioning factor during offloading |
|  | Deadline of the application |
|  | Speed rate of jth virtual machine VM |
|  | Speed rate of mobile processor |
|  | Execution cost of the task on cloudlet k |
|  | Execution cost of the task on mobile |
|  | Power consumption rate at cloudlet virtual machine VM |
|  | Power consumption rate at the mobile device |
|  | Assignment of task on virtual machine VM, j |
|  | Begin time of the task |
|  | Finish time of the task |
| G(V, E) | DAG call graph |

| Experiment Parameters | Values |
|---|---|
| User arrival time | 5 s |
| Languages | Java |
| Workload | A-G, Health-care |
| Experiment time | 12 h |
| Replication Repetition | 12 |
| No. of mobile devices | 100–1000 |
| Location user mobility | Trajectory function |
| No. of VMs | 10–200 |

| Workload | N |  |  |
|---|---|---|---|
| Healthcare | 825 | 5.8 | 700 |
| Augmented Reality | 525 | 4.8 | 800 |
| Business | 525 | 4.8 | 800 |
| 3D-Game | 325 | 2.8 | 1000 |
| Face-Recognition | 725 | 7.8 | 700 |
| Social Net | 625 | 9.8 | 800 |

| Fog Node VM | CORE | MIPS/CORE | Storage (GB) |
|---|---|---|---|
| VM1 | 1 | 200 | 200 |
| VM2 | 1 | 400 | 400 |
| VM3 | 1 | 600 | 600 |
| VM4 | 1 | 800 | 800 |
| VM5 | 1 | 1000 | 1000 |

| Public Cloud VM | CORE | MIPS/CORE | Storage (GB) |
|---|---|---|---|
| VM1 | 1 | 500 | 500 |
| VM2 | 1 | 1000 | 1000 |
| VM3 | 1 | 1500 | 1500 |
| VM4 | 1 | 2000 | 2000 |
| VM5 | 1 | 3000 | 3000 |
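As a rough illustration of how the VM tiers listed above could be used, the sketch below estimates the execution time of a task on each tier and picks the slowest VM that still meets a deadline, in the spirit of consuming as few fog resources as possible. The MIPS values are taken from the two VM tables; the task size, the deadline, and the helper names (`exec_time_s`, `slowest_vm_meeting`) are hypothetical, and the selection rule is an assumption rather than the paper's method.

```python
# MIPS per core for each VM tier, as listed in the fog and public cloud tables.
FOG_VM_MIPS = {"VM1": 200, "VM2": 400, "VM3": 600, "VM4": 800, "VM5": 1000}
CLOUD_VM_MIPS = {"VM1": 500, "VM2": 1000, "VM3": 1500, "VM4": 2000, "VM5": 3000}


def exec_time_s(task_mi, mips):
    """Execution time in seconds for a task of task_mi million instructions."""
    return task_mi / mips


def slowest_vm_meeting(task_mi, vms, deadline_s):
    """Name of the slowest VM that finishes within the deadline, or None."""
    feasible = [
        (mips, name)
        for name, mips in vms.items()
        if exec_time_s(task_mi, mips) <= deadline_s
    ]
    return min(feasible)[1] if feasible else None


# Hypothetical task: 1200 million instructions.
task_mi = 1200
for deadline in (2.0, 1.0):
    fog_choice = slowest_vm_meeting(task_mi, FOG_VM_MIPS, deadline)
    cloud_choice = slowest_vm_meeting(task_mi, CLOUD_VM_MIPS, deadline)
    print(f"deadline={deadline}s fog={fog_choice} cloud={cloud_choice}")
```

With the tighter deadline no fog VM is fast enough in this example, which is the kind of case where falling back to a public cloud VM would be considered.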

© 2019 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).


Abro, A.; Deng, Z.; Memon, K.A.; Laghari, A.A.; Mohammadani, K.H.; ul Ain, N. A Dynamic Application-Partitioning Algorithm with Improved Offloading Mechanism for Fog Cloud Networks. Future Internet 2019, 11, 141. https://doi.org/10.3390/fi11070141
