Dynamic Traffic Scheduling and Congestion Control across Data Centers Based on SDN

Abstract

1. Introduction

2. Related Work

2.1. Data Center Based on Traditional Network

2.2. Data Center Based on SDN

3. The Design and Implementation of DSCSD

3.1. System Model

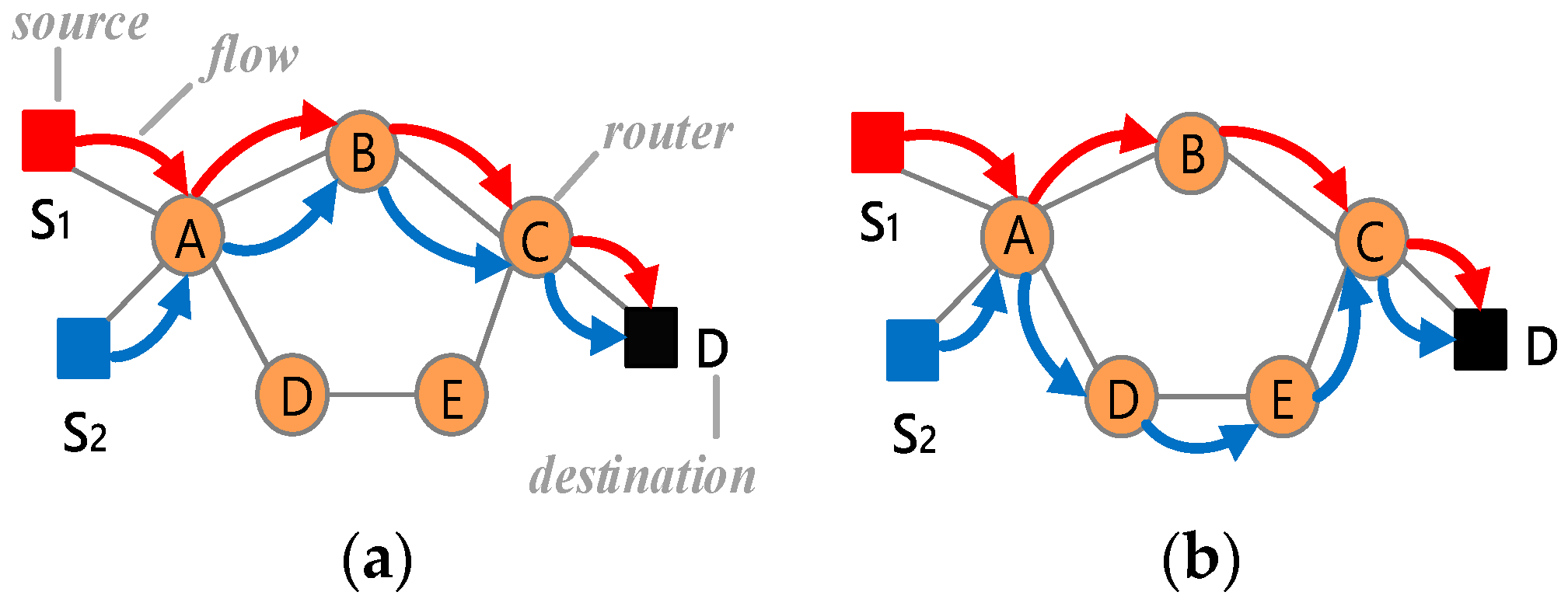

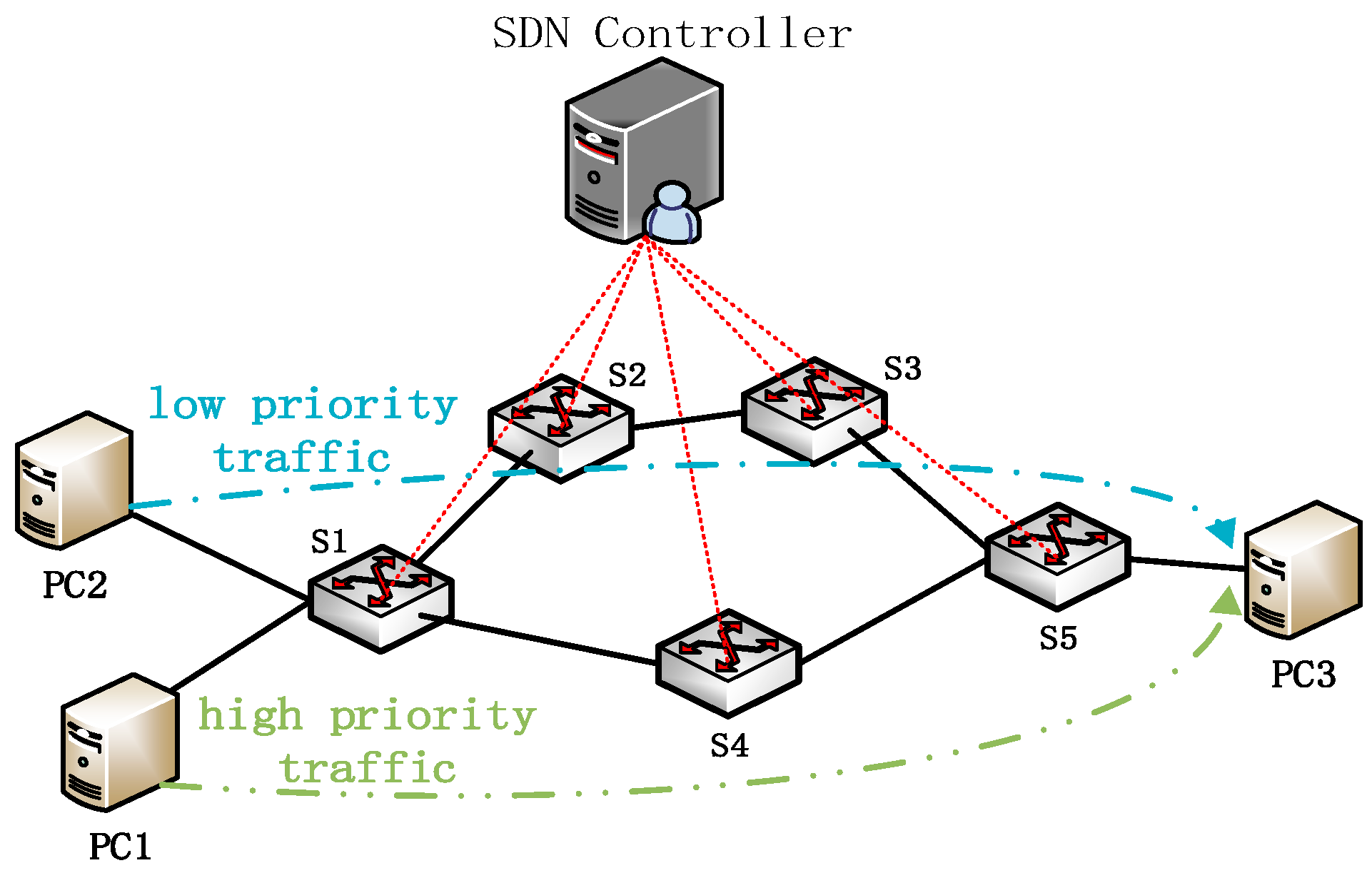

3.2. Dynamic Traffic Scheduling

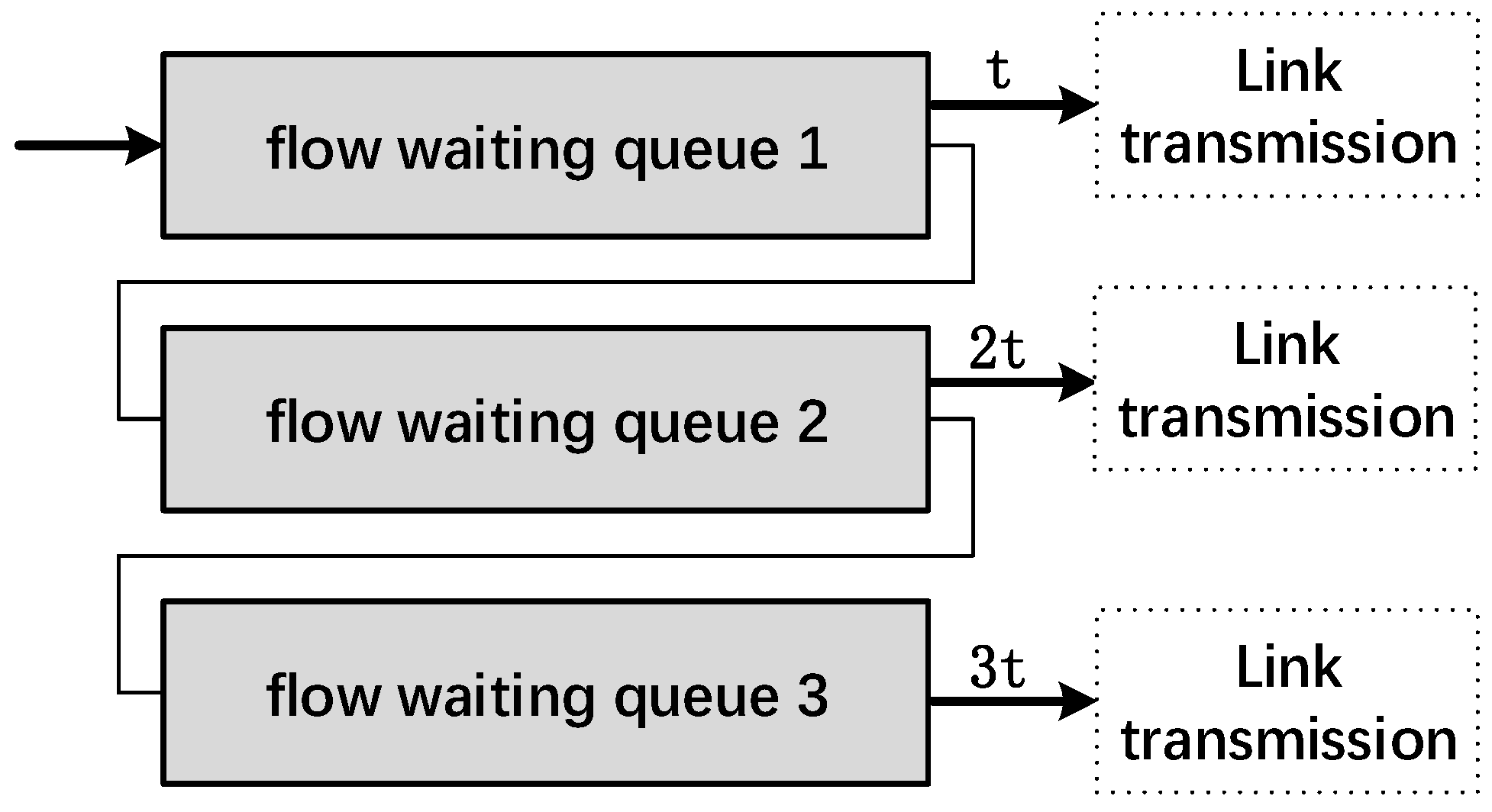

3.3. Congestion Control with Multilevel Feedback Queues

| Algorithm 1: The Algorithm of Multilevel Feedback Queue |

| Input: G: topology of data center network; |

| : set of active flows; |

| : set of suspended flows; |

| : a new flow; |

| : a flow from the first of the . |

| Output: {<e.state, e.path>}: scheduling state and path selection of each flow in G. |

| When a new flow arrives: |

| 1 if (e.path = IDLEPATH) then |

| 2 ←; |

| 3 end |

| 4 else ←; |

| 5 while ) do |

| 6 if (e.path = IDLEPATH) then |

| 7 if ) then |

| 8 Select the flow at the first of ; |

| 9 ←; |

| 10 ←; |

| 11 Transmission time of the is t; |

| 12 end |

| 13 else if ) then |

| 14 Select the flow at the first of ; |

| 15 ←; |

| 16 ←; |

| 17 Transmission time of the is 2t; |

| 18 end |

| 19 else |

| 20 Select the flow at the first of ; |

| 21 ←; |

| 22 ←; |

| 23 Transmission time of the is 3t; |

| 24 end |

| 25 return {<e.state, e.path>}; |
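The queue-selection loop of Algorithm 1 can be illustrated with a minimal Python sketch. It rests on several assumptions that are not stated in the listing: a single path that serves one flow at a time, all flows present at the start, and the usual multilevel-feedback rule that a flow which does not finish within its slice moves down one queue. The names `Flow` and `run_mlfq` are hypothetical and do not come from the paper; only the slice lengths t, 2t and 3t follow the listing.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Flow:
    name: str
    remaining: float  # transmission time still needed (hypothetical unit)


def run_mlfq(flows, t=1.0):
    """Serve flows over one path using three queues with slices t, 2t, 3t.

    Returns a list of (flow name, completion time) in order of completion.
    """
    slices = [t, 2 * t, 3 * t]
    # New flows enter the highest-priority queue; work on copies so the
    # caller's Flow objects are not modified.
    queues = [deque(Flow(f.name, f.remaining) for f in flows), deque(), deque()]
    clock = 0.0
    finished = []
    while any(queues):
        # Pick the first non-empty queue, highest priority first.
        level = next(i for i, q in enumerate(queues) if q)
        flow = queues[level].popleft()
        served = min(slices[level], flow.remaining)
        clock += served
        flow.remaining -= served
        if flow.remaining > 0:
            # Assumption: an unfinished flow is demoted one level; the
            # lowest queue re-queues to itself (round-robin).
            queues[min(level + 1, 2)].append(flow)
        else:
            finished.append((flow.name, clock))
    return finished
```

With t = 1, calling `run_mlfq([Flow("A", 1), Flow("B", 4), Flow("C", 2)])` returns `[("A", 1.0), ("C", 6.0), ("B", 7.0)]`: the short flow A completes in its first slice, while the longer flow B is demoted twice and finishes last, which is the behaviour a multilevel feedback queue is meant to produce for short versus long flows.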

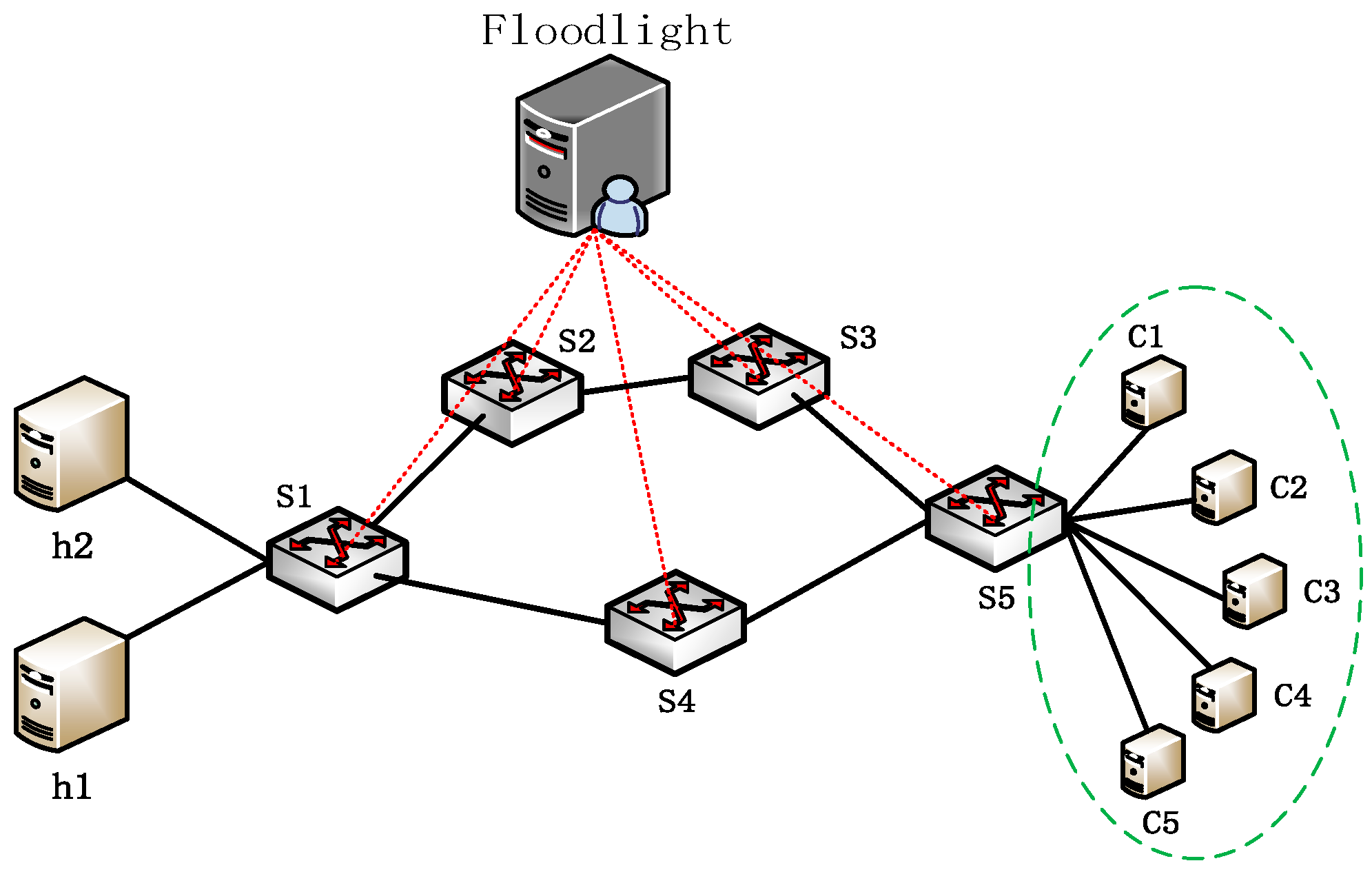

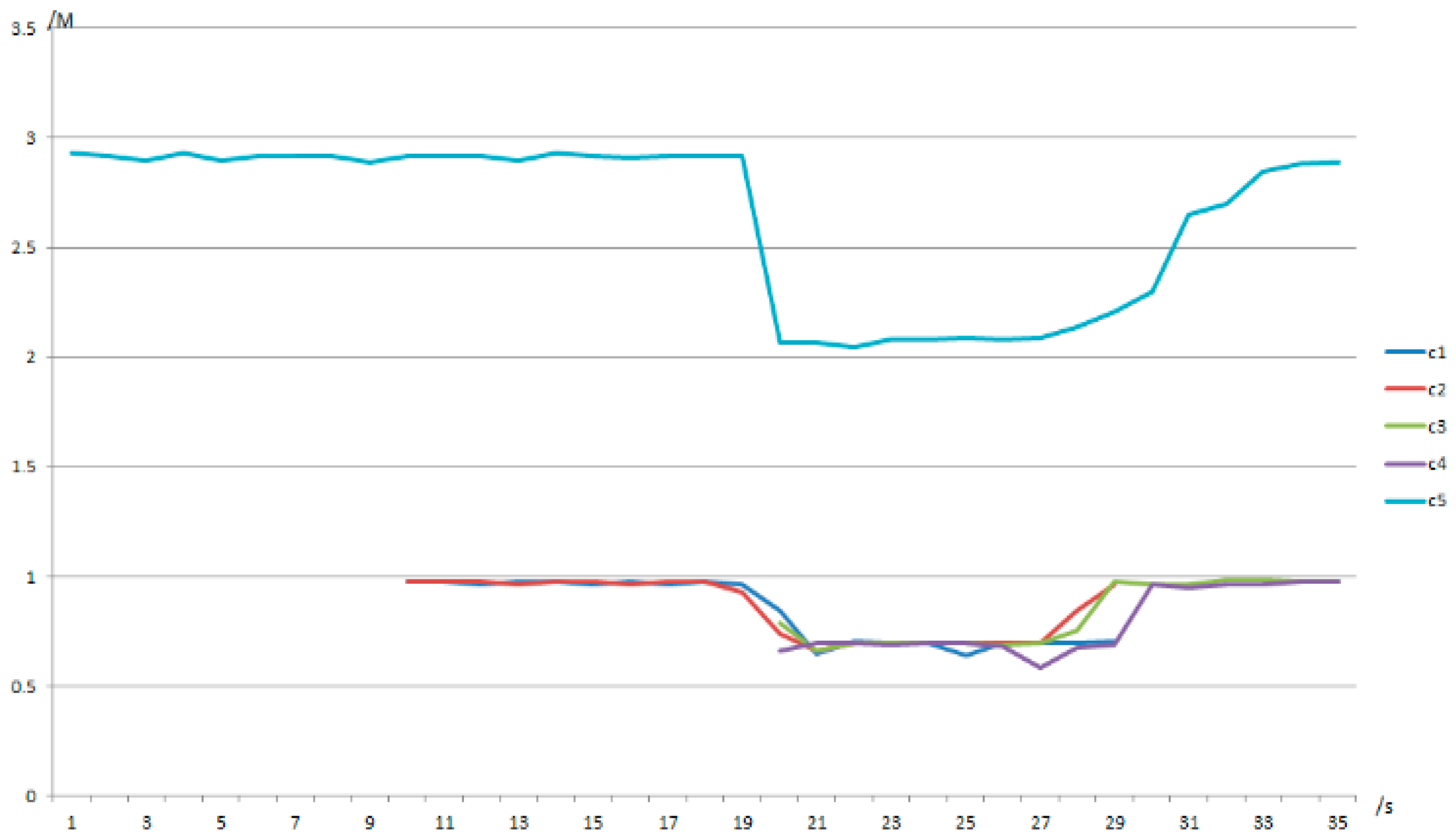

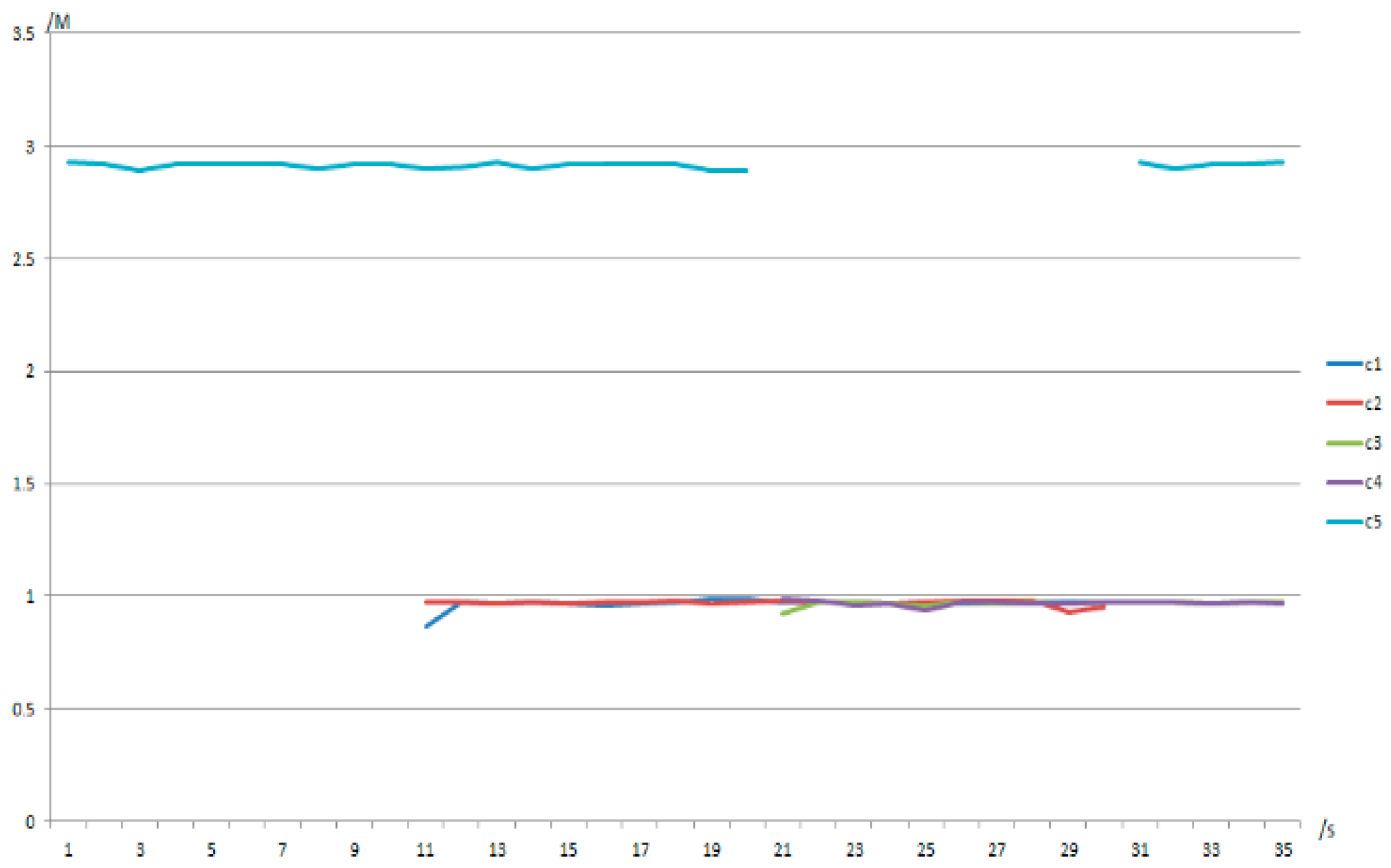

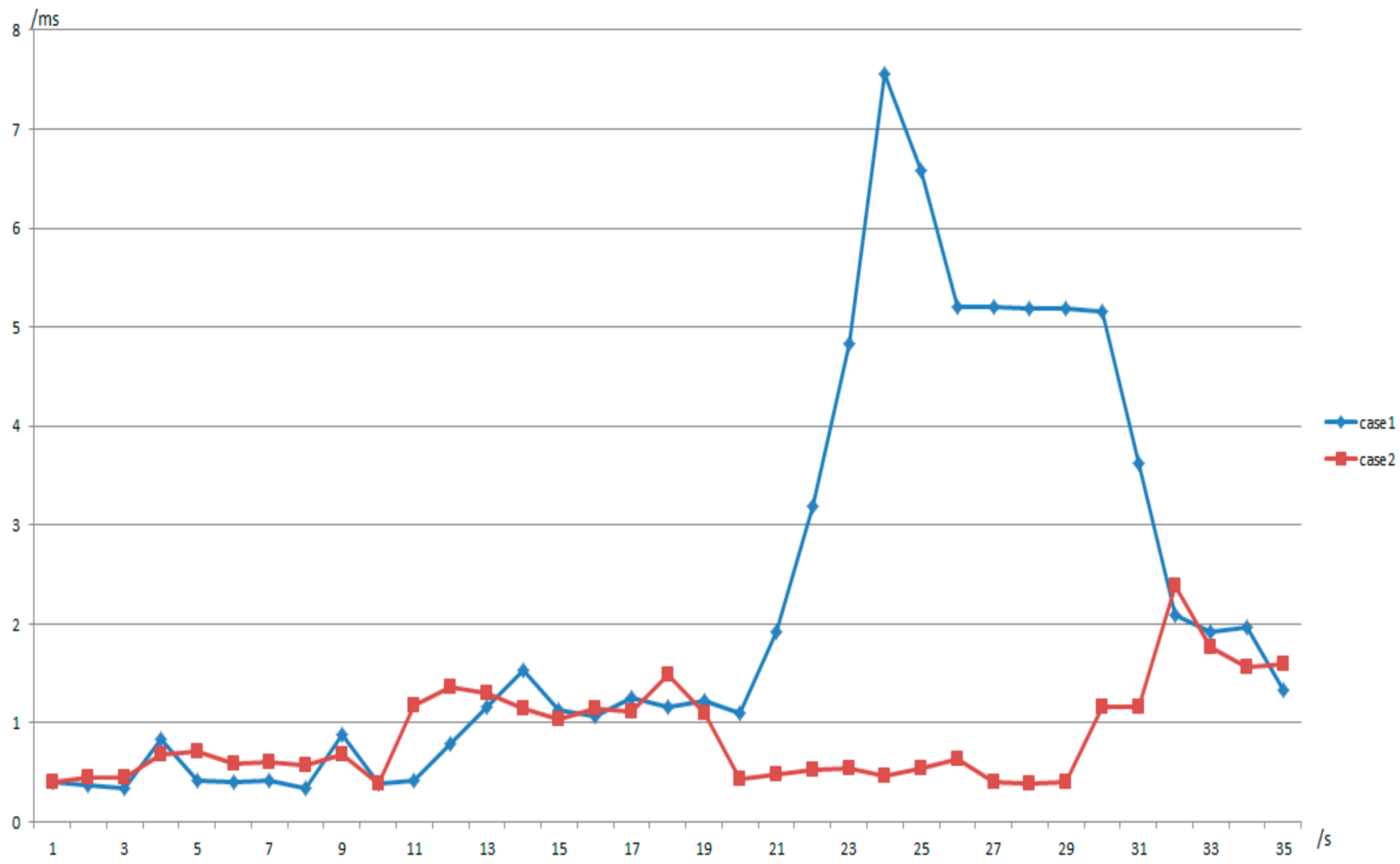

4. Experiment Results and Performance Analysis

5. Conclusions and Future Work

Author Contributions

Funding

Conflicts of Interest


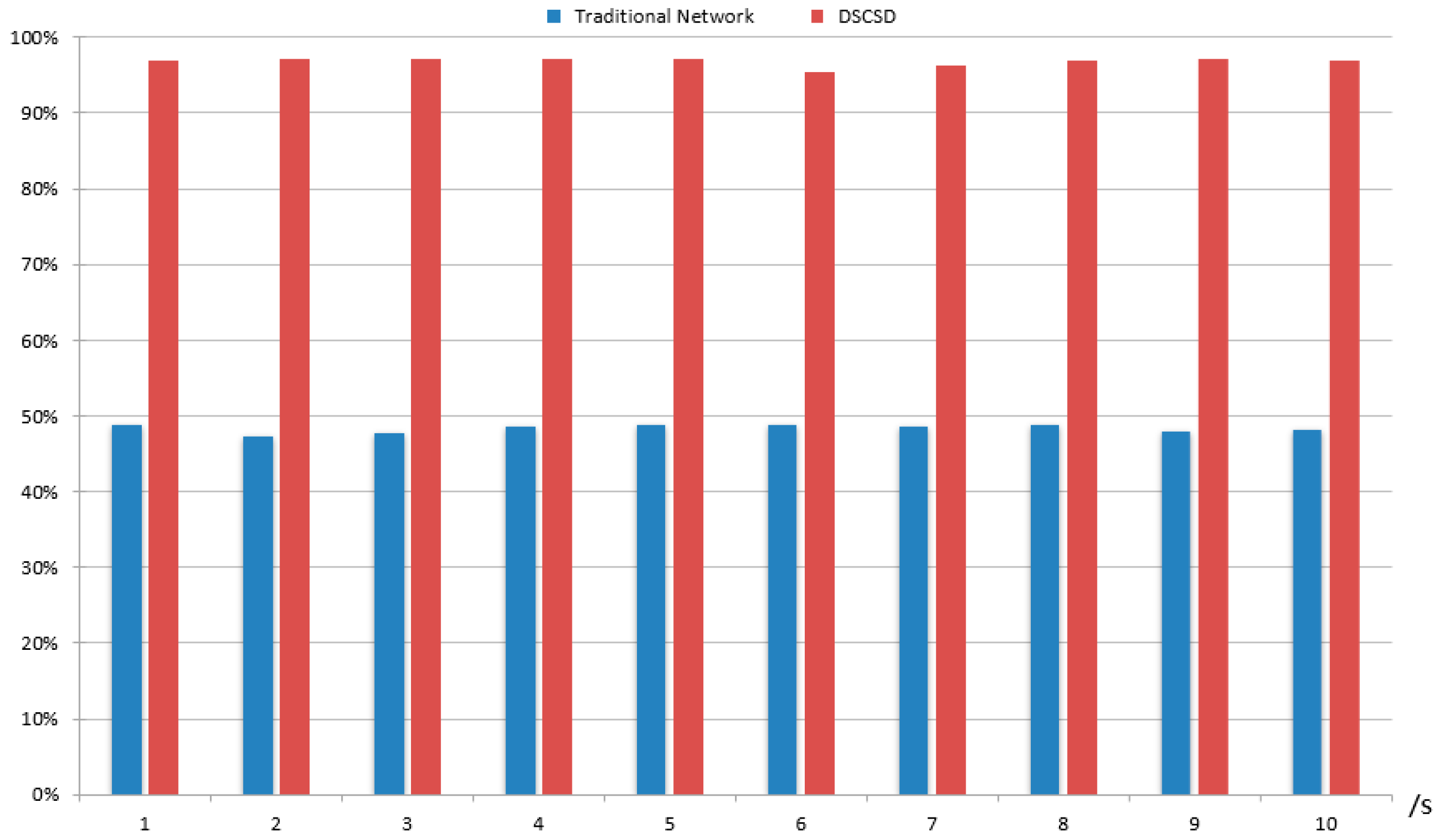

| bps | Traditional Network | | | | DSCSD | | | |
|---|---|---|---|---|---|---|---|---|
| Time | h1–c1 | h1–c2 | h2–c3 | h2–c4 | h2–c4 | h2–c4 | h2–c4 | h2–c4 |
| 0–1 s | 106 k | 1.87 M | 1.91 M | 25.3 k | 1.95 M | 1.94 M | 1.95 M | 1.94 M |
| 1–2 s | 11.8 k | 1.86 M | 1.81 M | 70.6 k | 1.94 M | 1.95 M | 1.94 M | 1.94 M |
| 2–3 s | 11.8 k | 1.89 M | 1.89 M | 35.3 k | 1.94 M | 1.94 M | 1.94 M | 1.95 M |
| 3–4 s | 153 k | 1.92 M | 1.80 M | 11.8 k | 1.95 M | 1.94 M | 1.94 M | 1.94 M |
| 4–5 s | 35.3 k | 1.75 M | 1.94 M | 176 k | 1.94 M | 1.95 M | 1.95 M | 1.94 M |
| 5–6 s | 82.3 k | 1.94 M | 1.89 M | 82.3 k | 1.82 M | 1.94 M | 1.94 M | 1.94 M |
| 6–7 s | 35.3 k | 1.95 M | 1.88 M | 23.5 k | 1.94 M | 1.94 M | 1.87 M | 1.95 M |
| 7–8 s | 23.5 k | 1.94 M | 1.88 M | 58.8 k | 1.95 M | 1.94 M | 1.93 M | 1.94 M |
| 8–9 s | 11.8 k | 1.89 M | 1.92 M | 23.5 k | 1.95 M | 1.95 M | 1.94 M | 1.94 M |
| 9–10 s | 23.5 k | 1.91 M | 1.90 M | 11.8 k | 1.94 M | 1.95 M | 1.94 M | 1.93 M |

© 2018 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Share and Cite

Sun, D.; Zhao, K.; Fang, Y.; Cui, J. Dynamic Traffic Scheduling and Congestion Control across Data Centers Based on SDN. Future Internet 2018, 10, 64. https://doi.org/10.3390/fi10070064
