Abstract

In this paper, we propose a particle swarm optimization variant based on a novel evaluation of diversity (PSO-ED). By a novel encoding of the sub-spaces of the search space and the hash table technique, the diversity of the swarm can be evaluated efficiently without any information compression. We propose a notion of exploration degree, based on the diversity of the swarm in the exploration, exploitation, and convergence states, to characterize the degree of demand for the dispersion of the swarm. Further, a disturbance update mode is proposed to help the particles jump to promising regions while reducing the cost of function evaluations for poor particles. The effectiveness of PSO-ED is validated on the CEC2015 test suite by comparison with seven popular PSO variants: out of 12 benchmark functions, PSO-ED achieves six best results for both 10-D and 30-D.

1. Introduction

Inspired by the emergent motion of the foraging behavior of a flock of birds in nature, particle swarm optimization (PSO) was first proposed by Kennedy and Eberhart [1,2] in 1995 to solve continuous nonlinear optimization problems. Compared with other evolutionary algorithms, PSO has attracted much attention since it was proposed because of its fewer control parameters and better convergence. Nowadays, PSO is widely used in many fields, such as communication networks [3,4], medical engineering [5,6,7], task scheduling [8,9], energy management [10], linguistics studies [11], supply chain management [12] and neural networks [13,14].

Despite its robustness in solving complex optimization problems, the rapid convergence of PSO may cause the swarm to become trapped in local optima when solving multimodal problems [15,16,17,18,19,20,21,22]. Therefore, reasonably balancing the swarm’s exploration ability (global investigation of the search space) and exploitation ability (finer search around a local optimum) is a crucial factor for PSO’s success, especially for complex multimodal problems with a large number of local minima. For this purpose, several PSO variants have been reported. In the following, we discuss three main types: PSO with adjusted parameters, PSO with various topology structures, and PSO with hybrid strategies.

PSO with adjusted parameters: It is evident that appropriate control parameters, such as the inertia weight ω and the acceleration coefficients c1 and c2, have significant effects on the exploration and exploitation abilities of the swarm. Increasing ω significantly increases the diversity of the swarm, while increasing c1 and c2 accelerates the particles towards their historical best positions or the best position of the whole swarm. Zhan [15] proposed an adaptive particle swarm optimization (APSO) algorithm in 2009, which updated the control parameters (ω, c1 and c2) adaptively based on the distribution of positions and fitness of the swarm. Zhang et al. [23] proposed an inertia weight adjustment scheme based on Bayesian techniques to enhance the swarm’s exploitation ability. Tanweer et al. [24] proposed a PSO algorithm (SRPSO) that applied a self-regulating inertia weight strategy to the best particle to enhance the exploration ability. Taherkhani [25] proposed an adaptive approach that determines the inertia weight in different dimensions for each particle, based on its performance and distance from its best position.

PSO with various topology structures: PSO with different topology structures, namely: improved fixed topology structures, dynamic topology structures, and multi-swarms, have been shown to be efficient in controlling the exploration and exploitation capabilities [26,27,28].

Kennedy [29] proposed a small-world social network and studied different topologies’ influences on PSO algorithms’ performances. It was found that sparsely connected networks were suitable for complex functions, and densely connected networks were useful for simple functions.

Suganthan [30] first introduced the concept of dynamic topologies for PSO, where sub-swarms (each initially a single particle) were gradually merged as the evolution progressed. Cooren et al. [28] proposed an adaptive PSO called TRIBES, which consists of multiple sub-swarms with independent topological structures that change over time. Bonyadi et al. [31] presented dynamic topologies by growing the sub-swarms’ sizes and merging the sub-swarms. Zhang [32] proposed DEPSO, which generated a weighted search center based on top-k elite particles to guide the swarm.

Bergh and Engelbrecht [33] divided a d-dimensional swarm into k (k < d) sub-swarms and made the sub-swarms cooperate by exchanging information (e.g., the best particle). Blackwell and Branke [34] proposed a multi-swarm PSO for dynamic functions whose optimal values change over time. Liang’s group [35,36] presented a dynamic multi-swarm PSO with small sub-swarms frequently regrouped using various schedules. Xu et al. [37] hybridized the dynamic multi-swarm PSO with a new cooperative learning strategy in which the worst two particles learned from the two better sub-swarms. Chen et al. [21] proposed a dynamic multi-swarm PSO with a differential learning strategy, which combined differential mutation with PSO and employed the quasi-Newton method as a local searcher.

PSO with hybrid strategies: To improve the performances of PSO, combining excellent strategies into PSO has been shown to be an effective approach. For example, Mirjalili et al. [38] combined PSO with gravitational search algorithm for efficiently training feedforward neural networks. Zhang et al. [39] combined PSO with a back-propagation algorithm to efficiently train the weights of feedforward neural networks. Nagra et al. [40] developed a hybrid of dynamic multi-swarm PSO with a gravitational search algorithm for improving the performance of PSO. Zhan et al. [41] combined PSO with orthogonal experimental design to discover an excellent exemplar from which the swarm can quickly learn and speed up the searching process. Bonyadi et al. [31] hybridized PSO with a covariance matrix adaptation strategy to improve the solutions in the latter phases of the searching process. Garg [42] combined PSO with genetic algorithms (GA): creating a new population by replacing weak particles with excellent ones via selection, crossover, and mutation operators. Plevris and Papadrakakis [43] combined PSO with a gradient-based quasi-Newton SQP algorithm for optimizing engineering structures. N. Singh and S.B. Singh [44] combined PSO with grey wolf optimizer for improving the convergence rate of the iterations. Raju et al. [45] combined PSO with a bacterial foraging optimization for 3D printing parameters of complicated models. Visalakshi and Sivanandam [46] combined PSO and the simulated annealing algorithm for processing dynamic task scheduling. Kang [47] introduced opposition-based learning (OBL) into PSO to improve the swarm’s performance in noisy environments. Cao et al. [48] embedded the comprehensive learning particle swarm optimizer (CLPSO) with local search (CL) to take advantage of both the exploration ability of CLPSO and the exploitation ability of CL.

Many scholars have conducted intensive research on the theory and applications of PSO algorithms. However, there are still some shortcomings. For example, existing adaptive parameter adjustment strategies cannot truly reflect the evolution of the population, and the particle swarm cannot effectively jump out of local optima. To address these issues, a particle swarm optimization variant based on a novel evaluation of diversity (PSO-ED) is proposed in this paper. The major innovations are listed as follows:

- We propose a novel approach to compute swarm diversity based on the particles’ positions. Through a series of encoding operations on the sub-spaces of the search space and with the aid of a hash table, the diversity can be determined efficiently, and it reflects the distribution of the swarm without any information compression. Section 3.2.1 details the related techniques.

- We propose a novel notion of exploration degree based on the diversity in the exploration, exploitation, and convergence states. It reflects the degree of demand for the swarm’s dispersion, and it can be used to realize an adaptive update of the inertia weight in the PSO velocity update. Section 3.2.3 details the related techniques.

- We propose a disturbance update mode based on the particles’ fitness. We replace the positions of the poor particles with new positions obtained by disturbing the best position. This saves the cost of function evaluations and improves convergence efficiency. Section 3.5 details the related techniques.

2. Related Work

2.1. Standard PSO

PSO is a population-based stochastic optimization algorithm introduced in 1995 by Kennedy and Eberhart, originally without an inertia weight [1,2]. Since the inertia weight was introduced by Shi and Eberhart [49] in 1998, it has shown its power in controlling the exploration and exploitation processes of evolution (Equations (1) and (2)).

When searching in a D-dimensional hyperspace, each particle i has a velocity vector and a position vector at the t-th iteration. Each particle also records its historically best position (Pbest), and the swarm records the position of the globally best particle (Gbest). The acceleration coefficients c1 and c2 are commonly set in the range [0.5, 2.5] [50,51]. The two random coefficients in the velocity update are generated within the range [0, 1], and ω typically decreases linearly from 0.9 to 0.4 [49].
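For reference, the inertia-weight PSO update is commonly written in the form below. This is a sketch of the standard form that Equations (1) and (2) refer to; the symbols $V_i^t$, $X_i^t$, $P_g$, $r_1$ and $r_2$ are assumed notation chosen for this illustration and may differ from the original typesetting.

```latex
\begin{align}
V_i^{t+1} &= \omega V_i^{t} + c_1 r_1 \left(P_i - X_i^{t}\right) + c_2 r_2 \left(P_g - X_i^{t}\right), \tag{1}\\
X_i^{t+1} &= X_i^{t} + V_i^{t+1}. \tag{2}
\end{align}
```

Here $P_i$ is the historically best position of particle i, $P_g$ is the position of the globally best particle, and $r_1$, $r_2$ are random values in [0, 1].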

2.2. Existing Diversity Evaluation Strategies

Diversity, a parameter for evaluating the degree of dispersion in a swarm, is widely used to improve PSO’s performance by adaptively balancing the swarm’s exploration and exploitation capabilities [17,52,53,54,55]. When the diversity is small, the swarm can exploit a local region to refine solutions; when the diversity is large, it can explore a large space to avoid premature convergence. Therefore, it is suggested to maintain a large diversity in the early stage of evolution to explore a vast space where the optimal solution may exist, and to reduce the swarm’s diversity near the end of the evolution to refine the result locally.

A proper diversity value should reflect the real characteristics of the swarm. If the diversity value is incorrectly evaluated, it may mislead the swarm’s movement. However, measuring the diversity of the swarm is a somewhat challenging problem.

At present, a lot of researchers have proposed different diversity evaluation schemes, which can be divided into two categories: distance-based diversity evaluation schemes [56] and information entropy-based diversity evaluation schemes [57,58,59,60,61,62].

The distance-based diversity evaluation methods can be further divided into two categories: (1) the average distance between each pair of particles in the swarm [63]; (2) the average distance between each particle and geometric center of the particle swarm [64].
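The two distance-based measures can be sketched in a few lines of Python. This is a minimal illustration of the general idea, not the exact formulations of [63] or [64]; the function names and the sample swarm are hypothetical.

```python
import math
from itertools import combinations

def mean_pairwise_distance(positions):
    """Average Euclidean distance over all unordered particle pairs.

    Requires at least two particles.
    """
    pairs = list(combinations(positions, 2))
    return sum(math.dist(a, b) for a, b in pairs) / len(pairs)

def mean_distance_to_center(positions):
    """Average Euclidean distance from each particle to the swarm centroid."""
    n = len(positions)
    dim = len(positions[0])
    center = [sum(p[d] for p in positions) / n for d in range(dim)]
    return sum(math.dist(p, center) for p in positions) / n

# Hypothetical swarm: the four corners of a square with side length 2.
swarm = [(0.0, 0.0), (2.0, 0.0), (0.0, 2.0), (2.0, 2.0)]
pairwise = mean_pairwise_distance(swarm)
to_center = mean_distance_to_center(swarm)
```

Both measures reduce the swarm to a single scalar, which is the compression effect discussed in Section 3.2.1.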

The information entropy-based diversity evaluation scheme is developed from the information theory proposed by Shannon in 1948 [65]. Since then, many scholars have introduced this concept into particle swarm optimization. For example, Pires [60] proposed an entropy-based index to measure the diversity of the swarm. The basic idea of this method is as follows: (1) evenly divide the search space into Q subspaces; (2) count the number Zq of particles in each subspace; (3) calculate the probability pq of a particle falling in subspace q by Equation (3); (4) evaluate the diversity (the information entropy) by Equation (4).

$$p_q = \frac{Z_q}{N_s} \tag{3}$$

$$E = -\sum_{q=1}^{Q} p_q \log p_q \tag{4}$$

where Ns represents the total number of particles, q represents the q-th subspace, and E represents the information entropy and is taken as the value of diversity.

3. PSO-ED

This section presents the technical details of PSO-ED. Section 3.1 introduces the main idea of PSO-ED; Section 3.2 presents our strategy for evaluating the exploration degree; Section 3.3 presents an adaptive update of the inertia weight based on the exploration degree; Section 3.4 presents the swarm reinitialization mechanism that helps the swarm escape local traps; Section 3.5 presents two update modes, i.e., the normal update mode and the disturbance update mode, for saving function evaluations (FEs) on poorly performing particles.

3.1. The Main Idea of PSO-ED

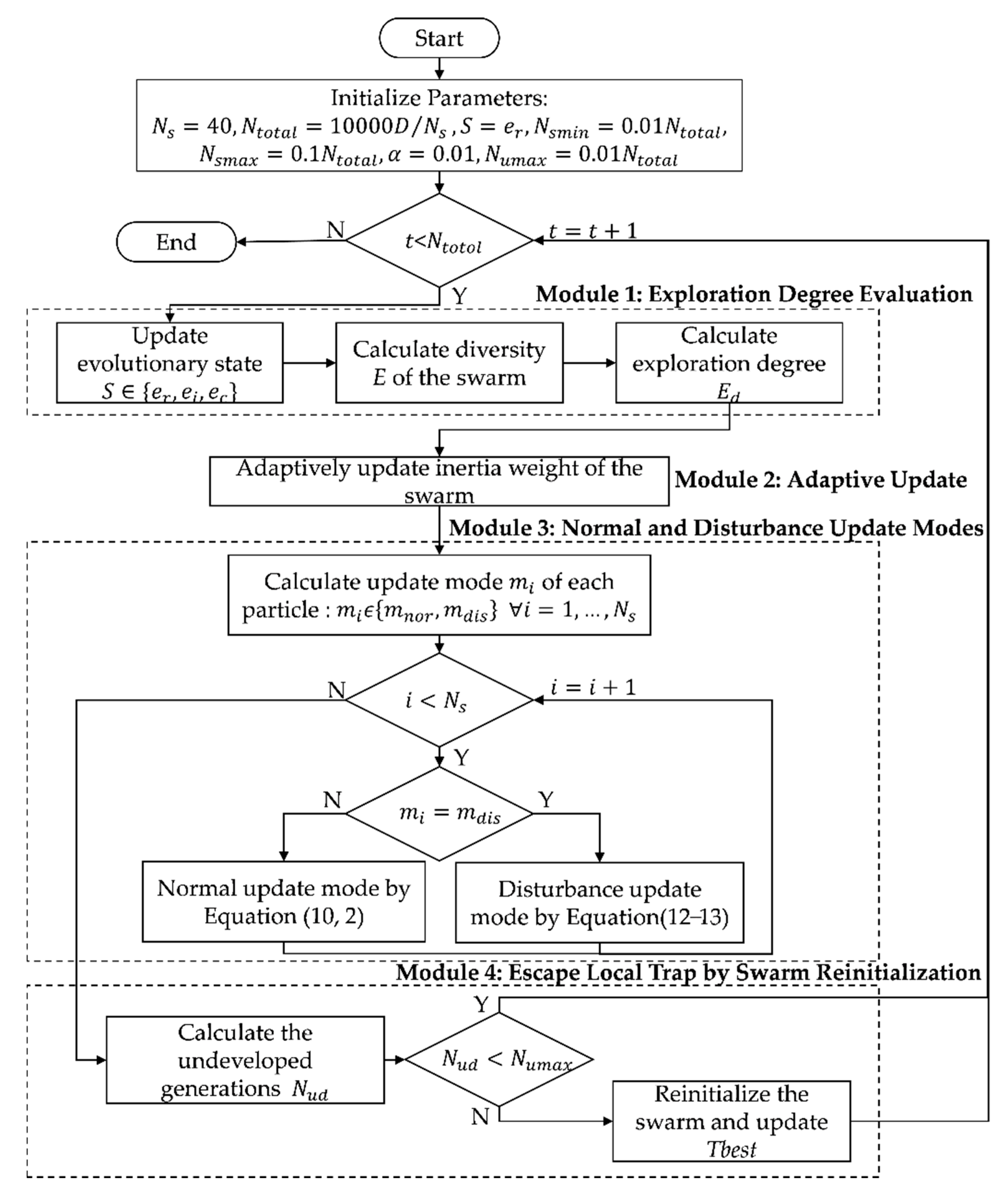

Given a dimension D (D = 10 or 30 for CEC2015), the flowchart of the PSO-ED is shown in Figure 1. PSO-ED has four main modules: exploration degree evaluation, an adaptive update of inertia weight, normal and disturbance update modes, and swarm reinitialization.

Figure 1.

Flowchart of the particle swarm optimization variant based on a novel evaluation of diversity (PSO-ED).

As mentioned above, the core problem of the particle swarm optimization algorithm is how to balance the exploration and exploitation abilities of the swarm. To address this problem, in Module 1, we develop a technique of exploration degree based on the diversity of the swarm in the exploration, exploitation, and convergence states. In Module 2, with the help of the exploration degree, we adaptively update the inertia weight of the swarm to ensure that the swarm has a larger exploration degree in the exploration state and a smaller one in the convergence state. In Module 3, we give two update modes (normal and disturbance update modes) for each particle according to its fitness, so as to reach the global optimum quickly. In Module 4, to help the swarm escape from local traps, a novel swarm reinitialization scheme is proposed.

For ease of description, we summarize the parameters used in the rest of the paper in Table 1. The definition of each critical parameter is also given when it first appears in the context.

Table 1.

Nomenclature.

3.2. Evaluation of the Exploration Degree

3.2.1. A Novel Diversity Evaluation Scheme

As mentioned above, the distance-based diversity is evaluated by computing the distances between particles. The essence of this scheme is space compression, which compresses the features of the D-dimensional space into a one-dimensional space. For example, assume that there are three particles in a three-dimensional space with positions p1 = {1,0,0}, p2 = {0,1,0} and p3 = {0,0,1}. Then the mutual distances between the particles are {(p1, p2) = √2, (p1, p3) = √2, (p2, p3) = √2}, which has only one dimension. Observe that the three points are each separated by a distance of √2, but they are regarded as being aggregated together, since the information entropy of the distance information is 0 (Equations (3) and (4)). Therefore, the information entropy based on distance cannot reflect the swarm diversity very well. On the other hand, if the space is divided into multiple small subspaces, the complexity of traversing all subspaces is fairly high. For example, in the D-dimensional space, if each dimension is divided into K equal parts, then the complexity of traversing all subspaces is O(K^D), which is prohibitive when D is larger than 10.
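The three-particle example can be checked numerically. The sketch below is an illustration only: it uses the natural logarithm for the entropy, and the helper names `entropy` and `num_subspaces` are hypothetical.

```python
import math
from collections import Counter
from itertools import combinations

def entropy(counts):
    """Shannon entropy (natural log) of a list of occurrence counts."""
    total = sum(counts)
    return sum(-(c / total) * math.log(c / total) for c in counts if c > 0)

particles = [(1, 0, 0), (0, 1, 0), (0, 0, 1)]

# All three pairwise distances equal sqrt(2), so the distance values fall
# into a single bin and the entropy of the distance information is 0.
distances = [round(math.dist(a, b), 12) for a, b in combinations(particles, 2)]
distance_entropy = entropy(list(Counter(distances).values()))

# Counting the particles by position instead gives three equally likely
# cells, i.e. an entropy of log(3).
position_entropy = entropy(list(Counter(particles).values()))

def num_subspaces(K, D):
    """Number of subspaces when each of D dimensions is split into K parts."""
    return K ** D
```

With K = 10, `num_subspaces(10, 10)` is already 10^10 cells and `num_subspaces(10, 30)` is 10^30, which is why visiting every subspace is impractical.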

To calculate the information entropy of the swarm efficiently and evaluate the degree of dispersion of the swarm as accurately as possible, we propose a novel diversity evaluation scheme. The crucial steps of our approach are as follows:

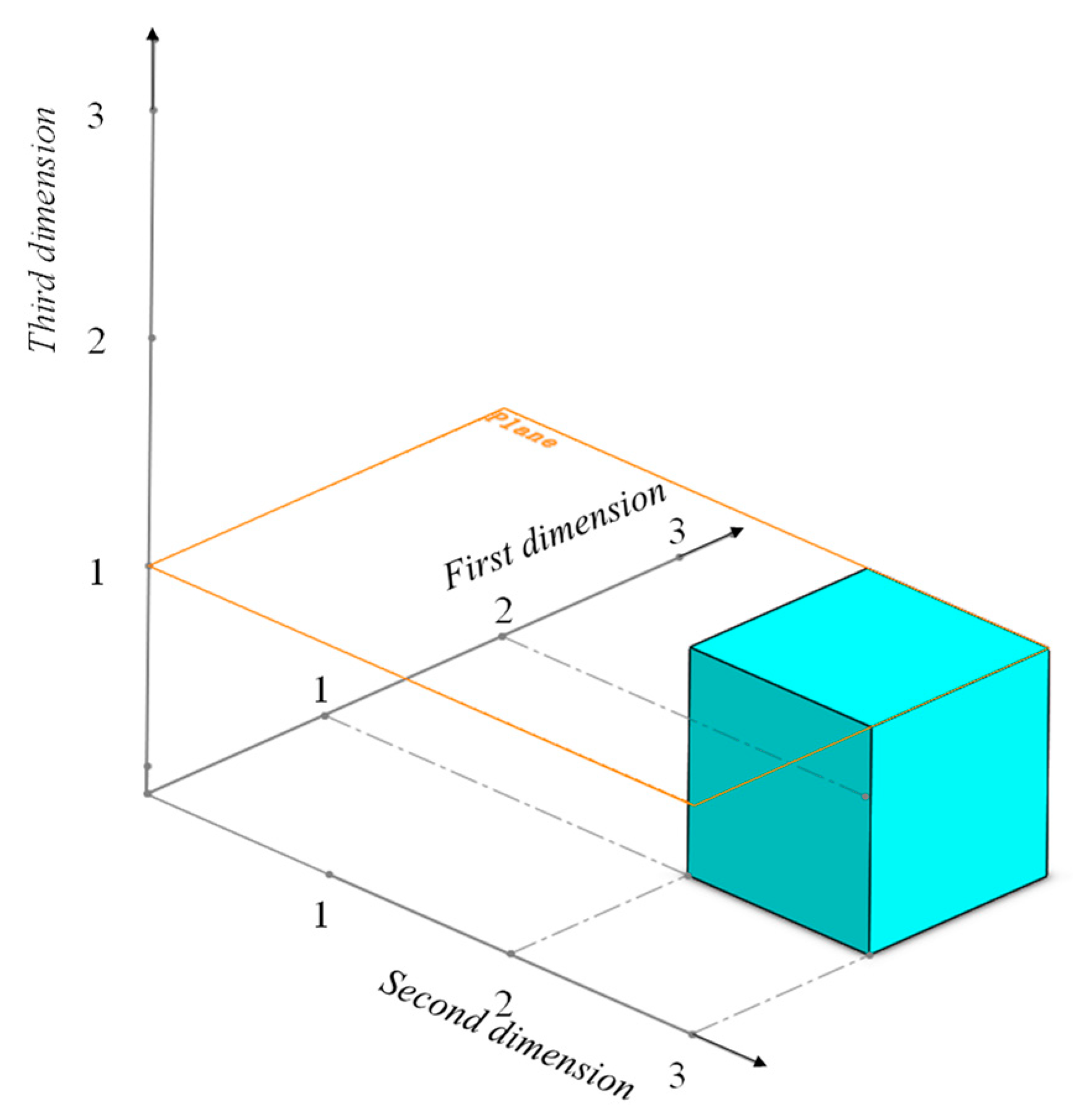

Step 1: For each particle in the swarm, compute the index (ID) of the subspace where the particle is located. First, each dimension of the search space is divided into K equal parts. The ID of a subspace is then a string of D numbers, each of which is the index of the part of the corresponding dimension occupied by the subspace. For example, in the 3-dimensional space in Figure 2, the ID of the blue subspace is {2, 3, 1}.

Figure 2.

The relation of the ID of the blue subspace and the dimensions of the search space.

The ID of the subspace where the i-th particle is located is obtained dimension by dimension from Equation (5).

Step 2: Build a hash table h by traversing the IDs obtained in Step 1. h is composed of key-value pairs {key, value}, where key is the ID of a subspace and value is the number of particles in that subspace. For each ID c obtained in Step 1, if c already exists as a key in h, its value h(c) is increased by 1; otherwise, a new key-value pair {c, 1} is inserted into h.

Step 3: Determine the information entropy E of the swarm based on h. Note that an empty subspace contributes 0 to the information entropy, since the probability p of a particle falling into it is 0 by Equations (3) and (4). Therefore, only the subspaces corresponding to keys of h contribute to the information entropy. For such a subspace q, the particle count Zq in Equation (3) is the value stored in h under the key of q. Therefore, E can be obtained by Equations (3) and (4) from the keys of h alone, and it is used in evaluating the exploration degree in Equation (8).

The time complexity of the above procedure is analyzed as follows. In Step 1, we need to calculate the subspace index of each particle i in each dimension d, so the time complexity is O(Ns·D), where Ns is the size of the swarm and D is the dimension of the search space. In Step 2, inserting a key-value pair into the hash table h takes O(1) time, so traversing the IDs to build h takes O(Ns) time. In the last step, only the keys of h need to be traversed, and the number of keys is no more than Ns, so the time complexity is also O(Ns). In summary, the running time of the above procedure is O(Ns·D), which is significantly smaller than the O(K^D) cost of traversing all subspaces.
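The three steps above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: cell indices are assumed to start at 0 and to be obtained by truncation (the exact form of Equation (5) may use a different offset or rounding), the natural logarithm is used, and the function names are hypothetical.

```python
import math
from collections import Counter

def subspace_id(position, lower, upper, K):
    """Return the tuple of per-dimension cell indices (0 .. K-1) of a position.

    Assumption: equal-width cells indexed from 0; positions on or beyond the
    boundary are clamped into the first or last cell.
    """
    ids = []
    for x, lo, hi in zip(position, lower, upper):
        cell = int((x - lo) / (hi - lo) * K)
        ids.append(min(max(cell, 0), K - 1))
    return tuple(ids)

def swarm_entropy(positions, lower, upper, K):
    """Entropy of the swarm computed over occupied subspaces only."""
    # Hash table h: subspace ID -> number of particles in that subspace.
    h = Counter(subspace_id(p, lower, upper, K) for p in positions)
    n = len(positions)
    # Empty subspaces never appear in h, so only occupied cells are visited.
    return -sum((z / n) * math.log(z / n) for z in h.values())

# Hypothetical 2-D swarm in [-100, 100]^2 with K = 10 parts per dimension.
lower, upper = [-100.0, -100.0], [100.0, 100.0]
swarm = [(-95.0, -95.0), (-91.0, -99.0), (50.0, 50.0), (100.0, 100.0)]
E = swarm_entropy(swarm, lower, upper, K=10)
```

In this example the first two particles share the cell (0, 0) and the other two occupy separate cells, so the occupied-cell counts are 2, 1 and 1. Using a tuple as the dictionary key avoids enumerating the K^D possible subspaces.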

3.2.2. Switch of Evolutionary States

A conventional state transition of PSO simply sets the initial, middle and last stages of evolution as Exploration, Exploitation and Convergence, respectively. We switch the evolutionary states more flexibly and allow multiple state switches during the whole evolution process. The swarm starts in the Exploration state, and its switching cycle is Exploration→Exploitation→Convergence→Exploration. The process terminates when the maximum number of FEs is reached.

As for the conditions of state switches, let be the number of consecutive generations when the result of the swarm evolution has not been improved, if , the state should be switched at this time to enhance the chance of achieving better results (exploration→exploitation, exploitation→convergence) or jumping out of the local trap (convergence→exploration). However, if the state switches too frequently, it may not lead to any promising result while wasting time in state switch. To address this issue, let be the number of generations the current state has been maintained, depending on whether when , we constraint in a range of generations (i.e., [, ]) to control the switching frequency as follows:

- : No switch

- : Switch only when

- : Switch

Note that the state S is used in Section 3.2.3 to determine the upper and lower bounds of the exploration degree.
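The switching cycle and the three-case rule can be illustrated with a small state machine. This is a sketch of one possible reading of the rule, not the authors' exact conditions: the names `held`, `stalled`, `stall_limit`, `g_min` and `g_max`, as well as the default values, are hypothetical.

```python
NEXT_STATE = {
    "exploration": "exploitation",
    "exploitation": "convergence",
    "convergence": "exploration",
}

def should_switch(held, stalled, stall_limit, g_min, g_max):
    """Decide whether to leave the current state.

    held    -- generations spent in the current state
    stalled -- consecutive generations without improvement
    """
    if held < g_min:
        return False                  # too early: never switch
    if held >= g_max:
        return True                   # state held long enough: always switch
    return stalled >= stall_limit     # in between: switch only on stagnation

def step_state(state, held, stalled, stall_limit=5, g_min=10, g_max=50):
    """Return (new_state, new_held) after one generation."""
    if should_switch(held, stalled, stall_limit, g_min, g_max):
        return NEXT_STATE[state], 0
    return state, held + 1
```

For instance, `step_state("exploration", 20, 5)` moves to the exploitation state, whereas `step_state("exploration", 3, 100)` stays in exploration because the minimum holding time has not yet elapsed.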

Since the swarm may not evolve in every iteration during the evolution process, we use the notion of average evolution rate to judge whether the current iteration has evolved. As shown in Equation (6), the average evolution rate of consecutive K generations is obtained as the total evolution rate of these K generations divided by K. When the evolution rate is less than a user-defined threshold α, we increase by 1, otherwise, we set as 0.

where F(t) is the best result obtained in the t-th generation.
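A possible implementation of the stagnation test is sketched below. Since the exact form of Equation (6) is not reproduced here, the sketch assumes that the evolution rate of one generation is the relative improvement of the best fitness in a minimization problem; the function names are hypothetical.

```python
def average_evolution_rate(best_history, K):
    """Mean relative improvement of the best fitness over the last K generations.

    best_history[t] is F(t), the best (lowest) fitness found up to generation t.
    Assumption: the per-generation rate is (F(t-1) - F(t)) / |F(t-1)|.
    """
    if len(best_history) < K + 1:
        raise ValueError("need at least K + 1 recorded generations")
    recent = best_history[-(K + 1):]
    total = 0.0
    for prev, cur in zip(recent, recent[1:]):
        denom = abs(prev) if prev != 0 else 1.0   # guard against division by zero
        total += (prev - cur) / denom
    return total / K

def update_stall_counter(stalled, rate, alpha):
    """Increase the stagnation counter when the rate is below alpha, else reset it."""
    return stalled + 1 if rate < alpha else 0

history = [100.0, 50.0, 50.0, 25.0]
rate = average_evolution_rate(history, K=3)
```

Averaging over K generations smooths out single generations in which the best result happens not to change.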

3.2.3. Exploration Degree

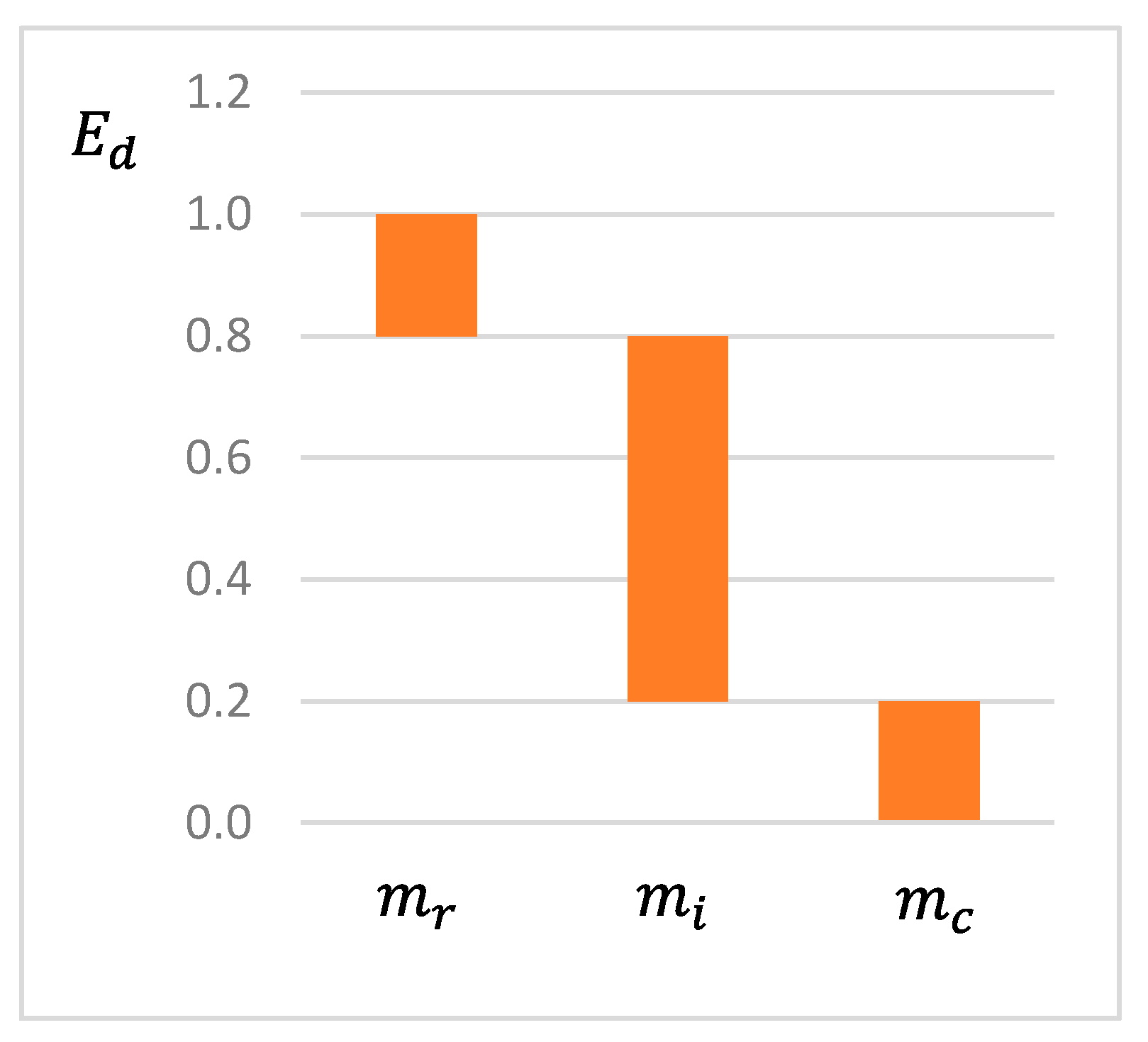

Let Ed denote the exploration degree, which characterizes the degree of demand for the dispersion of the swarm. By trial and error, we found that setting the upper and lower bounds of Ed in the three evolutionary states as shown in Figure 3 leads to fairly good results.

Figure 3.

The relationship between Ed and State.

We use the diversity E(t) to determine the exact value of Ed. When E(t) is high, it indicates that the swarm may still explore a wider area, and the corresponding value of Ed should be larger, and vice versa. To capture this, we use a linear function to express the relationship between Ed and E(t) in Equation (8).

In the following, we show how Ed is used to realize an adaptive update of the inertia weight and, therefore, a balance between exploration and exploitation.
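A linear mapping of this kind might look as follows. This is a sketch under assumptions: the per-state bounds in `ED_BOUNDS` are hypothetical placeholders (the actual values are those of Figure 3), and the diversity is normalized by log(Ns), the largest entropy attainable when every particle occupies its own subspace.

```python
import math

# Hypothetical (lower, upper) bounds of Ed per state; not the values of Figure 3.
ED_BOUNDS = {
    "exploration": (0.6, 1.0),
    "exploitation": (0.3, 0.7),
    "convergence": (0.0, 0.4),
}

def exploration_degree(entropy, swarm_size, state, bounds=ED_BOUNDS):
    """Map the diversity E(t) linearly into the Ed interval of the current state.

    Requires swarm_size > 1 so that the maximum entropy is positive.
    """
    e_max = math.log(swarm_size)
    ratio = min(max(entropy / e_max, 0.0), 1.0)
    lo, hi = bounds[state]
    return lo + (hi - lo) * ratio
```

With these placeholder bounds, a fully dispersed swarm in the exploration state gets Ed close to 1.0, while a fully collapsed swarm in the convergence state gets Ed = 0.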

3.3. Adaptive Update of Inertia Weight

The inertia weight plays a critical role in balancing the swarm’s exploration and exploitation capabilities, and it also significantly affects the result’s accuracy [23,24,25]. Therefore, we adaptively adjust the swarm’s inertia weight to ensure that the swarm has a larger exploration degree in the exploration stage and a smaller one in the convergence stage. Finally, we use a sigmoid-like mapping that decreases from 0.9 to 0.4 [66,67] to characterize our inertia weight adjustment (Equation (9)). It is evident from this formula that when the exploration degree Ed of the population is large (close to 1.0), the inertia weight ω will also be large (close to 0.9) to ensure that the swarm can continue to explore a wider space. In contrast, when Ed becomes small, the inertia weight is also reduced to enhance the swarm’s convergence ability.
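One way to realize such a mapping is a rescaled logistic curve, sketched below. This is an assumed form for illustration only and is not Equation (9); the `steepness` parameter is hypothetical.

```python
import math

def inertia_weight(ed, w_min=0.4, w_max=0.9, steepness=10.0):
    """Sigmoid-like map from Ed in [0, 1] to an inertia weight in [w_min, w_max]."""
    def logistic(x):
        return 1.0 / (1.0 + math.exp(-steepness * (x - 0.5)))
    # Rescale so that ed = 0 gives w_min and ed = 1 gives w_max.
    s0, s1 = logistic(0.0), logistic(1.0)
    scaled = (logistic(ed) - s0) / (s1 - s0)
    return w_min + (w_max - w_min) * scaled
```

The curve is flat near both ends, so ω stays near 0.9 while Ed is large and drops towards 0.4 once Ed becomes small, with a smooth transition in between.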

3.4. Escape Local Traps by Swarm Reinitialization

Note that Module 3 (normal and disturbance update modes) relies on Tbest, which is equal to Gbest when no reinitialization is conducted; therefore, we present Module 4 ahead of Module 3 for ease of description. When the swarm falls into a local optimum and cannot jump out on its own, reinitializing the swarm has proved to be an effective remedy [68,69,70].

A crucial issue is how to judge whether the swarm has fallen into a local optimum or not. In this article, we say that the swarm has fallen into a local optimum if the iterations of undeveloped generation of the swarm exceeds a maximum allowed number .

Another crucial issue is how to help the swarm jump out of a local optimum. When the swarm is trapped in a local optimum, the conventional solution is to reinitialize the swarm. If we abandon the global best (Gbest) as is conventional, we waste a lot of early effort; on the other hand, if we keep it, the swarm has a high probability of falling into the same local trap again due to its powerful attraction. To address this issue, let Gbest denote the best position of the swarm in the current round of evolution (with a single initialization or reinitialization), and let Tbest denote the best position of the swarm over all historical rounds of evolution; thus Tbest is at least as good as Gbest. Finally, we add a Tbest term to the particle update in Equation (10), which ensures that the optimal position (Tbest) has only a moderate effect on the swarm, with less attraction power.

where Pt is the position of Tbest.

For ease of computation, we fix c1 = c2 = 1.49445 based on a large set of experimental results of previous PSO variants (e.g., [23,36,71]). As for c3, since its role is to guide the swarm to the position of Tbest in a slow manner, we can set it to a very small number c3 = 0.01. In this way, we will update Tbest with this Gbest if the result of Gbest is better than the current Tbest; otherwise, Tbest still has the power of pulling the swarm to its position after several evolutionary generations.
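The update with the additional Tbest term can be sketched as follows. The coefficient values are those stated above; everything else is an assumption for illustration, in particular that each attraction term receives its own uniform random coefficient in every dimension. The function name is hypothetical.

```python
import random

C1 = C2 = 1.49445   # values stated in the text
C3 = 0.01           # small pull towards Tbest, as stated in the text

def update_particle(x, v, pbest, gbest, tbest, w, rng=random):
    """One velocity and position update with an extra Tbest attraction term.

    Assumption: r1, r2 and r3 are drawn independently per dimension from [0, 1).
    """
    new_v, new_x = [], []
    for d in range(len(x)):
        r1, r2, r3 = rng.random(), rng.random(), rng.random()
        vd = (w * v[d]
              + C1 * r1 * (pbest[d] - x[d])
              + C2 * r2 * (gbest[d] - x[d])
              + C3 * r3 * (tbest[d] - x[d]))
        new_v.append(vd)
        new_x.append(x[d] + vd)
    return new_x, new_v
```

Because C3 is two orders of magnitude smaller than C1 and C2, the Tbest term only nudges the particle; after a reinitialization the swarm is therefore driven mainly by the Pbest and Gbest of the current round.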

3.5. Normal and Disturbance Update Modes

Note that Gbest and Pbest are more likely to be closer to the real global optimal solution in some dimensions. On the other hand, a poor particle’s current position is far away from the optimal solution, and it requires more cost (e.g., FEs) to move to a promising region.

Based on this consideration, we use different update modes for particles with excellent fitness and poor fitness to speed up the search process. We take the worst particles in each iteration as the particles with poor fitness. As shown in Equation (11), is proportional to . The larger the value of is, the more dispersed the particles are. In this way, we force more particles to jump to a promising region while reducing the waste of FEs on poor particles.

(1) For particles with better fitness, the update mode follows the usual manner shown in Equations (2) and (10). Note that this update mode is slightly different from the traditional update due to the Tbest term in the velocity update formula.

(2) For particles with poor fitness, we use a disturbance update mode as follows.

Step 1: Select the position of Pbest or Gbest as the seed (denoted as P) for generating new positions. Refer to Equation (12), the choice of Pbest position (denoted as Pi) or Gbest position (denoted as ) depends on the value of . When is large, we tend to choose Pbest (i.e., self-cognition) to avoid fast convergence to some local optimum; when is small, we choose Gbest (social-cognition) to achieve a finer result.

where r is a random number uniformly distributed in [0, 1].

Step 2: Since P is very close to the optimal solution in some dimensions, we randomly select one dimension d of P and perturb it to obtain a new position, keeping the other dimensions unchanged so as to preserve the properties of P as much as possible.

where is a random number and .

Step 3: Replace the position of poor particles with the new position generated by the disturbance update mode.
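The three steps of the disturbance update mode can be sketched as follows. The exact forms of Equations (12) and (13) are not reproduced here, so the sketch makes two labeled assumptions: the seed is Pbest with probability `ed` and Gbest otherwise, and the perturbation is uniform noise of half-width `step` on one dimension. The function name and the `step` parameter are hypothetical.

```python
import random

def disturb(pbest, gbest, ed, lower, upper, step=1.0, rng=random):
    """Generate a replacement position for a poor particle.

    Assumptions: seed selection by comparing a uniform random number with ed,
    and a uniform perturbation clipped to the search range.
    """
    # Step 1: choose the seed P (Pbest when ed is large, Gbest when it is small).
    seed = pbest if rng.random() < ed else gbest
    new_pos = list(seed)
    # Step 2: perturb a single randomly chosen dimension, keep the others.
    d = rng.randrange(len(new_pos))
    new_pos[d] += rng.uniform(-step, step)
    new_pos[d] = min(max(new_pos[d], lower[d]), upper[d])
    # Step 3: the caller assigns the returned position to the poor particle.
    return new_pos
```

Only one coordinate of the seed changes, so the new position inherits the remaining coordinates of Pbest or Gbest unchanged.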

4. Experiments and Comparisons

To validate the effectiveness of PSO-ED, we use the same comparison set provided by a recent paper on MPSO [72] and choose 12 representative test functions from the CEC2015 benchmark functions [73] as the test suite (Table 2). The test suite consists of 2 unimodal functions (F1 and F2), 3 multimodal functions (F3, F4 and F5), 3 hybrid functions (F6, F7 and F8) and 4 composition functions (F9, F10, F11 and F12). All the guidelines of CEC2015 have been strictly followed in the experiments. For example, every function in this test suite is run 30 times independently, the search range of each dimension is set to [−100, 100], and the maximum number of function evaluations (FEs) is equal to 10,000 × D. The fitness value is Fi(x) − Fi(x*) after the maximum number of FEs is reached, where Fi(x*) is the known optimal value of the corresponding function.

Table 2.

The 12 CEC2015 benchmark functions.

4.1. Sensitivity Analysis

In this article, two parameters (α and Ns) are critical to the performance of PSO-ED. To examine the influence of these parameters on precision and efficiency, a sensitivity analysis was conducted for each parameter.

As shown in Equation (7), α is a user-defined threshold used to judge whether the swarm is evolving towards a better result. A proper value of α can reflect the evolutionary state of the swarm and help the swarm choose an appropriate evolution strategy. To determine an appropriate value of α, we selected five values (1.0, 0.1, 0.01, 0.001 and 0.0001) and conducted 30 independent runs for each value on the 12 test functions. Note that the other parameters are set as follows: , .

As shown in Table 3, the statistical values (Mean and Rank) are listed and it is obvious that the result is best (smallest average ranking) when .

Table 3.

Sensitivity analysis for α.

Another important parameter is the swarm size Ns. We choose five values (20, 30, 40, 50 and 60) to conduct experiments, with the other parameters set as follows: . The results are shown in Table 4. The average ranking shows that PSO-ED performs best when .

Table 4.

Sensitivity analysis for Ns.

4.2. Comparison with Other PSO Variants

For a fair comparison, we use the same criteria provided by MPSO [72] to compare our PSO-ED with MPSO and its corresponding comparison algorithms. As shown in Table 5, these popular algorithms are GPSO [49], LPSO [26], SPSO [74], CLPSO [75], FIPS [27], and DMSPSO [35]. The algorithms’ performances are characterized by statistical means and standard deviations. Note that the results of the seven PSO variants are taken directly from [72]. For all the algorithms, the swarm size is set to 40; therefore, the maximum number of iterations is 2500 for 10-D and 7500 for 30-D. The statistical results for the 12 test functions in 10-D and 30-D are summarized in Table 6 and Table 7, respectively. Following convention, the lowest mean and standard deviation in each line are regarded as the best results and are highlighted in bold. To validate the significance of the PSO-ED results, a non-parametric Wilcoxon signed-rank test is conducted between PSO-ED and the other PSO variants. The symbols “+”, “0” and “-” mean that the statistical mean values of the proposed PSO-ED are better than, equal to, and worse than those of the compared algorithms, respectively.
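The iteration limits quoted above follow directly from the evaluation budget. The short sketch below reproduces the arithmetic, assuming that every particle consumes one function evaluation per iteration; the function name is hypothetical.

```python
def max_iterations(dim, swarm_size, fes_per_dim=10_000):
    """Iterations available when each particle is evaluated once per iteration."""
    return (fes_per_dim * dim) // swarm_size

iters_10d = max_iterations(10, 40)
iters_30d = max_iterations(30, 40)
```

With a budget of 10,000 × D evaluations and 40 particles, this gives 2500 iterations for 10-D and 7500 iterations for 30-D.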

Table 5.

The popular algorithms used for comparison.

Table 6.

Comparison of PSO-ED with 7 popular PSO variants for 10-D case.

Table 7.

Comparison of PSO-ED with 7 popular PSO variants for 30-D case.

While conducting the comparison experiments, the crucial control parameters of our PSO-ED are set as follows: (same as the setting of MPSO [72]), . As shown in Table 6 for the 10-D case, out of the total of 12 functions, our algorithm achieves better mean values than the other 7 algorithms on 4 functions (F1, F2, F6 and F11), and achieves exactly the same best mean values as 5 other algorithms on 2 functions (F9 and F12). However, PSO-ED is slightly weaker than MPSO on F3, F4 and F5, DMSPSO on F8, FIPS on F7, and CLPSO on F10.

As shown in Table 7 for the 30-D case, compared to its counterparts, out of the total of 12 functions, our algorithm obtains the best mean values on 6 functions (F2, F3, F6, F8, F11 and F12). However, PSO-ED is slightly weaker than MPSO on F4, F5, F8, and SPSO on F1.

As shown in Table 8, in the non-parametric Wilcoxon signed-rank test between PSO-ED and the other algorithms, PSO-ED has achieved obvious advantages on 10-D and 30-D, except that MPSO and PSO-ED achieved the same optimal result on 10-D.

Table 8.

Statistical analysis of Wilcoxon signed-rank test between PSO-ED and other PSO variants.

In conclusion, although PSO-ED is weak in solving a few test functions, its results are superior to those of popular PSO variants such as MPSO, DMSPSO, FIPS, CLPSO, SPSO, LPSO, and GPSO.

5. Conclusions

In this paper, we proposed three techniques: a novel measure of diversity based on sub-space encoding of the search space; a notion of exploration degree, based on the diversity in the exploration, exploitation and convergence states, which efficiently evaluates the degree of demand for the dispersion of the swarm; and a disturbance update mode for updating the positions of poorly performing particles to save the cost of function evaluations (FEs) on them. Since the diversity evaluation reflects the swarm distribution very well, it provides a better basis for the adaptive parameter adjustment strategy and helps the swarm jump out of local traps. Therefore, this method is more suitable for complex multimodal optimization.

The effectiveness of the developed techniques was validated through a set of benchmark functions in CEC2015. Compared with 7 popular PSO variants, out of the 12 benchmark functions, PSO-ED obtains 6 best results for both the 10-D and 30-D cases.

However, the stability of the developed PSO-ED can be further improved and is worthy of investigation in future work. For example, for F12 in 10-D and 30-D, although PSO-ED and SPSO both achieve the optimal mean, PSO-ED is slightly weaker in the std term, which means that PSO-ED still runs the risk of falling into some local optimum.

Author Contributions

Conceptualization, H.Z. and X.W.; methodology, H.Z. and X.W.; software, H.Z.; validation, H.Z. and X.W.; formal analysis, H.Z. and X.W.; investigation, H.Z. and X.W.; resources, H.Z. and X.W.; data curation, H.Z. and X.W.; writing—original draft preparation, H.Z. and X.W.; writing—review and editing, H.Z. and X.W.; visualization, H.Z.; supervision, X.W.; project administration, X.W.; funding acquisition, X.W. All authors have read and agreed to the published version of the manuscript.

Funding

This work was supported in part by the Science and Technology Commission of Shanghai Municipality Fund No. 18510745700.

Institutional Review Board Statement

Not applicable.

Informed Consent Statement

Not applicable.

Data Availability Statement

Not applicable.

Conflicts of Interest

The authors declare no conflict of interest.

References

- Eberhart, R.; Kennedy, J. Particle swarm optimization. In Proceedings of the ICNN’95 International Conference on Neural Networks, Perth, WA, Australia, 27 November—1 December 1995; pp. 1942–1948. [Google Scholar]

- Eberhart, R.; Kennedy, J. A new optimizer using particle swarm theory. In Proceedings of the MHS’95 Sixth International Symposium on Micro Machine and Human Science, Nagoya, Japan, 4–6 October 1995; pp. 39–43. [Google Scholar]

- Kuila, P.; Jana, P.K. Energy efficient clustering and routing algorithms for wireless sensor networks: Particle swarm optimization approach. Eng. Appl. Artif. Intell. 2014, 33, 127–140. [Google Scholar] [CrossRef]

- Shen, M.; Zhan, Z.H.; Chen, W.N.; Gong, Y.J.; Zhang, J.; Li, Y. Bi-velocity discrete particle swarm optimization and its application to multicast routing problem in communication networks. IEEE Trans. Ind. Electron. 2014, 61, 7141–7151. [Google Scholar] [CrossRef]

- Zhang, Y.; Wang, S.; Ji, G.; Dong, Z. An MR Brain Images Classifier System via Particle Swarm Optimization and Kernel Support Vector Machine. Sci. World J. 2013, 2013, 130–134. [Google Scholar] [CrossRef] [PubMed]

- Tan, T.Y.; Zhang, L.; Lim, C.P.; Fielding, B.; Yu, Y.; Anderson, E. Evolving Ensemble Models for Image Segmentation Using Enhanced Particle Swarm Optimization. IEEE Access 2019, 7, 34004–34019. [Google Scholar] [CrossRef]

- Sakri, S.B.; Rashid, N.B.A.; Zain, Z.M. Particle Swarm Optimization Feature Selection for Breast Cancer Recurrence Prediction. IEEE Access 2018, 6, 29637–29647. [Google Scholar] [CrossRef]

- Dhinesh Babu, L.D.; Venkata Krishna, P. Honey bee behavior inspired load balancing of tasks in cloud computing environments. Appl. Soft. Comput. J. 2013, 13, 2292–2303. [Google Scholar]

- Wang, Z.J.; Zhan, Z.H.; Kwong, S.; Jin, H.; Zhang, J. Adaptive Granularity Learning Distributed Particle Swarm Optimization for Large-Scale Optimization. IEEE Trans. Cybern. 2020, 1–14. [Google Scholar] [CrossRef]

- Sharafi, M.; ELMekkawy, T.Y. Multi-objective optimal design of hybrid renewable energy systems using PSO-simulation based approach. Renew. Energy 2014, 68, 67–79. [Google Scholar] [CrossRef]

- Cabrerizo, F.J.; Herrera-Viedma, E.; Pedrycz, W. A method based on PSO and granular computing of linguistic information to solve group decision making problems defined in heterogeneous contexts. Eur. J. Oper. Res. 2013, 230, 624–633. [Google Scholar] [CrossRef]

- Zhang, X.; Du, K.J.; Zhan, Z.H.; Kwong, S.; Gu, T.L.; Zhang, J. Cooperative Coevolutionary Bare-Bones Particle Swarm Optimization with Function Independent Decomposition for Large-Scale Supply Chain Network Design with Uncertainties. IEEE Trans. Cybern. 2019, 50, 4454–4468. [Google Scholar] [CrossRef]

- Xue, Y.; Tang, T.; Liu, A.X. Large-Scale Feedforward Neural Network Optimization by a Self-Adaptive Strategy and Parameter Based Particle Swarm Optimization. IEEE Access 2019, 7, 52473–52483. [Google Scholar] [CrossRef]

- Ali, M.H.; Al Mohammed, B.A.D.; Ismail, A.; Zolkipli, M.F. A New Intrusion Detection System Based on Fast Learning Network and Particle Swarm Optimization. IEEE Access 2018, 6, 20255–20261. [Google Scholar] [CrossRef]

- Zhan, Z.H.; Zhang, J.; Li, Y.; Chung, H.S.H. Adaptive particle swarm optimization. IEEE Trans. Syst. Man. Cybern Part. B Cybern. 2009, 39, 1362–1381. [Google Scholar] [CrossRef]

- Gou, J.; Lei, Y.X.; Guo, W.P.; Wang, C.; Cai, Y.Q.; Luo, W. A novel improved particle swarm optimization algorithm based on individual difference evolution. Appl. Soft Comput. J. 2017, 57, 468–481. [Google Scholar] [CrossRef]

- Niu, B.; Zhu, Y.; He, X.; Wu, H. MCPSO: A multi-swarm cooperative particle swarm optimizer. Appl. Math. Comput. 2007, 185, 1050–1062. [Google Scholar] [CrossRef]

- Wang, L.; Yang, B.; Chen, Y. Improving particle swarm optimization using multi-layer searching strategy. Inf. Sci. 2014, 274, 70–94. [Google Scholar] [CrossRef]

- Han, F.; Liu, Q. A diversity-guided hybrid particle swarm optimization based on gradient search. Neurocomputing 2014, 137, 234–240. [Google Scholar] [CrossRef]

- Zhao, F.; Tang, J.; Wang, J.; Jonrinaldi. An improved particle swarm optimization with decline disturbance index (DDPSO) for multi-objective job-shop scheduling problem. Comput. Oper. Res. 2014, 45, 38–50. [Google Scholar] [CrossRef]

- Chen, Y.; Li, L.; Peng, H.; Xiao, J.; Wu, Q. Dynamic multi-swarm differential learning particle swarm optimizer. Swarm Evol. Comput. 2018, 39, 209–221. [Google Scholar] [CrossRef]

- Lynn, N.; Ali, M.Z.; Suganthan, P.N. Population topologies for particle swarm optimization and differential evolution. Swarm Evol. Comput. 2018, 39, 24–35. [Google Scholar] [CrossRef]

- Zhang, L.; Tang, Y.; Hua, C.; Guan, X. A new particle swarm optimization algorithm with adaptive inertia weight based on Bayesian techniques. Appl. Soft Comput. J. 2015, 28, 138–149. [Google Scholar] [CrossRef]

- Tanweer, M.R.; Suresh, S.; Sundararajan, N. Self regulating particle swarm optimization algorithm. Inf. Sci. 2015, 294, 182–202. [Google Scholar] [CrossRef]

- Taherkhani, M.; Safabakhsh, R. A novel stability-based adaptive inertia weight for particle swarm optimization. Appl. Soft Comput. J. 2016, 38, 281–295. [Google Scholar] [CrossRef]

- Kennedy, J.; Mendes, R. Population structure and particle swarm performance. In Proceedings of the 2002 Congress on Evolutionary Computation, Honolulu, HI, USA, 12–17 May 2002; pp. 1671–1676. [Google Scholar]

- Mendes, R.; Kennedy, J.; Neves, J. The fully informed particle swarm: Simpler, maybe better. IEEE Trans. Evol. Comput. 2004, 8, 204–210. [Google Scholar] [CrossRef]

- Cooren, Y.; Clerc, M.; Siarry, P. Performance evaluation of TRIBES, an adaptive particle swarm optimization algorithm. Swarm Intell. 2009, 3, 149–178. [Google Scholar] [CrossRef]

- Kennedy, J. Small worlds and mega-minds: Effects of neighborhood topology on particle swarm performance. In Proceedings of the 1999 Congress on Evolutionary Computation-CEC99 (Cat. No. 99TH8406), Washington, DC, USA, 6–9 July 1999; pp. 1931–1938. [Google Scholar]

- Suganthan, P.N. Particle swarm optimiser with neighbourhood operator. In Proceedings of the 1999 Congress on Evolutionary Computation-CEC99 (Cat. No. 99TH8406), Washington, DC, USA, 6–9 July 1999; pp. 1958–1962. [Google Scholar]

- Bonyadi, M.R.; Li, X.; Michalewicz, Z. A hybrid particle swarm with a time-adaptive topology for constrained optimization. Swarm Evol. Comput. 2014, 18, 22–37. [Google Scholar] [CrossRef]

- Zhang, J.; Zhu, X.; Wang, Y.; Zhou, M. Dual-Environmental Particle Swarm Optimizer in Noisy and Noise-Free Environments. IEEE Trans. Cybern. 2019, 49, 2011–2021. [Google Scholar] [CrossRef]

- van den Bergh, F.; Engelbrecht, A.P. A cooperative approach to participle swam optimization. IEEE Trans. Evol. Comput. 2004, 8, 225–239. [Google Scholar] [CrossRef]

- Blackwell, T.; Branke, J. Multi-Swarm Optimization in Dynamic Environments. In Workshops on Applications of Evolutionary Computation; Springer: Berlin/Heidelberg, Germany, 2004; pp. 489–500. [Google Scholar]

- Liang, J.J.; Suganthan, P.N. Dynamic multi-swarm particle swarm optimizer with local search. In Proceedings of the 2005 IEEE Congress on Evolutionary Computation, Scotland, UK, 2–5 September 2005; pp. 522–528. [Google Scholar]

- Zhao, S.Z.; Liang, J.J.; Suganthan, P.N.; Tasgetiren, M.F. Dynamic multi-swarm particle swarm optimizer with local search for large scale global optimization. In Proceedings of the 2008 IEEE Congress on Evolutionary Computation, Hong Kong, China, 1–6 June 2008; pp. 3845–3852. [Google Scholar]

- Xu, X.; Tang, Y.; Li, J.; Hua, C.; Guan, X. Dynamic multi-swarm particle swarm optimizer with cooperative learning strategy. Appl. Soft Comput. J. 2015, 29, 169–183. [Google Scholar] [CrossRef]

- Mirjalili, S.; Mohd Hashim, S.Z.; Moradian Sardroudi, H. Training feedforward neural networks using hybrid particle swarm optimization and gravitational search algorithm. Appl. Math. Comput. 2012, 218, 11125–11137. [Google Scholar] [CrossRef]

- Zhang, J.R.; Zhang, J.; Lok, T.M.; Lyu, M.R. A hybrid particle swarm optimization-back-propagation algorithm for feedforward neural network training. Appl. Math. Comput. 2007, 185, 1026–1037. [Google Scholar] [CrossRef]

- Nagra, A.A.; Han, F.; Ling, Q.-H.; Mehta, S. An Improved Hybrid Method Combining Gravitational Search Algorithm with Dynamic Multi Swarm Particle Swarm Optimization. IEEE Access 2019, 7, 50388–50399. [Google Scholar] [CrossRef]

- Zhan, Z.H.; Zhang, J.; Li, Y.; Shi, Y.H. Orthogonal learning particle swarm optimization. IEEE Trans. Evol. Comput. 2011, 15, 832–847. [Google Scholar] [CrossRef]

- Garg, H. A hybrid PSO-GA algorithm for constrained optimization problems. Appl. Math. Comput. 2016, 274, 292–305. [Google Scholar] [CrossRef]

- Plevris, V.; Papadrakakis, M. A Hybrid Particle Swarm-Gradient Algorithm for Global Structural Optimization. Comput. Civ. Infrastruct Eng. 2011, 26, 48–68. [Google Scholar] [CrossRef]

- Singh, N.; Singh, S.B. Hybrid Algorithm of Particle Swarm Optimization and Grey Wolf Optimizer for Improving Convergence Performance. J. Appl. Math. 2017. [Google Scholar] [CrossRef]

- Raju, M.; Gupta, M.K.; Bhanot, N.; Sharma, V.S. A hybrid PSO–BFO evolutionary algorithm for optimization of fused deposition modelling process parameters. J. Intell. Manuf. 2019, 30, 2743–2758. [Google Scholar] [CrossRef]

- Sivanandam, S.N.; Visalakshi, P. Dynamic task scheduling with load balancing using parallel orthogonal particle swarm optimization. Int. J. Bio-Inspired Comput. 2009, 1, 276–286. [Google Scholar] [CrossRef]

- Kang, Q.; Xiong, C.; Zhou, M.; Meng, L. Opposition-Based Hybrid Strategy for Particle Swarm Optimization in Noisy Environments. IEEE Access 2018, 6, 21888–21900. [Google Scholar] [CrossRef]

- Cao, Y.; Zhang, H.; Li, W.; Zhou, M.; Zhang, Y.; Chaovalitwongse, W.A. Comprehensive Learning Particle Swarm Optimization Algorithm with Local Search for Multimodal Functions. IEEE Trans. Evol. Comput. 2019, 23, 718–731. [Google Scholar] [CrossRef]

- Shi, Y.; Eberhart, R.C. A modified particle swarm optimizer. In Proceedings of the 1998 IEEE International Conference on Evolutionary Computation Proceedings, IEEE world congress on computational intelligence (Cat. No. 98TH8360), Anchorage, AK, USA, 4–9 May 1988; pp. 69–73. [Google Scholar]

- Chih, M.; Lin, C.J.; Chern, M.S.; Ou, T.Y. Particle swarm optimization with time-varying acceleration coefficients for the multidimensional knapsack problem. Appl. Math. Model. 2014, 38, 1338–1350. [Google Scholar] [CrossRef]

- Ratnaweera, A.; Halgamuge, S.K.; Watson, H.C. Self-organizing hierarchical particle swarm optimizer with time-varying acceleration coefficients. IEEE Trans. Evol. Comput. 2004, 8, 240–255. [Google Scholar] [CrossRef]

- Wu, Y.; Gao, X.Z.; Huang, X.L.; Zenger, K. A hybrid optimization method of Particle Swarm Optimization and Cultural Algorithm. In Proceedings of the 2010 6th International Conference on Natural Computation, Yantai, China, 10–12 August 2010; pp. 2515–2519. [Google Scholar]

- Xu, M.; You, X.; Liu, S. A Novel Heuristic Communication Heterogeneous Dual Population Ant Colony Optimization Algorithm. IEEE Access 2017, 5, 18506–18515. [Google Scholar] [CrossRef]

- Netjinda, N.; Achalakul, T.; Sirinaovakul, B. Particle Swarm Optimization inspired by starling flock behavior. Appl. Soft. Comput. J. 2015, 35, 411–422. [Google Scholar] [CrossRef]

- Fang, W.; Sun, J.; Chen, H.; Wu, X. A decentralized quantum-inspired particle swarm optimization algorithm with cellular structured population. Inf. Sci. 2016, 330, 19–48. [Google Scholar] [CrossRef]

- Zhu, J.; Lin, Y.; Lei, W.; Liu, Y.; Tao, M. Optimal household appliances scheduling of multiple smart homes using an improved cooperative algorithm. Energy 2019, 171, 944–955. [Google Scholar] [CrossRef]

- Zhang, W.X.; Chen, W.N.; Zhang, J. A dynamic competitive swarm optimizer based-on entropy for large scale optimization. In Proceedings of the 2016 Eighth International Conference on Advanced Computational Intelligence (ICACI), Chiang Mai, Thailand, 14–16 February 2016; pp. 365–371. [Google Scholar]

- Ran, M.P.; Wang, Q.; Dong, C.Y. A dynamic search space Particle Swarm Optimization algorithm based on population entropy. In Proceedings of the 26th Chinese Control and Decision Conference (2014 CCDC), Changsha, China, 31 May—2 June 2014; pp. 4292–4296. [Google Scholar]

- Tang, K.; Li, Z.; Luo, L.; Liu, B. Multi-strategy adaptive particle swarm optimization for numerical optimization. Eng. Appl. Artif. Intell. 2015, 37, 9–19. [Google Scholar] [CrossRef]

- Solteiro Pires, E.J.; Machado, J.A.T.; de Moura Oliveira, P.B. Entropy diversity in multi-objective particle swarm optimization. Entropy 2013, 15, 5475–5491. [Google Scholar] [CrossRef]

- Solteiro Pires, E.J.; Tenreiro Machado, J.A.; de Moura Oliveira, P.B. Dynamic shannon performance in a multiobjective particle swarm optimization. Entropy 2019, 21, 1–10. [Google Scholar]

- Solteiro Pires, E.J.; Tenreiro Machado, J.A.; de Moura Oliveira, P.B. PSO Evolution Based on a Entropy Metric. Adv. Intell. Syst. Comput. 2020, 923, 238–248. [Google Scholar]

- Olorunda, O.; Engelbrecht, A.P. Measuring exploration/exploitation in particle swarms using swarm diversity. In Proceedings of the 2008 IEEE Congress on Evolutionary Computation, Hong Kong, China, 1–6 June 2008; pp. 1128–1134. [Google Scholar]

- Riget, J.; Vesterstrøm, J.S. A Diversity-Guided Particle Swarm Optimizer—the ARPSO; Technical Report; (riget: 2002: DGPSO), no. 2 EVA Life; Department of Computer Science, University of Aarhus: Aarhus, Denmark, 2002. [Google Scholar]

- Shannon, C.E. A mathematical theory of communication. Bell Syst. Tech. J. 1948, 27, 379–423. [Google Scholar] [CrossRef]

- Eberhart, R.C.; Shi, Y. Comparing inertia weights and constriction factors in particle swarm optimization. In Proceedings of the 2000 Congress on Evolutionary Computation, LA Jolla, CA, USA, 16–19 July 2000; pp. 84–88. [Google Scholar]

- Xu, G.; Cui, Q.; Shi, X.; Ge, H.; Zhan, Z.H.; Lee, H.P. Particle swarm optimization based on dimensional learning strategy. Swarm Evol. Comput. 2019, 45, 33–51. [Google Scholar] [CrossRef]

- Kaucic, M. A multi-start opposition-based particle swarm optimization algorithm with adaptive velocity for bound constrained global optimization. J. Glob. Optim. 2013, 55, 165–188. [Google Scholar] [CrossRef]

- Zhu, J.; Lauri, F.; Koukam, A.; Hilaire, V. Scheduling optimization of smart homes based on demand response. In IFIP International Conference on Artificial Intelligence Applications and Innovations; Springer: Berlin/Heidelberg, Germany, 2015; Volume 458, pp. 223–236. [Google Scholar]

- Tian, D. Particle swarm optimization with chaos-based initialization for numerical optimization. Intell. Autom. Soft Comput. 2018, 24, 331–342. [Google Scholar] [CrossRef]

- Ye, W.; Feng, W.; Fan, S. A novel multi-swarm particle swarm optimization with dynamic learning strategy. Appl. Soft Comput. J. 2017, 61, 832–843. [Google Scholar] [CrossRef]

- Tian, D.; Shi, Z. MPSO: Modified particle swarm optimization and its applications. Swarm Evol. Comput. 2018, 41, 49–68. [Google Scholar] [CrossRef]

- Liang, J.J.; Qu, B.; Suganthan, P.; Chen, Q. Problem Definitions and Evaluation Criteria for the CEC 2015 Competition on Learning-Based Real-Parameter Single Objective Optimization; Technical Report 201411A; Computational Intelligence Laboratory, Zhengzhou University: Zhengzhou, China; Nanyang Technological University: Singapore, 2014. [Google Scholar]

- Bratton, D.; Kennedy, J. Defining a Standard for Particle Swarm Optimization. In Proceedings of the 2007 IEEE Swarm Intelligence Symposium, Honolulu, HI, USA, 1–5 April 2007; pp. 120–127. [Google Scholar]

- Liang, J.J.; Qin, A.K.; Suganthan, P.N.; Baskar, S. Comprehensive learning particle swarm optimizer for global optimization of multimodal functions. IEEE Trans. Evol. Comput. 2006, 10, 281–295. [Google Scholar] [CrossRef]

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).