1. Introduction

Dictionary learning (DL) is now a mature field [

1,

2,

3], with several efficient algorithms for solving the basic problem or its variants and with numerous applications in image processing (denoising and inpainting), classification, compressed sensing and others. The basic DL problem is: given

N training signals gathered as the columns of the matrix

and the sparsity level

s, find the dictionary

by solving

Here, is the Frobenius norm; and and are columns of and , respectively. The first constraint says that the matrix has at most s nonzeros on each column. The second constraint is the normalization of the atoms (columns of the dictionary).

An alternative view is to relate Equation (

1) to the sparse matrix factorization—or dictionary recovery (DR)—problem. The different assumption is that there indeed exist a dictionary

and a sparse matrix

such that

; the purpose is to recover them from the data

. Such a ground truth is usually not available in practical applications. However, investigating DR is useful for theoretical and practical developments. Significant recent work on this matter can be found in [

4,

5,

6,

7]. Our contribution belongs more to the DR line of thought.

In most DR algorithms, the dictionary size n (the number of atoms) is assumed to be known. In DL applications, n is chosen with a rather informal trial-and-error procedure: a few sizes are used and that giving the best performance in the application at hand is selected. A dictionary with more atoms typically gives a smaller error: if and , with , we expect that . However, this does not mean that is necessarily better. We aim here at an automatic choice of the size that is the most appropriate to the data, based on Information Theoretic Criteria (ITC).

The only previous work based on ITC [

8] uses Minimum Description Length for the choice of the size and the sparsity level. Their algorithm implements a virtual coder and searches exhaustively over all considered dictionary sizes. A recent method [

9] reporting promising results has a similar purpose, but is based on geometric properties of the DR problem.

All other methods are based on heuristics that essentially aim to obtain a dictionary having the same representation error as that obtained with a standard method, but with smaller size. We list a few of the successful approaches. In [

10,

11], a small dictionary is grown by adding representative atoms from time to time in the iterative DL process. Clustering ideas are used in [

12,

13,

14] to reduce the size of a large dictionary. A direct attempt of optimizing the size is employed in [

15] by introducing in the objective a proxy for the size as penalization. An Indian Buffet Process is the tool in [

16]. Other techniques based on Bayesian learning are [

17,

18].

Unlike the method in [

8] but similarly to that in [

9], our approach tries to adapt the size during the DL process. It also has a much simpler implementation, using standard ITC that do not intervene in the learning itself, but only in the selection of the atoms. Hence, the complexity is not much higher than that of the underlying DL algorithm.

Section 2 presents the ITC, specialized to the DL problem, and describes the pool of candidate dictionaries during the DL algorithm.

Section 3 gives the details of our algorithm for adapting the dictionary size.

Section 4 is dedicated to experimental results on synthetic data that show that our algorithm is able to recover the size of the true dictionary, in various noise and sparsity level conditions and with various initializations. Our algorithm also gives, almost always, recovery errors on test data that are better than those given by the underlying DL algorithm in possession of the true dictionary size. Comparison with the method in [

9] is also favorable.

2. Ingredients

2.1. Information Theoretic Criteria

ITC serve for assessing the adequacy of a model to a process described by experimental data, by combining its goodness of fit (approximation error) with its complexity. The underlying model in Equation (

1) is

, where

is a matrix with entries that follow a Gaussian distribution with zero mean and an unknown variance (the same for all entries) [

19]. Denoting

, the goodness of fit is expressed via the Root Mean Square Error

The complexity depends primarily on the number of parameters; in the DL or DR case, this is

The first term corresponds to the number of nonzeros in . The second term is the number of independent elements of the dictionary; we subtract n from the total of elements to account for the atom normalization constraints.

After preliminary investigation with several ITC, we kept only two for our DL approach. The first is Bayesian Information Criterion (BIC) [

20], extended to the form

The first two terms are the standard ones; we have added a third term to account for all possible positions of the nonzero entries in the matrix

, inspired by [

21].

The second ITC is Renormalized Maximum Likelihood (RNML) [

22,

23], adapted as in [

24] to our context, to which we have added the same combinatorial term as above:

We note that a different version, ERNML

, derived in [

25], further analyzed in [

26] and adapted as Equation (

5), gave results similar to those of ERNML

, thus we do not report it here. The ITC dismissed after preliminary (and thus possibly insufficient) investigation are those from [

27,

28].

2.2. Candidate Dictionaries

Application of ITC needs several candidate dictionaries from which selection is made with the minimum ITC value. Since we aim to run a single instance of the learning algorithm and, as such, we have a single dictionary, the only possibility is to compare smaller dictionaries made of a subset of the atoms. Even so, there are too many possible combinations. To reduce their number, we order the atoms based on their importance in the representations. Since

where

is the

j-th row of

, we sort the atoms in decreasing order of their “power”

We still name the sorted dictionary. For selection, we consider dictionaries that are made of the first atoms of . Thus, there are at most n candidates. However, since small dictionaries are certainly not useful, we can also impose a lower bound and take . One can choose or even larger values.

2.3. DL Algorithm

Many DL algorithms are suited to the framework that we propose. We confine the discussion to standard algorithms, which aim to iteratively improve the dictionary and whose iterations have two stages: (i) sparse coding, in which the representation matrix

is computed for fixed dictionary

; and (ii) dictionary update, in which

is improved, possibly together with the nonzero elements of

, but without changing the nonzero positions. Such algorithms are impervious to dictionary size changes; atoms can be removed or added between iterations without any change in the algorithm. Since they give good results and are fast, we adopt some of the simplest algorithms: Orthogonal Matching Pursuit (OMP) [

29] for sparse coding and Approximate K-SVD (AK-SVD) [

30] for dictionary update.

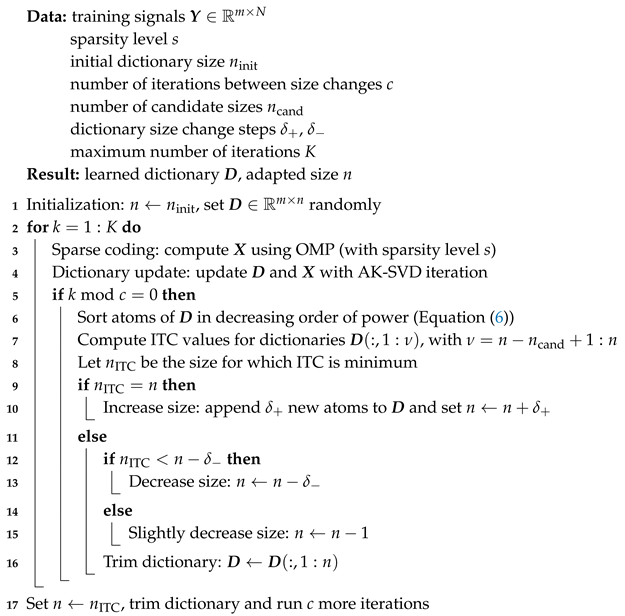

3. Algorithm

We assume first that the sparsity level is known. Our strategy is implemented by Algorithm 1, named ITC-ADL. Starting with an initial dictionary of size

, we run the DL algorithm. We change the size only every

c iterations, to allow the current set of atoms to be sufficiently trained together. Thus, the selection based on ITC described in

Section 2.2 can be meaningful.

Although we could compute ITC values for all dictionary sizes between and the current n, it is more efficient to consider only a smaller number of candidates ; note that the representations have to be recomputed for each sub-dictionary; although this can be done economically (see below the discussion on complexity), it may become a significant burden. Let us denote the size with minimum ITC value among the largest possible dictionaries (those with sizes n, , …, ).

The main question is now how to use this value. If

, we might be tempted to continue the learning process with only

atoms. However, this seems to be (assertion confirmed by numerical experiments) a too drastic decision, that can easily lead to premature shrinking of the dictionary. Instead, we take

as an indicator of the direction where

n must evolve. If

is much smaller than the current

n, we decrease the size by

(this number is 5 in our experiments); if

is only slightly smaller, than we decrease the size by one; finally, we interpret

as a sign that the size needs to be increased and add

atoms to the dictionary (we take

). There are several methods for generating new atoms [

3] (Section 3.9); we choose the simplest: random atoms.

The whole algorithm is run, as typical in DL, for a preset number K of iterations. Only at the end of these iterations we take as a true size information. With this size, we run c more DL iterations, as a final refinement.

| Algorithm 1: ITC-ADL: DL with ITC-adapted dictionary size. |

![Algorithms 12 00178 i001 Algorithms 12 00178 i001]() |

It is relatively hard to estimate the complexity of ITC-ADL, due to the dictionary size variations. We describe only the extra operations with respect to a standard DL algorithm, disregarding the size. There are two main categories of operations that increase the complexity. The first is the total number of iterations, that has to be larger than for standard DL, in order to let the dictionary size converge. The ITC give reliable information if the dictionary is well trained, hence neither K nor c can be small. In the tests reported below, we took and .

The second extra operation is the computation of ITC, which involves the recomputation of the representations for each dictionary. Since we already have the representations for the full dictionary of size n, we can progressively recompute only the representations that change as the size decreases. For example, the dictionary of size lacks atom , but is otherwise identical with . We need to recompute only the representations that contain . Their number should be less than , since atom , which is the least used, should appear in less representations than the average. Thus, overall, the number of recomputed representations is likely bounded by , which is comparable with N (the number of signals represented at each iteration); since this happens only every cth iteration, the extra complexity is relatively small.

In the experimental conditions described in the next section, ITC-ADL is about 3–5 times slower than the underlying AK-SVD, which is one of the fastest DL algorithms. This is not an excessive computational burden.

If the sparsity level s is not known, we simply run ITC-ADL for several candidate values; the best ITC value decides the best pair. Adapting also s during the algorithm may be possible, but seems more difficult than adapting only the size and was left for future work.

4. Numerical Results

We tested our algorithm on synthetic data, obtained with “true” dictionaries whose unit norm atoms are generated randomly following a Gaussian distribution. Given the sparsity level s, the representations are also generated randomly, with nonzeros on random positions. The data are , where the entries of are statistically independent, Gaussian distributed, with zero mean. The variance of the additive noise is chosen to have four different values for the signal-to-noise ratio (SNR): 10 dB, 20 dB, 30 dB and 40 dB. The dictionary sizes are ; the overcompleteness factor has moderate values, as in most applications.

Some of the input data for Algorithm 1 are constant throughout all the experiments. The number of signals is and their size is . The number of iterations is , increasing with n. The dictionary size changes are made every iterations. The size steps are . The number of size candidates is . All the results are obtained with 50 runs for the same data, but with different realizations of and .

4.1. Experiments with Known Sparsity Level

In all the experiments reported in this section, ITC-ADL is in possession of the true value of s, which takes even values from 4 to 12. In the first round of experiments, the dictionary has atoms. The size of the initial dictionary takes random values between 80 and 180, in order to test the robustness of the algorithm to initialization.

Table 1 reports the average, minimum and maximum size

n computed by ITC-ADL over the 50 runs. Our algorithm is able to find very good estimates of the size, for all considered noise and sparsity level values. An important conclusion is that the size of the initial dictionary has no impact on the results.

We evaluate the performance of the dictionaries given by ITC-ADL on 1000 test signals generated similar to the training ones. For comparison, we compute the RMSE for two dictionaries: (i) , for which the representations are computed with OMP; and (ii) the dictionary computed by AK-SVD with the true size and the same number of iterations (, in this case). We denote RMSEt and RMSEn the RMSE obtained with these dictionaries. Both approaches have an advantage over ours: the first has the true dictionary, so it is in fact an oracle; the second uses the same DL algorithm that we use, but knows the size.

Table 2 shows the average value of the ratios RMSE/RMSEt and RMSE/RMSEn. Values below 1 mean that our algorithm is better. Our algorithm always gives worse results than the oracle, which is expected, but the difference is often very small. Compared with AK-SVD, our algorithm is superior in all cases, the advantage growing with the SNR. Another conclusion that could be drawn is that the problem becomes harder as

s and the SNR grow. The first part is natural; for the second part, an explanation can be that, as the SNR grows, there are fewer local minima with values close to the global one; our algorithm appears to be able to find them, while AK-SVD may be trapped in poor local minima; running it with several initializations can improve the results.

The results for

are only slightly worse, thus we jump to those for

, shown in

Table 3. The size

takes random values between 160 and 360. Now, some of the harder problems with large sparsity level (

) are no longer well solved: some size estimations are wrong. However, the good behavior of the RMSE persists: most results are near-oracle and clearly better than AK-SVD.

We can see now some differences between the two ITC: ERNML

tends to overestimate

n, but hardly ever underestimates it, while EBIC is more prone to underestimation. However, in most setups, both ITC give sizes that are near from the true one. Regarding the RMSE (see

Table 4), the situation is somewhat reversed: ERNML

is slightly better than EBIC.

4.2. Execution Times and Discussion of Parameter Values

We present here some characteristics of ITC-ADL based on experimental evidence.

The average running times of ITC-ADL, in the configurations described above, are shown in

Table 5, together with those of AK-SVD. Over the considered

n and

s values, the ratio between the execution times of ITC-ADL and AK-SVD varies between

and

.

Without reporting any actual times, we note that the ITC and the SNR have almost no influence. In addition, the execution time is roughly proportional with the number of iterations

K and the number of signals

N. The other parameters have obvious influences: more frequent dictionary size changes (smaller

c) increase the time; same effect has a larger number of candidates

; the size steps

and

only slightly affect the time. We note that our implementation is not fully optimized, but it is based on a very efficient implementation of AK-SVD [

30].

We chose the parameter values for the experiments reported in the previous section with the aim to show that a single set of values ensures good results for all considered dictionary sizes and sparsity levels. In fact, ITC-ADL is quite robust to the parameter values. Nevertheless, fine tuning is possible when n and s have a more limited range of values. We present below a few results that show the effect of the parameters on the outcome of ITC-ADL. We considered only the cases , , SNR dB; the ITC is ERNML. Since we have run again the algorithm, some results may be different from those from the previous section, due to the random factors involved.

We gave the size steps

and

values between 2 and 8. For

, the results are very similar. For

, a trend is (barely) visible in

Table 6: larger size steps lead to poorer results. This is natural, since a large dictionary increase is a perturbing factor when the algorithm is near convergence. On the other hand, a small size step is not useful in the first iterations of the algorithm; if the initial size is far from the true one, the convergence can be very slow. Thus, although we have obtained good results with constant

, some refinements are certainly possible. It makes sense to decrease

,

as the algorithm evolves. This allows fine tuning of the size when the algorithm approaches convergence.

The number of candidates

has more influence on the results but again this is visible especially for

.

Table 7 shows the average dictionary sizes given by ITC-ADL for

. It is clear that a larger number of candidates is beneficial; since this leads to a larger execution time, a compromise is necessary. This was our reason for taking

. However, for

, even

seems sufficient, as the sizes (not shown here) are virtually the same for all considered

values.

We turn now to the parameter

c, the number of iterations between size changes. Intuitively, small values of

c lead to better results, but with more computational effort.

Table 8 shows the results for

, for

. The effect of

c is visible for the more difficult problems. For small

n and

s, a larger

c can give faster good results.

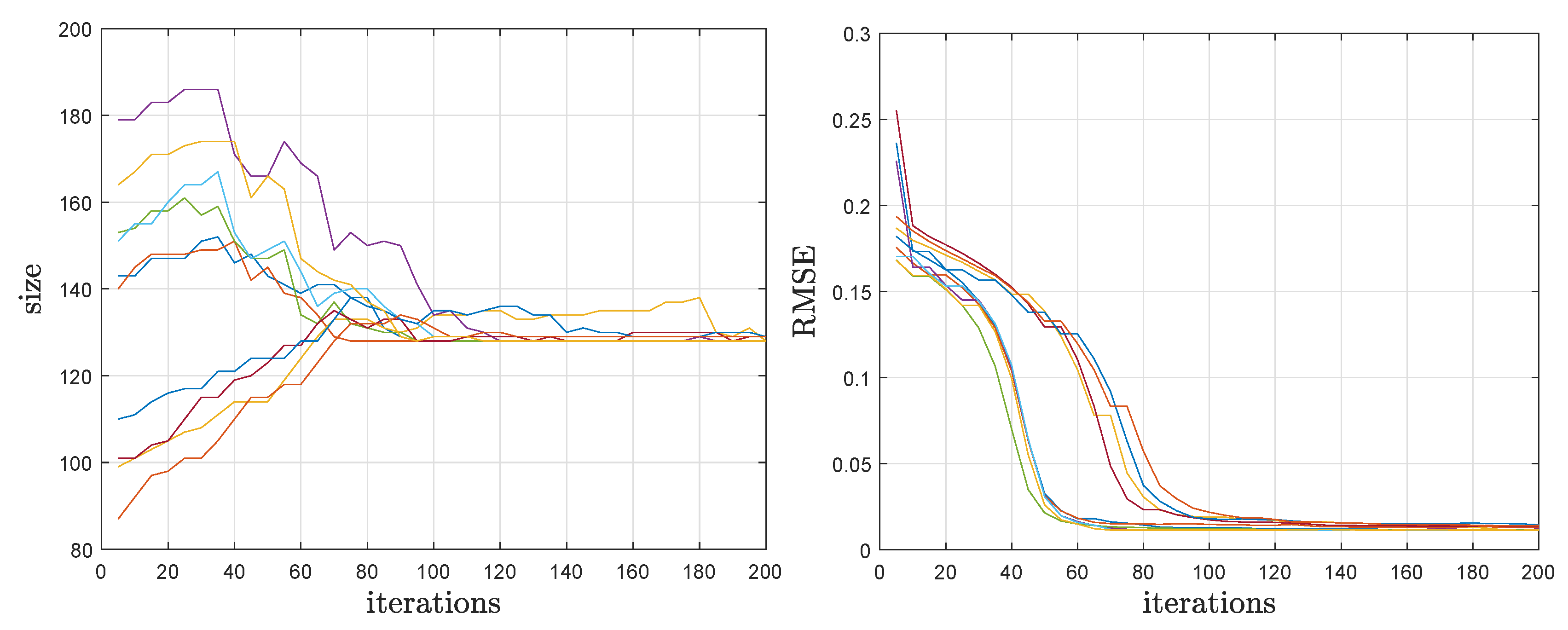

Finally, we partially justify our choices for the number of iterations. Generally, the rule is simple: more iterations lead to better results. However, there are many factors that influence the convergence speed and there are many random elements in the algorithm (the initial dictionary, the added atoms, the initial size and, of course, the noise). We illustrate in

Figure 1 the evolution of the main variables, the current dictionary size (as given by the ITC) and RMSE (on the training data), for

,

and 10 runs of ITC-ADL with random initial sizes. The values are shown for iterations that are multiple of

c; the size is constant between them and the RMSE typically decreases. It is visible that, after 100 iterations, the size is close to the true one and the RMSE is at about its final (and almost optimal, as we have seen) value. Typically, the size becomes larger than the true one when the RMSE reaches the lowest value. Then, ITC-ADL gradually trims the dictionary almost without changing the RMSE. Thus, if our purpose is dictionary learning (and perfect recovery is not sought because there is actually no true dictionary), the number of iterations can be smaller than for dictionary recovery.

4.3. Experiments with Unknown Sparsity Level

Using the same setup as in

Section 4.1, we run ITC-ADL with several values of

s, not only the true one, and choose the sparsity level that gives the best ITC value. Otherwise, the algorithm is unchanged and estimates the dictionary size as usual.

Table 9 presents the average values of the sparsity level and of the dictionary size given by the above procedure for

.

The variance of the estimated s is very low: typically, only two values are obtained. For example, when , for SNR = 10 dB, only values of 6 and 7 are obtained; when SNR=40 dB, only values of 8 and 9 are obtained; etc. One can see that the sparsity level estimations are rather accurate; the only deviation is at low SNR, when s is underestimated. The dictionary size is well estimated, in accordance with the previous results.

A competing algorithm is Adaptive ITKrM [

9] (Matlab sources available at

https://www.uibk.ac.at/mathematik/personal/schnass/code/adl.zip), reported to give very good results in DR problems. However, it seems that this algorithm needs more signals than ours. In our setup with

, A-ITKrM gives very poor results.

Table 10 gives results for

N = 20,000, where A-ITKrM becomes competitive. Due to time constraints, the results are averaged over only 20 runs. The table shows the obtained average sparsity level, dictionary size, and ratio RMSE/RMSEi, where RMSE is the error of our algorithm (using ERNML

) and RMSEi is the error of A-ITKrM. Since A-ITKrM severely underestimates

s, we have computed RMSEi (and RMSE) using OMP with the true

s. Even so, our algorithm finds a more accurate version of the dictionary. While ITKrM gives very good estimates of

n and a good approximation of the dictionary, it seems unable to refine the dictionary as well as our algorithm.

In the current unoptimized implementations, the execution times of ITC-ADL and A-ITKrM are comparable, our algorithm being faster for small s and slower for large s. Limited trials with N = 50,000 suggest that the above remarks continue to stand true.

5. Conclusions

We present a dictionary learning algorithm that works for unknown dictionary size. Using specialized Information Theoretic Criteria, based on BIC and RNML, the size is adapted during the evolution of a standard DL algorithm (AK-SVD in our case). Experimental results show that the algorithm is able to discover the true size of the dictionary used to generate data and, somewhat surprisingly, can give better results than AK-SVD run with the true size.

There are several possible directions for future research. Testing ITC-ADL in various applications is a first aim; they can range from direct applications, such as denoising or missing data estimation (inpainting), to more complex ones, e.g. classification. We also plan to combine the ITC idea with other DL algorithms, not only AK-SVD, especially with algorithms for which the sparsity level is not the same for all signals. Another direction is to find ITC that are suited for other types of noise, not Gaussian as here, with the final purpose of obtaining a single tool that analyzes data, finds the appropriate sparse representation model and designs the optimal dictionary.