1. Introduction

In this paper, we address the multi-criteria analysis of scheduling algorithms for image processing workflows with communication delays and study the applicability of Digital Signal Processor (DSP) cluster architectures.

The problem of scheduling jobs with precedence constraints is a fundamental problem in scheduling theory [1, 2]. It arises in many industrial and scientific applications, particularly, in image and signal processing, and has been extensively studied. It has been shown to be NP-hard and includes solving a complex task allocation problem that depends not only on workflow properties and constraints, but also on the nature of the infrastructure.

In this paper, we consider a DSP compatible with TigerSHARC TS201S [3, 4]. This processor was designed in response to the growing demands of industrial signal processing systems for real-time processing of real-world data, performing the high-speed numeric calculations necessary to enable a broad range of applications. It is optimized for both floating point and fixed point operations. It provides ultra-high-performance static superscalar processing optimized for memory-intensive digital signal processing algorithms, with applications ranging from fully implemented 5G stations to three-dimensional ultrasound scanners and other medical imaging systems, radio and sonar, industrial measurement, and control systems.

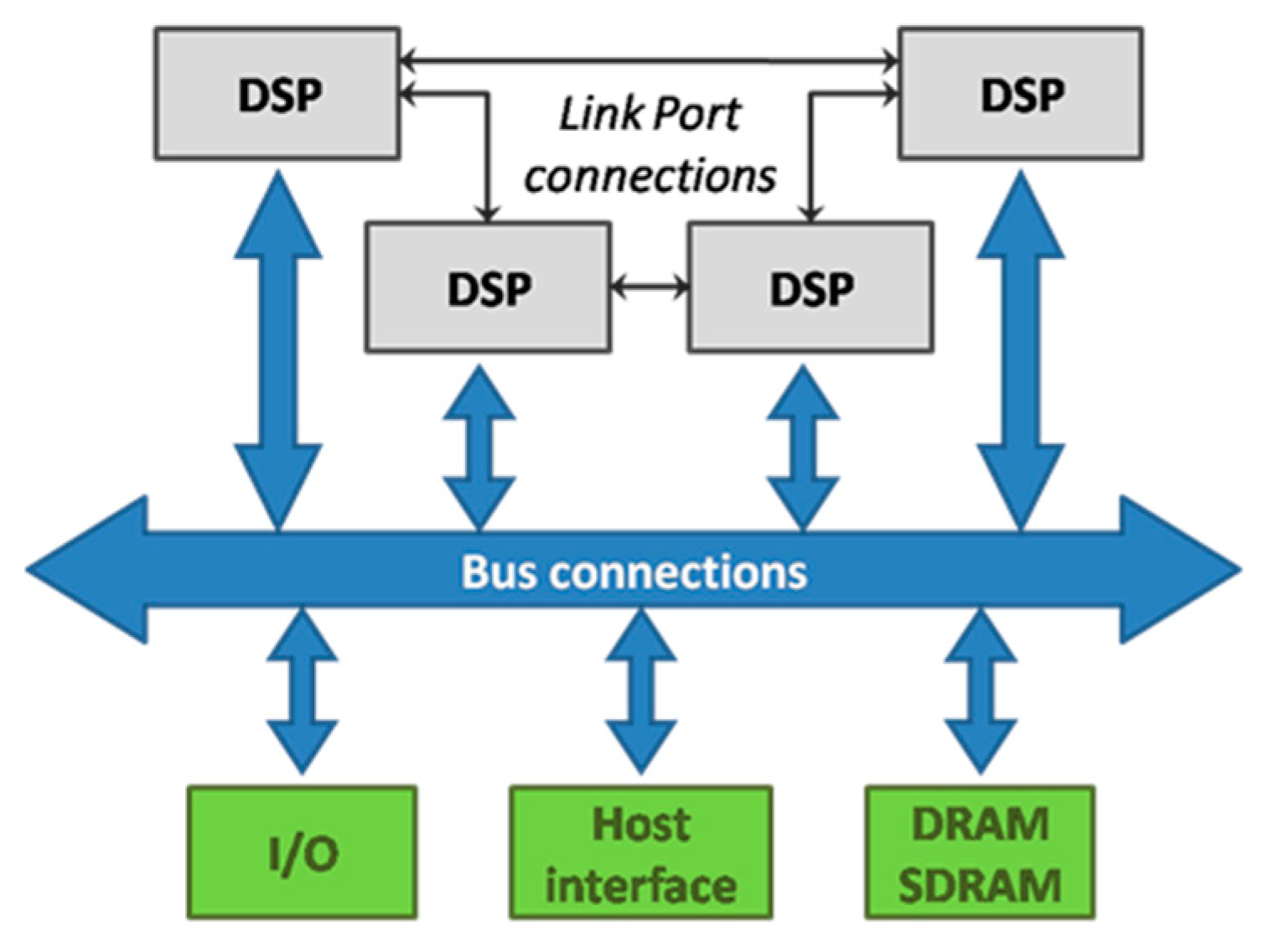

It supports low overhead DMA transfers between internal memory, external memory, memory-mapped peripherals, link ports, host processors, and other DSPs, providing high performance for I/O algorithms.

A flexible instruction set and a high-level-language-friendly DSP architecture ease the implementation of digital signal processing with low communications overhead in scalable multiprocessing systems. With software that is programmable for maximum flexibility and supported by easy-to-use, low-cost development tools, DSPs enable designers to build innovative features with high efficiency.

The DSP combines very wide memory widths with the execution of six floating-point and 24 64-bit fixed-point operations for digital signal processing. It maintains a system-on-chip scalable computing design, including 24 Mbit of on-chip DRAM, six 4K-word caches, integrated I/O peripherals, a host processor interface, DMA controllers, LVDS link ports, and shared bus connectivity for glueless multiprocessing without special bridges and chipsets.

It typically uses two methods to communicate between processor nodes. The first is dedicated point-to-point communication through link ports. The second uses a single shared global memory to communicate through a parallel bus.

For full performance of such a combined architecture, sophisticated resource management is necessary. Specifically, multiple instructions must be dispatched to processing units simultaneously, and functional parallelism must be calculated before runtime.

In this paper, we describe an approach for scheduling image processing workflows using the networks of a DSP-cluster (Figure 1).

2. Model

2.1. Basic Definitions

We address an offline (deterministic) non-preemptive, clairvoyant workflow scheduling problem on a parallel cluster of DSPs.

A DSP-cluster consists of $m$ integrated modules (IMs) $IM_1, IM_2, \ldots, IM_m$. Let $k_i$ be the size of $IM_i$ (its number of DSP-processors). Let $n$ workflow jobs $J_1, J_2, \ldots, J_n$ be scheduled on the cluster.

A workflow is a composition of tasks subject to precedence constraints. Workflows are modeled as a Directed Acyclic Graph (DAG) $G_j = (V_j, E_j)$, where $V_j$ is the set of tasks, and $E_j = \{(T_u, T_v) \mid T_u, T_v \in V_j,\ u \neq v\}$ is the set of arcs, with no cycles.

Each arc $(T_u, T_v)$ is associated with a communication time $d_{u,v}$ representing the communication delay if $T_u$ and $T_v$ are executed on different processors. Task $T_u$ must be completed, and data must be transmitted during $d_{u,v}$, before the execution of task $T_v$ is initiated. If $T_u$ and $T_v$ are executed on the same processor, no data transmission between them is needed; hence, communication delay is not considered.

Each workflow task $T_u$ is a sequential application (thread) described by the tuple $(r_u, p_u)$, with release date $r_u$ and execution time $p_u$.

Due to the offline scheduling model, the release date of a workflow is $r_j = 0$. However, the release date $r_u$ of a task is not available before the task is released. Tasks are released only after all dependencies have been satisfied and data are available. At its release date, a task can be allocated to a DSP-processor for an uninterrupted period of time $p_u$. $C_j$ is the completion time of the job $J_j$.

The total workflow processing time and the critical path execution cost $P_j$ are unknown until the job has been scheduled. We allow multiprocessor workflow execution; hence, tasks of $J_j$ can be run on different DSPs.
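The precedence rule described in this subsection can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the implementation used in the study: the function name `earliest_start` and the dictionary layout (`preds`, `finish`, `placement`, `delay`) are hypothetical and introduced here only for the example.

```python
def earliest_start(task, preds, finish, placement, proc, delay):
    """Earliest time `task` may start on processor `proc`.

    preds:     task -> list of immediate predecessors
    finish:    predecessor -> its completion time
    placement: predecessor -> processor it ran on
    delay:     (predecessor, task) -> communication time of the arc
    """
    ready = 0.0  # offline model: the workflow itself is released at time 0
    for u in preds.get(task, []):
        # The delay applies only when the two tasks run on different processors.
        comm = 0.0 if placement[u] == proc else delay[(u, task)]
        ready = max(ready, finish[u] + comm)
    return ready
```

Placing a task on the processor of its latest-finishing predecessor removes that arc's delay, which is why the release date of a task is only known once its predecessors have been scheduled.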

2.2. Performance Metrics

Three criteria are used to evaluate scheduling algorithms: makespan, critical path waiting time, and critical path slowdown. Makespan is used to qualify the efficiency of scheduling algorithms. To estimate the quality of workflow executions, we apply two workflow metrics: critical path waiting time and critical path slowdown.

Let $C_{max}$ be the maximum completion time (makespan) of all tasks in the schedule. The waiting time of a task is its completion time minus its execution time minus its release date. Note that a task is not preemptable and it is immediately released when the input data it needs from predecessors are available. However, note that we do not require that a job is allocated to processors immediately at its submission time as in some online problems.

The waiting time of a critical path is the completion time of the workflow minus the length of its critical path and the data transmission time between all tasks in the critical path. It takes into account waiting times of all tasks in the critical path and communication delay.

The critical path execution time $P_j$ depends on the schedule that allocates tasks to processors. The minimal value of $P_j$ includes only the execution time of the tasks that belong to the critical path. The maximal value includes maximal data transmission times between all tasks in the critical path.

The waiting time of a critical path is defined as $cpw_j = C_j - P_j$, where $C_j$ is the completion time of workflow $j$. Critical path slowdown, $cps_j = 1 + cpw_j / P_j$, is the relative critical path waiting time and evaluates the quality of the critical path execution. A slowdown of one indicates zero waiting times for critical path tasks, while a value greater than one indicates that the critical path completion is delayed by the waiting time of critical path tasks. Mean critical path waiting time is $\overline{cpw} = \frac{1}{n} \sum_{j=1}^{n} cpw_j$, and mean critical path slowdown is $\overline{cps} = \frac{1}{n} \sum_{j=1}^{n} cps_j$.
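The two workflow metrics can be computed as in the following sketch. It assumes that each workflow is summarized by its completion time and its critical path execution time (with release date zero, as in the offline model); the function names and the sample numbers in the usage note are hypothetical.

```python
def critical_path_metrics(completion, cp_time):
    """Return (waiting time, slowdown) of one workflow's critical path.

    completion: completion time of the workflow
    cp_time:    critical path execution time under the schedule (must be > 0)
    """
    cpw = completion - cp_time   # time the critical path spent waiting
    cps = 1 + cpw / cp_time      # equals 1 when there is no waiting
    return cpw, cps


def mean_metrics(workflows):
    """Average both metrics over a list of (completion, cp_time) pairs."""
    pairs = [critical_path_metrics(c, p) for c, p in workflows]
    n = len(pairs)
    return sum(w for w, _ in pairs) / n, sum(s for _, s in pairs) / n
```

For example, a workflow that completes at time 12 with a critical path of length 10 waits 2 time units on its critical path and has a slowdown of 1.2.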

2.3. DSP Cluster

A DSP-cluster consists of m integrated modules (IMs). Each IM contains DSP-processors with their own local memory. Data exchange between DSP-processors of the same IM is performed through local ports. The exchange of data between DSP-processors from different IMs is performed via external memory, which needs a longer transmission time than through the local ports. The speed of data transfer between processors depends on their mutual arrangement in the cluster.

We define a data rate coefficient for each ordered pair of processors, from a processor of one integrated module (IM) to a processor of the same or another IM. We neglect the communication delay inside a DSP; however, we take into account the communication delay between DSP-processors of the same IM. Data rate coefficients of this communication are represented as a square matrix whose size is the number of DSP-processors in the IM. We assume that the transmission rates between different IMs are all equal to one common value.

Table 1 shows a complete matrix of data rate coefficients for a DSP-cluster with four IMs.

The values of the matrix depend on the specific communication topology of the IMs.

In

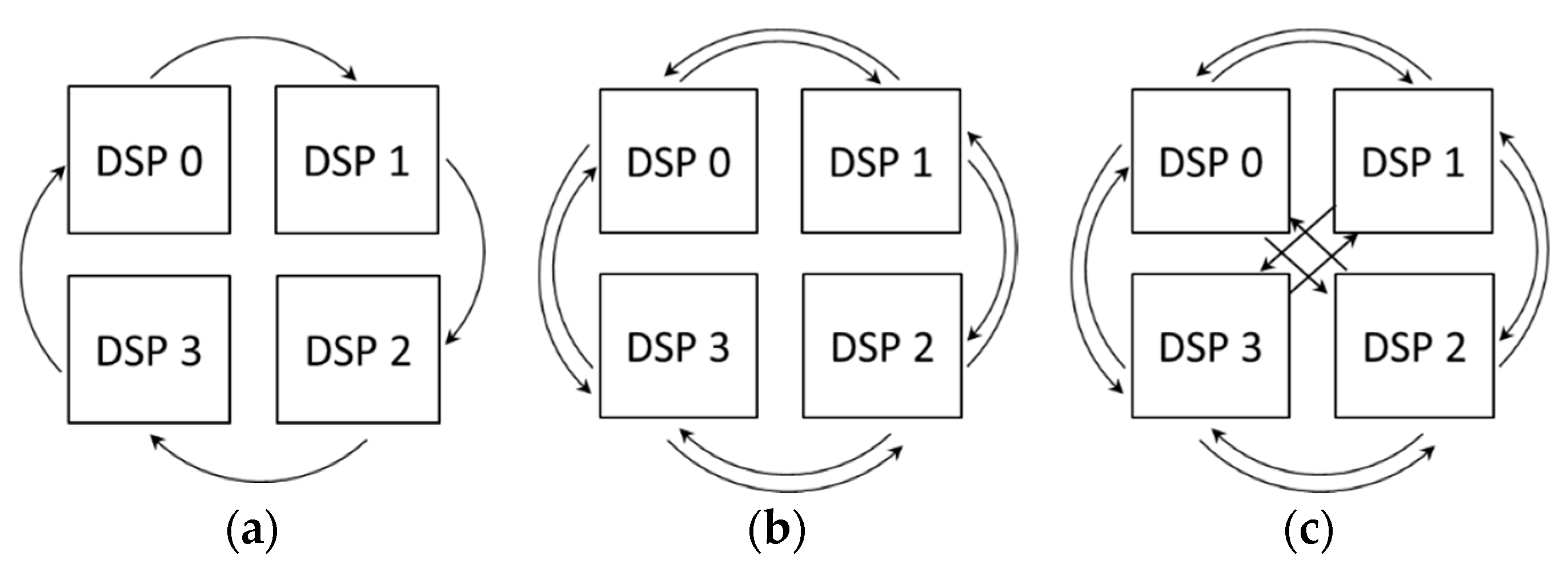

Figure 2, we consider three examples of the

communication topology for

.

Figure 2a shows uni-directional DSP communication. Let us assume that the transfer rate between processors connected by an internal link port is equal to α. The corresponding matrix of data rate coefficients is presented in Table 2a.

Figure 2b shows bi-directional DSP communication. The corresponding matrix of data rate coefficients is presented in Table 2b.

Figure 2c shows all-to-all communication of DSP. Table 2c shows the corresponding data rate coefficients.

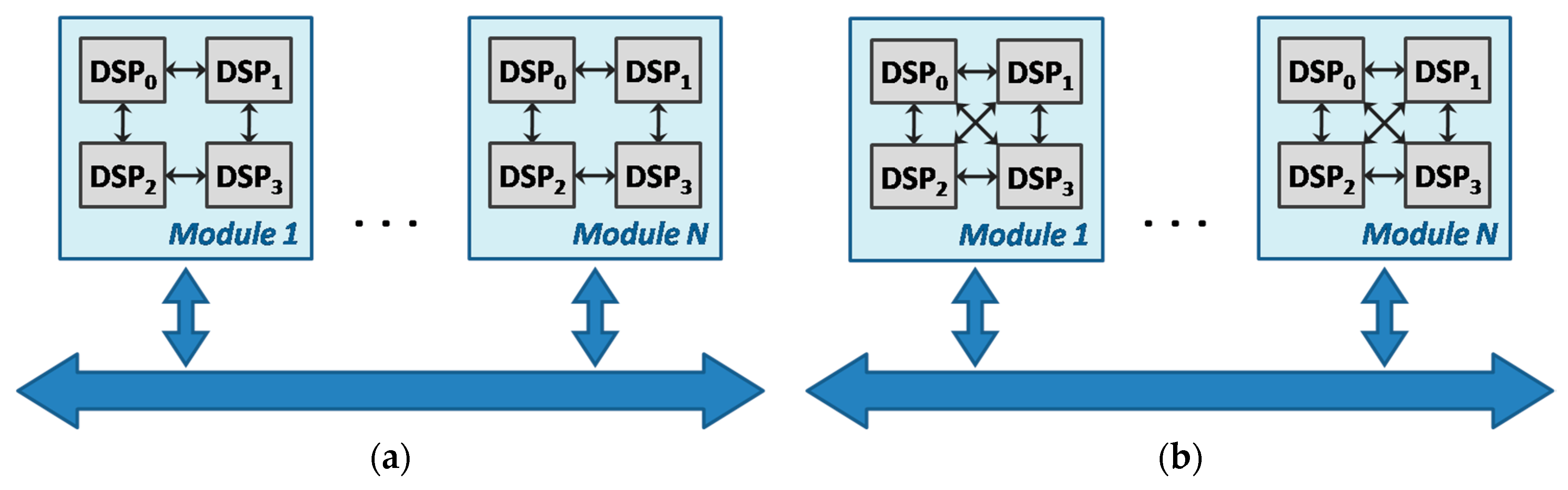

For the experiments, we take into account two models of the cluster (Figure 3). In cluster A, ports connect only neighboring DSPs, as shown in Figure 3a. In cluster B, DSPs are connected to each other, as shown in Figure 3b.

The integrated modules (IMs) are interconnected by a bus. In the current model, a different data transmission coefficient is used for each type of connection. Data transfer within the same DSP has a coefficient of 0, transfer between adjacent DSPs in a single IM has a coefficient of 1, and transfer between IMs has a coefficient of 10.
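The coefficients above can be assembled into a full matrix as in the sketch below. It covers only the all-to-all case (cluster B), where every pair of DSPs in a module counts as adjacent; how non-neighboring DSPs of cluster A are weighted is not stated in the text, so that case is deliberately left out. The function name and its parameters are hypothetical.

```python
def data_rate_matrix(module_sizes, intra=1, inter=10):
    """Data rate coefficients for a cluster with all-to-all modules.

    module_sizes: number of DSPs in each integrated module
    intra:        coefficient between two DSPs of the same module
    inter:        coefficient between DSPs of different modules
    """
    # owner[i] is the index of the module that DSP i belongs to.
    owner = [m for m, size in enumerate(module_sizes) for _ in range(size)]
    n = len(owner)
    return [
        [0 if i == j else intra if owner[i] == owner[j] else inter
         for j in range(n)]
        for i in range(n)
    ]
```

With `module_sizes=[2, 2]`, the result is a 4 × 4 matrix with zeros on the diagonal, ones inside each 2 × 2 diagonal block, and tens everywhere else.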

3. Related Work

State of the art studies tackle different workflow scheduling problems by focusing on general optimization issues; specific workflow applications; minimization of critical path execution time; selection of admissible resources; allocation of suitable resources for data-intensive workflows; Quality of Service (QoS) constraints; and performance analysis, among other factors [5, 6, 7, 8, 9, 10, 11, 12, 13, 14, 15, 16, 17].

Many heuristics have been developed for scheduling DAG-based task graphs in multiprocessor systems [18, 19, 20]. In [21], the authors discussed clustering DAG tasks into chains and allocating them to single machines. In [22], two strategies were considered: Fairness Policy based on Finishing Time (FPFT) and Fairness Policy based on Concurrent Time (FPCT). Both strategies arranged DAGs in ascending order of their slowdown value, selected independent tasks from the DAG with the minimum slowdown, and scheduled them using Heterogeneous Earliest Finishing Time first (HEFT) [23] or Hybrid.BMCT [24]. FPFT recalculates the slowdown of a DAG each time the task of a DAG completes execution, while FPCT recalculates the slowdown of all DAGs each time any task in a DAG completes execution.

HEFT is considered an extension of the classical list scheduling algorithm to cope with heterogeneity and has been shown to produce good results more often than other comparable algorithms. Many improvements and variations to HEFT have been proposed considering different ranking methods, look-ahead algorithms, clustering, and processor selection, for example [25].

The multi-objective workflow allocation problem has rarely been considered so far. It is important, especially in scenarios that contain aspects that are multi-objective by nature: Quality of Service (QoS) parameters, costs, system performance, response time, and energy, for example [14].

4. Proposed DSP Workflow Scheduling Strategies

The scheduling algorithm assigns a start time to each task of the graph. The time assigned to the stop task is the main result metric of the algorithm. The lower the time, the better the scheduling of the graph.

The algorithm uses a list of tasks that are ready for scheduling and a waiting list. Once all predecessors of a task are scheduled, the task is inserted into the waiting list. Once all of its incoming data are ready, the task is inserted into the ready list; until then, it remains in the waiting list. Available DSP-processors are placed into the appropriate list.

The list of tasks that are ready to be started is maintained. Tasks with no predecessors, and tasks whose predecessors have completed their execution and whose input data are available, are entered into the list. Allocation policies are responsible for selecting a suitable DSP for task allocation.
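The two lists can be illustrated with the following sketch, which sorts unscheduled tasks into a ready list and a waiting list at a given time. It is an illustration under stated assumptions rather than the scheduler used in the study: the function name `classify_tasks`, the `arrival` dictionary (the time at which the data of each arc reaches the successor), and the `now` parameter are hypothetical.

```python
def classify_tasks(tasks, preds, scheduled, arrival, now):
    """Split unscheduled tasks into (ready, waiting) at time `now`.

    tasks:     iterable of task identifiers
    preds:     task -> list of immediate predecessors
    scheduled: set of tasks that already have a start time
    arrival:   (predecessor, task) -> time the transmitted data is available
    """
    ready, waiting = [], []
    for t in tasks:
        if t in scheduled:
            continue
        ps = preds.get(t, [])
        if not all(p in scheduled for p in ps):
            continue  # some predecessor is unscheduled: in neither list yet
        if all(arrival[(p, t)] <= now for p in ps):
            ready.append(t)      # all incoming data have arrived
        else:
            waiting.append(t)    # predecessors scheduled, data still in transit
    return ready, waiting
```

A task without predecessors satisfies both conditions trivially, so it enters the ready list immediately.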

We introduce five task allocation strategies: PESS (Pessimistic), OPTI (Optimistic), OHEFT (Optimistic Heterogeneous Earliest Finishing Time), PHEFT (Pessimistic Heterogeneous Earliest Finishing Time), and BC (Best Core).

Table 3 briefly describes the strategies.

OHEFT and PHEFT are based on HEFT, a workflow scheduling strategy used in many performance evaluation studies.

HEFT schedules DAGs in two phases: job labeling and processor selection. In the job labeling phase, a rank value (upward rank) based on mean computation and communication costs is assigned to each task of a DAG. The upward rank of a task $T_i$ is recursively computed by traversing the graph upward, starting from the exit task, as follows: $rank_u(T_i) = \overline{w_i} + \max_{T_j \in succ(T_i)} \left( \overline{d_{i,j}} + rank_u(T_j) \right)$, where $succ(T_i)$ is the set of immediate successors of task $T_i$; $\overline{d_{i,j}}$ is the average communication cost of arc $(T_i, T_j)$ over all processor pairs; and $\overline{w_i}$ is the average of the set of computation costs of task $T_i$.
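The recursive definition of the upward rank can be written down directly, as in the sketch below. The names `upward_ranks`, `succ`, `mean_cost`, and `mean_comm` are hypothetical; the mean costs are assumed to be precomputed, and the small graph in the usage note is made up for illustration.

```python
from functools import lru_cache


def upward_ranks(succ, mean_cost, mean_comm):
    """HEFT upward rank of every task in a DAG.

    succ:      task -> list of immediate successors
    mean_cost: task -> average computation cost over processors
    mean_comm: (task, successor) -> average communication cost of the arc
    """
    @lru_cache(maxsize=None)
    def rank(t):
        # An exit task has no successors, so its rank is its own cost.
        tail = max(
            (mean_comm[(t, s)] + rank(s) for s in succ.get(t, [])),
            default=0.0,
        )
        return mean_cost[t] + tail

    return {t: rank(t) for t in mean_cost}
```

For a diamond-shaped graph with `succ = {"a": ["b", "c"], "b": ["d"], "c": ["d"]}`, costs `a=2, b=3, c=1, d=2`, and arc costs `(a,b)=1, (a,c)=4, (b,d)=2, (c,d)=1`, the ranks are `d=2`, `b=7`, `c=4`, and `a=10`. Sorting tasks by descending rank yields a valid topological order.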

Although HEFT is well-known, the study of different possibilities for computing rank values in a heterogeneous environment is limited. In some cases, the use of the mean computation and communication as the rank value in the graph may not produce a good schedule [26].

In this paper, we consider two methods of calculating the rank: best and worst. The best version assumes that tasks are allocated to the same DSP; hence, no data transmission is needed. The worst version assumes that tasks are allocated to DSPs from different nodes, so data transmission is maximal. To determine the critical path, we need to know the execution time of each task of the graph and the data transfer time, considering every combination of DSPs on which the two given tasks may be executed and taking into account the data transfer rate between the two connected nodes.

Task labeling prioritizes workflow tasks. Labels are neither changed nor recomputed on completion of predecessor tasks. This also distinguishes our model from previous research (see, for instance, [22]). Task labels are used to identify properties of a given workflow. We distinguish four labeling approaches: Best Downward Rank (BDR), Worst Downward Rank (WDR), Best Upward Rank (BUR), and Worst Upward Rank (WUR).

BDR estimates the length of the path from the considered task to a root, passing a set of immediate predecessors in a workflow, without communication costs. WDR estimates the length of the path from the considered task to a root, passing a set of immediate predecessors in a workflow, with the worst communication costs. The descending order of BDR and WDR supports scheduling tasks by the depth-first approach.

BUR estimates the length of the path from the considered task to a terminal task, passing a set of immediate successors in a workflow, without communication costs.

WUR estimates the length of the path from the considered task to a terminal task passing a set of immediate successors in a workflow with the worst communication costs. The descending order of BUR and WUR supports scheduling tasks on the critical path first. The upward rank represents the expected distance of any task to the end of the computation. The downward rank represents the expected distance of any task from the start of the computation.
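The four labels can be sketched with two small functions that share a `worst` switch. Several details are assumptions made only for this example: `comm` holds the worst-case transmission time of each arc, the best case counts every arc as zero, and the downward rank follows the usual HEFT convention of excluding the task's own execution time. The function names are hypothetical.

```python
def upward(succ, cost, comm, worst):
    """BUR (worst=False) or WUR (worst=True) for every task."""
    memo = {}

    def rank(t):
        if t not in memo:
            memo[t] = cost[t] + max(
                ((comm[(t, s)] if worst else 0.0) + rank(s)
                 for s in succ.get(t, [])),
                default=0.0,
            )
        return memo[t]

    return {t: rank(t) for t in cost}


def downward(pred, cost, comm, worst):
    """BDR (worst=False) or WDR (worst=True) for every task."""
    memo = {}

    def rank(t):
        if t not in memo:
            # Distance from the start of the computation to the start of t.
            memo[t] = max(
                (rank(p) + cost[p] + (comm[(p, t)] if worst else 0.0)
                 for p in pred.get(t, [])),
                default=0.0,
            )
        return memo[t]

    return {t: rank(t) for t in cost}
```

Because the labels depend only on the graph, the task costs, and the fixed best or worst communication assumption, they can be computed once before scheduling and never need to be recomputed.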

5. Experimental Setup

This section presents the experimental setup, including workload and scenarios, and describes the methodology used for the analysis.

5.1. Parameters

To provide a performance comparison, we used workloads from a parametric workload generator that produces workflows such as Ligo and Montage [27, 28]. These are complex workflows of parallelized computations used to process larger-scale images.

We considered three clusters with different numbers of DSPs and two architectures of individual DSPs (Table 4). Their clock frequency was considered to be equal.

5.2. Methodology of Analysis

Workflow scheduling involves multiple objectives and may use multi-criteria decision support. The classical approach is to use the concept of Pareto optimality. However, it is very difficult to achieve the fast solutions needed for DSP resource management using Pareto dominance.

In this paper, we converted the problem to a single objective optimization problem by multiple-criteria aggregation. First, we made criteria comparable by normalizing them to the best values found during each experiment. To this end, we evaluated the performance degradation of each strategy under each metric. This was done relative to the best performing strategy for the metric, as follows:

$$(\gamma - 1) \cdot 100, \quad \gamma = \frac{\text{strategy metric value}}{\text{best found metric value}}.$$

To provide effective guidance in choosing the best strategy, we performed a joint analysis of several metrics according to the methodology used in [14, 29]. We aggregated the various objectives to a single one by averaging their values and ranking. The best strategy, with the lowest average performance degradation, had a rank of 1.
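The degradation and ranking steps can be sketched as follows, assuming that lower metric values are better, that the best value of each metric is positive, and that all metrics carry equal weight. The function names and the numbers in the usage note are hypothetical and are not results from the study.

```python
def degradation(values):
    """Percent degradation of each strategy relative to the best (lowest) value.

    values: strategy -> metric value (lower is better, best value > 0)
    """
    best = min(values.values())
    return {s: (v / best - 1) * 100 for s, v in values.items()}


def rank_strategies(metrics):
    """Average degradation over all metrics and rank strategies by it.

    metrics: metric name -> {strategy -> metric value}
    Returns (mean degradation per strategy, rank per strategy); rank 1 is best.
    """
    per_metric = [degradation(v) for v in metrics.values()]
    strategies = list(per_metric[0])
    mean_deg = {
        s: sum(d[s] for d in per_metric) / len(per_metric) for s in strategies
    }
    order = sorted(strategies, key=mean_deg.get)
    return mean_deg, {s: i + 1 for i, s in enumerate(order)}
```

With made-up values `{"makespan": {"A": 100, "B": 110}, "cpw": {"A": 30, "B": 20}}`, strategy A degrades by 0% and 50%, strategy B by 10% and 0%, so B has the lower mean degradation (5% versus 25%) and receives rank 1.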

Note that we tried to identify strategies that performed reliably well in different scenarios; that is, we tried to find a compromise that considered all of our test cases, with the expectation that it would also perform well under other conditions, for example, with different DSP-cluster configurations and workloads. Consequently, the rank of a strategy is not necessarily the same for each metric or each scenario considered individually.

6. Experimental Results

6.1. Performance Degradation Analysis

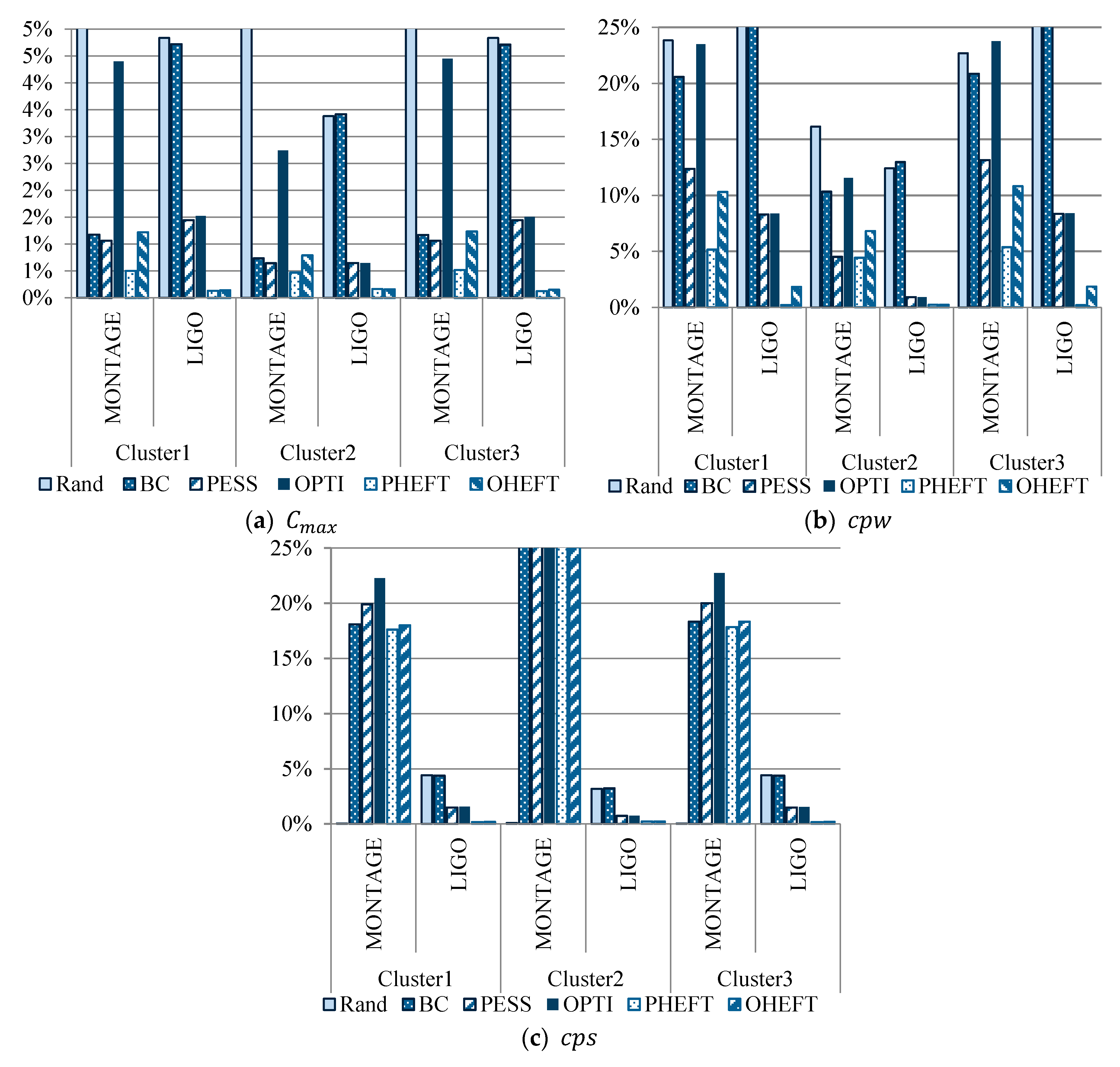

Figure 4 and Table 5 show the performance degradation of all strategies for the three metrics: makespan, mean critical path waiting time, and mean critical path slowdown. Table 5 also shows the mean degradation of the strategies and ranking when considering all averages and all test cases.

A small percentage of degradation indicates that the performance of a strategy for a given metric is close to the performance of the best performing strategy for the same metric. Therefore, small degradations represent better results.

We observed that Rand was the strategy with the worst makespan, with up to 318 percent performance degradation compared with the best-obtained result. The PHEFT strategy had a small percentage of degradation in almost all metrics and test cases. We saw that one of the three metrics had less variation than the other two and therefore had a lesser impact on the overall score. The makespans of PHEFT and OHEFT were near the lowest values.

Because our model is a simplified representation of a system, we can conclude that these strategies might have similar efficiency in real DSP-cluster environments when considering the above metrics. However, there exist differences between PESS and OPTI in the critical path metrics. In the PESS strategy, the critical path completion time did not grow significantly. Therefore, tasks in the critical path experienced small waiting times. Results also showed that for all strategies, small mean critical path waiting time degradation corresponded to small mean critical path slowdown.

BC and Rand strategies had rankings of 5 and 6. Their average degradations were within 67% and 18% of the best results, while PESS and OPTI had rankings of 3 and 4, with average degradations within 8% and 11%.

PHEFT and OHEFT showed the best results. Their degradations were within 6% and 7%, with rankings of 1 and 2.

6.2. Performance Profile

In the previous section, we presented the average performance degradations of the strategies over three metrics and test cases. Now, we analyze results in more detail. Our sampling data were averaged over a large scale. However, the contribution of each experiment varied depending on its variability or uncertainty [30, 31, 32]. To analyze the probability of obtaining results with a certain quality and their contributors on average, we present the performance profiles of the strategies. Measures of result deviations provide useful information for strategy analysis and interpretation of the data generated by the benchmarking process.

The performance profile $\rho(\tau)$ is a non-decreasing, piecewise constant function that presents the probability that a ratio $\gamma$ is within a factor $\tau$ of the best ratio [33]. The function $\rho(\tau)$ is the cumulative distribution function. Strategies with larger probabilities $\rho(\tau)$ for smaller $\tau$ will be preferred.
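An empirical performance profile can be computed as in the sketch below. It assumes that, for each test problem, the ratio of a strategy's result to the best result found for that problem has already been calculated; the function name and the sample ratios in the usage note are hypothetical.

```python
def performance_profile(ratios, tau):
    """Fraction of problems on which a strategy is within a factor `tau`
    of the best result.

    ratios: one value per problem, strategy result / best result (>= 1)
    """
    return sum(1 for r in ratios if r <= tau) / len(ratios)
```

For the made-up ratios `[1.0, 1.01, 1.05, 1.3]`, the profile equals 0.25 at a factor of 1.0, 0.5 at 1.02, and 0.75 at 1.1. The value never decreases as the factor grows, which matches the cumulative distribution interpretation.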

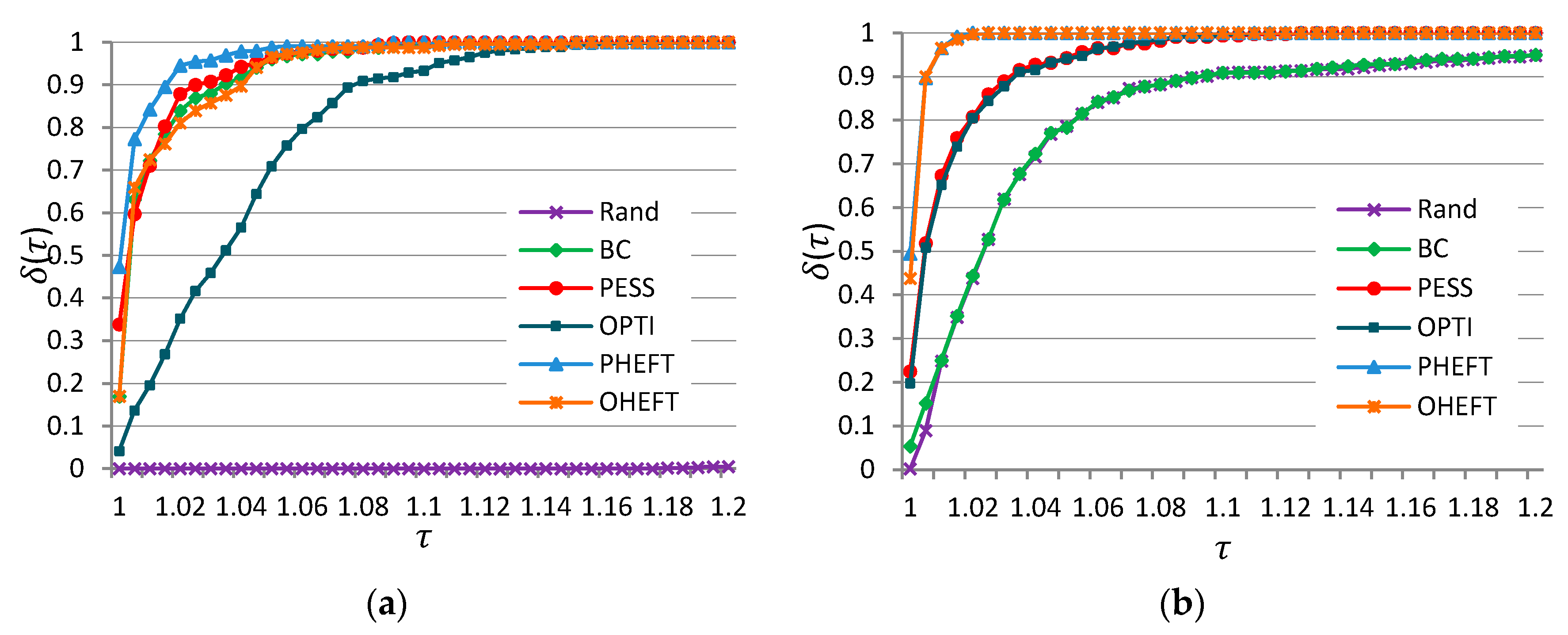

Figure 5 shows the performance profiles of the strategies according to total completion time, over an interval of factors of the best solution, to provide objective information for analysis of a test set.

Figure 5a displays results for Montage workflows. PHEFT had the highest probability of being the better strategy. The probability that it was the winner on a given problem within a factor of 1.02 of the best solution was close to 0.9. If we chose being within a factor of 1.1 as the scope of our interest, then all strategies except Rand and OPTI would have sufficed with a probability of 1.

Figure 5b displays results for Ligo workflows. Here, PHEFT and OHEFT were the best strategies, followed by OPTI and PESS.

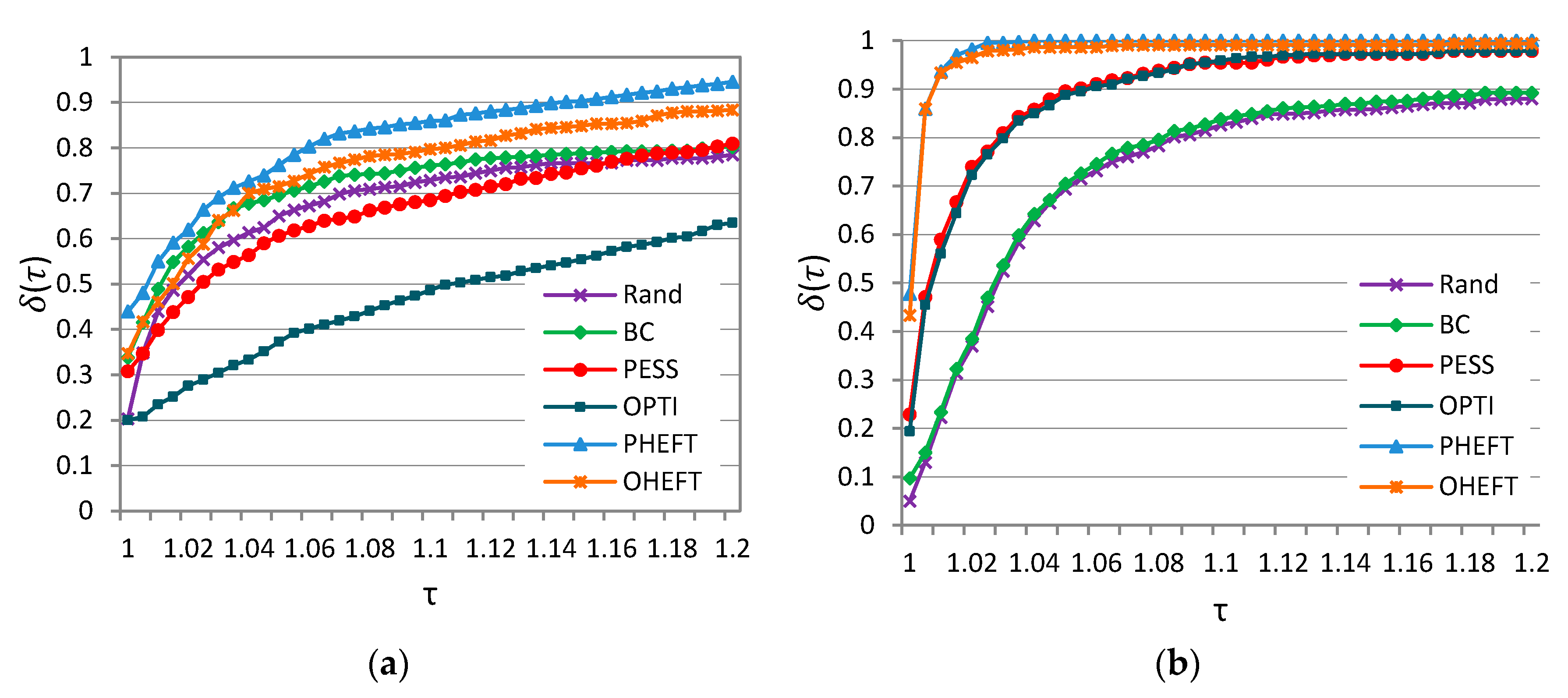

Figure 6 shows the performance profiles of the six strategies for Montage and Ligo workflows. In both cases, PHEFT had the highest probability of being the better strategy. The probability that it was the winner on a given problem within a factor of 1.1 of the best solution was close to 0.85 and 1 for Montage and Ligo, respectively.

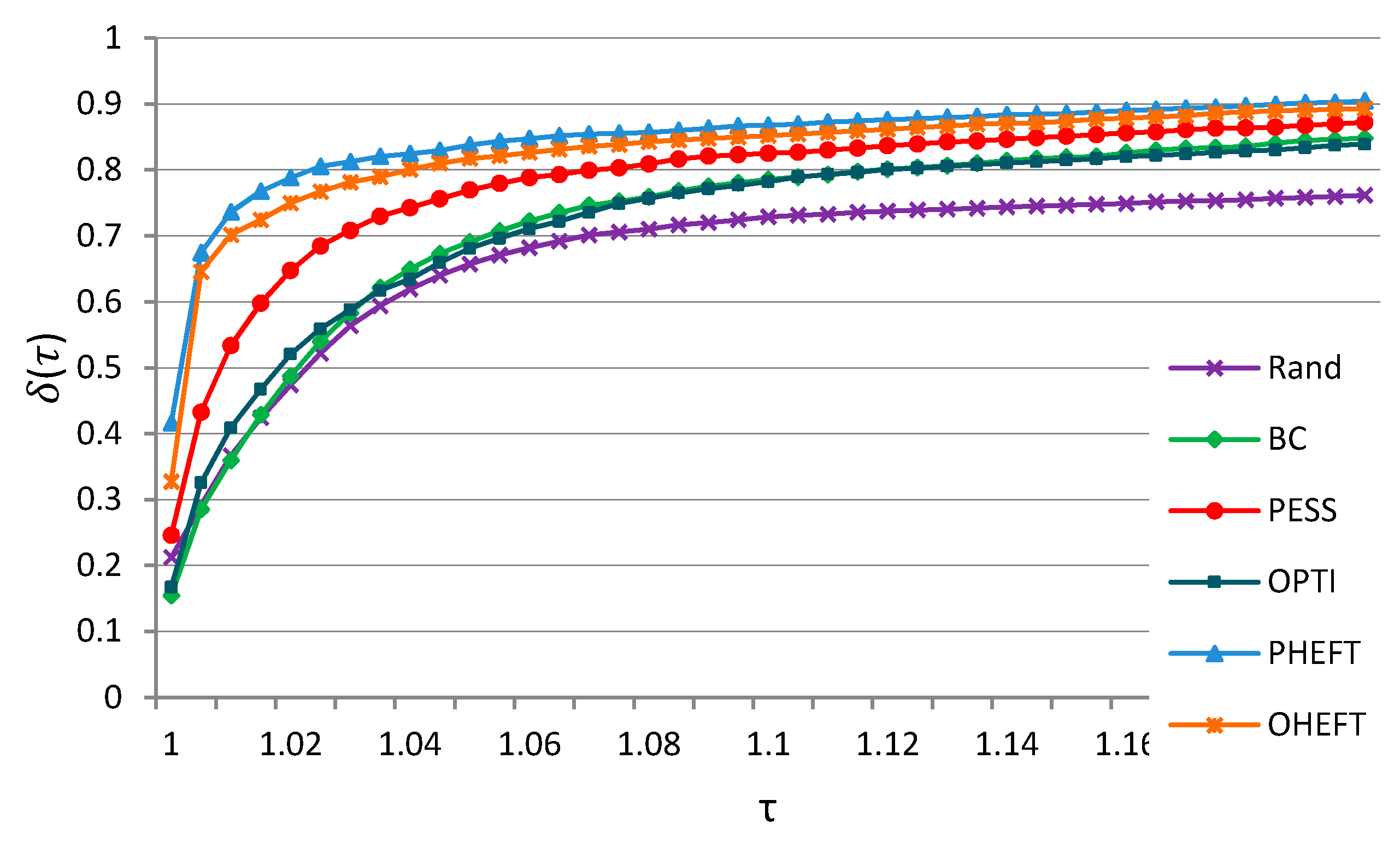

Figure 7 shows the mean performance profiles over all metrics, scenarios, and test cases. There were discrepancies in performance quality. If we want to obtain results within a factor of 1.02 of the best solution, then PHEFT generated them with a probability of 0.8, while Rand did so with a probability of 0.47. If we chose a larger factor, then PHEFT produced results with a probability of 0.9, and Rand with a probability of 0.76.

7. Conclusions

Effective image and signal processing workflow management requires the efficient allocation of tasks to limited resources. In this paper, we presented allocation strategies that took into account both infrastructure information and workflow properties. We conducted a comprehensive performance evaluation study of six workflow scheduling strategies using simulation. We analyzed strategies that included task labeling, prioritization, resource selection, and DSP-cluster scheduling.

To provide effective guidance in choosing the best strategy, we performed a joint analysis of three metrics (makespan, mean critical path waiting time, and critical path slowdown) according to a degradation methodology and multi-criteria analysis, assuming the equal importance of each metric.

Our goal was to find a robust and well-performing strategy under all test cases, with the expectation that it would also perform well under other conditions, for example, with different cluster configurations and workloads.

Our study resulted in several contributions:

- (1) We examined overall DSP-cluster performance based on real image and signal processing data, considering Ligo and Montage applications;

- (2) We took into account communication latency, which is a major factor in DSP scheduling performance;

- (3) We showed that efficient job allocation depends not only on application properties and constraints but also on the nature of the infrastructure. To this end, we examined three configurations of DSP-clusters.

We found that an appropriate distribution of jobs over the clusters using a pessimistic approach had a higher performance than an allocation of jobs based on an optimistic one.

The PHEFT strategy differs from the original HEFT version in two ways. First, the data transfer cost within a workflow was set to maximal values for a given infrastructure to support pessimistic scenarios. All data transmissions were assumed to be made between different integrated modules and different DSPs to obtain the worst data transmission scenario with the maximal data rate coefficient.

Second, PHEFT had reduced time complexity compared to HEFT. It did not need to consider every combination of DSPs, where the two given tasks were executed, and did not need to take into account the data transfer rate between the two nodes to calculate a rank value (upward rank) based on mean computation and communication costs. Low complexity is important for industrial signal processing systems and real-time processing.

We conclude that, for practical purposes, the PHEFT scheduler can improve the performance of workflow scheduling on DSP clusters, although more comprehensive algorithms can be adopted.