Automatic Concrete Damage Recognition Using Multi-Level Attention Convolutional Neural Network

Abstract

1. Introduction

2. Related Work

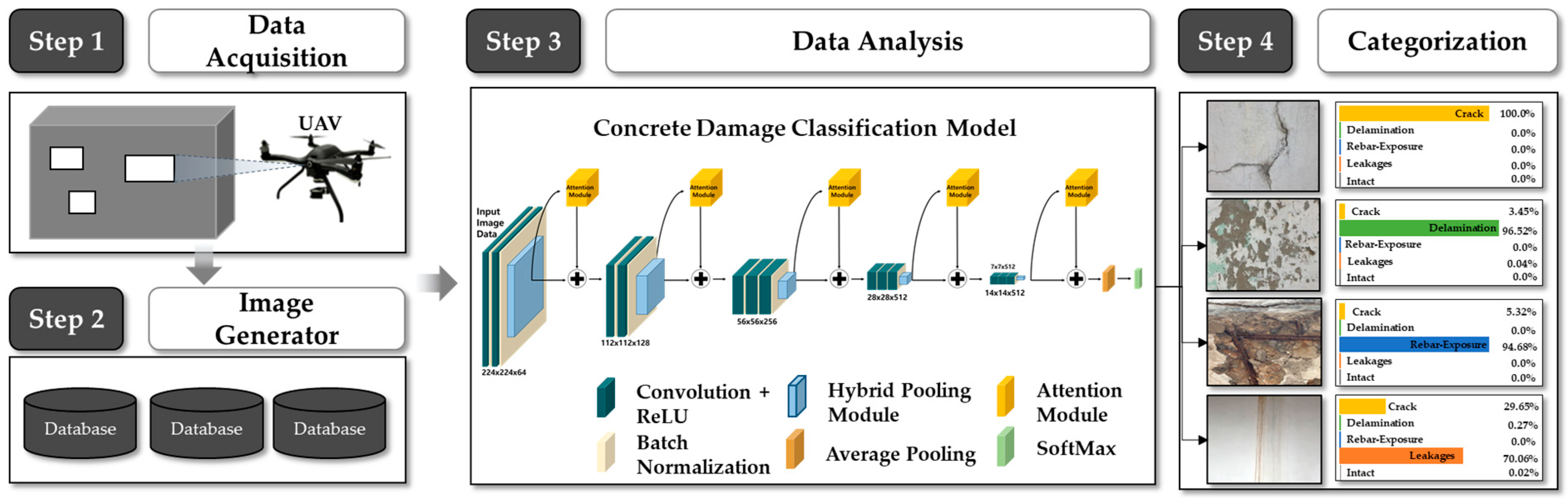

3. Methodology

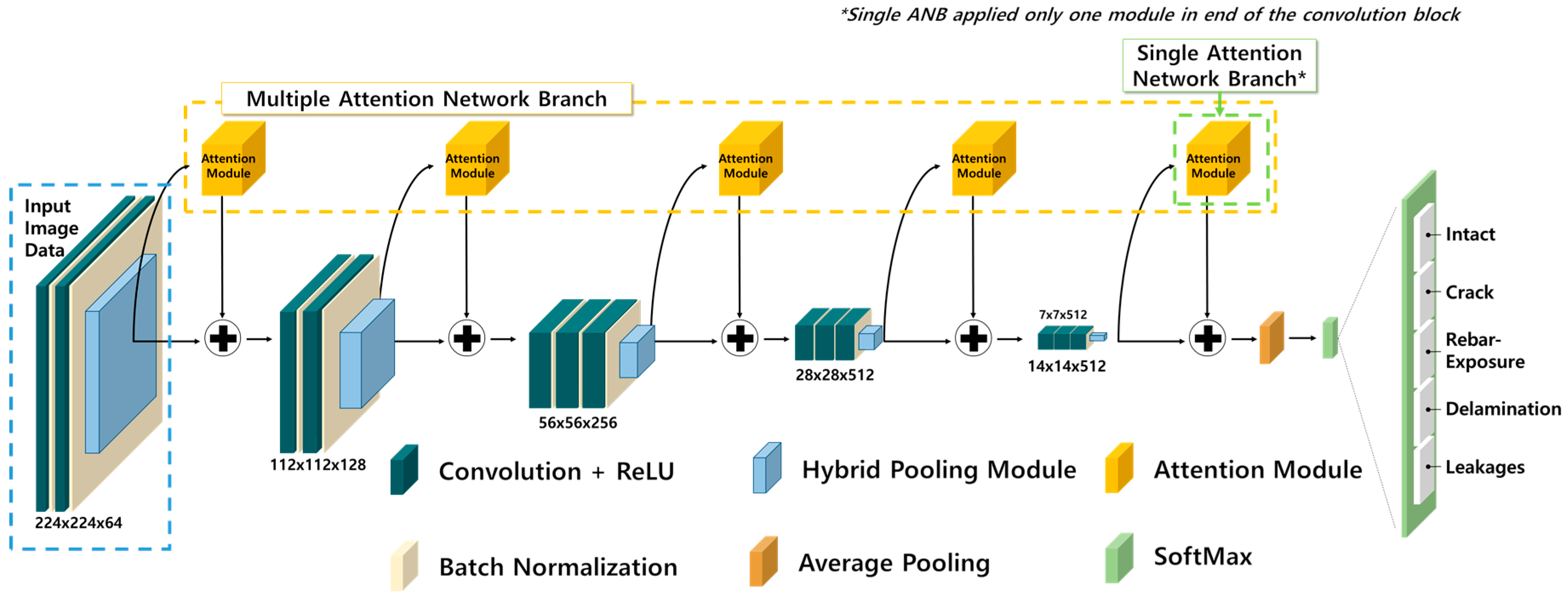

3.1. Proposed Model Architecture

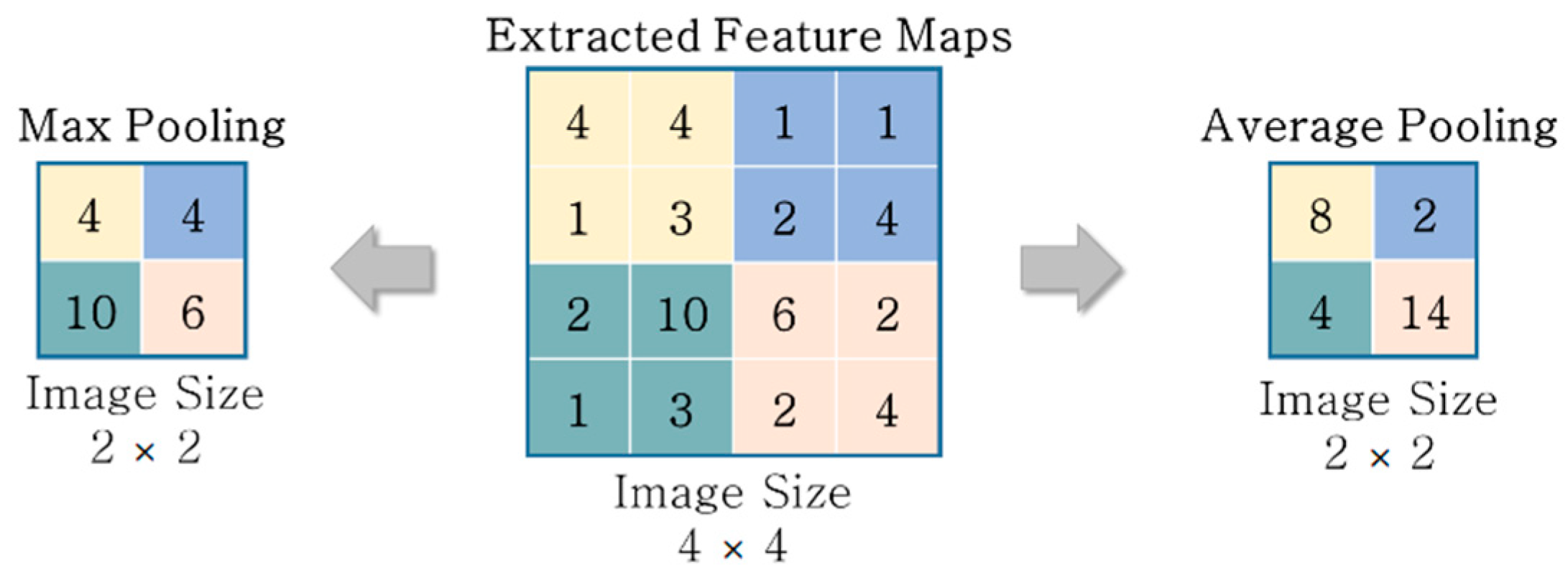

3.2. Hybrid Pooling

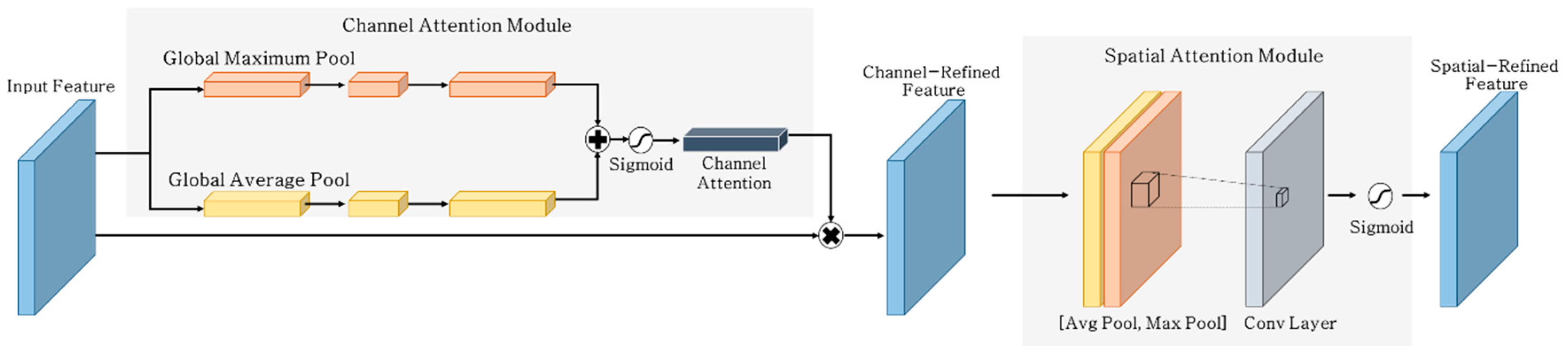

3.3. Attention Network

4. Implementation

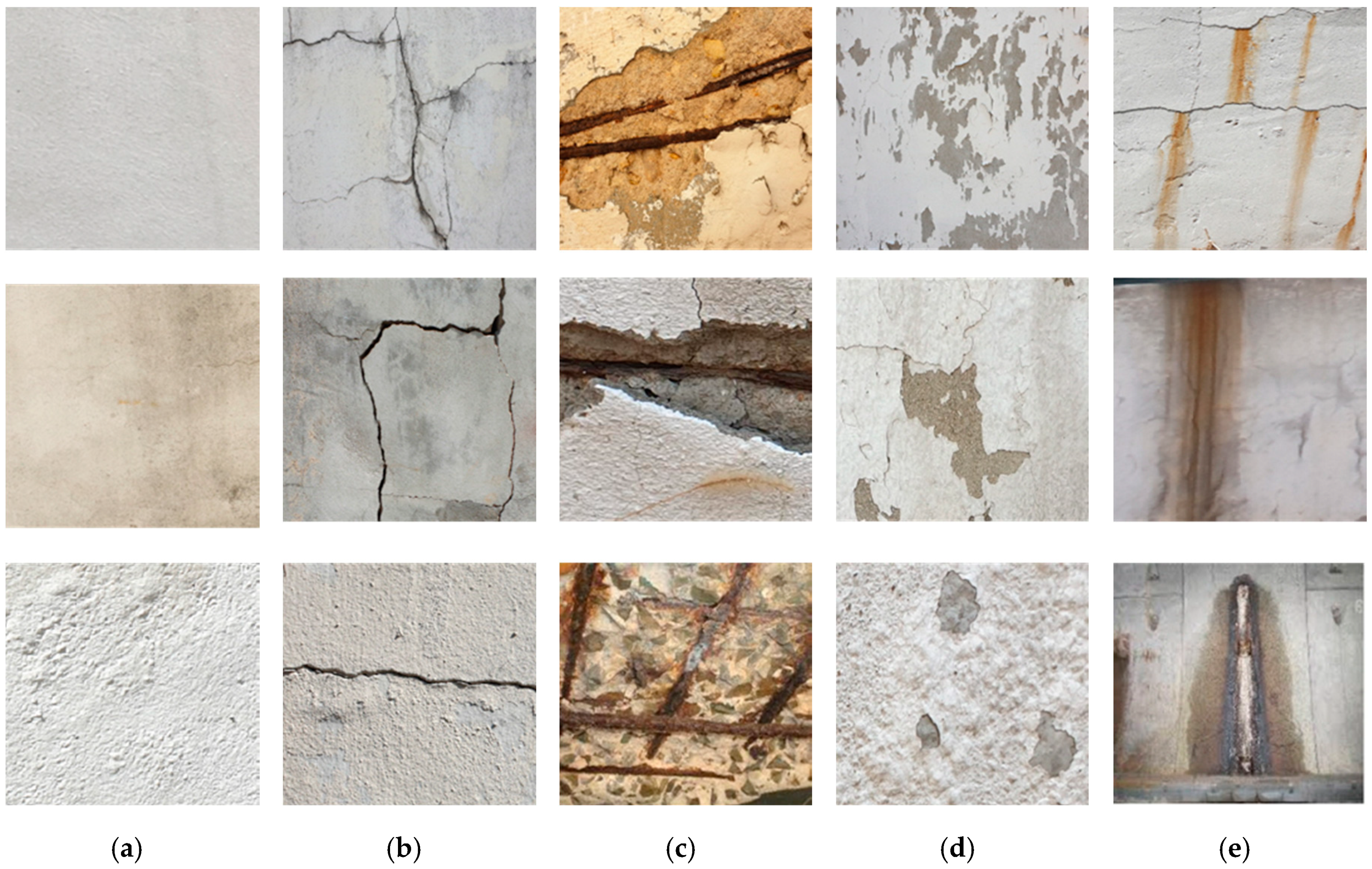

4.1. Establishing Concrete Surface Damage Dataset

4.2. Experimental Settings

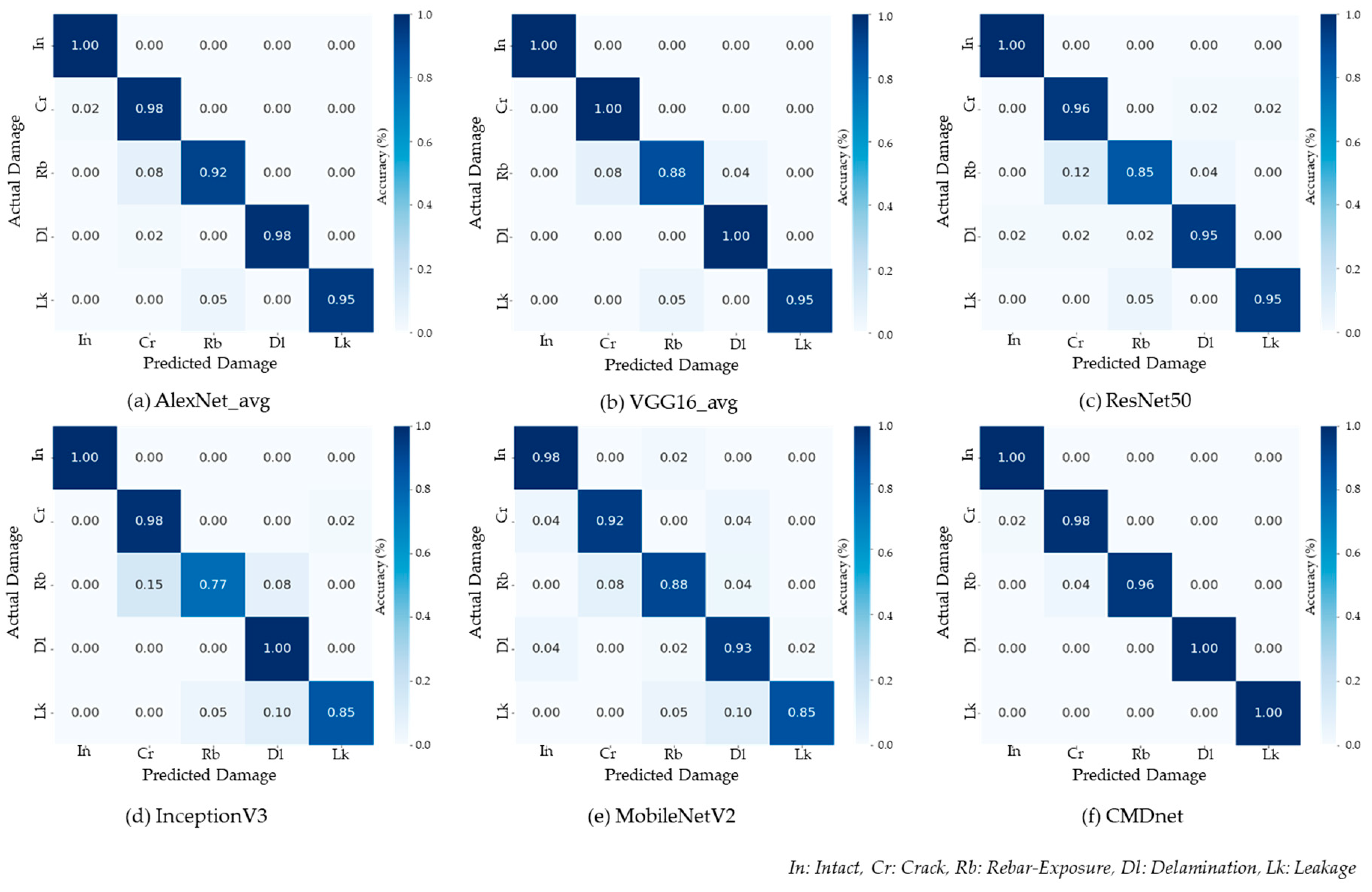

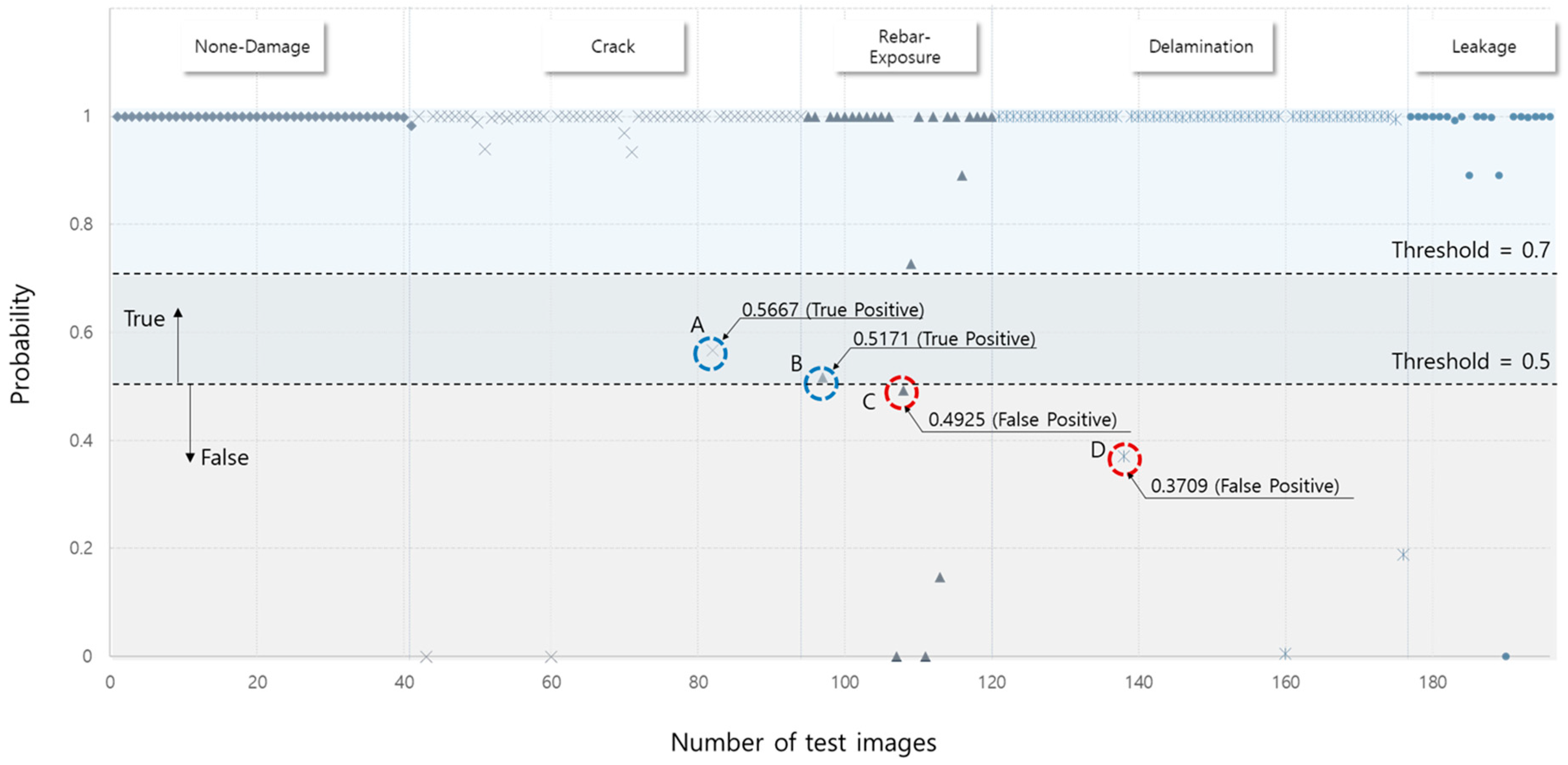

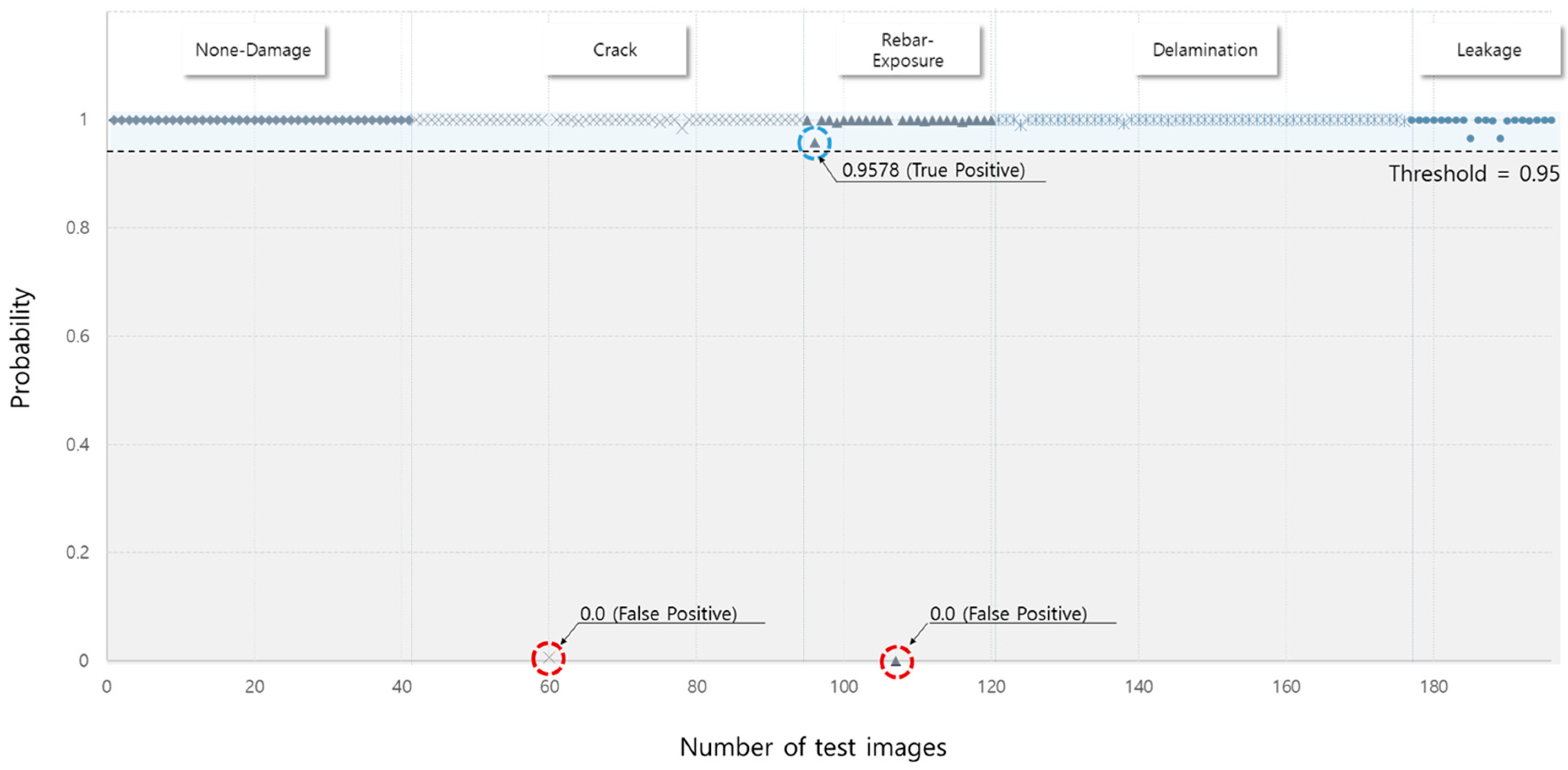

5. Results and Discussion

5.1. Performance Evaluation Metrics

5.2. Experimental Results

6. Conclusions and Scope for Future Work

Author Contributions

Funding

Conflicts of Interest

| Models | Accuracy | Precision | Recall | F1-score |
|---|---|---|---|---|
| AlexNet_avg * | 0.97449 | 0.97449 | 0.974490 | 0.97449 |
| VGG16_avg * | 0.97959 | 0.97943 | 0.974489 | 0.97692 |
| ResNet50 | 0.94879 | 0.95408 | 0.948979 | 0.95149 |
| InceptionV3 | 0.94879 | 0.94898 | 0.948979 | 0.94898 |
| MobileNetV2 | 0.92347 | 0.92824 | 0.923469 | 0.92582 |
| Proposed model (CMDnet) | 0.98980 | 0.98980 | 0.989780 | 0.98978 |
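For readers who want to reproduce metrics of the kind tabulated above, the following is a minimal sketch, assuming the standard confusion-matrix definitions of accuracy, precision, recall, and F1-score with macro averaging over classes. The averaging scheme, the function name `classification_metrics`, and the toy confusion matrix are assumptions made for illustration; they are not taken from the paper.

```python
import numpy as np


def classification_metrics(confusion):
    """Accuracy and macro-averaged precision, recall, and F1-score.

    confusion[i, j] is the number of samples whose true class is i and
    whose predicted class is j (hypothetical layout chosen for this sketch).
    """
    confusion = np.asarray(confusion, dtype=float)
    true_pos = np.diag(confusion)        # correct predictions per class
    predicted = confusion.sum(axis=0)    # samples predicted as each class
    actual = confusion.sum(axis=1)       # samples truly in each class

    # Per-class scores; a class with an empty denominator scores 0.
    precision = np.divide(true_pos, predicted,
                          out=np.zeros_like(true_pos), where=predicted > 0)
    recall = np.divide(true_pos, actual,
                       out=np.zeros_like(true_pos), where=actual > 0)
    denom = precision + recall
    f1 = np.divide(2 * precision * recall, denom,
                   out=np.zeros_like(true_pos), where=denom > 0)

    return {
        "accuracy": float(true_pos.sum() / confusion.sum()),
        "precision": float(precision.mean()),
        "recall": float(recall.mean()),
        "f1": float(f1.mean()),
    }


# Toy two-class example (made-up counts, not data from the paper).
toy_confusion = [[90, 10],
                 [5, 95]]
toy_metrics = classification_metrics(toy_confusion)
print(toy_metrics)
```

With macro averaging, the F1-score is the mean of the per-class F1 values, so it need not equal the harmonic mean of the averaged precision and recall.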

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2020 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Shin, H.K.; Ahn, Y.H.; Lee, S.H.; Kim, H.Y. Automatic Concrete Damage Recognition Using Multi-Level Attention Convolutional Neural Network. Materials 2020, 13, 5549. https://doi.org/10.3390/ma13235549