Enhancing the Breast Histopathology Image Analysis for Cancer Detection Using Variational Autoencoder

Abstract

1. Introduction

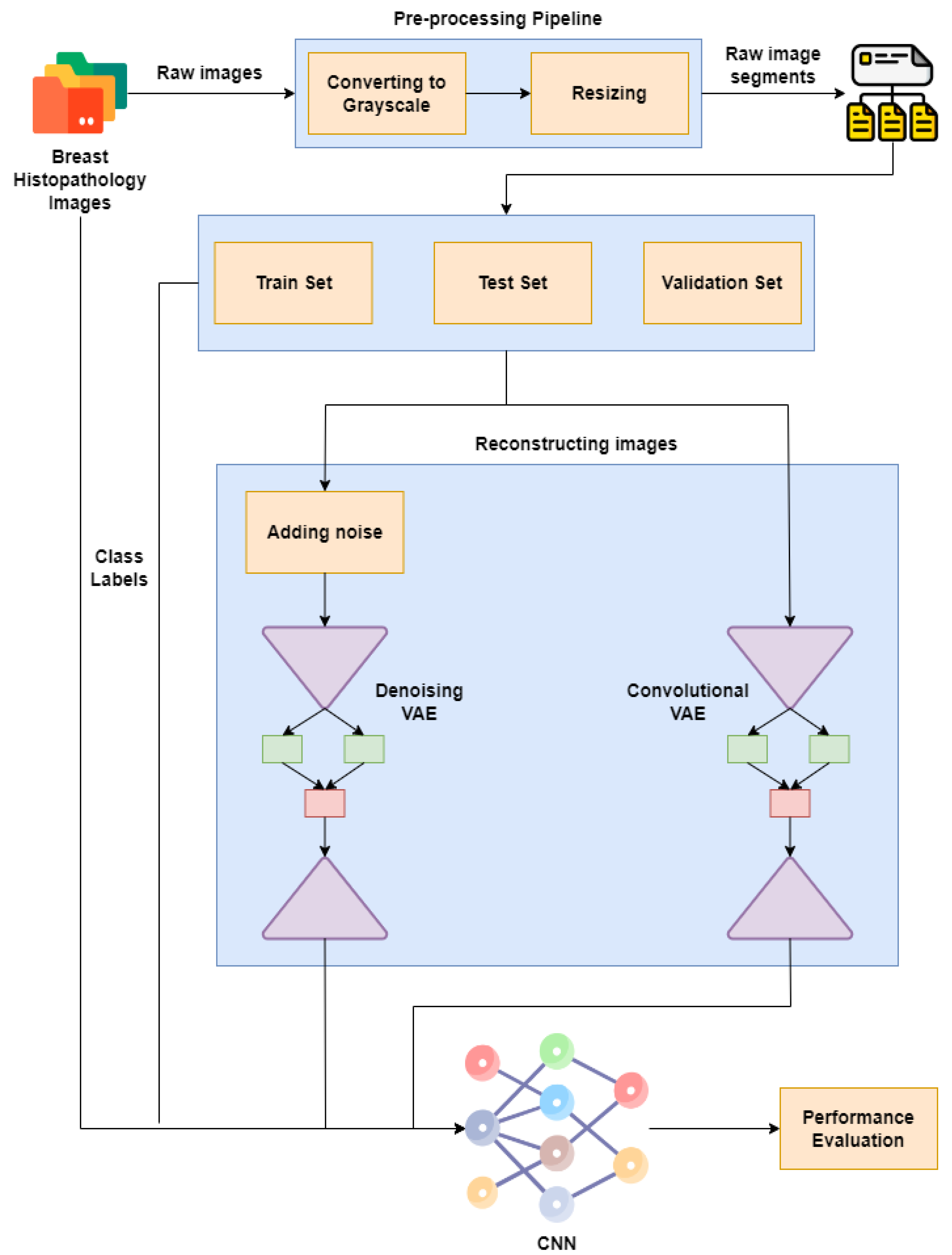

Major Contributions

- In this paper, we provide a background study and a new approach to breast cancer detection;

- In the proposed approach, a variational autoencoder (VAE) with convolutional layers reconstructs the images, and a CNN classifies the reconstructions to improve breast cancer detection;

- Histopathological images are processed and presented;

- The prediction results of the proposed approach are provided using different configurations of CNNs with autoencoder variants.

2. Related Work

Research Gaps

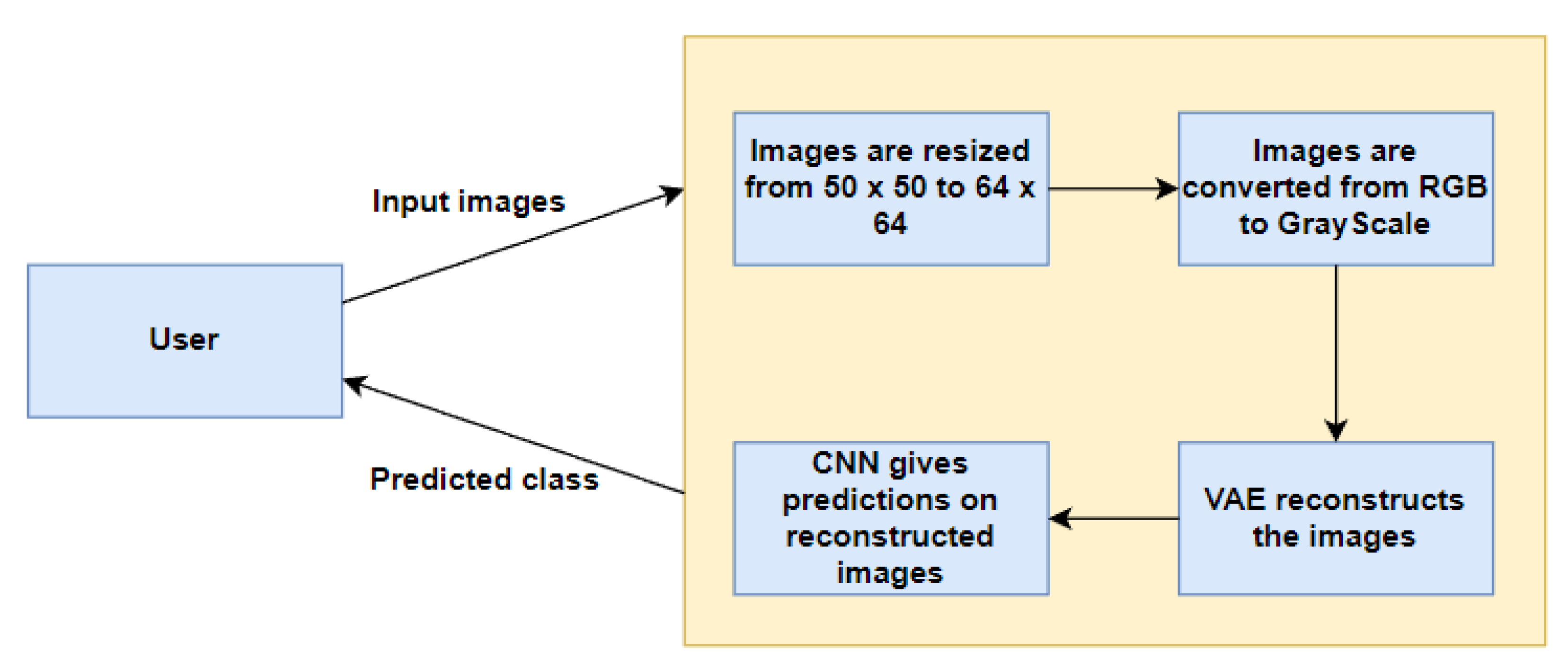

3. Proposed Approach

3.1. Convolutional Neural Network (CNN)

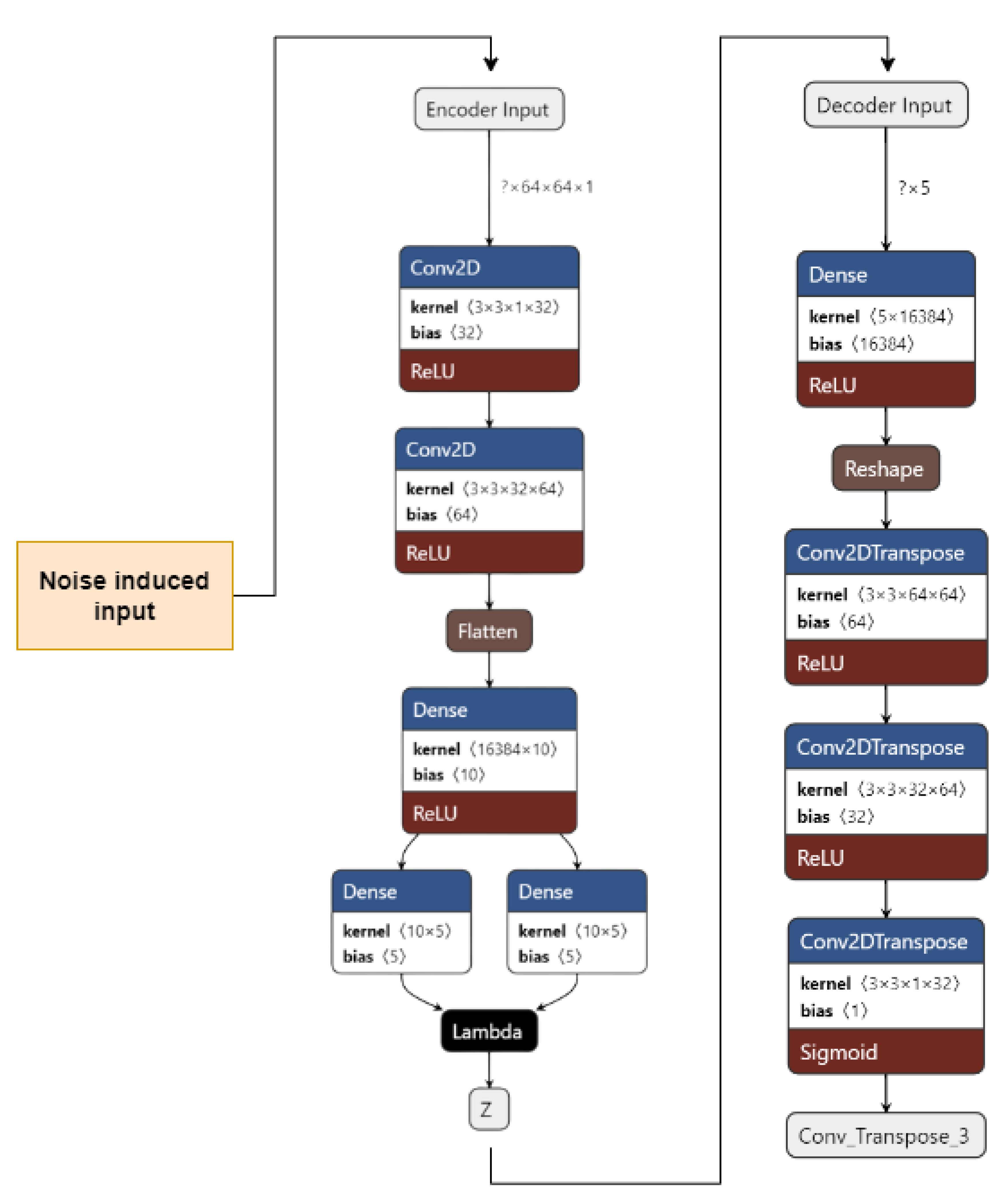

3.2. Variational Autoencoder

3.3. Denoising Variational Autoencoder

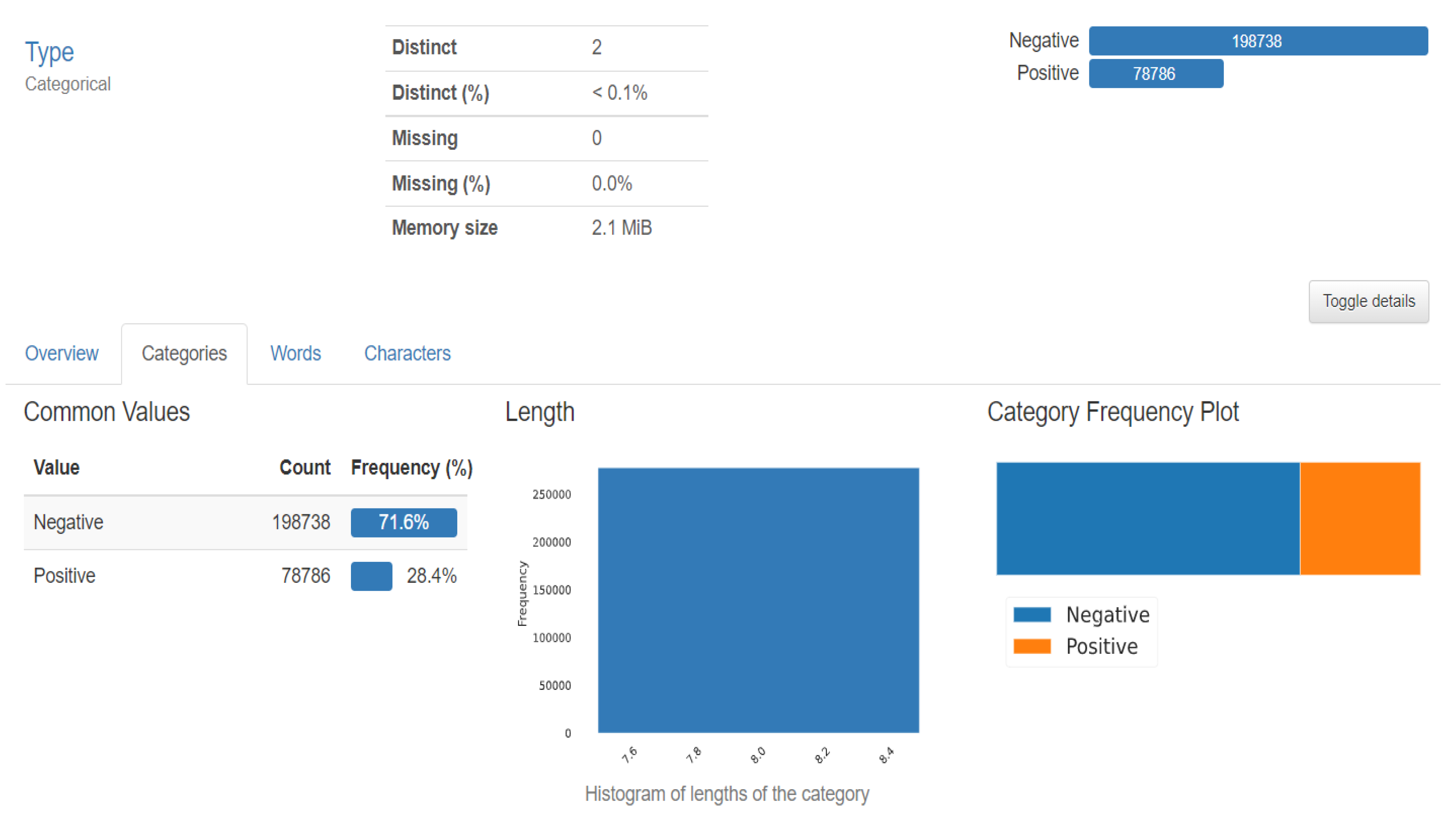

3.4. Dataset

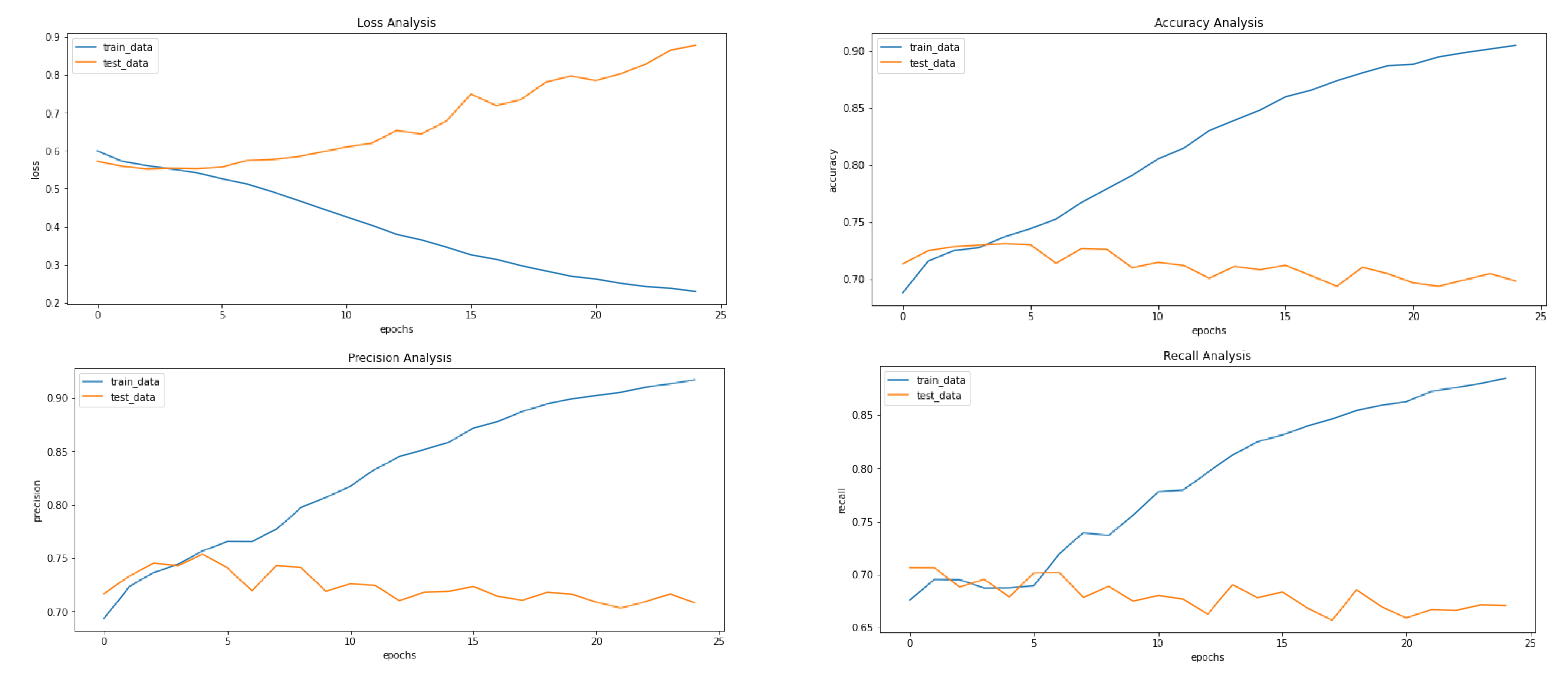

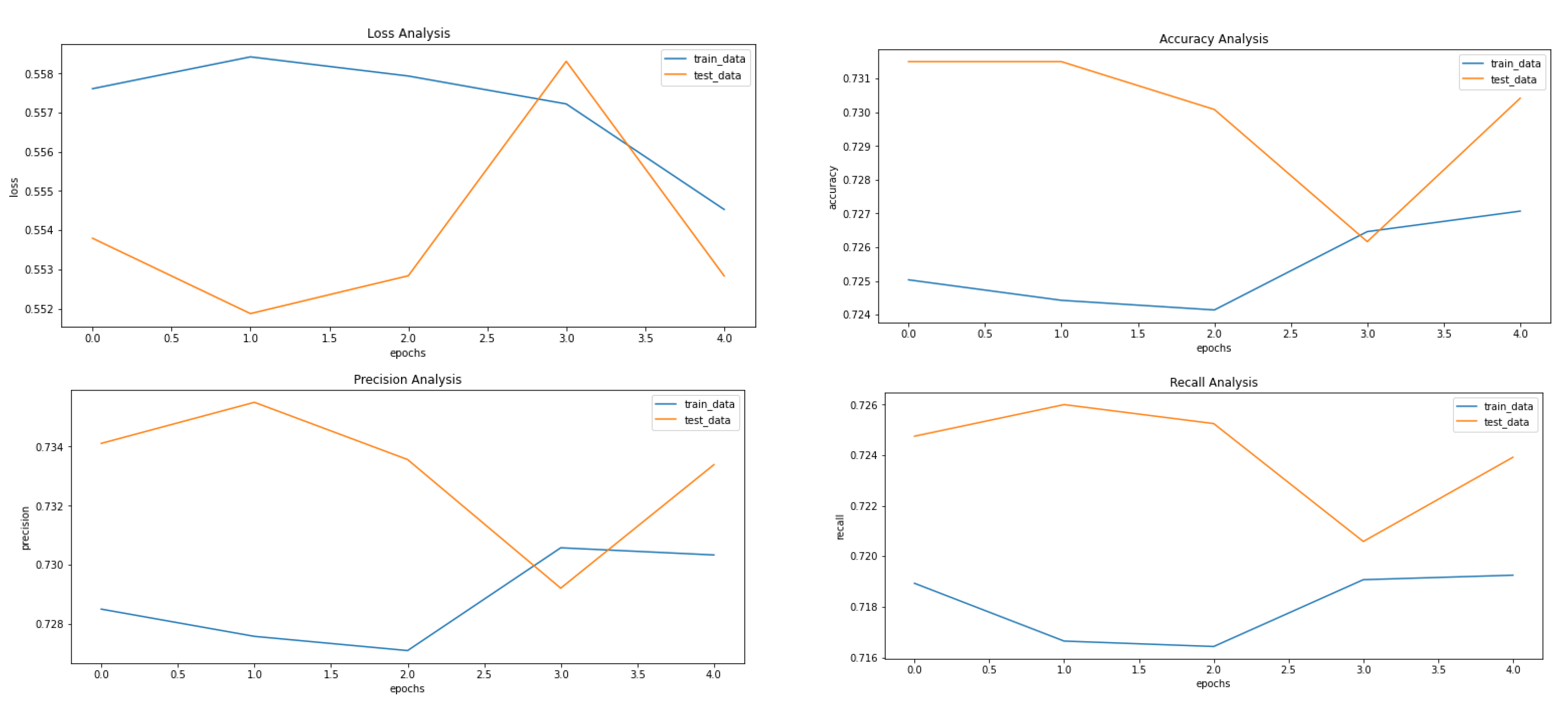

4. Experimental Setting and Result Analysis

Classification Metrics

- True Positive Rate (Equation (6))

- False Positive Rate (Equation (7))
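As a minimal sketch of the two rates listed above, assuming the standard confusion-matrix definitions TPR = TP / (TP + FN) and FPR = FP / (FP + TN). The function names and the example counts are illustrative and are not taken from the paper:

```python
def true_positive_rate(tp: int, fn: int) -> float:
    """Fraction of actual positives predicted positive (recall / sensitivity)."""
    return tp / (tp + fn) if (tp + fn) else 0.0


def false_positive_rate(fp: int, tn: int) -> float:
    """Fraction of actual negatives predicted positive (1 - specificity)."""
    return fp / (fp + tn) if (fp + tn) else 0.0


# Hypothetical confusion-matrix counts, for illustration only.
tp, fn, fp, tn = 70, 30, 20, 80
tpr = true_positive_rate(tp, fn)
fpr = false_positive_rate(fp, tn)
print(f"TPR = {tpr:.2f}, FPR = {fpr:.2f}")
```

Plotting TPR against FPR over a range of decision thresholds gives the ROC curve, whose area is the ROC AUC metric.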

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

1. Zhou, X.; Li, C.; Rahaman, M.; Yao, Y.; Ai, S.; Sun, C.; Wang, Q.; Zhang, Y.; Li, M.; Li, X.; et al. A Comprehensive Review for Breast Histopathology Image Analysis Using Classical and Deep Neural Networks. IEEE Access 2020, 8, 90931–90956.

2. Senan, E.M.; Alsaade, F.W.; Al-Mashhadani, M.I.A.; Theyazn, H.H.; Al-Adhaileh, M.H. Classification of histopathological images for early detection of breast cancer using deep learning. J. Appl. Sci. Eng. 2021, 24, 323–329.

3. Kerlikowske, K.; Chen, S.; Golmakani, M.K.; Sprague, B.L.; Tice, J.A.; Tosteson, A.N.; Miglioretti, D.L. Cumulative advanced breast cancer risk prediction model developed in a screening mammography population. JNCI J. Natl. Cancer Inst. 2022, 114, 676–685.

4. Nassif, A.B.; Abu Talib, M.; Nasir, Q.; Afadar, Y.; Elgendy, O. Breast cancer detection using artificial intelligence techniques: A systematic literature review. Artif. Intell. Med. 2022, 127, 102276.

5. Assegie, T.A.; Tulasi, R.L.; Kumar, N.K. Breast cancer prediction model with decision tree and adaptive boosting. IAES Int. J. Artif. Intell. (IJ-AI) 2021, 10, 184–190.

6. Arya, N.; Saha, S. Multi-modal advanced deep learning architectures for breast cancer survival prediction. Knowledge-Based Syst. 2021, 221, 106965.

7. Ghosh, P.; Azam, S.; Hasib, K.M.; Karim, A.; Jonkman, M.; Anwar, A. A Performance Based Study on Deep Learning Algorithms in the Effective Prediction of Breast Cancer. In Proceedings of the 2021 International Joint Conference on Neural Networks (IJCNN), Shenzhen, China, 18–22 July 2021.

8. Gurcan, M.N.; Boucheron, L.; Can, A.; Madabhushi, A.; Rajpoot, N.; Yener, B. Histopathological image analysis: A review. IEEE Rev. Biomed. Eng. 2009, 2, 147–171.

9. Available online: https://www.geeksforgeeks.org/variational-autoencoders/ (accessed on 11 February 2023).

10. Kingma, D.P.; Welling, M. Auto-encoding variational bayes. In Proceedings of the 2nd International Conference on Learning Representations (ICLR), Banff, AB, Canada, 14–16 April 2014.

11. Fuchs, T.J.; Buhmann, J.M. Computational pathology: Challenges and promises for tissue analysis. Comput. Med. Imaging Graph. 2011, 35, 515–530.

12. Din, N.M.U.; Dar, R.A.; Rasool, M.; Assad, A. Breast cancer detection using deep learning: Datasets, methods, and challenges ahead. Comput. Biol. Med. 2022, 149, 106073.

13. Li, J.; Zhou, Z.; Dong, J.; Fu, Y.; Li, Y.; Luan, Z.; Peng, X. Predicting breast cancer 5-year survival using machine learning: A systematic review. PLoS ONE 2021, 16, e0250370.

14. Naji, M.A.; El Filali, S.; Aarika, K.; Benlahmar, E.H.; Abdelouhahid, R.A.; Debauche, O. Machine learning algorithms for breast cancer prediction and diagnosis. Procedia Comput. Sci. 2021, 191, 487–492.

15. Katari, M.S.; Shasha, D.; Tyagi, S. Breast Cancer Classification. In Statistics Is Easy; Springer: Cham, Switzerland, 2021; pp. 23–41.

16. Veta, M.; Pluim, J.; Van Diest, P.; Viergever, M.A. Breast cancer histopathology image analysis: A review. IEEE Trans. Biomed. Eng. 2014, 61, 1400–1411.

17. Irshad, H.; Veillard, A.; Roux, L.; Racoceanu, D. Methods for nuclei detection, segmentation, and classification in digital histopathology: A review—Current status and future potential. IEEE Rev. Biomed. Eng. 2013, 7, 97–114.

18. Janowczyk, A.; Madabhushi, A. Deep learning for digital pathology image analysis: A comprehensive tutorial with selected use cases. J. Pathol. Informatics 2016, 7, 29.

19. Loukas, C.G.; Linney, A. A survey on histological image analysis-based assessment of three major biological factors influencing radiotherapy: Proliferation, hypoxia and vasculature. Comput. Methods Programs Biomed. 2004, 74, 183–199.

20. Zhang, Y.-N.; Xia, K.-R.; Li, C.-Y.; Wei, B.-L.; Zhang, B. Review of Breast Cancer Pathological Image Processing. BioMed Res. Int. 2021, 2021, 1994764.

21. Litjens, G.; Kooi, T.; Bejnordi, B.; Setio, A.; Ciompi, F.; Ghafoorian, M.; Van Der Laak, J.; Van Ginneken, B.; Sánchez, C. A survey on deep learning in medical image analysis. Med. Image Anal. 2017, 42, 60–88.

22. Astaraki, M.; Zakko, Y.; Dasu, I.T.; Smedby, Ö.; Wang, C. Benign-malignant pulmonary nodule classification in low-dose CT with convolutional features. Phys. Medica 2021, 83, 146–153.

23. Benhammou, Y.; Achchab, B.; Herrera, F.; Tabik, S. BreakHis based breast cancer automatic diagnosis using deep learning: Taxonomy, survey and insights. Neurocomputing 2019, 375, 9–24.

24. Bidwe, R.V.; Mishra, S.; Patil, S.; Shaw, K.; Vora, D.R.; Kotecha, K.; Zope, B. Deep learning approaches for video compression: A bibliometric analysis. Big Data Cogn. Comput. 2022, 6, 44.

25. Joseph, A.A.; Abdullahi, M.; Junaidu, S.B.; Ibrahim, H.H.; Chiroma, H. Improved multi-classification of breast cancer histopathological images using handcrafted features and deep neural network (dense layer). Intell. Syst. Appl. 2022, 14, 200066.

26. Srinidhi, C.L.; Ciga, O.; Martel, A.L. Deep neural network models for computational histopathology: A survey. Med. Image Anal. 2020, 67, 101813.

27. Thawkar, S.; Sharma, S.; Khanna, M.; Singh, L.K. Breast cancer prediction using a hybrid method based on Butterfly Optimization Algorithm and Ant Lion Optimizer. Comput. Biol. Med. 2021, 139, 104968.

28. Ahmad, N.; Asghar, S.; Gillani, S. Transfer learning-assisted multiresolution breast cancer histopathological images classification. Vis. Comput. 2021, 38, 2751–2770.

29. Zou, Y.; Zhang, J.; Huang, S.; Liu, B. Breast cancer histopathological image classification using attention high-order deep network. Int. J. Imaging Syst. Technol. 2021, 32, 266–279.

30. Ghulam, A.; Ali, F.; Sikander, R.; Ahmad, A.; Ahmed, A.; Patil, S. ACP-2DCNN: Deep learning-based model for improving prediction of anticancer peptides using two-dimensional convolutional neural network. Chemom. Intell. Lab. Syst. 2022, 226, 104589.

31. Yan, R.; Ren, F.; Wang, Z.; Wang, L.; Zhang, T.; Liu, Y.; Rao, X.; Zheng, C.; Zhang, F. Breast cancer histopathological image classification using a hybrid deep neural network. Methods 2019, 173, 52–60.

32. Komolovaitė, D.; Maskeliūnas, R.; Damaševičius, R. Deep Convolutional Neural Network-Based Visual Stimuli Classification Using Electroencephalography Signals of Healthy and Alzheimer’s Disease Subjects. Life 2022, 12, 374.

33. Singh, T.; Mohadikar, M.; Gite, S.; Patil, S.; Pradhan, B.; Alamri, A. Attention span prediction using head-pose estimation with deep neural networks. IEEE Access 2021, 9, 142632–142643.

34. Wei, B.; Han, Z.; He, X.; Yin, Y. Deep learning model based breast cancer histopathological image classification. In Proceedings of the 2017 IEEE 2nd International Conference on Cloud Computing and Big Data Analysis (ICCCBDA), Chengdu, China, 28–30 April 2017; pp. 348–353.

35. Mansour, R.F.; Escorcia-Gutierrez, J.; Gamarra, M.; Gupta, D.; Castillo, O.; Kumar, S. Unsupervised deep learning based variational autoencoder model for COVID-19 diagnosis and classification. Pattern Recognit. Lett. 2021, 151, 267–274.

36. Wang, Y.; Acs, B.; Robertson, S.; Liu, B.; Solorzano, L.; Wählby, C.; Hartman, J.; Rantalainen, M. Improved breast cancer histological grading using deep learning. Ann. Oncol. 2021, 33, 89–98.

37. Elbattah, M.; Loughnane, C.; Guérin, J.-L.; Carette, R.; Cilia, F.; Dequen, G. Variational Autoencoder for Image-Based Augmentation of Eye-Tracking Data. J. Imaging 2021, 7, 83.

38. Addo, D.; Zhou, S.; Jackson, J.K.; Nneji, G.U.; Monday, H.N.; Sarpong, K.; Patamia, R.A.; Ekong, F.; Owusu-Agyei, C.A. EVAE-Net: An Ensemble Variational Autoencoder Deep Learning Network for COVID-19 Classification Based on Chest X-ray Images. Diagnostics 2022, 12, 2569.

39. Van Dao, T.; Sato, H.; Kubo, M. An Attention Mechanism for Combination of CNN and VAE for Image-Based Malware Classification. IEEE Access 2022, 10, 85127–85136.

40. Sammut, S.-J.; Crispin-Ortuzar, M.; Chin, S.-F.; Provenzano, E.; Bardwell, H.A.; Ma, W.; Cope, W.; Dariush, A.; Dawson, S.-J.; Abraham, J.E.; et al. Multi-omic machine learning predictor of breast cancer therapy response. Nature 2021, 601, 623–629.

41. Cruz-Roa, A.; Basavanhally, A.; González, F.; Gilmore, H.; Feldman, M.; Ganesan, S.; Shih, N.; Tomaszewski, J.; Madabhushi, A. Automatic detection of invasive ductal carcinoma in whole slide images with convolutional neural networks. In Medical Imaging 2014: Digital Pathology; SPIE: Bellingham, WA, USA, 2014; Volume 9041, p. 904103.

42. Gupta, S.; Gupta, M.K. A comparative analysis of deep learning approaches for predicting breast cancer survivability. Arch. Comput. Methods Eng. 2022, 29, 2959–2975.

43. Saldanha, J.; Chakraborty, S.; Patil, S.; Kotecha, K.; Kumar, S.; Nayyar, A. Data augmentation using VAE for improvement of respiratory disease classification. PLoS ONE 2022, 17, e0266467.

44. Shon, H.-S.; Batbaatar, E.; Cha, E.-J.; Kang, T.-G.; Choi, S.-G.; Kim, K.-A. Deep Autoencoder based Classification for Clinical Prediction of Kidney Cancer. Trans. Korean Inst. Electr. Eng. 2022, 71, 1393–1404.

45. Kingma, D.P.; Ba, J. Adam: A method for stochastic optimization. In Proceedings of the 3rd International Conference on Learning Representations (ICLR), San Diego, CA, USA, 7–9 May 2015.

46. Zewdie, E.T.; Tessema, A.W.; Simegn, G.L. Classification of breast cancer types, sub-types and grade from histopathological images using deep learning technique. Health Technol. 2021, 11, 1277–1290.

47. Wu, Y.; Xu, L. Image Generation of Tomato Leaf Disease Identification Based on Adversarial-VAE. Agriculture 2021, 11, 981.

48. Srivastava, N.; Hinton, G.; Krizhevsky, A.; Sutskever, I.; Salakhutdinov, R. Dropout: A simple way to prevent neural networks from overfitting. J. Mach. Learn. Res. (JMLR) 2014, 15, 1929–1958.

49. Abadi, M.; Barham, P.; Chen, J.; Chen, Z.; Davis, A.; Dean, J.; Devin, M.; Ghemawat, S.; Irving, G.; Isard, M.; et al. TensorFlow: A system for large-scale machine learning. In Proceedings of the 12th USENIX Symposium on Operating Systems Design and Implementation (OSDI 16), Savannah, GA, USA, 2–4 November 2016; pp. 265–283.

50. Chollet, F. Keras. GitHub Repository. 2015. Available online: https://github.com/fchollet/keras (accessed on 11 January 2023).

| Property | Value |
|---|---|
| Number of samples | 277,524 |
| Type of image | RGB |
| Number of classes | 2 (IDC positive and IDC negative) |
| Image size | 50 × 50 pixels |
| Image magnification | 40× |

| Model | Hyperparameter | Value |
|---|---|---|
| Convolutional Neural Network | Input shape (layer 1) | (64, 64, 1) |
| | Number of filters in Conv2D layers (layers 2–3, 6) | 64, 128, 256 |
| | Strides in Conv2D layers (layers 2–3, 6) | (2, 2) |
| | Kernel size in Conv2D layers (layers 2–3, 6) | 3 |
| | Activation function in Conv2D layers (layers 2–3, 6) | ReLU |
| | Pool size in MaxPool2D layers (layers 4, 7) | (1, 1) |
| | Dropout [48] rate in Dropout layers (layers 5, 8) | 0.2 |
| | Units in Dense layer (layer 10) | 2 |
| | Activation function in Dense layer (layer 10) | Sigmoid |
| Convolutional and Denoising Variational Autoencoder (Encoder) | Input shape (layer 1) | (64, 64, 1) |
| | Number of filters in Conv2D layers (layers 2–3) | 32, 64 |
| | Strides in Conv2D layers (layers 2–3) | 2 |
| | Kernel size in Conv2D layers (layers 2–3) | 3 |
| | Activation function in Conv2D layers (layers 2–3) | ReLU |
| | Padding in Conv2D layers (layers 2–3) | same |
| | Units in Dense layers (layers 5, 6–7) | 10, 5 |
| | Activation function in Dense layer (layer 5) | ReLU |
| | Units in Lambda layer (layer 9) | 5 |
| Convolutional and Denoising Variational Autoencoder (Decoder) | Input shape (layer 1) | 5 |
| | Units in Dense layer (layer 2) | 16 × 16 × 64 = 16,384 |
| | Target shape in Reshape layer (layer 3) | (16, 16, 64) |
| | Number of filters in Conv2DTranspose layers (layers 4–6) | 64, 32, 1 |
| | Kernel size in Conv2DTranspose layers (layers 4–6) | 3 |
| | Strides in Conv2DTranspose layers (layers 4–6) | 2, 2, 1 |
| | Padding in Conv2DTranspose layers (layers 4–6) | same |
| | Activation function in Conv2DTranspose layers (layers 4–6) | ReLU, ReLU, Sigmoid |
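The encoder and decoder sizes in the table can be cross-checked with a few lines of arithmetic. The sketch below assumes the usual behaviour of "same" padding (a strided convolution yields ceil(size / stride), a strided transposed convolution yields size × stride). The helper names are illustrative and not part of the paper's code:

```python
import math


def conv_same(size: int, stride: int) -> int:
    """Spatial size after a strided convolution with 'same' padding."""
    return math.ceil(size / stride)


def conv_transpose_same(size: int, stride: int) -> int:
    """Spatial size after a strided transposed convolution with 'same' padding."""
    return size * stride


# Encoder: 64 x 64 input, two Conv2D layers with stride 2; the last has 64 filters.
size = 64
for stride in (2, 2):
    size = conv_same(size, stride)
encoder_shape = (size, size, 64)

# The decoder's Dense layer restores a feature map of this same size.
dense_units = encoder_shape[0] * encoder_shape[1] * encoder_shape[2]

# Decoder: three Conv2DTranspose layers with strides 2, 2, 1; the last has 1 filter.
for stride in (2, 2, 1):
    size = conv_transpose_same(size, stride)
decoder_output = (size, size, 1)

print(encoder_shape, dense_units, decoder_output)
```

Under these assumptions the encoder ends at a 16 × 16 × 64 feature map, which matches the 16,384 Dense units and the Reshape target, and the decoder output returns to the (64, 64, 1) input shape.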

| Classifier | Metric | Before VAE | After VAE | After DVAE |
|---|---|---|---|---|
| CNN | Loss | 0.9449 | 0.5545 | 0.6932 |
| CNN | Accuracy | 0.6876 | 0.7365 | 0.5002 |
| CNN | Precision | 0.6805 | 0.7369 | 0.2502 |
| CNN | Recall (Sensitivity) | 0.6984 | 0.7365 | 0.5002 |
| CNN | F1 Score | 0.6868 | 0.7363 | 0.3335 |
| CNN | Specificity | 0.69 | 0.74 | 0.50 |
| CNN | Cohen’s Kappa | 0.3749 | 0.4730 | 0.000 |
| CNN | ROC AUC | 0.7205 | 0.7969 | 0.5000 |
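The After DVAE column (accuracy ≈ 0.50, precision ≈ 0.25, F1 ≈ 0.33, kappa = 0, loss ≈ ln 2 ≈ 0.693) is numerically what a classifier that assigns every sample to a single class would score on a roughly balanced test set, if precision, recall, and F1 are support-weighted averages. The source states neither the averaging method nor the class balance of the test set, so this is only one possible reading. The sketch below uses a hypothetical balanced label set and an illustrative helper to show the arithmetic:

```python
def weighted_metrics(y_true, y_pred):
    """Accuracy, support-weighted precision/recall/F1, and Cohen's kappa."""
    n = len(y_true)
    labels = sorted(set(y_true) | set(y_pred))
    accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / n
    precision = recall = f1 = expected = 0.0
    for c in labels:
        tp = sum(t == c and p == c for t, p in zip(y_true, y_pred))
        n_true = sum(t == c for t in y_true)
        n_pred = sum(p == c for p in y_pred)
        p_c = tp / n_pred if n_pred else 0.0  # undefined precision counted as 0
        r_c = tp / n_true if n_true else 0.0
        f_c = 2 * p_c * r_c / (p_c + r_c) if (p_c + r_c) else 0.0
        weight = n_true / n  # class support as the averaging weight
        precision += weight * p_c
        recall += weight * r_c
        f1 += weight * f_c
        expected += (n_true / n) * (n_pred / n)  # chance agreement for kappa
    kappa = (accuracy - expected) / (1 - expected) if expected < 1 else 0.0
    return accuracy, precision, recall, f1, kappa


# Hypothetical balanced test set; the classifier always predicts class 0.
y_true = [0] * 500 + [1] * 500
y_pred = [0] * 1000
accuracy, precision, recall, f1, kappa = weighted_metrics(y_true, y_pred)
print(accuracy, precision, recall, f1, kappa)
```

If this reading holds, the DVAE reconstructions left the CNN with no usable class signal, which would explain why the DVAE scores fall below the scores obtained without any autoencoder.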

| Model | Result |
|---|---|
| CNN (without VAE) | Accuracy: 68% |
| EfficientNetB3 | Accuracy: 69% |
| EfficientNetB0 | Accuracy: 69% |
| CNN (with VAE) | Accuracy: 73% |


© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Guleria, H.V.; Luqmani, A.M.; Kothari, H.D.; Phukan, P.; Patil, S.; Pareek, P.; Kotecha, K.; Abraham, A.; Gabralla, L.A. Enhancing the Breast Histopathology Image Analysis for Cancer Detection Using Variational Autoencoder. Int. J. Environ. Res. Public Health 2023, 20, 4244. https://doi.org/10.3390/ijerph20054244
