2. Materials and Methods

Information to develop an evaluation conceptual model to frame and identify common metrics, best practices, and challenges was obtained using a multiphase mixed-methods approach: an in-depth discussion at a workshop for RCMI evaluators, synthesis of logic model metrics to identify common metrics, iterative discussions about a proposed conceptual framework and a common metrics survey questionnaire administered to RCMIs. All RCMIs’ evaluators and/or other key individuals were invited to participate in data collection activities: the workshop, iterative discussions, and the common metrics survey.

2.1. Phase 1: RCMI Evaluators’ Workshop (December 2019)

The evaluation experts from each of the RCMI were invited to participate in a discussion workshop of RCMI evaluation methods, indicators, and metrics at the RCMI 2019 National Conference, Collaborative Solutions to Improve Minority Health and Reduce Health Disparities, held December 14 and 15, in Bethesda, Maryland. The RCMI conference hosts emailed invitations directly to RCMI evaluators. Initially, the RCMI conference hosts included this “by invitation only” session for RCMI evaluators and/or designees as part of the conference registration process. Later the workshop was open to any conference attendees.

The purpose of the workshop was to elucidate RCMIs evaluation challenges and successes and to establish a comprehensive evaluation framework that includes how community engagement may contribute to reduction in health disparities. The workshop was facilitated by two representatives of the RCMI Translational Research Network (A.S. and T.H.) and two RCMI evaluators (K.L. and L.R.). At the workshop, the participants discussed a comprehensive evaluation framework that establishes standards and priorities for a synergistic consortium while considering the unique needs of the individual RCMIs. The evaluators were asked to prepare materials (logic model, short/medium term goals for each core, barriers, challenges, and best practices) in advance of the workshop as a tool to engage in meaningful discussion. Each evaluator in attendance shared this information in individual presentations and made the information available to the entire group via a shared “cloud based” folder.

2.2. Phase 2: Developing the RCMI Conceptual Evaluation Model (January 2020)

After the workshop, a report summarizing key points was disseminated to participants to guide subsequent discussions for the evaluators working group (EWG) and develop the RCMI evaluation framework. The RCMI evaluators were invited to participate in two meetings to identify next steps for developing the framework. Collaborating via bimonthly videoconferences, the EWG synthesized data from workshop presentation slides and other materials prepared by each participating RCMI that included program logic models and existing evaluation frameworks. The EWG also conducted a literature review of existing evaluation frameworks and conceptual models to inform the development of the proposed evaluation model [

24,

25]. Further, the EWG defined components of a conceptual model. Using the iterative nature of this multiphase mixed-methods process, information gleaned in subsequent phases was also used to inform the final conceptual model.

2.3. Phase 3: Identifying Common and Key Evaluation Metrics (January–February 2020)

To develop a metrics table that was common across sites, the EWG requested a copy of logic models from participating evaluators (n = 10); eight sites shared a logic model. The metrics were methodically selected from logic models and classified as primary targets, secondary targets, outcomes, and impact metrics. Metrics were also examined based on their recurrence in the logic models. Less common metrics were included in the model with an asterisk. Next, the EWG reviewed the metrics and excluded those that were deemed irrelevant based on general consensus.

2.4. Phase 4: A Case Study: (March–June 2020)

The EWG developed a survey questionnaire based on the key metrics identified in previous phases to outline definitions, approaches, and data collection practices used among current RCMI evaluators. Evaluators of currently funded RCMIs (n = 18) were invited via email to complete the online survey with reminders. Six key metrics associated with primary evaluation targets were selected. The survey contained eight questions about how each of the metrics are conceptualized and measured. The final instrument consisted of 62 questions, including a section (12 questions) related to the RCMI site information. For the purpose of this paper the EWG focused on finding answers to the following key questions:

How was the metric operationalized?

What are the primary approaches and methods for data collection?

Does the RCMI use primary or secondary data?

What is the periodicity for data collection?

In the analysis of open-ended responses to the survey items, a thematic coding process was used to identify common themes and outliers. Two EWG members examined responses to each question and identified themes that emerged from their independent coding process. After independent coding, the evaluators met and iteratively refined the themes to verify inter-rater reliability. Once the process was complete, frequencies and percent of respondents who mentioned each of the themes were calculated.

3. Results

3.1. Phase 1: RCMI Evaluators’ Workshop

Nineteen RCMI evaluation representatives (i.e., evaluators, principal investigators—PIs, and other key program staff) representing 10 RCMIs attended the facilitated workshop. The discussion explored in-depth, the programs’ various metrics and measures, the challenges of evaluating the multi-faceted programs, and the resources utilized and needed to collect and manage the data to address key performance measures.

Evaluation best practices and challenges shared during the RCMI Evaluator’s Workshop included a focus on the importance of (1) continuous RCMI team/institutional engagement, (2) establishing a multidisciplinary evaluation team, and (3) extracting multiple data sources to obtain baseline data for ongoing annual benchmarking and systematic data collection. The challenges in conducting RCMI evaluations involved barriers in being able to conduct the evaluations and limited evaluation guidance comprised of (1) RCMI cost-sharing, (2) revenue generation through charge-back plans to collect fees from researchers funded by other grants who use RCMI-funded facilities/equipment, (3) data collection, access to the data and infrastructure, (4) decision making (about evaluation), (5) disaster/crisis management (e.g., financial, natural disasters), (6) having full time employees and staffing (FTEs), and (7) how to evaluate the broader community impact of the RCMI program. The RCMIs shared that they were diligent in their efforts to incorporate technologies to streamline data collection and improve monitoring. To improve evaluation tracking, the RCMI’s use online platforms (Altmerics, Scopus, NIH RePORTER, PubMed, etc.) that track scholarly activities and use diverse data management tools including Research Electronic Data Capture (REDCap, Vanderbilt University, Nashville, TN, USA), Profiles Networking (Harvard University, Cambridge, MA, USA) and eagle-I (Harvard University, Cambridge, MA, USA).

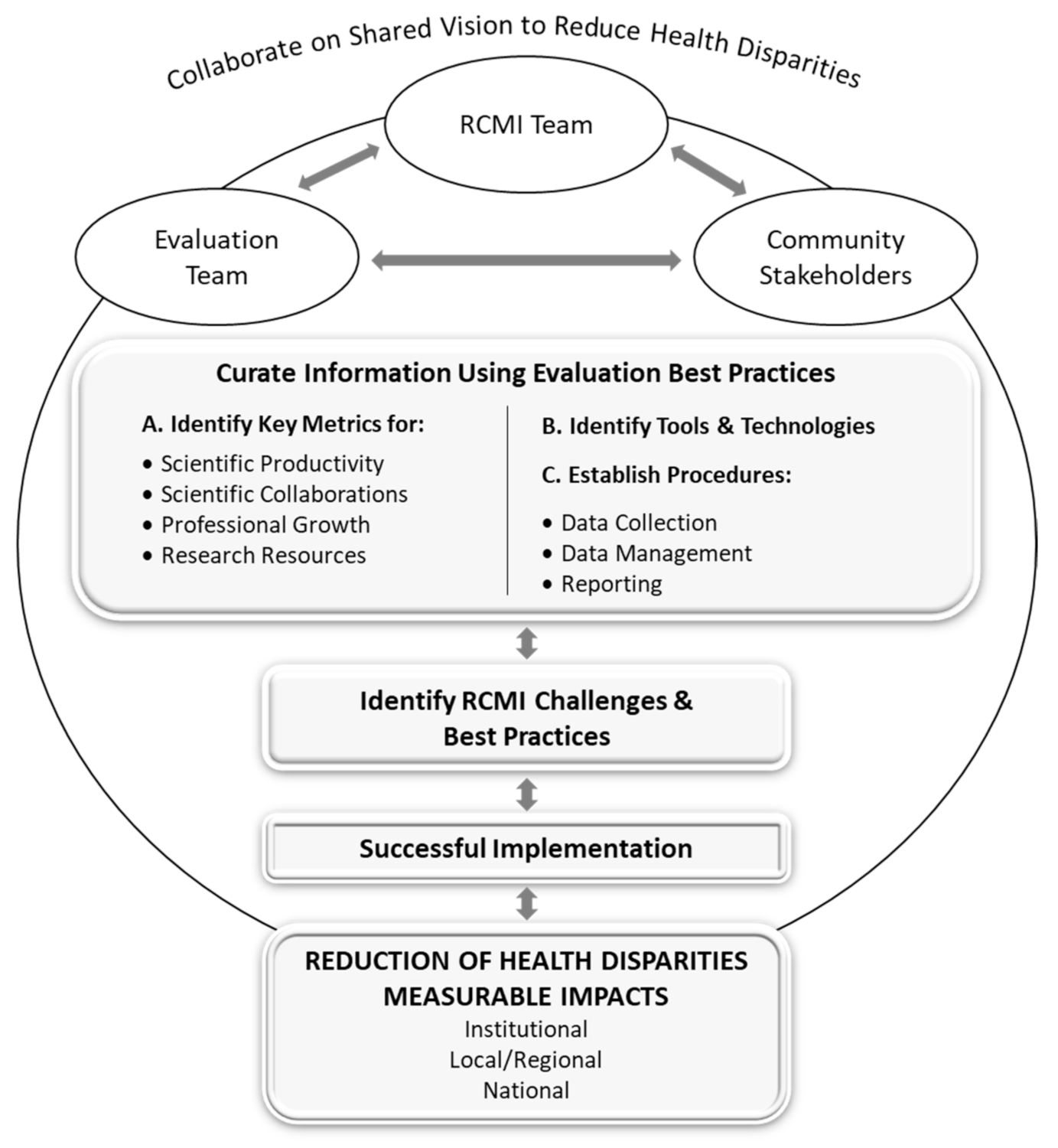

3.2. Phase 2: RCMI Evaluation Conceptual Model

The iterative process of the in-depth discussions among the EWG and review of the literature resulted in the RCMI Evaluation Conceptual Model and the identification of key evaluation metrics and extrapolated measures (

Figure 1). The model was then further refined using information collected in Phase 4 of the study. The proposed model emphasizes a practical on-going evaluation approach to document the RCMIs’ institutional and national impact consistent with NIMHD’s mission to address health disparities. It summarizes essential elements of the program and system-level evaluation and seeks to present commonalities and variances that affect program effectiveness. The model also considers the complex and multi-dimensional nature of research on minority health and the reduction of health disparities, including research that crosses domains and levels of influence [

9]. A detailed description on how the RCMI Evaluation Conceptual Model will guide evaluation efforts of RCMIs (framed with the literature) is provided.

The model reflects how the RCMI programs share a common vision that aligns with that of the NIMHD. The RCMI programs individually and collectively aim to reduce health disparities among minority and other underserved populations through collaborative, interdisciplinary, transdisciplinary, and community engaged research. Community impacts contribute to national efforts to reduce health disparities through collaborative translational research.

The RCMI evaluation teams are the curators of the site metrics and direct the evaluation of the program performance, coordinate and harmonize data collection, and enable the integration of relevant components for favorable program amendment [

26]. The evaluation team works with all stakeholders to ensure documentation and records are maintained and available for ongoing program monitoring. The RCMI program administration works in tandem with the evaluation team and the community stakeholders, who consist of professionally, geographically, and socio-demographically diverse individuals representing academia, industry, community partners, and government interested in discovery and implementation [

13]. The stakeholders are partners in the evaluation process contributing information and knowledge to obtain greater capacity to achieve the shared vision and program goal. Meaningful collaboration at various programmatic levels is fundamental to the RCMI program and is essential for transdisciplinary research [

13].

Key metrics are performance measures critical in determining the effectiveness and efficiency of the program toward achieving its mission [

11]. Universal success metrics of research infrastructure programs include the number of publications acknowledging the program funder and grant number, researchers hired to the program, and grants submitted and awarded to faculty funded by the RCMI. Other metrics are novel and aim to evaluate the conceptual outcomes such as, “increased trust in the research process” and “increased willingness to access local health services.” The value of these factors varied and, therefore, the inclusion of a factor in a specific metric category also varied. Most importantly, the RCMI key metrics were broadly categorized into: (1) scientific productivity, (2) scientific collaborations, (3) professional growth, and (4) research resources. Although establishing common metrics for the reduction of health disparities was beyond the scope of this study, future collaborations should work to establish these.

Tools and technologies contribute to the collaborative process of the RCMI programs. The management of evaluation data requires the use of widely accepted applications and those designed for specific site needs. The evaluation process relies on data curation tools and analytical approaches to document the integration of the various program components and core facilities (cores). Widely accepted tools such as the NIH RePORTER (

https://reporter.nih.gov/) or Altmetric (Altmetric, London, UK), and customizable applications such as Profiles Networking (Harvard University, Cambridge, MA, USA), along with quantitative (e.g., REDCap) and qualitative data collection analysis tools facilitate a thorough and efficient evaluation process [

8]. The curation of the types of data varies by institution, but the requirement to show productivity and value-added of the RCMI program is universal.

3.3. Phase 3: RCMI Evaluation Metrics

In phase 3, the EWG reviewed information provided by RCMIs to identify common evaluation metrics from logic models shared by evaluation group representing eight RCMI evaluation groups.

Table 1 summarizes common metrics used by RCMI evaluation teams that are used to evaluate the progress of RCMI efforts that aim to support and expand health disparities research. The table is organized in four parts encompassing broad primary and secondary targets along with examples of commonly used outcome metrics. On the broadest level (and reflected in the model described in the previous sections), four overarching essential areas (primary targets) of focus for evaluation commonalities across all RCMIs were identified—increasing scientific productivity, increasing scientific collaborations, fostering professional growth, and expanding research resources—and are described in detail.

First, increasing scientific productivity with regards to research focusing on health disparities and minority health was identified as a primary focus of the RCMIs as part of the NIMHD RCMI funding mechanism. This area is intertwined with the RCMI program’s goals of fostering health disparity research by growing infrastructures that enable collaborative investigative work. Secondary targets in this area provide metrics for the growth of scientific studies, defined by pilot project specific productivity, funded grants, publications, scientific dissemination, and community dissemination. Examples of outcome metrics include—(1) number of grant submissions, (2) number of grant awards, (3) number of peer-reviewed publications, and (4) number of presentations or symposia given (conference/symposia or community-based).

Second, increasing scientific collaborations is a broad primary target for measuring the growth of the RCMI research infrastructures. Secondary targets commonly utilized as indicators of research growth within an RCMI are evaluating both the number and types of research partners and community partners. Scientific collaborations are often measured as process metrics, such as networking events or outcome metrics, such as the number of grant collaborators.

An increasingly important component of the RCMI program is community engagement, as new and renewed RCMIs funded since 2016 are required to include a Community Engagement Core. Measures related to this concept include (1) number of memoranda of understanding (MOUs) signed, (2) expansion of community advisory boards, and (3) academic-community grants submitted.

The third primary target area for evaluating RCMI programs, fostering professional growth, focuses on the training, professional development, and support of early career investigators (ECIs), particularly those who are from historically underrepresented groups in academia (i.e., race, ethnicity, and gender). Comprehensive evaluation that entails both results on process and outcomes of various professional development efforts is important in the context of how RCMIs foster career development of RCMI-funded early investigators.

The fourth primary target area for RCMI evaluation, expanding research resources includes metrics that evaluate the growth in physical infrastructure, intellectual resources, and hiring faculty needed to conduct and expand health disparities and minority health research. Metrics in this category include the number of training seminars/workshops (e.g., statistics or research methodology) and/or biostatistics consultations for RCMI-affiliated faculty or early-career investigators. Other metrics in this area frequently include the tabulation of increases in physical research space, acquisition of technology or software, and developing or expanding data repository capacity. Finally, the number of researchers hired as a result of RCMI recruitment efforts is often documented as a direct measure of expansion of the RCMIs’ resources.

3.4. Phase 4: Case Study

Fourteen of the 18 RCMIs responded to the survey; only one set of responses was collected from each RCMI.

Table 2 summarizes the number of responses to the case study survey. Response rates ranged from 55.6%, (

n = 10) for scientific productivity (publications) and fostering professional growth (mentoring) metrics to 11.1% (

n = 2) for the health disparity (external grants submitted) metric (

Table 2). Select items that are non-identifying from the RCMI site profile are included in the findings, including from NIH RePORTER. The mean duration of funding of these RCMIs is 20 years (SD = 14.4) with nine that are over 27 years old.

The EWG compiled the case study results to discuss the breadth of approaches used to measure and operationalize the same metrics across centers. The case study survey results are summarized in

Table 3,

Table 4,

Table 5,

Table 6 and

Table 7. The findings related to the metric selected for each primary evaluation target included in the survey are discussed including how metrics are operationalized and measured across RCMIs. The number of responses for each sub-question may be greater than the number of respondents, because sub-questions were coded with multiple themes.

3.4.1. Scientific Productivity

The metric, number of peer-reviewed publications, was selected from the measures for scientific productivity (primary target). As presented in

Table 3, all nine RCMIs which responded to this sub-question operationalized the metric as publications in a peer-reviewed journal by investigators, studies, or affiliated faculty supported by RCMI. Progress reports/surveys and online databases, such as Scopus, Google Scholar, Web of Science, among others were reported by eight of ten RCMIs. All nine responding institutions indicated that they collected data from both primary and secondary sources. Five of nine responses each indicated data were collected at variable time points (annually, bi-annually, monthly, or bi-monthly).

3.4.2. Scientific Collaboration

A measure of scientific collaboration examined was the number of research partners (

Table 4). Eight of the nine respondents operationalized the metric as an individual or group participating in grant/research related activities (e.g., mentors, PI, co-investigator/s), organization, consortium, etc.). Four of eight responses indicated survey and tracking system/administrative records as the most common data collection method. All eight responding institutions reported that they collected data from both primary and secondary sources. Three of eight responses indicated that data collection was ongoing, biannual, and annual.

3.4.3. Professional Growth

Mentoring quality was examined as a metric for fostering the

professional growth of underrepresented investigators, a secondary target (

Table 5). Eight of nine respondents operationalized this metric as the perceived quality and satisfaction of professional growth activities for underrepresented investigators. All eight respondents indicated using survey methods to collect mentoring quality data. Six of nine respondents reported both primary and secondary data sources of collection. Data collection is conducted annually.

3.4.4. Research Resources

Intellectual resources was a secondary target measured as the number of biostatistics consults, workshops, seminars, and training (

Table 6). All seven respondents operationalized the metric as training activities (workshops, seminars) offered through RCMI communities. All eight respondents indicated data collection occurs through questionnaires or surveys with seven of eight respondents reporting primary data collection. Seven of eight responding RCMIs reported ongoing data collection efforts.

3.4.5. Community Engagement

A metric of community engagement examined was the number of formal agreements, MOUs or partnerships with community partners (

Table 7). Five of six respondents operationalized the measure as (1) community members are involved in the RCMI activities and decision making, and (2) community partnerships are included in proposals and research studies supported by RCMI. Six of seven institutions responded that surveys and interviews were used to collect data. Four of seven respondents indicated that data were collected from both primary and secondary sources. All six responding institutions indicated that data collection for their community engagement measure was ongoing.

3.4.6. Health Disparities

The number of external health disparity focused grants submitted by RCMI-funded pilot project investigators was the metric selected to measure health disparities. Given the very low response rate (11.1%, n = 2), results are not reported.

4. Discussion

The aims of this multiphase mixed-methods RCMI evaluation study were threefold: (1) develop an RCMI conceptual evaluation framework; (2) identify and examine shared evaluation metrics; and (3) identify and discuss challenges and best practices for evaluating the RCMI programs. The multiphase study resulted in establishing a uniform evaluation framework and specific metrics that can be used to demonstrate short and long-term success of the individual RCMIs and the collective RCMI consortium.

The RCMI Evaluation Conceptual Model (

Figure 1) illustrates the importance of collaborations with the key stakeholders (evaluation team, RCMI team, and other stakeholders) within each RCMI for a shared vision on evaluation approaches, activities, and procedures of each RCMI toward reducing health disparities. Similar to other research infrastructure programs that have addressed the need for common metrics (e.g., the CTSA) [

7], the subsequent key metrics identified in this study focus on stimulating and expanding research capacity for the RCMI. Moreover, integrating the evaluation tools and technologies, as well as best practices and procedures to collect, manage, and report the findings were identified as an essential part of the evaluation framework.

Previous examinations of research center evaluation metrics noted that in addition to those commonly used for academic research centers, expanded indicators are needed to sufficiently address the complexity of research initiatives such as the RCMI [

27]. To that end, in this project, the RCMI Evaluation Conceptual Model includes another essential aspect of a thriving evaluation process—i.e., identifying and addressing ongoing program challenges. In order to maximize the usefulness of the evaluation, the feedback process should be iterative, flexible, and dynamic. Incorporating these distinctions from its internal and external stakeholders ensures the RCMIs are adaptable and capable of reasonably responding to everchanging issues of the health environment while providing relevant research products [

28]. The evaluation team should continuously document programmatic challenges (“what can the RCMI improve?”) and best practices (“what is the RCMI doing that is highly successful or innovative?”); and engage with the RCMI leadership and community stakeholders to share those findings so they can make data-driven decisions that inform program improvement. The RCMI leadership must be adaptable and open to implementing changes. For instance, the current global pandemic has exposed us to a “new normal” and resulted in a transition to and adaptation of new technologies that warrants new metrics. Successful uptake of evaluation feedback at the RCMI program level results in key outcomes for RCMIs: increased underrepresented investigator-generated research and institutional research capacity, strengthened research infrastructure, and established sustainable, community-engaged partnerships focused on RCMIs’ long-term aims to reduce health disparities.

Comprehensive metrics were identified for each of the primary and secondary evaluation targets. For each of the four primary target areas—scientific productivity, scientific collaborations, professional growth, and research resources—a key metric was selected for further examination to obtain greater detail on how these outcomes are measured and collected across RCMIs. Although all respondents reported utilizing both primary and secondary sources to measure scientific productivity, significant variability in secondary data sources was noted across RCMI programs. Since most of the secondary sources for publication data are open-source, the sponsor NIMHD could consider establishing standard metrics and practices of data abstraction about peer-reviewed publications among the tools available: Scopus, Google Scholar, Web of Science, PubMed, or PubCrawler.

Increases in scientific collaborations have been shown to benefit research productivity [

16] and may eliminate barriers toward advancement for early career investigators [

17]. There were diverse operational definitions to evaluate scientific collaborations (e.g., number of research partners). This is likely due to the variability of program composition nationwide, in terms of populations served, age of the RCMI program (number of years funded), and the configuration of the program cores. Several newer RCMIs reported offering community support (funding) for linking community and RCMI partners to facilitate partnership building, planting the seeds for future research partnerships. The variety of these measures demonstrates the sharing between RCMIs of evaluation ideas to benefit the overall RCMI evaluation effort. In addition to the common measure (number of collaborations), scientific collaborations could also be measured by the breadth of affiliations and locations of collaborators to include those within and outside of the institution, including scientific collaborations that are regional as well as national.

Fostering professional growth through quality mentoring of early career and underrepresented investigators was operationalized as the perceived quality and satisfaction of professional growth activities, mentorship benefit or impact, trainee needs, and promotion and tenure. Although the field has made some progress in this area [

29,

30], an ongoing RCMI evaluation challenge for this measure is that common tools and instruments to measure each of these constructs are not being used, limiting the capacity to make cross-site comparisons. Metrics for the research resources target area were the number of biostatistics consults/supports, workshops, seminars, and trainings. Institutions that responded reported collecting data for these metrics through surveys or questionnaires, and tracking systems and administrative records were widely used.

Community engagement (the number of formal agreements, MOUs, or partnerships with community partners) was operationalized as community members involved in the RCMI activities and decision making, formal community partnerships in proposals, and partners associated with research studies supported by RCMI-related/affiliated activities. Data sources for this metric varied widely, including surveys, interviews, tracking systems, administrative records, needs assessments, progress reports, and focus groups. In order to support a robust national evaluation of the RCMI program by the NIMHD, Centers could benefit from sharing specific tools and instruments for evaluating community engagement and reviewing them to find common questions. A review of community engagement metrics from similarly complex initiatives [

31] and other common RCMI evaluation outcomes may also inform metrics for community engagement. For example the RCMI target “increase scientific collaboration” is also measured by “community partnerships.” Thus, those metrics that inform more than one RCMI target should be especially retained as they are robust evaluation measures.

Metrics and methods for data collection were not established for health disparities (e.g., the number of external health disparity-focused grants submitted by RCMI pilot project investigators) due to the low response on this outcome. In the RCMI context, reducing health disparities is a key dimension of the evaluation targets, not a stand-alone metric. Several examples to evaluate this outcome include grant proposals submitted with health disparity topics through the Investigator Development Core or an increase in underrepresented investigators at the RCMI institution who are conducting health disparities research. Additional metrics identified to evaluate health disparities as a target area would inform the RCMI impact and value added of this unique but necessary feature of RCMIs. At a minimum, a common understanding (shared vision) is needed among RCMI leadership, evaluators, and community stakeholders as to the interpretation of (what is meant by) this metric. Moreover, an agreement on operational definitions of key collective impacts for RCMI aligned with NIMHD’s mission such as “health disparity” and “health disparities research” as well as related concepts, such as “health inequities” and “social determinants of health”, would provide a shared vision across programs.

4.1. Recommendations and Future Directions

A well-defined evaluation approach is critical to RCMI program success. An inclusive pragmatic conceptual framework is an evaluation best practice that enables continuous program improvement strategies and supports the centers in meeting goals at their respective institutional level. The RCMI Evaluation Conceptual Model provides a structure to facilitate the close monitoring and careful documentation of RCMI program activities and initiatives to better understand the value-added of this program for individual programs and across RCMI programs [

14]. Moreover, establishing standard metrics strengthens the ability of the NIMHD to conduct national evaluation of the RCMI program. Both longstanding and newer centers could benefit from sharing of tools and instruments and finding common questions that site evaluators could adopt. Once instruments/tools are reviewed, a set of questions tied to each of the identified targets could be shared and suggested for use across sites. This would support a more robust national evaluation of the RCMI initiative by the NIMHD.

One of the primary NIMHD RCMI program goals is to foster early stage investigators’ careers, particularly those who focus on health disparity and minority health research. The key metrics identified from this effort should be applied to document productivity and outcomes associated with investigators and early-career faculty who are funded by the RCMI. The RCMI’s Investigator Development Core includes a pilot project program to provide research funding to investigators and foster early research careers. Process metrics are critical to document career advancement of early career investigators and can be used in continuous process improvement. These may include the number and types of workshops attended or feedback via mock reviews. Outcome metrics should include grant submissions/awards and publications among early career investigators funded by their RCMI, including the RCMI Pilot Project programs.

Long-term metrics for documenting the success of the pilot project program should focus on documenting the impact of the RCMI in fostering investigator career progression to becoming an independent researcher, demonstrated by converting pilot or early career funding into efforts to secure research funding on NIH Research Program Project Grants (R01 mechanism) or equivalent grants. Evaluation of how the RCMIs foster investigators’ careers should rely on secondary data sources, whenever possible, to reduce respondent burden. However, the inclusion of primary data sources for selected key metrics ensures investigators’ involvement in the evaluation process and provides a means of demonstrating their individual success. This way, RCMI researchers can serve as “champions” of ongoing evaluation data collection.

Community engagement is a distinctive value-added component of the RCMI programs. As such, evaluation of community engagement is fundamental to supporting the broader goals of RCMI efforts. Assessment of engagement with communities may be measured in various ways, due to the complexity of capturing these activities accurately—i.e., community-specific activities, with little guidance on metrics. However, evaluations should go beyond the current commonly used process metrics (e.g., documentation of memoranda of understanding, use of community engagement principles, or citing the number of community partnerships in funding opportunities). Attempts should be made to document the direction of engagement (community-initiated or investigator-initiated) as well as the level of involvement of communities in all stages of the research process [

10]. In cases of RCMI community-engaged research, community partners should be engaged in the decision making process to identify relevant evaluation metrics to ensure that the measures and subsequent results are meaningful to the community [

32]. Moreover, evaluating the extent to which RCMIs engage community partners as research collaborators to ensure that communities receive the benefits of the research is fundamental to the mission of the RCMI program and important for continued support by NIMHD.

A culture of evaluation, continuous dissemination of findings to the academic and lay communities, and opportunities for leveraging program and consortium-driven data for program decision making must be created to foster consistent and responsive evaluation tracking. Existing research infrastructure programs, such as NIH’s IDeA Networks of Biomedical Research Excellence [

33] and the CTSA [

7,

29,

31], PACHE [

19], and National Research Mentoring Network [

34] initiatives, may provide guidance on best practices that should be explored.

The EWG’s multiphase mixed-methods approach operationalized and validated the disparate evaluation activities across RCMIs, resulted in an RCMI Evaluation Conceptual Model, and identified metrics and best practices while depicting the diversity of RCMI communities. Meaningful, successful evaluation and tracking are continual processes that facilitate the documentation of impact and should be championed across the institutions [

35]. RCMIs should continue to include qualitative methods recognizing that qualitative data is critical to produce results for process and outcome evaluations. Results obtained from interviews, focus groups, observations, and case studies, for example, would help describe and inform impacts of the RCMI program. [

36] A key evaluation next step for RCMIs should be to engage a broader collaboration across the programs and include the engagement of NIMHD program leadership and community stakeholders. This effort would establish and designate key, cross-cutting (and unique) RCMI metrics that could demonstrate the successful impact of both long-standing and recent RCMIs.

4.2. Limitations

An overall limitation is that not all RCMI evaluators or other key personnel participated in this process, as participation in this effort was voluntary and optional (not a grant or evaluation requirement). Additionally, time constraints and competing demands may not have allowed for survey respondents (evaluators) to consult with their RCMI stakeholders, or respond at all. Having a longer timeline to allow for RCMI evaluators to complete the survey, especially amidst the COVID-19 pandemic, would have been ideal. Furthermore, we did not obtain responses to some survey questions that originally were not phrased as a question. Including pilot testing of our survey items may have caught our inconsistent format of asking for information. Finally, this was not an anonymous survey, and since a Google document was used for collecting information, respondents could see each other’s entries. These limitations might have impacted the accuracy and reliability of responses. The EWG acknowledges that the evaluation metrics presented here may need to be better defined and further refined; however, this was beyond the scope of this manuscript. A working group specifically to critically examine each metric is recommended.

5. Conclusions

The process and approach for evaluating the progress of the individual RCMIs and a program-wide evaluation are described in this manuscript, providing original guidance that should be prioritized for RCMI evaluations. Our effort to identify an RCMI evaluation conceptual model, metrics and measures, and best practices and challenges resulted in the development of an integrative pragmatic framework for collecting common critical data points and operationalizing measures and data collection approaches.

Challenges and best practices of evaluating programs are varied and many. The RCMIs are positioned in communities that appreciate a mutually-beneficial research process in an effort to effectively change behaviors and policies that address health disparities in traditionally disenfranchised communities [

8,

37]. A systematic approach to evaluation must be championed by all program stakeholders and requires the ongoing recording and documenting of the inputs, activities, and outputs to assess key process and outcome metrics. Failure to include the diverse stakeholders and agreed-upon sources of data or information can limit the evaluation team’s ability to accurately identify performance gaps and derive appropriate solutions.

Findings address existing gaps about evaluation approaches for defining metrics and collecting critical data that demonstrate the aggregated impact of the RCMI consortium. This unique and vigorous collaboration of RCMI evaluators and the RCMI Translational Research Network coordinators established key metrics and measures for RCMIs. Collaborative and coordinated data collection, management, analysis, and reporting guided by the RCMI Evaluation Conceptual Model will support each Center in fostering health disparities research, and allow the centers to leverage their collective strength as a consortium to address health disparities. The availability of a guiding evaluation framework and identification of metrics may facilitate more rigorous evaluation approaches and improve the ability of RCMIs to demonstrate the collective impact of their health disparities research programs. Furthermore, obtaining relevant, robust evaluation results will also aid the funding agency, NIMHD, in demonstrating the added value of the RCMI program in achieving the agency’s mission. Ultimately, the impacts of the RCMIs (individually and collectively) will benefit marginalized communities and increase the likelihood of health equity.