Sparse Depth-Guided Image Enhancement Using Incremental GP with Informative Point Selection

Abstract

1. Introduction

- Online depth learning using an incremental Gaussian process (iGP);

- Information-theoretic selection of data points;

- Quantitative measures of dehazing quality based on information metrics;

- Independence from the number of channels (applicable to both color and grayscale images).

2. Atmospheric Scattering Model
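A minimal sketch of the standard single-scattering haze model [23], assuming a homogeneous medium: the observed intensity is I = J·t + A·(1 − t), with transmission t = exp(−β·d), where J is the clear scene radiance, A the airlight, β the scattering coefficient and d the scene depth. The function names and the parameters `beta` and `airlight` are illustrative assumptions, not identifiers from the paper.

```python
import numpy as np


def transmission(depth, beta):
    """Transmission t = exp(-beta * d) of a homogeneous scattering medium."""
    return np.exp(-beta * np.asarray(depth, dtype=float))


def add_haze(clear, depth, beta, airlight):
    """Synthesize a hazy image I = J * t + A * (1 - t).

    `clear` is H x W (grayscale) or H x W x C (color) in [0, 1];
    `depth` is H x W. One transmission map is shared by all channels.
    """
    t = transmission(depth, beta)
    if clear.ndim == 3:
        t = t[..., np.newaxis]  # broadcast over the channel axis
    return clear * t + airlight * (1.0 - t)


# Example: a uniform scene seen at increasing depth fades toward the airlight.
J = np.full((2, 2, 3), 0.2)
d = np.array([[0.0, 1.0], [5.0, 50.0]])
I = add_haze(J, d, beta=0.5, airlight=0.9)
```

Because depth enters only through a single transmission map that is broadcast over channels, the same function handles grayscale and color arrays.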

3. Incremental Gaussian Process

3.1. Gaussian Process Regression
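For reference, a minimal sketch of standard zero-mean GP regression, assuming the inputs are 2-D pixel coordinates and the targets are sparse depth measurements. The squared-exponential kernel and the hyperparameters `length`, `signal_var` and `noise_var` are illustrative assumptions, not necessarily the kernel described in Section 3.2.

```python
import numpy as np


def se_kernel(a, b, length=1.0, signal_var=1.0):
    """Squared-exponential kernel s^2 * exp(-||a - b||^2 / (2 l^2)) between rows."""
    sq = np.sum(a**2, axis=1)[:, None] + np.sum(b**2, axis=1)[None, :] - 2.0 * a @ b.T
    return signal_var * np.exp(-0.5 * np.maximum(sq, 0.0) / length**2)


def gp_predict(x_train, y_train, x_test, noise_var=1e-2, **kern):
    """Posterior mean and variance of a zero-mean GP at the rows of x_test."""
    K = se_kernel(x_train, x_train, **kern) + noise_var * np.eye(len(x_train))
    L = np.linalg.cholesky(K)                                  # K = L L^T
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train))  # K^{-1} y
    K_s = se_kernel(x_train, x_test, **kern)                   # n_train x n_test
    mean = K_s.T @ alpha
    v = np.linalg.solve(L, K_s)
    var = np.diag(se_kernel(x_test, x_test, **kern)) - np.sum(v**2, axis=0)
    return mean, var


# Example: three sparse depth readings at pixel coordinates (u, v).
x = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]])
y = np.array([1.0, 2.0, 3.0])
mean, var = gp_predict(x, y, np.array([[0.5, 0.5], [10.0, 10.0]]))
```

Near the measurements the predicted variance shrinks; far from them it returns to `signal_var`, which is what makes the variance usable as an uncertainty map over the image.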

3.2. iGP Kernel Function

3.3. iGP with QR Decomposition

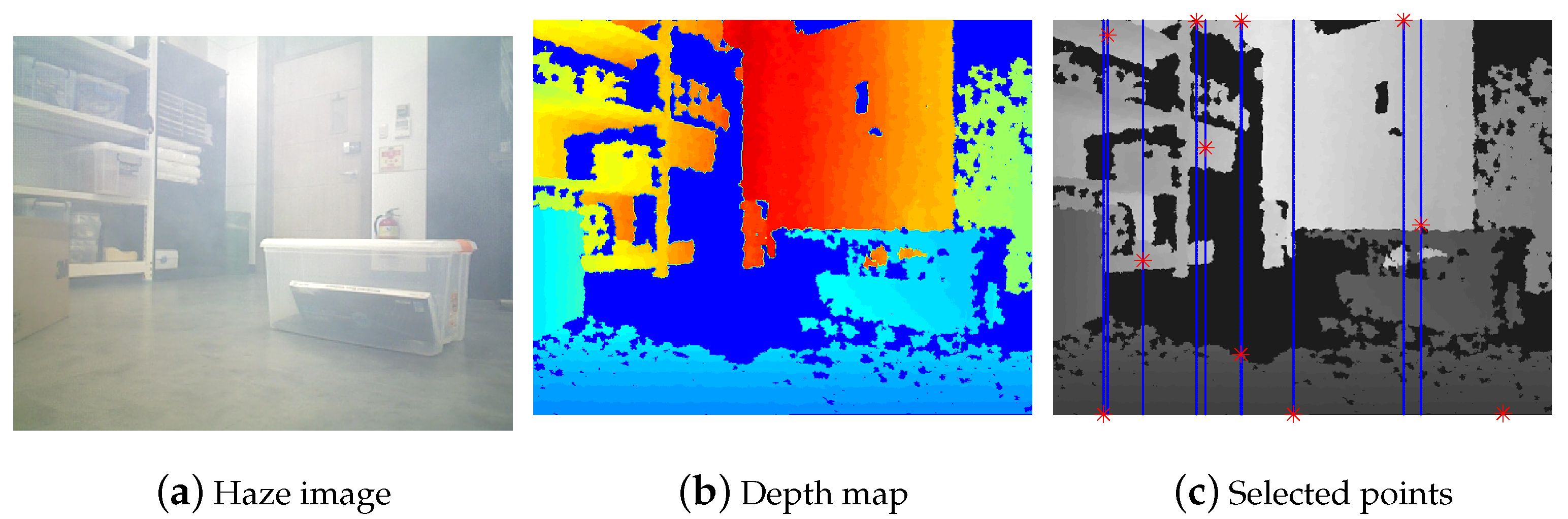
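The point of a triangular (QR-style) factorization in an incremental GP is that the factor can be extended as new measurements arrive instead of being recomputed. As a hedged sketch of that idea, not necessarily the paper's exact update, the class below grows an upper-triangular R with RᵀR = K + σ²I by one row and column per new point; this R is the triangular factor that a QR decomposition of any matrix B with BᵀB = K + σ²I would yield. The class name, `kernel` callable and `noise_var` are hypothetical.

```python
import numpy as np


def forward_sub(L, b):
    """Solve L x = b for lower-triangular L in O(n^2)."""
    x = np.zeros(len(b), dtype=float)
    for i in range(len(b)):
        x[i] = (b[i] - L[i, :i] @ x[:i]) / L[i, i]
    return x


class IncrementalFactor:
    """Upper-triangular R with R^T R = K + noise_var * I, grown one point at a time."""

    def __init__(self, kernel, noise_var=1e-2):
        self.kernel = kernel          # callable k(a, b) on 1-D input vectors
        self.noise_var = noise_var
        self.points = []
        self.R = np.zeros((0, 0))

    def add(self, x):
        x = np.asarray(x, dtype=float)
        # Cross-covariances between the new point and all stored points.
        k = np.array([self.kernel(p, x) for p in self.points], dtype=float)
        c = self.kernel(x, x) + self.noise_var
        # New column r solves R^T r = k; new diagonal keeps R^T R exact.
        r = forward_sub(self.R.T, k)
        rho = np.sqrt(c - r @ r)
        n = len(self.points)
        R_new = np.zeros((n + 1, n + 1))
        R_new[:n, :n] = self.R
        R_new[:n, n] = r
        R_new[n, n] = rho
        self.R = R_new
        self.points.append(x)
```

Each `add` costs O(n²) for the triangular solve, compared with O(n³) for refactorizing the full kernel matrix.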

4. Information Enhanced Online Dehazing

4.1. Active Measurement Selection

Algorithm 1: Active point(s) selection
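One common information-theoretic selection rule, assumed here for illustration (cf. the mutual-information criterion in [26]), greedily picks the candidate whose GP prediction is most uncertain. Since the entropy of a Gaussian, 0.5·log(2πeσ²), increases with σ², the maximum-variance candidate is also the maximum-entropy one. The kernel, `noise_var`, `length` and the function names are hypothetical.

```python
import numpy as np


def rbf(a, b, length=1.0):
    """Unit-variance squared-exponential kernel between the rows of a and b."""
    sq = np.sum(a**2, axis=1)[:, None] + np.sum(b**2, axis=1)[None, :] - 2.0 * a @ b.T
    return np.exp(-0.5 * np.maximum(sq, 0.0) / length**2)


def predictive_var(x_sel, x_cand, noise_var=1e-2, length=1.0):
    """GP predictive variance at each candidate given the selected inputs."""
    if len(x_sel) == 0:
        return np.ones(len(x_cand))  # prior variance before any measurement
    K = rbf(x_sel, x_sel, length) + noise_var * np.eye(len(x_sel))
    K_s = rbf(x_sel, x_cand, length)
    return 1.0 - np.sum(K_s * np.linalg.solve(K, K_s), axis=0)


def select_points(candidates, n_select, noise_var=1e-2, length=1.0):
    """Greedily pick the candidate whose GP prediction is most uncertain.

    For a Gaussian, entropy 0.5 * log(2 * pi * e * var) grows with var,
    so the max-variance pick is also the max-entropy pick.
    """
    chosen = []
    for _ in range(n_select):
        var = predictive_var(candidates[chosen], candidates, noise_var, length)
        var[chosen] = -np.inf  # never select the same point twice
        chosen.append(int(np.argmax(var)))
    return chosen


# Example: candidate pixel coordinates where sparse depth could be sampled.
cand = np.array([[0.0, 0.0], [0.1, 0.0], [5.0, 5.0], [10.0, 0.0]])
picked = select_points(cand, n_select=3)
```

GP predictive variance depends only on where measurements were taken, not on the measured depths, so a rule of this kind can rank candidates before their depth values are used.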

4.2. Stop Criterion

Algorithm 2: Online Dehazing with iGP
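A hedged sketch of one possible stop rule for the online loop: stop once the most uncertain remaining candidate carries less predictive entropy than a threshold, or once a point budget is spent. `entropy_threshold` and `max_points` are hypothetical parameters, not values from the paper.

```python
import numpy as np


def gaussian_entropy(var):
    """Differential entropy (nats) of a Gaussian with variance `var`."""
    return 0.5 * np.log(2.0 * np.pi * np.e * np.asarray(var, dtype=float))


def should_stop(pred_var, n_selected, entropy_threshold, max_points):
    """Return True when the point budget is spent or no candidate is informative."""
    if n_selected >= max_points:
        return True
    var = np.clip(pred_var, 1e-12, None)  # guard against round-off below zero
    return bool(gaussian_entropy(var).max() < entropy_threshold)
```

Expressing the threshold as an entropy rather than a raw variance keeps it on the same information-theoretic scale as the selection step.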

4.3. Dense Depth Map-Based Haze Removal

Algorithm 3: Image Dehaze
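Once a dense depth map is available, the scattering model I = J·t + A·(1 − t) can be inverted for the scene radiance, J = (I − A)/t + A. A minimal sketch, assuming β and A are already known, and clamping t from below by `t_min` (a common safeguard against noise amplification in distant regions, as in [7]; the default 0.1 is an assumption here).

```python
import numpy as np


def dehaze(hazy, depth, beta, airlight, t_min=0.1):
    """Recover J = (I - A) / max(t, t_min) + A with t = exp(-beta * depth).

    `hazy` is H x W or H x W x C in [0, 1]; `depth` is H x W.
    """
    t = np.maximum(np.exp(-beta * np.asarray(depth, dtype=float)), t_min)
    if hazy.ndim == 3:
        t = t[..., np.newaxis]  # same transmission for every channel
    restored = (hazy - airlight) / t + airlight
    return np.clip(restored, 0.0, 1.0)  # keep the result a valid image
```

Where t stays above `t_min` this is an exact inverse of the forward model, so the quality of the result hinges on the accuracy of the dense depth estimate.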

5. Experimental Results

5.1. Datasets

- Synthetic haze images with ground-truth;

- Real indoor and outdoor haze images;

- Grayscale haze images;

- Real underwater images without ground-truth.

5.2. Implementation Details

5.3. Evaluation Metrics
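PSNR compares a restored image with its ground truth as 10·log10(peak²/MSE); higher is better, as the ↑ marks in the tables indicate. A minimal sketch, assuming images scaled to [0, 1] (use `peak=255` for 8-bit data). For example, a constant error of 0.1 gives an MSE of 0.01 and a PSNR of about 20 dB.

```python
import numpy as np


def psnr(reference, estimate, peak=1.0):
    """Peak signal-to-noise ratio in dB; higher means closer to the reference."""
    ref = np.asarray(reference, dtype=float)
    est = np.asarray(estimate, dtype=float)
    mse = np.mean((ref - est) ** 2)
    if mse == 0.0:
        return float("inf")  # identical images
    return float(10.0 * np.log10(peak**2 / mse))
```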

5.4. Synthetic Haze with Ground-Truth

| Data | PSNR (dB) ↑ | SSIM ↑ | ↑ | r ↑ |
|---|---|---|---|---|
| He [7] | 18.598 | 0.537 | 0.554 | 1.402 |
| Kim [28] | 10.820 | 0.380 | 0.450 | 1.413 |
| Cai [30] | 12.61 | 0.357 | 0.448 | 0.975 |
| Cho [12] | 11.732 | 0.431 | 0.542 | 1.681 |
| Griddehaze [22] | 12.55 | 0.374 | 0.448 | 1.826 |
| FFA-Net [29] | 11.154 | 0.330 | 0.416 | 1.818 |
| AOD-Net [20] | 11.342 | 0.366 | 0.527 | 1.422 |
| DehazeFormer [21] | 12.63 | 0.372 | 0.529 | 1.512 |
| Proposed | 19.945 | 0.567 | 0.590 | 2.152 |

5.5. Real Indoor/Outdoor Haze

| Data | PSNR (dB) ↑ | SSIM ↑ | ↑ | r ↑ |
|---|---|---|---|---|
| He [7] | 22.012 | 0.600 | 0.156 | 1.165 |
| Kim [28] | 16.814 | 0.514 | 0.089 | 0.891 |
| Cai [30] | 14.95 | 0.458 | 0.135 | 0.975 |
| Cho [12] | 16.49 | 0.553 | 0.144 | 0.987 |
| Griddehaze [22] | 12.68 | 0.429 | 0.162 | 0.932 |
| FFA-Net [29] | 11.45 | 0.369 | 0.105 | 0.524 |
| AOD-Net [20] | 12.17 | 0.382 | 0.158 | 0.648 |
| DehazeFormer [21] | 11.34 | 0.366 | 0.126 | 0.648 |
| Proposed | 24.302 | 0.588 | 0.170 | 1.011 |

5.6. Real Indoor Haze (Gray Images)

5.7. Real Underwater Scene

5.8. Feature Detection and Processing Time

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Kim, A.; Eustice, R.M. Real-time visual SLAM for autonomous underwater hull inspection using visual saliency. IEEE Trans. Robot. 2013, 29, 719–733.

- Engel, J.; Schöps, T.; Cremers, D. LSD-SLAM: Large-Scale Direct Monocular SLAM. In Proceedings of the 13th European Conference on Computer Vision (ECCV), Zurich, Switzerland, 6–12 September 2014; Springer: Cham, Switzerland, 2014.

- Lenz, I.; Lee, H.; Saxena, A. Deep learning for detecting robotic grasps. Int. J. Robot. Res. 2015, 34, 705–724.

- Li, J.; Eustice, R.M.; Johnson-Roberson, M. Underwater robot visual place recognition in the presence of dramatic appearance change. In Proceedings of the IEEE/MTS OCEANS Conference and Exhibition, Washington, DC, USA, 19–22 October 2015.

- Kunz, C.; Murphy, C.; Singh, H.; Pontbriand, C.; Sohn, R.A.; Singh, S.; Sato, T.; Roman, C.; Nakamura, K.-i.; Jakuba, M.; et al. Toward extraplanetary under-ice exploration: Robotic steps in the Arctic. J. Field Robot. 2009, 26, 411–429.

- Fattal, R. Single image dehazing. ACM Trans. Graph. 2008, 27, 72.

- He, K.; Sun, J.; Tang, X. Single image haze removal using dark channel prior. IEEE Trans. Pattern Anal. Mach. Intell. 2011, 33, 2341–2353.

- Zhu, Q.; Mai, J.; Shao, L. A fast single image haze removal algorithm using color attenuation prior. IEEE Trans. Image Process. 2015, 24, 3522–3533.

- Carlevaris-Bianco, N.; Mohan, A.; Eustice, R.M. Initial results in underwater single image dehazing. In Proceedings of the IEEE/MTS OCEANS Conference and Exhibition, Seattle, WA, USA, 20–23 September 2010; IEEE: Piscataway, NJ, USA, 2010; pp. 1–8.

- Ancuti, C.; Ancuti, C.O.; Haber, T.; Bekaert, P. Enhancing underwater images and videos by fusion. In Proceedings of the 2012 IEEE Conference on Computer Vision and Pattern Recognition, Providence, RI, USA, 16–21 June 2012; IEEE: Piscataway, NJ, USA, 2012; pp. 81–88.

- He, K.; Sun, J.; Tang, X. Guided image filtering. IEEE Trans. Pattern Anal. Mach. Intell. 2013, 35, 1397–1409.

- Cho, Y.; Shin, Y.S.; Kim, A. Online depth estimation and application to underwater image dehazing. In Proceedings of the OCEANS 2016 MTS/IEEE Monterey, Monterey, CA, USA, 19–23 September 2016; IEEE: Piscataway, NJ, USA, 2016; pp. 1–7.

- Berman, D.; Treibitz, T.; Avidan, S. Diving into haze-lines: Color restoration of underwater images. In Proceedings of the British Machine Vision Conference (BMVC), London, UK, 4–7 September 2017; Volume 1.

- Babaee, M.; Negahdaripour, S. Improved range estimation and underwater image enhancement under turbidity by opti-acoustic stereo imaging. In Proceedings of the IEEE/MTS OCEANS Conference and Exhibition, Genova, Italy, 18–21 May 2015; IEEE: Piscataway, NJ, USA, 2015; pp. 1–7.

- Cho, Y.; Kim, A. Channel invariant online visibility enhancement for visual SLAM in a turbid environment. J. Field Robot. 2018, 35, 1080–1100.

- Li, C.; Anwar, S.; Porikli, F. Underwater scene prior inspired deep underwater image and video enhancement. Pattern Recognit. 2020, 98, 107038.

- Wang, Y.; Guo, J.; Gao, H.; Yue, H. UIEC^2-Net: CNN-based underwater image enhancement using two color space. Signal Process. Image Commun. 2021, 96, 116250.

- Fabbri, C.; Islam, M.J.; Sattar, J. Enhancing underwater imagery using generative adversarial networks. In Proceedings of the 2018 IEEE International Conference on Robotics and Automation (ICRA), South Brisbane, Australia, 21–25 May 2018; IEEE: Piscataway, NJ, USA, 2018; pp. 7159–7165.

- Islam, M.J.; Xia, Y.; Sattar, J. Fast underwater image enhancement for improved visual perception. IEEE Robot. Autom. Lett. 2020, 5, 3227–3234.

- Li, B.; Peng, X.; Wang, Z.; Xu, J.; Feng, D. AOD-Net: All-in-one dehazing network. In Proceedings of the IEEE International Conference on Computer Vision (ICCV), Venice, Italy, 22–29 October 2017; pp. 4770–4778.

- Song, Y.; He, Z.; Qian, H.; Du, X. Vision Transformers for Single Image Dehazing. arXiv 2022, arXiv:2204.03883.

- Liu, X.; Ma, Y.; Shi, Z.; Chen, J. GridDehazeNet: Attention-based multi-scale network for image dehazing. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Seoul, Republic of Korea, 27 October–2 November 2019; pp. 7314–7323.

- Narasimhan, S.G.; Nayar, S.K. Vision and the atmosphere. Int. J. Comput. Vis. 2002, 48, 233–254.

- Ranganathan, A.; Yang, M.H.; Ho, J. Online sparse Gaussian process regression and its applications. IEEE Trans. Image Process. 2011, 20, 391–404.

- Snelson, E.L. Flexible and Efficient Gaussian Process Models for Machine Learning. Ph.D. Thesis, University of Cambridge, Cambridge, UK, 2007.

- Contal, E.; Perchet, V.; Vayatis, N. Gaussian process optimization with mutual information. arXiv 2013, arXiv:1311.4825.

- Choe, Y.; Ahn, S.; Chung, M.J. Online urban object recognition in point clouds using consecutive point information for urban robotic missions. Robot. Auton. Syst. 2014, 62, 1130–1152.

- Kim, J.H.; Jang, W.D.; Sim, J.Y.; Kim, C.S. Optimized contrast enhancement for real-time image and video dehazing. J. Vis. Commun. Image Represent. 2013, 24, 410–425.

- Qin, X.; Wang, Z.; Bai, Y.; Xie, X.; Jia, H. FFA-Net: Feature fusion attention network for single image dehazing. Proc. AAAI Conf. Artif. Intell. 2020, 34, 11908–11915.

- Cai, B.; Xu, X.; Jia, K.; Qing, C.; Tao, D. DehazeNet: An end-to-end system for single image haze removal. IEEE Trans. Image Process. 2016, 25, 5187–5198.

- Meng, G.; Wang, Y.; Duan, J.; Xiang, S.; Pan, C. Efficient image dehazing with boundary constraint and contextual regularization. In Proceedings of the IEEE International Conference on Computer Vision, Sydney, NSW, Australia, 1–8 December 2013; pp. 617–624.

- Zhu, Q.; Mai, J.; Shao, L. Single Image Dehazing Using Color Attenuation Prior. In Proceedings of the British Machine Vision Conference, Nottingham, UK, 1–5 September 2014.

- Handa, A.; Whelan, T.; McDonald, J.; Davison, A. A Benchmark for RGB-D Visual Odometry, 3D Reconstruction and SLAM. In Proceedings of the IEEE International Conference on Robotics and Automation, Hong Kong, China, 31 May–7 June 2014; pp. 1524–1531.

- Zhang, Y.; Ding, L.; Sharma, G. HazeRD: An outdoor scene dataset and benchmark for single image dehazing. In Proceedings of the 2017 IEEE International Conference on Image Processing (ICIP), Beijing, China, 17–20 September 2017; IEEE: Piscataway, NJ, USA, 2017; pp. 3205–3209.

- Ancuti, C.O.; Ancuti, C.; Timofte, R.; De Vleeschouwer, C. O-HAZE: A dehazing benchmark with real hazy and haze-free outdoor images. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition Workshops, Salt Lake City, UT, USA, 16–22 June 2018; pp. 754–762.

- Shim, I.; Lee, J.Y.; Kweon, I.S. Auto-adjusting camera exposure for outdoor robotics using gradient information. In Proceedings of the 2014 IEEE/RSJ International Conference on Intelligent Robots and Systems, Chicago, IL, USA, 14–18 September 2014; IEEE: Piscataway, NJ, USA, 2014; pp. 1011–1017.

- Tarel, J.P.; Hautiere, N. Fast visibility restoration from a single color or gray level image. In Proceedings of the 2009 IEEE 12th International Conference on Computer Vision, Kyoto, Japan, 29 September–2 October 2009; IEEE: Piscataway, NJ, USA, 2009; pp. 2201–2208.

- Panetta, K.; Gao, C.; Agaian, S. Human-visual-system-inspired underwater image quality measures. IEEE J. Ocean. Eng. 2015, 41, 541–551.

- Yang, M.; Sowmya, A. An underwater color image quality evaluation metric. IEEE Trans. Image Process. 2015, 24, 6062–6071.

- Lowe, D.G. Distinctive image features from scale-invariant keypoints. Int. J. Comput. Vis. 2004, 60, 91–110.

- Alcantarilla, P.F.; Bartoli, A.; Davison, A.J. KAZE features. In Computer Vision–ECCV 2012; Springer: New York, NY, USA, 2012; pp. 214–227.

| Data | Num. of Matches (SIFT) | Num. of Matches (KAZE) | Num. of Inliers (SIFT) | Num. of Inliers (KAZE) | Inlier Ratio (SIFT) | Inlier Ratio (KAZE) |
|---|---|---|---|---|---|---|
| Clean | 142 | 130 | 45 | 41 | 31.69 % | 31.53 % |
| Haze | 0 | 5 | 0 | 0 | 0 | 0 |
| Proposed | 88 | 82 | 30 | 30 | 34.09 % | 36.58 % |
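The inlier ratio in the feature-matching table is the number of inliers divided by the number of matches; for SIFT on the clean and the enhanced images this gives:

```latex
\text{Inlier ratio} = \frac{N_{\text{inliers}}}{N_{\text{matches}}}, \qquad
\frac{45}{142} \approx 31.69\,\%, \qquad
\frac{30}{88} \approx 34.09\,\%.
```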

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2023 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).


Yang, G.; Lee, J.; Kim, A.; Cho, Y. Sparse Depth-Guided Image Enhancement Using Incremental GP with Informative Point Selection. Sensors 2023, 23, 1212. https://doi.org/10.3390/s23031212
