1. Introduction

Three-dimensional (3D) reconstruction is the process of creating a three-dimensional representation of a physical object or environment from two-dimensional images or other sources of data. The goal of 3D reconstruction is to create a digital model that accurately represents the shape, size, and texture of the object or environment. It can create accurate models of buildings, terrain, and archaeological sites, as well as virtual environments for video games and other applications. These 3D models can be created by automatic scanning of static objects using LiDAR scanners [1] or structured light scanners [2]. However, structured light scanning is sometimes expensive and is viable only under certain conditions. Another solution is to create 3D models directly from high-resolution camera images captured under favorable lighting conditions. One such solution is a multi-camera-based photogrammetric setup capturing a fixed-size volume. Such camera setups are typically calibrated and capture high-resolution static photos simultaneously. These camera setups produce high-quality 3D models and precise measurements. However, such a setup is also very expensive because it requires special equipment such as multiple cameras, special light sources, and studio setups. A low-cost solution to this problem is Structure from Motion (SfM), which aims to create sparse 3D models using multiple images of the same object, captured from different viewpoints using a single camera, and without requiring camera locations and orientations.

SfM has become a popular choice to create 3D models due to its low-cost nature and simplicity. Structure from Motion is a very well-studied research problem. In early research works, Pollefeys et al. [

3] developed a complete system to build a sparse 3D model of the scene from uncalibrated image sequences captured using a hand-held camera. At the time of writing, there is a plethora of choices for SfM software packages, each with its unique features and capabilities. Some are open-source software, such as COLMAP [

4], MicMac [

5], OpenMVS [

6], and so on, while some others are commercial software packages, such as Metashape (

https://www.agisoft.com (accessed on 23 May 2023)), RealityCapture (

https://www.capturingreality.com (accessed on 23 May 2023)), etc. They rely on automatic keypoint detection and matching algorithms to estimate 3D structures. The input to such SfM software is only a collection of digital photographs, generally captured by the same camera. However, these fully automatic tools usually require suitable lighting conditions and high-quality photographs to generate high-quality 3D models. These conditions are very difficult to fulfill in industrial environments because there may be low lighting (which exacerbates blurring) and utility companies may have legacy video cameras capturing videos at low resolution. These legacy cameras are meant for visual inspection of plants and for enduring chemical, temperature, and radiation stresses.

The mentioned issues may become more severe in video-based SfM because video frames have motion blur and are aggressively compressed, leading to strong compression artifacts (e.g., ringing, blocking, etc.). Most modern cameras capture videos at 30 fps, so a few minutes of video produces a high number of frames, e.g., 10 min of footage is already 18,000 frames. Such a high number of frames not only increases computational time significantly but also gives low-quality 3D output due to insufficient camera motion between consecutive frames. If we pass such featureless images (e.g., see Figure 1) as inputs to SfM software, the number of accurately detected features and correspondences will be very low, leading to a low-quality 3D output. In this context, we have developed Movie Reconstruction Laboratory (MoReLab) (https://github.com/cnr-isti-vclab/MoReLab (accessed on 23 May 2023)), which is a software tool to perform user-assisted reconstruction on uncalibrated camera videos. MoReLab addresses the problem of SfM in the case of featureless and poor-quality videos by exploiting the user's indications about the structure to be reconstructed. A small amount of manual assistance can produce accurate models even in these difficult settings. User-assisted 3D reconstruction can significantly decrease the computational burden and also reduce the number of input images required for 3D reconstruction.
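The frame-count arithmetic mentioned above, together with one simple way of thinning a video before reconstruction, can be sketched as follows. This is only an illustrative sketch: the function names and the fixed-step subsampling strategy are assumptions made for this example and are not taken from MoReLab.

```python
def frame_count(duration_s, fps):
    """Number of frames in a video of the given duration and frame rate."""
    return int(round(duration_s * fps))


def subsample_indices(n_frames, step):
    """Indices of the frames kept when taking every `step`-th frame.

    A larger step leaves more camera motion between the kept frames,
    at the cost of fewer images (assumed strategy, for illustration only).
    """
    if step < 1:
        raise ValueError("step must be a positive integer")
    return list(range(0, n_frames, step))


# 10 minutes of footage at 30 fps
total = frame_count(10 * 60, 30)
# Keep one frame per second of video
kept = subsample_indices(total, 30)
print(total, len(kept))
```

Keeping one frame per second reduces the 10 min example from 18,000 frames to 600, which illustrates why frame selection matters before any feature is marked or matched.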

In contrast to automatic feature detection and matching-based SfM systems, the main contribution of MoReLab is a user-friendly interactive way that allows the user to provide topology prior to reconstruction. This modification allows MoReLab to achieve better results in featureless videos by leveraging the user’s knowledge of visibility and understanding of the video across frames. Once the user has added features and correspondences manually on 2D images, a bundle adjustment algorithm [

7] is utilized to estimate camera poses and a sparse 3D point cloud corresponding to these features. MoReLab achieves accurate sparse 3D points estimation by adding features on as few as two or three images. The estimated 3D point cloud is overlaid on manually added 2D feature points to give a visual indication of the accuracy of estimated 3D points. Then, MoReLab provides several primitives such as rectangles, cylinders, curved cylinders, etc., to model parts of the scene. Based on a visual understanding of the shape of the desired object, the user selects the appropriate primitive and marks vertices or feature points to define it in a specific location. This approach gives control to the user to extract specific shapes and objects in the scene. By exploiting inputs from the user at several stages, it is possible to obtain 3D reconstruction even from poor-quality videos. Additionally, the overall computational burden with regard to a fully automatic pipeline is significantly reduced.
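Bundle adjustment is commonly formulated as the minimization of the reprojection error between the estimated 3D points and the 2D features marked in the images. The sketch below computes that error for a single view under simplifying assumptions that are ours, not the paper's: an ideal pinhole camera without lens distortion, a known focal length `f`, and a principal point `(cx, cy)`. It illustrates the quantity being minimized, not the optimizer itself or MoReLab's implementation.

```python
import math


def project(point, R, t, f, cx, cy):
    """Project a 3D point to pixel coordinates with an ideal pinhole camera.

    R is a 3x3 rotation (list of rows), t a translation, so that the
    camera-frame point is R * point + t.
    """
    xc = [sum(R[i][j] * point[j] for j in range(3)) + t[i] for i in range(3)]
    u = f * xc[0] / xc[2] + cx
    v = f * xc[1] / xc[2] + cy
    return (u, v)


def reprojection_rmse(points3d, observations, R, t, f, cx, cy):
    """Root-mean-square pixel distance between projections and marked features."""
    total = 0.0
    for X, (u_obs, v_obs) in zip(points3d, observations):
        u, v = project(X, R, t, f, cx, cy)
        total += (u - u_obs) ** 2 + (v - v_obs) ** 2
    return math.sqrt(total / len(points3d))


# Hypothetical camera and points, for illustration only
R_identity = [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
t_zero = [0.0, 0.0, 0.0]
pts = [(0.0, 0.0, 2.0), (0.1, -0.1, 4.0)]
clicks = [project(p, R_identity, t_zero, 1000.0, 320.0, 240.0) for p in pts]
print(reprojection_rmse(pts, clicks, R_identity, t_zero, 1000.0, 320.0, 240.0))
```

A full bundle adjustment would vary the camera poses and the 3D points jointly to minimize this error summed over all views; overlaying the projected points on the marked features, as described above, is a visual check of the same residual.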

2. Related Work

There have been several research works in the field of user-assisted reconstruction from unordered and multi-view photographs. Early research works include VideoTrace [

8], which is an interface to generate realistic 3D models from video. Initially, automatic feature detection-based SfM is applied to video frames, and a sparse 3D point cloud is overlaid on the video frame. Then, the user traces out the desired boundary lines, and a closed set of line segments generates an object face. Sinha et al. [

9] modeled architectures using a combination of piecewise planar 3D models. Their system also computes sparse 3D data in such a way that lines are extracted, and vanishing points are estimated in the scene as well. After this automatic preprocessing, the user draws outlines on 2D photographs. Piecewise planar 3D models are estimated by combining user-provided 2D outlines and automatically computed sparse 3D points. A few such user interactions can create a realistic 3D model of the scene quickly. Hu et al. [

10] developed an interface for creating accurate 3D models of complex mechanical objects and equipment. First, sparse 3D points are estimated from multi-view images and are overlaid on 2D images. Second, stroke-based sweep modeling creates 3D parts, which are also overlaid on the image. Third, the motion structure of the equipment is recovered. For this purpose, a video clip recording of the working mechanism of the equipment is provided, and a stochastic optimization algorithm recovers motion parameters. Rasmuson et al. [

11] employ COLMAP [

4] as a preprocessing stage to calibrate images. Their interface allows users to mark image points and place quads on top of images. The complete 3D model is obtained by applying global optimization on all quad patches. By exploiting user-provided information about topology and visibility, they are able to model complex objects as a combination of a large number of quads.

Some researchers developed interfaces where users can paint desired foreground regions using brush strokes. Such an interface was developed by Habbecke and Kobbelt [

12]. Their interface consists of a 2D image viewer and a 3D object viewer. The user paints the 2D image in a 2D image viewer with the help of a stroke. The system computes an optimal mesh corresponding to the user-painted region of input images. During the modeling session, the system incrementally continues to build 3D surface patches and guide the surface reconstruction algorithm. Similarly, in the interface developed by Baldacci et al. [

13], the user indicates foreground and background regions with different brush strokes. Their interface allows the user to provide localized hints about the curvature of a surface. These hints are utilized as constraints for the reconstruction of smooth surfaces from multiple views. Doron et al. [

14] require stroke-based user annotations on calibrated images, to guide multi-view stereo algorithms. These annotations are added into a variational optimization framework in the form of smoothness, discontinuity, and depth ordering constraints. They show that their user-directed multi-view stereo algorithm improves the accuracy of the reconstructed depth map in challenging situations.

Another direction in which user interfaces need to be developed is single-view reconstruction. Single-view reconstruction is complicated without any prior knowledge or manual assistance because epipolar geometry cannot be established. Töppe et al. [

15] introduced convex shape optimization to minimize weighted surface area for a fixed user-specified volume in single-view 3D reconstruction. Their method relies on implicit surface representation to generate high-quality 3D models by utilizing a few user-provided strokes on the image. 3-Sweep [

16] is an interactive and easy-to-use tool for extracting 3D models from a single photo. When a photo is loaded into the tool, it estimates the boundary contour. Once the boundary contour is defined, the user selects the model shape and creates an outline of the desired object using three painting brush strokes, one in each dimension of the image. By applying the foreground texture segmentation, the interface quickly creates an editable 3D mesh object which can be scaled, rotated, or translated.

Recently, researchers have made significant progress in the area of 3D reconstruction using deep learning approaches. The breakthrough work by Mildenhall et al. [17] introduced NeRF, which synthesizes novel views of a scene using a small set of input views. A NeRF is a fully connected deep neural network whose input is a single 5D coordinate (spatial location $(x, y, z)$ and viewing direction $(\theta, \phi)$), and whose output is the emitted radiance and volume density. To the best of our knowledge, a NeRF-like method that simultaneously tackles all the conditions of low-quality videos (blurred frames, low resolution, turbulence caused by liquids, etc.) has not been presented yet [

18]. A GAN-based work, Pi-GAN [

19], is a promising generative model-based architecture for 3D-aware image synthesis. However, their method has the main focus on faces and cars, so to be applicable in our context, there is the need to build a specific dataset for re-training (e.g., a dataset of industrial equipment, 3D man-made objects, and so on). Tu et al. [

20] presented a self-supervised reconstruction model to estimate texture, shape, pose, and camera viewpoint using a single RGB input and a trainable 2D keypoint estimator. Although this method may be seminal for more general 3D reconstructions, the work currently focuses on human hands.

Existing research works pose several challenges for low-quality industrial videos, which are typically captured by industrial utility companies. First, most works [

8,

9,

10,

11,

14] in user-assisted reconstruction still require high-quality images because they use automatic SfM pipelines as their initial step. Our focus is on low-quality videos in industrial scenarios, where SfM generates an extremely sparse point cloud, making subsequent 3D operations extremely difficult. Second, these research works lack sufficient functionalities to model a variety of industrial equipment. Third, these research works are not available as open-source software, which limits their usage for non-technical users. Hence, our research contributions are as follows:

In MoReLab, there is no feature detection and matching stage. Instead, the user needs to add features manually based on a visual understanding of the scene. We have implemented several user-friendly functionalities to speed up this tedious process for the user. MoReLab is open-source software targeted at modeling industrial scenarios and is available to everyone for non-commercial applications.

4. Experiments and Results

We analyzed the performance of MoReLab and other approaches on some videos for modeling different industrial equipment. We started our comparison using an image-based reconstruction software package, showing that the results are of poor quality in these cases. Then, we will show what we obtain with user-assisted tools for the same videos. We performed our experiments on two datasets. The first dataset consists of videos provided by a utility company in the energy sector. Ground-truth measurements have also been provided for two videos of this dataset for quantitative testing purposes. The second dataset was captured in our research institute to provide some additional results.

Agisoft Metashape is a popular high-quality commercial SfM software, which we applied to our datasets. Such software extracts features automatically, matches them, calibrates cameras, densely reconstructs the final scene, and generates a final mesh. The output mesh can be visualized in a 3D mesh processing software such as MeshLab [

26].

Results obtained with SfM software motivate us to model these videos with user-assisted tools, e.g., see Figure 7b. 3-Sweep is an example of software for user-assisted 3D reconstruction from a single image. It requires the user to have an understanding of the shapes of the components. Initially, the border detection stage uses edge detectors to estimate the outline of different components. The user selects a particular primitive shape, and three strokes generate a 3D component that snaps to the object outline. Such a user-interactive interface combines the cognitive abilities of humans with fast image processing algorithms. We will perform a visual comparison of modeling different objects with an SfM software package, 3-Sweep, and our software.

Table 1 presents a qualitative comparison of the functionalities of software packages being used in our experiments. The measuring tool in MeshLab performs measurements on models exported from Metashape and 3-Sweep.

4.1. Cuboid Modeling

3-Sweep allows us to model cuboids. In MoReLab, flat 2D surfaces can be modeled with the rectangle tool and quadrilateral tool. To estimate a cuboid, more rectangles and quadrilaterals need to be estimated in other views as well to form a cuboid.

Figure 4 shows the results of modeling an image segment containing a cuboid with Metashape, 3-Sweep, and MoReLab.

Figure 4b shows the result of the approximation of the cuboid with Metashape. There is a very high degree of approximation and the surface is not smooth.

Figure 4c,d show the result of extracting a cuboid using 3-Sweep. The modeling in 3-Sweep starts by detecting the boundaries of objects. Even when the thresholds are changed, this detection stage is prone to errors and shows very little robustness. Hence, the boundary of the extracted model is not smooth, and the shape of the model is irregular.

Figure 4e,f illustrate modeling in MoReLab using the rectangle tool and quadrilateral tool. Every rectangle in

Figure 4e is planar with 90-degree inner angles. However, each quadrilateral in

Figure 4f may or may not be planar, depending on the 3D locations of the points being connected. In other words, orthogonality is not enforced in

Figure 4f.
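The distinction between the two tools can be made concrete with a small geometric check. The sketch below is an assumption-laden illustration and not MoReLab code: it tests whether four 3D vertices are coplanar (via the scalar triple product) and whether every inner angle is 90 degrees (via dot products of adjacent edges). The function names and the absolute tolerance are hypothetical, and the tolerance should be scaled to the units of the model.

```python
def _sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def _cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def is_planar(p0, p1, p2, p3, tol=1e-9):
    """True if the fourth vertex lies in the plane of the first three."""
    normal = _cross(_sub(p1, p0), _sub(p2, p0))
    return abs(_dot(normal, _sub(p3, p0))) <= tol


def has_right_angles(p0, p1, p2, p3, tol=1e-9):
    """True if the two edges meeting at each vertex are orthogonal."""
    corners = [p0, p1, p2, p3]
    for i in range(4):
        prev_v, cur, next_v = corners[i - 1], corners[i], corners[(i + 1) % 4]
        if abs(_dot(_sub(prev_v, cur), _sub(next_v, cur))) > tol:
            return False
    return True


# Hypothetical vertices: a true rectangle and a quad with one lifted corner
rectangle = [(0, 0, 0), (2, 0, 0), (2, 1, 0), (0, 1, 0)]
skew_quad = [(0, 0, 0), (2, 0, 0), (2, 1, 0.5), (0, 1, 0)]
print(is_planar(*rectangle), has_right_angles(*rectangle))
print(is_planar(*skew_quad), has_right_angles(*skew_quad))
```

A rectangle passes both checks, whereas a quadrilateral built by connecting four independently estimated 3D points may fail either one, which is the behavior described for Figure 4f.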

4.2. Jet Pump Beam Modeling

The jet pump beam is monitored in underwater and industrial scenarios to observe deformations or any other issues. The jet pump beam is also modeled with different software programs in Figure 5. Metashape reconstructs a low-quality 3D model of the jet pump beam. Another view of Figure 8a shows that Metashape has estimated two jet pump beams instead of a single jet pump beam. The beam model passes through the floor in this reconstruction. The jet pump beam model is missing surfaces at different viewpoints, and the model is merged with the floor at different places. This low-quality result can be attributed to the dark environment, the featureless surface of the pump, and the short distance between the object and the camera. The mesh obtained by modeling the jet pump beam with 3-Sweep has a low-quality boundary and does not represent the original shape of the jet pump beam (see Figure 5d).

The jet pump beam has also been modeled with MoReLab in

Figure 5e. The quadrilateral tool has been used to estimate the surface of the jet pump beam. The output mesh is formed by joining piecewise quadrilaterals on the surface of the jet pump beam. Quadrilaterals on the upper part of the jet pump beam are aligned very well, but some misalignment can be observed on the surfaces at the side of the jet pump beam. The resulting mesh has a smooth surface and reflects the original shape of the jet pump beam. Hence, this result is better than the mesh in

Figure 5b and mesh in

Figure 5d.

4.3. Cylinder Modeling

Equipment of cylindrical shape is common in different industrial plants. We have also modeled a cylinder with our tested approaches, and the results have been presented in

Figure 6. In the Metashape reconstruction of the cylinder in

Figure 6b, some geometric artifacts are observed, and the surface is not smooth.

Figure 6c,d show the result of using 3-Sweep. While the boundary detection is better than that in

Figure 4c, the cylinder still does not have a smooth surface. On the other hand, the cylinder mesh obtained by modeling with MoReLab has a smoother surface and is more consistent than that obtained with 3-Sweep.

Figure 6e,f show the result of modeling a cylinder using the base cylinder tool in MoReLab. This specific tool is used because the center of the cylinder base is not visible, and features are visible only on the surface of the cylinder. As just stated, the cylinder obtained is more consistent and smoother than the ones obtained with Metashape and 3-Sweep.

4.4. Curved Pipe Modeling

Figure 7 compares the modeling of curved pipes in Metashape, 3-Sweep, and MoReLab. In general, the reconstruction of curved pipes is difficult due to the lack of features.

Figure 7b shows the result of modeling curved pipes using Metashape. The result is extremely low-quality because background walls are merged with the pipes, and visually similar pipes produce different results. The result of using 3-Sweep is shown in

Figure 7c. As shown in

Figure 7d, the mesh obtained with 3-Sweep hardly reflects the original pipe. Due to discontinuous outline detection and curved shape, multiple straight cylinders are estimated to model a single curved pipe.

Figure 7e–j show the steps of modeling two curved cylinders in MoReLab. The results are quite good, even if there is a small misalignment between the pipes and the underlying frame.

4.5. Additional Experiments

After observing the results of the data provided by the utility company, we captured a few more videos to conduct additional experiments and better evaluate our approach. These videos are captured on the roof of our research institute, which is full of steel pipes and other featureless objects.

Figure 8 shows the result of modeling a video with Metashape, 3-Sweep, and MoReLab. While the overall 3D model obtained with Metashape (

Figure 8a) looks good, a visual examination of the same model from a different viewpoint (

Figure 8b) shows that the T-shaped object and curved pipe lack a surface from behind. This can be due to the lack of a sufficient number of features and views at the back side of the T-shaped object and curved pipe. 3-Sweep output in

Figure 8d shows gaps in 3D models of T-shaped object and curved pipe. As shown in

Figure 8e,f, MoReLab is able to model desired objects more accurately, and a fine mesh can be exported easily from MoReLab.

Figure 9 shows the result of modeling another video. Metashape output (see

Figure 9a) shows a high level of approximation. The red rectangular region represents the curved pipe in the frame, and

Figure 9b shows the zoom-in of this rectangular region. The lack of a smooth surface reduces the recognizability of the pipe and introduces inaccuracies in the measurements.

Figure 9d shows gaps in the 3D output model of a curved pipe. In contrast, the outputs obtained with MoReLab represent the underlying objects more accurately.

4.6. Discussion

The results obtained with SfM packages (e.g., see

Figure 4b,

Figure 6b,

Figure 7b,

Figure 8b, and

Figure 9a) highlight the need to identify features manually and to develop software for user-assisted reconstruction. The low quality of the output models obtained using 3-Sweep can be attributed to poor border detection, which is caused by the dark lighting conditions in these low-resolution images. The authors of 3-Sweep used high-resolution images in their paper and reported high-quality results for them. However, our experiments indicate that 3-Sweep is not suitable for the low-resolution images and industrial scenarios shown in

Figure 1. In these difficult scenarios, 3-Sweep suffers from low robustness and irregularity in the shapes of meshes.

MoReLab does not rely on the boundary detection stage and hence generates more robust results. After computing sparse 3D points on the user-provided features, our software provides tools to the user to quickly model objects of different shapes.

Figure 4f,

Figure 5e,

Figure 6e,

Figure 7i,

Figure 8e, and

Figure 9e demonstrate the effectiveness of our software by showing the results obtained with our software tools.

4.7. Measurement Results

Given the availability of ground-truth data for two videos in the first dataset, we performed a quantitative analysis. The evaluation metric used for the quantitative analysis is the relative error, $E_{rel}$:

$$E_{rel} = \frac{|M_g - M_e|}{M_g},$$

where $M_g$ is the ground-truth measurement, and $M_e$ is the length measured on the estimated 3D model.
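As a worked illustration of the metric, the relative error can be computed as below. This is a minimal sketch using the usual definition of relative error (absolute difference divided by the ground-truth value); the function name and the sample lengths are hypothetical and do not come from the paper's tables.

```python
def relative_error(ground_truth, estimated):
    """Relative error of an estimated length with respect to the ground truth."""
    if ground_truth == 0:
        raise ValueError("ground-truth measurement must be non-zero")
    return abs(ground_truth - estimated) / abs(ground_truth)


# Hypothetical example: a 100 mm reference length measured as 95 mm
err = relative_error(100.0, 95.0)
print("relative error: {:.1%}".format(err))
```

Because the error is normalized by the ground-truth length, measurements of very different sizes can be compared and averaged on the same scale.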

4.7.1. 1-Measurement Calibration

In this section, we perform calibration with one ground-truth measurement. In all experiments, the longest measurement was taken as ground truth to have a more stable reference measure. This helps in mitigating the error of the calculated measurements.
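The idea of calibrating with a single reference can be sketched as follows: a scale factor is derived from the longest measurement, whose ground-truth length is known, and is then applied to every other length measured on the model. The function names and numeric values are hypothetical and serve only to illustrate the procedure; they are not taken from MoReLab or from the reported tables.

```python
def calibration_factor(ground_truth_length, model_length):
    """Scale that maps a length in model units to real-world units."""
    if model_length <= 0:
        raise ValueError("model length must be positive")
    return ground_truth_length / model_length


def calibrate(model_lengths, factor):
    """Apply the calibration factor to all lengths measured on the model."""
    return [length * factor for length in model_lengths]


# Hypothetical lengths measured on an uncalibrated model (arbitrary units)
model_lengths = [2.0, 1.0, 0.5]
# The longest measurement is used as the reference; its real length is assumed known
longest_index = max(range(len(model_lengths)), key=lambda i: model_lengths[i])
factor = calibration_factor(400.0, model_lengths[longest_index])  # 400 mm, assumed
print(calibrate(model_lengths, factor))
```

Choosing the longest measurement as the reference keeps the effect of a fixed marking error small relative to the reference length, which is the stability argument made above.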

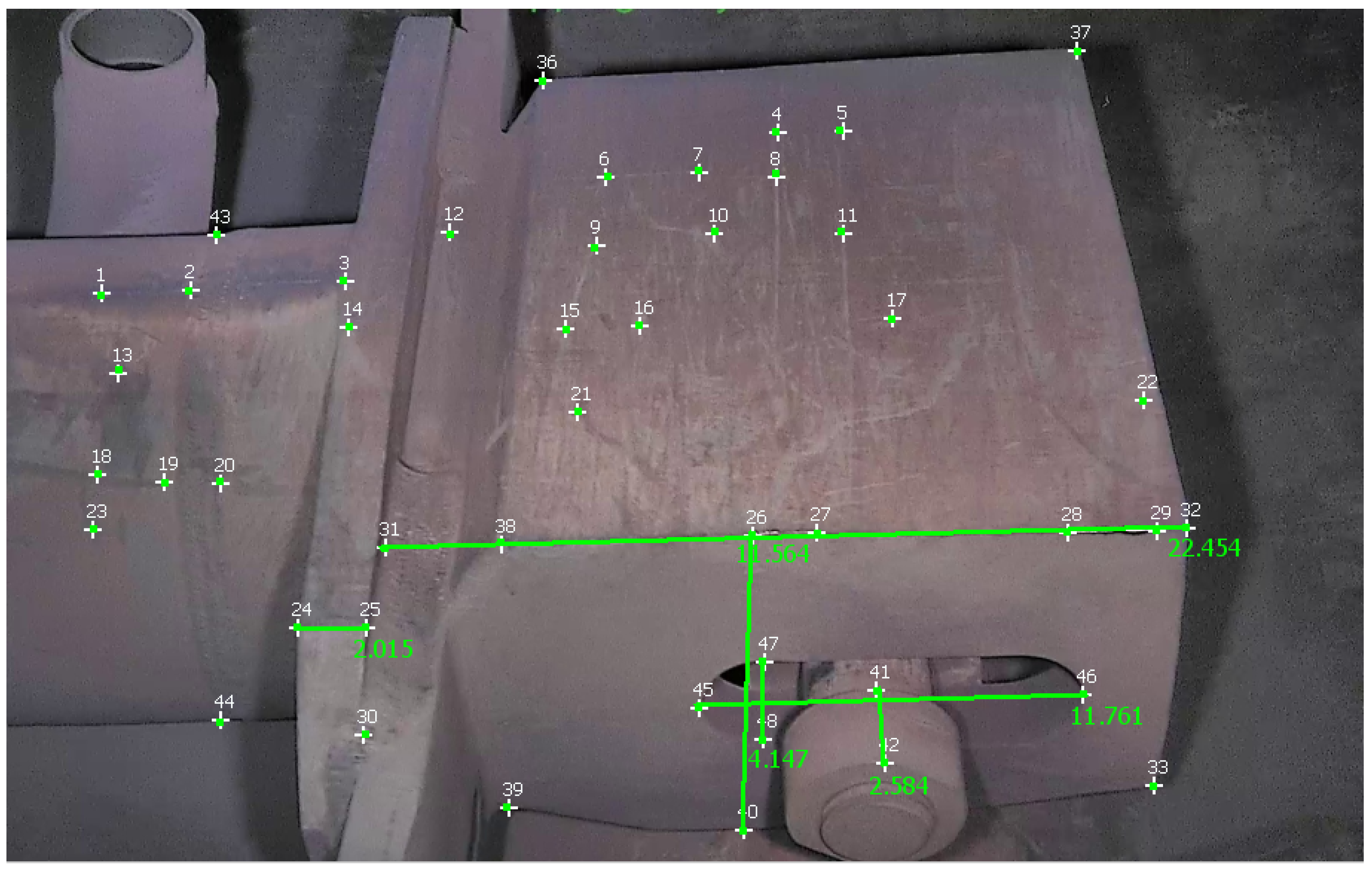

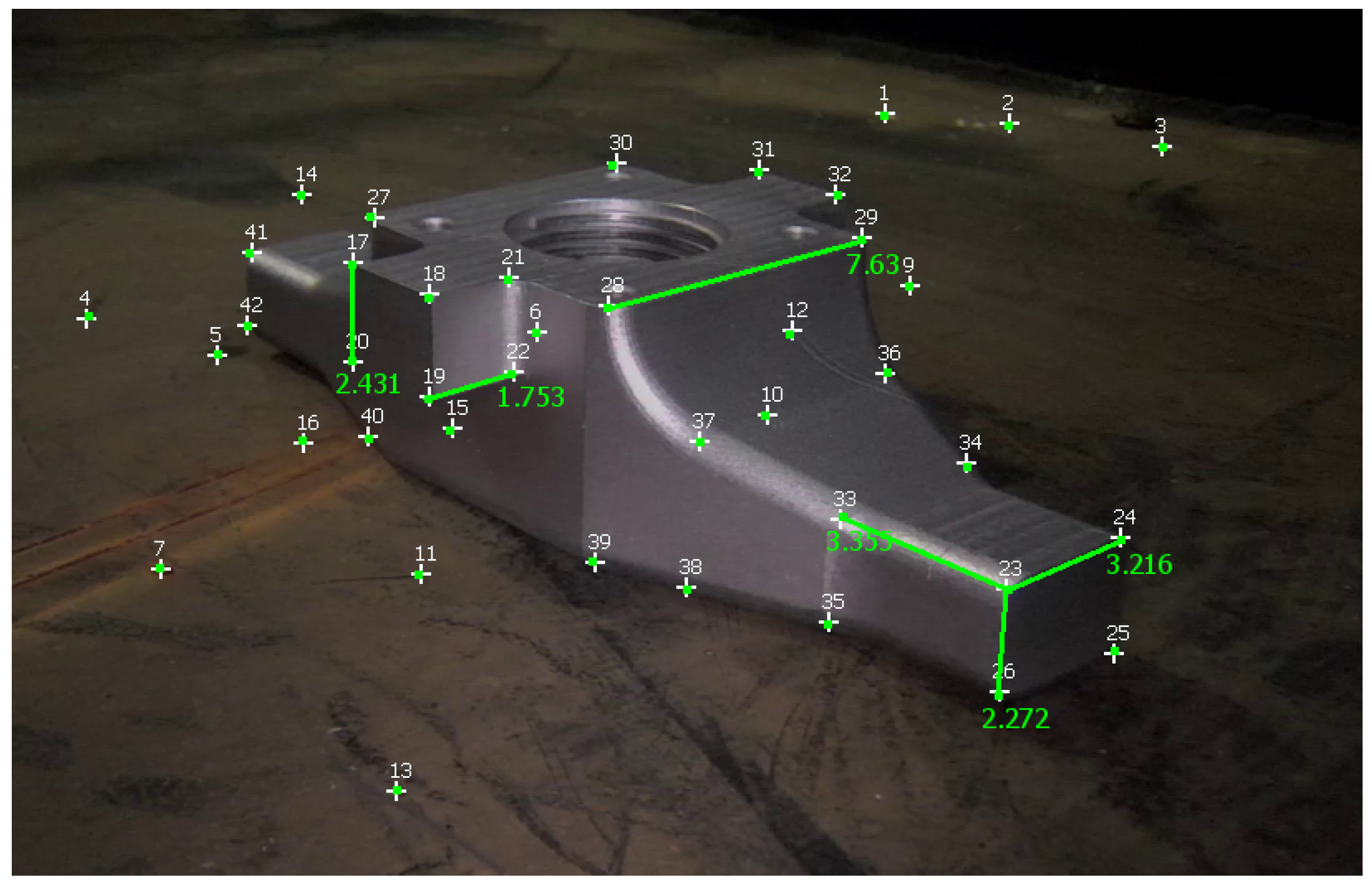

Table 2 reports measurements obtained with the different approaches on a video of the first dataset, and

Figure 10 shows these measurements taken in MoReLab.

The selection of measurements has been done according to the available ground-truth measurements from diagrams of equipment.

Table 2 also presents a comparison of relative errors with these three software packages. Among the five measurements under consideration, MoReLab achieves the lowest errors in three measurements and the lowest average relative error.

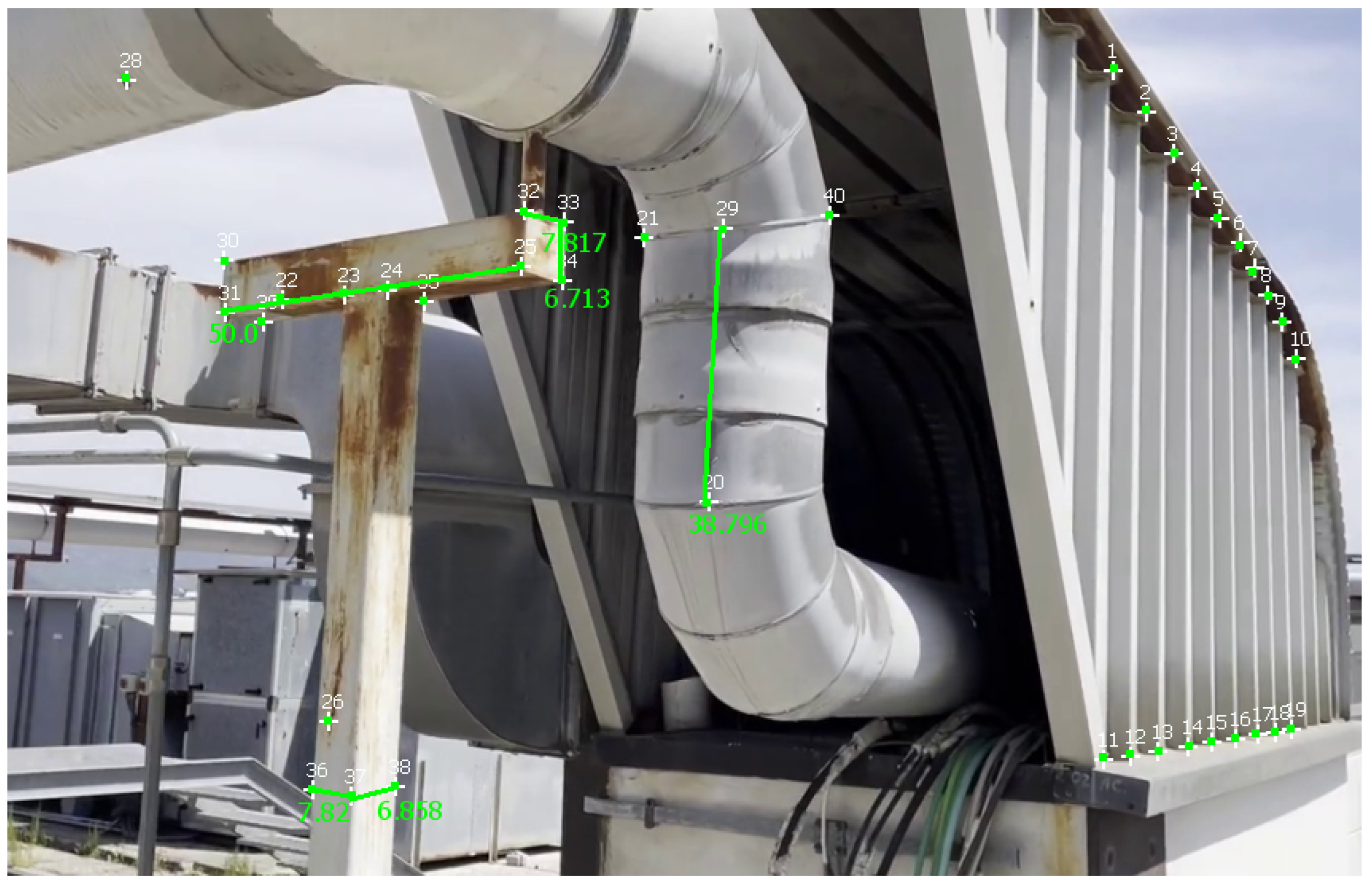

Table 3 reports measurements obtained with Metashape, 3-Sweep, and MoReLab on another video of the first dataset, and

Figure 11 shows these measurements taken in MoReLab. Given the availability of a CAD model for the jet pump, we take meaningful measurements between corners in a CAD file and use these measurements as ground truths.

Table 3 also presents a comparison of relative errors with these three software packages. Among the five measurements under consideration, MoReLab achieves the lowest errors in three measurements and the lowest average relative error.

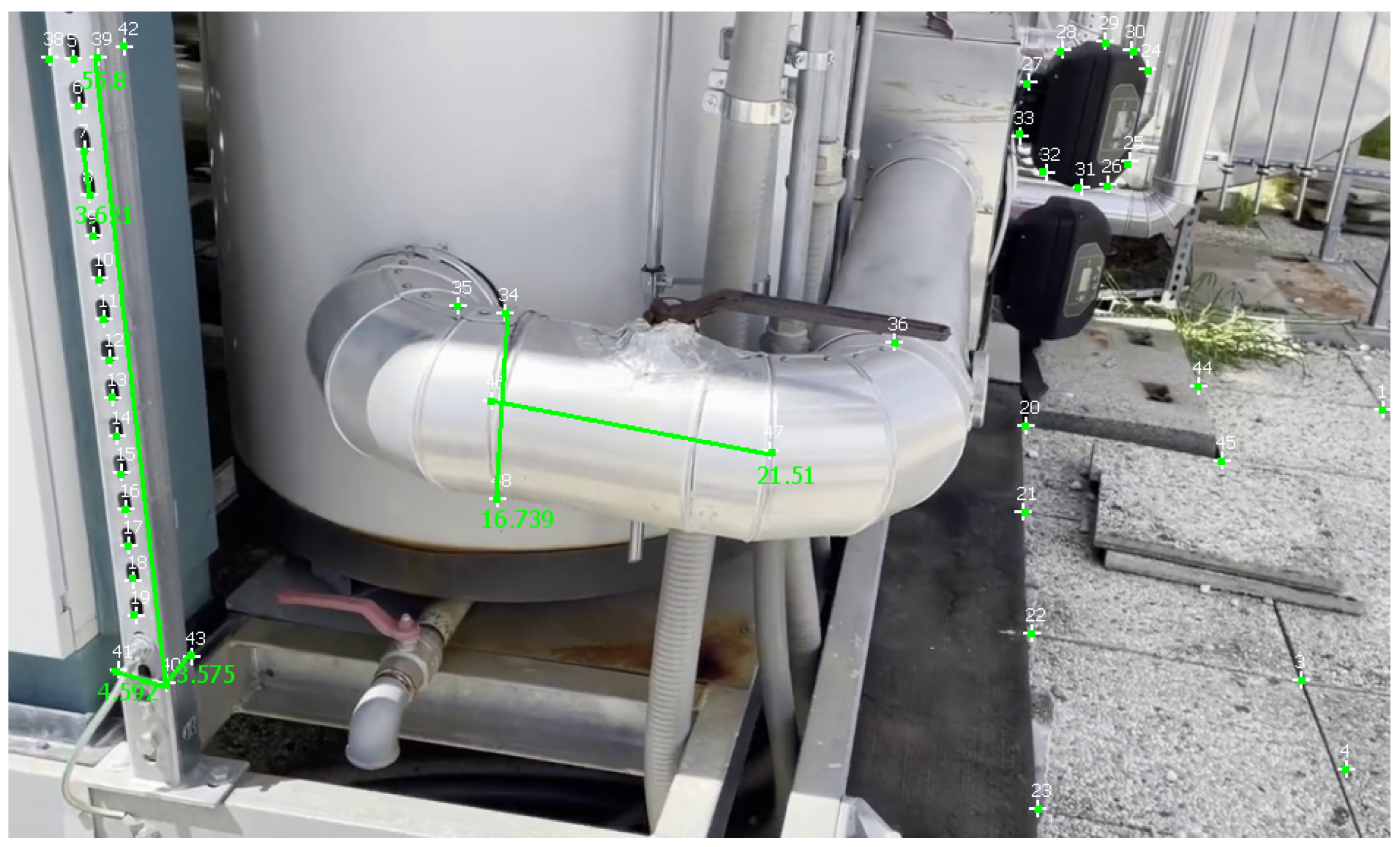

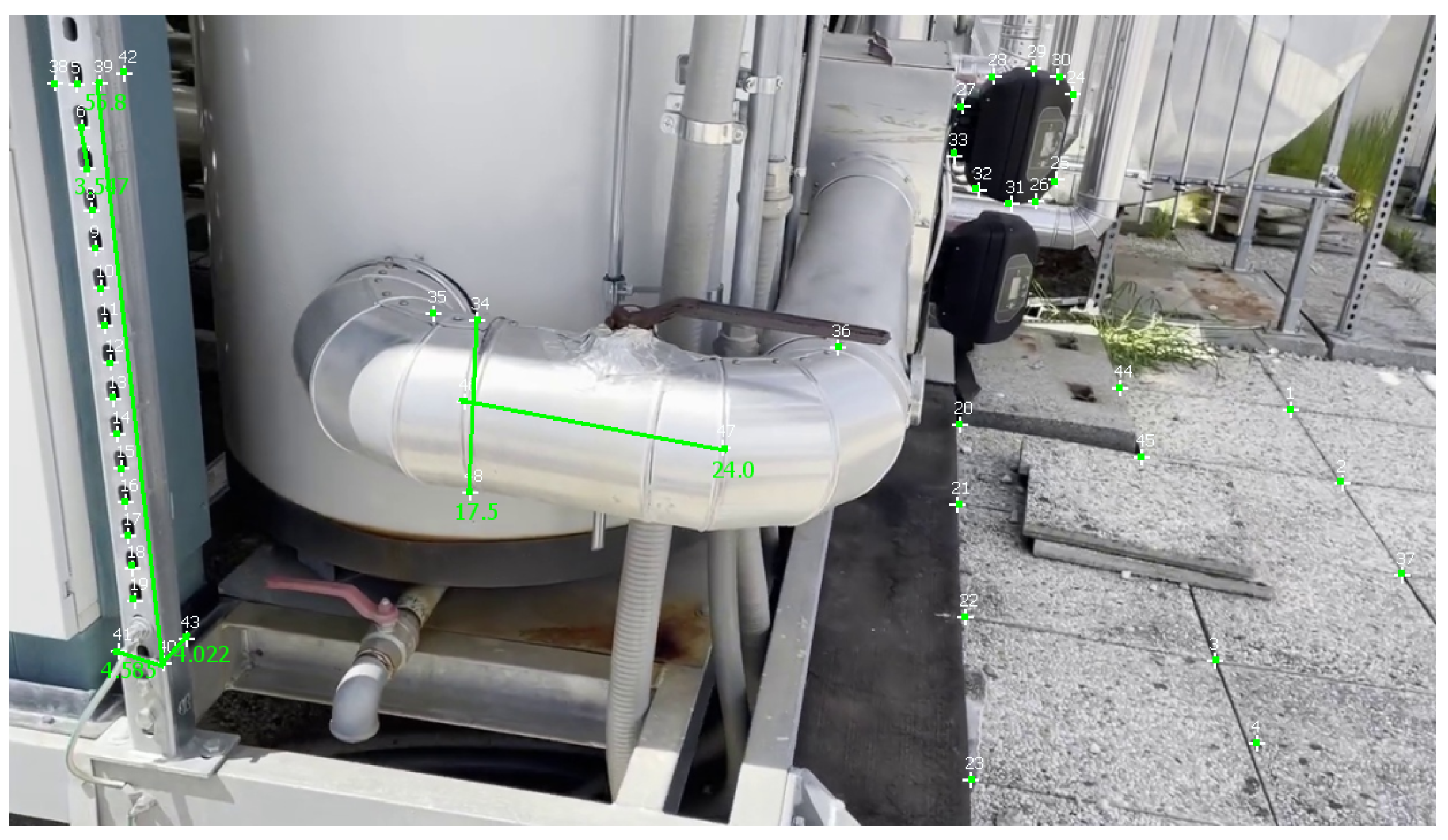

Table 4 reports measurements and calculations for a video of the second dataset, and

Figure 12 illustrates these measurements in MoReLab. We took some meaningful measurements to be used as ground truth for measurements with Metashape, 3-Sweep, and MoReLab. Relative errors are also calculated for these measurements and reported in

Table 4. All software programs have achieved more accurate measurements in this video with respect to videos of the first dataset. This can be due to more favorable lighting conditions and high-resolution frames containing a higher number of recognizable features. Similar to

Table 2 and

Table 3, five measurements have been considered and MoReLab achieves the lowest relative errors in three measurements and the lowest average relative error in comparison to other software programs.

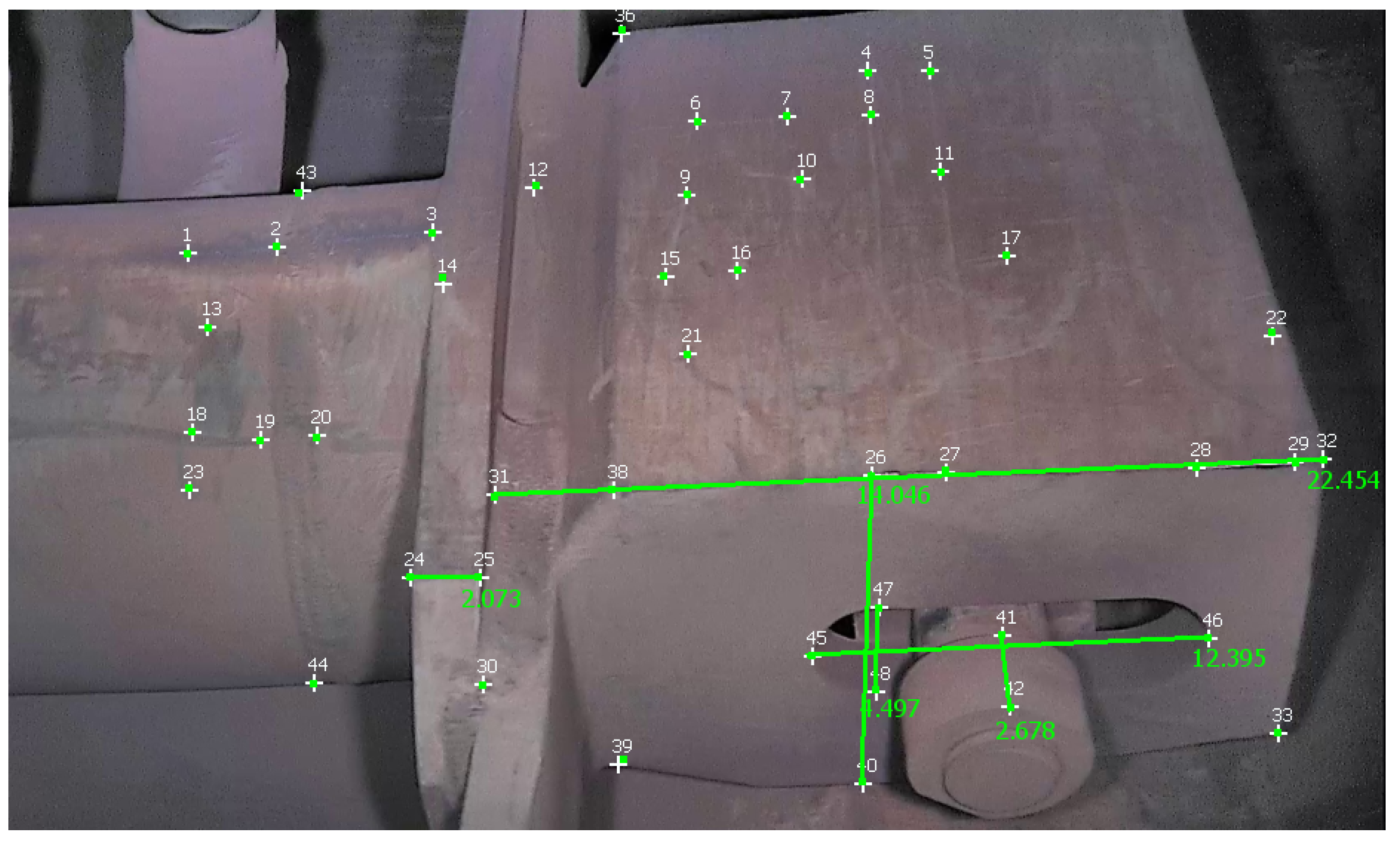

Table 5 reports measurements obtained with Metashape, 3-Sweep, and MoReLab on another video of the second dataset, and

Figure 13 illustrates these measurements in MoReLab. Among the five measurements under consideration, MoReLab achieves minimum error in four measurements and the lowest average relative error.

4.7.2. Three-Measurement Calibration

To assess the robustness of the results presented above, we repeated the measurements using a calibration factor computed as the average of three calibration factors, each obtained from a different ground-truth measurement. After this three-measurement calibration, we re-measured the distances in our four videos.
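Averaging several calibration factors can be sketched in a few lines. The example below assumes that each reference is given as a pair of a ground-truth length and the corresponding length measured on the model; the function name and the numbers are hypothetical and only illustrate the averaging step.

```python
def mean_calibration_factor(reference_pairs):
    """Average of the per-reference scale factors.

    reference_pairs: iterable of (ground_truth_length, model_length) tuples.
    """
    factors = [gt / model for gt, model in reference_pairs]
    if not factors:
        raise ValueError("at least one reference pair is required")
    return sum(factors) / len(factors)


# Three hypothetical references: (real length in mm, length in model units)
references = [(400.0, 2.0), (210.0, 1.0), (95.0, 0.5)]
factor = mean_calibration_factor(references)
print(factor)
```

With these sample values the individual factors are 200, 210, and 190, so the averaged factor is 200; a single noisy reference therefore shifts the final scale less than it would in a one-measurement calibration.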

Table 6,

Table 7,

Table 8 and

Table 9 report measurements and their relative errors, where the three largest distances have been provided as calibration values for each video. Such results confirm the trend that we had before in

Table 2,

Table 3,

Table 4 and

Table 5, which use a single measurement for calibration. This trend shows that MoReLab yields a lower average relative error than 3-Sweep and Metashape for the 3D reconstruction of industrial equipment and plants.

4.7.3. Limitations

From our evaluation, we have shown that our method performs better than other approaches for our scenario of industrial plants. However, users need to be accurate and precise when adding feature points and need to follow a rigorous methodology when performing measurements. Overall, all image-based 3D reconstruction methods, including ours, cannot achieve a precision of millimeters (at our scale) or less because of several factors (e.g., sensor resolution). Therefore, if an object is small, the error introduced by its tolerance is lower than the reconstruction error.

5. Conclusions

We have developed a user-interactive 3D reconstruction tool for modeling low-quality videos. MoReLab can handle long videos and is well-suited to model featureless objects in videos. It allows the user to load a video, extract frames, mark features, estimate the 3D structure of the video, add primitives (e.g., quads, cylinders, etc.), calibrate, and perform measurements. These functionalities lay the foundations of the software and present a general picture of its use. MoReLab allows users to estimate shapes that are typical of industrial equipment (e.g., cylinders, curved cylinders, etc.) and measure them. We evaluated our tool for several scenes and compared results against the automatic SfM software program, Metashape, and another modeling software, 3-Sweep [

16]. Such comparisons show that MoReLab can generate 3D reconstructions from low-quality videos with less relative error than these state-of-the-art approaches. This is fundamental in the industrial context when there is the need to obtain measurements of objects in difficult scenarios, e.g., in areas with chemical and radiation hazards.

In future work, we plan to extend MoReLab tools for modeling more complex industrial equipment and to show that we are not only more effective than other state-of-the-art approaches in terms of measurement errors but also more efficient in terms of the time that the user needs to spend to achieve an actual reconstruction.