Impact of the Dropping Function on Clustering of Packet Losses

Abstract

1. Introduction

2. The Model

2.1. Queueing System

2.2. Clustering of Losses
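As a minimal sketch of how loss clustering can be quantified, the snippet below computes a burst ratio from a recorded loss trace. It assumes that the quantity B reported in the tables of Section 4 is the burst ratio in its standard sense: the mean length of observed runs of consecutive losses divided by the mean run length expected under independent losses, which equals 1/(1 − L) for loss ratio L. The function name `burst_ratio` and the 0/1 trace encoding are illustrative choices, not taken from the article.

```python
from itertools import groupby


def burst_ratio(losses):
    """Burst ratio of a loss trace given as 0/1 flags (1 = packet lost).

    Assumed definition: mean observed loss-run length divided by the mean
    run length under independent losses, 1 / (1 - L), with L the loss ratio.
    """
    total = len(losses)
    lost = sum(losses)
    if total == 0 or lost == 0:
        raise ValueError("trace must contain at least one lost packet")

    # Count the runs of consecutive losses (groups whose flag is 1).
    runs = sum(1 for flag, _ in groupby(losses) if flag)

    mean_run_length = lost / runs
    loss_ratio = lost / total
    return mean_run_length * (1.0 - loss_ratio)


# Hypothetical trace: two bursts of two losses among ten packets.
trace = [0, 1, 1, 0, 0, 0, 1, 1, 0, 0]
b = burst_ratio(trace)
```

Under this definition, values above 1 indicate that losses cluster more than independent losses would, and values close to 1 indicate little clustering.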

3. Analysis

4. Examples

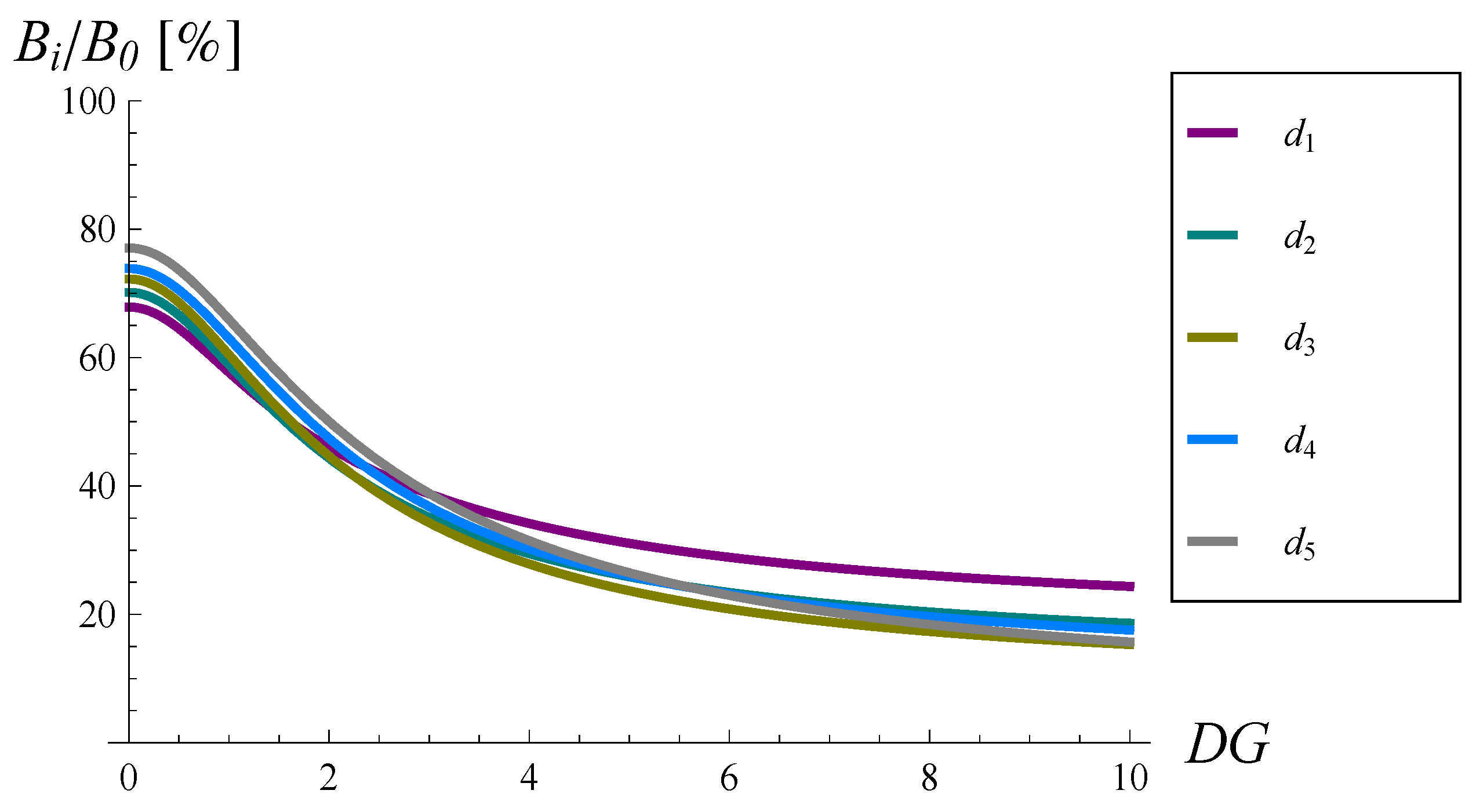

4.1. Impact of Shape

4.2. Impact of

4.3. Impact of

4.4. Verification via Simulations

5. Conclusions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

| Dropping Function | None | | | | | |
|---|---|---|---|---|---|---|
| B | 2.8764 | 1.3236 | 1.2746 | 1.2839 | 1.3634 | 1.4389 |
| B relative to None | 100% | 46% | 44% | 45% | 47% | 50% |
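The percentage row in the table above appears to express each value as a fraction of the value obtained without a dropping function. The following sketch checks that reading by recomputing the percentages from the tabulated numbers; the helper name `relative_percent` is hypothetical, and interpreting the first column as the baseline is an assumption drawn from the table layout.

```python
# Values copied from the table above. The first entry is the case without
# a dropping function; the remaining five are the dropping functions.
burst_ratios = [2.8764, 1.3236, 1.2746, 1.2839, 1.3634, 1.4389]


def relative_percent(values, baseline):
    """Express each value as a whole-number percentage of the baseline."""
    return [round(100 * v / baseline) for v in values]


percentages = relative_percent(burst_ratios, burst_ratios[0])
print(percentages)  # [100, 46, 44, 45, 47, 50]
```

The recomputed values match the tabulated row, which supports reading it as a reduction of B to roughly 44% to 50% of its value without a dropping function.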

| v | 0 | 0.1 | 0.5 | 1.5 | 5 | 10 | 50 | 100 | ∞ |
|---|---|---|---|---|---|---|---|---|---|
| B | 2.6906 | 2.015 | 1.3953 | 1.2674 | 1.4047 | 1.6360 | 2.5622 | 2.8186 | 2.8764 |

| | | | | | | | |
|---|---|---|---|---|---|---|---|
| no drop. fun. | 2.3660 | 2.7029 | 2.8764 | 2.6484 | 2.4036 | 2.2212 | 2.0819 |
| | 1.0383 | 1.1499 | 1.3236 | 1.3390 | 1.2904 | 1.2442 | 1.2064 |
| | 1.0947 | 1.1989 | 1.2746 | 1.2361 | 1.1794 | 1.1412 | 1.1160 |
| | 1.1566 | 1.2455 | 1.2839 | 1.2287 | 1.1616 | 1.1167 | 1.0888 |
| | 1.2006 | 1.3174 | 1.3634 | 1.2903 | 1.2049 | 1.1477 | 1.1122 |
| | 1.2999 | 1.4104 | 1.4389 | 1.3633 | 1.2700 | 1.1987 | 1.1488 |
| | 44% | 43% | 46% | 51% | 54% | 56% | 58% |
| | 46% | 44% | 44% | 47% | 49% | 51% | 54% |
| | 49% | 46% | 45% | 46% | 48% | 50% | 52% |
| | 51% | 49% | 47% | 49% | 50% | 52% | 53% |
| | 55% | 52% | 50% | 51% | 53% | 54% | 55% |

| | | | | | | |
|---|---|---|---|---|---|---|
| no drop. fun. | 1.5662 | 2.8764 | 5.3032 | 7.8608 | 10.2240 | 12.3063 |
| | 1.0629 | 1.3236 | 1.8112 | 2.2706 | 2.6652 | 2.9969 |
| | 1.0987 | 1.2746 | 1.5621 | 1.8400 | 2.0836 | 2.2907 |
| | 1.1313 | 1.2839 | 1.4761 | 1.6377 | 1.7716 | 1.8832 |
| | 1.1569 | 1.3634 | 1.6010 | 1.8218 | 2.0098 | 2.1609 |
| | 1.2071 | 1.4389 | 1.6685 | 1.8043 | 1.8837 | 1.9317 |

| System Parameters | Simulation | Theory |
|---|---|---|
| no drop. f., , | 5.3724 | 5.3719 |
| , , | 1.5649 | 1.5647 |
| , , | 1.2689 | 1.2688 |
| , , | 1.1316 | 1.1316 |
| , , | 1.0972 | 1.0972 |
| , , | 1.0924 | 1.0924 |
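To put the agreement between the two columns above in numbers, the sketch below computes the relative difference between the simulated and theoretical values in each row. The pairs are copied from the table; the choice of relative difference as the metric and the name `relative_difference` are illustrative assumptions rather than the article's own verification procedure.

```python
# (simulation, theory) pairs copied row by row from the table above.
pairs = [
    (5.3724, 5.3719),
    (1.5649, 1.5647),
    (1.2689, 1.2688),
    (1.1316, 1.1316),
    (1.0972, 1.0972),
    (1.0924, 1.0924),
]


def relative_difference(simulated, theoretical):
    """Absolute difference expressed as a fraction of the theoretical value."""
    return abs(simulated - theoretical) / theoretical


differences = [relative_difference(s, t) for s, t in pairs]
largest = max(differences)

for (s, t), d in zip(pairs, differences):
    print(f"simulation={s:.4f}  theory={t:.4f}  relative difference={d:.2e}")
```

For the tabulated values the largest relative difference is on the order of 0.01%, and three of the six rows agree to all four printed decimals.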

© 2022 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Chydzinski, A. Impact of the Dropping Function on Clustering of Packet Losses. Sensors 2022, 22, 7878. https://doi.org/10.3390/s22207878