1. Introduction

In real imaging processes, acquired images are commonly degraded by blur caused by the imaging equipment and the environment. To remove such blur, image restoration technology has attracted increasing attention from researchers and has developed into a significant branch of image processing. The ideal image degradation process can be described simply as:

$$Y = K \ast X + N, \tag{1}$$

where $Y$, $K$, $X$, and $N$ represent the observed blurry image, the blur kernel, the original clear image, and the noise, respectively, and $\ast$ denotes the convolution operator. In practice, the cause of the blur is unknown, i.e., prior information is lacking, so jointly solving for the blur kernel and the clear image is an ill-posed problem with infinitely many solutions. A traditional blind image deblurring algorithm therefore first estimates the blur kernel by optimizing an objective built from the image information and then applies a non-blind image deblurring algorithm to obtain the clear image.
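To make the degradation model in Equation (1) concrete, the following Python sketch synthesizes a blurry observation from a clear image. It assumes periodic (circular) boundary conditions, a normalized Gaussian kernel, and i.i.d. Gaussian noise. The helper names (`gaussian_kernel`, `psf2otf`, `degrade`) are illustrative only and are not part of the paper's method.

```python
import numpy as np


def gaussian_kernel(size: int, sigma: float) -> np.ndarray:
    """Normalized 2-D Gaussian blur kernel (non-negative, sums to one)."""
    ax = np.arange(size) - (size - 1) / 2.0
    g = np.exp(-(ax ** 2) / (2.0 * sigma ** 2))
    k = np.outer(g, g)
    return k / k.sum()


def psf2otf(k: np.ndarray, shape: tuple) -> np.ndarray:
    """Zero-pad k to `shape`, move its centre to pixel (0, 0), and return its 2-D FFT."""
    pad = np.zeros(shape)
    kh, kw = k.shape
    pad[:kh, :kw] = k
    pad = np.roll(pad, (-(kh // 2), -(kw // 2)), axis=(0, 1))
    return np.fft.fft2(pad)


def degrade(x: np.ndarray, k: np.ndarray, noise_std: float = 0.01, seed: int = 0) -> np.ndarray:
    """Simulate Y = K * X + N with periodic convolution and i.i.d. Gaussian noise N."""
    rng = np.random.default_rng(seed)
    blurred = np.real(np.fft.ifft2(np.fft.fft2(x) * psf2otf(k, x.shape)))
    return blurred + noise_std * rng.standard_normal(x.shape)


# Usage: blur a random 64 x 64 "image" without noise.
x = np.random.default_rng(1).random((64, 64))
k = gaussian_kernel(9, 1.5)
y = degrade(x, k, noise_std=0.0)
```

Periodic convolution is chosen here because it is the boundary model under which FFT-based updates are exact. Real images usually need extra edge handling, such as padding or edge tapering.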

Due to the complexity and diversity of real situations, parametric models [1,2] cannot accurately describe the blur kernel, so they are not adequate for image restoration. Since the work of Rudin et al. [3] in 1992, the theory of partial differential equations has gradually become an essential part of image restoration. Traditional image restoration models, which are based on probability theory and statistics, can be roughly divided into two frameworks: Maximum A Posteriori (MAP) [4,5,6,7] and Variational Bayesian (VB) [8,9,10,11,12]. Although the VB framework is more stable, most methods still choose the MAP framework because of its lower computational cost and algorithmic complexity. Levin et al. [13] proved that naive MAP approaches with a sparse derivative prior tend toward trivial solutions (the no-blur solution, i.e., a delta kernel together with the blurry image itself). Introducing appropriate conditions allows the MAP framework to avoid this problem. The MAP framework treats the joint estimation of the blur kernel and the clear image as a standard maximum a posteriori problem, expressed as:

$$(X, K) = \arg\max_{X, K}\ p(X, K \mid Y) = \arg\max_{X, K}\ p(Y \mid X, K)\, p(X)\, p(K), \tag{2}$$

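where $p(Y \mid X, K)$ is the noise distribution, and $p(X)$ and $p(K)$ are the prior distributions of the clear image and the blur kernel, respectively. Taking the negative logarithm of each term in Equation (2) yields the following regularized model:

$$\min_{X, K}\ \Phi(K \ast X - Y) + \lambda\, \phi_X(X) + \gamma\, \phi_K(K), \tag{3}$$

where $\Phi(\cdot)$ is the fidelity term, $\phi_X(\cdot)$ and $\phi_K(\cdot)$ are the regularization functions on $X$ and $K$, and $\lambda$ and $\gamma$ are the corresponding parameters.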
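As a worked example of the negative-logarithm step, assume the following, none of which is stated in the text: the noise $N$ is i.i.d. Gaussian with variance $\sigma^2$, and the priors take the Gibbs form $p(X) \propto \exp(-\lambda\,\phi_X(X))$ and $p(K) \propto \exp(-\gamma\,\phi_K(K))$. Here $\phi_X$, $\phi_K$, $\lambda$, and $\gamma$ are the regularizers and weights of the regularized model. Under these assumptions:

$$
\begin{aligned}
-\log p(X, K \mid Y) &= -\log p(Y \mid X, K) - \log p(X) - \log p(K) + \text{const} \\
&= \frac{1}{2\sigma^2}\,\|K \ast X - Y\|_2^2 + \lambda\,\phi_X(X) + \gamma\,\phi_K(K) + \text{const}.
\end{aligned}
$$

Multiply by $2\sigma^2$ and absorb the constant into the weights. Under these assumptions the fidelity term $\Phi$ is then the squared L2-norm. Other noise models lead to other fidelity terms; for example, Laplacian noise gives an L1 fidelity.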

As one of the most prominent features of images, edge information has long played an important role in image restoration algorithms. However, images that lack sharp edges cannot be deblurred reliably from edges alone. For such images, deblurring must rely primarily on prior knowledge that distinguishes clear images from blurry ones, and edge information serves only as an auxiliary cue. Channels are independent planes that store the color information of an image. Channel priors have attracted increasing attention since Pan et al. [14] showed that the distribution of dark channel pixels differs significantly between clear and blurry images. The dark channel prior performs well on natural, text, facial, and low-light images. Yan et al. [15] designed a restoration algorithm based on the extreme channel prior, which combines dark and bright channels, to handle images with insufficient dark channel pixels. Ge et al. [16] proposed a non-linear channel prior built on extreme channels. Experiments show that it effectively mitigates the performance degradation caused by a lack of extreme pixels. Meanwhile, local image features used as prior knowledge have also proven effective for image deblurring, e.g., the local maximum gradient prior [17], the local maximum difference prior [18], and the patch-wise minimal pixels prior [19].

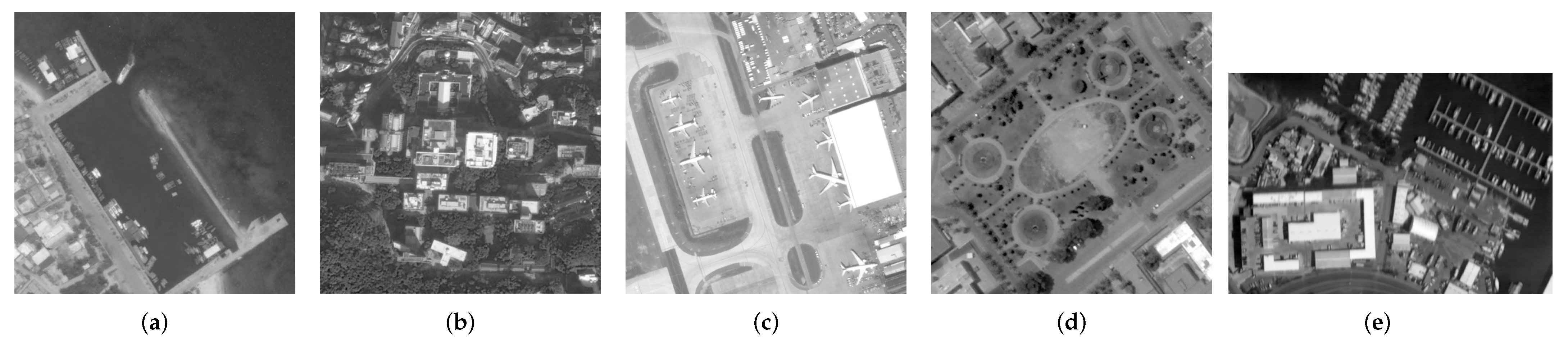

In this paper, inspired by the non-linear channel prior and the patch-wise minimal pixels prior, we design a new non-linear prior and develop the corresponding algorithm for remote sensing image restoration. To improve efficiency and reduce running time, our method does not traverse every pixel of the image when extracting features. Instead, it operates on the patches into which the image is divided. Adjacent patches partially overlap so that more detailed feature information is recorded. Then, similar to how extreme channels extract image features, we find the extreme pixels of each patch and collect them into two sets: the local minimum intensity set and the local maximum intensity set. As a non-linear prior, we impose a convex L1-norm constraint on the ratio of corresponding elements in the two local intensity sets. Analysis of more than 5000 remote sensing images shows that this OPNL prior favors clear images over blurry ones. The image restoration model based on the OPNL prior is difficult to solve directly. We therefore use the half-quadratic splitting method to decompose it into several subproblems that are easier to solve. Within the algorithm, the non-linear term is converted into a linear term by constructing a linear operator from the result of the previous iteration. The contributions of this research are as follows:

- (1) We propose a new prior based on the extreme pixels in overlapping patches, i.e., the OPNL prior. Analysis and tests on more than 5000 remote sensing images show that the OPNL prior favors clear images.
- (2) We design a new image restoration algorithm for remote sensing images based on the OPNL prior. It deblurs effectively while maintaining good convergence and stability.
- (3) Experimental results show that the proposed method outperforms the compared methods on blurry remote sensing images. Even for remote sensing images with complex textures and rich details, the method still obtains very competitive deblurring results.
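The construction of the OPNL prior described above can be sketched in Python. It extracts overlapping patches, forms the local minimum and maximum intensity sets, and takes the L1-norm of their element-wise ratio. This is a simplified illustration, not the authors' implementation. It assumes the following:

- the input is a single-channel image;
- patches are square, with hypothetical defaults of `patch=8` and `overlap=0.5`;
- a small `eps` guards against division by zero;
- border pixels not covered by a full patch are ignored.

```python
import numpy as np


def extract_patches(img: np.ndarray, patch: int, stride: int) -> np.ndarray:
    """Stack of overlapping patch x patch blocks taken every `stride` pixels."""
    h, w = img.shape
    return np.stack([
        img[i:i + patch, j:j + patch]
        for i in range(0, h - patch + 1, stride)
        for j in range(0, w - patch + 1, stride)
    ])


def opnl_like_value(img: np.ndarray, patch: int = 8, overlap: float = 0.5, eps: float = 1e-8) -> float:
    """L1-norm of (patch minimum / patch maximum) over overlapping patches (hypothetical sketch)."""
    stride = max(1, int(round(patch * (1.0 - overlap))))
    p = extract_patches(img, patch, stride)
    local_min = p.min(axis=(1, 2))   # local minimum intensity set
    local_max = p.max(axis=(1, 2))   # local maximum intensity set
    return float(np.abs(local_min / (local_max + eps)).sum())


def box_blur(img: np.ndarray, radius: int = 2) -> np.ndarray:
    """Periodic (2r+1) x (2r+1) mean filter, used here only to create a blurry test image."""
    out = np.zeros_like(img)
    for di in range(-radius, radius + 1):
        for dj in range(-radius, radius + 1):
            out += np.roll(img, (di, dj), axis=(0, 1))
    return out / (2 * radius + 1) ** 2


# Usage: compare the prior value of a random "clear" image and its blurred version.
clear = np.random.default_rng(0).random((64, 64))
blurry = box_blur(clear)
values = (opnl_like_value(clear), opnl_like_value(blurry))
```

In this toy example, blurring raises each patch's minimum and lowers its maximum. The ratio, and hence the prior value, therefore grows, which is consistent with the prior favoring clear images under minimization.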

The outline of the paper is as follows. Section 2 reviews the progress made in blind image deblurring over the years. Section 3 elaborates on the OPNL prior. Section 4 establishes the image restoration model and designs the corresponding optimization algorithm. Section 5 presents the experimental results of our algorithm. Section 6 quantitatively analyzes the performance of our method. Section 7 concludes the paper.

4. Solving Process of Image Deblurring Algorithm

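Under the MAP framework, we introduce a new prior, i.e., the OPNL prior, into the image deblurring model and develop a corresponding solution algorithm. The objective function of our algorithm is:

$$\min_{X, K}\ \|K \ast X - Y\|_2^2 + \alpha \left\| \frac{M_i(X)}{M_a(X)} \right\|_1 + \beta \|\nabla X\|_0 + \gamma \|K\|_2^2, \tag{12}$$

where $M_i(\cdot)$ and $M_a(\cdot)$ extract the local minimum and local maximum intensity sets of the overlapping patches, the division is element-wise, and $\alpha$, $\beta$, and $\gamma$ are the weights of the corresponding regularization terms. The first term is the fidelity term. It keeps the blurred estimate consistent with the observed image and thereby limits the influence of noise on the restoration result. The remaining terms are the OPNL prior term, the L0-norm regularization term on the image gradient, and an L2-norm constraint that smooths the blur kernel. Solving the objective function directly is impractical. We therefore use the projected alternating minimization (PAM) method to decompose the model into sub-problems for the clear image and the blur kernel. We replace $M_a(X)$ in the OPNL prior term with $M_a(\tilde{X})$, where $\tilde{X}$ is the image estimated in the previous iteration:

$$\min_{X}\ \|K \ast X - Y\|_2^2 + \alpha \left\| \frac{M_i(X)}{M_a(\tilde{X})} \right\|_1 + \beta \|\nabla X\|_0, \tag{13}$$

$$\min_{K}\ \|K \ast X - Y\|_2^2 + \gamma \|K\|_2^2. \tag{14}$$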

Within the multi-scale image pyramid, iterating these two sub-problems yields the estimated blur kernel. Then, taking the estimated blur kernel and the blurry image as inputs, a non-blind image deblurring algorithm restores the clear image.

4.1. Estimating the Latent Image

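To further simplify the problem, we apply the half-quadratic splitting method and introduce auxiliary variables to separate the OPNL prior term and the gradient regularization term. Equation (13) can be rewritten as:

$$\min_{X, t, r}\ \|K \ast X - Y\|_2^2 + \alpha \left\| \frac{t}{M_a(\tilde{X})} \right\|_1 + \beta \|r\|_0 + \mu \|M_i(X) - t\|_2^2 + \nu \|\nabla X - r\|_2^2, \tag{15}$$

where $\mu$ and $\nu$ are the penalty parameters, and $t$ and $r$ are auxiliary variables. As $\mu$ and $\nu$ grow to infinity, Equations (13) and (15) become equivalent. Each variable is then solved alternately while the others are fixed. With $X$ fixed (starting from the blurry image), the auxiliary variable $t$ is solved by:

$$\min_{t}\ \alpha \left\| \frac{t}{M_a(\tilde{X})} \right\|_1 + \mu \|M_i(X) - t\|_2^2. \tag{16}$$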

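The relationship between the non-linear operators ($M_i$ and $M_a$) and the pixels of the original image is expressed by constructing the mapping matrices ($I$ and $A$), that is:

$$M_i(X) = I\mathbf{x}, \tag{17}$$

$$M_a(X) = A\mathbf{x}, \tag{18}$$

where $\mathbf{x}$ is the vector form of $X$.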

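Following Ge et al. [16], we compute the sparse mapping matrix explicitly, and Equation (16) is rewritten as follows:

$$\min_{\mathbf{t}}\ \alpha \left\| \frac{\mathbf{t}}{\mathbf{m}_a} \right\|_1 + \mu \|I\mathbf{x} - \mathbf{t}\|_2^2, \tag{19}$$

where $\mathbf{t}$, $\mathbf{x}$, and $\mathbf{m}_a$ are the vector forms of $t$, $X$, and $M_a(\tilde{X})$, respectively, and the division is element-wise. Further, we rewrite Equation (19) as: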

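This is a classic convex L1-regularized problem, which we solve with the fast iterative shrinkage-thresholding algorithm (FISTA) [46]. Its shrinkage operator is:

$$\mathcal{S}_{\theta}(z) = \operatorname{sign}(z)\, \max(|z| - \theta, 0), \tag{21}$$

applied element-wise with threshold $\theta$. The solution process for $t$ is shown in Algorithm 1.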
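The mapping matrices can be illustrated with a short sketch. It assumes, as an interpretation not stated in the text, that each row of $I$ (or $A$) holds a single 1 at the position of the minimum (or maximum) pixel of one patch. Multiplying the vectorized image by the matrix then returns the local extreme intensity set. The function `extreme_mapping_matrix` and its parameters are hypothetical names; `scipy.sparse.csr_matrix` stores the matrix sparsely.

```python
import numpy as np
from scipy.sparse import csr_matrix


def extreme_mapping_matrix(img: np.ndarray, patch: int, stride: int, pick=np.argmin) -> csr_matrix:
    """Hypothetical sparse 0/1 selection matrix S with (S @ img.ravel())[p] = extreme pixel of patch p.

    pick=np.argmin plays the role of I (local minima); pick=np.argmax plays the role of A (local maxima).
    """
    h, w = img.shape
    rows, cols = [], []
    patch_index = 0
    for i in range(0, h - patch + 1, stride):
        for j in range(0, w - patch + 1, stride):
            block = img[i:i + patch, j:j + patch]
            bi, bj = np.unravel_index(pick(block), block.shape)  # position inside the patch
            rows.append(patch_index)
            cols.append((i + bi) * w + (j + bj))                  # row-major index in img.ravel()
            patch_index += 1
    data = np.ones(len(rows))
    return csr_matrix((data, (rows, cols)), shape=(patch_index, h * w))


# Usage: 32 x 32 image, 8 x 8 patches with 50% overlap (stride 4), giving 7 x 7 = 49 patches.
img = np.random.default_rng(0).random((32, 32))
I_mat = extreme_mapping_matrix(img, patch=8, stride=4, pick=np.argmin)
A_mat = extreme_mapping_matrix(img, patch=8, stride=4, pick=np.argmax)
local_min = I_mat @ img.ravel()
local_max = A_mat @ img.ravel()
```

The selected positions are found from the image used to build the matrix. The matrix therefore acts as a fixed linear operator only while that image is held fixed. This mirrors how the text uses a previous estimate to linearize the non-linear term.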

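Algorithm 1. Solving the auxiliary variable t in (20).

- Input: the terms of Equation (20), the maximum iteration number M, and an initial value of t.
- While the iteration number is below M, perform a FISTA update with the shrinkage operator in (21).
- End while.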
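The following Python sketch applies FISTA with the shrinkage operator $\mathcal{S}_\theta$ to a $t$-sub-problem of the form $\min_t \alpha\|t / m_a\|_1 + \mu\|v - t\|_2^2$. Here $v$ stands for the vector form of $M_i(X)$ and $m_a$ for that of $M_a(\tilde X)$. The exact form of Equation (20) is not reproduced in this text, so this form is an assumption. The names `fista_t_step`, `v`, and `m_a` are hypothetical. The default of 500 iterations follows the setting reported in Section 4.3.

```python
import numpy as np


def soft_threshold(z: np.ndarray, theta) -> np.ndarray:
    """Element-wise shrinkage operator S_theta(z) = sign(z) * max(|z| - theta, 0)."""
    return np.sign(z) * np.maximum(np.abs(z) - theta, 0.0)


def fista_t_step(v, m_a, alpha, mu, max_iter=500, tol=1e-10, eps=1e-8):
    """FISTA for min_t alpha*||t / m_a||_1 + mu*||v - t||_2^2 (assumed form of the t-sub-problem)."""
    lipschitz = 2.0 * mu                         # the gradient 2*mu*(t - v) is 2*mu-Lipschitz
    theta = alpha / (lipschitz * (m_a + eps))    # per-element threshold of the weighted-L1 prox
    t_prev = v.copy()
    z = v.copy()
    s = 1.0
    for _ in range(max_iter):
        grad = 2.0 * mu * (z - v)
        t = soft_threshold(z - grad / lipschitz, theta)   # proximal gradient step
        s_next = (1.0 + np.sqrt(1.0 + 4.0 * s * s)) / 2.0
        z = t + ((s - 1.0) / s_next) * (t - t_prev)       # Nesterov extrapolation
        converged = np.linalg.norm(t - t_prev) <= tol
        t_prev, s = t, s_next
        if converged:
            break
    return t_prev


# Usage with arbitrary inputs.
rng = np.random.default_rng(0)
v = rng.normal(size=100)
m_a = rng.uniform(0.5, 1.0, size=100)
t = fista_t_step(v, m_a, alpha=0.1, mu=1.0)
```

For this separable form, the quadratic term has an identity Hessian. The first proximal-gradient step therefore already returns the closed-form minimizer $\mathcal{S}_\theta(v)$ with $\theta = \alpha / (2\mu m_a)$, and the loop stops at the second iteration. The extrapolation step only pays off when the smooth term couples the entries of $t$.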

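Next, we solve for the auxiliary variable $r$:

$$\min_{r}\ \beta \|r\|_0 + \nu \|\nabla X - r\|_2^2. \tag{22}$$

The solution of Equation (22) is:

$$r = \begin{cases} \nabla X, & |\nabla X|^2 \ge \beta / \nu, \\ 0, & \text{otherwise}, \end{cases} \tag{23}$$

applied element-wise.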

After solving for the auxiliary variables, we solve for the clear image $X$ by:

$$\min_{X}\ \|K \ast X - Y\|_2^2 + \mu \|M_i(X) - t\|_2^2 + \nu \|\nabla X - r\|_2^2. \tag{24}$$

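Rewriting all terms in matrix-vector form gives:

$$\min_{\mathbf{x}}\ \|T_K \mathbf{x} - \mathbf{y}\|_2^2 + \mu \|I\mathbf{x} - \mathbf{t}\|_2^2 + \nu \|\nabla \mathbf{x} - \mathbf{r}\|_2^2, \tag{25}$$

where $T_K$ is the Toeplitz (convolution) matrix of the blur kernel, and $\mathbf{y}$ and $\mathbf{r}$ are the vector forms of $Y$ and $r$. Equation (25) appears to be a simple least-squares problem, but it cannot be solved directly with the Fast Fourier Transform (FFT). The reason is that the mapping matrix $I$ is not a convolution operator and is therefore not diagonalized by the FFT. We therefore introduce a further auxiliary variable, weighted by its own penalty parameter, which gives Equation (26). We decompose Equation (26) into two sub-problems to solve for the clear image and the auxiliary variable, respectively: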

Both sub-problems have closed-form solutions. The solution of Equation (27) is:

Equation (28) is solved by FFT:

where $\mathcal{F}(\cdot)$, $\mathcal{F}^{-1}(\cdot)$, and $\overline{\mathcal{F}(\cdot)}$ denote the FFT, the inverse FFT, and the complex conjugate of the FFT, respectively. The above solution process is shown in Algorithm 2.
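The $r$-update of Equation (22) has the well-known hard-thresholding solution used in L0 gradient minimization. The sketch below makes two assumptions that the text does not specify: periodic forward differences, and joint treatment of the horizontal and vertical gradient components at each pixel (one common convention). It keeps a gradient vector only when $\beta \le \nu |\nabla X|^2$. The parameter values in the usage lines are arbitrary.

```python
import numpy as np


def forward_gradient(x):
    """Periodic forward differences along columns (horizontal) and rows (vertical)."""
    return np.roll(x, -1, axis=1) - x, np.roll(x, -1, axis=0) - x


def solve_r(gh, gv, beta, nu):
    """Per-pixel minimizer of beta*[r != 0] + nu*|grad - r|^2 (hard thresholding).

    Keeping r = grad costs beta; setting r = 0 costs nu*|grad|^2, so the gradient
    vector is kept exactly when beta <= nu*|grad|^2.
    """
    keep = nu * (gh ** 2 + gv ** 2) >= beta
    return np.where(keep, gh, 0.0), np.where(keep, gv, 0.0)


# Usage on a random image with arbitrary beta and nu.
x = np.random.default_rng(0).random((32, 32))
gh, gv = forward_gradient(x)
rh, rv = solve_r(gh, gv, beta=0.02, nu=1.0)
```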

Algorithm 2. Solving the latent image X.
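The exact forms of Equations (26) to (29) are not reproduced in this text. The sketch below illustrates why an auxiliary variable enables an FFT solution. It assumes, hypothetically, that the image sub-problem takes the form $\min_x \|k \ast x - y\|_2^2 + \nu(\|\nabla_h x - r_h\|_2^2 + \|\nabla_v x - r_v\|_2^2) + \omega\|x - u\|_2^2$, where $u$ is the auxiliary variable and $\omega$ its penalty parameter. Under periodic boundary conditions, every operator in this objective is a convolution, so the FFT diagonalizes the normal equations. The names `solve_x_fft`, `u`, and `omega` are hypothetical.

```python
import numpy as np


def psf2otf(k, shape):
    """Zero-pad kernel k to `shape`, move its centre to (0, 0), and return its 2-D FFT."""
    pad = np.zeros(shape)
    kh, kw = k.shape
    pad[:kh, :kw] = k
    pad = np.roll(pad, (-(kh // 2), -(kw // 2)), axis=(0, 1))
    return np.fft.fft2(pad)


def diff_otfs(shape):
    """FFTs of periodic forward differences: (Dh x)[i, j] = x[i, j+1] - x[i, j], likewise Dv along rows."""
    dh = np.zeros(shape)
    dh[0, 0], dh[0, -1] = -1.0, 1.0
    dv = np.zeros(shape)
    dv[0, 0], dv[-1, 0] = -1.0, 1.0
    return np.fft.fft2(dh), np.fft.fft2(dv)


def apply(otf, x):
    """Periodic convolution of x with the filter whose FFT is `otf`."""
    return np.real(np.fft.ifft2(np.fft.fft2(x) * otf))


def objective(x, y, k, rh, rv, u, nu, omega):
    """Assumed image sub-problem objective (hypothetical form, see lead-in)."""
    K = psf2otf(k, y.shape)
    Dh, Dv = diff_otfs(y.shape)
    return (np.sum((apply(K, x) - y) ** 2)
            + nu * (np.sum((apply(Dh, x) - rh) ** 2) + np.sum((apply(Dv, x) - rv) ** 2))
            + omega * np.sum((x - u) ** 2))


def solve_x_fft(y, k, rh, rv, u, nu, omega):
    """Closed-form minimizer of `objective`: the normal equations become a per-frequency division."""
    K = psf2otf(k, y.shape)
    Dh, Dv = diff_otfs(y.shape)
    fft2 = np.fft.fft2
    numerator = (np.conj(K) * fft2(y)
                 + nu * (np.conj(Dh) * fft2(rh) + np.conj(Dv) * fft2(rv))
                 + omega * fft2(u))
    denominator = np.abs(K) ** 2 + nu * (np.abs(Dh) ** 2 + np.abs(Dv) ** 2) + omega
    return np.real(np.fft.ifft2(numerator / denominator))


# Usage with arbitrary inputs: a 5 x 5 box kernel and random auxiliary variables.
rng = np.random.default_rng(0)
y = rng.random((32, 32))
u = rng.random((32, 32))
rh, rv = rng.normal(0.0, 0.1, (2, 32, 32))
k = np.ones((5, 5)) / 25.0
x_hat = solve_x_fft(y, k, rh, rv, u, nu=0.5, omega=0.1)
```

Because $\omega > 0$ makes the assumed objective strictly convex, the closed-form point is its unique minimizer. Any small perturbation of `x_hat` should therefore increase the objective.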

4.2. Estimating the Blur Kernel

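As proposed in the literature [14,15,16,17,18,19,25,31,32], a more accurate blur kernel can be estimated by replacing the image intensity term in Equation (14) with the image gradient term. Equation (14) is therefore modified as follows:

$$\min_{K}\ \|K \ast \nabla X - \nabla Y\|_2^2 + \gamma \|K\|_2^2. \tag{30}$$

The blur kernel can then be estimated with the FFT:

$$K = \mathcal{F}^{-1}\left( \frac{\sum_{d \in \{h, v\}} \overline{\mathcal{F}(\nabla_d X)}\, \mathcal{F}(\nabla_d Y)}{\sum_{d \in \{h, v\}} \overline{\mathcal{F}(\nabla_d X)}\, \mathcal{F}(\nabla_d X) + \gamma} \right), \tag{31}$$

where $\nabla_h$ and $\nabla_v$ are the horizontal and vertical gradient operators, and the division is element-wise.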

Note that the estimated blur kernel $K$ must be made non-negative and normalized: negative entries are set to zero, and the entries are rescaled to sum to one. The solution process is shown in Algorithm 3.

Algorithm 3. Estimating the blur kernel K.

- Input: blurry image Y.
- Initialize K with the result from the coarser level.
- While the iteration limit of the current level has not been reached, do:
  - Solve for X using Algorithm 2.
  - Solve for K using Equation (31).
- End while.
- Output: blur kernel K and intermediate latent image X.
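A minimal Python sketch of the kernel update in Equation (31), followed by the non-negativity and normalization step, is given below. It assumes periodic boundary conditions and forward-difference gradients, and it crops the full-size inverse FFT to the kernel support. The names `estimate_kernel` and `otf2psf`, and the value of `gamma`, are illustrative choices, not the paper's settings.

```python
import numpy as np


def psf2otf(k, shape):
    """Zero-pad kernel k to `shape`, move its centre to (0, 0), and return its 2-D FFT."""
    pad = np.zeros(shape)
    kh, kw = k.shape
    pad[:kh, :kw] = k
    pad = np.roll(pad, (-(kh // 2), -(kw // 2)), axis=(0, 1))
    return np.fft.fft2(pad)


def otf2psf(otf, size):
    """Inverse of psf2otf for a square size x size kernel: inverse FFT, re-centre, crop."""
    full = np.real(np.fft.ifft2(otf))
    full = np.roll(full, (size // 2, size // 2), axis=(0, 1))
    return full[:size, :size]


def forward_gradient(x):
    """Periodic forward differences (horizontal, vertical)."""
    return np.roll(x, -1, axis=1) - x, np.roll(x, -1, axis=0) - x


def estimate_kernel(x, y, size, gamma):
    """Fourier-domain minimizer of sum_d ||k * grad_d x - grad_d y||^2 + gamma*||k||^2,
    cropped to size x size, then made non-negative and normalized to unit sum."""
    numerator = np.zeros(x.shape, dtype=complex)
    denominator = np.zeros(x.shape)
    for gx, gy in zip(forward_gradient(x), forward_gradient(y)):
        fx, fy = np.fft.fft2(gx), np.fft.fft2(gy)
        numerator += np.conj(fx) * fy
        denominator += np.abs(fx) ** 2
    k = otf2psf(numerator / (denominator + gamma), size)
    k = np.maximum(k, 0.0)   # non-negativity
    return k / k.sum()       # normalization


# Usage: recover a known 9 x 9 Gaussian kernel from a noise-free, periodically blurred image.
rng = np.random.default_rng(0)
x = rng.random((64, 64))
ax = np.arange(9) - 4.0
g = np.exp(-ax ** 2 / (2.0 * 1.5 ** 2))
k_true = np.outer(g, g) / np.outer(g, g).sum()
y = np.real(np.fft.ifft2(np.fft.fft2(x) * psf2otf(k_true, x.shape)))
k_est = estimate_kernel(x, y, size=9, gamma=1e-4)
```

The gradient filters have zero response at the zero frequency, so the unnormalized estimate loses its DC component. The final normalization restores the unit sum, which is one practical reason the normalization step is needed.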

4.3. Details about the Algorithm

This section describes the parameter settings of our algorithm. The algorithm uses a coarse-to-fine image pyramid with a fixed down-sampling factor, and each level runs 5 loops. Within each level, the algorithm performs image estimation, blur kernel estimation, and normalization in turn. The blur kernel estimated at the current level is then up-sampled and passed to the next level. The solution process of the algorithm is shown in Figure 2. Based on extensive experiments, we set the relevant parameters and the patch overlap rate to fixed empirical values, and the maximum number of loops in Algorithm 1 is set to 500. None of these parameter values is unique, and all can be adjusted as needed. Finally, using the blurry image and the estimated blur kernel as inputs, a non-blind image deblurring algorithm yields the clear image.
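The coarse-to-fine schedule described in this section can be sketched as follows. Several choices are assumptions made for illustration, because the text does not reproduce its down-sampling factor:

- a down-sampling factor of $1/\sqrt{2}$;
- a minimum kernel size of 3;
- delta-kernel initialization;
- bilinear kernel up-sampling.

`refine` is a hypothetical callback standing in for one loop of image estimation (Algorithm 2) and kernel estimation (Equation (31)). Only the kernel sizes are tracked here; the image would be resized by the same factor at each level.

```python
import math

import numpy as np


def kernel_sizes(kernel_size, factor=math.sqrt(0.5), min_size=3):
    """Odd kernel sizes from the coarsest to the finest pyramid level (hypothetical schedule)."""
    sizes = [kernel_size]
    while True:
        nxt = int(round(sizes[-1] * factor))
        nxt += 1 - nxt % 2                    # force an odd size
        if nxt < min_size or nxt >= sizes[-1]:
            break
        sizes.append(nxt)
    return sizes[::-1]


def resize_square(a, n):
    """Bilinear resize of a square array to n x n using separable np.interp."""
    src = np.arange(a.shape[0])
    dst = np.linspace(0.0, a.shape[0] - 1.0, n)
    cols = np.stack([np.interp(dst, src, a[:, j]) for j in range(a.shape[1])], axis=1)  # n x m
    return np.stack([np.interp(dst, src, cols[i]) for i in range(n)], axis=0)           # n x n


def coarse_to_fine(kernel_size, refine, loops_per_level=5):
    """Run `refine(k, level)` loops_per_level times per level, up-sampling k between levels."""
    sizes = kernel_sizes(kernel_size)
    k = np.zeros((sizes[0], sizes[0]))
    k[sizes[0] // 2, sizes[0] // 2] = 1.0     # delta initialization at the coarsest level (assumption)
    for level, size in enumerate(sizes):
        if k.shape[0] != size:
            k = np.maximum(resize_square(k, size), 0.0)   # up-sample the coarser estimate
            k /= k.sum()                                  # keep it a normalized kernel
        for _ in range(loops_per_level):
            k = refine(k, level)                          # image + kernel estimation placeholder
    return k


# Usage with an identity `refine` that only counts calls.
calls = {"n": 0}


def identity_refine(k, level):
    calls["n"] += 1
    return k


k_final = coarse_to_fine(25, identity_refine)
```

With a 25 x 25 target kernel, this schedule visits six levels, so the placeholder is called 5 x 6 = 30 times.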

6. Analysis and Discussion

This section analyzes the performance of the proposed algorithm, including the effectiveness of the OPNL prior, the influence of the hyper-parameters, algorithm convergence, computational speed, and algorithm limitations. All tests are based on the Levin dataset [13], which consists of four images and eight blur kernels. To ensure a fair comparison, we use the same number of iterations, the same estimated blur kernel size, and the same non-blind restoration method for all algorithms. The quantitative evaluation metrics are the Error-Ratio [13], Peak Signal-to-Noise Ratio (PSNR), Structural Similarity (SSIM) [47], and Kernel Similarity [50]. All experiments are run on a computer with an Intel Core i5-1035G1 CPU and 8 GB RAM.
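For reference, the sketch below implements three of these metrics:

- PSNR;
- one common definition of kernel similarity: the maximum normalized cross-correlation between the estimated and ground-truth kernels over all relative shifts;
- the error ratio of Levin et al.: the sum of squared differences of the image deconvolved with the estimated kernel, divided by that of the image deconvolved with the ground-truth kernel.

We assume, but have not verified from the text, that references [13] and [50] use these definitions. SSIM is omitted here; it is available, for example, as `skimage.metrics.structural_similarity`.

```python
import numpy as np
from scipy.signal import correlate2d


def psnr(estimate, reference, peak=1.0):
    """Peak signal-to-noise ratio in dB for images scaled to [0, peak]."""
    mse = np.mean((np.asarray(estimate, float) - np.asarray(reference, float)) ** 2)
    return float("inf") if mse == 0 else float(10.0 * np.log10(peak ** 2 / mse))


def kernel_similarity(k_est, k_true):
    """Maximum normalized cross-correlation over all relative shifts (1.0 means identical up to a shift)."""
    corr = correlate2d(k_est, k_true, mode="full")
    return float(corr.max() / (np.linalg.norm(k_est) * np.linalg.norm(k_true)))


def error_ratio(x_with_est_kernel, x_with_true_kernel, x_true):
    """SSD of the result with the estimated kernel over SSD of the result with the true kernel."""
    num = np.sum((x_with_est_kernel - x_true) ** 2)
    den = np.sum((x_with_true_kernel - x_true) ** 2)
    return float(num / den)


# Usage: the same 3 x 3 kernel placed at two different offsets inside 7 x 7 arrays.
k = np.outer([1.0, 2.0, 1.0], [1.0, 2.0, 1.0]) / 16.0
a = np.zeros((7, 7))
a[0:3, 0:3] = k
b = np.zeros((7, 7))
b[3:6, 2:5] = k
ks = kernel_similarity(a, b)
```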

6.1. Effectiveness of the OPNL Prior

In theory, the OPNL prior favors clear images in the minimization problem, i.e., a restoration model based on the OPNL prior can perform image deblurring. However, the practical performance of the OPNL prior still needs to be evaluated quantitatively.

Figure 13 compares our algorithm with Dark, L0, PMP, and NLC. Except for L0, the cumulative Error-Ratio results of the methods differ little, but our algorithm achieves higher PSNR and SSIM. In summary, both the analysis and the experiments indicate that the OPNL prior is effective for restoring degraded images.

6.2. Effect of Hyper-Parameters

The proposed restoration model has five main hyper-parameters: four model parameters and the patch overlap rate $f$. To explore how changes in the hyper-parameters affect the results, we adopt a single-variable method. We change only one parameter at a time and compute the kernel similarity between the estimated blur kernel and the ground-truth kernel. The experimental results are shown in Figure 14. Extensive experiments show that the algorithm is stable: its results are not significantly affected by large changes in the hyper-parameters.

6.3. Algorithm Convergence and Running Time

The projected alternating minimization (PAM) algorithm aims to find the optimal solution of the image restoration model. Reference [6] argues that delaying the normalization of the blur kernel during the PAM iterations makes total-variation-based algorithms converge. Compared with reference [6], the OPNL prior and the L0-norm of the image gradient in our algorithm increase the complexity of the model. The PAM algorithm and the half-quadratic splitting method simplify the restoration model into several sub-problems, each of which has a convergent solution. However, the convergence of the overall restoration model still needs to be verified. On the Levin dataset, we test convergence quantitatively at the optimal scale of the image pyramid. Over the iterations, we compute the mean value of the objective function in Equation (12) and the mean kernel similarity. The results in Figure 15 show that our algorithm converges after 20 iterations and that the kernel similarity stabilizes after 25 iterations, both of which support the effectiveness of our method.

We also measure the running time of each algorithm, as shown in Table 5. Overall, our algorithm obtains more competitive results in less time.

6.4. Algorithm Limitations

Although our algorithm performs well, it still has limitations. First, it cannot handle blur and noise simultaneously. Its deblurring ability decreases when the image is severely contaminated by noise, such as stripe noise caused by the non-uniform response of the detector, so image restoration then requires additional denoising steps. Second, the algorithm builds an image pyramid and applies the PAM algorithm, the half-quadratic splitting method, and other steps at each level. This structure increases the computational complexity and running time, a common problem with traditional algorithms. Therefore, future research will focus on designing faster and more widely applicable algorithms based on the OPNL prior, to enable practical engineering applications.