Affordable Artificial Intelligence-Assisted Machine Supervision System for the Small and Medium-Sized Manufacturers

Abstract

1. Introduction

1.1. Background

1.2. Related Works

1.3. Contribution

- A novel AI-assisted method for anomaly detection and state change detection;

- A significant reduction in computational difficulty, together with clearer logic and easier system maintenance;

- Deployment of cloud computing technology and development of the system on embedded devices, making AI-based Smart Manufacturing practices affordable for SMMs to implement;

- Incorporation of human-computer interaction monitoring to ensure that each operator action has the expected impact on the machine state, which has safety and cybersecurity implications.
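The state change and interaction checks named in the contributions above can be illustrated with a minimal sketch. It assumes a small table of allowed state transitions and a mapping from operator actions to the state each action should produce. All state names, action names, and function names here are hypothetical and are not taken from the system itself.

```python
# Hypothetical states and transitions, for illustration only;
# the actual machine state transition diagram is not reproduced here.
ALLOWED_TRANSITIONS = {
    "idle": {"heating"},
    "heating": {"printing", "idle"},
    "printing": {"idle"},
}

# Hypothetical mapping from an operator action to the state it should lead to.
EXPECTED_AFTER_ACTION = {
    "start_job": "heating",
    "cancel_job": "idle",
}


def check_transition(previous, current):
    """Return True if moving from `previous` to `current` is allowed."""
    if previous == current:
        return True  # no state change, nothing to flag
    return current in ALLOWED_TRANSITIONS.get(previous, set())


def check_action(action, observed_state):
    """Return True if the observed state is the one the action should cause."""
    expected = EXPECTED_AFTER_ACTION.get(action)
    return expected is not None and observed_state == expected


# A jump from "idle" straight to "printing" is not in the table, so it is flagged.
print(check_transition("idle", "printing"))
# The "start_job" action is expected to lead to "heating".
print(check_action("start_job", "heating"))
```

In this sketch an unknown action is treated as a mismatch rather than silently accepted, which is the more conservative choice when the check has safety implications.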

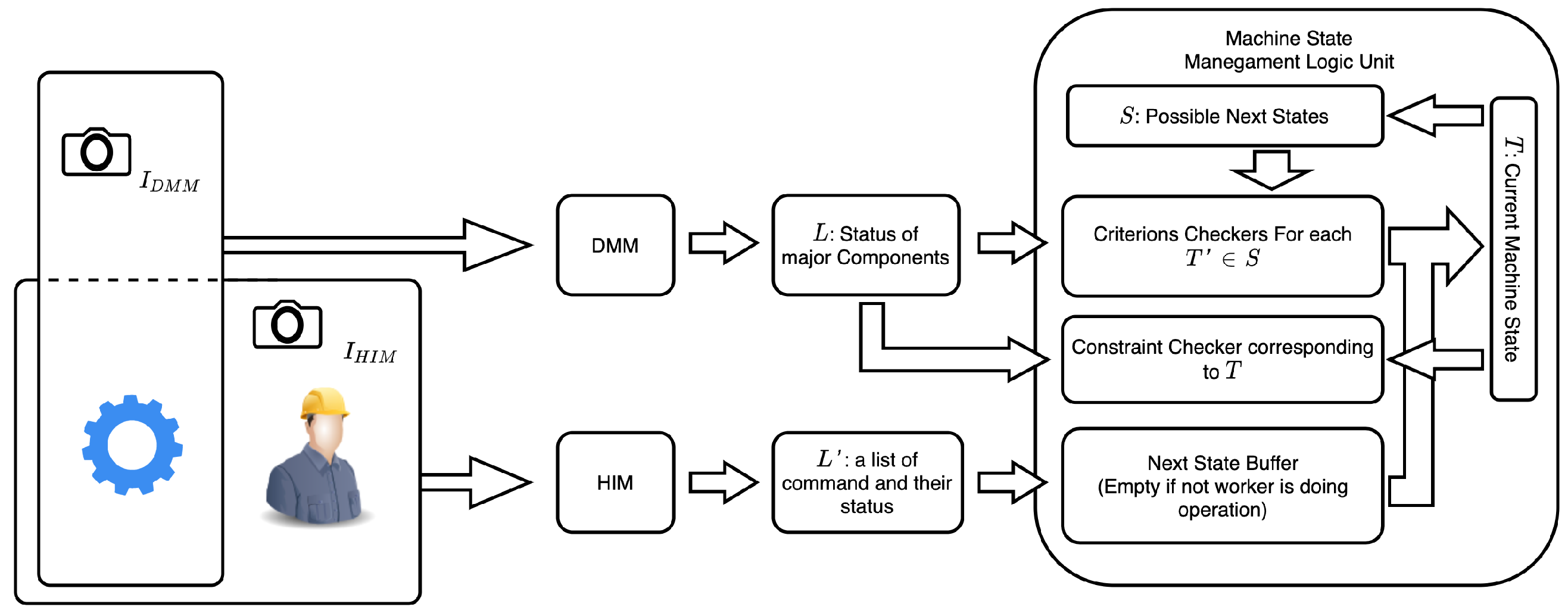

2. Framework

2.1. Machines Applicable to AIMS

2.1.1. Identification of Major Working Components

2.1.2. Machine State Transition Diagram

2.1.3. Working Condition Analysis

2.2. Direct Machine Monitoring (DMM)

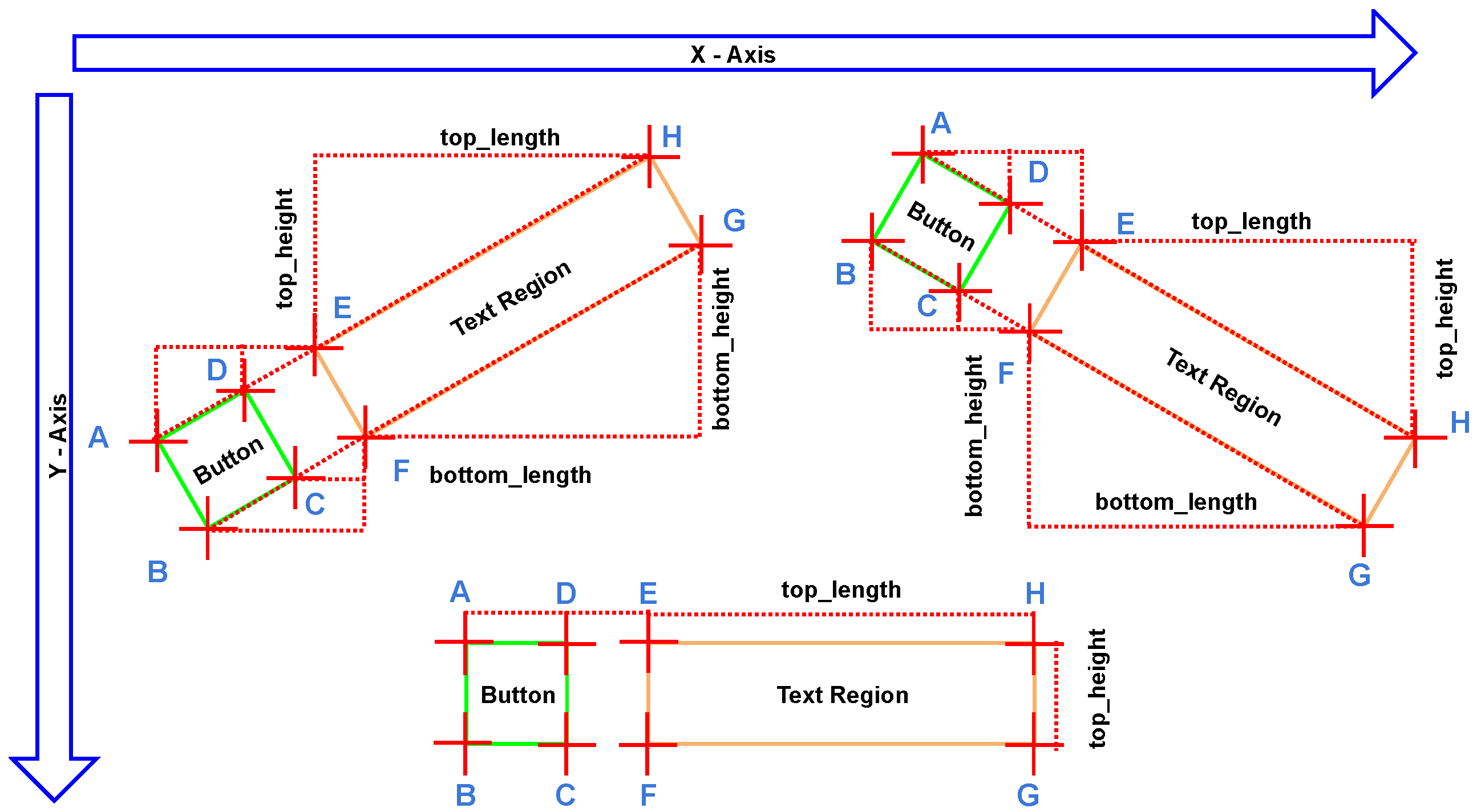

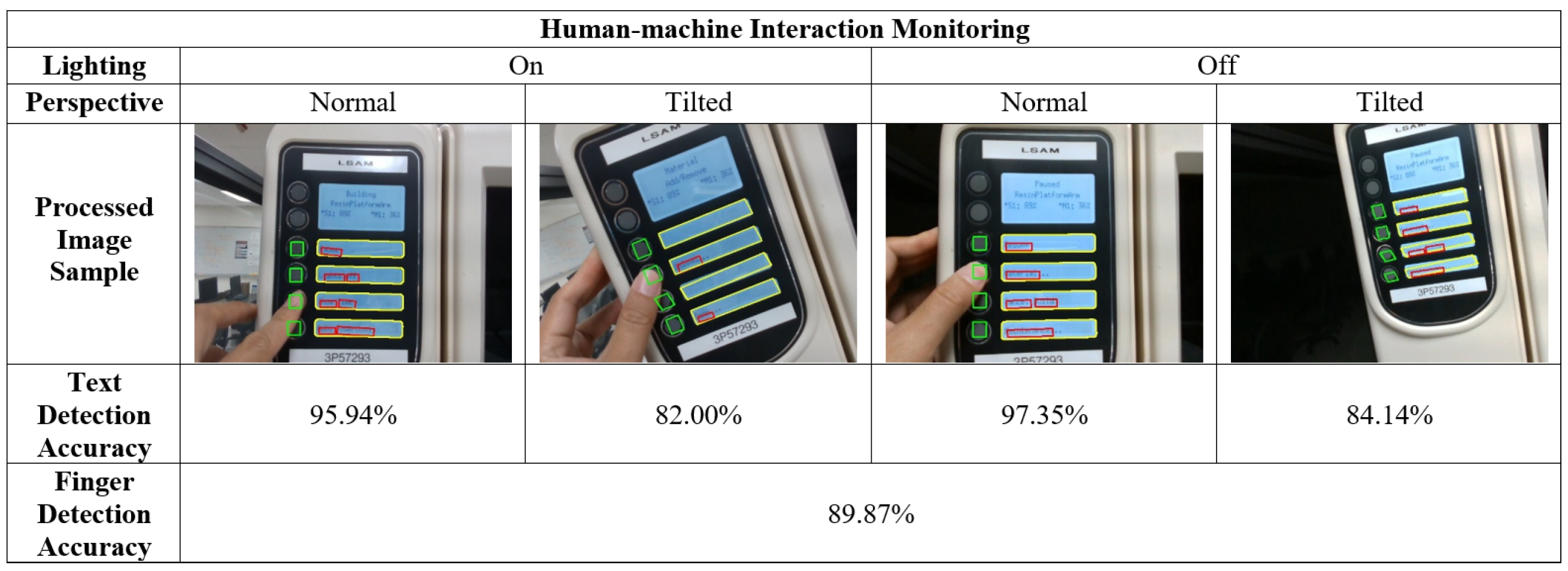

2.3. Human-Machine Interaction Monitoring (HIM)

2.4. Hardware Requirement

2.5. Evaluation of the Model

3. Experiment: Case Study on a 3D Printer

3.1. Analysis of Supervised Production Equipment

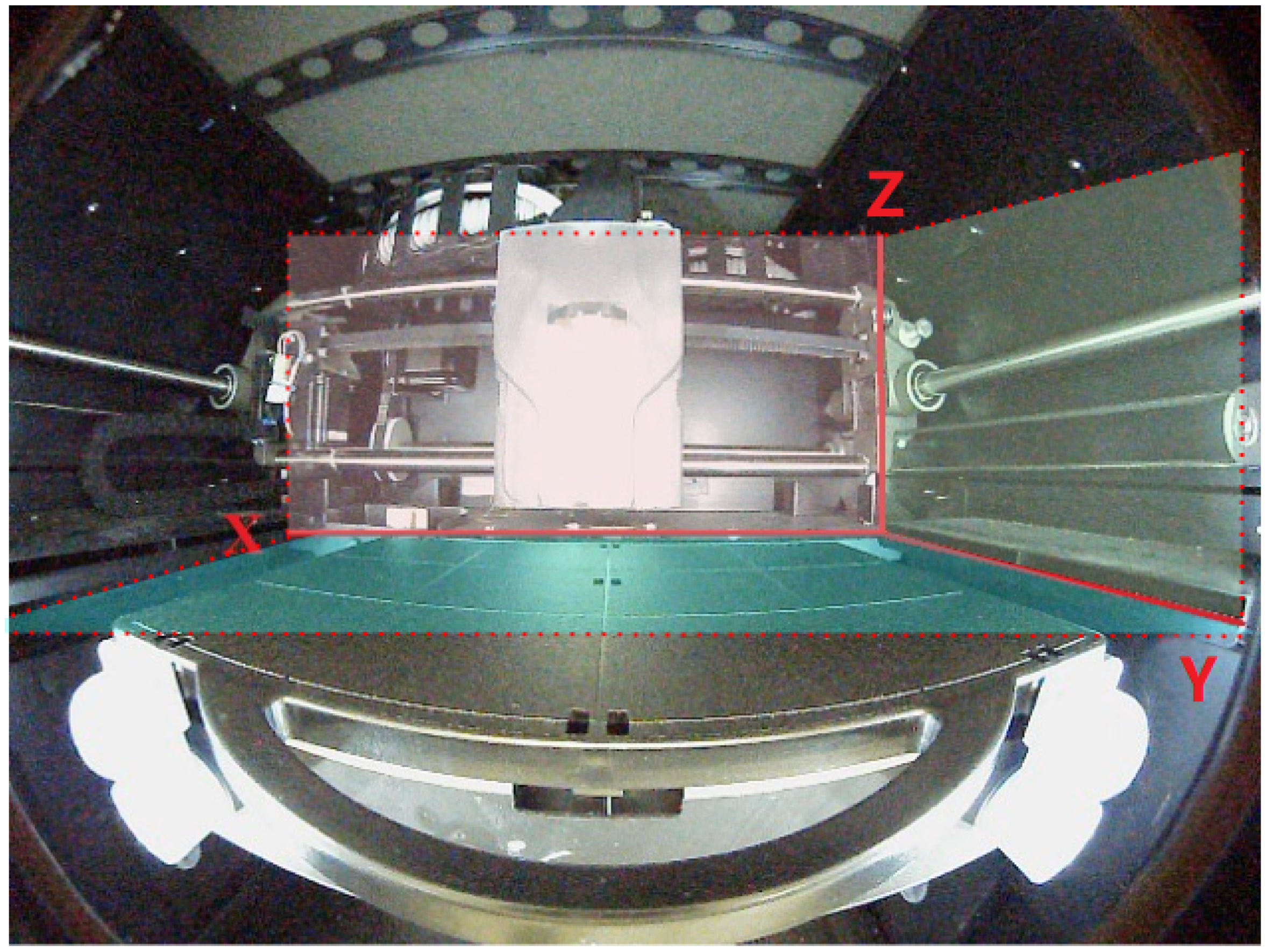

3.2. Building the Model for Direct Monitoring

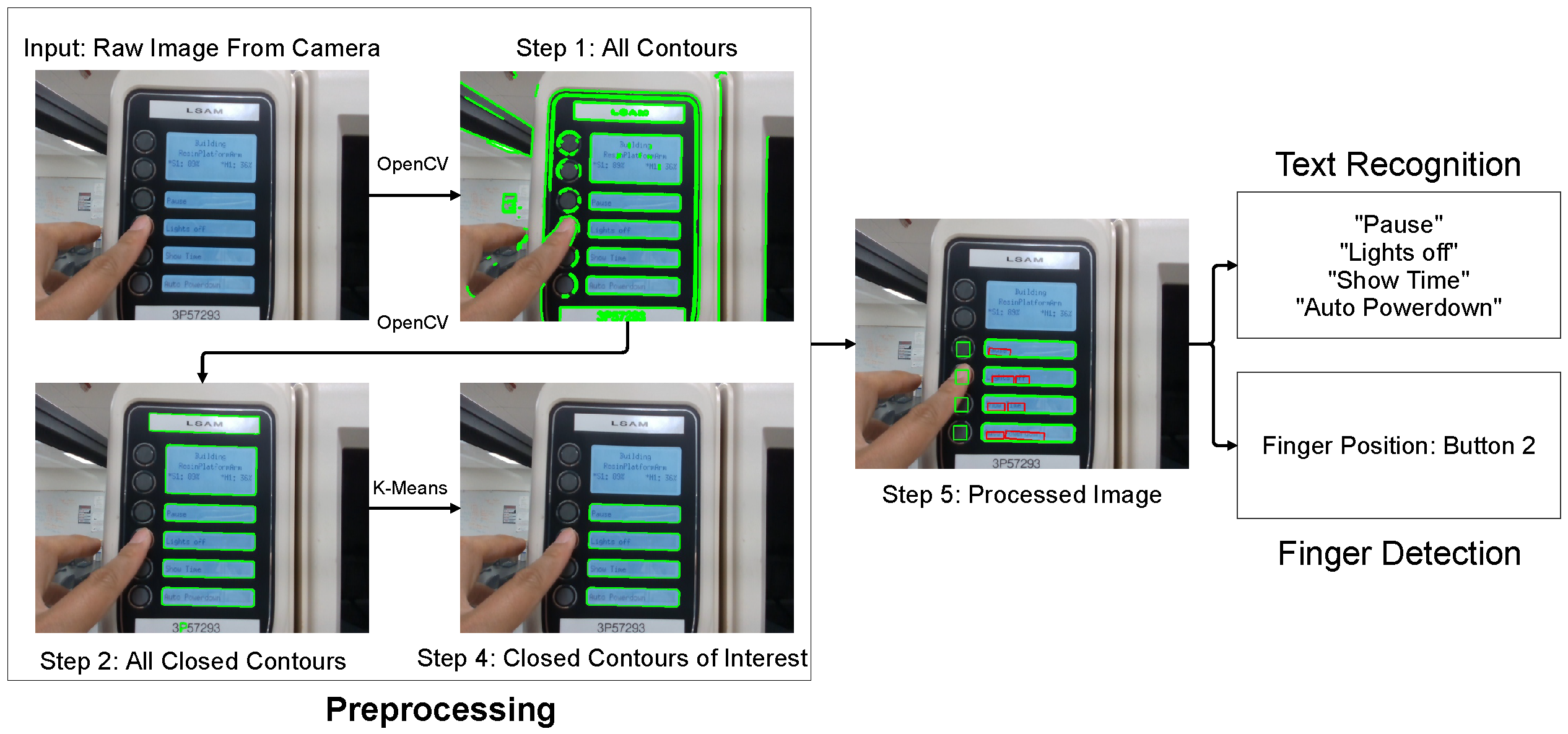

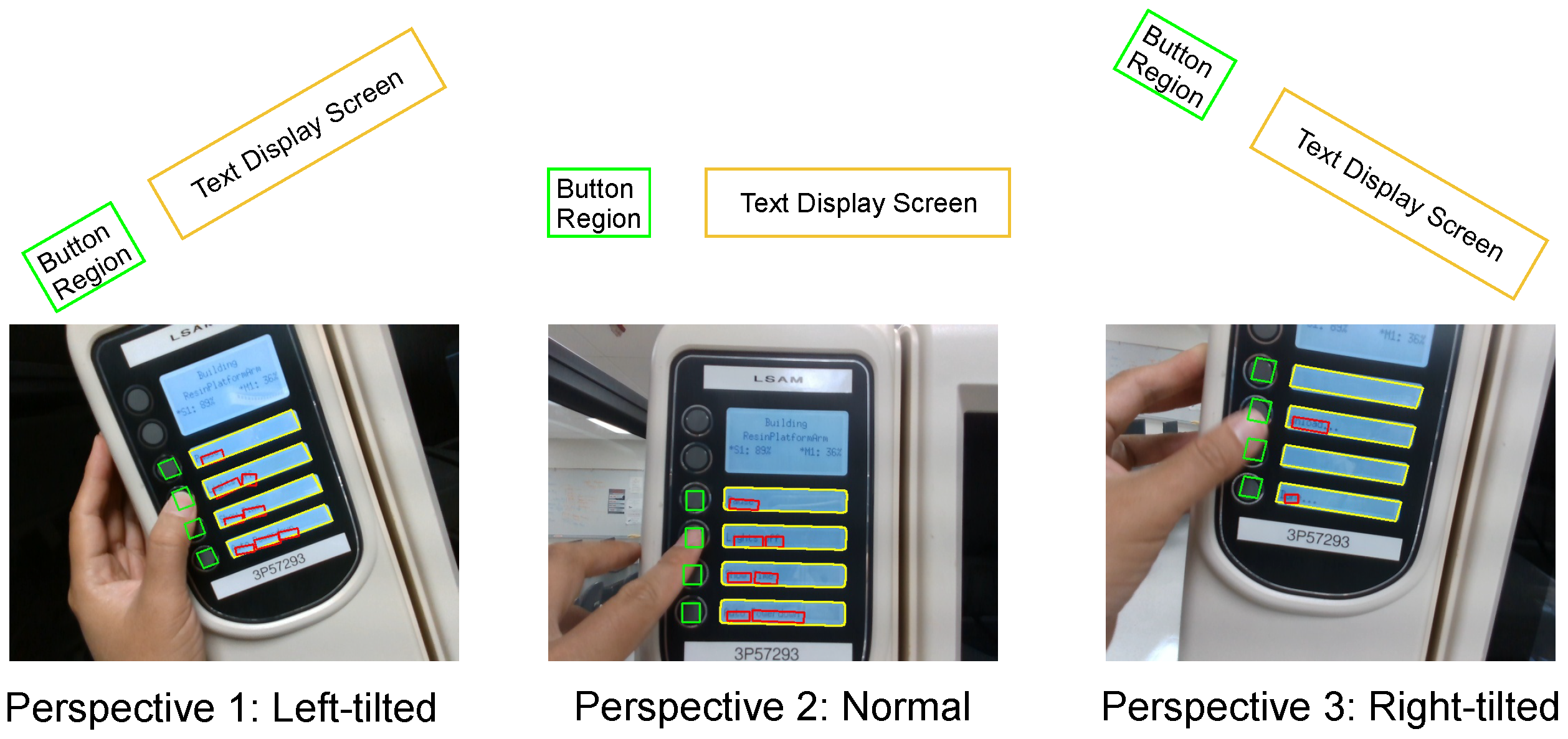

3.3. Building the Model for Human-Machine Interaction Monitoring

4. Results

4.1. Experimental Setup

4.2. DMM Result

4.3. HIM Result

4.4. AIMS Result

4.4.1. Normal Operation Test

4.4.2. Abnormal Condition Test

4.4.3. Numerical Result

5. Discussion

5.1. Application

5.2. Limitations

5.3. Future Work

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Model | COCO AP | COCO FPS | 3D Printer AP | 3D Printer FPS |
|---|---|---|---|---|
| YOLOv3 | 51.5 | 23.8 | 98.48 | 29.4 |
| YOLOv4 | 64.9 | 19.2 | 99.80 | 22.3 |
| Mask-RCNN | 60.0 | 5.00 | 98.80 | 5.90 |

| Index | Class Name | AP |
|---|---|---|
| 0 | extruder | 0.99935 |
| 1 | buildplate | 0.99946 |
| 2 | axis | 0.99539 |
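As a worked check on the per-class values above, the following minimal sketch averages the three class APs into a single mean AP. The function name `mean_average_precision` is illustrative. The result is close to the 99.80 listed for YOLOv4 on the 3D printer data, although the source does not state how the two figures are related.

```python
# Per-class AP values copied from the table above.
class_ap = {
    "extruder": 0.99935,
    "buildplate": 0.99946,
    "axis": 0.99539,
}


def mean_average_precision(ap_by_class):
    """Unweighted mean of the per-class average precision values."""
    return sum(ap_by_class.values()) / len(ap_by_class)


mean_ap = mean_average_precision(class_ap)
print(f"mAP = {mean_ap:.4f}")  # prints "mAP = 0.9981"
```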

| Precision | Recall |
|---|---|
| 0.923 | 0.936 |
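For readers less familiar with these two metrics, the sketch below uses their standard definitions in terms of true positives, false positives, and false negatives. The counts passed to the functions are hypothetical and only exercise the code; the source does not report the underlying counts. The F1 score is a derived quantity added for illustration and is not a figure from the source.

```python
def precision(tp, fp):
    """Fraction of reported detections that were correct."""
    return tp / (tp + fp) if (tp + fp) else 0.0


def recall(tp, fn):
    """Fraction of true events that were detected."""
    return tp / (tp + fn) if (tp + fn) else 0.0


def f1_score(p, r):
    """Harmonic mean of precision and recall."""
    return 2 * p * r / (p + r) if (p + r) else 0.0


# Hypothetical counts, chosen only to exercise the functions.
print(precision(tp=90, fp=10))
print(recall(tp=90, fn=5))

# F1 derived from the precision and recall in the table above.
print(round(f1_score(0.923, 0.936), 4))
```

With the tabulated precision and recall, the derived F1 score comes out at roughly 0.929.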

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2022 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (https://creativecommons.org/licenses/by/4.0/).

Share and Cite

Li, C.; Bian, S.; Wu, T.; Donovan, R.P.; Li, B. Affordable Artificial Intelligence-Assisted Machine Supervision System for the Small and Medium-Sized Manufacturers. Sensors 2022, 22, 6246. https://doi.org/10.3390/s22166246
