Intelligent Video Highlights Generation with Front-Camera Emotion Sensing

Abstract

1. Introduction

- A novel multimodal system that combines human emotion with audio-visual features to achieve intelligent, personalized, user-oriented highlight extraction.

- The design of a scalable event timeline to coherently fuse extracted features and enable the synchronisation of heterogeneous input streams.

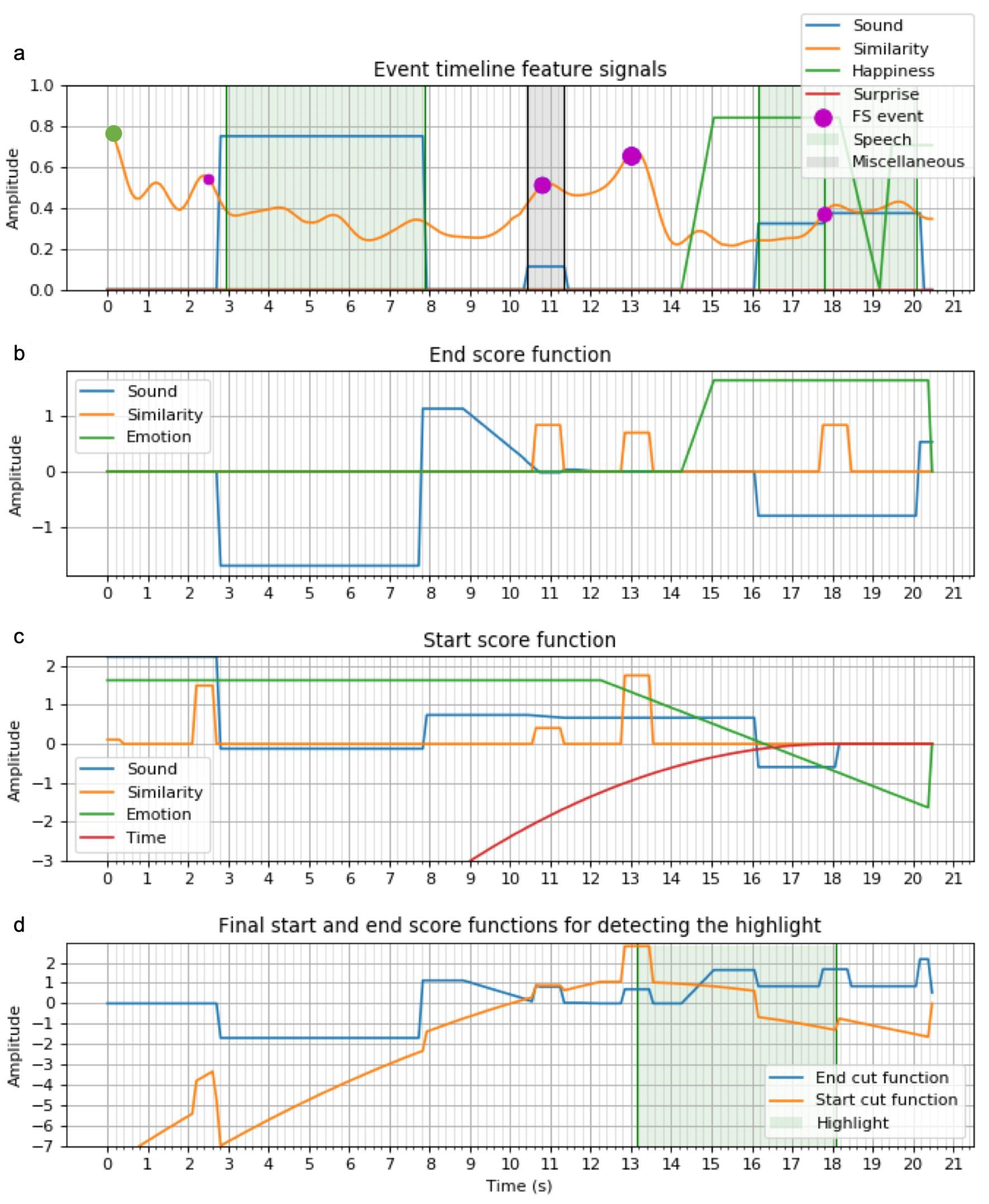

- A novel algorithm that maps event timeline features to two interdependent score functions for optimal highlight cuts.

- The use of video frame similarity to detect dynamic variations in a video, which enhances the generalization of highlight content.

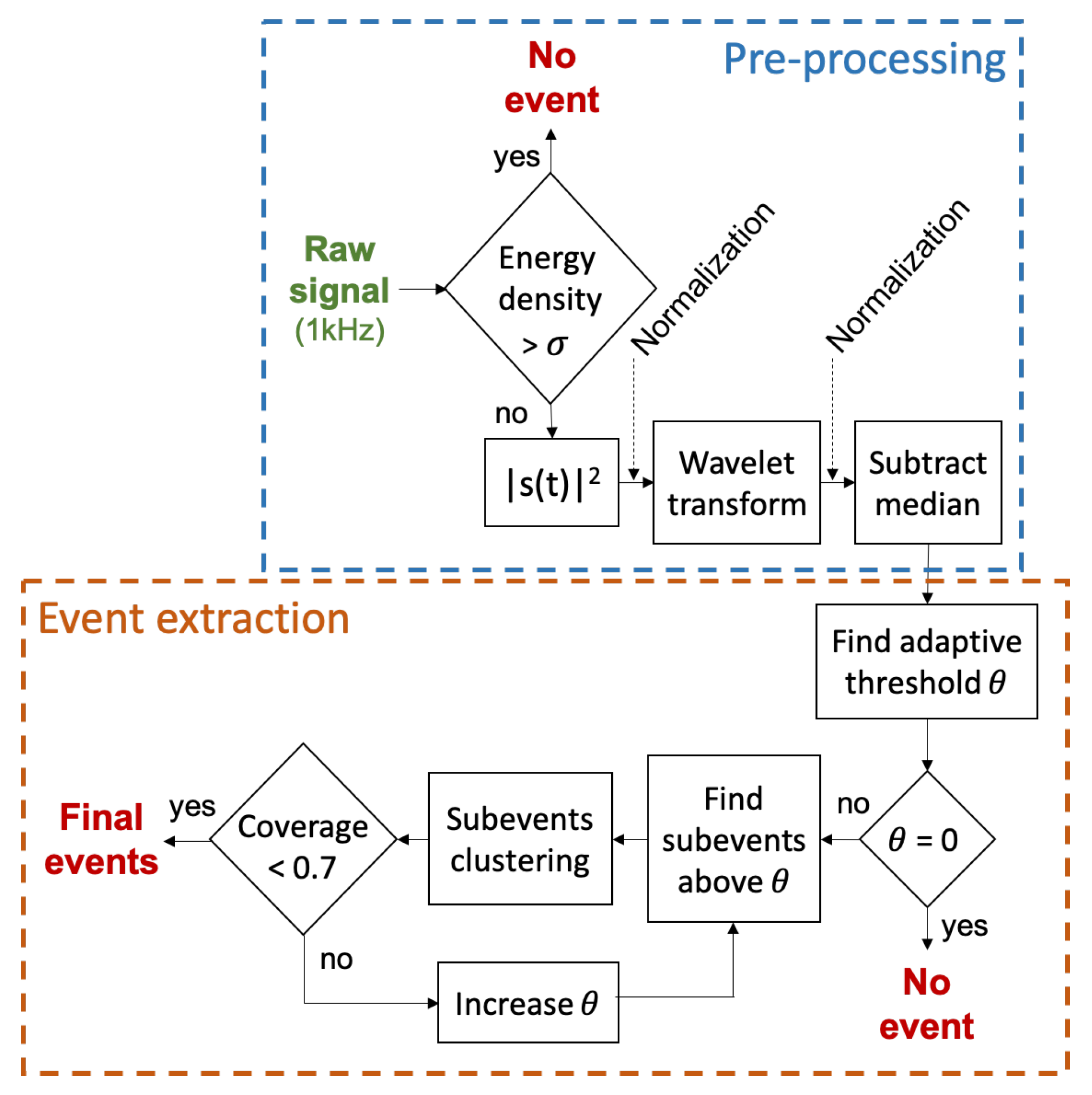

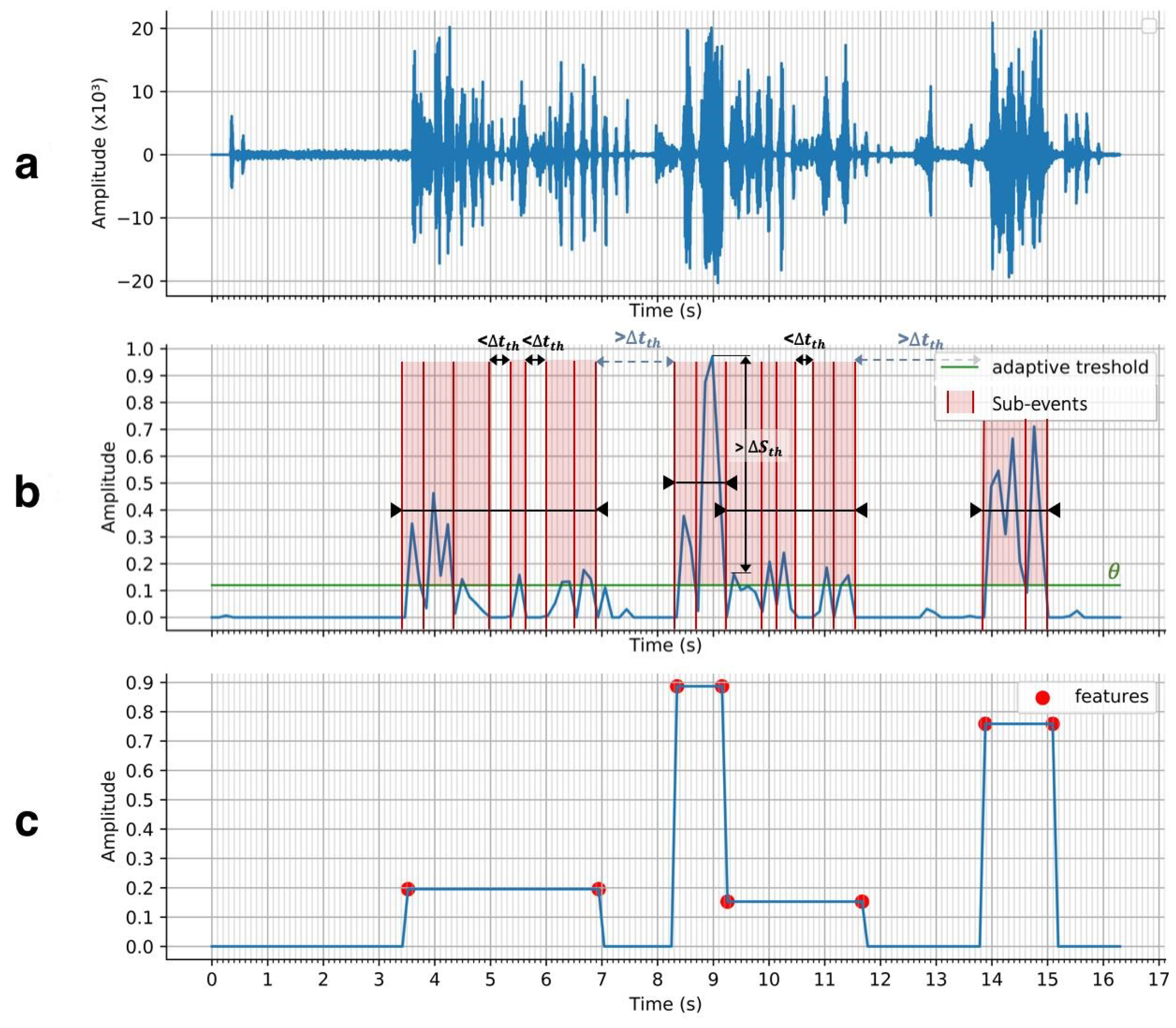

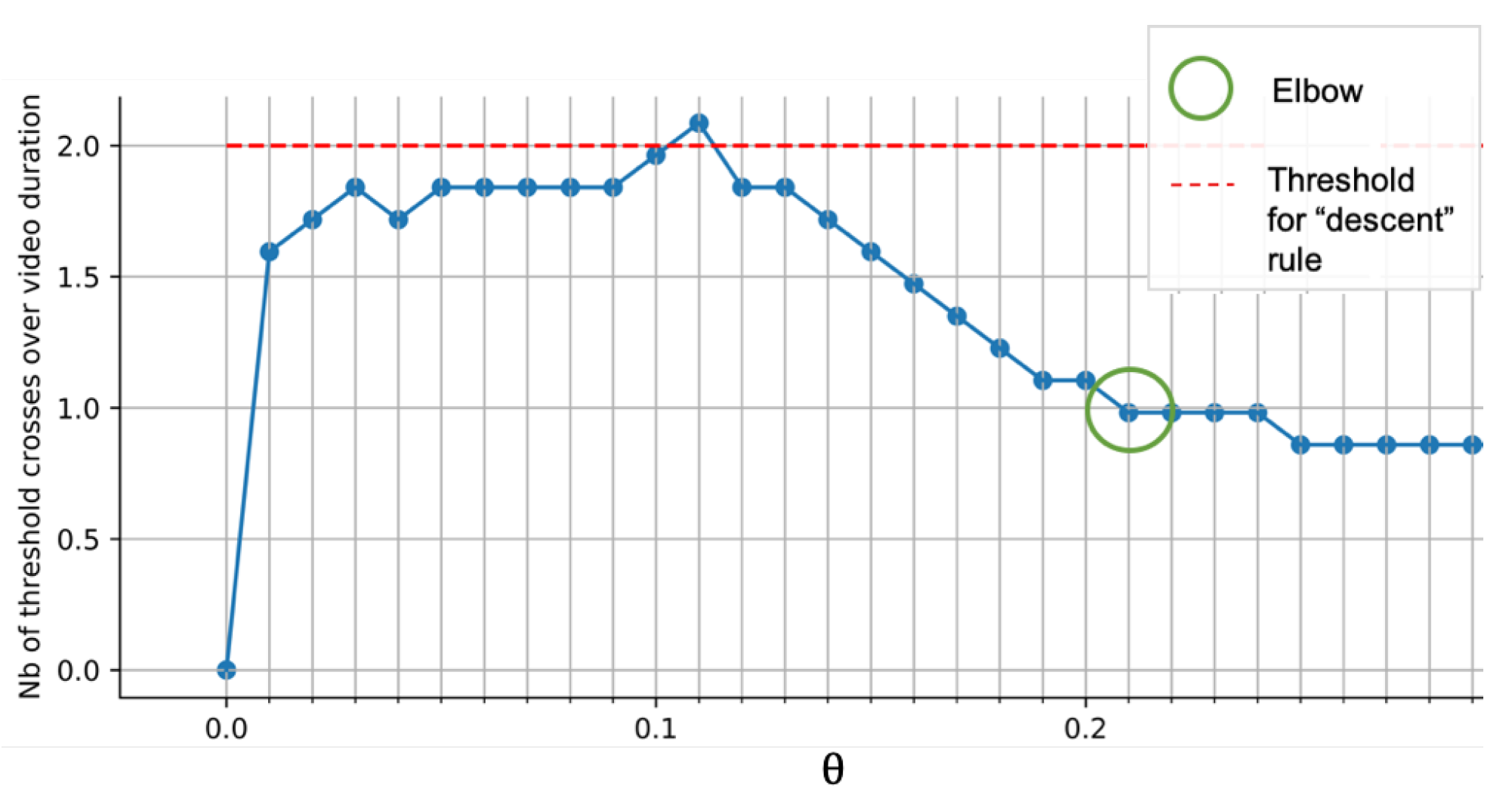

- High detection accuracy for sound events, achieved with the wavelet transform and a bottom-up peak-clustering algorithm.

2. Background

2.1. Internal Content Techniques

2.2. External Content Techniques

2.3. Hybrid Content Techniques

3. System Design

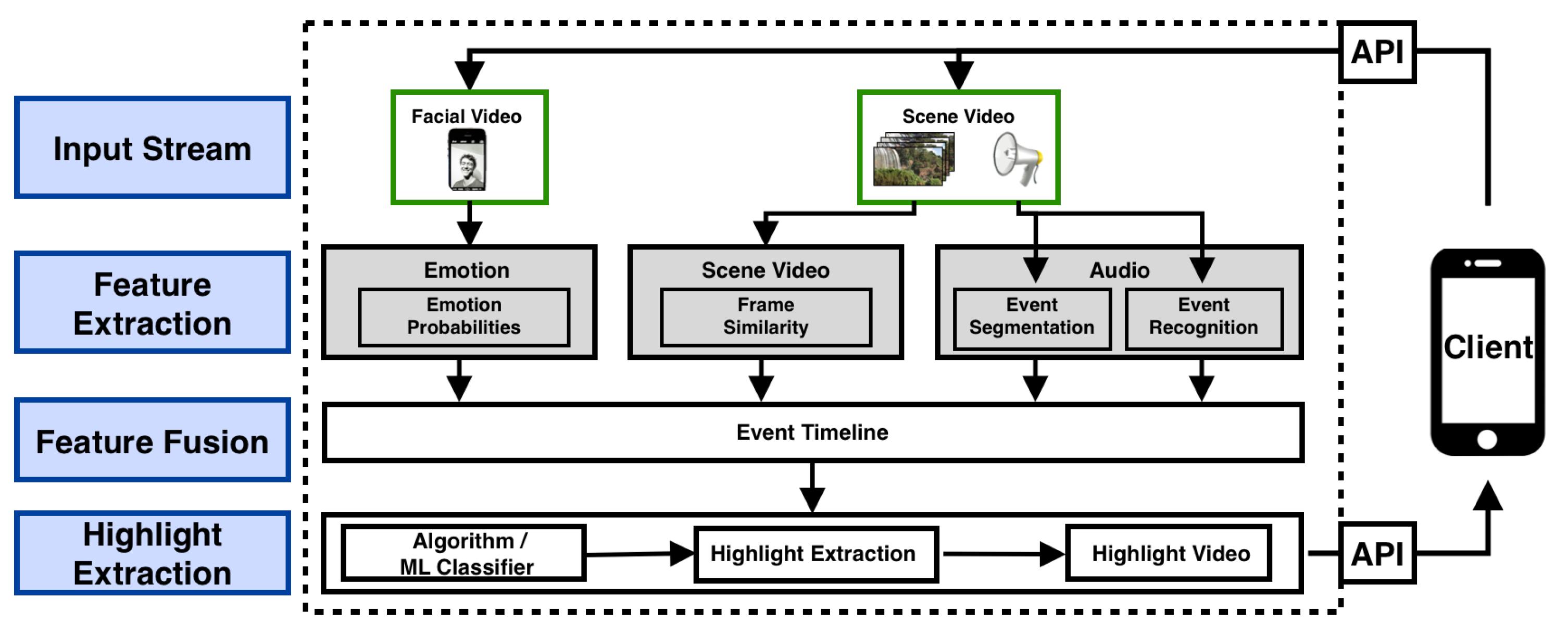

3.1. System Architecture

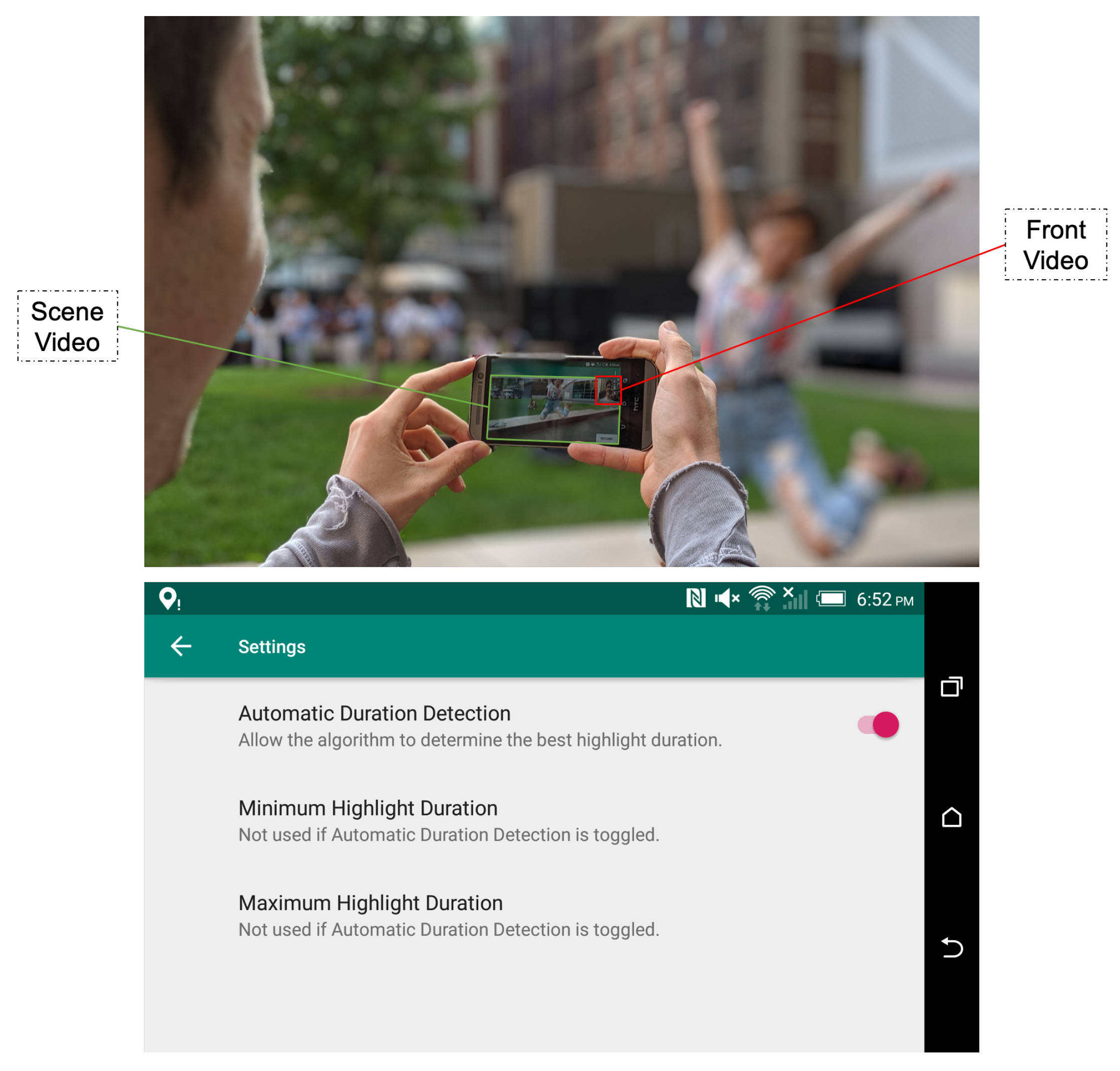

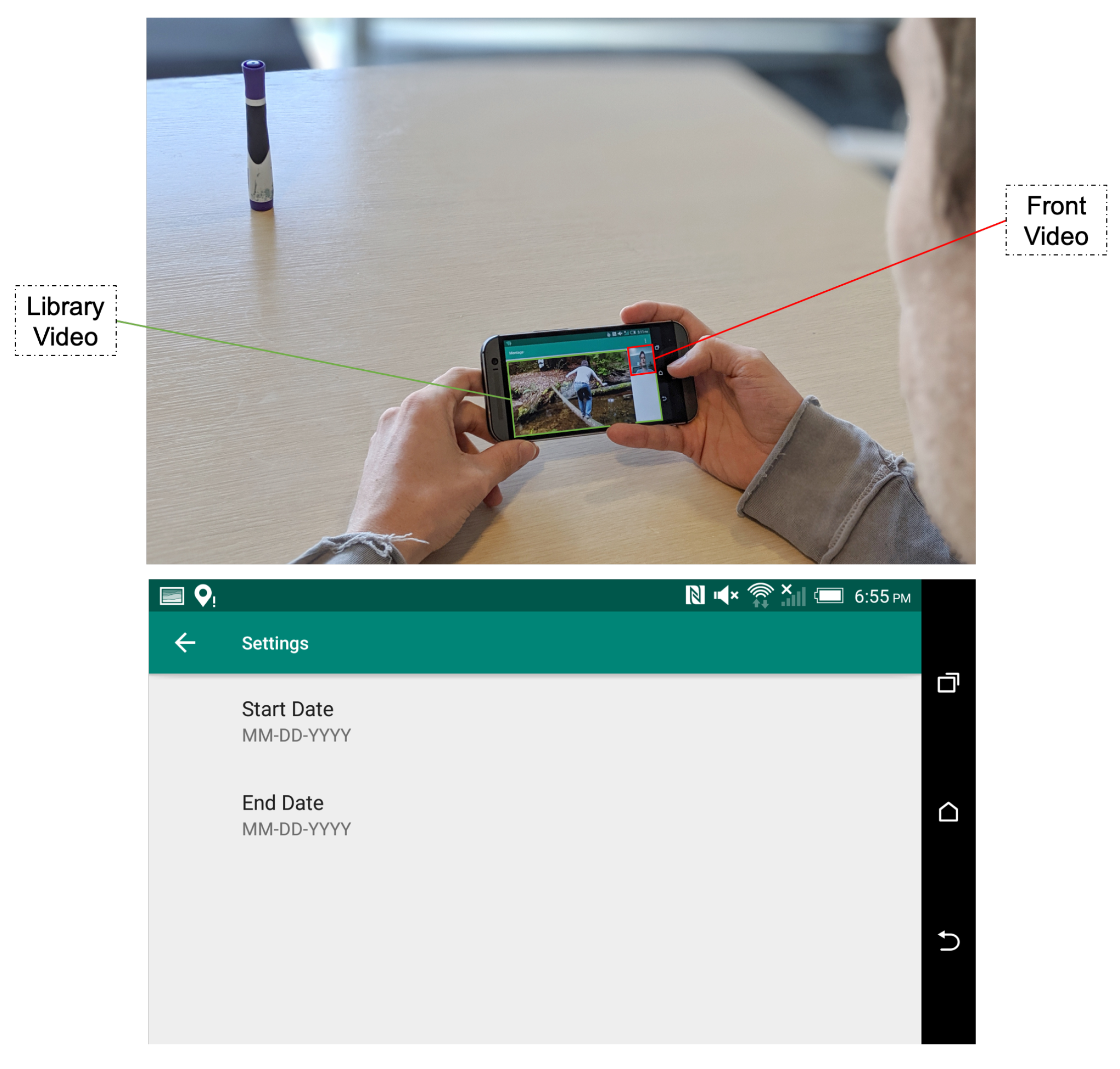

3.2. Input Stream

- Scene Video: the video from which highlights are extracted. Its video frames and audio signal are extracted as two distinct raw inputs to the highlight extraction pipeline.

- Facial Video: video of the user’s face recorded in reaction to the recorded scene. Its duration must be equal to the scene video duration.

- Minimum highlight duration: parameter indicating the desired minimal duration of a highlight. If set to −1, the algorithm decides the highlight length automatically.

- Maximum highlight duration: parameter indicating the desired maximal duration of a highlight. If set to −1, the algorithm decides the highlight length automatically.

- Multiple highlights: boolean indicating whether multiple highlights should be generated.
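The inputs listed above could be bundled into a single request object. The following is a minimal sketch rather than the authors' interface: the names `HighlightRequest`, `min_duration_s`, `max_duration_s`, `multiple_highlights`, `AUTO`, and `check_same_duration` are hypothetical, and the use of seconds and of a small duration tolerance are assumptions.

```python
from dataclasses import dataclass

AUTO = -1  # sentinel from the text: let the algorithm decide the highlight length


@dataclass(frozen=True)
class HighlightRequest:
    """Hypothetical container for the listed inputs (all names are assumptions)."""
    scene_video_path: str
    facial_video_path: str
    min_duration_s: float = AUTO   # desired minimal highlight duration, or AUTO
    max_duration_s: float = AUTO   # desired maximal highlight duration, or AUTO
    multiple_highlights: bool = False

    def __post_init__(self) -> None:
        # Each bound is either the AUTO sentinel or a positive duration.
        for name in ("min_duration_s", "max_duration_s"):
            value = getattr(self, name)
            if value != AUTO and value <= 0:
                raise ValueError(f"{name} must be positive or {AUTO} (automatic)")
        # When both bounds are given explicitly, they must be ordered.
        if AUTO not in (self.min_duration_s, self.max_duration_s):
            if self.min_duration_s > self.max_duration_s:
                raise ValueError("min_duration_s must not exceed max_duration_s")


def check_same_duration(scene_s: float, facial_s: float, tol_s: float = 0.1) -> bool:
    """The facial video must match the scene video length; tol_s is an assumed tolerance."""
    return abs(scene_s - facial_s) <= tol_s
```

Validating the bounds at construction time keeps the −1 convention in one place, so later pipeline stages only need to compare against `AUTO`.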

3.3. Signal Pre-Processing and Feature Extraction

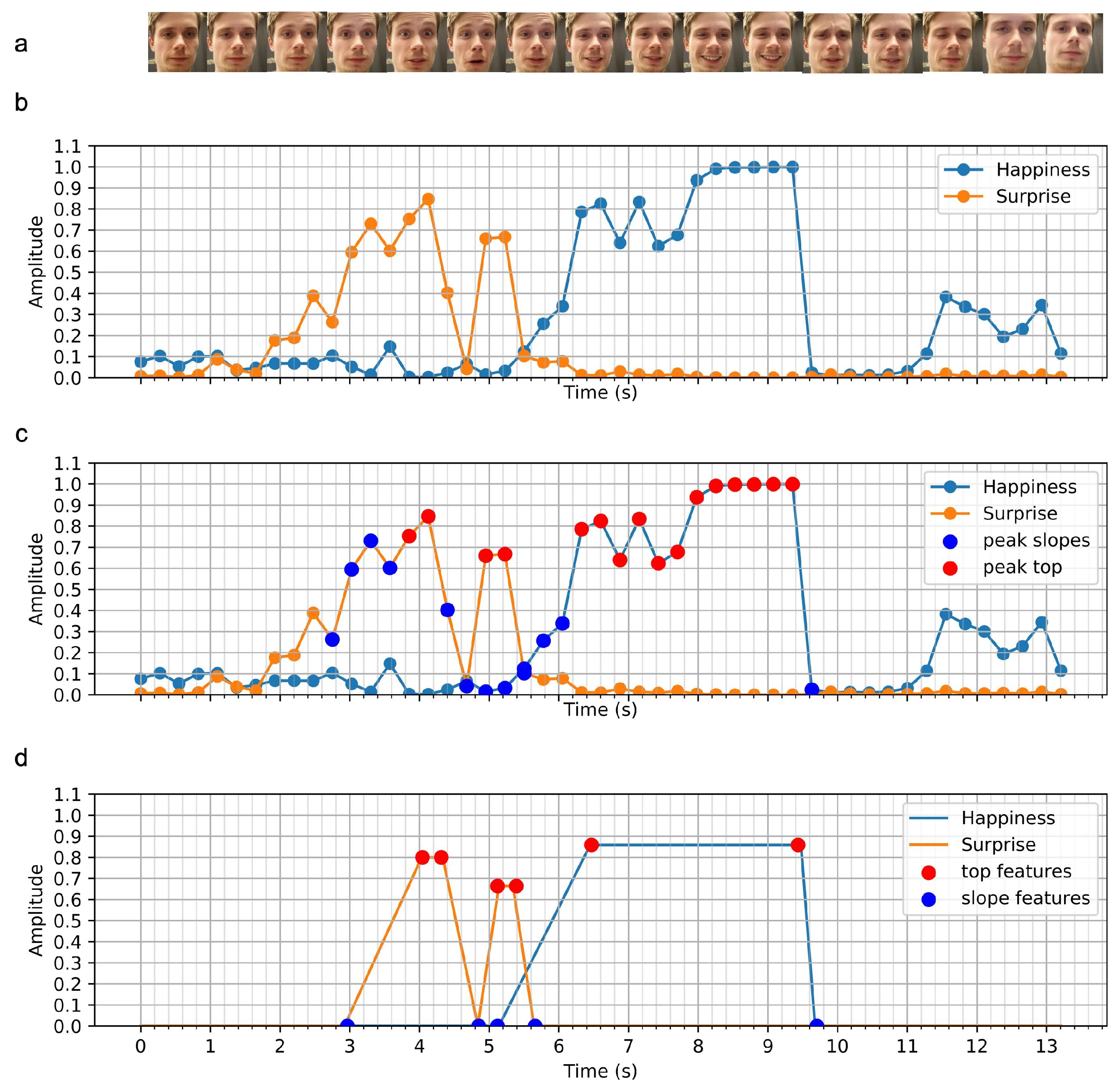

3.3.1. Emotion

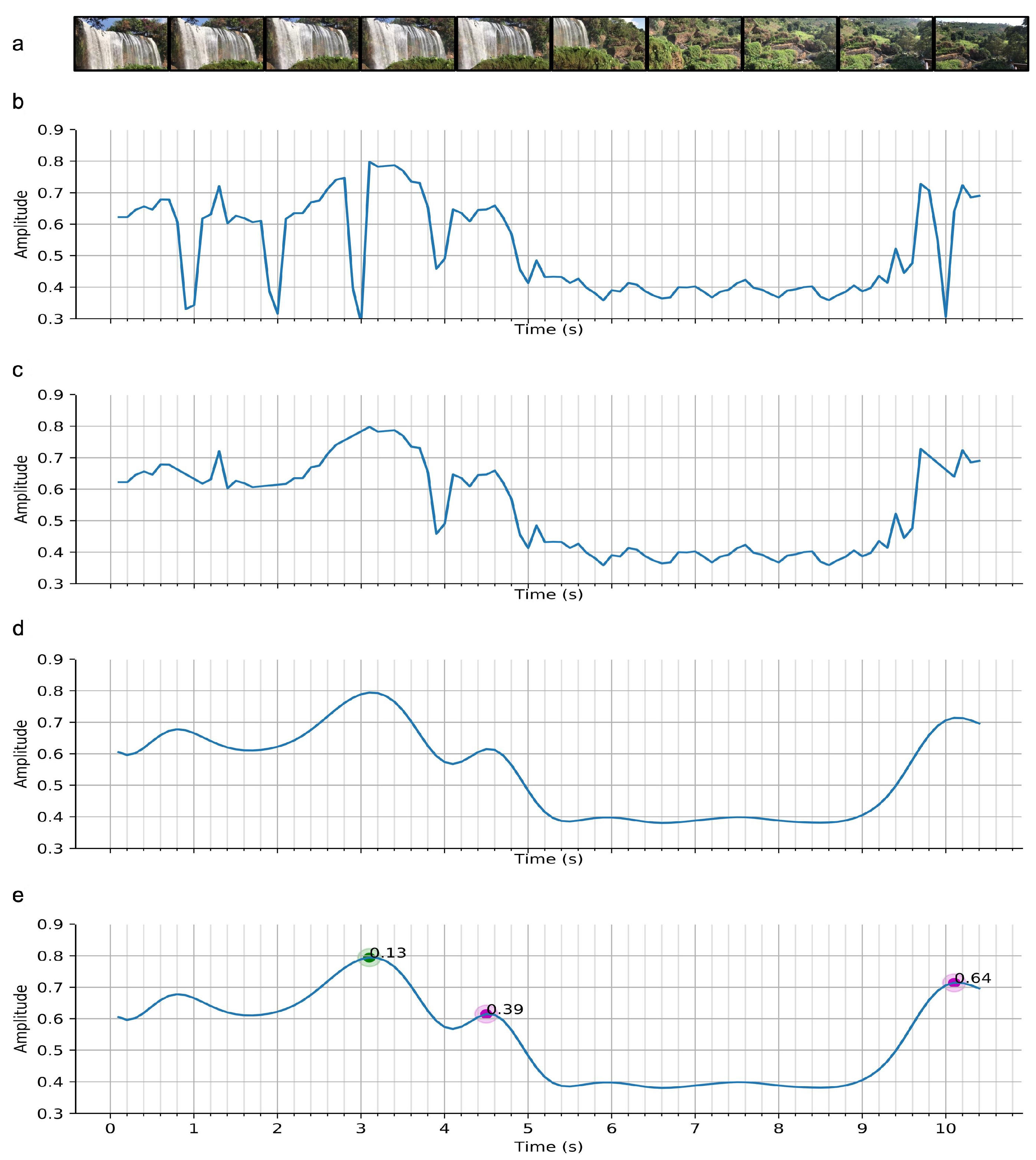

3.3.2. Video

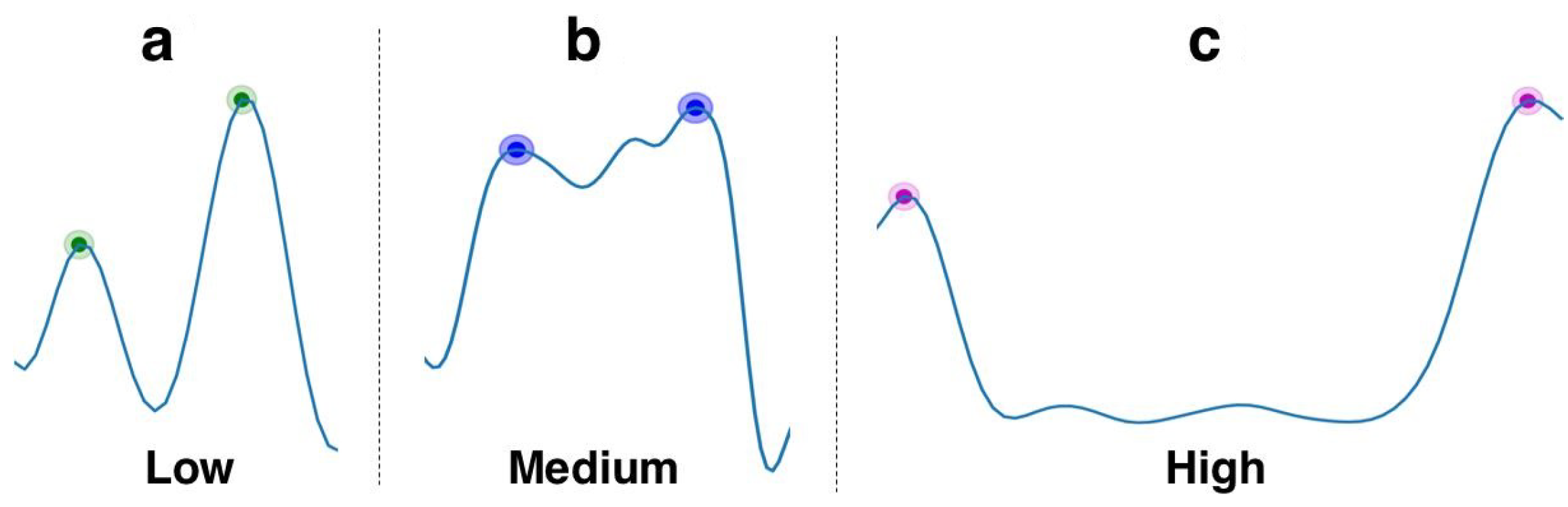

- “Hills”: these frame similarity peaks translate either to brief camera stop-motions or noise, and thus are assigned low importance.

- “Plateau”: this pattern translates to an extended camera stop, often due to an event of interest. The same frame similarity shape also appears when a camera zoom-in or zoom-out is performed. A medium rather than high level of importance was chosen because this pattern offers little summarisation: the generated highlight would contain only one scene of the video, versus two for the “Valley” pattern (see below).

- “Valley”: this pattern often translates to a camera pan or tilt, from one motion stop to another. This pattern was assigned high importance for its semantic generalization. This pattern contains information from the first motion stop scene, information from the second motion stop scene and information between both scenes. Furthermore, the in-between content often presents important information, due to the constant low similarity value. As an example, this pattern occurs when the user is performing a constant pan/tilt to show an area or a beautiful landscape.
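The three patterns above can be illustrated with a simple run-based labelling of a frame similarity signal. This is a sketch under stated assumptions, not the authors' detector: it assumes similarity values in [0, 1] where high values mean consecutive frames look alike, and the function name `label_patterns`, the threshold `high`, and the minimum plateau length `min_plateau` are hypothetical choices.

```python
from itertools import groupby
from typing import List, Tuple

# Importance levels as stated in the text.
IMPORTANCE = {"hill": "low", "plateau": "medium", "valley": "high"}


def label_patterns(similarity: List[float], high: float = 0.8,
                   min_plateau: int = 5) -> List[Tuple[str, int, int]]:
    """Label runs of a frame-similarity signal as (pattern, start, end_exclusive)."""
    # Split the signal into maximal runs of "high" and "low" similarity.
    runs, start = [], 0
    for is_high, group in groupby(similarity, key=lambda s: s >= high):
        length = len(list(group))
        runs.append((is_high, start, start + length))
        start += length

    labels = []
    for i, (is_high, begin, end) in enumerate(runs):
        if is_high:
            # Short peaks are treated as hills, extended ones as plateaus.
            kind = "plateau" if end - begin >= min_plateau else "hill"
            labels.append((kind, begin, end))
        elif 0 < i < len(runs) - 1:
            # A low-similarity run enclosed by two high runs: motion between two stops.
            labels.append(("valley", begin, end))
    return labels
```

For example, six similar frames followed by four dissimilar ones and two similar ones would be labelled as a plateau, a valley, and a hill, and `IMPORTANCE` then maps each label to the level given in the list.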

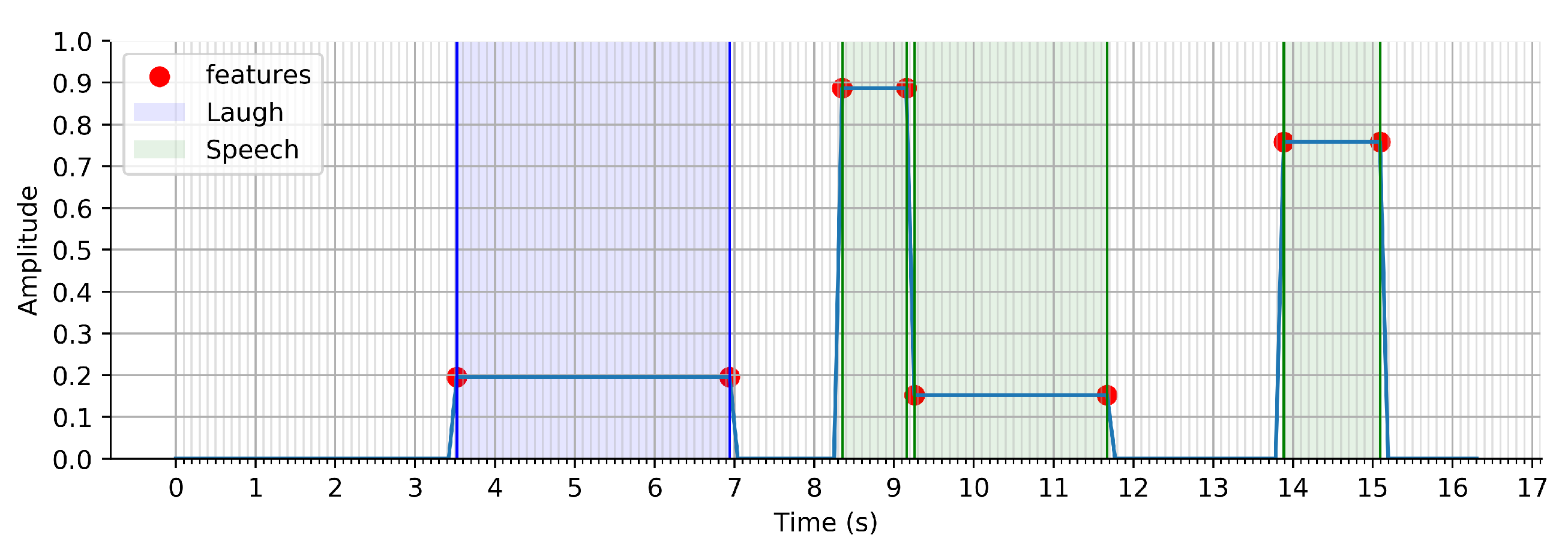

3.3.3. Audio

Algorithm 1: Adaptive threshold computation

Require: sound signal of n values

Ensure: adaptive threshold value
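The steps of Algorithm 1 are not reproduced here, so the following does not claim to be the authors' procedure. It is a sketch of one common way to derive a threshold from a signal's own statistics (mean magnitude plus a multiple of the standard deviation); the function name `adaptive_threshold` and the factor `k` are assumptions.

```python
from statistics import mean, pstdev
from typing import Sequence


def adaptive_threshold(signal: Sequence[float], k: float = 2.0) -> float:
    """Return mean(|x|) + k * std(|x|) over the n input values (assumed rule)."""
    if not signal:
        raise ValueError("signal must contain at least one value")
    # Work on magnitudes so positive and negative excursions count equally.
    magnitudes = [abs(x) for x in signal]
    return mean(magnitudes) + k * pstdev(magnitudes)
```

Because the threshold scales with the recording's own level and variability, a quiet clip and a loud clip are each judged relative to their own background.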

Algorithm 2: Sound events clustering

Require: sub_events D, , ,

Ensure: events E
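The body of Algorithm 2 and its parameters are not available here, so the following is only an illustration of the general idea of grouping sub-events into events. It assumes sub-events are (start, end) intervals in seconds and uses a single-pass agglomerative merge; `cluster_sub_events`, `max_gap`, and `min_duration` are hypothetical names and do not correspond to the algorithm's original parameters.

```python
from typing import List, Tuple

Interval = Tuple[float, float]  # (start_s, end_s)


def cluster_sub_events(sub_events: List[Interval], max_gap: float = 0.5,
                       min_duration: float = 0.2) -> List[Interval]:
    """Merge neighbouring sub-events bottom-up, then drop very short events."""
    events: List[Interval] = []
    for start, end in sorted(sub_events):
        if events and start - events[-1][1] <= max_gap:
            # Close enough to the previous event: extend it.
            prev_start, prev_end = events[-1]
            events[-1] = (prev_start, max(prev_end, end))
        else:
            # Too far from the previous event: start a new one.
            events.append((start, end))
    # Discard events that stay shorter than the assumed minimum duration.
    return [(s, e) for s, e in events if e - s >= min_duration]
```

With the defaults, two bursts 0.2 s apart would be merged into one event, while an isolated 0.05 s burst would be discarded as too short.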

3.4. Feature Fusion

Events Timeline

3.5. Highlight Extraction

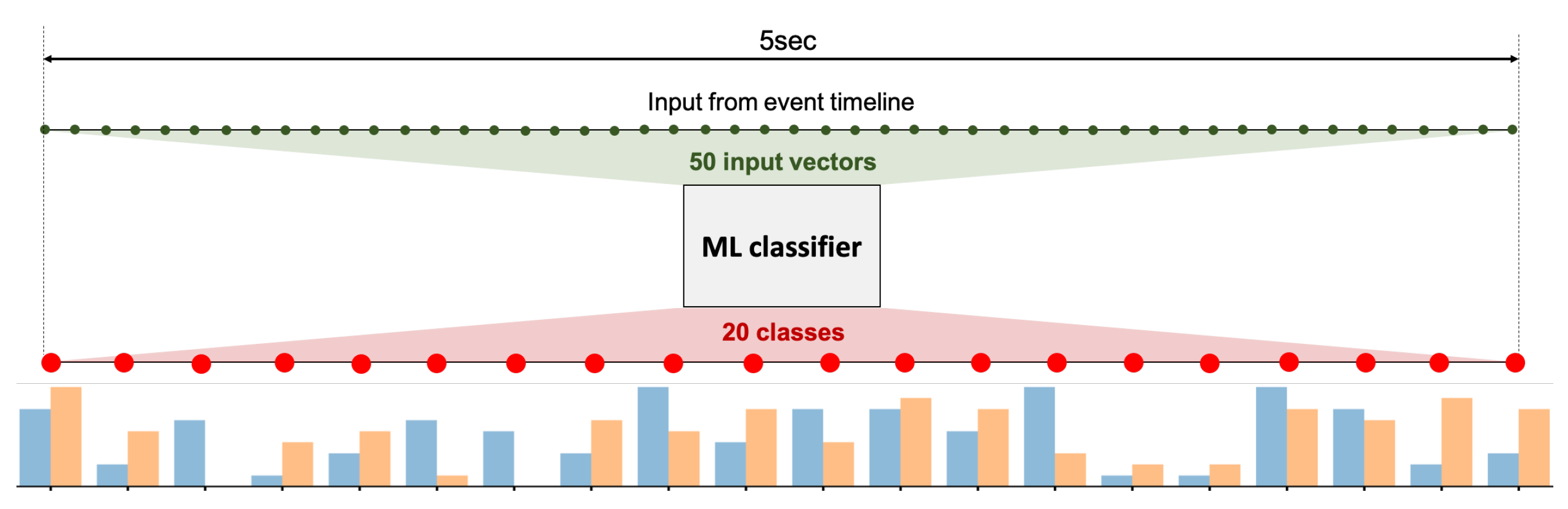

3.5.1. Machine Learning Approach

3.5.2. Hand-Designed Algorithm

- –

- The score of is lower than a threshold value

- –

- exceeds a user-specified maximum number of highlights

3.6. Output Stream

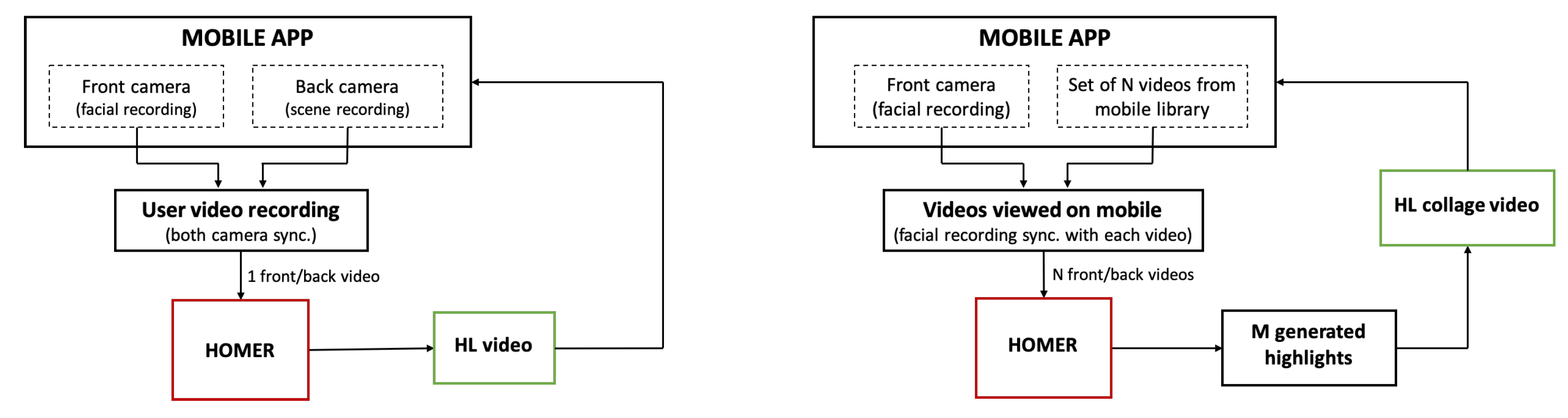

4. Applications

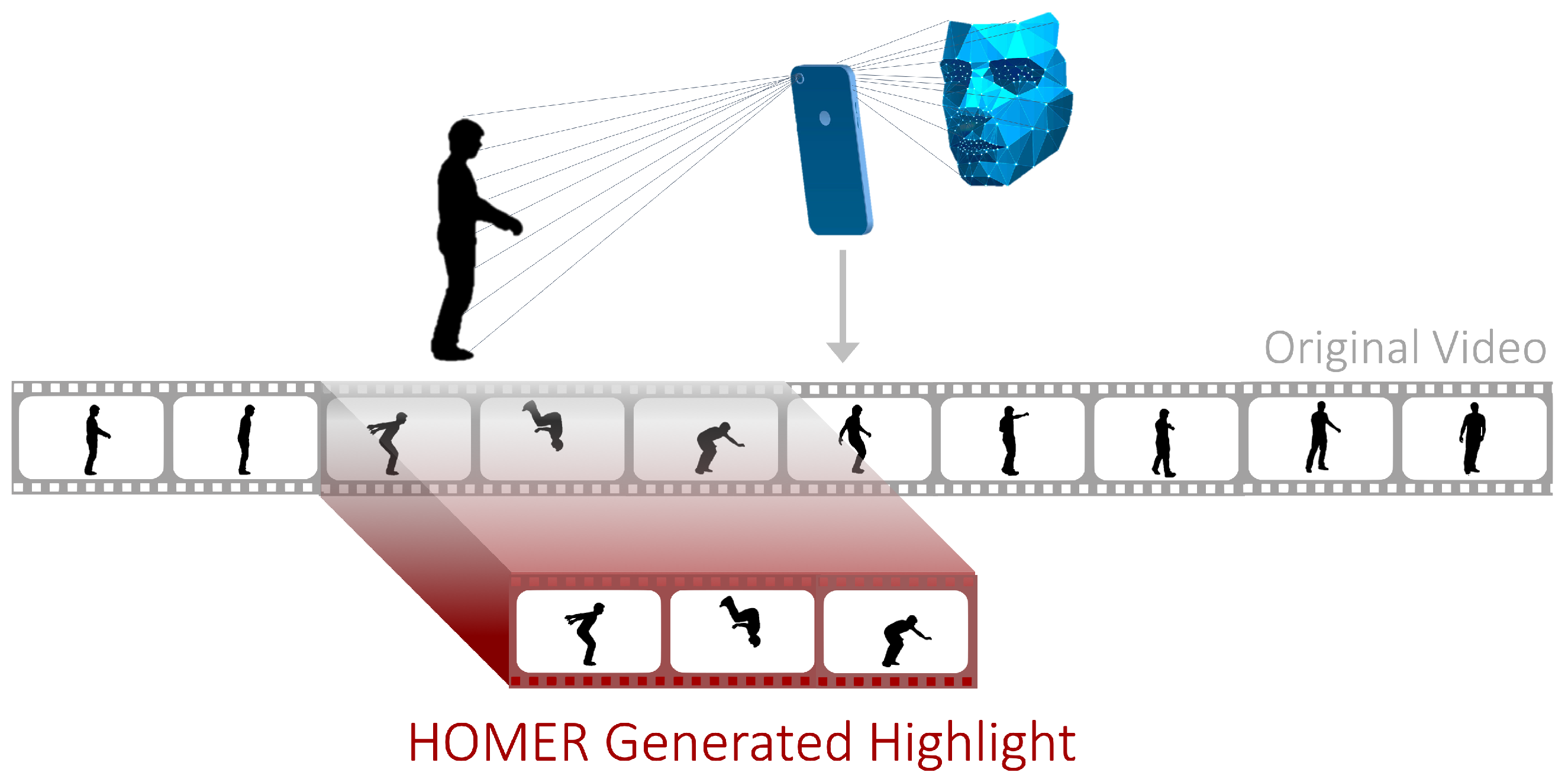

4.1. Application 1: Automated Highlight Generation Using Dual Camera

4.2. Application 2: Highlights Montage Creation for Library Videos

4.3. Future Applications

- With the emergence of the streaming platform Twitch, watching gamers play video games live is a rapidly growing source of entertainment [65]. Streamers often include a camera preview of their face alongside the live recording of the game they are playing, which provides both a facial video and a scene video that the web service can process together to generate highlights. A 2016 survey found that Twitch viewers watch streams for approximately h per week [66], and Twitch currently provides only a manual highlight annotation interface. Bringing automatic highlight generation to a streaming platform like Twitch could therefore save significant time both for streamers making highlight videos and for viewers wanting to catch up on a missed stream. In this application, frame similarity would be an irrelevant feature, so the system would rely on audio and emotional features only.

- Video calling applications such as FaceTime and Skype canonically involve two facial videos, but one can be treated as the scene video as well. In that case, highlights of a video call can be generated, for example capturing funny jokes or exciting parts of the conversation.

5. Evaluation and Discussion

5.1. Experimental Setup

5.1.1. Data Acquisition

5.1.2. Participants

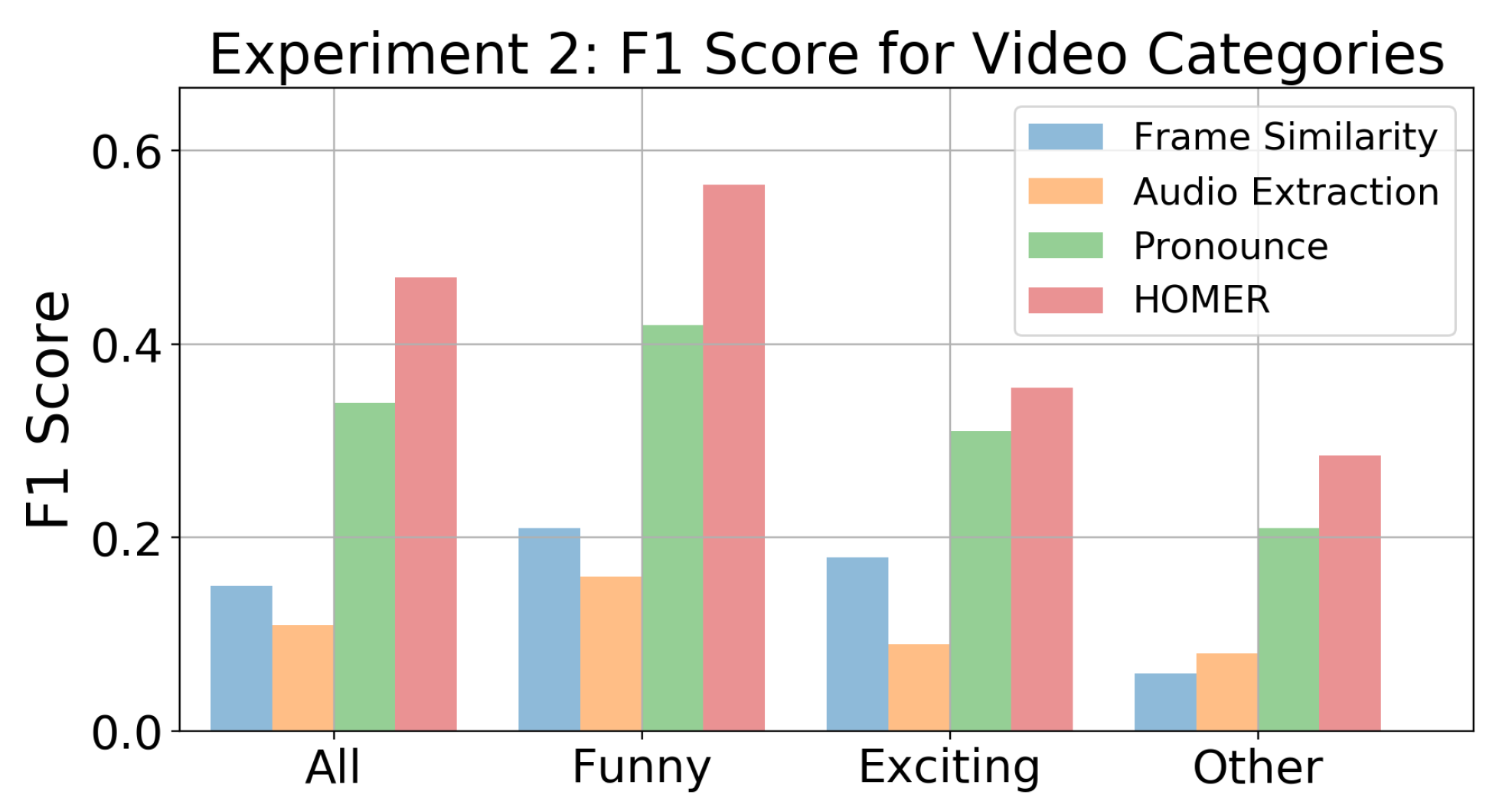

5.2. Results

5.2.1. Metric

5.2.2. Baseline

5.2.3. Highlight Generation

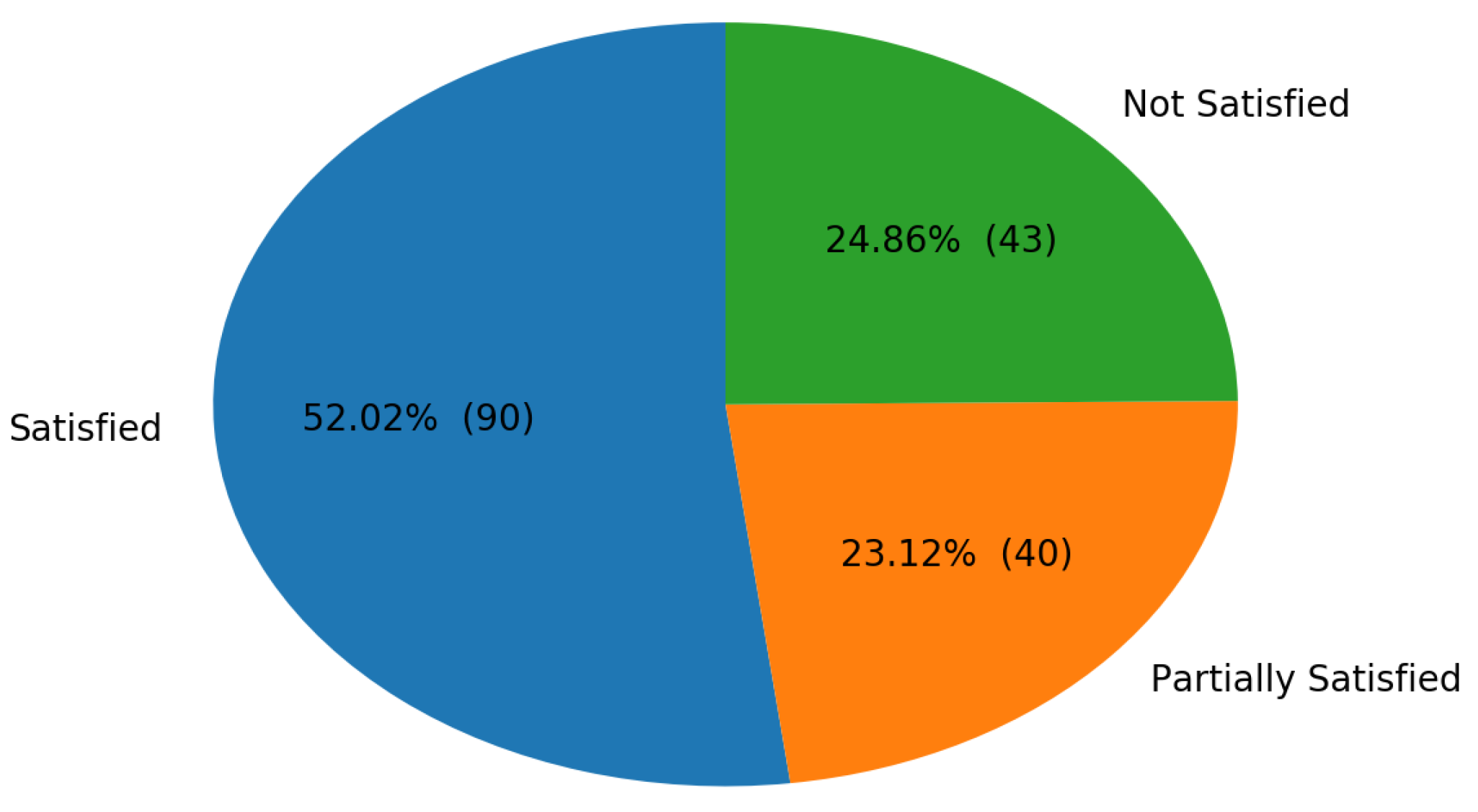

5.2.4. Satisfaction

5.2.5. Discussion

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Cisco Visual Networking Index: Forecast and Trends, 2017–2022. Technical Report. 2019. Available online: https://www.cisco.com/c/en/us/solutions/collateral/service-provider/visual-networking-index-vni/white-paper-c11-741490.html (accessed on 31 August 2019).

- Peng, W.; Chu, W.; Chang, C.; Chou, C.; Huang, W.; Chang, W.; Hung, Y. Editing by Viewing: Automatic Home Video Summarization by Viewing Behavior Analysis. IEEE Trans. Multimed. 2011, 13, 539–550.

- Zhang, S.; Tian, Q.; Huang, Q.; Gao, W.; Li, S. Utilizing affective analysis for efficient movie browsing. In Proceedings of the 2009 16th IEEE International Conference on Image Processing (ICIP), Cairo, Egypt, 7–10 November 2009; pp. 1853–1856.

- Lew, M.S.; Sebe, N.; Djeraba, C.; Jain, R. Content-based Multimedia Information Retrieval: State of the Art and Challenges. ACM Trans. Multimed. Comput. Commun. Appl. 2006, 2, 1–19.

- Hanjalic, A. Generic approach to highlights extraction from a sport video. In Proceedings of the 2003 International Conference on Image Processing (Cat. No.03CH37429), Barcelona, Spain, 14–17 September 2003; Volume 1, pp. 1–4.

- Hanjalic, A. Adaptive extraction of highlights from a sport video based on excitement modeling. IEEE Trans. Multimed. 2005, 7, 1114–1122.

- Assfalg, J.; Bertini, M.; Colombo, C.; Bimbo, A.D.; Nunziati, W. Automatic extraction and annotation of soccer video highlights. In Proceedings of the 2003 International Conference on Image Processing (Cat. No.03CH37429), Barcelona, Spain, 14–17 September 2003; Volume 2, pp. 527–530.

- Chakraborty, P.R.; Zhang, L.; Tjondronegoro, D.; Chandran, V. Using Viewer’s Facial Expression and Heart Rate for Sports Video Highlights Detection. In Proceedings of the 5th ACM on International Conference on Multimedia Retrieval, Shanghai, China, 23–26 June 2015; ACM: New York, NY, USA, 2015; pp. 371–378.

- Butler, D.; Ortutay, B. Facebook Auto-Generates Videos Celebrating Extremist Images; AP News: New York, NY, USA, 2019.

- Joho, H.; Jose, J.M.; Valenti, R.; Sebe, N. Exploiting Facial Expressions for Affective Video Summarisation. In Proceedings of the ACM International Conference on Image and Video Retrieval, Santorini Island, Greece, 8–10 July 2009; ACM: New York, NY, USA, 2009; pp. 31:1–31:8.

- Joho, H.; Staiano, J.; Sebe, N.; Jose, J.M. Looking at the viewer: Analysing facial activity to detect personal highlights of multimedia contents. Multimed. Tools Appl. 2011, 51, 505–523.

- Pan, G.; Zheng, Y.; Zhang, R.; Han, Z.; Sun, D.; Qu, X. A bottom-up summarization algorithm for videos in the wild. EURASIP J. Adv. Signal Process. 2019, 2019, 15.

- Al Nahian, M.; Iftekhar, A.S.M.; Islam, M.; Rahman, S.M.M.; Hatzinakos, D. CNN-Based Prediction of Frame-Level Shot Importance for Video Summarization. In Proceedings of the 2017 International Conference on New Trends in Computing Sciences (ICTCS), Amman, Jordan, 11–13 October 2017.

- Ma, Y.-F.; Hua, X.-S.; Lu, L.; Zhang, H.-J. A generic framework of user attention model and its application in video summarization. IEEE Trans. Multimed. 2005, 7, 907–919.

- Zhang, K.; Chao, W.; Sha, F.; Grauman, K. Video Summarization with Long Short-term Memory. In Computer Vision—ECCV 2016; Springer: Cham, Switzerland, 2016; pp. 766–782.

- Otani, M.; Nakashima, Y.; Rahtu, E.; Heikkilä, J.; Yokoya, N. Video Summarization Using Deep Semantic Features. In Computer Vision—ACCV 2016; Lai, S.H., Lepetit, V., Nishino, K., Sato, Y., Eds.; Springer International Publishing: Cham, Switzerland, 2017; pp. 361–377.

- Yang, H.; Wang, B.; Lin, S.; Wipf, D.P.; Guo, M.; Guo, B. Unsupervised Extraction of Video Highlights Via Robust Recurrent Auto-encoders. In Proceedings of the IEEE International Conference on Computer Vision 2015, Santiago, Chile, 7–13 December 2015; pp. 4633–4641.

- Mahasseni, B.; Lam, M.; Todorovic, S. Unsupervised Video Summarization With Adversarial LSTM Networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017.

- Sun, M.; Farhadi, A.; Seitz, S. Ranking Domain-Specific Highlights by Analyzing Edited Videos. In Computer Vision—ECCV 2014; Fleet, D., Pajdla, T., Schiele, B., Tuytelaars, T., Eds.; Springer International Publishing: Cham, Switzerland, 2014; pp. 787–802.

- Wang, S.; Ji, Q. Video Affective Content Analysis: A Survey of State-of-the-Art Methods. IEEE Trans. Affect. Comput. 2015, 6, 410–430.

- Wang, S.; Zhu, Y.; Wu, G.; Ji, Q. Hybrid video emotional tagging using users’ EEG and video content. Multimed. Tools Appl. 2014, 72, 1257–1283.

- Soleymani, M.; Pantic, M.; Pun, T. Multimodal Emotion Recognition in Response to Videos. IEEE Trans. Affect. Comput. 2012, 3, 211–223.

- Soleymani, M.; Asghari-Esfeden, S.; Fu, Y.; Pantic, M. Analysis of EEG Signals and Facial Expressions for Continuous Emotion Detection. IEEE Trans. Affect. Comput. 2016, 7, 17–28.

- Fleureau, J.; Guillotel, P.; Orlac, I. Affective Benchmarking of Movies Based on the Physiological Responses of a Real Audience. In Proceedings of the 2013 Humaine Association Conference on Affective Computing and Intelligent Interaction, Geneva, Switzerland, 3–5 September 2013; pp. 73–78.

- Wang, S.; Liu, Z.; Zhu, Y.; He, M.; Chen, X.; Ji, Q. Implicit video emotion tagging from audiences’ facial expression. Multimed. Tools Appl. 2015, 74, 4679–4706.

- Money, A.G.; Agius, H. Video summarisation: A conceptual framework and survey of the state of the art. J. Vis. Commun. Image Represent. 2008, 19, 121–143.

- Shukla, P.; Sadana, H.; Bansal, A.; Verma, D.; Elmadjian, C.; Raman, B.; Turk, M. Automatic cricket highlight generation using event-driven and excitement-based features. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition Workshops, Salt Lake City, UT, USA, 18–22 June 2018; pp. 1800–1808.

- Wang, J.; Chng, E.; Xu, C.; Lu, H.; Tian, Q. Generation of Personalized Music Sports Video Using Multimodal Cues. IEEE Trans. Multimed. 2007, 9, 576–588.

- Yao, T.; Mei, T.; Rui, Y. Highlight Detection with Pairwise Deep Ranking for First-Person Video Summarization. In Proceedings of the 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 26 June–1 July 2016; pp. 982–990.

- Panda, R.; Das, A.; Wu, Z.; Ernst, J.; Roy-Chowdhury, A.K. Weakly Supervised Summarization of Web Videos. In Proceedings of the 2017 IEEE International Conference on Computer Vision (ICCV), Venice, Italy, 22–29 October 2017; pp. 3677–3686.

- Gong, B.; Chao, W.L.; Grauman, K.; Sha, F. Diverse Sequential Subset Selection for Supervised Video Summarization. In Advances in Neural Information Processing Systems 27; Ghahramani, Z., Welling, M., Cortes, C., Lawrence, N.D., Weinberger, K.Q., Eds.; Citeseer: Princeton, NJ, USA, 2014; pp. 2069–2077.

- Sharghi, A.; Gong, B.; Shah, M. Query-Focused Extractive Video Summarization. In Proceedings of the European Conference on Computer Vision, Amsterdam, The Netherlands, 8–16 October 2016; pp. 3–19.

- Zhang, K.; Chao, W.; Sha, F.; Grauman, K. Summary Transfer: Exemplar-based Subset Selection for Video Summarization. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition 2016, Las Vegas, NV, USA, 27–30 June 2016; pp. 1059–1067.

- Gygli, M.; Grabner, H.; Van Gool, L. Video summarization by learning submodular mixtures of objectives. In Proceedings of the 2015 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Boston, MA, USA, 7–12 June 2015; pp. 3090–3098.

- Morère, O.; Goh, H.; Veillard, A.; Chandrasekhar, V.; Lin, J. Co-regularized deep representations for video summarization. In Proceedings of the 2015 IEEE International Conference on Image Processing (ICIP), Quebec City, QC, Canada, 27–30 September 2015; pp. 3165–3169.

- De Avila, S.E.F.; Lopes, A.P.B.; da Luz, A.; de Albuquerque Araújo, A. VSUMM: A mechanism designed to produce static video summaries and a novel evaluation method. Pattern Recognit. Lett. 2011, 32, 56–68.

- Khosla, A.; Hamid, R.; Lin, C.; Sundaresan, N. Large-Scale Video Summarization Using Web-Image Priors. In Proceedings of the 2013 IEEE Conference on Computer Vision and Pattern Recognition, Portland, OR, USA, 23–28 June 2013; pp. 2698–2705.

- Mundur, P.; Rao, Y.; Yesha, Y. Keyframe-based video summarization using Delaunay clustering. Int. J. Digit. Libr. 2006, 6, 219–232.

- Ngo, C.-W.; Ma, Y.-T.; Zhang, H.-J. Automatic video summarization by graph modeling. In Proceedings of the Ninth IEEE International Conference on Computer Vision, Nice, France, 13–16 October 2003; Volume 1, pp. 104–109.

- Lu, Z.; Grauman, K. Story-Driven Summarization for Egocentric Video. In Proceedings of the 2013 IEEE Conference on Computer Vision and Pattern Recognition, Portland, OR, USA, 23–28 June 2013; pp. 2714–2721.

- Nie, J.; Hu, Y.; Wang, Y.; Xia, S.; Jiang, X. SPIDERS: Low-Cost Wireless Glasses for Continuous In-Situ Bio-Signal Acquisition and Emotion Recognition. In Proceedings of the 2020 IEEE/ACM Fifth International Conference on Internet-of-Things Design and Implementation (IoTDI), Sydney, Australia, 21–24 April 2020; pp. 27–39.

- Chênes, C.; Chanel, G.; Soleymani, M.; Pun, T. Highlight Detection in Movie Scenes Through Inter-users, Physiological Linkage. In Social Media Retrieval; Ramzan, N., van Zwol, R., Lee, J.S., Clüver, K., Hua, X.S., Eds.; Springer: London, UK, 2013; pp. 217–237.

- Fião, G.; Romão, T.; Correia, N.; Centieiro, P.; Dias, A.E. Automatic Generation of Sport Video Highlights Based on Fan’s Emotions and Content. In Proceedings of the 13th International Conference on Advances in Computer Entertainment Technology, Osaka, Japan, 9–12 November 2016; pp. 1–6.

- Ringer, C.; Nicolaou, M.A. Deep unsupervised multi-view detection of video game stream highlights. In Proceedings of the 13th International Conference on the Foundations of Digital Games, Malmö, Sweden, 7–10 August 2018; pp. 1–6.

- Kaklauskas, A.; Zavadskas, E.; Banaitis, A.; Meidute-Kavaliauskiene, I.; Liberman, A.; Dzitac, S.; Ubarte, I.; Binkyte, A.; Cerkauskas, J.; Kuzminske, A.; et al. A neuro-advertising property video recommendation system. Technol. Forecast. Soc. Chang. 2018, 131, 78–93.

- Kaklauskas, A.; Bucinskas, V.; Vinogradova, I.; Binkyte-Veliene, A.; Ubarte, I.; Skirmantas, D.; Petric, L. INVAR Neuromarketing Method and System. Stud. Inform. Control 2019, 28, 357–370.

- Gunawardena, P.; Amila, O.; Sudarshana, H.; Nawaratne, R.; Luhach, A.K.; Alahakoon, D.; Perera, A.S.; Chitraranjan, C.; Chilamkurti, N.; De Silva, D. Real-time automated video highlight generation with dual-stream hierarchical growing self-organizing maps. J. Real Time Image Process. 2020, 147.

- Zhang, Y.; Liang, X.; Zhang, D.; Tan, M.; Xing, E.P. Unsupervised object-level video summarization with online motion auto-encoder. Pattern Recognit. Lett. 2020, 130, 376–385.

- Moses, T.M.; Balachandran, K. A Deterministic Key-Frame Indexing and Selection for Surveillance Video Summarization. In Proceedings of the 2019 International Conference on Data Science and Communication (IconDSC), Bangalore, India, 1–2 March 2019; pp. 1–5.

- Lien, J.J.; Kanade, T.; Cohn, J.F.; Ching-Chung, L. Automated facial expression recognition based on FACS action units. In Proceedings of the Third IEEE International Conference on Automatic Face and Gesture Recognition, Nara, Japan, 14–16 April 1998; pp. 390–395.

- Lien, J.J.J.; Kanade, T.; Cohn, J.F.; Li, C.C. Detection, tracking, and classification of action units in facial expression. Robot. Auton. Syst. 2000, 31, 131–146.

- Kahou, S.E.; Bouthillier, X.; Lamblin, P.; Gulcehre, C.; Michalski, V.; Konda, K.; Jean, S.; Froumenty, P.; Dauphin, Y.; Boulanger-Lewandowski, N.; et al. EmoNets: Multimodal deep learning approaches for emotion recognition in video. J. Multimodal User Interfaces 2016, 10, 99–111.

- Mollahosseini, A.; Hasani, B.; Mahoor, M.H. AffectNet: A Database for Facial Expression, Valence, and Arousal Computing in the Wild. IEEE Trans. Affect. Comput. 2019, 10, 18–31.

- Zhang, Y.; Wei, Z.; Wang, Y. Video frames similarity function based gaussian video segmentation and summarization. Int. J. Innov. Comput. Inf. Control 2014, 10, 481–494.

- Cakir, E.; Heittola, T.; Huttunen, H.; Virtanen, T. Polyphonic sound event detection using multi label deep neural networks. In Proceedings of the 2015 International Joint Conference on Neural Networks (IJCNN), Killarney, Ireland, 12–16 July 2015; pp. 1–7.

- Parascandolo, G.; Huttunen, H.; Virtanen, T. Recurrent neural networks for polyphonic sound event detection in real life recordings. In Proceedings of the 2016 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Shanghai, China, 20–25 March 2016; pp. 6440–6444.

- Gorin, A.; Makhazhanov, N.; Shmyrev, N. DCASE 2016 sound event detection system based on convolutional neural network. In Proceedings of the IEEE AASP Challenge: Detection and Classification of Acoustic Scenes and Events, Budapest, Hungary, 3 September 2016.

- Wagner, J.; Schiller, D.; Seiderer, A.; André, E. Deep Learning in Paralinguistic Recognition Tasks: Are Hand-crafted Features Still Relevant? In Proceedings of the Interspeech, Hyderabad, India, 2–6 September 2018; pp. 147–151.

- Choi, Y.; Atif, O.; Lee, J.; Park, D.; Chung, Y. Noise-Robust Sound-Event Classification System with Texture Analysis. Symmetry 2018, 10, 402.

- Arroyo, I.; Cooper, D.G.; Burleson, W.; Woolf, B.P.; Muldner, K.; Christopherson, R. Emotion Sensors Go To School. In Proceedings of the 2009 Conference on Artificial Intelligence in Education: Building Learning Systems That Care: From Knowledge Representation to Affective Modelling, Brighton, UK, 6–10 July 2009; IOS Press: Amsterdam, The Netherlands, 2009; pp. 17–24.

- Kapoor, A.; Burleson, W.; Picard, R.W. Automatic prediction of frustration. Int. J. Hum. Comput. Stud. 2007, 65, 724–736.

- Castellano, G.; Kessous, L.; Caridakis, G. Affect and Emotion in Human-Computer Interaction; Chapter Emotion Recognition Through Multiple Modalities: Face, Body Gesture, Speech; Springer: Berlin/Heidelberg, Germany, 2008; pp. 92–103.

- Kang, H.B. Affective content detection using HMMs. In Proceedings of the eleventh ACM international conference on Multimedia, Berkeley, CA, USA, 2–8 November 2003; pp. 259–262.

- Caridakis, G.; Karpouzis, K.; Kollias, S. User and context adaptive neural networks for emotion recognition. Neurocomputing 2008, 71, 2553–2562.

- Wulf, T.; Schneider, F.M.; Beckert, S. Watching Players: An Exploration of Media Enjoyment on Twitch. Games Cult. 2020, 15, 328–346.

- Sjöblom, M.; Hamari, J. Why do people watch others play video games? An empirical study on the motivations of Twitch users. Comput. Hum. Behav. 2017, 75, 985–996.

- Zeng, K.H.; Chen, T.H.; Niebles, J.C.; Sun, M. Title Generation for User Generated Videos. arXiv 2016, arXiv:1608.07068.

| Key | Limitation |
|---|---|
| 1 | Videos Scope |
| 2 | Audio/Visual Features Only |
| 3 | External Hardware |
| 4 | Not Mobile Platform |

| Source | Year | Sensing Inputs | Summarization Category | Limitations |
|---|---|---|---|---|
| Fião et al. [43] | 2016 | Emotions, Audio, Video | Hybrid | 1 (sports), 3, 4 |
| Yang et al. [17] | 2015 | Audio, Video | Internal | 2, 4 |
| Shukla et al. [27] | 2018 | Audio, Video | Internal | 1 (sports), 2, 4 |
| Kaklauskas et al. [45,46] | 2018, 2019 | Audio, Video, Eye tracking, Facial Video, IR Camera, Personalized Questionnaire | Hybrid | 1 (video ads), 3, 4 |
| Gunawardena et al. [47] | 2020 | Video | Internal | 2, 4 |
| Zhang et al. [48] | 2020 | Video | Internal | 2, 4 |
| Moses and Balachandran [49] | 2018 | Video | Internal | 1 (surveillance), 2, 4 |
| Ringer and Nicolaou [44] | 2018 | Emotions, Audio, Video | Hybrid | 1 (video games), 4 |
| Joho et al. [10] | 2009 | Emotion | External | 3, 4 |
| Chênes et al. [42] | 2012 | Skin Temperature, EMG, EDA, BVP | External | 1 (movies), 3, 4 |

| | Rand. | LR | SVM | kNN | RF | AdaBoost |
|---|---|---|---|---|---|---|
| Acc. | 0.05 | 0.13 | 0.10 | 0.10 | 0.12 | 0.12 |
| Acc. ( s) | 0.20 | 0.35 | 0.27 | 0.35 | 0.31 | 0.32 |

Publisher’s Note: MDPI stays neutral with regard to jurisdictional claims in published maps and institutional affiliations.

© 2021 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).

Meyer, H.; Wei, P.; Jiang, X. Intelligent Video Highlights Generation with Front-Camera Emotion Sensing. Sensors 2021, 21, 1035. https://doi.org/10.3390/s21041035