To check compatibility with real-time operation, the computational load of the complete OSNMA-ready receiver is analyzed hereafter.

5.2. Profiling Analysis of the Complete Receiver Chain

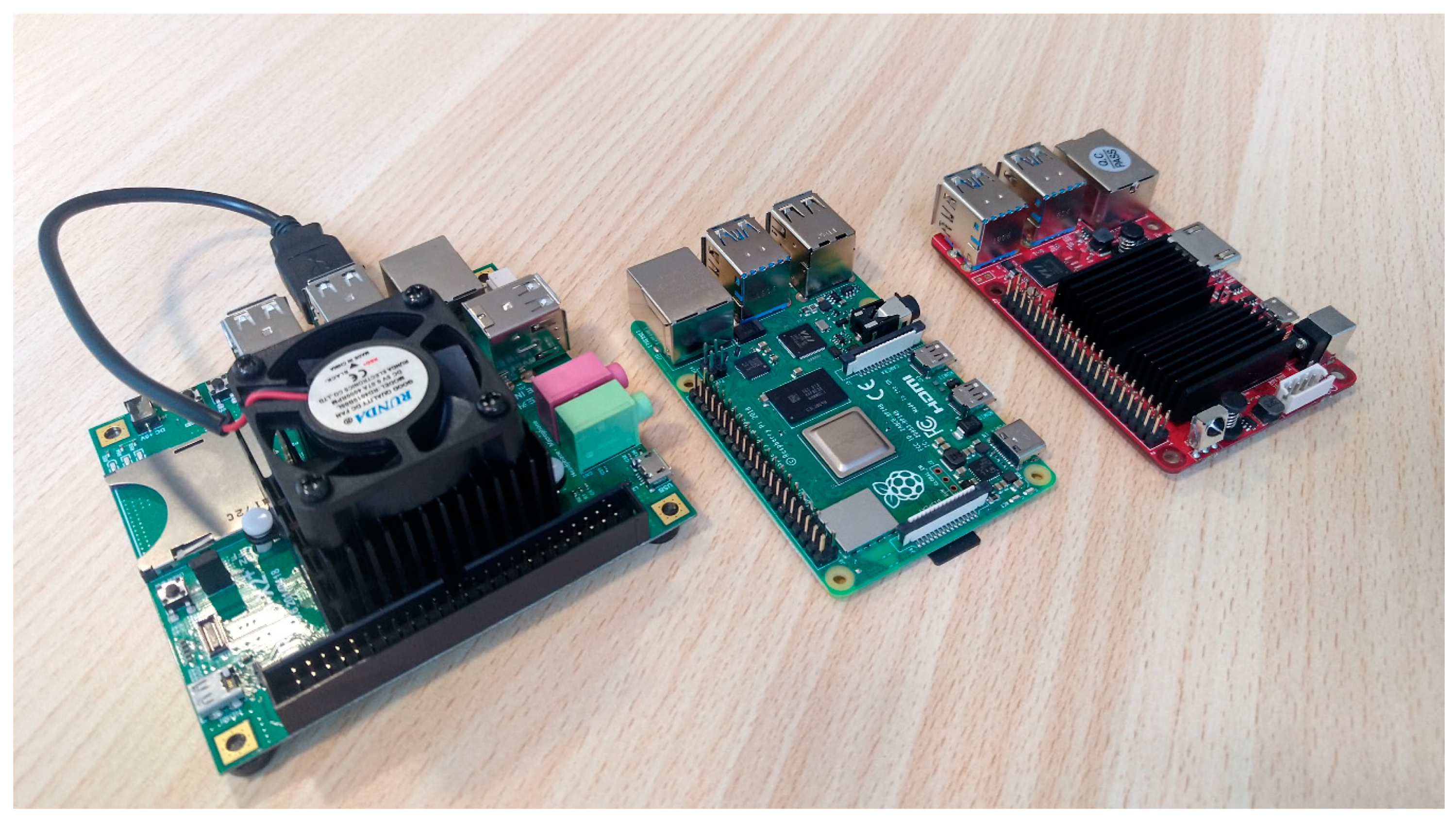

To profile the complete receiving chain, the receiver was fed with a 10 min file of raw samples, configured to process 12 satellites, i.e., six GPS and six Galileo, and executed six times, for a total of about 1 h of equivalent running time, on all four platforms listed in Table 2.

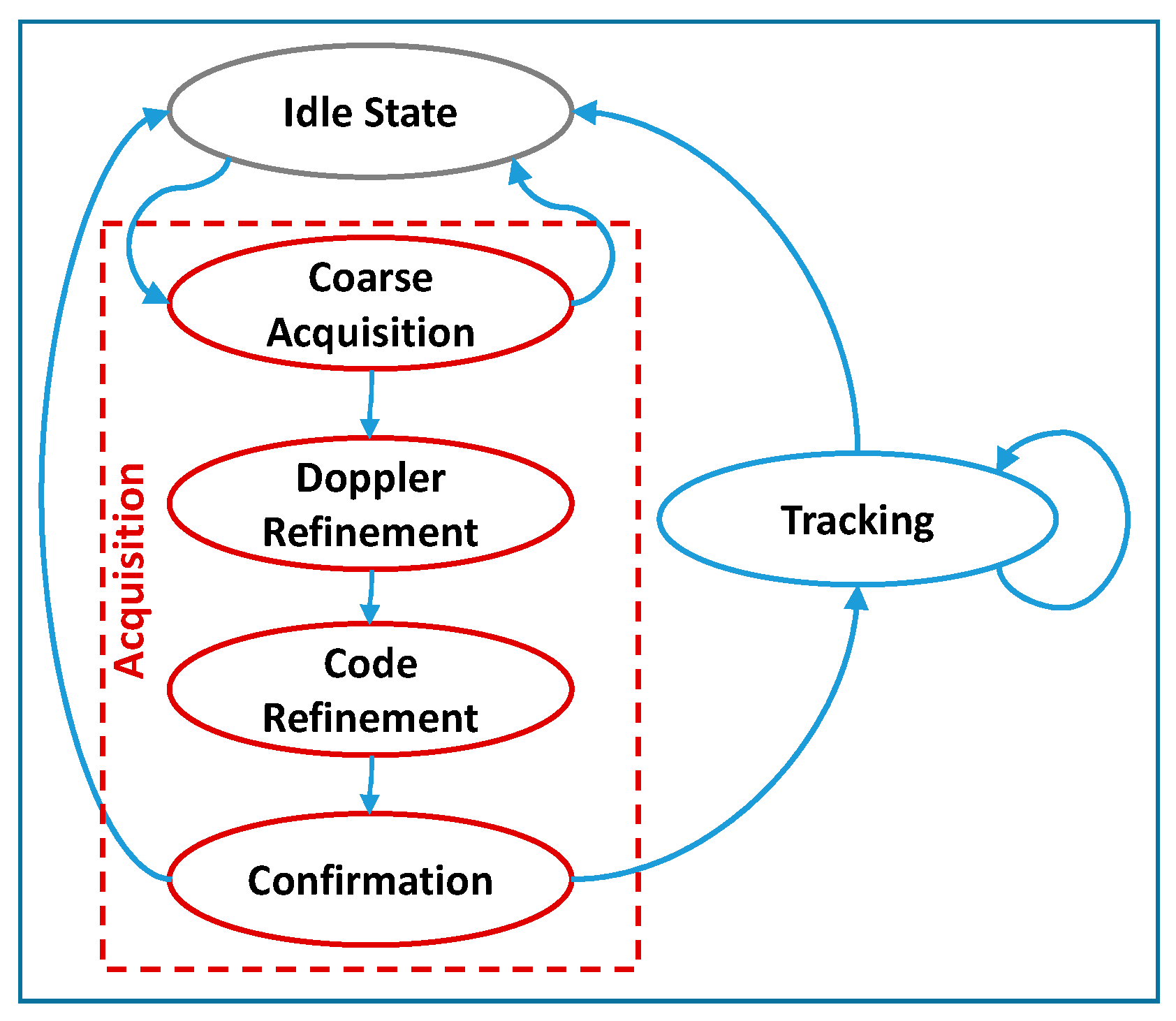

Table 9 reports the average execution time required by the main processing steps performed to process one code period of input GNSS samples, i.e., 1 ms for GPS and 4 ms for Galileo, and to compute the PVT. In particular, as detailed in [17] and recalled here for the reader’s convenience, the receiver main loop processes 1 ms blocks of samples for each channel, i.e., satellite, through a finite state machine, illustrated in Figure 6. This finite state machine includes acquisition, further divided into coarse acquisition, Doppler and code refinements, and confirmation, as well as tracking, for all the channels. The tracking includes the calls to the Galileo OSNMA functionalities.

Overall, the PVT computation is, as expected, the heaviest single processing step; however, its very low call rate makes it less critical than the operations of the channel state machine, whose call rates are at least three orders of magnitude higher. Among the latter, the coarse acquisition combines the highest execution time with the highest call rate, as already pointed out in [20], making it the most demanding step for real-time execution. It is worth noting that the impact of the OSNMA verifications on the Galileo tracking burden was expected to be negligible on average, due to their much lower call rate. This was confirmed by the results, which show it to be comparable to the Galileo tracking without OSNMA reported in [20] for the standard PC and ODROID-X2.
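The interplay between execution time and call rate can be made concrete with a small calculation. The following is a minimal sketch and is not taken from the receiver code: the function name `load_fraction` is hypothetical, and all numeric values are placeholders chosen for illustration, not the measurements of Table 9.

```python
def load_fraction(exec_time_us: float, calls_per_s: float) -> float:
    """Fraction of one CPU core consumed by a step (1.0 = one core fully busy)."""
    return exec_time_us * calls_per_s / 1e6


# Hypothetical values for illustration only (NOT the measurements of Table 9).
steps = {
    # Slow step, called rarely.
    "pvt": load_fraction(exec_time_us=5000.0, calls_per_s=1.0),
    # Fast step, called once per millisecond.
    "tracking_one_channel": load_fraction(exec_time_us=20.0, calls_per_s=1000.0),
}

for name, load in steps.items():
    print(f"{name}: {100.0 * load:.1f}% of one core")
```

With these placeholder numbers, the slow but rarely called step accounts for 0.5% of a core, whereas the fast but frequently called step accounts for 2.0%, which is the kind of effect described above.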

As expected and already observed in

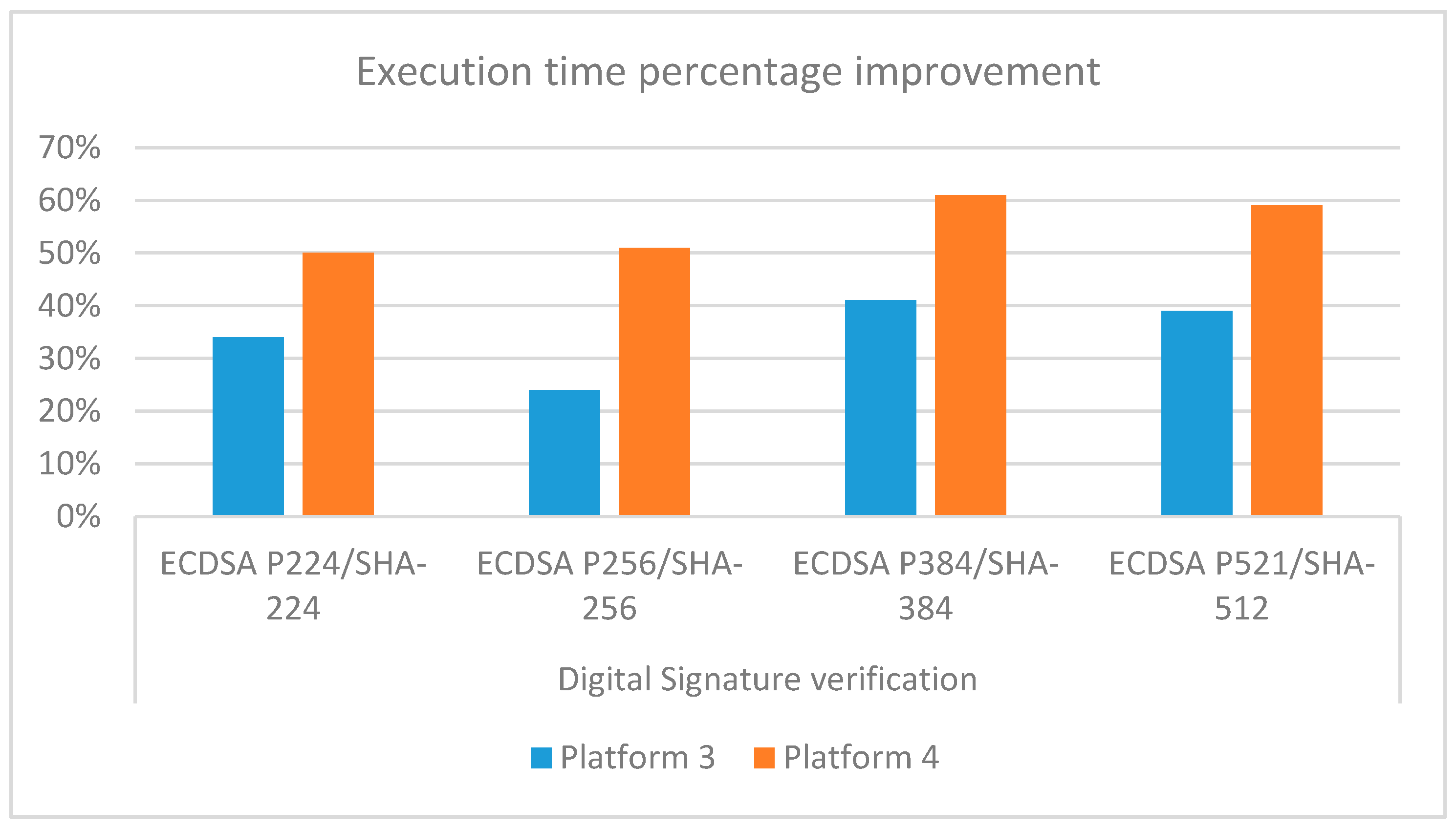

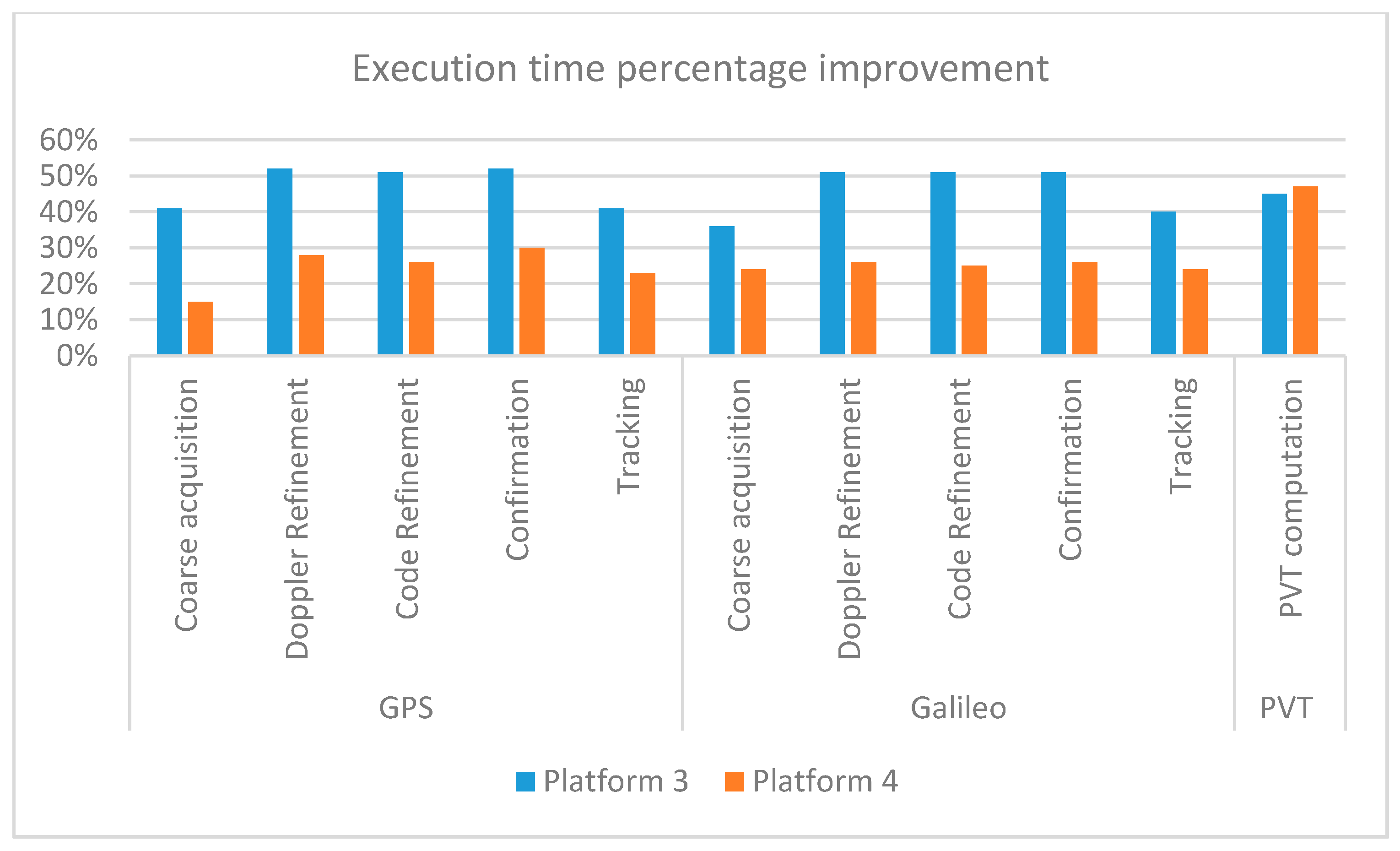

Section 4, the standard PC outperforms all other platforms: the performance degradations in terms of execution time are reported in

Table 10 and roughly range in the intervals 13–16 times, 6–9 times and 8–13 times for all the processing steps in the ODROID-X2, Raspberry Pi4 and ODROID-C4 machines, respectively. Furthermore, differently from what observed in

Section 4 where the newer platforms basically showed similar performances with the advantage of ODROID-C4 being limited to the digital signature verification, here Raspberry Pi4 (platform 3) overcomes ODROID-C4 (platform 4), as is clearly visible in

Figure 7 with improvements from 16% to 34%, except regarding the PVT computation with a degradation of about 4%. Although the two boards, namely, platforms 3 and 4, have somewhat similar hardware features, as reported in

Table 2, the different results could be partially explained by the final purposes of the two different processors: indeed, as declared by the manufacturer, Cortex-A72, released in 2015, is a high single-threaded performance CPU, whereas Cortex-A55, released in 2017, targets more high power-efficiency mid-range applications. Another aspect to be taken into account is that, as described in

Section 3.3, the original 32-bit ARM v7 assembly code has been rewritten into the equivalent 64-bits ARM v8 assembly to make it compatible with ODROID-C4, mainly focusing on the direct translation of the assembly instructions, rather than on the full exploitation of the instruction set features offered by the new architecture. A specific optimization phase could likely reduce the performance gap.

5.3. Real-Time Processor Load Analysis

The profiling presented in the previous subsection provides an estimate of the computational weight of each software function, making it possible to identify bottlenecks that the developer can then address to improve performance. However, software profiling alone cannot give a complete picture of the real-time performance. Indeed, the total receiver computational load depends directly on the number of satellite signals to be processed simultaneously. Thus, an analysis of the processor load while the application runs in real-time is required to evaluate the limit that each platform is able to reach.

For this test campaign, the receiver was fed directly by the output of the RF FE, thereby processing the generated raw digital samples on the fly.

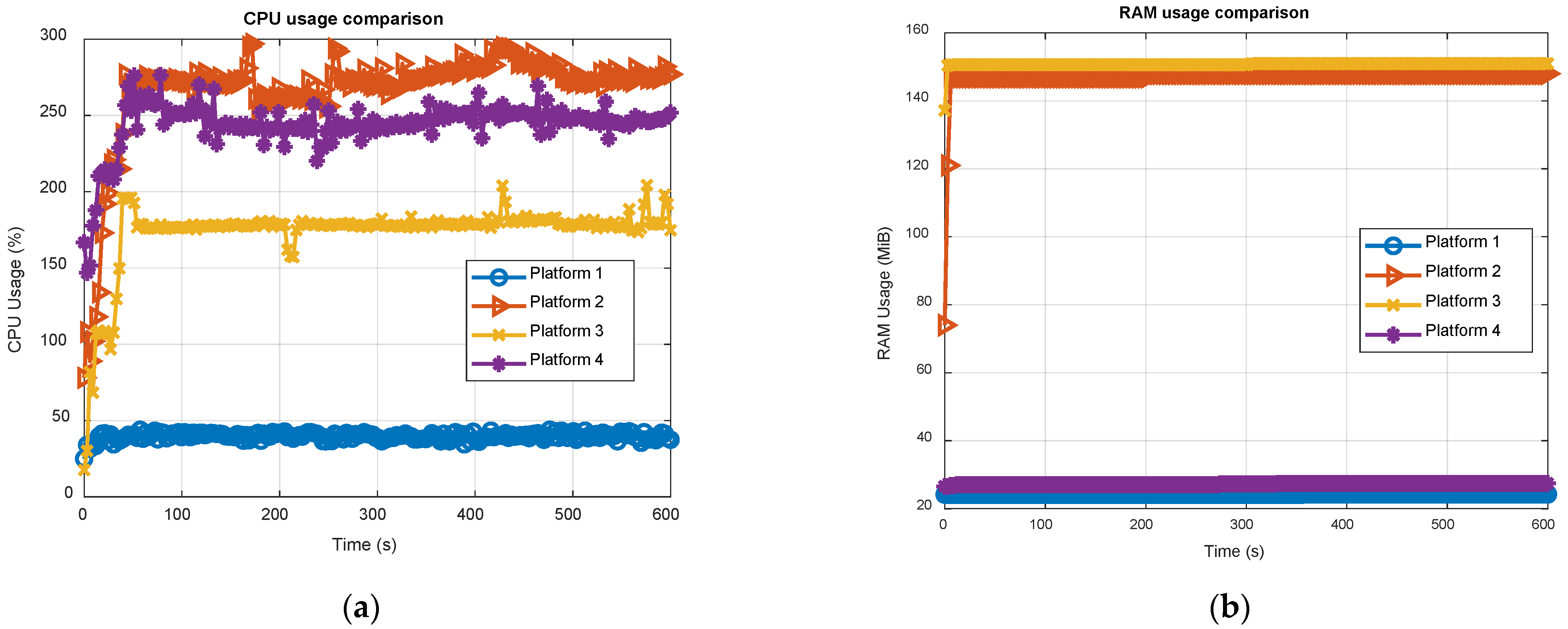

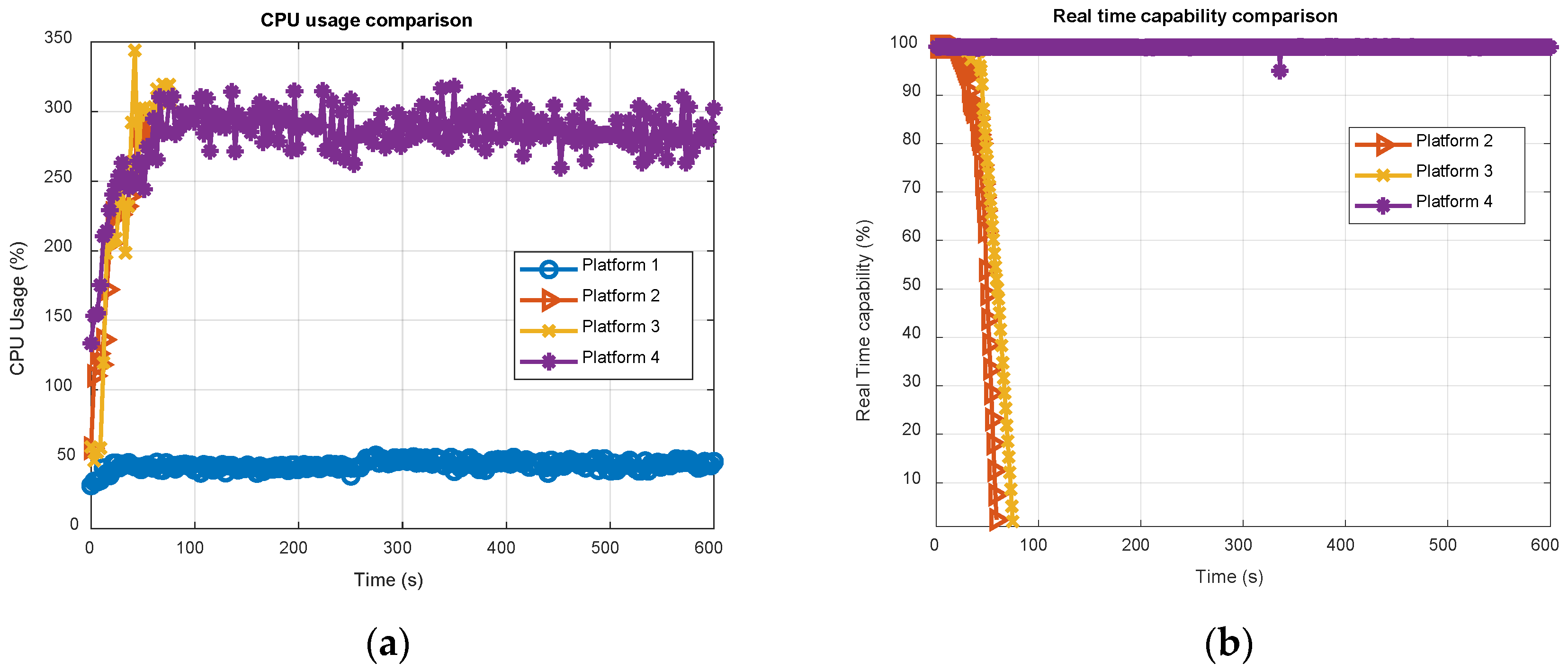

Figure 8 reports the CPU and RAM usage of the software receiver, running in real-time on all four platforms with 12 satellites, i.e., six GPS and six Galileo, simultaneously tracked, across 10 min of total execution time. The results were obtained using the Linux utility top. It is worth noting that eNGene features a multi-thread structure that exploits all the cores available on the embedded platforms, i.e., four, as shown in Table 2, which implies a CPU usage ranging from 0% up to 400% in Figure 8. By contrast, when running on a standard PC, NGene is a single-core process, with no need to split the processing across the eight available cores.
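To make the 0% to 400% scale concrete, the following minimal sketch shows how a per-process CPU percentage of this kind can be derived from the cumulative CPU time counters that Linux exposes in /proc/<pid>/stat. It is an illustration under stated assumptions, not the procedure used for the measurements (which relied on top): the helper names `cpu_ticks` and `cpu_percent` are hypothetical, the two sample lines are synthetic, and the default of 100 clock ticks per second is an assumption that should be checked on the target, e.g., with `os.sysconf("SC_CLK_TCK")`.

```python
def cpu_ticks(stat_line: str) -> int:
    """Return utime + stime (in clock ticks) from one /proc/<pid>/stat line."""
    # The command name (field 2) may contain spaces, so split after the last ')'.
    fields = stat_line.rsplit(")", 1)[1].split()
    # fields[0] is field 3 (state); utime and stime are fields 14 and 15.
    return int(fields[11]) + int(fields[12])


def cpu_percent(ticks_before: int, ticks_after: int,
                elapsed_s: float, ticks_per_s: int = 100) -> float:
    """CPU usage over an interval; exceeds 100% when several cores are busy."""
    if elapsed_s <= 0:
        raise ValueError("elapsed_s must be positive")
    busy_s = (ticks_after - ticks_before) / ticks_per_s
    return 100.0 * busy_s / elapsed_s


# Synthetic samples taken one second apart (hypothetical process, not real data).
before = "1234 (rx main) S 1 1 1 0 -1 0 0 0 0 0 1000 200 0 0 20 0 4 0 0 0 0"
after = "1234 (rx main) S 1 1 1 0 -1 0 0 0 0 0 1200 250 0 0 20 0 4 0 0 0 0"
print(cpu_percent(cpu_ticks(before), cpu_ticks(after), elapsed_s=1.0))
```

In this synthetic case the process accumulates 250 ticks, i.e., 2.5 s of CPU time, within one second of wall-clock time, which corresponds to 250% on a scale where each fully loaded core contributes 100%.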

The results in Figure 8a are in line with the profiling results described in the previous subsection. Indeed, with a usage of less than 50% of the total Intel CPU power, the standard PC (see the light blue plot) exhibits performance far superior to that of any other platform. Among the embedded boards, ODROID-X2 (red plot) shows the worst result, as expected, and Raspberry Pi4 (yellow plot) outperforms ODROID-C4. The RAM usage, expressed in MiB (1 MiB = 2^20 bytes), deserves a separate discussion. Two main clusters can be clearly seen in Figure 8b: one around 25 MiB for the standard PC and ODROID-C4 (both with a 64-bit OS) and another around 150 MiB for the remaining boards (both with a 32-bit OS). This difference is not negligible and requires further investigation. In this regard, it is worth noting that top does not report only the memory statically or dynamically allocated by the program, which is the same on all the considered boards, but the so-called resident memory or resident RAM, defined as the non-swapped physical memory a task is currently using, including all stack and heap memory, as well as the pages of shared libraries that are actually in memory. Accordingly, the RAM usage strictly depends on the OS and is not under the direct control of the developer. In any case, as a general observation, and considering the increasing amount of RAM available on modern boards, the observed values cannot be considered critical.
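As an illustration of what the resident memory figure refers to, the sketch below extracts the resident set size from the text of /proc/<pid>/status and converts it to MiB. This is a minimal example under stated assumptions and not the measurement procedure used here: the function name `resident_mib` is hypothetical, the sample text is synthetic, and the `VmRSS` field is assumed to correspond to the resident memory figure discussed above.

```python
from typing import Optional


def resident_mib(status_text: str) -> Optional[float]:
    """Return the VmRSS value of a /proc/<pid>/status dump in MiB, if present."""
    for line in status_text.splitlines():
        if line.startswith("VmRSS:"):
            kib = int(line.split()[1])  # the kernel reports this value in kB (KiB)
            return kib / 1024.0
    return None


# Synthetic excerpt (hypothetical values, not a measurement from the paper).
sample = "Name:\treceiver\nVmSize:\t  412000 kB\nVmRSS:\t  153600 kB\n"
print(resident_mib(sample))
```

On a running system, the same function could be applied to the contents of /proc/self/status or of the status file of the receiver process.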

Table 11 summarizes the CPU and RAM usage results, showing the average and maximum CPU load and the maximum RAM usage. Raspberry Pi4 achieves the best results among the embedded boards, showing 28% and 26% improvements with respect to ODROID-C4 in terms of average and peak CPU usage, respectively, which is fully in line with the results shown in Figure 7. On the other hand, ODROID-C4 exhibits a much more efficient RAM usage but, as already noted, this is not a critical indicator for real-time execution.

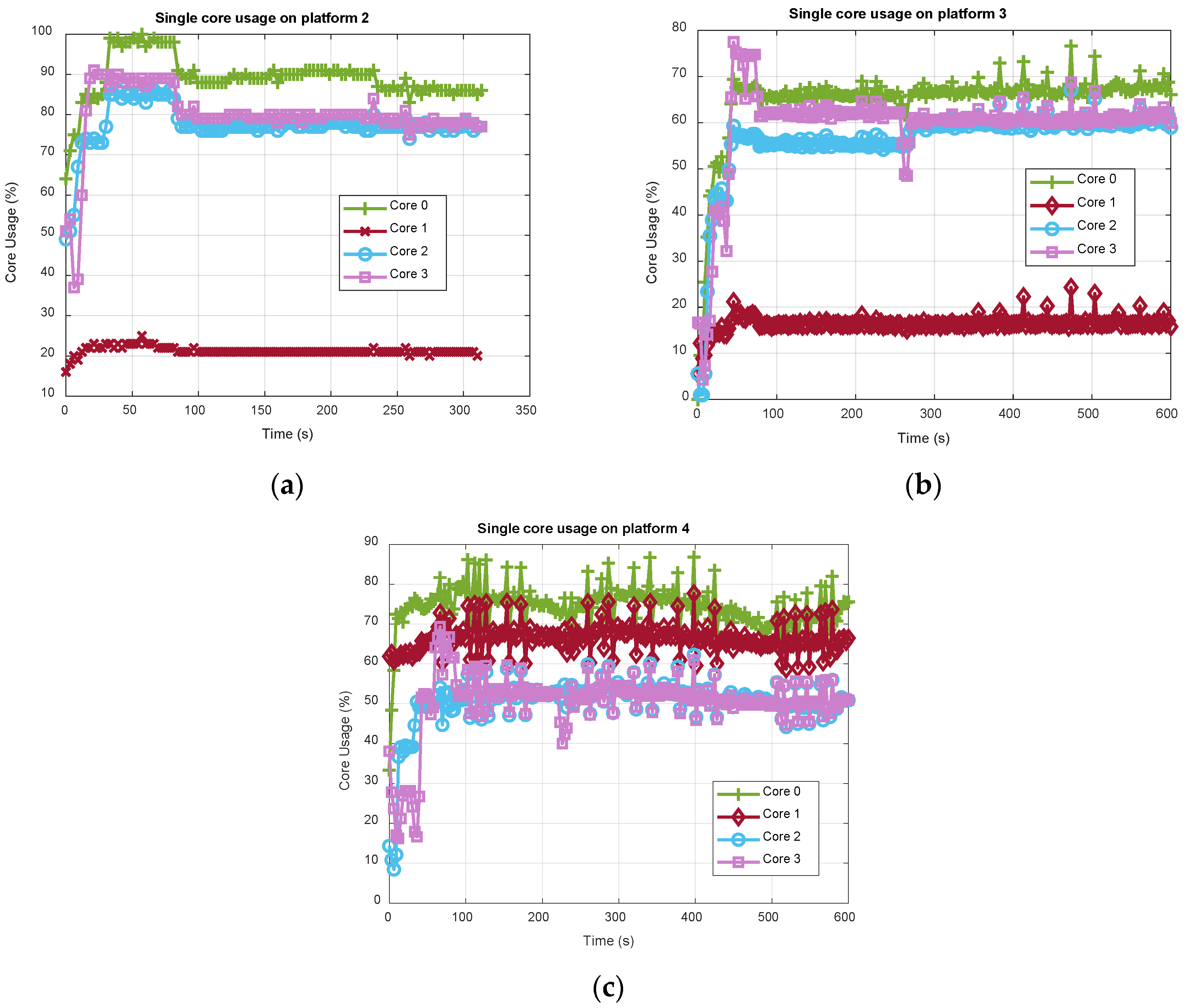

Now, focusing on the embedded boards only, Figure 9 shows how the CPU usage reported in Figure 8 is distributed over the individual cores. In this regard, depending on the total number of configured channels (GPS plus Galileo), the GNSS processing is allocated on the four cores as follows:

Core 0 is scheduled for the execution of the main thread, in charge of performing the main function, the tracking of its share of the channels and the PVT computation.

Core 1 is devoted to the FE thread, in charge of managing the FE and USB only.

Core 2 is allocated to a channel thread, dedicated to tracking its share of the channels.

Core 3 is allocated to another channel thread, dedicated to acquiring one channel and tracking its share of the channels. It is also in charge of executing the TESLA key verification thread, created only for the first received TESLA key in case its distance in the chain from the root key is above 700 steps.

The interested reader can refer to [20] for details about the multi-thread structure of eNGene. The above-described channel-to-core mapping rule is purely empirical and, for the considered test setup with 12 channels in total, cores 0, 2 and 3 process four channels each. Since the acquisition is the heaviest function from a computational point of view, as already noted, only one channel is acquired at a time, always on a specific core, i.e., core 3.
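The mapping described above can be sketched in a few lines. The exact allocation formula is not reproduced here; the split below is one hypothetical rule, chosen only because it reproduces the two configurations reported in this section (four tracked channels on each of cores 0, 2 and 3 for 12 channels, and five on cores 0 and 3 plus six on core 2 for 16 channels). The function name `channels_per_core` is an assumption and is not part of eNGene.

```python
def channels_per_core(n_channels: int) -> dict:
    """Hypothetical split of tracked channels over cores 0, 2 and 3.

    Core 1 is left out because it only serves the front-end thread.
    """
    if n_channels < 0:
        raise ValueError("n_channels must be non-negative")
    base = n_channels // 3
    return {
        0: base,                   # main thread: tracking + PVT
        2: n_channels - 2 * base,  # channel thread: takes the remainder
        3: base,                   # channel thread: tracking + one acquisition at a time
    }


for n in (12, 16):
    print(n, channels_per_core(n))
```

Assigning the remainder to core 2 is a design choice of this sketch: core 0 also computes the PVT and core 3 also runs the acquisition, so core 2 is the one with the most headroom under the description given above.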

Looking at Figure 9, core 0 (green plot with “plus” markers) shows, as expected, the highest CPU usage, since it performs the PVT computation in addition to the tracking of four channels, whereas cores 2 (light blue plot with “circle” markers) and 3 (purple plot with “square” markers) have similar loads, being dedicated to the tracking of the same number of channels. The slight increase in the core 3 load, particularly visible in the first part of the test in Figure 9a,b, is explained by the acquisition stage. Once all channels have been successfully acquired and are in the tracking loop, no additional channel is scheduled for acquisition, unless a loss of tracking occurs. Finally, the core 1 load stands at around 20% for both ODROID-X2 (Figure 9a) and Raspberry Pi4 (Figure 9b), whereas it is much higher, around 65%, for ODROID-C4 (Figure 9c). Again, as for the RAM usage, a clear difference between the behavior of the 32-bit and 64-bit OSs is evident. Since eNGene uses the libusb library to manage the USB stream of raw samples from the FE, such behavior is likely due to a different implementation and handling of libusb on the OS side.

It is worth noting that, even when both the thread-to-core mapping and the thread priorities are explicitly set with root permissions at receiver power-on, the developer does not have full control of the OS task scheduler. This suggests implementing a real-time core load check that dynamically changes the allocation of the tasks. Indeed, while the load among cores looks more balanced on ODROID-C4, it seems that core 1 could bear additional tasks on ODROID-X2 and Raspberry Pi4.

The need for a better balance can also be deduced from the results in Figure 10a, where the number of channels has been increased to 16, i.e., 10 GPS and 6 Galileo, while keeping the same core allocation (five channels on cores 0 and 3, and six on core 2). Among the embedded boards, only ODROID-C4 is able to bear such a load, whereas both ODROID-X2 and Raspberry Pi4 stopped working before 80 s due to a buffer overrun error. Indeed, as detailed in [20], an intermediate buffer is used to store the samples coming from the USB FE and make them available for the channels’ processing, in a so-called producer-consumer relationship. When new samples from the USB FE are available but the intermediate buffer has no free locations, a buffer overrun occurs. The percentage of available memory locations in the intermediate buffer is therefore a good indicator of the real-time capability of the receiver, as reported in Figure 10b for the embedded boards only.
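The producer-consumer mechanism and the resulting overrun condition can be illustrated with a toy model. The sketch below is a simplified, single-threaded simulation under stated assumptions and is not the eNGene implementation: the class `SampleBuffer`, the function `simulate` and all rates are hypothetical, and time is discretized into abstract ticks.

```python
class BufferOverrun(Exception):
    """Raised when the producer finds no free location in the buffer."""


class SampleBuffer:
    """Bounded buffer that only counts occupied locations (toy model)."""

    def __init__(self, n_slots: int) -> None:
        self.n_slots = n_slots
        self.used = 0

    def produce(self) -> None:
        if self.used == self.n_slots:
            raise BufferOverrun("no free location for new samples")
        self.used += 1

    def consume(self) -> None:
        if self.used > 0:
            self.used -= 1

    def free_percent(self) -> float:
        return 100.0 * (self.n_slots - self.used) / self.n_slots


def simulate(n_slots, produced_per_tick, consumed_per_tick, n_ticks):
    """Return the tick at which an overrun occurs, or None if none occurs."""
    buf = SampleBuffer(n_slots)
    for tick in range(n_ticks):
        for _ in range(consumed_per_tick):  # channels drain the buffer
            buf.consume()
        try:
            for _ in range(produced_per_tick):  # front end fills the buffer
                buf.produce()
        except BufferOverrun:
            return tick
    return None


print(simulate(10, 1, 1, 1000))  # consumer keeps up with the producer
print(simulate(10, 2, 1, 1000))  # consumer too slow: the buffer eventually fills
```

In this model, monitoring `free_percent()` over time plays the role of the indicator described above: a value that steadily decreases signals that the processing cannot keep up with the incoming sample stream.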

Other aspects that should be carefully considered are the different clock frequencies and frequency scaling policies (governors) of the processors under consideration. For instance, ODROID-X2 has been overclocked to 2 GHz [20] with the governor set to performance, while no specific setup has been forced on Raspberry Pi4 and ODROID-C4 (default governor set to on-demand and performance, respectively).
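When comparing platforms, the active governor of each core can be checked through the Linux cpufreq sysfs interface. The following is a minimal sketch, assuming a Linux system that exposes cpufreq under /sys/devices/system/cpu; the helper names `governor_path` and `read_governor` are hypothetical, and the function returns None where the interface is not available.

```python
from pathlib import Path
from typing import Optional

SYSFS_CPU = Path("/sys/devices/system/cpu")


def governor_path(cpu: int, root: Path = SYSFS_CPU) -> Path:
    """Path of the sysfs file holding the governor of the given core."""
    return root / f"cpu{cpu}" / "cpufreq" / "scaling_governor"


def read_governor(cpu: int, root: Path = SYSFS_CPU) -> Optional[str]:
    """Return the governor name of a core, or None if it cannot be read."""
    try:
        return governor_path(cpu, root).read_text().strip()
    except OSError:
        return None


# Four cores are assumed here, as on the embedded boards considered in the text.
for cpu in range(4):
    print(cpu, read_governor(cpu))
```

Logging these values together with the CPU load makes it easier to tell whether a performance difference comes from the processor itself or from its frequency scaling policy.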

As the above analysis shows, the maximum number of signals simultaneously tracked (and acquired) in real-time strictly depends on the platform, since the execution time of the software functions depends on the computational power offered by the specific processor and on the OS task scheduling policy. In this regard, long-duration tests have also been performed, running the receiver for days. These tests confirm that ODROID-C4 (platform 4) is able to bear up to 16 satellites, which can be considered the maximum limit for the current setup on this specific platform. That limit drops to 12 for both ODROID-X2 (platform 2) and Raspberry Pi4 (platform 3). However, as pointed out above, a performance improvement for platforms 2 and 3 cannot be excluded when implementing, for instance, a different task-to-core allocation rule, which is currently under investigation. Accordingly, newer and possibly more powerful boards are expected to be able to bear at least 12 satellites, which could be regarded as a lower bound of general validity.